Abstract

This paper examines the potential uses of ChatGPT in generating assessment tasks that can be used across different disciplines in higher education. To illustrate this, we provide examples from three courses in the disciplines of industrial engineering and applied linguistics: Project Analysis and Control, Manufacturing Processes, and Introduction to Linguistics. Our examination of ChatGPT focuses on how some of the common, but time-consuming, tasks can be generated by using ChatGPT as a supportive instructional tool. We observed in our analysis that ChatGPT demonstrates a high level of performance in generating assessment questions and tasks that are accurate and on-topic, and a level of creativity and flexibility in its question generation capabilities. However, it is important to note that ChatGPT is not designed to replace human expertise or judgment. It is crucial that instructors carefully evaluate the reliability and accuracy of the assessments or information generated by ChatGPT.

Introduction

The use of online learning platforms has become increasingly prevalent in higher education to increase access and flexibility for students, particularly with the COVID-19 pandemic. As such, new computer- or web-based technologies are frequently introduced and/or repurposed for teaching and learning, such as Artificial Intelligence (AI)-powered chatbots. Chatbots are computer programs designed to simulate conversation with human users, especially over the Internet. They can be used for a variety of purposes, such as customer service, entertainment, and information retrieval.

One AI-powered chatbot technology that was released in November 2022 is ChatGPT, a chatbot that uses a large language model from the Generative Pre-trained Transformer (GPT) 3.5 series to generate responses to user input. ChatGPT, also defined as “a type of AI that uses deep learning (a form of machine learning) to process and generate natural language text” (Susnjak, 2022), has marked a significant advancement in AI that involves natural language processing and reasoning. This technology produces text similar to human-generated texts and is capable of engaging in complex conversations, providing information on a wide range of topics, and generating accurate answers to challenging questions that require advanced analysis, synthesis, and application of information.

Although ChatGPT's potential uses by students for generating essays and its risks for increasing plagiarism have received significant attention, its potential uses by instructors in higher education remain under-examined (Qadir, 2022; Qadir et al., 2022). We therefore explored the capabilities of ChatGPT in generating course assessment tasks in two disciplinary contexts, industrial engineering (IE) and applied linguistics. In this paper, we share how some common assessment tasks in these fields can be generated by using ChatGPT and analyze their accuracy and appropriateness. We conclude with recommendations for higher education instructors on how to use ChatGPT to enhance their instruction.

Chatbots in Education

There have been a number of studies conducted on chatbots that generally focused on evaluating the effectiveness of chatbots for various tasks, such as customer service (Xu et al., 2017), language translation (Park & Aiken, 2019), and content generation (Fang et al., 2018). Some studies have also looked at the impact of chatbots on human-human communication (Hill et al., 2015; Lew & Walther, 2023), and the ethical and social implications of using chatbots in different contexts (Park et al., 2021).

There have also been a number of studies on the use of chatbot technology in education, focusing on a variety of applications, such as answering students’ questions (Clarizia et al., 2018; Ranoliya et al., 2017; Sinha et al., 2020), teaching computer programming concepts (Okonkwo & Ade-Ibijola, 2020; Zhao et al., 2020), assessing students’ performance (Benotti et al., 2017; Durall & Kapros, 2020), and providing administrative services (Röhrig & Heß, 2019). Some studies have also conducted literature reviews to summarize existing knowledge on the topic (Chinedu & Ade-Ibijola, 2021; Pérez et al., 2020).

There is also evidence from multiple research studies that chatbots can be effectively utilized in educational settings (Durall & Kapros, 2020; Hien et al., 2018; Nguyen et al., 2019; Ranoliya et al., 2017). For instance, the implementation of chatbots in education has the potential to enhance students’ learning experiences and increase satisfaction (Winkler & Söllner, 2018). Chatbots allow teachers to upload information about the course, assignments, and other course requirements to an online platform for easy access by students (Akcora et al., 2018; Yang & Evans, 2019) and maximize student learning abilities and achievement (Clarizia et al., 2018; Murad et al., 2019). Moreover, chatbots support student learning by providing them quick answers to their questions, which can help keep students engaged as a result of this interactive and comfortable learning environment (Adamopoulou & Moussiades, 2020; Molnár & Szüts, 2018). Finally, the use of chatbots in education allows for efficient and quick communication (Alias et al., 2019; Sreelakshmi et al., 2019).

Despite the potential benefits of using AI-powered chatbots in education, there are also potential risks for educators and students. One concern is the possibility that students may rely too heavily on chatbots for assistance with assignments and projects, leading to a decline in critical thinking and problem-solving skills. Researchers have also argued that such heavy reliance on assistance from chatbots on assignments encourages academic dishonesty and plagiarism (Ruane et al., 2019). These studies highlight the need for careful consideration of the ethical implications of using AI in education.

ChatGPT in Education

ChatGPT (https://openai.com/blog/chatgpt/) is an AI-powered chatbot developed by OpenAI (https://openai.com/), a research organization focused on AI. It is based on a variant of the popular Generative Pre-trained Transformer (GPT) language model, which is designed to generate human-like text in real time. ChatGPT is intended to enable computers to communicate with humans in a more natural and intuitive way, by generating text that is similar to the way a human would speak or write.

ChatGPT was publicly released in November 2022 and has since gained widespread attention due to its advanced capabilities in natural language processing and reasoning. It is able to engage in sophisticated dialogue, providing information on a wide range of topics and answering complex questions that require an advanced level of analysis, synthesis, and application of information. ChatGPT is also able to generate critical questions itself, the type of questions that educators in different disciplines might use to assess students’ competencies. In addition to these capabilities, ChatGPT has many potential applications, including language translation, content generation, customer service, and higher education. In the context of higher education, ChatGPT could potentially be used to create personalized and interactive learning experiences for students, as well as to provide instant feedback and support to help students stay motivated and on track with their studies.

As in the previous studies on chatbots’ potential risks in educational contexts, a few recent publications have pointed out ChatGPT's potential to increase plagiarism and jeopardize academic integrity in higher education, especially if used by students to complete their schoolwork (such as writing essays and research papers, solving problems, cheating in online exams, etc.) (Stokel-Walker, 2022; Susnjak, 2022). On the other hand, similar to other chatbots, ChatGPT also has the potential to enhance education by providing students personalized, on-demand access to information and resources, and by creating an interactive, adaptive learning environment to meet the unique needs and learning styles of individual students. Additionally, ChatGPT and similar technologies can assist educators by providing them with new tools for creating and delivering educational content, as well as for assessing student progress and understanding. ChatGPT has been proposed as a potential tool for automated essay scoring, dialogue-based assessment, multiple-choice assessment, and adaptive assessment by its developer, OpenAI (Atlas, 2023). However, to the best of our knowledge, there are no published articles specifically discussing the use of ChatGPT for assessment purposes in higher education. Therefore, this paper aims to investigate the use of ChatGPT in designing instructional assessments and tasks in higher education, by providing perspectives across different disciplines, specifically IE and applied linguistics. Our purpose is to suggest ways to effectively use ChatGPT in a manner that improves teaching and learning.

Designing Instructional Tasks and Assessments with ChatGPT

Context and Approach to Generating Instructional Tasks and Assessments

Before we explain our approach to generating tasks and assessments using ChatGPT, it is useful to provide information about our higher education context. The first author, a professor of IE at a higher education institution in the United States (US), teaches a variety of courses in the department's undergraduate and graduate programs. A primary responsibility is to lead a course on project management which covers the principles and techniques used to plan, execute, and control projects in various settings. In addition to the project management course, he also teaches a class on manufacturing processes and technology that covers topics such as process selection, quality control, and lean manufacturing. The second author is a faculty member in an English Department at a US higher education institution, teaching courses on applied linguistics and methods and assessment strategies in Teaching English to Speakers of Other Languages (TESOL). Prior to this role, she also taught English as a Second Language (ESL) to international students preparing for post-secondary and graduate school education in the US and in Turkey. These disciplines reflect the expertise and interests of the professors involved in this project. Our goal is to showcase the capabilities of ChatGPT for assessment design and demonstrate its potential use in a variety of disciplines. By providing examples from a STEM field (IE) and the social sciences (linguistics), we hope that professors of higher education across disciplines will gain perspectives relevant for their own contexts.

To explore the potential uses of ChatGPT in designing instructional tasks, materials, and assessments, we focused on commonly used assessment types in higher education contexts that are time-consuming to prepare and require critical, in-depth thinking and extensive research and analysis. We determined specific commands (prompts) to be provided to ChatGPT. Identifying and providing the right prompt is a crucial step in ensuring accurate and relevant responses from ChatGPT. While it depends on the specific use case and desired outcome, some general practices in generating prompts include being clear and specific, keeping prompts short and to the point, providing context, using appropriate vocabulary, and being consistent. The prompt generation process is similar to using keywords in any search engine, and typically involves several rounds of experimentation and refinement to optimize prompts for the specific use case and desired outcome. It is also important to evaluate and refine the responses generated by ChatGPT. This involves analyzing the output for accuracy, relevance, and appropriateness, and making adjustments to the prompts as necessary to improve the quality of the responses. In cases where the prompt is not specific and contextualized enough, the responses and outputs may not be relevant or useful, which will require further revisions of the prompt provided to ChatGPT.
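
The general prompt practices above (clear, specific, short, contextualized, consistent) can be illustrated with a small template. The following is a minimal Python sketch, not the procedure used in this paper; the `PromptSpec` fields, the function names, and the prompt wording are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass
class PromptSpec:
    """Hypothetical container for the parts of an assessment prompt."""
    count: int      # how many items to request
    task: str       # e.g. "multiple-choice questions"
    topic: str      # unit or topic the items should cover
    audience: str   # who the items are for (context)


def build_prompt(spec: PromptSpec) -> str:
    """Assemble one short, specific, contextualized prompt."""
    return (
        f"Generate {spec.count} {spec.task} on the topic of {spec.topic} "
        f"for {spec.audience}."
    )


def build_follow_up(spec: PromptSpec) -> str:
    """Reuse the same wording pattern so follow-up prompts stay consistent."""
    return f"Generate another set of {spec.count} {spec.task} in the same topic."


# Illustrative values only; the wording is not the prompt used by the authors.
spec = PromptSpec(
    count=5,
    task="multiple-choice questions",
    topic="organization strategy and project selection",
    audience="undergraduate industrial engineering students",
)
print(build_prompt(spec))
print(build_follow_up(spec))
```

Keeping the parts of a prompt in named fields makes each round of refinement a change to a single field, which fits the iterative experimentation described above.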

For this paper, we focused the discussion and analysis on four assessment tasks: multiple-choice questions, case study scenarios, mathematical problems, and linguistic data analysis tasks. In the sections that follow, we discuss the benefits and uses of each task, as well as difficulties for instructors. Then, by focusing on a unit in a sample core course in IE and applied linguistics, we asked ChatGPT to generate the relevant tasks. We then present, analyze, and discuss each of the tasks considering the purposes of each assessment, the relevant universal intellectual standards, such as clarity, accuracy, and relevance (Paul & Elder, 2013), and assessment design principles, such as those offered for designing multiple-choice items (Carneson et al., 2016).

Designing Multiple-Choice Questions Using ChatGPT

Multiple-choice questions can provide a quick and efficient way for instructors to assess students’ understanding of course material, test knowledge of specific facts, concepts, and terminology, and identify areas where students need additional support or clarification. Designing effective multiple-choice questions, however, is one of the most difficult tasks that instructors face when creating quizzes and exams. To create reliable and valid multiple-choice items, instructors have to ensure that the stem of the question is clear and concise, choices are written in the same grammatical structure and length, correct answers are randomly placed, and distractors are challenging for item discrimination purposes (Carneson et al., 2016).
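
Two of the design principles above, alternatives of similar length and varied placement of the correct answer, lend themselves to a quick mechanical check before an instructor reads the items in detail. The sketch below is a hypothetical Python illustration; the item format, the function names, and the `max_ratio` threshold are assumptions rather than part of any published guideline, and the sample items are invented.

```python
from collections import Counter


def length_ratio(alternatives: list[str]) -> float:
    """Ratio of the longest to the shortest alternative, counted in words."""
    lengths = [max(1, len(a.split())) for a in alternatives]
    return max(lengths) / min(lengths)


def flag_uneven_items(items: list[dict], max_ratio: float = 2.0) -> list[int]:
    """Return indices of items whose alternatives differ widely in length.

    max_ratio is an arbitrary illustrative threshold, not a published standard.
    """
    return [
        i for i, item in enumerate(items)
        if length_ratio(item["alternatives"]) > max_ratio
    ]


def answer_position_counts(items: list[dict]) -> Counter:
    """Count how often each position (0 = A, 1 = B, ...) holds the key."""
    return Counter(item["answer"] for item in items)


# Invented items, for illustration only.
items = [
    {"alternatives": ["scope creep", "cost overrun", "schedule slip", "team conflict"],
     "answer": 0},
    {"alternatives": ["net present value", "payback period",
                      "a long alternative that gives away far too much detail", "risk"],
     "answer": 2},
]
print(flag_uneven_items(items))
print(answer_position_counts(items))
```

A check like this only screens for surface features; whether the distractors are plausible still requires the instructor's judgment.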

To assess the capacity of ChatGPT to generate thought-provoking multiple-choice questions, we focused on a unit in Project Analysis and Control, a core course in the IE curriculum. Project Analysis and Control is designed to provide a socio-technical perspective on the management of projects. The content of this course deals with planning, scheduling, organizing, and implementing projects such as product development, construction, information systems, new business, and special events. The primary emphasis of the course is on the project management process and tools. Organization strategy and project selection is a common topic in this course.

The following commands were given to ChatGPT to generate the multiple-choice questions: “Generate five difficult

“Generate another set of five questions in the same topic”

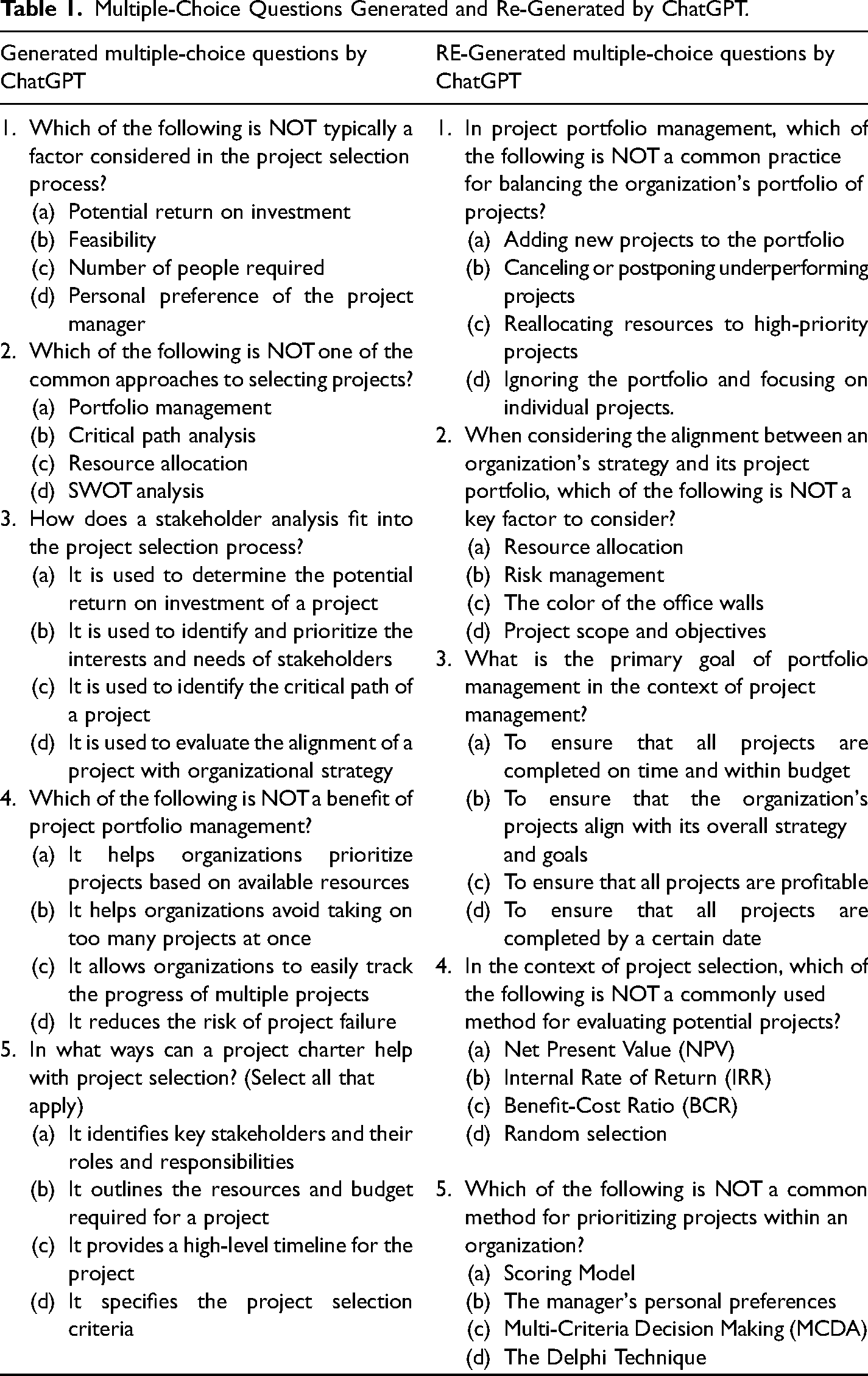

As can be seen from Table 1 below, we asked ChatGPT to “generate” and also “regenerate” multiple-choice items because ChatGPT has the capability to regenerate multiple-choice questions on the same topic but with different variations. Regenerating another set of questions on the same topic is beneficial because it ensures that students are exposed to a variety of questions and problem-solving scenarios. This prevents students from memorizing answers to a specific set of questions and encourages them to understand the underlying concepts and principles. Providing students with new and challenging problems to solve can also increase their engagement and motivation in a course.

Table 1. Multiple-Choice Questions Generated and Re-Generated by ChatGPT.

The multiple-choice questions generated by ChatGPT appear to be relevant and accurately represent the topic. The question stems are concisely and clearly written in as few words as possible. Question stems do not provide a clue to the correct alternative, and when a negative stem is used (e.g., Questions 1, 2, and 4), the word NOT is capitalized to catch the attention of the test-taker and avoid a reliability concern. Similarly, the alternatives for each question in both the generated and re-generated sets follow parallel grammatical patterns. For instance, the alternatives in Question 1 of the generated questions, and in Questions 4 and 5 of the re-generated questions, all consist of noun phrases. Likewise, all the alternatives in Question 1 of the re-generated questions start with a gerund (i.e., nominalization of a verb by adding the -ing suffix, such as adding, canceling, ignoring, etc.). Moreover, the alternatives in each question are of similar length, and the position of the correct alternative varies across the generated and re-generated questions. The distractors seem to be carefully chosen and equally challenging, except for alternative C in Re-generated Question 2 (“the color of the office walls”), which is obviously irrelevant and can easily be eliminated by test-takers. Therefore, although the multiple-choice items generated by ChatGPT have face validity, instructors are advised to review them before using them in their classes.

Although it is not possible to determine the effectiveness of these multiple-choice items generated by ChatGPT without collecting data from test-takers, these aspects of the question stems and alternatives are likely to yield more favorable item facility and item discrimination values for each question, resulting in more reliable and valid assessments. In that sense, using ChatGPT as a supportive tool in generating multiple-choice questions would be a good alternative for instructors who want to create such assessment tasks while reducing typical reliability issues, provided that they review the items first.
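
Once response data from test-takers are available, item facility and item discrimination can be computed directly. The sketch below uses the common definitions: item facility is the proportion of test-takers who answer an item correctly, and item discrimination is the difference in item facility between the highest- and lowest-scoring groups. The function names, the one-third group split, and the sample data are assumptions made for illustration; no such data were collected for this paper.

```python
def item_facility(responses):
    """Proportion of correct responses (1 = correct, 0 = incorrect)."""
    return sum(responses) / len(responses)


def item_discrimination(total_scores, responses, fraction=1 / 3):
    """Item facility of the top-scoring group minus that of the bottom group.

    total_scores[i] is test-taker i's total test score and responses[i] is
    that test-taker's result (1 or 0) on the item being analyzed.
    The default group size (one third) is an illustrative assumption.
    """
    ranked = sorted(zip(total_scores, responses), key=lambda p: p[0], reverse=True)
    k = max(1, round(len(ranked) * fraction))
    upper = [r for _, r in ranked[:k]]
    lower = [r for _, r in ranked[-k:]]
    return item_facility(upper) - item_facility(lower)


# Hypothetical data for six test-takers.
total_scores = [9, 8, 7, 5, 4, 2]
responses = [1, 1, 1, 0, 1, 0]
print(item_facility(responses))
print(item_discrimination(total_scores, responses))
```

An item that nearly everyone answers correctly, or one that high and low scorers answer equally well, would show up here as a very high facility value or a discrimination value near zero.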

Designing Case Study Scenarios Using ChatGPT

The case study approach in teaching and learning, also referred to as “the case-method”, can be defined as “an approach to teaching and research that draws almost entirely upon real-world examples for its knowledge acquisition and informing activities” (Gill, 2011). Case studies are an important tool for teaching project management courses in IE because they provide students with a realistic and practical context for learning the concepts and principles of project management. Case studies allow students to apply their knowledge and skills to real-world scenarios, which helps deepen their understanding of the subject matter through these hands-on learning experiences (Merseth, 1991). Students learn how to navigate complex project environments and how to apply project management methodologies and tools to resolve problems (Boyce, 1993). By exposing students to real-life complex scenarios, case studies develop students’ critical thinking, problem-solving, and decision-making skills that are essential for project management (Africa, 2003). Furthermore, case studies often require students to work in teams, which helps develop their teamwork and collaboration skills. They learn how to work together to achieve common goals and to share knowledge and resources. Finally, case studies require students to present their findings and recommendations in written or oral form, which helps them to develop communication skills that are essential for project management (Africa, 2003).

However, developing a case study can be a challenging task for college professors (Stearns, 2009). Firstly, it is a time-consuming process that requires extensive research and analysis. Secondly, professors may not have the expertise or experience in the specific industry or topic that the case study covers. In addition, developing a case study requires balancing the level of detail to make it accessible for the students without overwhelming them with too much information (Golich, 2000). Finally, a well-written case study is engaging, interactive, and makes students think critically, which can be challenging for professors to achieve as they need to keep in mind the students’ level of understanding, interests, and learning styles (Herreid, 2011).

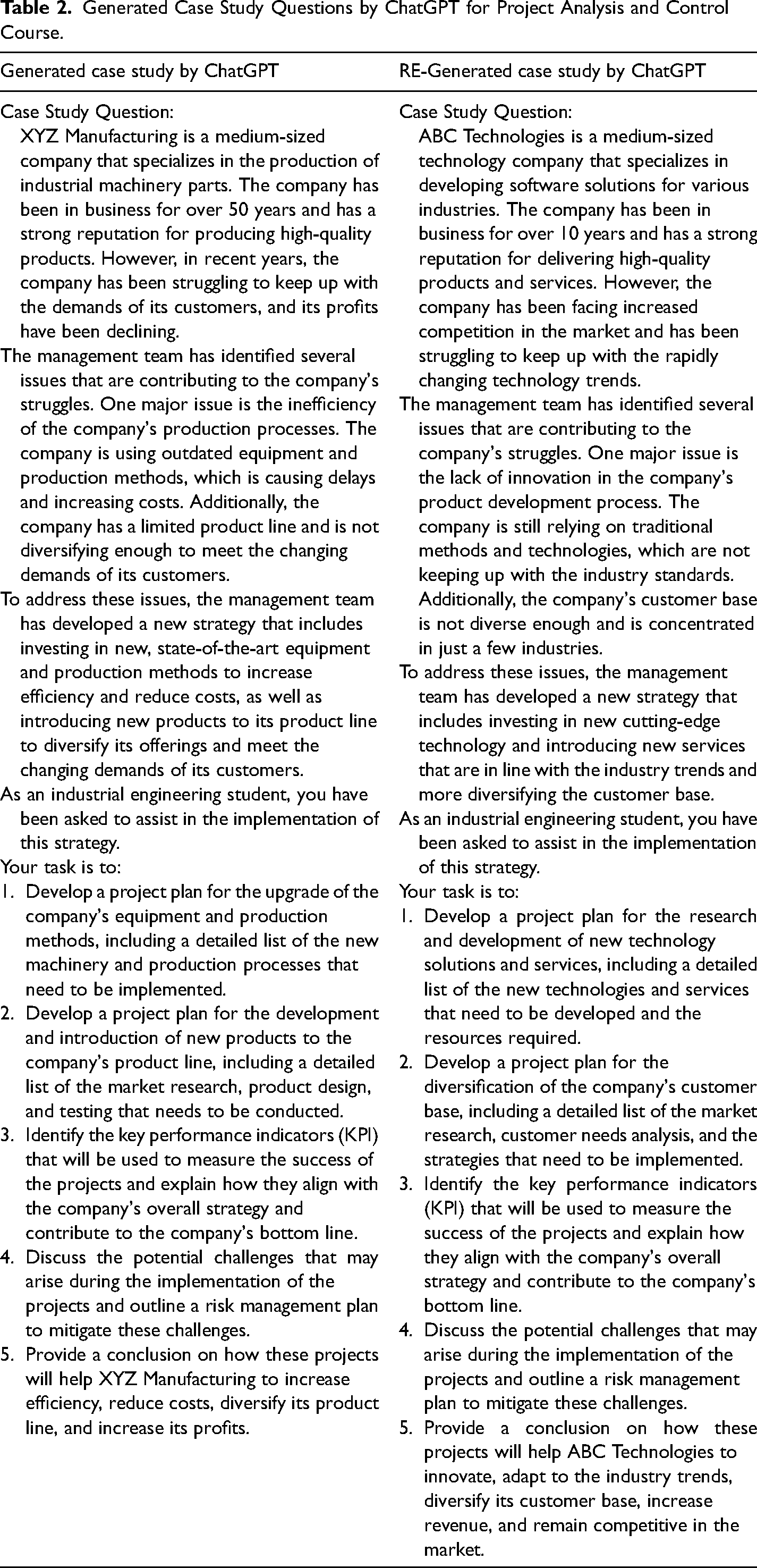

To assess the capacity of ChatGPT in generating case study scenarios, we provided the following commands to ChatGPT, on the same topic of organization strategy and project selection (Table 2): “Generate a

“Generate another case study in the same topic”

Table 2. Generated Case Study Questions by ChatGPT for Project Analysis and Control Course.

The generated case studies provide detailed and appropriate scenarios to be used as an assessment for IE students because they present a realistic scenario, which is one of the four key components of effective case design (Grimes, 2019; Nilson, 2016). Each case study generated by ChatGPT requires a solution for a company that faces challenges and needs to implement new strategies to improve performance. Each scenario provides a clear understanding of the company's background, current issues, and the proposed solution, which is investing in new equipment and production methods, and introducing new products to diversify their offerings.

According to Nilson (2016), a case should also require students to draw on their prior knowledge, preferably from course content that they are familiar with. The tasks given to the students in the generated case studies are relevant to their field of study and align with the overall strategy of the company. The students are tasked with developing project plans, identifying key performance indicators, and discussing potential challenges and a risk management plan. These tasks require the students to apply the theoretical concepts they have learned in the classroom to a real-world scenario, which is an effective way to evaluate their understanding and ability to apply their knowledge. The third key component specified by Nilson (2016) is that a case should have some level of ambiguity, allowing students to develop their own unique problem-solving approaches and solutions. If a case lacks a unique process or outcome, students may become disinterested in the task. Finally, a good case should generate a sense of urgency in students. Although students are aware that the case is not real, creating a time-sensitive and/or serious scenario is more likely to capture their attention and elicit an engaged response. The case study generated by ChatGPT provides a clear understanding of how the proposed projects will help the company increase efficiency, reduce costs, diversify its product line, and increase its profits.

Although this case study offers a relevant and engaging scenario for teaching and learning IE principles, it would be beneficial to include more information about the company's internal structure and culture, as well as the budget and timeline for the project to make this case scenario more effective. It is important for students to understand how the company's organizational structure, policies, and culture can affect the success of the project. Without this information, it may be difficult for students to develop a comprehensive risk management plan that addresses potential challenges that may arise during the implementation of the project. Another limitation of the case study is that it does not provide enough information about the budget and timeline for the project. While it is understandable that this information may not be readily available, it is important for students to understand how budget constraints and timeline considerations can affect the project's success.

Several creative writing elements that can enhance the content of a case study were outlined by Atkinson (2008). These include the setting, which refers to the time and place where the case takes place. Additionally, the plot, or the sequence of events that make up the case, can help to engage the reader. Characters are also important, as they can bring the case to life and make it more relatable. Conflict is another key element, as it creates tension and interest in the case. Finally, a fitting conclusion is essential, as it helps to wrap up the case and provide closure to the reader. The case study generated by ChatGPT does not appear to incorporate all of these creative writing elements. Specifically, the setting, plot, characters, conflict, and a fitting conclusion were not mentioned in the case study. This could potentially limit the reader's engagement and understanding of the case, as these elements can help to bring the case to life and make it more relatable. Therefore, it may be beneficial to incorporate these elements into future case studies to improve their effectiveness.

Designing Mathematical Word Problems Using ChatGPT

To create mathematical word problems, we selected another core course in the IE curriculum: Manufacturing Processes. This course focuses on fundamental and applied sciences in the processing of materials, specifically, the effects of processing on the manufactured parts, selection of processing methods, and their relationship with material properties. Contemporary and non-traditional processes used in manufacturing are also covered.

Mathematical problems in manufacturing processes provide students with the opportunity to apply mathematical concepts to real-world situations, helping them understand how mathematical concepts are used to analyze and optimize manufacturing systems. These problems also aid in developing problem-solving and critical thinking skills (Große, 2015), which can be beneficial for students pursuing careers in manufacturing as it allows them to gain a more holistic understanding of the field.

Creating mathematical problems for a course can be challenging for instructors (Daroczy et al., 2015). This is because manufacturing processes are continually changing, requiring instructors to stay current with the latest developments and trends to create relevant problems. Additionally, instructors must create a variety of problems that suit different levels of understanding and challenge students to think critically (Christou et al., 2005). As a result, many instructors may choose to avoid creating mathematical problems, or may not be able to create effective problems (Grigg, 2012). This can lead them to use publishers’ ready-to-use problems, which might increase cheating due to the availability of these questions online.

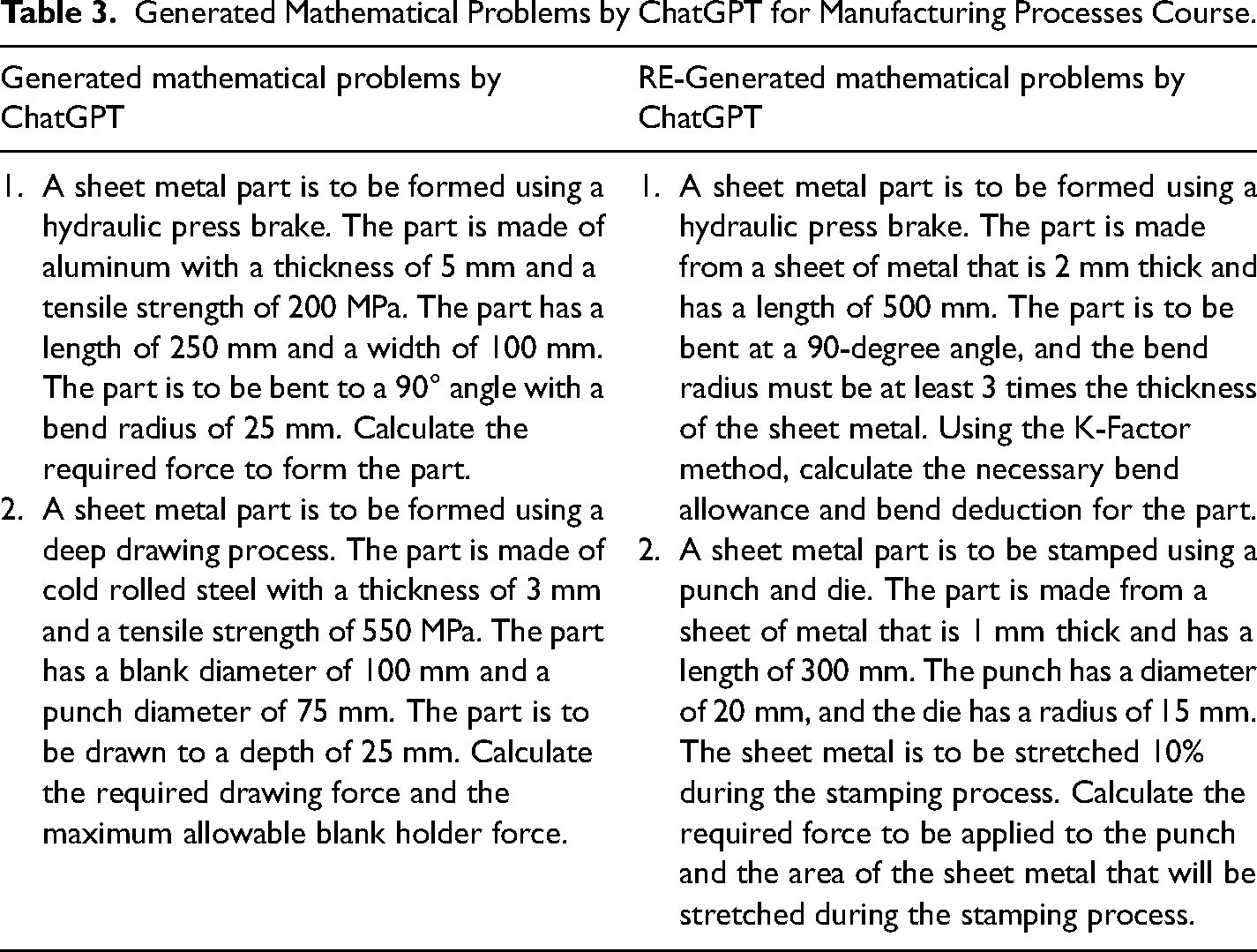

To assess the capacity of ChatGPT to generate mathematical word problems on the sheet metal topic that are suitable for undergraduate students, we provided ChatGPT with the following commands. Table 3 shows the generated and re-generated mathematical problems. “Generate two

“Generate another set of two mathematical questions in the same topic”

Table 3. Generated Mathematical Problems by ChatGPT for Manufacturing Processes Course.

These problems are an effective way to evaluate students’ understanding of sheet metal forming using a hydraulic press brake. The first problem is a good problem to assess a student's knowledge on sheet metal working because it requires the student to apply their understanding of the material properties and dimensions of the sheet metal, as well as the process of forming the metal using a hydraulic press brake. The problem also requires the student to use formulas and calculations to determine the required force, which tests their ability to apply their knowledge to a practical situation. Additionally, the problem has clear and specific parameters, which makes it easy to evaluate the student's solution and determine their level of understanding. The second problem presents a scenario for a sheet metal part that is to be formed using a deep drawing process and challenges students to apply their knowledge of material properties, geometry, and mechanics to calculate the necessary force to form the part. It provides specific information about the material properties and dimensions of the part, which is good as it makes the problem more realistic.
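
When reviewing a generated bending problem of this kind, an instructor can check the expected answer against a standard textbook approximation for maximum bending force, F = K_bf × TS × w × t² / D, where TS is the tensile strength of the sheet, w the width of the part along the bend axis, t the sheet thickness, and D the die opening. The sketch below assumes that approximation and the commonly quoted constant K_bf = 1.33 for V-bending; the function name and the numerical values are hypothetical and are not taken from the problems in Table 3.

```python
def bending_force(tensile_strength_mpa, width_mm, thickness_mm,
                  die_opening_mm, k_bf=1.33):
    """Estimate the maximum bending force in newtons.

    Uses F = k_bf * TS * w * t**2 / D, with TS in MPa (N/mm^2) and lengths in mm.
    k_bf = 1.33 is the constant commonly quoted for V-bending (assumption).
    """
    if die_opening_mm <= 0:
        raise ValueError("die opening must be positive")
    return k_bf * tensile_strength_mpa * width_mm * thickness_mm ** 2 / die_opening_mm


# Hypothetical values, for illustration only.
force_n = bending_force(tensile_strength_mpa=400, width_mm=50,
                        thickness_mm=2, die_opening_mm=20)
print(f"{force_n:.0f} N")
```

Recomputing the answer independently in this way is one practical form of the accuracy review recommended for ChatGPT-generated material.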

Both problems seem appropriate for a manufacturing or engineering class, as they require knowledge of material properties, manufacturing processes, and force calculations. However, the problems lack realistic or practical context, which may make them less engaging or relevant to students. Research suggests that using realistic scenarios in mathematics problems can enhance student interest, promote a positive attitude towards mathematics, and enable students to apply their real-life experience to mathematical problem solving (Boaler, 2008). To improve the problems, it would be helpful to provide more context or background information, such as a real-world application or scenario in which the sheet metal parts are used. This would make the problems more interesting and relevant to students and help them see the practical application of the concepts they are learning.

Additionally, it would be beneficial to include more open-ended or creative elements to the problems (Woods et al., 1997). For example, students could be asked to design a product or part that requires the use of sheet metal and then calculate the necessary forces and dimensions. This would encourage students to use their creativity and problem-solving skills (Lampert, 2001), which are important in engineering and manufacturing fields (Mourtos et al., 2004).

Designing Linguistic Data Analysis Tasks Using ChatGPT

Introductory linguistics courses are fundamental parts of the curriculum in both undergraduate- and graduate-level applied linguistics and TESOL programs (Garshick, 1998). These courses help students understand how languages work and introduce them to sub-fields of linguistics such as phonology (sound system of languages), morphology (rules of word structure and word formation), syntax (rules of sentence and phrase structure), semantics (word meaning in language), and pragmatics (appropriate language use in social contexts). Also, these courses typically provide an introduction to applied linguistics fields such as sociolinguistics, psycholinguistics, neurolinguistics, and language acquisition.

Typical tasks in an Introduction to Linguistics course focus on assessing students’ understanding of linguistic principles through the analysis of linguistic data. For example, students might be given a list of plural words and asked to work out the pluralization rule of the language from the patterns in the data (morphology). Or, they may be given a conversation between two speakers and asked to analyze the conversation according to turn-taking rules (pragmatics). In order to create these analysis tasks, instructors need to obtain or have access to linguistic data, or create their own, which can be time-consuming and may result in data that lack authenticity. In addition, instructors need to make sure they do not violate confidentiality when using natural language data. For instance, instructors could use real emails that were sent to them instead of AI-generated ones, but this would be unethical. Therefore, linguistics instructors may find it useful to have a tool to generate linguistic data that reflect natural human language and conversation and can then be turned into an analysis task for students. Since ChatGPT is capable of generating texts in different genres (such as dialogs, interviews, argumentative essays, and emails), it may be a supportive tool for creating linguistic data analysis tasks.

To generate linguistic data, we first focused on one specific genre: emails. Writing appropriate emails in English, particularly to authority figures, is generally difficult for both domestic and international students in the US higher education context (Chen, 2006; Félix-Brasdefer, 2012). It sometimes requires pedagogical interventions (Nguyen, 2018). It is useful for students to study and analyze samples of emails not only to apply their knowledge of linguistics (pragmatics in this case), but also for their own understanding of politeness in academic and professional settings, which helps them achieve their goals in email communication.

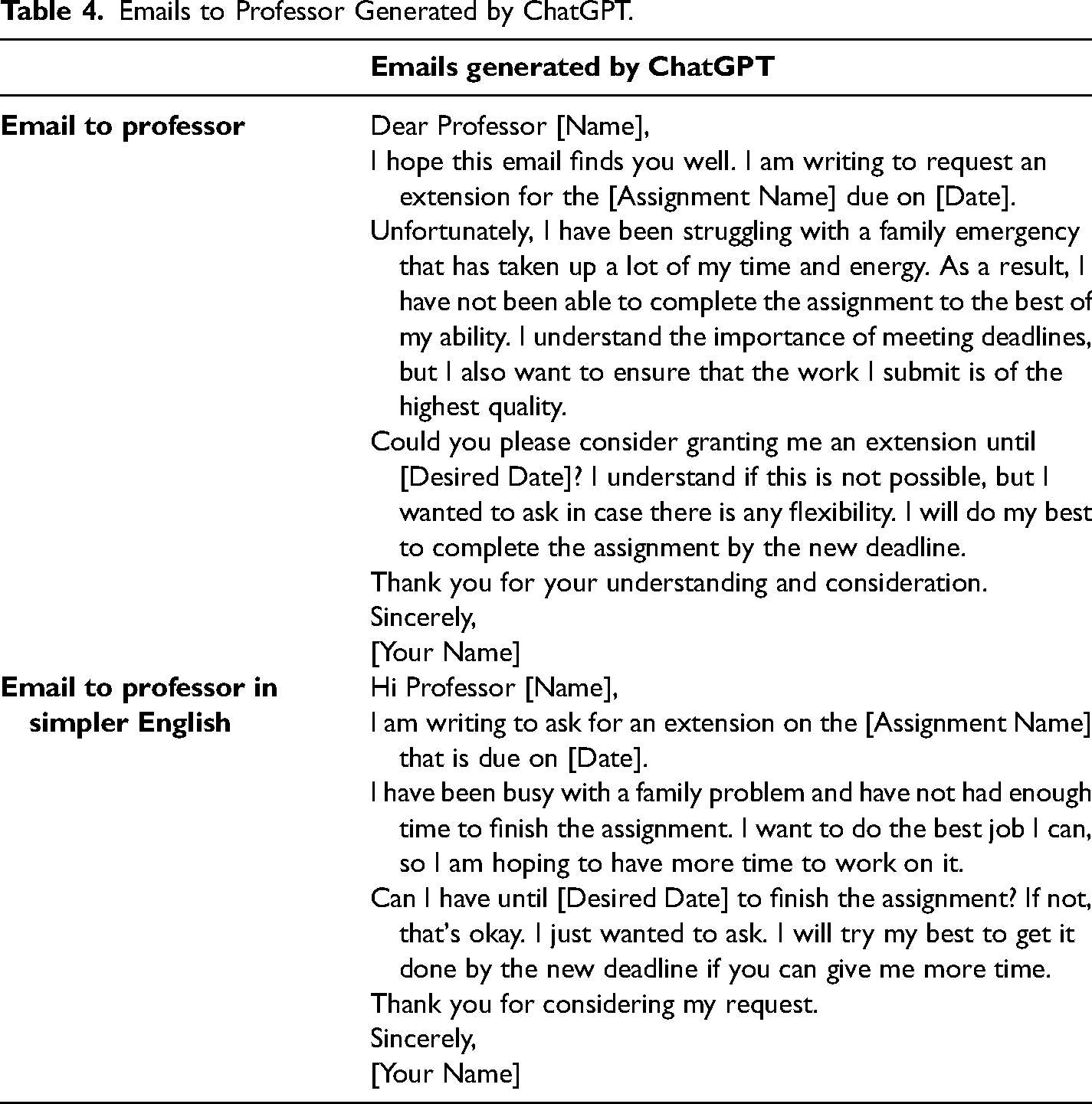

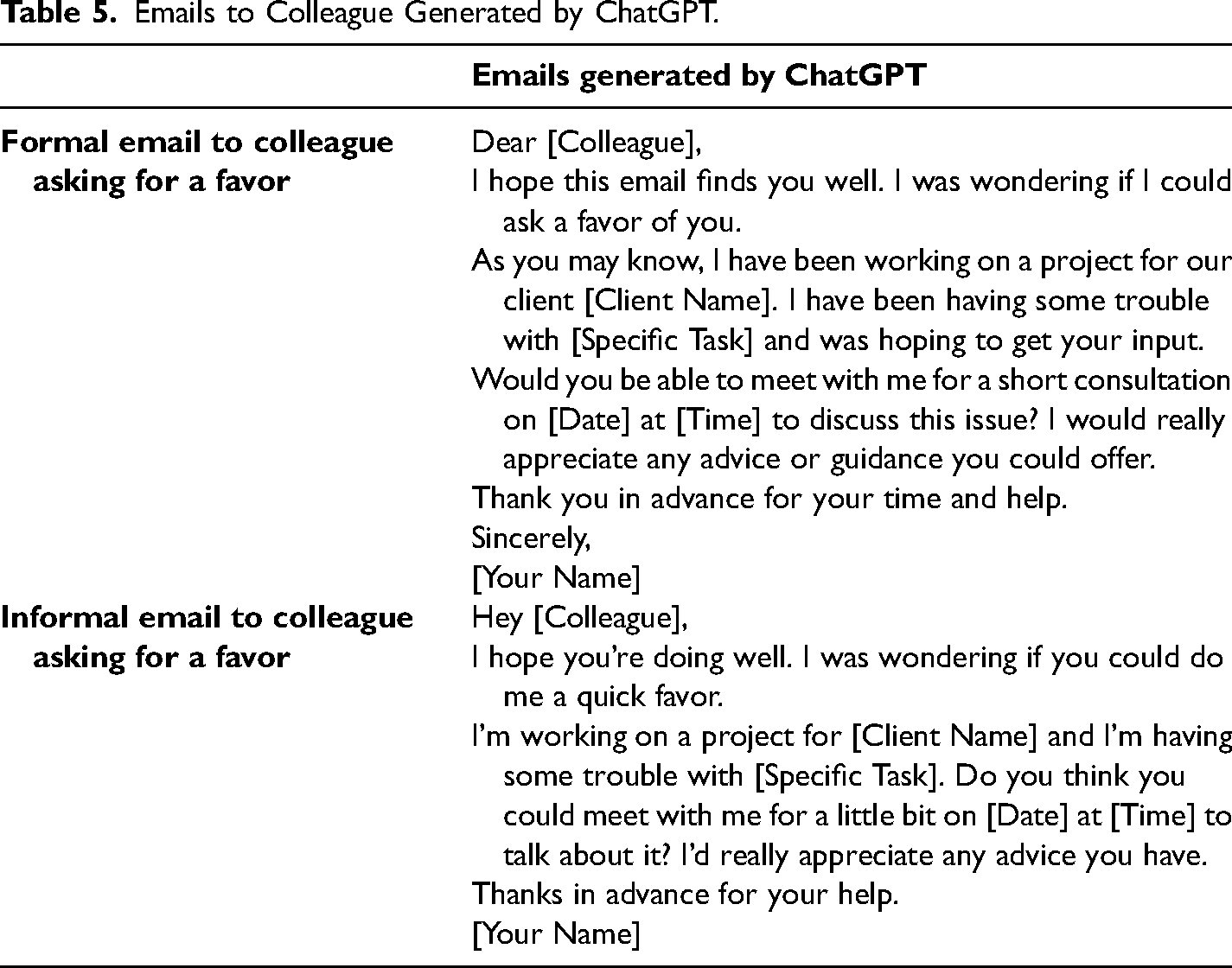

By giving the following commands to ChatGPT, we generated four emails written to request an extension from a professor and a favor from a colleague (see Tables 4 and 5).

“write an email to a professor asking for extension for an assignment”

“write this email with simpler English”

“write an email to a colleague asking for a favor”

“write this email in informal English”

Table 4. Emails to Professor Generated by ChatGPT.

Table 5. Emails to Colleague Generated by ChatGPT.

These emails are, in general, appropriately written: they follow the genre conventions of email writing and reflect the status and the symmetrical or asymmetrical relationship between the reader and the writer (i.e., student-to-professor, colleague-to-colleague). Each email includes appropriate opening and closing moves (“Dear” in formal ones and “Hi” and “Hey” in informal ones; “Sincerely”; “Thank you in advance”). In addition, all emails include, to some degree, politeness strategies expected in request speech acts (e.g., Biesenbach-Lucas, 2007; Hartford & Bardovi-Harlig, 1996). For instance, both emails written to the professor in Table 4 include lexico-syntactic politeness markers (e.g., “please,” “would you”), indirect requests (“Could you please consider granting me an extension…” and “Do you think you could meet with me…”), and mitigating moves such as explanations (“I have been struggling with a family emergency that has taken up a lot of my time and energy,” and “I am having some trouble with… and I was hoping to get your input”). Additionally, the emails written to the professor in Table 4 (indicating an asymmetrical status relationship), as opposed to those written to a colleague in Table 5 (indicating a symmetrical relationship), avoid imposition on the reader by saying “I completely understand if it is not possible” or “If not, it's okay.” The writer also uses indirect language to soften the request by saying “I am writing to request a letter of recommendation from you” rather than making a direct demand. The emails also follow the expected level of formality in English usage, as can be seen in Table 5, where Email 1 is written in more formal English than Email 2. For all these reasons, the emails generated by ChatGPT look similar to those that would be written by humans and can be utilized in teaching the genre of emails in US higher education settings.

Once an instructor obtains email data such as the examples above through ChatGPT, they can create a data analysis task to follow a discussion of politeness theory during a pragmatics unit in an introductory linguistics course. A similar activity could also be created for teaching ESL students in US higher education settings. Analysis questions that could be posed to linguistics students include:

Identify the terms of address as well as the opening and closing moves in the emails above.

Discuss in what ways these emails follow (or do not follow) politeness rules, according to politeness theory. Illustrate your analysis with specific evidence from the emails above.

In what ways may the writer's use of English affect whether or not the reader accepts the request? Provide specific evidence from the emails above.

In what ways may the relationship between the reader and the writer affect how the email is written? Provide specific evidence from the emails above.

Identify the differences between formal and informal emails.

Although the email linguistic data generated by ChatGPT appear to be very similar to human-generated emails, particularly those produced by native speakers of English in the US when requesting a favor/extension, we advise instructors to consider their learning objectives and the content they want to assess through these tasks to further evaluate the appropriateness of the linguistic data for their assessment objectives. The linguistic data generated by ChatGPT can be revised and changed by the instructors to best suit their needs.

Discussion and Further Suggestions

In this paper, we presented a few possible uses of ChatGPT in generating assessment tasks from three courses: Project Analysis and Control, Manufacturing Processes, and Introduction to Linguistics. We particularly focused on showing how some common, but time-consuming, tasks can be generated by using ChatGPT as a supportive instructional tool. The results of our examination of ChatGPT showed that it was able to generate assessment questions and tasks that were relevant and appropriate for a given topic. When tested on a variety of topics, ChatGPT demonstrated a high level of performance in generating questions that were accurate and on-topic, as long as the right prompts were provided. In addition, ChatGPT was able to generate questions that were novel and indicated a level of creativity and flexibility in its question generation capabilities. Although we shared examples of multiple-choice questions, case studies, mathematical problems, and linguistic data analysis tasks, ChatGPT is capable of generating other assessment tasks, such as fill-in-the-blank items or discussion prompts, and texts in genres other than emails, such as essays, paragraphs, conversations, and interviews. It is also worth mentioning that, although it was outside the scope of this paper, ChatGPT provides sample correct answers and answer keys for multiple-choice, case-study, and math problem tasks if the command given to ChatGPT specifies that the answers be given.

It should also be acknowledged that while ChatGPT can be a useful tool for generating assessment tasks and instructional materials, AI-based technologies are not designed to replace human expertise or judgment, especially not in education. As such, we advise that instructors carefully evaluate the reliability and accuracy of the assessments or information generated by ChatGPT and cross-reference its suggestions with other reliable sources to verify accuracy. Moreover, to improve the validity, reliability, and authenticity of the assessments, tasks generated by ChatGPT should always be customized by instructors considering their own purposes for the assessment, students’ readiness for and familiarity with the assessment task, and students’ language proficiency. Regardless of the purposes for using ChatGPT, it is important to remember that ChatGPT is a machine learning model, and its knowledge is based on the information that was available to it at the time of its training. There may be situations where ChatGPT's understanding of a topic is incomplete or out of date, and the resulting tasks may lead to misunderstanding, be inaccurate, or include flaws. We advise instructors to use their own expertise and judgment in using ChatGPT for assessment purposes, and to approach it as a supportive tool to enhance instruction rather than to replace it. Finally, it should also be acknowledged that years of teaching experience are likely to reduce the amount of time spent designing assessments using ChatGPT. The more experienced the instructor is in designing instructional materials and assessments, the less time-consuming it will be to generate assessment tasks using ChatGPT.

While our exploration provides valuable insights into the potential of ChatGPT for designing course assessment tasks, it is important to acknowledge the potential limitations. First, the effectiveness of the assessment questions and tasks generated by ChatGPT was not tested, as it was out of the scope of this paper. Therefore, it is not known how well these assessments perform in comparison to other types of assessment questions. Future studies could evaluate the effectiveness of the generated assessment tasks, based on data obtained from test-takers, to statistically assess their reliability and validity. In order to test these questions with students, we would advise that instructors first work with a small group of students to see how they respond to the questions and to identify any potential issues or areas for improvement. Next, they could analyze the results of the pilot test to determine if the questions are measuring what instructors intended them to measure (i.e., their validity) and if they are appropriate for the students’ level of understanding. Based on the feedback from the pilot test, instructors can revise the questions as necessary and conduct additional testing to ensure their effectiveness. Another strategy would be to use peer reviews by asking colleagues to review these tasks before they are implemented for classroom assessment purposes. By implementing these strategies, instructors can ensure the questions generated by ChatGPT effectively measure student learning and provide valuable insights for teaching and assessment.
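As an illustration of the pilot-test analysis suggested above, the following is a minimal sketch of a classical item analysis. It is not a procedure carried out in this paper. It assumes dichotomously scored responses (1 = correct, 0 = incorrect) and computes each item's difficulty (the proportion of students answering correctly) together with Cronbach's alpha as one common estimate of internal-consistency reliability. The function names and the pilot data are hypothetical and serve only as an example.

```python
from statistics import pvariance


def item_difficulty(responses):
    """Proportion of students who answered each item correctly (0/1 scoring)."""
    n_students = len(responses)
    n_items = len(responses[0])
    return [sum(row[i] for row in responses) / n_students for i in range(n_items)]


def cronbach_alpha(responses):
    """Cronbach's alpha: k/(k-1) * (1 - sum of item variances / variance of totals)."""
    k = len(responses[0])
    item_vars = [pvariance([row[i] for row in responses]) for i in range(k)]
    total_var = pvariance([sum(row) for row in responses])
    if total_var == 0:
        raise ValueError("total scores have no variance; alpha is undefined")
    return (k / (k - 1)) * (1 - sum(item_vars) / total_var)


# Hypothetical pilot data: rows are students, columns are items (1 = correct).
pilot = [
    [1, 1, 1, 0],
    [1, 1, 0, 0],
    [1, 0, 0, 0],
    [1, 1, 1, 1],
    [0, 0, 0, 0],
]

difficulty = item_difficulty(pilot)
alpha = cronbach_alpha(pilot)
print("Item difficulty:", difficulty)
print("Cronbach's alpha:", round(alpha, 3))
```

Items that nearly all or nearly no students answer correctly, or a low alpha, would be signals to revisit the generated questions before using them for classroom assessment. What counts as acceptable depends on the instructor's purposes, and a pilot group this small gives only a rough indication.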

In addition, it is crucial that future work consider the cultural and social implications of using ChatGPT-generated questions, and whether they would be accepted across different cultures and backgrounds. While ChatGPT may be a useful tool for developing assessment tasks in contexts where individual instructors are expected to design their own course exams and assessments (e.g., the North American context), it may not be as effective or relevant in other cultural or educational settings where assessments are prepared and dictated by a centralized office, such as the Ministry of Education. In such cases, the use of ChatGPT to design assessments may be limited or not applicable at all. Finally, we have not incorporated information on students’ perspectives on these tasks, as this is an area of ongoing research. These limitations suggest that further research and examination are needed to fully understand the potential of ChatGPT in higher education and how to use it in an ethical and effective manner.

Conclusion

In this paper, we explored the uses of ChatGPT for generating assessment tasks across various courses, highlighting its potential as a supportive instructional tool. Our examination demonstrated ChatGPT's ability to produce relevant and novel assessment questions, showcasing its creativity and flexibility. Although we presented examples of different assessment types, ChatGPT is capable of generating diverse tasks, including essays, paragraphs, and discussions. We emphasized that ChatGPT should complement, not replace, human expertise, encouraging instructors to customize assessments and cross-reference its suggestions with reliable sources. Furthermore, we acknowledged the need for instructors to evaluate ChatGPT-generated assessments for validity and accuracy. Despite providing valuable insights, we recognized the importance of testing the effectiveness of generated tasks through pilot tests and peer reviews. Additionally, we stressed the consideration of cultural and social implications when using ChatGPT-generated questions in diverse educational settings. While ChatGPT holds potential as an effective tool for educators, further research is essential to fully understand its capabilities and use it ethically and effectively in higher education. This paper aimed to outline ChatGPT's applicability in course assessments from a cross-disciplinary perspective, acknowledging its value in enhancing the assessment process and teaching environments.

Footnotes

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.