Abstract

Online course-packs are marketed as improving grades in introductory-level coursework, yet it is unknown whether these course-packs can effectively replace, as opposed to supplement, in-class instruction. This study compared learning outcomes for Introductory Psychology students in hybrid and traditional sections, with hybrid sections replacing 30% of in-class time with online homework using the MyPsychLab course-pack and Blackboard course management system. Data collected over two semesters (N = 730 students in six hybrid and nine traditional sections of ∼50 students) indicated equivalent final-grade averages and rates of class attrition. Although exam averages did not differ by class format, exam grades in hybrid sections decreased to a significantly greater extent over the course of the semester than in traditional sections. MyPsychLab homework grades in hybrid sections correlated with exam grades, but were relatively low (66.4%) due to incomplete work, suggesting that hybrid students may have engaged with course materials less than traditional students. Faculty who taught in both formats noted positive features of hybrid teaching, but preferred traditional classes, citing challenges in time management and student usage of instructional technology. Although hybrid students often reported difficulties or displeasure in working online, about half of them indicated interest in taking other hybrid classes.

Introduction

Forms of online education have expanded rapidly as digital technologies have become ubiquitous. As early as the 2000–2001 academic year, 90% of 2-year and 89% of 4-year public institutions offered online education (Tallent-Runnels et al., 2006), although what constitutes online education often varies across institutions and educational contexts, as elements of online and traditional instruction may be combined in a variety of ways (Moore, Dickson-Deane, & Galyen, 2011). The reasons for the increase in online education are both administrative (e.g., more available seats if physical classrooms are needed for less time) and pedagogical (e.g., students need competence in online technologies/communication for their future careers) (Poirier, 2010; Thor, 2010; Young, 2002). Internet-based course management systems (CMS), such as Blackboard, Moodle, or Canvas, and publisher-provided course-packs, such as MyPsychLab, WileyPLUS, or LaunchPad, have made it feasible to teach courses fully or partially online by providing virtual classroom spaces and ready-made online modules to accompany textbook chapters. Incorporating these digital tools into course design provides students with opportunities to develop technological skills (Gerard, Gerard, & Casile, 2009; Powers, Brooks, McCloskey, Sekerina, & Cohen, 2013). Whether this translates into more effective teaching and learning remains controversial, especially for introductory-level survey courses where students may lack adequate preparation to utilize the tools effectively (El Mansour & Mupinga, 2007).

Use of Publisher-provided Course-packs to Support Learning

Surveys of undergraduates in Introductory Psychology courses suggest that many students do not know how to study effectively (Gurung, 2005; Gurung, Weidert, & Jeske, 2010). Moreover, students' preferred study methods (i.e., re-reading notes and highlighting textbooks) are largely ineffective in fostering long-term retention of course material (Dunlosky, Rawson, Marsh, Nathan, & Willingham, 2013). Publisher-provided course-packs aim to improve student-learning outcomes through structured activities that supplement textbook readings, as hands-on interactive activities have been shown to aid online learning (Koedinger, Kim, Jia, McLaughlin, & Bier, 2015). These course-packs, consisting of multi-media resources (e.g., videos, case studies, experimental simulations, and quizzes) accompanied by an e-book, are aggressively marketed as proven to improve learning outcomes. For example, MyPsychLab (2016) claims that it delivers “consistent, measurable gains in student learning outcomes, retention, and subsequent course success.” Similar claims are made by publishers of other online course-packs, for example, WileyPLUS (2016) and LearningCurve (2016). Despite such publisher claims, no randomized controlled studies exist that systematically compare learning outcomes as a function of student access to one of these course-packs.

Nevertheless, publisher-provided course-packs appear to align with what researchers have identified as effective study habits involving self-testing, distributed practice, and interleaved practice (Brown, Roediger, & McDaniel, 2014). Self-testing promotes the retrieval and long-term retention of information to a greater extent than re-reading notes and highlighting textbooks—the latter methods increasing fluency in processing, but not retrieval, thus leading students to overestimate what they know (Karpicke, Butler, & Roediger, 2009). Indeed, in a study correlating study behaviors and exam scores, various methods of self-testing lagged just behind attending class as the most effective study behavior (Gurung et al., 2010). MyPsychLab heavily utilizes self-testing through quizzes; students may review questions they answered incorrectly by going back to the text then retaking the quiz.

Distributed practice, or spaced exposure to key concepts, has been shown to be more effective than massed practice for long-term retention of material (Cepeda, Pashler, Vul, Wixted, & Rohrer, 2006; Seabrook, Brown, & Solity, 2005). MyPsychLab encourages distributed practice through multiple, short assignments that can be completed over the course period. The MyPsychLab calendar function makes it easy for students to keep track of deadlines while working through assignments at their own pace. Interleaved practice, which involves working on multiple problem types within a single study session, has been shown to enhance retention (Rau, Aleven, & Rummel, 2013; Taylor & Rohrer, 2009). MyPsychLab encourages interleaved practice by providing varied learning activities for each chapter. As students complete homework assignments, they are exposed to information in multiple formats (e.g., interviews, case studies, and experiments) that may lead them to reflect on course topics and concepts in different ways.

Given these features, course-packs like MyPsychLab may help students to study smarter and thus outperform students in traditional classes. However, such benefits accrue only if students engage independently with the course-pack and are self-motivated to persist when confronted with technical issues or potentially challenging content. Despite the ubiquity of technology in their lives, undergraduates are often unfamiliar with course-packs and may require considerable technical support to succeed (Gerard et al., 2009; Wach, Broughton, & Powers, 2011). This results in additional work for instructors, who must include the task of teaching students to use course-packs in their course objectives. While publishers do provide technical support, students may rely on instructors rather than call-in centers to answer technical questions. For instructors, mastering course-pack features in order to troubleshoot student technical difficulties is time consuming and potentially frustrating (Cowie & Nichols, 2010).

More generally, successful engagement with an online course-pack may crucially depend on self-regulatory skills. Students need to motivate themselves to complete homework by setting goals, managing time, delaying gratification, inhibiting distraction, and self-reflecting on their performance (Bembenutty, 2009). Maintaining one's focus in the face of distraction may be especially challenging in digital environments, where studying is pitted against online forms of entertainment, such as social media, video games, and web surfing (e.g., Jacobsen & Forste, 2011; Levine, Waite, & Bowman, 2007). Students who believe in their capabilities, value homework as a task that enhances learning, and have high self-efficacy are more likely to be persistent in the face of distraction (Ramdass & Zimmerman, 2011). However, many college students lack prior background and interest in academic course material, and have obligations that compete for their attention. For these students, remaining motivated to complete weekly online homework might prove to be an arduous task.

Is Hybrid Instruction Effective?

With the increased adoption of online forms of instruction, the U.S. Department of Education, Office of Planning, Evaluation, and Policy Development (2010) conducted two meta-analyses to evaluate learning outcomes as a function of course format. The first compared fully online courses with traditional courses, and found negligible differences in learning outcomes (N = 27 studies; average effect size g = +0.05). The second compared web-enhanced courses (combining online and face-to-face instruction) with traditional courses and documented slightly better outcomes for web-enhanced courses (N = 23 studies; average effect size g = +0.35). However, as noted in the report, web-enhanced courses typically included additional learning time and instructional units; hence, the observed benefits were likely to stem from added instruction, as opposed to the advantages of online media. The increased workload associated with web-enhanced instruction is referred to as the “course and a half syndrome” (Gerard et al., 2009).
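For readers unfamiliar with the effect sizes quoted above, the following is a minimal sketch of how a standardized mean difference of this kind can be computed, assuming that g denotes Hedges' g with the usual small-sample correction. The function name and all input numbers are hypothetical and are not taken from the meta-analysis or from any study cited here.

```python
import math

def hedges_g(mean_a, sd_a, n_a, mean_b, sd_b, n_b):
    """Standardized mean difference (group A minus group B) with small-sample correction."""
    # Pooled variance across the two groups
    pooled_var = ((n_a - 1) * sd_a**2 + (n_b - 1) * sd_b**2) / (n_a + n_b - 2)
    d = (mean_a - mean_b) / math.sqrt(pooled_var)
    # Common approximation to the Hedges correction factor
    correction = 1 - 3 / (4 * (n_a + n_b) - 9)
    return d * correction

# Hypothetical illustration only: two groups of 50 with equal spread
g = hedges_g(mean_a=78.0, sd_a=10.0, n_a=50, mean_b=74.5, sd_b=10.0, n_b=50)
print(round(g, 2))
```

On this scale, a value near zero means the two formats are practically indistinguishable, while a value of about one third means the group means differ by roughly a third of a standard deviation.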

Few studies have directly compared learning outcomes for students in hybrid and traditional courses with equivalent numbers of instructional hours. Cottle and Glover (2011) compared learning outcomes for two hybrid sections of Human Development (each ∼50 students, total N = 99) with one larger traditional section (N = 110), and reported that hybrid students received higher final grades (M = 89.7%) than traditional students (M = 82.4%). Others have reported comparable grades across hybrid and traditional formats for Computer Networks and Communications (Delialioglu & Yildirim, 2007) and for Introductory Statistics (Utts, Sommer, Acredolo, Maher, & Mathews, 2003). In the latter study, hybrid students (N = 77) were more likely than their peers in a large traditional section (N = 208) to report that the course improved their computer/Internet skills; however, they also reported that the hybrid course was much more work than a traditional course, with less positive evaluations overall (Utts et al., 2003). Note that all of these studies compared classes of unequal size, with small hybrid sections compared to larger traditional sections. Given evidence that learning outcomes are better for students in smaller classes (Chapman & Ludlow, 2010), it is necessary to compare outcomes for hybrid and traditional sections of comparable size.

In comparing hybrid and traditional courses, no previous study has examined the effectiveness of publisher-provided course-packs, such as MyPsychLab, as a replacement for in-class instruction. However, despite the lack of any randomized controlled study, two correlational studies (within web-enhanced classrooms) claim benefits of MyPsychLab usage (Cramer, Ross, Orr, & Marcoccia, 2012; McKenzie et al., 2013). Each study documented improved exam performance with increased online engagement, and attributed benefits to study aids that allowed students to self-test for mastery of key concepts. However, an alternative interpretation is that better-prepared students were more likely to use MyPsychLab. Indeed, McKenzie and colleagues (2013) reported that only 39–53% of students logged into MyPsychLab for each of five units, which suggested a lack of motivation to use the course-pack.

The Current Study

Our study used a quasi-experimental design to compare learning outcomes in public university students taking Introductory Psychology (PSY100) in a hybrid or traditional format. Although students self-selected into course formats, students in hybrid and traditional sections had similar demographic characteristics and all sections were of comparable size: ∼50 students/section. Data were collected at the College of Staten Island (CSI), a four-year college offering associate and baccalaureate degrees to a diverse student body residing in the New York City metropolitan area and commuting to college.

To compare learning outcomes across hybrid and traditional formats, we used departmental exams for all sections, and examined the relationship between online homework and exam grades in hybrid sections. We surveyed instructors and students about the hybrid course, and their preferences in course formats for teaching and learning. Three hypotheses guided this research.

Despite the potential of MyPsychLab to enhance learning through individualized study and self-testing, we expected students to lack familiarity with instructional technology and motivation to work independently. Thus, we predicted that there would be no overall benefit of hybrid instruction relative to traditional instruction. We expected students to report challenges using instructional technology and a preference for traditional instruction. Given the required time for instructors to develop skills in using MyPsychLab and in supporting student usage, we expected instructors to show a preference for traditional instruction.

Method

We compared traditional PSY100 sections that met twice a week for a total of 165 minutes with hybrid sections that met once per week for 115 minutes, with the 30% reduction in time replaced with online modules. We used the textbook Psychology: From Inquiry to Understanding (Lilienfeld, Lynn, Namy, & Woolf, 2011), accompanied by MyPsychLab (www.mypsychlab.com). Hybrid sections and traditional sections had identical enrollment caps (50 students/section), and used the same in-class videos, demonstrations, activities, and exams. Due to time constraints, in-class lectures and discussions in hybrid sections were drastically reduced relative to traditional sections. Hybrid sections were introduced as part of a university-wide initiative to provide web-enhanced instruction, http://hybrid.commons.gc.cuny.edu/. Hybrid sections were listed in the schedule of classes as requiring online work, with students self-selecting into traditional and hybrid sections based on seat availability and convenience. The scheduling of hybrid and traditional sections was arranged by the Psychology Department based on classroom availability.
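The 30% figure follows directly from the weekly meeting times given above. A minimal sketch of the arithmetic, using only the two durations stated in the text (the variable names are illustrative):

```python
traditional_minutes = 165  # two meetings per week, total (from the text)
hybrid_minutes = 115       # one meeting per week (from the text)

# Weekly class time that hybrid sections replaced with online modules
minutes_replaced = traditional_minutes - hybrid_minutes
reduction = minutes_replaced / traditional_minutes

print(f"{minutes_replaced} minutes per week moved online ({reduction:.1%} of class time)")
```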

Participants

Description of Sample

The sample at enrollment comprised 730 students (291 hybrid and 439 traditional) in 15 sections ranging from 46 to 54 students. While students self-selected into course sections, surveys collected on the first day of class showed that 67% of hybrid students enrolled without awareness of the hybrid status of the course, even though course registration indicated online work. An additional 6% said they knew that they were enrolled in a hybrid course, but did not know what that was. This suggests that ∼73% of hybrid students were unaware of taking a course with online requirements, although they did know that their class met only once per week for approximately 2 hours. The reduced class time potentially attracted students with greater constraints on their time.

Exam grades, exam count, final grades, and attrition rates were gathered with departmental permission from final grade-books, with data de-identified to ensure student anonymity. The study was completed with institutional review board approval, classified as exempt.

Data Collection

Data were collected from hybrid sections and traditional sections taught in parallel and covering the same content. Each week, traditional sections had approximately one hour of class activities in the form of demonstrations, videos, and simulations and two hours of lecture and discussion, whereas hybrid sections had approximately one hour of class activities (identical to the traditional sections), one hour of lecture and discussion, and one hour of online homework. However, in addition to the 30% reduction in class time, one class period in each hybrid section was devoted to training students on MyPsychLab and Blackboard, with additional in-class time devoted weekly to answering questions about online work. Hybrid sections and traditional sections also differed in the format of the textbook: Hybrid students were required to purchase access to MyPsychLab, and were encouraged to use the e-book to minimize costs. Traditional students were instructed to purchase textbooks without MyPsychLab access. All students had access to Blackboard. Traditional sections used Blackboard only for posting grades.

Exam grades and exam count

Instructors reported raw exam scores for each of three departmental exams. These exams were non-cumulative, 25-question, multiple-choice tests, covering 3–4 chapters and administered to hybrid sections and traditional sections. Exam questions were selected from the Pearson test-bank by PSY100 coordinators and modified as needed to improve wording. Exam questions focused on key terms, experiments, and theories. We computed exam count as a dependent variable to provide an index of attendance on exam dates.

Final grades

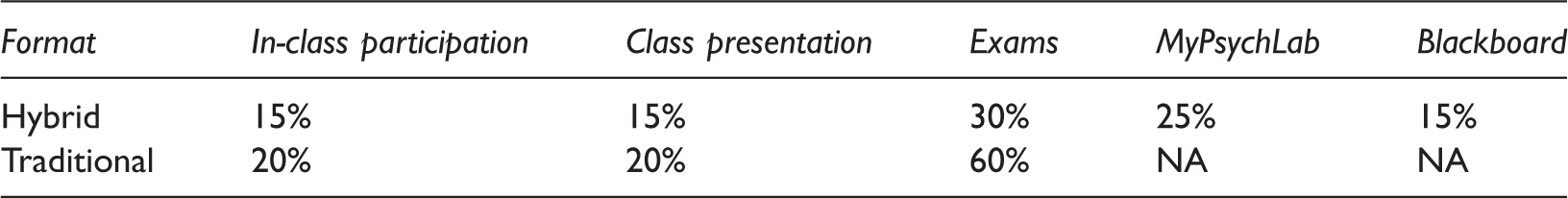

Final Grade Calculation for Hybrid and Traditional Sections

MyPsychLab homework

All hybrid sections were assigned the same online homework, which included 5-question, quick-review quizzes and 1–2 question quizzes based on multi-media activities (videos and simulations) on MyPsychLab, as well as discussion questions posted to Blackboard; see Appendix A. The quick-review quizzes and multi-media activities reviewed key concepts from the chapters, with immediate feedback provided after each quiz question. We attempted to equate time-on-task across formats by assigning hybrid students ∼45 minutes of videos and simulations and ∼15 minutes of quizzes and discussion questions to replace one hour of in-class instructional time per week.

MyPsychLab grades were collected from hybrid sections to examine the relationship between MyPsychLab homework and exam grades. MyPsychLab grades were based on the percentage correct of assigned work. Because MyPsychLab allows students to retake quizzes without penalty for prior incorrect answers, the percentage correct correlates highly with work completed. We did not use time logged into MyPsychLab as a measure of engagement because students could remain logged onto the site for hours while inactive, and login times included access to the e-text as well as homework.

Student surveys

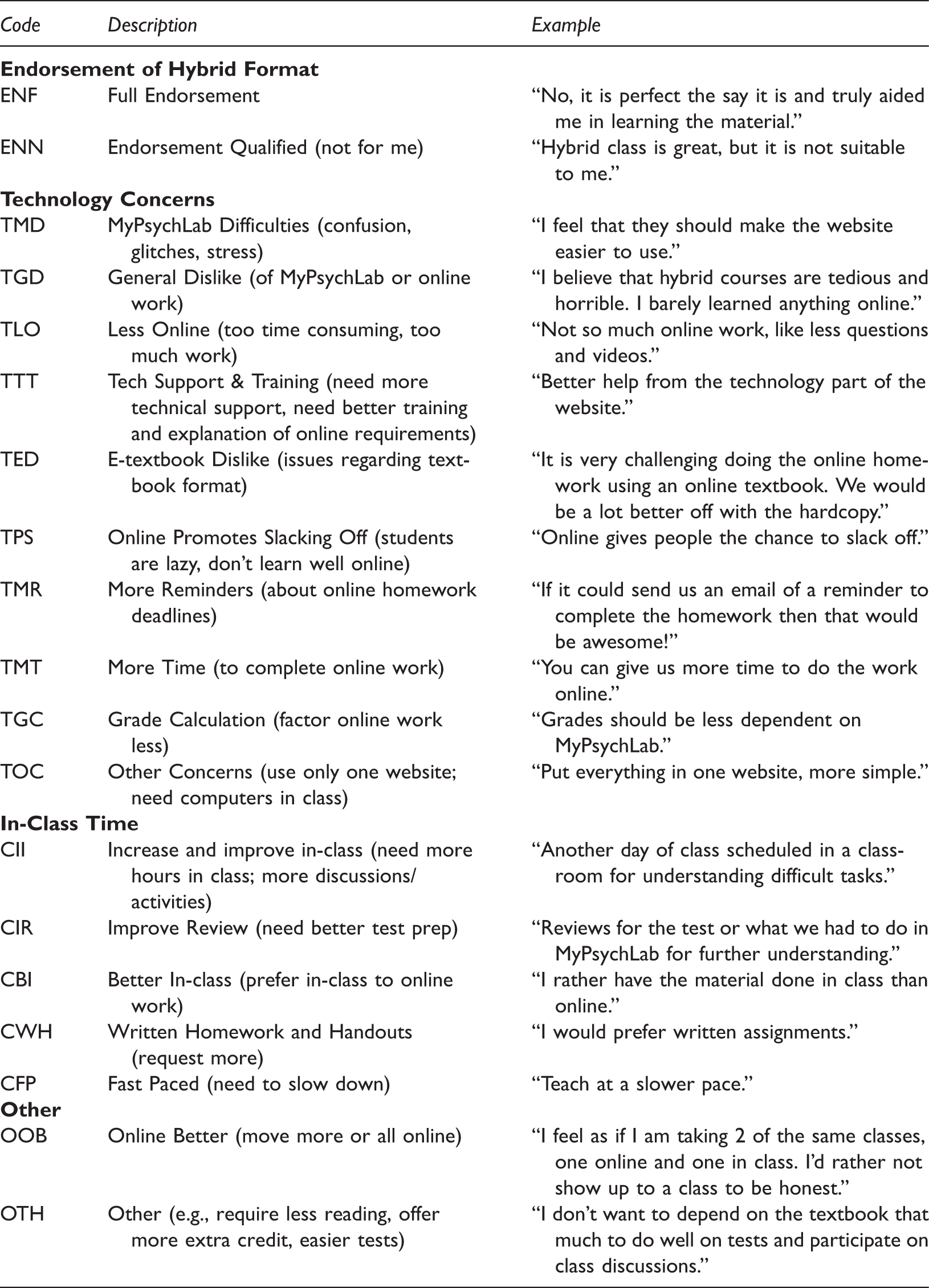

Coding Scheme for Survey 2 and Survey 3 Open-Ended Questions

Faculty questionnaire

Three instructors who taught in both formats were asked to list up to three things that were positive about the hybrid format, up to three things that were negative about it, and to indicate which format was preferred and why; see Appendix E. This survey was distributed at the end of data collection for the purpose of comparing their experiences across formats.

Results

Course Outcomes

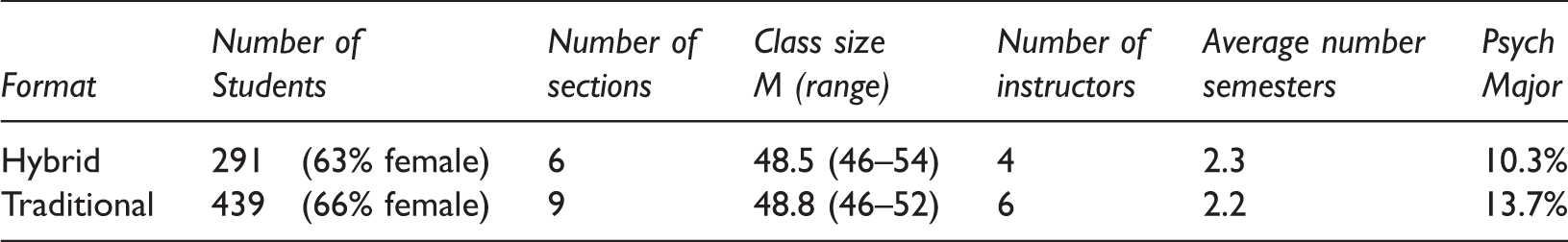

This study compared hybrid sections and traditional sections of Introductory Psychology. While there were more sections of the traditional format (nine compared to six) and thus more students in this format, the sections were statistically equivalent in the distribution of male/female students, class size, number of semesters of college attendance, and declared Psychology majors, all p-values ≥ 0.33 (see Table 1 for means).

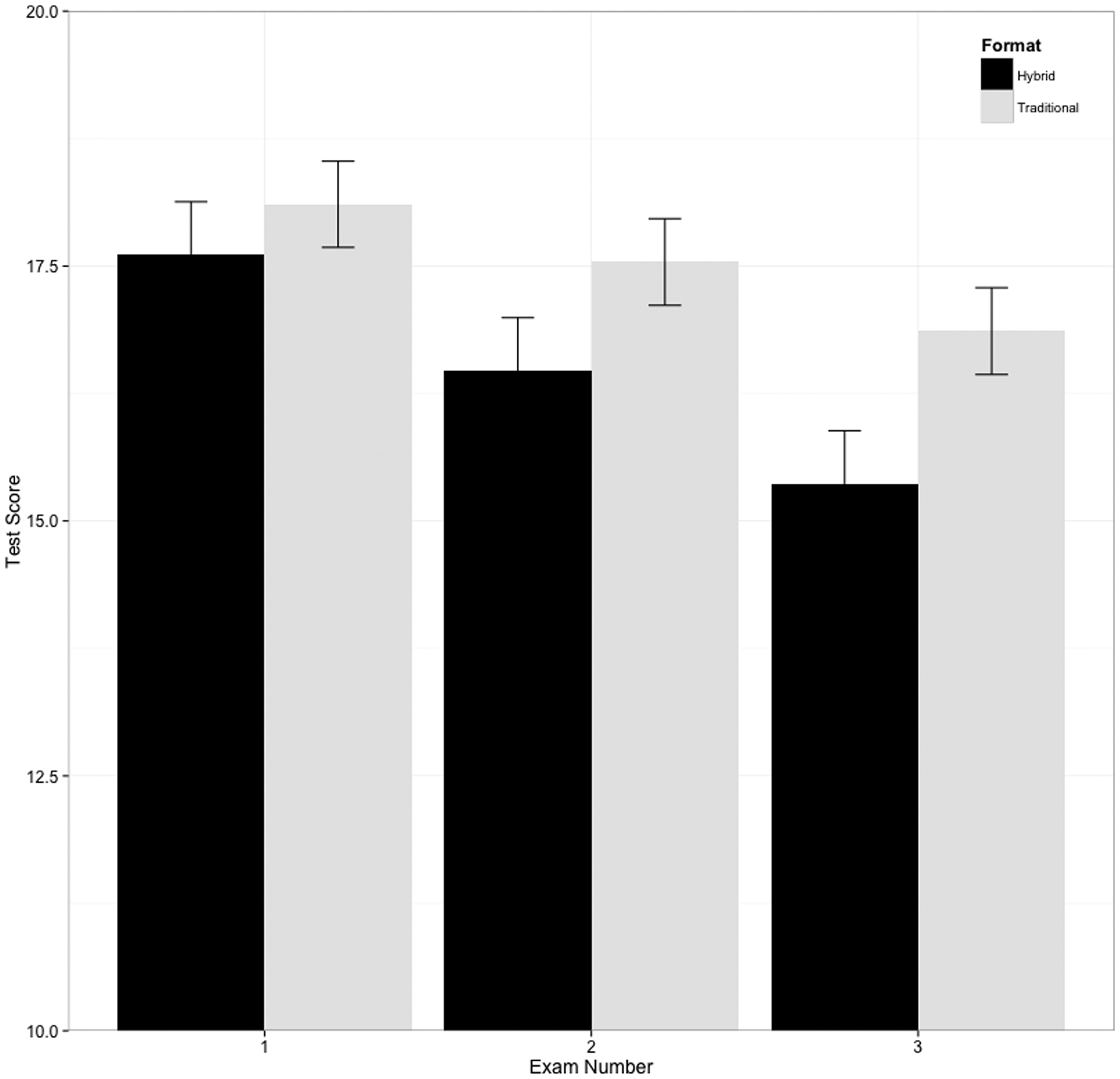

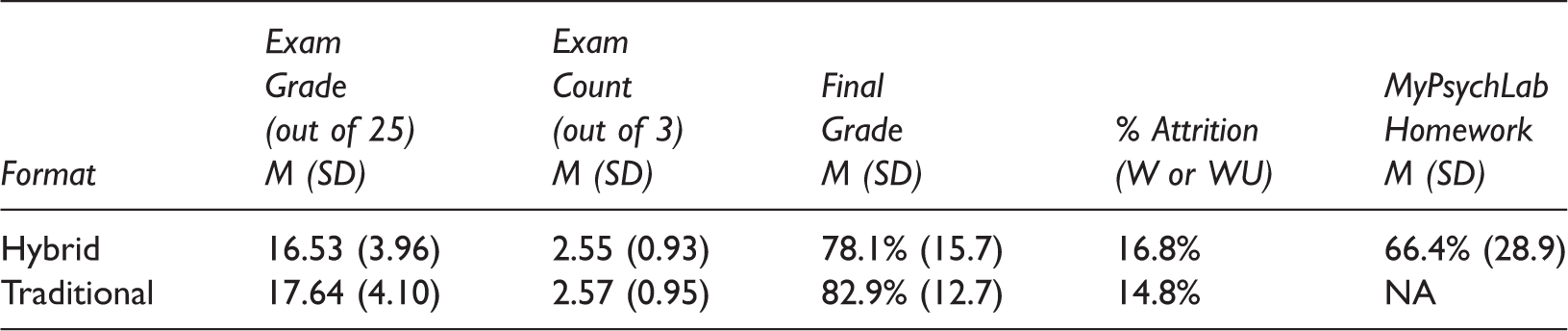

Table 4 shows descriptive statistics for outcome measures as a function of course format. Preliminary analyses, using multilevel Poisson regression with by-class random intercepts, revealed no significant difference between the two groups in number of exams (out of three) taken (B = 0.001, Z = 0.15, p = 0.88). To compare exam scores (number correct out of 25 questions) as a function of class format, a multilevel linear regression was fit using the lme4 package, version 1.1-7 (Bates, Maechler, Bolker, & Walker, 2014), in R, with participants nested within class sections and exams nested within participants. To distinguish the three exams, covering different topics and administered at different points in the semester, exam number was included in the model; note that any effect of exam number might reflect either different content or the time of the test. Course type and exam number were both sum coded. Random intercepts were included at both the participant and section level. While it is logically possible to include random slopes at the class section level, the small number of course sections (N = 15) precluded reliable estimation of these effects. Statistical inference was conducted using the lmerTest package (Kuznetsova, Brockhoff, & Christensen, 2014), with Satterthwaite approximated degrees of freedom. Results revealed a significant main effect of exam number, F(2, 1227.72) = 51.90, p < 0.001, a non-significant main effect for format, F(1, 13.03) = 2.53, p = 0.14, and a significant interaction between exam number and format, F(2, 1227.72) = 4.35, p = 0.01. As shown in Figure 1, successive exams were apparently more difficult, with hybrid students showing a steeper decline in performance over time than traditional students.
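The exam-score model described above can be written out as follows. This is a sketch of one specification consistent with the description (sum-coded format and exam number, their interaction, and random intercepts for section and participant); the notation, the indexing, and the choice of which levels receive which contrast codes are assumptions made for illustration and are not taken from the original analysis.

```latex
\begin{aligned}
y_{ijk} &= \beta_0 + \beta_1 F_k + \beta_2 E^{(1)}_{i} + \beta_3 E^{(2)}_{i}
         + \beta_4 F_k E^{(1)}_{i} + \beta_5 F_k E^{(2)}_{i}
         + s_k + p_{jk} + \varepsilon_{ijk}, \\
s_k &\sim \mathcal{N}(0, \sigma_s^2), \qquad
p_{jk} \sim \mathcal{N}(0, \sigma_p^2), \qquad
\varepsilon_{ijk} \sim \mathcal{N}(0, \sigma^2)
\end{aligned}
```

Here y_{ijk} is the number correct (out of 25) on exam i for participant j in section k, F_k is the sum-coded course format, E^{(1)}_i and E^{(2)}_i are the two sum-coded contrasts needed to represent three exams, s_k is the section-level random intercept, and p_{jk} is the participant-level random intercept. The interaction coefficients β_4 and β_5 carry the test of whether the change in scores across exams differs by format.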

Mean grades for each exam as a function of class format. Total number of questions per exam = 25.

Mean Class Averages as a Function of Format (N = 6 hybrid sections, 9 traditional sections)

To test for an effect of instructor on course outcomes, we fit an additional model with instructor as a dummy-coded factor variable. The factor for instructor was non-significant, F(7, 5.97) = 2.34, p = 0.16, while the main effect of exam number and the interaction between exam number and format remained significant, F(2, 1228.09) = 51.98, p < 0.001 and F(2, 1228.1) = 4.34, p = 0.01, respectively. However, a likelihood ratio test indicated that including instructor significantly improved model fit, χ2(7) = 19.749, p = 0.006. Given this ambiguous evidence of an instructor effect, an additional model was fit to students of the instructors who taught both traditional sections and hybrid sections. In this model, random effects were included by participant only, as there were very few sections in this sample (N = 6), and instructor was included as a dummy variable. As with the model reported earlier, this model revealed a significant main effect of exam number (F(2, 474.11) = 8.07, p < 0.001), and an interaction between exam number and format (F(2, 474.15) = 12.01, p < 0.001) with a non-significant effect of format (F(1, 256.03) = 2.33, p = 0.13).

To examine the interaction in the full sample, a post-hoc test of interactions was conducted using the phia package (De Rosario-Martinez, 2015). While exam scores decreased significantly from Exam 1 to Exam 3 in both hybrid and traditional classes, χ2(1) = 71.46, p < 0.001, and χ2(1) = 33.16, p < 0.001, respectively, the difference between Exam 1 and Exam 3 was larger for hybrid than for traditional students, χ2(1) = 8.64, p = 0.01. The phia package was also used to test for condition effects. Results revealed that differences between traditional and hybrid students at Exams 1 and 2 were non-significant, χ2(1) = 0.54, p = 0.46, and χ2(1) = 2.52, p = 0.22, respectively, while the difference at Exam 3 was marginally significant, χ2(1) = 4.95, p = 0.078.

To test whether the interaction could reflect differential attrition across groups, withdrawal rates were compared: 49 of 291 students (16.8%) in the hybrid classes withdrew before the end of the semester while 65 of 439 students (14.8%) in the traditional classes withdrew. A multilevel, logistic regression with by-class random intercepts revealed that this difference was non-significant (B = –0.21, Z = –0.616, p = 0.54). Students who did not withdraw from the class were compared in final grades. A multilevel linear regression with by-class random intercepts revealed no significant difference between the two groups in final grades (B = 4.9, t(13) = 1.93, p = 0.08).
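As a rough check on the withdrawal comparison, the raw counts above can be run through a simple pooled two-proportion z-test. This is a deliberately simplified sketch: it ignores the clustering of students within sections that the multilevel logistic regression accounts for, so its z and p values are not expected to match the reported ones exactly. The function name is illustrative.

```python
import math

def two_proportion_z(events_a, n_a, events_b, n_b):
    """Pooled two-proportion z-test; returns both rates, z, and a two-sided p-value."""
    p_a, p_b = events_a / n_a, events_b / n_b
    pooled = (events_a + events_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_a - p_b) / se
    # Two-sided p-value from the standard normal distribution
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return p_a, p_b, z, p_value

# Withdrawal counts reported in the text: hybrid 49 of 291, traditional 65 of 439
hybrid_rate, traditional_rate, z, p_value = two_proportion_z(49, 291, 65, 439)
print(f"hybrid {hybrid_rate:.1%}, traditional {traditional_rate:.1%}, z = {z:.2f}, p = {p_value:.2f}")
```

Even without modeling section-level variation, the unadjusted comparison points the same way as the reported analysis: the two withdrawal rates are too close to distinguish statistically.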

For hybrid students, the average score on MyPsychLab homework assignments was 66.4% (SD = 28.9). To examine whether MyPsychLab homework correlated with exam scores, an average exam score was calculated for each participant. A multilevel linear model revealed a significant, positive relationship between exam and MyPsychLab homework grades, B = 0.04, t(237.45) = 6.72, p < 0.001; r(240) = 0.40, p < 0.001.
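The reported correlation can be related to a t statistic through the standard conversion for a Pearson correlation. The sketch below uses only the r and degrees of freedom reported above; it is a simple (non-multilevel) calculation, so it is offered as a consistency check and not as a reproduction of the multilevel model.

```python
import math

r = 0.40   # reported correlation between homework and average exam grades
df = 240   # reported degrees of freedom

n = df + 2  # sample size implied by a Pearson correlation with df = n - 2
t = r * math.sqrt(df) / math.sqrt(1 - r**2)

print(n, round(t, 2))
```

The implied sample size of 242 equals the 291 hybrid students enrolled minus the 49 who withdrew, and the resulting t is close to the value obtained from the multilevel model.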

Surveys of Students in Hybrid Sections

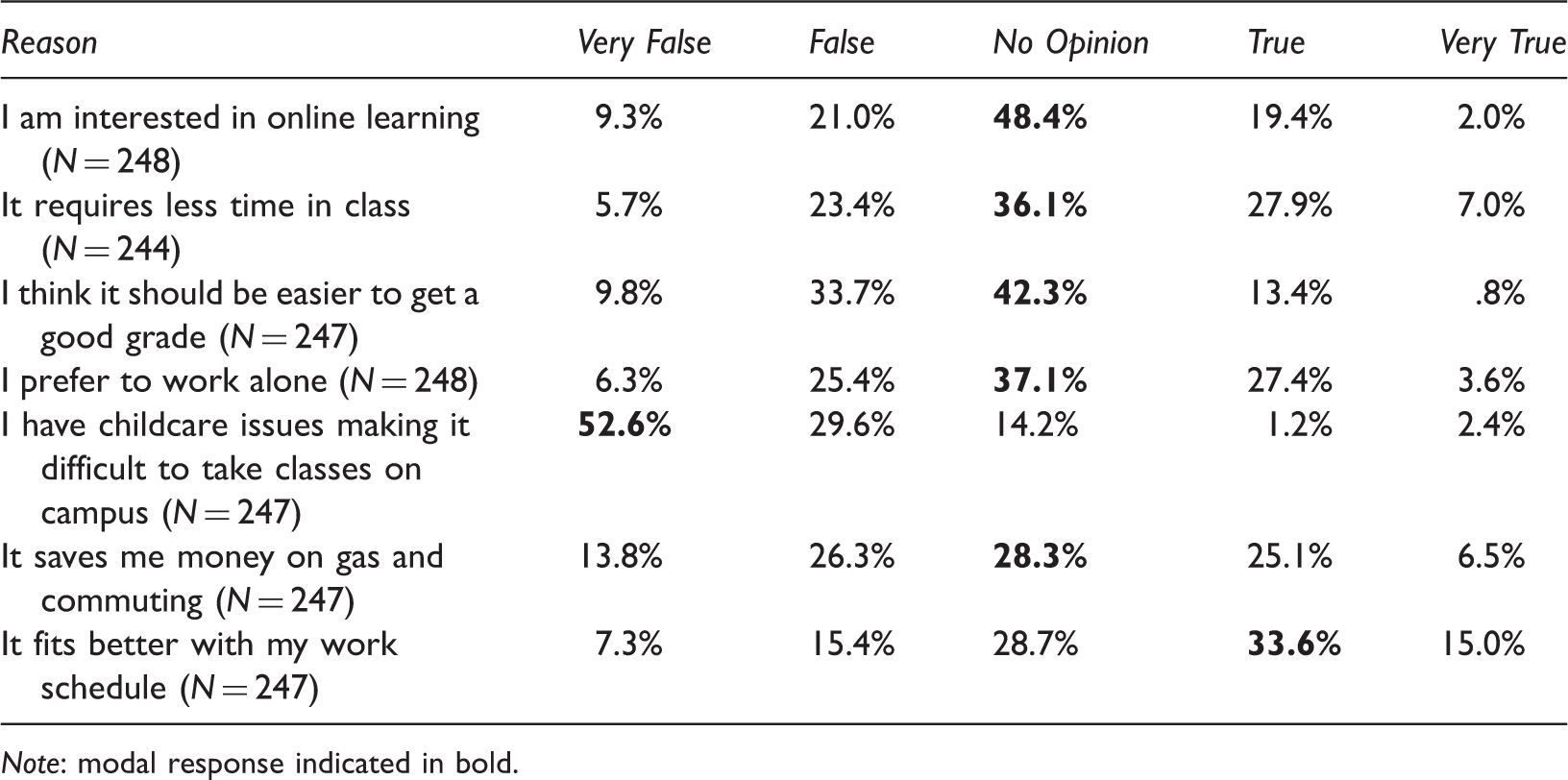

Survey 1

Survey 1 (Intake)—My main reason for taking a hybrid class is that:

Note: modal response indicated in bold.

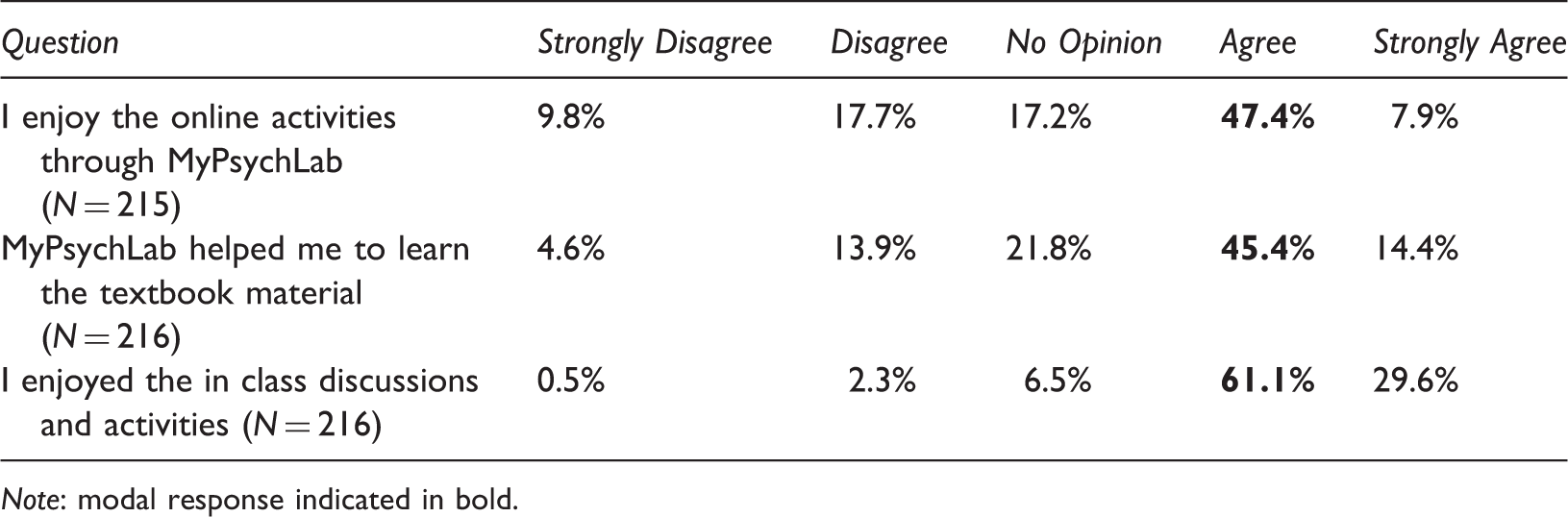

Survey 2

Survey 2 (Midterm)

Note: modal response indicated in bold.

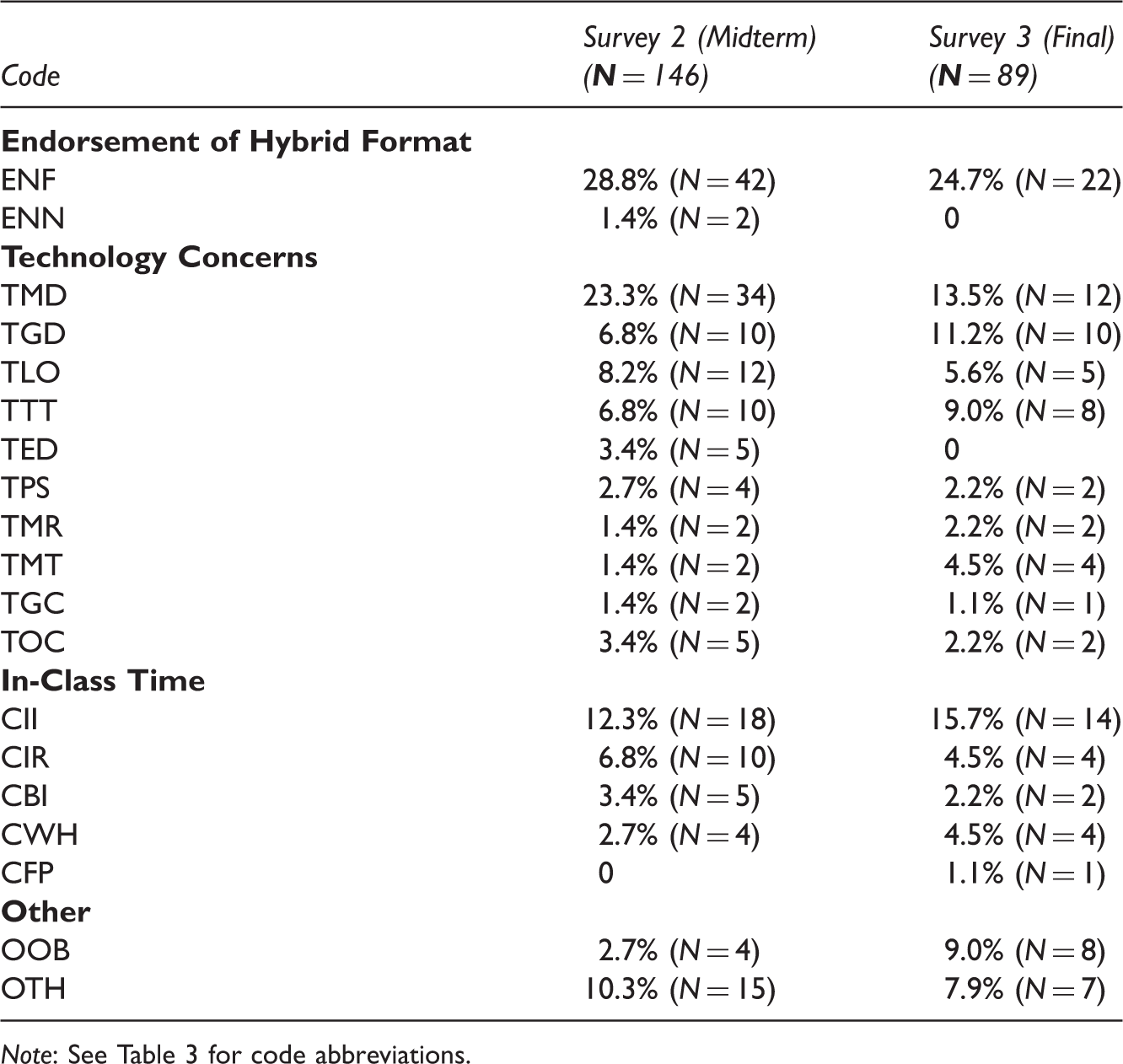

Qualitative Responses to Open-Ended Questions of Surveys 2 and 3 (percentages for each column exceed 100% due to multiple codes per response)

Note: See Table 3 for code abbreviations.

Survey 3

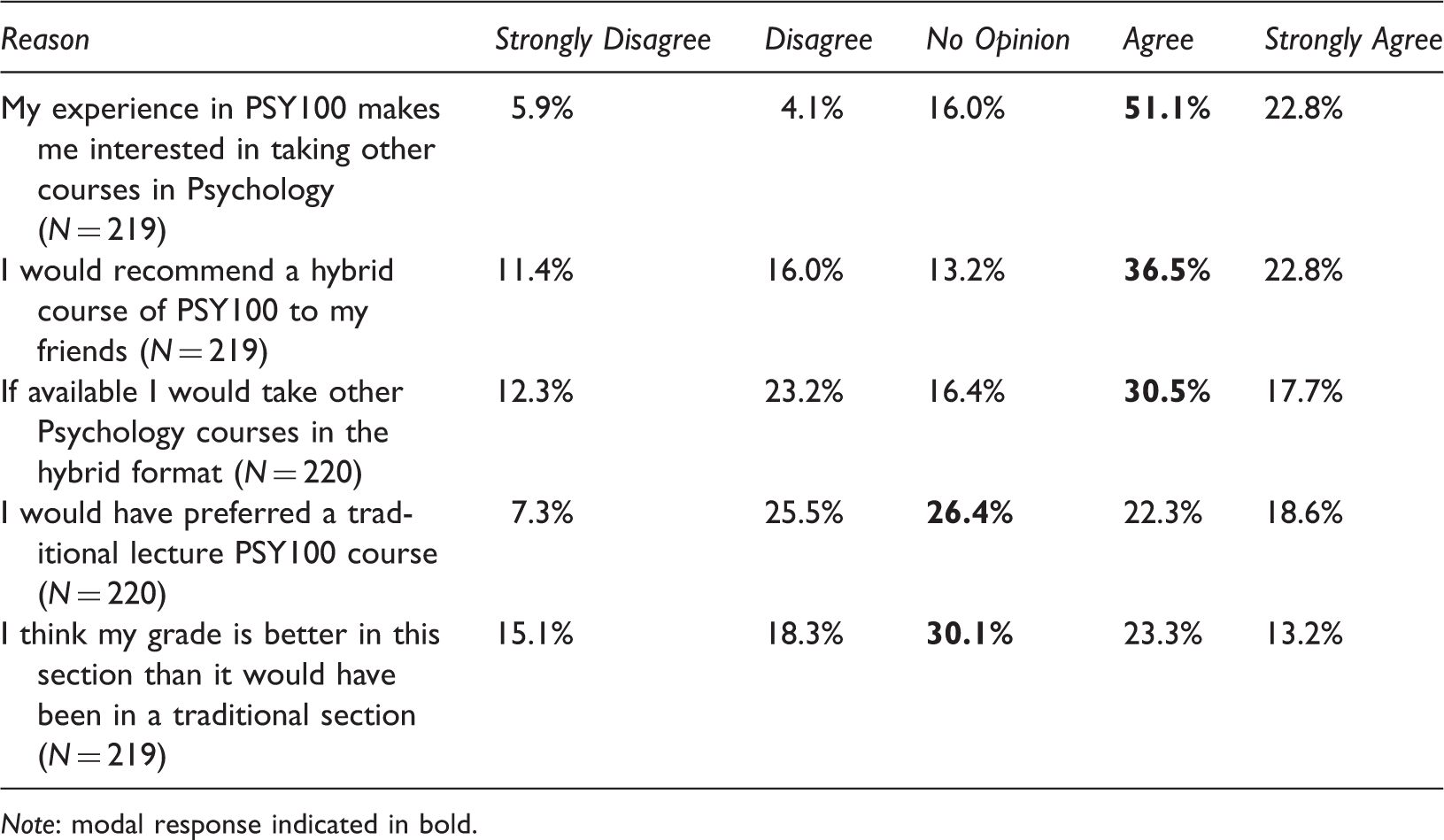

Survey 3 (Exit)—Overall experience

Note: modal response indicated in bold.

At the end-of-semester survey, students provided critical feedback through the open-ended question “What can we do to improve the course in the future?” Student feedback reflected the same themes as expressed at midterm. Of the 89 students who answered the open-ended question (i.e., 40% of those who completed Survey 3), 25% (N = 22) provided positive feedback, whereas 75% (N = 67) made one or more recommendations for improvement; see Table 7. Student recommendations fell under the broad categories of “Technology”, “In-Class Time”, and “Other”, with inter-rater percentage agreement of 87%.

Instructor Perceptions of Hybrid and Traditional Classes

The three instructors who taught in both formats were asked to provide up to three positive and three negative statements about the hybrid course format; see Appendix E for questionnaire. Although all three instructors mentioned positive features of the hybrid course, they unanimously expressed a preference for traditional sections. Positive comments acknowledged the flexibility of the format, as in “The hybrid course offers students an opportunity to work around their own schedules by limiting the amount of time they have to be in class.” Online homework was viewed as a means of exposing students to course materials prior to class and encouraging independent learning, as reflected in comments like “The hybrid format better encouraged students to activate their critical thinking skills by engaging with the material on their own rather than strictly relying on the traditional professor-as-expert” and “Students instantly received feedback after taking (online) tests and were able to check for content mastery or go back to the assigned chapter readings to self-correct their answers.”

Negative comments highlighted recurrent problems with instructional technology, homework completion, and time management, as in “Some students experienced difficulty setting up the online portion and continued to have difficulties throughout the semester”, “I experienced an increase in student excuses for why an assignment had not been completed on time”, and “I sometimes felt overloaded with managing both environments. I had to make sure the technical problems were resolved, assign and correct online assignments, constantly remind students to complete their work on time, read and bring the online responses and discussions into the classroom. Back in the classroom, I had to pay close attention to class-time management.”

Discussion

Given the growing popularity of hybrid teaching and the aggressive marketing of publisher-provided online course-packs as learning tools, we compared students in 15 sections of Introductory Psychology (six hybrid and nine traditional) using exam averages, final grades, and attrition rates as measures of learning outcomes. Although the self-selection of students into sections created a quasi-experimental design, we were able to compare students' performance on departmental exams in course sections of comparable size, taught in the same semesters, and covering the same content, but differing in the format of 30% of instructional time. Overall, our results failed to support publisher claims that course-packs improve learning outcomes (e.g., Cramer, 2013; Hudson et al., 2014), as there was no evidence of superior performance for hybrid students utilizing MyPsychLab in comparison to students receiving traditional instruction.

Additionally, we observed a significantly larger decrease in exam grades over the course of the semester for students in the hybrid sections relative to their peers in the traditional sections. The worsening exam performance over the course of the semester might be due to progressively more difficult course material in Exams 2 and 3 and/or to student burnout or difficulties in keeping up with their coursework over time (cf. Alarcon, Edwards, & Menke, 2011). The greater drop in exam grades in the hybrid sections suggests that hybrid students may have struggled to learn more difficult concepts independently through MyPsychLab, and may have benefited from more explicit teaching (Alfieri, Brooks, Aldrich, & Tenenbaum, 2011). On average, hybrid students completed only 66% of their required MyPsychLab homework, which suggests that they may have spent less time engaged with the course materials than their peers in the traditional sections. Students may have procrastinated in completing MyPsychLab assignments, which resulted in their skipping assignments, underscoring the need for instructors to motivate students to work online (cf. Osborne, Kriese, Tobey, & Johnson, 2009; Pan, Gunter, Sivo, & Cornell, 2005). Given that MyPsychLab homework was a course requirement and contributed substantially to final grade calculations, low homework grades suggest resistance to using online course-packs (see also Cramer et al., 2012; McKenzie et al., 2013; Vigentini, 2009).

In line with prior correlational studies, as well as studies demonstrating a link between the amount of time logged into a required CMS and student grades in hybrid courses (DeNeui & Dodge, 2006; Forte & Root, 2011), MyPsychLab grades correlated with exam grades. This correlation suggests benefits of self-testing for learning (cf. Roediger & Butler, 2011; Roediger & Karpicke, 2006, for reviews), but might also be interpreted as evidence that more capable students are more likely to complete assigned homework than their less capable peers. As suggested by prior research (Ramdass & Zimmerman, 2011), students with stronger self-regulation skills, motivation, and perceived responsibility for learning are more likely to complete homework assignments and be more effective in engaging in self-testing and assessment.

Overlapping Student and Faculty Concerns about Instructional Technology

Both instructors and students voiced concerns about the technology component of the hybrid course, specifically with respect to time management and frustration with technical difficulties. As many students had little-to-no grasp of the online course requirements at the outset of the course, they required considerable technical support and encouragement to succeed. Whereas students requested that more in-class time be devoted to online work, instructors felt that online support took time away from covering course topics. Students also suggested that more class time was needed for hands-on activities, discussions, and exam review—with some expressing a more general concern that instructors needed to teach at a slower pace. Slowing down, however, was not possible if the hybrid sections were to cover the same topics as the traditional sections.

Technical difficulties were exacerbated as MyPsychLab underwent revision over the course of the study: new features were added and the website was expanded and re-configured. Unfortunately, optimal web-browsers changed across MyPsychLab versions, and publisher representatives sometimes failed to have up-to-date information about compatibility. A frequent comment was that the videos failed to load, which might account for requests that fewer videos be used. As we have no comparative data, we simply do not know whether students would have fared any better with a different course-pack (e.g., WileyPLUS or LaunchPad). Furthermore, it should be emphasized that many students caught on quickly and apparently thrived, as indicated by end-of-semester comments like “The format of the PsychLab was a little confusing at first, but overall the class was great and I would definitely take another hybrid class.”

Matching Instructional Formats to Student Preferences

Given the range of student engagement with the online work and recurrent complaints about MyPsychLab, it would seem advantageous for students to self-select into course formats that match their interests in using instructional technology. Online coursework offers students the flexibility of completing their studies at their convenience, while aiding institutions in meeting increased enrollment demands, especially for courses at the introductory level (Powers et al., 2013; Tallent-Runnels et al., 2006). However, hybrid courses require personal responsibility and motivation to pursue learning independently; students with these qualities may like the flexibility of working online in combination with reduced in-class instruction. In contrast, students who work better in highly structured environments tend to prefer traditional courses (Jensen, 2011).

As indicated by the survey taken on the first day of class, the majority of students self-selected into hybrid sections without noticing the online requirements. Such lack of awareness, reflected in statements like “It's not the course that bothered me. I just didn't know what I was getting myself into when I joined this hybrid course”, precludes students' careful consideration of how varying course demands might fit their expectations. In this regard, several students commented that more information regarding the hybrid format was needed before registration. Results of surveys taken at midterm and at the end of the semester indicate that the hybrid format was not a good fit for a substantial number of students: 28% of hybrid students who took the survey at midterm disagreed with “I enjoy the online activities through MyPsychLab”, and 19% disagreed with “MyPsychLab helped me to learn the textbook material”. This level of satisfaction with MyPsychLab cannot be directly compared to other studies (e.g., Cramer et al., 2012; McKenzie et al., 2013), as none have required course-pack usage and rates of voluntary usage have been low. Our study appears to be the first to examine student satisfaction with a publisher-provided course-pack when its usage was required.

In addition to the varying online requirements, students in hybrid and traditional sections used different textbook formats, with hybrid students encouraged to purchase the e-text and traditional students encouraged to purchase paper copies. Although we did not collect data on student textbook usage, we are fairly certain that most hybrid students purchased e-texts. Notably, several students reported in the surveys that they disliked the e-text, which fits with other reports of student preferences for paper textbooks (Shepperd, Grace, & Koch, 2008; Woody, Daniel, & Baker, 2010). Students may require more time to read material in e-texts as compared to standard textbooks, perhaps due to tendencies to multi-task while engaged with digital media (Daniel & Woody, 2013). Given these findings and student comments that the e-text was confusing, hybrid instructors may need to teach reading skills specific to the use of e-texts, such as how to look up unfamiliar vocabulary and use links to access supplementary materials, as well as techniques for reducing multi-tasking to encourage focused attention when reading online.

Limitations

Our study had a number of limitations that should be addressed in future work. First, because students self-selected into course sections based on scheduling and seat availability, we cannot be certain that the students who enrolled in hybrid sections were fully comparable to their peers in traditional sections. While the majority of hybrid students seemed unaware they had enrolled in a section with online requirements, they did know that their class met only once per week for two hours. Given that the traditional sections met twice per week, the hybrid sections may have attracted students with greater constraints on their time due, for example, to employment, family obligations, or heavier course loads. Future research should include a broader range of questions, as well as a pre-test to assess prior knowledge of course material, to ascertain how student characteristics might influence the self-selection process for enrolling in hybrid versus traditional sections. Although the demographic information we collected did not distinguish the students as a function of instructional format, it is impossible to know whether other factors, such as students' self-regulation skills, study habits, motivation to learn, and overall grade point average, might have distinguished the groups.

Second, we acknowledge other instructional features besides the number of in-class hours that distinguished the two groups, including the final grade calculations and the textbook formats. The use of different formulae for computing final grades across formats—necessitated by the need to credit hybrid students for completing online homework—may have increased the perceived stakes of exams for traditional students relative to their peers in hybrid sections. Likewise, the adoption of an e-text, intended to reduce expenses for hybrid students required to purchase MyPsychLab access, may have influenced study habits, for example, by increasing the tendency to engage with the Internet while reading.

A third limitation is that we collected midterm and end-of-semester surveys only from hybrid students, and we interviewed only the instructors who had taught in both formats. Thus, we do not know to what extent students and instructors in traditional sections had course concerns (e.g., an overly fast pace or a need for more time for in-class activities) that mirrored the concerns of hybrid students and instructors. Although rates of class attrition were similar in hybrid and traditional sections (averaging ∼16%), students' reasons for dropping out may or may not have been the same. (Note that none of the hybrid students who dropped the course had logged into MyPsychLab, which suggests that the online requirements may have deterred them, although other explanations are possible.) Whereas our decision to use anonymous surveys may have encouraged students to express frank views about the structure of the hybrid course, it prevented us from linking survey responses with course outcome measures. Thus, we do not know how students' attitudes towards MyPsychLab, the e-text, and other aspects of online instruction affected their exam and homework grades and attrition, nor could we control for the demographic information collected through the surveys (e.g., gender and major) in the statistical analyses.

Finally, as our study looked specifically at MyPsychLab as the implementation for the online component of hybrid instruction, we cannot be certain that our results would generalize to other course-packs, nor do we know which course-pack features were most beneficial for learning. Many publisher-provided course-packs are similar in providing quick review quizzes based on textbook material as well as integrated multimedia activities, but differ in details. For example, MyPsychLab provides links to the e-book to assist students in relating each activity to what they are reading. LearningCurve, part of Macmillan's LaunchPad, provides “adaptive” quizzes tailored to each student's knowledge level, with questions leading up to a target level of mastery. McGraw-Hill's Connect also uses adaptive quizzes, but with a focus on meta-cognition. Given the observed correlations between online homework completed and exam grades in our study and others (e.g., Cramer et al., 2012; McKenzie et al., 2013), the extent to which students are motivated to engage with the online course materials may prove to be the limiting factor in their utility, as all of the publisher-provided course-packs share features designed for effective self-testing, assessment, and review.

Conclusions

Instructional technology has been promoted as a means of creating interactive, learner-focused classrooms for diverse students (Collins & Halverson, 2009; Moeller & Reitzes, 2011). Publisher-provided course-packs have been designed to promote good study habits with ready-made study modules and direct links to an e-text. However, at large public universities like ours, students tend to have poor preparation to engage with instructional technology, and many find online work to be a burden (Wach et al., 2011). By assisting students in navigating online requirements in an introductory-level course, we were able to promote technological literacy as a learning objective. Nonetheless, we observed costs to learning in the hybrid environment, in that exam grades declined more over the course of the semester than in traditional sections. For the hybrid format to reach its potential, better integration of online and in-class work may be required, along with further supports to increase student engagement. With increased usage of instructional technologies, researchers need to investigate how specific course-pack features and modules impact learning so that online work can be assigned with careful thought to its purpose.

Footnotes

Acknowledgements

First authorship is shared by Powers and Brooks. Preliminary results were presented at the Annual Meeting of the Eastern Psychological Association, March 2013, and the College of Staten Island Undergraduate Research Conference, April 2013. The authors would like to thank Kenny Zeng and Naomi J. Aldrich for their help with data collection and tabulation.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: The research was supported by a Teaching with Technology mini-grant from the College of Staten Island, City University of New York.