Abstract

Hepatitis B is a significant global health concern and poses a substantial burden on public health systems. Short-video platforms such as TikTok and Bilibili have become important channels for health information dissemination; however, the quality and reliability of Hepatitis B-related content on these platforms remain unclear. This study aimed to evaluate the quality and reliability of Hepatitis B information disseminated on TikTok and Bilibili. On April 1, 2025, we systematically collected the top 100 Hepatitis B-related short videos from each platform, totaling 200 videos. Basic video information was extracted, and video quality and reliability were assessed using the Global Quality Scale (GQS), modified DISCERN (mDISCERN), and JAMA benchmarks. Spearman correlation analysis was performed to examine the relationship between engagement metrics and quality scores. TikTok videos showed greater user engagement (more likes, comments, and shares) and higher reliability scores than Bilibili videos. The median (IQR) reliability scores for TikTok videos were 4 (3-4) for mDISCERN and 3 (3-3) for JAMA, versus 3 (3-4) and 2 (2-3), respectively, for Bilibili videos. Content quality, as assessed by the GQS, was similar on both platforms (TikTok: 4 [3-4]; Bilibili: 4 [3-4]). Videos uploaded by hepatologists consistently showed higher quality and reliability. Spearman correlation analysis indicated significant but weak positive correlations between engagement metrics (likes, comments, shares, saves) and both GQS and JAMA scores, whereas no significant correlation was observed with mDISCERN scores. The overall quality and reliability of Hepatitis B-related short videos were moderate, with TikTok videos outperforming Bilibili videos in reliability, and videos created by hepatologists demonstrated higher quality and reliability. 
We recommend that the public exercise caution when consuming health information from short videos to avoid potential misinformation.

Introduction

Hepatitis B is a significant global health concern, with an estimated 316 million people living with chronic Hepatitis B virus (HBV) infection worldwide. 1 The disease poses a substantial burden on public health systems, contributing to liver cirrhosis, hepatocellular carcinoma, and other severe liver diseases. 2 The prevalence of Hepatitis B varies widely by region, influenced by factors such as vaccination programs, transmission routes, and socioeconomic conditions. 3 In China, despite significant progress in reducing prevalence through vaccination, the disease remains a major public health challenge.4,5 Its clinical importance stems from its potential for chronicity and the associated long-term health consequences. 6 Raising public awareness and understanding of Hepatitis B is therefore essential for improving health outcomes and reducing the overall disease burden.

With the emergence of the concept of health literacy, the global demand for disease and health education has grown increasingly significant. Leading health organizations, including the National Institutes of Health, the US Department of Health and Human Services, and the American Medical Association, consistently recommend developing patient education materials at a sixth-grade reading level to ensure accessibility. 7 The digital revolution has fundamentally transformed how people acquire health information. 8 Approximately half of the adult population consults the internet for health-related information. 9 Short-video platforms such as TikTok and Bilibili have emerged as major health information sources owing to their accessibility, engaging formats, and interactive features.10,11 By conveying complex medical knowledge through visually compelling video content, these platforms enhance information accessibility and public engagement and make health information easier to absorb and remember. 12 The quality of such information is paramount, as evidence shows that patients who acquire accurate knowledge about disease causes, pathophysiology, and treatment protocols participate more actively in, and adhere better to, therapeutic regimens. 13 However, the absence of peer review and stringent regulatory oversight has resulted in highly variable content quality, with numerous videos containing misleading or inaccurate information. Several studies have indicated that the majority of health-related content on TikTok lacks scientific accuracy, while Bilibili faces similar issues concerning the completeness and reliability of its content.14,15

Although existing studies have evaluated video quality for various conditions (eg, laryngeal carcinoma, 16 colorectal polyps, 17 rectal cancer, 18 metabolic dysfunction-associated steatotic liver disease 19 ) on these platforms, the reliability of Hepatitis B-specific content remains largely unexamined. This study employs a multidimensional assessment framework to systematically evaluate the quality and reliability of Hepatitis B-related videos on TikTok and Bilibili. Our findings aim to provide evidence-based recommendations for public health information selection while informing platform content regulation policies to optimize health communication in the digital era.

Methods

Search Strategy and Data Collection

This study is a cross-sectional content analysis evaluating the quality and reliability of publicly available Hepatitis B-related health information on mainstream short-video platforms in China. On April 1, 2025, we systematically collected the top 100 search results for “Hepatitis B” (Chinese: “乙型病毒性肝炎”) from both Bilibili and TikTok (China’s version) using newly registered accounts to minimize personalization by platform recommendation algorithms (Figure 1). After removing duplicate videos (defined as identical content from different uploaders) and irrelevant entries based on title screening, we obtained a final sample of 100 videos per platform. We confined our analysis to the top 100 videos because multiple studies have shown that videos beyond the top 100 do not materially influence the analytical outcomes.14,20,21 Furthermore, our final sample of 200 videos exceeds the sample sizes of numerous comparable studies in the field and was sufficient to detect statistically significant between-platform differences in our primary outcomes. For each video, we recorded the title, uploader characteristics, duration, engagement metrics (likes, comments, shares, saves), and days since publication.
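The extraction step can be pictured as filling one record per video and deriving "days since publication" from the stated collection date. The sketch below is a hypothetical illustration in Python, not the authors' tooling: the `VideoRecord` fields and the `deduplicate` helper are assumptions, and a caller-supplied key can only approximate the manual judgment of "identical content" used in the study.

```python
from dataclasses import dataclass
from datetime import date

# Collection date stated in the Methods; the reference point for
# "days since publication".
COLLECTION_DATE = date(2025, 4, 1)


@dataclass
class VideoRecord:
    """One row of a hypothetical extraction sheet (field names are assumptions)."""
    platform: str
    title: str
    uploader: str
    duration_s: int
    likes: int
    comments: int
    shares: int
    saves: int
    published: date

    def days_since_published(self, as_of: date = COLLECTION_DATE) -> int:
        # Whole calendar days between upload and data collection.
        return (as_of - self.published).days


def deduplicate(videos, key):
    """Keep the first occurrence of each key, preserving search-rank order.

    `key` is supplied by the caller; it is only a proxy for the manual
    "identical content" judgment described in the text.
    """
    seen = set()
    unique = []
    for video in videos:
        k = key(video)
        if k not in seen:
            seen.add(k)
            unique.append(video)
    return unique
```

For example, `deduplicate(records, key=lambda v: v.title.strip().lower())` keeps the higher-ranked copy of two videos whose normalized titles match.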

Flow chart of study selection and inclusion of videos related to Hepatitis B (乙型病毒性肝炎).

Classification of Videos

The videos were classified by upload source (hepatologists, non-hepatologists, patients, and science communicators), content type (detection, disease knowledge, treatment, and reports and news), and format (animation and live videos), providing a comprehensive categorization framework for analysis. For more detailed information, please refer to the online Supplemental material.
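The three-way coding scheme maps naturally onto enumerations, and the percentage breakdowns reported in the Results follow from a simple tally. This is a hypothetical Python sketch: the category labels come from the text, while the enum names and the `percentage_breakdown` helper are assumptions for illustration.

```python
from collections import Counter
from enum import Enum


class Uploader(Enum):
    HEPATOLOGIST = "hepatologist"
    NON_HEPATOLOGIST = "non-hepatologist"
    PATIENT = "patient"
    SCIENCE_COMMUNICATOR = "science communicator"


class Content(Enum):
    DETECTION = "detection"
    DISEASE_KNOWLEDGE = "disease knowledge"
    TREATMENT = "treatment"
    REPORTS_AND_NEWS = "reports and news"


class VideoFormat(Enum):
    ANIMATION = "animation"
    LIVE = "live video"


def percentage_breakdown(labels):
    """Map each observed category to (count, percent of all labels)."""
    if not labels:
        raise ValueError("no labels to summarize")
    counts = Counter(labels)
    total = len(labels)
    return {category: (n, 100 * n / total) for category, n in counts.items()}
```

Using enums rather than free-text labels means a miscoded category (for example, a typo in "hepatologist") fails loudly instead of silently forming a new group.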

Methodology for Assessing Video Quality and Reliability

Video quality and reliability were systematically evaluated using 3 validated instruments: the Global Quality Scale (GQS) assessed overall content quality, while the modified DISCERN scale (mDISCERN) and the JAMA benchmark criteria were used to evaluate reliability. The mDISCERN instrument assesses reliability through 5 dichotomous items (1 = present, 0 = absent), with total scores categorized into 5 reliability tiers: unreliable (0-1), marginally reliable (2), moderately reliable (3), largely reliable (4), and highly reliable (5). 22 The JAMA score evaluates 4 fundamental quality attributes (authorship, attribution, currency, and disclosure), yielding a maximum possible score of 4 points. 23 Content quality was rated on the GQS, a 5-point Likert scale (1 = exceptionally poor to 5 = excellent quality). 24 For more detailed information, please refer to the online Supplemental material.
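The scoring rules above are simple enough to state as code. The Python sketch below encodes only what the paragraph describes (5 present/absent mDISCERN items and their tiers, one JAMA point per criterion, a 1-5 GQS rating); the item wording lives in the Supplemental material and is not reproduced, and the function names are hypothetical.

```python
# Scoring rules as described in the Methods; item wording is not reproduced.
MDISCERN_TIERS = {
    2: "marginally reliable",
    3: "moderately reliable",
    4: "largely reliable",
    5: "highly reliable",
}
JAMA_CRITERIA = ("authorship", "attribution", "currency", "disclosure")


def mdiscern_score(items):
    """Sum of 5 dichotomous items (True/1 = present, False/0 = absent)."""
    if len(items) != 5:
        raise ValueError("mDISCERN has exactly 5 items")
    return sum(1 for item in items if item)


def mdiscern_tier(score):
    """Map a 0-5 mDISCERN total to the reliability tier named in the text."""
    if not 0 <= score <= 5:
        raise ValueError("mDISCERN total must be between 0 and 5")
    return "unreliable" if score <= 1 else MDISCERN_TIERS[score]


def jama_score(criteria_met):
    """One point per JAMA criterion satisfied (maximum 4)."""
    met = set(criteria_met)
    unknown = met - set(JAMA_CRITERIA)
    if unknown:
        raise ValueError(f"unknown JAMA criteria: {sorted(unknown)}")
    return len(met)


def check_gqs(score):
    """GQS is a single 1-5 Likert rating; validate and return it."""
    if score not in (1, 2, 3, 4, 5):
        raise ValueError("GQS must be an integer from 1 to 5")
    return score
```

For instance, a video meeting 3 of the 5 mDISCERN items scores 3 and falls in the "moderately reliable" tier.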

Evaluation Process

Following standardized training to ensure rating consistency, 2 medical evaluators (BW C and WJ S) independently scored all videos. When their ratings differed, an arbitrator (XY C) was consulted to assign the final score. All authors then agreed unanimously on the final ratings.
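The adjudication rule can be summarized as: keep the score when both raters agree, otherwise take the arbitrator's decision. In this hypothetical Python sketch the arbitrator is modeled as a callable; in the study that step was a human judgment, so the stand-in used in the usage note is purely illustrative.

```python
def final_score(rater_a, rater_b, arbitrate):
    """Agreed score when the 2 raters match; otherwise defer to the arbitrator."""
    if rater_a == rater_b:
        return rater_a
    return arbitrate(rater_a, rater_b)


def adjudicate_all(scores_a, scores_b, arbitrate):
    """Resolve every video's score and count how many needed arbitration."""
    if len(scores_a) != len(scores_b):
        raise ValueError("both raters must score the same videos")
    finals = []
    n_arbitrated = 0
    for a, b in zip(scores_a, scores_b):
        if a != b:
            n_arbitrated += 1
        finals.append(final_score(a, b, arbitrate))
    return finals, n_arbitrated
```

With a placeholder arbitrator such as `lambda a, b: max(a, b)`, `adjudicate_all([3, 4, 2], [3, 3, 2], ...)` returns `([3, 4, 2], 1)`; reporting the arbitration count alongside κ shows how often the third reviewer was needed.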

Ethics Consideration and Consent to Participate

This study did not require approval by the local Research Ethics Board as it involved publicly available data only. All information was accessed and obtained publicly, and no interaction with any individual was involved. No personal information, clinical data, or human specimens were used. All data were anonymized and presented in aggregate form, ensuring that no individual content creator could be identified from the reported results.

Statistical Analyses

Given the non-normal distribution of the data, results are presented as medians with interquartile ranges (IQR). Nonparametric tests were used for group comparisons: the Mann–Whitney U test for 2-group comparisons and the Kruskal–Wallis H test for multi-group comparisons. Inter-rater reliability was calculated using Cohen’s κ coefficient and interpreted per Landis and Koch criteria: κ > 0.80 (almost perfect agreement), 0.61 to 0.80 (substantial agreement), 0.41 to 0.60 (moderate agreement), and ≤0.40 (poor agreement). Spearman’s correlation was used to assess (a) relationships among video characteristics and (b) associations between video ratings and other parameters. All tests were 2-tailed, with P < .05 considered statistically significant. Data analysis was conducted using GraphPad Prism version 9.0.0 for Windows.
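As a worked illustration of the agreement statistic, the Python sketch below computes unweighted Cohen's κ from observed agreement and chance agreement (the product of each rater's marginal category frequencies), then applies the bands exactly as listed in this paragraph. It is not the GraphPad Prism implementation; the function names are hypothetical, and note that the original Landis and Koch scale subdivides the range at and below 0.40 more finely than the bands used here.

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Unweighted Cohen's kappa for 2 raters scoring the same items."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("need 2 equal-length, non-empty rating lists")
    n = len(rater_a)
    # Observed agreement: share of items given the same category.
    p_o = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Chance agreement from each rater's marginal category frequencies.
    count_a, count_b = Counter(rater_a), Counter(rater_b)
    p_e = sum(count_a[c] * count_b[c] for c in set(count_a) | set(count_b)) / n**2
    if p_e == 1:
        # Both raters used one identical category for every item; kappa is
        # undefined, and 1.0 is returned here by convention.
        return 1.0
    return (p_o - p_e) / (1 - p_e)


def interpret_kappa(kappa):
    """Agreement bands as stated in the Statistical Analyses paragraph."""
    if kappa > 0.80:
        return "almost perfect"
    if kappa > 0.60:
        return "substantial"
    if kappa > 0.40:
        return "moderate"
    return "poor"
```

For example, two raters agreeing on 3 of 4 binary ratings with marginals (2, 2) and (1, 3) give p_o = 0.75, p_e = 0.5, and κ = 0.5 ("moderate"); the study's reported κ of 0.81 falls in the "almost perfect" band.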

Results

Video Characteristics

Based on our keyword search, we obtained 200 videos for data extraction and analysis: 100 from TikTok and 100 from Bilibili. The general characteristics of the videos are presented in Table 1, which shows that compared to TikTok videos, Bilibili videos had longer durations and more days since publication, but fewer likes, comments, shares, and saves (P < .001).
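Table values of the form "median (Q1-Q3)" can be produced with Python's standard `statistics` module, as sketched below. The quartile convention is an assumption: `statistics.quantiles` offers "exclusive" (the default) and "inclusive" methods, which can give different Q1/Q3 on small samples, and we have not verified which definition GraphPad Prism uses, so this sketch may not reproduce the published IQRs exactly.

```python
import statistics


def _fmt(x):
    """Print whole numbers without a trailing '.0'; keep up to 2 decimals otherwise."""
    if float(x).is_integer():
        return str(int(x))
    return f"{x:.2f}".rstrip("0").rstrip(".")


def median_iqr(values, method="exclusive"):
    """Return 'median (Q1-Q3)' using statistics.quantiles for the quartiles."""
    if len(values) < 2:
        raise ValueError("need at least 2 values for quartiles")
    q1, _, q3 = statistics.quantiles(values, n=4, method=method)
    med = statistics.median(values)
    return f"{_fmt(med)} ({_fmt(q1)}-{_fmt(q3)})"
```

For instance, `median_iqr([2, 3, 3, 4, 4, 4, 5])` yields `"4 (3-4)"`, the same shape as the GQS entries reported in the text; on `[1, 2, 3, 4]` the two methods already disagree (1.25-3.75 versus 1.75-3.25).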

Characteristics of the Videos in TikTok and Bilibili.

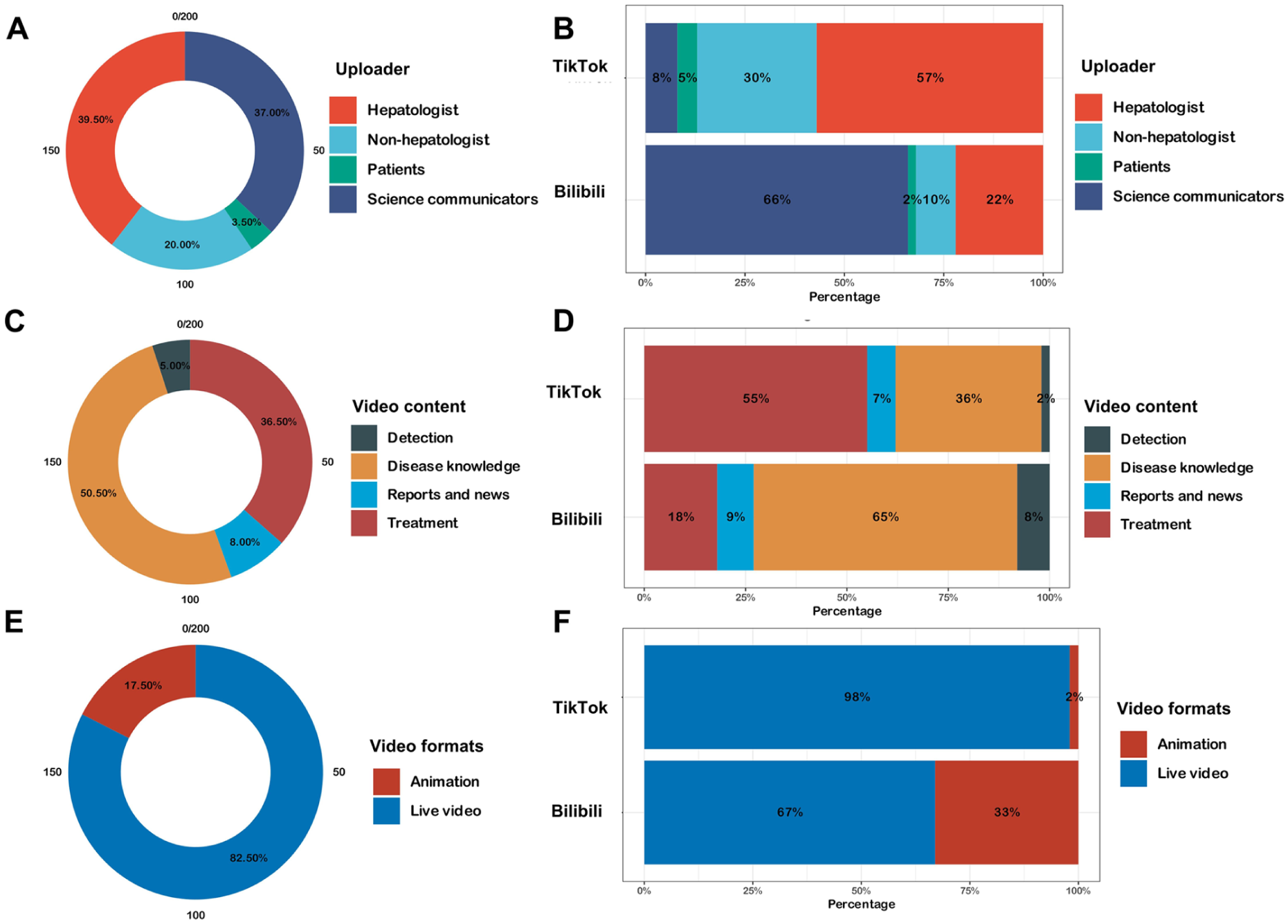

Figure 2 and Tables 2 and 3 provide a detailed breakdown of video characteristics on Bilibili and TikTok. Regarding video sources, science communicators uploaded the most videos on Bilibili (66/100, 66%), followed by hepatologists (22/100, 22%). On TikTok, hepatologists (57/100, 57%) and non-hepatologists (30/100, 30%) were the primary uploaders. Compared with videos uploaded by science communicators, videos posted by hepatologists and non-hepatologists on TikTok received fewer likes, comments, saves, and shares. On both Bilibili and TikTok, the most prevalent content types were disease knowledge and treatment, comprising 65% and 55% of the videos, respectively. Conversely, detection-related and reports-and-news videos were the least common on both platforms; however, detection-related videos received more likes, comments, saves, and shares on both platforms. Additionally, 67% (67/100) of Bilibili videos were live videos and 33% (33/100) were animations, whereas on TikTok 98% (98/100) were live videos and 2% (2/100) were animations. Despite the popularity of live videos on both platforms, animations were more popular on TikTok.

Percentage of videos by uploader, content, and format on TikTok and Bilibili. (A) Overall types of video uploaders. (B) Types of video uploaders on TikTok and Bilibili, respectively. (C) Overall categorization of video content. (D) Categorization of video content on TikTok and Bilibili. (E) Overall distribution of video formats. (F) Distribution of video formats on TikTok and Bilibili.

Characteristics of the Videos Across Sources and Content in Bilibili.

Characteristics of the Videos Across Sources and Content in TikTok.

Video Quality and Reliability Assessments

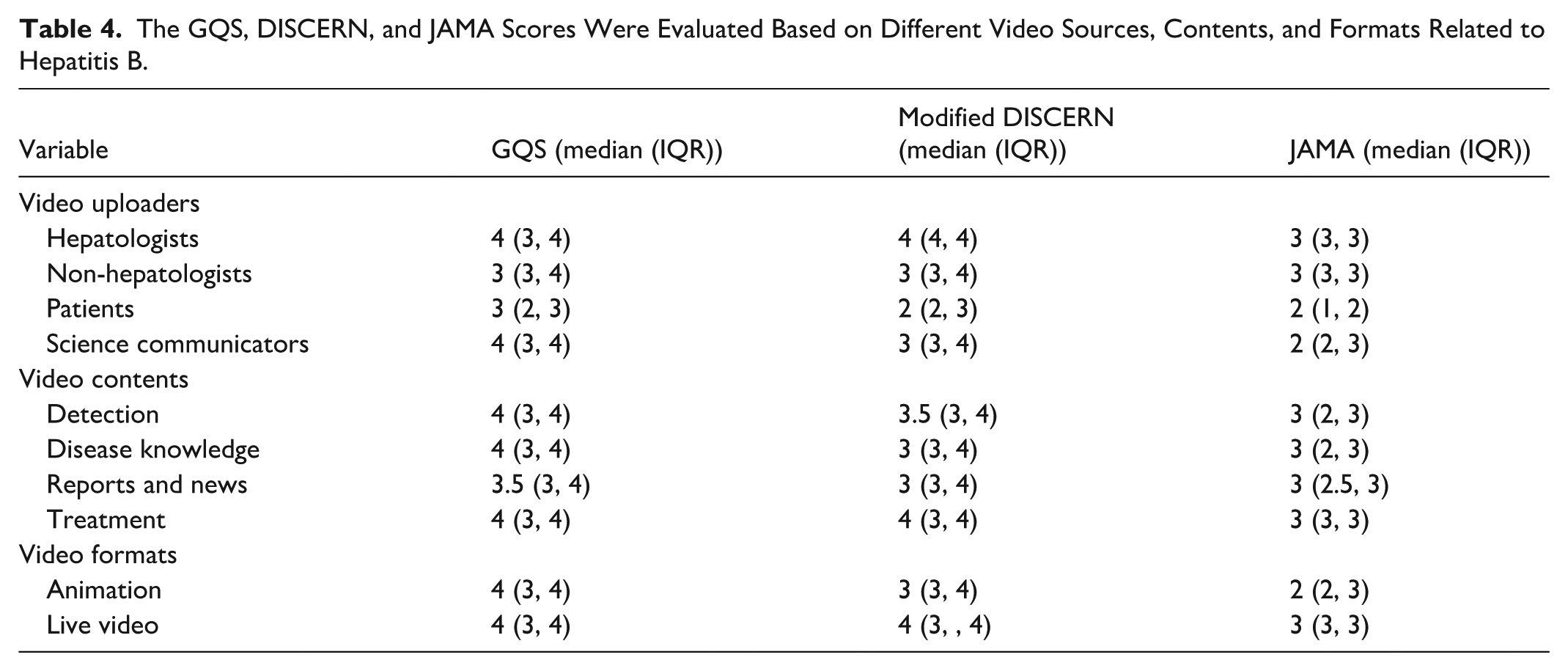

Video quality was assessed using the GQS, and reliability was evaluated using the mDISCERN and JAMA scores (Table 4). Inter-rater agreement was high (Cohen’s κ = 0.81), corresponding to almost perfect agreement under the Landis and Koch criteria.

The GQS, mDISCERN, and JAMA Scores Were Evaluated Based on Different Video Sources, Contents, and Formats Related to Hepatitis B.

Comparison of Platforms

The assessment of video quality and reliability revealed clear differences between the 2 platforms. TikTok videos achieved median (IQR) scores of 4 (3-4) for GQS, 4 (3-4) for mDISCERN, and 3 (3-3) for JAMA. Bilibili videos had the same median GQS score, 4 (3-4), but lower reliability scores: 3 (3-4) for mDISCERN and 2 (2-3) for JAMA. TikTok outperformed Bilibili in reliability, with significantly higher JAMA and mDISCERN scores (P < .001). However, GQS scores did not differ significantly (P = .587), suggesting comparable content quality between the 2 platforms (Figure 3).
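The platform comparison rests on the Mann–Whitney U test. The Python sketch below shows the mechanics under stated assumptions: pooled average ranks, the U statistic for the first sample, and a two-sided P value from the normal approximation with the standard tie correction but no continuity correction. Because Likert-type scores are heavily tied, the tie term matters; packages that add a continuity correction or compute exact P values (as GraphPad Prism may for some sample sizes) will give slightly different P values. Function names are hypothetical.

```python
import math
from collections import Counter


def average_ranks(values):
    """1-based ranks; tied values share the mean of the ranks they occupy."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    start = 0
    while start < len(order):
        end = start
        while end + 1 < len(order) and values[order[end + 1]] == values[order[start]]:
            end += 1
        mean_rank = (start + end) / 2 + 1  # average of ranks start+1 .. end+1
        for k in range(start, end + 1):
            ranks[order[k]] = mean_rank
        start = end + 1
    return ranks


def mann_whitney_u(x, y):
    """U statistic for sample x and a two-sided p-value (normal approximation,
    tie-corrected variance, no continuity correction)."""
    n1, n2 = len(x), len(y)
    if n1 == 0 or n2 == 0:
        raise ValueError("both samples must be non-empty")
    pooled = list(x) + list(y)
    n = n1 + n2
    ranks = average_ranks(pooled)
    u1 = sum(ranks[:n1]) - n1 * (n1 + 1) / 2
    # Variance of U under H0, reduced for tied values (common on Likert scales).
    ties = sum(t**3 - t for t in Counter(pooled).values())
    var_u = n1 * n2 / 12 * ((n + 1) - ties / (n * (n - 1)))
    if var_u <= 0:
        raise ValueError("all observations are tied; the test is undefined")
    z = (u1 - n1 * n2 / 2) / math.sqrt(var_u)
    p_two_sided = math.erfc(abs(z) / math.sqrt(2))
    return u1, p_two_sided
```

Applied to per-video JAMA scores, `mann_whitney_u(tiktok_jama, bilibili_jama)` (hypothetical variable names) would return U and an approximate P value for the between-platform difference.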

The mDISCERN, GQS, and JAMA scores of videos related to Hepatitis B on TikTok and Bilibili. (A) Comparison of mDISCERN scores between TikTok and Bilibili videos. (B) Comparison of GQS scores between TikTok and Bilibili videos. (C) Comparison of JAMA scores between TikTok and Bilibili videos. (D) Ridge plot showing the overall distribution of mDISCERN scores. (E) Ridge plot showing the overall distribution of GQS scores. (F) Ridge plot showing the overall distribution of JAMA scores. NS indicates not significant (P ≥ .05). **P < .01, ***P < .001.

Comparison of Uploaders

Videos by hepatologists consistently achieved the highest quality and reliability scores on all 3 instruments. Non-hepatologists and science communicators typically produced higher-scoring videos than patients, yet still lagged behind hepatologists. Notably, patient-generated videos consistently ranked lowest across all scoring systems (Figure 4).
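Comparisons across the 4 uploader groups use the Kruskal–Wallis H test. The Python sketch below computes H from pooled average ranks, divides it by the usual tie correction, and converts it to a P value using a chi-square distribution with k − 1 degrees of freedom. The chi-square tail uses a closed-form recurrence valid for integer degrees of freedom, which is an implementation choice for this sketch rather than anything stated in the text; post hoc pairwise tests behind the asterisks in the figures are not covered.

```python
import math
from collections import Counter


def average_ranks(values):
    """1-based ranks; tied values share the mean of the ranks they occupy."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    start = 0
    while start < len(order):
        end = start
        while end + 1 < len(order) and values[order[end + 1]] == values[order[start]]:
            end += 1
        mean_rank = (start + end) / 2 + 1
        for k in range(start, end + 1):
            ranks[order[k]] = mean_rank
        start = end + 1
    return ranks


def chi2_sf(x, df):
    """Upper-tail chi-square probability for integer df >= 1."""
    if df < 1:
        raise ValueError("df must be a positive integer")
    if x <= 0:
        return 1.0
    if df % 2 == 0:
        q, k = math.exp(-x / 2), 2              # Q(x; 2) = exp(-x/2)
    else:
        q, k = math.erfc(math.sqrt(x / 2)), 1   # Q(x; 1) = erfc(sqrt(x/2))
    while k < df:
        # Q(x; k+2) = Q(x; k) + (x/2)^(k/2) * exp(-x/2) / Gamma(k/2 + 1)
        q += (x / 2) ** (k / 2) * math.exp(-x / 2) / math.gamma(k / 2 + 1)
        k += 2
    return q


def kruskal_wallis(*groups):
    """Tie-corrected H statistic, degrees of freedom, and chi-square p-value."""
    if len(groups) < 2 or any(len(g) == 0 for g in groups):
        raise ValueError("need at least 2 non-empty groups")
    pooled = [v for g in groups for v in g]
    n = len(pooled)
    ranks = average_ranks(pooled)
    rank_sum_term, start = 0.0, 0
    for g in groups:
        r = sum(ranks[start:start + len(g)])
        rank_sum_term += r * r / len(g)
        start += len(g)
    h = 12 / (n * (n + 1)) * rank_sum_term - 3 * (n + 1)
    ties = sum(t**3 - t for t in Counter(pooled).values())
    correction = 1 - ties / (n**3 - n)
    if correction == 0:
        raise ValueError("all observations are tied; H is undefined")
    h /= correction
    df = len(groups) - 1
    return h, df, chi2_sf(h, df)
```

With 4 uploader categories, `kruskal_wallis(hep, non_hep, patients, sci_comm)` (hypothetical variable names) tests for any difference on 3 degrees of freedom.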

The mDISCERN, GQS, and JAMA scores of videos related to Hepatitis B from different video uploaders. (A) mDISCERN score. (B) GQS score. (C) JAMA score. **P < .01, ***P < .001.

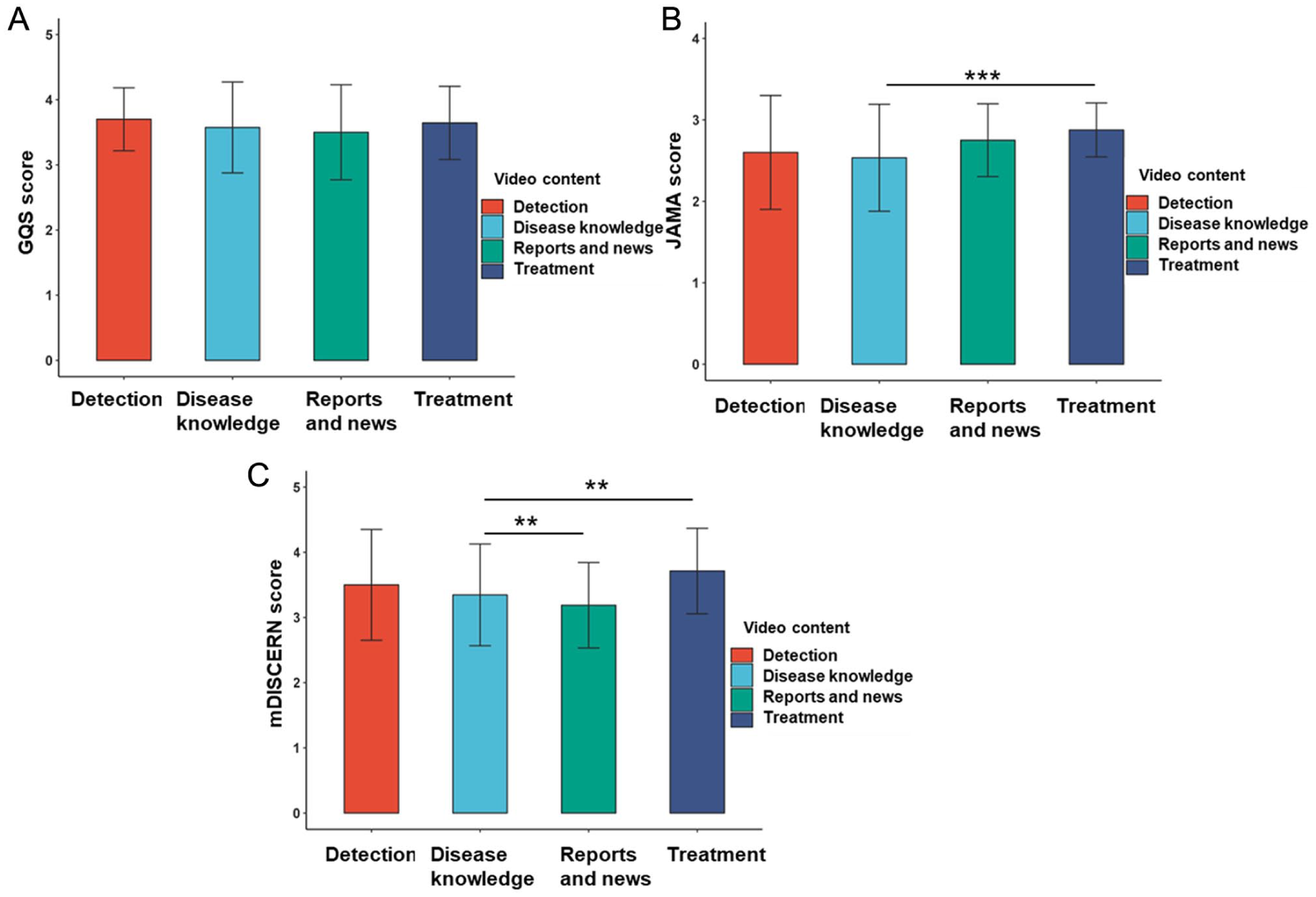

Comparison of Content

In terms of content quality, the 4 content types did not differ significantly on the GQS, all showing moderate to good quality. However, they differed in reliability: treatment-related videos showed higher reliability on both the JAMA and mDISCERN instruments (Figure 5).

The mDISCERN, GQS, and JAMA scores of videos related to Hepatitis B across different video content types. (A) mDISCERN score. (B) GQS score. (C) JAMA score. *P < .05, **P < .01, ***P < .001.

Comparison of Format

On the GQS, animated and live videos received very similar content quality scores, with no statistically significant difference. In contrast, on the JAMA and mDISCERN reliability instruments, live videos scored significantly higher than animated videos (Figure 6).

The mDISCERN, GQS, and JAMA scores of videos related to Hepatitis B across different video formats. (A) mDISCERN score. (B) GQS score. (C) JAMA score. NS indicates not significant (P ≥ .05). **P < .01, ***P < .001.

Spearman Correlation Analysis

Given the non-normal distribution of the data, Spearman’s rank correlation analysis was used to examine the relationships among video engagement metrics (likes, comments, shares, and saves), video duration, time since publication, and the 3 quality assessment scales (see Figure 7 and Tables 5 and 6). All engagement metrics were significantly and positively correlated with one another (P < .05), with correlation coefficients (r) ranging from .85 to .95, indicating strong associations among these variables. Video duration was significantly and negatively correlated with likes (r = −.46, P < .001), comments (r = −.36, P < .001), shares (r = −.47, P < .001), and saves (r = −.40, P < .001), suggesting that longer videos tend to attract less engagement. In contrast, video duration was significantly and positively correlated with days since publication (r = .26, P < .001), suggesting that longer videos tended to have been published earlier.
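Spearman's coefficient is Pearson's correlation applied to ranks, which is why it suits skewed engagement counts and tied Likert scores. The Python sketch below computes it from average ranks; this handles ties correctly, whereas the familiar shortcut 1 − 6Σd²/(n(n² − 1)) is exact only when there are no ties. P values are not computed here, and the function names are hypothetical.

```python
import math


def average_ranks(values):
    """1-based ranks; tied values share the mean of the ranks they occupy."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    start = 0
    while start < len(order):
        end = start
        while end + 1 < len(order) and values[order[end + 1]] == values[order[start]]:
            end += 1
        mean_rank = (start + end) / 2 + 1
        for k in range(start, end + 1):
            ranks[order[k]] = mean_rank
        start = end + 1
    return ranks


def spearman_rho(x, y):
    """Spearman's rank correlation: Pearson's r computed on average ranks."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need 2 paired samples of equal length >= 2")
    rx, ry = average_ranks(x), average_ranks(y)
    n = len(rx)
    mean_x, mean_y = sum(rx) / n, sum(ry) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(rx, ry))
    ss_x = sum((a - mean_x) ** 2 for a in rx)
    ss_y = sum((b - mean_y) ** 2 for b in ry)
    if ss_x == 0 or ss_y == 0:
        return float("nan")  # a constant variable has no defined correlation
    return cov / math.sqrt(ss_x * ss_y)
```

Because only ranks enter the calculation, any monotonic relationship scores 1 (for example, `spearman_rho([1, 2, 3], [1, 4, 9])`), so a video with 10 times as many likes carries no more weight than one ranked just above it.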

Spearman correlation analysis among different video variables, mDISCERN, GQS, and JAMA score concerning Hepatitis B videos. *P < .05, **P < .01, ***P < .001.

Spearman Correlation Analysis Between the Video Variables.

Significant at P < .05.

Spearman Correlation Analysis Between Video Variables and the GQS, Modified DISCERN, and JAMA Scores.

Significant at P < .05.

In terms of quality assessment, GQS and JAMA scores showed weak but significant positive correlations with engagement metrics (likes, comments, shares, and saves), whereas mDISCERN scores were not significantly correlated with these metrics. Notably, JAMA scores were significantly and negatively correlated with both video duration (r = −.46, P < .001) and days since publication (r = −.34, P < .001), indicating that videos rated as more reliable by the JAMA criteria tended to be shorter and more recently published.

Discussion

This cross-sectional study yielded 3 principal findings regarding Hepatitis B-related information on short-video platforms. Firstly, while the overall content quality was similar between TikTok and Bilibili, TikTok videos demonstrated significantly higher reliability as measured by both the JAMA and mDISCERN instruments. Secondly, the source of the video was a critical determinant of quality; content created by hepatologists was consistently superior in both quality and reliability compared to that from non-specialists, patients, or science communicators. Thirdly, a notable dissociation was observed between quality and popularity, as videos with higher reliability scores did not consistently achieve greater user engagement. These core findings highlight both the potential and the pitfalls of using short-video platforms for public health communication regarding Hepatitis B.

Hepatitis B is a significant global health concern, affecting millions of individuals worldwide and posing a substantial burden on public health systems. 25 Effective health communication is critical for disease prevention, early detection, and treatment adherence. In recent years, social media platforms have emerged as powerful tools for health communication, reaching vast audiences with diverse information.26,27 In China, Bilibili has evolved into a comprehensive knowledge-sharing platform with strong educational communities, while TikTok dominates mobile health content consumption. Together, these 2 platforms represent the dominant short-video ecosystems in mainland China, where international platforms such as YouTube, Facebook, and Instagram have limited accessibility. Both specialize in the short-form video format that characterizes contemporary health communication, allowing methodologically consistent comparisons, and this focused selection makes our findings directly applicable to the primary health information sources of Hepatitis B patients in Chinese-speaking populations. Nevertheless, the absence of rigorous medical content vetting on these platforms contributes to substantial variability in information quality. 28 Given both the persistent health education needs of Hepatitis B patients and the expanding influence of short-form video in medical communication, this study systematically assessed the quality and reliability of Hepatitis B-related videos on these platforms, with the aim of helping patients identify trustworthy health information.

To our knowledge, the quality of online Hepatitis B information, particularly on short-video platforms, remains underexplored. As one of the first studies to address this gap, our findings show that although videos on the 2 platforms were similar in content quality, they differed significantly in reliability: TikTok videos scored significantly higher than Bilibili videos on both the JAMA and mDISCERN instruments. This discrepancy likely stems from systematic differences between the 2 platforms in content format, dissemination mechanisms, and, most critically, moderation policies and incentive structures. TikTok’s short-video format facilitates rapid production and distribution, whereas Bilibili’s longer videos typically involve extended production cycles, resulting in slower updates and weaker interactivity. TikTok creators prioritize conciseness and accessibility to meet users’ need for quick information. In contrast, although Bilibili hosts substantial high-quality content, its quality varies widely, with some videos being overly specialized or lengthy, making it difficult for users to extract key information efficiently. Notably, TikTok employs a stricter, proactive moderation system for health information, explicitly prohibiting “misleading medical claims” through automated keyword filtering and dedicated human review. Bilibili also moderates content, but its handling of health misinformation is less centralized and relies more on post-publication user reports and community feedback, which may lead to delayed or inconsistent handling of unreliable content. Platform incentive structures also differ substantially. TikTok’s algorithm prioritizes engagement and completion rates, rewarding creators who present clear, credible, and easily digestible information, often by citing sources and displaying credentials. Conversely, Bilibili’s ecosystem encourages depth, discussion, and community interaction, which may accommodate or even reward speculative or opinion-based content within its longer-form, discussion-oriented culture. Collectively, these factors likely produce differences in content style and quality between the platforms that ultimately shape the reliability of health information.

Notably, videos created by healthcare professionals, particularly hepatologists, showed significantly higher educational value, quality, and reliability than those from other sources. This advantage likely stems from their specialized expertise, comprehensive understanding of clinical guidelines, and up-to-date research knowledge. In contrast, non-professional creators (eg, patients and science communicators) relied predominantly on personal experience and subjective opinion, potentially introducing informational biases. These findings underscore the substantial impact of professional background on content quality. However, the data reveal a key paradox: high-quality content did not translate into high user engagement. On Bilibili, the median number of likes for videos by hepatologists was only 90 (IQR: 28-186), significantly lower than that for non-hepatologists (306; IQR: 198-920); on TikTok, although the median number of likes for videos by hepatologists reached 4173 (IQR: 1138-12 134), it was still lower than that for science communicators (11 569; IQR: 6059-25 012.5). These findings are consistent with those of Chen et al., who reported that videos on thyroid nodules posted by thyroid specialists, despite their superior content quality, received relatively fewer likes, comments, saves, and shares. 29 Similar phenomena have been observed in several other comparable studies, indicating that higher-quality videos do not necessarily attract more attention.30,31

This engagement-reliability paradox appears to stem from multiple factors. The rigorous terminology and complex professional language in expert-created videos likely present comprehension barriers for lay audiences, potentially limiting their appeal. Additionally, platform algorithms that prioritize highly interactive content over quality may further exacerbate this gap. 32 Furthermore, short videos entail a tension between educational rigor and entertainment appeal. Professional content often prioritizes factual accuracy over engaging elements such as humor, trending audio, and dynamic editing, which are algorithmically promoted and drive user engagement. Beyond the dimensions of quality and reliability assessed in this study, the health literacy demands of video content warrant careful consideration. While hepatologist-created videos scored higher on reliability metrics, their actual comprehensibility for the general public remains uncertain. The multimodal nature of short videos—integrating visual demonstrations, verbal explanations, and on-screen text—creates both opportunities and challenges for meeting diverse health literacy needs. When reliable health information is not presented in an understandable format, it fails to achieve its educational purpose regardless of scientific quality. This underscores the urgent need for healthcare professionals to develop skills in creating content that balances scientific accuracy with accessibility, while platforms should implement algorithms that value both reliability and comprehensibility in content distribution. Future research should incorporate systematic evaluation of both linguistic complexity and visual clarity using validated health literacy assessment tools to ensure that reliable information is truly understandable to its intended audience.

Content type was also associated with video reliability. Videos focusing on treatment had higher reliability scores, possibly because treatment-related information is more likely to be based on established clinical guidelines and research findings. In contrast, detection-related videos, although less common, received higher engagement, suggesting strong public interest in early detection methods. This highlights the need for platforms to encourage the production of high-quality detection-related content to meet public demand.

This study employed Spearman correlation analysis to investigate the relationship between video quality and user engagement. Likes, comments, saves, and shares were significantly and positively correlated with one another (P < .05), consistent with previous research findings,29,33 suggesting that users who respond positively to a video tend to express this through several forms of interaction. Video duration was significantly and negatively correlated with engagement metrics, suggesting that shorter videos are more effective at capturing attention and fostering interaction and highlighting the importance of brevity in digital content. In terms of quality assessment, GQS and JAMA scores showed weak but significant positive correlations with engagement metrics, suggesting that higher-quality, more reliable content may attract somewhat more engagement. However, mDISCERN scores were not significantly correlated with engagement metrics, suggesting that factors beyond reliability, such as content presentation and user experience, also play important roles in driving engagement. Notably, JAMA scores were significantly and negatively correlated with video duration and days since publication, indicating that videos rated as more reliable by the JAMA criteria tended to be shorter and more recent. This highlights the value of timely, concise content in maintaining user interest and trust. Furthermore, our analysis revealed moderate intercorrelations among JAMA, mDISCERN, and GQS scores, indicating that although these instruments emphasize different aspects, they share common ground in evaluating overall video quality and reliability. Overall, although video quality shows some association with user engagement, this relationship is weak and shaped by multiple factors. Engagement metrics alone are therefore insufficient for judging video quality and reliability. Platform operators and content creators should place greater emphasis on scientific rigor and professional standards to enhance the quality and reliability of health information dissemination.

Our findings offer valuable insights for health policy development, platform content regulation, and public health communication strategies. These insights carry specific implications for Hepatitis B patients seeking information, healthcare providers who recommend resources, and public health campaigns. For patients, the variability in video reliability underscores the need to prioritize content from verified hepatologists while exercising caution with patient-generated and non-professional sources. Healthcare providers should recognize the heterogeneous quality of short video content and consider actively recommending or creating reliable materials to supplement patient education. Public health campaigns could leverage the high engagement potential of these platforms by collaborating with authoritative creators and developing concise, evidence-based messaging tailored to short video formats.

To translate these implications into concrete actions, we propose a multi-level framework for systemic improvement. Platform operators should optimize their content recommendation algorithms by prioritizing source credibility (such as verified professional credentials) alongside engagement metrics, thereby enhancing the dissemination of reliable health information. Regulatory and professional bodies should establish and promote a clear, visible social media verification system for medical practitioners to increase the transparency and credibility of authoritative sources. Simultaneously, user education initiatives should be strengthened through in-platform literacy prompts, micro-learning content developed in collaboration with health organizations, and practical checklists to help viewers critically evaluate medical videos. These coordinated measures would improve the overall ecosystem of health information dissemination on short video platforms.

This study has several strengths. (1) It presents a systematic evaluation of Hepatitis B-related video quality and reliability across major short-video platforms; analyzing TikTok and Bilibili concurrently reduces the risk that our findings reflect the idiosyncrasies of a single platform, enhancing their representativeness. (2) We implemented a comprehensive assessment framework integrating the GQS, mDISCERN, and JAMA instruments to provide a multidimensional evaluation of content quality and reliability. (3) Our comparison of uploader categories revealed the substantial influence of professional background on video quality. We specifically recommend that platforms enhance their content review mechanisms and prioritize the dissemination of professionally produced, high-quality health information to improve public health literacy. Our results also highlight the importance of specialized training for healthcare professionals and their active engagement in digital health communication to raise the overall quality of medical information on short-video platforms.

However, this study has several limitations. Firstly, as a single-time-point analysis, our findings reflect a snapshot of algorithmically shaped content and do not capture longitudinal trends or seasonal variations in health information dissemination. Secondly, the classification of video uploaders relied primarily on verification by the short-video platforms. Although platforms may check credentials such as medical practitioner licenses during verification, missing or incorrect verification information may have introduced misclassification bias. Thirdly, the focus on TikTok and Bilibili, while a deliberate choice for studying short-form video ecosystems, means that our findings may not fully represent the health information landscape on other channels, such as AI-powered tools, YouTube, and websites. YouTube remains a key source of longer medical content, 34 AI platforms provide synthesized information, 35 and official health websites offer higher editorial standards. 7 Fourthly, the study did not assess the accuracy or medical correctness of the video content, viewers’ perceptions of content quality, or the behavioral and knowledge outcomes of video exposure, nor did it evaluate potential selection bias in the video sample. Additionally, although we used standardized assessment tools (GQS, mDISCERN, and JAMA) and achieved high inter-rater reliability, biases inherent in subjective scoring should be considered. Notwithstanding these limitations, this research establishes an important baseline understanding of Hepatitis B information quality on short-video platforms and demonstrates the value of systematic content evaluation in this domain. 
Future research should expand the sample size and include multilingual, multiregional short-video platforms in comparative analyses to enhance the external validity of the findings. Studies could also track content evolution through longitudinal designs, assess user comprehension and retention, and extend the evaluation framework to other health conditions.

Conclusion

In this study, we collected and evaluated 200 Hepatitis B-related videos from TikTok and Bilibili. Overall content quality was similar between the two platforms, but TikTok videos showed significantly higher reliability based on JAMA and mDISCERN scores. Videos uploaded by hepatologists and those focusing on treatment were of higher quality and reliability, underscoring the importance of professional expertise in health content creation. We suggest that short-video platforms enhance their review mechanisms to improve the reliability of health information. Users, in turn, should be cautious and selective, prioritizing content from verified medical professionals to help ensure that they access accurate and reliable health information.

Supplemental Material

Supplemental material, sj-docx-1-inq-10.1177_00469580261441434 for Evaluating the Quality and Reliability of Hepatitis B-Related Short Videos on BiliBili and TikTok: A Cross-Sectional Study by Bowen Cheng, Wenjie Shi, Shu Wang, Huanbing Liu, Jing Xiong and Xiaoyan Chen in INQUIRY: The Journal of Health Care Organization, Provision, and Financing

Supplemental material, sj-docx-2-inq-10.1177_00469580261441434 for Evaluating the Quality and Reliability of Hepatitis B-Related Short Videos on BiliBili and TikTok: A Cross-Sectional Study by Bowen Cheng, Wenjie Shi, Shu Wang, Huanbing Liu, Jing Xiong and Xiaoyan Chen in INQUIRY: The Journal of Health Care Organization, Provision, and Financing

Supplemental material, sj-pdf-1-inq-10.1177_00469580261441434 for Evaluating the Quality and Reliability of Hepatitis B-Related Short Videos on BiliBili and TikTok: A Cross-Sectional Study by Bowen Cheng, Wenjie Shi, Shu Wang, Huanbing Liu, Jing Xiong and Xiaoyan Chen in INQUIRY: The Journal of Health Care Organization, Provision, and Financing

Footnotes

Acknowledgements

The authors would like to express their gratitude to all those who contributed to the study.

Abbreviations

HBV: hepatitis B virus; GQS: Global Quality Scale; mDISCERN: modified DISCERN; JAMA: Journal of the American Medical Association.

Ethical Considerations

This study did not require approval by the local Research Ethics Board as it involved publicly available data only. All information was accessed and obtained publicly, and no interaction with any individual was involved. No personal information, clinical data, or human specimens were used. All data were anonymized and presented in aggregate form, ensuring that no individual content creator could be identified from the reported results.

Consent to Participate

Due to the use of publicly available data with no personal identifiers, informed consent was not applicable.

Author Contributions

Chen XY contributed to the study conception and design; Cheng BW, Chen XY, and Xiong J reviewed and scored the videos; Wang S and Liu HB conducted the data analysis; Cheng BW and Shi WJ prepared the manuscript; Chen XY and Xiong J interpreted the data and revised the manuscript; all authors contributed to the article and approved the final manuscript.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This study was supported by The First Affiliated Hospital of Nanchang University Young Talents Research and Cultivation Fund (No. YFYPY202433), The Jiangxi Provincial Health Commission Science and Technology Plan Project (No. 202510223), and The Central Government-guided Local Science and Technology Development Fund (No. 20221ZDG020070).

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data Availability Statement

The data used and/or analyzed in this study are available from the corresponding author upon reasonable request.

Supplemental Material

Supplemental material for this article is available online.

Patient and Public Involvement

Patients or the public were not involved in any aspect of our research, including its design, conduct, reporting, or dissemination plans.