Abstract

The eye and facial tracking technology incorporated in the Meta Quest Pro plays a significant role in research exploring visual attention, cognitive processes, user interaction, and human behavior analysis. However, differences in eye anatomy, such as outercanthal distance (OCD), interpupillary distance (IPD), and intercanthal distance (ICD), may affect the accuracy and reliability of eye-movement data. This preliminary study evaluates the impact of inter-eye distance variations on eye-movement-based feature extraction in VR environments using the Meta Quest Pro head-mounted device. Sixteen participants (10 males and 6 females) aged 21 to 30 years were recruited. This age range was selected to minimize inter-individual variability, enhance experimental control, and ensure consistency in cognitive and behavioral responses, given that reaction time, attentional regulation, and visual processing speed are known to vary with age. The procedure consisted of eye measurements (OCD, IPD, and ICD), an eye calibration test, and 3 experimental tasks: a fixed gaze task, a regular eye movement task, and an irregular eye movement task. The Meta Quest Pro recorded participants’ eye movements while they performed the VR-based tasks, and eye-related features were then extracted from the device. The dataset underwent Shapiro–Wilk and D’Agostino–Pearson normality tests, followed by statistical analysis (Spearman correlation, Kruskal–Wallis, and Mann–Whitney U tests) to assess differences in eye-movement correlations across wide, narrow, and average eye distance groups. The statistical tests did not indicate a significant influence of inter-eye distance on eye-movement tracking accuracy.
Across all inter-eye distances, both Kruskal–Wallis and Mann–Whitney U tests showed no significant differences (all P ≥ .60), strong bilateral correlations were observed for all gaze features (Spearman ρ = 0.84–0.99), and spatial accuracy remained stable across groups, with angular error ranging from 1.11° to 1.36°.

Keywords

Introduction

Virtual reality (VR) has transformed human–computer interaction by providing interactive and immersive experiences, enhancing user engagement in fields such as healthcare, training simulations, gaming, and research. 1 Recent advances have integrated eye-movement tracking into head-mounted display (HMD) systems, where it supports gaze-based interaction, accessibility, and usability. 2 By tracking users’ eye movements, HMD systems can improve interaction methods and visual rendering and enable more intuitive user experiences. 3 Eye-movement tracking can also enhance teamwork and social interaction in virtual reality by driving more realistic virtual avatars. 4 Eye movements are a form of nonverbal communication that provides social cues crucial for successful in-person interactions. 5 The quality of communication between people in virtual reality can therefore be enhanced by using eye-movement data to control the gaze behavior of each user’s avatar. 5 Various factors influence the quality of HMD eye and facial tracking, including the participants, deployed platform, hardware manufacturer, and experimental environment.6,7 An initial assessment of the signal quality of VR eye-movement tracking was reported in earlier research, 8 which measured the precision and spatial accuracy of the device in 12 individuals in confined and unconstrained environments. Research on eye-tracking accuracy in HMDs remains limited. Sipatchin et al conducted a thorough analysis of the Vive Pro Eye and discussed the merits and limitations of its eye tracker. 9 Rocchi et al evaluated the eye-tracking functionality of the Meta Quest Pro and HTC Vive Focus 3 under different distances and head-movement conditions. Their results showed higher spatial accuracy for the Meta Quest Pro and better precision for the HTC Vive Focus 3.
Performance in the peripheral field of view (FOV) decreased at greater distances. Limitations of that study include the lack of latency assessment, a narrow age range, and simplified VR environments. 10

In HMDs, 3 methods are generally employed for eye-movement tracking: electrooculography (EOG), video oculography (VOG), and scleral search coils. 11 EOG measures the standing potential between the cornea and the retina, which is present at rest (in the absence of stimulation); rotating the eye to change gaze direction changes the potential recorded by electrodes placed around it. The electrodes can be placed with relative ease at the points where the HMD contacts the face. Although it lacks precision, EOG is useful for monitoring voluntary and involuntary eye movements even when the eyes are closed.12,13 Among the eye-tracking techniques used in commercial HMDs, VOG is the most popular because it offers adequate precision and is noninvasive; it captures the movement of a subject’s eyes using cameras mounted on the headset. 14

Eye tracking has also become a key area of research in VR, particularly for enabling hands-free interaction and navigation within virtual spaces, and it has great potential for assisting individuals with motor impairments. In a comparative study, Blattgerste et al 15 evaluated eye gaze and head gaze for dwell-time-based user interface interaction in AR and VR systems. Eye gaze led to faster task completion and fewer errors in all environments, and more participants (15 of 24) preferred eye gaze over head gaze, reflecting its convenience. Despite these benefits, eye-gaze interaction has several practical drawbacks. 15 Eye-movement tracking is also used in cognitive studies involving VR environments. For instance, König et al 16 compared the spatial knowledge acquired by navigating virtual reconstructions with that acquired using an interactive city map. Their eye-tracking data showed that VR participants navigated the environment as if they possessed actual spatial knowledge of it, suggesting a more practical understanding of navigation.

In Adhanom et al, 11 the authors provided a comprehensive analysis of eye-movement tracking in VR, highlighting both its potential and its limitations. They emphasized the importance of continued research into semi-autonomous and multimodal interaction techniques to address problems such as the Midas Touch problem, in which looking at an element unintentionally triggers it. They also noted that improving eye- and facial-tracking accuracy would benefit fields such as education, training, user interaction, and usability.

The Meta Quest Pro VR headset was released in October 2022 with built-in eye and facial tracking. It processes gaze data in real time and uses an infrared camera to estimate the user’s gaze direction. The Meta Quest Pro allows eye data collection in both PC and stand-alone modes, facilitating versatile research applications. 17

As VR technology continues to improve, understanding how differences in eye anatomy, such as inter-eye distances, affect eye-tracking accuracy is crucial. 18 Inter-eye distances, including the interpupillary distance (IPD), outercanthal distance (OCD), and intercanthal distance (ICD), vary considerably among individuals and may influence the accuracy and reliability of eye-movement data extracted from HMDs. Understanding this influence is important for improving calibration accuracy and ensuring stable data collection in both research and industrial applications. Variations in eye anatomy can affect how accurately VR headsets track eye movements and can sometimes result in missing data, so investigating their influence on feature extraction is crucial for the accuracy and reliability of the data. In real-world behavioral studies involving children or adults, inaccurate eye-movement data can lead to false interpretations. For example, a child may be judged distracted or unresponsive not because of their actual behavior but because the eye tracker in the VR headset is misaligned. Similarly, in therapy and serious gaming scenarios, particularly for children with ADHD or autism, inaccurate eye-movement data can distort assessments of attention and responsiveness and provide misleading feedback about a child’s engagement. Our study therefore examines whether anatomical differences in the eyes affect the accuracy of eye-movement feature extraction in VR using the Meta Quest Pro.

This preliminary study analyzed the inter-eye distances (IPD, OCD, and ICD) and their impact on eye-movement data extraction from the Meta Quest Pro. These anatomical measures were selected because they directly affect the alignment between a user’s eyes and the eye-tracking sensors in HMDs, potentially influencing eye-movement accuracy. Data collection involved measuring these eye distances, followed by an eye calibration test and then the experiment, which comprised fixed gaze and blink, regular eye movement, and irregular eye movement tasks. The Meta Quest Pro tracked participants’ eye movements while they engaged in the VR-based tasks, after which eye-related features were extracted. The collected features were subjected to normality tests (Shapiro–Wilk and D’Agostino–Pearson), followed by statistical analyses (Spearman correlation, Kruskal–Wallis, and Mann–Whitney U tests) to evaluate differences in eye-movement relationships across participants categorized into wide, narrow, and average eye distance groups. This study is relevant to learning disabilities research, child and adult behavior analysis, and human–computer interaction, where eye data must remain accurate and reliable across different users. To address these concerns, this study posed the following research questions:

The remainder of this paper is organized as follows: Section 2 presents the proposed method, including subject selection, device specifications, data acquisition, and details of VR tasks. Section 3 presents the results and discusses the findings in detail. Finally, Section 4 summarizes the research by providing a conclusion and final remarks on the work conducted, along with suggestions for future work.

Methods

Participant Selection

We recruited 16 participants (10 male and 6 female) of different ethnicities from Soonchunhyang University; female participants were aged 21 to 27 years and male participants 23 to 30 years, and none had serious illnesses. Data were collected between February 12 and March 12, 2025, at Soonchunhyang University. The experimental protocols were reviewed and approved by the Institutional Review Board (IRB, reference number: 1040875-2024-09-010) for human subjects research and by the ethics committees of Soonchunhyang University. All methods were performed in accordance with relevant guidelines and regulations, including the Declaration of Helsinki. This study complied with the STROBE (Strengthening the Reporting of Observational Studies in Epidemiology) guidelines for reporting observational research. 19 Written informed consent was obtained from all participants prior to participation. All participants had normal or corrected-to-normal vision; the 4 participants who wore glasses achieved corrected-to-normal vision with their spectacles and thus met the inclusion criteria. Prior VR experience was not required. All participants were thoroughly briefed on the tasks before the experiment and performed an eye-calibration test to enhance their experience in the VR environment.

Apparatus and Software

VR applications were developed in Unity 2021.3.45f1. The stimuli were developed and run on a Windows 10 computer with an Intel(R) Core(TM) i7-8700K CPU @ 3.70 GHz, and the same computer rendered the VR content on the Meta Quest Pro, which was connected to the PC via Air Link. Data were recorded using the built-in tracking features of the Meta Quest Pro through the Meta XR All-in-One SDK (UPM), version 72.0.20,21 The Meta Quest Pro was selected because of its advanced eye and facial tracking capabilities, adjustable IPD settings, and increasing use in behavioral and cognitive VR research, making it well suited to assessing whether eye-movement data remain consistent across anatomical differences in inter-eye distance.

VR Tasks

This study aims to investigate the impact of inter-eye distances (interpupillary distance [IPD], intercanthal distance [ICD], and outercanthal distance [OCD]) on the accuracy of eye-movement feature extraction in a virtual reality environment using the Meta Quest Pro headset. To this end, 3 controlled tasks were designed in a virtual environment: fixed gaze with blinking, regular movement, and irregular movement.

Fixed Gaze and Blinking Task

In this task, participants were instructed to fixate on a virtual sphere displayed at a fixed position in the virtual environment for 30 s. During this period, participants were asked to blink 20 times, enabling the system to capture eye-closure features (EyesClosedL, EyesClosedR). The sphere was placed at a fixed distance of 1 m to keep experimental conditions uniform and served as the visual target during blinking. Figure 1a shows the virtual setup used to monitor eye blinks. Eye openness and closure were recorded continuously during the task. The study employed a within-subjects design in which each participant completed the same blink task. The VR camera was placed at the origin (0,0,0) of the Unity world coordinate system.

VR Tasks: (a) fixed gaze and blinking task and (b) spheres shown in different directions (left, right, up, down) at ±40° eccentricity relative to center.
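As an illustration of how the continuous eye-closure signals can be turned into a blink count for the fixed gaze task, the sketch below counts upward crossings of a closure threshold. It assumes a per-sample EyesClosedL or EyesClosedR series on the 0 to 1 scale used in the exported CSV files; the function name `count_blinks` and the 0.5 threshold are hypothetical choices, not the study’s procedure.

```python
import numpy as np

def count_blinks(eyes_closed, threshold=0.5):
    """Count blinks as upward crossings of an eye-closure threshold.

    eyes_closed: 1-D sequence of normalized closure weights in [0, 1]
    (e.g. an EyesClosedL column). The 0.5 threshold is an assumption.
    """
    closed = np.asarray(eyes_closed, dtype=float) >= threshold
    # A blink onset is a closed sample whose previous sample was open.
    onsets = closed[1:] & ~closed[:-1]
    # A recording that starts mid-blink counts that blink as well.
    return int(onsets.sum() + closed[0])

# Synthetic closure trace with two blinks (not study data).
signal = [0.0, 0.1, 0.9, 1.0, 0.2, 0.0, 0.8, 0.1]
print(count_blinks(signal))
```

Counting onsets rather than closed samples keeps a long blink from being counted several times; comparing the count with the 20 instructed blinks would be one simple sanity check on the recording.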

Irregular Movement Task

After the fixed gaze task, participants performed the irregular movement task, designed to evaluate vertical and horizontal eye movements through smooth and predictable gaze shifts across predefined targets. In this task, 4 spheres were displayed sequentially for 5 s in the up, down, left, and right directions relative to the central gaze position. The left and right spheres were positioned horizontally and the upper and lower spheres vertically, as shown in Figure 1b. Two of the stimuli were placed at the horizontal extremes: far left and far right. Participants were asked to keep their heads steady and use only their eyes to track the stimuli in the left, right, up, and down directions. This task examined the movement patterns of both eyes. Table 1 lists the extracted eye-movement features.
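To make the ±40° eccentricity in Figure 1b concrete, the following sketch computes where each target would sit in Unity world coordinates with the camera at the origin. It assumes Unity’s axis convention (+x right, +y up, +z forward) and a 1 m viewing distance carried over from the fixed gaze task; the irregular task’s target distance is not stated, and `target_position` is a hypothetical helper.

```python
import math

def target_position(direction, eccentricity_deg=40.0, distance_m=1.0):
    """Return an (x, y, z) position for a gaze target at a given eccentricity.

    Assumes the camera sits at the world origin looking along +z, with +x to
    the right and +y up. The 1 m distance is an assumption.
    """
    a = math.radians(eccentricity_deg)
    lateral = distance_m * math.sin(a)  # offset away from the line of sight
    depth = distance_m * math.cos(a)    # distance along the forward axis
    offsets = {
        "left": (-lateral, 0.0),
        "right": (lateral, 0.0),
        "up": (0.0, lateral),
        "down": (0.0, -lateral),
    }
    dx, dy = offsets[direction]
    return (dx, dy, depth)

for d in ("left", "right", "up", "down"):
    print(d, tuple(round(v, 3) for v in target_position(d)))
```

Placing targets on a sphere of constant radius keeps every target equally far from the eyes, so only the gaze angle changes between trials.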

Extracted Eye Movement Features.

Regular Movement Task

In the regular movement task, participants were asked to follow an object moving along a square or rectangular path in the virtual environment. Each object was displayed for 10 s. As in the fixed gaze and irregular movement tasks, a within-subjects design was used in which each participant completed the same movement task. All three tasks (fixed gaze, irregular movement, and regular movement) used a dark background in a non-reflective environment. Table 1 lists the extracted eye-movement features.
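A target moving on a square path can be generated by mapping elapsed time to distance travelled along the perimeter. The sketch below is hypothetical throughout: it assumes one lap per 10 s display period, a square of side 0.4 m centered on the line of sight 1 m ahead, and a clockwise direction starting at the top-left corner, none of which are specified in the study.

```python
def square_path_position(t, period_s=10.0, half_width=0.2, depth=1.0):
    """Position of a target moving clockwise around a square, seen from the camera.

    Hypothetical parameters: one full lap every `period_s` seconds and a square
    of side 2 * half_width metres centred on the line of sight at `depth` metres.
    """
    h = half_width
    s = (t % period_s) / period_s * 8 * h   # arc length travelled along the perimeter
    side = min(int(s // (2 * h)), 3)        # which of the 4 sides the target is on
    u = s - side * 2 * h                    # distance travelled along that side
    if side == 0:
        x, y = -h + u, h                    # top edge, moving right
    elif side == 1:
        x, y = h, h - u                     # right edge, moving down
    elif side == 2:
        x, y = h - u, -h                    # bottom edge, moving left
    else:
        x, y = -h, -h + u                   # left edge, moving up
    return (x, y, depth)

for t in (0.0, 2.5, 5.0, 7.5):
    print(t, square_path_position(t))
```

Parameterizing by arc length gives the target a constant speed, which keeps the required smooth-pursuit velocity the same on every side of the square.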

Procedure

Participants visited the lab individually, once each, to perform the VR tasks. Upon arrival, their inter-eye distances (interpupillary, outercanthal, and intercanthal distances) were measured with a measuring tape, a procedure shown to provide clinically acceptable accuracy for anatomical measurements.22,23

After the eye distances were recorded, participants were fitted with the Meta Quest Pro headset and guided through an eye calibration test. Participants were given a detailed description of the tasks to ensure the experiments ran smoothly. During the experiment, participants performed the tasks described in Section 2.3 (fixed gaze, irregular movement, and regular movement tasks). The fixed gaze and blinking task was used to extract eye-closure features, while the irregular and regular movement tasks were designed to extract eye-movement features (left, right, up, and down movements). The eye-movement and eye-blink data extracted from the Meta Quest Pro were stored as CSV files on a computer. Figure 2 shows the overall workflow of our approach, and Table 2 shows a sample of the eye-movement data in the CSV files. The values are normalized to a 0 to 1 scale and represent the eye movement recorded by the Meta Quest Pro.

Workflow of our approach.

Sample of Eye Movement Data Extracted from Meta Quest Pro.

A detailed statistical analysis was performed to analyze the impact of inter-eye distance on eye movement feature extraction. Statistical methods included normality tests, correlation analysis, and nonparametric tests to identify the relationships and differences between eye movement features for different eye distance groups (wide, average, and narrow) based on IPD, ICD, and OCD.
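The study does not state how the wide, average, and narrow groups were delimited. One plausible scheme, shown here purely as an assumption, splits each distance into tertiles with `pandas.qcut`; the helper name, column names, and IPD values are hypothetical.

```python
import pandas as pd

def assign_distance_groups(measurements, column):
    """Label each participant narrow / average / wide for one inter-eye distance.

    Assumption: groups are tertiles of the measured distance. The study does
    not report its cut-offs, so this is only one plausible scheme.
    """
    out = measurements.copy()  # leave the caller's table untouched
    out[f"{column}_group"] = pd.qcut(
        out[column], q=3, labels=["narrow", "average", "wide"]
    )
    return out

# Hypothetical IPD values in millimetres for nine participants (not study data).
df = pd.DataFrame({"participant": range(1, 10),
                   "IPD": [58, 60, 61, 62, 63, 64, 66, 67, 69]})
print(assign_distance_groups(df, "IPD"))
```

Quantile-based groups keep group sizes roughly balanced, which helps rank-based tests with small samples; fixed anatomical cut-offs would be an equally valid alternative.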

The normality of the extracted eye features was assessed using the D’Agostino–Pearson and Shapiro–Wilk tests. Spearman correlation was used to measure relationships between eye-movement features, particularly the consistency of eye closure between the two eyes and the correlations of horizontal and vertical eye movements. The Kruskal–Wallis test was used to determine whether eye-movement features differed significantly across eye distance groups (IPD, ICD, and OCD). Equation (1) gives the Kruskal–Wallis H statistic.

$$H = \frac{12}{N(N+1)} \sum_{i=1}^{k} \frac{R_i^{2}}{n_i} - 3(N+1) \qquad (1)$$

where $k$ denotes the number of groups, $n_i$ the number of observations in group $i$, $R_i$ the rank sum (the sum of the ranks assigned to the values in group $i$), and $N$ the total number of observations across all groups; the constant 12 is a scaling factor. To further investigate pairwise differences between the eye distance groups, the Mann–Whitney U test was performed. Figure 2 illustrates the overall flow of this study.
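The H statistic can be checked numerically. The sketch below computes H directly from pooled ranks and compares it with `scipy.stats.kruskal`; the correlation-like values are made up for illustration. SciPy additionally divides H by a tie-correction factor, so the two agree only when there are no tied values, which matters for normalized signals containing many exact zeros.

```python
import numpy as np
from scipy.stats import kruskal, rankdata

def kruskal_wallis_h(*groups):
    """Kruskal-Wallis H from ranks, as in equation (1), without tie correction."""
    data = np.concatenate([np.asarray(g, dtype=float) for g in groups])
    n_total = data.size
    ranks = rankdata(data)  # pooled ranks; tied values get average ranks
    rank_term, start = 0.0, 0
    for g in groups:
        n_i = len(g)
        r_i = ranks[start:start + n_i].sum()  # rank sum R_i of group i
        rank_term += r_i ** 2 / n_i
        start += n_i
    return 12.0 / (n_total * (n_total + 1)) * rank_term - 3 * (n_total + 1)

# Illustrative values only (not study data); no ties, so both results match.
wide, average, narrow = [0.91, 0.93, 0.95], [0.90, 0.92, 0.94], [0.89, 0.96, 0.97]
print(kruskal_wallis_h(wide, average, narrow), kruskal(wide, average, narrow).statistic)
```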

Results

Data Normality Test

D’Agostino’s K-squared and Shapiro–Wilk tests 24 were applied to the eye-movement features within the IPD, ICD, and OCD groups to determine whether the distribution of each feature was statistically normal. The results are presented in Table 3. For the Shapiro–Wilk test, a W statistic closer to 1 indicates normality, and P < .05 indicates that the data are not normally distributed. In our data, the P values of both tests were <.001 for all features and groups, indicating that none of the features followed a normal distribution. The D’Agostino test likewise produced large K² statistics, further confirming non-normality. Because all eye-movement features failed both normality tests, the nonparametric Mann–Whitney U and Kruskal–Wallis tests were appropriate. Likewise, Spearman correlation, which does not assume normality, was more appropriate than Pearson correlation for assessing relationships between features.
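Both normality checks map directly onto SciPy: `scipy.stats.shapiro` returns the W statistic, and `scipy.stats.normaltest` implements D’Agostino and Pearson’s K² test, which combines skewness and kurtosis. The sketch applies both to a synthetic, right-skewed sample standing in for a 0 to 1 gaze weight; the helper name is hypothetical and the data are not from the study.

```python
import numpy as np
from scipy import stats

def normality_report(values, alpha=0.05):
    """Run Shapiro-Wilk and D'Agostino-Pearson tests on one feature."""
    x = np.asarray(values, dtype=float)
    w, p_sw = stats.shapiro(x)
    k2, p_dp = stats.normaltest(x)  # D'Agostino-Pearson K^2 (skewness + kurtosis)
    return {
        "W": w, "p_shapiro": p_sw,
        "K2": k2, "p_dagostino": p_dp,
        # Treat the feature as normal only if neither test rejects at alpha.
        "normal": bool(p_sw >= alpha and p_dp >= alpha),
    }

# Synthetic, right-skewed stand-in for a normalized gaze weight (not study data).
rng = np.random.default_rng(0)
print(normality_report(rng.beta(0.5, 5.0, size=400)))
```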

Shapiro–Wilk and D’Agostino–Pearson Normality Checks.

Spearman Correlation Analysis

Spearman correlation analysis was performed to analyze the relationship between the extracted eye movement features across different eye distances, including OCD, IPD, and ICD.

Interpupillary Distance

In the IPD analysis, synchronization of downward gaze between the two eyes was highly consistent across all groups (wide, narrow, and average), indicating that downward gaze tracking was not affected by interpupillary distance. Upward and downward gaze movements were negatively correlated in all IPD groups (EyesLookUpL vs EyesLookDownL, −0.68 to −0.82; EyesLookUpR vs EyesLookDownR, −0.67 to −0.83), reflecting the natural opposition of vertical eye movements regardless of interpupillary distance.

Bilateral same-direction eye movements, measured by the correlations of EyesLookLeftL with EyesLookLeftR and of EyesLookRightL with EyesLookRightR, showed uniformly high synchronization across all IPD groups. For left gaze, the highest correlation was found in the wide IPD group (ρ = .93), followed closely by the average (ρ = .92) and narrow (ρ = .90) groups, indicating that binocular left-gaze movements are robustly aligned across a range of facial anatomies. Right gaze produced the strongest correlation in the narrow group (ρ = .94), with slightly lower values in the average (ρ = .93) and wide (ρ = .93) groups. These results suggest that interpupillary distance does not substantially impair coordinated eye movement and that IPD variation has minimal impact on the accuracy of eye-movement feature extraction with the Meta Quest Pro. Table 4 shows the Spearman correlations for the average, narrow, and wide IPD groups.
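A per-group version of this bilateral analysis could look like the sketch below. It assumes a table with one row per sample, a group column, and the Table 1 feature names as columns; the helper `bilateral_spearman` and the column layout are assumptions, and only the feature names come from the study.

```python
import numpy as np
import pandas as pd
from scipy.stats import spearmanr

# Left/right feature pairs, named as in Table 1.
PAIRS = [("EyesLookLeftL", "EyesLookLeftR"), ("EyesLookRightL", "EyesLookRightR"),
         ("EyesLookUpL", "EyesLookUpR"), ("EyesLookDownL", "EyesLookDownR"),
         ("EyesClosedL", "EyesClosedR")]

def bilateral_spearman(frame, group_col):
    """Spearman rho between left- and right-eye versions of each feature, per group."""
    rows = []
    for group, sub in frame.groupby(group_col, observed=True):
        for left, right in PAIRS:
            if left in sub.columns and right in sub.columns:
                rho, p = spearmanr(sub[left], sub[right])
                rows.append({"group": group, "pair": f"{left} vs {right}",
                             "rho": rho, "p": p})
    return pd.DataFrame(rows)

# Synthetic example: the right-eye signal is a noisy copy of the left-eye signal.
rng = np.random.default_rng(0)
left = rng.uniform(0, 1, 200)
demo = pd.DataFrame({
    "IPD_group": np.repeat(["narrow", "average", "wide"], [60, 70, 70]),
    "EyesLookLeftL": left,
    "EyesLookLeftR": np.clip(left + rng.normal(0, 0.05, 200), 0, 1),
})
print(bilateral_spearman(demo, "IPD_group"))
```

Because Spearman’s ρ depends only on ranks, a monotonic but nonlinear relationship between the two eyes’ signals still yields a high coefficient.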

Spearman Correlation of Interpupillary Distances Between Eyes.

Intercanthal Distance

The intercanthal distance (ICD) is the horizontal distance between the inner corners of the eyes. Analysis of eye-movement features in relation to ICD showed that synchronization of EyesLookDownL and EyesLookDownR remained highly stable across all ICD groups, indicating that variations in inner eye spacing did not affect downward gaze tracking with the Meta Quest Pro. The correlation for left gaze between the two eyes (EyesLookLeftL vs EyesLookLeftR) was highest in the wide and narrow ICD groups (ρ = .93), indicating highly stable synchronization, and slightly lower in the average ICD group (ρ = .91). Upward and downward eye movements were negatively correlated in all groups (wide, average, and narrow), reflecting the natural opposition of vertical eye movements. Blink synchronization was more stable in the wide ICD group than in the narrow and average groups, although this difference was not statistically significant.

Overall, the Spearman correlation analysis suggests that ICD variations do not significantly affect eye-movement tracking or the accuracy of eye-based feature extraction with the Meta Quest Pro. Table 5 shows the Spearman correlations for the wide, average, and narrow ICD groups.

Spearman Correlation of Intercanthal Distances Between Eyes.

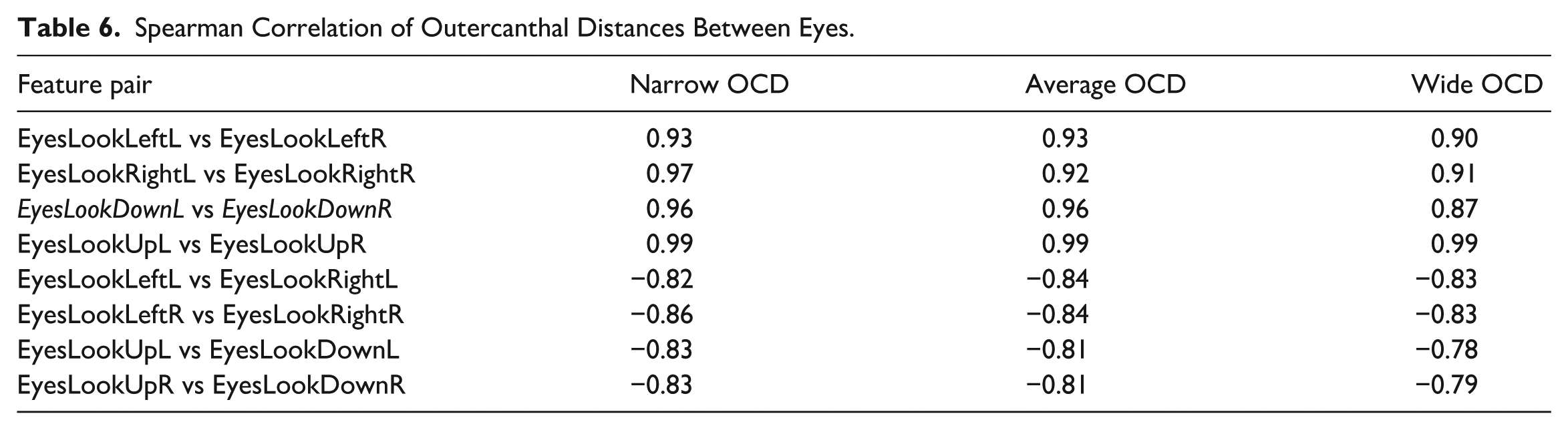

Outercanthal Distance

Outercanthal distance (OCD) is the distance between the outer corners of the eyes and is an important parameter for extracting eye-movement features from the Meta Quest Pro. Downward gaze tracking was not influenced by outercanthal distance: downward gaze synchronization between the two eyes (EyesLookDownL and EyesLookDownR) showed consistently high correlations in all OCD groups. Upward and downward eye movements were negatively correlated (−0.70 to −0.84) in all OCD groups, consistent with natural eye-movement behavior. Blink coordination (EyesClosedL and EyesClosedR) was highest in the average OCD group, whereas the narrow group showed more variability than the average and wide groups.

Across all OCD groups, binocular coordination during horizontal eye movements was consistently high, with little difference between groups. The narrow OCD group showed the strongest synchronization during right gaze (EyesLookRightL vs EyesLookRightR, ρ = .97), while the average and narrow OCD groups showed slightly higher coordination during left gaze (EyesLookLeftL vs EyesLookLeftR, ρ = .93). These differences were small, indicating that horizontal eye-movement consistency remained robust. These results suggest that variations in OCD do not significantly influence the system’s ability to capture eye movements accurately. Table 6 shows the Spearman correlations for the wide, average, and narrow OCD groups.

Spearman Correlation of Outercanthal Distances Between Eyes.

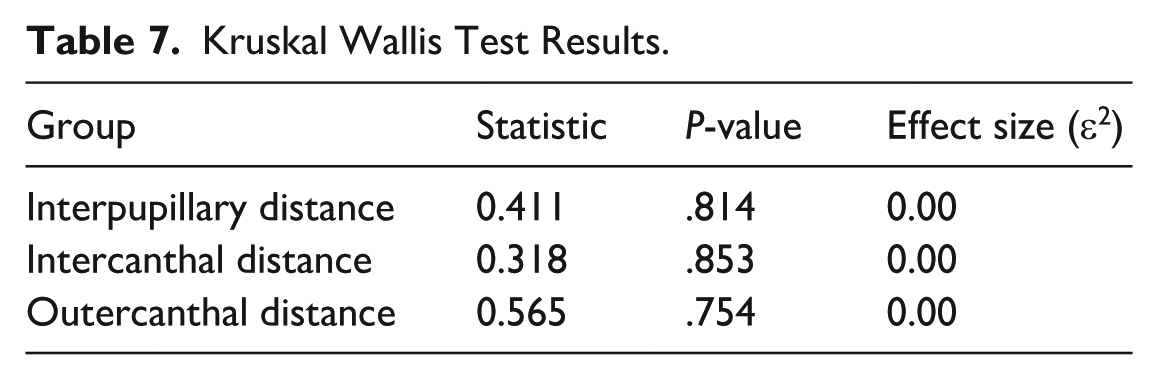

Kruskal–Wallis and Mann–Whitney U Tests

To further investigate the influence of OCD, IPD, and ICD differences on the extraction of eye-movement features from the Meta Quest Pro, and to check for missing or incorrect data, the Kruskal–Wallis test was conducted. This nonparametric test assessed whether eye-movement feature relations differed significantly among the wide, narrow, and average groups within each distance category. The P values for IPD, ICD, and OCD were .814, .853, and .754, respectively. Because all P values exceeded .05, there were no statistically significant differences in eye-movement feature relations among the wide, narrow, and average groups. The corresponding effect sizes (≈0.00) indicate negligible group differences, suggesting consistent trends across IPD, ICD, and OCD groups and supporting the reliability of the Meta Quest Pro. However, the small sample size may limit the ability to detect subtle or moderate effects. Because this study was designed as a preliminary study, future studies will include an a priori power analysis and a larger, more diverse sample. Table 7 summarizes the Kruskal–Wallis test results. These findings indicate that variations in inter-eye distance did not significantly influence eye-movement feature extraction with the Meta Quest Pro.
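The effect-size measure behind the ≈0.00 values is not named. One common choice for the Kruskal–Wallis test is eta squared based on H, η²_H = (H − k + 1)/(N − k), where k is the number of groups and N the total number of observations. The sketch below assumes that measure and clips small negative values to 0, a reporting convention rather than anything stated in the study; the helper name is hypothetical.

```python
from scipy.stats import kruskal

def kruskal_with_effect_size(*groups):
    """Kruskal-Wallis test plus eta-squared based on H: (H - k + 1) / (N - k)."""
    result = kruskal(*groups)
    k = len(groups)
    n_total = sum(len(g) for g in groups)
    eta_sq = (result.statistic - k + 1) / (n_total - k)
    # H below k - 1 gives a negative estimate; report it as no effect.
    return result.statistic, result.pvalue, max(eta_sq, 0.0)

# Illustrative values only (not study data).
print(kruskal_with_effect_size([0.91, 0.93, 0.95], [0.90, 0.92, 0.94], [0.89, 0.96, 0.97]))
```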

Kruskal–Wallis Test Results.

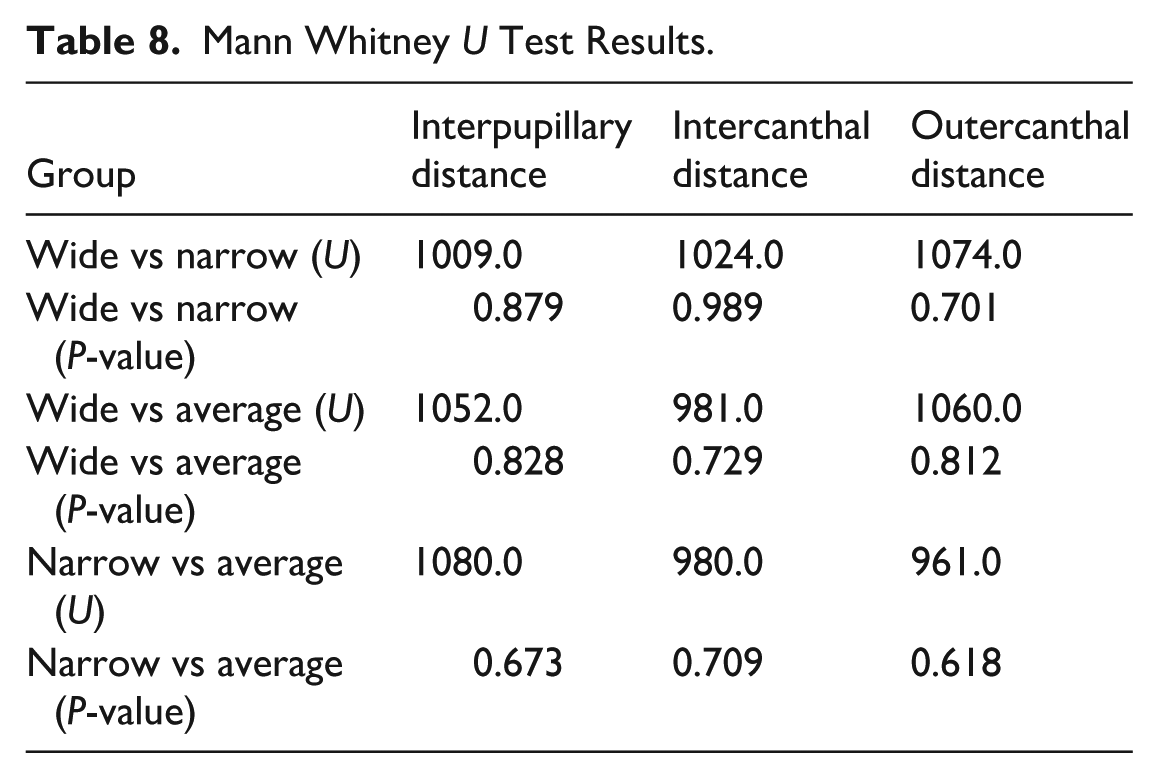

For further validation, the Mann–Whitney U test was used for pairwise group comparisons (wide vs narrow, wide vs average, and narrow vs average) within each distance category (IPD, OCD, and ICD). All comparisons yielded P > .05, indicating no significant differences between groups. The Mann–Whitney U results are therefore consistent with the Kruskal–Wallis results: the distributions did not differ significantly across the wide, narrow, and average groups, indicating that inter-eye distance does not significantly influence eye-movement feature extraction from the Meta Quest Pro. Table 8 presents the Mann–Whitney U test results.
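Pairwise comparisons of this kind can be run with `scipy.stats.mannwhitneyu` over every pair of groups. The study does not mention a multiple-comparison correction, so the Bonferroni-adjusted P value in this sketch is an optional extra; the function name, input layout, and values are hypothetical.

```python
from itertools import combinations
from scipy.stats import mannwhitneyu

def pairwise_mann_whitney(groups):
    """Two-sided Mann-Whitney U tests for every pair of named groups.

    `groups` maps a label (e.g. "wide") to a 1-D sequence of values. The
    Bonferroni-adjusted p-value is an optional extra, not taken from the study.
    """
    pairs = list(combinations(groups, 2))  # e.g. (wide, average), (wide, narrow), ...
    results = []
    for a, b in pairs:
        res = mannwhitneyu(groups[a], groups[b], alternative="two-sided")
        results.append({"pair": f"{a} vs {b}", "U": res.statistic, "p": res.pvalue,
                        "p_bonferroni": min(1.0, res.pvalue * len(pairs))})
    return results

# Illustrative values only (not study data).
for row in pairwise_mann_whitney({"wide": [0.91, 0.93, 0.95, 0.92],
                                  "average": [0.90, 0.92, 0.94, 0.93],
                                  "narrow": [0.89, 0.96, 0.97, 0.90]}):
    print(row)
```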

Mann–Whitney U Test Results.

Angular Error

To analyze whether anatomical differences influence gaze performance in VR, angular error was calculated for each group (Table 9). Performance was consistent across all categories (IPD, ICD, and OCD), with angular error ranging from 1.1° to 1.35°, and the Kruskal–Wallis test showed no group effect on angular error (P > .05). The stable angular error values indicate reliable feature extraction by the Meta Quest Pro. These features include gaze direction signals such as EyesLookLeftL, EyesLookLeftR, EyesLookRightL, EyesLookRightR, EyesLookUpL, EyesLookUpR, EyesLookDownL, and EyesLookDownR, as well as the eye-closure signals EyesClosedL and EyesClosedR.
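The study does not describe how angular error was computed. A standard definition is the angle between the recorded gaze direction and the vector from the eye to the target. The sketch below assumes per-sample gaze direction vectors and target positions expressed in the same coordinate frame (for example, Unity world space with the camera at the origin); the function name is hypothetical.

```python
import numpy as np

def angular_error_deg(gaze_dirs, target_positions, eye_origin=(0.0, 0.0, 0.0)):
    """Mean angle in degrees between gaze direction vectors and eye-to-target vectors."""
    g = np.asarray(gaze_dirs, dtype=float)
    t = np.asarray(target_positions, dtype=float) - np.asarray(eye_origin, dtype=float)
    g = g / np.linalg.norm(g, axis=1, keepdims=True)  # unit gaze directions
    t = t / np.linalg.norm(t, axis=1, keepdims=True)  # unit directions to targets
    # Clip guards against rounding pushing the cosine slightly outside [-1, 1].
    cos_angle = np.clip(np.sum(g * t, axis=1), -1.0, 1.0)
    return float(np.degrees(np.arccos(cos_angle)).mean())

# Gaze pointing straight ahead while the target sits 1 m ahead and 2 cm to the right.
print(angular_error_deg([[0.0, 0.0, 1.0]], [[0.02, 0.0, 1.0]]))
```

Working with unit vectors makes the error independent of target distance, so targets at different depths can be averaged on the same angular scale.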

Spatial Accuracy (Angular Error) for Anatomical Groups.

Conclusion

This preliminary study analyzed the impact of variations in inter-eye distance (IPD, ICD, and OCD) on the accuracy of eye-movement feature extraction (EyesLookLeftL, EyesLookLeftR, EyesLookRightL, EyesLookRightR, EyesLookUpL, EyesLookUpR, EyesLookDownL, and EyesLookDownR) using the Meta Quest Pro. Participants performed fixed gaze, regular movement, and irregular movement tasks in a VR environment to evaluate the consistency of eye movements across different eye anatomies. This study answered the following research questions.

Spearman’s correlation analysis was conducted to answer the research questions, followed by Kruskal–Wallis and Mann–Whitney U tests comparing eye-movement features across groups categorized as wide, narrow, or average in IPD, ICD, and OCD. The results revealed no significant influence of inter-eye distance on eye-movement tracking accuracy. These findings indicate that eye-movement relationships remain stable across different eye distances, demonstrating the robustness of the Meta Quest Pro in capturing reliable eye-movement data. The Meta Quest Pro therefore appears suitable for applications involving child and adult behavior monitoring, where reliable eye-movement data are important for assessing attention and emotional states, and for educational VR, where accurate data are important for measuring student focus. Our study supports the reliability of VR devices, particularly the Meta Quest Pro, in these areas and contributes to research in human–computer interaction, behavior analysis, and learning disabilities.

This preliminary study has several limitations that point to areas for future research. First, the sample size was small. The limited number of participants allowed detailed observation, high experimental precision, and reduced variability, and the sample included both male and female participants, but the overall number of participants remains small; the present study is therefore regarded as a pilot study. Similarly, the study involved a limited age group. This range was selected to minimize inter-individual variability, enhance experimental control, and ensure consistency in cognitive and behavioral responses, given that reaction time, attentional regulation, and visual processing speed are known to vary with age. Future research should include more diverse participants across a wider age range, including children. Second, an a priori power analysis was not conducted because of subject recruitment limitations and because the study was designed as a pilot study to assess the feasibility of the Meta Quest Pro. Future studies should include an a priori power analysis to determine the sample size required to achieve adequate power for detecting effects of interest. Third, only the Meta Quest Pro was evaluated. Although it provided useful insights, its results should be validated against other commercially available eye-tracking systems to improve generalizability. Fourth, system latency was not measured or analyzed. Future studies could quantitatively assess latency across different VR devices to understand the effect of delay on data quality. Fifth, future studies should examine the impact of wearing eyeglasses on accuracy. Sixth, future studies could incorporate multimodal integration, for example by combining eye tracking with behavioral and physiological data, for a richer understanding of user interaction in VR environments.

Footnotes

Acknowledgements

We are grateful to all the participants in the study for their contributions.

Ethical Considerations

The experimental protocols were reviewed and approved by the Institutional Review Board (IRB, reference number: 1040875-2024-09-010) for human subjects research and by the ethics committees of Soonchunhyang University. All methods in the study were performed in accordance with relevant guidelines and regulations.

Consent to Participate

Written informed consent was obtained from all participants prior to participation.

Consent for Publication

Not applicable. This article does not contain any individual’s data in any form (including images, videos, or details).

Author Contributions

Conceptualization: S.A.N.R.; Methodology: S.A.N.R., J.W.; Software: S.A.N.R.; Formal analysis: S.A.N.R., S.-A.L., G.L., J.W.; Writing – original draft preparation: S.A.N.R., J.W.; Writing – review and editing: G.L., S.-A.L., S.S., Y.C., J.-H.P., Y.N.; Supervision: Y.C., Y.N.; Project administration: Y.N.; Funding acquisition: Y.N. S.A.N.R. and J.W. contributed equally to this work. All authors approved the final version of the manuscript.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the National Research Foundation of Korea (NRF) grant funded by the Korea government (MSIT) (No. RS-2023-00218176) and the Soonchunhyang University Research Fund.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data Availability Statement

All data are available from the corresponding author upon request.