Abstract

In this article, we outline an original, creative method for capturing the multifaceted ways in which digital technologies shape social life. We outline a framework for engaging participants in creative writing and drawing techniques to support ‘meeting and greeting’ their ‘algorithmic selves’. Algorithmic selves offer datafied reflections of individuals’ social media use, represented through platform approximated advertising categories. These categories include identities, such as ‘female’ or ‘male’, and marketing interests as ‘dog lovers’, ‘first time buyers’ or ‘feminists’. Our method builds on Les Back’s calls for ‘a more artful form of sociology’ that is able to think with technology. By using algorithmic selves to mobilise creative enquiry in this way, we argue that researchers can better discern how technology users make sense of their data, the ways in which identity can be co-constructed by social media platforms, and how our interactions with technology ultimately shape social lives in meaningful and highly affective ways. Our method offers a craft-based framework for understanding imaginations, associations and connections with data profiling, and making these understandings available for participant reflection and researcher analysis. This method can also support research participants in taking creative ownership and building agency around their interactions with social media platforms.

Keywords

Introduction

In this article, we outline an original, creative method for capturing the multifaceted ways in which digital technologies shape social life. We have developed our approach through a series of public research workshops, entitled ‘Algorithmic Autobiographies and Fictions’, in which we use creative writing and drawing techniques to support social media users to ‘meet and greet’ their ‘algorithmic selves’. Our method builds on calls for ‘a more artful form of sociology’ that is able to think with technology (Back, 2012, p. 20). To do so, we repurpose commercially collected social media data that claim to identify and manage users’ identities, such as ‘female’ or ‘male’, and marketing interests as ‘dog lovers’, ‘first time buyers’ or ‘feminists’. Commercially collected data do not tell a holistic story alone – we understand these data to be ‘lively’, called to being through imagination and interpretation (Back & Puwar, 2012). Thus, our method looks to capture individuals’ experiences of these data, reflecting on how these data produce social meaning and, ultimately, how these data shape participation in social life. Our approach fits within a broadened definition of digital sociological methods, specifically one which advocates for the critical and reflective use of digital platforms in qualitative social enquiry (Gangneux, 2019).

In this article, we use build on notions of algorithmic and databased selfhood (Cheney-Lippold, 2011; Jarrett, 2014; Pasquale, 2015) to describe the ‘algorithmic self’ generated by targeted profiling systems: a reflection of individuals’ social media use, represented through platform approximated advertising interests. Users’ selected advertising interests are public and accessible on many top-tier social media platforms in line with recent ‘transparency’ initiatives. These ‘ad interest categories’ are represented by a word or phrase, and include objects, brand names, behaviours, activities, religion and relationship statuses. These interests offer datafied formations of selfhood that not only computationally mirror or represent individuals but also reshape individuals’ online experiences – by determining how their profiles are brokered by first and third parties, and thereby how they experience social media platforms (Cheney-Lippold, 2017; Jarrett, 2014). By using algorithmic selves to mobilise creative enquiry in this way, we argue that researchers can better discern how technology users make sense of their data, the ways in which identity can be co-constructed by social media platforms, and how our interactions with technology ultimately shape social lives in meaningful and highly affective ways.

Locating the self

Cheney-Lippold defines ‘algorithmic identities’ (Cheney-Lippold, 2011) as commercial and proprietary interpretations generated from the ‘fragments of data’ created by individuals’ online activities. Algorithmic identities are ‘rewritable, partially erasable and fully modulatory’ (Cheney-Lippold, 2017, p. 192), although these complex processes are not always obvious as software design elides the messy, contradictory nature of algorithmic decision making (Amoore, 2019). In practice, technologies produce multiple narratives that can be ‘endlessly repatterned and retold so as to produce a multiplicity of meanings’ (Jacobsen, 2022, p. 1091). In this vein, algorithmic selves are in a constant state of becoming, with many potential outcomes and possible futures.

Our approach to the algorithmic self recognises individuals’ role in co-producing their approximated data categorisations, through their own interpretation and reflection on their categorised identity. In this vein, the algorithmic self draws from feminist interpretations of Foucault’s ‘techniques of the self’, in which ‘top-down’ readings of power are supplemented with ‘an analysis of how the individual comes to understand [themselves] as a subject’ (McNay, 1992, p. 49) and ‘seek[s] to interpret their experiences’ (McNay, 1992, p. 52). In this sense, we argue algorithmic selves are based on social media data (just-in-time commercial advertising categorisations), which are constantly reworked and reframed, and brought to life through individuals’ subjective reflections. These reflections are highly mutable based on individuals’ emotions, experiences and contexts.

In our workshops, we have chosen to invite participants to meet their ‘algorithmic self’ for two reasons. Firstly, we seek to reject the offputtingly technical or even dystopian perspectives found in some contemporary critical privacy literature. For example, in her much discussed book Surveillance Capitalism, Zuboff writes that social media’s ‘commercial surveillance’ project ‘produces a psychic numbing that inures us to the realities of being tracked, parsed, mined and modified’ and eventually ‘leaves us singing in our chains’ (Zuboff, 2019, p. 11). To be sure, we do understand surveillance capitalism as a coherent and pressing threat to many strains of social life. We also note that social media users are increasingly aware that their personal data are used by platforms for targeted marketing purposes and to make profit, and are reluctantly resigned to the fact that if they want to use social media then they must allow their data to be collected (Ofcom, 2020). Recent research suggests that (contrary to its aims) the EU’s attempts to increase public understanding of data tracking through the GDPR have resulted in a kind of ‘cookie fatigue’ wherein users feel overwhelmed by the huge amount of privacy information they are expected to understand and consent to (Kelion, 2018). Users are fatigued – but they also routinely discuss, imagine, theorise around and develop affective relationships with the technology that they use (Bishop, 2019; Bucher, 2018; Kant, 2020). Fatigue is not the end of the conversation, and ‘singing in our chains’ presents an uncomfortable and inaccurate metaphor. In our workshop we use the concept of the algorithmic self to support participants in taking creative ownership and building agency around their interactions with social media platforms.

A second reason for centring the algorithmic self is to prompt users’ understandings of their place within mutually shaped ‘human–data assemblages’, namely the changing relationships engendered by and with participants’ data (Lupton, 2020). Drawing the algorithmic self allows users to articulate complex technical topics which can be difficult to put into words. Writing about meeting their algorithmic self allows participants to construct, and take ownership of their own data narratives. In both creative practices, participants combine different parts of their collected data to show their perceptions, representations and understanding. This aspect of the workshop is important, as we recognise that there is a continued need to account for the ways that data capture is often based on ‘colonial lineage’, which is experienced in a highly unequal way under imperial capital (Arora, 2019, p. 371; Benjamin, 2019).

With these inequalities in mind, our approach to data profiling gives participants room to communicate their relationships with their data using their own diverse context, narratives and voices. Our workshop is designed to restructure participants’ understanding away from ‘top-down’ approaches, which calcify technology producers as experts and users as passive victims.

We open this article by reflecting on the methods which have inspired our examination of data capture, including sociological techniques for ‘listening in’ to users’ understandings, theories and approaches to technology use. We then outline how our approach has been augmented by feminist artistic research methods and autobiographical writing methods. We offer a guide for running the research workshop, specifying instructions for identifying social media advertising data, reflecting on these data, and how to support participants in building their algorithmic selves. We explain how to structure tasks in which participants draw and write with their algorithmic selves. We then offer a reflection on how to facilitate participant sharing sessions through the process of ‘collective biography’ (Gonick et al., 2011), followed by introducing some strategies for analysing the pictures and stories that participants produce. We conclude with ethical considerations, and future directions for this workshop and our wider methodology.

We have developed this workshop based on our own research projects: firstly on the ways users build technological understanding through knowledge sharing and gossip (Bishop, 2019, 2020), and secondly on how the anticipation and perception of advertising personalisation shape users’ sense of selves (Kant, 2020). Over three years, we have run these workshops in a variety of settings to over 200 participants, including to bleary-eyed revellers on a Saturday morning at a popular music festival, in undergraduate/postgraduate modules at both our universities, and to colleagues at critical media studies conferences. Running the session to these audiences (in addition to online/offline) has informed the flexibility and adaptability of the workshop design. There are many ways that our workshop can be developed and extended based on different research questions and projects. In the following article we present our reflections on how we have designed and run the workshop thus far, including some examples of participant contributions for illustrative purposes.

Researching social media platforms

Social media platforms specialise in ‘microtargeting’ users with advertising based on identity and interest categories. Some of these categories may have been self-identified by users, but many are algorithmically approximated (Bucher, 2021). It is notable that the ‘how ads work on Facebook’ resource page includes no mention of algorithms (Facebook, 2022). Instead, there is simply a list of data used to inform ad targeting. This list of data sources includes demographic information, location information, activity on and off the Facebook platforms, and information from ‘partners’ – which, as van der Vlist and Helmond (2021) show, include data intermediaries, brokers and marketplaces.

We consider our approach to be ‘algorithmic’ for two reasons. Firstly, algorithms are the step-by-step recipes that guide particular outcomes, and are often used to make sense of heterogeneous data in commercial contexts (Bucher, 2018). In this vein, algorithms are used to bring Facebook ad data together. Secondly, it is relevant to note that we take an ‘algorithms as culture’ approach in this article. In other words we recognise algorithms ‘not as stable objects interacted with from many perspectives, but as the manifold consequences of a variety of human practices’ (Seaver, 2017, p. 4). In this sense, we use our method and workshop to creatively capture the everyday confusion, contradictions and processes surrounding algorithmic media.

Ultimately, individuals cannot know how their data profiles are constituted: commercial data profiling and targeting are currently deployed in computational and legislative opacity (Trott et al., 2021). Although it is tempting to be overwhelmed by this opacity, it can also serve as an obstructive distraction or a ‘red herring’ for those looking to research algorithmic technology (Bucher, 2018). After all, though they may be transparent, such profiles continue to constitute ‘algorithmic identities’ (Cheney-Lippold, 2017, p. 5), ‘database subjects’ (Jarrett, 2014, p. 27) that change the material constitution of users’ daily experiences (Kant, 2020). Instead of seeing algorithms and technology as a ‘black box’ to shine light into, we can see these technologies as ‘eventful’ by examining the many ways they shape experience (Bucher, 2018). In other words, those looking to examine our mutually-shaped interactions with technology should look to creative and speculative methods that will ‘make the algorithm speak’ (Bucher, 2018, p. 60). Researchers have approached these technologies using a number of strategic, creative, methodological strategies, which we consider in the following section. Each has informed the design of our workshop and wider methodology.

Our workshop method builds heavily on qualitative research which has ‘listened in’ to the ideas, understandings and stories that users develop about how technologies work (Christin, 2020a; DeVito et al., 2017; Eslami et al., 2016). Everyday users’ ideas are often defined as folk theories: namely, intuitive responses that help people process and make sense of complex phenomena (Gelman & Legare, 2011). In her field-leading research, Bucher interviewed ‘ordinary users’ who had publicly tweeted missives and observations about the Facebook algorithm. These individuals held strong beliefs about how Facebook worked, enlivened by an ‘algorithmic imaginary’ informing ‘what algorithms are, what they should be, how they function and what these imaginations in turn make possible’ (Bucher, 2017, p. 40). Although it is often technically impossible to untangle how social media platforms work, folk theories and imaginaries are a valuable source of data on how technology impacts everyday life, as these theories guide how users orientate their engagement with the social media platforms (Bishop, 2019). In this vein, existing research has focused on how software is mutually created, solidified and enlivened by human and non-human actors ‘by focusing on the waves and ripples’ that take place between algorithms and social actors (Christin, 2020b, p. 906). Social media data can also be used directly in qualitative research processes: Gangneux (2019), for instance, used interview participants’ Facebook ‘Search History’ as a probe to facilitate reflection and discussion in interviews. This use of ‘lively’ social media data in qualitative sociological research promotes the generation of ‘thick data’ on the many ways in which the digital impacts our everyday social engagements.

‘Listening in’ to folk theories often reveals that individuals’ engagement with technology is highly emotional, embodied and affective. For example, Ytre-Arne and Moe (2021) found algorithms were perceived by social media users as helpful (at times), but also experienced them as irritating and world-narrowing. Other research has shown that women using social media found that their targeted ads are based on reductive or sexist stereotypes, making them feel ‘bored’ or ‘infuriated’ (Ruckenstein & Granroth, 2020, p. 19). Siles et al.’s (2020) work on Spotify’s algorithms has shown that users can both find them to be helpful in catering to their interests ‘like a little buddy’, but also over-familiar and intrusive, even drawing comparisons to a ‘stalker’. In further work, Büchi et al. (2021) found that Facebook users were surprised at the inaccuracy of their data when confronted with their ad interests. Their experiences often stood in direct contradiction to the sophisticated and powerful representations of Facebook in media (Büchi et al., 2021). The authors refer to these sentiments as ‘algorithmic disillusionment’ in that ‘algorithms appear less powerful and useful but more fallible and inaccurate than previously thought’ (Buchi et al., 2021, p. 2).

Kennedy (2020) argues that time and again, users’ articulations of profiling do not match their use of social media: users say that they condemn profiling to be privacy-invading and yet continue to use and indeed embrace privacy-invading services. Kant (2020) found similar contradictions at work in how users express their engagement with targeting algorithms as both convenient but creepy. We highlight these not to ‘blame’ users for expressing contractions – instead, as Kennedy stresses, such findings suggest that we must find new means to equip both researchers and the public with a more complex vocabulary in datafication to resolve this contradiction. Although our work has mostly been orientated within the UK, we recognise that global experiences of technology offer different structures, languages and attitudes around privacy (Arora, 2019).

Research suggests that improving individuals’ ‘algorithmic literacy’ may be a way to reframe public vocabulary of targeting (Henderson et al., 2020). ‘Algorithmic literacy’ (Swart, 2021) builds on ‘data literacy’ (Kennedy et al., 2015) to focus specifically on educating individuals in algorithmic processes in ways that can further ‘users’ awareness, knowledge, imaginaries, and tactics around algorithms’ (Swart, 2021, p. 2). This qualitatively-grounded research into users’ engagement with targeting/profiling algorithms has so far largely relied on established data collection methods of interviewing (Bucher, 2016; Ruckenstein & Granroth, 2020), walkthroughs/participant observation (Swart, 2021) and surveying (Büchi et al., 2021). Taken together, these approaches capture technology users’ diverse and contradictory emotions and theories. However, we seek to build on methodological design that supports participants in developing agency in their ongoing relationships with technology. Lupton’s (2020) ‘thinking with care’ response to research invites participants to create their own ‘data persona’ through prompts. In so doing, data become detached ‘from a technical and intangible phenomenon that can be difficult to conceptualise’ and towards ‘an embodied phenomenon’ which centres research participants (Lupton, 2020, p. 3177). This feminist approach can resist techno-deterministic, ‘top-down’ approaches to studying data, algorithms and social media platforms.

Our workshops seek to build on this qualitative work by integrating methodologies that do not rely on discourses of educating users, or understanding their knowledge of datafication. In light of Kennedy and Kant’s sentiments that people don’t yet quite know how to talk about targeting algorithms, we argue there is a pressing need to demystify profiling algorithms through innovative, creative, engaging – and indeed fun – ways that can open up new avenues for algorithmic understanding.

Feminist and artistic methodologies

Social media platforms have deepened material inequalities for those experiencing intersecting forms of marginalisation (Noble, 2018). As our workshop looks to users’ embodied experience of these social media platforms, our workshop naturally finds its roots in feminist theory and activism (D’Ignazio & Klein, 2020).

Because feminist research is often marginalised in academia, researchers have had the (dubious) ‘freedom’ to embrace strategies for knowledge creation that are viewed by the academy as less ‘scientific’ (McRobbie, 1982; Skeggs, 1995). In this manner, feminist projects have long incorporated creative and artistic methods. Creative methods also support participants in articulating themes which can be more ‘emotionally entangled’ and ‘fleshy’ (Gunaratnam & Hamilton, 2017). Art therefore becomes useful in feminist research as it supports the communication and translation of complex emotional responses to intersecting inequalities. Indeed, as Audrey Lorde puts it, ‘poetry is the way we give name to the nameless so it can be thought’ (Lorde, 2007, p. 37). Similarly, Sandra Weber argues that images can be used to ‘capture the ineffable, the hard to put into words’ (Weber, 2008, p. 44). Creative methodologies prompt imaginative thinking; they offer a pathway to make the familiar strange.

Creative methods can also offer a useful prompt for elucidating unfamiliar or complex research topics, in which participants may not feel confident about using or defining ‘technical’ language. Human–Computer Interaction (HCI) literature shows a strong precedent for using drawing methodologies. For example, Siles et al. (2020) used ‘rich pictures’ as a research technique: they invited research participants to create diagrams depicting how they believe the Spotify algorithm works. Vertesi et al. (2016) invited individuals to draw maps of their data use and management, prompting these participants to remember and articulate the use of manifold devices, systems and processes. Poole and Peyton (2013) have invited teen research participants to create ‘video collages’ and ‘mash ups’ which help them to articulate their experiences around health and health management. Methodologies rooted in creative composition offer an important prompt for the discussion of folk theories, but are limited in their concentration on the technical process, eliding how technology is co-constructed through social life. In our workshop we focus on creative drawing prompts which allow for flexible, interpretative self-representation that reveal the ‘character signifiers’ that people associate with identity formations (Wood et al., 2020). During the workshop, our participants see their own supposed interests reflected back at them – they are confronted with an algorithmic self which is co-constructed by software. By reflecting on this information about their identity provided via the platform, they use artistic practice to ‘adopt someone else’s gaze, see someone else’s point of view’ as an important point for comparison (Weber, 2008, p. 45). We note the specific ways in which identities are translated into images in later sections, in which we outline our method.

Though creative writing and drawing might seem distinctly ‘analogue’, we consider these workshops to be critically ‘digital’. ‘Digital methods’ is a broad term that ‘can be defined as the repurposing of the inscriptions generated by digital media for the study of collective phenomena’ (Venturini et al., 2018, p. 4195). Such methods can involve the design of software or tools to support researchers’ understanding of internet and social media cultures, but also often include the ‘repurposing’ of ‘natively digital objects’ (Rogers, 2013, p. 1). In our workshops we take a qualitative approach to repurposing social media ad settings as digital objects. Though this repurposing requires no coding knowledge, network analysis or software building, we argue our method is indeed ‘digital’ because firstly, it follows other qualitative methods – such as Light et al.’s (2018) walkthrough method – in the systemic analysis of digital media platforms and tools to extend social-political and cultural critique. Secondly, in framing our method as ‘digital’ we look to disrupt popular data-positivist discourses that assume computational analytics to be the most ‘valid’ methods for elucidating the complexities of digital everyday life.

Algorithmic autobiography and fiction

Creative non-fiction and ethnographic storytelling have historically been an important methodological resource in sociology (Barone, 1995a), because creative writing reveals narrative constructs that frame identity in everyday life (Atkinson, 2008). Such narrative constructs are detectable in Ad Preference profiles: social media users are characterised as ‘male’, ‘female’, ‘dog lover’, ‘house owner’. They are categorised as interested in ‘humour’, ‘horror films’, ‘American football’, in ways that construct a narrative that tells users’ ongoing, datafied life story – one sold to advertisers and presented to users in their ad profile. In asking participants to write and draw about their algorithmic identities (both visualising them and meeting them, as we explore below), we ask our participants to engage in a form of autobiography or autofiction: ‘a self-produced text’ that tells a story, in full or in part, about its writer’s life (Gunzenhauser, 2001).

Unlike other forms of autobiography, our workshops focus on automated autobiographies: (auto)biographies in part written by algorithmic infrastructures. Iaconesi and Persico (2016) note that the ‘auto’ in autobiography takes on a double meaning in the context of algorithmic profiling: ‘auto, because [user profiles] are automatically collected. And Auto, because we produce and express these bits of memory ourselves, in our daily lives, through our ordinary performances, like entries in an ubiquitous diary’ (Iaconesi & Persico, 2016). Our project looks to take these questions of agency, authenticity and algorithmic autobiography through user-friendly writing and drawing practices. As Smith notes, such practices ‘reveal agency or the desire for agency because they show how meanings are created for people, how people create meaning for themselves and how people engage with the world around them’ (2001, p. 28). Autobiographical writing and drawing can help create meaning-making for research participants (Couser, 2001) but can also reveal the ‘slipperiness of the truth’ (Gunzenhauser, 2001, p. 77) about how the world is made meaningful – and in the case of data profiling, how users’ lifeworlds are made meaningful and commodifiable through social media data. Our workshops thus expose the apparent ‘truth value’ (Couser, 2001) of targeting data in ways that acknowledge that algorithmic identities are co-constitutionally ‘written’ by the actions of users themselves, but also algorithmic, commercially-driven actors.

Back notes that live, responsive methodologies must expand the ‘vantage point for social observation’, and where possible, advocate for the generation of multiple vantage points (Back, 2012, p. 34). In line with this argument, our participants’ imaginary creates an ‘as if’ world (Barone, 1995b) that is at once credible, but shows how the author selects, structures and combines different elements to show their perceptions, representations and understanding. We wanted to encourage participants to critically engage with the fact that their ad settings are ‘personal’ to them, creating norms in our everyday ‘media life’ wherein we may have come to ‘see and identify ourselves through the “eyes” of the algorithm’ (Bucher, 2017, pp. 34–35).

Setting up the workshop

We have run the Algorithmic Autobiographies and Fictions workshops in diverse spaces, including tents at music festivals, conference rooms at city festivals, and in university rooms for undergraduate and postgraduate teaching. In setting up the workshops, we follow Bourdieu’s (1989) invitation to reflect on the relationship between physical space and power relations. Where possible, we have organised the workshop space to encourage participants to connect and collaborate through group seating. Fostering opportunities for social interactions between participants can therefore make for an important aspect of the research workshop, countering participants’ experiences of social media data as individualising and alienating. As feminist researchers, we have built the importance of talk into the workshop (McRobbie, 1982). Encouraging collaboration between participants opens up space for discussion, humour and reflection, which can foster solidarity, and opportunities for collaborative learning.

We have run the workshop for between 1.5 and 2 hours, but longer sessions could be beneficial. Practically, the materials required to run the workshop include a projector for the introductory presentations, tables or hard surfaces to support writing and drawing, and artistic materials such as pens and paper. Over periods of lockdown, we have run this workshop online over video conferencing software such as Zoom. Although the possibilities for collaboration can be more limited in a digital context, online workshops can increase participation from inaccessible groups outside of urban centres, or for those with caring responsibilities (Shamsuddin et al., 2021). For example, in 2020 we ran the workshop digitally for the South African National Arts Festival from our homes in the UK. Attendees from all over South Africa and beyond ended up taking part.

Introducing key concepts

We open the workshop with a short introductory presentation that clarifies common questions about cookies and online privacy, and targeted advertising. Of course, researchers can tailor the introductory materials to their own interests. We have built the ‘live’ and ‘crafty’ nature of our research (Back & Puwar, 2012) into the introductory materials. For example, we communicate the overwhelming, confusing and intrusive nature of cookie notices by showing screen grabbed examples from journalistic and other well-known sites. We seek to explain key definitions related to privacy, data collection and profiling in a clear and accessible manner. For example, we use The Daily Mash’s cookie notice, which simply says ‘whatever’ (Dailymash, 2021) to highlight the humorous yet dismissive nature of public tendency towards the overwhelming requests for consent that the BBC has described as ‘cookie fatigue’ (Kelion, 2018).

Following our introduction to the key definitions, we support participants in locating their own advertising data. We give participants the option to access their ‘ad preferences’ data on either Facebook, Instagram or Google through public facing pages that are commonly defined as ‘advertising transparency resources’. Through these resources, platforms provide users with information about the categories used by advertisers to target them. These ‘ad interest categories’ are represented by a word or phrase, and include objects, brand names, behaviours, activities, religion and relationship statuses. In order to make participants comfortable and lead by example, we offer participants some of our own examples from Facebook, which include ‘yoga’, ‘Western Christianity’ and ‘friends of men who have a birthday in less than 30 days’. Looking to our Instagram ‘ad interests’, our examples include ‘street fashion’, ‘gin’ and ‘motor vehicle’. Finally, Google allows users to see their ‘ad personalisation’; here we found ‘dairy and eggs’, ‘in a relationship’ and ‘condiments and dressings’. We offer workshops participants a handout which outlines step-by-step instructions to help them find their ad interests on all three social media platforms. Social media platforms change the location or titles of these resources regularly. Researchers should therefore review participant handouts for accuracy before each session.

Missing data

In this section we will briefly address questions surrounding the veracity of ad targeting data. Researchers have noted that ad targeting data are often missing in platform transparency resources (Edelson et al., 2018). In addition, many platforms’ explanations about precisely how ad data have been gathered are also often incomplete or vague (Leerssen et al., 2019). For example, Facebook’s ‘ad interest’ categories exclude data purchased from data brokers, which means ‘users have no way of knowing what data broker attributes advertisers can use to target them’, even though evidence suggests these data are routinely used in ad targeting on the platform (Andreou et al., 2018). More recently, a Facebook blog promotes the fact that transparency resources are being improved by the company:

Now, you’ll see more detailed targeting, including the interests or categories that matched you with a specific ad. It will also be clearer where that information came from (e.g. the website you may have visited or Page you may have liked), and we’ll highlight controls you can use to easily adjust your experience. (Facebook, 2019)

While Facebook might be praised for striving to improve these tools, this decision was made at Facebook’s discretion, and could change at any time. Platforms grant or restrict access to data for institutions and researchers; in this vein they are becoming key political intermediaries (Dommett, 2021).

The reliance on platforms to provide workshop data raises a methodological question – what happens if these data are made unavailable? How significant is it that ad interests are proprietary, limited or inaccurate? In answering these questions, we recognise that platforms’ data gatekeeping function has serious implications for researchers, and other democratic actors such as journalists and policymakers (Dommett, 2021). Yet, we also note that in the face of data instability, the very fact of missing data can become an important research finding, and prompt for critical reflection. We take the experience of one workshop participant, Hannah, 1 as an example. Hannah described herself as an avid Instagram user during the workshop. However, when she looked at her transparency resources, she did not have any listed ‘ad interests’ – the page was blank. This finding prompted a group reflection on why, sharing theories that varied from the cultural to the technical. Participants discussed use of ad blockers, Hannah’s contradictory interests (her ‘girliness’ and video game hobbies), and her early Instagram adoption, as she had opened her account before the application had been purchased by Facebook. Hannah discussed and developed her understanding of her ad targeting – even though meaningful data about her targeted interests were missing. This example suggests that our workshop could offer a creative avenue to support research into ad transparency, in the face of the volatility of research resources provided by social media platforms.

On occasion participants do not have a social media presence (or a Google account), and therefore cannot obtain their targeted data via the platforms that we cover in the workshop. In this case, we invite participants to reflect on their targeted ads on search engines, news websites and other online sites. Participants reflected scholarly findings that those who do not have social media profiles will still find it nearly impossible to withdraw themselves from online data collection (Cheney-Lippold, 2017). Participants using search engines or visiting news websites will be followed by ads for homeware they have searched for, for confusing clickbait selling ‘buttless pyjamas’ (Wodinsky, 2020), for cryptocurrency brokers or funeral planning services. These ads are the product of anticipated advertising interests (Skeggs & Yuill, 2019). They give clues to data brokers’ and ad networks’ perception of who web users are, and what they want, and are therefore entirely sufficient to be utilised within the workshop. Though grounded in memory recall rather than ‘factual’ data profiles, we found that inviting ad reflection still led participants to acknowledge their place within wider targeting systems. These responses bode well for the use of this method to reflect on algorithmic curation and targeting beyond advertising data, such as search results or TikTok ‘for you’ pages.

From missing data to endless ad interests

In the next part of the workshop, we ask participants to spend a short time reviewing their ‘ad interests’ (or supplementary ad data) and encourage them to write down their initial thoughts, feelings and ideas. For this part of the workshop we are led by Light et al.’s (2018) interdisciplinary ‘walkthrough’ method, which involves a guided step by step research approach for engaging with software. As ‘walking methodologies’ loosely guide social exploration (O’Neill & Roberts, 2019), the walkthrough method offers a framework for users to scrutinise software slowly, and to develop field notes based on the images, instructions, notifications and nudges they encounter. This method is particularly suitable for our workshop as it blends research approaches used in STS and cultural studies to support users in analysing how software design is translated into social meaning. Upon interacting with an application or software, the walkthrough method invites users to note the ‘connotations and cultural associations’ that technology invokes (Light et al., 2018, p. 892). In this vein, we encourage users to reflect on their ad data and how it is represented to them, making notes on the connections and emotions that data evoke.

It is particularly important to give participants the space to reflect on their interests during the workshop because, somewhat contrary to the issues associated with data opacity, social media users’ lists of ad topics are often extremely long and unwieldy. For example, as of late 2021 Sophie had over 550 distinct ad interests on Instagram, over 200 on Google and over 210 on Facebook, whilst Tanya had over 540 ad interest categories on Facebook, and over 170 on Google. Participants’ selection and representation of their ad interests is thus highly subjective, which is then reflected in their creative outputs. As participants pick and suture their ad interests to form their algorithmic self, we recall the feminist methodological metaphor of building a ‘patchwork quilt’, in which the process of selection and representation becomes an important part of a methodology itself (Koelsch, 2012). Feminist methodologies are designed to reconfigure top-down power structures, and recognise ‘identity and personal relationships as multiple, fluid, and layered’ (Vacchelli & Peyrefitte, 2018, p. 2). Through selecting ad interests that stand out to them, participants co-construct narratives around their digital identities, informed by their own identities, contexts and voices.

Participants select their ad topics for various reasons. Existing internet studies research finds that some social media users feel legitimised by ad topics that positively reflect their sense of self, categorising some interests as particularly ‘good’ to have (Kant, 2021). Other research shows that Facebook users found distinct ad topics to be particularly creepy, as if they were taken from private conversations (Büchi et al., 2021). In running the workshops, we have found that participants selected ad topics because they were accurate, inaccurate, emotional, funny, aspirational or private – among many other reasons. The motivations for selection are often highlighted in the drawing and writing sessions. This creative selective process also helped reveal participants’ ‘algorithmic disillusionment’ with their ad settings – despite, or indeed because, of their extensiveness (Büchi et al., 2021). Through artistic methods participants have space to communicate the complex ways in which identity and social media merge, as they are reflected through their algorithmic selves.

Drawing algorithmic selves

As outlined in our introductory sections, drawing is a method that helps to capture experiences, explanations and feelings that can be difficult to explain or hard to put into words. In this vein, we open with the drawing session, to support participants in ‘thinking through’ the ad topics data they have located and reviewed. We offer some questions to participants as prompts to draw their algorithmic self. These include: ‘what are their interests?’, ‘do they look like you?’, ‘do they have the same gender identity as you?’, ‘where are they, what kind of surroundings do they inhabit?’ We emphasise that ‘no artistic skill is required’ to alleviate nervousness that participants may have about their level of ability or experience. Many participants draw their algorithmic self as a version of how they look in ‘real life’, albeit taking on attributes and interests that are representative of their ad data. They may draw themselves skateboarding, or wearing the aspirational designer clothes listed in their ad topics. Others draw a collection of ad interests, perhaps arranged within a physical space like a bedroom. Participants sometimes engage in more abstract representations like animals, spirits or monsters.

We have also found that participants often augment their drawings with text. Text is used to verbally emphasise particular points and themes, and to anchor particular intentions and meaning in their artwork. In this vein, algorithmic self drawing is a ‘live methodology’ as participants creatively add notes, captions, dialogue and annotations (Back & Puwar, 2012). One workshop participant, Lucy, drew her algorithmic self asking ‘which apple should I eat today, Gucci or Prada?’ This dialogue playfully emphasises the two ad interests that stood out to Lucy in her data – designer fashion and health and wellness. We encourage researchers to take note of how participants use annotations; they are a way for participants to ‘layer’ meaning into artistic representation, and to add context and connections to their artistic representations (Balmer, 2021). They are used by participants to signpost particular interpretations of participants’ work.

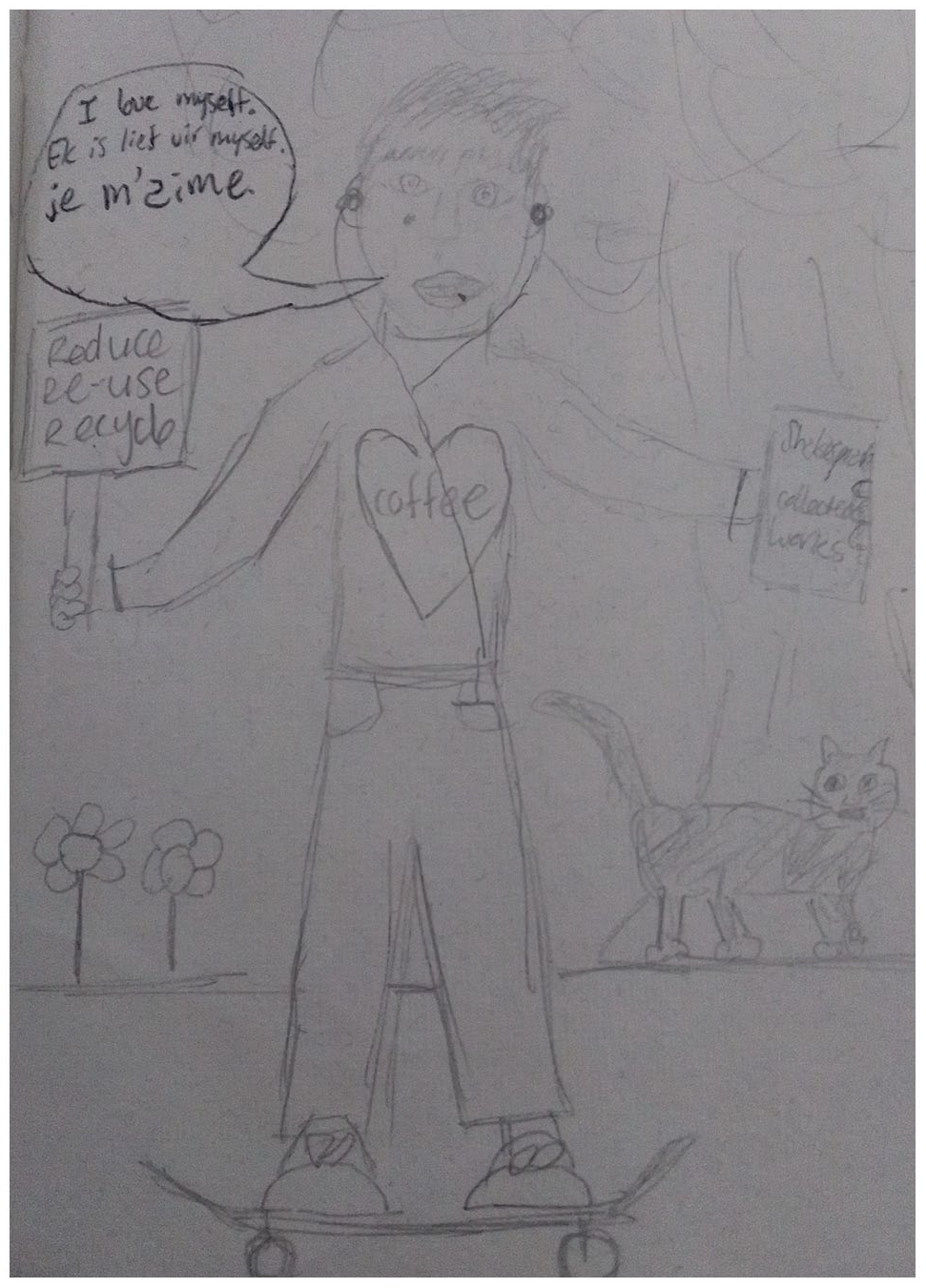

Talk, via speech bubbles, can also be used to emphasise the representation of spoken language in ad data. For example, Daniel, a South African workshop participant, drew his algorithmic self saying ‘I love myself’ in English, in addition to ‘ich is lief vir myself’ a direct translation of this sentiment in Afrikans (Image 1). Data tracking is experienced differently across geographic and linguistic contexts: our algorithmic selves speak multiple languages. English language is baked into social media platform design, and many indigenous, or so-called minority languages must negotiate under-representation on social media platforms (Lee, 2017). Moreover, users may choose to speak in different languages on social media to orientate their self-presentations to particular audiences, for example for family, or for friends. This can mean algorithmic selves look different across platforms. Another participant, Josie, drew a speech bubble next to her ‘Instagram’ algorithmic self, exclaiming ‘I like the language!’ in Korean. On the other hand, her ‘Facebook’ algorithmic self was surrounded by dollar signs with a very different speech bubble – ‘Designer only darling’ – written in English. We explore the relationship between language and advertising transparency resources in more depth in other work (Kant & Bishop, forthcoming). However, we briefly raise this point here to demonstrate the importance of considering attending to the textual layers within the drawings. Participants creatively stitch together fragments of data capture, to communicate their complex identities and experiences within the workshop.

Daniel’s drawing.

Writing with algorithmic selves

In our second workshop task, we ask participants to write about meeting their algorithmic self. We leave the choice of writing genre open to workshop participants; it can take the form of a short story, a play, a poem, or a scripted conversation. We ask participants to think about a meeting in order to prompt reflection about the consistencies, contradictions and connections between their own self-narratives and their algorithmic selves. Herein we are inspired by the use of the ‘story completion method’ which involves ‘providing a story stem’ involving fictional characters (Lupton, 2021). Participants are asked to write what happened next. This method is particularly useful as it encourages participants to think both ‘creatively and speculatively’ about the entanglements between the human and non-human (Lupton, 2021, p. 69). As participants construct a narrative in which they imagine meeting their algorithmic selves, they can recognise their potentiality and their limitations, and indeed how they can be cared for.

Engaging with autobiographical writing invites participants to reflect on how all data are collected through subjective practices (Letherby, 2002). In other words, reflecting on an algorithmic self opens space for participants to question the veracity and slippery truth of social media data, and to reflect on the conditions of its collection. Recent scholarship has suggested that we now live under ‘surveillance capitalism’, in which ‘people become visible, knowable, and shareable in a new way’ (Zuboff, 2015, p. 77). The writing task in this workshop offers a space to reflect on the capacities, but also the limits of this knowledge. As Back (2012) observes, sociologists must think critically about private corporations’ claims around the comprehensiveness and veracity of the data they collect and sell.

As participants write about their algorithmic selves, they bring to life the inconsistent and mutable nature of ad interest data. One workshop participant showed the contradictory nature of her ad data through writing a story in which her ‘Instagram self’ has an argument with her ‘Facebook self’. She reflects on the congruity of these algorithmic selves by referring to them as ‘sisters’, yet their interests and temperaments are materially distinct:

‘Are you even listening to me?’ Facebook self wailed. ‘If I don’t have these clothes I’ll be nothing!’. ‘Maybe you should address that in yourself’ Instagram Self replied, unmoved by the irony of the fact that her own personality was constricted by little other than the most recent books she had read, an intense foreboding anxiety and a too early reading of Chris Kraus’s I love Dick.

In this vein, creative writing and drawing allows participants a way to subvert and negotiate the ‘truth expectations’ that we expected from gatekeepers of knowledge (McWatt, 2021). Creative writing and drawing reveal the complexities of ad profiles as both ‘anchored selves’ and ‘abundant selves’ (Szulc, 2019): forms of selfhood that are both fixed and made infinitely questionable through targeting profiles. In forms of autobiographical writing, the idea of a holistic self is questioned: ‘the possibility of a cohesive self and the ability either to know or to tell the “truth” about such a self’ (Gunzenhauser, 2001, p. 77). Indeed, participants playfully engage with creative methods to challenge the legitimacy of data profiles’ ostensive scientific truths. The truthfulness of data has been challenged as a form of ideological positivism – despite what data profiles say, the numbers never ‘speak for themselves’ and data are not raw or neutral (boyd & Crawford, 2012, pp. 665–666). Ad setting profiles are curated and structured to look and feel like fact. Our methodology can support participants in engaging with data creatively and flexibly. Writing and drawing can be used as prompts to delve deeper into what social truths are being told. In the case of our enquiry, participants question how social media platforms are selling their identities and interests to marketers. They often used the exact same data to tell new and (equally absurd) stories.

Sharing and collaborative biography

During this part of the workshop, participants present their creative work to other attendees, who respond with thoughts and reflections. This structure is informed by the feminist methodology of ‘collective biography’ in which stories and memories are shared with a group, and then revised based on feedback from group members (Gonick et al., 2011). Firstly, this practice is rooted in the feminist mandate to enable a ‘dynamic rapport of mutual support and co-production’ (Vacchelli & Peyrefitte, 2018, p. 2). We feel it is important that our workshop offers space for a collaborative response, particularly given the highly individualistic nature of ad interests. Secondly, this practice allows participants to support each other in making sense of their advertising data, and to reflect on how this connects to their own identities and technology use. As participants read out their work, others may offer folk theories about how this version of their algorithmic selves may have been inferred by platforms, or why particular ad interests are either concurrent or unusual within the group. Folk theories can augment the feelings, thoughts and theories raised during creative work with an important sense making strategy (Eslami et al., 2016; Ytre-Arne & Moe, 2021). Despite participants not being ‘expert’ users, the theories shared are often imaginative, speculative and technically sophisticated, showing the many ways in which boundaries around system ‘experts’ are often arbitrary.

It has been important for us to participate in the exercises as workshop facilitators. We would encourage other researchers running workshops to do the same. We do this to prompt reflexivity about the research process, and minimise researchers’ artistry in distancing themselves from the research process as ‘observers’ (Ayrton, 2020). We do not want to shy away from the ways that we co-construct this experience as we enact it (Back, 2012). We decided that we as the authors/researchers should also share our personal stories during the workshop so this contributed to situate us in a more equal position with the research participants. Furthermore, despite repeating the exercises with each workshop, the changing nature of ad settings and our own ‘algorithmic imaginaries’ (Bucher, 2016) mean that our own writings and drawings are different every time – a consideration that could be critically explored in ongoing workshops with the same groups of participants.

Analysis (drawings)

In this section, we offer brief guidance on taking apart participants’ creative work in order to make these research objects salient and ready for critical analysis. As we have noted earlier in this article, we collect (or take pictures of) participants’ drawings and stories at the end of research workshops. In analysing drawings, we have turned to approaches used in systems research, particularly Bell et al.’s (2019) methods for interpreting rich pictures. Their approach adds a formalised layer for analysing creative research objects, as researchers are encouraged to break down participants’ drawings into a grid of nine segments (Bell et al., 2019). These segments are then examined for iconography, metaphors, colours and text. In our approach, we have coded these segments using grounded theory – in which patterns are identified and extrapolated in order to identify conceptual categories (Thornberg & Charmaz, 2014). Once identified, these categories are constantly checked and refined, and are later integrated into a theoretical framework (Thornberg & Charmaz, 2014). Examples of our coded categories include emotions (love, anxiety, fear), hobbies (baking, yoga), nature (plants, trees),and politics (environmental activism, political media).

We believe that this systematic approach fruitfully dovetails with analytical approaches from sociological and cultural studies. Guided by the latter, we have analysed images and stories to identify how participants produce and represent their own subjectivity and selfhood using language and cultural repertoire (Gray, 2002). In this vein, it is important for the researcher to pay attention to both ‘content’ and ‘form’ – in other words, attending to what research participants are saying, and how they are saying it (Gray, 2002, p. 132).

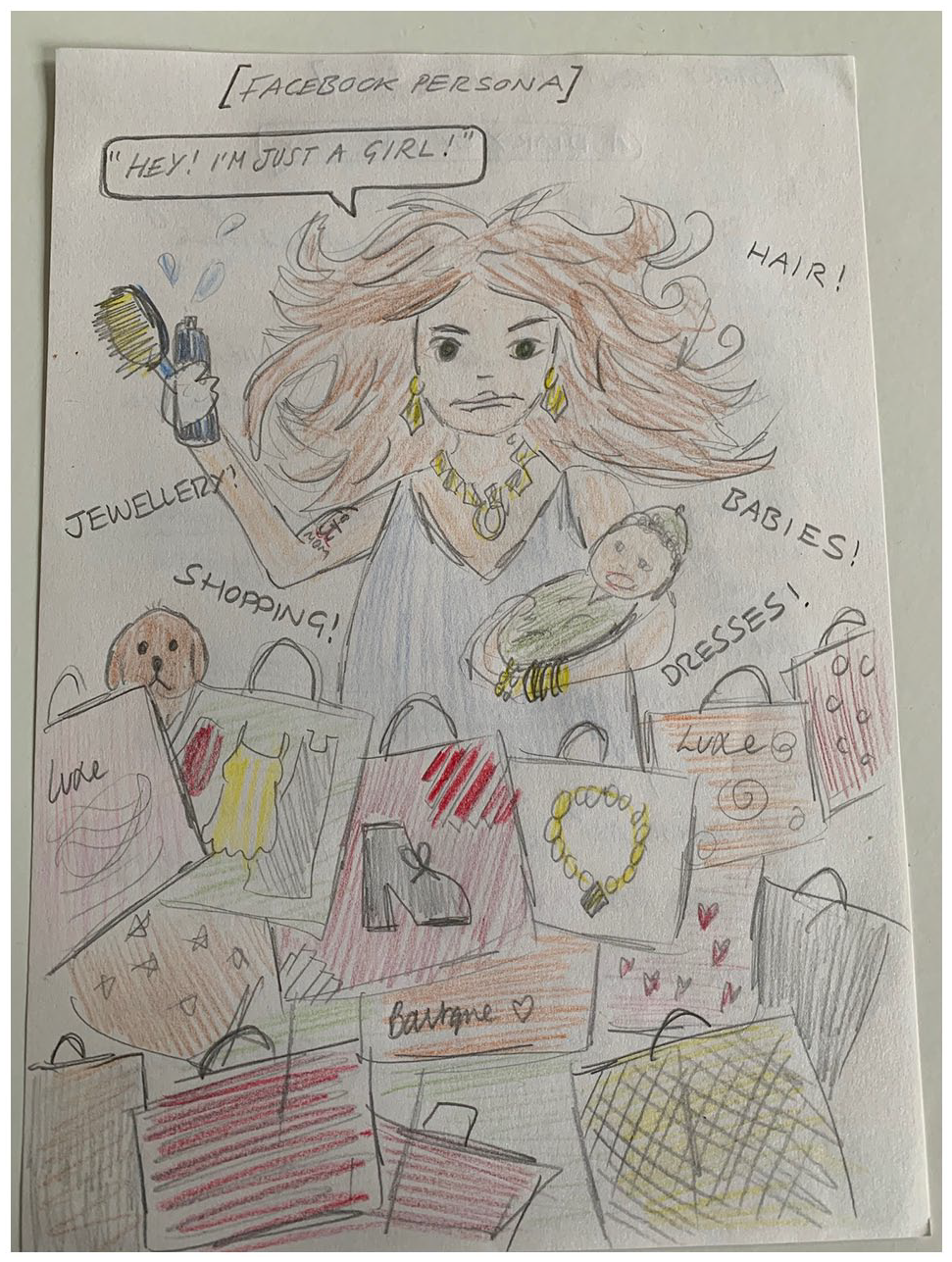

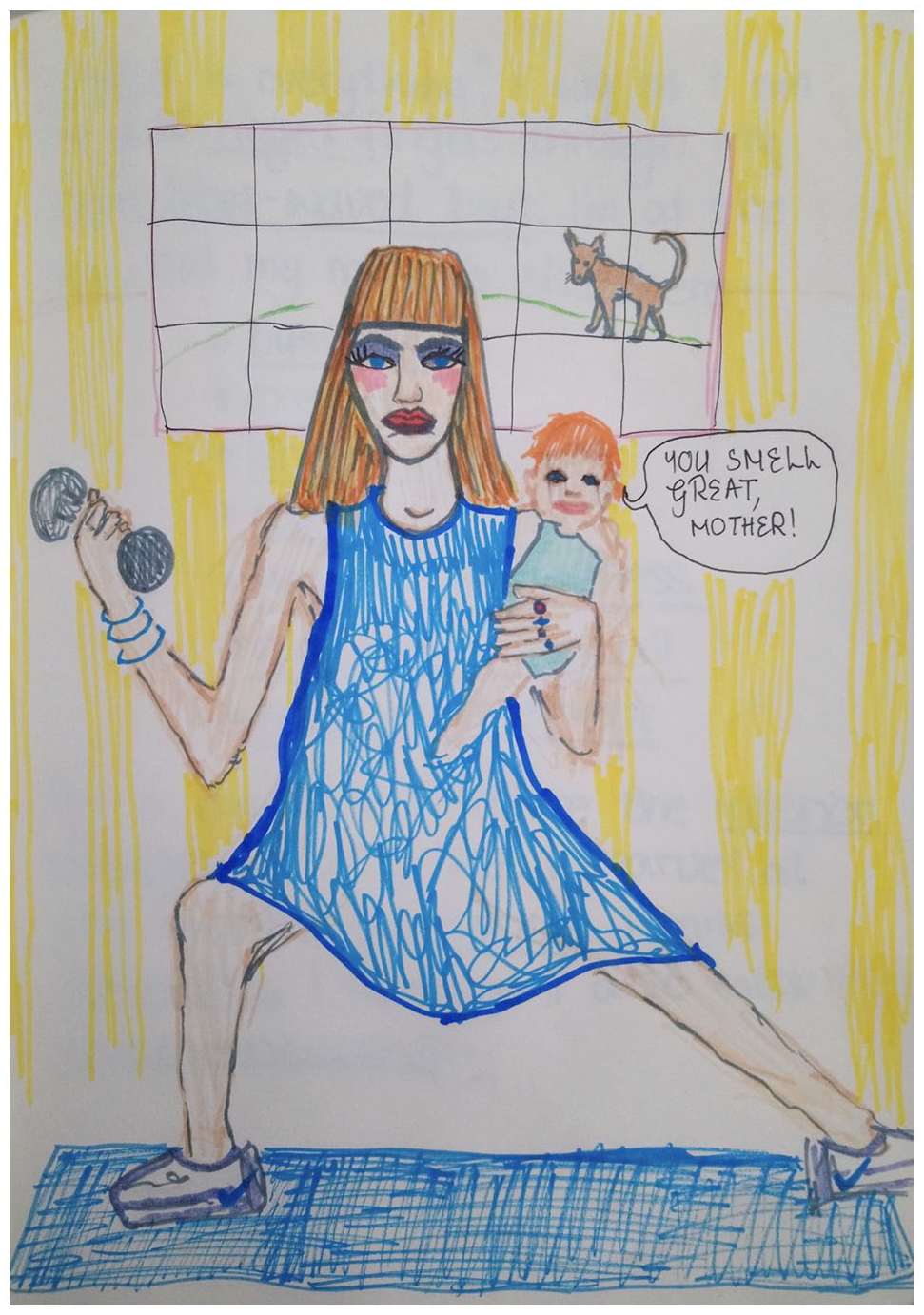

To illustrate our approach, we briefly can briefly consider one preliminary analysis of images produced during an academic conference, in which the majority of participants identified as women. In examining content, we noted that a frequent form of iconography used were stereotypical representations of femininity, and particularly babies and motherhood. This theme was made particularly meaningful, as images of babies were often represented next to a plethora other interests, responsibilities and themes. For example, Kylie’s algorithmic self cradles a baby, amongst overwhelming and overlapping depictions of hairstyling and luxury shopping bags (Image 2). Jessica’s algorithmic self straddles a baby on her hip, while weightlifting in a dress and full makeup (Image 3).

Kylie.

Jessica.

As these symbols of femininity were tangled together, we turned to critiques of unmanageable expectations within neoliberal femininity – the impossibility of ‘having it all’ (Gill, 2007; Gillis & Hollows, 2009; McRobbie, 2015) . We were able to tease out how participants negotiate humorous responses to stereotypical, normative and gendered categorisation which has been shown in previous work to ‘interpellate users’ as ‘fertile subjects’ (Reed & Kant, 2022). Although algorithmic identities are immutable and constantly reshaped (Cheney-Lippold, 2017), familiar biological pressures followed these women around, and in turn became an outsized feature of their algorithmic self.

Secondly, in attending to form, we can see that participants often use colour to add texture to their illustrations. For example Jessica used bright primary colours in her drawing to underscore the highly gendered nature of her ad interests. She used vivid blues and yellows in a manner evocative of the 1950s archetype of the ‘Stepford wife’. In this vein, we note that colour can be a ‘hot line to emotion’ within artistic methodologies (Baker, 2003, p. 188). Jessica also drew herself with heavy makeup in the picture; the clashing colours make her algorithmic self seem almost clown-like in nature. Her makeup appears humorous, but also a little sinister, giving an uncanny edge to her artwork, and signals her discomfort with the ad interests. Not only, then, can we reflect on how participants are recognised and categorised by Facebook advertising as mothers, or interested in motherhood, but we can capture how affective responses to ad data shapes participants’ drawings.

Analysis (stories)

We turn to discourse analysis in order to interpret participants’ stories. A feminist application of discourse analysis recognises the constructed nature of all social life, including categories such as the self – stories ‘reflect contemporary discourses’ which participants use to ‘make sense of experience’ (Kitzinger & Powell, 1995, p. 350). In a similar approach to the analysis of pictures, we break drawings apart into fragments, which can be regrouped into themes. One of our common themes was ‘mutual shaping’, namely instances in which we noted that participants discussed how ad interests shaped how they saw themselves. Through drawing and writing, participants could question the truth-value of ad settings, and their ability to accurately represent participants’ complex, lived selfhood. For example, Zara reflects on her algorithmic self with ambivalence in the following poem:

I am depicted as mindless, unfeeling and vain. Consuming luxury, devouring fame. I am empty and superficial. Stocking up on weight loss drugs and shame I am well-dressed but not well liked. I only have the Facebook algorithm (and myself) to blame.

Zara opens the poem with distance, stating how she has been ‘depicted’, but later she switches to claiming her algorithmic identity, using ‘I am’. The interests that she is reflecting on appear to be exaggerated and one-dimensional. However, the poem ends with the observation that both she, and the Facebook algorithm, are at fault for this unflattering portrait. As she has implicated her own social media use, the reader is left wondering about the accuracy of these (often distressing) ad interests. Confronting our data, how our data are witnessed and constructed by social media platforms is often a highly affective process (Lupton, 2017).

Ethical considerations

Workshop facilitators will have some access to personal user information during the workshop, namely aspects of participants’ social media profiles, including their ad interests. This access offers a point for ethical consideration: as Tromble (2021, p. 6) states, ‘we must recognize the responsibilities we carry when working with digital data, particularly to the people represented in those data’. In line with this sentiment we deliberately limit the participant data collected during the workshop, avoiding the collection of any ‘raw’ ad targeting interests, or any of the content of their targeted ads. Although we recognise the benefits of the projects designed to digitally ‘ride along’ with users to monitor how they are being targeted (e.g. Ad Observer, 2021), we seek to augment these monitoring and accountability approaches through feminist methods of care (Lupton, 2020), to afford voices and support to participants. In doing so, our workshops illuminate ad targeting, but also reframe discussions beyond discourses of fact, data and knowledge – refocusing on individual agency and creativity. We designed this project to support participants in understanding and using data targeting for their own creative purposes, and in meaningfully participating in targeting research – on both individual and collective levels. We therefore encourage researchers running this workshop to orientate data collection around participants’ own voices through their drawings, writing samples, and through recording collective sharing sessions.

Conclusion

In this article we offer a critical and creative method for studying social life as it is lived alongside social media platforms. In developing our method, we have engaged with the interdisciplinary roots of digital sociological enquiry (Daniels et al., 2017). We have mobilised concepts from sociology, technology studies and feminist enquiry to present a framework for creative and critical reflection on social media data. Our method seeks to add to the body of work extending digital sociological methods beyond gathering big data, towards creatively thinking with accessible social media data. Commercially collected data are called to being through imagination and interpretation (Back & Puwar, 2012). As we have integrated creative enquiry into our methodology, we offer an opportunity for workshop participants to take creative ownership of their data, and at times, to build agency online and to work towards developing algorithmic literacy. Prior to their engagement with the guided activities in the workshop, many participants across backgrounds (festival revellers and academics alike) were unaware of the ad transparency resources provided by social media platforms. As they were guided to engage with the resources, we facilitated discussions about how participants can practically intervene in their ad targeting processes, and which interventions were possible. For example, many groups chose to ‘opt out’ of personalised ad targeting on Google, after they became aware of the option. Through creative writing and drawing, we have observed that participants tangibly see how their algorithmic selves are constructed and crucially could start to learn how to navigate the tools to control these self-representations.

We believe there are many directions that researchers could take this methodology, according to their own research training and interests. We should note that creative drawing techniques in Human–Computer Interaction and related disciplines are often used as probes within other qualitative research methods such as interviews and focus groups (Siles et al., 2020; Vertesi et al., 2016). Probes can help participants remember points they wish to discuss, articulate complex topics and illustrate their perspectives more clearly (Gangneux, 2019). We have not yet conducted interviews, or recorded the collective response parts of our workshop. However we believe that this approach would be very complementary to our research methodology, and offer one avenue for researchers to ‘thicken’ the data collected from participants’ creative outputs.

In closing, we should point out that platform-provided tools are not transparency panaceas. They are unstable proprietary resources developed by social media platforms to serve their own interests (Dommett, 2021); they also have errors and absent data (Leerssen et al., 2019). We have reflected on the limitations of transparency resources throughout this article and note that they require ongoing public oversight and improvement. However, we have also shown that our methodology is flexible and versatile, despite these challenges. We have offered a craft-based framework for understanding imaginations, associations and connections with data profiling, and making these understandings available for participant reflection and researcher analysis.

Footnotes

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.