Abstract

The emergence of agentic artificial intelligence (AI) presents a novel frontier for cybersecurity research, yet its potential to simulate complex human behaviours in controlled environments remains underdeveloped. While an extensive literature examines employee compliance with cybersecurity policies, it has not yet leveraged agentic AI. To bridge this gap, this study implements a novel four-phase research design to identify the configurations predicting employee compliance intentions (CI) with institutional policies in the e-government sector. The proposed approach integrates agentic AI simulations, in which AI agents emulate employee responses to multi-scenario vignettes. The study first employs a grounded theory approach, following the Gioia methodology, to code AI-generated qualitative data into theoretically grounded themes. Subsequently, it utilises AI agents to weight quantitative responses. The analysis reveals distinct behavioural archetypes (i.e. embracers, negotiators and resisters). Finally, it reports fuzzy-set qualitative comparative analysis (fsQCA) to move beyond net effects and uncover specific pathways of conditions that consistently lead to high CI. This work foregrounds agentic AI simulations as a pioneering tool for behavioural analysis. It offers a replicable methodology for investigating complex socio-technical phenomena and suggests new avenues for simulation-based inquiry. This research establishes a conceptual foundation for facilitating theory development and methodological innovation using agentic AI in simulation.

1. Introduction

E-government services comprise digital infrastructure underlying essential government services such as health care, finance, transportation and taxation. 1 These services are central to the attainment of the United Nations’ Sustainable Development Goals for governments across the globe. 2 After the COVID-19 pandemic, digital platforms became the predominant medium through which governments engaged with citizens, even in regions where previous research reported limited adoption and public mistrust.3–6 The exigencies of accessing public services during national lockdowns rendered online channels unavoidable. Governments worldwide strived to enhance and implement e-government services in efforts to bridge the digital divide. 7

This abrupt reliance on digital services paved new paths for cybercriminals. 8 Concerns about how these services store, process and safeguard personal information continue to grow, as incidents of large-scale data breaches become more frequent, costing organisations up to £284.44 million annually. 9 Statista reports that the estimated cost of cybercrime will reach up to £12.792 trillion by 2029. 10 Global estimates amount to billions of dollars, and many sectors, including e-government, are unable to match the sophistication of cybercriminals and fall prey to ransomware, malware or phishing attacks. 11 However, documented cyber incidents and their corresponding remediation strategies remain scarce for many countries. Consequently, locally derived lessons frequently remain siloed, thereby constraining the transfer of empirically validated practices to contexts where they can yield significant impact. 12

Globally, the highest concentrations of cybercrimes are reported in America (43%), Europe (32%) and Asia (14%), with the lowest incidence observed in Australia (1.5%). 13 In 2023, government agencies across the globe experienced a significant surge in cybercrime, with reported cases increasing from 40,000 in early 2023 to 100,000 by August 2023.13,14 This sharp rise is alarming and highlights the urgent need for stronger cybercrime mitigation practices in e-government services.

Effective cybercrime prevention requires a strategically aligned framework that synthesises managerial mechanisms and technological systems. A critical dimension highlighted in prior scholarship pertains to building resilient management frameworks, but a thorough delineation of management practices and employee intentions remains conspicuously lacking within e-government service contexts. 15 Moreover, most research designs in previous literature focus on either managers or employees and have seldom treated behavioural archetypes as distinct groups in their analysis. To address these research gaps, this study examines the effects of management-driven behavioural practices on cybersecurity policy adherence within e-government institutions, along with the configurations of behavioural factors needed to achieve high employee compliance intention (CI) in these settings. In so doing, this paper introduces a novel architecture leveraging the strong potential of agentic artificial intelligence (AI) in multi-agent simulation, as discussed below.

In the past few years, AI adoption has grown rapidly, with transformative potential for both public and private sectors. In spite of ethical and regulatory concerns, it is now implemented across platforms, and the technology has become a new ‘normal’ across smartphones, computers and online services.16,17 In addition, the rapid diffusion of AI, through ChatGPT, DeepSeek, Grok and others, has intensified security concerns for data governance in public services. Amid controversies around algorithmic bias (i.e. preference of human advice over algorithmic advice 18 and decision-making consequences), the use of AI in data generation has received limited attention.19,20

Agentic AI marks a transformative leap in data generation, 21 offering profound potential to sculpt smart simulations. Enabling agents to use sophisticated reasoning to solve tasks with minimal human supervision, 22 agentic AI combines learning ability, autonomy, reactivity and proactivity, 21 hence offering new venues for studying multi-agent systems. 23 Overall, it is shifting the approaches to behavioural paradigms, with many studies pointing out the potential of human–AI combinations. 24 Researchers are now proposing that agentic AI systems can serve as reasonable proxies for human subjects (especially for studying behavioural intentions and decision-making) and highlighting the importance of embedding these capabilities into social science analytics tools like simulations. 25

User simulation represents an expanding transdisciplinary domain with significant relevance for both simulation and AI researchers, and practitioners. It entails the development of intelligent agents that emulate human user interactions with AI systems, thereby facilitating the modelling and analysis of user behaviour, the generation of synthetic training data and the systematic evaluation of interactive AI systems under controlled and reproducible conditions. 26 As the integration of human behaviour remains a persistent challenge in agent-based modelling and simulation, 27 agentic AI introduces a paradigm shift, significantly enhancing the performance and efficacy of multi-agent systems.

A particularly promising domain for its effective implementation is cybercrime prevention, especially within e-government services. While extensive literature examines employee compliance with cybersecurity policies, these studies have not leveraged the capabilities of agentic AI within a multi-method framework to understand the underlying complexities. To bridge this gap, this study develops and implements a novel four-phase research design to identify the set configurations predicting employee CIs with institutional policies in the e-government sector. The proposed approach integrates agentic AI simulations with established analytical techniques.

2. Background

Cybercrime prevention requires a strategically coordinated approach which integrates cybersecurity training and technical safeguards in addition to managerial and technical governance. Extant work posits the significance of targeted awareness initiatives, 28 with most of the academic discourse in cybersecurity converging on a framework of five interdependent phases: preparation, prevention, detection, response and recovery. These functions are conceptualised on a cyclic continuum. The preparation phase includes risk assessment, policy frameworks, incident response planning and security simulations. Preventive strategies involve technical defence, enforcing policies and initiating behaviourally informed training to develop secure digital habits. Detection involves monitoring and reporting for effective anomaly identification. Response includes mobilising resources and managerial oversight based on clearly defined roles and responsibilities. Finally, learning from incidents and conducting structured reviews of what has been found mark an effective recovery. 29

Previously, Straub and Welke 30 posited that the perceived certainty of detection and consequences thereafter serves as a critical discouragement for offenders committing cybercrimes. Therefore, employee training on security awareness serves a dual purpose: (1) educating employees about threats, policies and vulnerabilities and (2) communicating the institutional seriousness of enforcement. Similar to McLaughlin and Gogan, 29 Straub and Welke 30 also propose a four-stage cycle for effective information security within institutes through deterrence, prevention, detection and recovery, which are further expanded by literature.31–35

Studies also point to resources, employee training and compliance in strengthening cybersecurity.36,37 However, when policies are implemented, their effectiveness depends on compliance, without which these measures are insufficient. 34 Despite the increasing reliance on managerial and technical controls, alongside a multitude of institutional policies, the managerial aspects and employee behaviour are often overlooked in both academic research and practical applications. 38 This mainly stems from the many challenges of primary data collection within these e-government institutes and related agencies.

Such data challenges make the integration of agentic AI imperative to the advancement of cybersecurity research. Agentic AI describes computational systems endowed with autonomous, goal-oriented behaviour. Unlike traditional AI that operates under explicit supervision or narrow task constraints, agentic AI dynamically adapts its objectives in response to changing contexts and can collaborate or compete with other agents in multi-agent ecosystems. In the realm of cybersecurity research, investigating agentic AI opens new avenues for theorising behavioural and organisational implications within digital infrastructures. This paper focuses on the methodological advantages provided by agentic AI for investigating less explored dimensions of cybercrime prevention within e-government research.

Agentic AI has gained significant attention because of its ability to not only respond to a user’s prompt but also to act on it directly. This means it can take control of its digital environment, perceive new information and adapt to complete a task. In short, it can execute tasks by managing its own workflow and the tools it has access to. 39 Sen and Jakkaraju 40 developed agent-based simulations that modelled AI–human collaboration to decompose tasks, leading to improved organisational decision-making in complex environments previously not handled by small language models. Likewise, Kanumolu et al. 41 showed how agentic AI could generate new business ideas and improve innovation pipelines by evaluating patents. Emphasising their importance, Hu et al. 42 proposed a benchmark for social sciences research to utilise agentic AI, which can better handle data generation while not leading to algorithm bias.

Technological advancements in this field are progressing at an unprecedented rate, with agentic AI currently (i.e. as of September 2025) available through OpenAI’s ChatGPT 5 model via its built-in interface. The agent designed by OpenAI is powerful enough to act autonomously, make decisions and act upon them to achieve goals. Whereas earlier large language models (LLMs) rely solely on input data (prompts) and exhibit only moderate emulation of human agency, the advent of agentic AI marks a step-change into a new landscape. 22

This paper proposes a novel approach to using agentic AI for exploring how management-driven behavioural practices influence cybersecurity policy adherence within e-government institutions. To do so, we ran agentic AI simulations in the ChatGPT 5 model, in which 300 agents (extended to n = 500, 1000 and 2000), each posing as an employee working within an e-government services setting, weighted variables based on three international cybersecurity frameworks. To maintain consistency, we kept the same scenarios for all employees and used the responses to first conduct a grounded theory analysis using the Gioia method, 43 as explained in detail below.

3. Methods

AI presents a transformative potential for scenario and decision-making research by enabling dynamic modelling of complex systems, simulation of multifaceted outcomes and integration of vast data sets. Moreover, AI-driven tools can facilitate generating tailored scenarios responsive to stakeholder inputs, thereby enhancing their strength and practical relevance. Researchers have started suggesting active use of AI-generated scenarios as effective methodological tools for applied research. 17

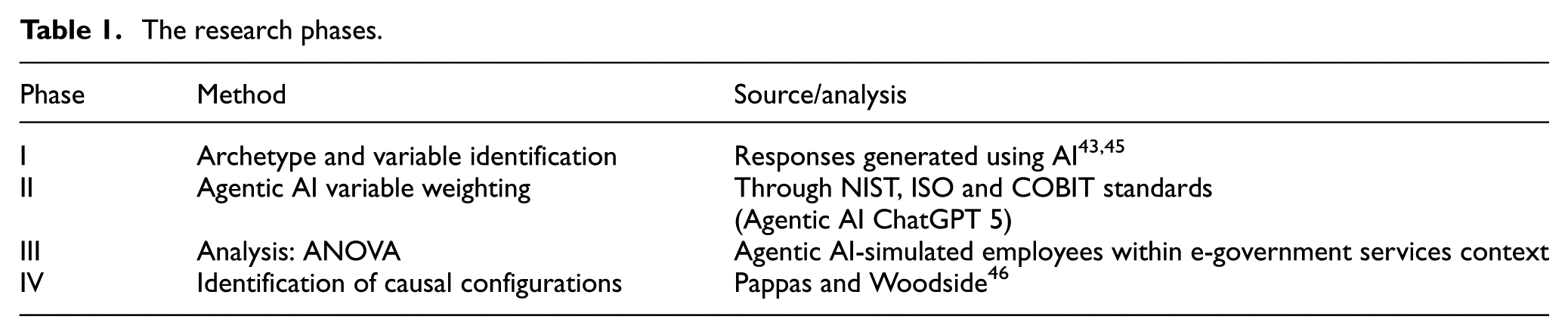

It is also argued that AI tools can produce numerous scenarios at minimal cost and time, offering significant value for research while simultaneously facing challenges such as inconsistent quality, lack of contextual relevance and ethical concerns. 17 To overcome such issues, instead of generating scenarios with AI, this study used three scenarios employed in previous work 44 and then collected responses generated by AI agents. Appendix I gives details of these scenarios. The study therefore builds on the multi-method design presented in Table 1.

Table 1. The research phases.

Phase I addresses the methodological challenge stressed by Ramirez et al. 45 regarding the generation of compelling insights through scenario-based research. This study employed three scenarios operationalised within one leading large language model, ChatGPT 5. These scenario executions facilitated a comparative analysis of AI-generated managerial responses to cybercrime prevention in e-government contexts. Finally, the Gioia methodology is applied to systematically analyse the generated data and structure the emergent concepts into a coherent framework. 17

In Phase II, the themes originating from the grounded theory analysis are converted into measurable variables and weighted using an agentic AI-based simulation reflecting three international cybersecurity frameworks: National Institute of Standards and Technology (NIST), International Organisation for Standardisation (ISO) and Control Objectives for Information and Related Technologies (COBIT). Weights were assigned from 1 to 5 (where 1 indicates less likely and 5 indicates highly likely) based on the responses generated in agentic ChatGPT.

With Phase II capturing behavioural dimensions such as CI, the generated scores were used to conduct the Phase III analysis of variance (ANOVA), while the final Phase IV reports fuzzy-set qualitative comparative analysis (fsQCA) to identify the best possible configuration for employee compliance. 46 The fsQCA results are further expanded in the ‘Discussion’ section to report less effective configurations.

4. Findings

4.1. Phase I – Archetype and variable identification

Traditional research methods, though rigorous, often struggle to produce innovative and practically relevant insights. Scenario-based approaches offer a solution through creative exploration and bridge the gap between academic theory and real-world application through participatory processes. This study adopts such an approach to examine AI-driven cybercrime prevention within e-government services, illustrating the method’s capacity to generate actionable knowledge that informs both scholarly discourse and policy practice. 45

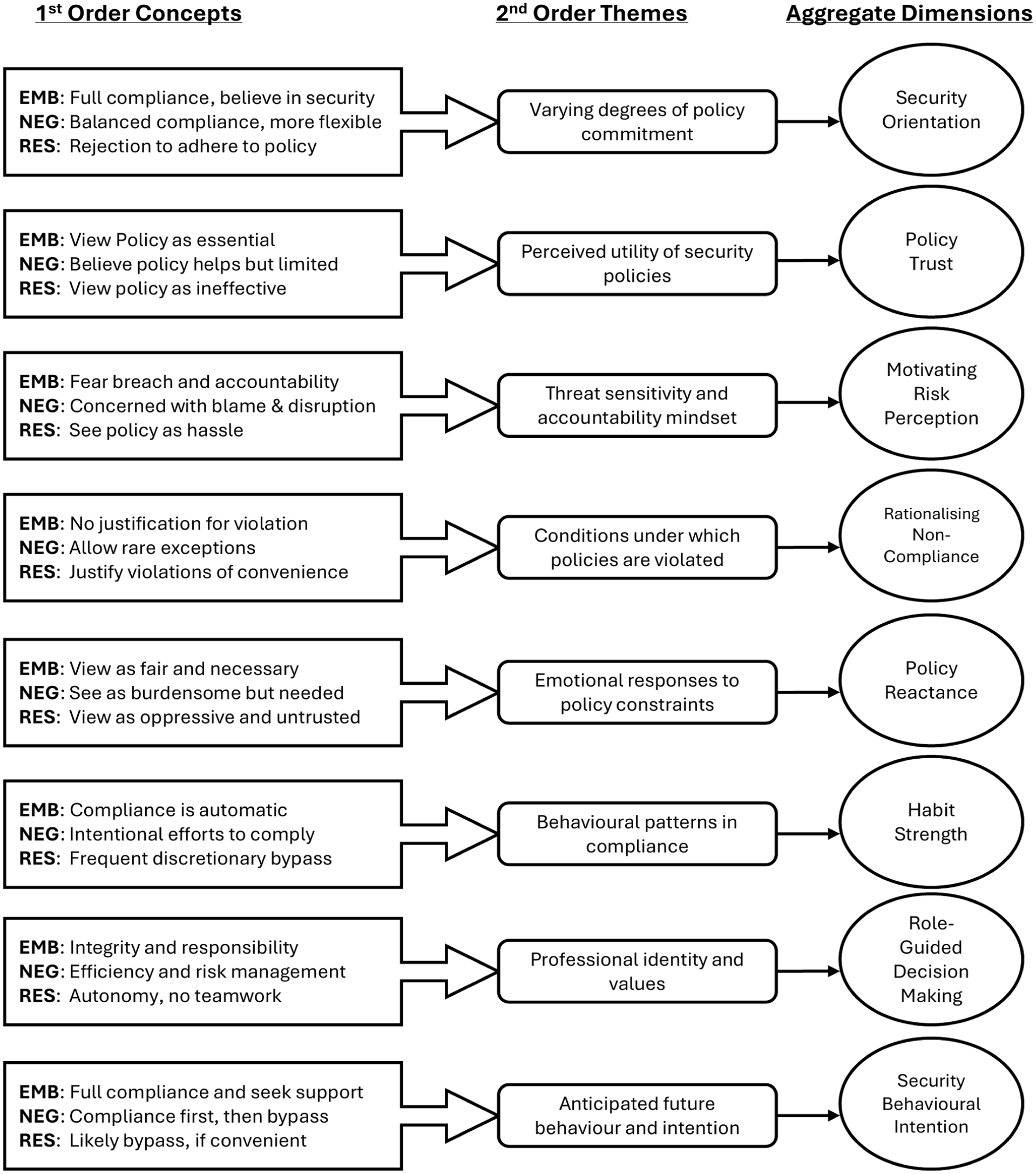

To keep a balance, we utilised three scenarios previously used in different research settings. 44 These scenarios were used in the context of policy compliance, namely USB drive, workstation logout and passwords in a company’s context, which we adapted to e-government services. We took 10 responses per scenario from ChatGPT and performed a grounded theory analysis on the data using the Gioia methodology. The Gioia method systematically transforms respondents’ narratives into theoretical constructs through three-level coding (first-order concepts, second-order themes and aggregate dimensions) while maintaining informant authenticity in theory development. This inductive methodology was selected for its proven ability to facilitate theory discovery directly from empirical data, making it well-suited for exploring the emergent and context-specific nature of the simulated employee responses. Rather than imposing a pre-existing theoretical lens, our approach allowed for the systematic development of a conceptual framework anchored in the distinct patterns and themes observed within the data. One notable finding was that three behavioural categories of employees emerged from the analysis: embracers, negotiators and resisters.

The objective of this phase was, therefore, to generate original, data-driven findings. 47 Following the grounded theory approach, the analysis involved open, axial and selective coding. We noticed that three different behavioural categories emerged within the data, namely embracers (EMB), negotiators (NEG) and resisters (RES). Keeping their views separate when classifying each dimension enhanced the analysis and provided a group-level understanding that not all employees hold similar views. This also showed the efficiency of the AI model (ChatGPT 5) in responding to scenarios. Figure 1 differentiates the three archetypes of employees but consolidates them into core themes.

Figure 1. Grounded theory analysis, data structure.

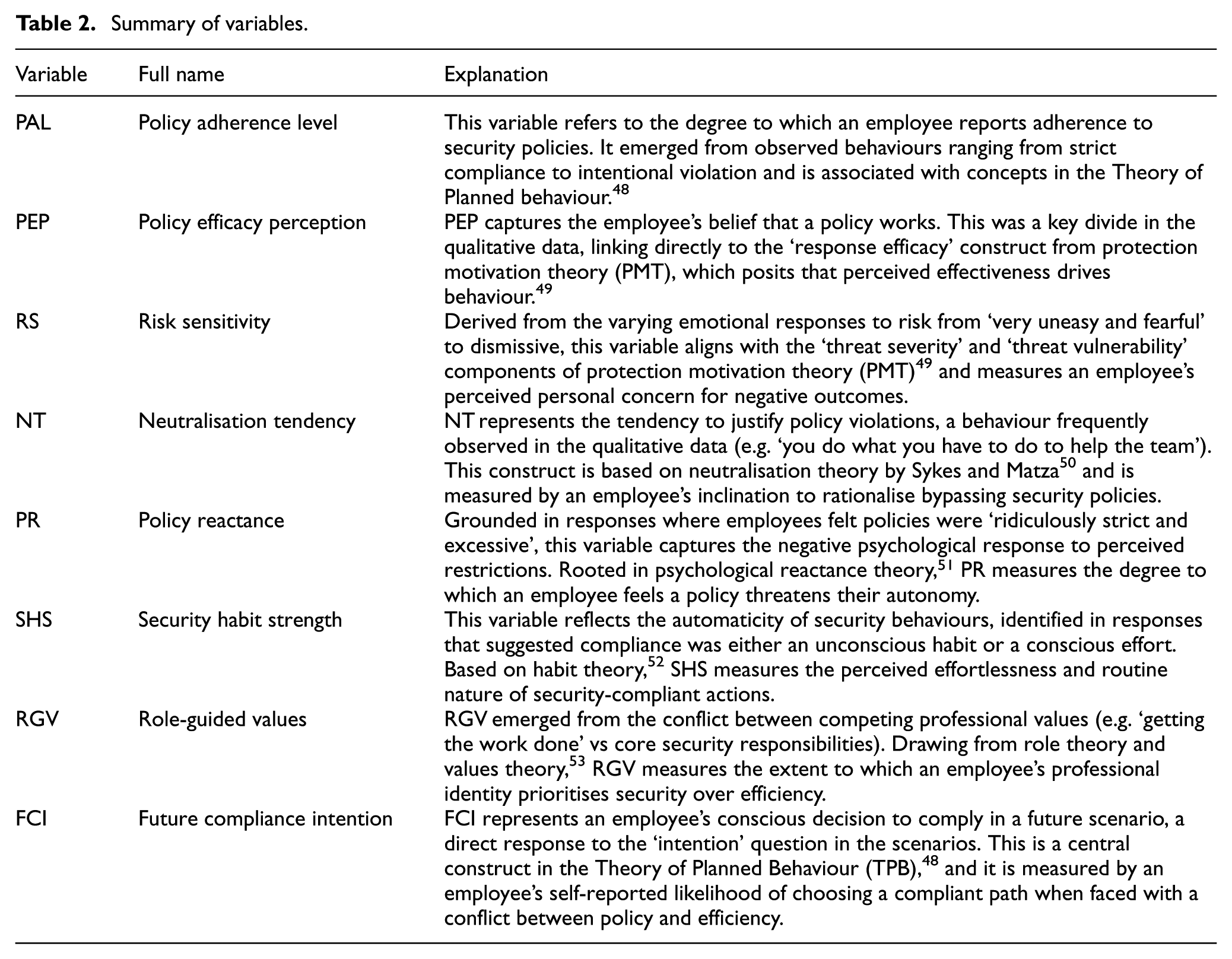

Drawing on grounded theory analysis, the study further explains the theoretical foundation of the variables identified from the analysis. These variables originate from established literature and collectively inform individual compliance behaviours in multiple domains. Table 2 presents a detailed summary and explanation of these variables, along with their foundations within the body of behavioural literature.

Table 2. Summary of variables.

Although Table 2 confirms the variables’ roots in prior literature, to confirm their practical applicability, we mapped these variables to three international cybersecurity frameworks (NIST, ISO, COBIT), ensuring broad coverage of cybersecurity protocols. This is further explained in Phase II.

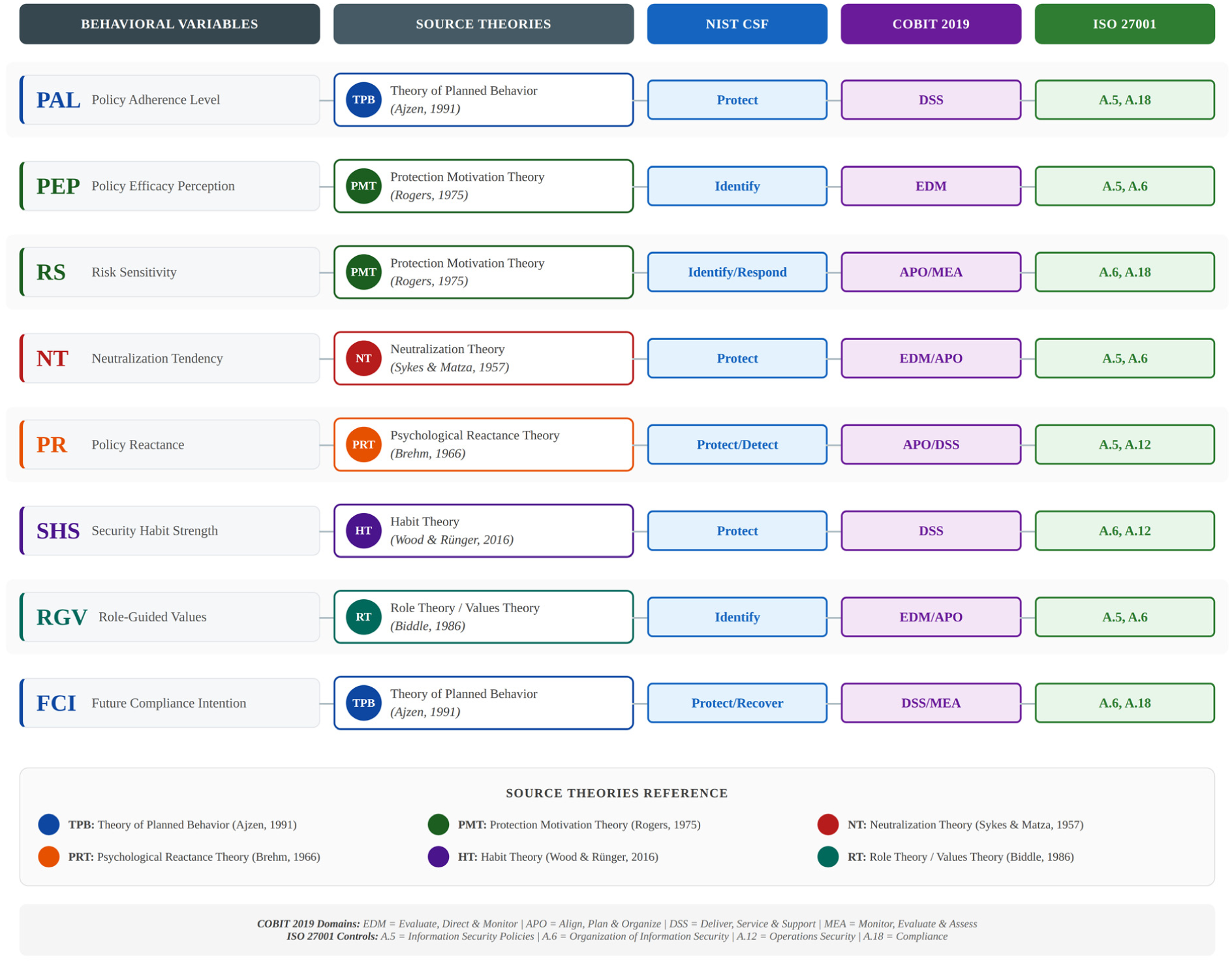

4.2. Phase II: Agentic AI variable weighting

Next, we simulated these variables in the context of three industrial standards (NIST, ISO, COBIT) to cover all industry-grade cybersecurity framework compliance dimensions. We then scored them blindly through an agentic AI prompt in ChatGPT 5, initially generating 300 model responses as separate entities, each weighting all variables from 1 to 5 (where 1 indicates less likely and 5 indicates highly likely to adhere). Figure 2 shows the theoretical origin of these variables and how they were mapped to international cybersecurity frameworks to ensure practical relevance.

Figure 2. Variable flowchart – supporting theories and cybersecurity frameworks

The NIST cybersecurity framework identifies broad categories, each of which falls within a certain domain of the COBIT framework. These in turn map on to the security controls presented by the ISO framework, which must be adhered to in order to achieve the targeted outcome. For instance, the Protect category of NIST can be achieved by following guidelines from COBIT and ISO, as shown in Figure 2. This provided us with the necessary data to conduct the next phase.

4.3. Phase III: Analysis (ANOVA)

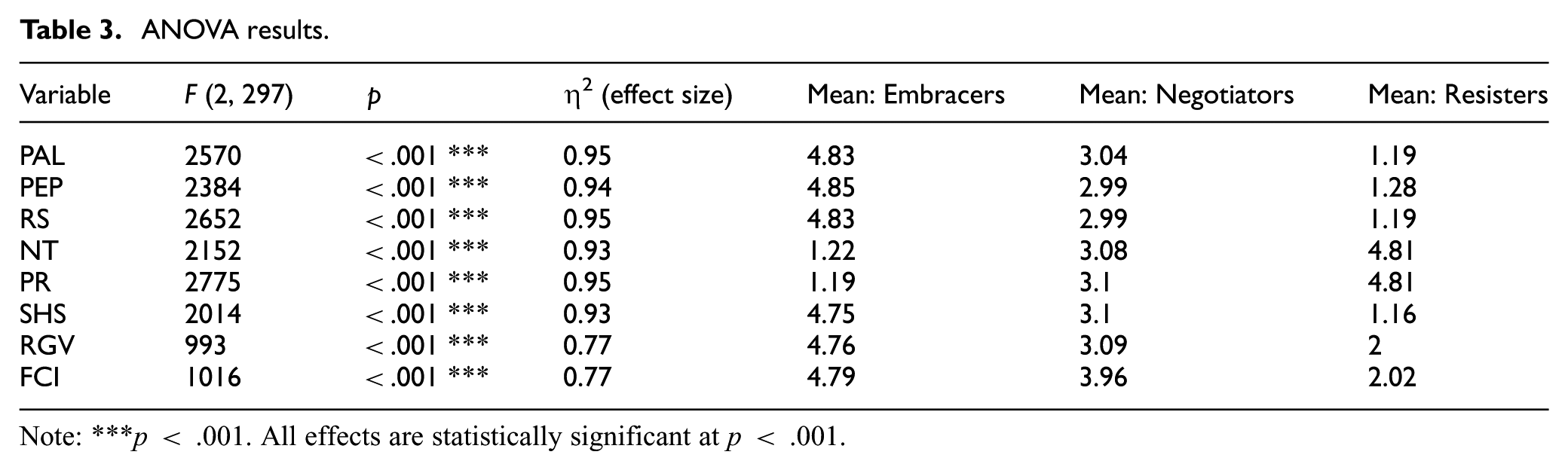

Once a complete weighted data set was collected from 300 agents posing as e-government services employees, we ran a dimension comparison across all variables in R, grouped by behavioural profile (embracers, negotiators and resisters), using one-way ANOVA, as shown in Table 3.

Table 3. ANOVA results.

Note: ***p < .001. All effects are statistically significant at p < .001.

The results indicate significant differences in cybersecurity-related behaviours and perceptions across the three archetypes: embracers, negotiators and resisters. All variables demonstrated highly significant differences (p < .001), with large effect sizes (η² ranging from .77 to .95), suggesting strong distinctions between groups. Embracers consistently showed the highest levels of policy adherence (M = 4.83), policy efficacy perception (M = 4.85), risk sensitivity (M = 4.83), security habit strength (M = 4.75), role-guided values (M = 4.76) and future CI (M = 4.79), while maintaining low levels of neutralisation tendency (NT) (M = 1.22) and policy reactance (M = 1.19).
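The F statistic and η² effect size behind a one-way ANOVA of this kind come from a standard sums-of-squares decomposition. The following is a minimal Python sketch of that calculation (the study itself ran the analysis in R); the function name `one_way_anova` and the scores are hypothetical illustrations, not the study's data.

```python
from statistics import fmean


def one_way_anova(groups):
    """Return (F, eta_squared) for a dict mapping group name -> list of scores."""
    all_scores = [x for scores in groups.values() for x in scores]
    grand_mean = fmean(all_scores)
    k = len(groups)        # number of groups (archetypes)
    n = len(all_scores)    # total number of observations

    # Between-group sum of squares: group means against the grand mean.
    ss_between = sum(
        len(scores) * (fmean(scores) - grand_mean) ** 2
        for scores in groups.values()
    )
    # Within-group sum of squares: each score against its own group mean.
    ss_within = 0.0
    for scores in groups.values():
        group_mean = fmean(scores)
        ss_within += sum((x - group_mean) ** 2 for x in scores)

    f_stat = (ss_between / (k - 1)) / (ss_within / (n - k))
    eta_sq = ss_between / (ss_between + ss_within)
    return f_stat, eta_sq


# Illustrative 1-5 weights for a single variable (assumed values only).
scores = {
    "embracers": [5, 5, 4, 5, 5],
    "negotiators": [3, 3, 4, 3, 2],
    "resisters": [1, 1, 2, 1, 1],
}
f_stat, eta_sq = one_way_anova(scores)
print(f"F = {f_stat:.2f}, eta squared = {eta_sq:.3f}")
```

For these illustrative scores the sketch gives F = 54 and η² = 0.90; η² is the share of total variance attributable to archetype membership, which is why values close to 1 indicate sharply separated groups.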

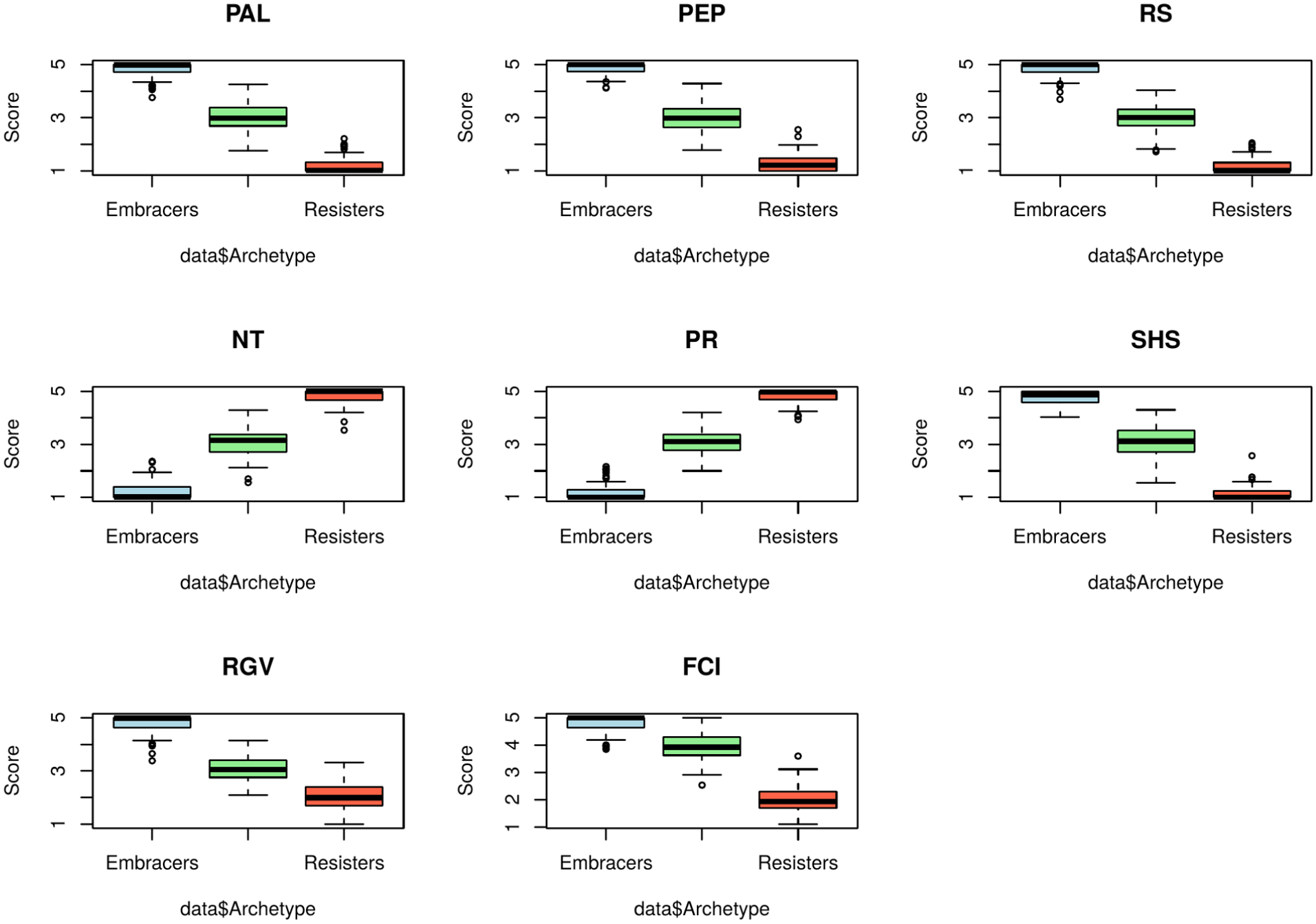

In contrast, resisters scored lowest on protective behaviours (e.g. PAL = 1.19; SHS = 1.16) and highest on neutralisation (M = 4.81) and reactance (M = 4.81), reflecting a pattern of resistance to cybersecurity policies. Negotiators generally fell between the two groups across all measures. These findings support the conceptual validity of the archetypal framework and highlight the critical behavioural dimensions, particularly policy adherence, neutralisation and role-guided values, that influence an individual’s intention to comply with cybersecurity policies. The extremely high F-values and very low p-values confirm strong statistical significance, while the large effect sizes indicate that these group differences are also practically significant. The boxplots shown in Figure 3 provide visual evidence that the three employee archetypes are highly distinct. Consistently, embracers exhibit the most security-positive scores, resisters the most negative and negotiators occupy a clear middle ground.

Figure 3. ANOVA boxplots and differences based on archetypes

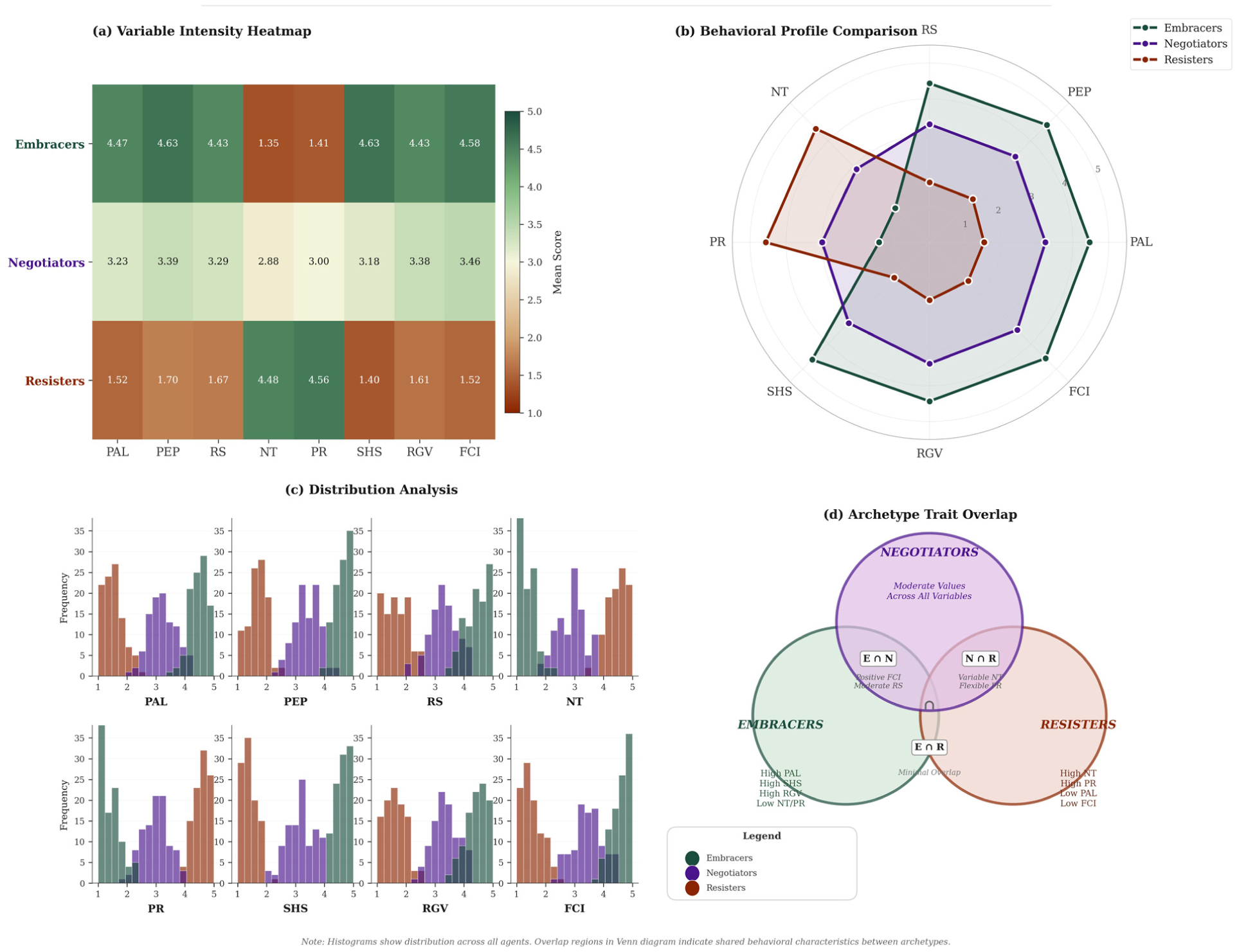

Similarly, Figure 4 provides a visual check on the archetype differences and CI by combining a heatmap, spider plot, histograms and Venn diagrams of responses. The heatmap shows mean scores across archetypes and variables. The spider plot compares the archetype profiles across the variable scores in the data set. The histograms show the distributions across the variables, clearly differentiating the three archetypes, and the Venn diagrams present the overlap of all three behavioural profiles. Note that certain overlaps exist, but they do not confirm the configurations under which the outcome is achievable; this is further discussed in Phase IV.

Figure 4. Behavioural archetype analysis (300 agents)

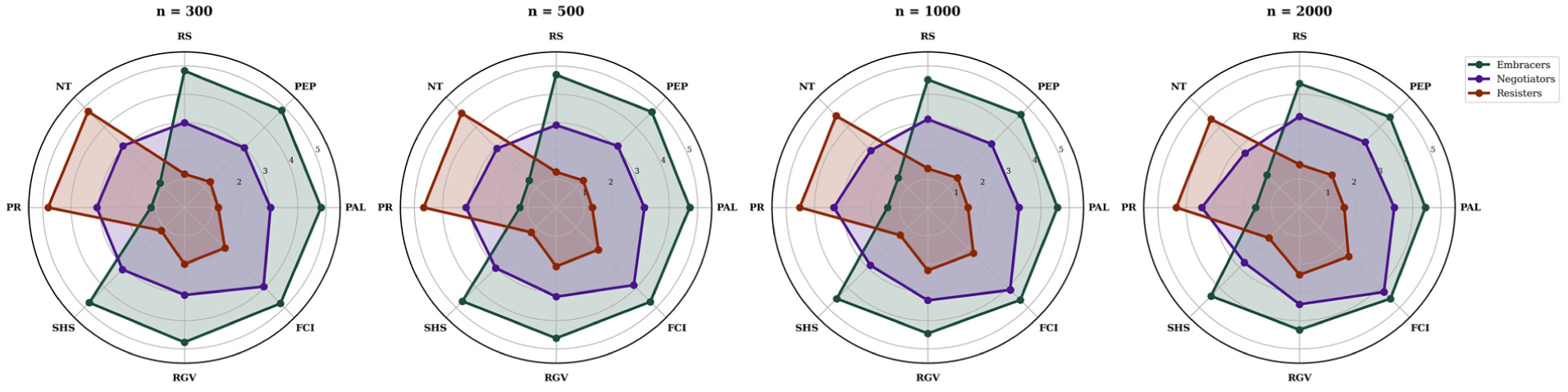

To further confirm the robustness of behavioural archetypes, a sensitivity analysis was conducted using different sample sizes (i.e. n = 500, n = 1000 and n = 2000). Figure 5 provides these visual representations across the sample size variants and reveals the stability of the pattern irrespective of sample size. This confirms the robustness within the archetype framework, showing the persistence and consistency of the pattern across the simulated data sets.

Figure 5. Sensitivity analysis (multiple samples)

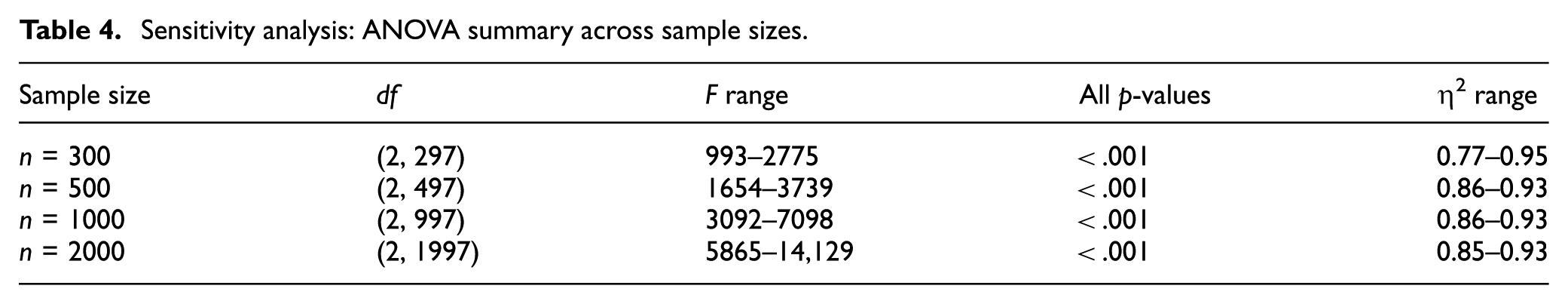

Summarised in Table 4, ANOVA results remain stable across the simulated sample sizes (n = 300, 500, 1000 and 2000), confirming the stability of archetype differences. Establishing the robustness of findings across sample sizes, the next phase involves conducting the configurational checks.
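The logic of such a sample-size sensitivity check can be sketched by recomputing the effect size at each sample size and comparing the results. The Python sketch below uses synthetic draws rather than agent responses: the group means in `ASSUMED_MEANS`, the standard deviation, the equal split across archetypes and the random seed are all assumptions made purely for illustration.

```python
import random
from statistics import fmean

# Assumed archetype means on the 1-5 scale (illustrative, not the study's values).
ASSUMED_MEANS = {"embracers": 4.8, "negotiators": 3.0, "resisters": 1.2}


def eta_squared(groups):
    """Share of total variance explained by group membership."""
    all_scores = [x for scores in groups.values() for x in scores]
    grand_mean = fmean(all_scores)
    ss_between = sum(
        len(scores) * (fmean(scores) - grand_mean) ** 2
        for scores in groups.values()
    )
    ss_total = sum((x - grand_mean) ** 2 for x in all_scores)
    return ss_between / ss_total


def simulate(n, rng, sd=0.4):
    """Draw about n synthetic scores, split equally across the archetypes."""
    per_group = n // len(ASSUMED_MEANS)  # integer split, so totals may fall just under n
    return {
        name: [min(5.0, max(1.0, rng.gauss(mean, sd))) for _ in range(per_group)]
        for name, mean in ASSUMED_MEANS.items()
    }


rng = random.Random(42)  # fixed seed so the sketch is reproducible
results = {n: eta_squared(simulate(n, rng)) for n in (300, 500, 1000, 2000)}
for n, eta in results.items():
    print(f"n = {n}: eta squared = {eta:.3f}")
```

If the archetype separation is a stable property of the data-generating process, the effect size should vary only slightly as n grows, which is the pattern a sensitivity analysis of this kind looks for.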

Table 4. Sensitivity analysis: ANOVA summary across sample sizes.

4.4. Phase IV: Identification of causal configurations

Building on the quantitative model, this study now employs fsQCA to move beyond linear relationships. 46 This method identifies the specific combinations of conditions or ‘configurations’ that lead to high or low policy adherence. By uncovering these complex configurations, we aim to provide a more holistic understanding of employee behaviour to inform employee future CI.

fsQCA offers a sophisticated methodological alternative to traditional variable-centric analyses. It bridges the divide between qualitative, case-oriented logic and quantitative, correlational rigour by identifying complex causal configurations that are sufficient for an outcome to occur. A core strength of fsQCA lies in its use of set theory and a rigorous calibration process, whereby raw data are transformed into set-membership scores ranging from 0 to 1.

This calibration is a critical feature, as it enables researchers to impose theoretically meaningful cut-points for interpreting qualitative distinctions while simultaneously facilitating the precise quantitative placement of cases relative to one another. Consequently, fsQCA satisfies the core methodological concerns of both qualitative and quantitative research.54,55

Thus, we employed fsQCA using the QCA package in RStudio, following the fsQCA protocol from Pappas and Woodside and Vis.46,55 The first step was to calibrate the data into fuzzy-set membership scores between 0 and 1, as required for fsQCA. Since our coding consisted of weights, we used three anchor thresholds, c(1.5, 3.1, 4.5), at the 5th, 50th and 95th percentiles. 46 Next, the data were calibrated in R based on these thresholds, and truth tables were developed for further analysis.
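To make the calibration step concrete, the sketch below applies a logistic (direct-method) transformation to the three anchors quoted above, so that 1.5 maps to a membership of 0.05, 3.1 to 0.5 and 4.5 to 0.95. The paper does not state which calibration function was used, so the logistic form, the function name `calibrate` and the parameter names are assumptions for illustration, written in Python rather than the R used in the study.

```python
import math


def calibrate(x, exclusion=1.5, crossover=3.1, inclusion=4.5):
    """Map a raw 1-5 weight to a fuzzy membership score in (0, 1).

    The anchors are the thresholds quoted in the text; the logistic
    form is an assumed, commonly used direct-method calibration.
    """
    # Log-odds associated with a membership of 0.95 (and, negated, 0.05).
    log_odds_full = math.log(0.95 / 0.05)
    if x >= crossover:
        scaled = (x - crossover) * log_odds_full / (inclusion - crossover)
    else:
        scaled = (x - crossover) * log_odds_full / (crossover - exclusion)
    return 1.0 / (1.0 + math.exp(-scaled))


for weight in (1, 1.5, 3.1, 4.5, 5):
    print(weight, round(calibrate(weight), 3))
```

Scaling the two sides of the crossover separately keeps the mapping anchored at all three thresholds even though 3.1 is not the midpoint between 1.5 and 4.5.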

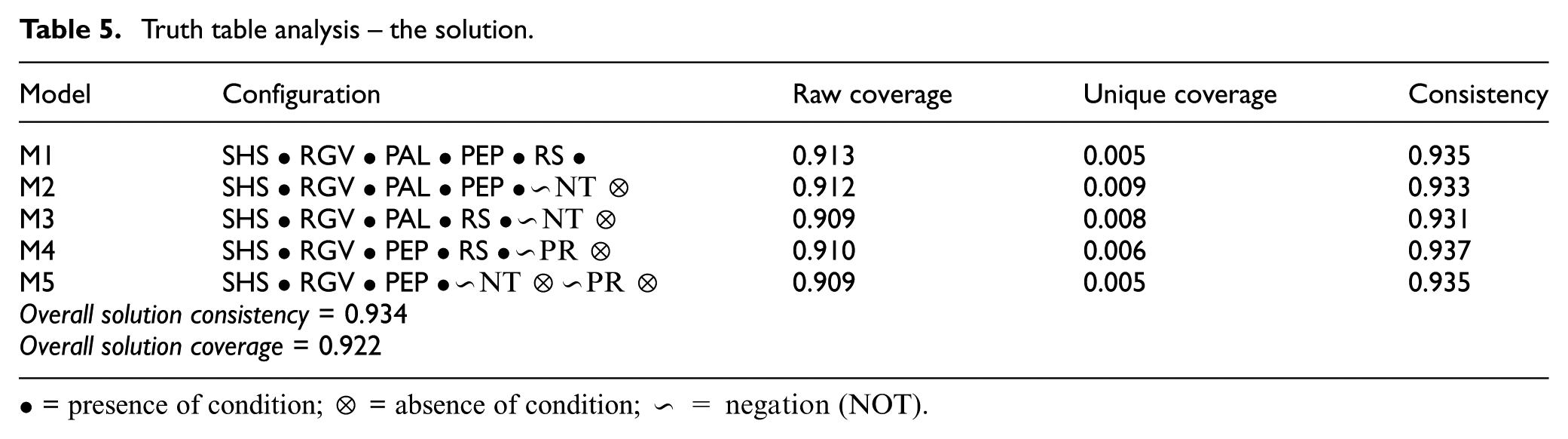

The fsQCA solution in Table 5 reveals five configurations (M1–M5) that consistently lead to high future CI in cybercrime prevention. All models include security habit strength (SHS) and role-governance values (RGV), indicating their foundational role. The absence of NT and policy reactance (PR) appears in some models, highlighting that removing psychological justifications for non-compliance strengthens cybersecurity intentions. High overall consistency (0.934) and coverage (0.922) confirm that these condition sets explain most of the cases, supporting a systemic, multi-factor approach to employees’ future CI when the conditions are met. However, this does not yet show that the models predict the intention; it is therefore important to establish their predictive validity.

Table 5. Truth table analysis – the solution.

• = presence of condition; ⊗ = absence of condition; ∼ = negation (NOT).
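The consistency and coverage figures reported for fsQCA solutions follow the standard set-theoretic definitions: a case's membership in a configuration is the minimum across its conditions (with negation taken as one minus the score), consistency is the overlap with the outcome divided by total configuration membership, and coverage is the same overlap divided by total outcome membership. The Python sketch below illustrates this; the configuration `SHS*RGV*~PR`, the three cases and the function names are hypothetical, and an ASCII `~` stands in for the negation sign used in the table.

```python
def membership(case, configuration):
    """Fuzzy AND over conditions; a leading '~' negates a condition (1 - score)."""
    values = []
    for term in configuration.split("*"):
        term = term.strip()
        if term.startswith("~"):
            values.append(1.0 - case[term[1:]])
        else:
            values.append(case[term])
    return min(values)


def consistency_and_coverage(cases, configuration, outcome="FCI"):
    """Return (consistency, coverage) of a configuration for the outcome."""
    x = [membership(case, configuration) for case in cases]
    y = [case[outcome] for case in cases]
    overlap = sum(min(xi, yi) for xi, yi in zip(x, y))
    return overlap / sum(x), overlap / sum(y)


# Three illustrative calibrated cases (assumed values, not the study's data).
cases = [
    {"SHS": 0.9, "RGV": 0.8, "PR": 0.1, "FCI": 0.9},
    {"SHS": 0.6, "RGV": 0.7, "PR": 0.3, "FCI": 0.8},
    {"SHS": 0.2, "RGV": 0.1, "PR": 0.9, "FCI": 0.1},
]
cons, cov = consistency_and_coverage(cases, "SHS*RGV*~PR")
print(f"consistency = {cons:.3f}, coverage = {cov:.3f}")
```

In this toy example every case's configuration membership is at or below its outcome membership, so consistency is 1, while coverage is about 0.83 because part of the outcome is not accounted for by the configuration.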

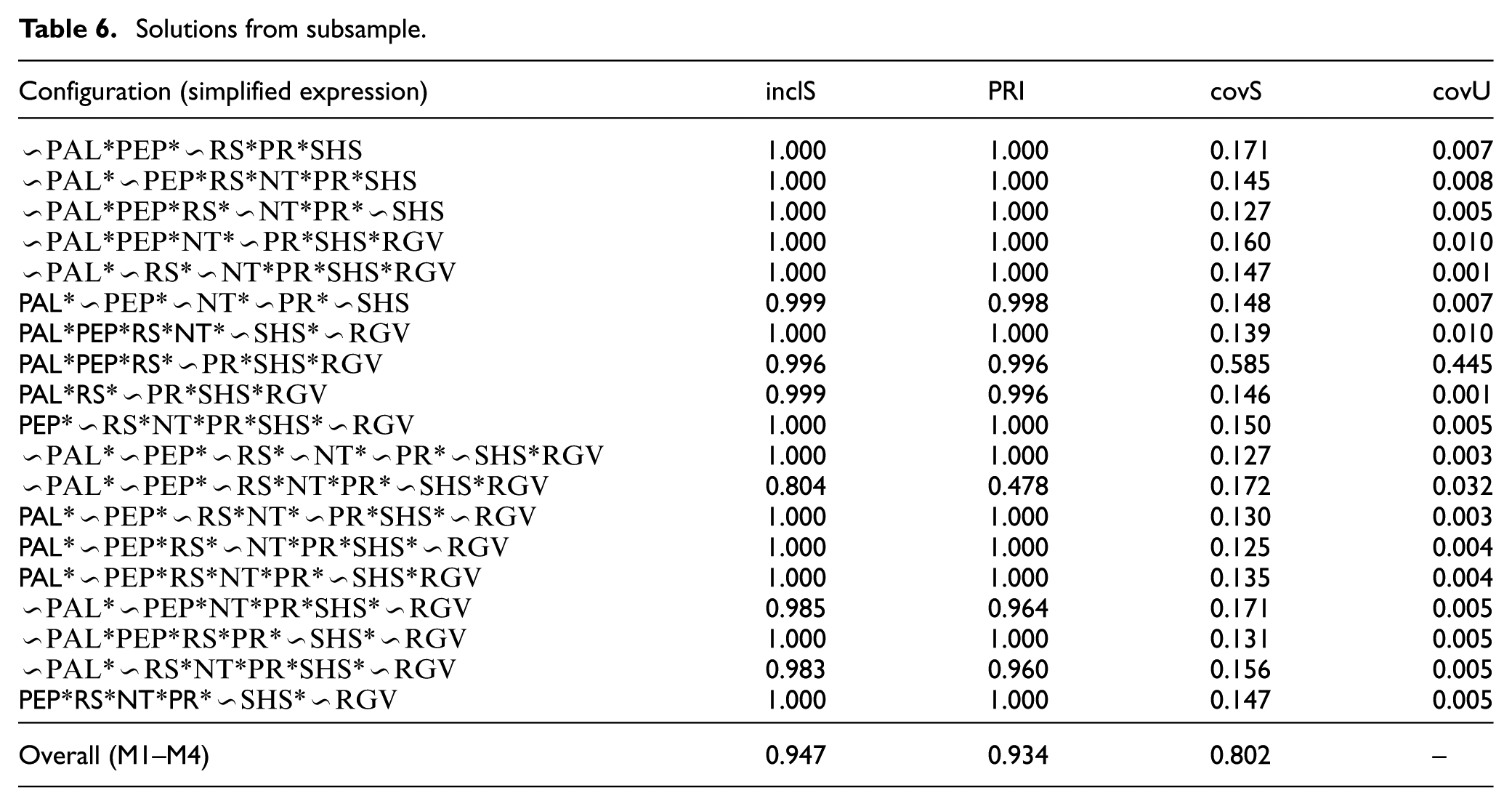

To establish predictive validity, the research employs a split-sample approach. The total sample is first randomly partitioned into a subsample and a holdout sample. The analysis is then performed on the subsample to identify the causal configurations, as outlined in the preceding sections. The predictive power of these findings is subsequently assessed by testing them against the independent holdout sample. This validation procedure, which is applicable across both empirical-positivist and interpretative research paradigms, enhances the methodological strength and generalisability of the fsQCA results by confirming their ability to predict outcomes for cases not used in the initial model building presented in Table 5 (see details in Appendix II). The predictive model results are presented in Table 6.
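The split-sample idea can be sketched as follows: partition the cases at random, treat a configuration found on the subsample as given, and check whether its consistency holds up on the holdout cases. This Python sketch uses synthetic cases; the 50/50 split, the seeds, the configuration `SHS*RGV` and the way the outcome is generated are all assumptions for illustration, and the truth-table minimisation that would normally derive the configuration from the subsample is omitted.

```python
import random


def membership(case, configuration):
    """Fuzzy AND over conditions; a leading '~' negates a condition."""
    values = []
    for term in configuration.split("*"):
        term = term.strip()
        values.append(1.0 - case[term[1:]] if term.startswith("~") else case[term])
    return min(values)


def consistency(cases, configuration, outcome="FCI"):
    """Overlap between configuration and outcome, relative to the configuration."""
    x = [membership(case, configuration) for case in cases]
    overlap = sum(min(xi, case[outcome]) for xi, case in zip(x, cases))
    return overlap / sum(x)


def split_sample(cases, holdout_fraction=0.5, seed=0):
    """Randomly partition cases into (subsample, holdout)."""
    shuffled = list(cases)
    random.Random(seed).shuffle(shuffled)
    cut = round(len(shuffled) * (1 - holdout_fraction))
    return shuffled[:cut], shuffled[cut:]


# Synthetic calibrated cases in which the outcome loosely tracks SHS AND RGV.
rng = random.Random(1)
cases = []
for _ in range(300):
    shs, rgv = rng.random(), rng.random()
    fci = min(1.0, max(0.0, min(shs, rgv) + rng.uniform(-0.1, 0.2)))
    cases.append({"SHS": shs, "RGV": rgv, "FCI": fci})

subsample, holdout = split_sample(cases)
config = "SHS*RGV"  # hypothetical configuration, taken as found on the subsample
print(f"subsample consistency = {consistency(subsample, config):.3f}")
print(f"holdout consistency   = {consistency(holdout, config):.3f}")
```

A configuration whose consistency stays high on cases it was not derived from gives some assurance that it is not an artefact of the particular subsample.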

Table 6. Solutions from subsample.

The predictive validity test confirms that the configurations identified in the primary analysis (M1–M4) reliably explain outcomes in the holdout sample. All configurations demonstrate high consistency (inclS ≥ 0.947), with substantial overall solution consistency (0.934) and coverage (0.802). All outcome values are above the required thresholds. 56 One configuration stands out: PAL*PEP*RS*∼PR*SHS*RGV has a unique coverage of 0.445. This is an exceptional result: this specific combination of high policy adherence level (PAL), policy efficacy perception (PEP), risk sensitivity (RS), SHS and RGV, together with low policy reactance (PR), uniquely explains 44.5% of the cases where the outcome, future CI (FCI), is present. This establishes that the absence of policy reactance and NT achieves high CI. Conversely, some configurations include positive factors but lead to non-compliance.

For instance, the configurations ∼PAL*∼PEP*RS*NT*PR*SHS and PAL*PEP*RS*NT*∼SHS*RGV show that the partial presence of positive factors is insufficient to achieve compliance, especially when combined with NT or PR. This pattern illustrates a critical theoretical insight: protective factors do not operate independently but rather interact configurationally. Elevated NT appears particularly effective in weakening otherwise compliant profiles, suggesting that NT can override behavioural intentions regardless of positive inclination. These findings are practically significant for organisations, which must not assume that employees with strong security habits are immune to non-compliance; rather, interventions must actively monitor and disrupt neutralisation techniques across all employee profiles.
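Unique coverage, as reported for the holdout test, measures how much of the outcome is explained by one configuration and by no other in the solution. A common way to compute it is to take the coverage of the full solution (the fuzzy OR, or maximum, across its configurations) and subtract the coverage of the solution with that configuration removed. The Python sketch below illustrates this; the two configurations, the cases and the function names are hypothetical, and `~` stands in for the negation sign.

```python
def term_membership(case, term):
    """Fuzzy AND over the conditions of one configuration ('~' negates)."""
    values = []
    for cond in term.split("*"):
        cond = cond.strip()
        values.append(1.0 - case[cond[1:]] if cond.startswith("~") else case[cond])
    return min(values)


def solution_coverage(cases, terms, outcome="FCI"):
    """Coverage of the fuzzy OR (maximum) of several configurations."""
    if not terms:
        return 0.0
    overlap = sum(
        min(max(term_membership(case, t) for t in terms), case[outcome])
        for case in cases
    )
    return overlap / sum(case[outcome] for case in cases)


def unique_coverage(cases, terms, target, outcome="FCI"):
    """Coverage lost when `target` is dropped from the solution."""
    others = [t for t in terms if t != target]
    return solution_coverage(cases, terms, outcome) - solution_coverage(
        cases, others, outcome
    )


# Illustrative calibrated cases and a two-configuration solution (assumed).
cases = [
    {"SHS": 0.9, "RGV": 0.9, "PR": 0.1, "NT": 0.8, "FCI": 0.9},
    {"SHS": 0.8, "RGV": 0.9, "PR": 0.7, "NT": 0.1, "FCI": 0.8},
    {"SHS": 0.7, "RGV": 0.8, "PR": 0.2, "NT": 0.2, "FCI": 0.7},
]
terms = ["SHS*RGV*~PR", "SHS*RGV*~NT"]
for term in terms:
    print(term, round(unique_coverage(cases, terms, term), 3))
```

In this toy solution the third case belongs equally to both configurations, so it contributes to neither unique coverage; only the cases that a single configuration explains on its own do.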

5. Discussion

This study was designed to provide a multi-method simulated exploration of employee CI using agentic AI within e-government services, from the development of a grounded theory to the empirical validation of its core tenets and, finally, the identification of complex configurational sets. The findings from this multi-phased approach collectively portray a detailed picture of how future CI may be enhanced, offering significant theoretical and practical contributions.

The initial phase, a grounded theory analysis 47 of scenario-based employee responses, yielded a set of core themes and concepts (Figure 1). These qualitative insights were then systematically operationalised into eight distinct variables, each with a clear conceptual and theoretical foundation (Figure 2), thus establishing an empirically grounded model for quantitative investigation. A key finding from this phase was the identification of three distinct employee archetypes: embracers, negotiators and resisters, each representing a unique behavioural orientation towards security policies.

The quantitative phase provided strong empirical validation for the existence and distinctiveness of these three archetypes. The ANOVA results (Table 3) demonstrated highly significant differences in the mean scores of all eight variables across the groups (p < .001). The large effect sizes (η² ranging from 0.77 to 0.95) indicate that these differences are not only statistically significant but also practically meaningful. As visually confirmed in Figures 3 and 4, embracers consistently exhibit high scores on security-positive variables such as PEP and SHS, while resisters show a clear reversal, with high scores on security-negative variables such as NT and policy reactance (PR). The negotiator archetype consistently occupied a distinct middle ground, solidifying its role as a pragmatically oriented group seeking a balance between security and efficiency.

A sensitivity analysis was conducted using n = 500, 1000 and 2000 to check the stability of the proposed archetypal framework. The analysis showed that the three behavioural archetypes remained robust, with minimal natural variation. This confirmed that the archetype framework remains consistent even when the sample size is increased (as summarised in Figure 5).

Building on these findings, the fourth phase of the research employed fsQCA to transcend the limitations of linear analysis and uncover the complex configurational sets driving employee compliance behaviour for cybercrime prevention. The fsQCA results (Tables 5 and 6) revealed a highly robust solution, with a strong overall solution consistency of 0.934 and a coverage of 0.922. A central finding was the principle of equifinality, as multiple distinct configurations were identified as sufficient for both high and low future CI. This demonstrates that there is no single path to compliance or deviation; rather, it is the intricate interplay and combination of conditions, such as high SHS combined with low policy reactance (PR) leading to high FCI, or high NT combined with low RS leading to low FCI, that consistently produces a specific outcome. These findings move beyond simply stating that one variable predicts another and instead provide a nuanced, holistic ‘recipe’ of the conditions under which a specific outcome is likely to occur.

The results also contradict previous research on employee CI, in which habit has consistently been found ineffective in predicting employee policy CI.44,57 We argue instead that it is the configuration sets that lead variables to respond differently across settings. For instance, in our case, the configuration with unique coverage involves high SHS. Therefore, a variable’s impact established through traditional statistical measures may be significant in one setting but cannot be treated as conclusive. This challenges the generalisability of a variable’s impact from a set-theoretic perspective, on which basis we further argue that it is important to identify conceptual sets (configurations), rather than simply treating variables as having independent effects, to fully understand a variable’s practical relevance.

This study makes several key theoretical contributions. First, it introduces and empirically validates an integrated model that synthesises concepts from established behavioural theories (e.g. protection motivation theory, psychological reactance theory and habit theory) to explain the complex phenomenon of employee compliance with cybersecurity policies. Second, the use of agentic AI is timely, given its recent emergence and capabilities. This technology enabled the prompted agents to run simulations across multiple environments, acting as multiple agents at the same time. Such simulations can exhibit characteristics that mimic real-world cases and provide solutions in contexts where data collection is difficult owing to the restricted or confidential nature of the work and the non-disclosure agreements in place.

Finally, by using fsQCA, the research challenges the predominant reliance on linear, variable-centric methods in security behaviour research, demonstrating the value of a configurational approach for understanding complex social phenomena. The findings reveal that set configurations interact in a non-additive, interconnected manner, which traditional statistical techniques are less equipped to capture.

The practical implications of this research are substantial. The identification of three distinct employee archetypes argues against a ‘one-size-fits-all’ approach to cybersecurity policy and training. Instead, organisations need to focus on developing targeted interventions tailored to each group. For instance, for resisters, efforts should focus on reducing policy reactance and challenging NTs. For negotiators, by contrast, policy enforcement and training should emphasise the usability of secure alternatives to address their pragmatic concerns. The fsQCA results further support this by providing a precise roadmap for interventions. For example, knowing that a specific combination of conditions is sufficient for non-compliance allows managers to target multiple root causes simultaneously, thereby developing more effective and resource-efficient mitigation strategies.

6. Conclusion

This research sought to identify the configurations that foster employee CI with cybersecurity policies within e-government services. Through a novel four-phase methodology integrating agentic AI simulations, grounded theory, ANOVA and fsQCA, we developed a grounded theory, anchored its relevance in the literature, identified three distinct employee archetypes (i.e. embracers, negotiators and resisters) and uncovered the specific causal recipes that lead to compliance within these groups.

Situating these findings within a broader methodological context, this study points to the value of multi-agent modelling within cybersecurity contexts. Our research demonstrates the significance and impact of a multi-method design, where the integration of simulation, qualitative analysis and configurational methods overcomes the limitations of a single approach, yielding more holistic insights.

Furthermore, we advocate for the expanded use of agentic AI simulations as a key component of this multi-method toolkit. This approach enables researchers to rigorously investigate complex, sensitive or difficult-to-replicate real-world scenarios in a controlled environment. By leveraging well-prompted AI agents, we can explore multifaceted problems efficiently, saving significant time and resources while mitigating the risks associated with sensitive and challenging inquiries in field-based research.

By introducing a novel hybrid architecture that leverages agentic AI to simulate the influence of management-driven behavioural strategies on cybersecurity policy adherence, this study has not only developed an empirically grounded model of employee cybersecurity behaviour but has also provided a new framework for understanding the complex dynamics that drive it. The findings offer a compelling argument for moving beyond simple linear causality and embracing a more holistic, configurational perspective to address cybersecurity challenges effectively.

Future research would benefit from adopting longitudinal designs to observe changes in behaviour over time and from exploring the generalisability of the proposed model, with its variables and archetypes, to other sectors. It would be especially promising to compare such cybersecurity simulation studies across different contexts (e.g. health care vs finance vs education). Future fsQCA research could also explore the causal configurations for each archetype more deeply to understand the conditions under which they shift towards either compliance or deviation. Looking ahead, further work on agentic AI and its evolution into AI super agents promises to open uncharted routes into studying resilience and agility in multi-agent modelling and simulation frameworks.

Footnotes

Appendix 1

Appendix 2

Acknowledgements

Northumbria University’s Rights Retention Policy supports authors in asserting their rights in their published research. The policy confirms that members of academic staff own the copyright to their scholarly works and grant a licence to the University to make the author accepted manuscript (AAM) available in the Northumbria Research Portal (Pure) under a Creative Commons licence (CC BY).

Ethical considerations

This article does not contain any studies with human or animal participants.

Consent to participate

There are no patients or participants in this study. Therefore, consent to participate and consent to publication are not applicable and were not required for this study.

Consent for publication

All authors have read and agreed to the submitted version of the manuscript.

Funding

The authors received no financial support for the research, authorship and/or publication of this article.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship and/or publication of this article.

Data availability

All data sets that support the findings of this study are attached.