Abstract

Centralized security architectures and conventional encryption methods are often too cumbersome for edge computing environments, where lightweight, efficient, real-time processing is essential. This study proposes a lightweight data protection framework for secure edge transmission based on IOTA Masked Authenticated Messaging (IOTA-MAM). The solution uses IOTA to enhance data integrity, prevent tampering, and protect privacy at the edge. The MAM protocol is optimized for more efficient batch message generation, delivery, and verification, which reduces computational and communication burdens, particularly for resource-constrained edge devices. Under high load (2400 edge nodes managing 80,000 tasks), the system achieves an 85.7% recall rate, an 11.2% task failure rate, and an average service latency of 6.5 s. The results indicate that the proposed framework balances security, throughput, and latency, providing robust privacy and integrity guarantees while reducing operational overhead. This work contributes a practical and scalable solution that meets the security and responsiveness demands of modern edge computing applications.

Introduction

With the proliferation of 5G networks and explosive growth in Internet of Things (IoT) devices, edge computing 1 has become a key enabler of digital transformation, particularly in the Industrial Internet of Things (IIoT). Offloading data processing tasks to the network edge reduces bandwidth consumption, enhances resource allocation efficiency, and improves real-time responsiveness. These advantages have made edge computing increasingly vital in data-intensive and latency-sensitive scenarios. However, the frequent transmission of data across edge nodes and the integration of diverse, heterogeneous devices have introduced substantial challenges in terms of security and privacy.

Mainstream edge security solutions2,3 are widely adopted; however, they exhibit three significant limitations when applied to resource-constrained edge environments. First, centralized security architectures impose substantial communication overhead and are vulnerable to single points of failure, contradicting the distributed and autonomous design principles of edge computing. Second, traditional encryption algorithms, such as RSA 4 and AES, 5 are computationally intensive and impose considerable processing burdens on low-power edge devices. Third, blockchain6,7 and other distributed ledger technologies (DLTs) enhance data integrity and traceability through decentralized consensus mechanisms. However, their reliance on energy-intensive consensus protocols, such as proof of work, and on linear block structures leads to excessive power consumption, low throughput, and high latency, making them unsuitable for practical deployment in edge settings.

In contrast to traditional blockchain systems, lightweight DLTs based on directed acyclic graphs (DAGs) 8 offer superior scalability and efficiency, making them well-suited for edge environments in the IoT. These DAG-based systems support parallel transaction processing, eliminate transaction fees, and do not require miners, making them an appealing alternative for resource-constrained edge nodes. IOTA 9 stands out as a representative implementation among them. It adopts an asynchronous consensus mechanism in which each new transaction validates two previous ones, significantly reducing energy consumption and transaction latency while enhancing scalability.

The lightweight, decentralized, and privacy-friendly design of IOTA is well-suited for edge scenarios. However, its deployment in complex edge environments faces three significant issues. First, the batch strategy of Masked Authenticated Messaging (MAM) is fixed, which limits its responsiveness to real-time load changes and affects latency-sensitive applications. 10 Second, although Winternitz one-time signatures are efficient, signing each message individually places a significant load on end devices. 11 Third, verification is typically handled by receivers, which can create computational bottlenecks and degrade edge performance during peak traffic.

Researchers have proposed extensions to the MAM protocol to address these limitations. Abdullah et al. 12 combined MAM with Merkle tree structures to reduce the complexity of the signature chain. Alshaikhli et al. 13 developed a lightweight consensus algorithm that offloads partial verification tasks to edge gateways, enabling hierarchical authentication. However, these approaches are based on fixed configurations and do not dynamically optimize throughput, latency, and energy consumption—an essential requirement in volatile edge environments.

A synthesis of current literature reveals three key limitations in existing edge security schemes: (1) lack of adaptability to dynamic network conditions; (2) absence of a unified optimization framework for end-to-end data protection; and (3) performance trade-offs when enhancing security, which undermines industrial requirements for high throughput and low latency.

To tackle these challenges, this paper leverages the lightweight encryption features of IOTA and proposes an improved MAM-based framework for secure edge data transmission. The protocol guarantees data confidentiality, integrity, and immutability during transmission and storage while maintaining high throughput and efficient message validation. The primary contribution of this work is a lightweight data transmission protection framework for secure edge computing, named the IOTA-MAM Edge Guard Framework. Its key innovations and demonstrated capabilities are threefold:

(1) A novel secure transmission framework integrating optimized IOTA protocols. The framework combines Masked Authenticated Messaging (MAM) for layered encryption with Winternitz One-Time Signatures (W-OTS) for quantum-resistant authentication. This design provides end-to-end confidentiality, integrity, and non-repudiation for data transmitted between edge devices and gateways, establishing a full-link protection mechanism.

(2) A dynamic adaptive optimization engine for efficient resource utilization. At the core of the framework is a hybrid trigger mechanism (message count and time delay) coupled with a Markov-modulated Poisson process (MMPP) 14 model. This engine optimizes batch publishing frequency and size in real time based on network load, achieving a Pareto-optimal balance among throughput, latency, and energy consumption, a critical requirement for volatile edge environments.

(3) Demonstrated scalability and efficiency supporting large-scale deployments. The framework is designed to minimize verification overhead. It achieves constant-time O(1) signature verification at the edge gateway through a pre-shared Merkle root anchor, avoiding linear signature checks. Extensive simulations validate the framework's robustness in a large-scale environment of 2400 edge nodes managing 80,000 tasks, sustaining a transmission rate of over 1200 TPS while maintaining low latency.

The remainder of this paper is structured as follows: Section II reviews the state-of-the-art edge data security technologies. Section III outlines the design of the proposed encryption and scheduling mechanisms. Section IV presents comparative resource scheduling, reliability, and data protection experiments. Section V assesses the system performance. Section VI concludes the paper and discusses directions for future research.

Related work

With the rise of decentralized edge architectures and resource-constrained environments, edge computing increasingly encounters challenges in ensuring real-time performance and data privacy. These issues, exacerbated by device heterogeneity and distributed topologies, have driven research into lightweight security mechanisms for edge environments. This section reviews the key technical pathways for protecting edge data, focusing on four aspects: lightweight encryption, the evolution of distributed ledger technologies, limitations of IOTA-MAM protocols, and corresponding mitigation strategies.

Data encryption and access control in edge computing

Conventional cryptographic algorithms are foundational for ensuring data confidentiality; however, their computational overhead renders them ill-suited for low-power edge nodes. Consequently, recent research has focused on developing lightweight and efficient alternatives. Shahidinejad et al. 15 proposed a lattice-based dynamic access control framework that reduces the average update delay at edge nodes to 32.57 ms and supports real-time privilege updates. Alrashdi et al. 16 proposed an IoT-driven secure communication protocol and introduced the BSCP-SG framework to verify the authenticity of smart meters, reducing communication and computational costs. Zhang et al. 17 introduced a zero-knowledge proof-based authentication mechanism (ZK-Auth) that improves communication efficiency in edge node authentication, achieving approximately 23.4% lower overhead than conventional multi-party authentication schemes. 18 In summary, while these cryptographic advances reduce overhead, their reliance on centralized management often makes them unsuitable for the fully decentralized and dynamic nature of edge computing topologies.

Evolution of DLTs and the lightweight advantages of DAGs

The decentralization and immutability of blockchain 19 make it promising for ensuring data integrity in edge computing. However, consensus protocols based on proof of work are limited by high energy consumption, low throughput, and confirmation delays. Huang 20 introduced an energy management blockchain framework combining Hyperledger Fabric, attribute-based access control, and homomorphic encryption. However, the system remains heavyweight, with a maximum throughput of 45 TPS and latency that increases as the block size grows. Zhang et al. 21 proposed the Nano framework, which uses the Financial Blockchain Shenzhen Consortium Blockchain Open Source (FISCO BCOS) platform to secure data and reaches 50 TPS. Nevertheless, scalability, decentralized revocation, and dynamic access control remain areas for improvement.

To overcome these limitations, researchers have turned to lightweight DAG-based DLTs, 22 of which IOTA is a representative example. IOTA's asynchronous transaction verification, in which each new transaction validates two predecessors, eliminates mining, supports concurrency, and enables scalability beyond 1200 TPS. Ehtisham Ul et al. 23 integrated MAM with lightweight homomorphic encryption to enhance industrial data privacy; however, its static batch strategy reduced throughput under high concurrency. Gangwani et al. 24 incorporated Winternitz One-Time Signatures (W-OTS) into MAM to reduce signing costs by approximately 97%. However, the absence of load-adaptive mechanisms increased latency during traffic surges. These challenges highlight the need for an adaptive DLT framework that dynamically balances throughput, latency, and resource efficiency. Thus, while DAG-based DLTs such as IOTA present a promising alternative to blockchains at the edge, their native protocols still require significant optimization to handle the real-time, adaptive demands of practical edge computing scenarios.

Technical selection justification and comparative analysis of DLT platforms

The resource-constrained edge environment demands that the DLT platform provide ultra-low energy consumption, high throughput, low latency, zero transaction costs, and native data security. We review the mainstream DLT platforms and compare them in Table 1.

Comparison of mainstream distributed ledger technologies (adapted from 25).

Among the platforms compared, IOTA is the only DLT with a natively integrated data security layer, and it is currently the only one that satisfies all four key criteria: energy efficiency, low latency, security, and cost. Its DAG infrastructure, combined with the MAM security layer and energy-efficient W-OTS signing, gives it the best fit for edge-specific requirements. The DAG structure fundamentally avoids the throughput limits and energy waste of traditional blockchains, and the native integration of this infrastructure with the MAM security layer provides a scalable, license-free, zero-cost trust foundation for the framework, whereas the other platforms compromise on at least one of these criteria.

Although 25 provides a general DLT comparison, we have reconfigured the evaluation framework to focus on edge-specific constraints and overall security.

The designation of IOTA as a “lightweight” Distributed Ledger Technology (DLT) stems from fundamental architectural differences that make it uniquely suited for resource-constrained environments compared to traditional blockchains. This lightweight nature is manifested in three core aspects:

Absence of Miners and Transaction Fees: Unlike blockchain platforms that rely on a competitive network of miners/validators to perform computationally intensive proof of work (PoW) or stake funds in proof of stake (PoS) to secure the network, IOTA employs a different consensus mechanism. In the Tangle, each new transaction directly confirms two previous ones. This “users-are-the-validators” model eliminates the need for specialized, energy-intensive miners and consequently removes the requirement for transaction fees. This means edge devices can transmit data without incurring cost or waiting for miner inclusion, making microtransactions and high-volume data streams feasible.

Scalable, Parallel Structure (DAG vs. Linear Blockchain): Traditional blockchains process transactions in linear, sequential blocks, creating a bottleneck in which block size and generation interval limit throughput. IOTA's Tangle, structured as a directed acyclic graph (DAG), allows transactions to be attached asynchronously and in parallel. As network activity increases, more transactions become available for verification, which theoretically increases the network's throughput and reduces confirmation times rather than congesting the network. This parallel processing capability is critical for the high-volume, low-latency demands of edge and IoT networks.

Energy Efficiency: The computational effort required for a device to issue a transaction in IOTA is minimal. The lightweight PoW it performs is not for consensus but for spam prevention and is orders of magnitude less demanding than Bitcoin's or Ethereum's (historically) PoW. This low energy footprint allows it to run efficiently on devices with limited battery and processing power, a prerequisite for most edge and IoT deployments.
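The asymmetry that makes this kind of proof of work useful for spam prevention (costly to produce, cheap to check) can be sketched as follows. This is a hashcash-style illustration under stated assumptions, not IOTA's implementation: IOTA historically used the ternary Curl-P hash with a minimum weight magnitude counted in trailing zero trits, whereas this sketch uses SHA-256 and counts trailing zero bits. The function names and difficulty values are hypothetical.

```python
import hashlib

def trailing_zero_bits(digest: bytes) -> int:
    """Count trailing zero bits of a digest read as a big-endian integer."""
    value = int.from_bytes(digest, "big")
    if value == 0:
        return len(digest) * 8
    return (value & -value).bit_length() - 1   # index of the lowest set bit

def do_pow(payload: bytes, difficulty: int) -> int:
    """Search for a nonce so that SHA-256(payload || nonce) has `difficulty` trailing zero bits."""
    nonce = 0
    while True:
        digest = hashlib.sha256(payload + nonce.to_bytes(8, "big")).digest()
        if trailing_zero_bits(digest) >= difficulty:
            return nonce
        nonce += 1

def check_pow(payload: bytes, nonce: int, difficulty: int) -> bool:
    """Verification costs a single hash, whatever the difficulty."""
    digest = hashlib.sha256(payload + nonce.to_bytes(8, "big")).digest()
    return trailing_zero_bits(digest) >= difficulty


if __name__ == "__main__":
    payload = b"sensor-reading:23.5C"
    nonce = do_pow(payload, difficulty=12)
    print(nonce, check_pow(payload, nonce, 12))
```

Each extra bit of difficulty doubles the expected number of hashes the sender must compute, while verification stays at one hash. A small difficulty can therefore keep the cost low for an honest device while making floods of junk transactions expensive.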

These architectural choices—feeless transactions, parallel processing, and low energy consumption—are why IOTA is classified as “lightweight” in Table 1 and form the foundational rationale for its selection as the underlying DLT for our proposed edge security framework.

System design

This paper proposes a lightweight data protection framework based on IOTA-MAM to address these gaps. The design introduces a hybrid-trigger mechanism and a Merkle tree-based batch verification framework to optimize publishing frequency, batch size, and computational load. Unlike static MAM channels, the proposed hybrid-triggering model adjusts data publishing in real time based on message volume and latency thresholds, thereby maintaining scalability under high concurrency. The Merkle-based batch signing scheme reduces the overhead of frequent cryptographic operations, and offloading verification to edge gateways improves overall system responsiveness. Simulation results indicate a Pareto-optimal balance among throughput, latency, and energy efficiency, providing a practical and evolvable protection strategy for resource-constrained edge environments.

The proposed framework comprises three main entities, while the other simulation modules remain unchanged.

Data Source & MAM Publisher (Edge Device 1): Handles data collection, initial processing, and MAM-based layered encryption using AES-256-GCM with dynamically generated session keys. It creates batches through a hybrid trigger system and signs the BatchHash with W-OTS before submitting it to the IOTA Tangle via a restricted MAM channel.

MAM Subscriber & Verifier (Edge Gateway): Serves as a trusted intermediary that subscribes to MAM channels on the IOTA Tangle DAG ledger. It performs batch verification using Merkle root anchors, consolidates validated data, and handles decryption with pre-shared side keys.

Data Consumer (Edge Device 2): Receives decrypted data streams from the gateway and verifies end-to-end authenticity using authentication tags embedded in MAM messages.

Preliminaries

Before presenting the proposed system, we first offer a brief overview of the relevant technologies.

IOTA's ledger data is organized in a directed acyclic graph (DAG) called the Tangle, which serves as a lightweight distributed ledger and consensus mechanism. Transactions are signed with the Winternitz one-time signature (W-OTS) scheme, and the design is feeless, scalable, and provides strong security. The DAG forms the core of IOTA: the Tangle stores transactions, the first transaction (on the left) is called the genesis, and the gray square on the right represents the most recent transaction. Each transaction is a vertex in the graph; when a new transaction is added to the Tangle, it selects two previous transactions to approve, adding two new edges to the graph. As shown in Figure 1, the transactions directly approved by transaction 2 are marked in red, and all transactions it approves directly or indirectly are marked in blue.

Tangle structure in IOTA.
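The attachment rule described above can be illustrated with a toy Tangle. This is a simplified sketch, not IOTA node software: tips are chosen uniformly at random (IOTA's reference tip selection used a weighted Markov chain Monte Carlo random walk), transactions carry no payload or signature, and all names are hypothetical. `approved_by` computes the set of transactions that a given transaction approves directly or indirectly, the distinction drawn by the colors in Figure 1.

```python
import random

class ToyTangle:
    """Toy DAG ledger: each new transaction approves two existing tips."""

    def __init__(self, seed: int = 0):
        self.rng = random.Random(seed)
        self.approves = {0: ()}   # tx id -> ids it directly approves; 0 is the genesis
        self.tips = {0}           # transactions not yet approved by any other transaction

    def attach_round(self, arrivals: int) -> list:
        """Attach `arrivals` transactions that all see the same tip set.

        Sharing one snapshot models network delay: concurrent issuers do not see
        each other's transactions, which is what lets the Tangle widen.
        """
        snapshot = sorted(self.tips)
        new_ids = []
        for _ in range(arrivals):
            tx = len(self.approves)
            if len(snapshot) >= 2:
                parents = tuple(self.rng.sample(snapshot, 2))
            else:
                parents = (snapshot[0], snapshot[0])   # only one tip: approve it twice
            self.approves[tx] = parents
            new_ids.append(tx)
        approved = {p for tx in new_ids for p in self.approves[tx]}
        self.tips = (self.tips - approved) | set(new_ids)
        return new_ids

    def approved_by(self, tx: int) -> set:
        """Every transaction that `tx` approves, directly or indirectly."""
        seen, stack = set(), list(self.approves[tx])
        while stack:
            parent = stack.pop()
            if parent not in seen:
                seen.add(parent)
                stack.extend(self.approves[parent])
        return seen


if __name__ == "__main__":
    tangle = ToyTangle(seed=42)
    for _ in range(4):
        tangle.attach_round(arrivals=3)
    last = max(tangle.approves)
    print("directly approved by", last, ":", sorted(set(tangle.approves[last])))
    print("directly or indirectly:", sorted(tangle.approved_by(last)))
    print("current tips:", sorted(tangle.tips))
```

Because every transaction reaches the genesis through its approvals, each new attachment adds (indirect) confirmation weight to a growing part of the ledger, which is why more activity tends to speed up confirmation rather than congest the network.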

MAM is IOTA's second-layer data transmission protocol. It securely transmits, stores, and retrieves encrypted data streams through the Tangle, with no limit on data size. MAM uses a Merkle tree-based signature scheme to sign cryptographic digests of encrypted messages, protecting data integrity and privacy. Restricted mode extends private mode with an authorization key, and NextRoot is the pointer that links each message to the next one. The side key is used only to decrypt masked messages: parties without it can locate messages through the root but cannot read their contents. The address at which messages are published is the hash of the authorization key and the Merkle root. The publisher can therefore stop using an authorization key without changing its channel ID (i.e., the Merkle tree), so a subscriber's access rights can be revoked when necessary. When such a key change occurs, the new authorization key must be distributed to all parties allowed to follow the stream. The protocol is illustrated in Figure 2.

Masked authenticated messaging diagram.

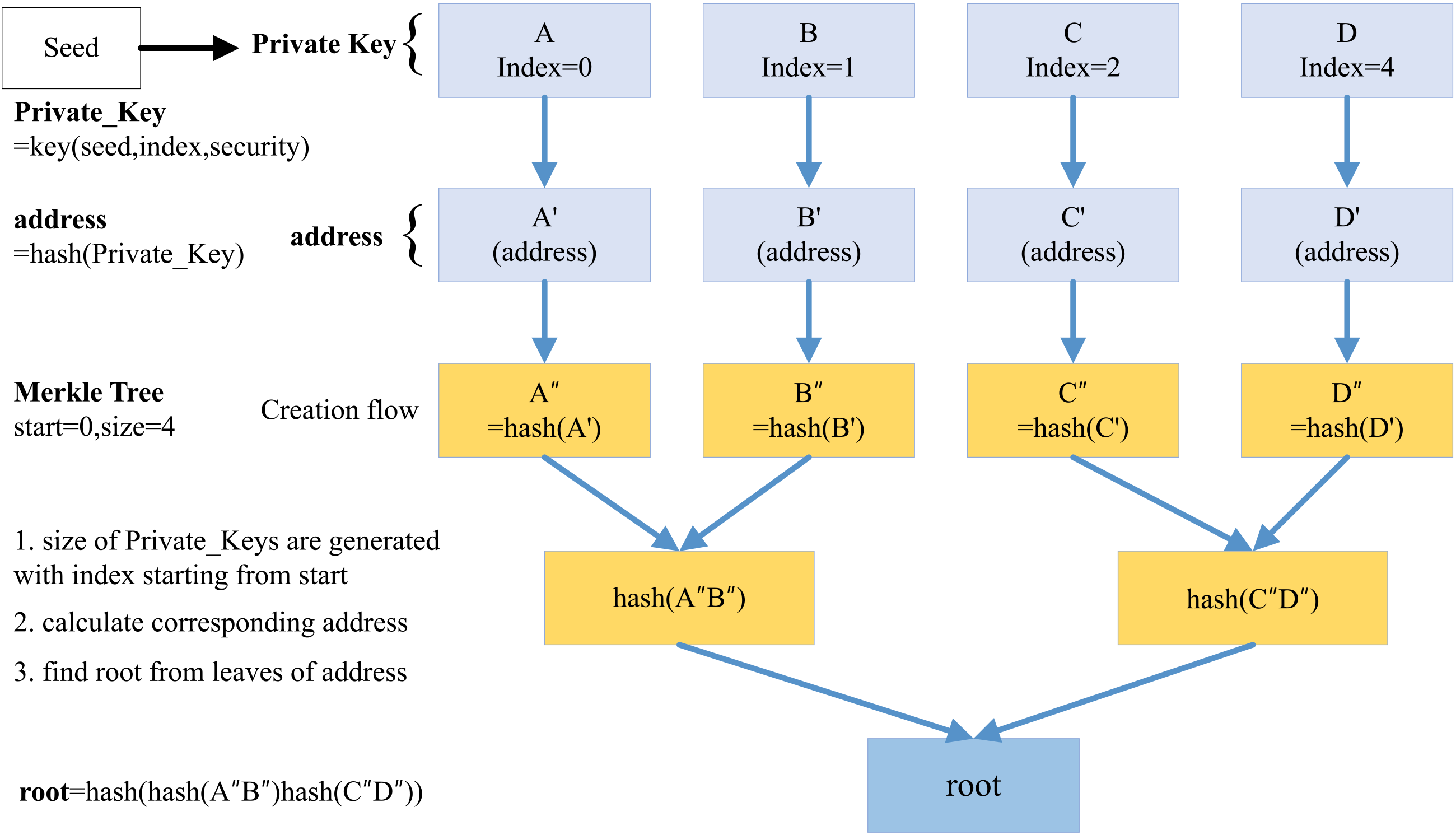
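The link between the Merkle root, the authorization key, and the publishing address described above, and why changing the key revokes access without changing the channel ID, can be sketched as follows. The sketch assumes the address rules of the original MAM design (public mode: address = root; private mode: address = hash(root); restricted mode: address = hash(authorization key, root)) and treats the restricted-mode authorization key as the side key. SHA-256 stands in for IOTA's own hash functions, and `channel_address` is a hypothetical helper, not part of any IOTA library.

```python
import hashlib

def channel_address(root: bytes, mode: str, side_key: bytes = b"") -> bytes:
    """Hypothetical helper: address at which a MAM message with this root is published."""
    if mode == "public":
        return root                                        # anyone who knows the root can find it
    if mode == "private":
        return hashlib.sha256(root).digest()               # the ledger never exposes the root
    if mode == "restricted":
        if not side_key:
            raise ValueError("restricted mode requires a side key")
        return hashlib.sha256(side_key + root).digest()    # needs both the root and the key
    raise ValueError(f"unknown mode: {mode}")


if __name__ == "__main__":
    root = hashlib.sha256(b"channel-merkle-root").digest()
    old = channel_address(root, "restricted", b"side-key-v1")
    new = channel_address(root, "restricted", b"side-key-v2")
    # Rotating the key moves the stream to new addresses while the root
    # (the channel ID) stays the same, so holders of only the old key lose track of it.
    print(old != new)
```

In this model a subscriber who knows the root but not the current key cannot even compute where the next message lives, which is what makes key rotation an effective revocation mechanism.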

A Merkle tree is a hash tree: its leaf nodes contain hashes of data blocks, and its non-leaf nodes contain hashes of their child nodes, so the root represents the entire data set. This hierarchical structure allows the integrity of a small portion of the data to be verified without processing the whole data set, which improves performance. In MAM, the Merkle tree has two integer parameters, start and size, which specify the index of the first address derived from the seed and the number of addresses in the tree. A, B, C, and D are the private keys generated at indices 0, 1, 2, and 3; A′, B′, C′, and D′ are the corresponding addresses; and A″, B″, C″, and D″ are the hashes of those addresses. These leaf hashes are combined in pairs and hashed again, level by level, until only the root remains. Because hashing is one-way, the underlying content cannot be recovered from the root. The structure is shown in Figure 3.

Merkle tree structure diagram.
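The bottom-up construction in Figure 3, and the way a verifier checks one leaf against a known root, can be sketched in a few lines. This is a generic binary Merkle tree under stated assumptions: SHA-256 replaces MAM's own hash, odd levels are padded by duplicating the last node, and the byte strings `A'` to `D'` merely stand in for the seed-derived addresses. Function names are hypothetical.

```python
import hashlib

def h(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()

def build_levels(leaves: list) -> list:
    """Return all tree levels, from the hashed leaves (A'', B'', ...) up to the root."""
    level = [h(leaf) for leaf in leaves]
    levels = [level]
    while len(level) > 1:
        if len(level) % 2:                      # duplicate the last node on odd levels
            level = level + [level[-1]]
        level = [h(level[i] + level[i + 1]) for i in range(0, len(level), 2)]
        levels.append(level)
    return levels

def auth_path(levels: list, index: int) -> list:
    """Sibling hashes needed to recompute the root from leaf `index`."""
    path = []
    for level in levels[:-1]:
        padded = level + [level[-1]] if len(level) % 2 else level
        path.append(padded[index ^ 1])          # sibling sits at the neighbouring index
        index //= 2
    return path

def verify_leaf(leaf: bytes, index: int, path: list, root: bytes) -> bool:
    """Recompute the root from one leaf and its authentication path."""
    node = h(leaf)
    for sibling in path:
        node = h(node + sibling) if index % 2 == 0 else h(sibling + node)
        index //= 2
    return node == root


if __name__ == "__main__":
    addresses = [b"A'", b"B'", b"C'", b"D'"]    # stand-ins for the addresses in Figure 3
    levels = build_levels(addresses)
    root = levels[-1][0]
    proof = auth_path(levels, 2)                # prove that C' belongs to the tree
    print(len(proof), verify_leaf(b"C'", 2, proof, root))   # prints: 2 True
```

The proof contains one sibling hash per tree level, so a verifier holding only the root can confirm membership of any single leaf without seeing the other leaves.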

The Winternitz one-time signature scheme signs a message by applying a one-way function a message-dependent number of times to each element of a fresh private key, so each key pair is used for exactly one signature. Compared with Lamport signatures, it requires fewer private-key elements, producing smaller keys and signatures at the cost of additional hash evaluations, which makes it efficient and suitable for resource-constrained environments. It resists forgery as long as no key is ever reused, which is why a new key is generated for every signature.
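The hash-chain idea behind W-OTS can be made concrete with a compact sketch of the textbook scheme. Assumptions: SHA-256 as the one-way function, Winternitz parameter w = 16 over a 256-bit digest (64 message digits plus 3 checksum digits), and no IOTA-specific details (IOTA's own variant operates on trits with its Kerl hash). All names are illustrative.

```python
import hashlib
import os

W = 16            # Winternitz parameter: each chain has W - 1 hash steps
L1 = 64           # 256-bit digest / 4 bits per base-16 digit
L2 = 3            # checksum digits: the maximum checksum 64 * 15 = 960 fits in 3 hex digits
L = L1 + L2

def chain(x: bytes, steps: int) -> bytes:
    """Apply SHA-256 `steps` times."""
    for _ in range(steps):
        x = hashlib.sha256(x).digest()
    return x

def digits(message: bytes) -> list:
    """Base-16 digits of the message digest followed by the base-16 checksum digits."""
    d = hashlib.sha256(message).digest()
    msg = [nibble for byte in d for nibble in (byte >> 4, byte & 0x0F)]
    checksum = sum(W - 1 - m for m in msg)
    csum = [(checksum >> (4 * i)) & 0x0F for i in reversed(range(L2))]
    return msg + csum

def keygen():
    sk = [os.urandom(32) for _ in range(L)]
    pk = [chain(s, W - 1) for s in sk]          # public key = end of each chain
    return sk, pk

def sign(sk: list, message: bytes) -> list:
    """Walk chain i forward by digit b_i. Each key pair must sign only one message."""
    return [chain(s, b) for s, b in zip(sk, digits(message))]

def verify(pk: list, message: bytes, sig: list) -> bool:
    """Finish each chain; a valid signature lands exactly on the public key."""
    return all(chain(s, W - 1 - b) == p for s, b, p in zip(sig, digits(message), pk))


if __name__ == "__main__":
    sk, pk = keygen()
    sig = sign(sk, b"batch-hash")
    print(verify(pk, b"batch-hash", sig), verify(pk, b"tampered", sig))
```

The checksum is what prevents forgery: advancing any message digit lowers the checksum, which would require running some chain backwards. It does not protect against key reuse, since two signatures under one key expose enough chain positions to enable forgeries; hence one key per signature, with a Merkle tree binding many one-time public keys to a single root.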

Side key is a pre-shared symmetric key that encrypts messages within the MAM protocol, ensuring that only authorized nodes holding the key can decrypt and read the data.

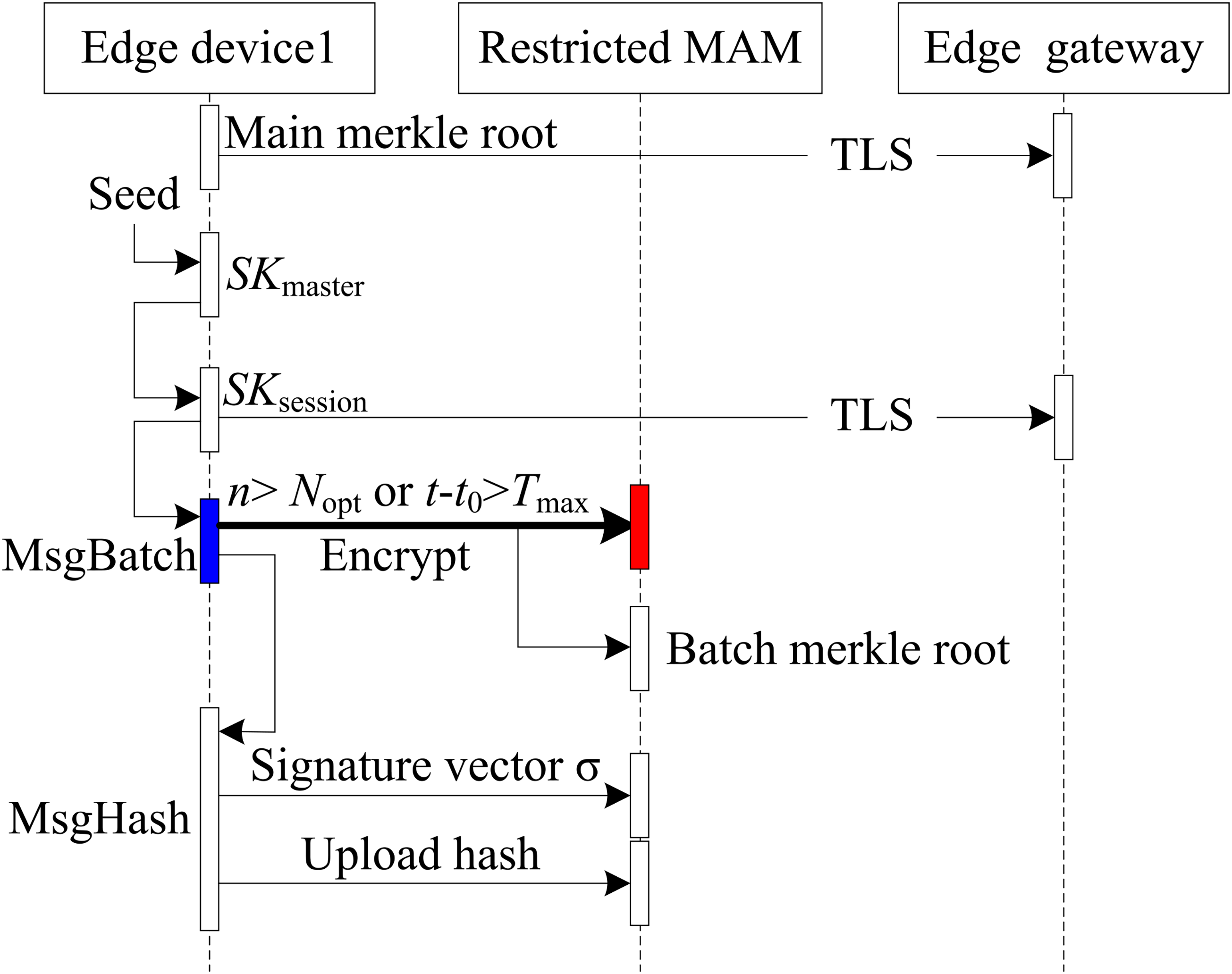

The lightweight security framework proposed for edge computing is built on the MAM protocol and a hybrid-trigger model (Figure 4). It aims to achieve high throughput, low latency, and efficient data protection in IIoT environments.

Encrypted data transmission architecture.

The framework incorporates the lightweight distributed ledger technology of IOTA and the MAM protocol to facilitate secure and efficient data transmission. Merkle root values and side keys ensure that only authorized parties can access the transmitted data. A dynamic hybrid-triggering strategy is introduced to balance real-time responsiveness with batch-processing efficiency. AES-256-GCM encryption and W-OTS are used in the security layer to enable low-cost, batch-wise authentication. Furthermore, the Tangle network of IOTA provides a decentralized, parallel verification mechanism, enhancing the integrity and tamper resistance of data in transit.

For clarity, the framework's operation is described in three phases that cover the entire data life cycle, from creation to trusted use.

Phase 1: Device boot-up and channel preparation

When the edge node (Edge Device 1) is powered on for the first time, the system performs the following initialization:

The Merkle root (the anchor for subsequent batch signatures) and the side key (used for subsequent data encryption) are derived from the device's unique IOTA seed, as indicated by label ① in Figure 4. The side key is pre-shared with the authorized Edge Device 2 via an out-of-band secure channel. This step establishes the trust anchors on which the integrity and confidentiality of all subsequent communication in Figure 4 depend.

Phase 2: Data generation & secure packaging

The Batch Construction module groups multiple raw data items into batches according to the hybrid trigger strategy (Figure 4, label ②).

The framework computes the batch hash and generates a one-time signature using W-OTS anchored to the Merkle root. At the same time, it encrypts the entire batch using the side key and AES-256-GCM (Figure 4, labels ③ and ④). The encrypted payload, together with its signature and timestamp, is encapsulated into a MAM message and published to the IOTA Tangle (Figure 4, label ⑤). This stage turns the requirement of balancing real-time performance and security under high concurrency and limited computing resources into concrete operations.

Phase 3: Downstream verification & consumption

Edge Device 2 receives the message through its MAM subscription (Figure 4, label ⑥). The edge gateway verifies the W-OTS signature locally, which requires only a single comparison with the pre-stored Merkle root, an O(1) operation (Figure 4, label ⑦). After verification succeeds, the pre-shared side key is used to decrypt the payload, and integrity is checked with the AES-GCM tag (Figure 4, label ⑧). Finally, trusted plaintext is delivered to the upper-layer application, closing the loop on end-to-end integrity, confidentiality, and non-repudiation.

The framework operates across three functional layers to achieve its lightweight and secure design goals. At the access control layer, side-key encryption ensures that only authorized endpoints can communicate end to end. At the integrity assurance layer, pre-distributed Merkle roots reduce the complexity of batch verification from O(n) to O(1). At the adaptive optimization layer, the hybrid-trigger mechanism adjusts the batch size in response to real-time network load, ensuring a Pareto-optimal balance between throughput (>1200 TPS) and latency (<500 ms), which makes the framework suitable for resource-constrained IoT edge environments.

Figure 5 shows the batch construction and secure publishing workflow. Once the trigger conditions are satisfied, the system executes the secure transmission protocol, ensuring that each data batch is reliably delivered from the edge device to the distributed ledger and verified by subscribed nodes.

Batch encryption and publishing workflow.

Batch construction

A dynamic hybrid-triggering strategy is introduced for efficient batch construction to meet the high throughput and low latency requirements of edge computing. Assume that the edge device

Here,

This paper formulates a nonlinear constrained optimization model that determines the optimal batch size to maximize system throughput, as in Equation (4), subject to the device energy consumption budget

Given the dynamic load, this paper adopts a Markov-modulated Poisson process (MMPP) to model network traffic and defines the batch queuing delay as in Equation (5), where

To guarantee the real-time performance of data delivery, we define the maximum waiting time before a batch is released as

Key parameters for batch construction.

The above model and optimization strategy adapt dynamically to changes in the edge computing environment, tuning the parameters under dynamic load to balance throughput, energy consumption, and delay. After batch construction, the system enters the layered encryption phase to ensure data confidentiality.
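The behavior of the hybrid trigger under bursty traffic can be explored with a small simulation. This sketch does not implement Equations (4) and (5); it assumes a two-state MMPP (a quiet state and a burst state, each with its own Poisson arrival rate and exit rate) and a trigger that releases a batch as soon as it holds `n_max` messages or its oldest message has waited `t_max` seconds. All names and numeric values are illustrative assumptions rather than the settings in the key-parameter table.

```python
import random

def mmpp_arrivals(rates, switch, horizon, seed=0):
    """Arrival times of a two-state Markov-modulated Poisson process.

    rates[s]  -- Poisson arrival rate while in state s (messages/s)
    switch[s] -- rate of leaving state s for the other state (1/s)
    """
    rng = random.Random(seed)
    t, state, times = 0.0, 0, []
    while True:
        total = rates[state] + switch[state]
        t += rng.expovariate(total)
        if t >= horizon:
            return times
        # Competing exponentials: the next event is an arrival or a state switch.
        if rng.random() < rates[state] / total:
            times.append(t)
        else:
            state = 1 - state

def hybrid_batches(arrivals, n_max, t_max):
    """Release a batch when it holds n_max messages OR its oldest message has waited t_max."""
    batches, current = [], []
    for t in arrivals:
        # Time trigger: the oldest message's deadline passed before this arrival.
        if current and t - current[0] >= t_max:
            batches.append((current, current[0] + t_max))
            current = []
        current.append(t)
        # Count trigger.
        if len(current) == n_max:
            batches.append((current, t))
            current = []
    if current:                                  # flush the tail at its deadline
        batches.append((current, current[0] + t_max))
    return batches

def mean_wait(batches):
    """Average time each message spends waiting for its batch to be released."""
    waits = [release - t for msgs, release in batches for t in msgs]
    return sum(waits) / len(waits)


if __name__ == "__main__":
    arrivals = mmpp_arrivals(rates=(50.0, 800.0), switch=(0.5, 2.0), horizon=60.0, seed=7)
    for n_max, t_max in [(32, 0.2), (128, 0.2), (128, 0.05)]:
        b = hybrid_batches(arrivals, n_max, t_max)
        print(f"n_max={n_max:4d} t_max={t_max:.2f}  batches={len(b):5d}  mean wait={mean_wait(b):.4f} s")
```

A larger `n_max` amortizes one signature over more messages during bursts, while `t_max` caps the wait of every message during quiet periods; this is the throughput and latency trade-off that the optimization model is meant to tune.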

Layered encryption

The proposed framework adopts a layered encryption architecture that combines symmetric encryption with lightweight data masking to ensure data confidentiality while minimizing encryption overhead. First, a temporary session key is derived from a pre-shared master side key
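The derivation of a temporary session key from the master side key can be sketched as follows, under the assumption that it uses HKDF-SHA256 (RFC 5869) with a per-device, per-batch context string. The paper's exact derivation function, the context layout, and the function names here are assumptions made for illustration.

```python
import hashlib
import hmac

def hkdf_sha256(ikm: bytes, salt: bytes, info: bytes, length: int = 32) -> bytes:
    """HKDF (RFC 5869) with SHA-256: extract a pseudorandom key, then expand it."""
    prk = hmac.new(salt or b"\x00" * 32, ikm, hashlib.sha256).digest()       # extract
    okm, block, counter = b"", b"", 1
    while len(okm) < length:                                               # expand
        block = hmac.new(prk, block + info + bytes([counter]), hashlib.sha256).digest()
        okm += block
        counter += 1
    return okm[:length]

def session_key(master_side_key: bytes, device_id: str, batch_index: int) -> bytes:
    """Hypothetical per-batch AES-256 key bound to the device and the batch index."""
    info = b"mam-session|" + device_id.encode() + b"|" + batch_index.to_bytes(8, "big")
    return hkdf_sha256(master_side_key, salt=b"", info=info, length=32)


if __name__ == "__main__":
    master = bytes(32)                       # placeholder for the pre-shared side key
    print(session_key(master, "edge-device-1", 0).hex())
    print(session_key(master, "edge-device-1", 1).hex())
```

Binding the batch index into the context gives every batch a distinct key while the receiver, holding the same master side key, can recompute it without extra messages. The 32-byte output would serve as the AES-256-GCM key; GCM itself is not in the Python standard library, but the third-party `cryptography` package provides it as `AESGCM`.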

Dynamic signature generation

W-OTS is used for batch authentication owing to its lightweight nature and quantum resistance, which avoids the vulnerability of ECDSA 37 to quantum computing threats. The signature binds

First, the one-time signature key is derived from the edge terminal's master seed following the BIP-32 hierarchical deterministic wallet standard. For the j-th signature operation of device

Second, the hash is divided into blocks and verified. According to the National Institute of Standards and Technology, the block length balances security and efficiency. With the Winternitz parameter

The third step is signature generation and optimization. The corresponding signature fragment
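The first of these steps, deriving per-signature key material from the device's master seed following BIP-32, can be sketched with the standard library. This implements only BIP-32's master-key generation and hardened child private-key derivation. The path m/i′/j′ (device i, signature j) and the idea of feeding the resulting 32-byte child key into W-OTS key generation are assumptions made for illustration, not details confirmed by the text. Hardened derivation is used because it needs no elliptic-curve point arithmetic.

```python
import hashlib
import hmac

# Order n of the secp256k1 group; BIP-32 adds private keys modulo n.
N = 0xFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFEBAAEDCE6AF48A03BBFD25E8CD0364141
HARDENED = 0x80000000

def master_key(seed: bytes):
    """BIP-32 master key: HMAC-SHA512 keyed with b"Bitcoin seed"."""
    i = hmac.new(b"Bitcoin seed", seed, hashlib.sha512).digest()
    k = int.from_bytes(i[:32], "big")
    if k == 0 or k >= N:
        raise ValueError("invalid master key; use a different seed")
    return k, i[32:]                                       # (private key, chain code)

def ckd_priv_hardened(k_par: int, c_par: bytes, index: int):
    """BIP-32 hardened child private-key derivation (requires index >= 2**31)."""
    if index < HARDENED:
        raise ValueError("only hardened indices are supported in this sketch")
    data = b"\x00" + k_par.to_bytes(32, "big") + index.to_bytes(4, "big")
    i = hmac.new(c_par, data, hashlib.sha512).digest()
    il = int.from_bytes(i[:32], "big")
    k_child = (il + k_par) % N
    if il >= N or k_child == 0:
        raise ValueError("invalid child key; BIP-32 says to proceed with the next index")
    return k_child, i[32:]

def derive_path(seed: bytes, path: list):
    """Follow a path of hardened indices, e.g. [i, j] for m/i'/j'."""
    k, c = master_key(seed)
    for idx in path:
        k, c = ckd_priv_hardened(k, c, idx + HARDENED)
    return k, c


if __name__ == "__main__":
    seed = bytes.fromhex("000102030405060708090a0b0c0d0e0f")
    device, signature_counter = 3, 0
    key, _ = derive_path(seed, [device, signature_counter])
    print(key.to_bytes(32, "big").hex())    # 32-byte secret for one signature
```

Because derivation is deterministic, a device only needs to store its seed and a signature counter; incrementing the counter yields a fresh one-time key, which is exactly what W-OTS requires.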

Trusted ledger commitment

The encrypted batch

Subscription-based verification

The authorized subscribing node verifies the validity of the signature through the pre-synchronized Merkle Root

Finally, the authentication Tag (Equation (10)) is verified. If

Figure 6 shows the message verification and reception phase when the edge gateway acts as the data subscriber. Upon receiving a batch publication notification via the MAM channel, the gateway performs the following procedures to verify source authenticity and data integrity:

1. Cryptographic Integrity Validation: First, the gateway retrieves the published batch message from the Tangle distributed ledger. Subsequently, it performs cryptographic verification using the preconfigured Merkle root associated with the publisher's identity. The gateway traverses the Merkle tree structure of the publisher's public key to validate the one-time signature of the batch hash. Two essential conditions must be met for the batch to be considered authentic: (a) The signing key must belong to a valid sub-branch derived from the trusted Merkle root. (b) The signature must comply with the predefined Winternitz-OTS parameters.

Verification flowchart of the edge gateway as the data receiver.

Successful verification confirms that the data originates from a trusted source and has not been altered in transit. The batch hash is then recorded to prevent replay attacks and redundant processing, and the verification status register is updated accordingly.

2. Structured Reporting and Secure Decryption: Following successful verification, the gateway generates a structured verification report, which includes a unique batch identifier (a hash or global index), the publisher's Merkle root, and a binary verification flag (Verified = True/False). The gateway invokes the pre-shared Side Key (SK) to initiate layered decryption for validated batches (Verified = True), restoring the plaintext messages for downstream processing. In addition, the authentication tag (Tag) is verified during decryption to ensure message integrity.

The system initiates a secure response protocol when verification fails (Verified = False). The unverified batch is promptly discarded, an entry is logged in the audit, and a replay-attack alert is activated. This process prevents invalid or potentially malicious data from infiltrating the application layer, thereby maintaining the trusted execution environment of the system.
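The gateway-side procedure described above, covering the signature check against the trusted root, the replay registry keyed by batch hash, the structured report, and the discard-and-log path, can be sketched as follows. It is a control-flow sketch under explicit assumptions: the signature check is injected as a function, and `toy_check` is an HMAC placeholder used only so the example runs; decryption of accepted batches with the side key is omitted; class and field names are hypothetical. The replay registry is consulted before the signature, since a replayed batch would otherwise pass the signature check.

```python
import hashlib
import hmac
from dataclasses import dataclass, field
from typing import Callable

@dataclass
class VerificationReport:
    batch_id: str        # hex batch hash, used as the unique batch identifier
    merkle_root: str     # publisher's trusted Merkle root (hex)
    verified: bool

@dataclass
class Gateway:
    trusted_root: bytes
    # (batch_hash, signature, root) -> bool; stands in for W-OTS + Merkle-path checking
    check_signature: Callable[[bytes, bytes, bytes], bool]
    seen: set = field(default_factory=set)          # hashes of batches already accepted
    audit_log: list = field(default_factory=list)   # (batch_id, reason) for rejected batches

    def handle(self, payload: bytes, signature: bytes) -> VerificationReport:
        batch_hash = hashlib.sha256(payload).digest()
        batch_id = batch_hash.hex()
        if batch_hash in self.seen:
            reason = "replay"              # accepted once already: treat as a replay
        elif not self.check_signature(batch_hash, signature, self.trusted_root):
            reason = "bad-signature"
        else:
            self.seen.add(batch_hash)      # record so later copies are rejected
            return VerificationReport(batch_id, self.trusted_root.hex(), True)
        # Failure path: the batch is dropped (never decrypted) and an audit entry is kept.
        self.audit_log.append((batch_id, reason))
        return VerificationReport(batch_id, self.trusted_root.hex(), False)

# Placeholder check so the sketch runs; an HMAC is NOT a substitute for W-OTS signatures.
DEMO_KEY = b"demo-only-key"

def toy_check(batch_hash: bytes, signature: bytes, root: bytes) -> bool:
    expected = hmac.new(DEMO_KEY, batch_hash + root, hashlib.sha256).digest()
    return hmac.compare_digest(signature, expected)


if __name__ == "__main__":
    root = hashlib.sha256(b"publisher-merkle-root").digest()
    gw = Gateway(root, toy_check)
    batch = b"encrypted-batch-001"
    sig = hmac.new(DEMO_KEY, hashlib.sha256(batch).digest() + root, hashlib.sha256).digest()
    print(gw.handle(batch, sig).verified)   # accepted
    print(gw.handle(batch, sig).verified)   # same batch again: rejected as a replay
    print(gw.audit_log)
```

Keeping the replay registry and the audit log on the gateway means terminal devices only ever see batches that already carry `verified = True`, which matches the division of labor described for Figures 6 and 7.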

When the edge gateway performs only batch message verification and other terminals serve as data receivers, Figure 7 illustrates the corresponding subscription verification process. Verified messages are forwarded to the target terminal for decryption, while the gateway's MAM channel state and batch hash table are updated simultaneously. The edge gateway sends a structured verification-result message to the associated terminal device or upper-layer application. This message contains the following key fields: the unique batch identifier (a hash value or global index code), the publisher's Merkle root, and the binary verification status flag (verified = true/false). The subscriber device performs layered decryption on successfully verified batches to recover the original plaintext message for subsequent processing, verifying the authentication tag (Tag) in parallel to ensure data integrity. If verification fails (verified = false), the system activates the security handling protocol: the abnormal batch data packet is immediately discarded, a security event record is logged, and a replay-attack alert is generated.

Verification flow chart of the terminal as a data receiver.

Through the workflow above, the system ensures that edge devices receive and process only data that have been authenticated and remain untampered. Furthermore, eavesdroppers cannot interpret the data content without the corresponding side key. The batching mechanism significantly reduces the computational load on edge devices by amortizing cryptographic operations and aggregating communication overhead: each batch requires a single signature (instead of one signature per message) and corresponds to a single ledger transaction.

Meanwhile, the hybrid trigger strategy maintains near real-time responsiveness, avoiding excessive waiting. When the data generation rate is low, the time threshold trigger ensures timely batch publication. This design balances throughput and latency, allowing the system to adapt to changing network conditions while maintaining a stable performance baseline.

Simulation experiments

Experimental setup

System environment: This study implements the proposed IOTA-based framework on the DeepEdge edge computing simulation platform, developed by Baris Yamansavascilar 38 on top of EdgeCloudSim. Comparative evaluations against baseline configurations validate the proposed approach in terms of data security, system overhead, and operational efficiency.

System configuration: DeepEdge is based on the EdgeCloudSim simulator 39 and is configured with 14 edge servers, each containing eight virtual machines (VMs) rated at 10 GIPS, along with a cloud server with 4 VMs rated at 100 GIPS. The platform accommodates dynamic task generation for up to 2400 mobile devices, with task types that include augmented reality and health monitoring. The double deep Q-Network algorithm enhances task scheduling and resource allocation during high-load conditions.

Parameter settings: The parameter configurations for the simulation environment are consistent with those defined in the original DeepEdge framework to ensure fair comparison, as listed in Table 3. Experimental results are also compared for benchmarking with those generated using the simulation setup proposed by Sonmez et al., 40 which is similarly built on EdgeCloudSim and adopts a Fuzzy Workload Orchestration algorithm for resource scheduling.

Table 3. Simulation parameters.

Evaluation metrics: The evaluation metrics are the recall rate, service latency, task failure rate, and overall service time. Using these metrics, the proposed solution is compared with the Fuzzy-based orchestration scheme and the original DeepEdge framework; note that neither baseline incorporates data security or privacy protection.

Recall rate

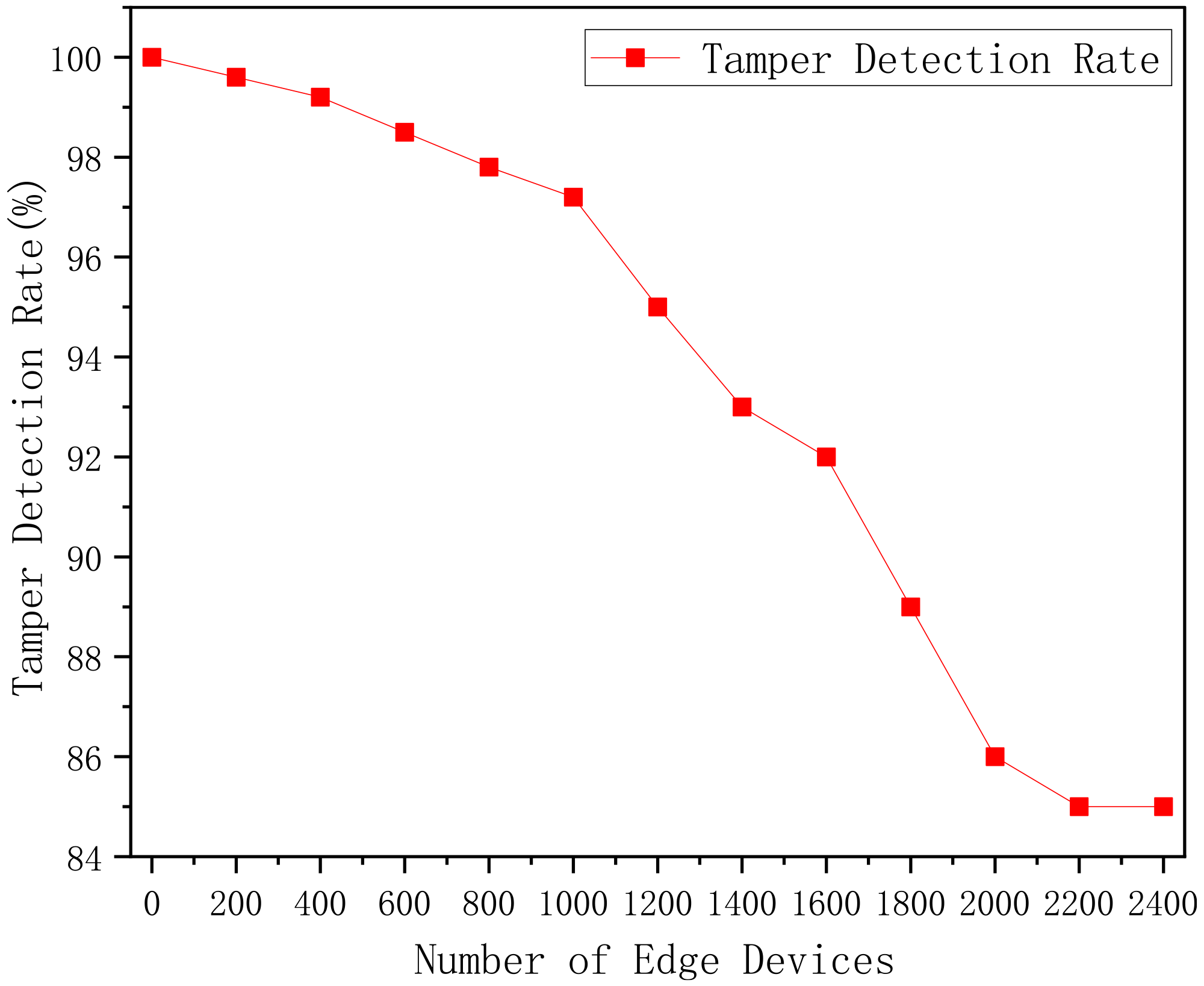

The recall rate evaluates the capability of the system to detect maliciously tampered data, and a tampering injection test was designed for this purpose. In each simulation round, a fixed proportion (10%) of task requests was randomly selected as maliciously modified samples. The injected tampering operations involved manipulating task data content, forging or altering one-time signatures, and modifying data hash values.

The tampered tasks were published via the IOTA MAM channel, where such manipulations cause digital signature and integrity verification to fail. In the proposed scheme, the edge node performs batch-level security verification after receiving a task: it checks the correspondence between the MAM one-time signature and the Merkle tree hash, performs AES-256-GCM decryption and validates the authentication tag, and cross-checks the batch sequence number and other auxiliary integrity fields. If any of these steps fails, the task is marked as “tampered” and rejected without processing. The recall rate is calculated as

$$\mathrm{Recall} = \frac{TP}{TP + FN}$$

where TP is the number of tampered tasks that are correctly detected and rejected, and FN is the number of tampered tasks that pass verification undetected.
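The tampering-injection test can be mimicked with a small harness that seals every task, corrupts a fixed 10% of them, and measures recall as the fraction of corrupted tasks that are rejected. This is a sketch under stated assumptions: HMAC-SHA256 stands in for the paper's AES-256-GCM tag and one-time signature checks, and `skip_prob` is a hypothetical knob modelling verifications that never complete because the edge node is overloaded.

```python
import hashlib
import hmac
import random

KEY = bytes(32)  # hypothetical shared key, standing in for the MAM side key

def seal(payload: bytes) -> dict:
    """Attach an integrity tag. HMAC-SHA256 is a stand-in for the paper's
    AES-256-GCM tag and one-time signature checks."""
    return {"payload": payload, "tag": hmac.new(KEY, payload, hashlib.sha256).digest()}

def verify(task: dict) -> bool:
    expected = hmac.new(KEY, task["payload"], hashlib.sha256).digest()
    return hmac.compare_digest(expected, task["tag"])

def recall_experiment(n_tasks: int = 1000, tamper_ratio: float = 0.10,
                      skip_prob: float = 0.0, seed: int = 7) -> float:
    """Tamper with a fixed fraction of tasks and return TP / (TP + FN).
    skip_prob (hypothetical) is the chance a verification is skipped under overload."""
    rng = random.Random(seed)
    tasks = [seal(f"task-{i}".encode()) for i in range(n_tasks)]
    tampered = rng.sample(range(n_tasks), int(n_tasks * tamper_ratio))
    for i in tampered:
        tasks[i]["payload"] += b"!"          # modify content after sealing
    tp = fn = 0
    for i in tampered:
        verified = rng.random() >= skip_prob  # False -> check skipped
        if verified and not verify(tasks[i]):
            tp += 1                           # tampering detected and rejected
        else:
            fn += 1                           # tampered task slipped through
    return tp / (tp + fn)

print(recall_experiment())                 # 1.0
print(recall_experiment(skip_prob=0.15))   # below 1.0 because some checks were skipped
```

With every check executed, recall is 1.0; recall drops only when verifications are skipped, which mirrors the load-dependent behavior described for Figure 8 without claiming to reproduce its numbers.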

Figure 8 shows that the recall rate decreases from 100% to 85.7% as the number of edge devices increases from 0 to 2400. Under low load, the combination of MAM batch encryption, W-OTS signatures, and the intelligent scheduling strategy of DeepEdge yields near-perfect recall. However, as the system load intensifies, the hybrid-triggered batching model is activated more frequently, causing resource contention at edge nodes. Some verifications then fail to complete because of insufficient computing or network capacity, which reduces detection effectiveness. These findings indicate that under high concurrency, detection performance is constrained by the processing capacity of edge gateways and the verification latency of the MAM protocol.

Figure 8. Recall test.

Overall task failure rate

The task failure rate is the proportion of tasks that are not completed under a given system load. Tasks fail when the arrival rate exceeds the computational capacity of the edge and cloud resources. In such cases, a task either violates its maximum tolerable latency (a service level agreement (SLA) violation) or is dropped because resources are exhausted; in both cases it is counted as failed.
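The two failure conditions can be expressed directly as a classification over a task trace. The record layout below is a hypothetical illustration, not DeepEdge's log format: a task fails if it was dropped (no finish time) or if its completion latency exceeds its SLA bound.

```python
from dataclasses import dataclass
from typing import Iterable, Optional

@dataclass
class TaskRecord:
    submit_time: float
    finish_time: Optional[float]   # None means the task was dropped
    max_latency: float             # maximum tolerable latency from the SLA

def is_failed(t: TaskRecord) -> bool:
    if t.finish_time is None:                              # dropped (resources exhausted)
        return True
    return t.finish_time - t.submit_time > t.max_latency   # SLA violation

def failure_rate(records: Iterable[TaskRecord]) -> float:
    records = list(records)
    return sum(is_failed(r) for r in records) / len(records) if records else 0.0

trace = [
    TaskRecord(0.0, 0.4, 0.5),    # completed within its SLA
    TaskRecord(0.0, 0.9, 0.5),    # completed, but too late: SLA violation
    TaskRecord(0.0, None, 0.5),   # dropped
    TaskRecord(1.0, 1.2, 0.5),    # completed within its SLA
]
print(failure_rate(trace))   # 0.5
```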

In the simulation, each edge device issues 35 task requests, and the failure rate is recorded as the number of edge devices increases from 0 to 2400. Figure 9 shows that under low load, the task failure rate of all three schemes is approximately zero, indicating that all submitted tasks are processed promptly. As the number of edge devices grows, the overall computational load rises and the failure rate increases accordingly. Nevertheless, the proposed scheme maintains a favorable trend: with 2400 edge devices, its task failure rate is 11.2%, a 22.5% reduction compared with the original DeepEdge baseline. These results indicate that the scheme preserves task deliverability while adding data security, even under high load.

Figure 9. Task failure rate test.

Overall service time

The overall service time is the total duration of a task, measured from the moment a terminal device issues it until its execution completes within the edge computing system. This metric captures both network transmission delay and processing time at edge and cloud nodes, making it a comprehensive indicator of system-level service efficiency under varying load. The proposed lightweight, distributed ledger-based security framework was evaluated on the DeepEdge simulation platform to assess how the added data protection mechanisms affect service latency.

Figure 10 presents the results, which reflect the additional security verification overhead introduced by integrating the lightweight distributed ledger technology of IOTA. Although batch signature generation and Merkle tree–based validation were optimized to reduce the computational burden, the essential cryptographic operations (data encryption, signing, and verification) inevitably increase processing time. Moreover, under high load the hybrid-triggered batching strategy causes noticeable queuing delays as data accumulate, and computational bottlenecks at edge nodes and gateways further increase the overall service time. By contrast, the DeepEdge and Fuzzy-based baselines achieve shorter service times under high load, but they incorporate no data security mechanisms.
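Why queuing delay grows so sharply once a gateway saturates can be seen from Lindley's recursion for a single first-come-first-served server. This sketch assumes, hypothetically, that a gateway verifies one batch at a time with a fixed verification time; the arrival gaps and service time are illustrative values, not DeepEdge parameters.

```python
from typing import List

def waiting_times(gaps: List[float], service: List[float]) -> List[float]:
    """Lindley recursion for a single FIFO server:
    W[0] = 0 and W[n+1] = max(0, W[n] + S[n] - A[n]),
    where S[n] is job n's service time and A[n] the gap before job n+1 arrives."""
    w = [0.0]
    for n, gap in enumerate(gaps):
        w.append(max(0.0, w[n] + service[n] - gap))
    return w

S = 0.05                                         # illustrative verification time per batch (s)
light = waiting_times([0.10] * 9, [S] * 10)      # batches arrive slower than they are verified
heavy = waiting_times([0.04] * 9, [S] * 10)      # batches arrive faster than they are verified
print(max(light), round(max(heavy), 2))          # 0.0 0.09
```

Below capacity, no batch ever waits; above capacity, each arrival inherits the backlog of the previous one, so waiting time grows linearly with the number of queued batches.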

Figure 10. Overall service time.

Failure rate of multi-source heterogeneous application tasks

To evaluate the scheduling adaptability and robustness of each scheme across task types under a unified scheduling strategy, we statistically analyzed, under different load conditions in the simulation environment, the failure rates of four task categories: augmented reality (AR), pervasive health (PH), image rendering (IR), and infotainment (IF). Figure 11 shows the corresponding performance curves.

Figure 11. Variation in the number of failed tasks for different application types. IOTA + DeepEdge completes more tasks successfully than the other approaches as the number of mobile devices in the network increases. (a) Augmented Reality APP. (b) Pervasive Health APP. (c) Image Rendering APP. (d) Infotainment APP.

The data in this figure are not obtained by running each task type independently but from a unified multi-task environment with a task ratio of AR:PH:IR:IF = 3:3:2:2; for each device count, the failure rate of each task type is extracted separately. The four task types differ significantly in latency sensitivity, resource consumption, and task generation frequency; Table 4 lists the relevant indicators.
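The "one mixed run, split afterwards" procedure can be sketched as follows. The 3:3:2:2 mix is taken from the text; sampling task types with `random.choices` and the `(app_type, failed)` trace format are assumptions made for illustration.

```python
import random
from collections import Counter
from typing import Dict, Iterable, List, Tuple

MIX = {"AR": 3, "PH": 3, "IR": 2, "IF": 2}   # AR:PH:IR:IF = 3:3:2:2, as stated

def sample_task_types(n: int, seed: int = 0) -> List[str]:
    """Draw n task types for one mixed workload according to MIX."""
    rng = random.Random(seed)
    return rng.choices(list(MIX), weights=list(MIX.values()), k=n)

def per_type_failure_rate(trace: Iterable[Tuple[str, bool]]) -> Dict[str, float]:
    """trace holds (app_type, failed) pairs from ONE mixed run; rates are split afterwards."""
    total: Counter = Counter()
    failed: Counter = Counter()
    for app, did_fail in trace:
        total[app] += 1
        failed[app] += int(did_fail)
    return {app: failed[app] / total[app] for app in total}

counts = Counter(sample_task_types(10_000))
print({k: round(v / 10_000, 2) for k, v in counts.items()})   # shares close to 0.3/0.3/0.2/0.2
trace = [("AR", False), ("AR", True), ("IR", True), ("IR", True), ("PH", False)]
print(per_type_failure_rate(trace))   # {'AR': 0.5, 'IR': 1.0, 'PH': 0.0}
```

Because all four types compete for the same VMs in a single run, each per-type rate reflects contention with the others, which is what distinguishes this measurement from running each application alone.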

Table 4. Application properties in the simulator.

The experiment compares the fine-grained task failure rates of the proposed scheme against those of the DeepEdge and Fuzzy schemes by simulating the execution of four task types across varying numbers of mobile devices. Figure 11 reveals that under light-load conditions with fewer than 1800 terminal devices, all three scheduling strategies can maintain task failure rates below 1%, demonstrating strong environmental adaptability and baseline scheduling performance.

However, as the number of mobile terminals increases, particularly in medium-to-high-load scenarios exceeding 1800 devices, the performance gap among the schemes gradually widens. With 2400 terminals, the proposed scheme still handles heterogeneous tasks effectively, keeping the failure rate of every task type below 17%, which indicates good scalability and robustness. In contrast, the DeepEdge scheme reaches a 52% failure rate for image rendering tasks, and the Fuzzy scheme reaches 24.5% for pervasive health tasks. These results suggest that the proposed scheme uses resources more efficiently and remains stable under large-scale terminal access and diverse task demands, while balancing real-time responsiveness and security.

Results and discussion

This section analyzes the performance of the edge computing system after integrating IOTA-based data security mechanisms, focusing on three key evaluation metrics: recall rate, task failure rate, and overall service time, under varying load conditions.

Analysis of the recall rate

Each simulation round injected 10% tampered task requests in a controlled manner to assess the ability of the system to detect malicious data. The system maintained a high recall rate as the number of edge devices increased to 1000. Beyond this threshold, however, the growing data volume increased the number of false negatives, and the recall rate dropped to approximately 85.7% at 2400 devices.

Under low load, the combination of MAM one-time signatures and Merkle tree–based batch verification allowed the system to detect all tampered tasks promptly, maintaining near-perfect detection performance. As the load increased, the hybrid-triggered batch processing mechanism was activated more frequently, constraining the computational resources and network bandwidth at the edge nodes. This occasionally resulted in delayed or incomplete verification, causing some tampered tasks to go undetected.

Nonetheless, the recall rate remained above 85% despite heavy load, indicating robust security. In contrast, the baseline DeepEdge and Fuzzy-based schemes lacked built-in data security mechanisms and could not detect data tampering. This demonstrates that the proposed scheme significantly enhances data integrity protection in edge computing environments.

Analysis of task failure rate

The task failure rate measures the proportion of tasks that are not completed within the time constraints defined by their SLAs. Under low load, the failure rate remained below 1%. Under high load (2400 devices), the failure rate of the system integrated with the MAM mechanism was 11.2%, an improvement of approximately 20% over the original DeepEdge scheme, whereas the Fuzzy-based scheme reached 17.5%.
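For the comparison with the Fuzzy-based scheme, the absolute and relative reductions follow directly from the two reported failure rates:

$$\Delta_{\text{abs}} = 17.5\% - 11.2\% = 6.3\ \text{percentage points}, \qquad \Delta_{\text{rel}} = \frac{17.5 - 11.2}{17.5} = 0.36$$

That is, for the same number of submitted tasks, the proposed scheme fails about 36% fewer tasks than the Fuzzy-based scheme. Stating both forms avoids confusing a difference in percentage points with a relative change.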

This improvement can be attributed to two key factors. First, the batch processing mechanism reduces computational overhead by avoiding repetitive signing operations, thereby freeing edge computing resources. Second, the pre-verification mechanism of MAM filters out illegitimate tasks before scheduling, preventing them from consuming valuable computational capacity. Distributed subscription and ledger-based audit tracking also enhance the fault tolerance and recovery capability of the system. Unlike the baseline systems, which suffer resource congestion under high load as invalid tasks accumulate, the proposed scheme achieves a higher task completion rate, confirming that it maintains task deliverability while enhancing data security.
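The pre-verification step amounts to an admission filter placed in front of the scheduler queue. The sketch below is hypothetical: the task tuple and the `verify` callback are placeholders for the MAM signature and integrity checks described earlier, and the point is only that rejected tasks are logged for audit instead of occupying scheduling or VM capacity.

```python
from collections import deque
from typing import Callable, Deque, Iterable, List, Tuple

Task = Tuple[str, bytes]   # (task_id, payload); simplified stand-in

def admit(incoming: Iterable[Task],
          verify: Callable[[Task], bool]) -> Tuple[Deque[Task], List[str]]:
    """Verify tasks before they reach the scheduler queue. Rejected task IDs are
    returned for audit logging and never consume scheduling or VM capacity."""
    queue: Deque[Task] = deque()
    rejected: List[str] = []
    for task in incoming:
        if verify(task):
            queue.append(task)
        else:
            rejected.append(task[0])
    return queue, rejected

incoming = [("t1", b"ok"), ("t2", b"bad"), ("t3", b"ok"), ("t4", b"bad")]
queue, rejected = admit(incoming, verify=lambda t: t[1] == b"ok")  # hypothetical check
print(len(queue), rejected)   # 2 ['t2', 't4']
```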

Overall service time analysis

The overall service time includes the network transmission, scheduling, and execution time of each task. Because integrating the MAM protocol adds encryption and signature operations, service time increased moderately. All three schemes performed comparably under low load, with the Fuzzy-based scheme slightly better; under medium load, the proposed scheme performed similarly to DeepEdge.

This parity under medium load was mainly due to the batching mechanism, which substantially amortized the computational cost of encryption and signing: each batch required one encryption operation and one ledger write. Pre-filtering invalid tasks before scheduling also avoided unnecessary computation and rollback operations, reducing system load. Latency remained low under light load but increased under high load, indicating that the system parameters require further tuning to sustain performance at scale.
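The amortization argument can be written as a simplified per-message cost model. Assume, for illustration only, that each message incurs a per-message cost $C_{\text{msg}}$ (for example, hashing it into the batch's Merkle tree) and that each batch of $B$ messages additionally pays a fixed cost $C_{\text{batch}}$ for its single cryptographic operation and single ledger write. The average cost per message is then

$$\bar{C}(B) = C_{\text{msg}} + \frac{C_{\text{batch}}}{B},$$

compared with $C_{\text{msg}} + C_{\text{batch}}$ when every message is processed and written individually. The fixed overhead per message falls as $1/B$, but larger batches take longer to fill, which is the latency side of the trade-off that the hybrid trigger manages.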

Analysis of failure rates in multi-source heterogeneous application tasks

IOTA uses the MAM protocol. Assuming a 256-bit key, that is, a key space of size 2^256, the cost of a brute-force attack meets NIST security level 3 (L3). The W-OTS signature scheme satisfies existential unforgeability under adaptive chosen-message attacks (EUF-CMA) in the random oracle model. Formally, if there is a probabilistic polynomial-time (PPT) adversary ℱ that can forge a valid signature with advantage

Threat model and defense capability.
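To make the W-OTS primitive concrete, the sketch below implements a plain binary Winternitz one-time signature over SHA-256 with w = 16. It is illustrative only: IOTA's MAM uses its own ternary hash functions and parameters, and the constants here (`N`, `W`, `LEN1`, `LEN2`) are assumptions chosen for readability. The checksum digits are what stop a forger from simply hashing a signature chain further forward, and each key pair must sign exactly one message, which is why the verification step pairs one-time signatures with a Merkle tree hash.

```python
import hashlib
import os
from typing import List, Tuple

N = 32                      # hash output size in bytes (SHA-256)
W = 16                      # Winternitz parameter: each chain has W - 1 hash steps
LOG_W = 4
LEN1 = 8 * N // LOG_W       # 64 base-16 digits of the message digest
LEN2 = 3                    # checksum digits: max checksum 64 * 15 = 960 < 16**3
LEN = LEN1 + LEN2

def chain(x: bytes, steps: int) -> bytes:
    """Apply the hash function `steps` times."""
    for _ in range(steps):
        x = hashlib.sha256(x).digest()
    return x

def digits(msg: bytes) -> List[int]:
    """Base-W digits of H(msg) followed by the base-W digits of its checksum."""
    msg_digits: List[int] = []
    for byte in hashlib.sha256(msg).digest():
        msg_digits += [byte >> 4, byte & 0x0F]
    csum = sum(W - 1 - d for d in msg_digits)
    csum_digits = [(csum >> (LOG_W * i)) & (W - 1) for i in reversed(range(LEN2))]
    return msg_digits + csum_digits

def keygen() -> Tuple[List[bytes], List[bytes]]:
    sk = [os.urandom(N) for _ in range(LEN)]
    pk = [chain(s, W - 1) for s in sk]      # public key = end of each chain
    return sk, pk

def sign(sk: List[bytes], msg: bytes) -> List[bytes]:
    return [chain(s, d) for s, d in zip(sk, digits(msg))]

def verify(pk: List[bytes], msg: bytes, sig: List[bytes]) -> bool:
    """Complete each chain from the signature and compare with the public key."""
    return len(sig) == LEN and all(
        chain(s, W - 1 - d) == p for s, d, p in zip(sig, digits(msg), pk))

sk, pk = keygen()
sig = sign(sk, b"batch-merkle-root")
print(verify(pk, b"batch-merkle-root", sig))   # True
print(verify(pk, b"forged-root", sig))         # False
```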

To evaluate the robustness and generalization capability of the proposed security framework across diverse workload scenarios, we analyzed the failure rates of four representative application categories within a unified multi-task environment. The results indicate that the proposed scheme consistently exhibits lower failure rates than both the original DeepEdge and Fuzzy-based methods, especially under high-load conditions with 2400 edge devices.

Compared to DeepEdge, which demonstrates strong average performance through DDQN-based orchestration, the proposed framework achieves better reliability across application types with varying delay sensitivities and resource footprints. While DeepEdge's performance is hampered by its limited exposure to less frequent task types—especially image rendering—the proposed system mitigates this sensitivity through pre-filtering unschedulable tasks and dynamically adjusting the hybrid-trigger mechanism, thereby reducing unnecessary queuing and VM-level contention.

In particular, the failure rates for all task types remained below 17%, whereas the baseline approaches showed sharp increases under identical load, exceeding 50% in some categories. This improvement is attributable not only to enhanced orchestration but also to the integration of lightweight cryptographic validation and decentralized data integrity protection, which reduced overhead and the task rejections caused by signature or verification errors.

Overall, the framework demonstrates a balanced trade-off between computational efficiency and task-level reliability. Although it does not eliminate failure entirely, it effectively extends system capacity before saturation and avoids early degradation under diverse demand patterns. These findings highlight its potential suitability for deployment in real-world edge environments where task diversity and high concurrency are common.

Conclusion and future work

This study proposes a lightweight, distributed ledger-based framework for data transmission security that uses the IOTA protocol. A novel edge data protection method was designed, featuring a dynamic hybrid-triggering mechanism and a Merkle tree–based batch verification architecture to jointly optimize data integrity, privacy preservation, system throughput, and real-time performance. By introducing a one-time signature scheme and an adaptive batch processing model, the approach significantly reduced the computational and communication overhead of edge devices, thereby overcoming the deployment limitations of conventional blockchain and centralized cryptographic systems in edge scenarios.

Even under an ultra-high load of 2400 devices, the proposed scheme achieved a recall rate of 85.7% and a task failure rate of 11.2% while keeping the overall service time under 6.5 s. These results highlight the robustness, scalability, and engineering adaptability of the solution. Compared with existing edge security schemes, the proposed method enhanced tamper resistance, task deliverability, and system efficiency while reducing dependence on centralized trust and improving the scalability and deployment flexibility of the system. These findings validate the feasibility of lightweight distributed ledger technologies in resource-constrained edge environments. The solution contributes to a secure, trustworthy, and application-oriented paradigm for edge data protection, offering a feasible path toward trusted data management and sharing in industrial edge intelligence.

Future research will focus on integrating AI-driven security optimization strategies. This includes using machine learning to dynamically detect behavioral patterns of edge devices and build proactive intrusion detection and response systems. In addition, we plan to explore multi-channel MAM extensions to improve data processing parallelism under high load. A comparative evaluation between the proposed scheme and conventional blockchain-based frameworks will also be conducted, focusing on energy consumption, scalability, and security overhead metrics to quantify the comprehensive advantages of lightweight ledger architectures. Moreover, pilot deployments are envisioned in real-world industrial scenarios, such as smart factories and remote monitoring systems, to validate the engineering feasibility and application potential of the proposed framework.

Author contributions

All authors contributed to this work. Writing—review and editing, F.L.; writing—original draft preparation, J.C. Methodology, HJ.H. All authors have read and agreed to the published version of the manuscript.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This study was supported by the 2024 Guangdong Polytechnic Institute Teaching Achievement Cultivation Project of the Open University of Guangdong (Project No. 2024CGPY002); the 2025 educational reform project of the Open University of Guangdong (Project No. 2025KCJS011); the Guangdong Philosophy and Social Sciences Planning Greater Bay Area Research Special Project “Research on the Mechanism of Cross-regional Data Compliance Flow in the Guangdong-Hong Kong-Macao Greater Bay Area” (GD23SQGL03); the Guangdong Provincial Science and Technology Plan Project “Research on Precise Policy Implementation Path for High-tech Enterprises Based on Enterprise Exclusive Service Space: Taking Hengqin Guangdong-Macao Deep Cooperation Zone as an Example” (No. 2024A101005002); and the talent project of the Open University of Guangdong, “Research on Key Technologies for Improving the Performance of Blockchain Application Platforms” (Project No. 2021F001).

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data availability statement

All data generated or analyzed during this study are included in this article and its supplementary materials.