Abstract

The decentralized and immutable nature of blockchain technology offers promising avenues for secure healthcare data sharing among legitimate institutions. However, current blockchain systems face critical bottlenecks in handling large volumes of medical data, low storage efficiency, and vulnerabilities in security. To overcome these challenges, a novel healthcare blockchain data-sharing scheme integrating Secure Multi-party Computation (SMPC) is proposed. To mitigate the risk of data leaks resulting from key compromise, Blakley’s secret sharing technique is employed for key distribution management, and Ciphertext-Policy Attribute-Based Encryption (CP-ABE) is integrated for access control. To address the challenge of handling large data on the chain, data that needs to be uploaded to the blockchain is encoded and fragmented using erasure coding techniques. Additionally, a Byzantine Fault Tolerance mechanism based on Reputation and Polling verification (TRP-PBFT) is introduced to enhance data sharing efficiency and system robustness. Simulation experiments conducted on platforms like Fabric demonstrate that this solution improves data sharing efficiency while ensuring data confidentiality and integrity. In comparison to Practical Byzantine Fault Tolerance (PBFT) under similar conditions, TRP-PBFT reduces the average latency from 655.42 ms to 342.22 ms and increases the average throughput from 107.31 TPS to 143.45 TPS. The scheme maintains high throughput and offers relatively stable latency performance.

1. Introduction

Smart healthcare uses digital and intelligent information technology to meet the diverse needs in healthcare. 1 With the widespread adoption of technologies like artificial intelligence, cloud computing, and big data, 2 medical data has grown rapidly, sparking significant concerns about the security management of healthcare data. 3 At present, the management of medical data predominantly relies on centralized systems. 4 However, these systems pose several challenges, including intricate privilege management, the risk of privacy breaches, and the complexity of achieving meaningful data sharing. Therefore, the shift from centralized to distributed medical data sharing systems has become an inevitable trend for providing better medical services. 5

Blockchain technology has introduced innovative solutions for ensuring the secure exchange of medical data. Its decentralized network architecture enhances the resilience of the system against various security threats. However, despite the promising potential of blockchain technology, existing medical data sharing schemes still exhibit significant disadvantages that hinder their practical deployment in large-scale healthcare ecosystems. First, cloud-storage-based medical data sharing technologies face inherent trust and security issues due to their reliance on semi-trusted servers, leaving systems vulnerable to single-point failures and internal data breaches. 6 Second, to circumvent cloud vulnerabilities, many existing blockchain schemes opt to upload complete original user data onto the chain. However, the openness and transparency of generic blockchains inevitably expose highly sensitive electronic health records to the risk of privacy leakage. 7 Third, directly storing massive amounts of medical data on the blockchain results in severe on-chain storage bottlenecks, making existing systems completely incapable of sustaining the continuous influx of modern medical data. Fourth, although various advanced consensus mechanisms 8 and Layer-II protocols 9 have been recently proposed to improve scalability, generic blockchain frameworks still suffer from severe efficiency bottlenecks and unacceptably high communication overheads when strictly required to satisfy the deterministic finality and low-latency demands of multi-institutional medical collaboration.

Motivated by these critical challenges, there is an urgent need for a highly scalable and secure architecture that can simultaneously ensure data confidentiality, alleviate on-chain storage burdens, and achieve high-throughput consensus. This paper aims to design a highly scalable, secure, and efficient healthcare data-sharing architecture to overcome the risk of data leakage in transparent blockchain networks, alleviate the substantial on-chain storage burden caused by raw medical data, and address the performance bottlenecks of traditional consensus mechanisms in large-scale sharing networks. To achieve this goal, this research focuses on combining SMPC and blockchain technology to comprehensively enhance the security, privacy, and efficiency of medical data sharing. Specifically, Blakley secret sharing technology is employed for key distribution management to ensure data confidentiality. Simultaneously, CP-ABE is introduced for access control to mitigate the risk of data leakage. Furthermore, erasure coding techniques are applied to encode and shard the data destined for the blockchain, thereby reducing the storage space requirements for on-chain transactions. Finally, a more secure and efficient Practical Byzantine Fault Tolerance consensus mechanism, referred to as TRP-PBFT, is introduced to enhance sharing efficiency and system robustness.

The primary contributions of this study are as follows:

1) A multiparty secure medical data-sharing model is established and detailed in Section 4. By integrating SMPC with consortium blockchain technology, it provides a decentralized and highly secure framework. Its overall capability to handle large-scale medical datasets is validated through the System Throughput evaluation in Section 8.4.

2) A decentralized key management and fine-grained access control strategy is designed and elaborated in Section 6.2. This strategy combines the Blakley secret-sharing technique with CP-ABE to prevent single points of failure, and its theoretical security is formally analyzed and proven in Section 7.2.

3) An on-chain storage optimization method utilizing erasure-coding techniques is introduced in Section 6.1. Instead of broadcasting massive raw files, this scheme independently shards and encodes the encrypted data to alleviate storage burdens. Its processing efficiency is thoroughly evaluated through the experiments in Section 8.3.

4) A practical Byzantine fault-tolerant mechanism based on reputation and polling verification (TRP-PBFT) is proposed and formulated in Section 5. This mechanism improves system robustness by integrating trust assessment and fast-polling timers. Its performance superiority is quantitatively validated through Consensus Throughput (Section 8.5) and Consensus Latency (Section 8.6) comparisons.

The remaining structure is as follows: Section 2 discusses related work; Section 3 introduces preliminary knowledge; Section 4 describes the system model and workflow of the healthcare blockchain data sharing scheme; Section 5 introduces the proposed improved PBFT consensus in detail; Section 6 describes the key algorithm design of the multi-party secure healthcare blockchain data sharing scheme; Section 7 analyzes and proves the security of the scheme; Section 8 compares and evaluates the performance of various schemes. Finally, a summary and discussion of future work are presented.

2. Related work

2.1. Medical data sharing

Liu 10 proposed a searchable encryption framework for cloud-assisted electronic health record sharing, aiming to address the trust crisis and data untraceability in traditional cloud storage. Nevertheless, reliance on semi-trusted cloud service providers for centralized data management still entails inherent privacy leakage risks. Capraz 11 introduced a secure medical data sharing framework based on public blockchains. However, storing massive amounts of raw medical data directly on a transparent blockchain network creates severe on-chain storage bottlenecks. Similarly, Kaur 12 introduced a secure sharing system combining blockchain, the InterPlanetary File System, and CP-ABE to achieve fine-grained access control. However, executing complex encryption algorithms introduces significant computational overhead, and the lack of a distributed key management mechanism leaves the system vulnerable to single-point key leakage. Nekouie 13 introduced an escrow-less, blockchain-assisted attribute-based encryption scheme for secure medical data sharing in the cloud, utilizing partial private keys to prevent key generation centers from accessing user keys. However, the encryption algorithms still incur high computational overhead, and reliance on centralized cloud storage cannot completely eliminate the inherent risk of single points of failure.

In medical blockchain networks, efficient collaboration depends on robust and scalable architectures. Sunitha 14 introduced a hybrid framework integrating Attribute-Based Access Control with Ethereum blockchain technology to establish a multi-layered security architecture for cloud-based healthcare systems. However, combining multiple complex cryptographic techniques with a traditional public blockchain network still incurs significant computational overhead and faces scalability bottlenecks when handling massive real-time medical data. Expanding the application scope to the medical supply chain ecosystem, Namasudra 15 introduced a blockchain- and Internet of Things-enabled system to ensure the traceability and transparency of medical drugs. However, continuously recording massive amounts of supply chain data directly on the Ethereum network inevitably introduces significant transaction costs and latency. Furthermore, addressing network security during data auditing, Hanumantharaju 16 introduced a distributed intrusion detection architecture combining fog computing, cloud services, and blockchain technology. However, deploying additional fog computing layers and continuous monitoring processes inevitably increases architectural complexity and edge-node resource consumption.

To address availability and partition resistance in cloud resource management, Arias 17 proposed a decentralized architecture utilizing Byzantine-resistant consensus to replace traditional Paxos or Raft data stores. While this approach significantly increases overall system availability during network partitions, applying standard Byzantine consensus directly to large-scale healthcare data sharing still incurs considerable communication overhead and latency. To address the secure exchange of Electronic Medical Records between hospitals, Hasan 18 recently integrated blockchain, the InterPlanetary File System, and proxy re-encryption. While proxy re-encryption enables secure sharing without revealing original data, its complex cryptographic operations inevitably introduce substantial computational overhead. Zhang 19 focused on an attribute-based encryption ciphertext protection strategy, addressing data security during sharing by designing suitable ciphertext and key structures. Sun 20 introduced a secure medical information storage solution utilizing Hyperledger Fabric and Attribute-Based Access Control to guarantee data security through smart contracts. However, storing data directly within the blockchain limits system scalability and poses challenges in handling large-scale data.

2.2. Secure multi-party computation

To establish a trusted execution environment for SMPC, certain researchers have opted to carry out SMPC via a trusted third party. Wu 21 developed a generalized server-assisted secure multiparty framework to facilitate secure execution of collaborative computation tasks within the cloud. This framework enables the analysis of secure multi-party federated datasets in the cloud while safeguarding the privacy of individual datasets during the computing protocol. However, if the trusted third party is attacked or malfunctions, the whole system will not work properly, which may also lead to computation disruption or result in leakage. In addition to this, the trusted third party may collude with malicious parties to leak sensitive information or tamper with computation results.

To solve the potential problems posed by trusted third parties, some researchers have combined blockchain technology with SMPC to reduce the dependence on trusted third parties. The distributed nature and security features of blockchain can furnish a more reliable execution environment for SMPC. Recording the computation tasks and results on the blockchain provides openness, transparency, and immutability of the data, as well as validation and traceability of the computation process. Yang 22 introduced a blockchain-enabled privacy-preserving multiparty computation scheme integrating non-interactive zero-knowledge proofs for public auditing in the industrial Internet of Things. However, executing complex zero-knowledge proofs on the blockchain still incurs significant computational and communication overhead. Li 23 introduced a publicly verifiable secure multiparty computation framework utilizing homomorphic message authentication codes and bulletin boards to detect malicious servers. However, executing complex pairing-based homomorphic commitments still introduces significant computational burdens during the verification phase. Gilbert 24 introduced a privacy solution combining zero-knowledge proofs and SMPC to facilitate collaborative computations in blockchain ecosystems. However, integrating these advanced cryptographic technologies encounters severe challenges related to systemic complexity and scalability.

Although prior research focused on mitigating single points of failure and enhancing privacy using blockchain technology, it often overlooked the intricacies of secret sharing schemes and failed to adequately tackle the storage demands associated with blockchain implementations. Shree 25 introduced a data protection architecture combining blockchain, the InterPlanetary File System, and a secret sharing algorithm to fragment data and resist emerging cryptographic threats in the Internet of Medical Things. However, retrieving and reconstructing fragmented data from public decentralized storage clusters introduces significant communication overhead and system latency. Building upon this, Alam 26 introduced a verifiable multi-secret sharing scheme with a hierarchical access structure to enable authorized participants across different priority levels to reconstruct secrets. However, coordinating participants across multiple hierarchy levels and performing continuous share verification inevitably introduces significant communication delays and computational complexity. Zhang 27 introduced a publicly verifiable secret sharing method combining on-chain audit verification and off-chain secure multiparty computation to enable secure data sharing between edge devices. However, integrating multiple cryptographic primitives such as Pedersen commitments and Beaver triples inevitably introduces substantial computational and communication overhead for resource-constrained edge devices.

Li 28 introduced a layered blockchain-based medical data asset sharing framework integrating zero-knowledge proofs and group signatures to establish a supervisory privacy-preserving mechanism. However, executing complex cryptographic proofs within smart contracts inevitably sacrifices system throughput and introduces additional computational overhead during large-scale concurrent transactions. Lu 29 introduced a personal health record sharing mechanism combining zero-knowledge proofs and a linear secret sharing scheme to verify user attributes while protecting privacy. However, generating complex zero-knowledge proofs during the verification phase produces non-negligible computational latency, and system throughput faces clear performance bottlenecks when handling high-concurrency requests. Borges 30 introduced a secure multi-party computation methodology integrating a blockchain-based platform and the MPyC library to facilitate collaborative clinical trial cohort creation without complex cryptographic key management. However, as a proof-of-concept study operating on partitioned Fast Healthcare Interoperability Resources format data, its reliance on frequent multi-party network interactions inevitably introduces communication latency and scalability bottlenecks when applied to massive real-time medical datasets.

Summary of relevant work.

3. Preliminaries

3.1. Bilinear mapping

A bilinear mapping forms the mathematical foundation for constructing attribute-based encryption algorithms based on the Diffie-Hellman problem. Let

3.2. Ciphertext-Policy Attribute-Based Encryption (CP-ABE)

Ciphertext-Policy Attribute-Based Encryption (CP-ABE) is a fine-grained access control encryption scheme in which the access policy is embedded within the ciphertext, and the user’s attributes are embedded within the user’s key. 31 Decryption is successful only if the user’s attributes satisfy the access policy specified in the ciphertext. The steps in CP-ABE are as follows: The typical architecture and fundamental algorithms of the CP-ABE scheme.
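The decryption condition, that the user's attributes must satisfy the policy carried by the ciphertext, can be illustrated with a small policy-tree check. The sketch below covers only the access-structure logic, not the pairing-based cryptography of CP-ABE. The tuple encoding of the policy and the attribute names are hypothetical choices made for illustration.

```python
def satisfies(policy, attributes):
    """Return True if the attribute set satisfies the policy tree.

    A policy is either an attribute name (leaf) or a tuple
    (gate, child_1, ..., child_n), where gate is "AND", "OR", or an
    integer k meaning "at least k of the children" (threshold gate).
    This encoding is an assumption made for the example.
    """
    if isinstance(policy, str):
        return policy in attributes
    gate, *children = policy
    hits = sum(satisfies(child, attributes) for child in children)
    if gate == "AND":
        return hits == len(children)
    if gate == "OR":
        return hits >= 1
    return hits >= gate  # integer threshold gate


# Hypothetical policy: cardiology staff who are either doctors or head nurses.
POLICY = ("AND", "cardiology", ("OR", "doctor", "head_nurse"))
```

With this policy, a user holding `{"cardiology", "doctor"}` passes the check, while a user holding `{"cardiology", "patient"}` does not. In an actual CP-ABE scheme the same condition is enforced cryptographically rather than by an explicit test.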

3.3. Blakley secret sharing scheme

The Blakley Secret Sharing Scheme is a method of secret sharing that leverages geometric principles. 32 In this scheme, the secret is viewed as the intersection of all hyperplanes in an n-dimensional space, with each hyperplane representing a share of the secret, known as a sub-secret. The steps of this scheme are as follows:
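A minimal sketch of the hyperplane idea, under several assumptions that do not come from the text: arithmetic is done in the prime field GF(2^61 - 1), the secret is stored as the first coordinate of a random point, and any t hyperplanes in t-dimensional space are intersected by Gauss-Jordan elimination. The function names are hypothetical, and the scheme's actual parameter choices may differ.

```python
import random

P = 2**61 - 1  # Mersenne prime used as a toy field size (assumption)


def make_shares(secret, t, n, rng):
    """Hide `secret` as the first coordinate of a random point in GF(P)^t and
    return n hyperplanes (coeffs, b) that all pass through that point."""
    point = [secret % P] + [rng.randrange(P) for _ in range(t - 1)]
    shares = []
    for _ in range(n):
        coeffs = [rng.randrange(1, P) for _ in range(t)]
        b = sum(c * x for c, x in zip(coeffs, point)) % P
        shares.append((coeffs, b))
    return shares


def recover(shares, t):
    """Intersect the first t hyperplanes by Gauss-Jordan elimination mod P."""
    if len(shares) < t:
        raise ValueError("not enough shares")
    rows = [list(c) + [b] for c, b in shares[:t]]
    for col in range(t):
        pivot = next((r for r in range(col, t) if rows[r][col] % P), None)
        if pivot is None:
            raise ValueError("hyperplanes are not independent")
        rows[col], rows[pivot] = rows[pivot], rows[col]
        inv = pow(rows[col][col], -1, P)  # modular inverse (Python 3.8+)
        rows[col] = [v * inv % P for v in rows[col]]
        for r in range(t):
            if r != col and rows[r][col]:
                f = rows[r][col]
                rows[r] = [(v - f * w) % P for v, w in zip(rows[r], rows[col])]
    return rows[0][t]  # first coordinate of the intersection point
```

Calling `make_shares(key, 3, 5, random.Random())` yields five sub-secrets, any three of which are expected to reconstruct `key` through `recover`. Fewer than three hyperplanes leave a line or plane of candidate points instead of a single one.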

3.4. Erasure code scheme

Erasure coding is a robust mathematical method for data protection and storage optimization, with the Reed-Solomon (RS) code being one of its most prominent implementations. 33 In a decentralized or distributed storage system, the erasure code scheme transforms a message into a longer message with redundant data components, ensuring data availability even in the presence of node failures or data loss.
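To make the redundancy idea concrete, the following sketch builds a small systematic Reed-Solomon-style code by polynomial evaluation. It is an illustration under stated assumptions, not the library used in the experiments: it works over the prime field GF(257) so that every byte is a field element, whereas production libraries typically work over GF(2^8), and the names `encode` and `decode` are hypothetical. Any k of the n shards suffice to rebuild the data.

```python
P = 257  # smallest prime above 255, so each byte value is a field element


def lagrange_eval(points, x):
    """Evaluate at x the unique polynomial of degree < len(points)
    passing through `points`, with all arithmetic modulo P."""
    total = 0
    for i, (xi, yi) in enumerate(points):
        num, den = 1, 1
        for j, (xj, _) in enumerate(points):
            if i != j:
                num = num * (x - xj) % P
                den = den * (xi - xj) % P
        total = (total + yi * num * pow(den, -1, P)) % P
    return total


def encode(data, k, n):
    """Split `data` into k data shards and append n - k parity shards.
    Shards are lists of ints because parity symbols may equal 256."""
    padded = data + bytes((-len(data)) % k)
    size = len(padded) // k
    shards = [list(padded[i * size:(i + 1) * size]) for i in range(k)]
    for x in range(k, n):
        shards.append([
            lagrange_eval([(i, shards[i][c]) for i in range(k)], x)
            for c in range(size)
        ])
    return shards


def decode(available, k, length):
    """Rebuild the original bytes from any k shards given as {index: shard}."""
    if len(available) < k:
        raise ValueError("need at least k shards")
    chosen = sorted(available.items())[:k]
    size = len(chosen[0][1])
    out = []
    for i in range(k):  # re-evaluate the polynomial at the data positions
        out.extend(lagrange_eval([(x, s[c]) for x, s in chosen], i)
                   for c in range(size))
    return bytes(out)[:length]
```

For example, with k = 4 and n = 6 the encoded record occupies 1.5 times its original size and survives the loss of any two shards.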

The fundamental principle of an

4. Scheme framework

4.1. System model

To mitigate data leakage risks and bolster overall system security, the proposed model adopts a consortium blockchain architecture specifically implemented on the Hyperledger Fabric platform. The rationale for selecting this network is threefold. First, its permissioned nature ensures that strictly authenticated medical institutions can participate, thereby shielding highly sensitive healthcare data from unauthorized public exposure. Second, by circumventing computationally intensive mining processes, the platform achieves the high transaction-processing throughput and low latency mandated by massive medical datasets. Third, its modular design seamlessly accommodates the deployment of our customized TRP-PBFT consensus mechanism, providing a highly scalable and robust infrastructure for secure healthcare data sharing. Specifically, unlike public blockchains that rely on Proof-of-Work, block generation in our system is not executed through competitive hashing. Instead, transactions are efficiently verified, ordered, and committed by high-reputation consensus nodes utilizing the TRP-PBFT mechanism, ensuring deterministic finality while strictly avoiding any resource-intensive mining process. The system model of the Multi-Party Secure Medical Blockchain Data Sharing Scheme (MHBDS) is depicted in Figure 2. Users are categorized into data owners (DO) and data sharing users (DSU) based on their distinct roles in data sharing transactions. Data sharing model.

The system model of MHBDS primarily consists of three key entities: data owners, data sharing users, and the blockchain storage system. Below is an introduction to each entity:

4.2. System processes

Figure 3 shows the complete interaction process by which the different roles in the system model carry out sharing transactions. System process.

5. Improved PBFT consensus mechanism

5.1. Ideas for improvement

To address the low consensus efficiency, high performance overhead, and limited scalability of the traditional consensus mechanisms previously used in the blockchain consensus phase, 34 as well as the threat that malicious-node intrusion poses to system security and robustness, a Trust-based Reputation Polling Byzantine Fault Tolerance (TRP-PBFT) mechanism built on credibility assessment and polling verification is proposed.

Based on the traditional PBFT consensus mechanism, TRP-PBFT makes the following three improvements:

1) Introducing a trust assessment mechanism in the process of node entry and dynamic adjustment. The credibility of nodes is assessed through their performance scores and network reputation scores, and more reliable nodes are prioritized as consensus nodes to reduce the possibility of malicious node invasion and improve the system’s ability to identify abnormal nodes.

2) Introducing a multi-party signature mechanism in PBFT ensures that the transaction is recognized by at least t different nodes before

3) Introducing a fast-polling method speeds up the process of message delivery and consensus reaching among nodes. The performance and response speed of the PBFT algorithm is improved by introducing a fast-polling timer in the nodes.

5.2. Design and implementation of the improved PBFT consensus mechanism

5.2.1. Trust assessment mechanism design

For the selection of consensus nodes, nodes with elevated reputation values are selected as the primary and backup participants in consensus activities through the introduction of a trust assessment mechanism, and these consensus nodes are utilized to pack data and generate blocks.

5.2.1.1. Performance indicator score

Assuming that node i has a set of performance indicators

X represents the value of the corresponding raw performance metrics, through which the normalized response time

5.2.1.2. Network reputation evaluation score

The network reputation is formed through the evaluation and feedback of the target node by other nodes in the network. Suppose there are

5.2.1.3. Comprehensive trust score

Combining the performance index and network reputation evaluation scores, the final trust calculation formula can be expressed as

5.2.1.4. Tolerance assessment

Set the threshold of trust

5.2.2. Introduce multi-party signature

Decrypt the hash value encrypted by the corresponding private key through the user’s public key, establish a multi-party signature function to generate a multi-party encryption string, and jointly encrypt the transaction information through the multi-party encryption string. The multi-party signature process is shown in Figures 4 and 5. Multi-party signature encryption. Multi-party signature decryption.

Instead of two scalars

By using the multi-party signature function, all the signature verification equations can be summed, and since the verification equations are linear, the sum of several equations is valid as long as all signatures are valid. For example, suppose 10 signatures are required to be verified:
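The summation argument can be sketched with a toy Schnorr-style signature. This is an assumption, since the signature equations themselves are not reproduced here. In multiplicative notation the "sum" of verification equations becomes a product, so one exponentiation of the base covers all signatures. The modulus, base, keys, and nonces below are illustrative and far too small or predictable for real use.

```python
import hashlib

P = 2**127 - 1  # toy prime modulus (assumption, not a secure parameter)
G = 3           # toy base
N = P - 1       # exponents are reduced modulo P - 1


def challenge(R, pub, msg):
    """Hash the commitment, public key, and message to an exponent."""
    digest = hashlib.sha256(f"{R}|{pub}|".encode() + msg).digest()
    return int.from_bytes(digest, "big") % N


def sign(priv, nonce, msg):
    """Schnorr-style signature (R, s) with s = nonce + e * priv."""
    R = pow(G, nonce, P)
    pub = pow(G, priv, P)
    return R, (nonce + challenge(R, pub, msg) * priv) % N


def verify(pub, msg, sig):
    """Single check: G^s == R * pub^e (mod P)."""
    R, s = sig
    return pow(G, s, P) == R * pow(pub, challenge(R, pub, msg), P) % P


def batch_verify(items):
    """Combine all checks: G^(sum of s_i) == product of R_i * pub_i^(e_i)."""
    s_sum, rhs = 0, 1
    for pub, msg, (R, s) in items:
        s_sum = (s_sum + s) % N
        rhs = rhs * R * pow(pub, challenge(R, pub, msg), P) % P
    return pow(G, s_sum, P) == rhs


def demo_items(count=10):
    """Build `count` signed messages with fixed, purely illustrative keys."""
    items = []
    for i in range(count):
        priv, nonce, msg = 1000 + i, 5000 + 7 * i, f"tx-{i}".encode()
        items.append((pow(G, priv, P), msg, sign(priv, nonce, msg)))
    return items
```

If every signature is valid, the combined equation holds. Practical batch verification usually multiplies each equation by a random coefficient before combining, so that errors in different signatures cannot cancel each other out; the plain sum above omits that step for brevity.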

5.3. Consensus process of the improved PBFT consensus mechanism

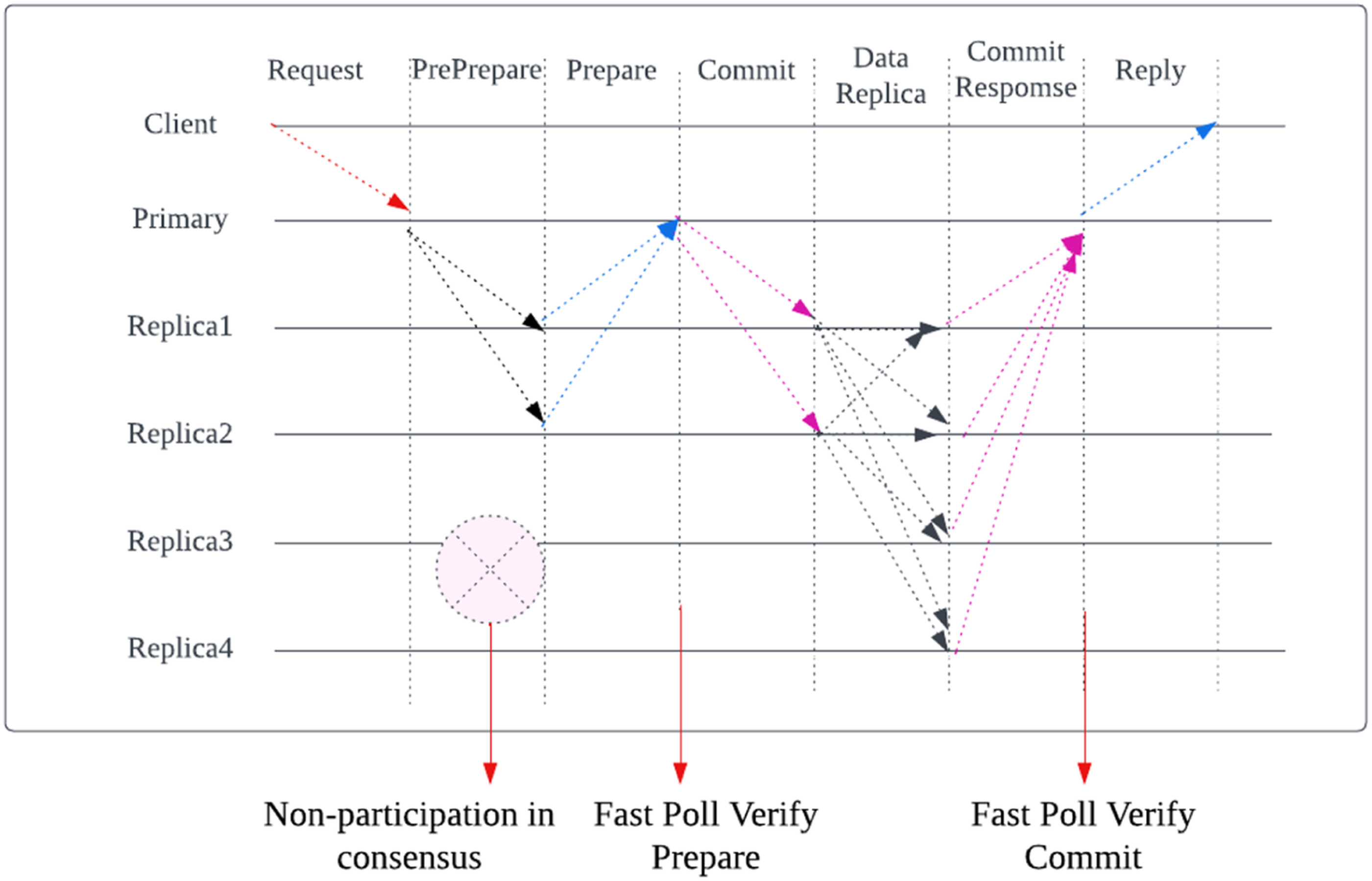

The TRP-PBFT consensus process is shown in Figure 6. TRP-PBFT consensus process.

The process includes the following eight stages:

1) Initialization Phase: The number of master and replica nodes is set, and a reputation value is calculated for each node. The replica nodes are sorted by reputation value from high to low; nodes with higher reputation values serve as backup nodes, and nodes with lower reputation values serve as ordinary replica nodes.

2) Request Pre-prepare Phase: The client sends the request to the master node (Primary). Upon receiving the request, the master node prepares it and broadcasts a Pre-prepare message to all replica nodes in its group. The master node then sets a fast-polling timer and waits for Pre-prepare messages from a sufficient number of replica nodes. After a replica node receives the Pre-prepare message from the master node, it also sets a fast-polling timer and waits for Pre-prepare messages from other replica nodes.

3) Backup Node Verify Pre-prepare Phase: The replica node receives the Pre-prepare messages from the master node and other replica nodes and verifies them with the multi-party signature mechanism to ensure their integrity and authenticity. If verification passes, the replica node continues to the Prepare phase; otherwise, the message is ignored.

4) Prepare Phase: Once the master node has received Pre-prepare messages from a sufficient number of replica nodes, it broadcasts a Prepare message to all nodes in its group, including itself and the replica nodes. The master, replica, and backup nodes then wait for a sufficient number of Prepare messages. After the replica nodes receive a sufficient number of Prepare messages, they broadcast a Prepare message to all nodes.

5) Fast Poll Verify Prepare Phase: During the Prepare phase, each node performs fast polling according to its fast-polling timer, which starts when polling begins. Once a sufficient number of Prepare messages have been received or the timer threshold is reached, the node proceeds to the Commit phase immediately without waiting for responses from other nodes.

6) Commit Phase: Once the master node has received a sufficient number of Prepare messages, it broadcasts a Commit message to all nodes in its group, including itself and the replica nodes. The master and replica nodes then wait for a sufficient number of Commit messages. Once the replica nodes receive a sufficient number of Commit messages, they broadcast a Commit message to all nodes in their group.

7) Fast Poll Verify Commit Phase: During the Commit phase, each node performs fast polling according to its fast-polling timer, which starts when polling begins. Once a sufficient number of Commit messages have been received or the timer threshold is reached, the node proceeds to the Finalization phase immediately without waiting for responses from other nodes.

8) Finalization Phase: Once the master node has received a sufficient number of Commit messages, it confirms the execution of the transaction and broadcasts the result to all nodes in its group, including the replica nodes. The master and replica nodes update their local state based on the result.
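The fast-polling rule used in the Prepare and Commit phases can be sketched as a small decision function: a node leaves the current phase when enough messages have arrived or when its timer expires, whichever comes first. The function name, the time unit, and the returned reason label are hypothetical; how a node should treat a timer expiry with too few messages is not specified in the description, so the sketch only reports which condition fired.

```python
def proceed_time(arrivals, quorum, timeout):
    """Return (time, reason) at which a node leaves the current phase.

    arrivals: message arrival times, measured from the moment the
              fast-polling timer starts (same unit as `timeout`).
    quorum:   number of messages considered sufficient.
    timeout:  threshold of the fast-polling timer.
    """
    in_time = sorted(t for t in arrivals if t <= timeout)
    if len(in_time) >= quorum:
        # The quorum-th message arrived before the timer expired.
        return in_time[quorum - 1], "quorum"
    # Timer expired first; stragglers are no longer waited for.
    return timeout, "timer"
```

With arrivals at 12, 18, 30, and 400 ms, a quorum of 3, and a 100 ms timer, the node advances at 30 ms and the slow fourth message never delays it.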

At any stage, if the system detects a malicious node intrusion or a data anomaly, a fault-tolerant transaction replay mechanism is applied: the affected transactions are re-executed to ensure that the correct results are written to the blockchain. If a malicious attack occurs, the offending node is removed from the cluster, and a backup node is introduced to replace it.
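The reputation-based ranking and the replacement of an evicted node can be sketched as follows. The role names `consensus` and `standby`, the dictionary of reputation values, and the rule of promoting the best-ranked standby node are assumptions made for illustration; they reflect one reading of the description rather than a confirmed implementation.

```python
def select_roles(reputation, n_consensus):
    """Rank nodes by reputation from high to low. The top `n_consensus`
    nodes take part in consensus; the rest stand by in rank order."""
    ranked = sorted(reputation, key=reputation.get, reverse=True)
    return ranked[:n_consensus], ranked[n_consensus:]


def evict(consensus, standby, bad_node):
    """Remove a detected malicious node from the consensus set and
    promote the best-ranked standby node, if one is available."""
    consensus = [node for node in consensus if node != bad_node]
    standby = list(standby)
    if standby:
        consensus.append(standby.pop(0))
    return consensus, standby
```

For reputations `{"A": 0.9, "B": 0.8, "C": 0.4, "D": 0.7, "E": 0.6}` and four consensus seats, nodes A, B, D, and E are selected; evicting D promotes C, so the consensus set keeps its size.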

5.4. Theoretical analysis of TRP-PBFT

To comprehensively evaluate the TRP-PBFT consensus mechanism, this section analyzes its core theoretical properties including fault tolerance bound, safety, and liveness under a partially synchronous network model comprising

1) Fault Tolerance Bound: TRP-PBFT strictly adheres to the identical Byzantine fault tolerance lower bound as standard PBFT. To guarantee safety and liveness within a partially synchronous network, the system can tolerate up to

2) Safety Analysis: Safety dictates that no two honest nodes will ever commit conflicting blocks at the same height. TRP-PBFT guarantees this property through a rigorous intersection of quorums combined with unforgeable multi-party cryptographic signatures. During the Prepare and Commit phases, a node advances its state only after collecting at least

3) Liveness and Practical Robustness: Liveness ensures that legitimate client requests are eventually committed, preventing system deadlocks. In standard PBFT, malicious primary nodes easily trigger the high-overhead View Change protocol, severely degrading practical liveness. TRP-PBFT reshapes this guarantee by introducing a Fast-Polling Timer and a dynamic Trust Assessment Mechanism. The fast-polling mechanism curtails long-tail network latencies, preventing sluggish Byzantine nodes from stalling the consensus workflow. Concurrently, the trust assessment mechanism proactively isolates low-reputation nodes and revokes their primary node candidacy, successfully circumventing frequent view-change overheads. Consequently, even under adverse conditions approaching the fault tolerance limit, TRP-PBFT sustains stable block generation and system throughput, demonstrating exceptional practical robustness.
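Since TRP-PBFT is stated to share the fault tolerance bound of standard PBFT, the arithmetic behind that bound and the quorum-intersection argument can be checked with a few lines. The sketch uses the standard PBFT relations (n ≥ 3f + 1 and a quorum that reduces to 2f + 1 when n = 3f + 1); the function names are hypothetical, and the exact symbols used in the analysis may differ.

```python
def max_faulty(n):
    """Largest number of Byzantine nodes f such that n >= 3f + 1."""
    return (n - 1) // 3


def quorum_size(n):
    """Smallest quorum for which any two quorums share an honest node.
    Equals 2f + 1 whenever n = 3f + 1."""
    return (n + max_faulty(n)) // 2 + 1


def min_quorum_overlap(n):
    """Minimum number of nodes common to any two quorums."""
    return 2 * quorum_size(n) - n
```

For n = 4 the network tolerates one faulty node with a quorum of 3, and for n = 7 it tolerates two with a quorum of 5. In every case two quorums overlap in at least f + 1 nodes, so at least one honest node belongs to both, which is what prevents two conflicting blocks from being committed at the same height.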

6. Scheme design

System parameters and symbols.

6.1. Encrypted data upload and storage

6.2. Key splitting and sharing

The key splitting and sharing phase splits the data encryption key

6.3. Recovering keys and data reconstruction

In this system of linear equations, the key terms are defined as follows:

The above equation can be written as

7. Security analysis

7.1. Threat model

Before proceeding with the formal security proofs, it is imperative to establish the rigorous threat models and collusion assumptions under which the MHBDS scheme operates. Given the hybrid architectural nature of the proposed framework, the threat landscape is defined based on the capabilities of three distinct types of adversaries:

1) External PPT Adversaries. For the data encryption layer, a Probabilistic Polynomial Time (PPT) adversary is assumed. The primary threat involves executing Chosen-Plaintext Attacks (CPA) from outside the authorized network or by unauthorized data-sharing users attempting to challenge data confidentiality. Regarding collusion assumptions, it is formally assumed that multiple unauthorized users can collude by pooling their respective attribute secret keys. The security boundary establishes that unless the mathematically pooled attribute set satisfies the target access policy

2) Malicious Insiders. During the Blakley secret sharing and key distribution phase, a malicious adversary model is considered. Corrupted internal nodes in this phase are fully capable of arbitrarily deviating from the prescribed protocol. They pose active threats such as forging secret shares, submitting invalid spatial equations, and actively colluding to reconstruct the symmetric key illegally. The rigorous security assumption dictates that the total number of colluding malicious adversaries must be strictly bounded below the predefined spatial reconstruction threshold

3) Semi-Honest Participants. During the SMPC-based collaborative computation phase, the standard Semi-Honest Model is adopted. Under this threat model, the corrupted entities strictly follow the protocol execution steps without malicious deviations. However, these semi-honest nodes are curious; they will collude and meticulously analyze all intermediate execution views to infer the private inputs of honest participants. The fundamental assumption is that at least one participant remains perfectly honest throughout the protocol execution.

7.2. Security proofs

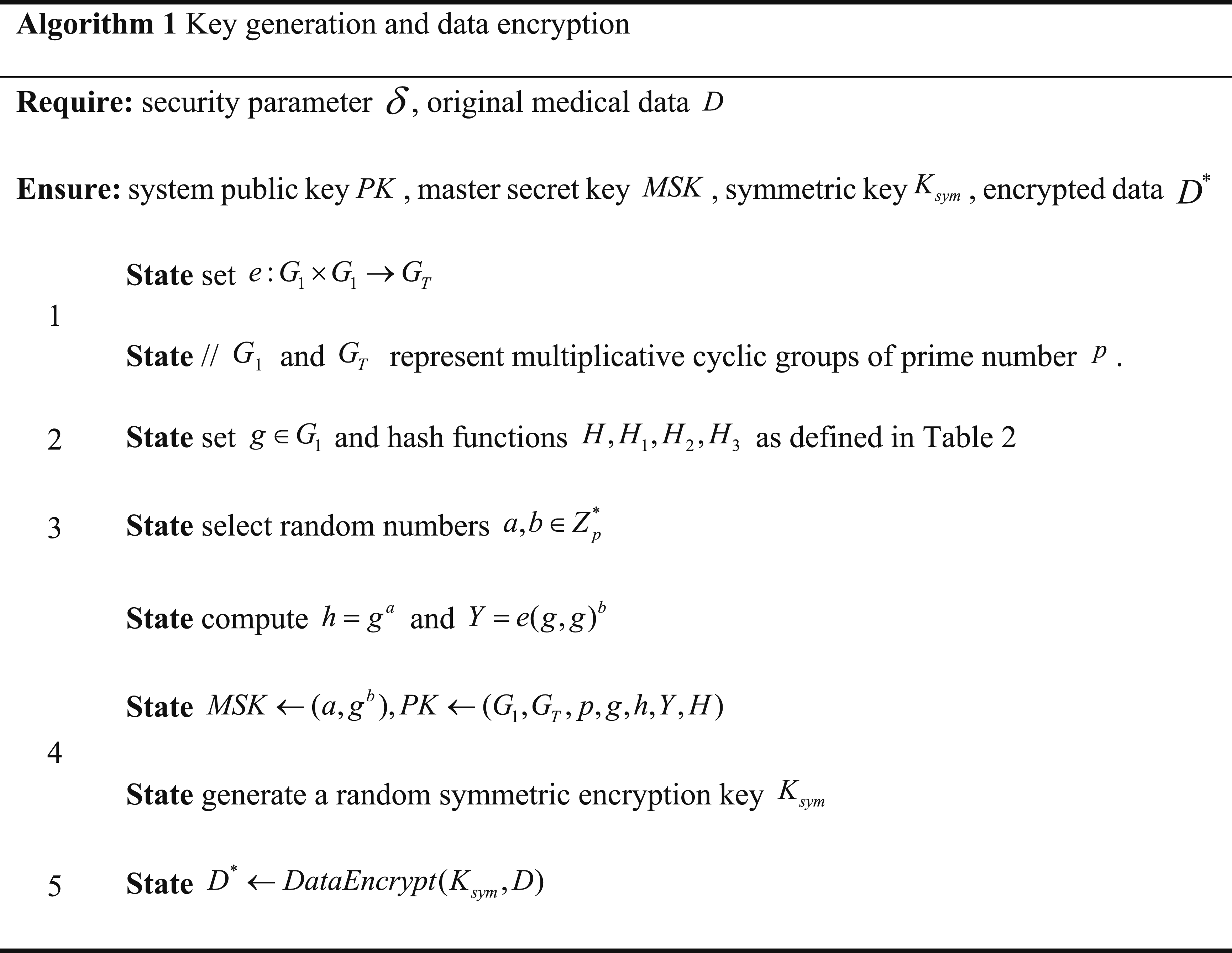

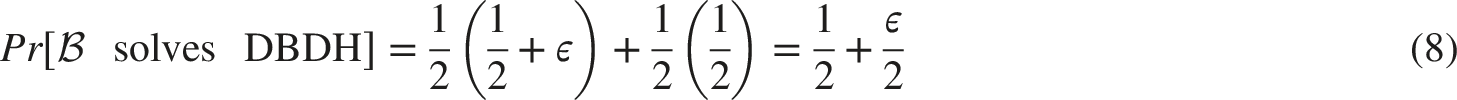

The proposed CP-ABE-based data encryption scheme is Indistinguishable under Chosen-Plaintext Attack (IND-CPA) secure, assuming the Decisional Bilinear Diffie-Hellman (DBDH) problem is intractable for any PPT adversary.

A formal IND-CPA game interacting between a PPT Adversary

Thus,

Under the malicious model, the Blakley secret sharing mechanism guarantees information-theoretic security, ensuring no key leakage occurs as long as the number of colluding adversaries is strictly less than the reconstruction threshold

A semantic security game is formalized. Let

Geometrically, the secret is a point in an
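As an illustration of the geometric idea behind this argument, the following Python sketch implements a toy Blakley-style threshold scheme. It is not the MHBDS implementation: the modulus `P`, the function names `make_shares` and `reconstruct`, and the Vandermonde-style choice of hyperplane coefficients are assumptions made here so that any `t` shares are guaranteed to intersect in exactly one point.

```python
import secrets

# Field modulus: a Mersenne prime chosen for this sketch (an assumption,
# not a parameter taken from the paper).
P = 2**61 - 1


def make_shares(secret, t, n, p=P):
    """Split `secret` into n hyperplane shares; any t of them recover it."""
    if not (0 <= secret < p and 1 <= t <= n < p):
        raise ValueError("need 0 <= secret < p and 1 <= t <= n < p")
    # The secret is the first coordinate of a random point in t-dimensional space.
    point = [secret] + [secrets.randbelow(p) for _ in range(t - 1)]
    shares = []
    for i in range(1, n + 1):
        # Normal vector (1, i, i^2, ...): any t such rows are linearly
        # independent, so any t hyperplanes meet in a single point.
        coeffs = [pow(i, j, p) for j in range(t)]
        rhs = sum(a * x for a, x in zip(coeffs, point)) % p
        shares.append((coeffs, rhs))
    return shares


def reconstruct(shares, t, p=P):
    """Intersect t distinct hyperplanes by Gauss-Jordan elimination mod p."""
    if len(shares) < t:
        raise ValueError("not enough shares to reach the threshold")
    rows = [list(coeffs) + [rhs] for coeffs, rhs in shares[:t]]
    for col in range(t):
        # Find a row with a non-zero entry in this column and normalise it.
        pivot = next(r for r in range(col, t) if rows[r][col] % p != 0)
        rows[col], rows[pivot] = rows[pivot], rows[col]
        inv = pow(rows[col][col], -1, p)  # modular inverse (Python 3.8+)
        rows[col] = [v * inv % p for v in rows[col]]
        for r in range(t):
            if r != col and rows[r][col]:
                f = rows[r][col]
                rows[r] = [(v - f * w) % p for v, w in zip(rows[r], rows[col])]
    return rows[0][t]  # first coordinate of the intersection point
```

With `t = 3` and `n = 5`, any three shares passed to `reconstruct` return the original secret, whereas two shares only constrain the point to a line of candidates, which is the intuition behind the threshold condition stated above.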

The proposed SMPC protocol operates securely in the semi-honest model, ensuring computational privacy provided there is at least one honest participant.

The standard Real vs. Ideal simulation paradigm is employed. Let

8. Performance analysis

8.1. Experimental environment

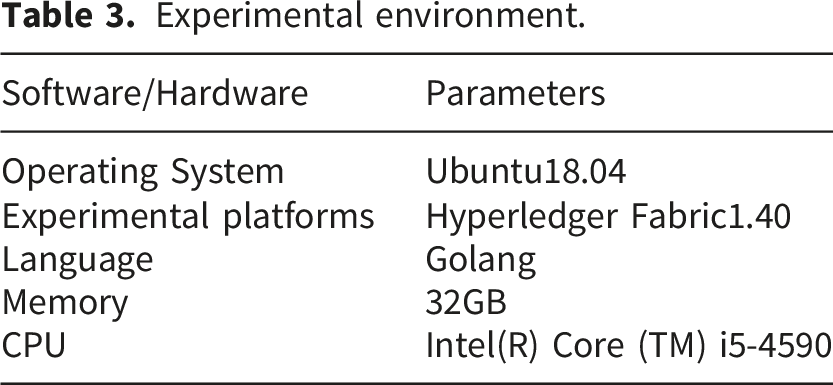

To ensure the reproducibility of the proposed MHBDS scheme, the experimental environment is structured into three primary components:

1) Infrastructure and software stack: The core blockchain framework is deployed on a server running Ubuntu 18.04 LTS, equipped with 32 GB RAM and an Intel(R) Core(TM) i5-4590 CPU. All smart contracts and sharding logic are implemented using Golang v1.12. For performance evaluation, the TRP-PBFT consensus mechanism is simulated in IntelliJ IDEA on a Windows 10 environment (i5-8250U CPU) utilizing the JPBC (Java Pairing-Based Cryptography) library.

2) Blockchain network topology: We configured a permissioned consortium blockchain utilizing Hyperledger Fabric v1.4.0. The network consists of one medical organization managing four Peer nodes to simulate a distributed healthcare environment, and one Orderer node utilizing the Raft service for transaction ordering. The nodes are interconnected via a high-speed local network to minimize external propagation noise during throughput and latency testing.

3) Data characterization: Experiments were conducted using a synthetic medical dataset to maintain rigorous control over data volume variables. Data blocks ranging from 256 KB to 2048 KB were dynamically generated, with the erasure coding process utilizing the open-source Reed-Solomon library in Golang to perform precise sharding and encoding.

Experimental environment.

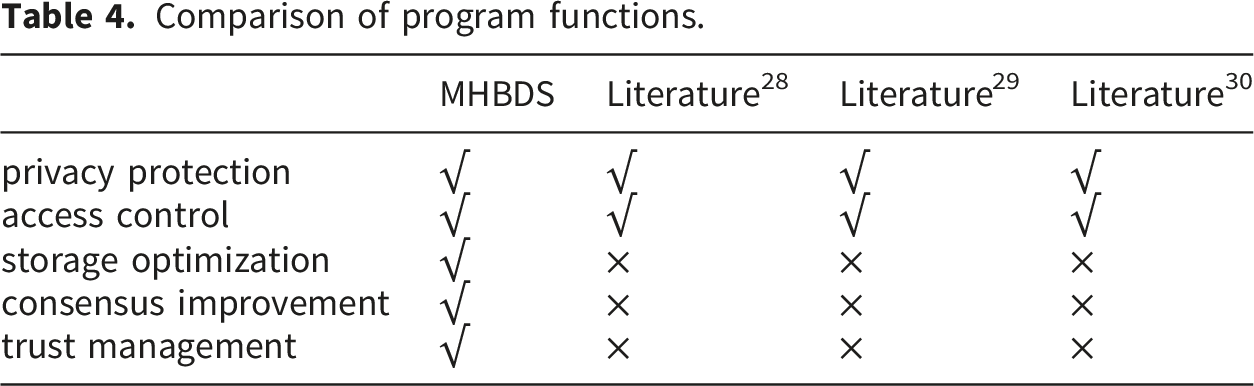

8.2. Functional comparison

Comparison of program functions.

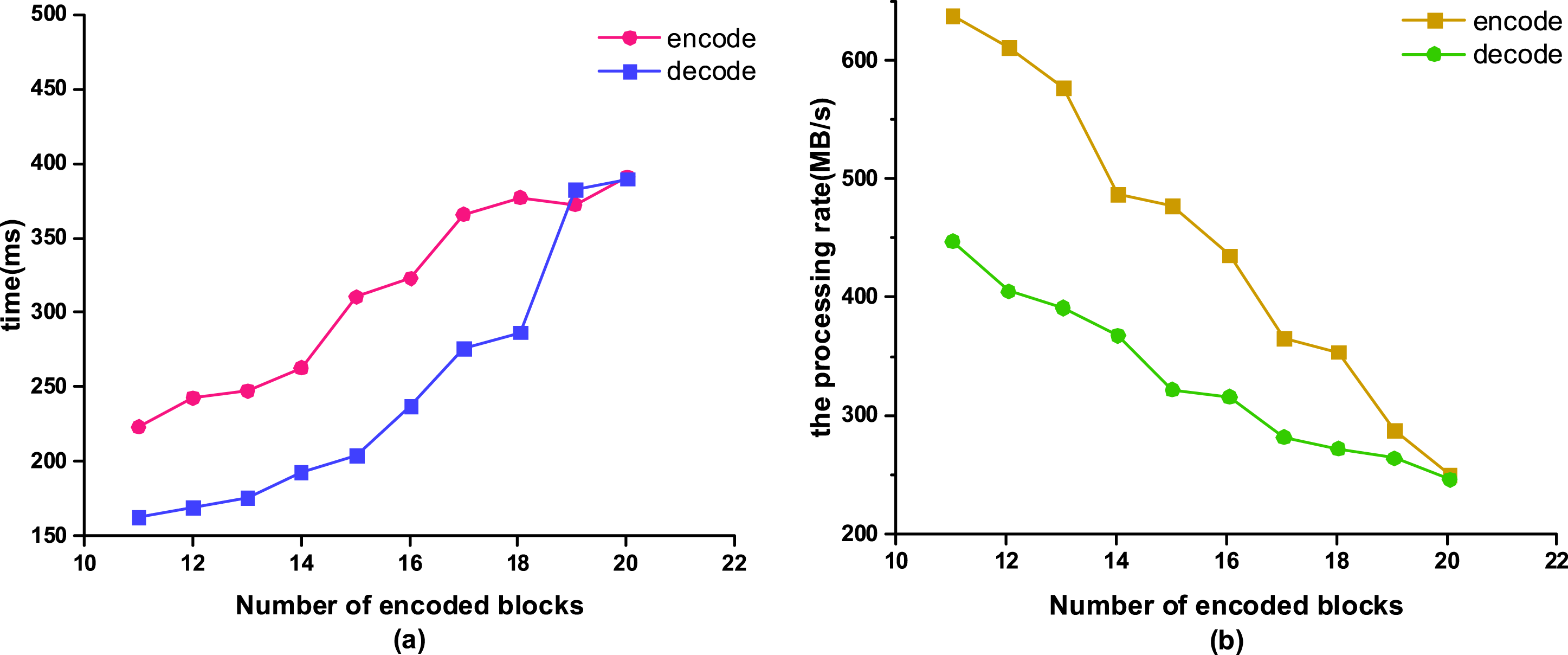

8.3. Encoding and decoding efficiency

Before data is uploaded, encryption and encoding are necessary, while during data access, decoding and decryption processes are required. Therefore, the efficiency of encoding and decoding has a significant impact on the entire system. Figure 7 presents experimental test results related to data encoding and decoding. To generate these results, the Reed-Solomon algorithm from the Go open-source library was executed on 1024 KB synthetic data blocks. The number of encoding blocks was systematically varied from 11 to 20. For each configuration, the execution time for encoding and decoding was recorded in milliseconds using built-in time-tracking functions, as shown in Figure 7(a). The execution rates in Figure 7(b) were calculated by dividing the processed data volume by the recorded execution time. All plotted data points represent the average values from multiple test iterations to ensure reliability.

Encoding and decoding efficiency.

The number of encoding blocks ranges from 11 to 20, and the minimum execution time is observed with 11 encoding blocks: encoding takes approximately 223 ms and decoding about 161 ms. As Figure 7(a) shows, increasing the number of encoding blocks lengthens both the encoding and the decoding process. Moreover, as shown in Figure 7(b), the processing rate gradually decreases as the amount of redundant data grows. However, because MHBDS produces relatively small fragments when splitting data, the impact on the overall system’s operational speed is relatively minor.
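To make the role of redundant encoding blocks concrete, the sketch below implements a small systematic Reed-Solomon-style erasure code in Python. It is only an illustration under stated assumptions: it works over the prime field GF(257) instead of the GF(2^8) arithmetic that production libraries such as the Go library used in the experiments typically rely on, and the names `encode`, `decode`, and `_interpolate` are hypothetical.

```python
# Smallest prime above 255, so every byte value is a valid field element.
# Parity symbols may take the value 256 and therefore need more than one byte.
P = 257


def _interpolate(points, x, p=P):
    """Evaluate at x the unique polynomial of degree < len(points) through points."""
    total = 0
    for i, (xi, yi) in enumerate(points):
        num, den = 1, 1
        for j, (xj, _) in enumerate(points):
            if i != j:
                num = num * (x - xj) % p
                den = den * (xi - xj) % p
        total = (total + yi * num * pow(den, -1, p)) % p
    return total


def encode(data, n, p=P):
    """Return n fragments (index, symbol); the first len(data) are the data itself."""
    k = len(data)
    if not (0 < k <= n <= p) or any(not 0 <= d < 256 for d in data):
        raise ValueError("need 0 < k <= n <= p and byte-valued data")
    base = list(enumerate(data))
    # Parity fragments are extra evaluations of the polynomial defined by the data.
    return base + [(x, _interpolate(base, x, p)) for x in range(k, n)]


def decode(fragments, k, p=P):
    """Rebuild the k data symbols from any k fragments with distinct indices."""
    distinct = list(dict(fragments).items())[:k]
    if len(distinct) < k:
        raise ValueError("too many fragments lost to recover the data")
    return [_interpolate(distinct, x, p) for x in range(k)]
```

For example, encoding six data symbols into nine fragments tolerates the loss of any three fragments. Each additional parity fragment costs one more polynomial evaluation during encoding, which is consistent with the trend of longer execution times as the number of encoding blocks grows.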

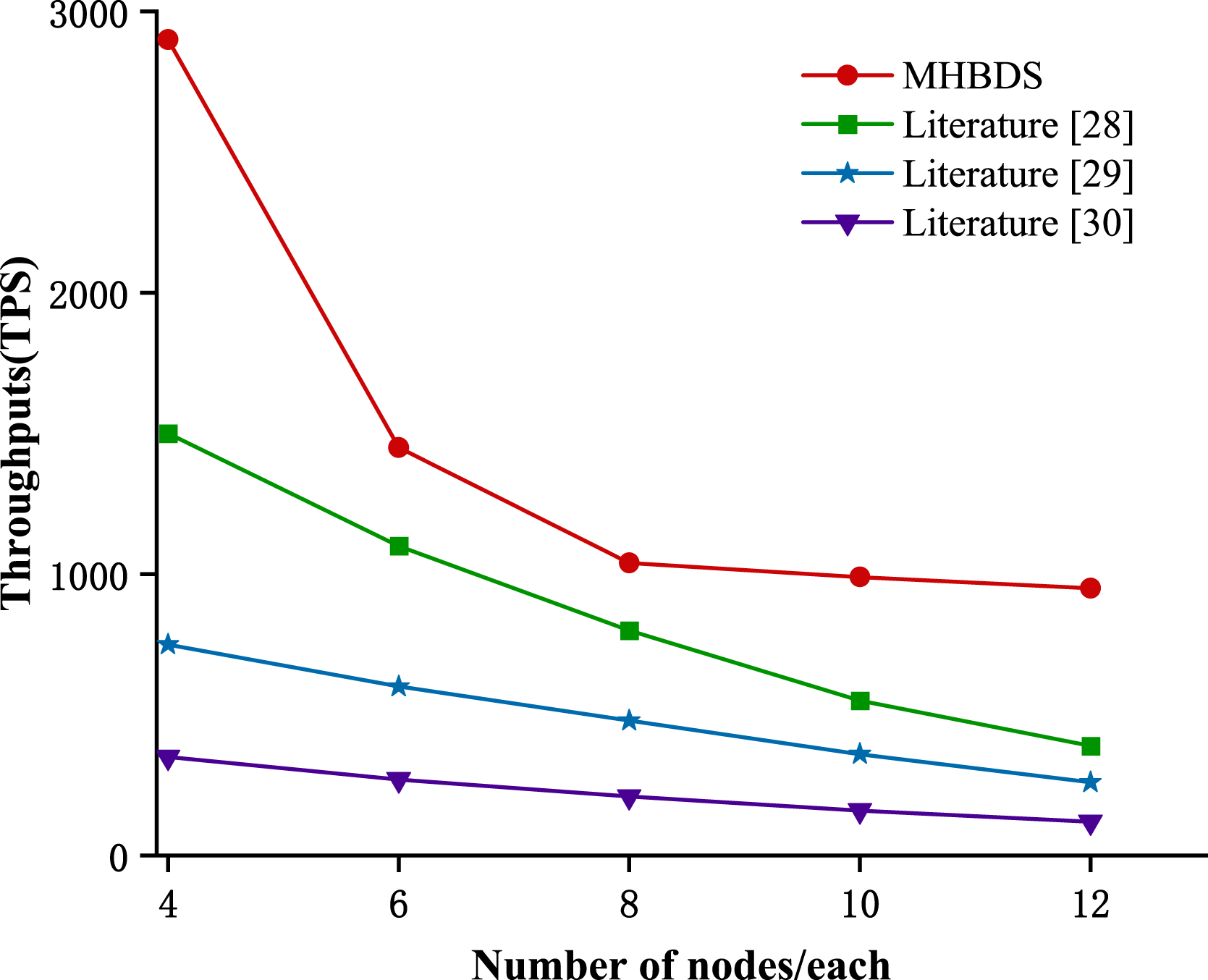

8.4. System throughput

To generate the end-to-end system throughput results, a multi-threaded client simulator was deployed to mimic high-concurrency transaction requests encompassing the entire data-sharing lifecycle. The system throughput, defined as Transactions Per Second (TPS), was calculated using the formula:

System throughput comparison.

Under the same number of nodes, MHBDS consistently outperforms the baselines. When operating with eight nodes, MHBDS achieves a throughput of approximately 1040 transactions per second (TPS). As the number of nodes increases, the throughput gradually declines and stabilizes. This superiority stems from MHBDS’s adoption of the optimized TRP-PBFT consensus mechanism coupled with an efficient data structure. Both the Healthcare 4.0 framework in Ref. 28 and the PHR sharing scheme in Ref. 29 utilize standard PBFT consensus. Since standard PBFT inherently imposes an

8.5. Consensus throughput

Throughput is an important indicator of the efficiency and performance of a consensus protocol: a higher throughput means that the system can process more transactions or blocks in the same time, improving overall performance. The experiment packs 50 transactions into each block and compares the throughput of TRP-PBFT and traditional PBFT for different node counts with a consensus time (block time) of 5 seconds. The throughput comparison is shown in Figure 9.

Consensus throughput comparison.

Experimental results indicate that PBFT throughput increases from an initial 39.73 TPS to 158.9649 TPS, while TRP-PBFT throughput rises from 108.3784 TPS to 172.8357 TPS. Both protocols show throughput increasing as nodes are added, since more nodes can process transactions in parallel. However, once the number of nodes reaches a certain threshold, further additions no longer improve throughput significantly, indicating a saturation point. Under these experimental conditions, TRP-PBFT consistently outperforms traditional PBFT across all node counts and remains more stable at higher node counts. This is because TRP-PBFT incorporates reputation value calculations and node grouping mechanisms and selects primary nodes with lower fault probabilities, which enhances the system’s parallel processing capability and fault tolerance and maintains high throughput in large-scale node networks.

The throughput advantage of TRP-PBFT stems from two fundamental cryptographic and architectural mechanisms. First, the multi-party signature algorithm aggregates multiple independent signature verifications into a single system of linear equations. This cryptographic compression drastically reduces the message payload size during the prepare and commit broadcast phases. Second, the dynamic trust assessment acts as a strict network filter. By systematically excluding nodes with historically high response latency from the primary consensus group, the system avoids the high computational cost of view-change protocols and ensures continuous block generation.
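To give a sense of the broadcast volume that the prepare and commit phases generate in the baseline, the following Python sketch counts the messages of one normal-case round of standard PBFT using the usual textbook accounting (client request and reply messages are ignored). The function names are hypothetical, and the sketch models only standard PBFT; the message counts of TRP-PBFT are not modeled because they depend on protocol details outside this section.

```python
def pbft_max_faulty(n):
    """Largest number of Byzantine replicas tolerated by n replicas (n >= 3f + 1)."""
    if n < 4:
        raise ValueError("PBFT needs at least 4 replicas")
    return (n - 1) // 3


def pbft_messages_per_round(n):
    """Messages exchanged in one normal-case PBFT round with n replicas."""
    if n < 4:
        raise ValueError("PBFT needs at least 4 replicas")
    pre_prepare = n - 1            # primary sends to every backup
    prepare = (n - 1) * (n - 1)    # each backup sends to every other replica
    commit = n * (n - 1)           # every replica sends to every other replica
    return pre_prepare + prepare + commit  # equals 2 * n * (n - 1)


if __name__ == "__main__":
    for n in (4, 8, 16, 32):
        print(n, pbft_max_faulty(n), pbft_messages_per_round(n))
```

The total grows quadratically with the number of replicas, so any mechanism that shrinks the per-message payload or the size of the consensus group acts directly on the dominant cost.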

8.6. Consensus latency

Latency is a key performance metric for consensus algorithms: it is the time elapsed from the submission of a transaction to its final confirmation on the blockchain. Experiments compare the latency of the two algorithms over a time range of 10 to 60 s. The consensus delay comparison is shown in Figure 10.

Delay comparison.

Within the tested time range, TRP-PBFT shows lower latency than PBFT: it reduces average latency from 655.42 ms to 342.22 ms and exhibits superior latency stability. The latency of TRP-PBFT rises gently. For example, between 10 and 20 seconds it increases from 325.00 ms to 330.02 ms, a small and smooth change, and the other time periods show a similar trend without significant jumps. In contrast, PBFT latency rises more steeply and fluctuates widely across time periods. The experimental results therefore indicate that, as the number of nodes increases, the efficiency of PBFT diminishes, whereas TRP-PBFT demonstrates enhanced stability and robustness together with lower delay.
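The reported averages can be expressed as relative changes with a short calculation. The Python sketch below uses only the figures stated in the paper (655.42 ms and 342.22 ms for latency, 107.31 TPS and 143.45 TPS for throughput); the helper name `relative_change` is hypothetical.

```python
def relative_change(baseline, new):
    """Percentage change from baseline to new (negative means a reduction)."""
    if baseline == 0:
        raise ValueError("baseline must be non-zero")
    return (new - baseline) / baseline * 100.0


# Average values reported for PBFT (baseline) and TRP-PBFT (new).
latency_change = relative_change(655.42, 342.22)
throughput_change = relative_change(107.31, 143.45)

print(f"average latency change:    {latency_change:+.2f}%")     # -47.79%
print(f"average throughput change: {throughput_change:+.2f}%")  # +33.68%
```

Under these figures, the average latency falls by roughly 48% and the average throughput rises by roughly 34% relative to PBFT.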

The remarkable reduction and stability in latency primarily stem from optimized communication complexity. Traditional PBFT protocols inherently suffer from an

9. Conclusions

The paper proposes a multi-party secure medical blockchain data-sharing scheme. The architecture employs Blakley’s secret-sharing technology for decentralized key distribution and integrates CP-ABE to implement fine-grained access control, thereby significantly reducing data leakage risks. To address the storage bottlenecks of large-scale medical datasets, data uploaded to the blockchain is encoded and fragmented using erasure-coding techniques. Furthermore, the introduction of the TRP-PBFT consensus mechanism enhances system efficiency, stability, and robustness. Experimental results demonstrate that MHBDS is both effective and practical; compared to the traditional PBFT mechanism, the proposed TRP-PBFT reduces average latency from 655.42 ms to 342.22 ms and increases average throughput from 107.31 TPS to 143.45 TPS.

While this paper has established a solid theoretical foundation for security through robust architectural design and rigorous mathematical proofs, the quantitative evaluation of the empirical performance of these security mechanisms remains an important area for exploration. Future work will design specific attack simulation scenarios to quantitatively measure the defense success rate and the precise computational overhead of the proposed security architecture under various malicious network conditions.

Acknowledgments

The authors would like to acknowledge East China Jiaotong University for the lab facilities and necessary technical support.

Author contributions

Conceptualization, L.Z.; methodology, J.L.; software, J.L.; investigation, L.Z.; resources, L.Z.; data curation, J.L., Z.H.; writing—original draft preparation, J.L.; writing—review and editing, L.Z., J.L., Z.H. and Q.Y.; supervision, L.Z. and Z.H.; project administration, L.Z. and Q.Y.; funding acquisition, L.Z. All authors have read and agreed to the published version of the manuscript.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the National Natural Science Foundation of China under grants (Nos. 62261023, 72262014), and the Jiangxi Natural Science Foundation of China (20232BAB2).

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data Availability Statement

The datasets used and/or analysed during the current study are available from the corresponding author on reasonable request.