Abstract

Significant progress has been made in image encryption research, but open issues remain in key space, cipher generation and security verification, and the design of encryption schemes. To address these issues, this paper develops a digital image encryption algorithm. The algorithm integrates a six-dimensional cellular neural network with a generalized chaotic map to generate pseudo-random numbers, from which plaintext-related ciphers are derived. The initial image matrix is transformed into an L-matrix and a U-matrix through lower-upper (LU) decomposition. These matrices are then encrypted simultaneously with distinct cipher sequences. The algorithm's feasibility and security are demonstrated through comprehensive encryption simulations and performance analysis. The paper's contributions are: i) a new cipher scheme built on the cellular neural network and an innovative chaos approach; ii) image decomposition encryption that effectively shortens the cipher length and reduces interception risks during transmission; iii) frequent application of nonlinear transforms, which enhances the structural complexity of the cryptosystem and fortifies the security of the algorithm. Compared to existing algorithms, the paper achieves a novel image decomposition encryption mode with comprehensive advantages. This mode is expected to be applicable to image communication security.

Keywords

Introduction

Digital image encryption is a crucial method for protecting image information. It is typically conducted on digital computer systems and involves pixel confusion and diffusion processes.1,2 Supporting techniques in digital image encryption often include chaos, plaintext-related ciphers, confusion (scrambling), and diffusion.3,4 Chaos and plaintext-related ciphers are used to generate cipher sequences, while confusion and diffusion are applied for pixel grayscale conversion and coordinate transformation.5,6 Recent advances in this field have been noteworthy. Feng et al. 7 presented a novel multi-channel image encryption algorithm that uses pixel reorganization and two robust hyper-chaotic maps to encrypt input images. In another paper, Feng et al. 8 constructed a robust hyper-chaotic map and developed an efficient image encryption algorithm based on the map and a pixel fusion strategy. Raghuvanshi et al. 9 introduced a more stable, secure, and reliable image encryption model. Their scheme combines a convolutional neural network model with an intertwining logistic map to generate secret keys, and applies DNA encoding, diffusion, and bit reversion operations to scramble and manipulate image pixels. During the same period, Soniya Rohhila and Amit Kumar Singh 10 presented a comprehensive survey of recent digital image encryption using deep learning models, discussing various state-of-the-art deep learning-based encryption techniques. In addition, many recent related works11–15 have significant research value and deserve attention and discussion. Their topics include chaotic systems, image encryption schemes, and cryptographic analysis.

Significant advances have been made in the field of digital image information protection. However, certain challenges in this domain cannot be overlooked. (i) In 2018, Mario, Thomas, Stefan, et al. highlighted the necessity of ensuring both cipher and algorithm security in image encryption. 16 Regrettably, most existing image encryption algorithms focus solely on algorithm security and neglect cipher security. (ii) When exposed to malicious attacks, schemes with a limited key space or a simplistic algorithmic structure are vulnerable. (iii) Conventional one-time-pad image encryption imposes stringent requirements on cipher sequence length. For example, traditional pixel diffusion based on add-and-modulus operations demands that the cipher matrix match the size of the plain image. Cryptosystems must therefore be capable of generating a substantial quantity of pseudo-random numbers. Designing a pseudo-random number generator with optimal statistical properties is challenging, and a major difficulty is controlling the periodic behavior of the sequence as its length increases. Effectively and significantly reducing the length of the pseudo-random sequence remains an urgent problem. (iv) Almost all known schemes encrypt the plaintext as a whole. This approach inherently risks leakage of the one-time key and cipher-text during communication. If plain images are encrypted in a decentralized manner, the likelihood of comprehensive information leakage could be significantly diminished.

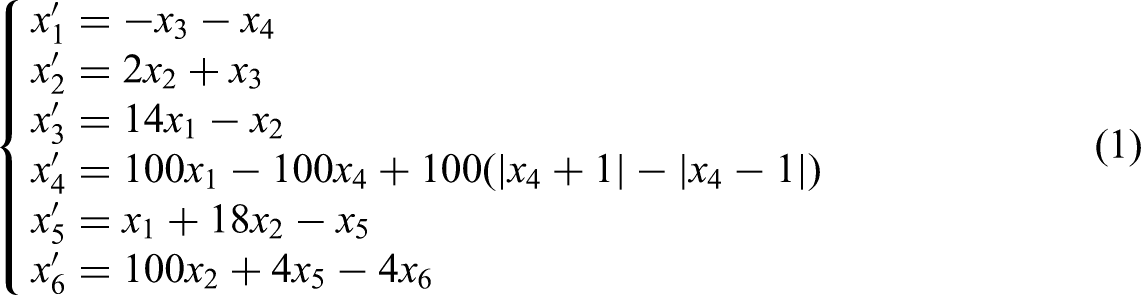

Presented in the paper is a digital image encryption scheme, underpinned by a six-dimensional cellular neural network (6D-CNN)17 and augmented by lower-upper (LU) matrix decomposition. The 6D-CNN, integrated with a novel chaotic map, forms a cryptosystem with an extensive key space. The process combines matrix LU decomposition, pixel diffusion, and confusion to achieve image encryption. The primary features of the algorithm are summarized as follows:

- The integration of CNN and chaos to generate a pseudo-random cipher sequence;
- The application of image decomposition techniques to image encryption, effectively shortening the cipher sequence and reducing the risk of information leakage;
- The application of multiple nonlinear tools within the cryptosystem and encryption algorithm to increase structural complexity.

The paper is structured as follows. The section “Algorithm preliminaries” explains the mathematical underpinnings of the encryption scheme. The section “Generating pseudo-random sequence and cryptosystem” investigates the structure of the cryptosystem and the mechanism behind cipher formation. The section “Algorithms for encryption as well as decryption” details the image encryption algorithm. The section “Encryption and decryption simulations” presents the simulation process and its results. The section “Evaluation of algorithm performance” evaluates the performance of the algorithm. The final section, “Conclusions,” concludes the paper.

Algorithm preliminaries

This section covers four parts: i) the concept of the 6D-CNN; ii) the definition of the Extended Henon Map (EHM); iii) the LU decomposition of a matrix and its computer implementation; iv) a new matrix nonlinear transformation and its calculation formula. Parts 1 and 2 are jointly applied to cipher generation. Part 3 is the computational foundation for the subsequent image decomposition. Part 4 serves as an auxiliary tool for cipher generation.

Six-dimensional cellular neural network

The 6D-CNN, a continuous hyper-chaotic system, was introduced by Wang, Bing, and Zhang in 2010, building on the traditional CNN model18 developed by Chua and Yang. The 6D-CNN is expressed as follows:

As the number of iterations approaches infinity, the system's six Lyapunov exponents include two positive exponents

A novel chaotic map

The Henon map, commonly used to generate random sequences, is a well-known example of chaos.1,2 The Henon map, in its recursive form, is defined as follows:

where

where

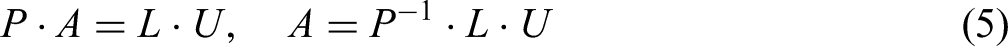

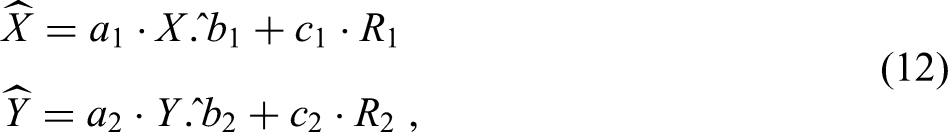
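For reference, the classical two-dimensional Henon map mentioned above can be iterated in a few lines. The sketch below assumes the textbook form x(n+1) = 1 - a·x(n)² + y(n), y(n+1) = b·x(n) with the commonly quoted parameters a = 1.4 and b = 0.3. It illustrates only the classical map, not the EHM, and the function name and initial values are hypothetical.

```python
def henon_orbit(x0, y0, n, a=1.4, b=0.3):
    """Return n iterates of the classical Henon map starting from (x0, y0).

    Assumed textbook form: x' = 1 - a*x^2 + y, y' = b*x.
    """
    orbit = []
    x, y = x0, y0
    for _ in range(n):
        # Simultaneous update: the new y is computed from the old x.
        x, y = 1.0 - a * x * x + y, b * x
        orbit.append((x, y))
    return orbit


if __name__ == "__main__":
    for point in henon_orbit(0.0, 0.0, 5):
        print(point)
```

With these parameters the orbit stays bounded but never settles, which is the property that makes such maps attractive as pseudo-random sources.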

LU decomposition of matrix and rearrangement of LU matrices

Matrix decomposition involves converting a given matrix into a product of matrices according to specific rules. Common matrix decomposition methods include LU decomposition, QR decomposition, Schur decomposition, etc. Some of these are suitable for square matrices, while others can be applied to rectangular matrices. 19 This subsection introduces the LU decomposition of rectangular matrices and a rearrangement scheme for LU matrices, laying the groundwork for subsequent encryption of images.

For illustrative purposes, suppose that

If matrix

where

A significant portion of elements in the L-matrix and U-matrix are zeros, indicating their sparse nature. In matrix operations, excluding these zero elements from calculations can save computational resources and enhance information processing efficiency.

Let

In subsequent sections, the matrices in Equation (7) are referred to as L-image and U-image, respectively.

In practice, LU decomposition is often applied to square matrices. In this case, the size of the matrix

For example, if

The idea behind using LU decomposition for digital image encryption is to encrypt the matrices L and U obtained by decomposing the original pixel matrix, rather than the pixel matrix itself. This is a typical indirect encryption method. The detailed encryption and decryption algorithms and steps are discussed in later sections. Because matrix P is sparse, it is omitted from the encryption algorithm.
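The decomposition and reconstruction steps can be sketched as follows. This is a minimal illustration for square matrices using Doolittle elimination with partial pivoting, so that P·A = L·U; the function names are hypothetical, and the rearrangement of L and U into L-image and U-image is not shown.

```python
def lu_decompose(a):
    """Factor a square matrix so that P*A = L*U (partial pivoting).

    Returns (perm, L, U); perm[i] is the row of A that ends up in row i.
    """
    n = len(a)
    u = [[float(v) for v in row] for row in a]
    l = [[0.0] * n for _ in range(n)]
    perm = list(range(n))
    for k in range(n):
        # Pick the largest pivot in column k to limit rounding error.
        p = max(range(k, n), key=lambda r: abs(u[r][k]))
        if p != k:
            u[k], u[p] = u[p], u[k]
            l[k], l[p] = l[p], l[k]
            perm[k], perm[p] = perm[p], perm[k]
        l[k][k] = 1.0
        if u[k][k] == 0.0:
            continue  # column is already zero below the diagonal
        for i in range(k + 1, n):
            factor = u[i][k] / u[k][k]
            l[i][k] = factor
            u[i][k] = 0.0
            for j in range(k + 1, n):
                u[i][j] -= factor * u[k][j]
    return perm, l, u


def reconstruct(perm, l, u):
    """Rebuild A from its factors by undoing the row permutation."""
    n = len(l)
    a = [None] * n
    for i in range(n):
        a[perm[i]] = [sum(l[i][k] * u[k][j] for k in range(n)) for j in range(n)]
    return a
```

Reconstruction is exact only up to floating-point rounding, so a practical decryption step would round the rebuilt entries back to integer pixel values.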

Matrix nonlinear transform

Suppose that

where

Generating pseudo-random sequence and cryptosystem

The process for generating a pseudo-random sequence is crucial in a cryptosystem. Despite significant achievements in this area, 21 the development of more secure pseudo-random number generators remains a pressing need in applied cryptography.

This section introduces a novel pseudo-random sequence generation mechanism based on 6D-CNN and EHM. To prevent chaos degradation due to finite precision, the outputs of 6D-CNN are numerically processed for the subsequent iteration. A nonlinear transform and a weighted combination of two pseudo-random sequences generated by 6D-CNN and EHM are used to create new pseudo-random numbers.

As per Equation (1), the 6D-CNN is described by a continuous first-order differential equation. For smooth chaotic sequence generation, the continuous form of 6D-CNN is discretized. Common discretization methods for first-order differential equations include the Runge–Kutta method, the Euler method, and the improved Euler method. The Euler method is employed here for simplicity.

where

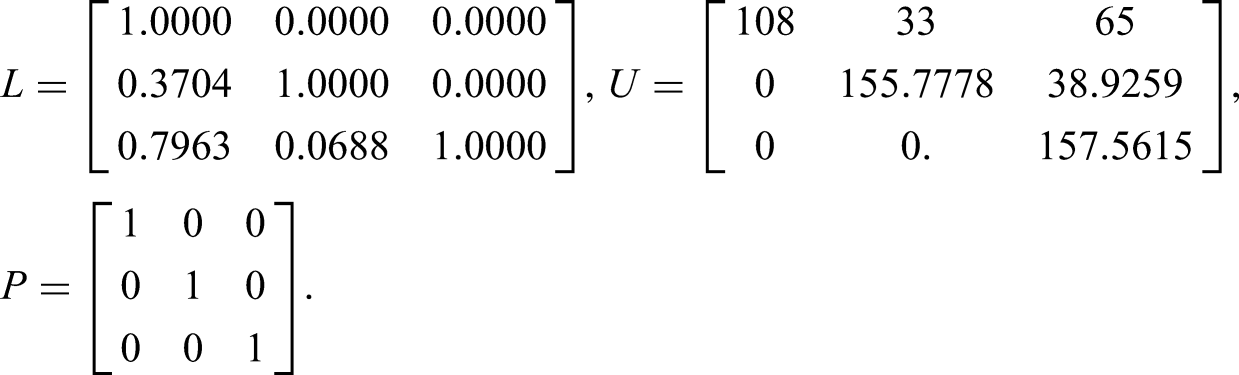
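The Euler discretization mentioned above replaces the derivative with a finite difference, giving x(k+1) = x(k) + h·f(x(k)) for step size h. The sketch below shows this update for a generic first-order system; the right-hand side `f`, the step size, and the example system are placeholders, not the 6D-CNN equations.

```python
def euler_steps(f, x0, h, n):
    """Forward Euler for x' = f(x): x_{k+1} = x_k + h * f(x_k).

    f takes and returns a sequence of floats (one entry per state variable).
    Returns the list of n + 1 states, including the initial one.
    """
    x = list(x0)
    trajectory = [tuple(x)]
    for _ in range(n):
        dx = f(x)
        x = [xi + h * di for xi, di in zip(x, dx)]
        trajectory.append(tuple(x))
    return trajectory


if __name__ == "__main__":
    # Placeholder system x' = -x, whose exact solution is exp(-t).
    states = euler_steps(lambda x: [-x[0]], [1.0], 0.1, 10)
    print(states[-1])
```

A six-dimensional system is handled the same way: `f` simply returns six derivatives instead of one.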

Generating pseudo-random sequence

The mechanism to generate the pseudo-random sequence is as follows:

Initialize parameters

where

Set the parameters

Then, implement the transforms expressed in Equation (8) on the sequences

Next, convert the outcomes of Equation (12) to integer data:

Finally, combine sequence

The key space of the cryptosystem

From Generating pseudo-random sequence Section, it is evident that 21 free parameters (

National Institute of Standards and Technology randomness testing

SP800-22 Rev. 1a is a statistical test suite for assessing the randomness of binary sequences, issued by the National Institute of Standards and Technology (NIST).22 SP800-22 comprises 15 tests, some of which include several sub-tests. The outcome of each test comprises two indicators: p-value and proportion. In general, the testing is performed for the known significance level

SP800-22 R1a testing of PRSCCs.

Notes: (i) Due to random factors in the calculations, outcomes may vary between rounds; (ii) An asterisk (*) indicates the minimum data for the respective item.

PRSCC: Pseudo-Random Sequence based on 6D-CNN and Chaos.
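To illustrate how a single SP800-22 item produces a p-value, the sketch below implements the frequency (monobit) test, the first test in the suite. It is a minimal illustration and not the full test battery; the function name is hypothetical, and the pass threshold of 0.01 is the suite's default significance level, assumed here.

```python
import math


def monobit_p_value(bits):
    """Frequency (monobit) test p-value for a sequence of 0/1 values.

    Maps bits to +1/-1, sums them, and compares the normalized sum
    with the half-normal distribution via the complementary error function.
    """
    n = len(bits)
    s = sum(1 if b else -1 for b in bits)
    s_obs = abs(s) / math.sqrt(n)
    return math.erfc(s_obs / math.sqrt(2.0))


if __name__ == "__main__":
    sample = [1, 0, 1, 1, 0, 1, 0, 1, 0, 1]
    p = monobit_p_value(sample)
    # The sequence passes this test when p is at least the significance level.
    print(p, p >= 0.01)
```

A short sequence is used here only for readability; the suite recommends far longer inputs for meaningful results.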

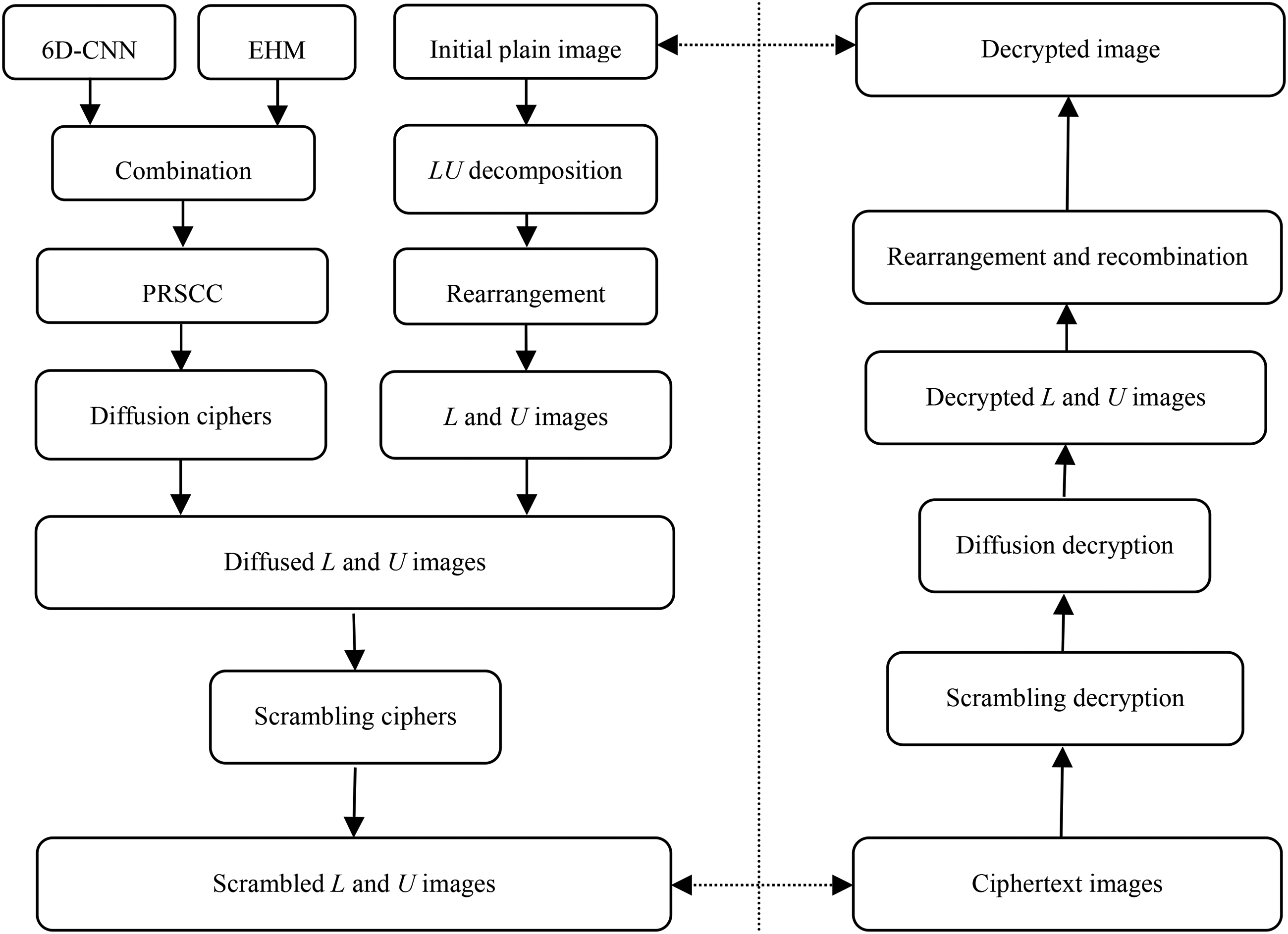

Algorithms for encryption as well as decryption

This section consists of the following parts: i) the image encryption scheme and its mathematical description, which includes the generation of plaintext-related ciphers, pixel diffusion, and pixel scrambling; ii) the image decryption scheme and its mathematical description, which is the inverse of image encryption; iii) a step-by-step description of the image encryption and decryption algorithms; iv) a detailed diagram of the encryption and decryption scheme; v) an overview of the main features of the proposed algorithms.

Encryption

Diffusion cipher related to plaintext and pixel diffusion

In order to generate a diffusion cipher linked to plaintext, the transformation defined in Equation (8) is applied between

where

Traditional image encryption often employs basic diffusion schemes like pixel XOR or addition-and-modulus operations.2 These operations, applied to single pixels, result in low computational efficiency. This paper introduces an XOR operation performed row by row, and also column by column:

where

The inverse diffusion process corresponding to Equation (16) is executed as follows:
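As a generic illustration of row-wise XOR diffusion and its inverse, the sketch below chains each cipher row to the previous one. It is an assumption for illustration and may differ from Equation (16): each cipher row is the XOR of the plain row, a key row, and the previous cipher row, and the hypothetical `seed_row` starts the chain. A column-wise pass would apply the same functions to the transposed matrix.

```python
def diffuse_rows(image, key_rows, seed_row):
    """Chain-XOR every row with its key row and the previous cipher row."""
    cipher = []
    prev = seed_row
    for row, key in zip(image, key_rows):
        out = [p ^ k ^ c for p, k, c in zip(row, key, prev)]
        cipher.append(out)
        prev = out  # the next row depends on this cipher row
    return cipher


def undiffuse_rows(cipher, key_rows, seed_row):
    """Invert diffuse_rows; XOR is its own inverse."""
    plain = []
    prev = seed_row
    for row, key in zip(cipher, key_rows):
        plain.append([c ^ k ^ q for c, k, q in zip(row, key, prev)])
        prev = row  # the chain uses cipher rows, which are all known
    return plain


if __name__ == "__main__":
    img = [[10, 20, 30], [40, 50, 60]]
    keys = [[7, 8, 9], [1, 2, 3]]
    seed = [255, 0, 128]
    enc = diffuse_rows(img, keys, seed)
    print(enc, undiffuse_rows(enc, keys, seed) == img)
```

Because each cipher row feeds into the next, a change in one plain row propagates to every later row, which is the purpose of diffusion.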

Pixel scrambling

Using Matlab's “randperm” function, two random positive integer sequences
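Permutation-based scrambling of rows and columns, together with its inverse, can be sketched as follows. Python's seeded `random.Random.shuffle` stands in for Matlab's `randperm` here; how the paper ties the permutations to the keys is not shown, so the seeds and function names are hypothetical.

```python
import random


def make_permutation(n, seed):
    """Return a reproducible random permutation of 0..n-1."""
    rng = random.Random(seed)
    perm = list(range(n))
    rng.shuffle(perm)
    return perm


def scramble(image, row_perm, col_perm):
    """Move pixel (row_perm[i], col_perm[j]) to position (i, j)."""
    return [[image[r][c] for c in col_perm] for r in row_perm]


def unscramble(scrambled, row_perm, col_perm):
    """Put every pixel back at its original coordinates."""
    out = [[0] * len(col_perm) for _ in range(len(row_perm))]
    for i, r in enumerate(row_perm):
        for j, c in enumerate(col_perm):
            out[r][c] = scrambled[i][j]
    return out


if __name__ == "__main__":
    img = [[1, 2, 3], [4, 5, 6]]
    rp, cp = make_permutation(2, seed=11), make_permutation(3, seed=22)
    mixed = scramble(img, rp, cp)
    print(mixed, unscramble(mixed, rp, cp) == img)
```

Scrambling changes pixel positions only, so the histogram of the image is unchanged; it is the diffusion step that alters pixel values.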

Decryption

Decryption essentially involves reversing the encryption operations on the cipher image. Firstly, the cipher images

Algorithm description

Image decomposition

Image encryption

Image decryption and reconstruction

Algorithm flow chart

The algorithm flow chart is illustrated in Figure 1.

The flow chart of the proposed algorithm.

Characteristics of the algorithm

The proposed algorithm is distinctive in two respects. First, a composite cryptosystem and cipher generation mechanism have been constructed from a high-dimensional CNN and a chaotic map with multiple degrees of freedom. This structure effectively ensures the security of the pseudo-random sequences and stream ciphers. Second, the encryption process is applied not directly to the original plain image but to two derivative images, namely the L-image and the U-image. These images result from rearranging the L and U matrices, which are decomposed from the plain image.

Advantages of these features include:

- Substantial reduction in cipher sequence length: For an image of size
- Decreased computational load in decomposition encryption: The size of L-image or U-image is significantly smaller than the initial image, thereby reducing the computational demand. In traditional pixel diffusion schemes, the number of diffusion operations approximates the cipher length. This algorithm effectively halves the computational requirements.
- Reduced risk of interception during transmission: In public key cryptosystems, keys and cipher-texts are transmitted separately across two channels. Suppose that the probability of information being intercepted is

Encryption and decryption simulations

In this section, to verify the feasibility and effectiveness of the proposed algorithms, the simulations of encryption and decryption algorithms will be conducted in a specific experimental environment. The experimental images are selected from the international standard database for image processing.

Selected from the Caltech 101 universal image dataset, 23 the experimental images, namely “Face” (300 × 300) and “Lamp” (320 × 480), undergo simulations on the Matlab 2019a platform. The computational environment comprises an Intel Core (TM) i7 CPU (2.4 GHz), 8.0 GB RAM, running Windows 10.

Figures 2 and 3 display the simulation outcomes for the original images “Face” and “Lamp,” respectively. The initial keys are set as follows:

Encryption and decryption outcomes of “Face.”

Encryption and decryption outcomes of “Lamp.”

In Figures 2 and 3, sub-figure (a) depicts the original plain image. Sub-figures (b) and (c) represent the L-image and U-image, derived from the L-matrix and the U-matrix, respectively, referred to as secondary plain images. Sub-figures (d) and (e) illustrate the encrypted versions of these secondary images. Sub-figure (f) shows the decrypted version of the initial image.

In assessing image quality, the peak signal-to-noise ratio (PSNR)1,2 is a prevalent index for evaluating the similarity between the original and processed images. For two images

where

Another metric, called structural similarity (SSIM),1,2 assesses the similarity of two statistical variables of the same size. Given variables

In the equation,

This section calculates the PSNR and SSIM for relevant image pairs, with data summarized in Table 2. P1 and S1 denote the PSNR and SSIM between the cipher-texts and the secondary plaintexts; P2 and S2 denote the PSNR and SSIM between the decrypted and original plain images.

PSNR and SSIM between the plain and cipher images.

Table 2 indicates that the proposed algorithm encrypts and decrypts effectively, affirming its feasibility and efficacy.
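Both metrics can be computed directly from pixel values. The sketch below assumes 8-bit images (peak value 255), the standard PSNR definition 10·log10(255²/MSE), and a single-window (global) SSIM with the usual constants C1 = (0.01·255)² and C2 = (0.03·255)². The paper's exact variant may differ, and the function names are hypothetical.

```python
import math


def _flatten(image):
    return [p for row in image for p in row]


def psnr(a, b, peak=255.0):
    """Peak signal-to-noise ratio in dB; infinite for identical images."""
    x, y = _flatten(a), _flatten(b)
    mse = sum((p - q) ** 2 for p, q in zip(x, y)) / len(x)
    return math.inf if mse == 0 else 10.0 * math.log10(peak * peak / mse)


def ssim_global(a, b, peak=255.0):
    """Structural similarity computed over the whole image as one window."""
    x, y = _flatten(a), _flatten(b)
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    # Population (divide-by-n) variance and covariance are assumed here.
    vx = sum((p - mx) ** 2 for p in x) / n
    vy = sum((q - my) ** 2 for q in y) / n
    cov = sum((p - mx) * (q - my) for p, q in zip(x, y)) / n
    c1, c2 = (0.01 * peak) ** 2, (0.03 * peak) ** 2
    return ((2 * mx * my + c1) * (2 * cov + c2)) / (
        (mx * mx + my * my + c1) * (vx + vy + c2)
    )
```

A lossless decryption gives an infinite PSNR and an SSIM of 1, while a good cipher image gives a low PSNR and an SSIM near 0 relative to its plaintext.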

Evaluation of algorithm performance

A comprehensive evaluation of the proposed algorithm's security and its resistance to various attacks is conducted in this section, which includes: the size of the key space, the analysis of the key sensitivity and equivalent key sensitivity, the gray histogram and surface of the plain images and cipher images, the analysis of the pixel correlation, the computation of the information entropy (IE), the analysis of the plaintext and cipher-text sensitivity, and the computation and interpolation description of the algorithm's average running time.

Key space

In general, the key space encompasses a range of possible key values, where a larger key space bolsters resistance to brute-force attacks. For 8-bit integer images, a key space exceeding 128 bits is considered secure.

The proposed algorithm's key space encompasses 27 keys. Assuming these keys are double-precision decimals, the key space approximates $\log_2 10^{378} \approx 1256$ bits. Even when keys are confined to the conservative range of $[10^{-4}, 10^{4}]$, the key space remains no less than $\log_2 10^{216} \approx 717$ bits, offering ample security against exhaustive attacks.
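The bit counts follow from converting powers of ten to powers of two. The sketch below assumes one reading that reproduces the stated exponents: 27 keys with 14 significant decimal digits each give 10^378, and 27 keys with 8 decimal digits each give 10^216. The per-key digit counts are an assumption, not a statement from the text.

```python
import math


def key_space_bits(num_keys, digits_per_key):
    """Size in bits of a key space with 10**(num_keys * digits_per_key) keys."""
    return num_keys * digits_per_key * math.log2(10)


if __name__ == "__main__":
    print(key_space_bits(27, 14))  # exponent 378
    print(key_space_bits(27, 8))   # exponent 216
```

Both values exceed the 128-bit threshold by a wide margin.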

Key sensitivity as well as equivalent key sensitivity

This subsection delves into both the key sensitivity as well as the equivalent key sensitivity of the introduced algorithm, providing an alternative perspective on its resistance to exhaustive attacks.

Key sensitivity

Key sensitivity, an essential metric, evaluates an algorithm's defense against brute-force attacks. It encompasses two dimensions: sensitivity during encryption and sensitivity during decryption. High sensitivity during encryption is demonstrated when two marginally different key sets encrypt the same image and produce significantly distinct cipher images. Likewise, high sensitivity during decryption is demonstrated when slightly varied keys decrypt the same cipher image and produce an image drastically different from the original plaintext.

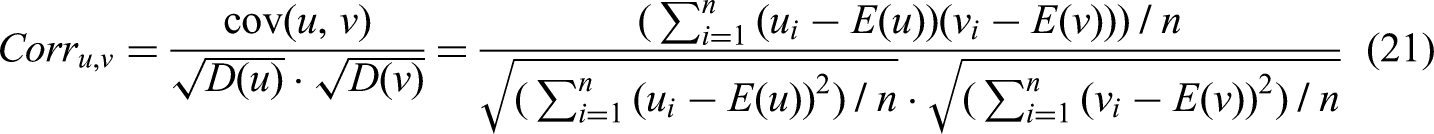

Prior to analyzing key sensitivity, several quantitative image comparison indicators are introduced: correlation coefficient (CORR), number of pixels change rate (NPCR), unified average changing intensity (UACI), and block average changing intensity (BACI).1,2 CORR is calculated by:

In the equation,

For two same-sized images, NPCR indicates the proportion of differing pixels to the total image size, while UACI measures the average absolute difference rate relative to 255 (the greatest difference). NPCR as well as UACI calculations for images

In the equation,

BACI, offering a more intricate approach than UACI, measures the differences between two identically sized images on a block-by-block basis. Detailed computation methods for BACI are available in References 1 and 2.
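NPCR and UACI as described above can be computed in a few lines. The sketch below assumes 8-bit images and reports both indicators as percentages; BACI is omitted, and the function name is hypothetical.

```python
def npcr_uaci(c1, c2):
    """Return (NPCR, UACI) in percent for two same-sized 8-bit images."""
    x = [p for row in c1 for p in row]
    y = [q for row in c2 for q in row]
    n = len(x)
    # NPCR: share of positions whose pixel values differ.
    npcr = 100.0 * sum(1 for p, q in zip(x, y) if p != q) / n
    # UACI: mean absolute difference relative to the maximum of 255.
    uaci = 100.0 * sum(abs(p - q) for p, q in zip(x, y)) / (255.0 * n)
    return npcr, uaci


if __name__ == "__main__":
    a = [[0, 0], [0, 0]]
    b = [[255, 0], [0, 0]]
    print(npcr_uaci(a, b))
```

For two independent, uniformly random 8-bit images the expected values are roughly 99.61% for NPCR and 33.46% for UACI, which is the usual reference point for judging sensitivity results.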

Given 27 distinct keys in the key space, three keys

Key sensitivity in the process of encryption

The four key sets are used to encrypt the L-image and U-image of the “Face” image. The resultant cipher images are revealed in Figure 4. At first glance, discerning differences between the four cipher-texts is challenging. Objective assessment of the cipher images is conducted using indicators such as CORR, SSIM, NPCR, UACI, as well as BACI, with outcomes tabulated in Table 3. The values closely align with theoretical expectations, demonstrating the algorithm's high sensitivity to keys during the encryption process.

Key sensitivity in the process of decryption

Cipher-texts generated using four key sets.

Sensitivity analysis of keys in image encryption.

CORR: correlation coefficient; NPCR: number of pixels change rate; UACI: unified average changing intensity; BACI: block average changing intensity.

Use

Sensitivity analysis of keys in image decryption.

CORR: correlation coefficient; NPCR: number of pixels change rate.

Equivalent key sensitivity

The analysis extends beyond key sensitivity to equivalent key sensitivity, crucial in symmetric cryptography security analysis. The equivalent key, typically derived from chaotic systems as a pseudo-random sequence, is fundamental in deciphering cipher-text. In the proposed algorithm, the equivalent keys,

Analysis of equivalent key sensitivity in the process of encryption

In the selection process of the key set

Subsequently, the plain image

Sensitivity analysis of equivalent keys in decryption

Sensitivity analysis of equivalent keys in image encryption.

CORR: correlation coefficient; NPCR: number of pixels change rate; UACI: unified average changing intensity; BACI: block average changing intensity.

The decryption of the cipher image is performed using

Sensitivity analysis of equivalent keys in image decryption.

CORR: correlation coefficient; NPCR: number of pixels change rate.

The gray histogram and surface

In the realm of image encryption algorithms, those with heightened security and robustness typically exhibit a uniformly distributed gray scale in cipher-texts. This characteristic manifests in the gray histogram and surface of the cipher-text. Figures 5 and 6 display the gray histograms and surfaces for the secondary plaintexts and their corresponding cipher-texts of the experimental image “Lamp.”

Gray histograms of plain and cipher images.

Gray surfaces of plain as well as cipher images.

Analysis of Figures 5 and 6 reveals fluctuating histograms and gray surfaces for the secondary plain image, in contrast to the flat and uniform histograms of the cipher-texts. The cipher images’ gray surfaces exhibit a nearly uniform height. These observations confirm the statistical security and robustness of the proposed encryption algorithm.

Pixel correlation

Given that the original unmodified image represents an accurate depiction of the subject, a specific level of correlation among its pixels can be anticipated. This correlation is expected to extend to the pixels of the L and U images, as they are derived from the original image. A crucial indicator of the security and robustness of an encryption algorithm is its ability to disrupt this inherent pixel correlation. Successful encryption would result in a pixel correlation within the ciphered images that is distinctly different from that in the L and U images.

For the experimental “Face” image, 2000 pixels were selected randomly in each of the horizontal, vertical, and diagonal directions. The CORR values for these three sets of pixels were then calculated for both the L and U images and their encrypted variants. The findings are presented in Table 7, and the distribution of pixels in these scenarios is illustrated in Figures 7 and 8.

Pixel distributions of L-image and cipher-text for L-image.

Pixel distributions of U-image and cipher-text for U-image.

Correlations between plain as well as cipher images in three directions.

Figures 7 and 8 indicate a concentration of pixels in lower triangular areas for L and U plain images, whereas cipher-text pixels display an even distribution in rectangular regions. This suggests a complete alteration of pixel correlation in the plaintexts by the encryption algorithm, a conclusion corroborated by the data in Table 7.
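The sampling procedure described above can be sketched as follows: pick random pixel positions, pair each pixel with its neighbor in the chosen direction, and compute the Pearson correlation of the pairs. The sample size of 2000 follows the text; the seed, function names, and the use of the Pearson coefficient for CORR are assumptions.

```python
import math
import random

OFFSETS = {"horizontal": (0, 1), "vertical": (1, 0), "diagonal": (1, 1)}


def pearson(x, y):
    """Pearson correlation coefficient; 0.0 if either sample is constant."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((p - mx) * (q - my) for p, q in zip(x, y))
    sx = math.sqrt(sum((p - mx) ** 2 for p in x))
    sy = math.sqrt(sum((q - my) ** 2 for q in y))
    return 0.0 if sx == 0 or sy == 0 else cov / (sx * sy)


def adjacent_correlation(image, direction, samples=2000, seed=0):
    """Correlation between randomly sampled pixels and their neighbors."""
    dr, dc = OFFSETS[direction]
    rows, cols = len(image), len(image[0])
    rng = random.Random(seed)
    xs, ys = [], []
    for _ in range(samples):
        # Restrict the range so the neighbor stays inside the image.
        r = rng.randrange(rows - dr)
        c = rng.randrange(cols - dc)
        xs.append(image[r][c])
        ys.append(image[r + dr][c + dc])
    return pearson(xs, ys)
```

Natural images typically give values close to 1 in all three directions, whereas a well-encrypted image should give values close to 0.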

Information entropy

IE measures the randomness and unpredictability in an information system. For an 8-bit integer grayscale image, the maximal IE value is 8. An image encryption algorithm elevating the IE of plaintext towards this maximum is deemed secure and robust. The IE for an 8-bit image is computed as follows:

where the occurrence frequency of a pixel with a specified value

In image processing, relative entropy and information redundancy are key metrics for assessing cipher image quality. For 8-bit grayscale image, relative entropy is the score of

For the experimental images “Face” and “Lamp,” the information entropies, relative entropies, and information redundancies were calculated and are shown in Table 8. The corresponding data for other test images of sizes 256 × 256, 512 × 512, and 1024 × 1024 are also listed in Table 8. The outcomes indicate that the information entropies of the cipher-texts surpass those of the plaintexts and closely approach the value of 8. Furthermore, the relative entropies (compression rates) of the plaintexts are notably less than 1, while those of the cipher-texts are almost 1. This indicates a significant compression potential in the plaintexts, in contrast to the cipher-texts, which can hardly be compressed. Additionally, the redundancy in each cipher-text is markedly lower than in the corresponding plaintext and is nearly 0, indicating minimal information redundancy in the cipher images. These findings support the security and robustness of the proposed algorithm.
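The three quantities can be computed from the pixel histogram. The sketch below assumes the Shannon entropy H = -Σ p(i)·log2 p(i) over the 256 gray levels, the relative entropy H/8, and the redundancy 1 - H/8; the last two definitions are inferred from the description above and are assumptions, as is the function name.

```python
import math
from collections import Counter


def entropy_stats(image):
    """Return (entropy, relative entropy, redundancy) of an 8-bit image."""
    pixels = [p for row in image for p in row]
    n = len(pixels)
    # Only gray levels that actually occur contribute to the sum.
    h = -sum((c / n) * math.log2(c / n) for c in Counter(pixels).values())
    relative = h / 8.0  # 8 bits is the maximum for 256 gray levels
    return h, relative, 1.0 - relative


if __name__ == "__main__":
    uniform = [list(range(256))]  # every gray level exactly once
    print(entropy_stats(uniform))
```

An ideal cipher image has a flat histogram, which drives the entropy toward 8 and the redundancy toward 0.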

Entropy, relative entropy, and information redundancy of plain and cipher images.

IE: information entropy.

Plaintext sensitivity and cipher-text sensitivity

Plaintext sensitivity

In applied cryptography, plaintext sensitivity refers to the degree of impact that variations in the plaintext have on the cipher-text. It is a crucial measure of an image encryption algorithm's defense against differential attacks such as chosen-plaintext attacks. An encryption algorithm is considered sensitive to the plaintext if, with the keys held constant, a minor change in the plaintext results in a significant alteration of the cipher-text.

Assuming a pixel increment in the plaintext is

Plaintext sensitivity analysis of the proposed algorithm.

CORR: correlation coefficient; NPCR: number of pixels change rate; UACI: unified average changing intensity; BACI: block average changing intensity.

Cipher-text sensitivity

Cipher-text sensitivity, akin to plaintext sensitivity, assesses the decryption algorithm's capacity to withstand differential attacks like chosen-cipher-text attacks. It gauges the extent to which variations in the cipher-text affect the plaintext. Employing a specific set of keys for encryption and decryption, when a slight alteration in the cipher image leads to a significant change in the plaintext upon decryption, the decryption algorithm is considered sensitive to the cipher image.

Variation in the cipher image was set to

Cipher-text sensitivity analysis of the proposed algorithm.

CORR: correlation coefficient; NPCR: number of pixels change rate.

This data substantiates the sensitivity of the proposed decryption algorithm to cipher image changes.

The average running time of the algorithm

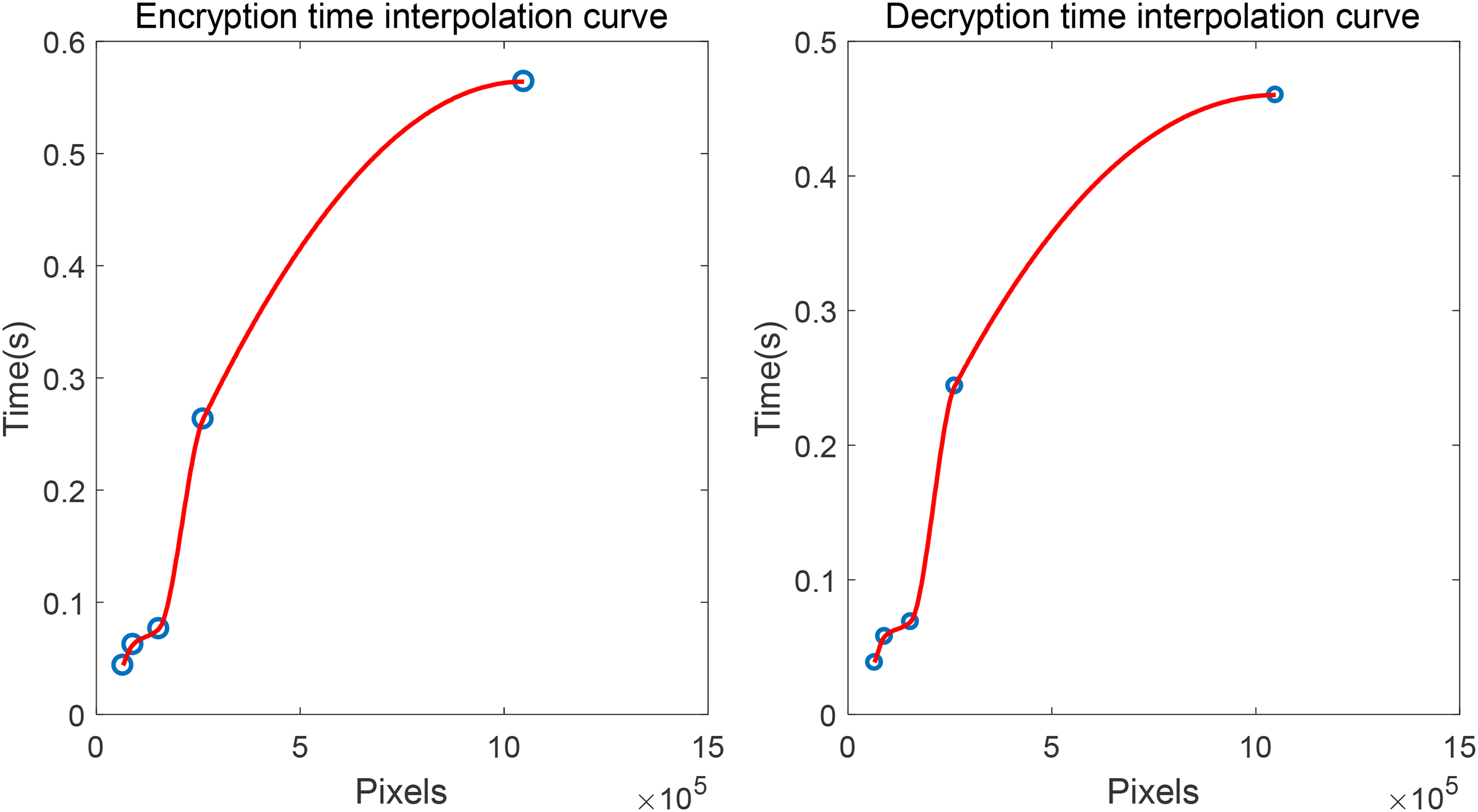

This subsection gives the running time evaluation of the proposed algorithm. The computational environment comprises an Intel Core (TM) i7 CPU (2.4 GHz), 8.0 GB RAM, running Windows 10. For images of different sizes, we tested and calculated the average running time of the algorithm. The average times of the algorithm running 100 times are listed in Table 11. Besides, Figure 9 demonstrates the time interpolation curves using the method of piecewise cubic Hermite interpolation polynomial. It is obvious that the algorithm time consumption is quite limited and the proposed algorithm is easy to implement in existing computing environments. It should be noted that, since some random factors are involved in the algorithm and the experimental result is closely related to the computation configuration, the given data is relative and only for reference.

Time interpolation curves of PCHIP. PCHIP: piecewise cubic Hermite interpolation polynomial.

The data of the average times of the algorithm.

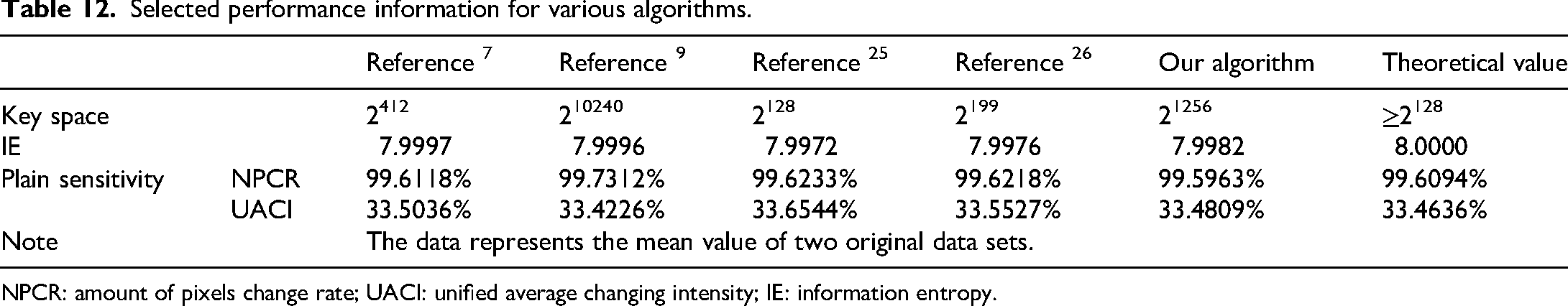

Comparison with other algorithms

This subsection compares the proposed algorithm with those cited in References 7, 9, 25, and 26, based on experimental data from the “Lamp” image. It is noteworthy that the comparison involves only a subset of performance indicators. The experimental results are presented in Table 12.

Selected performance information for various algorithms.

NPCR: number of pixels change rate; UACI: unified average changing intensity; IE: information entropy.

For all five algorithms under comparison, as shown in Table 12, all indicators meet the security requirements. Theoretically, this suggests the feasibility and effectiveness of these algorithms. To comprehensively evaluate their quality, identical performance indicators were rated on a scale. The scoring criteria were as follows: 5 points for the best performance, descending to 1 point for the least effective. The scoring outcomes are detailed in Table 13. These scores lead to the conclusion that the proposed algorithm holds local comparative advantages and good overall performance.

Performance data score of different algorithms.

NPCR: number of pixels change rate; UACI: unified average changing intensity; IE: information entropy.

Conclusions

To address unresolved issues in image encryption, such as key space, cipher generation, security verification, and encryption schemes, this paper constructed an encryption algorithm for digital images using a 6D-CNN and matrix LU decomposition. The paper discussed the mathematical foundation of the algorithm, the cipher generation method, the image decomposition encryption scheme, and the system security of the proposed algorithm, and it reported simulation experiments. Compared with similar existing encryption schemes, the proposed algorithm showed comprehensive advantages, including a significant reduction in cipher length, a decreased likelihood of key and cipher-text interception during transmission, and a substantially large key space. In contrast to traditional image compression encryption, this paper proposed a novel image decomposition encryption mode, which opens up new ideas for enhancing image encryption and transmission security. The computational complexity of the algorithm will be discussed in future work.

Footnotes

Acknowledgments

This work is supported by the National Natural Science Foundation of China under Grant No. 61702153.

Authors’ contributions

LT and XL contributed to conception, design, manuscript drafting, and manuscript review. LH contributed to funding acquisition, software, and visualization. BH contributed to validation and resources.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the National Natural Science Foundation of China, (grant number 61702153).

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.