Abstract

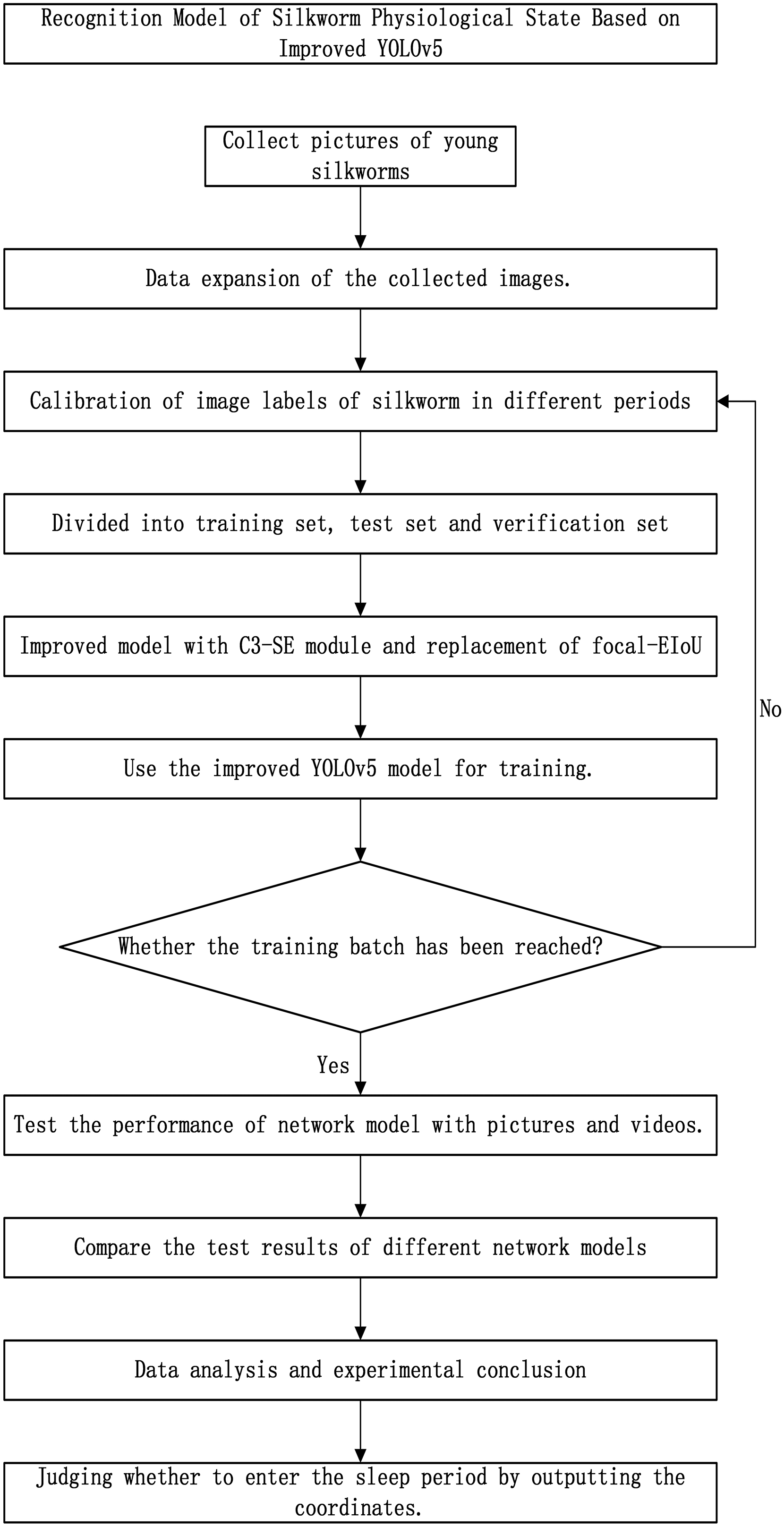

Silkworm breeding, a pivotal economic activity across various regions of China, plays a crucial role in promoting rural revitalization. The early stage of silkworm development, during which the larvae are most vulnerable and environmentally sensitive, poses significant challenges because of high disease and mortality rates. To enhance the efficiency of silkworm breeding, it is imperative to accurately and rapidly identify the physiological state of these small silkworms and give farmers timely feedback. Using a manually labeled data set, we trained a neural network model to identify the instar of small silkworms from the external characteristics and body length of each instar, and used the center point coordinates output by the model to evaluate whether a silkworm has entered the dormancy period. A small silkworm that has entered the dormant period does not move. A threshold is set by comparing the maximum difference of the center point coordinates of small silkworms in the experimental groups during the dormant period and the feeding period; if the maximum difference of the center point coordinates is less than the threshold, the small silkworm is judged to have entered the dormant period. To further enhance the model's performance, we introduced an improved target detection network model built on the established YOLOv5 architecture. This enhanced model integrates the C3-SE attention mechanism, enabling the network to focus more closely on the target of interest and thus improving detection accuracy. Additionally, we replaced the CIoU loss function in the original target detection network model with the Focal-EIoU loss function. This adjustment mitigates the imbalance between positive and negative samples, accelerates network convergence, and ultimately enhances the model's precision and recall rate.
To validate the proposed model, we randomly selected sample pictures from the curated small silkworm dataset to form the test and validation sets. This dataset comprised images and videos capturing different developmental stages of small silkworms. The test results demonstrate that the improved YOLOv5 model achieves an average precision (AP) of 92.2%, surpassing the original network model by 2.9%. Specifically, the model exhibits a 0.3% increase in precision, a 3.4% improvement in recall rate, and a 7.7% increase in frames per second. These findings indicate that the enhanced YOLOv5 model is capable of accurately and efficiently identifying the physiological state of small silkworms.

Introduction

Agricultural production, and specifically the cultivation of crops, is an essential prerequisite for a nation's economic progress. National emphasis on and support for silkworm farming have significantly bolstered its advancement. 1 Within the lifecycle of mulberry silkworms, the larval stage is particularly vulnerable and responsive to environmental changes. If these larvae fail to consume mulberry leaves for a certain duration after waking from sleep, they may exhibit signs of weakness or illness, or even die. Silk fabric is woven from silk thread, which is extracted from cocoons. For silk production, monitoring the health of these small silkworms is therefore crucial. Unhealthy small silkworms can lead to a decrease in cocoon production, affecting not only the quality of the cocoons but ultimately the overall quality of silk products. 2

To promote the development of sericulture, we are committed to developing a model based on machine vision recognition. The model exploits the differences in appearance between silkworm instars: the backbone network extracts features, the neck network enhances feature expression, and the head network makes the prediction. After training, the model outputs the category and center coordinates of each small silkworm. Silkworm eggs take approximately 8–9 days to hatch, and the newly hatched silkworms are tiny and black, colloquially known as “ant silkworms”. Silkworms typically pass through five instars and molt four times in total, each molt advancing them to the next instar. Before each molt, they enter a period of dormancy referred to as the “sleep period.” In practical applications, silkworms in the first to third instar are commonly referred to as small silkworms. Table 1 outlines the physiological traits exhibited by silkworms at various growth stages.

Physiological characteristics of small silkworm at different stages.

During silkworm breeding, there are two distinct periods: the dormant phase and the feeding phase. During the dormant phase, the silkworm remains inactive and abstains from eating. Conversely, during the feeding phase, the silkworm consumes a significant quantity of mulberry leaves. However, if the silkworm fails to consume mulberry leaves for a certain duration after awakening, it may exhibit symptoms of weakness or illness, or even die. To mitigate the economic losses of sericulture farmers, it is crucial to efficiently and accurately identify the physiological status of small silkworms. 3 In recent years, significant advances in machine vision and artificial intelligence technologies have expedited the integration of engineering intelligence across numerous industries. Specifically, machine vision has undergone substantial enhancements in its application to intricate scenarios, encompassing both industrial and agricultural fields. 4–6

Deep learning methods are increasingly applied in agricultural research, owing to their capability to autonomously extract intricate image features with greater speed and precision than traditional algorithmic approaches. 7–11 Many studies address the identification of physiological characteristics of animals and plants, as well as related state-detection tasks. Ran et al. 12 used a lightweight real-time fatigue driving detection model based on an improved YOLOv5s with attention to detect the fatigue state of drivers in a timely manner. Qin et al. 13 studied the identification and diagnosis of four types of alfalfa leaf diseases using pattern recognition algorithms based on image processing technology. Gui et al. 14 used an improved YOLOv5 model to detect tea buds. In this model, the Ghost_conv module replaced the original convolution, and a bottleneck focus module was added to the backbone network to improve detection accuracy. Chen et al. 15 added the GhostConv module to the YOLOv5 network, incorporated the convolutional block attention module into the backbone network, and used the improved model to detect strawberry diseases. Wen et al. 3 proposed an improved lightweight YOLOv4 silkworm detection algorithm based on multiscale feature fusion. A lightweight MobileNetV3 backbone built on depthwise separable convolutions replaces the YOLOv4 backbone network, reducing the computation and model size of the backbone; the algorithm also compensates for the accuracy lost to the lightweight depthwise separable convolutions and improves the detection accuracy of the lightweight model. However, that work only detected silkworms and did not further explore silkworm instar or physiological state. Current systems for recognizing the physiological state of small silkworms fall short of practical demands, leaving ample room for improvement in both the recognition model and the methodology.

Certain classical two-stage detection algorithms and models, while highly accurate, are slow and bulky. These constraints impede real-time monitoring of the silkworm's physiological state. 16 The YOLO algorithm, however, strikes a commendable balance between detection accuracy and speed. 17 The YOLO series has undergone numerous iterations, with its modules continually optimized and cutting-edge strategies integrated. As a result of these refinements, YOLOv5 addresses shortcomings of previous versions, with a reduced parameter count and enhanced detection capabilities. 18

At present, the mainstream YOLO series algorithms are YOLOv5, YOLOv7, and YOLOv8. Table 2 shows the comparison of different versions of YOLO model.

The comparison of different versions of YOLO model.

It can be seen from Table 2 that although YOLOv5 is slightly inferior in accuracy, its combination of speed and accuracy is sufficient to meet the real-time detection needs of this experiment. In addition, after a long period of verification and optimization, YOLOv5 has been widely recognized for its stability. Therefore, we use YOLOv5.

This paper introduces an enhanced YOLOv5-based detection algorithm to accurately identify the physiological state of small silkworms, aiming to improve detection precision, enable real-time detection, and provide timely feedback to farmers. To address the challenge of inadequate silkworm feature extraction, we incorporate the C3-SE attention mechanism. This mechanism captures global long-range dependencies, thus bolstering the convolution's feature extraction capabilities. Additionally, we introduce the Focal-EIoU loss function. This loss function introduces no additional parameters, and against the complex background of small silkworm observation it further improves the convergence speed of the network without lengthening the training time.

The rest of this paper is organized as follows: In the second section, we briefly review the original YOLOv5 model and propose an improved YOLOv5 model. In the third section, we list the experimental materials and methods. The experiment and result analysis are shown in the fourth part. Finally, the fifth section summarizes the conclusion.

Principle of the detection algorithm

YOLOv5 network module

The YOLO series represents a one-stage deep learning-based regression approach, contrasting with the two-stage deep learning-based classification methods such as R-CNN, Fast-RCNN, and Faster-RCNN. 19

YOLOv5 20 is a one-stage target recognition algorithm proposed by Glenn Jocher in 2020. YOLOv5 offers four variations based on network depth and width: YOLOv5s, YOLOv5m, YOLOv5l, and YOLOv5x. These models share a similar core structure, with the primary distinctions lying in the depth multiplier (controlling model depth) and width multiplier (regulating model width). 21
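As a rough illustration of how the depth and width multipliers shape the four variants, the sketch below scales a base layer specification. The multiplier values are those listed in the public YOLOv5 model configuration files; the helper names `scale_depth` and `scale_width` are hypothetical, and the rounding rules are an assumption modeled on the public implementation rather than a description of the code used in this study.

```python
import math

# (depth_multiple, width_multiple) per variant, as listed in the public
# YOLOv5 model configuration files.
VARIANTS = {
    "YOLOv5s": (0.33, 0.50),
    "YOLOv5m": (0.67, 0.75),
    "YOLOv5l": (1.00, 1.00),
    "YOLOv5x": (1.33, 1.25),
}


def scale_depth(base_repeats: int, depth_multiple: float) -> int:
    """Scale how often a block is repeated; a stage never drops below one block."""
    if base_repeats <= 1:
        return base_repeats
    return max(round(base_repeats * depth_multiple), 1)


def scale_width(base_channels: int, width_multiple: float, divisor: int = 8) -> int:
    """Scale a channel count and round it up to a multiple of `divisor`."""
    return math.ceil(base_channels * width_multiple / divisor) * divisor


for name, (depth, width) in VARIANTS.items():
    print(name, scale_depth(9, depth), scale_width(1024, width))
```

Under these assumptions, a stage specified with 9 repeated blocks and 1024 channels shrinks to 3 blocks and 512 channels in YOLOv5s and grows to 12 blocks and 1280 channels in YOLOv5x.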

YOLOv5 is a target detection algorithm. Its model structure mainly includes the following components: input, Backbone network, Neck network, and output.

The Head network in YOLOv5 comprises three distinct output layers, designed to detect targets of varying scales: large, medium, and small. 22 The backbone network provides robust feature extraction and computational efficiency. The Neck network incorporates FPN to fuse information across various feature map levels. Post-detection, YOLOv5 employs Non-Maximum Suppression (NMS) to refine overlapping target boxes, resulting in the final detection output. Additionally, YOLOv5 utilizes the Mish activation function, an alternative to ReLU, to further boost model performance. 23 The YOLOv5 model structure is shown in Figure 1.

YOLOv5 model structure diagram.
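The Non-Maximum Suppression step mentioned above can be sketched in a few lines. This is a minimal greedy version in plain Python, assuming boxes in (x1, y1, x2, y2) corner format and a single class; the function names and the 0.5 default are illustrative choices, not the YOLOv5 implementation.

```python
def iou(a, b):
    """Intersection over union of two boxes given as (x1, y1, x2, y2)."""
    inter_w = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    inter_h = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = inter_w * inter_h
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def nms(boxes, scores, iou_threshold=0.5):
    """Greedy NMS: visit boxes by descending score and keep a box only if it
    does not overlap an already kept box by more than `iou_threshold`."""
    order = sorted(range(len(boxes)), key=lambda i: scores[i], reverse=True)
    keep = []
    for i in order:
        if all(iou(boxes[i], boxes[j]) <= iou_threshold for j in keep):
            keep.append(i)
    return keep


boxes = [(0, 0, 10, 10), (1, 1, 11, 11), (20, 20, 30, 30)]
scores = [0.9, 0.8, 0.7]
print(nms(boxes, scores))  # the second box overlaps the first and is dropped
```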

Improved YOLOv5 network structure

Attention module

The squeeze–excitation network 24 is a network model proposed by Hu et al., which focuses on the relationship between channels. Its goal is to optimize the network model by learning image features based on the loss function. This involves augmenting the weights of effective image features while diminishing the weights of ineffective or irrelevant features, ultimately leading to the best possible results from the trained model.

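The structure of SE Block is shown in Figure 2. Firstly, the input feature map $X \in \mathbb{R}^{H' \times W' \times C'}$ is mapped to the feature map $U \in \mathbb{R}^{H \times W \times C}$ by the transformation $F_{tr}$:

$$u_c = v_c * X = \sum_{s=1}^{C'} v_c^{s} * x^{s}$$

SE block structure diagram.

Here, $*$ represents the convolution operation, $v_c$ is the $c$-th convolution kernel, and $x^{s}$ is the $s$-th channel of the input.

The main operations of the SE module are Squeeze and Excitation. The process is:

Transformation ($F_{tr}$): a convolution maps the input $X$ to the feature map $U$. Squeeze ($F_{sq}$): global average pooling compresses each channel into a single descriptor, $z_c = F_{sq}(u_c) = \frac{1}{H \times W}\sum_{i=1}^{H}\sum_{j=1}^{W} u_c(i, j)$. Excitation ($F_{ex}$): two fully connected layers produce the channel weights, $s = F_{ex}(z, W) = \sigma(W_2\,\delta(W_1 z))$, where $\delta$ is the ReLU function and $\sigma$ is the sigmoid function. Scale ($F_{scale}$): each channel is reweighted by its weight, $\tilde{x}_c = F_{scale}(u_c, s_c) = s_c\,u_c$.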
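To make the squeeze, excitation, and scale steps concrete, the following is a minimal sketch in plain Python on nested lists. It assumes the standard formulation of Hu et al. (global average pooling, then a fully connected layer with ReLU, then a fully connected layer with sigmoid) and omits biases; the function name `se_block` and the toy weights are hypothetical, and a real model would use a tensor library.

```python
import math


def se_block(u, w1, w2):
    """Apply squeeze-excitation to feature maps u[c][i][j].

    w1 has shape (C/r, C) and w2 has shape (C, C/r); biases are omitted.
    Returns the rescaled feature maps and the per-channel weights.
    """
    # Squeeze: global average pooling gives one descriptor per channel.
    z = [sum(map(sum, ch)) / (len(ch) * len(ch[0])) for ch in u]
    # Excitation: FC -> ReLU -> FC -> sigmoid yields weights in (0, 1).
    hidden = [max(0.0, sum(w * zc for w, zc in zip(row, z))) for row in w1]
    s = [1.0 / (1.0 + math.exp(-sum(w * h for w, h in zip(row, hidden))))
         for row in w2]
    # Scale: multiply every value of a channel by that channel's weight.
    out = [[[s_c * v for v in row] for row in ch] for s_c, ch in zip(s, u)]
    return out, s


# Two 2x2 channels; the toy weights favour channel 0 and suppress channel 1.
u = [[[1.0, 1.0], [1.0, 1.0]], [[2.0, 2.0], [2.0, 2.0]]]
out, s = se_block(u, w1=[[1.0, 1.0]], w2=[[10.0], [-10.0]])
print(s)
```

The channel weights depend only on the pooled descriptors, which is why the block adds few parameters relative to the convolutions around it.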

SE modules with different structures are shown in Figure 3.

SE modules with different structures.

Replacing CIoU with Focal-EIoU

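In the YOLOv5 structure, CIoU (Complete-IoU) is the default bounding box regression loss function. The calculation formula of CIoU is as follows:

$$L_{CIoU} = 1 - IoU + \frac{\rho^2(b, b^{gt})}{c^2} + \alpha v, \qquad v = \frac{4}{\pi^2}\left(\arctan\frac{w^{gt}}{h^{gt}} - \arctan\frac{w}{h}\right)^2, \qquad \alpha = \frac{v}{(1 - IoU) + v}$$

Here, $b$ and $b^{gt}$ are the center points of the predicted and real bounding boxes, $\rho(\cdot)$ is the Euclidean distance, $c$ is the diagonal length of the smallest box enclosing both boxes, and $w$, $h$ and $w^{gt}$, $h^{gt}$ are the widths and heights of the predicted and real bounding boxes.

EIoU Loss 25 includes three parts: IoU loss, distance loss, and height-width loss (covering the overlap area, the center point distance, and the height and width).

The height-width loss directly minimizes the difference between the height and width of the predicted target bounding box and the real bounding box, resulting in faster convergence and better positioning results:

$$L_{EIoU} = 1 - IoU + \frac{\rho^2(b, b^{gt})}{c^2} + \frac{\rho^2(w, w^{gt})}{C_w^2} + \frac{\rho^2(h, h^{gt})}{C_h^2}$$

where $C_w$ and $C_h$ are the width and height of the smallest enclosing box. Focal-EIoU reweights this loss by the IoU so that high-quality samples contribute more, with $\gamma$ controlling the strength of the reweighting:

$$L_{Focal\text{-}EIoU} = IoU^{\gamma}\, L_{EIoU}$$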
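A small numerical sketch may help show how the three EIoU terms and the focal reweighting combine. It assumes boxes in (x1, y1, x2, y2) corner format, the commonly cited form in which the focal weight is the IoU raised to a power gamma, and gamma = 0.5 as an illustrative default; the function names are hypothetical and this is not the training code used in the study.

```python
def eiou_loss(pred, target, eps=1e-9):
    """EIoU loss for two boxes (x1, y1, x2, y2); returns (loss, iou)."""
    px1, py1, px2, py2 = pred
    tx1, ty1, tx2, ty2 = target
    pw, ph = px2 - px1, py2 - py1
    tw, th = tx2 - tx1, ty2 - ty1

    # IoU term: overlap area over union area.
    inter_w = max(0.0, min(px2, tx2) - max(px1, tx1))
    inter_h = max(0.0, min(py2, ty2) - max(py1, ty1))
    inter = inter_w * inter_h
    iou = inter / (pw * ph + tw * th - inter + eps)

    # Width and height of the smallest box enclosing both boxes.
    cw = max(px2, tx2) - min(px1, tx1)
    ch = max(py2, ty2) - min(py1, ty1)

    # Distance term: squared center distance over squared enclosing diagonal.
    dx = (px1 + px2) / 2 - (tx1 + tx2) / 2
    dy = (py1 + py2) / 2 - (ty1 + ty2) / 2
    dist = (dx * dx + dy * dy) / (cw * cw + ch * ch + eps)

    # Height-width term: side differences normalised by the enclosing box.
    w_term = (pw - tw) ** 2 / (cw * cw + eps)
    h_term = (ph - th) ** 2 / (ch * ch + eps)

    return 1.0 - iou + dist + w_term + h_term, iou


def focal_eiou_loss(pred, target, gamma=0.5):
    """Reweight the EIoU loss by IoU**gamma so well-aligned boxes count more."""
    loss, iou = eiou_loss(pred, target)
    return (iou ** gamma) * loss


print(focal_eiou_loss((0, 0, 10, 10), (5, 5, 15, 15)))
```

Note that under this form a prediction with no overlap receives zero weight, so in practice the focal term shifts the gradient budget toward boxes that already overlap their targets.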

To enhance network performance with minimal overhead, the C3-SE module is integrated into the final two C3 layers of the backbone network. By incorporating this module and adopting the Focal-EIoU loss, the optimized YOLOv5 network model's overall architecture is established, as depicted in Figure 4.

Improved YOLOv5 model structure diagram.

Judgment method of dormancy period

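In target detection, we usually use bounding boxes to describe the location of the target. The bounding box is a rectangular box, which can be determined by the $x$ and $y$ coordinates of its upper-left corner $(x_1, y_1)$ and lower-right corner $(x_2, y_2)$; its center point is then $\left(\frac{x_1 + x_2}{2}, \frac{y_1 + y_2}{2}\right)$.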

A small silkworm does not move during the dormancy period, whereas during the feeding period it moves to some extent. After the YOLOv5 model is established, the bounding box coordinates are output. A threshold is set by comparing the maximum coordinate difference of the experimental group in the dormant period with that of the experimental group in the feeding period, and this threshold is used to judge whether a small silkworm is in the dormant state.

The camera is set to take a photo every 3 s. After the established model identifies the small silkworms, the coordinate information is output and the coordinates from two consecutive photos are compared. If the maximum difference is greater than the threshold, the small silkworm is in the feeding stage. If the maximum difference is less than the threshold, the small silkworm is in a dormant state.
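The comparison of two consecutive photos can be sketched as follows. The sketch assumes that "maximum difference" means the largest absolute change in either the x or the y center coordinate, and that detections are listed in the same order in both photos. The threshold of 5.0 pixels is purely hypothetical, since the value used in the study is not given here; the coordinates are the center points reported in the center point coordinate experiment.

```python
def max_center_shift(prev_centers, curr_centers):
    """Largest absolute change in x or y between two photos.

    Assumes both lists hold the same silkworms in the same order.
    """
    return max(
        max(abs(x1 - x0), abs(y1 - y0))
        for (x0, y0), (x1, y1) in zip(prev_centers, curr_centers)
    )


def is_dormant(prev_centers, curr_centers, threshold):
    """Judge dormancy: the silkworms barely moved between the two photos."""
    return max_center_shift(prev_centers, curr_centers) < threshold


HYPOTHETICAL_THRESHOLD = 5.0  # pixels; an assumed value for illustration only

# Center points 3 s apart, taken from the reported test examples.
dormant_before = [(2141.5, 2577.0)]
dormant_after = [(2141.5, 2577.0)]
feeding_before = [(1513.5, 2364.5), (2019.5, 2654.5)]
feeding_after = [(1503.0, 2370.5), (2000.0, 2595.0)]

print(max_center_shift(feeding_before, feeding_after))  # 59.5
print(is_dormant(dormant_before, dormant_after, HYPOTHETICAL_THRESHOLD))  # True
print(is_dormant(feeding_before, feeding_after, HYPOTHETICAL_THRESHOLD))  # False
```

A production version would also need to match detections between photos (for example by nearest center) when the detection order is not stable.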

Materials and methods

Experimental material

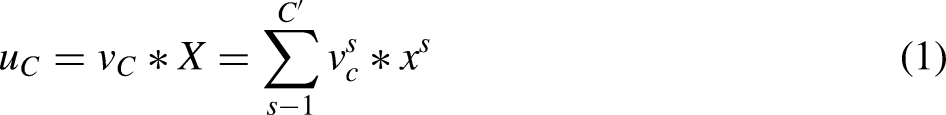

We cultivated small silkworms and captured their images in the subtropical monsoon climate zone. This region has ample thermal resources, a frost-free period spanning 347 days, an annual average temperature of 17.9 °C, and annual rainfall totaling 1169.6 mm, creating a favorable environment for silkworm breeding. To maintain the quality of the data set, we used a mobile phone camera to capture images of the silkworms’ various growth stages under diverse lighting conditions, including incandescent, LED, and natural light. The images have a resolution of 4640 × 2608 pixels, high enough to capture minute changes in the silkworms’ development. After screening, 418 original images were obtained, including 132 pictures of first instar silkworms, 139 pictures of second instar silkworms, and 147 pictures of third instar silkworms. Under the guidance of silkworm breeding experts, we accurately determined the three key growth stages of silkworm larvae, as shown in Figure 5.

Pictures of small silkworms at the first to third instars. (a) First instar silkworm, (b) second instar silkworm, (c) third instar silkworm.

Data expansion

When deep learning models are trained, the number of training images plays a pivotal role in determining their ultimate performance. Because available silkworm images are scarce, it is essential to apply data augmentation before training. Challenges encountered while photographing small silkworms, including uneven lighting, camera shake, and diverse angles, can result in blurry or overexposed images. The captured images are therefore filtered first, removing blurry images and refining unclear ones. We then use YOLOv5's integrated data augmentation features to apply techniques such as image mirroring, random 180-degree rotation, the addition of Gaussian noise, and brightness adjustment to lighten or darken the images. The outcomes of these augmentation methods are shown in Figure 6. Finally, the original data set was expanded to 1672 pictures, including 528 pictures of first instar silkworms, 556 pictures of second instar silkworms, and 588 pictures of third instar silkworms.

Image after data enhancement. (a) Original image, (b) mirror image, (c) Gaussian noise, (d) increased brightness.
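The four augmentation operations named above are simple pixel-level transforms. The sketch below illustrates them in plain Python on a grayscale image stored as a nested list of values in 0–255; it is a standalone illustration under that assumption, not YOLOv5's built-in augmentation pipeline, and the function names, noise level, and brightness factor are hypothetical.

```python
import random


def _clip(value):
    """Round to an integer pixel value and clamp it to the range 0..255."""
    return max(0, min(255, int(round(value))))


def mirror(img):
    """Flip the image left to right."""
    return [row[::-1] for row in img]


def rotate_180(img):
    """Rotate by 180 degrees: reverse the row order and each row."""
    return [row[::-1] for row in img[::-1]]


def add_gaussian_noise(img, sigma=10.0, seed=0):
    """Add zero-mean Gaussian noise with standard deviation `sigma`."""
    rng = random.Random(seed)
    return [[_clip(p + rng.gauss(0.0, sigma)) for p in row] for row in img]


def adjust_brightness(img, factor):
    """Multiply every pixel by `factor` (>1 lightens, <1 darkens)."""
    return [[_clip(p * factor) for p in row] for row in img]


image = [[0, 100], [200, 255]]
print(mirror(image))                  # [[100, 0], [255, 200]]
print(rotate_180(image))              # [[255, 200], [100, 0]]
print(adjust_brightness(image, 1.5))  # [[0, 150], [255, 255]]
```

Keeping each original image together with three augmented copies gives a fourfold expansion, which is consistent with the growth from 418 to 1672 pictures reported above.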

Experimental environment

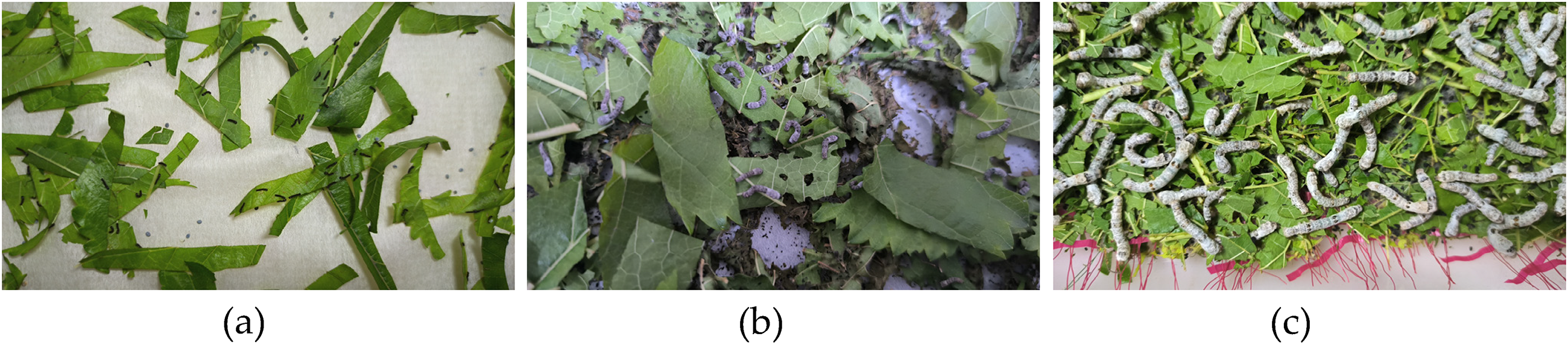

A desktop computer was used as the processing platform. The operating system was Windows 10, and the PyTorch framework and YOLOv5 environment were configured in Anaconda3 with Python 3.9.0 and CUDA 11.3. The processor was an Intel Core i5-10400F with a main frequency of 2.9 GHz, the memory was 16 GB, and the graphics card was a GeForce GTX 1050 Ti with 4 GB of memory.

Learning rate, momentum, and weight decay are hyperparameters. The learning rate determines the step size of each weight update during training. Momentum accelerates gradient descent by accumulating past gradients; weight decay penalizes large weight values by adding a squared-weight term to the loss function, thereby helping to prevent overfitting. The batch size is the number of samples used for each parameter update, and the number of training rounds is the number of passes over the entire training dataset.

We set the initial learning rate to 0.01, momentum to 0.937, weight decay to 0.0005, image input size to 640 pixels × 640 pixels, batch size to 8, training rounds to 200, and IoU threshold to 0.5. Table 3 shows the specific configuration.

Test environment setting.

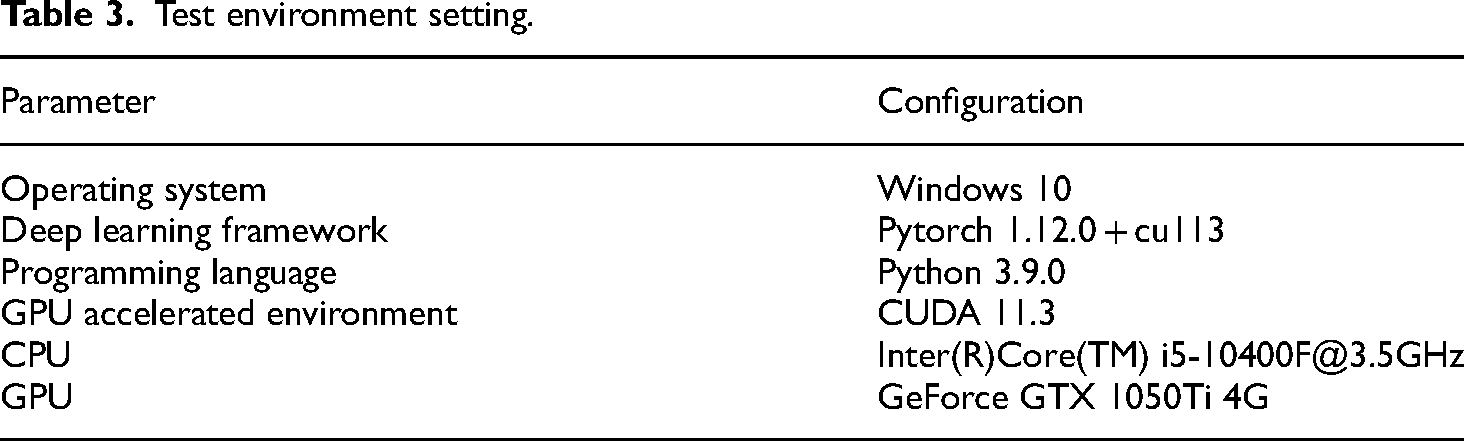
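To show how the learning rate, momentum, and weight decay interact in a single update, here is a minimal sketch of one stochastic gradient descent step using the values reported above. It assumes the PyTorch-style formulation, in which weight decay is added to the gradient and the velocity is a running sum of past gradients; the function name `sgd_step` and the toy weight and gradient are hypothetical.

```python
def sgd_step(weights, grads, velocity,
             lr=0.01, momentum=0.937, weight_decay=0.0005):
    """One SGD update with momentum and L2 weight decay on plain lists."""
    new_weights, new_velocity = [], []
    for w, g, v in zip(weights, grads, velocity):
        g = g + weight_decay * w   # gradient of the squared-weight penalty
        v = momentum * v + g       # velocity accumulates past gradients
        new_velocity.append(v)
        new_weights.append(w - lr * v)  # step size is set by the learning rate
    return new_weights, new_velocity


w, v = [1.0], [0.0]
for step in range(3):
    w, v = sgd_step(w, grads=[0.5], velocity=v)
    print(step, w, v)
```

With a constant gradient the velocity grows from step to step, which illustrates why momentum speeds up progress along a consistent descent direction.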

Experimental process

The data set was divided into a training set, a test set, and a validation set at a ratio of 8:1:1. The training set was used to train the enhanced YOLOv5 network, with the stochastic gradient descent algorithm refining and optimizing the network model during training. Once training was completed, the optimal weights were obtained. The test set was then used to evaluate the performance of the refined network model, which was benchmarked against the original YOLOv5 model and other competing models. Finally, the network model with the best results was chosen as the definitive model for the physiological recognition of small silkworms. The experimental flow chart is shown in Figure 7.

Experimental flowchart.
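The 8:1:1 division can be sketched as a seeded shuffle followed by slicing. The sketch assumes that the expanded set of 1672 pictures is what gets split and that any remainder from integer division goes to the validation set; the file names and the function name `split_dataset` are hypothetical.

```python
import random


def split_dataset(items, ratios=(8, 1, 1), seed=0):
    """Shuffle `items` reproducibly and split them into train/test/validation."""
    items = list(items)
    random.Random(seed).shuffle(items)
    total = sum(ratios)
    n_train = len(items) * ratios[0] // total
    n_test = len(items) * ratios[1] // total
    train = items[:n_train]
    test = items[n_train:n_train + n_test]
    val = items[n_train + n_test:]  # the remainder goes to validation
    return train, test, val


# Hypothetical file names standing in for the 1672 augmented pictures.
files = [f"silkworm_{i:04d}.jpg" for i in range(1672)]
train, test, val = split_dataset(files)
print(len(train), len(test), len(val))  # 1337 167 168
```

In practice the split would usually be stratified by instar, and augmented copies of one original image would be kept in the same subset to avoid leakage between training and test data.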

Experimental results and analysis

Evaluation index

To evaluate the performance of the improved YOLOv5 model, it is important to use appropriate evaluation indicators. In this study, P (Precision), R (Recall), and AP (Average Precision) were used to evaluate the model's performance in recognizing the physiological state of small silkworms and to compare it with other models. Precision is the proportion of predicted positive examples that are real positives, and therefore reflects the false detection rate of the model. Recall is the proportion of real positive examples that are predicted as positive, and therefore reflects the missed detection rate of the model. AP is the main index for evaluating the detection performance of the model. The P, R, and AP formulas are as follows:

$$P = \frac{TP}{TP + FP}$$

$$R = \frac{TP}{TP + FN}$$

$$AP = \int_0^1 P(R)\,dR \tag{7}$$

Here, $TP$ is the number of correctly detected targets, $FP$ is the number of false detections, and $FN$ is the number of missed targets.
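The three indicators can be computed from detection counts and a confidence-ranked list of detections. The sketch below uses the usual definitions based on true positives, false positives, and false negatives, and approximates AP as the area under the precision-recall curve after making precision non-increasing. This all-point interpolation is one common convention and is assumed here; it is not necessarily the one used by the YOLOv5 evaluation code, and the function names are hypothetical.

```python
def precision_recall(tp, fp, fn):
    """Precision = TP / (TP + FP); recall = TP / (TP + FN)."""
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall


def average_precision(hits, num_ground_truth):
    """Area under the P-R curve.

    `hits` lists, for detections sorted by descending confidence, whether
    each detection matched a ground-truth object (True) or not (False).
    """
    tp = fp = 0
    recalls, precisions = [], []
    for hit in hits:
        if hit:
            tp += 1
        else:
            fp += 1
        recalls.append(tp / num_ground_truth)
        precisions.append(tp / (tp + fp))
    # Make precision non-increasing from right to left (the P-R envelope).
    for i in range(len(precisions) - 2, -1, -1):
        precisions[i] = max(precisions[i], precisions[i + 1])
    # Sum rectangle areas between consecutive recall values.
    ap, previous_recall = 0.0, 0.0
    for r, p in zip(recalls, precisions):
        ap += (r - previous_recall) * p
        previous_recall = r
    return ap


print(precision_recall(tp=8, fp=2, fn=2))            # (0.8, 0.8)
print(average_precision([True, False, True], 2))     # about 0.833
```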

The formula for calculating the number of parameters is shown in formula (9):

Ablation study

To verify the influence of the Focal-EIoU and C3-SE modules on the recognition algorithm for the physiological state of small silkworms, a series of ablation studies was conducted with four configurations: (1) the original YOLOv5 model; (A) the original YOLOv5 model + C3-SE; (B) the original YOLOv5 model + Focal-EIoU; and (Ours) the original YOLOv5 model + Focal-EIoU + C3-SE. The model performance is analyzed and compared in Table 4.

Ablation experiment.

As shown in Table 4, the YOLOv5 model integrated with the C3-SE module learns each image feature according to the loss function, increases the weight of effective image features, reduces the weight of invalid image features, and thereby produces better results. Compared with the original model, the recall rate increased by 0.5%, the average precision increased by 2.6%, and frames per second (FPS) increased by 7.7%, but precision decreased by 1.8%.

Replacing CIoU with Focal-EIoU as the loss function of YOLOv5 effectively improves the accuracy and robustness of the trained target recognition model. Compared with the original model, precision, recall rate, and average precision improved by 1.4%, 1.1%, and 0.8%, respectively.

Finally, compared with the original model, the YOLOv5 model that integrates the C3-SE module and adopts Focal-EIoU improved precision by 0.3%, recall rate by 3.4%, and average precision by 2.9%. Frames per second increased by 7.7% due to a reduction in the number of parameters. Since precision reflects the false detection rate of the model and recall reflects the missed detection rate, the combined model slightly reduces false detections and markedly reduces missed detections while running faster.

Table 5 shows the recognition results of the improved model for different silkworm instars. The precision and recall rate for first instar silkworms are higher than for the other instars. This is because first instar silkworms are black, which differs markedly from the appearance of the other instars and makes them easier to recognize. The recognition accuracy for second and third instar silkworms is lower than for first instar silkworms, but the model can still distinguish them.

Identification results for different categories.

Center point coordinate experiment

In practice, the same batch of small silkworms enters the dormant state at the same time, so we verify whether the small silkworms have entered the dormant state from the output center point coordinates. By testing 30 groups of dormant pictures with a time interval of 3 s, it is found that the average maximum difference between

The center point coordinate test example diagram. (a) Dormant period test group, first photo, (b) dormant period test group, 3 s later, (c) feeding period test group, first photo, and (d) feeding period test group, 3 s later.

(a) and (b) are the test case diagrams of the dormant period, and the output center point coordinates are (2141.5, 2577.0); (c) and (d) are the test case diagrams of the feeding period, (c) the output center point coordinates are (1513.5, 2364.5) and (2019.5, 2654.5), (d) the output center point coordinates are (1503.0, 2370.5) and (2000.0, 2595.0). The maximum difference between the

Comparison of experimental results before and after model improvement

Figure 9 compares the P-R curves of the improved model and the original model. The P-R curve is a graphical tool for evaluating model performance that plots two important indicators against each other: Precision and Recall. According to formula (7), AP is the integral of the P-R curve, and AP is the main index for evaluating the detection performance of the model. It can be seen from Figure 9 that the mAP of the improved model is 2.9% higher than that of the original model.

Comparison of P-R curves before and after model improvement. (a) Before improvement, (b) after improvement.

At the same time, the detection performance of the improved model on small targets and partially occluded targets is also improved. Figure 10 shows the test results for two randomly selected pictures of small silkworms. After the improvement, the confidence is slightly higher than before and missed detections are much rarer, although some missed detections remain.

Comparison of test results before and after improvement. (a) Before improvement, (b) after improvement, (c) before improvement, (d) after improvement.

Conclusions

A method based on improved YOLOv5 model is proposed. The aim is to identify the physiological state of small silkworm accurately and quickly. The research contents of this paper are summarized as follows:

Aiming at the recognition of the physiological state of small silkworms, a machine vision method is proposed that visualizes human experience, monitors the physiological state of silkworms in real time for workers, and forms an objective evaluation system. The cornerstone of a physiological state recognition model for small silkworms is the accuracy and speed of recognition. To enhance accuracy, the C3-SE attention mechanism is integrated, enabling the network model to learn from each image feature based on the loss function. This approach increases the weight of effective image features while reducing the weight of ineffective or redundant ones, thus optimizing the model's performance. Additionally, rather than utilizing CIoU, we adopt Focal-EIoU, as it aligns well with the YOLO algorithm; modifying the loss function of YOLOv5 in this way improves both the accuracy and robustness of the trained target recognition model. In terms of recognition speed, we minimize the model's parameters while considering factors such as computational power and storage capacity. Consequently, we propose a lightweight YOLOv5-based model specifically designed for identifying the physiological state of small silkworms. According to the results of the ablation experiment, the P of the improved model is 89.9%, the R is 86.5%, the AP is 92.2%, and the detection frame rate is 59.8 FPS. Compared with the original model, precision increased by 0.3%, recall rate by 3.4%, average precision by 2.9%, and FPS by 7.7%, which effectively improves the recognition of the physiological state of small silkworms.

Footnotes

Acknowledgements

The authors would like to thank the anonymous reviewers for their critical comments and suggestions for improving the manuscript.

Author contributions

Conceptualization, P.L. and K.Z.; methodology, P.L.; software, P.L.; validation, P.L., X.H., and K.Z.; resources, W.L. and B.H.; writing—original draft, P.L. All authors have read and agreed to the published version of the manuscript.

Data availability statement

Data or code presented in this study is available on request from the corresponding author.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This paper is supported by the 2024 Agricultural Innovation Capacity Building Project of Yibin City (2024NYCX013) and the Energy Conservation and Environmental Protection Equipment Innovation Team (SUSE652A010) in the “652” scientific research and innovation team of Sichuan Light Chemical Engineering University.