Abstract

Objective

The purpose of the present study was to verify the diagnostic performance of an AI system for the automatic detection of teeth, caries, implants, restorations, and fixed prostheses on panoramic radiographs.

Methods

This is a cross-sectional study. A dataset comprising 1000 panoramic radiographs collected from 500 adult patients was analyzed by an AI system and compared with annotations provided by two oral and maxillofacial radiologists.

Results

A strong correlation (R > 0.5) was observed between AI perception and observers 1 and 2 in carious teeth (0.691–0.878), implants (0.770–0.952), restored teeth (0.773–0.834), teeth with fixed prostheses (0.972–0.980), and missing teeth (0.956–0.988).

Discussion

Panoramic radiographs are commonly used for diagnosis and treatment planning. However, they often suffer from artifacts, distortions, and superimpositions, leading to potential misinterpretations. Thus, an automated detection system is required to tackle these challenges. Artificial intelligence (AI) has revolutionized various fields, including dentistry, by enabling the development of intelligent systems that can assist in complex tasks such as diagnosis and treatment planning.

Conclusion

The automatic detection by the AI system was comparable to that of oral radiologists and may be useful for automatic identification of findings in panoramic radiographs. These findings indicate the potential for AI systems to enhance diagnostic accuracy and efficiency in dental practices, potentially reducing the likelihood of diagnostic errors made by inexperienced professionals.

Introduction

Artificial Intelligence (AI) is the branch of computer science that deals with designing computer systems that can imitate intelligent human behavior to perform complex tasks, such as problem-solving, decision-making, human behavior understanding, and reasoning, among others.1,2 Ever since the conception of the term by John McCarthy in 1956, AI has been widely utilized in numerous fields including agriculture, automotive, industry, and medicine.3–5 AI has tremendous potential in the field of medicine, ranging from automatic disease diagnosis to the use of intelligent systems for assisted surgery.6–9 Machine learning (ML) is a branch of AI in which a computer model identifies patterns in a dataset, learns from them, and makes predictions without explicit human instructions, with the aim of designing a system capable of automated learning.10–13 Traditional ML techniques relied on features that required human intervention, making them more error-prone and time-consuming.3,11

To overcome this drawback, a more autonomous multilayered neural network system called ‘deep learning’ (DL) was developed.3,11,13–15 DL is a multilayered system that can detect hierarchical features such as lines, edges, textures, complex shapes, or even lesions and whole organs within a structure.16 It attempts to predict outcomes by restructuring unlabeled and unstructured multilevel data.13 DL is composed of numerous interconnected and sequentially stacked processing units called neurons, which collectively form artificial neural networks (ANNs).3,11 An ANN comprises an input layer, an output layer, and multiple hidden layers in between.11,17 Such ANNs possess remarkable information processing, learning, and generalization capabilities inspired by the analytical processes of the human brain.13,16 The involvement of numerous neurons in the network makes an ANN better able to solve complex real-world problems than conventional ML techniques.18

The convolutional neural network (CNN), the most widely used subclass of ANN, is a special network architecture that uses a mathematical operation called convolution to process digital signals such as sound, images, and videos.11 CNNs are primarily used for processing large and complex signals due to their ability to recognize and classify broader digital signals.11 To process such wider signals, they use a sliding window to scan and analyze from left to right and top to bottom.11 CNNs can be employed for automated feature detection from two-dimensional (2D) and three-dimensional (3D) images.19,20 This involves the automated detection, segmentation, and classification of complex patterns in an image.20
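As an illustration only, the sliding-window scan described above can be sketched in a few lines of Python. This is a minimal example under stated assumptions and not the architecture of any particular system: the function name `conv2d_valid`, the toy 4 × 4 image, and the 2 × 2 kernel are all hypothetical, and the kernel is applied without flipping (cross-correlation), as is common in deep learning libraries.

```python
def conv2d_valid(image, kernel):
    """Slide `kernel` over `image` left to right, top to bottom.

    No padding and a stride of 1 are assumed. The kernel is not flipped,
    so strictly speaking this is cross-correlation.
    """
    img_h, img_w = len(image), len(image[0])
    ker_h, ker_w = len(kernel), len(kernel[0])
    feature_map = []
    for top in range(img_h - ker_h + 1):          # top to bottom
        row = []
        for left in range(img_w - ker_w + 1):     # left to right
            acc = 0
            for i in range(ker_h):
                for j in range(ker_w):
                    acc += image[top + i][left + j] * kernel[i][j]
            row.append(acc)
        feature_map.append(row)
    return feature_map


# Hypothetical toy image: dark left half (0), bright right half (1).
image = [
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
]

# Hypothetical kernel that responds to a dark-to-bright vertical edge.
kernel = [
    [-1, 1],
    [-1, 1],
]

feature_map = conv2d_valid(image, kernel)
for row in feature_map:
    print(row)
```

In this toy case the response is non-zero only in the middle column of the output, where the window straddles the edge. In a trained CNN the kernel values are learned from data rather than set by hand.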

Radiographic examination is an integral part of the diagnosis, management, and treatment planning of most dental diseases.15,21,22 A panoramic radiograph, a low-dose and cost-effective imaging modality, is routinely used in dental practices due to its ability to portray all dentoalveolar structures together.15 It can assist dentists in diagnosing dental pathologies, lesions, anomalies, and fractures of the maxillofacial structures, as well as planning restorative and prosthetic rehabilitation treatment.21 However, panoramic radiograph images may sometimes be affected by enlargement, geometric distortions, unequal magnification, and multiple superimpositions, which could lead to misinterpretation and misdiagnosis.21,23 Hence, an automatic detection system for evaluating panoramic radiographs is needed to overcome these challenges.

Applications of AI in dentistry span across various specialties, including radiology, endodontics, periodontics, oral and maxillofacial surgery, and orthodontics. AI has demonstrated significant potential in dental disease diagnosis, treatment planning, and reducing errors in dental practice.13,14,16,22,24,25 Previously, it has shown promising results in numerous areas, including the detection of dental caries, identification of vertical root fractures (VRFs), diagnosis and classification of periodontal disease types, classification of malocclusion, automatic identification of cephalometric landmarks, as well as assistance in treatment planning.

The purpose of the current study is to assess the diagnostic performance of VELMENI Inc., an AI system that uses a convolutional neural network (CNN)-based architecture, for automatically detecting teeth, caries, implants, restorations, and fixed prostheses on panoramic radiographs.

Materials and methods

Radiographic dataset

Panoramic radiographs were randomly selected from the EPIC (an electronic medical record system used mostly in hospitals) and MiPacs systems of the Department of Oral and Maxillofacial Radiology at the University of Mississippi Medical Center, from June 2022 to May 2023. A total of 1000 anonymized dental panoramic radiographs of 500 individuals aged 18 years or older were used to identify teeth, caries, implants, restorations, and fixed prostheses. Most patients identified themselves as Caucasian. The dataset comprised only panoramic radiographs acquired with exposure parameters as low as reasonably achievable and judged diagnostically acceptable. Panoramic radiographs with artifacts caused by patient positioning, motion, or superimposition of foreign objects were excluded. The research protocol was approved by the IRB (2023–177). Panoramic radiographs were obtained using the Planmeca ProMax (Helsinki, Finland) with parameters of 66 kVp, 8 mA, and 15.8 s. The reporting of this cross-sectional study conforms to STROBE guidelines.28

Image annotation

To minimize personal bias, teeth, caries, implants, restorations (including amalgam and composites), and fixed prostheses were identified independently by two oral and maxillofacial radiologists, each with a minimum of 5 years of experience. Each of the 1000 anonymized dental panoramic radiographs was analyzed, and the findings were recorded in an Excel® spreadsheet: the number of teeth with caries (including all types such as enamel, dentin, secondary, radicular, etc.), the number of implants, the number of teeth with fillings (including amalgam, composite, etc.), the number of teeth with fixed dental prostheses (FDPs), and the number of missing teeth.

Furthermore, the convolutional neural network (CNN)-based architecture was analyzed for the detection of the number of teeth with caries, fillings, FDPs, and the number of implants on the same panoramic radiographs. The artificial intelligence (AI) system used for analysis was VELMENI Inc., based in CA, USA. A dark filled circle was used to indicate agreement on the labeling of the above dental findings between the AI system and observer 1, while a red empty circle was used for agreement on labeling between the AI system and observer 2.

Statistical analysis

Pearson's product-moment correlation coefficient was used to compare the AI-detected dental findings with the observations of observers 1 and 2.
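For readers who wish to reproduce this kind of comparison, a minimal sketch of the Pearson product-moment coefficient is given below. The function name `pearson_r` and the per-radiograph counts are hypothetical illustrations, not data or code from this study.

```python
import math


def pearson_r(x, y):
    """Pearson product-moment correlation between two equal-length lists."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("x and y must have the same length (at least 2)")
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    # Sum of cross-products and sums of squares of the deviations.
    cross = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    ss_x = sum((a - mean_x) ** 2 for a in x)
    ss_y = sum((b - mean_y) ** 2 for b in y)
    if ss_x == 0 or ss_y == 0:
        raise ValueError("correlation is undefined when one variable is constant")
    return cross / math.sqrt(ss_x * ss_y)


# Hypothetical counts of carious teeth on five radiographs (not study data).
ai_counts = [0, 2, 1, 4, 3]
observer_counts = [0, 1, 1, 5, 3]

r = pearson_r(ai_counts, observer_counts)
print(f"R = {r:.3f}")
```

The coefficient lies between -1 and 1. Under the criterion used in this study, a value above 0.5 would be described as a strong correlation.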

Results

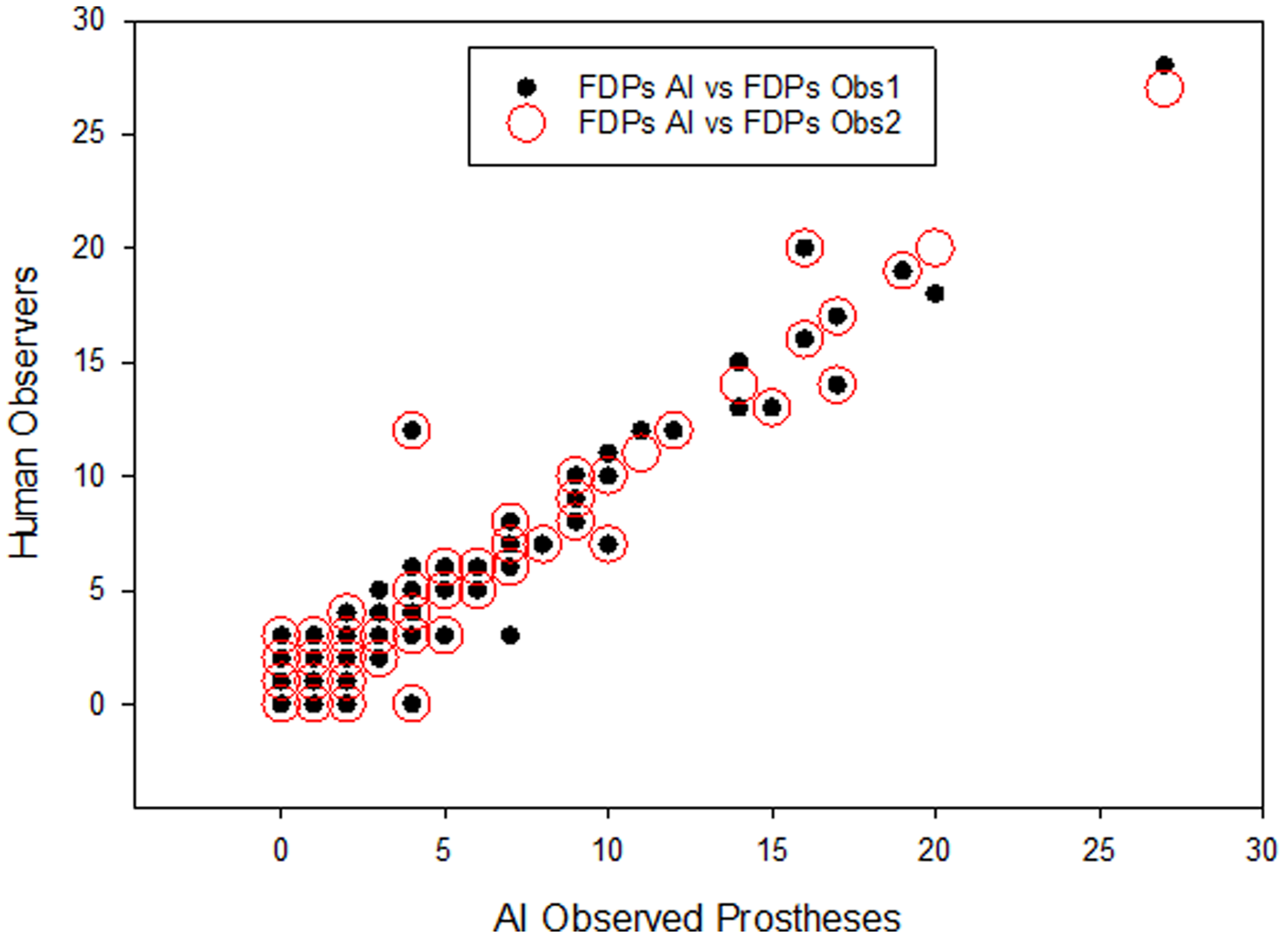

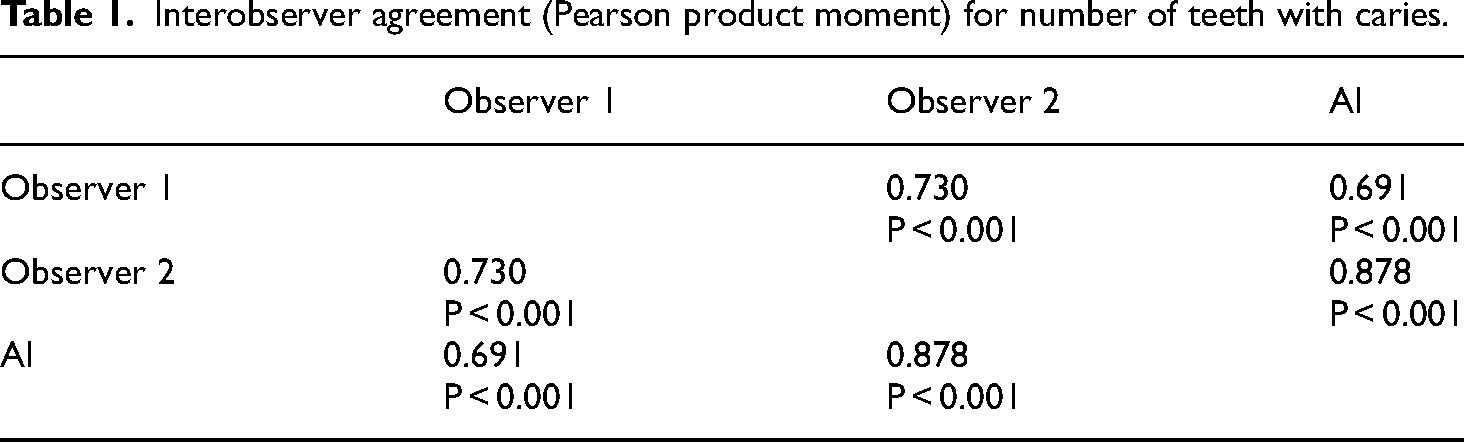

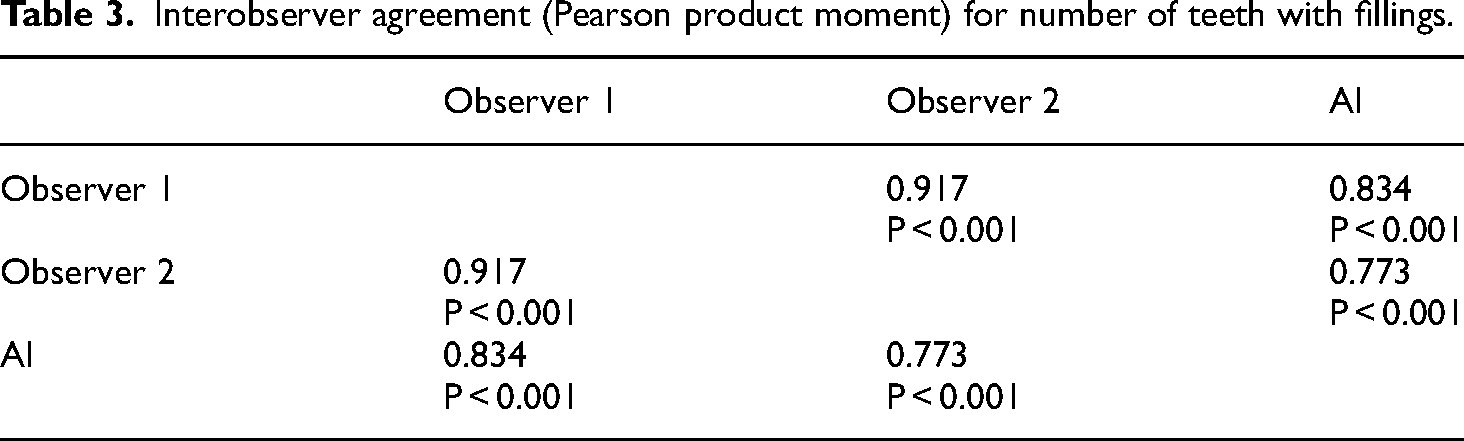

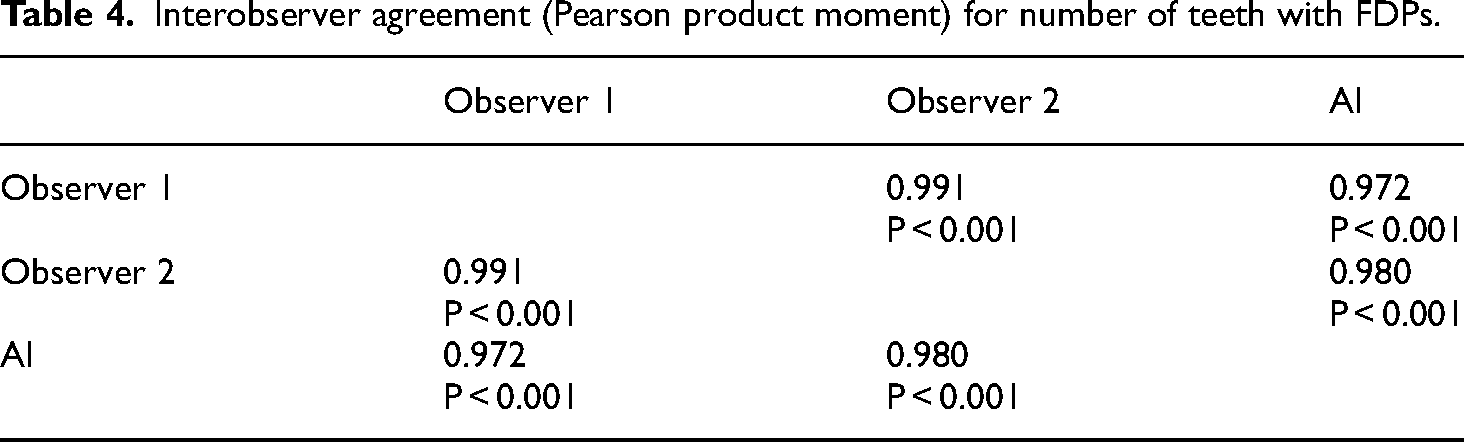

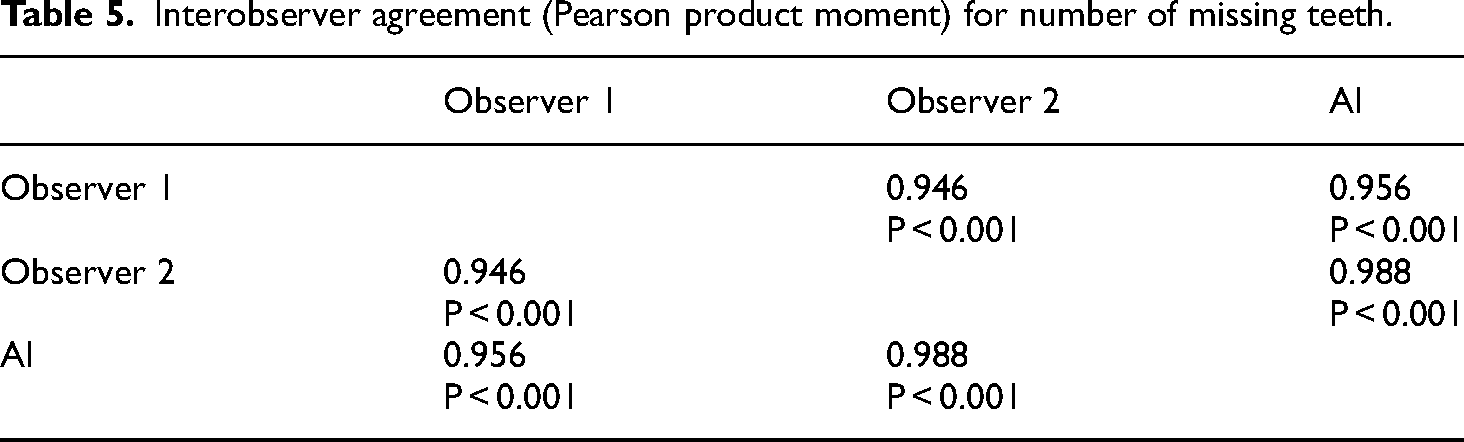

The Pearson product-moment correlation showed a strong correlation (R > 0.5) between the perception of the AI and the perceptions of Observer 1 and Observer 2 for all structures identified on the panoramic radiographs. For the number of teeth with caries, the correlation with the AI was 0.691–0.878 (Table 1 and Figure 1). For the number of implants, it was 0.770–0.952 (Table 2 and Figure 2). For the number of teeth with fillings, it was 0.773–0.834 (Table 3 and Figure 3). For the number of teeth with fixed prostheses, it was 0.972–0.980 (Table 4 and Figure 4), and for the number of missing teeth, it was 0.956–0.988 (Table 5 and Figure 5).
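The comparison against the R > 0.5 threshold can be checked directly from the ranges reported above. The sketch below is illustrative only: the dictionary name `reported_r` and the helper `is_strong` are hypothetical, while the numeric ranges are copied from this section.

```python
STRONG_THRESHOLD = 0.5  # criterion stated in this study: R > 0.5

# (lowest, highest) correlation between the AI and the two observers,
# as reported in the Results text.
reported_r = {
    "teeth with caries": (0.691, 0.878),
    "implants": (0.770, 0.952),
    "teeth with fillings": (0.773, 0.834),
    "teeth with fixed prostheses": (0.972, 0.980),
    "missing teeth": (0.956, 0.988),
}


def is_strong(r_range, threshold=STRONG_THRESHOLD):
    """True when even the lower end of the range exceeds the threshold."""
    return min(r_range) > threshold


summary = {finding: is_strong(r) for finding, r in reported_r.items()}
lowest_finding = min(reported_r, key=lambda finding: min(reported_r[finding]))

for finding, strong in summary.items():
    low, high = reported_r[finding]
    print(f"{finding}: {low:.3f} to {high:.3f}, strong = {strong}")
print("Lowest reported correlation:", lowest_finding)
```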

Figure 1. Interobserver agreement (Pearson product moment) for the number of teeth with caries, showing strong correlation between the perception of the AI and perceptions of observer 1 and observer 2.

Figure 2. Interobserver agreement (Pearson product moment) for the number of implants, showing strong correlation between the perception of the AI and perceptions of observer 1 and observer 2.

Figure 3. Interobserver agreement (Pearson product moment) for the number of teeth with fillings, showing strong correlation between the perception of the AI and perceptions of observer 1 and observer 2.

Figure 4. Interobserver agreement (Pearson product moment) for the number of teeth with FDPs, showing strong correlation between the perception of the AI and perceptions of observer 1 and observer 2.

Figure 5. Interobserver agreement (Pearson product moment) for the number of missing teeth, showing strong correlation between the perception of the AI and perceptions of observer 1 and observer 2.

Table 1. Interobserver agreement (Pearson product moment) for number of teeth with caries.

Table 2. Interobserver agreement (Pearson product moment) for number of implants.

Table 3. Interobserver agreement (Pearson product moment) for number of teeth with fillings.

Table 4. Interobserver agreement (Pearson product moment) for number of teeth with FDPs.

Table 5. Interobserver agreement (Pearson product moment) for number of missing teeth.

Discussion

Accurate diagnosis is one of the crucial steps in dental practice. Dental radiography, especially panoramic radiography, has therefore become a common and essential tool for assessing patients and planning their treatment. Its widespread use among professionals and acceptance by patients stem from the ability to visualize all orofacial structures in a single image, as well as its simple technique, low radiation dose, low cost, and painlessness.26,27 Despite its benefits, panoramic radiography has some limitations, such as a lack of three-dimensionality, the potential presence of artifacts, and a lack of homogeneity in regions of interest. These limitations may lead to incorrect interpretations by clinicians.28 For these reasons, automatic detection methods that assist dentists and their teams in interpreting panoramic radiographs could contribute substantially to accurate diagnosis and treatment planning.

The purpose of the present study was to verify the diagnostic performance of VELMENI Inc. for the automatic detection of teeth, caries, implants, restorations, and fixed prostheses on panoramic radiographs. The Pearson product-moment correlation analysis showed a strong correlation between the VELMENI artificial intelligence system, based on a CNN architecture, and two human observers in interpreting panoramic radiographs for every finding assessed.

A previous study that used an automated system for tooth detection and numbering found that the CNN's performance was comparable to that of experts, potentially aiding the completion of documentation and saving time for professionals.29 For accurate tooth identification and numbering, it is important that the AI model be trained on a large dataset.30 In the present study, the number of missing teeth yielded one of the best results, with a strong positive correlation between the AI and both human observers.

The use of convolutional neural networks (CNNs) for identifying dental implants is a highly researched topic in the field of AI applied to implantology. Numerous studies have presented promising outcomes in the identification of dental implants in panoramic and periapical radiographs.31–35 These findings align with the results of our study, in which a strong correlation was observed between the AI and the two human observers. Given the vast array of implant brands and types found worldwide, these results can assist dentists who need to identify an implant in order to continue prosthetic treatment or to replace past treatment when the history of the prior treatment is not accessible.31

CNNs are AI models particularly well-suited for image classification and, consequently, are widely used in caries identification.36 Early diagnosis of carious lesions poses a challenge due to low sensitivity and potential examiner disagreement.36–38 However, studies demonstrate that CNNs offer good accuracy in caries diagnosis.38–40 A literature review36 reported that the accuracy of AI models in caries detection ranges between 83.6% and 97.1%. Furthermore, AI assistance for dentists in detecting caries confined to enamel significantly increased detection, from 44.3% to 75.8%. The present study also found a strong correlation in caries detection between the AI and the two human observers, with Pearson correlation coefficients of R = 0.691 with Observer 1 and R = 0.878 with Observer 2.

Previously, a study employed the U-Net architecture for automatic segmentation of amalgam and composite resin restorations in panoramic images, achieving highly accurate detection results.41 In contrast, another study utilized a CNN and obtained good results in detecting metallic restorations, with a sensitivity of 85.48%, while the sensitivity in detecting composite resin restorations was 41.11%.27 The present study found a strong correlation between the CNN-based system and the two human observers, with correlation coefficients ranging from R = 0.773 to R = 0.834. These findings support the contribution of AI to the detection of dental restorations and add to the existing knowledge in the field.

The detection of the number of teeth with fixed dental prostheses (FDPs) yielded the best results, along with the identification of the number of missing teeth mentioned earlier. In our study, strong correlations were found between the AI and observers 1 and 2 (R = 0.972 and R = 0.980, respectively). Previous studies have utilized CNNs for the detection of fixed dental prostheses and full crowns, achieving remarkable precision and efficiency.42,43 The findings from these studies further emphasize the potential of CNNs in dental prosthesis detection, with accuracy and efficiency comparable to or even surpassing those of dentists with 3 to 10 years of experience.

Although the current study showed promising results in identifying common conditions in panoramic radiographs, it is essential to recognize its limitations. The dataset was restricted and lacked external data, potentially limiting the generalizability of the results. Our patient population was drawn exclusively from the state of Mississippi, meaning that our findings may not be generalizable to other regions. Variations in demographics, socio-economic status, and healthcare access could affect the applicability of the results to broader populations. To address these limitations, future studies should consider employing larger and more diverse datasets. This approach could offer further insights and enhance the validity of the findings presented in this study.

Conclusion

The findings of the present study emphasize that AI systems based on deep learning methods may be useful for the automatic detection of teeth, caries, implants, restorations, and fixed prostheses on panoramic images for clinical applications, thereby helping to improve efficiency. Furthermore, machine learning in dentistry might utilize fundamental dental radiography to complement further clinical examinations and the training of future dental practitioners.

Lastly, the strong correlation between the VELMENI Inc. AI system's detections and radiologists’ annotations highlights the clinical relevance of AI in dental diagnostics. This reliability indicates that AI may effectively assist in identifying dental conditions, potentially increasing diagnostic accuracy, reducing human error, and enhancing treatment planning. Integrating AI into clinical practice might improve efficiency, support dental professionals, and lead to better patient outcomes.

Footnotes

Acknowledgements

We thank the University of Mississippi Medical Center School of Dentistry Division of Oral and Maxillofacial Radiology Clinic.

Authors' contributions

R.J., A.S., and P.N. designed the study and performed data analysis, annotation, and coordination. T.V. collected data. A.P., P.T., and P.J. performed the literature review. J.G. performed the statistical analysis. A.P., P.T., and M.R. wrote the manuscript. A.S., M.S., and R.J. edited the draft of the manuscript. R.J., M.F., and A.F. gave final approval of the manuscript. MBG edited the draft of the manuscript. All authors read and approved the final manuscript.

Data availability statement

Data may be available upon request.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Ethics approval

The University of Mississippi Medical Center Institutional Review Committee gave ethical approval (IRB approval number: IRB-2023–177).

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.