Abstract

In this study, we introduce a method for estimating the position of a self-driving solar panel-cleaning mobile robot. The estimate relies on counts of the grid lines on the panel surface, which are typically spaced 16 cm apart and are detected through image processing, together with wheel encoder information and inertial sensor data. To achieve accurate line counts, we introduce two threshold values and offset them according to the robot's speed. Additionally, inertial measurement unit (IMU) signals help determine whether a detected line is horizontal or vertical, depending on the robot's movement direction on the panel, using the robot's heading angle and the detected line angle. When the robot is positioned between lines on the panel, simple line counts are not sufficient for precise localization. To address this, we fuse IMU data and encoder information with an extended Kalman filter, which significantly improves position estimation. This integration achieves an RMSE of up to 0.089 m, notably at a relatively high speed of 100 mm/s. This margin of error is almost half that of the vision-based line-counting method.

Introduction

According to the International Energy Agency (IEA) energy statistics, the renewable energy sector has experienced significant growth since the 2000s, notably led by solar power within the renewable energy market.1 This expansion in solar power generation has consequently increased the need for maintenance and repair of solar panels, directly impacting their efficiency in power generation. To address this, solar panel-cleaning robots have been developed to handle tasks that traditionally relied on human labor. Accurate recognition of the robot's location is crucial for effectively managing its autonomous operations in cleaning solar panels.2

Currently, solar panel cleaning robots can be broadly categorized into two types based on their operational methods. The more common type is the manual operation cleaning robots.3,4 These robots necessitate users to visually inspect and regulate the cleaning range and the robot's driving speed during the cleaning process. Consequently, these robots possess simple mechanisms and do not require additional devices. However, they rely on constant user supervision, and the thoroughness of cleaning relies on the user's expertise. Additionally, the operational stability of the robot, such as the risk of it falling, is determined by the user's skill.

The second type is autonomous cleaning robots. These robots maneuver on the panels independently and conduct cleaning operations without requiring constant user intervention post-initial setup. Equipped with multiple sensors, they follow predetermined paths to clean the panels. Automatic drive cleaning robots come in two variations. One involves robots that continuously clean and traverse the entire panel after installing rails above and below the panels.5–8 The other variation comprises mobile robots that autonomously navigate along predefined paths to clean the panels.9–11

Rail-based robots offer the advantage of swiftly cleaning entire panels but come with the drawback of moving at a fixed speed, potentially leading to insufficient cleaning in heavily soiled areas. Conversely, mobile autonomous robots adhere to predetermined rules, traversing the panel surface while calculating displacement to estimate their position and generating navigation paths to avoid re-cleaning areas. However, on sloped panels, accurate displacement calculation and precise movement path determination become challenging, hindering the creation of optimal cleaning paths.

This challenge prompted the development of algorithms for robot localization. Functions to estimate position, implemented using camera images, wheel encoders, and inertial sensors, are integrated into localization algorithms. Previous studies have employed wheel encoders for position estimation, merging inertial sensor or image data to determine the current position.12–18 Research also explores indoor localization using Wi-Fi,19–22 ultra-wideband (UWB) communication modules,23–27 global positioning system (GPS),28–30 and real-time kinematic (RTK) GPS.31,32

The limitations of conventional GPS, with errors within several meters, and RTK GPS, which introduces error variance based on distance from the reference station, pose challenges for precise robot localization, particularly on inclined solar panels. High-performance RTK GPS units, promising less than 10 cm error, are bulky, heavy, and often expensive, making them impractical for mobile robots maneuvering on inclined panels. Other positioning methods, excluding RTK GPS, exhibit errors in the order of meters, a significant concern given the size of solar panels.

Inaccurate position estimation during path planning for cleaning can lead to duplicated cleaning or missed areas on the panel. The tilted installation of panels aimed at enhancing solar power efficiency further complicates matters, potentially causing slippage for the robot, which in turn introduces errors in position estimation. Existing sensor fusion methods relying on wheel encoders struggle to offer precise measurements, leading to substantial errors in generated cleaning paths. To address these challenges and ensure comprehensive and accurate cleaning paths for solar panels, there is a pressing need to develop technology that enables precise position estimation, especially when robots navigate inclined surfaces and encounter slippage.

This study introduces a unique approach by leveraging a standard camera, wheel encoder, and IMU sensor data. The robot identifies perpendicular straight lines in both horizontal and vertical directions, mirroring the common pattern found on solar panels. Using IMU sensor data, the robot distinguishes between vertical and horizontal lines, tracks these lines as it moves, and estimates its position on the panel by counting the lines passed. Fusing the robot's posture angle from the wheel encoder and inertial sensor data through an extended Kalman filter enhances the accuracy of robot localization on solar panels. This research aims to estimate the location of mobile robots on solar panels using integrated data. To accurately detect panel straight lines, a more precise line count was achieved by setting a double threshold value. Addressing the robot's high speed, line counting was refined by adjusting offset values to accommodate the robot's velocity, even when using a relatively low-cost camera.

Determining the robot's heading angle crucially relies on the robot's roll and pitch angles while navigating inclined solar panels. These angles, along with line count data from image processing, are fused into the extended Kalman filter algorithm to precisely estimate the robot's location between each line in both horizontal and vertical directions.

The structure of this work is organized into sections, starting with the second section covering image processing for precise line identification. The third section focuses on determining the robot's heading angle on a tilted panel, followed by the fourth section, which delves into the location recognition algorithm using the extended Kalman filter. Finally, the fifth section encompasses the experimental results obtained from this research.

Image processing

It is crucial to detect the straight lines on the solar panel to estimate the moving robot's location accurately.33 Typically, solar panels feature white straight lines against a dark background, as illustrated in Figure 1. In this study, emphasis is placed on counting the thicker horizontal and vertical lines while the robot is in motion, while disregarding the thinner lines between them. The focus is on accurately identifying and counting these main lines for position estimation, ensuring their clear identification.

Orthogonal line pattern of solar panel.

To achieve this, image processing techniques tailored for line detection are employed. The camera placement and the overall steps of the line detection process are depicted in Figures 2 and 3. This method aims to precisely identify and count the prominent lines critical for estimating the robot's location on the solar panel.

Camera placement on the robot.

Image processes for line detection.

Straight-line detection

The image preprocessing phase prepares the camera-acquired image for straight-line detection. Establishing a region of interest (ROI) reduces processing time and helps isolate a single straight line accurately. Since color information is not critical for the line detection process, the image is converted to grayscale using Eq. (1), where R, G, and B denote the intensities of the color components, and

To suppress noise in the grayscale image, a bilateral filter, as outlined in Eq. (2), is employed, which removes noise while preserving the edge values of the straight line.34

In this equation,

Processed images (a) grayscale, (b) bilateral filter, (c) binary, (d) opening, (e) canny edge.
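The first two preprocessing steps can be illustrated with a minimal sketch. Eqs. (1) and (2) are not reproduced here, so the code below assumes the common luminance weights (0.299, 0.587, 0.114) for the grayscale conversion and the standard bilateral filter form (a spatial Gaussian multiplied by an intensity Gaussian). It operates on a single image row rather than a full frame, and the function names and parameter values are hypothetical.

```python
import math

def to_gray(r, g, b):
    # Assumed luminance weights; the paper's Eq. (1) may use different ones.
    return 0.299 * r + 0.587 * g + 0.114 * b

def bilateral_1d(signal, radius=2, sigma_s=2.0, sigma_r=20.0):
    """Bilateral-filter one row of grayscale intensities (illustrative only)."""
    out = []
    n = len(signal)
    for i in range(n):
        num = 0.0
        den = 0.0
        for j in range(max(0, i - radius), min(n, i + radius + 1)):
            # Spatial weight: nearby pixels count more.
            w_s = math.exp(-((i - j) ** 2) / (2 * sigma_s ** 2))
            # Range weight: pixels with similar intensity count more,
            # which is what keeps the line edge sharp.
            w_r = math.exp(-((signal[i] - signal[j]) ** 2) / (2 * sigma_r ** 2))
            num += w_s * w_r * signal[j]
            den += w_s * w_r
        out.append(num / den)
    return out

# A dark background with small noise, followed by a bright line.
scanline = [20, 22, 18, 21, 200, 198, 202, 199]
filtered = bilateral_1d(scanline)
```

In this example the noise on each side of the edge is averaged out, while the jump between the dark background and the bright line is left essentially intact, because pixels across the edge receive a near-zero range weight.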

The lines on the panel surface were recognized through a camera installed on the underside of the robot body. Mounting the camera there, facing the panel, blocked surrounding light and yielded clearer video images.

From the detected groups of straight-line candidates, the selection process involves choosing two lines perpendicular to each other while excluding other lines that do not meet this perpendicular criterion. This step helps filter out non-perpendicular lines, focusing on identifying the primary lines of interest that are perpendicular to each other—a common pattern found in solar panels.
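The perpendicularity check can be sketched as follows. The sketch assumes each candidate line is already described by its orientation angle in degrees (for example, from a Hough transform); the function name and the 5° tolerance are hypothetical and are not taken from the paper.

```python
def pick_perpendicular_pair(angles_deg, tol_deg=5.0):
    """Return indices (i, j) of the candidate pair closest to 90 degrees apart,
    or None if no pair is within tol_deg of perpendicular."""
    best = None
    best_err = tol_deg
    for i in range(len(angles_deg)):
        for j in range(i + 1, len(angles_deg)):
            diff = abs(angles_deg[i] - angles_deg[j]) % 180.0
            diff = min(diff, 180.0 - diff)  # angle between undirected lines, 0..90
            err = abs(90.0 - diff)
            if err <= best_err:
                best, best_err = (i, j), err
    return best

candidates = [2.0, 47.0, 91.5, 130.0]
pair = pick_perpendicular_pair(candidates)
```

Here the lines at 2.0° and 91.5° are selected, and the remaining candidates are discarded because no other pair is close to perpendicular.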

The robot in this study can move both forward and backward, but the analysis uses only the straight-line images captured while the robot moves in the forward direction. Even when the robot turns, the images of the lines are obtained from the forward-facing perspective. A reference point, the track point, is therefore established, as depicted in Figure 5, so that the straight-line data can be tracked consistently through both forward movement and turns. The track point is set to the horizontal center value of the image, and its moving vertical value can be obtained as in Eqs. (4)–(6). Here,

Track point of a detected line.
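One way to picture the track point is as the intersection of the detected line with the vertical center line of the image. Eqs. (4)–(6) are not reproduced here, so the following is only a sketch under that assumption: the detected line is given by two endpoints in pixel coordinates (u, v), and the function name is hypothetical.

```python
def track_point(p1, p2, image_width):
    """Return (u_c, v_c): the point where the detected line crosses the
    horizontal center of the image, or None for a line vertical in the image."""
    u1, v1 = p1
    u2, v2 = p2
    u_c = image_width / 2.0
    if u1 == u2:
        return None  # the line never crosses the center column at a single point
    # Linear interpolation of v along the line at u = u_c.
    v_c = v1 + (v2 - v1) * (u_c - u1) / (u2 - u1)
    return (u_c, v_c)

tp = track_point((0, 100), (640, 120), image_width=640)
```

Tracking only the v-coordinate of this single point reduces each frame to one scalar, which is what the subsequent line-counting step consumes.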

Straight-line count

Counting the main straight lines as the robot moves, as illustrated in Figure 6, gives the robot's approximate position. Since the typical distance between these main lines on a solar panel is around 16 cm, successful line counting resolves the robot's location in steps of 16 cm. Precise location estimation between these lines is discussed in a subsequent section.

Robot location on a solar panel.

While this approach appears straightforward, straight-line counting can present challenges. Sometimes, line detection might fail, resulting in inaccuracies. Additionally, without prior knowledge of the robot's trajectory, it is unclear whether the robot is counting horizontal or vertical lines as it progresses, which could affect the accuracy of position estimation. These uncertainties highlight the need for robust methods to handle line detection variations and to ascertain the direction of line counting for more reliable location estimation.

When a comparator evaluates an input against a single reference voltage, small changes in the input voltage near the reference may cause unpredictable output fluctuations. A Schmitt trigger addresses this by using two threshold values to ensure a stable output (Figure 7, left). Inspired by this principle, two threshold values are introduced as reference values in the line detection process. These thresholds determine when a detected line is reliably recognized and added to the count. They establish clear criteria for identifying and counting straight lines, making detection robust to noise or fluctuations in the input data.

Schmitt trigger inverter (left) and counting line with double thresholds (right).

Two threshold values are applied according to the direction of the robot's movement. When the robot moves upward in the vertical direction, the v-coordinate of the track point increases, and the higher threshold is used as the reference to mitigate errors caused by noise. Conversely, during downward movement, the lower threshold is used as the reference to prevent counting errors attributed to noise. The right portion of Figure 7 shows how the height of the line track point varies over time and how the line-counting value is updated. As the robot moves vertically, the count increments when the track point surpasses the upper threshold. Notably, even when noise affects the height of the line at 11,400 and 11,800 ms, the count increases by exactly one, demonstrating that the two thresholds keep the line count accurate despite noise fluctuations.
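The double-threshold idea can be sketched as a small hysteresis counter. This is a simplified illustration for upward movement only, not the authors' implementation: the class name, threshold values, and sample sequence are hypothetical, and the counter re-arms only after the track point falls below the lower threshold.

```python
class HysteresisLineCounter:
    """Count a line once when the track point rises above `upper`;
    allow the next count only after it has dropped below `lower`."""

    def __init__(self, lower, upper):
        assert lower < upper
        self.lower = lower
        self.upper = upper
        self.armed = True
        self.count = 0

    def update(self, v):
        if self.armed and v > self.upper:
            self.count += 1
            self.armed = False
        elif not self.armed and v < self.lower:
            self.armed = True
        return self.count

def single_threshold_count(samples, threshold):
    """Naive baseline: count every upward crossing of one threshold."""
    count = 0
    for prev, cur in zip(samples, samples[1:]):
        if prev <= threshold < cur:
            count += 1
    return count

# Two real line passes, each with noise jittering around the upper threshold.
samples = [50, 120, 190, 210, 195, 205, 230, 40, 60, 150, 205, 198, 215]
counter = HysteresisLineCounter(lower=100, upper=200)
for v in samples:
    counter.update(v)
naive = single_threshold_count(samples, 200)
```

With these samples the hysteresis counter reports two lines, whereas the single-threshold baseline reports four because the noisy samples near 200 are each counted as a new crossing.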

Variations in shutter speed or image resolution can prevent straight lines from being detected in specific frames, which affects line counting. An ultra-high-speed camera could mitigate this issue by capturing many more frames per second, but it is a costly solution. Accurate straight-line counting across different robot speeds with a more affordable camera therefore requires precise adjustment of the thresholds.

Correction of threshold value according to robot speed

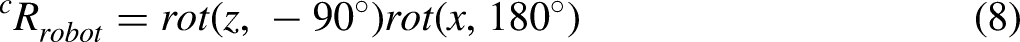

To account for the robot's speed, the threshold value requires adjustment by incorporating an offset value, as depicted on the right side of Figure 7. The correction procedure unfolds as follows: Given the disparity between camera and robot coordinates, Eqs. (7)–(11) are employed to convert the distance the robot traverses during the sampling time into values within the image coordinates (

From the camera Eq. (7), the point in the image coordinates

Coordinates of the robot, the camera, image (z-axis each is omitted).

Thus, the change in the

The robot can also change direction by using the speed difference between the left and right caterpillars. Consequently, an offset value for horizontal movement is introduced in the same way as for vertical movement.

To summarize, determining the width of the offset in image coordinates accounts for the robot's movement speed and is subsequently added to the threshold values. Figure 9 illustrates the result of conducting a straight-line count while applying a correction value to the thresholds, maintaining accurate counting even at high speeds. The upper portion of Figure 9 showcases the vertical track point of the line over time during low-speed movement (50 mm/s). As time progresses, the line's height (vertical track point) increases, indicating the line's descent within the image coordinates as the robot ascends. At low speeds, the detected height data appear clustered. Contrastingly, the lower part of Figure 9 represents the counting outcome at a higher speed (80 mm/s). Here, due to limitations like the camera frame rate, there is a wider gap between data points compared to the lower-speed scenario. Nonetheless, it is evident that effective line counting occurs by appropriately configuring the offset for the threshold value.
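The speed-dependent offset can be approximated with a back-of-the-envelope calculation: the distance the robot covers in one frame period, converted to pixels. Eqs. (7)–(11) are not reproduced here, so this sketch replaces the camera model with a single assumed scale factor `pixels_per_mm`. The frame rate, the scale factor, and the sign with which the offset is applied are all hypothetical.

```python
def pixel_offset_per_frame(speed_mm_s, frame_rate_hz, pixels_per_mm):
    """Image-space distance the line moves between two consecutive frames."""
    return speed_mm_s / frame_rate_hz * pixels_per_mm

def adjusted_thresholds(lower, upper, offset_px, sign=1):
    """Shift both thresholds by the offset; `sign` depends on the image axis
    direction and the movement direction (assumed here, not from the paper)."""
    return (lower + sign * offset_px, upper + sign * offset_px)

# Hypothetical camera: 20 frames per second, 2 pixels per millimetre.
slow = pixel_offset_per_frame(50, 20, 2.0)
fast = pixel_offset_per_frame(80, 20, 2.0)
thresholds = adjusted_thresholds(100, 200, fast)
```

Under these assumed values the line moves 5 pixels per frame at 50 mm/s and 8 pixels per frame at 80 mm/s, which is consistent with the wider gaps between data points reported at the higher speed.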

Line-counting (upper: 50 mm/s, lower: 80 mm/s).

Heading angle determination

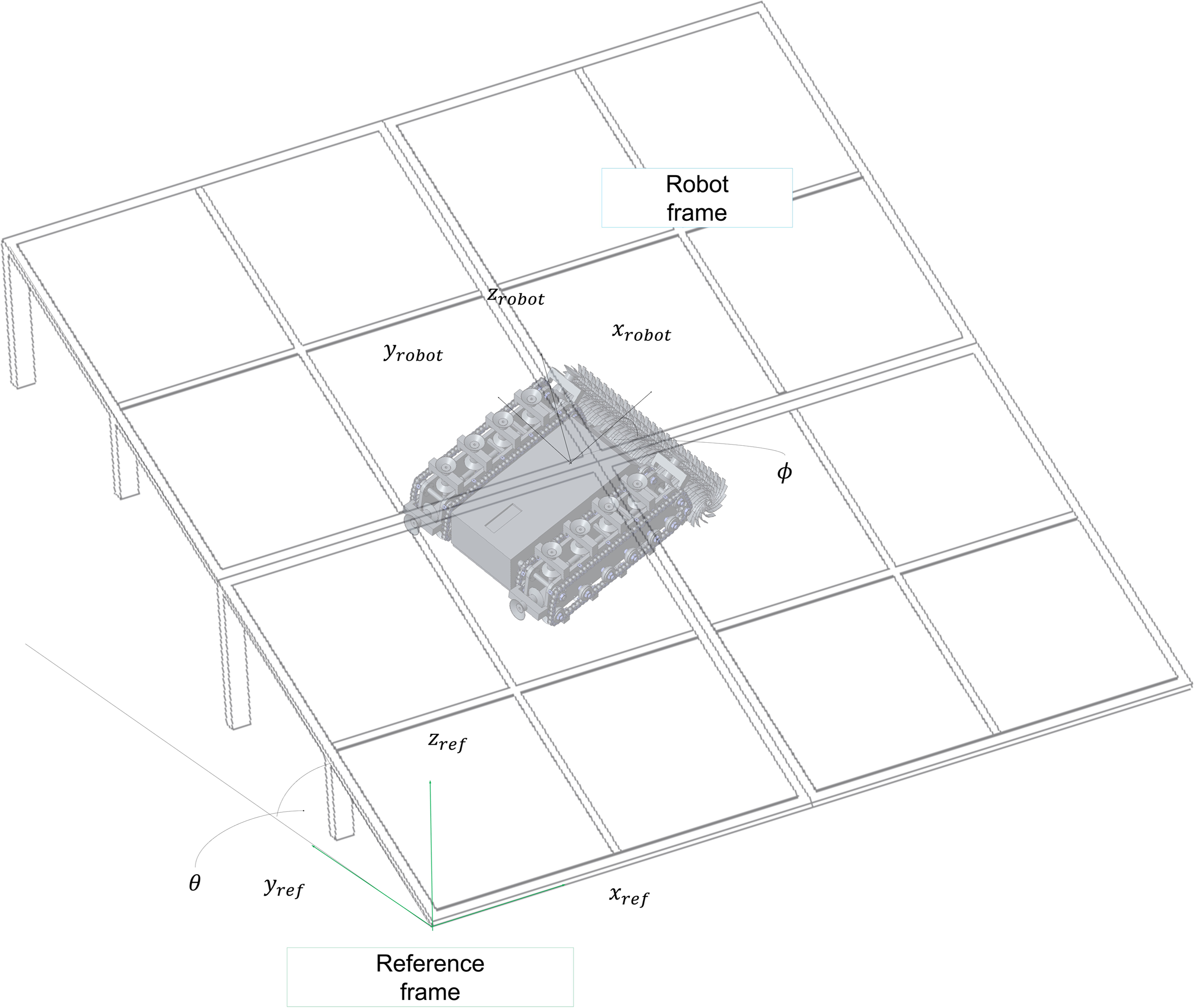

The solar panel, as depicted in Figure 10, is installed at an incline to enhance power generation efficiency. In such an inclined setup, the z-axis of the inertial sensor, positioned on the robot, does not align perfectly with gravity. Consequently, ensuring precise heading angle information, crucial for maintaining the robot's straight movement, becomes challenging. Therefore, an approach to precisely estimate the robot's heading angle on the inclined panel is introduced, utilizing both the panel's tilt angle and the IMU sensor data.

IMU sensor on robot.

Estimation of heading angle

Suppose the solar panel is inclined at an angle of

Robot on tilted solar panel.

By selecting (3,1) and (3,2) elements from two rotational matrices

Hence, the heading angle of the robot on the inclined surface can be derived from the roll and pitch angles measured by the IMU sensor. This calculated heading angle is utilized for the robot to either move straight on the panel or navigate at a predetermined angle.
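A possible form of this relationship is sketched below. Eq. (16) is not reproduced here, so the sketch rests on stated assumptions: the panel is tilted by an angle alpha about the x-axis, the robot is rotated by a heading psi about the panel normal, and the IMU reports roll and pitch in the Z-Y-X Euler convention. Equating the (3,1) and (3,2) elements of the two rotation matrices then gives sin(alpha)sin(psi) = -sin(pitch) and sin(alpha)cos(psi) = cos(pitch)sin(roll). The paper's sign conventions may differ, and the function names are hypothetical.

```python
import math

def heading_from_roll_pitch(roll, pitch):
    """Heading on the inclined panel (rad) under the assumed conventions.
    Undefined on a level surface, where roll and pitch are both zero."""
    return math.atan2(-math.sin(pitch), math.cos(pitch) * math.sin(roll))

def roll_pitch_on_panel(alpha, psi):
    """Forward model used only to check the formula: roll and pitch that an
    ideal IMU would report for panel tilt alpha and heading psi."""
    r31 = math.sin(alpha) * math.sin(psi)
    r32 = math.sin(alpha) * math.cos(psi)
    r33 = math.cos(alpha)
    pitch = -math.asin(r31)
    roll = math.atan2(r32, r33)
    return roll, pitch

roll, pitch = roll_pitch_on_panel(math.radians(30), math.radians(45))
psi = heading_from_roll_pitch(roll, pitch)
```

Note that the heading is recovered without a magnetometer, which is the motivation given in the text, but only because the panel is tilted; as the tilt approaches zero the two quantities passed to `atan2` both vanish and the estimate becomes ill-conditioned.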

Horizontal–vertical discrimination of detected straight lines

The solar panel's horizontal and vertical lines appear visually similar. Despite detecting and counting straight lines through image processing, determining the robot's movement direction—vertical or horizontal—solely from the image remains challenging.

When the robot is in motion, it falls into two defined cases based on the heading angle detailed in the Estimation of heading angle section. For instance, as depicted in Figure 12, if the robot's heading angle

Classification of robot's heading angle

Hence, as depicted in Figure 12, discerning whether the detected straight line represents a horizontal or vertical line depends on the robot's movement direction on the panel. This determination is achieved by integrating the line image data with posture information extracted from the IMU sensor. Implementing this algorithm allowed for two-dimensional location recognition, achieved by incrementing or decrementing count values in response to horizontal and vertical motions during line counting.
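The discrimination logic can be sketched as a simple classification of the heading angle into four sectors. The sector boundaries (±45° around each panel axis) and the convention that 0° points along the panel's horizontal axis and 90° points up the panel are assumptions for illustration; the paper's exact ranges are given with Figure 12 and may differ.

```python
def classify_motion(heading_deg):
    """Return (axis, step): which count to change and in which direction."""
    h = (heading_deg + 180.0) % 360.0 - 180.0  # wrap to [-180, 180)
    if 45.0 <= h < 135.0:
        return ("vertical", +1)    # moving up: crossing horizontal lines
    if -135.0 <= h < -45.0:
        return ("vertical", -1)    # moving down
    if -45.0 <= h < 45.0:
        return ("horizontal", +1)  # moving right: crossing vertical lines
    return ("horizontal", -1)      # moving left

def on_line_crossed(col, row, heading_deg):
    """Update the (column, row) line counts when a line is counted."""
    axis, step = classify_motion(heading_deg)
    if axis == "vertical":
        return col, row + step
    return col + step, row

# A square path: up, right, down, left, each crossing one line.
cell = (0, 0)
for heading in (88.0, 3.0, -92.0, 178.0):
    cell = on_line_crossed(cell[0], cell[1], heading)
```

After the four crossings of the square path the counts return to (0, 0), mirroring the increment and decrement behavior described above.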

Location recognition between lines

In the preceding section, we demonstrated the feasibility of estimating the robot's position by merging the line-counting technique from camera image processing with the heading angle derived from the IMU sensor. However, the line-counting method has a limitation: it does not provide the exact robot position between lines. To address this, we propose a location recognition algorithm that combines line counting, based on image processing and the inertial sensor, with an extended Kalman filter that pinpoints the robot's location between lines. As the robot crosses a line, its new location is updated through line counting, while precise location estimation between lines is achieved via the extended Kalman filter. This section introduces a Kalman filter design tailored for estimating the robot's location as it moves between lines.

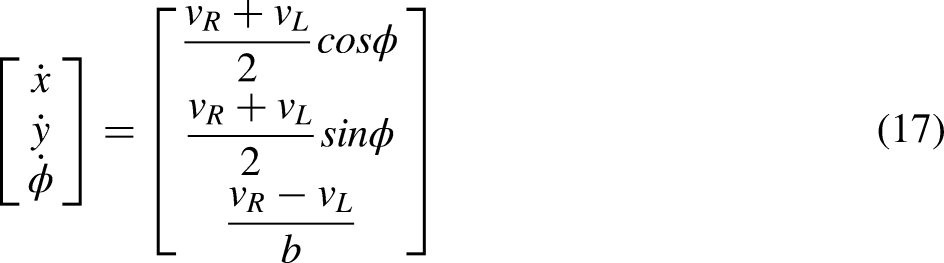

Robot model

The solar panel-cleaning robot in this work is a walking-type mobile robot with vacuum adsorption pads on the left and right. It can be modeled as a two-wheel-driven robot as in Figure 13. The kinematics of the robot can be expressed as Eq. (17), where

Model of solar panel-cleaning robot.
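For reference, the standard kinematics of a two-wheel-driven robot can be sketched as a discrete odometry step. Eq. (17) is not reproduced here, so this is the textbook differential-drive model rather than a transcription of the paper's equation; the function name, the `track_width` parameter, and the numeric values are hypothetical.

```python
import math

def odometry_step(x, y, theta, v_left, v_right, track_width, dt):
    """Advance the pose (x, y, theta) by one time step using Euler integration."""
    v = 0.5 * (v_right + v_left)               # forward speed of the body
    omega = (v_right - v_left) / track_width   # yaw rate
    x += v * math.cos(theta) * dt
    y += v * math.sin(theta) * dt
    theta += omega * dt
    return x, y, theta

# Straight motion at 0.1 m/s for one second, then a turn in place.
pose = odometry_step(0.0, 0.0, 0.0, 0.1, 0.1, track_width=0.3, dt=1.0)
turned = odometry_step(*pose, v_left=-0.1, v_right=0.1, track_width=0.3, dt=1.0)
```

Because this model integrates encoder speeds directly, any pad slippage is accumulated into the pose, which is why encoder-only odometry degrades on the inclined panel.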

The robot's location was determined using an IMU (BNO080, manufacturer: Bosch) and encoders (PG42-BL42100B, manufacturer: Motorbank). While the IMU provides posture information through gyroscope and accelerometer data, the yaw angle (heading) is prone to error due to the magnetic field from the robot's driving components. As a remedy, the yaw angle is replaced with the outcome derived in Eq. (16). Estimating the robot's location between lines spaced 16 cm apart using encoders alone is susceptible to errors caused by vacuum pad slippage. Hence, this study employs an extended Kalman filter algorithm to fuse encoder and IMU data for estimating the robot's location between lines.36,37

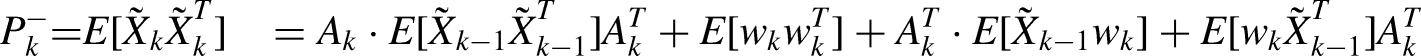

Extended Kalman filter-based location recognition

The state variables of the robot are set to the position

where

Here,

where

Next,

To summarize, the robot's position and heading angle between lines are estimated by integrating motor encoder and IMU data using an extended Kalman filter algorithm. The comprehensive location estimation process is illustrated in Figure 14. When the robot detects a new line via image processing, its location is updated based on the current count of horizontal and vertical lines. This updated location is then augmented by the position of the robot between the current lines, as estimated by the extended Kalman filter.
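A minimal extended Kalman filter of this kind is sketched below. The paper's filter equations are not reproduced here, so the sketch makes its own assumptions: the state is (x, y, theta), the prediction uses encoder-derived forward speed and yaw rate, and the only measurement is the IMU-derived heading. All function names and noise values are hypothetical.

```python
import math

def mat_mul(a, b):
    return [[sum(a[i][k] * b[k][j] for k in range(3)) for j in range(3)] for i in range(3)]

def transpose(a):
    return [[a[j][i] for j in range(3)] for i in range(3)]

def wrap(angle):
    return (angle + math.pi) % (2 * math.pi) - math.pi

def ekf_predict(state, P, v, omega, dt, Q):
    """Propagate state and covariance with the encoder-based motion model."""
    x, y, th = state
    new_state = [x + v * math.cos(th) * dt, y + v * math.sin(th) * dt, wrap(th + omega * dt)]
    # Jacobian of the motion model with respect to the state.
    F = [[1.0, 0.0, -v * math.sin(th) * dt],
         [0.0, 1.0, v * math.cos(th) * dt],
         [0.0, 0.0, 1.0]]
    FPFt = mat_mul(mat_mul(F, P), transpose(F))
    P_new = [[FPFt[i][j] + Q[i][j] for j in range(3)] for i in range(3)]
    return new_state, P_new

def ekf_update_heading(state, P, z_heading, r_var):
    """Correct with a heading measurement; measurement matrix is [0, 0, 1]."""
    innov = wrap(z_heading - state[2])
    S = P[2][2] + r_var
    K = [P[i][2] / S for i in range(3)]
    new_state = [state[i] + K[i] * innov for i in range(3)]
    new_state[2] = wrap(new_state[2])
    P_new = [[P[i][j] - K[i] * P[2][j] for j in range(3)] for i in range(3)]
    return new_state, P_new

P0 = [[0.01, 0.0, 0.0], [0.0, 0.01, 0.0], [0.0, 0.0, 0.1]]
Q = [[1e-4, 0.0, 0.0], [0.0, 1e-4, 0.0], [0.0, 0.0, 1e-4]]
s1, P1 = ekf_predict([0.0, 0.0, 0.0], P0, v=0.1, omega=0.0, dt=1.0, Q=Q)
s2, P2 = ekf_update_heading(s1, P1, z_heading=0.2, r_var=0.01)
```

In the example, the heading measurement pulls the estimated heading from 0 toward 0.2 rad and reduces the heading variance, and through the cross-covariance it also nudges the lateral position estimate.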

Block diagram of extended Kalman filter-based localization.

The final position (

Experimental results

The algorithm proposed in this study for estimating the solar panel-cleaning robot's location was implemented on a robot developed by our team. This robot is a link-driven walking model equipped with vacuum suction pads on both legs, enabling movement on inclined surfaces via individual BLDC motor control (link mechanism shown on the left in Figure 15). The manufactured robot is depicted on the right in Figure 15. Key specifications of this robot are provided in Table 1.

Link mechanism of the walking type robot (left) and manufactured robot (right).

Specifications of the solar panel-cleaning robot used in the experiments.

Figure 16 shows the test setup: two interconnected solar panels, separated by a gap of approximately 3 cm and installed at a 30° inclination angle. An aluminum frame encases all edges, requiring the robot to traverse the gap between panels without losing vacuum on the pads. The panel used is the Q.PEAK BFR-G4.4 310 (Hanwha Q CELLS). The robot's designated moving path is also depicted in Figure 16, with the lower-left corner of the panel designated as the starting location at (0,0). Upon completing its designated path, the robot returns to this initial location.

Localization test on the tilted panel.

The robot's trajectory follows a specific sequence: it ascends a vertical slope initially, then undergoes a clockwise rotation to proceed horizontally. Following this, it makes a 90° clockwise rotation to descend and ultimately returns to its initial starting point. This precise movement path was determined by tracking the robot's position across several image frames, constituting the intended trajectory.

To validate the algorithm's efficacy, an experiment was conducted on an actual solar panel. The robot's true position was recorded at 5-s intervals, and the algorithm's performance was evaluated based on calculated errors at these intervals. This experimental procedure was repeated 10 times, with the robot autonomously following a predetermined route during each iteration.
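The error metric used in such an evaluation can be sketched as follows. The sketch assumes that the error at each sampling instant is the Euclidean distance between the true and estimated positions and that the RMSE is taken over all sampled instants; the paper's exact definition may differ, and the function name and sample values are hypothetical.

```python
import math

def position_rmse(true_pts, est_pts):
    """Root-mean-square of the Euclidean position error over paired samples."""
    assert len(true_pts) == len(est_pts) and len(true_pts) > 0
    squared = [(tx - ex) ** 2 + (ty - ey) ** 2
               for (tx, ty), (ex, ey) in zip(true_pts, est_pts)]
    return math.sqrt(sum(squared) / len(squared))

# Two samples, each off by 0.05 m (a 3-4-5 triangle scaled to metres).
true_pts = [(0.0, 0.0), (0.0, 1.0)]
est_pts = [(0.03, 0.04), (0.03, 1.04)]
rmse = position_rmse(true_pts, est_pts)
```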

The error according to the current position

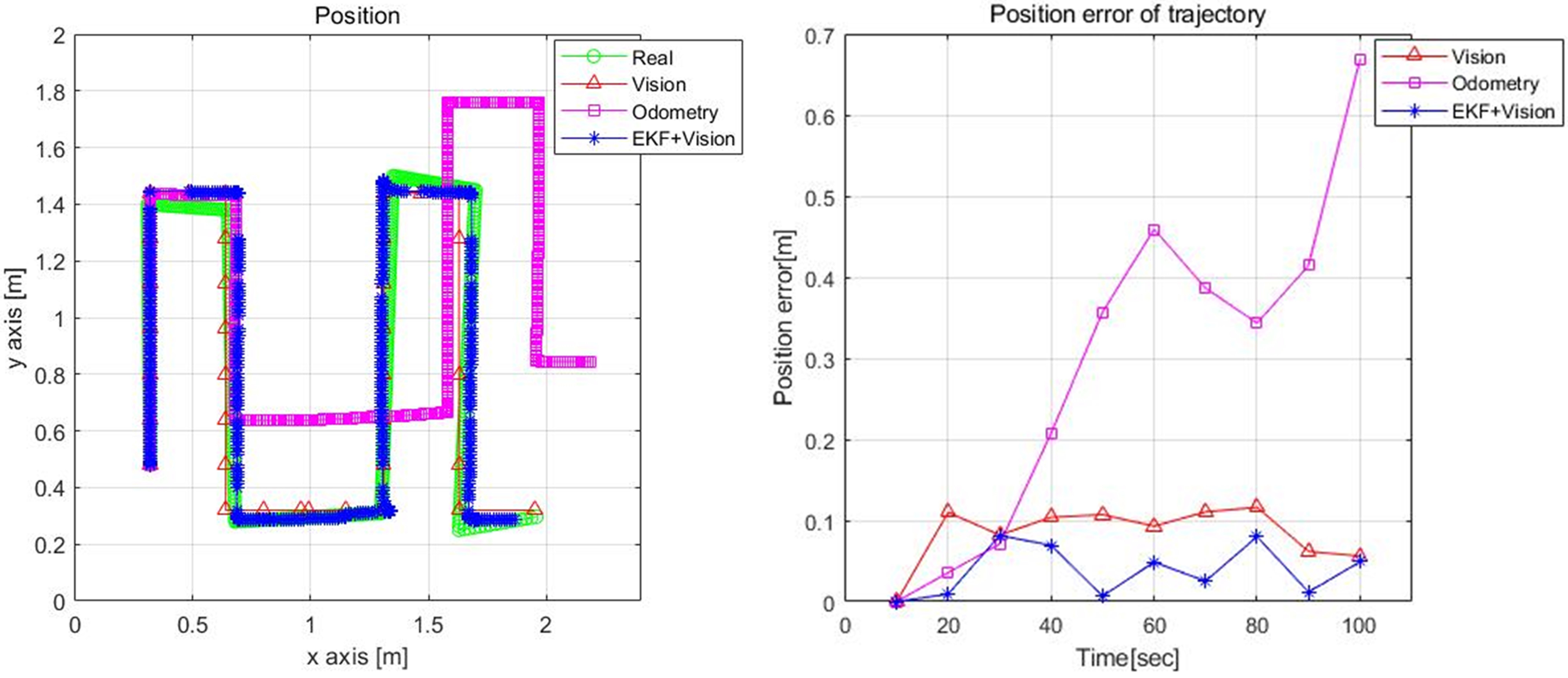

Figures 17 and 18 exhibit experimental outcomes illustrating position recognition during the robot's movement at 60 mm/s. Notably, during the vertical movement phase on the inclined surface, sliding occurs, causing cumulative errors in the wheel encoders. Conversely, substantial slipping occurs during horizontal movement, leading to a skewed robot heading angle. This slippage contributes to continuous accumulation of position error during horizontal movement at this speed.

Experimental results for each method.

Position errors for each method.

When relying solely on line image counting for position estimation, the robot's position remains unchanged until a new line is detected and counted. Consequently, a potential error in position estimation of up to 8 cm, equivalent to half the 16 cm line spacing, may occur.

Figures 17 and 18 represent the respective positions of the robot according to positioning methods. “Real” denotes the actual position serving as the basis for error measurement, while “vision” represents the position determined through robot camera image processing. “Odometry” indicates the position calculated using robot sensors, and “EKF + vision” signifies a fused position combining the two methods proposed in this work.

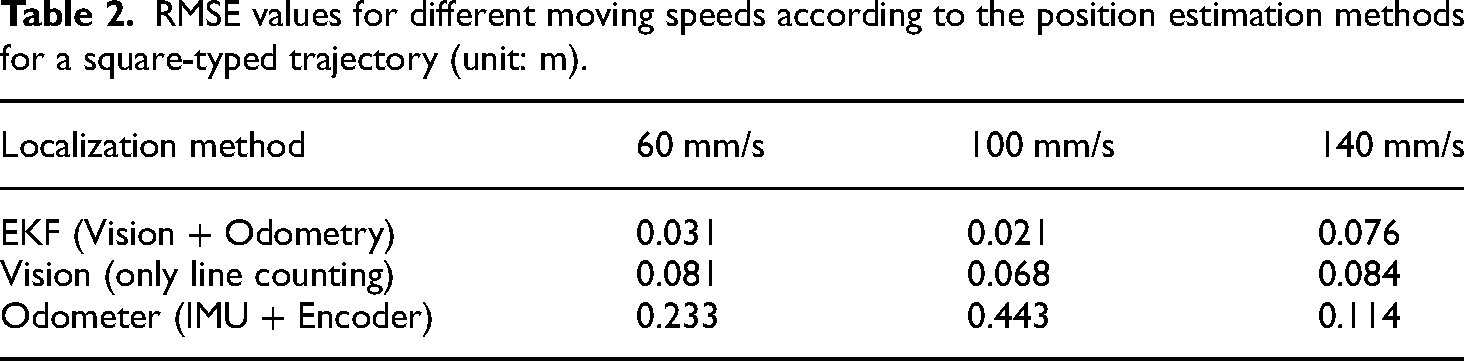

We conducted experiments involving position estimation at different speeds while following the same square trajectory. Table 2 summarizes the RMSE values obtained using three distinct position estimation methods. When utilizing the algorithm proposed in this study, which combines line image counting and an extended Kalman filter, the position between lines is estimated based on IMU and encoder data, and any accumulated error between lines is reset upon encountering a new line.
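The reset behavior described above can be sketched as a small bookkeeping class: line counts provide the coarse position, the filter provides the displacement since the last counted line, and that displacement is cleared along the relevant axis whenever a new line is counted. The class and method names are hypothetical, and the 0.16 m spacing is the typical value quoted in the text.

```python
LINE_SPACING_M = 0.16  # typical main-line spacing quoted in the text

class GridLocalizer:
    """Combine line counts with a between-line offset (illustrative only)."""

    def __init__(self):
        self.col = 0
        self.row = 0
        self.dx = 0.0  # offset since the last counted vertical line
        self.dy = 0.0  # offset since the last counted horizontal line

    def on_filter_step(self, ddx, ddy):
        """Accumulate the displacement estimated between lines."""
        self.dx += ddx
        self.dy += ddy

    def on_line_counted(self, axis, step):
        """Update the count and discard the accumulated offset on that axis."""
        if axis == "vertical":
            self.row += step
            self.dy = 0.0
        else:
            self.col += step
            self.dx = 0.0

    def position(self):
        return (self.col * LINE_SPACING_M + self.dx,
                self.row * LINE_SPACING_M + self.dy)

loc = GridLocalizer()
loc.on_filter_step(0.0, 0.17)        # estimate has drifted past the true 0.16 m
loc.on_line_counted("vertical", +1)  # line detected: drift is discarded
loc.on_filter_step(0.0, 0.04)
x, y = loc.position()
```

In this example the accumulated estimate had drifted to 0.17 m when the line at 0.16 m was counted; after the reset the reported position is 0.20 m, so the 1 cm of drift does not carry over into the next interval.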

RMSE values for different moving speeds according to the position estimation methods for a square-typed trajectory (unit: m).

With the incorporation of the EKF algorithm, the average RMSE exhibits a reduction of less than 0.04 m at 60 mm/s and 0.08 m even at higher speeds of 140 mm/s compared to the vision-based method (line-counting).

Figure 19 depicts an experiment involving position estimation for a pulse-shaped trajectory, deliberately designed to induce slippage by increasing the number of turns. Figures 20–22 display the results of position estimation under identical speed conditions as the previous experiment.

Position estimation test setup for another robot moving trajectory.

Experimental results of position estimation (left) and errors (right) for each method with a moving speed of 60 mm/s.

Experimental results of position estimation (left) and errors (right) for each method with a moving speed of 100 mm/s.

Experimental results of position estimation (left) and errors (right) for each method with a moving speed of 140 mm/s.

Table 3 displays RMSE values for position estimation at varying robot speeds during the pulse-shaped trajectory experiment. Similar to previous results, when utilizing the algorithm integrating image line counting and the extended Kalman filter, the average RMSE showcases a decrease of less than 0.02 m and 0.14 m even at high speeds of 140 mm/s compared to the line-counting method.

RMSE values for different moving speeds according to the position estimation methods for a pulse-shaped trajectory (unit: m).

During rapid movements, vacuum release from pads in the walking sequence sometimes leads to inadequate vacuum formation in subsequent steps, causing slippage and substantial cumulative odometry errors. However, employing the extended Kalman filter demonstrates effective position estimation, notably at high speeds of 140 mm/s.

With exclusive reliance on line image counting, estimating position between lines becomes challenging due to continuous slip during robot movement. Increased robot speed exacerbates this slip issue. Combining the EKF method with vision-based line counting yields lower errors compared to simple line counting or odometry-based estimation. This fusion enhances robot position estimation, especially between lines.

Conclusions

This study introduced an algorithm aimed at estimating the position of a solar panel cleaning robot navigating an inclined surface. The algorithm incorporates an extended Kalman filter utilizing data from a wheel encoder and an inertial sensor, along with line-counting through image processing for visible lines on the panel. Notably, the robot's directional movement—whether horizontal or vertical—is determined by interpreting the yaw angle derived from roll and pitch angles obtained from the IMU sensor. This approach is advantageous as it excludes the potentially distorted yaw angle signal, susceptible to influence from the magnetic field surrounding the motor.

This algorithm was designed to estimate the robot's location using only a low-cost camera and IMU sensor, eliminating the need for high-end devices like RTK-GPS or Bluetooth anchors. To enhance accuracy, image processing techniques were employed to eliminate noise on the panel, ensuring the detection of exclusively straight lines. As the robot advances, it actively tracks these detected straight lines and precisely counts them by adjusting upper and lower thresholds according to its speed. This adaptive thresholding minimizes counting errors despite potential image noise.

Furthermore, the algorithm determines whether a detected line is horizontal or vertical by leveraging the combination of roll and pitch angles provided by the IMU. This reliance on IMU data allows for accurate differentiation between horizontal and vertical lines, augmenting the precision of the robot's positional estimation.

To improve the accuracy of robot location estimation between already identified and counted lines, an extended Kalman filter was implemented. This filter utilized the heading angle calculated from the IMU's roll and pitch angles, in conjunction with motor encoder values. Through this estimation method, the robot's position while traversing the inclined panel was reliably determined.

When relying solely on odometry for position estimation, errors between actual and estimated positions tend to increase with the robot's speed and accumulate over longer distances. However, by integrating line-counting via image processing with EKF, utilizing the yaw angle from the IMU and odometry data, the robot's position is initially updated based on line-counting. Simultaneously, more precise estimation of the position between lines occurs, markedly enhancing overall position accuracy regardless of the robot's movement across the panel.

Footnotes

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research was supported by Korea Evaluation Institute of Industrial Technology grant funded by the Korea Government (MOTIE) (P2004321) and by the Korea Institute for Advancement of Technology (KIAT) grant funded by the Korea Government (MOTIE) (P0008473, HRD Program for Industrial Innovation).

Author biographies

Joon Hee Kim graduated from Seoul National University of Science and Technology with a bachelor's degree in mechanical system design engineering in 2018 and with a master's degree in robotic engineering in 2020. He has been working at Hyundai Motor Company R&D since 2021.

Sang Hun Lee graduated from Seoul National University of Science and Technology with a bachelor's degree in mechanical system design engineering in 2022 and with a master's degree in robotics in 2024. He has been working at the Hanwha Precision Machinery Back End Equipment R&D center, Semiconductor Equipment Division, since 2024.

Jin Gahk Kim graduated from Seoul National University of Science and Technology with a bachelor's degree in mechanical system design engineering in 2020 and with a master's degree in robotics in 2022. He has been working at Hyundai Motor Company since 2022.

Woo Jin Jang graduated from Seoul National University of Science and Technology with a bachelor's degree in mechanical system design engineering in 2019 and with a master's degree in robotics in 2021. He has been working at Samsung Electronics' Equipment Technology Research Institute since 2021.

Dong Hwan Kim received his BS and MS degrees from the Department of Mechanical Design and Production Engineering at Seoul National University in 1986 and 1988, respectively. He also received a PhD degree from Georgia Institute of Technology, USA. He worked at Korea Institute of Industrial Technology from 1997 to 1998. He joined Seoul National University of Science and Technology in 1998 as a professor at the Department of Mechanical System Design Engineering. His major research interests are robot control, deep learning, and mechatronics. He is working on numerous projects on robot mechanisms and control, artificial intelligence applications to robots, and smart mechatronics systems.