Abstract

This study developed a fuzzy logic and Gagné learning hierarchy (FL-GLH) for assessing mathematics skills and identifying learning barrier points. Fuzzy logic was used to model the human reasoning process in linguistic terms. Specifically, fuzzy logic was used to build relationships between skill level concepts as inputs and learning achievement as an output. Gagné learning hierarchy was used to develop a learning hierarchy diagram, which included learning paths and test questions for assessing mathematics skills. First, the Gagné learning hierarchy was used to generate learning path diagrams and test questions. In the second step, skill level concepts were grouped, and their membership functions were established to fuzzify the input parameters and to build membership functions of learning achievement as an output. Third, the inference engine generated fuzzy values by applying fuzzy rules based on fuzzy reasoning. Finally, the defuzzifier converted fuzzy values to crisp output values for learning achievement. Practical applications of the FL-GLH confirmed its effectiveness for evaluating student learning achievement, for finding student learning barrier points, and for providing teachers with guidelines for improving learning efficiency in students.

Introduction

Both educators and students can use learning assessment methods to find learning barrier points and to evaluate learning achievement. Ma and Zhou 1 presented an integrated fuzzy set approach to assess the outcomes of student-centered learning and used fuzzy set principles to represent the imprecise concepts for subjective judgment and applied a fuzzy set method to determine the assessment criteria and their corresponding weights. In Grigoriadou et al., 2 integrating fuzzy logic concepts and multi-criteria decision-making in a learning assessment system provided a more complete and accurate description of expert knowledge as well as increased flexibility in assessment of learning achievement in students. Chen 3 developed an intelligent personalized learning system that used a genetic algorithm to determine the best learning paths according to the incorrect answers of individual learners. Ketterlin-Geller and Yovanoff 4 used cognitive diagnostic assessments to provide detailed and precise information about cognitive processes in students who had difficulty learning mathematics. Tay and Lim 5 investigated a fuzzy inference system as an alternative to the conventional addition or weighted addition in the criterion-referenced assessment. A fuzzy-based model was presented, and the monotonicity and sub-additivity properties were investigated. Ozyurt et al. 6 proposed a structure and improvement processes for computerized adaptive testing systems. The adaptive assessment system evaluated students according to their actual ability rather than according to their test grades as in a conventional system. Hauswirth and Adamoli 7 proposed a pedagogical approach in which students are asked a series of questions to facilitate learning from their mistakes. Hwang et al. 8 proposed a group decision approach for developing a concept-effect relationship model with the cooperation of multiple domain experts. 
Low-achievement students taught by the group decision approach had significantly better learning achievement compared to those taught by the conventional approach. Jee et al. 9 proposed a procedure that comprised sufficient conditions, fuzzy rule reduction, and monotonicity-preserving similarity reasoning. The framework reduced the number of fuzzy rules gathered from an assessor with a proposed fuzzy rule selection approach. Chai et al. 10 proposed a fuzzy peer assessment methodology that considered vagueness and imprecision of words used throughout the evaluation process in a cooperative learning environment. Yang et al. 11 proposed a two-tier test-based learning method for enhancing learning outcomes in computer-programming courses in a web-based learning environment. Samarakou et al. 12 presented a fuzzy-logic-based model for the diagnosis of the so-called students’ learning profile. The fuzzy logic module was coupled with an interactive open learning environment that incorporated the text comprehension theory by Denhière and Baudet, the dialogue theory of Collins and Beranek, and the learning styles theory of Felder and Silverman. Lai et al. 13 described applications of the assessment triangle in two studies. Although comparisons of the evaluation results in the studies revealed mixed results, the evidence generally agreed that the progression in student performance should be modeled as a loose network of concepts rather than as a strict hierarchy. Wilkins and Norton 14 used a hierarchy of fraction scheme that charted the progression from understanding part-whole concepts to understanding concepts of fractions. Tang et al. 15 developed an inheritance coding with Gagné-based learning hierarchy approach to building systems for assessing mathematics skills and diagnosing student learning problems. A practical application showed that it can find student learning barrier points and provide learning suggestions for teachers and students. 
Although researchers have developed several methods of analyzing learning barrier points in students,15–19 the best diagnostic method for finding learning barrier points in elementary students who struggle with mathematics still needs further study.

The goal of this research was to develop a reasonably objective and accurate method of evaluating mathematics skills and finding learning barrier points in students. The traditional assessment method is to set a standard that the student must achieve to demonstrate successful learning. Test scores above and below the standard are interpreted as learning success and learning failure, respectively. However, the use of a cutoff to judge learning failure and success is questionable. For example, if the passing score for a test is 60, then a score of 60 is interpreted as a learning success whereas a score of 59, a 1-point difference, is interpreted as a learning failure. Thus, the traditional method of judging student achievement is inappropriate.

To address this issue, this study used fuzzy logic concepts to integrate human semantics and thinking forms in evaluations of student learning effectiveness. Fuzzy logic theory extends the absolute, all-or-nothing membership relationship of classical sets by using a membership function to determine the degree to which an element belongs to a set. The larger the degree, the more strongly the element belongs to that set. The objective was to reduce the role of subjectivity and provide a more reasonable and objective measure of learning abilities and weaknesses.
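The contrast between a hard cutoff and a degree of membership can be illustrated with a minimal sketch. The passing score of 60 comes from the example above; the linear ramp between 50 and 70 is a purely hypothetical membership function chosen for illustration and is not taken from the study.

```python
def crisp_pass(score, cutoff=60):
    """Traditional judgment: 1 if the score reaches the cutoff, otherwise 0."""
    return 1.0 if score >= cutoff else 0.0


def fuzzy_success(score, low=50.0, high=70.0):
    """Degree of membership in 'learning success'.

    Hypothetical ramp: 0 at or below `low`, 1 at or above `high`,
    linear in between.
    """
    if score <= low:
        return 0.0
    if score >= high:
        return 1.0
    return (score - low) / (high - low)


for s in (59, 60):
    print(s, crisp_pass(s), round(fuzzy_success(s), 2))
```

Under these assumed breakpoints, the one-point difference between 59 and 60 flips the crisp judgment from failure to success but changes the membership degree by only 0.05.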

To support efficient remedial teaching, this study combined fuzzy logic and Gagné learning hierarchy (FL-GLH) to enable accurate evaluation of student learning ability and learning barrier points. Practical applications of the FL-GLH confirmed its accuracy and effectiveness for finding student learning barrier points, which can aid teachers and students in improving learning efficiency.

Building a learning path diagram and generating test questions by Gagné learning hierarchy theory

In the hierarchical analysis of intellectual skills advocated by Gagné,20 the component skills are task-analyzed in a part-to-whole sequence, in order of their importance and in a “bottom-up” fashion. The hierarchical task analysis is followed by increasingly complex combinations of the parts.21 According to the Gagné learning hierarchy theory, intellectual skills are acquired cumulatively; that is, mastery of higher-level skills requires prior mastery of lower-level skills. Since intellectual skills are acquired in a hierarchical order, successful instruction requires the student to acquire lower-level skills before progressing to higher-level skills.20,22,23

Based on the results of previous research by the authors, 15 this study applied Gagné learning hierarchy theory in the development of learning path diagrams and test questions for assessing mathematics skills and finding learning barrier points. Assessing knowledge acquisition by the students required the teachers to apply their expertise in designing test questions for each level. For example, even though they had different learning paths, integers and fractions were both related to certain learning processes. Therefore, the learning hierarchy diagrams for integers and for fractions were integrated to enhance student learning. Figure 1 shows the learning hierarchy diagrams for integers and fractions. The a-path and b-path are the paths for integers and fractions, respectively, and the numbers in front of the a and b represent the learning level.

Figure 1. Gagné learning hierarchy diagram for integers and fractions.
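The cumulative nature of the hierarchy can be sketched in a few lines. The level names 1a to 11a follow the a-path naming used in this article; the simple linear ordering and the helper names `first_barrier` and `acquired_levels` are illustrative assumptions, not the authors' implementation.

```python
# Levels of the a-path (integers) in bottom-up order: "1a", "2a", ..., "11a".
A_PATH = [f"{level}a" for level in range(1, 12)]


def first_barrier(path, mastered):
    """Return the lowest level on the path that is not mastered, or None."""
    for level in path:
        if level not in mastered:
            return level
    return None


def acquired_levels(path, mastered):
    """Levels counted as acquired under a strict hierarchy.

    Counting stops at the first gap, because a higher-level skill
    presupposes mastery of every lower-level skill beneath it.
    """
    acquired = []
    for level in path:
        if level not in mastered:
            break
        acquired.append(level)
    return acquired


# A student who shows 1a, 2a, and 4a but not 3a is blocked at 3a.
print(first_barrier(A_PATH, {"1a", "2a", "4a"}))
print(acquired_levels(A_PATH, {"1a", "2a", "4a"}))
```

In this simplified view, an apparently mastered higher level above a gap (4a in the usage example) is not credited, which mirrors the way correct answers in higher intervals are later attributed to guessing.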

Steps of assessing mathematics skills by fuzzy logic

A fuzzy logic 24 system comprises a fuzzifier, membership functions, a fuzzy rule base, a fuzzy inference engine, and a defuzzifier. First, the fuzzifier uses membership functions to fuzzify the given input parameters and outputs. The fuzzy inference engine then generates fuzzy values by applying fuzzy rules based on fuzzy reasoning. Finally, the defuzzifier converts the fuzzy values into crisp output values. For example, fuzzy reasoning can be described as two-input, one-output fuzzy logic. The fuzzy rule base consists of a group of if–then control rules with two inputs, x1 and x2, and one output, y. When x1 and x2 have three membership functions each, a representative rule set of nine fuzzy if-then rules can be stated as

$$R_n:\ \text{IF } x_1 \text{ is } A_i \text{ and } x_2 \text{ is } B_j \text{ THEN } y \text{ is } Y_k$$

where Rn (n = 1, 2, …, 9) denotes the n-th implication, x1 and x2 are the input values, and y is the output value. Subset Ai, subset Bj (i, j ∈ {1, 2, 3}), and subset Yk (k ∈ {1, 2, 3, 4, 5}) are fuzzy subsets defined by the corresponding membership functions µAi, µBj, and µYk.

The max-min compositional operation25,26 yields a fuzzy output that complies with these rules. This study used the center of gravity defuzzification method to transform the fuzzy inference output into a non-fuzzy value.
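The two-input, one-output case described above can be sketched as follows. All membership functions (triangles on a 0 to 10 scale) and the rule table (rule with inputs Ai and Bj maps to output Y with index i + j − 1) are hypothetical choices made only so that nine rules map onto five output sets; they are not the sets or rules used in the study.

```python
def tri(x, a, b, c):
    """Triangular membership with feet a and c and peak b.

    Shoulders are allowed (a == b or b == c).
    """
    if x < a or x > c:
        return 0.0
    if x == b:
        return 1.0
    if x < b:
        return (x - a) / (b - a)
    return (c - x) / (c - b)


# Hypothetical fuzzy subsets: three per input, five for the output.
IN_SETS = [(0, 0, 5), (0, 5, 10), (5, 10, 10)]
OUT_SETS = [(0, 0, 2.5), (0, 2.5, 5), (2.5, 5, 7.5), (5, 7.5, 10), (7.5, 10, 10)]


def infer(x1, x2, steps=1000):
    """Max-min inference followed by center of gravity defuzzification."""
    # Firing strength of each rule: min over its two antecedents.
    # Rules sharing an output set are combined with max.
    strengths = {}
    for i, a in enumerate(IN_SETS):
        for j, b in enumerate(IN_SETS):
            w = min(tri(x1, *a), tri(x2, *b))
            k = i + j  # zero-based index of the assumed consequent
            strengths[k] = max(strengths.get(k, 0.0), w)

    # Aggregate clipped output sets with max, then take the centroid.
    num = den = 0.0
    for s in range(steps + 1):
        y = 10.0 * s / steps
        mu = max(min(w, tri(y, *OUT_SETS[k])) for k, w in strengths.items())
        num += y * mu
        den += mu
    return num / den if den else 0.0


print(round(infer(5, 5), 2), round(infer(2, 9), 2))
```

Clipping each consequent at its firing strength (min) and taking the pointwise maximum across rules is the max-min composition; the final ratio of sums is a discrete approximation of the center of gravity.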

The four steps of using fuzzy logic to assess mathematics skills were as follows. The first step was grouping skill level concepts and establishing their membership functions to fuzzify the input parameters. The second step was building membership functions of learning achievement as an output. The third step was building fuzzy rules according to the expertise of the teacher. The final step was using the fuzzy inference engine to generate fuzzy values by applying fuzzy rules based on fuzzy reasoning of the min-max compositional operation. Then, the center of gravity method was used to perform defuzzification, that is, to convert fuzzy values into crisp output values for learning achievement. The detailed steps of the assessment were as follows.

Grouping skill level concepts and establishing their membership functions to fuzzify the input parameters

In Figure 1, the a-path is an example of 11 selected skill level concepts (i.e. 1a–11a) divided into four groups. Thirty questions were designed to test the 11 skill level concepts. Table 1 shows the test questions (Qk) for the four groups (Fi) of skill level concepts (Cj), where k = 1, …, 30, i = 1, …, 4, and j = 1, …, 11. A test question that included the skill level concept was coded as 1. All other test questions were coded as 0.

Table 1. Four groups of skill level concepts and associated test questions for the integer unit.

The first part F1 used six questions to test three concepts (C1 integer comparisons, C2 integer addition and subtraction, and C3 sorting and equal). The second part F2 used six questions to test three concepts (C4 integer multiplication, C5 integer division, and C6 integer addition, subtraction, multiplication and division). The third part F3 used eight questions to test three concepts (C7 integer mixed operations, C8 division and fraction, and C9 skilled for integer mixed operations). The fourth part F4 used 10 questions to test two concepts (C10 multiples and common multiples and C11 factors and common factors).
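The grouping can be turned into the four input counts with a short sketch. The assignment of consecutive question numbers to groups (Q1–Q6, Q7–Q12, Q13–Q20, Q21–Q30) is an assumption: it matches the group sizes stated above and is consistent with the worked student examples later in the article, but the exact question-to-concept coding is given only in Table 1.

```python
# Assumed question ranges per group (inferred from group sizes, not from Table 1).
GROUPS = {
    "F1": range(1, 7),    # Q1-Q6: concepts C1-C3
    "F2": range(7, 13),   # Q7-Q12: concepts C4-C6
    "F3": range(13, 21),  # Q13-Q20: concepts C7-C9
    "F4": range(21, 31),  # Q21-Q30: concepts C10-C11
}


def group_counts(correct_questions):
    """Count correctly answered questions per group, in the order F1..F4."""
    correct = set(correct_questions)
    return [sum(1 for q in questions if q in correct)
            for questions in GROUPS.values()]


# Correct answers reported for student 1 in the practical application.
print(group_counts([1, 5, 8, 10, 15, 18, 22, 29]))
```

The resulting vector [F1, F2, F3, F4] is the crisp input that is subsequently fuzzified.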

The four inputs to the fuzzy logic analysis were F1, F2, F3, and F4. Each input had two fuzzy subsets (“Poor” and “Good”), which were defined by the teachers and measured the degree of membership of a given value in each fuzzy subset. For example, the first part F1 used six questions to test three concepts. The teacher judged learning to be “Poor” if a student answered fewer than two test questions correctly and “Good” if a student answered more than four test questions correctly. The third part F3 used eight questions to test three concepts. The teacher judged learning to be “Poor” if a student answered fewer than three test questions correctly and “Good” if a student answered more than six test questions correctly. Because each judgment holds over a range of values with a gradual transition in between, the trapezoidal type was used as the fuzzy logic membership function in this study. The trapezoidal membership function is a curve that defines how each point in the input space is mapped to a membership value (or degree of membership, µ) between 0 and 1. Figure 2 shows the trapezoidal membership functions for F1, F2, F3, and F4.

Figure 2. Two trapezoidal membership functions for F1, F2, F3, and F4.
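A trapezoidal membership function can be sketched as below. The breakpoints for F1 and F3 are hypothetical: they are chosen to agree with the teacher's verbal thresholds (two and four correct answers for F1, three and six for F3), but the exact corner points of the trapezoids in Figure 2 are not stated in the text.

```python
def trapmf(x, a, b, c, d):
    """Trapezoid: 0 outside [a, d], 1 on [b, c], linear on both slopes."""
    if x < a or x > d:
        return 0.0
    if b <= x <= c:
        return 1.0
    if x < b:
        return (x - a) / (b - a)
    return (d - x) / (d - c)


# Hypothetical corner points (a, b, c, d); not read off Figure 2.
F1_POOR, F1_GOOD = (0, 0, 2, 4), (2, 4, 6, 6)
F3_POOR, F3_GOOD = (0, 0, 3, 6), (3, 6, 8, 8)


def fuzzify(x, poor, good):
    """Map a count of correct answers to degrees of 'Poor' and 'Good'."""
    return {"Poor": trapmf(x, *poor), "Good": trapmf(x, *good)}


print(fuzzify(3, F1_POOR, F1_GOOD))
print(fuzzify(5, F3_POOR, F3_GOOD))
```

With these assumed corners, three correct answers in F1 belong equally to “Poor” and “Good”, which is the kind of graded judgment that a single cutoff cannot express.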

Building membership functions for learning achievement as an output for evaluating student learning achievement

The trapezoidal membership functions were also used in five fuzzy subsets (“Poor,” “Fair,” “Average,” “Good,” and “Excellent”) assigned to learning achievement. Figure 3 shows the trapezoidal membership functions for learning achievement.

Figure 3. Five trapezoidal membership functions for learning achievement.

Building fuzzy rules according to teacher expertise

The four inputs and one output were used to calculate various membership degrees of the fuzzy sets. Table 2 shows the 16 fuzzy rules that were directly derived according to the expertise of the teacher. For example, fuzzy rule 1 (R1) was IF F1 is “Poor” and F2 is “Poor” and F3 is “Poor” and F4 is “Poor” THEN learning achievement (LA) is “Poor”; fuzzy rule R11 was IF F1 is “Good” and F2 is “Good” and F3 is “Poor” and F4 is “Poor” THEN LA is “Average.”

Table 2. Fuzzy rule table for assessing learning achievement.

LA: learning achievement.
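The size of the rule base follows from the input design: four inputs with two labels each give 2^4 = 16 antecedent combinations. The sketch below enumerates them and records only the two consequents quoted in the text; the other fourteen are defined in Table 2 and are deliberately left unfilled. The enumeration order is arbitrary and does not reproduce the rule numbering of Table 2, and `firing_strength` is an illustrative helper name.

```python
from itertools import product

LABELS = ("Poor", "Good")
INPUTS = ("F1", "F2", "F3", "F4")

# Every combination of labels over the four inputs: 2**4 = 16 antecedents.
ANTECEDENTS = list(product(LABELS, repeat=len(INPUTS)))

# Only the consequents quoted in the text are recorded here.
KNOWN_RULES = {
    ("Poor", "Poor", "Poor", "Poor"): "Poor",     # R1
    ("Good", "Good", "Poor", "Poor"): "Average",  # R11
}


def firing_strength(antecedent, memberships):
    """Min over the four inputs.

    `memberships[i]` maps each label to its degree for input i.
    """
    return min(m[label] for label, m in zip(antecedent, memberships))


example = [{"Poor": 0.2, "Good": 0.8}] * 2 + [{"Poor": 0.6, "Good": 0.4}] * 2
print(len(ANTECEDENTS))
print(firing_strength(("Good", "Good", "Poor", "Poor"), example))
```

The firing strength computed this way is the "min" half of the min-max inference applied to each rule.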

Figure 4 shows the fuzzy logic system of four inputs, one output, and 16 rules for finding barrier points for learning skill level concepts for integers.

Figure 4. Fuzzy logic system of four inputs, one output, and 16 rules for finding student learning barrier points.

Building a range of crisp output values for evaluating learning achievement

The Mamdani method 25 was applied to transform the fuzzy inference output into a non-fuzzy value, that is, the value of learning achievement. A high value for learning achievement indicated good performance. The Mamdani method transforms the fuzzy output into a crisp value by setting membership functions for the inputs (Figure 2) and the output (Figure 3) and by using the min-max inference method. The Mamdani method was also used to integrate the experience of the teacher into the inference mechanism through the consequent part of the rules, as shown in Table 2. The min-max operation for the case of a four-input, single-output system is shown below.

where Ai, Bj, Ck, and Dl (i, j, k, l = 1, 2) are the linguistic terms of the precondition part, E is the linguistic term of the consequent part, and µAi(F1), µBj(F2), µCk(F3), µDl(F4), and µE(LA) are the corresponding membership functions.
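For reference, the min-max operation of a four-input, single-output Mamdani system is commonly written in the following general form. This is a sketch in standard notation rather than a reproduction of the article's own equation; the rule index r, the per-rule consequent E_r, and the aggregated output set E' are symbols assumed here for illustration.

```latex
\mu_{E'}(LA) \;=\; \max_{r = 1, \dots, 16}\;
  \min\!\bigl[\, \mu_{A_i}(F_1),\ \mu_{B_j}(F_2),\ \mu_{C_k}(F_3),\ \mu_{D_l}(F_4),\ \mu_{E_r}(LA) \,\bigr]
```

Here the indices i, j, k, and l are those of the linguistic terms appearing in the precondition of rule r. The inner min clips the consequent of each rule at its firing strength, and the outer max aggregates the clipped consequents over all 16 rules.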

Defuzzification was performed using the center of gravity method to convert the fuzzy values into a crisp output value for learning achievement.

where n denotes the number of skill level concepts (NSLC).
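In its general continuous form, the center of gravity is the ratio of the integral of y·µ(y) to the integral of µ(y) over the output range. The sketch below approximates it numerically. The output range of 0 to 11 and the right-triangle set `poor_example` are hypothetical choices for illustration; the actual output sets are those of Figure 3.

```python
def centroid(mu, lo, hi, steps=10000):
    """Approximate integral(y * mu(y)) / integral(mu(y)) on [lo, hi].

    Uses a midpoint sum with `steps` equal subintervals.
    """
    width = (hi - lo) / steps
    num = den = 0.0
    for s in range(steps):
        y = lo + (s + 0.5) * width
        m = mu(y)
        num += y * m
        den += m
    if den == 0.0:
        raise ValueError("membership is zero everywhere on the interval")
    return num / den


def poor_example(y):
    """Hypothetical output set: membership 1 at y = 0, falling linearly to 0 at y = 2."""
    return max(0.0, 1.0 - y / 2.0)


print(round(centroid(poor_example, 0.0, 11.0), 2))  # prints 0.67
```

For this assumed shape the exact centroid is 2/3, which illustrates why a defuzzified output does not reach the end of the output range even when only the lowest output set is activated.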

For evaluating student learning achievement and finding learning barrier points, the respective ranges of crisp output values for learning achievement among skill level concepts were computed by fuzzy logic inference.

For example, Table 1 shows that the four inputs to the fuzzy logic analysis were F1, F2, F3, and F4. The first part F1 contained three concepts and six questions. The second part F2 contained three concepts and six questions. The third part F3 contained three concepts and eight questions. The fourth part F4 contained two concepts and 10 questions. When [F1, F2, F3, F4] was equal to [0, 0, 0, 0], the crisp output value for learning achievement was 0.67. When [F1, F2, F3, F4] was equal to [6, 0, 0, 0], the crisp output value for learning achievement was 3. When [F1, F2, F3, F4] was equal to [6, 6, 0, 0], the crisp output value for learning achievement was 5.5. When [F1, F2, F3, F4] was equal to [6, 6, 8, 0], the crisp output value for learning achievement was 8. When [F1, F2, F3, F4] was equal to [6, 6, 8, 10], the crisp output value for learning achievement was 10.33. Table 3 shows the respective ranges of crisp output values for evaluating learning achievement.

Table 3. Ranges of crisp output values for evaluating learning achievement.
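A lookup from a crisp output value to the highest interval reached can be sketched as follows. The five anchor values are those quoted in the text for [0, 0, 0, 0] through [6, 6, 8, 10]; using them directly as range boundaries, with each upper boundary treated as inclusive, is an assumption that is consistent with the student examples in this article but is not a reproduction of Table 3.

```python
from bisect import bisect_left

# Crisp outputs quoted for [0,0,0,0], [6,0,0,0], [6,6,0,0], [6,6,8,0], [6,6,8,10].
ANCHORS = [0.67, 3.0, 5.5, 8.0, 10.33]
INTERVALS = ["F1", "F2", "F3", "F4"]


def reached_interval(la):
    """Return the highest interval reached, or None at or below the first anchor."""
    if la <= ANCHORS[0]:
        return None
    idx = bisect_left(ANCHORS, la)  # index of the first anchor >= la
    return INTERVALS[min(idx, len(INTERVALS)) - 1]


for value in (0.67, 2.16, 5.5, 7.8, 10.3):
    print(value, reached_interval(value))
```

Under this assumed mapping, a value of 0.67 indicates that no interval has been reached, and the first interval not yet completed marks the region in which the learning barrier point lies.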

Practical applications of the FL-GLH in finding student learning barrier points

The effectiveness of the FL-GLH for finding student learning barrier points and evaluating learning achievement was evaluated in a practical implementation. Table 1 shows the test questions (Qk) for the four groups (Fi) of skill level concepts (Cj), where k = 1, …, 30, i = 1, …, 4, and j = 1, …, 11. A test question that included the skill level concept was coded as 1. All other test questions were coded as 0.

The FL-GLH was used to assess mathematics skills learning achievement in 68 students. The results obtained by the FL-GLH for ten representative students in the integer unit subject are shown below. The FL-GLH results were then compared with those obtained by a conventional scoring method. The 30 test questions had a value of 100 points. Learning achievement is conventionally assessed by the number of correct answers.
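The conventional score used as the baseline is simply the share of correct answers. A minimal sketch, assuming equal weight for all 30 questions and rounding to a whole percentage (the function name is illustrative):

```python
def conventional_percent(num_correct, num_questions=30):
    """Share of correctly answered questions, rounded to a whole percent."""
    return round(100 * num_correct / num_questions)


# Numbers of correct answers reported for the ten representative students.
for n in (8, 9, 10, 26, 24, 15, 20, 23, 25, 29):
    print(n, conventional_percent(n))
```

This measure depends only on how many questions were answered correctly, not on which levels of the learning hierarchy they belong to.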

Of the 30 test questions, student 1 correctly answered eight questions (Q1, Q5, Q8, Q10, Q15, Q18, Q22, and Q29). The traditional interpretation would be that the student understood 27% of the tested concepts. However, Figure 5 shows that the learning achievement analysis by FL-GLH indicated that [F1, F2, F3, F4] was equal to [2, 2, 2, 2], and the crisp output value for learning achievement was 0.67. Based on the range of crisp output values in Table 3, student 1 did not understand any of the tested concepts. The teacher concluded that student 1 needed an improved understanding of concepts C1–C11 and inferred that the student answered test questions by guessing. This example demonstrates that the FL-GLH assessed the ability to answer questions correctly and identified learning barrier points. In contrast, the traditional scoring method overestimates learning achievement based on the number of correct answers and cannot find learning barrier points.

Figure 5. Evaluating the learning achievement of [2, 2, 2, 2] by FL-GLH.

Of the 30 test questions, student 2 correctly answered nine questions (Q1, Q2, Q4, Q7, Q8, Q13, Q16, Q19, and Q28). The traditional interpretation would be that the student understood 30% of the tested concepts. However, Figure 6 shows that, according to the learning achievement analysis by the FL-GLH, [F1, F2, F3, F4] was equal to [3, 2, 3, 1], and the crisp output value for learning achievement was 2.16. According to the range of crisp output values in Table 3, student 2 understood the concepts in interval F1 (concepts C1–C3), approximately concepts C2 and C3. The interpretation by the teacher was that student 2 needed an improved understanding of concepts C3–C11. According to the Gagné learning hierarchy theory, the student answered two questions correctly in interval F2, but understanding of the concepts was still poor. Additionally, the student may have guessed the correct answers for three questions in interval F3 and for one question in interval F4. The traditional scoring method overestimates learning achievement based on the number of correct answers.

Figure 6. Evaluating the learning achievement of [3, 2, 3, 1] by FL-GLH.

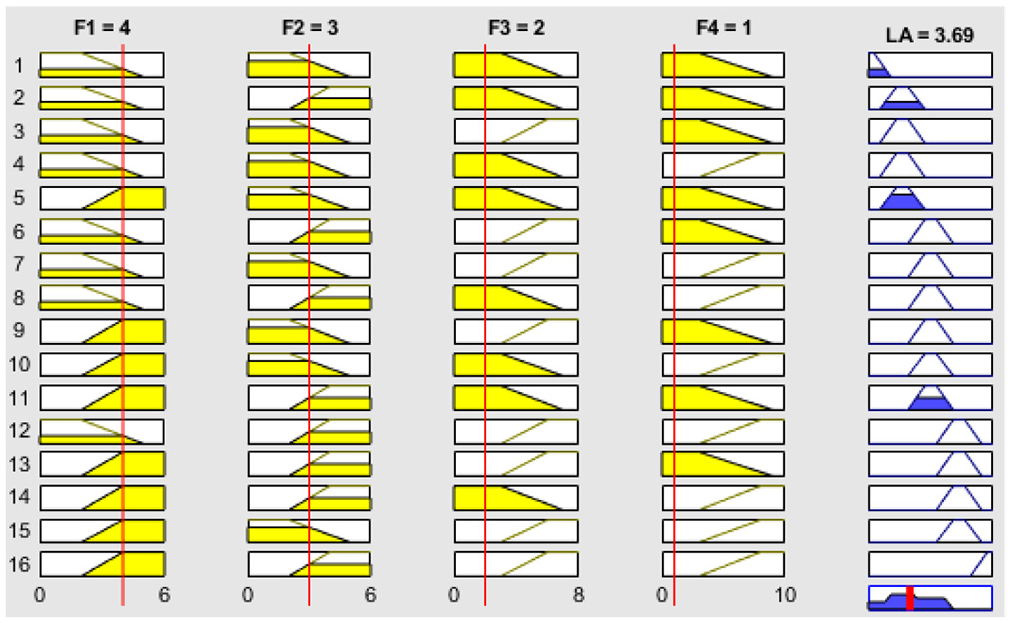

Of the 30 test questions, student 3 correctly answered 10 questions (Q1, Q2, Q4, Q6, Q7, Q8, Q9, Q15, Q20, and Q29). The traditional interpretation would be that the student understood 33% of the tested concepts. However, Figure 7 shows that, according to the learning achievement analysis by FL-GLH, [F1, F2, F3, F4] was equal to [4, 3, 2, 1], and the crisp output value for learning achievement was 3.69. Based on the range of crisp output values in Table 3, student 3 understood the concepts up to interval F2 (concepts C4–C6), approximately concepts C4 and C5. The interpretation by the teacher was that student 3 needed an improved understanding of concepts C5–C11. According to Gagné learning hierarchy theory, the student may have correctly guessed the answers for two questions in interval F3 and for one question in interval F4. The results obtained by the FL-GLH were similar to the results obtained by using the number of correct answers to judge the ability of the student to answer test questions.

Figure 7. Evaluating the learning achievement of [4, 3, 2, 1] by FL-GLH.

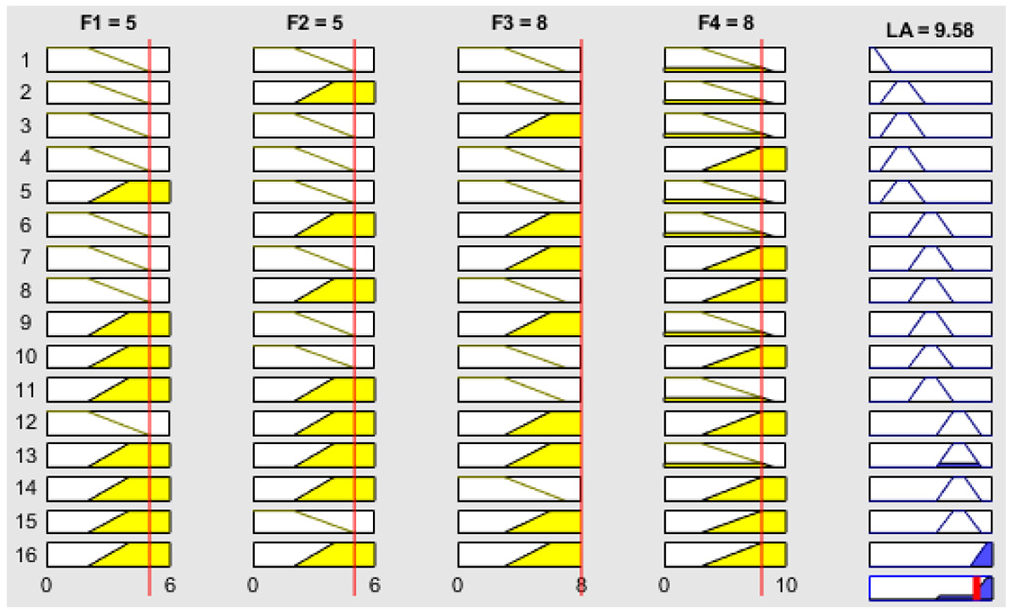

Of the 30 test questions, student 4 correctly answered 26 questions (Q1–Q5, Q7–Q11, Q13–Q20, and Q21–Q28). The traditional interpretation would be that the student understood 87% of the tested concepts. Figure 8 shows that, according to the learning achievement analysis by FL-GLH, [F1, F2, F3, F4] was equal to [5, 5, 8, 8], and the crisp output value for learning achievement was 9.58. Based on the range of crisp output values in Table 3, student 4 understood the concepts up to interval F4 (concepts C10–C11). The interpretation by the teacher was that student 4 needed an improved understanding of concept C11. The teacher inferred that student 4 made careless errors on the test questions in intervals F1 and F2. The FL-GLH results were consistent with the results of traditional scoring methods. Notably, in terms of assessing the ability to answer the test questions, the FL-GLH was comparable to an assessment based on the number of correctly answered questions.

Figure 8. Evaluating the learning achievement of [5, 5, 8, 8] by FL-GLH.

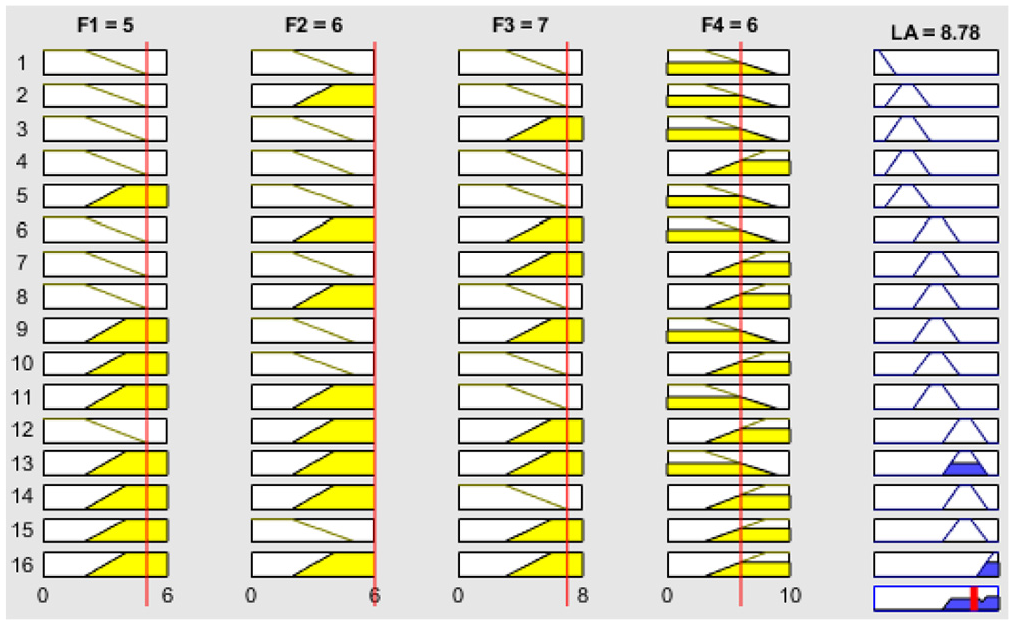

Of the 30 test questions, student 5 correctly answered 24 questions (Q1–Q5, Q7–Q12, Q13–Q19, Q21–Q23, and Q28–Q30). The traditional interpretation would be that the student understood 80% of the tested concepts. Figure 9 shows that, according to the learning achievement analysis by FL-GLH, [F1, F2, F3, F4] was equal to [5, 6, 7, 6], and the crisp output value for learning achievement was 8.78. Based on the range of crisp output values in Table 3, student 5 understood the concepts up to interval F4 (concepts C10–C11), particularly concept C10. The interpretation by the teacher was that student 5 needed an improved understanding of concepts C10–C11. The teacher inferred that student 5 made careless errors in answering test questions in intervals F1 and F3.

Figure 9. Evaluating the learning achievement of [5, 6, 7, 6] by FL-GLH.

Of the 30 test questions, student 6 correctly answered 15 questions (Q1–Q6, Q7–Q11, Q15, Q18, Q20, and Q27). The traditional interpretation would be that the student understood 50% of the tested concepts. Figure 10 shows that, according to the learning achievement analysis by FL-GLH, [F1, F2, F3, F4] was equal to [6, 5, 3, 1], and the crisp output value of learning achievement was 5.5. Based on the range of crisp output values in Table 3, student 6 understood the concepts up to interval F2 (concepts C4–C6) and around concept C6. The interpretation by the teacher was that student 6 needed an improved understanding of concepts C7–C11. According to the Gagné learning hierarchy theory, the student may have guessed the correct answers for three questions in interval F3 and one question in interval F4. The traditional scoring method overestimates learning achievement based on the number of correct answers.

Figure 10. Evaluating the learning achievement of [6, 5, 3, 1] by FL-GLH.

Of the 30 test questions, student 7 correctly answered 20 questions (Q1–Q6, Q7–Q12, Q13–Q17, Q21, Q25, and Q28). The traditional interpretation would be that the student understood 67% of the tested concepts. Figure 11 shows that, according to the learning achievement analysis by FL-GLH, [F1, F2, F3, F4] was equal to [6, 6, 5, 3], and the crisp output value for learning achievement was 6.89. Based on the range of crisp output values in Table 3, student 7 understood the concepts up to interval F3 (concepts C7–C9) and around concept C8. The interpretation by the teacher was that student 7 needed an improved understanding of concepts C8–C11. According to the Gagné learning hierarchy theory, the student may have guessed the correct answers to three questions in interval F4. The FL-GLH results were consistent with the results obtained by using the number of correct answers to judge the ability of the student to answer test questions.

Figure 11. Evaluating the learning achievement of [6, 6, 5, 3] by FL-GLH.

Of the 30 test questions, student 8 correctly answered 23 questions (Q1–Q6, Q7–Q12, Q13–Q18, Q21, Q23, Q26, Q28, and Q30). The traditional interpretation would be that the student understood 77% of the tested concepts. Figure 12 shows that, according to the learning achievement analysis by FL-GLH, [F1, F2, F3, F4] was equal to [6, 6, 6, 5], and the crisp output value of learning achievement was 7.8. Based on the range of crisp output values in Table 3, student 8 understood the concepts up to interval F3 (concepts C7–C9) and around concept C9. The interpretation by the teacher was that student 8 needed an improved understanding of concepts C9–C11. According to the Gagné learning hierarchy theory, the student answered five questions correctly in interval F4, but understanding of the concepts was still poor. The FL-GLH results were consistent with the results obtained by using the number of correct answers to judge the ability of the student to answer test questions.

Figure 12. Evaluating the learning achievement of [6, 6, 6, 5] by FL-GLH.

Of the 30 test questions, student 9 correctly answered 25 questions (Q1–Q6, Q7–Q12, Q13–Q19, and Q21–Q26). The traditional interpretation would be that the student understood 83% of the tested concepts. Figure 13 shows that, according to the learning achievement analysis by FL-GLH, [F1, F2, F3, F4] was equal to [6, 6, 7, 6], and the crisp output value for learning achievement was 8.78. Based on the range of crisp output values in Table 3, student 9 understood the concepts up to interval F4 (concepts C10–C11) and around concept C11. The interpretation by the teacher was that student 9 needed an improved understanding of concept C11. The teacher inferred that student 9 made careless mistakes in the answers to test questions in interval F3.

Figure 13. Evaluating the learning achievement of [6, 6, 7, 6] by FL-GLH.

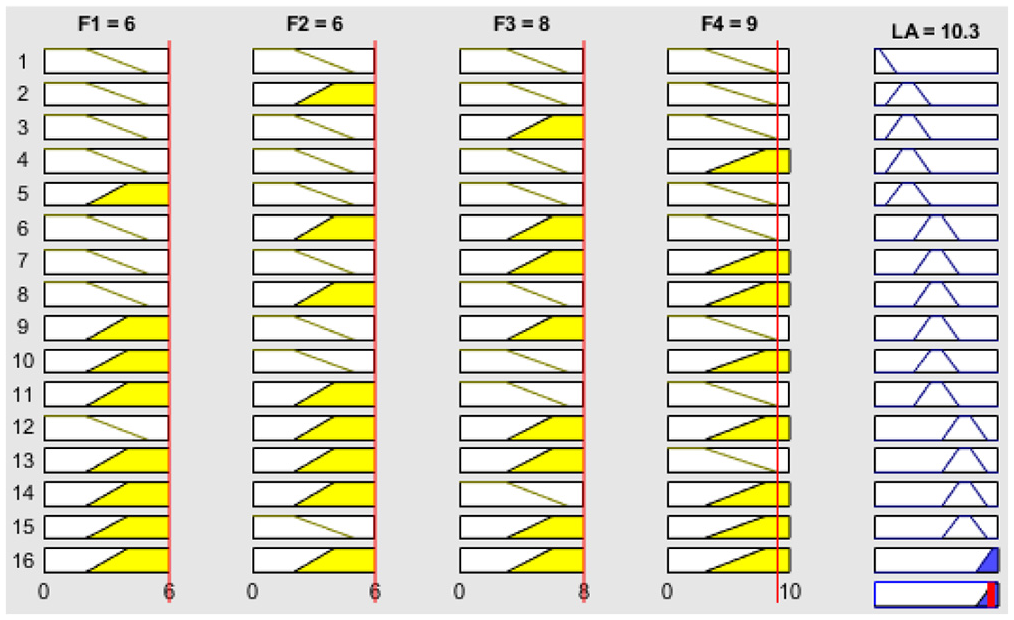

Of the 30 test questions, student 10 correctly answered 29 questions (Q1–Q6, Q7–Q12, Q13–Q20, Q21–Q28, and Q30). The traditional interpretation would be that the student understood 97% of the tested concepts. Figure 14 shows that, according to the learning achievement analysis by FL-GLH, [F1, F2, F3, F4] was equal to [6, 6, 8, 9], and the crisp output value for learning achievement was 10.3. Based on the range of crisp output values in Table 3, student 10 understood the concepts up to interval F4 (concepts C10–C11) and around C11. The teacher inferred that student 10 made careless errors in the answers to test questions in interval F4. The FL-GLH results were very similar to those obtained by traditional scoring methods.

Figure 14. Evaluating the learning achievement of [6, 6, 8, 9] by FL-GLH.
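The ten reported cases can be collected and cross-checked with a short sketch. All figures are copied from the student descriptions above; the only processing is a consistency check that the four group counts add up to the number of correct answers, plus the conventional percentage for side-by-side reading. The function name and the layout of the printed lines are illustrative.

```python
# (student, correct answers, [F1, F2, F3, F4], crisp learning achievement)
RESULTS = [
    (1, 8, [2, 2, 2, 2], 0.67),
    (2, 9, [3, 2, 3, 1], 2.16),
    (3, 10, [4, 3, 2, 1], 3.69),
    (4, 26, [5, 5, 8, 8], 9.58),
    (5, 24, [5, 6, 7, 6], 8.78),
    (6, 15, [6, 5, 3, 1], 5.5),
    (7, 20, [6, 6, 5, 3], 6.89),
    (8, 23, [6, 6, 6, 5], 7.8),
    (9, 25, [6, 6, 7, 6], 8.78),
    (10, 29, [6, 6, 8, 9], 10.3),
]


def check_and_format(results, num_questions=30):
    """Verify each row and return one formatted line per student."""
    lines = []
    for student, correct, groups, la in results:
        # The group counts must add up to the total number of correct answers.
        assert sum(groups) == correct, f"student {student}: counts do not add up"
        percent = round(100 * correct / num_questions)
        lines.append(f"{student:>2}  {correct:>2}  {percent:>3}%  {groups}  {la}")
    return lines


for line in check_and_format(RESULTS):
    print(line)
```

Reading the rows together shows where the two measures diverge most: for students 1 and 2 the percentage of correct answers is well above zero, whereas the crisp output stays at or near the lowest range.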

Discussion

The above results obtained in practical applications indicate that the FL-GLH can accurately find student learning barrier points and can provide teachers and students with suggestions for improving learning efficiency. The experimental sites were elementary schools in Taiwan.

The FL-GLH for assessing student mathematics skills has the following four notable requirements.

The first requirement is that the test questions for each level of a mathematics unit must be carefully designed according to Gagné learning hierarchy theory and according to the expertise of the elementary school teacher in assessing student learning achievement. In a previous work by the authors,15 the test questions used for mathematics assessment showed an expert validity of 0.8, a Cronbach alpha value of 0.8 for internal reliability, a test–retest reliability of 0.91, and a parallel-form reliability of 0.9.

The second requirement is that the test questions for each level of a mathematics unit must be selected and grouped by the learning hierarchy path.

The third requirement is that the membership functions for fuzzifying the inputs and the output must be selected and designed. Fuzzy rules for assessing learning achievement must also be built according to the expertise of the teacher.

The fourth requirement of the fuzzy logic system for assessing mathematics skills is that the min-max inference method must be used to transform the fuzzy inference output, and the center of gravity method must be used to convert the fuzzy values into crisp output values for learning achievement.

Conclusions

By integrating fuzzy logic and Gagné learning hierarchy theory, the FL-GLH provided a human-like reasoning method for assessing the mathematics skills of students and finding their learning barrier points. Fuzzy logic was used to model the human reasoning process and to build relationships between skill level concepts and learning achievement. Gagné learning hierarchy was used to develop the learning paths and test questions. The main contribution of this study is the development of an FL-GLH that can accurately identify learning barrier points and can provide teachers and students with suggestions for improving learning ability in a real-world classroom. Practical applications in elementary schools showed that, compared to the traditional method of using test scores to assess mathematics skills, the FL-GLH is superior in terms of accuracy in assessing student learning achievement. Since the learning barrier points of students were validated by their mathematics teachers, we conclude that the proposed FL-GLH has excellent performance in mathematics skills assessment and in finding learning barrier points. In future work, the authors will collaborate with education experts to design learning hierarchy diagrams and fuzzy sets for different learning units and to gather consistent fuzzy rules for improving student learning ability in a real-world classroom.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported in part by the Ministry of Science and Technology, Taiwan, R.O.C., under Grant Numbers MOST 104-2410-H-153-011, MOST 106-2410-H-153-013, MOST 107-2410-H-153-008-MY2, and MOST 109-2221-E-153-005-MY3.