Abstract

The evaluation of the competence of personnel working with laboratory animals is currently a challenge. Directive 2010/63/EU establishes that staff must have demonstrated competence before they perform unsupervised work with living animals. Nevertheless, there is a lack of research into education and training in laboratory animal science, and the establishment of assessment strategies to confirm researchers’ competence remains largely unaddressed.

In this study, we analysed the implementation of a practical assessment strategy over three consecutive years (2018–2021) using the Objective Structured Laboratory Animal Science Exam (OSLASE), which we developed previously to assess professional competence. The interrater reliability (IRR) was determined based on the assessors’ rating of candidates’ performance at different OSLASE stations using weighted kappa (Kw) and percentage of agreement. Focus group interviews were conducted to assess the acceptability of the OSLASE among trainees.

There was a moderate-to-good Kw for the majority of the scales’ items (0.45 ± 0.13 ≤ Kw ≤ 0.79 ± 0.20). The percentages of agreement were also acceptable (≥75%) for all scale items but one. Trainees reported that the OSLASE had a positive impact on their engagement during practical training, and that it clarified the standards established for their performance and the skills that required improvement. These preliminary results illustrate how assessment strategies, such as the OSLASE, can be implemented in a manner that is useful for both assessors and trainees.

Objective Structured Laboratory Animal Science Exam (OSLASE) to ensure the professional competence of researchers in laboratory animal science

Driven by an increased awareness of the importance of ensuring researchers’ professional competence in animal experimentation to safeguard animal welfare, interest in the design and implementation of innovative assessment methods has been increasing notably. This increase is motivated not only by the requirements of the revised European legislation,1,2 but also by the need to guarantee the application of the 3Rs in practice. 3

Directive 2010/63/EU establishes that staff working with living animals must be ‘adequately educated and trained’ and ‘supervised in the performance of their tasks until they have demonstrated the requisite competence’. 2 The associated working document for a common education and training framework further clarifies that the assessment of practical competence should rely on observations of trainees while they perform the procedures. 1 Such regulations call for greater awareness among education and training supervisors of competence and assessment constructs in the laboratory animal science (LAS) context. 4 Ensuring professional competence in LAS through observational assessments is critical at different stages of learning; for example, as a final examination at the conclusion of a LAS training period, before the beginning of autonomous work, or as a formative work-integrated assessment as part of continuing professional development (CPD).

The field of LAS can draw on the extensive knowledge regarding the assessment of professional competence developed in the context of medical and health professional education. In these fields, driven by specific professional regulations and the agenda of patient safety, concepts such as competence, performance, and the validity and reliability of the assessment methods, have been largely explored.5–7 Assessments are crucial elements in the educational process and play a relevant role in all phases of professional development. 8 Moreover, assessment is a key element in the process of learning, having a positive impact and affecting learners in regard to how they plan and carry out their studies.6–9

Regardless of the timing of their application, the format of observational assessments is a crucial element for ensuring that they are capable of measuring what is intended. In LAS, there is a need for the observational measurement of professional performance other than cognitive knowledge, which is suitably assessed using traditional formats, such as written or oral tests. In medical and health science education, there is consolidated experience in alternative formats of observational exams, such as the Objective Structured Clinical Examination (OSCE), the mini-clinical evaluation, simulations and direct observation in clinical workplaces.6,8,10 In this sense, the format and design of the assessments must be planned to allow the capture of psychomotor dimensions (technical ability) and, if possible, emotional/attitudinal dimensions (such as professionalism or empathy with animals).11,12

Although LAS training programmes are increasingly focusing on professional competence in practice, assessments focused on abilities in this domain are still lacking. LASA was the first organization to publish instruments to assess practical competence in LAS, namely the Direct Observation of Practical/Procedural Skills (DOPS). 13 More recently (starting in late 2021), the Education and Training Platform for Laboratory Animal Science (ETPLAS) website has been disseminating DOPS sheets developed by the working groups, to be used as examples in assessments. 14

As part of an ongoing project implemented at our institution aimed at developing an Objective Structured Laboratory Animal Science Exam (OSLASE) of researchers’ competence, we have previously developed instruments to capture the performance of researchers while handling and performing substance administrations in laboratory rodents.11,15 In this study, we continued the development process of this tool by evaluating the interrater reliability of the assessors and the acceptability of the OSLASE for those who have taken the examination. These are two important elements for better understanding the relevance and impact of the assessment. Interrater reliability is critical for the reliability of any assessment. As the OSLASE is quite different from the existing examinations, understanding how participants experience the examination should reveal its impact on their motivation and engagement, exam preparation, and development of competence.

Material and methods

Study design and setting

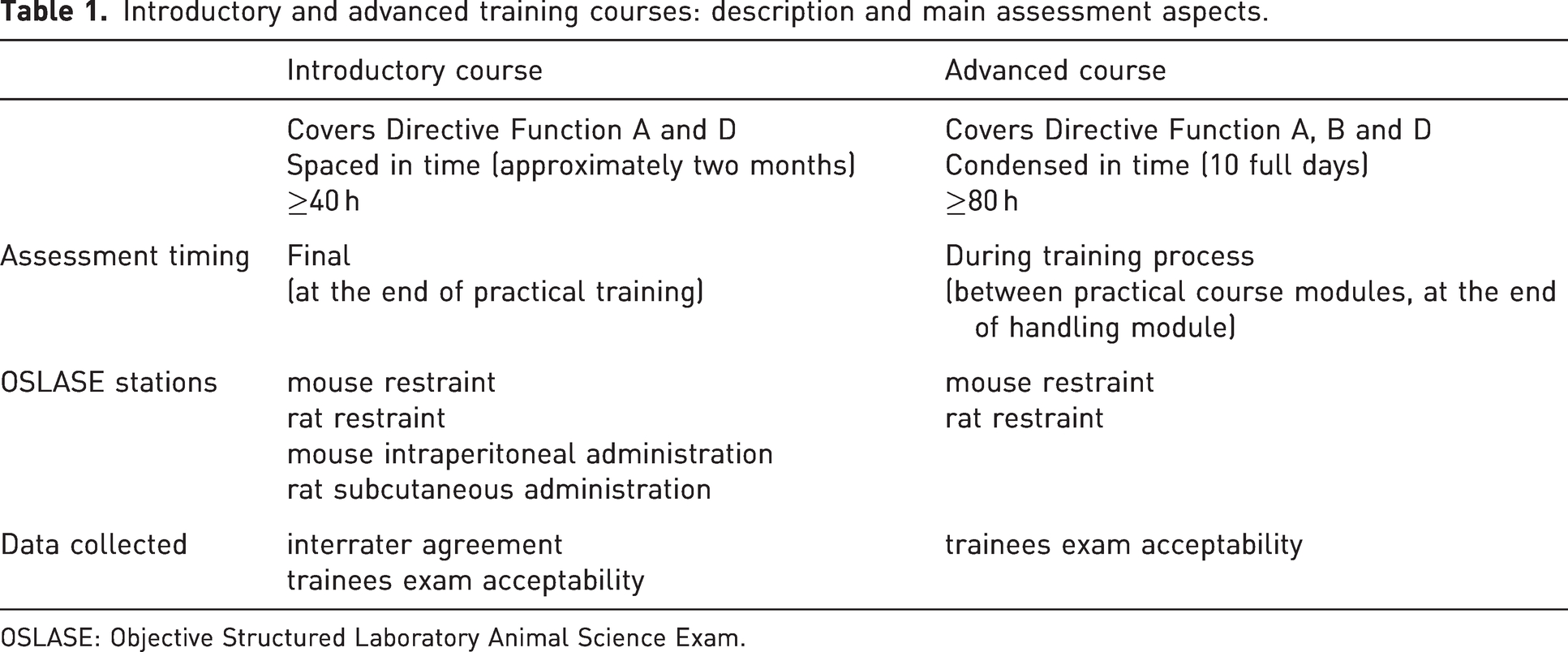

This study included participants who completed a LAS training course (see Table 1 for details of the two course formats). The OSLASE is an observational examination designed to assess LAS skills performance and competence inspired by the OSCE in health professional education.11,16 The exam consisted of four manned stations which assessed the performance of the participants during essential and routine procedures using laboratory rodents; that is, mouse restraint, rat restraint, mouse intraperitoneal administration and rat subcutaneous administration. All stations were timed and involved the handling and restraint of a live rodent (mouse restraint and rat restraint), as well as the execution of procedures using mannequins (mouse intraperitoneal administration and rat subcutaneous administration). Table 1 depicts the stations that integrated the OSLASE in the two course formats. In both contexts (introductory and advanced courses), performance was assessed by trained examiners supported by a global rating scale (GRS) specifically developed for these procedures. 15

Table 1. Introductory and advanced training courses: description and main assessment aspects.

OSLASE: Objective Structured Laboratory Animal Science Exam.

Data from OSLASE assessments were collected over three years, from 2018 to 2021.

At our institution, a pass mark in the OSLASE (practical assessment) is necessary to successfully conclude the LAS course. Those who fail need to retake the practical training. In the introductory course, trainees take the OSLASE and a written assessment at the end of training, and can choose between two OSLASE dates approximately one month apart. In the advanced course, the OSLASE is integrated into the rodent handling practical module, and participants can choose to undertake it at any time during the two sessions of this module. At both training levels, the trainees are assessed individually in a standardized scenario by a trained assessor. The main aspects of each of the training courses are summarized in Table 1.

Data collection from human participants (instrument application in the context of the courses and group interviews) was performed under approval from the Joint Ethics Committee of CHUP/ICBAS with reference numbers 2019/CE/P014(292/CETI/ICBAS) and 2021/CE/P013(P353/CETI/ICBAS). Informed consent was obtained from the candidates prior to data collection. The use of animals for teaching was covered by approval 2017-03 by the i3S Animal Welfare and Ethics Review Body.

OSLASE set-up

At each station, the assessors used a station-specific global rating scale (GRS) composed of 7 to 9 items with descriptors. The final item of each scale was the ‘global impression’ item. The items of all scales presented three performance-rating categories: fail, pass, and clear pass.

In introductory LAS courses, the candidates were assessed individually at the end of the training by the first and second authors of this study. The two assessors evaluated each candidate (n = 92) independently, at the same time, via direct observation of their performance. Not all candidates included in the sample completed the four stations. Some candidates failed scale items that resulted in a failed performance and the end of the exam (e.g. failing to pick up the animal from the cage). Furthermore, a few candidates received training for only one of the species; therefore, they were assessed only for that species (e.g. when training for work using mice, they performed mouse restraint and mouse intraperitoneal administration).

Evaluation of interrater agreement

The evaluation of the OSLASE for the purpose of this paper included assessing the agreement between the ratings of the two assessors, that is, the interrater reliability (IRR), in two ways: the percentage of absolute agreement (% of agreement) and the weighted kappa (Kw) statistic. These are recommended methods for determining assessor agreement because of the recognition of the importance of decisions in healthcare and clinical research supported by IRR results. 17 The percentage of absolute agreement between the two assessors was the number of concordant scores divided by the total number of scores. A range from 75% to 90% of absolute agreement is considered to be acceptable.17,18 The Kw, with linear weighting, was calculated for all scale items of the four global rating scales and for the global impression item (the last item of each scale). Kw allows the weighting of disagreements differently, which is especially useful in studies applying scales with ordered rating categories, so that it confers greater emphasis to large differences between ratings than it does to small differences.19–21 Kappa values representing the agreement between raters were interpreted according to the following scheme: <0.20, poor; 0.21–0.40, fair; 0.41–0.60, moderate; 0.61–0.80, good; and 0.81–1.00, very good. 22 The analyses were performed using the SPSS 21 statistical software package (SPSS Inc., Chicago, USA).
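As an illustration of the two agreement measures described above, the following is a minimal Python sketch, not the SPSS procedure used in this study. The ratings are hypothetical, the function names are illustrative, and the handling of values that fall between the published interpretation bands (e.g. between 0.20 and 0.21) is an assumption of the sketch.

```python
from collections import Counter

# Ordered rating categories used on the OSLASE scales.
CATEGORIES = ["fail", "pass", "clear pass"]


def percent_agreement(rater_a, rater_b):
    """Number of concordant scores divided by the total number of scores."""
    matches = sum(a == b for a, b in zip(rater_a, rater_b))
    return matches / len(rater_a)


def linear_weighted_kappa(rater_a, rater_b, categories=CATEGORIES):
    """Cohen's kappa with linear disagreement weights |i - j| / (k - 1)."""
    k = len(categories)
    n = len(rater_a)
    index = {c: i for i, c in enumerate(categories)}
    observed = Counter((index[a], index[b]) for a, b in zip(rater_a, rater_b))
    marg_a = Counter(index[a] for a in rater_a)
    marg_b = Counter(index[b] for b in rater_b)
    obs_dis = 0.0  # observed weighted disagreement
    exp_dis = 0.0  # weighted disagreement expected by chance
    for i in range(k):
        for j in range(k):
            w = abs(i - j) / (k - 1)
            obs_dis += w * observed[(i, j)] / n
            exp_dis += w * (marg_a[i] / n) * (marg_b[j] / n)
    if exp_dis == 0:
        return None  # undefined: both raters used one and the same category
    return 1 - obs_dis / exp_dis


def interpret(kappa):
    """Map kappa to the bands quoted in the text (boundary handling assumed)."""
    if kappa <= 0.20:
        return "poor"
    if kappa <= 0.40:
        return "fair"
    if kappa <= 0.60:
        return "moderate"
    if kappa <= 0.80:
        return "good"
    return "very good"


# Hypothetical ratings of ten performances on one scale item.
rater_a = ["pass", "pass", "clear pass", "fail", "pass",
           "clear pass", "pass", "fail", "clear pass", "pass"]
rater_b = ["pass", "clear pass", "clear pass", "fail", "pass",
           "pass", "pass", "pass", "clear pass", "pass"]

agreement = percent_agreement(rater_a, rater_b)
kappa = linear_weighted_kappa(rater_a, rater_b)
print(f"% agreement = {agreement:.0%}, Kw = {kappa:.2f} ({interpret(kappa)})")
```

With linear weights, a disagreement between ‘fail’ and ‘clear pass’ counts twice as much as a disagreement between adjacent categories, which is why this weighting suits ordered rating scales.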

Evaluation of acceptability

To capture a variety of perceptions of the acceptability of the OSLASE at both course levels, thirteen LAS course trainees participated in the focus group interviews: seven from two different editions of the advanced course and the remaining six from three different introductory courses. Eligible trainees had successfully completed the course at either level (introductory or advanced) in the preceding 24 months and had received a certificate. Participants were invited by email. All respondents who accepted the invitation were included in the interviews. They were informed beforehand about the study aim and conditions and gave their consent to participate. Focus groups were chosen to explore trainees’ views in greater depth. The interview guide was developed by the study authors and was adapted to the different courses (see Table 1). Because of COVID-19 restrictions, the interviews were carried out online (via Zoom).

A total of four focus group interviews were conducted over a seven-month period. Trainees were allocated to focus groups according to course type (two focus groups per type) and availability. The interviews lasted approximately 90 min, with an initial introduction followed by a discussion facilitated by a moderator (last author); an assistant moderator (third author) took notes during the interviews. Neither the moderator nor the assistant moderator was involved in the administration of the OSLASE. The participants were first given an introduction to the purpose of the study, followed by the scripted questions. The participants discussed their own experiences freely, with prompts when necessary. With the participants’ permission, all sessions were audio and video recorded and the content was transcribed verbatim. Participant identification was pseudonymized. The verbatim transcripts were coded using the NVivo software (QSR International 2022) version 12 for qualitative analysis. The first and last authors decided on a first set of codes and coded each transcription separately, before meeting to confirm the coding and review the quotations. At the end of the procedure, five categories were identified (Choice of timing to perform the exam; Effect on trainee practical preparation before performing the OSLASE; Experience and perception; OSLASE impact on trainee learning process; and OSLASE relevance and importance).

Results

Determining the agreement between raters

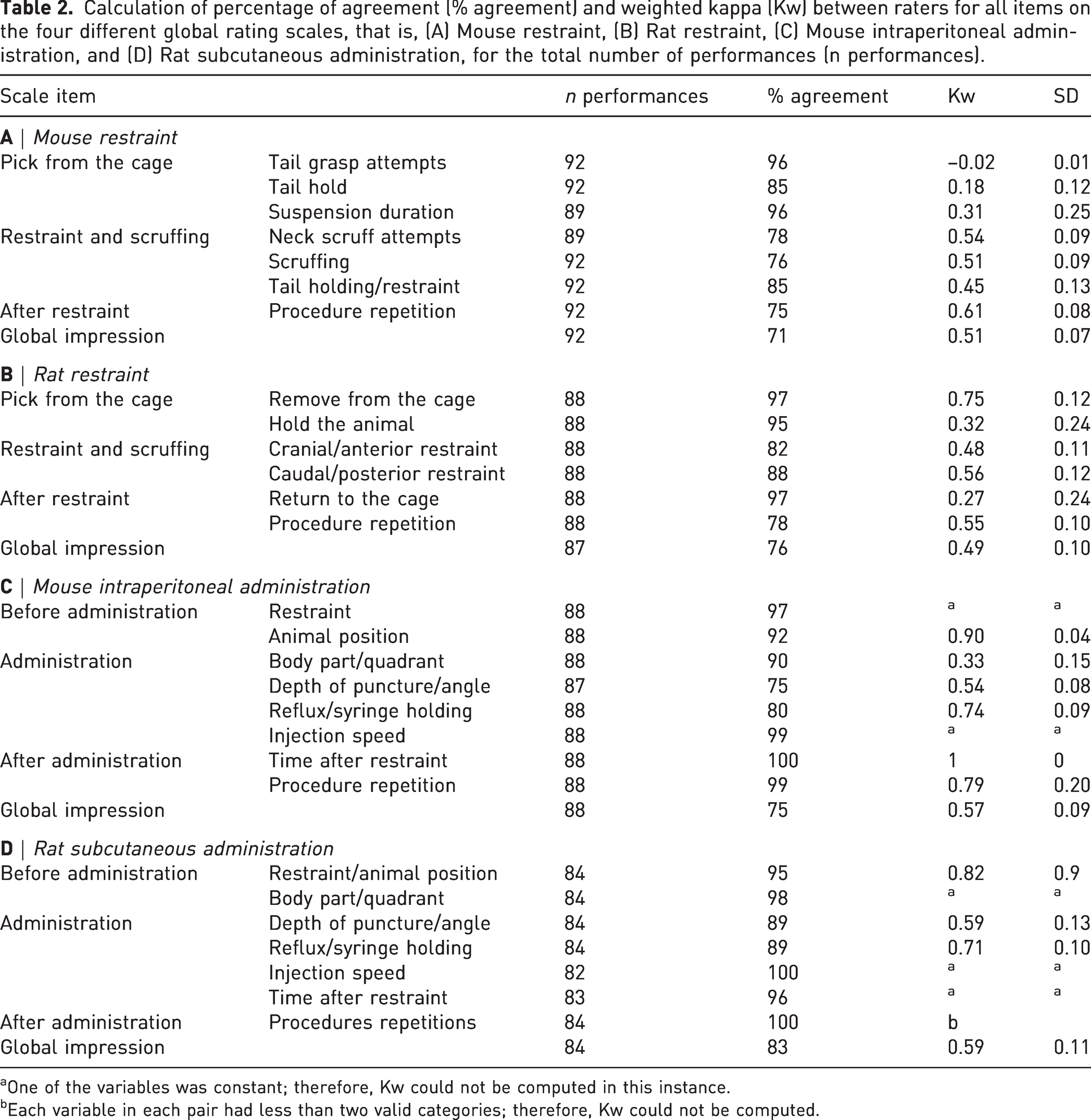

The mouse restraint scale items related to ‘tail grasp attempts’, ‘tail hold’, and ‘suspension duration’ showed high percentages of agreement between raters (≥85%), whereas the Kw revealed poor agreement (below 0.40). The remaining items of this scale showed moderate-to-good Kw values (0.45 ± 0.13 ≤ Kw ≤ 0.61 ± 0.08). The lowest percentage of agreement for all items of this scale was obtained for the ‘global impression’ item (71%), although it presented a moderate Kw value (0.51 ± 0.07) (Table 2, part A).

Table 2. Percentage of agreement (% agreement) and weighted kappa (Kw) between raters for all items on the four different global rating scales, that is, (A) Mouse restraint, (B) Rat restraint, (C) Mouse intraperitoneal administration, and (D) Rat subcutaneous administration, for the total number of performances (n performances).

a: One of the variables was constant; therefore, Kw could not be computed in this instance.

b: Each variable in each pair had less than two valid categories; therefore, Kw could not be computed.

Regarding the rat restraint scale, the Kw values for interrater agreement for the items ‘hold the animal’ (0.32 ± 0.24) and ‘return to the cage’ (0.27 ± 0.24) were low, despite the fact that the same items exhibited high percentages of agreement (>95%). For the remaining scale items, including the ‘global impression’ item, there was moderate interrater agreement (0.48 ± 0.11 ≤ Kw ≤ 0.56 ± 0.12), with percentages ranging from 76% to 88%. The first scale item, ‘remove the animal from cage’, showed a good coefficient (Kw = 0.75 ± 0.12) and percentage of agreement (97%) (Table 2, part B).

Regarding the mouse intraperitoneal administration scale, it was not statistically possible to calculate kappa values for the restraint and injection speed items, although their percentages of agreement were acceptable (≥97%). One item on this scale, ‘body part/quadrant’, exhibited a low interrater agreement (Kw = 0.33 ± 0.15) and a high percentage of agreement (90%). The remaining scale items showed moderate (‘depth of puncture/angle’ and ‘global impression’), good (‘animal position’ and ‘reflux/syringe holding’) and very good (‘animal position’ and ‘time after restraint’) kappa values, with percentages of agreement ranging between 75% and 100% (Table 2, part C).

For some items on the rat subcutaneous administration scale (‘body part/quadrant’, ‘injection speed’, ‘time after restraint,’ and ‘procedure repetitions’), similarly to intraperitoneal administration, it was not possible to calculate kappa because of the statistical assumptions underlying the Kw calculation. Nevertheless, those same items showed acceptable percentages of agreement (96%–100%). The kappa of the remaining items was moderate (‘depth of puncture/angle’ and ‘global impression’) and good (‘restraint/animal position’ and ‘reflux/syringe holding’). The percentages of agreement for these scale items ranged between 83% and 95% (Table 2, part D).

The ‘global impression’ item of each scale exhibited moderate values of kappa and, with the exception of the mouse restraint scale, an acceptable percentage of agreement: global impression for the mouse restraint scale, Kw = 0.51 ± 0.07 and 71%; global impression for the rat restraint scale, Kw = 0.49 ± 0.10 and 76%; global impression for the mouse intraperitoneal scale, Kw = 0.57 ± 0.09 and 75%; and global impression for the rat subcutaneous administration scale, Kw = 0.59 ± 0.11 and 83% (Table 2).

OSLASE acceptability among the trainees

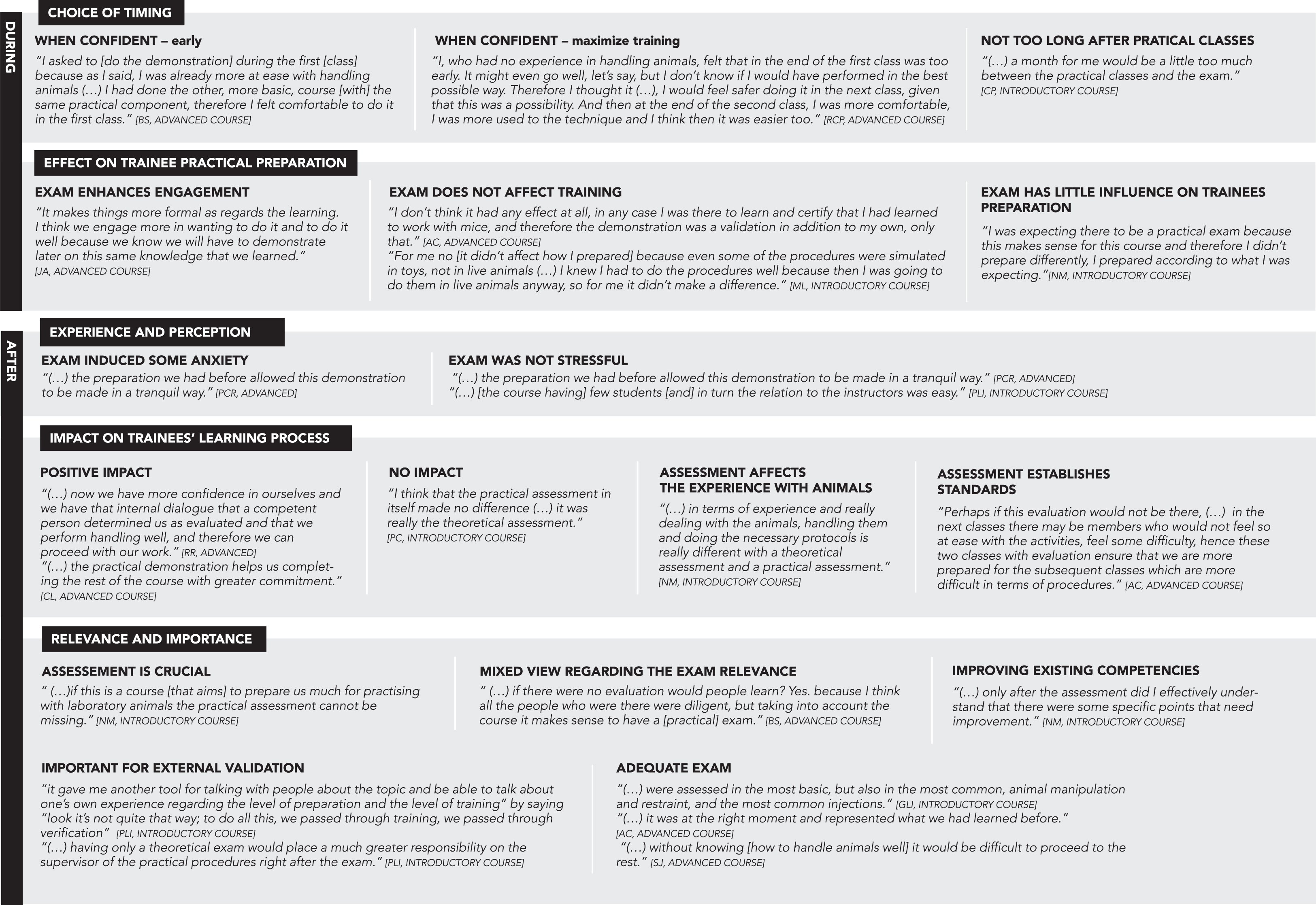

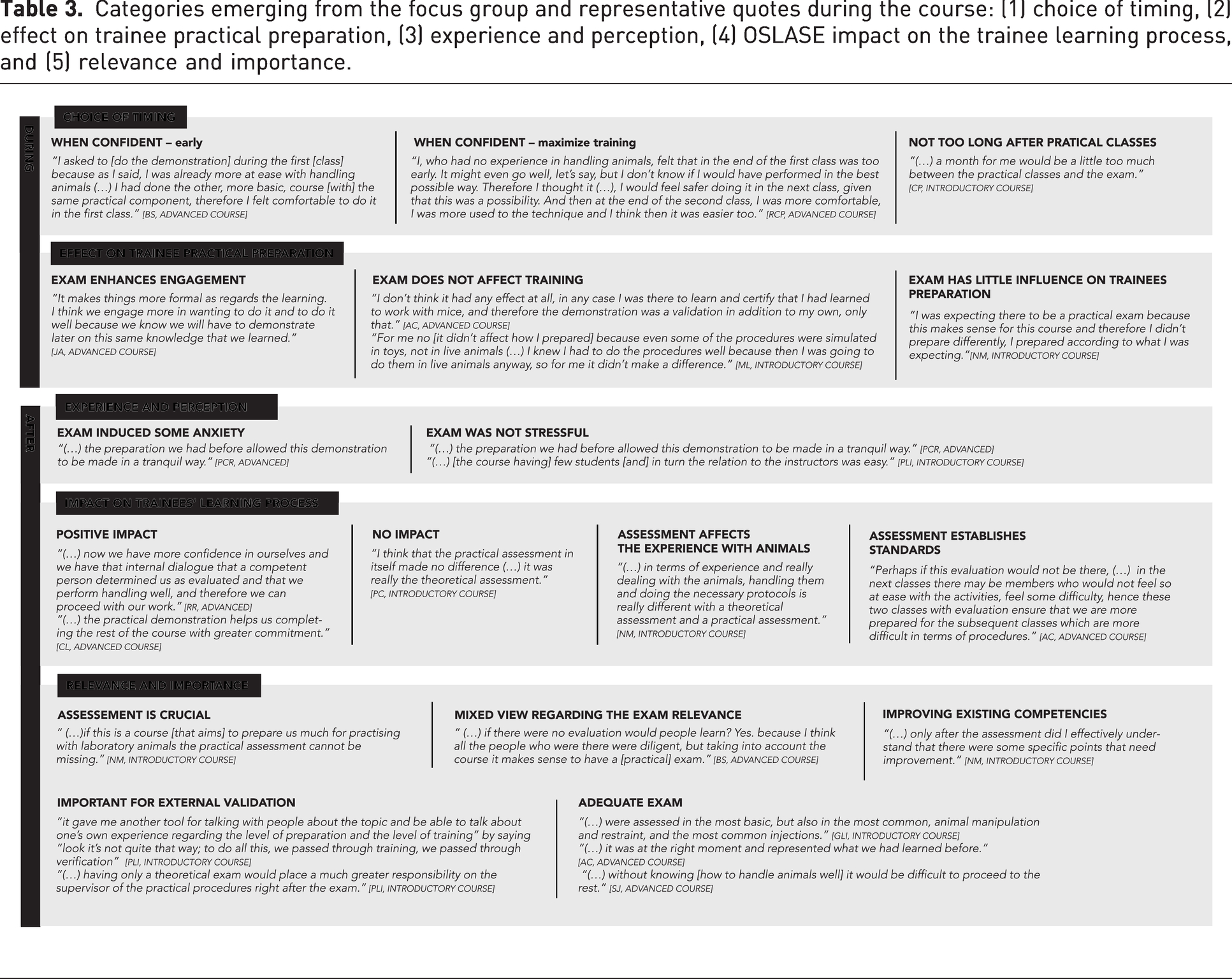

We considered that we reached saturation (i.e. that new interviews no longer added perspectives that had not emerged in previous interviews) in the four focus groups, in which both course types (introductory and advanced) were represented. From the focus group interviews performed among former trainees, five main themes emerged related to the practical exam. Verbatim quotes for each category are given as examples in Table 3. Respondent pseudonym and course format are indicated at the end of each quote.

Table 3. Categories emerging from the focus groups, with representative quotes: (1) choice of timing, (2) effect on trainee practical preparation, (3) experience and perception, (4) OSLASE impact on the trainee learning process, and (5) relevance and importance.

(1) Choice of timing

When the advanced course participants were asked about the factors that determined their choice of timing to perform the OSLASE, the key factors were feeling confident and making the best use of the available time to practice before the exam. Without exception, interviewees from the introductory course reported having chosen to take the exam after they had concluded the training period with their tutor, rather than directly after the end of the practical classes. These students, whose practical training had been impacted by restrictions during the COVID-19 pandemic, also highlighted the need to balance avoiding too long a gap against having sufficient time to practice.

(2) Effect on trainee practical preparation before performing the OSLASE

Several of the interviewees from the advanced course stated that the existence of a formal practical exam enhanced their engagement in the practical class of animal handling. However, some participants argued that they were not approaching the learning any differently as a consequence of knowing they would have to pass an exam. Among the interviewees from the introductory course, there was also a widespread view that knowing there would be a formal practical exam had little influence on how they prepared and practiced. Students also mentioned that, because they were preparing for real life work with live animals, their preparation was not dependent on the administration of an exam.

(3) Experience and perception

Some respondents reported that they unavoidably felt anxious at having to perform a practical demonstration, whereas others highlighted the fact that they did not feel much pressure, as a consequence of how they had prepared and how the exam was carried out, with few trainees per instructor.

(4) OSLASE impact on the trainee learning process

Among the interviewees from the advanced course, for whom the OSLASE aimed to assess handling skills, the test took place during, rather than at the end of, the course; therefore, it was perceived to have a positive impact on the subsequent parts of the course by enhancing self-confidence. The assessment was found to provide more confidence and commitment during the learning process. An assessment performed between the first and the subsequent classes may also contribute to the levelling of the standard between students. Regarding whether the exam changed trainee perception, the opinions of the participants were divided: some considered that practical assessment did not have an impact, whereas others reported that it made a significant difference.

(5) OSLASE relevance and importance

Interviewees considered the OSLASE to be an essential component of the course, as it prepared them to perform practical work with animals, although it was also reported that students in general were motivated and would learn independently of the presence of a practical assessment. One participant highlighted its relevance from the perspective of responsibility regarding overseeing work with animals, in that not having a practical exam would require others, such as supervisors, to assess competence. Trainees in the introductory course considered the format to be adequate. Advanced course participants reported that the choice of the exam moment was adequate and contributed to the identification of specific improvement needs. Interestingly, one respondent discussed the relevance of a practical exam as a means to add authority to the argument that animal welfare is taken into account in the regulation of animal use, filling an existing gap in which their importance is undisputed but often not met in practice.

Discussion

The aim of this study was to explore the implementation of the OSLASE in LAS training, to evaluate the interrater agreement in the practical assessment and the acceptance of the exam by trainees.

The consistency of the agreement among raters provides valuable information regarding the quality of the assessment and its reliability. In particular, it reveals whether the raters agree on how to apply the instrument as a support for deciding on a candidate’s performance. Two statistical methods that have been used in health sciences17,23 were used to calculate the IRR: the percentage of agreement and Kw. Each measure has advantages and limitations: although the percentage of agreement is easily calculated and directly interpretable, it does not account for the possibility that raters agree by chance; in turn, the Kw formula corrects for this limitation, but is based on assumptions regarding rater independence and other factors that are rarely met in real-life data, which may result in an extreme underestimation of the level of agreement. 17 The results of this study revealed that the majority of the items on all four scales exhibited moderate-to-good values of kappa, as well as acceptable percentages of agreement for all items but one. The Kw and percentage of agreement showed similar results, thus confirming a tendency towards agreement, although for some scale items the two measures were not fully concordant regarding the magnitude of agreement. Previous studies have reported moderate kappa values and excellent percentages of agreement between human and automated raters. 24
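The divergence between the two measures for some items can be illustrated with a short Python sketch. The ratings below are hypothetical and are not the study data; the function name is illustrative. The sketch returns no kappa value when either rater used a single category, in line with the note to Table 2 that Kw could not be computed when a variable was constant.

```python
from collections import Counter

CATEGORIES = ["fail", "pass", "clear pass"]  # ordered rating categories


def agreement_and_kappa(rater_a, rater_b, categories=CATEGORIES):
    """Return (percent agreement, linear weighted kappa or None)."""
    n, k = len(rater_a), len(categories)
    idx = {c: i for i, c in enumerate(categories)}
    agreement = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Assumption of this sketch: kappa is reported as not computable
    # when either rater's ratings are constant.
    if len(set(rater_a)) < 2 or len(set(rater_b)) < 2:
        return agreement, None
    marg_a, marg_b = Counter(rater_a), Counter(rater_b)
    # Observed and chance-expected disagreement with linear weights.
    obs = sum(abs(idx[a] - idx[b]) for a, b in zip(rater_a, rater_b))
    obs /= (k - 1) * n
    exp = sum(abs(idx[a] - idx[b]) * marg_a[a] * marg_b[b]
              for a in categories for b in categories)
    exp /= (k - 1) * n * n
    return agreement, 1 - obs / exp


# Hypothetical item on which almost every performance is rated 'pass'.
skewed_a = ["pass"] * 18 + ["fail", "pass"]
skewed_b = ["pass"] * 18 + ["pass", "fail"]
print(agreement_and_kappa(skewed_a, skewed_b))

# Hypothetical item on which one rater never deviates from 'pass'.
constant_a = ["pass"] * 20
constant_b = ["pass"] * 19 + ["clear pass"]
print(agreement_and_kappa(constant_a, constant_b))
```

In the first case the raters agree on 18 of 20 performances, yet kappa is close to zero because nearly all of that agreement is expected by chance when one category dominates. This is one plausible reading of the items that combined a high percentage of agreement with a low Kw.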

Based on these results, there is room to enhance the IRR in the application of the OSLASE. One way of achieving this goal is to train the assessors further in how to apply the scale. Although the incorporation of continuous rater training into busy teaching schedules is challenging, it has been shown to considerably minimize disagreement, particularly for scale items that measure fine and very technical discriminations using various rating categories (such as the scales used in this study).25,26 In this study, performance was assessed over a three-year period and, although the assessors were very familiar with the GRSs, the quality of the data collected was not monitored over time to identify potential disagreements, which may have contributed to the limited IRR scores. Moreover, the scales were applied to a restricted number of performances in a controlled scenario (of training). The diversification of the context in which the instrument is applied, such as by including workplace assessments (of researchers performing the procedures as part of their work with animals) as part of a continuing professional development strategy, could enhance IRR.27,28

Going beyond the question of agreement, it is also relevant to consider the value of the OSLASE as a way to implement an assessment strategy that is able to evaluate the practical competence acquired by researchers during the training (for the advanced course format) or at the end of training (for the introductory format). This adapted OSCE type of exam introduced standardization and uniformity of the assessments for all trainees (independently of the course type). The existence of standardized instruments introduces predictability for the trainees, who know how they will be evaluated, and can obtain systematic feedback on their performance. The instruments applied at the stations, the GRSs, promote consistency, so that the manner in which the candidates’ performance is evaluated does not depend on who is performing the assessment.

Regarding the OSLASE acceptability, our findings revealed that the trainees accepted and recognized the validity of this type of structured practical assessment in the context of the LAS training course. Confidence in their own capacity seemed to be the key factor affecting the trainees’ decision of when to take the OSLASE. It is noteworthy that trainees also highlighted another time issue: the time that is needed to develop and reach a level of proficiency to succeed in the assessment. The trainees who were forced to postpone the OSLASE because of COVID-19 pandemic restrictions reported frustration with the delay of the assessment, recognizing that the exam should be adjusted to the training timeline, as they perceived an excessive time interval between the end of classes and the exam as problematic. The integration of the OSLASE in the course programme affected the preparation of the trainees: some respondents recognized that they felt more engaged and stimulated to invest in practical training. Furthermore, the trainees pointed out that assessments ensure that apprentices reach the expected practical learning outcomes. These findings are in line with research on the topic that supported the contention that assessment not only drives, but also has a positive impact on learning.6,8 Although the OSLASE is a relatively recent introduction in this training, a few respondents stated that, for them, a formal practical assessment was assumed and expected in these courses. Assessment was not a stressful experience for the majority of the respondents, which may be explained by the manner in which the experienced tutors, also in the role of assessors, drove the process. In fact, the assessor role being assumed by the tutors can be regarded as a limitation of this study, as it can bias the way candidates faced the OSLASE. However, the number of LAS tutors with expertise in teaching, supervising and assessing trainees is limited. The interviewees also identified the positive impact of the exam on the learning process, as reported previously in the literature. 9 The topic of how OSLASE helps the trainee to confirm the skills acquired emerged several times during the interviews, confirming the perception that assessment is an essential step in the consolidation and promotion of future practical learning.29,30 The trainees also reported that the OSLASE establishes a standard and defines the skills that students are expected to develop in subsequent sessions. They also felt that demonstrations contributed to the identification of skills that should be improved and to the support of the role of those overseeing workplace training, which are crucial features introduced by the assessment in the context of these courses.

The strategy described in this paper was implemented in two course types, one of which is accredited by FELASA. Accreditation bodies are important in the efforts to support researcher competence development and maintenance during their professional life. Our experience suggests that having all trainees go through the same examination format mitigates the risk that trainees below the desired level of competence will complete the course, thus contributing to course credibility. However, the assessment and verification of the professional competence of individuals working with living animals is a process and a topic that goes beyond the responsibilities of the accreditation bodies or even course organizers. To guarantee that personnel performing procedures in animals are competent is also the responsibility of the institutions or companies at which the animals are used, the animal facilities and licensing authorities. FELASA and other accreditation bodies may play a fundamental role in supporting the change from a traditional paradigm of assessment by challenging the course organizers to include more extensive observational assessments in their courses. These approaches are relevant not only as summative, but also as formative assessments.

Conclusion

To the best of our knowledge, this was the first study to report the impact of the implementation of a practical assessment strategy in a LAS training context. In particular, this study addressed the feasibility of the OSLASE by exploring the interrater agreement and investigating the acceptance of the practical exam by trainees. The results showed that there is room for improvement of the interrater agreement, although overall the raters agreed on the decisions regarding the competence of the candidates. The trainees recognized that the OSLASE promoted and guided their learning, increased their engagement and motivation during practical sessions, and served as a confirmation that relevant skills had been learned. Overall, the introduction of the OSLASE allows the measurement of practical competence, which goes beyond the cognitive knowledge assessed using traditional evaluation methods.

Footnotes

Acknowledgements

The authors would like to give a special acknowledgement to all course participants who collaborated in this study. The authors also thank Dr Patricio Costa, from the University of Minho, for statistical assistance, and Anabela Nunes, from the i3S Communication Unit, for the support provided regarding the infographics of this paper.

Data availability

The data used in this study were collected from human participants who provided consent for the use of the data for research and publication purposes, but not for sharing as open data. The raw data collected for IRR calculation are available upon request to the corresponding author.

Declaration of conflicting interests

The author(s) declared no conflicts of interest with respect to the research, authorship and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship and/or publication of this article.