Abstract

The translational value of osteoarthritis (OA) models is often debated because numerous studies have shown that animal models frequently fail to predict the efficacy of therapies in humans. In part, this failing may be due to the paucity of preclinical studies that include behavioral assessments in their metrics. Behavioral assessments of animal OA models can provide valuable data on the pain and disability associated with disease—sequelae of significant clinical relevance. Clinical definitions of efficacy for OA therapeutics often center on their palliative effects. Thus, the widespread inclusion of behaviors indicative of pain and disability in preclinical animal studies may contribute to greater success identifying clinically relevant interventions. Unfortunately, studies that include behavioral assays still frequently encounter pitfalls in assay selection, protocol consistency, and data/methods transparency. Targeted selection of behavioral assays, with consideration of the array of clinical OA phenotypes and the limitations of individual behavioral assays, is necessary to identify clinically relevant outcomes in OA animal models appropriately. Furthermore, to facilitate accurate comparisons across research groups and studies, it is necessary to improve the transparency of methods. Finally, establishing agreed-upon and clear definitions of behavioral data will reduce the convolution of data both within and between studies. Improvement in these areas is critical to the continued benefit of preclinical animal studies as translationally relevant data in OA research. As such, this review highlights the current state of behavioral analyses in preclinical OA models.

Introduction

In biomedical research, animal models are a crucial step in the translational pipeline. However, some discordance exists between animal studies and clinical trials. The predictive value of animal studies varies among the body's biologic systems,1–7 and currently, less than one in ten promising basic scientific discoveries significantly impact clinical practice within 20 years of discovery. 8 This trend extends to osteoarthritis (OA) research, where there has been little success in translating promising preclinical findings to clinical therapies. While shortcomings in preclinical research are not exclusively responsible for this problem, challenges in conducting animal studies can contribute to the lack of clinical impact.

OA presents as a collection of disease phenotypes with shared features. 9 Thus, developing models of OA requires balancing clinically relevant etiology, measurable sequelae, and the target OA phenotype for a potential therapy. Furthermore, preclinical researchers must identify critical OA symptoms without a direct means of communicating with their subjects. However, even in clinical studies, patient reports of pain can vary and be subject to confounding factors.10–14 Attempting to establish similar metrics in animals is nontrivial.

Behavioral assays should allow researchers to examine the symptomatic changes in animals in a controlled, repeatable manner. However, animal behavior is complex, quantifying behaviors is challenging, and inadequate reporting can make it difficult to compare behavioral data between studies. To be clear, behavioral analysis in animal models has been a powerful tool for preclinical researchers, as preclinical research affords a level of experimental control that is difficult to achieve in clinical studies. As such, animal models can provide a platform for in-depth studies of specific OA etiologies, and repeatable behavioral assay design, execution, and reporting would help to improve the robustness of these studies.

Just as a single OA animal model cannot equally replicate all OA phenotypes, so too “OA symptoms” cannot be adequately quantified by a single behavioral test. In her seminal book on behavioral phenotyping in mice, What's Wrong with My Mouse?, 15 Dr. Jacqueline Crawley describes the importance of putting multiple assays together in behavioral testing to form “an optimal constellation of behavioural tests to address specific hypotheses.” This includes considerations of animal numbers, strain, controls, timing, multiple uses and interactions with the same animal, and experimenter workload. As such, the primary objective of this review is to highlight findings from the most used behavioral assessments in the most common rodent OA models. In so doing, we aim to highlight how and why animal behavioral measures can deviate across OA models and experiments. In addition, we suggest steps that may improve the reliability of behavioral assays, since many factors can confound the outcome of behavioral assays. Most importantly, behavioral assays should aim to mitigate the number of possible variables influencing test outcomes and be transparent in the methodologies used. Improving the quality of behavioral assay design, execution, and reporting will benefit animal model utility, promoting robust assessment of OA diagnostics and therapies. Combined, this review serves to highlight the current state of behavioral analysis of OA-related pain and disability in rodent models.

Literature review criteria

A PubMed search was conducted for the following: osteoarthritis, animal model(s), mice OR mouse, rat OR rats, pain, disability, behavioral analysis, and ARRIVE guidelines. Additional detailed searches were conducted for common behavioral assessments of rodent OA models, including: gait analysis, open field test, running wheel, Rotarod, incapacitance meter, static weight bearing, mechanical allodynia, and Von Frey testing.

Intersection of OA model and behavioral assay selection

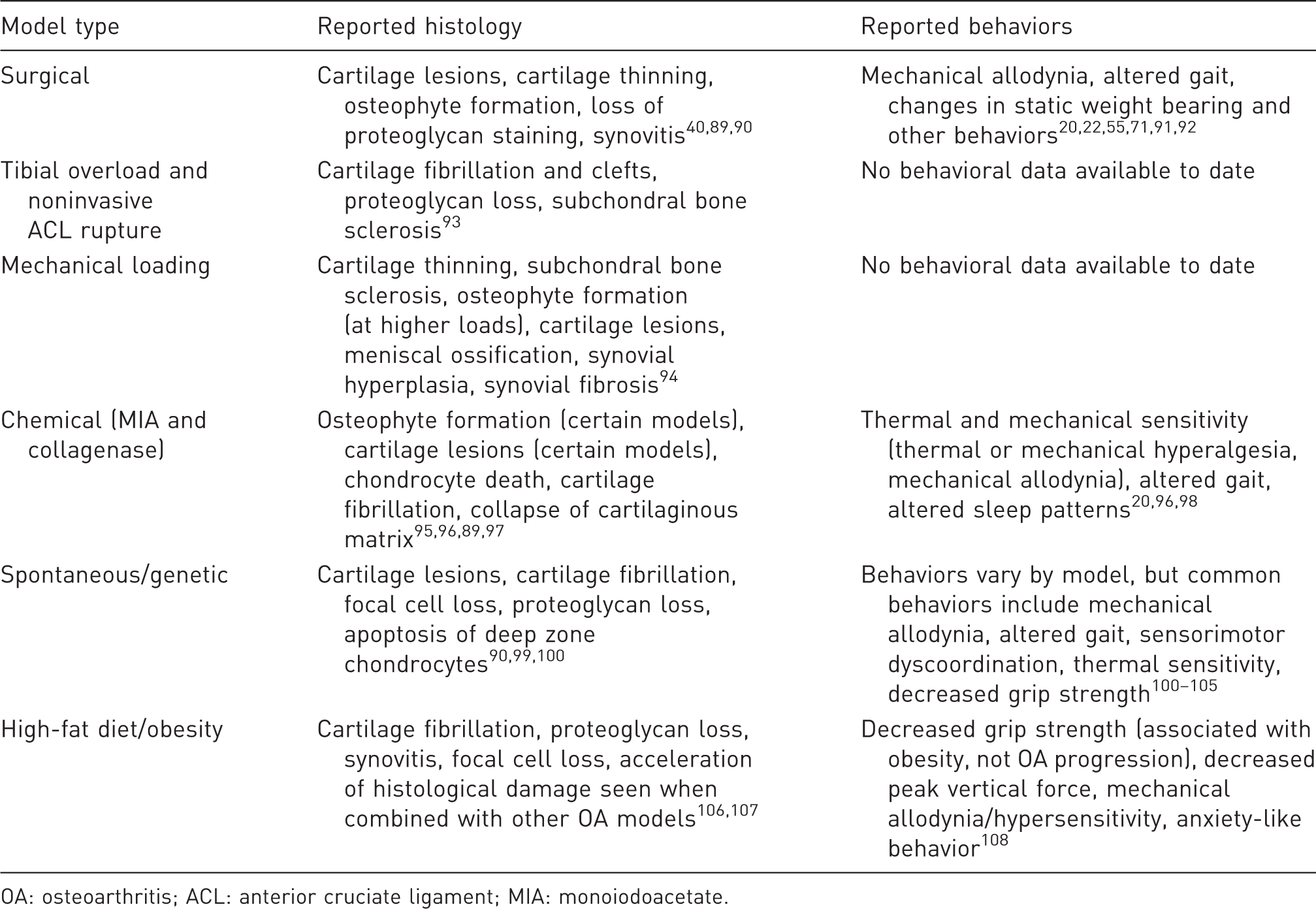

Common types of OA models and the behaviors reported within these OA phenotypes.

OA: osteoarthritis; ACL: anterior cruciate ligament; MIA: monoiodoacetate.

First, in humans, OA is often idiopathic, and while several underlying risk factors are known, there is significant heterogeneity in the etiology, progression, and presentation of OA. Complicating matters further, there is a lack of consensus on what qualifies as an OA model20,21 and which etiological roots different OA models are meant to represent (though there has been some effort to specify OA models as either primary or post-traumatic). 17 For example, despite prevalent use in studies of OA-related pain, chemical injection models are known to cause joint damage that is not necessarily characteristic of clinical OA. 20 An excellent review by Little and Zaki highlights the need for precision when extrapolating OA model data to the clinical condition, as the molecular mechanisms influencing pain and pathophysiology may be distinct for different OA etiologies. 20 Ultimately, there is not a “gold standard” OA model, nor should there be, given the heterogeneity of OA. Different models can provide insight into different aspects of OA. As such, there is a need for specificity in study design, clearly framing the intent behind, and limitations of, a particular OA model.

Applicability of different OA models to human OA

Intra-articular monoiodoacetate (MIA) injection is a widely used model of OA-related pain.22,23 The MIA model produces robust behavioral changes that appear before histological signs of OA, which is consistent with some patient reports.24,25 However, the reasons for clinical development of OA pain prior to joint damage are not fully understood, and whether the MIA model effectively recapitulates these pathways is not known. Additionally, while many OA patients report pain without severe joint damage, many individuals also present severe joint damage without pain.24,26 Finally, the MIA model shares no common etiology with clinical OA, causes severe structural histopathology without fully emulating human OA, 27 and has little transcriptional overlap with human OA cartilage. 28 Thus, the MIA model (and other chemically induced models) may have limited utility when extending the analysis beyond joint-related pain.

Conversely, joint trauma is a known clinical etiology of post-traumatic OA. As such, partial meniscectomy/transection, meniscus destabilization, and anterior cruciate ligament (ACL) transection are often used as OA models. Several surgically induced models of post-traumatic OA reasonably mimic clinical OA etiologies. 27 However, these models have sometimes failed to produce robust behavioral modifications.24,25 A study examining the MIA and partial medial meniscectomy models found partial meniscectomy resulted in less severe pain-related behaviors, despite similar levels of joint damage. 24 Finally, surgically induced models tend to cause focal damage, a histopathology reminiscent of post-traumatic OA, rather than the widespread joint damage characteristic of primary OA. 29 In addition, surgical models introduce a surgical injury that can be difficult to separate from the modeled joint injury. As such, recently developed noninvasive models of post-traumatic OA, like the noninvasive ACL injury model,30–32 have some advantages over traditional surgical models, as these noninvasive injuries mimic clinical etiologies and avoid surgery-associated damage. Nonetheless, little behavioral data have been collected on these models to date.

Selecting behavioral assays for different OA models

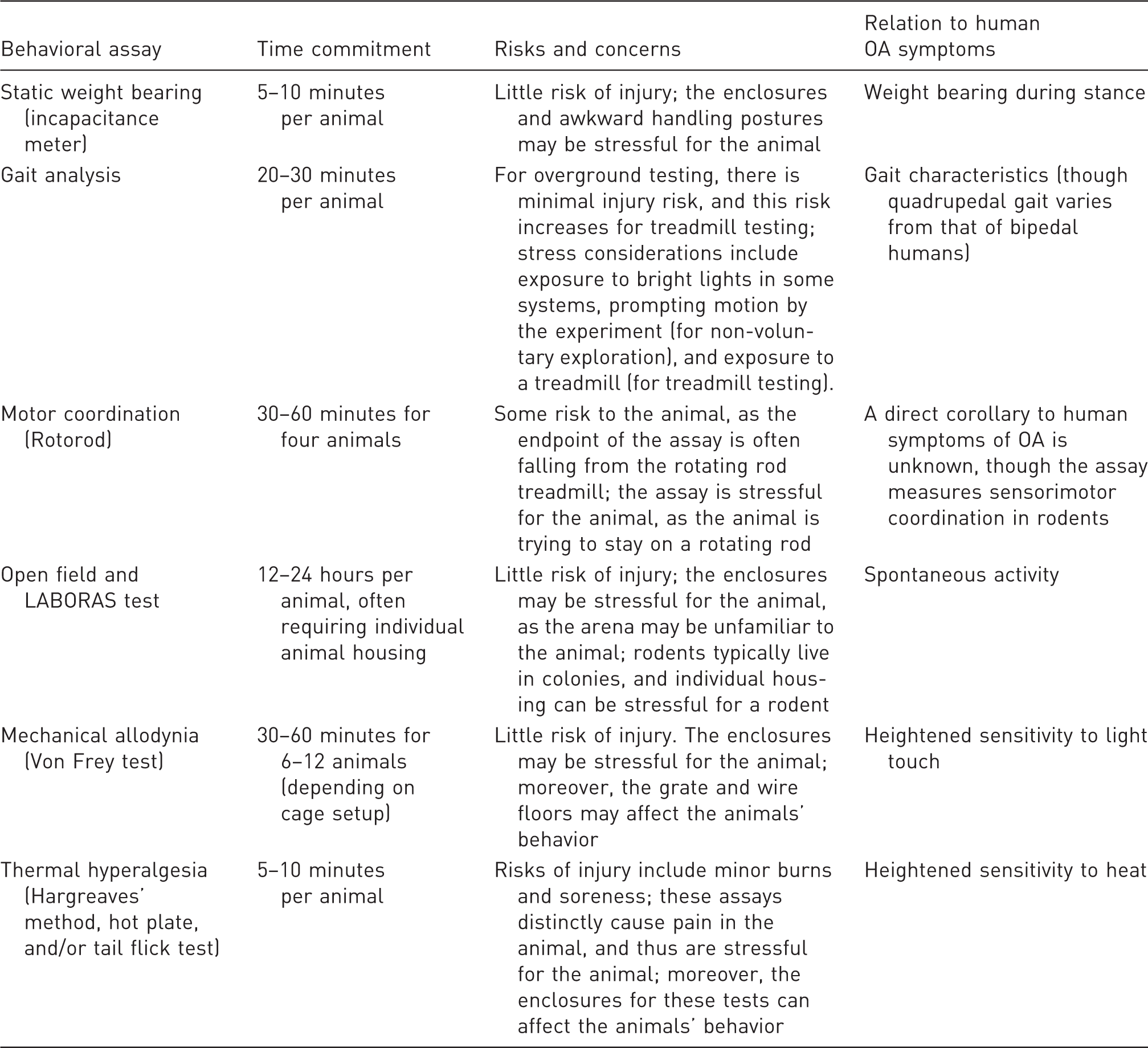

Common OA behavioral tests, typical time commitment for the assay, and the relationship between these behaviors and common OA symptoms.

As an example of behavioral inconsistencies between OA models, several studies have shown static weight-bearing changes in OA. Statistically significant static weight-bearing asymmetry was identified with an ACL transection (male Sprague Dawley rat), 38 medial meniscus transection (male Lewis rat), 39 destabilized medial meniscus (male 129/SvEv mouse), 40 and the MIA (male Wistar rat) models. 24 In contrast, Fernihough et al. 24 reported that partial meniscectomy in the male Wistar rat resulted in no significant change in static weight bearing. Similarly, Knights et al. 41 found no change in static weight bearing in a female C57Bl/6 mouse partial meniscectomy model. However, the same study noted changes in tactile sensitivity and vocalizations subsequent to knee compression, where fully meniscectomized mice displayed persistent sensitivity post-surgery with these measures. 41 Overall, the differences in behavioral outcomes across rodent OA models may be related to the type of OA model selected, natural differences between rats and mice, variability between different animal strains, and differences between male and female animals.

Simply put, a single assay is unlikely to capture all behavioral changes in all possible OA models. Thus, when characterizing pain-related behaviors in an OA model, a variety of behavioral assays is recommended, when possible. Of course, the number of behavioral tests must balance animal stress and fatigue (see Table 2). In the future, our goal should be to identify the best behavioral assays for specific OA models. However, at this point, behavioral assays have not been consistently evaluated within or broadly evaluated across different models. As such, evaluation of multiple behaviors can help lead to improved experimental design, behavioral assay selection, and scientific robustness in future studies.

Considerations for common OA behavioral assays

Increasing transparency in methods reporting for behavioral assays

With provisions such as the ARRIVE guidelines, methods reporting in animal models has moved toward better transparency.42–44 However, seemingly benign variables can still have significant effects on behavioral outcomes, such as the biological sex of the researcher conducting the test 45 or the surface on which the animal stands. 46 While the ARRIVE guidelines suggest including details such as housing and husbandry conditions,42–44 these guidelines cannot reasonably cover all possible variables that can impact behavioral assays. Furthermore, although widely endorsed, the ARRIVE guidelines have yet to be thoroughly implemented.47,48 Thus, not only is it difficult (or functionally impossible) to report all potential sources of variation for a behavioral test, many studies still fail to follow currently available guidelines.

As an example, in 1994, Chaplan et al. described a method for evaluating tactile sensitivity in the rat. 49 Their method was based on previous tests using Von Frey filaments50–53 and is widely used today. In 1996, Pitcher et al. reported that the surface on which animals stand for Von Frey testing could significantly affect the animals' withdrawal thresholds. 46 Pitcher additionally reported that most studies did not report floor size or material used for Von Frey testing. 46 More than 20 years later, floor size and the material of the testing enclosures are still not regularly reported, despite the continued popularity of Chaplan's method.

One change in the research community that may improve transparency in behavioral assays is the move toward open-source methods and technologies. Many research groups share testing protocols and software on Web sites such as protocols.io and github.com, among others. Our own group hosts a Web site detailing our custom gait analysis system and its associated software (see gaitor.org). Expanding access to shared assays, or at least providing transparency in how the assay is conducted, has the potential to foster community-wide improvements in behavioral testing.

Controlling for common sources of variation

Reporting control data should also be standard for behavioral tests, and internal controls, such as sham procedures, are essential to assess the internal validity of an experiment. However, as OA progresses over long time scales, animals within the experiment may experience several weeks or months of aging. Notably, because of these long time scales, baseline controls are rarely appropriate for OA behavioral testing. Several animal behaviors correlate to animal age, weight, and size. As a primary example, most gait data correlate to the animal's size. 54 Failure to account for these natural confounders can markedly decrease the sensitivity of gait measures in both sham and experimental groups. For these age-, weight-, or size-related effects, historical naïve data may be used to reduce the variability associated with known correlates. 55 That said, while historical naïve data can help account for these covariates, historical data cannot replace internal controls, such as sham procedures, which are essential to the assessment of the internal validity of a study.

Reducing subjectivity in behavioral assays

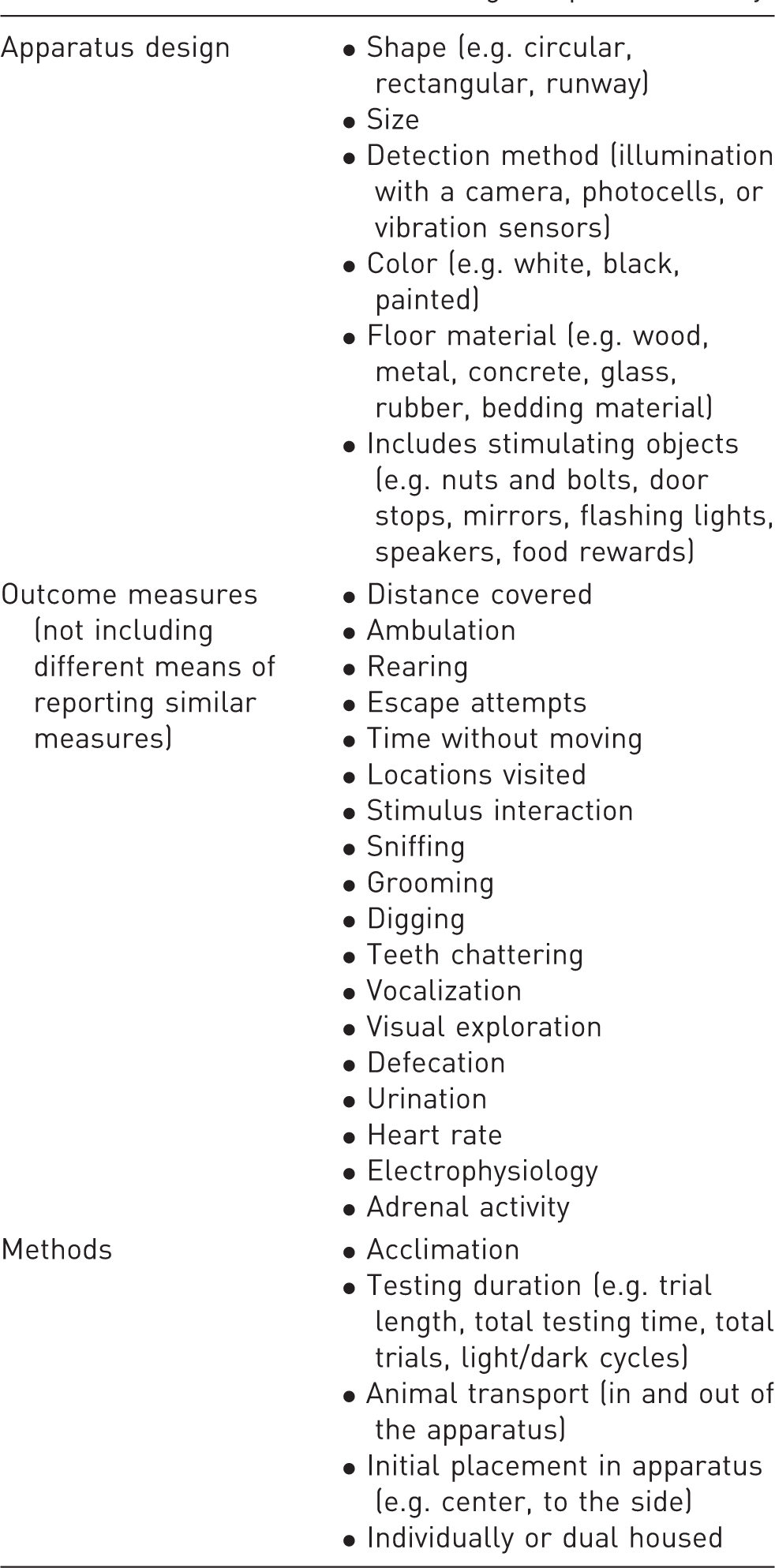

Considerations for selecting an open field assay.

To be clear, the level of subjectivity in a behavioral assay, by itself, does not mean the assay is more or less valuable for behavioral analysis in OA. In other words, behavioral changes detected by a Von Frey test may have a larger effect size than behavioral changes detected by gait analysis. Nonetheless, reducing the subjectivity within a test can help provide better comparisons between similar behaviors measured in different studies or by different groups.

Reducing animal stress

Another means of improving the reliability of behavioral assays is removing sources of animal stress. For any behavioral test, animals should be acclimated to their housing conditions, the researchers who will be handling them, and the testing equipment and procedures. 63 Stress effects have been demonstrated, particularly in behavioral tests requiring animals to be restrained.64,65 Simply incorporating handling methods such as tunnel handling to transport animals in and out of behavioral testing equipment can markedly reduce animal stress. 66 Moreover, utilizing behavioral assays without animal immobilization or restraint can potentially reduce animal stress.

For example, activity monitoring systems require neither researcher interaction nor animal restraint,59,60,67 providing quantitative measures of animal activity.59–61,67,68 Additionally, many rodents are most active in the evening, and activity monitoring can be scheduled to account for light/dark cycles. Finally, activity monitoring can often be conducted in the animals' home cages, allowing researchers to collect behavioral data in a familiar space.59–61,68 Opting to use less stressful behavioral assays, when possible, may reduce data variability related to animals' natural stress responses.

Adapting to operant testing methods

Operant behavioral tests allow animals to choose to participate, often measuring participation rates as an outcome. Changing a behavioral test to utilize an operant paradigm can affect measured behaviors. As an example, the voluntarily accessed static incapacitance chamber allows animals to self-select participation in the weight-bearing test for an allotted time (30 minutes). 69 Here, a water bottle is placed high in a cage, requiring the animal to rear to access the bottle. While drinking, weight bearing is dynamically recorded by an underlying force plate. 69 In contrast, incapacitance meters typically place animals in confined spaces but only require the animal to contact the force panels for 30-second intervals. 70

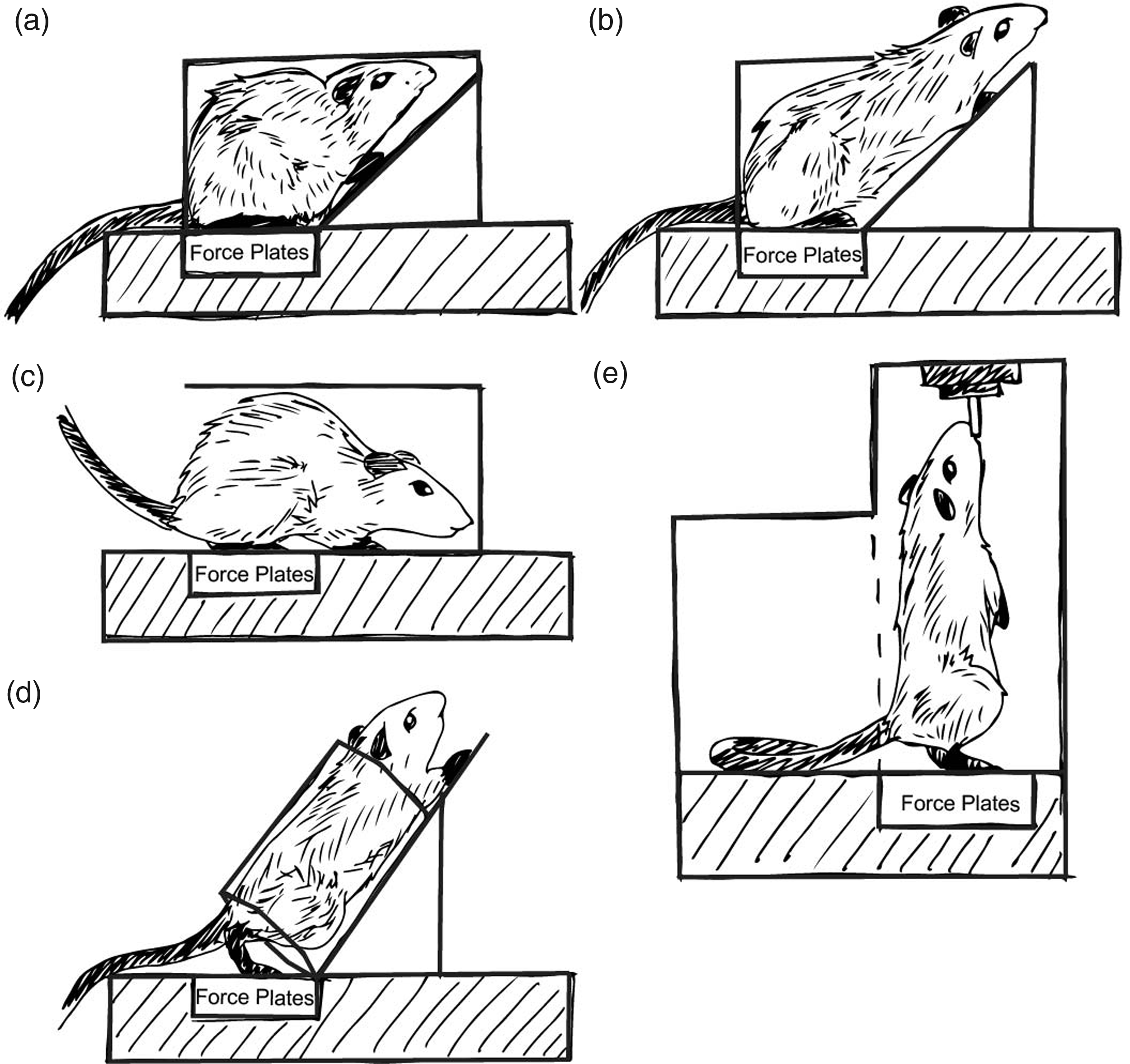

Moreover, the cages used for the incapacitance meter range from forced, squat-like postures to extended reaching postures (Figure 1). While similar metrics may be obtained, the environment is clearly different, as is the animal's posture. Hence, reporting the enclosure and posture characteristics is critical, and utilization of operant methods, when available, may improve behavioral assessments and reduce animal stress.

The incapacitance meter, or static weight-bearing assay, is a commonly used tool for assessing changes related to pain and dysfunction in the rodent joint. Despite sharing the same name, static weight-bearing tests can be conducted in a number of apparatuses, as shown. The variations in the animal's positioning and restraint may affect both the animal's weight distribution (e.g. more ability to offload weight to the forepaws) and stress.

Evolving as best practices form

While behavioral testing may be improved by increasing methodological transparency, reducing subjectivity within specific assays, and adapting to less stressful methods for the animal, standardization will remain difficult to achieve due to the rapid evolution of the field. Advancing behavioral assays to temper sources of variance further or address other potential limitations is important to the evolution of the field. Moreover, it is important to pair behavioral assays to confirm a symptomatic state in the animal. For example, while Von Frey tests can be prone to subjectivity, the test provides very useful data on paw sensitivity. Thus, pairing Von Frey tests with static weight bearing, activity monitoring, or gait data can provide complementary data indicative of the animal's overarching symptomatic state. By working toward establishing broadly accepted tests for OA models, we will be better able to evaluate how and why OA-related symptoms vary as a result of different biological and physiologic factors.

Considerations for reporting behavioral testing results

Failure to adopt similar terminology across studies

In addition to refining behavioral assays, refining data presentation from behavioral assays is also critical. Consensus on which measures are meaningful (and what they mean) has not been reached for all assays. Unfortunately, many behavioral measures are either uncommonly used or have been defined in conflicting ways. While it is entirely valid for groups to employ different behavioral assays, it reduces the utility of these studies when the metrics remain “niche.”

As an example, much like OA may refer to many different etiologies of joint degeneration, “gait analysis” can refer to assays using treadmill walking or overground walking, which may additionally include spatiotemporal and/or dynamic assessments.49,54,58,71–75 Moreover, many gait systems approach data collection in different ways, and the outputs from different systems and software do not always agree or report the same measures.54,76 Some groups have also reported gait changes based on qualitative observation.77–82 These measures may share some terminology with quantitative measures (e.g. stance score vs. stance time), despite lacking any quantitative assessment.

As another example, weight bearing may refer to static or dynamic weight bearing. Bioseb's kinetic weight-bearing assay is described as a “refined” dynamic weight-bearing test, whereas incapacitance meters typically assess static weight distribution. Further conflating terminology, both static and dynamic weight bearing have been referred to as incapacitance meters, and “dynamic weight bearing” is also a metric obtained by certain gait analysis systems. Furthermore, descriptively similar measures (weight bearing on a given hind limb) may be presented in terms of weight distribution of ipsilateral relative to contralateral limb, 70 percent body weight, 57 or percent difference between “baseline reading and post-treatment,” 22 among other potentially derived ratios.
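To illustrate how one reading can yield very different numbers depending on presentation, the sketch below computes three of the presentations mentioned above from a single hypothetical hind-limb measurement. The function name, argument names, and exact formulas are assumptions made for illustration; the cited studies may define their ratios differently.

```python
def weight_bearing_metrics(ipsi_g, contra_g, body_weight_g, baseline_ipsi_g):
    """Return three presentations of the same ipsilateral hind-limb load.

    All inputs are in grams. The formulas are illustrative assumptions,
    not the definitions used by any specific study or instrument.
    """
    return {
        # Ipsilateral load expressed relative to the contralateral limb
        "ipsi_vs_contra_pct": 100.0 * ipsi_g / contra_g,
        # Ipsilateral load expressed as a percentage of body weight
        "pct_body_weight": 100.0 * ipsi_g / body_weight_g,
        # Percent change of the ipsilateral load from its baseline reading
        "pct_change_from_baseline": 100.0 * (ipsi_g - baseline_ipsi_g) / baseline_ipsi_g,
    }


# Hypothetical reading from one animal (values invented for the example)
example = weight_bearing_metrics(
    ipsi_g=60.0, contra_g=100.0, body_weight_g=400.0, baseline_ipsi_g=80.0
)
for name, value in example.items():
    print(f"{name}: {value:.1f}")
```

In this invented case the same reading is reported as 60%, 15%, or a 25% decrease, which is why the calculation behind a "weight bearing" value needs to be stated explicitly.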

From these examples, the need for consensus in data presentation becomes apparent. Variation in data presentation poses a few issues. First, a lack of consensus on which measures to report (and how to report them) can hamper both inter- and intra-field communication. Second, as discussed next, reporting variables that are not independent measures can convolute the data.

Relative independence of behavioral measures

Returning to the example of gait analysis, the DigiGait system (Mouse Specifics, Inc., Quincy, MA) reports more than 50 gait measures. 54 In a study comparing DigiGait to TreadScan (CleverSys, Inc., Reston, VA), only five gait measures were reported for comparison, as they were the five measures “often used in existing publications of gait analysis in preclinical models.” 76 Consistent differences were observed between the two systems, and it was suggested that the lack of agreement might be due to belt speed. 76

Of course, walking velocity correlates with the majority of gait variables, and failure to account for velocity will increase the variability of gait measures. 58 A study by Batka et al. 83 found that more than 90% of gait variables reported by the CatWalk method were velocity dependent. However, few studies using the system control for speed. A study by Cendelin et al. 84 found that most gait differences identified in their study were no longer significant when corrected for velocity. Furthermore, while treadmill-based gait systems allow for velocity control, overground walking reduces animal stress. Combined, it is difficult to compare velocity-dependent variables without presenting these data in a manner that accounts for velocity (such as residualizing the data). 55 Presenting data as relatively independent measures, such as velocity-normalized gait data, could improve the utility of study results to the field at large.
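As a rough illustration of residualizing, the sketch below fits a straight line of stride length against velocity in control trials and then expresses each experimental trial as its deviation from that line. The linear model, the choice of stride length, and all numbers are assumptions for the example; the appropriate model and control group depend on the study.

```python
from statistics import mean


def fit_line(x, y):
    """Ordinary least-squares fit of y = intercept + slope * x."""
    mx, my = mean(x), mean(y)
    sxx = sum((xi - mx) ** 2 for xi in x)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return my - slope * mx, slope


def residualize(velocity, value, intercept, slope):
    """Observed value minus the value predicted from velocity."""
    return [v - (intercept + slope * s) for s, v in zip(velocity, value)]


# Hypothetical control trials: velocity (cm/s) and stride length (cm)
control_velocity = [20.0, 30.0, 40.0, 50.0]
control_stride = [10.0, 12.0, 14.0, 16.0]
intercept, slope = fit_line(control_velocity, control_stride)

# Hypothetical trials from an OA animal walking at different speeds
oa_velocity = [25.0, 45.0]
oa_stride = [10.0, 14.0]
oa_residuals = residualize(oa_velocity, oa_stride, intercept, slope)
print(oa_residuals)
```

Although the two raw stride lengths differ by 4 cm, both trials fall about 1 cm below the stride length predicted for their velocity, so the residuals describe the gait change independently of how fast the animal happened to walk.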

Standardizing definitions

While developing new metrics can be beneficial to characterizing behavioral changes, “novel” measures have sometimes proven to be just new presentations of existing measures. Returning to the example of gait analysis, swing time ratio has been presented as a sensitive parameter defined as the swing time of the ipsilateral limb divided by the subsequent contralateral limb swing time. 85 However, swing time is not independent of stance time. 86 Thus, swing time ratio provides only a different presentation of stance time imbalance.
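The dependence can be written out in a short sketch. It assumes steady walking in which both hind limbs share the same stride time $T$; the symbols below are introduced here for illustration and do not come from the cited studies.

```latex
% Each stride is made up of one stance phase and one swing phase:
T = t_{\mathrm{stance}} + t_{\mathrm{swing}}
% Under the shared-stride-time assumption, the swing time ratio becomes
R_{\mathrm{swing}}
  = \frac{t_{\mathrm{swing,ipsi}}}{t_{\mathrm{swing,contra}}}
  = \frac{T - t_{\mathrm{stance,ipsi}}}{T - t_{\mathrm{stance,contra}}}
```

Under this assumption, $R_{\mathrm{swing}}$ differs from 1 only when the ipsilateral and contralateral stance times differ, so the ratio carries the same information as stance time imbalance.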

Furthermore, accepted metrics are sometimes misinterpreted and consequently presented in misleading ways. In gait analysis, stride length refers to the distance between subsequent footsteps on the same limb, while step length refers to the distance between a left and right footstep along the direction of travel. 58 Occasionally, stride length has been incorrectly presented as step length, and comparisons between left and right “strides” are assessed. 87 One would anticipate these results to be insignificant, as strides measured by either the right or left limb should be equal unless the animal is moving in a circle. However, step length, with its proper definition of distance between left and right footsteps, can be a significant measure of gait modification. 88 Recommendations on conducting and presenting rodent gait data have been published previously by our group.54,58 Similar recommendations for other behavioral assays, for both quantitative and qualitative reporting, could assist with improving these measures across the field. Furthermore, aligning terminology and definitions with clinical and historical definitions, where possible, would help to communicate study results to the broader research community.
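The distinction between stride length and step length can be made concrete with a minimal sketch. It assumes hind-limb footstrikes are already ordered in time and reduced to a position along the direction of travel; the function names, the convention that a "right step" runs from a left footstrike to the following right footstrike, and the footstrike positions are all assumptions for illustration.

```python
def stride_lengths(strikes, limb):
    """Distances between successive footstrikes of the same limb."""
    xs = [x for side, x in strikes if side == limb]
    return [b - a for a, b in zip(xs, xs[1:])]


def step_lengths(strikes, leading):
    """Distances from an opposite-limb footstrike to the next footstrike
    of the `leading` limb, measured along the direction of travel."""
    steps = []
    for (side_a, xa), (side_b, xb) in zip(strikes, strikes[1:]):
        if side_b == leading and side_a != leading:
            steps.append(xb - xa)
    return steps


# Hypothetical footstrikes: (limb, position in cm along the direction of travel)
strikes = [("L", 0.0), ("R", 4.0), ("L", 10.0), ("R", 14.0), ("L", 20.0)]

print("left strides: ", stride_lengths(strikes, "L"))
print("right strides:", stride_lengths(strikes, "R"))
print("right steps:  ", step_lengths(strikes, "R"))
print("left steps:   ", step_lengths(strikes, "L"))
```

In this invented sequence, every stride is 10 cm for both limbs, while right steps are 4 cm and left steps are 6 cm. Comparing left and right strides would show no difference, whereas step length reveals the asymmetry.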

Conclusions

Behavioral analysis serves an important role in preclinical studies, allowing researchers to assess clinically pertinent consequences of disease. To improve the utility of behavioral tests, researchers should seek parity in their methods, and transparent methods reporting is essential to this goal. Additionally, studies should critically examine the intersection of behavioral assays and the OA model, seeking to understand behaviors indicative of pain and disability in the context of the human OA condition and various OA phenotypes. Finally, there is a need to improve our reporting of behavioral data, using clear definitions and calculations. This is not to suggest that there should be a singular standard array of behavioral assays for OA research at this time. We recognize that many of these assays are still relatively new to the field and have yet to be widely adopted across research groups. Rather, there is a need for preclinical researchers to push for broader dissemination of behavioral assay technology, protocols, and techniques to improve the quality of preclinical studies and the reliability of these critical symptomatic data.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research was supported by the NIH (grant number R01AR071431-01).