Abstract

Secondary school educators are well placed to recognize and respond to mental illness in adolescents; however, many report low confidence and skills in doing so. A confirmatory cluster randomized controlled trial involving 295 educators (Mean age: 40.10 years, SD: 10.47; 76.6% female, 2.7% Aboriginal or Torres Strait Islander) from 73 Australian secondary schools (22 in rural-regional locations) evaluated the effectiveness of a new professional development training program that aimed to improve secondary school educators’ confidence, behavior, knowledge, and attitudes toward student mental health. Relative to the control, training participants reported significantly greater levels of confidence in recognizing and responding to student mental health issues, perceived mental health knowledge, perceived mental health awareness, and mental health literacy at post-intervention (10 weeks post-baseline; d = 0.26–0.35) and at 3-month follow-up (d = 0.21–0.41). Findings indicate that the Building Educators’ skills in Adolescent Mental health (BEAM) program improves important training outcomes for educators in the domain of student mental health.

Keywords

Introduction

Mental illnesses, particularly anxiety and depressive disorders, are a significant issue for secondary school educators worldwide. Half of all young people who experience these disorders first do so while at secondary school, and these illnesses often result in disrupted learning and development (Kessler et al., 2007). Secondary school educators are well placed to notice the behavioral, social, and emotional changes that may signify the onset of, or deterioration in, mental illness (Franklin et al., 2012; Mazzer & Rickwood, 2015; Whitley et al., 2018). However, many educators report a lack of skills and confidence in recognizing and responding to students’ mental health problems (Nash & López, 2021; Reinke et al., 2011; Whitley et al., 2013). Many educators also struggle to adapt their teaching practices in response to students’ mental health needs (Ginsburg et al., 2022). This is not surprising given that many secondary school educators have not received training in adolescent mental health (McCann et al., 2021; O’Dea et al., 2021; O’Reilly et al., 2018). This may be due to a lack of accessible, evidence-based training programs that are effective for improving educators’ knowledge, attitudes, and behaviors in relation to student mental health.

Recent systematic reviews on teacher training programs for student mental health have highlighted the lack of evidence-based programs currently available to educators within and beyond Australia (Anderson et al., 2019; Ohrt et al., 2020; Sánchez et al., 2021; Yamaguchi et al., 2020). From these reviews, only 9 secondary school training programs had published evaluations, and of the 13 included studies, only 3 were randomized controlled trials. This highlights the lack of rigorous research in this field. All existing training programs were delivered face-to-face in schools by external providers and were didactic in nature, relying on facilitator-led presentations and case studies to convey course content. The duration of the existing training programs also varied considerably, from 2 hours to 2 weeks, and all required educators to be absent from their normal school duties. As teachers are time-poor, it is likely that these logistical factors create significant barriers to the widespread uptake and completion of existing training programs. Finally, the primary goals of the existing training programs were to improve educators’ mental health knowledge and attitudes based on the classical theoretical framework of behavior change, whereby improving knowledge and beliefs improves teachers’ practices and approaches to student mental health (Organisation for Economic Co-Operation and Development, 2009). While some of these programs were indeed found to be effective for improving educators’ knowledge and attitudes in student mental health, most of the existing training programs, and the studies evaluating them, failed to examine other outcomes relevant to behavior change in this domain.

Self-confidence and self-efficacy are important constructs to consider when examining ways to improve educators’ approaches to student mental health. These constructs are founded on the principle that individuals’ beliefs about their abilities to perform a task have a profound effect on their actual abilities (Bandura, 1977, 1997). Higher levels of educators’ self-confidence have been shown to positively influence their practices, enthusiasm, persistence in working with challenging students, and overall performance (Klassen & Tze, 2014; Skaalvik & Skaalvik, 2007). Despite this, existing training programs and past studies have failed to address the importance of educators’ self-confidence in the process of recognizing, responding to, and managing student mental health issues. Only two randomized controlled trials (RCTs) have measured the effect of mental health training on educators’ self-confidence and only one has measured the effect of training on actual helping behaviors. Furthermore, only one study has reported on the adverse events or unfavorable outcomes for educators related to their completion of mental health training (Yamaguchi et al., 2020). Thus, there is currently little scientific evidence to guide educators on what type of training approach is most effective for improving outcomes in all key domains of knowledge, attitudes, and confidence in relation to student mental health while also preserving their own well-being.

A New Training Program—Building Educators’ Skills in Adolescent Mental Health

To address the need for a more comprehensive, evidence-based approach to mental health training for secondary school educators, the Black Dog Institute developed the Building Educators’ skills in Adolescent Mental health (BEAM) program. This program extends beyond the outcomes of the existing teacher training programs in student mental health (i.e., knowledge and attitudes) to specifically target improvements in educators’ confidence in recognizing and supporting students with mental health problems. The program also embeds principles from the workplace management approach (Gayed, LaMontagne, et al., 2018), whereby secondary school educators are conceptualized as “student managers.” Embedding this novel approach was hypothesized to generate greater changes in confidence among educators as several studies had confirmed the effectiveness of manager training for improving knowledge, attitudes, and behavioral change in relation to supporting employee mental health (Bryan et al., 2018; Gayed, Bryan, et al., 2019; Gayed, Milligan-Saville, et al., 2018).

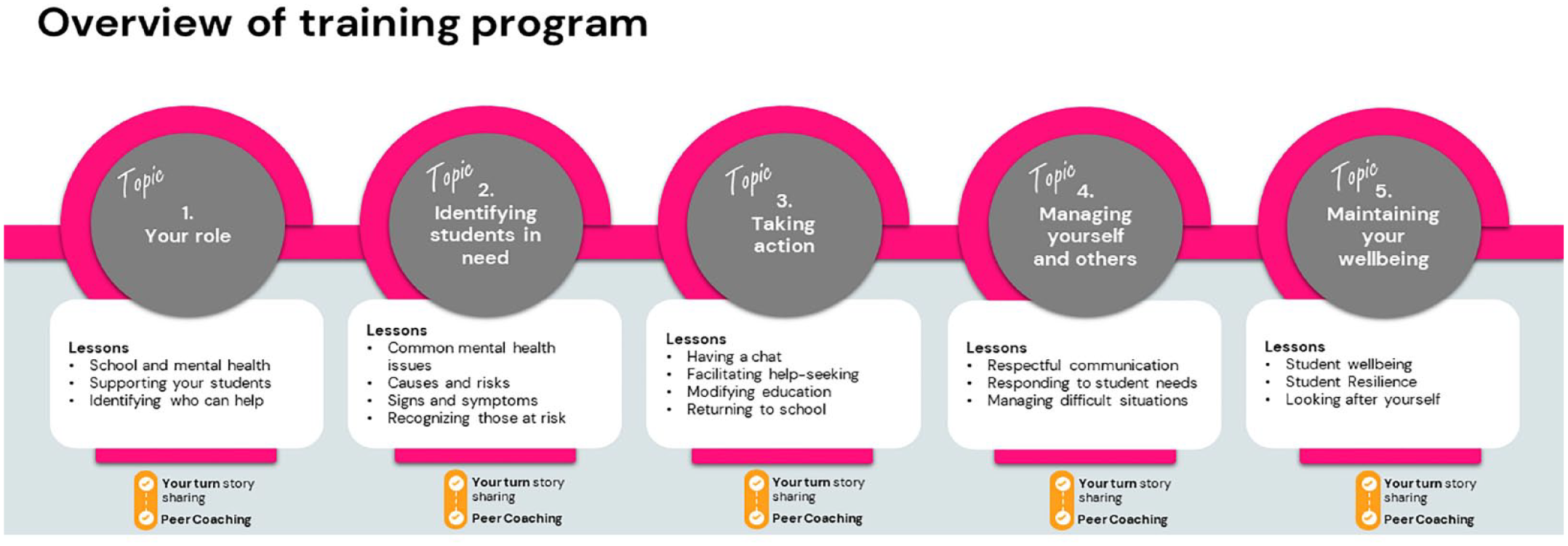

Co-designed with senior educators from Australia, the BEAM program is directed at secondary school educators in leadership roles (i.e., Year Advisors or equivalent) due to their increased responsibility and accountability for student mental health and a lack of specialized training for these positions (O’Dea et al., 2021). In contrast to existing didactic programs (Ohrt et al., 2020), the BEAM program blends online, self-directed learning content with in-person skill development and peer-coaching. This approach is based on Bandura’s research demonstrating that self-confidence develops through mastery, vicarious experiences, social persuasion, positive emotional states, and imaginal experiences (Bandura, 1977; Maddux, 2013) and on the emerging research on the principles of effective workplace management training (Gayed, Bryan, et al., 2019; Gayed, LaMontagne, et al., 2018). The BEAM program targets these elements through five overarching topics consisting of 17 interactive activities (see Figure 1) that include skill development and practice, observation of peer role models, provision of feedback, visualization of effective behavior, and managing personal distress.

Overview of the Building Educators’ Skills in Adolescent Mental Health Training Program Content.

The BEAM program was also developed in accordance with professional development frameworks for teachers (Desimone, 2009; Philipsen et al., 2019). Several course components were designed to target the five core features of effective teacher training: content focus, active learning, coherence, duration, and collective participation. To this end, the content focus of the training curriculum was co-developed with different subject matter experts (e.g., psychologists, teachers, and educational designers) to ensure relevancy to teachers’ daily work and consistency with their expectations, beliefs, school context, and priorities in relation to student mental health (Liao et al., 2017; Quinn et al., 2019). Active learning and collective participation were targeted through the inclusion of peer-coaching activities, whereby participants were instructed to meet with their colleagues to respond to a series of hypothetical scenarios related to student mental health. These activities provide participants with an opportunity to share ideas, teach one another, reflect on current practices, build new skills, socially interact, develop rapport and supportive relationships with colleagues, build professional learning communities within schools, consolidate learnings, and receive feedback (Zhang et al., 2017). Self-reflection is further targeted through a “Your turn” activity whereby participants are asked to respond to a series of relevant case studies and a “Share their story” opportunity whereby participants are invited to submit an anonymous self-reflection on a personal experience related to student mental health. The program also includes strategies to support self-care as many educators have reported that the additional management of student well-being has led to greater workload and stress (Bower & Carroll, 2017; Higgen & Mösko, 2020; Lever et al., 2017; Mazzer & Rickwood, 2015). 
A blended delivery approach was selected based on teachers’ preferences for online learning that enhances collaboration and personal learning networks (McElearney et al., 2019) and reduces the social isolation of online-only courses (Gay & Betts, 2020; Kaufmann & Vallade, 2022). Online delivery also enabled greater flexibility for completion of the self-directed learning, greater accessibility and geographical dissemination, and the preservation of program fidelity (Sánchez et al., 2021).

To determine the initial acceptability, feasibility, and likely effectiveness of the BEAM program for improving secondary school educators’ mental health knowledge, attitudes, confidence, and helping behaviors, a single-arm 6-week pilot study among 70 Australian teachers was conducted (Parker, Anderson, et al., 2021). Educators reported significant increases in confidence at post-intervention and 3-month follow-up, significant improvements in helping behaviors at 3-month follow-up, and a significant reduction in their psychological distress at post-intervention. However, the study was limited by the lack of a control group. Furthermore, only 16% of participants completed the entire program within the 6-week timeframe. Participants requested a longer training duration and found the sequential structure and compulsory peer-coaching activities to be a barrier to completion. Many also reported forgetfulness as an additional barrier. In response, the BEAM program was modified so that all content was immediately accessible to participants. A topic recommendation feature was also implemented to tailor participants’ starting position to their level of interest and experience. The peer-coaching activities were made optional, and the duration of the program was extended to 10 weeks. Additional reminders were also implemented, sent via SMS, and the program was optimized for delivery on mobile and tablet devices.

Objectives of the Current Study

The primary aim of this cluster RCT was to examine the effectiveness of the BEAM training program for improving Australian secondary school educators’ confidence in recognizing and responding to students’ mental health needs. It was hypothesized that educators who received the BEAM program would report greater improvements in confidence, relative to a waitlist control condition, immediately at post-intervention and at 3-month follow-up. The trial also examined the secondary effects of the BEAM program on educators’ self-reported frequency of helping behaviors for student mental health problems, their perceived mental health knowledge and awareness, mental health literacy, mental health stigma, and personal levels of psychological distress. It was hypothesized that educators who received the BEAM program would report greater improvements in these outcomes at post-intervention and 3-month follow-up, relative to the control condition. This trial also measured program completions, barriers to program use, program satisfaction, and perceived effectiveness of training among participants.

Method

Design

This study utilized a two-arm cluster RCT with schools as clusters and educators as participants. The full trial protocol, outlining the methodology and questionnaire adaptations in detail, is available elsewhere (Parker, Chakouch, et al., 2021). All outcome measures pertain to the individual participant level. Outcomes were assessed at baseline, primary endpoint (i.e., post-intervention measured at 10 weeks post-baseline), and secondary endpoint (i.e., 3-month follow-up measured at 22 weeks post-baseline). Ethics approvals were obtained from the University of New South Wales Human Research Ethics Committee (HC200257), the State Education Research Applications Process for the New South Wales Department of Education (SERAP2020222), and the Catholic school offices for the Dioceses of Maitland-Newcastle, Canberra-Goulburn, and Wollongong. The trial protocol was registered with the Australian New Zealand Clinical Trials Registry (ACTRN12620000876998). The Universal Trial Number is U1111-1253-3176.

Setting

This study took place between August 2020 and March 2021 in New South Wales, Australia. All baseline data was collected between August and October 2020. During this time, schools were operating as usual, and there were no school closures due to the COVID-19 pandemic. All study outcomes were collected online.

Participants

Eligible schools were any independent or government secondary schools located in New South Wales, Australia. Catholic schools from the approved dioceses in New South Wales (Maitland-Newcastle, Canberra-Goulburn, and Wollongong) could also take part. All schools were required to provide a signed letter of support from the principal. Eligible participants were educators who were employed in any leadership position responsible for student well-being (e.g., Year Advisors, Directors or Heads of Student Well-Being, Directors of Pastoral Care, or equivalent) at a participating secondary school in New South Wales, Australia, and who had principal consent. Secondary school educators who participated in the pilot study were ineligible. No other exclusion criteria were applied.

Assignment and Masking

Schools were assigned to a single condition (cluster design) to avoid contamination and for administrative feasibility. The assignment was carried out according to the International Council for Harmonization guidelines by an external researcher not involved in the study activities. The assignment occurred after the principal’s letter of consent was received by the research team. A minimization approach to the assignment was used to ensure balance across the study conditions in terms of school size (<400 or >400 students) and the Index of Community Socio-Educational Advantage level (<1,000 or >1,000; Taves, 2010). This was undertaken in Stata version 14.2 using the rct_minim procedure (Ryan, 2021). The trial manager was unblinded to participants’ condition assignment to support study administration. While not explicitly informed, participants were likely made aware of their condition upon baseline completion due to the nature of the instructions provided and study activities.
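The general logic of minimization can be illustrated with a short sketch. The code below is not the rct_minim procedure used in the trial; it is a simplified, deterministic variant written for illustration, and the function name, dictionary keys, and level labels are all hypothetical. Each incoming school is placed in the arm that would leave the smallest total imbalance across the two stratification factors, with ties broken at random.

```python
import random
from collections import defaultdict

ARMS = ("intervention", "control")


def minimise(schools, seed=0):
    """Allocate schools one at a time to the arm that minimises imbalance.

    `schools` is a list of dicts with hypothetical keys 'id', 'size', 'icsea'.
    Returns a dict mapping school id to arm.
    """
    rng = random.Random(seed)
    # counts[arm][(factor, level)] = schools already allocated to that arm
    counts = {arm: defaultdict(int) for arm in ARMS}
    allocation = {}
    for school in schools:
        keys = [("size", school["size"]), ("icsea", school["icsea"])]
        scores = {}
        for arm in ARMS:
            imbalance = 0
            for key in keys:
                # Counts for this factor level if the school joined `arm`
                n_int = counts["intervention"][key] + (arm == "intervention")
                n_con = counts["control"][key] + (arm == "control")
                imbalance += abs(n_int - n_con)
            scores[arm] = imbalance
        best = min(scores.values())
        # Ties are broken at random
        arm = rng.choice([a for a in ARMS if scores[a] == best])
        for key in keys:
            counts[arm][key] += 1
        allocation[school["id"]] = arm
    return allocation


# Hypothetical schools, not trial data
demo = [
    {"id": "A", "size": "<400", "icsea": "<1000"},
    {"id": "B", "size": ">400", "icsea": ">1000"},
    {"id": "C", "size": "<400", "icsea": ">1000"},
    {"id": "D", "size": ">400", "icsea": "<1000"},
]
print(minimise(demo))
```

Practical implementations often add a random element to every allocation, not only to ties, so that assignments cannot be predicted; that refinement is omitted here for brevity.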

Sample Size

The target sample size was 234 educators (i.e., 117 per condition) from approximately 46 schools. This target was based on an ideal participation rate of five educators per school, an intra-class correlation (ICC) of .07, a design effect of 1.28, a moderate effect size of .50 on the primary outcome, statistical power of 80%, alpha of .05 (two-tailed), and 30% attrition.
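The arithmetic behind this target can be reconstructed approximately. The sketch below is one plausible route to the stated figures, not necessarily the calculation the authors performed: it assumes the standard two-group normal-approximation formula with a small-sample correction term (an assumption on our part), inflates the result by the design effect 1 + (m − 1) × ICC, and then allows for attrition. Function names are illustrative.

```python
import math
from statistics import NormalDist


def design_effect(cluster_size, icc):
    """Variance inflation due to clustering: 1 + (m - 1) * ICC."""
    return 1 + (cluster_size - 1) * icc


def n_per_arm_individual(d, alpha=0.05, power=0.80):
    """Per-arm n for a two-sided, two-group comparison of means.

    Normal approximation plus a z^2/4 small-sample correction term
    (an assumed formula; the paper does not state which one was used).
    """
    z_a = NormalDist().inv_cdf(1 - alpha / 2)
    z_b = NormalDist().inv_cdf(power)
    n = 2 * (z_a + z_b) ** 2 / d ** 2 + z_a ** 2 / 4
    return math.ceil(n)


deff = design_effect(cluster_size=5, icc=0.07)   # 1.28
n_ind = n_per_arm_individual(d=0.50)             # individually randomized n
n_arm = round(n_ind * deff / (1 - 0.30))         # inflate for clustering, attrition
print(f"design effect = {deff:.2f}")
print(f"per-arm n = {n_arm}, total n = {2 * n_arm}")
print(f"schools at five educators each = {2 * n_arm / 5:.1f}")
```

Under these assumptions the sketch reproduces the design effect of 1.28 and a per-condition target of 117 (234 in total).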

Recruitment and Consent

Study advertisements were featured in the Black Dog Institute’s e-newsletters, website, and social media channels (Twitter, Instagram, and Facebook). The study was also advertised in the New South Wales School-Link newsletter (a state government initiative that links schools with local health services); Teacher Magazine; and the relevant Catholic Diocese e-bulletins. Educators were directed to express interest in the study via an online form on the study website. They were then emailed study information and instructed to share it with their colleagues to encourage co-participation within schools. Educators were required to obtain a signed letter of consent from their school principal to enable their participation in the study. For schools with multiple study participants, only one letter of consent from the school principal was required. Educators provided their own informed consent to participate via an online Participant Information Sheet and Consent Form.

Procedure

All educators who expressed interest and had principal consent were emailed 1 week prior to their scheduled study start date and asked to confirm their eligibility and provide final consent. They were then invited to create an online study account by providing their name, school, email address, and setting a password. Participants could also provide their mobile phone numbers to receive study notifications and reminders via SMS. Participants were informed that their personal information would be removed prior to data analysis to preserve their confidentiality. On their scheduled study start date, participants received their invitation to complete the baseline survey (via email and/or SMS). The survey was accessible for 7 days, and participants who did not complete it were withdrawn from the study. Participants also received email/SMS invitations for the post-intervention and 3-month follow-up surveys. These surveys remained open for 7 days, and participants received two reminders for each study assessment. All participants received an AUD 15 gift voucher for completing the post-intervention survey and an additional AUD 15 gift voucher for completing the 3-month follow-up survey.

Study Conditions

Intervention Condition

Participants in the intervention condition received access to the training program for 10 weeks upon completion of the baseline survey. In the current trial, the topics were displayed sequentially, but participants were able to complete them in any order and at their own pace (i.e., progression did not require completion of the Your Turn or peer-coaching activities). The program was intended to be completed within 10 weeks and could be accessed on any internet-enabled device. Participants were advised that the program would take approximately 6.5 hours in total to complete. To encourage skill development and practice, participants were advised to undertake one topic per fortnight and received fortnightly email/SMS reminders to do so; however, it was possible for participants to complete the entire program in one session if they wished. Group responses to the peer-coaching activities were submitted to the research team, and generic feedback was provided to participants by email within 3 business days.

Control Condition

The control condition was a waitlist. Participants allocated to the control condition were given access to the BEAM training program at the end of the study period (i.e., after the completion window closed for the 3-month follow-up assessment), regardless of whether they completed the post-intervention or 3-month follow-up study assessments. A waitlist was deemed the optimal comparator as it would allow for the effects of the training to be isolated while also providing educators with the opportunity to receive the program at a later date.

Outcome Measures

Primary Outcome: Confidence in Recognizing and Responding to Students’ Mental Health Needs

The primary outcome for this trial was participants’ self-confidence in their ability to recognize, refer, and support students with mental health problems. This was measured using an adapted version of the Confidence to Recognize, Refer, and Support subscale (Sebbens et al., 2016). Participants were asked to rate their confidence in dealing with 15 mental health scenarios (e.g., “recognizing a student with mental health problems”). Items were answered using a 5-point Likert-type scale ranging from not at all confident (1) to very confident (5). Items were summed to give a total score, ranging from 15 to 75. Higher scores indicated greater levels of confidence. In this study, the Cronbach α = .96.

Secondary Outcomes

Frequency of Helping Behaviors for Student Mental Health

A modified version of the Help Provided to Students Questionnaire (Jorm et al., 2010) was used to measure the frequency of helping behaviors for student mental health. Participants indicated how often they had engaged in 13 helping behaviors (e.g., “spent time calming a student down”) during the past 2 months, answered using a four-point scale (“never,” “once,” “occasionally,” and “frequently”). Items were then summed to create a total score, ranging from 13 to 52. Higher scores indicated more frequent helping behaviors. In this study, the Cronbach α = .90.

Perceived Mental Health Knowledge and Perceived Mental Health Awareness

These two constructs were assessed separately using the Perceived Knowledge and the Perceived Awareness subscales from the Mental Health Literacy and Capacity Survey for Educators (Fortier et al., 2017). For the 4-item knowledge subscale, participants were asked to rate their level of perceived knowledge (e.g., “how would you rate your knowledge of the signs and symptoms of student mental health issues”) from not at all (0) to extremely (4). Items were then summed to create a total score, ranging from 0 to 16. Higher scores indicated greater perceived knowledge of mental health. For the five-item awareness subscale, participants were asked to rate their level of perceived awareness (e.g., “how would you rate your awareness of the risk factors and causes of student mental health issues”) from not at all (0) to extremely (4). Items were then summed to create a total score, ranging from 0 to 20. Higher scores indicated greater perceived awareness of mental health. In this study, the Cronbach α = .84 for both subscales.

Mental Health Literacy

A six-item adapted version of the Mental Health Knowledge Schedule (Evans-Lacko et al., 2010) was used to measure mental health literacy. The six statements assessed literacy (e.g., “most students with mental health problems want to complete their schooling”) and were rated on a five-point Likert-type scale ranging from strongly disagree (1) to strongly agree (5). Items were summed to give a total score, ranging from 6 to 30. Higher scores indicated greater mental health literacy. As this is an edumetric scale (Evans-Lacko et al., 2010), the Cronbach alpha is not reported.

Mental Health Stigma

A modified version of the Personal Stigma subscale from the Depression Stigma Scale (Griffiths et al., 2004) was used to measure teachers’ attitudes toward students with mental health problems. Participants were asked to rate how much they agreed with nine statements (e.g., “students with a mental illness could snap out of it if they wanted”) using a five-point Likert-type scale ranging from strongly disagree (1) to strongly agree (5). Items were summed to give a total score, ranging from 9 to 45. Higher scores indicated greater levels of stigma. In this study, the Cronbach α = .76.

Psychological Distress

The 5-item self-report Distress Questionnaire-5 (DQ5; Batterham et al., 2016) was used to measure educators’ psychological distress. Items were answered using a 5-point Likert-type scale ranging from never (1) to always (5). Items were summed to give a total score, ranging from 5 to 25. Higher scores indicated greater psychological distress with a score ≥ 14 indicating the possibility of a mental health condition (Batterham et al., 2016). The DQ5 has high internal consistency and convergent validity (Batterham et al., 2016, 2018). In this study, the Cronbach α = .89.
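The summed-score measures described above share a simple scoring rule: the possible total runs from (number of items × lowest response) to (number of items × highest response). The sketch below checks the stated ranges against that rule. The dictionary keys and function names are illustrative only, and the 1 to 4 coding of the helping-behaviors scale is inferred from its stated range of 13 to 52 rather than given explicitly in the text.

```python
# (number of items, lowest response value, highest response value)
SCALES = {
    "confidence": (15, 1, 5),
    "helping_behaviors": (13, 1, 4),   # 1-4 coding inferred from the 13-52 range
    "perceived_knowledge": (4, 0, 4),
    "perceived_awareness": (5, 0, 4),
    "literacy": (6, 1, 5),
    "stigma": (9, 1, 5),
    "distress_dq5": (5, 1, 5),
}

DQ5_CUTOFF = 14  # scores at or above this suggest a possible mental health condition


def score_range(scale):
    """Return the (minimum, maximum) possible total for a scale."""
    n_items, low, high = SCALES[scale]
    return n_items * low, n_items * high


def total_score(responses, scale):
    """Sum item responses after checking count and response range."""
    n_items, low, high = SCALES[scale]
    if len(responses) != n_items:
        raise ValueError(f"{scale} expects {n_items} items")
    if any(not low <= r <= high for r in responses):
        raise ValueError(f"{scale} responses must lie in [{low}, {high}]")
    return sum(responses)


for name in SCALES:
    print(name, score_range(name))

# Hypothetical respondent answering "3" to every DQ5 item
dq5 = total_score([3, 3, 3, 3, 3], "distress_dq5")
print(dq5, dq5 >= DQ5_CUTOFF)
```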

Demographics and Background Factors

At baseline, participants reported their age, gender identity, Aboriginal or Torres Strait Islander identity, teaching experience, duration in current role, and employment at current school (age and all durations reported in years). Participants also reported the location of their current school (metropolitan, regional, rural/remote), the school funder (government or non-government), and gender type (single-sex or co-educational). Participants also reported their current level of training in student mental health (none, limited, moderate, or extensive), the importance of receiving mental health training, and their confidence in web-based programs for satisfying their training needs. The latter two items were answered using a five-point Likert-type scale ranging from not at all (0) to extremely (4). At baseline, participants were also asked to estimate the average number of hours per week they spent assisting students with their mental health. At 3-month follow-up, participants were also asked to report whether they had engaged in any additional mental health training during the study period.

Training Program Outcomes

Completions

This was measured at post-intervention by the mean number of completed topics (range: 0 to 5, including the accompanying peer-coaching activity) as well as the proportion who completed more than half of the program (i.e., three or more topics and accompanying peer-coaching activities). Participants were also asked to report whether they completed the peer-coaching activities with a colleague, how frequently they met to do so, and whether the peer-coaching was useful to their understanding of the content and personal development.

Barriers to Use

Participants were asked to report what device they used to complete the training program (e.g., laptop/desktop/mobile/tablet). At post-intervention, participants were asked to report whether they had experienced a list of 11 barriers to program use related to user factors, program-specific factors, and contextual factors (answered yes or no).

Program Satisfaction

At post-intervention, participants were asked to rate the extent to which they agreed with a set of 14 statements about the BEAM program (such as “I enjoyed using BEAM” and “the content was easy to understand”). All items were rated on a five-point Likert-type scale ranging from strongly disagree (1) to strongly agree (5). Participants were also asked to provide an overall satisfaction rating and their likelihood of recommending the program to others (answered on five-point Likert-type scales ranging from not at all to entirely).

Perceived Effectiveness

At post-intervention, participants were asked to rate the extent to which they perceived the program to improve their confidence, skills, and approach to student mental health and whether the training program met their needs (answered on a five-point Likert-type scale ranging from not at all to entirely). Participants were also asked to report whether they had shared any of the information from the training program with other school staff (answered yes or no), and whether they had implemented any changes in their approach to student mental health during the study period because of the training program (answered yes or no).

Data Collection and Analyses

The training program and study data were stored securely on the Black Dog Institute’s research engine and were exported to Microsoft Excel and SPSS version 27.0 for cleaning and preparation. Group differences in outcome variables at baseline were compared using mixed linear models incorporating a random effect of school. To examine attrition, a logistic regression analysis was conducted to identify baseline factors associated with the completion of the post-test assessment. All primary and secondary outcome variables were included in the attrition analysis, along with age, gender, school type, years of employment, and school gender (single-gender or co-educational). The primary and secondary outcome analyses were undertaken on an intention-to-treat basis, including all eligible schools and participants randomized, regardless of treatment received. The primary outcome was examined using a mixed-effects model repeated-measures analysis, conducted in SPSS version 27.0. The school was included in the analyses as a random effect to evaluate and accommodate clustering effects. Models included the factors of time, condition (intervention vs. control), and their interaction. The critical test of effectiveness was the planned contrasts of the interaction from baseline to post-intervention (primary endpoint) and to 3-month follow-up (secondary endpoint). An unconstrained variance-covariance matrix was used to accommodate within-participant effects. The method of Kenward and Roger (1997) was used to estimate degrees of freedom for tests of all effects. Comparable methods were used for the secondary outcomes. Additional moderation analyses were conducted to determine whether gender, age, school type and location, the co-educational status of the school, participants’ level of prior training, or the total number of topics completed moderated improvements in training outcomes. All available data was used irrespective of drop out. 
In line with the ethics approvals, the data from participants who withdrew were retained in the final analyses. Effect sizes (Cohen’s d) were calculated based on the between-group differences in the change in the observed group means from baseline to post-intervention, divided by the standard deviation. All authors confirm that they had full access to all the data in the study and accept responsibility for the decision to submit for publication.
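The effect size definition above can be written out as a small function. This is a sketch only: the text does not specify which standard deviation was used as the denominator, so it is left as an explicit argument here, and the numbers in the example are hypothetical rather than trial data.

```python
def change_score_d(pre_int, post_int, pre_con, post_con, sd):
    """Between-group difference in mean change, divided by a chosen SD.

    Which SD is appropriate (e.g., pooled baseline SD) is an assumption
    the caller must make; the source text does not state it.
    """
    if sd <= 0:
        raise ValueError("sd must be positive")
    change_int = post_int - pre_int
    change_con = post_con - pre_con
    return (change_int - change_con) / sd


# Hypothetical group means and SD, for illustration only
d = change_score_d(pre_int=40.0, post_int=46.0,
                   pre_con=41.0, post_con=43.0, sd=8.0)
print(f"d = {d:.2f}")
```

A positive value indicates a larger mean improvement in the intervention condition than in the control.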

Role of the Funding Source

This work was supported by the Balnaves Foundation from a noncompetitive philanthropic research grant donation to the Black Dog Institute. The funders had no role in the design, execution, analyses, data interpretation, authorship, or the decision to submit the paper for publication.

Results

Overview of the Sample

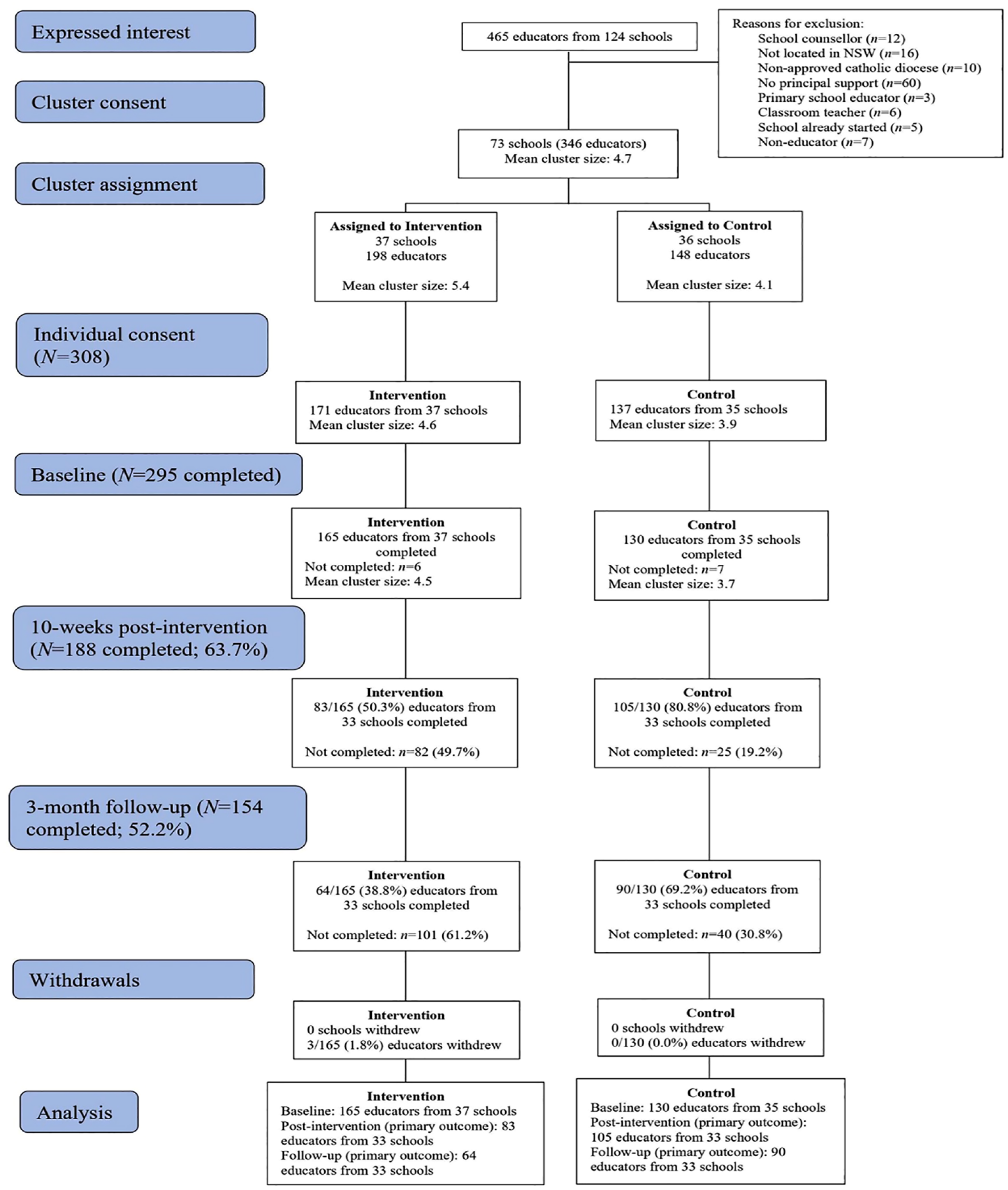

A total of 465 educators from 124 schools expressed interest in the study. Of these, 73 schools (involving 346 educators) provided consent and were randomized (see Figure 2). Thirty-seven schools (n=11, 29.7% rural/regional) were assigned to the intervention condition and 36 schools (n=11, 30.6% rural/regional) were assigned to the control condition. The mean cluster size at randomization was 4.73. Figure 2 outlines the CONSORT study flow diagram.

CONSORT (Consolidated Standards of Reporting Trials) study flow diagram of cluster and individual-participant recruitment and participation.

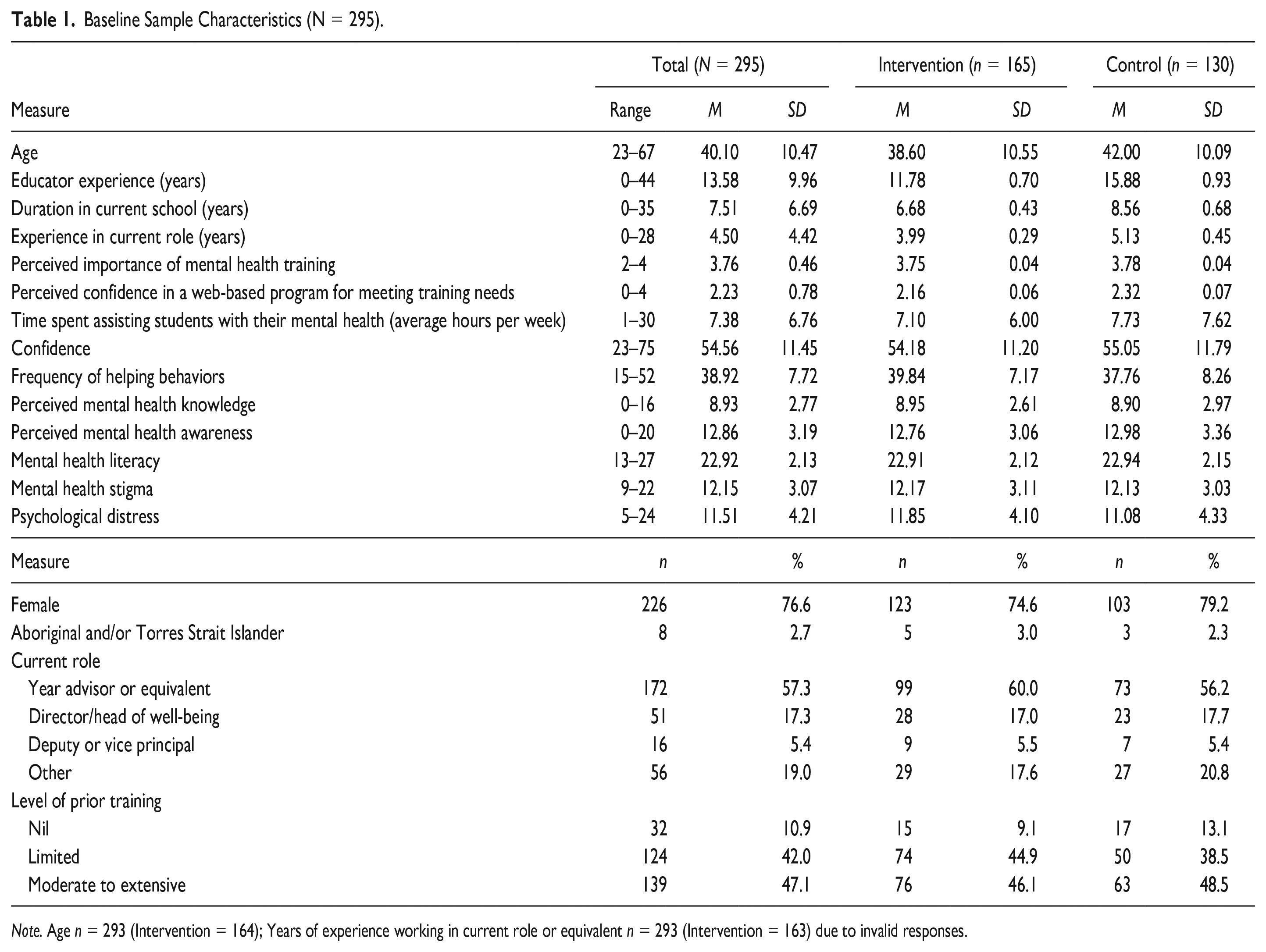

The baseline characteristics of the final sample (N=295) are shown in Table 1. At baseline, 10.9% of the total sample (n=32) reported no prior training in student mental health. Almost all participants (98.3%) believed that mental health training was “very” (n=60, 20.3%) or “extremely” (n=230, 78.0%) important. The majority (n=250, 84.8%) felt “moderately” to “extremely confident” that a web-based program could meet their mental health training needs; 13.9% (n=41) were “somewhat confident” and 1.4% (n=4) were “not at all confident.” There were no significant differences between conditions on the outcome variables at baseline after accounting for clustering within schools (all p>.06).

Table 1. Baseline Sample Characteristics (N = 295).

Note. Age n = 293 (Intervention = 164); Years of experience working in current role or equivalent n = 293 (Intervention = 163) due to invalid responses.

Study Attrition

As outlined in Figure 2, attrition at post-intervention was higher in the intervention condition than in the control (49.7% vs. 19.2%, respectively). The only baseline characteristic that was significantly associated with completion of the post-intervention assessment was the gender of the respondent, with male participants significantly less likely to complete the post-intervention assessment than females (OR=0.51, p=.037, see Supplemental Table S1 for full analyses).

The Effects of the Program on Participants’ Confidence (Primary Outcome), Helping Behaviors, Mental Health Knowledge and Awareness, Mental Health Literacy, Stigma, and Psychological Distress

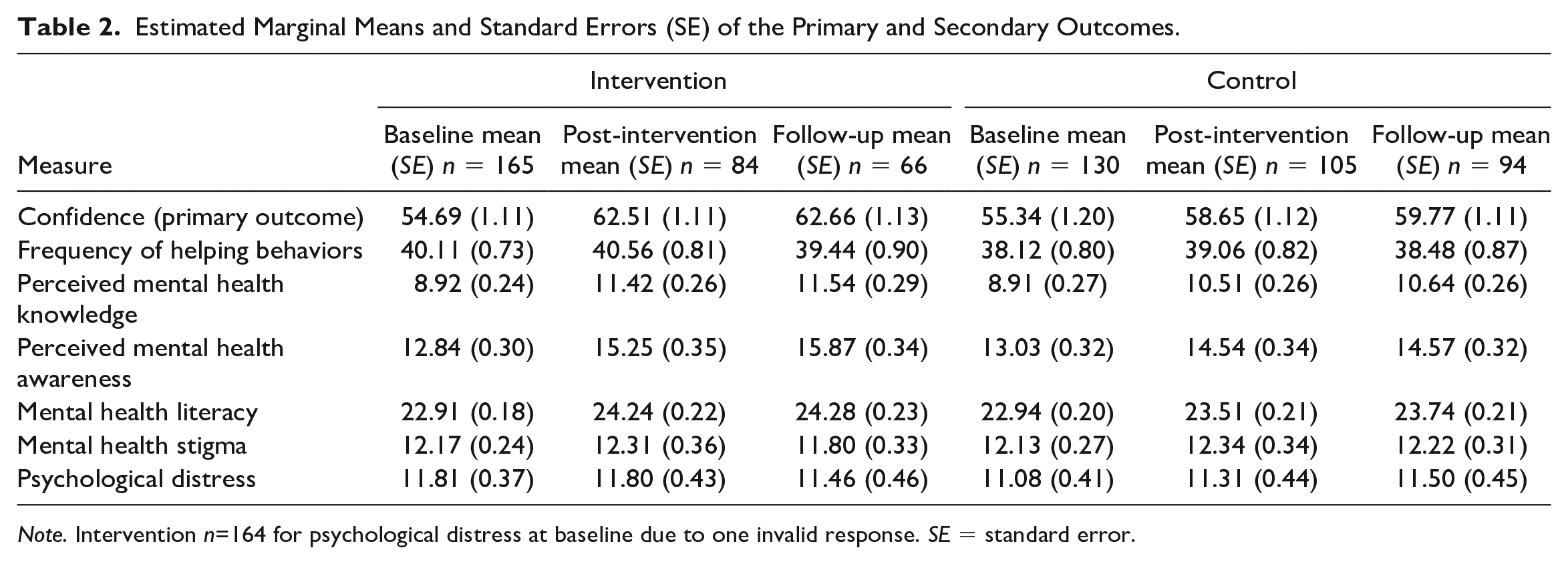

Estimated marginal means and standard errors were derived from models fitted for the primary and secondary continuous outcomes at each time point by condition. These are displayed in Table 2. The ICC for confidence was 0.051.
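To give a sense of what an ICC of this size implies for a clustered sample, the following sketch applies the standard design effect formula, 1 + (m − 1) × ICC, where m is the mean cluster size. This is an illustration only: the trial accounted for clustering through its statistical models and does not report a design effect, and pairing the mean cluster size at randomization (4.73) with the ICC for confidence (0.051) is an assumption made here for the example.

```python
def design_effect(mean_cluster_size: float, icc: float) -> float:
    """Variance inflation due to clustering: 1 + (m - 1) * ICC."""
    return 1.0 + (mean_cluster_size - 1.0) * icc


# Values taken from the text; combining them in this way is illustrative only.
deff = design_effect(mean_cluster_size=4.73, icc=0.051)
print(f"Design effect = {deff:.2f}")
```

A design effect close to 1 indicates that educators within the same school were only weakly correlated on the outcome, so clustering would be expected to inflate the variance of estimates only modestly.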

Table 2. Estimated Marginal Means and Standard Errors (SE) of the Primary and Secondary Outcomes.

Note. Intervention n=164 for psychological distress at baseline due to one invalid response. SE = standard error.

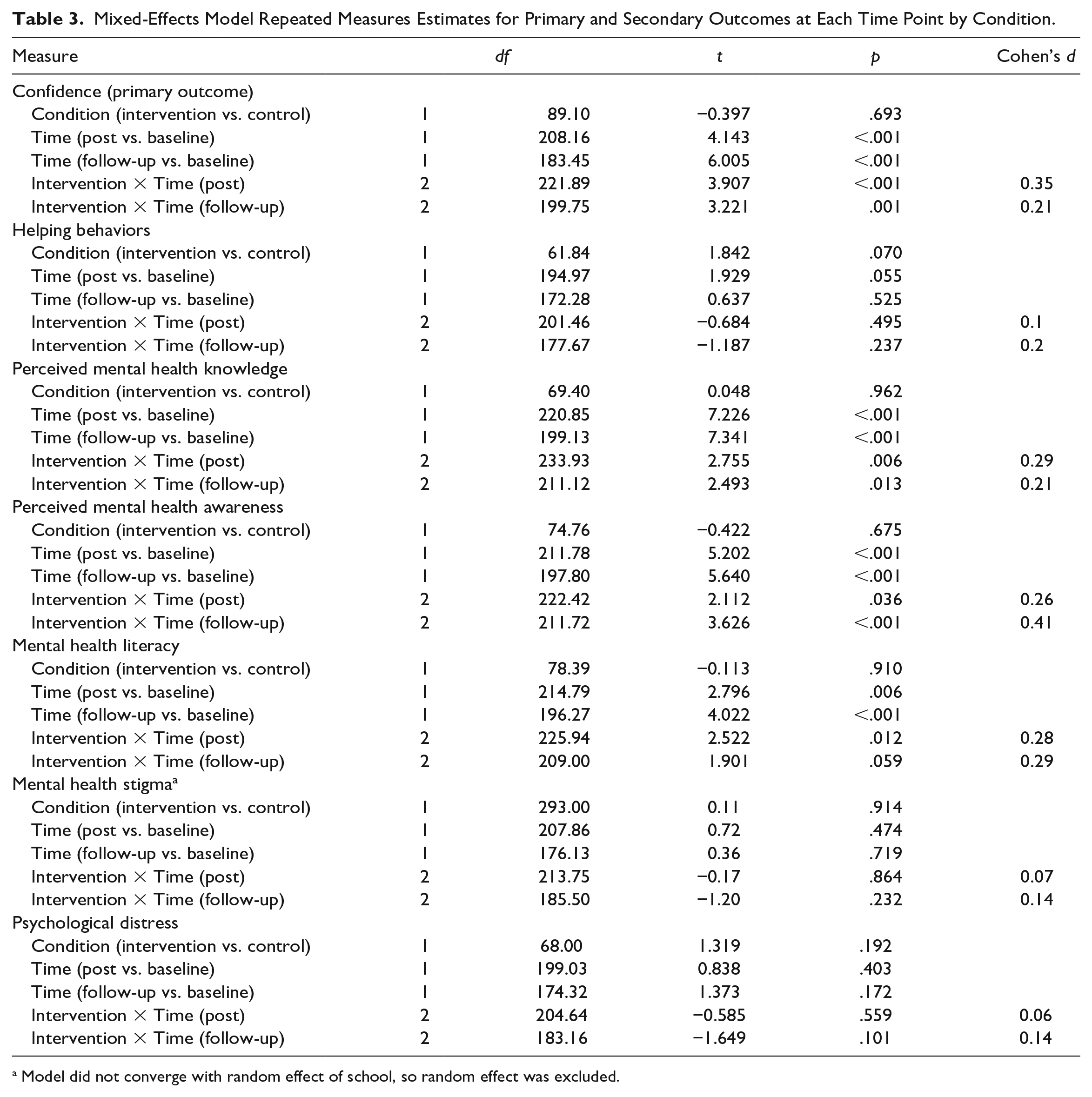

As outlined in Table 3, participants in the intervention condition had significantly greater improvements in their levels of confidence (primary outcome) than those in the control between baseline and post-intervention (d=0.35), and baseline and 3-month follow-up (d=0.21). Relative to the control group, participants in the intervention condition also reported significantly greater improvements in perceived mental health knowledge between baseline and post-intervention (d=0.29), and baseline and 3-month follow-up (d=0.21), as well as significantly greater improvements in perceived mental health awareness at post-intervention (d=0.26) and follow-up (d=0.41). When compared with the control, participants in the intervention condition also reported significantly greater increases in mental health literacy at post-intervention only (d=0.28). No significant effects were found for self-reported helping behaviors, mental health stigma, or psychological distress. At 3-month follow-up, a total of 11 participants (all in the intervention condition) reported that they had undertaken additional mental health training during the study period. The moderation analyses revealed that the intervention worked similarly across demographic subgroups and school types, and completion of a larger proportion of the intervention had no significant effect on any of the outcomes.

Table 3. Mixed-Effects Model Repeated Measures Estimates for Primary and Secondary Outcomes at Each Time Point by Condition.

Note. The model did not converge with a random effect of school, so the random effect was excluded.

Training Completions

Nearly all participants completed the training on a laptop or desktop computer (n=82/84, 97.6%), with very few participants using a mobile phone or tablet (n=2/84, 2.4%). Participants completed an average of 2.64 topics out of the five (SD: 2.26, range: 0–5). A total of 71 participants (n=71/165, 43.0%) completed the entire training program (i.e., all self-directed topics and peer-coaching activities) and 78 (47.3%) completed the self-directed topics only. Half of the sample completed 3 or more topics and accompanying peer-coaching (n=84/165, 50.9%). At post-intervention, 58.3% (n=49/84) of participants reported that they had completed the peer-coaching activities with another colleague, meeting weekly or fortnightly (n=36/49, 73.5%) to do so. Over half of the participants at post-intervention (n=43/84, 51.2%) reported that the peer-coaching was useful for understanding the content and personal development. The mean number of topics completed was higher among intervention participants who remained in the study at post-intervention (n=83, M: 3.73, SD: 1.91) when compared to those who did not (n=82, M: 1.57, SD: 2.06).

Barriers to Use, Training Satisfaction, and Perceived Effectiveness of the BEAM Training Program

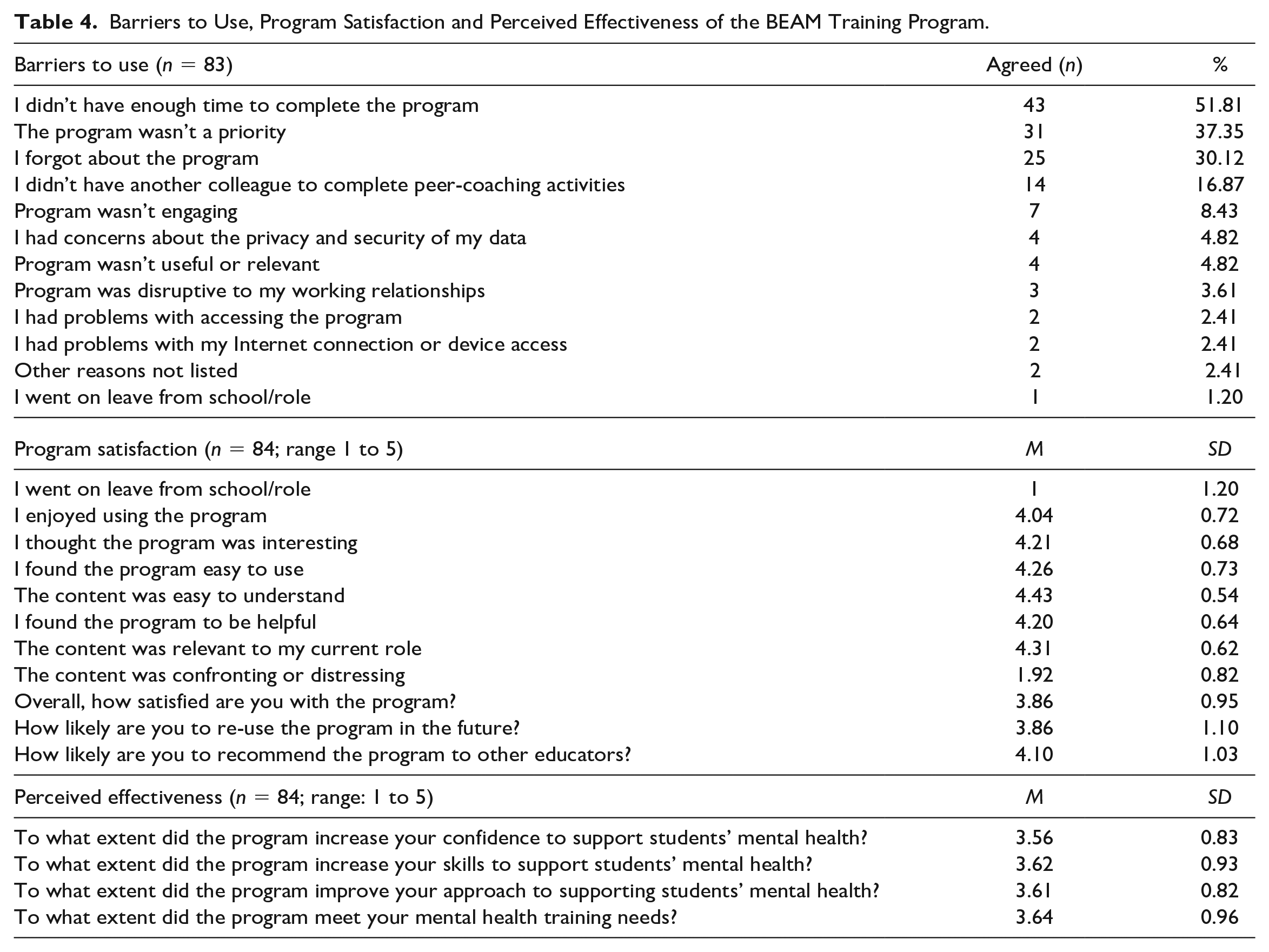

Table 4 presents the barriers to use, training satisfaction and perceived effectiveness of the BEAM training program among the educators who completed post-test. These participants reported that the most common barriers to program completion were not having enough time (n=43/83, 51.8%), low priority (n=31/83, 37.4%) and forgetfulness (n=25/83, 30.1%). Fourteen (16.9%) of these participants reported that not having a colleague available for the peer-coaching inhibited their program use. Nearly all participants who completed the post-intervention assessment agreed that the training program was easy to understand (n=82/84, 97.6%), relevant to their current role (n=79/84, 94.1%), helpful (n=76/84, 90.5%), easy to use (n=76/84, 90.5%), interesting (n=76/84, 90.5%), and enjoyable (n=70/84, 83.3%). Five of these participants (6.0%) reported that the training content was confronting or distressing. For satisfaction, 72.6% (n=61/84) were moderately or entirely satisfied with the program and 75.0% (n=63/84) agreed they would recommend it to others.

Table 4. Barriers to Use, Program Satisfaction and Perceived Effectiveness of the BEAM Training Program.

Two thirds of the sample moderately or entirely agreed that the training program improved their confidence (n=55/84), skills (n=55/84), and approach (n=56/84) to student mental health. Fifty-one participants (60.7%) reported that the program moderately or entirely met their training needs. Over two-thirds of respondents (n=44/64, 68.8%) reported that they had shared information from the program with other school staff and 43.6% (n=28/64) reported that they had implemented changes in their approach to student mental health and well-being.

Discussion

This cluster RCT aimed to evaluate the effectiveness of the BEAM program for improving secondary school educators’ confidence in recognizing and responding to students’ mental health needs as well as the frequency of their helping behaviors, perceived mental health knowledge and awareness, mental health literacy and stigma toward students with mental health problems. Consistent with the primary hypothesis, educators who received the BEAM training program reported significantly higher levels of confidence at post-intervention and at 3-month follow-up, relative to the control. Educators who received the BEAM program also reported significantly higher levels of perceived mental health knowledge and awareness at post-intervention and 3-month follow-up, relative to the control, with significant effects on mental health literacy found at post-intervention only. No significant effects were found for helping behaviors, stigma, or personal levels of psychological distress. Overall, the findings suggest that the BEAM program may address some of the training needs of Australian secondary school educators in the domain of student mental health.

The BEAM program was found to have a positive effect on educators’ confidence, mental health awareness, and knowledge, relative to the control, despite many participants reporting that they had already undertaken a moderate to extensive level of mental health training prior to the trial. This pattern of results contrasts with past studies that have found mental health training to be less effective among samples who have received prior training or who have high levels of experience working with distressed youth (Gryglewicz et al., 2018; Sánchez et al., 2021). Instead, the findings suggest that the BEAM program may induce positive effects on educators with diverse experiences and expertise in student mental health. This may have been driven by the alignment of the BEAM program with professional development frameworks for teachers and educators’ training preferences, as evidenced by the high levels of program satisfaction. Most participants described the program as relevant, helpful, and easy to understand, signifying that the content was appropriately targeted and aligned with the nature of participants’ daily work. The peer-coaching appeared to be successful in achieving active learning and collective participation, with nearly two-thirds completing these activities. Fewer than one in four teachers reported that these activities were a barrier to program completion, indicating that this type of learning activity may be an effective way to enrich collaboration between educators. However, as some participants reported difficulty in scheduling peer-coaching sessions, supplementing the program delivery with education partners and facilitators or online communities of practice may help to further support active learning and collective participation (Tsai et al., 2010). 
More specialized, intensive peer-coaching that focuses on social-emotional competence and personalized strategies may also help to improve program effects by strengthening the alignment of the BEAM program with participants’ training needs (Martin et al., 2021).

In this trial, program completion was not found to significantly moderate improvements in outcomes. Participation in the BEAM program was beneficial even among those who were not able to complete it in its entirety. This contrasts with a previous study of online mental health training for workplace managers (Gayed, Bryan, et al., 2019), where partial program completers did not report improvements in confidence. As our study was not designed to establish dose effects of program completion, it is important not to overinterpret this finding. This finding may be due to an experimental artifact such that intervention participants reported higher levels of confidence simply due to the receipt of the training program, rather than its full completion. As found in the pilot study, time, competing priorities, and forgetfulness remained the top three barriers to program completion. However, the number of participants reporting these barriers dropped markedly in this trial and completions were also higher in this study when compared with the pilot (43% vs. 16%). This indicates that the program enhancements may have increased engagement. Despite this, less than half of the current trial participants completed the full training program. While the rate of completion was higher than that of other online courses (Bawa, 2016), and a similar self-paced online mental health training program (Gayed, Bryan, et al., 2019), teachers may require greater incentives to complete the 6.5-hour course in their own time, relief of teaching duties to complete the program while working, or integration into professional development training days. While in-person mental health training was not found to be superior to online training for improving confidence in workplace managers (Gayed, Tan, et al., 2019), providing educators with optional in-person school-based facilitator sessions may lead to greater completion of the BEAM program. 
Facilitated sessions may also strengthen the school-based approach by providing more opportunities for teachers to gain feedback, come together to discuss training content, and strengthen rapport between staff (Kaufmann & Vallade, 2022). This may help to further satisfy the training needs of the cohort, although it would likely reduce the sustainability and reach of the program. Professional accreditation and endorsement from school boards may also increase completions. However, the findings suggest that a conventional course structure may not always be appropriate for experienced educators, such that individuals may want to “dip in” and “dip out” of the information offered as it interests them or when they require it. Given that educators are time-poor, future research would benefit from exploring different patterns of use to determine the “minimum dose” required for positive training outcomes. As many of the participants reported that they would recommend the training to others, the BEAM program may hold significant promise for upskilling educators in the important domain of student mental health.

In this trial, there were no significant differences in helping behaviors or educators’ levels of psychological distress between the two conditions at post-intervention or 3-month follow-up. Ceiling effects may have impacted this outcome as the frequency of helping behaviors at baseline was higher in the current sample compared with the pilot evaluation. In addition, this trial involved senior educators employed in roles with greater responsibility for student well-being. Different effects on helping behaviors may be found when the program is tested among staff new to well-being roles, general educator samples, or when different measures of behavior (e.g., time spent assisting students) are used. Examining the program with different study designs would provide more insight into the relevance of the program for broader educator cohorts and whether the BEAM program changes educators’ workloads in relation to the management of student mental health issues. Moreover, in another cross-sectional survey of this sample of educators (O’Dea, 2021), some participants reported that COVID-19 school closures altered the nature of their helping behaviors for student mental health. While the current trial was not conducted during school closures, COVID-19 may have introduced new helping behaviors that were not captured by the measure used in this study. As such, future studies may be strengthened by reviewing the types of helping behaviors assessed to ensure they align with current practice. The lack of significant effects for psychological distress is likely due to the non-clinical nature of the sample and the low levels of distress reported by the participants at baseline. A key strength of the current trial is the measurement of this outcome, alongside the number of teachers who found the content distressing, as these aspects have been largely ignored by past studies (Yamaguchi et al., 2020). 
As secondary school educators are susceptible to burnout (García-Carmona et al., 2019), monitoring unintended consequences of this type of professional development is key to preserving the mental health and well-being of training participants.

Limitations

This study was impacted by attrition, particularly among intervention participants. Although the attrition rates in the current trial were lower than the pilot trial (60–67%; Parker, Anderson, et al., 2021) and the statistical analysis incorporated all available data, the control condition appeared more motivated to complete the study surveys. This was likely due to a heightened desire to receive the training program upon completion of the study. Future studies may benefit from using additional contact measures, such as telephoning non-responders, or increasing reimbursements, as these approaches have increased retention rates in other trials of educator interventions (Schutte et al., 2018). As this study relied on self-reported measures to evaluate effectiveness, peer observations from other school staff, as well as parent and student feedback, could help to better capture the impacts of the program. Given the lack of standardized instruments designed specifically to measure teachers’ self-efficacy in relation to student mental health, results may vary when different scales are used (Brann et al., 2021). Future studies may also benefit from examining school-level factors such as culture and belongingness and student-level factors such as the presence of mental health difficulties, absenteeism and attainment to provide further evidence of the training impacts (Kidger et al., 2021). This will also help to determine whether the BEAM program is able to produce meaningful change at the student level, which may be required for broader improvements in schools’ approach to student mental health. Future studies may also benefit from the inclusion of a validated online learning scale (Yang et al., 2020) to examine the utility of the program for generating an effective learning environment and experience in the domain of student mental health. 
Emerging research has also suggested that the expectations and experiences of educators in relation to student mental health may vary by country and school type (Luthar et al., 2020). As such, future trials would benefit from evaluating the effects of the BEAM program in more diverse samples of educators, school types and locations. Finally, while program completion did not appear to moderate training effects in this trial, future studies may benefit from a deeper exploration of the relationship between adherence and outcomes. By doing so, future research can contribute to a more comprehensive understanding of how teacher training programs should be designed and delivered, given that many educators are time-poor with competing priorities.

Conclusion

This study is the first to rigorously evaluate the effects of a professional development program that blends online learning with in-person peer-coaching to improve secondary school educators’ confidence, knowledge, attitudes and behavior in relation to student mental health. The trial results indicated that the BEAM program improved educators’ confidence, perceived mental health knowledge and awareness, and mental health literacy at post-intervention, relative to the waitlist control, with some effects present at 3-month follow-up. With its flexible and accessible delivery, the BEAM program may be ideal for addressing the shortage of effective mental health training options for educators in secondary schools within Australia and beyond. While there remains value in future examinations of educator training and professional development programs to determine the strengths of different methods across international settings, this trial suggests that the BEAM program offers a scalable, safe, and effective approach to enhancing the way educators support the mental health needs of their adolescent students.

Supplemental Material

Supplemental material, sj-docx-1-jte-10.1177_00224871231208684 for A Cluster Randomized Controlled Trial on the Effectiveness of the Building Educators’ Skills in Adolescent Mental Health (BEAM) Program for Improving Secondary School Educators’ Confidence, Behavior, Knowledge, and Attitudes Toward Student Mental Health by Bridianne O’Dea, Belinda Parker, Philip J. Batterham, Cassandra Chakouch, Andrew J. Mackinnon, Alexis E. Whitton, Jill M. Newby, Mirjana Subotic-Kerry, Aimee Gayed and Samuel B. Harvey in Journal of Teacher Education

Footnotes

Acknowledgements

This work was supported by the Balnaves Foundation from a non-competitive philanthropic research grant donation to the Black Dog Institute. The funders had no role in the design, execution, analyses, data interpretation, authorship or the decision to submit the paper for publication. The statistical experts for this study were Professor Philip Batterham and Professor Andrew Mackinnon.

Author Contributions

BOD, BP, AJM, AE, JN, and MSK were involved in the conceptualization and methodology of the trial. BOD, BP, AG, and SH were involved in the conceptualization, design, and development of the intervention. BP and CC were involved in recruitment, data collection, data curation, and cleaning. PJB led the formal analysis, supported by BP, BOD, and AJM. All authors contributed to the original draft preparation, review, and editing of the manuscript.

Declaration of Conflicting Interests

The author(s) declared the following potential conflicts of interest with respect to the research, authorship, and/or publication of this article: BOD, BP, AG, and SH were involved in the conceptualization, design and development of the intervention.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Balnaves Foundation from a non-competitive philanthropic research grant donation to the Black Dog Institute. The funders had no role in the design, execution, analyses, data interpretation, authorship or the decision to submit the paper for publication.

Supplemental Material

Supplemental material for this article is available online.
