Abstract

Russia’s cyber-enabled influence operations (CEIOs) have garnered significant public, academic and policy interest. Reportedly, 126 million Americans were exposed on Facebook to Russia’s efforts to influence the 2016 US election. Indeed, to the extent that such efforts shape political outcomes, they may prove far more consequential than other, more flamboyant forms of cyber conflict. Importantly, CEIOs highlight the human dimension of cyber conflict. Focused on ‘hacking human minds’ and affecting individuals behind keyboards, as opposed to hacking networked systems, CEIOs represent an emergent form of state cyber activity. Moreover, data for studying CEIOs are often publicly available. We employ semantic network analysis (SNA) to assess data seldom analyzed in cybersecurity research – the text of actual advertisements from a prominent CEIO. We examine the content, as well as the scope and scale, of the Russian-orchestrated social media campaign. Although the campaign is often described as ‘disinformation,’ our analysis shows that the information utilized in the Russian CEIO was generally factually correct. Further, it appears that African Americans, not white conservatives, were the target demographic that Russia sought to influence. We conclude with speculation, based on our findings, about the likely motives for the CEIO.

Keywords

Introduction

Hollywood thinks it knows cyberwar. Popular imagery of conflict in the newest domain consists largely of explosions and nervous nerds with fast fingers consequentially clattering on keyboards. Strangely, this is not much different from the vision offered by cyber experts, who often concentrate on rare or even non-existent ‘cyber war’ events (Arquilla and Ronfeldt, 1993; Clarke and Knake, 2010; Gartzke, 2013; Rid, 2012).

Cyberspace is an informational domain. It is far easier to move (and manipulate) data and ideas in cyberspace than it is to alter the physical world directly. To date, the bulk of cyber conflict has involved the manipulation of information (espionage, theft of intellectual property (IP), election manipulation) rather than the damage or control of tangible ‘stuff.’ 1 While all forms of conflict exhibit a ‘power law’ distribution by dispute intensity – with few large events and far more small ones – cyber conflict appears ‘flatter’ than other domains. 2 Cyber conflict more often revolves around subtle acts of influence and information control, not the percussive destruction of human life and property. Cases of low-intensity cyber conflict are mounting, creating an imperative to re-think conceptual frames and to collect better data. States and other actors are spying on each other, stealing information, and trying to manipulate elite and popular understandings of political processes through the use of cyber-enabled influence operations (CEIOs).

Importantly for researchers plagued by the scarcity of cyber events data (Baram et al., 2023; Hamman et al., 2020), CEIO data are often publicly available. This feature of CEIOs allows researchers to study elements of cyber conflict that are otherwise inherently clandestine (Gartzke and Lindsay, 2015). However, despite data availability, little has been done thus far to examine how adversaries use cyber capabilities to select and target various domestic audiences. Here, we offer one pathway for researchers interested in the systematic empirical study of low-intensity cyber conflict.

We also seek to demonstrate that textual analysis can be used as a tool to assist in understanding the mechanisms by which CEIOs function. To analyze operations targeting networks, one would need to use forensic analysis to reverse-engineer such operations and understand their mechanisms. When it comes to cyber operations designed to target human minds, however, we can assess available textual data to gain a deeper understanding of how such operations seek to influence groups and individuals, their perceptions of surrounding reality, and their behavior. In the case of CEIOs, textual content is the nominal payload. Importantly, the content of CEIOs is often readily available in open-source format.

Interest in cyber-enabled influence operations in particular skyrocketed in the aftermath of the Russian effort to interfere in the 2016 United States presidential election. While Russian-funded influence operations have fueled considerable public debate, careful research has only started to build momentum. To date, scholars have yet to prepare a systematic examination of targeting as well as messaging strategies of Russian CEIOs. This project seeks to fill this gap. Researchers must examine mechanisms by which CEIOs function to both understand and counter such operations.

We examine the cyber-enabled influence operation initiated by Russia during the 2016 United States presidential election, using the Facebook ads dataset released by the US House of Representatives Permanent Select Committee on Intelligence. We do so by employing social media textual data analysis. First, we study what groups were targeted. Second, we use semantic network analysis (SNA), a form of social network analysis that treats words as network nodes and helps unveil themes utilized in bodies of text. We apply SNA to assess both the content and transmission of messages in order to identify different targeted populations and issues, understand how organic discourse was mimicked or ‘faked’ in target audiences, and gauge the extent to which themes were amplified throughout a given network. SNA is thus especially salient as a technique in attempting to analyze CEIOs.

Cyberspace as a domain of conflict enables adversaries to target combatants and civilians alike. Cyber-enabled influence operations specifically target domestic audiences in adversary states with the goal of affecting these publics – an activity that is bound to be highly problematic in the context of democratic target states where voters ultimately determine national policy. As such, CEIOs provide states with a tool to pierce the democratic election process that is traditionally considered a domaine réservé. 3

Researchers studying international security will benefit from considering CEIOs as part of the panoply of behaviors that pass below the threshold, or simply bypass, open armed conflict. Indeed, such operations represent a substantial portion of the most consequential, if subtle, conflict behaviors occurring in cyberspace. Whether practiced in isolation or in concert with other tools, CEIOs have the potential to undermine social cohesion or confidence in a regime or, alternately, to create foreign policy outcomes that are deemed desirable by a foreign power. CEIOs have increasingly been implemented in apparent attempts to influence domestic democratic processes. We detail one prominent example of a CEIO below, delineating the logic and likely impact of Russia’s interference in the 2016 US federal election.

‘Hacking’ human minds

Major overt acts of aggression make headlines, but subtler efforts can shape perceptions, and change the world. Cyberspace is especially well suited for influencing humans on a cognitive level for three reasons: (a) influence is conveyed via platforms rooted in cyberspace that users directly interact with; (b) cyber capabilities enhance the scale and speed of information dissemination; and (c) the emergence of cyberspace has enabled actors to micro-target audiences to an extent that was not technologically feasible in the past.

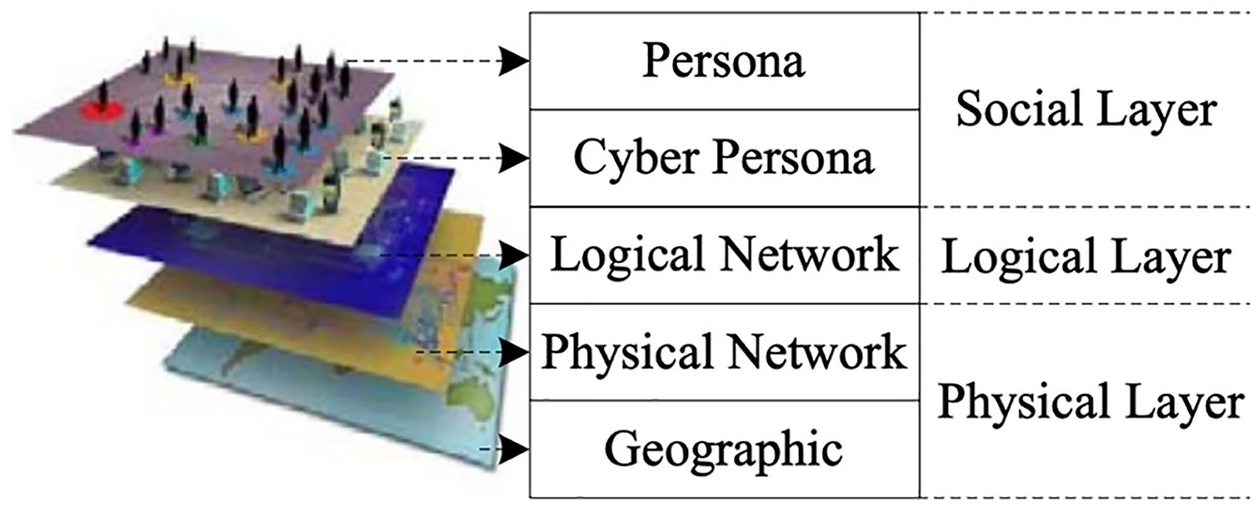

First, influence operations are facilitated in and via the virtual domain. In operational terms, cyberspace can be considered a ‘complex adaptive system’ (Kaminska, 2021), consisting of three layers: physical, logical, and social (United States Army, 2010). The three layers are further subdivided into five components (see Figure 1), consisting of geographic, physical network, logical, persona, and cyber persona components (United States Army, 2010). While terms (names) for the cyberspace layers vary from source to source (Gomez, 2020; Libicki, 2009), there is a general consensus regarding their ordering. The space where humans interact with machines (generally in a network) is considered the top layer and is where data are transformed ‘into a form suited for human consumption’ (Gomez, 2020; United States Army, 2010).

Figure 1. Cyberspace layers as visualized by Yang and Yu (2014), adapted from Figure 2.1 in United States Army (2010). 5

While cyber operations that aim to affect networked systems often occur below the level of human interaction – at the physical and logical layers – the surface (social) layer of cyberspace is susceptible to information operations designed to reach and affect humans behind keyboards. Penetrating through this social layer, CEIOs can potentially have effects on their targets outside of cyberspace by producing a change in behavior ‘in real life’ (Gomez, 2020; Libicki, 2009; Lin and Kerr, 2017). Importantly, the psychological mechanisms that influence operations utilize on a cognitive level are arguably easier to exploit in the cyber domain (Gomez, 2020). 4 The reach of such operations can be far and wide, given their relative ease of use and potential impact (Jensen et al., 2019; Whyte, 2020).

Second, cyberspace increases the rapidity and scale of information (and influence) transmission. When compared with more traditional ‘black propaganda,’ 6 cyber operations spread disinformation with unprecedented speed (Warner, 2019). During the Cold War, the USSR initiated Operation Infektion, also known as Operation Denver, intended to disseminate the falsehood that the United States engineered AIDS at military medical laboratories in Fort Detrick (Rid, 2020; Walton, 2022). The Soviet Union took an indirect route to spread this false information through a news story that first appeared in the Indian daily The Patriot, in 1983. By 1987, the accusation that the United States had engineered AIDS appeared on the CBS evening news with Dan Rather (Yaffa, 2020). It took this lie propagated by Soviet operators four years to reach broad American audiences. The lie had special resonance among African Americans, 50% of whom believed in 2005 that AIDS was human-made (Bogart and Thorburn, 2005). The Internet, along with social media, makes it much easier to propagate false information at a greater speed and scale.

Third, algorithms enable the ‘micro-targeting’ of citizens with information designed specifically to influence and mobilize them. Perceptions of social media have evolved, however, and increasingly carry a security dimension for several reasons, including privacy concerns. Social media platforms collect and store troves of information about their users, which they then monetize by promising to connect advertisers with closely matched users (Cotter et al., 2021), including in political campaigning.

The Cambridge Analytica scandal revealed an especially dark side of this issue (Cadwalladr and Graham-Harrison, 2018). The now defunct company used personal user data to build psychographic user profiles (Garcia-Diaz et al., 2022; Kanakia et al., 2019) and influence voting preferences through tailored advertising (Hinds et al., 2020). Brad Parscale, digital media director for Donald Trump’s successful 2016 presidential campaign, claimed that targeting highly specific clusters of voters daily on Facebook helped win the election (Cotter et al., 2021). On Facebook, users are grouped into different ‘interest’ categories that are then sold to advertisers. Russian propagandists gained access to domestic US audiences through these same categories in their efforts to interfere in the 2016 election.

While the role of social media in spreading civil revolutions and toppling authoritarian regimes can be seen as enhancing the spread of democracy, democratic institutions are themselves vulnerable to assault through the internet. For example, the internet has been shown to facilitate protest mobilization (Breuer et al., 2015), including the spread of information about protests (Tufekci and Wilson, 2012), and to undermine government censorship of conventional news outlets (Chowdhury, 2008).

On the other hand, social media raise a host of concerns about the manipulation of electoral processes. Indeed, studies have examined the use of social media as a means of spreading false information (Allcott et al., 2019) aimed at influencing audience opinions and behaviors for malicious purposes (Prier, 2020), especially during elections (Kim et al., 2018). Influence operations employed through social media can be used to ‘hack’ democracies with the intent to undermine democratic processes by corrupting the marketplace of ideas and ‘dampening the capacity’ of democracies to engage in healthy self-correction (Whyte, 2020).

Taking a closer look: Cyber-enabled influence operations

We focus on what we consider two of the most important features of cyber-enabled influence operations. First, CEIOs are an expression of international competition at a lower level than overt physical violence. Second, contrary to much of the reporting on the topic, which has labeled these cyber-enabled attempts at influence as ‘misinformation’ and ‘disinformation’ campaigns, much of the actual content delivered by social media is factually accurate. We discuss these two important features of CEIOs, each in turn.

The human dimension of cyber conflict is becoming increasingly important (Shandler and Canetti, 2023). Instead of a modern, electronic equivalent to conventional warfare, scholars argue that cyber conflict to date is akin to espionage/counter-espionage contests (Lindsay, 2024; Rovner, 2020). Indeed, there is considerable continuity between Cold War influence operations and today’s cyber operations (Rid, 2020; Walton, 2022), where cyber ‘attacks’ are instruments of subversion (Maschmeyer, 2022).

While the use of social media to conduct influence operations is a modern phenomenon (Libicki, 2020), information is a perennial tool of statecraft (Warner, 2014). 7 Russia, and before it the Soviet Union, is a major exponent of various influence techniques as a function of state policy (Shultz and Godson, 1984). These operations have been studied as a continuation of Russian disinformation and ‘active measures’ (Rid, 2020), and as elements of informational warfare (Lin and Kerr, 2017).

Perhaps to a greater extent than any other form of political competition or conflict, influence operations blur neat distinctions between peace and war. Influence operations allow the ‘supreme art of war’ – ‘to subdue the enemy without fighting’ (Sun Tzu, 2007), and are increasingly understood as a major non-military means of competition (Jonsson, 2019; Kania, 2020). If international politics is considered an ongoing struggle (as Bolshevik leaders historically defined it), as opposed to alternating between periods of war and peace (Shultz and Godson, 1984), then cyber-enabled influence operations constitute a particularly insidious tool. Indeed, successfully countering cyber threats necessitates a response to cyber-enabled influence operations, which are integral to how countries advance their goals in the cyber domain (Libicki, 2020).

Discussions of CEIOs and their equivalents often emphasize terms like ‘misinformation’ and ‘disinformation,’ suggesting the unintentional or intentional spread of falsehoods, respectively. 8 For example, the events of 2016 sparked much interest within the social science literature on the effects of cue giving, fake news, disinformation, and counter-messaging campaigns. 9 While many influence operations may contain falsehoods, CEIOs that rely on information that is factually correct are arguably far more effective. Yet, this feature of influence operations is often either omitted, or noted just in passing (Shultz and Godson, 1984: 38).

The use of falsehoods for political purposes is neither novel nor reserved for non-democratic states. 10 However, the use of truthful information as part of a broader influence campaign requires more nuance, research, and planning. Truthful claims may take hold of public thinking more than ‘fake news.’ According to Ladislav Bittman, a former senior disinformation officer, when targeting Western audiences, the first step was to obtain intelligence about existing societal cleavages, and then amplify these, instead of creating a ‘big lie’ (Walton, 2022). In the case of Operation Infektion, it was not the Soviets who came up with the idea that AIDS had been secretly manufactured by the US government. In fact, as reported by Rid (2020), this theory first emerged in the gay rights activist community in the United States.

CEIO mechanisms

CEIOs can be understood as targeted efforts to influence the perceptions or beliefs of a given audience, with the goal of altering behavior in order to advance a specific political agenda (Larson et al., 2009). CEIOs can be pursued with the intent of instilling confusion in a population, but they can also be used to promote or reinforce consensus, or to induce apathy. CEIOs are influence activities conducted through cyberspace, aimed at affecting an individual’s cognitive status/condition and/or psychological perceptions (Larson et al., 2009). Adversaries may seek to reinforce existing latent perceptions by targeting certain groups that hold specific biases and amplifying those biases. We focus on CEIOs where the apparent intended effects involve achieving behavioral (political) changes among members of a given electorate.

While CEIOs can be seen as an extension of an intelligence contest (Rovner, 2020), the ability to target audiences with unprecedented scale and speed (Warner, 2019), as well as the precision and accuracy afforded by such campaigns, represent a considerable source of innovation. Social media platforms such as Facebook allow actors to micro-target audiences based on their interests and place the messages in the format that the users are most likely to pay attention to and engage with. This targeting process might be consequential, as it allows for inauthentic behavior to affect how political messages are received and shared on social media. We adopt a framework further detailed in previous research (Vićić, 2021; Vićić and Harknett, 2024).

We conceive of CEIOs as occurring in three phases, Identification–Imitation–Amplification (IIA). The first step in this cycle involves identifying (I) groups of individuals and issues that should be targeted and exploited through CEIOs. Microtargeting allows a foreign entity to identify individuals using their online footprint. Social media is particularly suitable for microtargeting because the subjects of influence can be identified based on their various preferences, which are shared online as part of their online identities. Simultaneously, it is possible to identify potential fears, biases, and preferences of the chosen targets. In the second step, CEIOs imitate (I) organic conversations in the identified groups as a bridge to achieving influence over these groups. Cyberspace allows for imitation by foreign actors, who can pose as members of the target group and participate in domestic conversations. In this way, target group identities and the salient issues can be emphasized and communicated back to the target groups. Third, manipulated conversations are then amplified (A) using social media tools, such as automated software ‘bots,’ to increase the frequency of, and build on, previous messaging. The IIA pattern can be continuously expanded by identifying new salient issues and groups with the goal of creating discordant representations of perceived reality in the target groups or establishing group echo chambers.

CEIOs are distinctive in that the accumulation of effects, while difficult to measure, is part of the operational cycle. For CEIOs, the payload (information) persists, accumulates, and metastasizes. With a DDoS attack, for example, once the attack stops, the effects cease. In contrast, with CEIOs, the cognitive effects can persist and accumulate within a target’s mental models, and can be re-activated at a later point in time. Moreover, the effects of CEIOs are unlikely to be as direct as those of cyber operations targeting networked systems. While CEIOs could conceivably affect electoral outcomes directly by changing the behavioral preferences of target audiences, they may more often result in cognitive changes affecting (for example) militancy, distrust of institutions or digital outlets, changed cybersecurity behavior (Shandler and Gomez, 2022), as well as changes in a target’s location on the left–right political spectrum (further entrenchment), and changes in trust in government more broadly. These effects may vary – from swaying voters’ opinions, to mobilizing support, and even discouraging certain individuals from voting (suppression).

In the following section, we turn to the case of Russian use of cyber-enabled influence operations on Facebook during the 2016 US presidential election. In doing so, we identify the audiences that were targeted by this CEIO, and delineate the themes that were emphasized in targeting these audiences.

Russian election interference in the 2016 US presidential election

Russian cyber operations linked to the 2016 US presidential election garnered significant attention in the media and concern from policy makers and academics. Reportedly, 126 million Americans were exposed to the Russian CEIO on Facebook between 2015 and 2017 (Ingram, 2017). Facebook is said to have sold $150,000 worth of political advertising to Russian sources (Jensen et al., 2019). While this is a minuscule figure, particularly in national security terms, its consequences were disproportionate to its size. This of course reflects both the efficiency and massive potential impact of CEIOs.

In official US government documents, the intelligence community has identified numerous and broad-ranging goals of Russia’s social media activity. For example, the US indictment against a Russian organization known as the Internet Research Agency (IRA) suggests that it ‘had a strategic goal to sow discord in the US political system, including the 2016 US presidential election’ (Indictment, United States v. Internet Research Agency, et al., 2018). Another putative objective of the IRA was the polarization of US society based on social, racial, and ideological differences. Additionally, undermining public faith in the democratic process, as well as compromising the campaign of Democratic candidate Hillary Clinton, were listed in other US government documents (Office of the Director of National Intelligence [ODNI], 2017; Senate Select Committee on Intelligence, 2020). According to a declassified report authored by staff members of the Central Intelligence Agency, the Federal Bureau of Investigation, and the National Security Agency, the Russian election interference represents both an effort to undermine the liberal international world order and an escalation as compared to previous operations (ODNI, 2017: ii).

The Report of the Senate Select Committee on Intelligence (2020), focused on Russia’s ‘active measures’ during the 2016 US election, remarked that the ‘Russian information campaign exploited the context of the election [. . .] the preponderance of the operational focus [. . .] was on socially divisive issues – such as race, immigration, and Second Amendment rights – in an attempt to pit Americans against one another and against their government.’ In assessing Russian activities and intentions during the 2016 US election, the Office of the Director of National Intelligence (2017) released a report stating that, ‘Russia’s goals were to undermine public faith in the US democratic process, denigrate Secretary Clinton, and harm her electability and potential presidency.’ The report further states that, ‘Putin and the Russian Government developed a clear preference for President-elect Trump’ (Office of the Director of National Intelligence, 2017).

In the aftermath of the release of a redacted version of Russian-purchased Facebook ads, a limited number of studies examined the content of these political advertisements. For example, one study using sentiment analysis assessed the emotional appeals of the text of the ads, finding that textual sentiment ratings went down before the 2016 election, and then went up after the election results were announced (Alvarez et al., 2020). Another study (Kim et al., 2018) found that some of the topics used in the Russian Facebook ad campaign (including race, police brutality, nationalism, immigration, and gun rights) also appear on the Pew Research Center list of the top ten most important voting issues (Alvarez et al., 2020; Etudo et al., 2023). 11 Other studies find that the ads used in the Russian CEIO on Facebook were divisive, were facilitated by microtargeting (Ribeiro et al., 2019), and were built to incite anger and fear (Etudo et al., 2023; Vargo and Hopp, 2020). In terms of scope and scale, the Russian campaign spread over platforms including Facebook and Twitter and was promoted through web search engines like Google and Bing (Spangher et al., 2018). In terms of resonance, studies investigating the propagation of ideology on Twitter show that conservatives retweeted Russian trolls 31 times more often than liberals, with the most retweeted content originating from Tennessee and Texas (Badawy et al., 2018).

Despite the interest that the Russian information campaign garnered, there is no unified way to understand its objectives. Sowing discord (Alvarez et al., 2020; Mueller and Special Counsel’s Office Dept of Justice, 2019) is broadly described as the goal of the CEIO, but deeper analysis is still lacking, and needed. Both the security community (Goldman et al., 2020) and Facebook itself (Kelly, 2019) highlight the urgency of conducting research to understand the cyber-enabled influence operation on Facebook. To date, both government reports and the academic literature refer to the diverse campaign goals described above. Additionally, the focus in analyzing the campaign has been on disinformation, while other aspects of the Russian social media CEIO have been somewhat overlooked. Finally, studies of cybersecurity have yet to come up with a generalizable theory to explain the events above in the broader context of CEIOs.

Methodology: Using text-as-data in network analysis

Cyber-enabled influence operations are aimed at producing effects on their target audiences via text, audio and visual messaging. Today, such messages, collected from platforms such as Facebook, Twitter, and Reddit, are available to researchers. Open-source text can be treated as data and used to develop relevant analytical details – a corpus meta-network (Carley, 2002; Krackhardt and Carley, 1998).

Network analysis refers to the study of relationships between ‘things,’ where the ‘unit of analysis is not an individual actor, but the collection of actors and relationships that form the network’ (Maynard, 1997). Semantic network analysis (SNA) refers to the study of relationships between words and concepts. SNA rests on the idea (Carley, 1997) accepted in cognitive science and psychology that people understand the world through internal representations or mental models (Jones et al., 2011). As was first recognized by Craik (1943), and further discussed by Johnson-Laird (1983), human beings employ tacit mental processes to construct mental models of the world. Semantic networks or maps are a verbal representation of cognitive constructs that reflect subjects’ knowledge and understanding of a certain thing (Johnson-Laird, 1983; Rouse and Morris, 1986). SNA can be defined as network analysis that treats words and concepts as nodes with the goal to build networks of concepts and uncover meaning from the structure of those networks (Shim et al., 2015). SNA is widely used in the natural and social sciences (Carley, 1993; Kim et al., 2007; Maynard, 1997; Shim et al., 2015).

Bi-modal networks are an example of a meta-network, which is used for structural data analysis. Meta-networks reveal how different entities are linked. Multi-mode networks can help to reveal relational social structure based on the categorization of text into different ontological groups (Diesner and Carley, 2005). The eight ontological categories are agent, event, knowledge, location, organization, resource, task, and attribute. Categories can be assigned to nodes to facilitate analysis. Bi-modal network analysis of the Russian CEIO can reveal the underlying relational structure between Facebook ads and target groups. Constructing a bi-modal network will help test the expectation that CEIOs are identifying and targeting particular groups. It can also visually represent the volume of the campaign. As the first step in this analysis, a bi-modal network will show how Russian ads cluster around different target groups.
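The bi-modal structure described here can be illustrated with a minimal sketch. It assumes each ad has already been coded with a single primary target group; the ad identifiers, the records, and the function name `build_bimodal_network` are hypothetical and are not drawn from the released dataset or from ORA.

```python
from collections import defaultdict

# Hypothetical ad records: (ad_id, target_group). Identifiers and labels
# are illustrative only, not taken from the released dataset.
ads = [
    ("ad_001", "African American"),
    ("ad_002", "African American"),
    ("ad_003", "Anti-Immigration"),
    ("ad_004", "Hispanic"),
    ("ad_005", "African American"),
]

def build_bimodal_network(records):
    """Return the edges of a two-mode network and a group -> ads mapping.

    Nodes are tagged with their mode ("ad" or "group") so that ties only
    ever connect an ad node to a group node.
    """
    edges = []
    group_to_ads = defaultdict(set)
    for ad_id, group in records:
        edges.append((("ad", ad_id), ("group", group)))
        group_to_ads[group].add(ad_id)
    return edges, dict(group_to_ads)

edges, group_to_ads = build_bimodal_network(ads)

# The degree of a group node is the number of ads clustered around it.
group_degree = {group: len(members) for group, members in group_to_ads.items()}
for group, degree in sorted(group_degree.items(), key=lambda kv: -kv[1]):
    print(f"{group}: {degree} ad(s)")
```

In such a representation, the degree of each group node gives a simple measure of how heavily that group was targeted, which is what a visualization of ad clusters conveys graphically.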

In the second stage of this analysis, we examine the uni-modal semantic network built utilizing the text from the Facebook ads in the sample. SNA helps understand the meaning of the raw content. 12 As discussed by Shim et al. (2015: 58), important properties of textual data can be uncovered, such as dominant concept subgroups, bridging concepts, and frequently mentioned terms. SNA also helps unveil the underlying meaning of text. By mapping dominant concept subgroups in texts, and then using the bridging concepts (established through centrality analysis), SNA can help map both main arguments as well as the underlying meaning of text and reveal hidden meanings (Newman, 2006; Shim et al., 2015). SNA helps to uncover the dominant words and themes used across ads to target specific social groups. It is thus possible to assess some of the theoretical expectations of this project. For example, how did the designers of the Facebook CEIO seek to mask their identity and pose as members of target groups? Additionally, what were the salient themes that were used, and how do they differ across different target groups? Are there underlying meanings within subgroups and what are those meanings?
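To make the notion of a bridging concept concrete, the sketch below computes betweenness centrality on a toy semantic network. This is an illustration under stated assumptions: the text does not specify which centrality measure was used, betweenness is simply one common choice for locating bridges, and the concepts and ties are invented. The function is a plain implementation of Brandes' algorithm for undirected, unweighted graphs.

```python
from collections import deque

def betweenness(adj):
    """Brandes' algorithm for an undirected, unweighted graph.

    adj maps each node to the set of its neighbours.
    """
    score = {v: 0.0 for v in adj}
    for s in adj:
        stack = []
        preds = {v: [] for v in adj}
        sigma = {v: 0 for v in adj}   # number of shortest paths from s
        dist = {v: -1 for v in adj}
        sigma[s], dist[s] = 1, 0
        queue = deque([s])
        while queue:                  # breadth-first search from s
            v = queue.popleft()
            stack.append(v)
            for w in adj[v]:
                if dist[w] < 0:
                    dist[w] = dist[v] + 1
                    queue.append(w)
                if dist[w] == dist[v] + 1:
                    sigma[w] += sigma[v]
                    preds[w].append(v)
        delta = {v: 0.0 for v in adj}
        while stack:                  # accumulate dependencies backwards
            w = stack.pop()
            for v in preds[w]:
                delta[v] += sigma[v] / sigma[w] * (1 + delta[w])
            if w != s:
                score[w] += delta[w]
    # Each undirected pair is counted from both endpoints, so halve.
    return {v: c / 2 for v, c in score.items()}

# Hypothetical concept ties: two dense subgroups joined by "community".
ties = [
    ("police", "brutality"), ("police", "justice"), ("brutality", "justice"),
    ("justice", "community"), ("community", "vote"),
    ("vote", "election"), ("vote", "candidate"), ("election", "candidate"),
]
adj = {}
for a, b in ties:
    adj.setdefault(a, set()).add(b)
    adj.setdefault(b, set()).add(a)

scores = betweenness(adj)
bridge = max(scores, key=scores.get)
print(bridge, scores[bridge])
```

In this toy network the concept connecting the two subgroups receives the highest score, which is the property that makes centrality useful for locating bridging concepts between themes.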

This study adopts an exploratory approach. In applying SNA, we utilize the NetMapper software for extracting textual networks from Facebook ads, and ORA for network analysis and visualization.

Data and sampling procedure

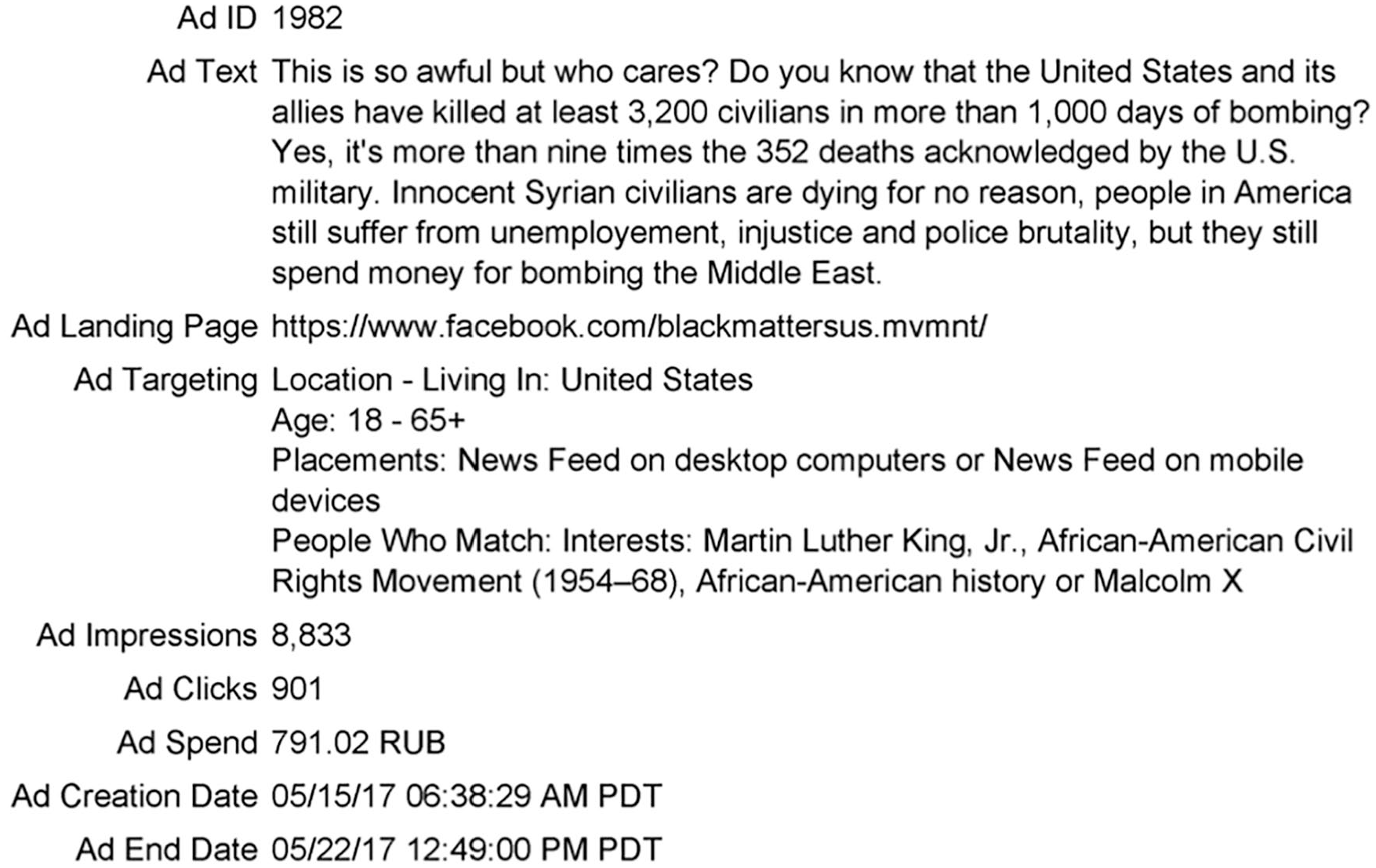

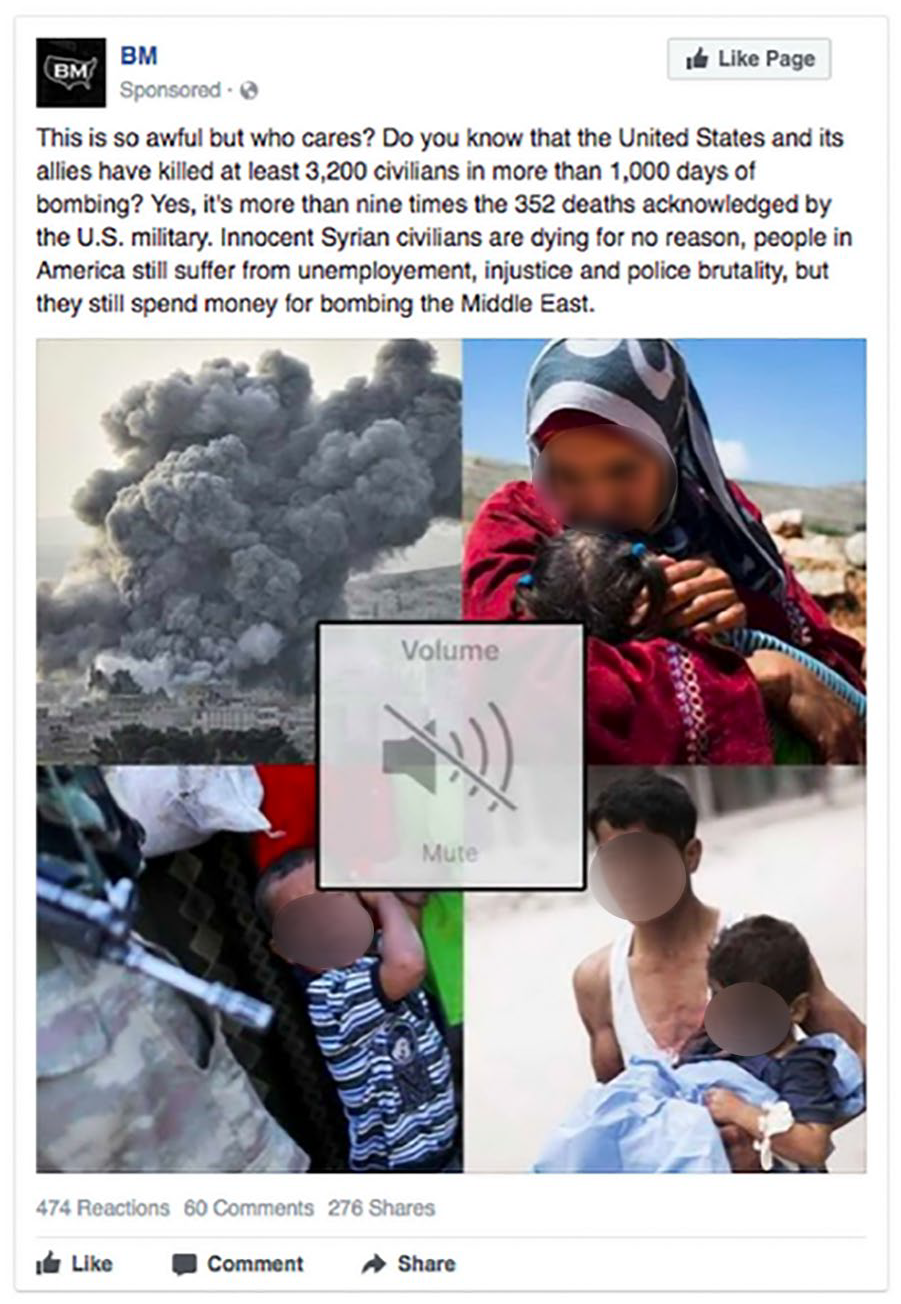

To explore what a CEIO on social media looks like, and to examine the mechanisms by which such operations function, we focused on Russian-purchased Facebook ads. The US House of Representatives Permanent Select Committee on Intelligence released all Russian-purchased ads from the period between 2015 and 2017. 13 There were 3,519 ads released in ‘.pdf’ format (for an example ad, see Figure 3). These data are unstructured text. Meta-data include the following categories: ad ID, ad text, ad targeting location, ad interests, excluded connections, age, language, number of ad impressions, ad clicks, ad spend, ad creation, and ad end date (see Figure 2). Most of the categories are self-explanatory except for ad impressions, which can be understood as the number of views of that particular ad. As Impressions (n.d.) explains, ‘if an ad is on screen and someone scrolls down, and then scrolls back up to the same ad, that counts as 1 impression.’ An ad that appears on a user’s screen during scrolling is thus recorded as a view of that ad. Ad clicks are recorded separately. Some of the ads were redacted for either text or imagery, or both.

Figure 2. Example of Facebook ad meta-data

For the purposes of this project, 200 ads were randomly selected for analysis. The selected ads constitute a representative sample of the ads that were released. Sampling is a well-understood and commonly applied technique in the social sciences that is particularly appropriate where intensive coding of data can help to make these data more usable (King et al., 1994).
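The sampling step can be sketched as follows. The text states only that 200 ads were randomly selected; the sketch assumes simple random sampling without replacement, and the integer identifiers and the seed are hypothetical stand-ins chosen for reproducibility.

```python
import random

TOTAL_ADS = 3519    # number of ads released, as reported in the text
SAMPLE_SIZE = 200   # sample size reported in the text

# Hypothetical identifiers standing in for the released ads.
ad_ids = list(range(1, TOTAL_ADS + 1))

# Arbitrary seed (an assumption) so the draw can be reproduced.
rng = random.Random(2016)

# Simple random sample without replacement: every ad has the same
# probability of selection and no ad can be drawn twice.
sample = rng.sample(ad_ids, SAMPLE_SIZE)

print(len(sample), len(set(sample)))
print(f"sampling fraction: {SAMPLE_SIZE / TOTAL_ADS:.2%}")
```

Fixing the seed is a design choice that lets other researchers recover the same subset from the same ordered list of ads.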

Visual representation of the Facebook ad (ad id 1982)

‘Building’ the network

To conduct SNA, textual data must first be preprocessed. Text preprocessing involves preparing documents for network extraction (network building) and analysis. Networks are then extracted from the preprocessed text by identifying words and concepts and coding them as nodes. Ties (links or connections) between nodes are identified based on co-occurrence in each ad text (performed by network extraction software). The process of building networks is described as the data-to-model (D2M) process (Carley et al., 2012). The D2M workflow generally involves a series of separate steps, including data/text collection, text pre-processing, thesauri and delete list generation, and network generation, visualization, and analysis (Carley et al., 2012). D2M was followed in this project. The step-by-step network extraction process is delineated in Online Appendix A. The coding procedure for identifying targeted groups based on ad meta-data information is described in Online Appendix B.
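The extraction logic described above can be sketched in a few lines of Python. This is a simplified stand-in for what NetMapper does, not its actual implementation: the thesaurus entries, the delete list, the two example ad texts, and the choice to treat the whole ad as the co-occurrence window are all assumptions made for illustration.

```python
import re
from collections import Counter
from itertools import combinations

# Hypothetical thesaurus and delete list; the study's own lists are in its Online Appendix A.
THESAURUS = {"cops": "police_officers", "cop": "police_officers", "police": "police_officers"}
DELETE_LIST = {"the", "a", "an", "of", "to", "and", "is", "are"}


def preprocess(text):
    """Lowercase, tokenize, drop delete-list words, and map synonyms to one concept."""
    tokens = re.findall(r"[a-z_']+", text.lower())
    return [THESAURUS.get(t, t) for t in tokens if t not in DELETE_LIST]


def build_network(ads):
    """Map each unordered concept pair to the number of ads in which both concepts occur."""
    edges = Counter()
    for text in ads:
        concepts = sorted(set(preprocess(text)))  # each concept counted once per ad
        edges.update(combinations(concepts, 2))
    return edges


# Two invented ad texts, used only to exercise the functions.
ads = [
    "The cops are part of our community",
    "We like our community and the police",
]
network = build_network(ads)
print(network[("community", "police_officers")])
```

Note that ‘we,’ ‘our,’ and ‘like’ are deliberately absent from the illustrative delete list, so they survive as nodes.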

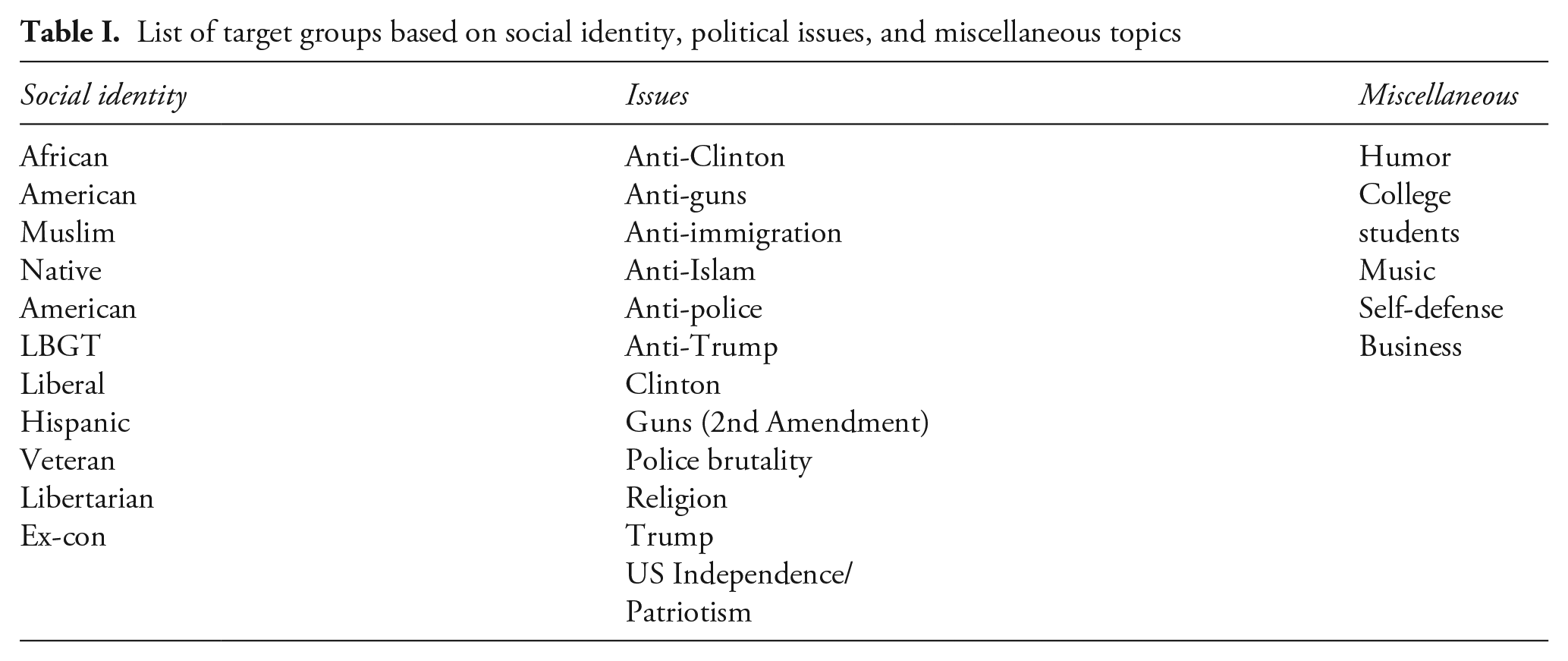

The coding procedure was applied to the whole sample of randomly selected ads. Overall, multiple groups of ads were identified (see Table I). Again, groups were coded using meta-data listed interests. There are thematic similarities among groups (e.g. ‘Anti-police’ and ‘Police brutality’). Ads where the target interests were listed as ‘Cops block’ were coded as ‘Anti-police,’ but ads where the interests were listed as ‘Police brutality’ were coded as such.

List of target groups based on social identity, political issues, and miscellaneous topics

Descriptive statistics

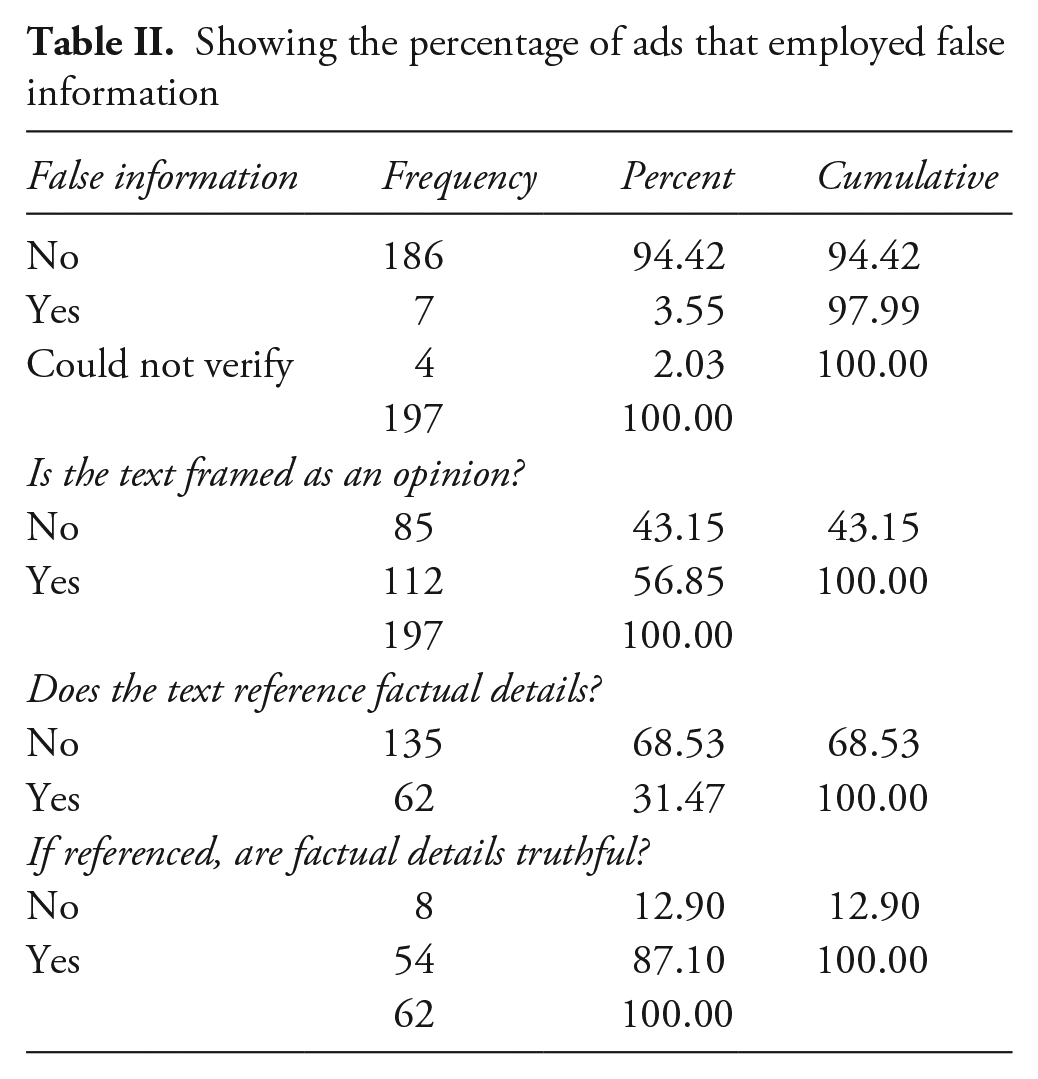

The largest primary target group of the Russian ad buy, based on listed Facebook interests, was African Americans, targeted with 108 ads (54.27% of the sample), followed by issue ads targeting Anti-Immigration (16 ads or 8.04%), and Hispanic (11 ads or 5.53% of the sample). For ads in the sample, total spending was 350,777.25 rubles, or roughly $5,500 (Shane and Goel, 2017). Prima facie, in terms of the validity of the content, 186 ads or 94.42% of the sample employed information that was factually accurate (i.e. not false), 7 ads or 3.55% used false information, while the information in 4 ads (2.01%) could not be verified. Digging a bit deeper, 56.86% of the ads are framed as an opinion, and only 31.47% of the ads actually reference factual details. However, of those ads where factual details are referenced, this information (as presented) is truthful in 87.10% of cases. For distribution of ads employing false information, see Table II.

Showing the percentage of ads that employed false information

The location of ads does not appear especially inconsistent with the geographical distribution of the US population (see Figure 4). Rural regions are represented (Idaho, Minnesota) as well as the Midwest (Illinois, Kansas), North (New York), South (Georgia, Texas) and West (California). Some ‘swing states’ in the 2016 election are included in the sample (Ohio, Pennsylvania, Virginia, Wisconsin) but others are not (Colorado, Florida, Iowa, Michigan, Nevada, New Hampshire, North Carolina). In short, the strategy emphasized in Donald Trump’s successful electoral campaign of targeting key swing states to win electoral votes does not appear to have been the focus of the Russian ad buy (Mahtesian, 2016).

Map showing locations in the United States that were targeted with ads in the sample

Results

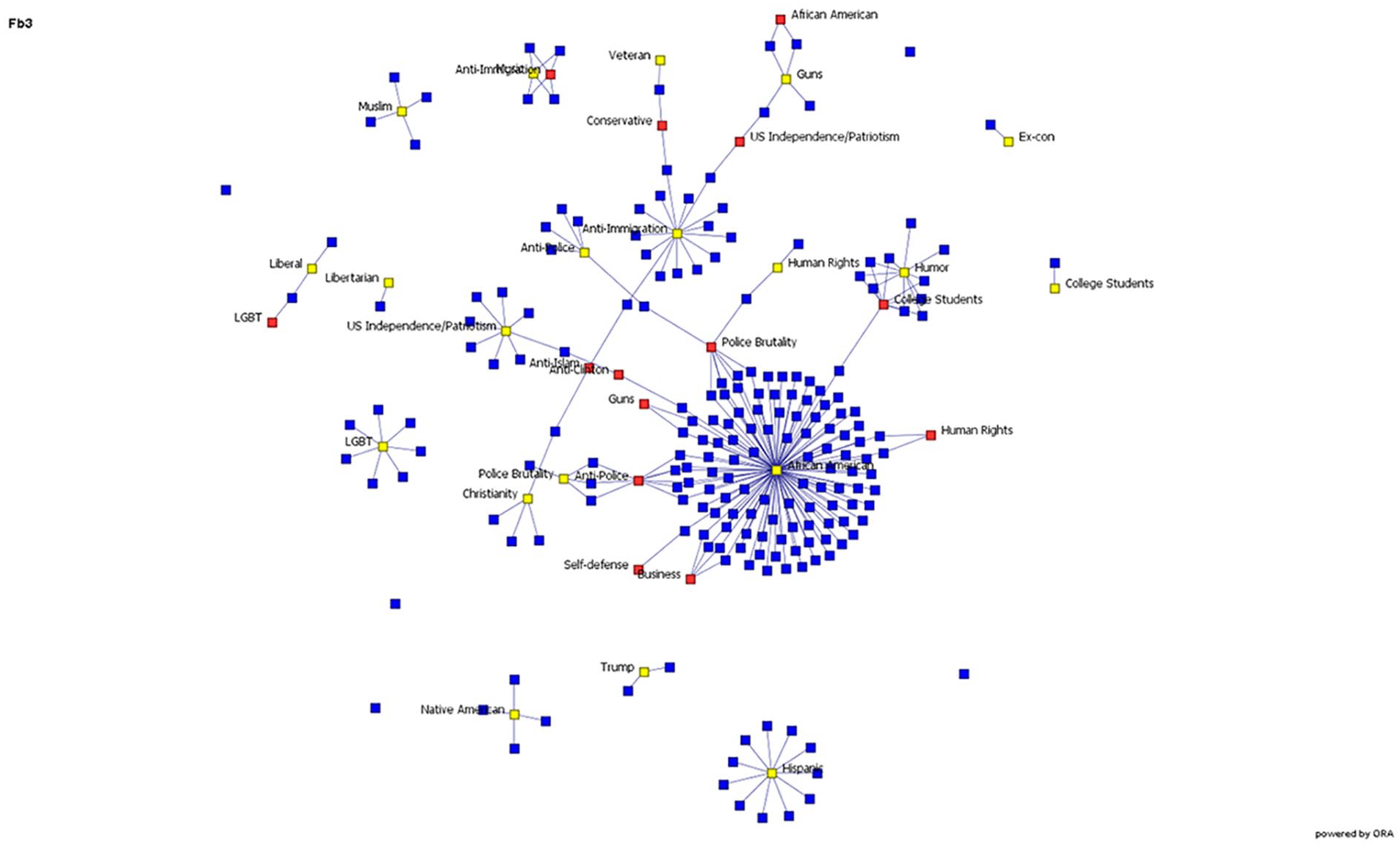

Two sets of results generated in ORA are presented below. Figure 5 depicts a visualization of the Russian Facebook influence operation based on the randomly drawn sample used in this study. This is a between-ads (documents) network, which shows relational ordering of the ads and groups targeted based on declared interests. We can see that there is clustering in the data around target groups as expected.

Bi-modal network

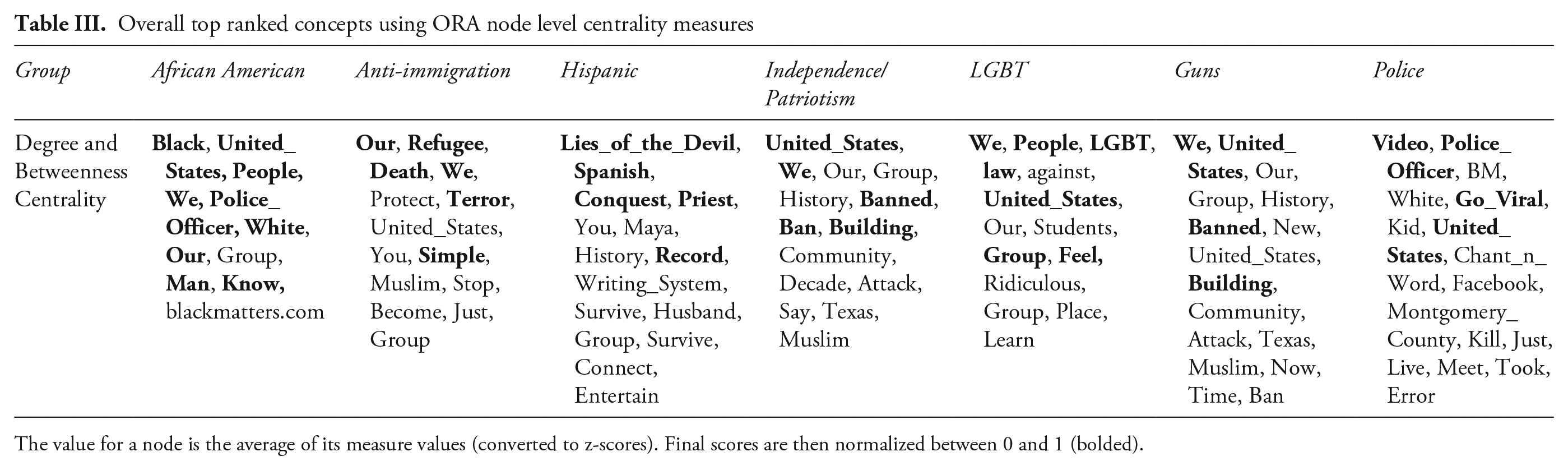

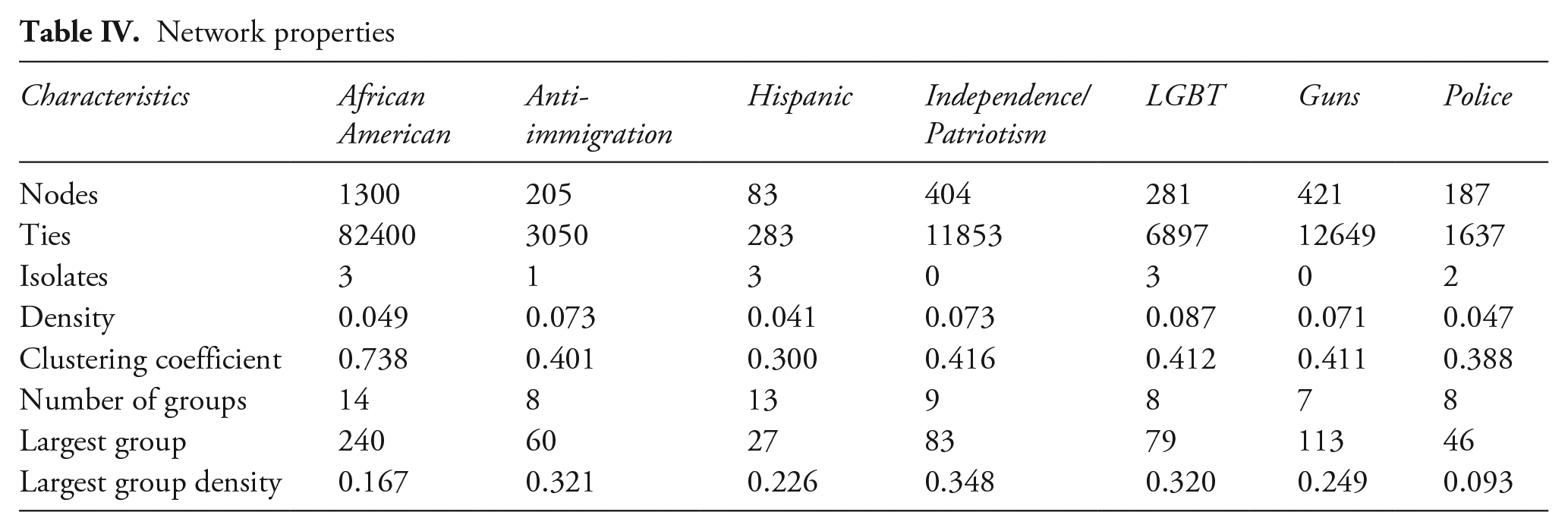

Tables III and IV represent the top ranked concepts, and general semantic network properties of the seven largest textual networks. 14 The results confirm expectations that specific marginalized groups were targeted; targeting was based on social identity or political, economic, and social issue support (Table III). Results also appear to show that the messaging is incendiary and diverse across groups. Additional textual analysis was employed to examine the most salient concepts used in group targeting (Table III). Bolded words indicate concepts with high in-degree and betweenness measures, identifying nodes that act as hubs to connect different thematic communities (Shim et al., 2015). Use of these words in ads across groups supports the expectation that CEIOs seek to achieve influence through the IIA mechanism by imitating organic conversations and posing as in-group members, while potentially obscuring the identity and intent of the authentic creators of the message (i.e. Russia). This strategy might be used to increase the influence of given messaging. Table III also shows some overlap in key words between the African American target group and Police target group. 15 For example, results show that words such as ‘police_officer’ feature prominently in the ads targeting both groups, as well as references to blackness. 16 This finding confirms the expectation of inflammatory topics in CEIOs.

Overall top ranked concepts using ORA node level centrality measures

The value for a node is the average of its measure values (converted to z-scores). Final scores are then normalized between 0 and 1 (bolded).
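The scoring rule in this note can be illustrated with a short sketch. It assumes a population standard deviation for the z-scores and min-max rescaling for the final normalization; ORA's exact choices are not stated in the text, and the concept names and raw values below are invented for the example.

```python
from statistics import mean, pstdev


def zscores(values):
    """Convert a {node: raw value} mapping to z-scores (population standard deviation)."""
    mu = mean(values.values())
    sigma = pstdev(values.values())
    if sigma == 0:
        return {node: 0.0 for node in values}
    return {node: (v - mu) / sigma for node, v in values.items()}


def composite_scores(measures):
    """Average each node's z-scores across measures, then rescale the averages to [0, 1]."""
    z_by_measure = [zscores(vals) for vals in measures.values()]
    nodes = list(z_by_measure[0])
    avg = {n: mean(z[n] for z in z_by_measure) for n in nodes}
    lo, hi = min(avg.values()), max(avg.values())
    if hi == lo:
        return {n: 0.0 for n in nodes}
    return {n: (a - lo) / (hi - lo) for n, a in avg.items()}


# Invented raw centrality values for three concepts (not taken from Table III).
measures = {
    "in_degree": {"police_officers": 5, "black": 3, "join": 1},
    "betweenness": {"police_officers": 0.6, "black": 0.3, "join": 0.0},
}
scores = composite_scores(measures)
print(sorted(scores, key=scores.get, reverse=True))
```

Converting to z-scores before averaging keeps a measure with a large raw range, such as in-degree, from dominating one with a small range, such as betweenness.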

Network properties

As expected, the African American group is the largest overall in terms of network size (most nodes, Table IV). Network size varies across targeted groups, with the Hispanic group as the smallest. In terms of structural network properties, there is variation by network density and clustering. Network density refers to the number of connections actually present in a network divided by the total number of possible connections. Network density is helpful for understanding the diversity of concepts in a network; networks that are rich in diversity of concepts have lower densities (Pereira et al., 2016). When it comes to network density, the African American, Hispanic, and Police groups are similar, although density is higher in the Anti-Immigration, US Independence/Patriotism, LGBT, and Guns groups, suggesting that these groups address a smaller diversity of topics. Clusters refer to what surrounds a node, as a basic measure of local density in a network. They show how well-connected groups of nodes are (Drieger, 2013). The clustering coefficient is calculated as the number of edges (links) connecting a node’s neighbors, divided by the total number of possible links among those neighbors. The coefficient can take on values between 0 and 1. Higher numbers indicate more connections in a node’s neighborhood. There is limited variation in the clustering in groups other than African Americans, which has a clustering coefficient of 0.79, suggesting strong connections between components that encode specific semantic topics or concepts (Drieger, 2013).
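To make the two structural measures concrete, the following sketch computes density and clustering for a small undirected network stored as an adjacency mapping. The four-concept toy network is invented, and averaging the local coefficients to obtain a network-level value is an assumption; ORA may aggregate differently.

```python
from itertools import combinations


def density(adj):
    """Share of possible undirected links that are actually present."""
    n = len(adj)
    if n < 2:
        return 0.0
    m = sum(len(neighbors) for neighbors in adj.values()) / 2  # each link is stored twice
    return 2 * m / (n * (n - 1))


def local_clustering(adj, node):
    """Links among a node's neighbors divided by the possible links among them."""
    neighbors = adj[node]
    k = len(neighbors)
    if k < 2:
        return 0.0
    links = sum(1 for u, v in combinations(neighbors, 2) if v in adj[u])
    return links / (k * (k - 1) / 2)


def average_clustering(adj):
    """Mean of the local clustering coefficients over all nodes."""
    return sum(local_clustering(adj, node) for node in adj) / len(adj)


# Invented four-concept network; names are illustrative only.
adj = {
    "police_officers": {"black", "justice", "join"},
    "black": {"police_officers", "justice"},
    "justice": {"police_officers", "black"},
    "join": {"police_officers"},
}
print(round(density(adj), 3), round(average_clustering(adj), 3))
```

In this toy network, four of the six possible links are present, and only one of the three possible links among the neighbors of ‘police_officers’ exists, so that node's local coefficient is low even though it is the best-connected node.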

Based on these results, it appears that African Americans were considered an especially important target group for the Russian CEIO. The cyber-enabled influence operation against African Americans included a larger number of diverse topics as well as stronger connections between the topics than other target groups. This focus on African Americans suggests the group was perceived as especially salient, and vulnerable. In terms of topics, race, justice, and police were featured in this group. Marxist-inspired Soviet ideology regarded race and class as key societal cleavages. The USSR emphasized race as a theme in influence operations, with numerous documented campaigns since the 1950s (Shultz and Godson, 1984).

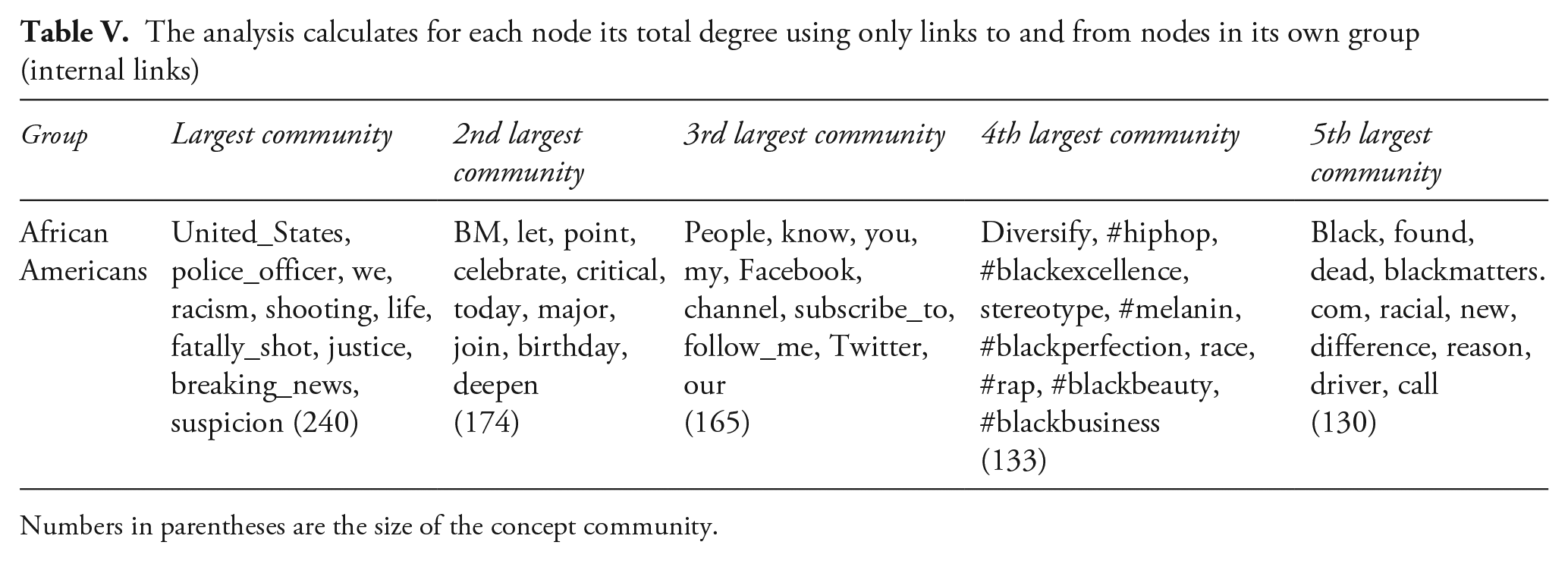

Table V lists the largest subgroups of concept networks within the African American target group, based on internal degree – the number of links a node has in a particular network. The largest single community of concepts features police brutality and racism as themes. The second largest thematic group focuses on celebrating blackness, while the third largest community is focused on mobilizing audiences to participate in advertised channels. The fourth largest group is focused on black culture cast in a positive light, while the fifth largest community of concepts centers around themes of race and brutality.

The analysis calculates for each node its total degree using only links to and from nodes in its own group (internal links)

Numbers in parentheses are the size of the concept community.
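The internal-degree calculation described in the note above can be sketched as follows. The edge list and the assignment of concepts to communities are invented for illustration, and links are treated as undirected; the function name `internal_degree` is likewise hypothetical.

```python
def internal_degree(edges, group_of):
    """For each node, count only the links whose other endpoint is in the same group."""
    degree = {node: 0 for node in group_of}
    for u, v in edges:
        if group_of[u] == group_of[v]:  # links that cross groups are ignored
            degree[u] += 1
            degree[v] += 1
    return degree


# Invented concept links and community labels (not the study's data).
edges = [
    ("police_officers", "brutality"),
    ("police_officers", "racism"),
    ("brutality", "racism"),
    ("racism", "black_pride"),  # crosses communities, so it is not counted
    ("black_pride", "beautiful"),
]
group_of = {
    "police_officers": 1,
    "brutality": 1,
    "racism": 1,
    "black_pride": 2,
    "beautiful": 2,
}
print(internal_degree(edges, group_of))
```

Here ‘racism’ has three links in total but an internal degree of two, because its link to ‘black_pride’ leaves its own community.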

Limitations

There are (at least) two types of limitations in our analysis – relating to the dataset used, and to semantic network analysis. We address each in turn. First, we do not examine all of the Facebook ads that were released by the US House of Representatives Permanent Select Committee on Intelligence. Including the full dataset might facilitate new types of inquiry, for example examining changes in the content of the Facebook campaign over time. Second, some of the released ads were redacted, which could potentially have affected the analysis. 17 Third, this article is concerned with, and analyzes, only the cyber-enabled influence campaign on Facebook. Russian operations surrounding the 2016 US Presidential elections were broader than the Facebook campaign, including activity on other social networking platforms.

Semantic network analysis also has limitations as an approach to textual analysis. First, coding affects the analysis, and for that reason, validation can be difficult (Diesner, 2012). The preprocessing techniques that are chosen and applied affect network construction. A researcher must make multiple coding choices that can alter results (Carley, 1993; Diesner, 2012). Consider the following example: it is often recommended that non-meaning-bearing words be deleted. So, in other studies, words such as ‘like, we, our’ are often removed. However, for the purposes of this study, these words remained in the dataset because usage of ‘we’ and ‘our’ reflects imitation of organic discourse. The word ‘like’ can represent an invitation to mobilize via social media liking functions. Theory thus dictated that we retain these words in our analysis. Similarly, words like ‘cops’ and ‘police’ were coded as ‘police_officers’ to assess how often certain themes were used. The meaning of words should remain as objective as possible (Shim et al., 2015). Had the interest of this project been to offer a sentiment analysis, words such as ‘cops’ should perhaps be coded as ‘cops’ because this might be a sentiment-bearing word. For our purposes, however, a different coding was deemed more appropriate. Finally, ads were coded into target groups based on listed interests, which produced groups that appear similar but are distinct, such as ‘Anti-Immigration’ and ‘US Independence/Patriotism,’ or ‘Anti-Police’ and ‘Police Brutality.’

Conclusion

Despite widespread interest in the impact of Russian interference in the 2016 US federal election, it is nearly impossible to demonstrate an actual electoral effect. 18 We cannot identify individuals exposed to the Russian campaign 19 or whether they voted, and for whom. It is far easier, however, to infer the goals of Russian operations, their strategy, and the mechanisms they utilized in seeking to sway US voters.

While often conceptualized as disinformation, our analysis shows that the Russian CEIO did not attempt to introduce factually incorrect information into the public discourse (a ‘big lie’). Instead, the Russian CEIO appears to have been designed to shape the focus of certain US audiences by emphasizing certain genuine (recurring) themes. Our findings suggest that in terms of scope, the Russian CEIO was aimed at a diverse group of targets and included issues such as race, immigration, gun rights, nationalism, LGBT rights, and police brutality. In terms of the scale of the CEIO, most ads were targeting African Americans and race-related topics, while other possible targets received substantially less exposure.

Why did Russian operators disproportionately target African Americans? Racial tensions in the United States are an attractive – and resilient – target for foreign agitation. Well-documented systemic inequalities have historically disadvantaged African Americans. By targeting existing racial cleavages and emphasizing the disenfranchisement of minority groups, a foreign actor can build on recurring narratives and shift attention away from other political issues. However, in our view, this is simply the mechanism by which CEIOs work, and does not explain the focus on African Americans. It was possible to target any other group in the United States with the same intensity, and yet under-represented minority groups were chosen as a prime target. There are several possible explanations to contextualize these results.

The first possibility is that interference in the 2016 election was simply a recent manifestation of a venerable playbook of active measures, dating back to the Soviet era (Jonsson, 2019; Rid, 2020; Walton, 2022). Standard Operating Procedures (SOPs) drive bureaucratic activities, a phenomenon that is well understood in international relations (Allison, 1971). The matrix of contemporary Russian intelligence agencies (in particular the SVR and/or the FSB) may simply have chosen to continue the formulaic application of earlier KGB statecraft, tactics that are heavily oriented toward exploitation of racial tensions and ‘class struggle.’

A second explanation, also reflected in early analyses of Russian CEIO, is that Russian operators simply utilized brute force, ‘throwing stuff at a wall to see what sticks.’ Similar to their efforts to target domestic audiences inside Russia (Kerr, 2019), experimentation could travel abroad (Lin and Kerr, 2017). CEIOs can be applied to a broad array of goals and targets, including efforts to undermine credibility and trust, sow confusion and uncertainty, support extremism and polarization, etc. The focus on African Americans might then just have been coincidence. We are skeptical of this view, as the most obvious strategy in this case would be to (also) conduct explicit positive messaging favoring the Trump campaign.

A third view is that the Russian focus on the African American community was triggered by contemporary events, in particular the Black Lives Matter (BLM) movement (Etudo et al., 2023). While plausible, the temporal nature of BLM, and its role in the 2016 election, implies that Russian operatives would need to mobilize quickly and largely only after the triggering event. However, the very persistence of African American mistreatment, and resentment, as a focus of Russian/Soviet information operations indicates more continuity than this argument suggests. While it may have reconfirmed their efforts, we believe that Russian operators had determined to focus on African Americans as targets prior to BLM.

Finally, there are major parallels between the CEIO run by Russian authorities and the electoral strategy of the Donald Trump campaign. As we now know, Paul Manafort, Donald Trump’s campaign manager, supplied Russian operatives with polling data and the campaign’s electoral strategy documents (Ladden-Hall, 2022; Tucker, 2021). Manafort pleaded guilty to this in 2022 in a US Federal court (Rutenberg, 2022). Suspicion of collusion between the Trump campaign and Russian operatives emerged early on and was documented by the Robert Mueller investigation (United States Department of Justice, 2019: vols I and II). For the purposes of this project, the missing piece of the puzzle was why the Russian CEIO chose to focus on discouraging African American voters, given other available options (e.g. raising Republican turnout). Information that Trump planned to pursue a negative campaign against the presumptive Democratic candidate, Hillary Clinton, evidence that the campaign supplied their strategy and the reasons for it to Russian agents, and a peculiar correlation between the type and topics employed by both entities all seem to form a coherent pattern and story of indirect collusion. Timing again is critical; the Russian CEIO may have arrived at these tactics independently from the Trump campaign or used the Manafort information to develop or refine their efforts. While one can never know for sure, we plan to investigate further, using time-series data to track the progression of the Russian CEIO.

More broadly, we argue that our collective understanding of CEIOs should be expanded beyond disinformation operations. As the analysis here reveals, the information utilized in the 2016 Russian cyber-enabled influence campaign on Facebook was generally factually correct. This contradicts the common misconception that CEIOs disseminate or amplify ‘fake news.’ Such campaigns tend to stimulate debate about issues that emerge organically from within domestic audiences, rather than as a (pure) result of intervention.

The more general, organic, issue is influence; manipulation often occurs even in the presence of statements that are factually correct. Directing the focus toward particular truths privileges certain issues, shapes debates and prejudices the formation or ascent of specific groups or coalitions. In short, CEIOs determine winners and losers. Would some issues, if not amplified, take up as much space in the public consciousness? Would the same factions and cleavages form without the CEIO? It is difficult to tell.

CEIOs demonstrate the centrality of the human dimension of cybersecurity. As our evidence shows, individuals were micro-targeted by influence operations in the context of the presidential election in the United States. Indeed, instead of hacking networked systems, cyber capabilities can also be used to ‘hack human minds,’ and have the potential to undermine the integrity of the electoral process. The case we examine in this manuscript is not isolated – increasingly, state actors are using cyber-enabled influence operations to sway domestic public opinion.

As nations struggle with the meaning, or even the presence, of thresholds of warfare in an era where new technologies are blurring better-established boundaries, scholars have an opportunity (perhaps even a responsibility) to investigate. CEIOs are an especially attractive area in which to focus collective research. They are apparent, measurable, systematic, and designed and authorized at the highest levels of government. Yet, they are also subtle and durable; their effects may linger and even merge with other phenomena, perceptions and events. CEIOs are not ‘flashy,’ but for this very reason they may represent the state and nature of cyber warfare more accurately than elusive (thankfully) ‘cyber Pearl Harbors.’

Our efforts here have only scratched the surface. Our goal was to provide an ‘existence proof,’ suggesting that cumulative research into the great bulk of low-intensity cyber conflict may prove fruitful. Even this initial foray has proven provocative. We hope our study suggests that more can indeed be done.