Abstract

Most cyber intrusions are a form of intelligence rather than warfare, but intelligence remains undertheorized in international relations (IR). This article develops a theory of intelligence performance at the operational level, which is where technology is most likely to affect broader political and military outcomes. It uses the pragmatic method of abduction to bootstrap general theory from the historical case of Bletchley Park in World War II. This critical case of computationally enabled signals intelligence anticipates important later developments in cybersecurity. Bletchley Park was uncommonly successful due to four conditions drawn from contemporary practice of cryptography: radio networks provided connectivity; German targets created vulnerability; Britain invested in bureaucratic organization; and British personnel exercised discretion. The method of abduction is used to ground these particular conditions in IR theory, revisit the evaluation of the case, and consider historical disanalogies. The result is a more generalizable theory that can be applied to modern cybersecurity as well as traditional espionage. The overarching theme is that intelligence performance in any era depends on institutional context more than technological sophistication. The political distinctiveness of intelligence practice, in contrast to war or coercive diplomacy, is deceptive competition between rival institutions in a cooperatively constituted institutional environment. Because cyberspace is highly institutionalized, furthermore, intelligence contests become pervasive in cyberspace.

Introduction

Most threat activity in cyberspace is more like intelligence than warfare (Lindsay, 2021; Rid, 2012; Rovner, 2019). But what is intelligence? The field of international relations (IR) has long ignored the dark arts of politics (Andrew, 2004). But this is changing. Just as espionage and subversion are becoming more prevalent in cyberspace (Gioe et al., 2020), IR scholars are becoming more interested in intelligence and covert action (Carnegie, 2021).

Still, the intelligence framing of cybersecurity is contested (Chesney and Smeets, 2023). The technical methods and global scale of cyber campaigns contrast with the boutique tradecraft of traditional covert operations (Warner, 2019). We now see military cyber units and nonstate actors, not just spy services, conducting strategic operations below the threshold of armed conflict (Fischerkeller et al., 2022). And commercial actors play an outsized role in cybersecurity (Egloff, 2021). But these disanalogies just beg the question of intelligence. Modern cyber operations are more digitized, supersized and civilianized, to be sure, but these may just be differences in degree with traditional espionage, not in kind. A good political theory of intelligence should be able to explain both continuity and change.

This article develops a general theory of intelligence performance that explains the common political features of espionage in any era. Intelligence performance here refers to the operational ability to conduct espionage operations and steal secrets. This is distinct from decisions at the political level to act on secrets, much as a military’s ability to win battles is distinct from a state’s ability to win wars. But an intelligence agency that cannot steal secrets is less likely to provide a decision advantage (Sims, 2022), just as a military that fails on the battlefield is less likely to contribute to victory. I argue that four institutional factors are necessary for sustained intelligence performance: connectivity; vulnerability; organization; and discretion. These conditions link the institutions of the attacker to the institutions of the target through a shared institutional environment, which enables persistent deception and subversion. There are good reasons to believe that these same conditions matter for cybersecurity, too. More institutionalized information systems enable more intelligence operations.

I make three contributions in this article. First, I ground cybersecurity in intelligence history through a case study of Bletchley Park (BP), the famous British codebreaking organization in World War II. BP pioneered a digitized, supersized and civilianized style of intelligence, which makes it a critical case in the prehistory of cybersecurity. Historians also agree that BP was uncommonly successful in the annals of intelligence (Ferris, 2020; Grey, 2012; Kenyon, 2019; Ratcliff, 2006), which means that any necessary conditions for successful operations should be present in this case. Indeed, rich empirical evidence shows how BP met all four hard-to-meet conditions, while more modern cases remain shrouded in secrecy. Linking cybersecurity to intelligence history expands the universe of cases that scholars can study to understand cyber conflict.

Second, I explain the ingredients of intelligence success in any era. There is a body of literature on intelligence failure (Dahl, 2013; Jervis, 2011; Rovner, 2011; Stein, 1980), which emphasizes unit-level bureaucratic politics and cognitive biases. But there is less work on intelligence success (Cormac et al., 2022; Lindsay, 2020a), which depends on system-level relationships between intelligence adversaries. The literature on military effectiveness (Biddle, 2004; Brooks and Stanley, 2007; Talmadge, 2015) offers relevant insights, namely on the importance of institutions over the quality or quantity of weapons. But intelligence effectiveness depends further on institutional resources shared across intelligence adversaries, not just on the organizational institutions of combatants. Deception, importantly, has a different strategic logic than war or coercion (Gartzke and Lindsay, 2015; Lindsay, 2017; Maschmeyer, 2022). While warriors fight their enemies, and diplomats make open threats, spies subvert trusting cultures, and spyware subverts trusted systems.

Third, this article showcases the method of abduction. I leverage the empirical details of BP to bootstrap a general theory of intelligence performance, not simply to illustrate it. Abduction (Fann, 1970), which is also known as grounded theory (Glaser and Strauss, 1967) or retroduction (Downward and Mearman, 2007), offers a middle way between empirical induction and logical deduction. This method turns the theme of the introduction to this special issue, ‘Moving from Speculation to Investigation,’ on its head by showing how an investigation of rich qualitative data can move to generalized theoretical conjecture. Abduction is particularly relevant for emerging scientific domains with ambiguous concepts and imperfect data, which describes the study of cybersecurity well.

I proceed in six steps. I start with the conjecture that cyber conflict is an intelligence contest and select a critical case of intelligence success. I next explore the historical circumstances of BP that enabled its success. I generalize these specific conditions into a general theory by linking them deductively to classic IR concepts. I then return to the case to recursively apply the refined theory to new details of the case to improve confidence in this explanation. I conclude by considering disanalogies between cryptology and cybersecurity as well as implications for further research.

The first cyber campaign

Science is a social process. Abduction thus emphasizes the reflexive role of the researcher in the scientific process. Abduction is most associated with qualitative research and the ‘pragmatic turn’ in constructivist IR (Franke and Weber, 2012; Friedrichs and Kratochwil, 2009), but it simply makes explicit the implicit theory building process in any scientific domain. In this article I strive to make visible the empirical scaffolding of theory construction.

Every researcher comes to a domain with background assumptions, which provide the starting point for theoretical conjecture. In my case, I have been following the IR cybersecurity debate for several years, which has made me sceptical about ‘cyberwar’ and other military analogies (Branch, 2021; Dunn Cavelty, 2008; Gartzke, 2013; Hansen and Nissenbaum, 2009; Lonergan and Lonergan, 2023). I prefer an alternate interpretation of cyber conflict as an intelligence contest (Chesney and Smeets, 2023; Gartzke and Lindsay, 2015; Rovner, 2019). This implies that to explain cyber performance, I first need to come up with a general theory of intelligence performance.

Many claims about cybersecurity focus on the impact of technology on the speed, scope and scale of espionage and subversion. Technology is believed to advantage offence over defence, increase the speed and scale of operations, and lower barriers to entry. Critics of these claims highlight institutional factors that condition or blunt the effects of technology (Maschmeyer, 2021; Slayton, 2017; Smeets, 2022). Thus, I will focus on intelligence performance at the operational level (i.e. stealing secrets rather than acting on them), which is where the technology of communication, collection and analysis should be most relevant, unless, that is, institutional conditions are even more important. The operational level of intelligence, furthermore, has important implications for the strategic impact of intelligence by any means. If we believe that intelligence matters in international politics, for improving (Early and Gartzke, 2021) or degrading (Matovski, 2020) strategic stability or other important outcomes, then it is vital to understand the determinants of intelligence performance.

The method of abduction begins by selecting a ‘most important’ or ‘most typical’ case that exemplifies the thing we hope to explain (Friedrichs and Kratochwil, 2009: 718; Gerring, 2004). This advice flies in the face of the statistical admonishment to avoid selecting on the dependent variable. But for theory construction, as distinct from testing, it is helpful to find a clear and indisputable instance of our dependent variable – operational intelligence success – so that we can discover its potential causes. We do not yet know why intelligence is successful, after all, and we might be surprised at what we find. We may further discover that our case is not perfectly representative, but the later recursive phase of abduction is explicitly intended to discover inconsistencies and contradictions.

The British Government Code & Cypher School (GC&CS), headquartered at BP, is an attractive case here. GC&CS is rightly celebrated as one of the most successful intelligence organizations of all time, breaking and reading German communications in bulk throughout World War II. GC&CS also anticipates important features of cybersecurity: ubiquitous computing technology; large-scale clandestine operations; and civilians in prominent roles. Much as cyber actors today work through digital devices, the intelligence contest between Allies and Axis was mediated by computing machines (e.g. Bombe versus Enigma and Colossus versus Lorenz). GC&CS pioneered the mathematization and mechanization of cryptography, attacking the enemy’s entire cryptosystem through a dramatic increase in the scale of decryption. This innovation was spearheaded by civilian academics, telecommunications engineers and corporate managers. They pioneered new analytical and administrative methods by borrowing best practices from industry and academia, and they invented electromechanical and electronic machines that set important precedents in computing history (Haigh and Priestley, 2020). Indeed, the very notion of ‘cybernetics’ owes an important debt to wartime cryptography (Kline, 2015). It is not much of an exaggeration to describe BP as ‘the first cyber campaign.’

I come to this case with many pre-existing ideas about the political nature of intelligence, and of cybersecurity as a lesser included case of it. The common theme is the use of deception to subvert cooperative institutions for competitive advantage (Gartzke and Lindsay, 2015; Lindsay, 2017, 2021; Maschmeyer, 2022). Deception depends on the willing but unwitting cooperation of the adversary. As Howard (1990: 5: 52) writes, ‘Deception can never be effective either in love or in war, unless there is a certain willingness to be deceived, and the London Controlling Section could never have succeeded as well as it did had it not possessed, at the highest level of the German Command, its unconscious sympathizers.’ In cybersecurity, likewise, complacent developers and gullible users inadvertently open doors for hackers. I thus expect to find that institutional cooperation enables intelligence conflict.

Here, too, BP is an attractive case. Howard (1990: 5: x) argues that Great Britain ‘enjoyed two extraordinary, possibly unique, advantages; advantages so extraordinary that one would be rash to assume that we or indeed anyone else could ever possess them in comparable measure again.’ He refers to the systematic decryption of German communications and the British ability to hide this feat. This is a contest of deception: German encryption disguised signals as noise, while British operational security (OPSEC) disguised clandestine decryption. Howard suggests that the lopsided outcome of this contest is difficult to achieve in general, which might explain why so many cyber campaigns appear messy and inconclusive by comparison. One could not ask for a better opportunity to theorize about cybersecurity.

The social life of cryptology

The next step in abduction is to jump into the empirical mess (Law, 2004). There is a vast historiography on BP, and almost everything relevant has been declassified (Ferris, 2020). This is another reason to like BP as an abductive base for theorizing about cybersecurity. BP illuminates the critical organizational factors that shape intelligence practice. Modern cyber campaigns are shrouded in secrecy, by contrast, so we know more about observable intrusions than hidden intruders.

My guiding theoretical assumption that institutional cooperation enables intelligence competition now leads me to ask: what are the social conditions that enabled successful cryptological operations at BP? A focus on social conditions is more likely to reveal enduring factors that will remain relevant for cybersecurity, since human behaviour changes more slowly than computational technology. Yet, the social life of BP was deeply ‘sociotechnical’ (Suchman, 2007) – cultural and material processes were thoroughly entangled.

Technical work in cryptology at BP provides a starting point for analysing intelligence performance. The concept of cryptology refers to the contest between codemakers and codebreakers, or cryptographical defence and cryptanalytical offence (Kahn, 1996: 737–762). The broader concept of signals intelligence (SIGINT), which was first coined at GC&CS (Hinsley et al., 1979: 21n), encompasses not only cryptanalytical attacks on enemy traffic and cryptographical protection of decrypts but also intercepting traffic and analysing intelligence.

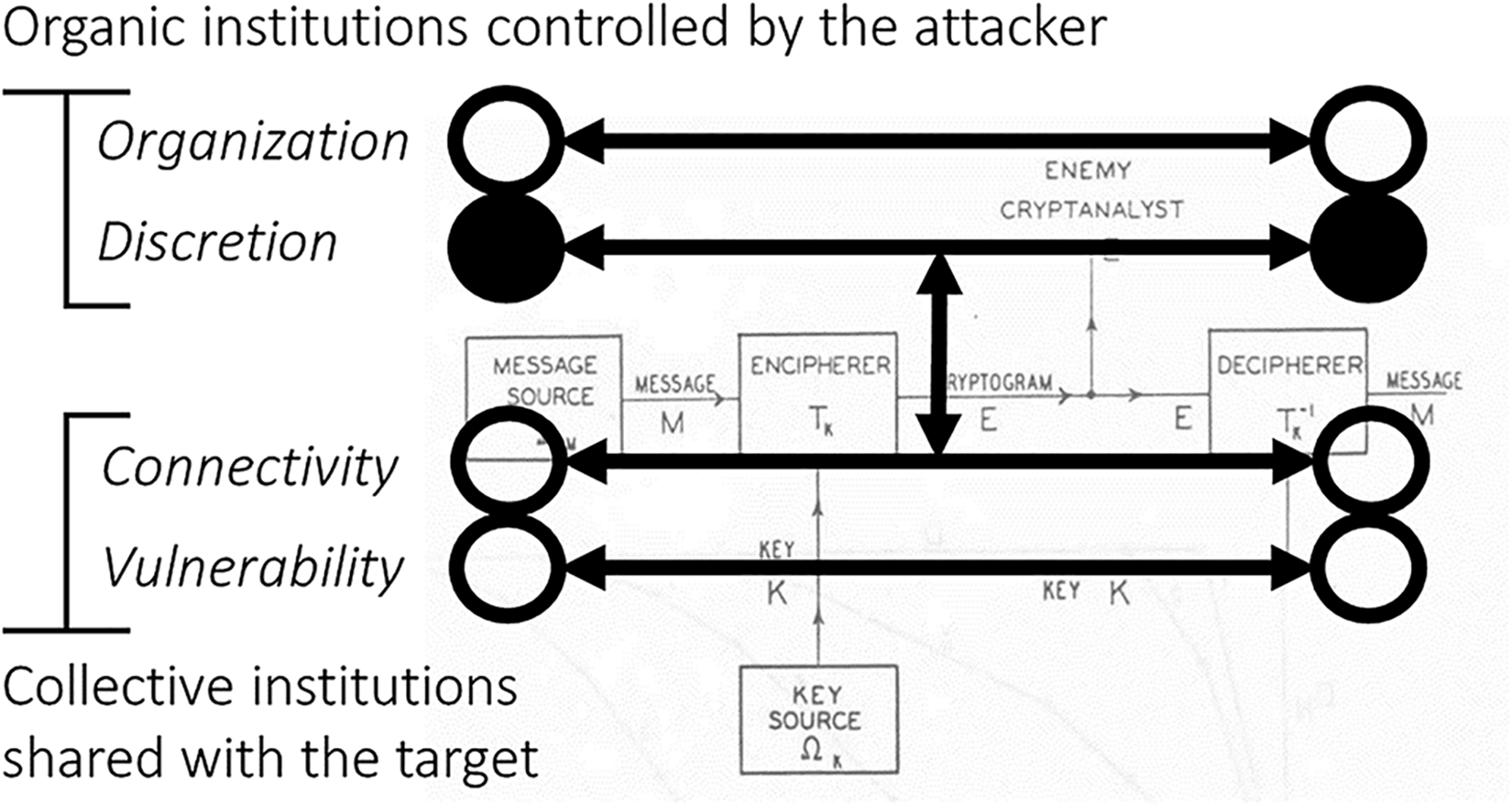

There are three basic social roles in the world of cryptology, which modern cryptographers describe as Alice, Bob and Eve. Alice sends an encrypted message that Bob can decrypt, while eavesdropping Eve uses cryptanalysis to break their cryptography. These roles cooperate and compete in different ways, as we shall see. Even as Axis and Allied forces fought viciously on the battlefield, German Alice and Bob offered up vulnerabilities, cooperatively yet inadvertently, that British Eve could quietly exploit.

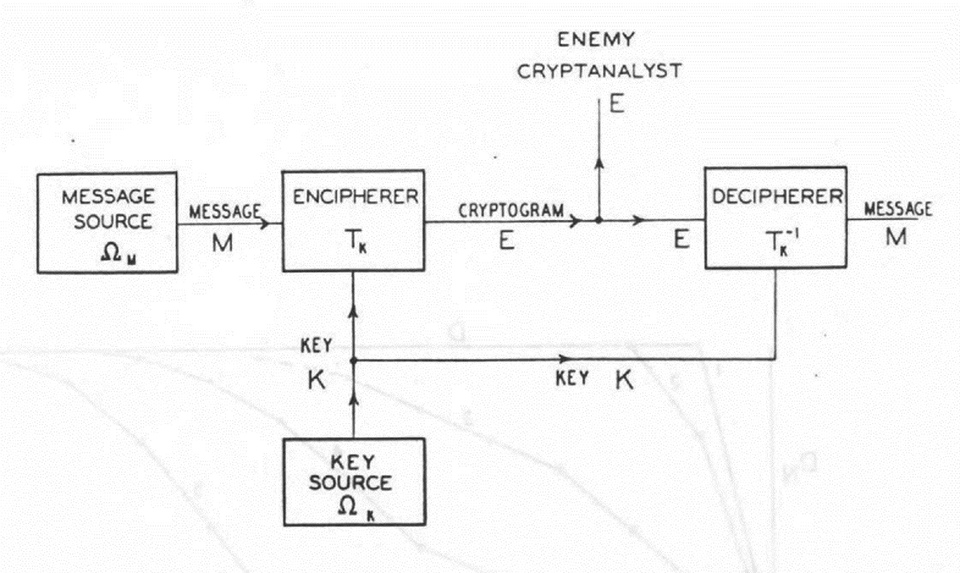

Figure 1 depicts the basic elements of a symmetrical cryptosystem such as the German Enigma, which used the same key to encipher and decipher.


Figure 1 is reproduced from a classified Bell Labs report by Claude Shannon (1945), a contemporary working on similar problems as GC&CS across the pond. Shannon and Alan Turing, BP’s star cryptographer, each made seminal contributions to computer science – yet another foreshadowing of cybersecurity. In Shannon’s model, the ‘Encipherer’ (Alice) and ‘Decipherer’ (Bob) communicate

Figure 1. Shannon’s model of cryptography (Shannon, 1945)

Figure 2. Shannon’s model of communication (Shannon, 1948)

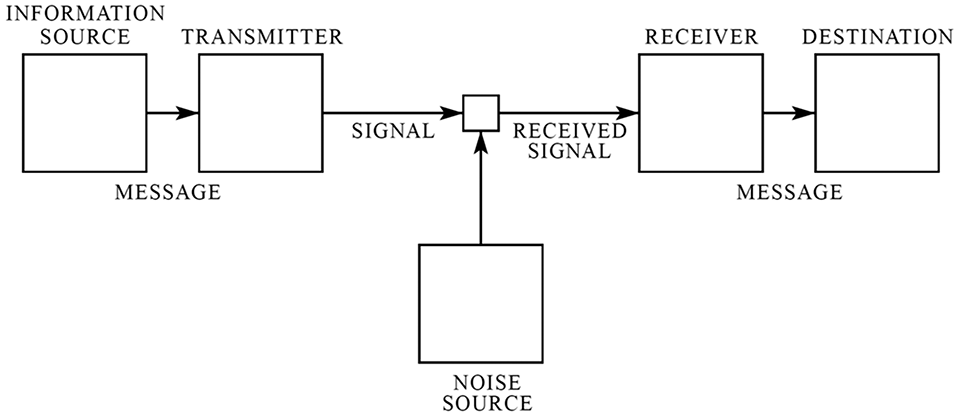

A key point here is that the same principles that enable communication also enable deception. This can be appreciated by comparing Figure 1 with Figure 2, which is reproduced from a seminal paper by Shannon (1948) on information theory. In Figure 1, the encipherer (Alice) encodes a signal to look like noise, which increases uncertainty (entropy) for the cryptanalyst (Eve), while in Figure 2, the transmitter (Alice) uses redundant encoding to offset a noisy channel, which reduces entropy for the receiver (Bob). The only difference is that there are two channels in Figure 1, one public (Alice–Eve) and the other private (Alice–Bob), which makes it possible to create noise for one of the receivers (Eve) and a signal for the other (Bob). But the underlying mathematics of cryptography and communication are the same. This point cannot be emphasized strongly enough. Already in the contemporary logic of cryptography at BP, we have the essential political paradox of communication enabling deception.
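The entropy contrast can be made concrete with a small sketch. The Python below is illustrative only and is not drawn from Shannon’s reports or from BP practice: the helper name `shannon_entropy`, the sample message, and the use of a random pad as a stand-in for an ideal cipher are all assumptions made for the example.

```python
import math
import secrets
from collections import Counter


def shannon_entropy(data: bytes) -> float:
    """Empirical Shannon entropy of the byte distribution, in bits per byte."""
    if not data:
        return 0.0
    counts = Counter(data)
    total = len(data)
    return -sum((n / total) * math.log2(n / total) for n in counts.values())


# Hypothetical plaintext: natural language is redundant, so its entropy is low.
plaintext = b"ATTACK AT DAWN " * 200

# Assumed stand-in for an ideal cipher: XOR with a random pad of equal length.
pad = secrets.token_bytes(len(plaintext))
ciphertext = bytes(p ^ k for p, k in zip(plaintext, pad))

# Bob holds the pad (the private channel), so he recovers the signal exactly.
recovered = bytes(c ^ k for c, k in zip(ciphertext, pad))

print(f"plaintext entropy:  {shannon_entropy(plaintext):.2f} bits/byte")
print(f"ciphertext entropy: {shannon_entropy(ciphertext):.2f} bits/byte")
```

What Eve sees on the public channel looks close to uniform noise, while Bob, who shares the pad, sees the original signal. The same operation produces both views, which is the paradox the paragraph above describes.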

Figure 3. Two remote actors establish a common connection to facilitate collective action

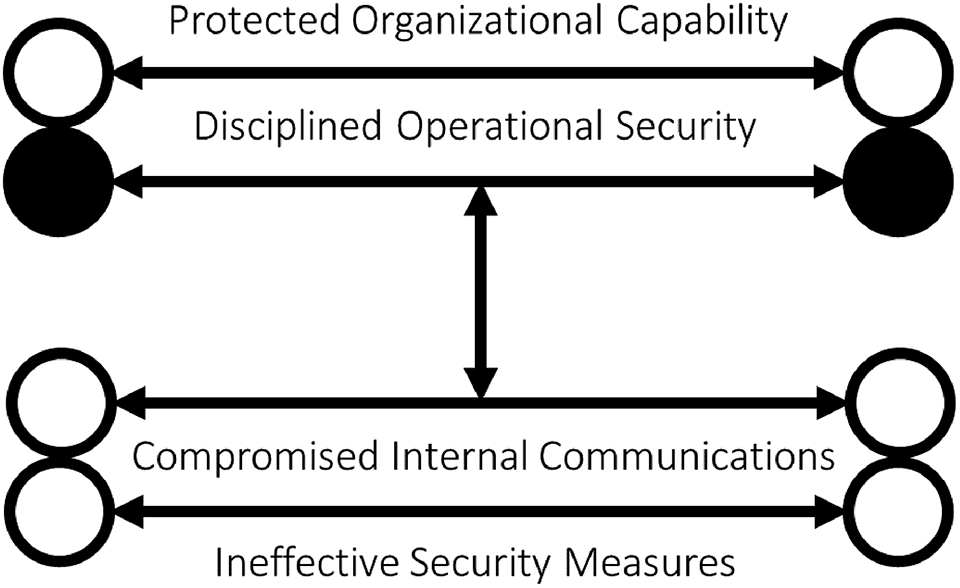

I will next break down the political logic of Shannon’s model and the implementation of it at BP. I will use a series of figures to highlight different relationships of cooperation and conflict implicit in this implementation, which in practice adds necessary elements that are not explicitly represented in Figure 1. The net result is four basic conditions that enabled GC&CS to be successful:

1. Institutionalized connectivity via accessible communication systems.
2. Inadvertent vulnerability in the target’s sociotechnical cryptosystems.
3. Managerial organization to support exploitation in an uncertain environment.
4. Deliberate discretion to protect sensitive sources, methods and operations.

Condition #1: Connectivity

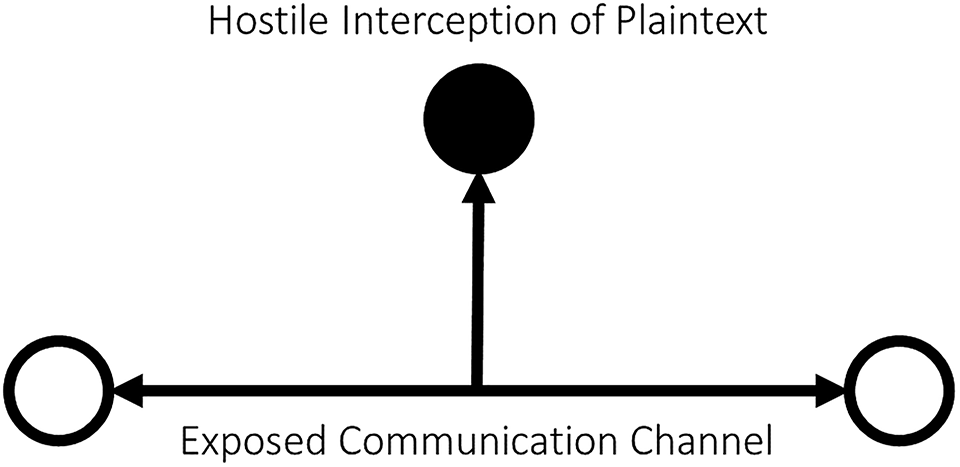

The fundamental condition for the possibility of intelligence exploitation is the existence of an information system. If there is no communication, there is nothing to steal. Figure 3 thus depicts mutual connectivity between friendly communicators that enables them to coordinate their activities. The endpoints are depicted as transparent to each actor, making them fully informed about each other. There is no eavesdropper to worry about. The connectivity condition describes the normal, cooperative aspect of an information system (i.e. Figure 2).

Geographically dispersed forces on the move are unable to communicate continuously via fixed landlines. World War I demonstrated that reliance on prior plans was insufficient for coordination in a fluid battle. Radio offered a solution. Germany relied on radio to integrate air, armour and infantry units into mobile offensives, that is, blitzkrieg. Radio also enabled the Kriegsmarine to direct U-boats to intercept Allied convoys in the vast Atlantic. To establish and maintain this connectivity, German forces needed compatible radios, a common language and shared communication doctrine (Beyerchen, 1996). German units cooperated with each other to establish connectivity because they had a mutual interest in coordinating military activity.

Figure 4. Offence (collector) has the advantage against the defence’s unsecured communications

In political economic terms, the German military created institutional ‘rules of the game’ (North, 1990) for constraining interaction and reducing the transaction costs of collective action. The connectivity in Figure 3 is implicitly an institutionally constrained channel that enables German communicators to coordinate organizational activity. Radio traffic, furthermore, has the properties of a ‘public good’ (Ostrom, 1990), because anyone can passively listen to it and listening does not diminish the signal: radio is nonexcludable and nonrival. These features helped the German military to solve the collective action problems of mobile offensive operations at scale.

But these same features invite enemy eavesdropping. High-power long-distance radio waves propagate in all directions at the speed of light. Long before cyberspace connected the world, radio created a common domain that exposed internal communications to a remote intelligence adversary. Figure 4 illustrates this problem. The endpoints (German Alice and Bob) are now visible to a passive midpoint collector (British Eve). The collector’s endpoint is opaque because it sends no traffic and only receives, effectively hidden in the noise of the channel, while the communicators are transparent to both themselves and the enemy.

Condition #2: Vulnerability

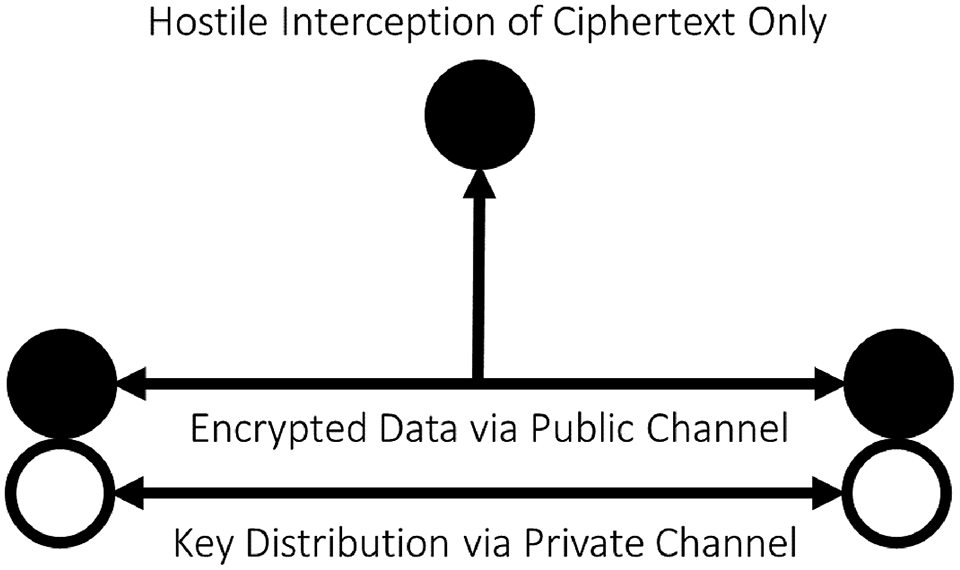

This intelligence threat and opportunity was obvious to all actors by World War I, if not before. Thus, all combatants took steps to secure their communications for World War II. Figure 5 depicts the addition of security protocols to Alice and Bob’s communicative institution. This modification – essentially equivalent to Figure 1 – partitions communications into a public layer of ciphertext that everyone including Eve can access, and a private layer of plaintext and key material that only Alice and Bob can access. Their cryptosystem creates a ‘club good’ (excludable yet nonrival) that provides access to plaintext to trusted members only. Alice

Figure 5. Defence regains the advantage over offence through (symmetrical) cryptography

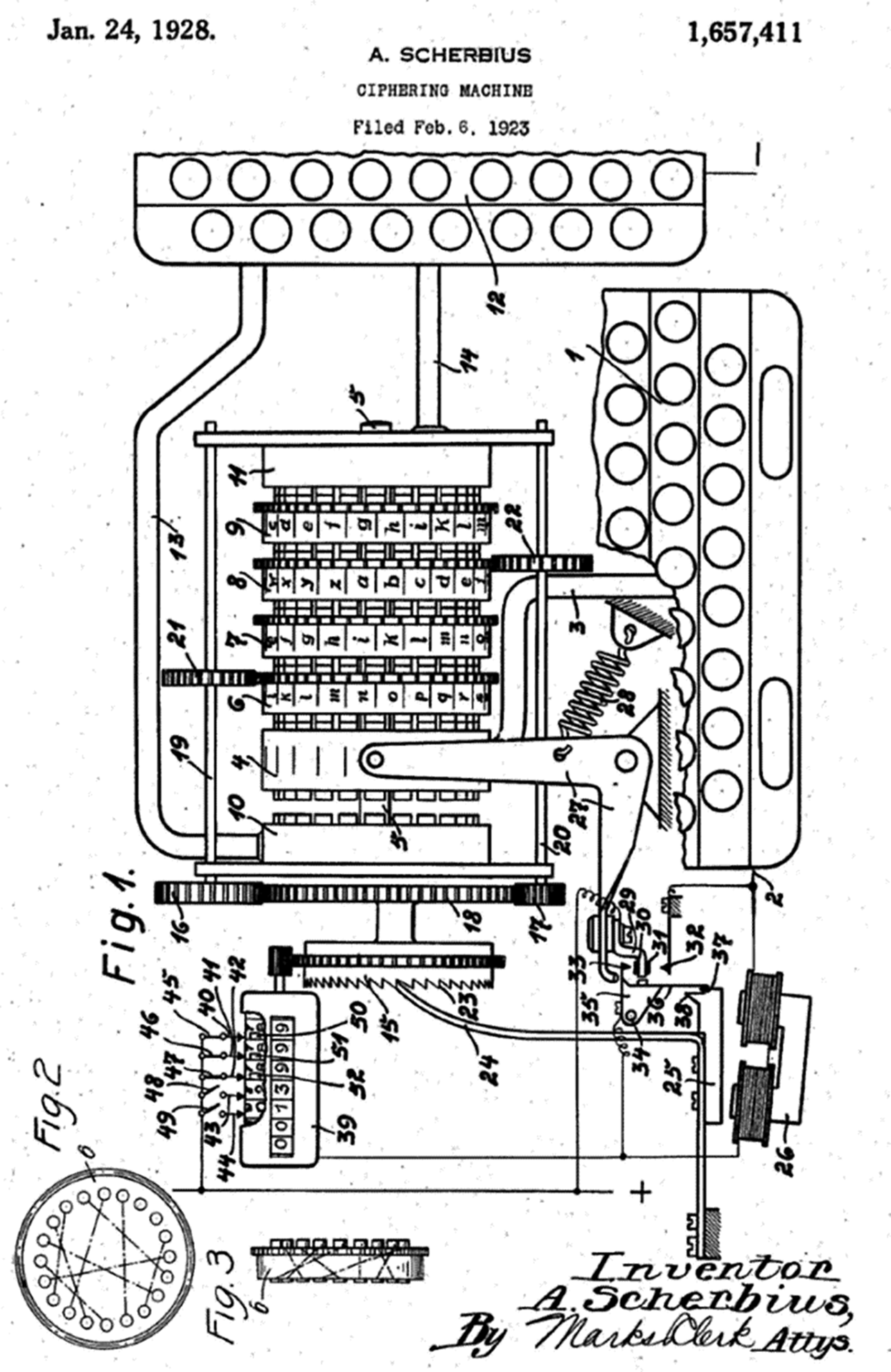

Germany implemented Figure 5 (and Figure 1) with several cryptosystems, most famously Enigma. The military Enigma was a variant of a commercial mechanical cipher device that embodied a symmetrical polyalphabetic cipher algorithm in its system of rotors, wires and plugs. Figure 6 depicts a drawing of the commercial device filed with the United States Patent Office. Kerckhoffs’s principle holds that revelation of the details of the machine (algorithm) does not compromise its security as long as its settings (key) are kept secret.
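The separation of a public algorithm from a secret key can be illustrated with a toy polyalphabetic cipher. The sketch below uses a Vigenère-style shift, which is far simpler than Enigma and is not how Enigma worked internally; the function name `vigenere`, the sample message and the keys are hypothetical.

```python
from string import ascii_uppercase as ALPHABET


def vigenere(text: str, key: str, decrypt: bool = False) -> str:
    """Toy polyalphabetic cipher: shift each letter by the matching key letter.

    Expects uppercase A-Z only in both text and key.
    """
    sign = -1 if decrypt else 1
    out = []
    for i, ch in enumerate(text):
        shift = ALPHABET.index(key[i % len(key)])
        out.append(ALPHABET[(ALPHABET.index(ch) + sign * shift) % 26])
    return "".join(out)


message = "WETTERBERICHT"  # hypothetical plaintext, letters only
ciphertext = vigenere(message, "KEY")

# The algorithm above is public; only the key separates Bob from Eve.
assert vigenere(ciphertext, "KEY", decrypt=True) == message
assert vigenere(ciphertext, "ABC", decrypt=True) != message
```

Anyone can read the function, yet only a holder of the key recovers the message. The same key serves both directions, which is what makes the scheme symmetrical.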

The initial settings of Enigma’s movable parts embodied the cryptographic key. German couriers visited field units on a regular basis to drop off copies of the same codebook, which listed daily rotor and plugboard settings, so that communicators could share a key. Operators then selected unique rotor settings for every message, which they first enciphered using the initial daily settings from the codebook. The receiving operator used the same codebook to decipher the message. In principle, the three-rotor military Enigma could produce 3 × 10¹¹⁴ machine states, even as British cryptanalysts only faced 10²³ possibilities in practice (Miller, 1995). This was still a vast search space, so German cryptographers assumed that Enigma was unbreakable. They accepted that an enemy might get hold of an Enigma machine, but they assumed that couriered key material would remain secure (Ratcliff, 2003). These were reasonable assumptions in an era of manual cryptanalysis, but not against the new methods pioneered at BP, which were kept secret from the Germans.
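A rough calculation shows how a practical figure on the order of 10²³ can arise. The breakdown below is an assumption for illustration rather than something stated in this article: it supposes three rotors chosen from a set of five, ten plugboard cables, and two effective ring settings, which is one common way of counting the operational key space.

```python
import math

ROTORS_AVAILABLE = 5  # assumed: three rotors chosen from a set of five
ROTOR_SLOTS = 3
PLUG_CABLES = 10      # assumed: ten plugboard cables in use

rotor_orders = math.perm(ROTORS_AVAILABLE, ROTOR_SLOTS)  # ordered choice of rotors
rotor_positions = 26 ** ROTOR_SLOTS                      # starting position of each rotor
ring_settings = 26 ** 2                                  # assumed: two rings counted

# Ways to pair up 2k of 26 letters with k cables: 26! / ((26 - 2k)! * k! * 2^k)
plugboard = math.factorial(26) // (
    math.factorial(26 - 2 * PLUG_CABLES)
    * math.factorial(PLUG_CABLES)
    * 2 ** PLUG_CABLES
)

practical_keys = rotor_orders * rotor_positions * ring_settings * plugboard
print(f"{practical_keys:.2e}")
```

Under these assumptions the plugboard contributes by far the largest factor. Even so, the total is many orders of magnitude smaller than the theoretical number of machine states.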

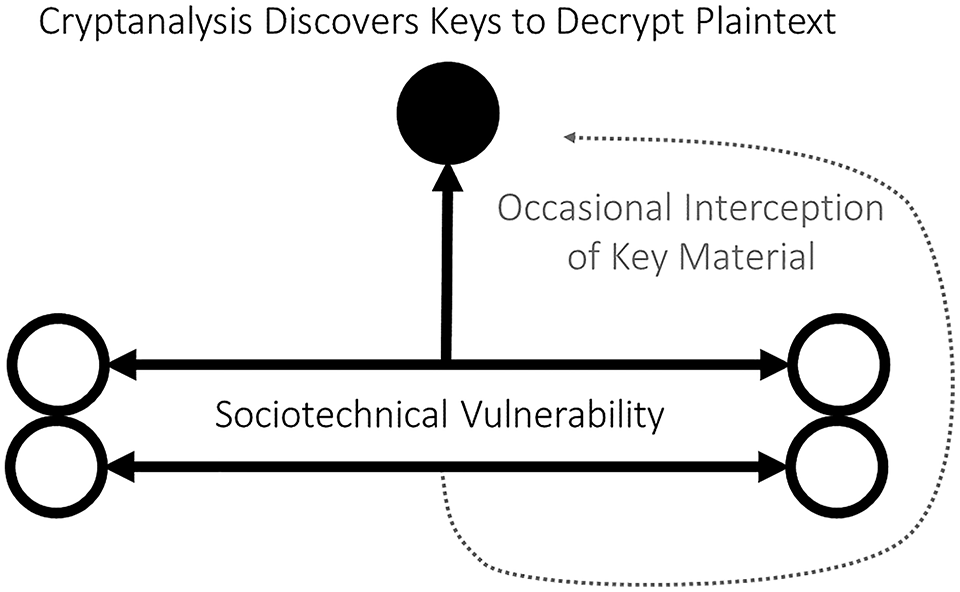

An intelligent adversary will attempt to break into the cryptographical club. Eve can try every possible key, but brute force search becomes infeasible with a large key space (entropy). She can also look for statistical patterns or redundancies in accumulated ciphertext. Cryptanalysis is a tedious process, even with the help of computational machines such as Bletchley’s Bombes. She can shortcut cryptanalysis if she can access the key distribution channel or unencrypted endpoints directly. German operators occasionally broadcast keys in the clear – a complacent convenience. The Allies sometimes recovered (‘pinched’) key material from German submarines. These windfalls helped British cryptanalysts to break into other Enigma keys. But mathematized and mechanized cryptanalysis was the bedrock of persistent access to Enigma. Figure 7 depicts both routes to decryption, that is, regular cryptanalysis and occasional pinches, overcoming the barriers of Figure 5 (defensive advantage) to revert to Figure 4 (offensive advantage).

The opaque endpoints in Figure 5 become transparent in Figure 7 because Alice and Bob have inadvertently but voluntarily created a vulnerable cryptosystem. Anything that reduces the entropy of the target cryptosystem, whether through mechanical design or human behaviour, is a potential vulnerability. The target does not intend to enable constrained coordination with the intelligence adversary, of course, but any sociotechnical constraint in the target system becomes a potential resource for the adversary to establish a coordinated communication channel into it.

Germany offered up technical and social vulnerabilities to the enemy. Enigma machines could not encipher a letter as itself, by design, and rotor wiring was fixed. These constraints, in combination with German protocols for encoding message keys, created subtle cycles in the ciphertext. The initial break into Enigma by Polish cryptanalyst Marian Rejewski in 1932 exploited these cycles. The addition of two new rotors in 1938 overwhelmed Polish methods, which led to coordination with Allied intelligence and further innovation in cryptanalysis (Kahn, 1993). But the most helpful vulnerability came from German Enigma operators themselves. In complacent deviation from official protocol, operators often failed to paraphrase, pad, or reorder messages that they retransmitted across different networks. They used easy-to-guess keys for multipart messages (e.g. HIT+LER or ROM+MEL) that the British called ‘cillies’ (Erskine, 2001b). Key material for most Luftwaffe networks reused portions of previous lists that GC&CS was quick to recognize (Erskine, 2001a: 69). According to Erskine (2001c: 370), ‘Germans had continually to make a lot of mistakes every day for [GC&CS] to succeed against them.’
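The constraint that no letter enciphers as itself can be turned into a simple filter on guessed plaintext. The sketch below is a minimal illustration under stated assumptions, not a reconstruction of GC&CS procedure: the function name `possible_crib_offsets`, the intercept and the guessed word are all hypothetical.

```python
def possible_crib_offsets(ciphertext: str, crib: str) -> list[int]:
    """Offsets where the crib could align, given no letter enciphers as itself."""
    offsets = []
    for start in range(len(ciphertext) - len(crib) + 1):
        window = ciphertext[start:start + len(crib)]
        # Any position where ciphertext and guessed plaintext agree is impossible.
        if all(c != p for c, p in zip(window, crib)):
            offsets.append(start)
    return offsets


# Hypothetical intercept and guessed plaintext fragment (not historical traffic).
intercept = "QFZWRWIVTYRESXBFOGKUHQBAISE"
crib = "WETTER"
print(possible_crib_offsets(intercept, crib))
```

Each excluded alignment is a small reduction in the cryptanalyst’s uncertainty, obtained entirely from a design feature of the target machine. Predictable or retransmitted message content makes such guesses easier to come by.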

Figure 6. Enigma patent (Sherbius, 1928)

Germany’s early cryptographical advantage with Enigma thus became its greatest security liability. Germany standardized Enigma throughout the force for both routine and sensitive communications. BP tracked nearly two hundred different German networks throughout World War II, but most were just different settings for the same basic cryptosystem. The unintended consequence was that anything GC&CS learned from any given German network might be applied to breaking into other networks. Germany bet its cryptographical security on Enigma, but Britain built a SIGINT organization around Enigma, too.

Condition #3: Organization

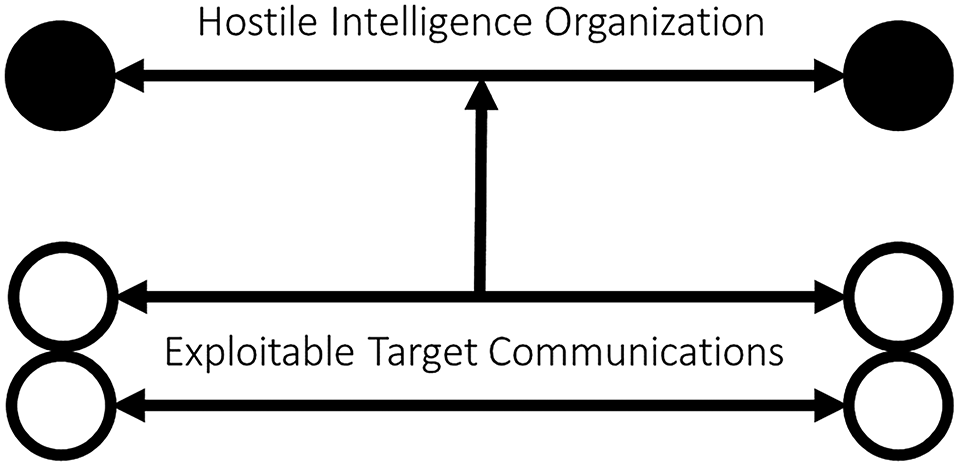

Connectivity and vulnerability created a rare intelligence opportunity. But targets do not exploit themselves. Figure 8 thus adds a new element that is not explicitly part of Shannon’s model but is necessary in practice. Shannon’s ‘enemy cryptanalyst’ is really an organized conspiracy to collect and decrypt messages. This quiet conspiracy needs an administrative and technical platform that is tailored to exploit the vulnerabilities of and connectivity to the target. Taken together, these three conditions describe the institutional constraints that

Figure 7. Offence regains the advantage over cryptographical defence

Figure 8. Offensive advantage depends on organizational capacity tailored to the target’s sociotechnical vulnerability

Bletchley Park set the model for modern SIGINT agencies. By mid-1942, after a major reorganization spurred by intervention of Prime Minister Churchill, GC&CS combined radio interception, traffic analysis, cryptanalysis, intelligence analysis and OPSEC in a single agency. The director’s annual report stated (Grey, 2012: 55, 197), ‘we believe that we should try to read every enemy signal, neglecting none however apparently unimportant; we believe in the unholy trinity of Interception, Cryptography and Intelligence.’

Two radically different images are often used to describe BP, ‘a university and a factory’ (Ferris, 2020: 213). On one hand, cryptographers and engineers – mostly men such as Alan Turing, Max Newman, Bill Tutte and Thomas Flowers – interacted informally and collegially. They were free to experiment with new approaches to cryptanalysis, adapt to emerging opportunities and invent remarkable machines such as Colossus, a pioneering large-scale electronic computer built to attack the Lorenz cipher (Haigh and Priestley, 2020). On the other hand, an army of regimented clerks – mostly women – sorted paperwork and maintained machinery. They performed repetitive, mundane, exhausting, endless work without ever understanding its true purpose. Nearly ten thousand toiled at BP by the end of World War II.

The traditional dichotomy of formal centralization versus informal decentralization is misleading in this case. Grey (2012: 214–215) describes a ‘twisting together’ of mutually supporting modes of work. GC&CS, remarkably enough, was flexible enough to repair breakdowns and adapt to enemy countermeasures but well-managed enough to systematically collect, process, analyse and disseminate enemy secrets at scale. Elsewhere (Lindsay, 2020b: chap. 7) I describe this hybrid of adhocracy and bureaucracy as ‘adaptive management.’

There is a close relationship between organization and vulnerability. Indeed, vulnerability can be understood as the target’s failure to implement an adequate counterintelligence organization. If BP achieved the improbable feat of combining the strengths of two opposite modes of organizing, its German targets achieved the equally improbable feat of combining the opposite weaknesses. Germany was centralized where it should have been decentralized but fragmented where it should have been integrated. Germany unwittingly created exploitable vulnerabilities through a combination of standardized doctrinal routines and opportunistic deviations from OPSEC policy by personnel in the field. Its counterintelligence organs, meanwhile, were too disunified and fractious to recognize and correct the danger. German intelligence neglected high-grade Allied cryptosystems such as Typex and Sigaba on the assumption that Enigma was invulnerable and Allied analogues were a waste of time (Hastings, 2016: 449; Ratcliff, 2003). No one in Berlin wanted to hear that the Reich’s communication security was categorically compromised, so intelligence officers succumbed to groupthink and pandered to their customers (Ratcliff, 2006: 231–232). Nazi coup-proofing measures further reinforced German gullibility (Howard, 1990: 49–52).

An information system makes intelligence possible by providing a channel. But organizations must stabilize endpoints of the intelligence channel. For one side to gain an advantage over the other, their organizations must be complementary, with one making mistakes while the other avoids them. Offensive advantage thus depends on dyadic institutional context, not systemic technology.

Condition #4: Discretion

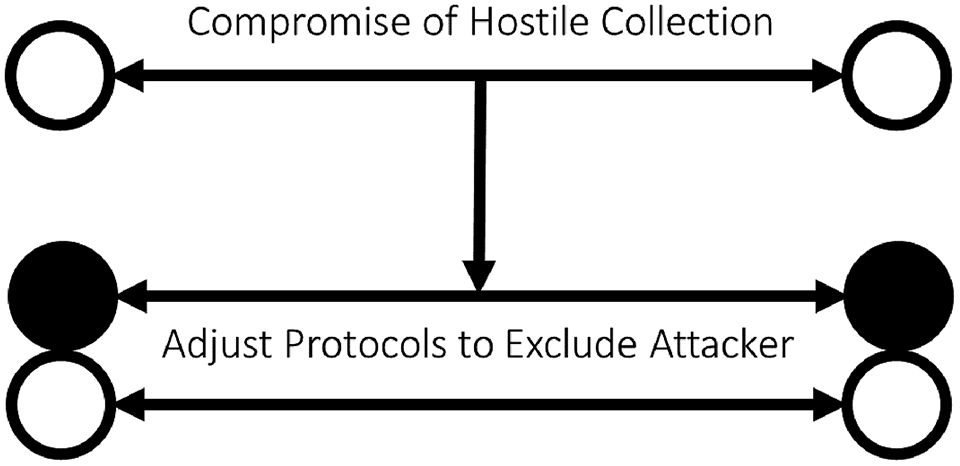

The fourth condition of discretion describes efforts to protect the other three conditions. BP’s investment

Figure 9. Offence loses the advantage as compromise prompts defence to make adjustments

To avoid this unhappy outcome, the British had to refrain from behaviour that would attract the attention of German counterintelligence. GC&CS thus fostered a culture of extreme secrecy, enforced through background investigations, personnel indoctrination, signing of the Official Secrets Act, classification and compartmentalization (Grey, 2012: 122–123). Many British personnel did not even realize that BP was a codebreaking organization, and the press acquiesced to official requests to keep mum. Furthermore, the British exercised security discipline and operational restraint to keep Germany in the dark. Ultra was often sanitized so that it might appear to come from some other source, for example, a human spy or aerial reconnaissance. The Allies seriously debated the risks and benefits of acting on Ultra, sometimes forgoing opportunities to attack Germans without a plausible alibi. Thus, for instance, ‘fear of tipping the Allied hand hampered photo-reconnaissance’ (Ferris, 2020: 260). The Allies also implemented a highly controlled communication system to disseminate Ultra to military commanders in the field, which meant that most Allied personnel remained ignorant about what GC&CS was doing as well.

The condition of discretion is necessary because intelligence is a contest. Yet this contest is a peculiar form of competition that depends on cooperation. Discretion enables the collector to preserve unconstrained room for manoeuvre so that it can do a little extra on the side, even as it superficially conforms to target expectations. But the price of preserving the degrees of freedom that enable deception is the additional complexity of security and counterintelligence measures. This overhead is necessary because the consequences of compromise include the loss of costly capabilities, future collection opportunities, counterintelligence exploitation by the target and perhaps retaliation.

One might even say that deterrence is a condition for the possibility of secret intelligence contests. This runs contrary to claims that deterrence is unimportant in cyberspace (Fischerkeller et al., 2022). But if the attacker depends on secrecy, then the target’s security posture poses an implicit threat to the collector’s offensive operations (i.e. general deterrence against the initiation of challenges). In effect, the collector is deterred from collecting too aggressively or acting too rashly on what it collects. Deterrence here is understood as a continuous rather than a binary outcome (Gannon et al., 2023; Lindsay, 2015; Powell, 2015). From this perspective, ironically enough, clandestine intelligence penetration is symptomatic of deterrence success.

Active counter-counterintelligence can provide some additional protection, by deceiving enemy eyes on the lookout for deception. GC&CS was not just a passive SIGINT collector. Sometimes it took active measures to protect or enable Ultra. When Ultra discovered the locations of German U-boats, British reconnaissance flights would be vectored toward them so that U-boat commanders would assume that they had been discovered by a lucky patrol (Ratcliff, 2006: 115–116). BP sometimes arranged for a military operation in view of German observers who would likely report it via encrypted message on a net monitored by GC&CS, which simplified codebreaking (Kahn, 2012: 169). BP actively targeted German intelligence agencies, continually searching for evidence of Ultra’s compromise. It never found any (Ratcliff, 2006: 159–179). British counterintelligence also relied on Ultra to vet the reliability of double agents and verify that the enemy had swallowed the bait (Smith, 2001). Even more sportingly, the British sometimes sent false communications to German radio operators encrypted in legitimate Enigma keys to pass disinformation to enemy commanders. According to an official

Offence regains the advantage by strengthening operational security and counterintelligence

We now have all the elements of a genuine intelligence contest. Figure 10 depicts a fully symmetrical intelligence contest between two organizations competing to gain an advantage through secrecy. They each attempt to protect their internal activities while presenting an obfuscated façade to their rival. The vertical arrow is now bidirectional because each side attempts to learn about, and potentially to influence, the other. In this depiction, which is the configuration for a successful intelligence agency such as GC&CS, the collector (top) has the advantage over the target (bottom): effective security makes the collector opaque to the target but the target transparent to the collector. The collector has created its own institutional club that excludes the target, while the collector can penetrate the security club of the target to join its collective institutions. An important implication is that the offence–defence balance is not determined by technology, simply because technology is embedded in institutions that play both offence and defence.

Generalizing the political conditions for intelligence

So far, we have started with a contemporary understanding of the logic of cryptography (Figure 1) and then explored its more complex sociotechnical implementation at BP (Figure 10). We found that the same radios and Enigma machines that Germany used to protect its operations provided connectivity for the British eavesdropper. The German adversary voluntarily but unwittingly created vulnerability for British codebreakers. BP was a remarkable SIGINT organization that combined the virtues of administrative discipline and creative adaptation, in sharp contrast to its adversary. British bureaucrats, engineers, and operators exercised disciplined discretion to protect their investment from German counterintelligence.

The next step in abduction is to explicitly dismantle the historical scaffolding and articulate a more general theory. This more general theory can be articulated through deduction from general principles, as a logical possibility, even as it was inspired by a specific case, as a contingent fact. The result will be an institutional theory of intelligence performance that does not depend on historical circumstances, which makes it potentially as relevant for cybersecurity as mid-century SIGINT. This sort of bootstrapping is the power move of abduction.

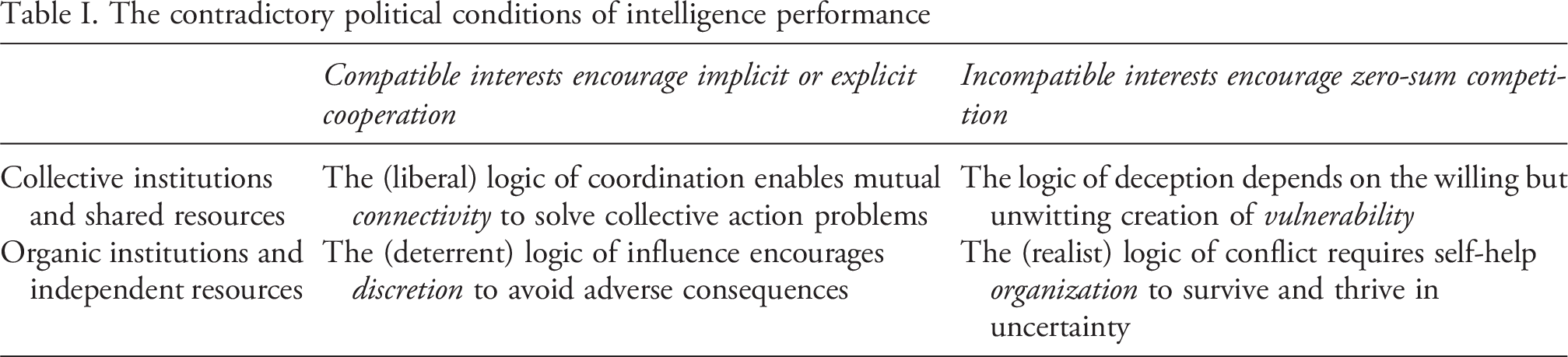

I begin by looking for abstract relationships across the conditions in the case. Something that jumps out is that the conditions of connectivity and vulnerability describe institutional features in the collector’s environment that are shared with the target, while the conditions of organization and discretion describe institutional features in the collector’s organization that are not shared with the target. I will describe these as collective and organic institutions, respectively, because the target makes choices that shape the shared environment with the collector, while the collector has more control of its own behaviour. Figure 11 combines Figure 1 and Figure 10 by grouping these pairs of conditions atop Shannon’s model. Thus, we can conjecture that an intelligence contest generally is an ongoing interaction between collective and organic institutions.

The magic trick of abduction is the move from the particulars of a case to the deduction of general theory. Often this will involve mapping concepts gleaned from the case to a broader body of theory that has already stood the test of time. There are not enough pages for a full discussion, but I will sketch, with very broad brushstrokes, a few tantalizing touchpoints between the four conditions and traditional IR theory. Even so-called post-paradigmatic or eclectic IR relies on traditional ideas from the liberal and realist traditions of IR (Kristensen, 2018; Milner et al., 2022), in part because institutional mobilization and power politics are enduring features of human sociality (Goddard and Nexon, 2016).

The distinction between collective and organic institutions maps onto the most fundamental distinction in IR, namely between international institutions and states in anarchy (or in political economy, between governments and market actors). The liberal tradition of IR emphasizes that cooperation is more likely with shared

Conditions for intelligence success

Table I hazards a mapping of intelligence conditions onto basic IR theory by disaggregating sociotechnical means and political ends. Sociotechnical systems can be institutionalized to enable collective action, or they can be organically controlled by independent actors. In either condition, the actors can engage in cooperative or competitive behaviour. This defines four ideal strategic logics for linking ends and means. These general IR logics enable the four conditions for intelligence performance.

The logic of coordination captures intuitions from the liberal tradition. Actors adopt communication protocols and computational infrastructure to solve collective action problems and achieve common goals. This cooperatively constituted connectivity provides remote access to intelligence targets.

The converse logic of conflict captures intuitions from the realist tradition. Actors in anarchy must help themselves to survive and thrive in an uncertain competitive environment. Collectors need an organization that is both well-managed and adaptive to field a reliable exploit platform that is tailored to the opportunity created by connectivity and vulnerability.

The other logics mix traditional paradigms. The logic of influence captures intuitions from deterrence theory. In Arms and Influence, Schelling (2008: 4–8) points out that effective coercion depends on shared interests in avoiding pain. Coercive success, moreover, results in a negotiated deal – a mutually-constrained proto-institution. Deterrence thus combines realist means (threats of war) for liberal ends (peaceful agreements). In intelligence, the tacit threat of the target’s security posture influences the collector to exercise discretion in its operations.

The logic of deception is less developed in IR theory but is increasingly relevant in the information age. It captures the intuition that espionage and subversion depend on the unwitting cooperation of the target, or liberal means for realist ends. The target voluntarily presents sociotechnical vulnerability that enables its own deception.
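The four logics described above can be laid out as a small lookup structure, which makes the two-by-two pairing of means and ends easier to check. This is only an illustrative sketch: the labels for means, ends, logics, and conditions are taken from the surrounding text, while the class name `Logic`, the field names, and the helper functions are assumptions introduced here for convenience.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Logic:
    """One cell of the two-by-two typology (field names are illustrative)."""
    name: str        # strategic logic
    means: str       # "collective" or "organic" sociotechnical means
    ends: str        # "cooperative" or "competitive" political ends
    condition: str   # intelligence condition the logic enables


# Pairings as described in the text: coordination is liberal means for
# liberal ends, conflict is realist means for realist ends, influence is
# realist means for liberal ends, deception is liberal means for realist ends.
TYPOLOGY = (
    Logic("coordination", "collective", "cooperative", "connectivity"),
    Logic("deception", "collective", "competitive", "vulnerability"),
    Logic("influence", "organic", "cooperative", "discretion"),
    Logic("conflict", "organic", "competitive", "organization"),
)


def lookup(means: str, ends: str) -> Logic:
    """Return the logic that links the given means to the given ends."""
    for logic in TYPOLOGY:
        if (logic.means, logic.ends) == (means, ends):
            return logic
    raise KeyError((means, ends))


def conditions_by_means(means: str) -> list[str]:
    """List the conditions that rest on collective or on organic institutions."""
    return sorted(logic.condition for logic in TYPOLOGY if logic.means == means)


if __name__ == "__main__":
    print(lookup("collective", "competitive").name)
    print(conditions_by_means("collective"))
    print(conditions_by_means("organic"))
```

Grouping by means recovers the earlier distinction: connectivity and vulnerability rest on collective institutions, while organization and discretion rest on organic ones.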

The contradictory political conditions of intelligence performance

The contingency of intelligence success

We now have a bootstrapped theory that could have been derived from IR concepts directly, even though it was inspired by the case as a matter of historical fact. But this means we can now return to the case with the newly generalized theory. The new theory can then be used to revisit the case, explore additional variation in the case, or modify the theory. Abduction encourages recursion from empirics to theory and back again.

In the statistical worldview, of course, deductive theorizing and empirical testing are two separate processes. In the backstage of research, however, researchers often tweak theory and debug data to find a more satisfying fit. The scientific frontstage, much like sovereignty in politics (Krasner, 1999), is something of an exercise in organized hypocrisy. Abduction abandons this pretence. The bootstrapping of abduction results in a standalone theory that ‘could have been’ created simply through deduction from first principles (i.e. Table I), so the same theory also ‘could be’ tested with a qualitative case study. The process of abductive recursion may continue indefinitely by comparing different cases to test, qualify, or repair the conjectures of generalized theory.

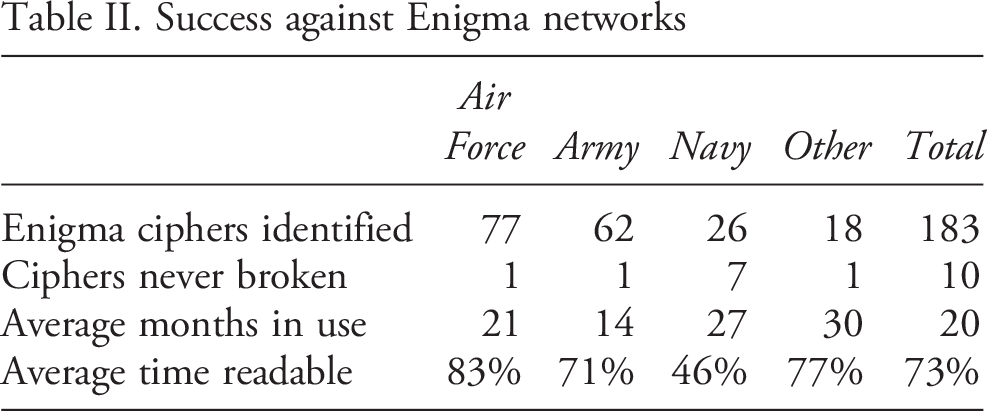

Success against Enigma networks

Table II summarizes the remarkable performance of GC&CS against Enigma ciphers. Of the 183 network ciphers identified, all but 10 were broken, an incredible success rate of about 95%. Once broken, German networks tended to remain readable for the life of the key, on average 20 months, and some much longer. Even after networks became readable, GC&CS still had to break into daily keys afresh. Sometimes ciphers were broken within hours, while others required many days. Breaking Enigma was necessarily an ongoing process (Grey, 2012: 34–35).
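The headline success rate follows directly from the two counts given in the text. As a minimal check, the sketch below recomputes it; the function name `success_rate` is an assumption introduced here, and only the figures 183 and 10 come from the article.

```python
def success_rate(identified: int, unbroken: int) -> float:
    """Share of identified network ciphers that were broken."""
    if identified <= 0 or not 0 <= unbroken <= identified:
        raise ValueError("counts must satisfy 0 <= unbroken <= identified, identified > 0")
    return (identified - unbroken) / identified


if __name__ == "__main__":
    identified, unbroken = 183, 10  # counts reported in the text
    rate = success_rate(identified, unbroken)
    print(f"{identified - unbroken} of {identified} ciphers broken ({rate:.1%})")
```

The result is 173 of 183, which rounds to the 95% reported.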

Success varies across services as conditions change. Luftwaffe ciphers, the largest proportion, were broken early and often because Luftwaffe operators made the most mistakes, and air operations generated the most traffic – more vulnerability. Army keys were generally used for a shorter duration compared to other services because keys were used for specific land campaigns – limited connectivity. Naval Enigma was the most challenging because the sea services practised better OPSEC – less vulnerability. The darkest period of the naval SIGINT contest followed the introduction of four-rotor naval Enigma (Shark), which locked out GC&CS for most of 1942. The Allies managed to adapt new methods and new machines – better organization – to get back in, fortunately, before the climactic convoy battles of 1943. Allied tonnage lost in January and February 1943 dropped to half of the total for the preceding November and December (Kahn, 2012: 264–266).

This study has focused on BP’s sustained operational success, but this did not automatically translate into strategic advantage for the Allies. Allied intelligence advantage was only one input among many into victory. Victory in World War II cannot be attributed to any one factor, certainly not intelligence. Material logistics, operational doctrine and hard fighting mattered more (Hastings, 2016; Keegan, 2003; Kennedy, 2013; O’Brien, 2015; Overy, 1996). As Kennedy (2013: 359) writes, ‘far, far better to have it than not, but by the very nature of the intelligence war with Germany it could only produce mixed results, not miracles.’

Conclusion

This article has used abduction to develop a general theory of intelligence performance from the critical case of BP. BP was successful because it met the hard-to-meet conditions of connectivity, vulnerability, organization and discretion. I conjecture that these four conditions, and the tensions they embody, are relevant for intelligence operations in any era, including in cyberspace. Institutional conditions shape the employment of technology for espionage and subversion.

An obvious concern is that modern cybersecurity differs radically from midcentury SIGINT. Why should we have faith that the theory generalizes? My basic answer is that the theory is grounded in general IR theory. Moreover, the constitutive tensions between collective and organic institutions, and between cooperative and conflictive behaviour, are rife in cyberspace. Indeed, superficial disanalogies between cybersecurity and SIGINT offer more reasons to have confidence in generalization, not less. Each also suggests fruitful avenues for future research.

First, BP relied on analog radios and electromechanical tabulators rather than digital networks. Yet, digital technologies, software programs and automation protocols are even more institutionalized than anything at BP. So, the institutional conditions for intelligence should be even more relevant in cybersecurity.

Second, SIGINT is a highly specialized form of intelligence, reliant on specialized technologies and methods. But all specialized intelligence disciplines (human, geospatial, acoustic, etc.) rely on shared cultures, predictable constraints and public protocols. Cyber operations are just another variety of institutional specialization. The common denominator is competition within collective institutions.

Third, BP was a wartime agency, but most cyber espionage happens in peacetime. This only makes BP’s achievement more impressive. Warfare is the opposite of cooperation, and combat degrades institutions, yet GC&CS nonetheless managed to build up stable institutionalized relationships with its Axis targets. In peacetime, there is even more institutional cooperation available for intelligence actors to exploit. From this perspective, it is less surprising that most cyber threat activity occurs below the threshold of armed conflict. Peace, ironically enough, is more conducive to cyber conflict.

Fourth, BP collected intelligence, but cyber operations can also conduct sabotage and disinformation. This objection is misleading because spies in any era can become saboteurs. The only difference between espionage and subversion, at the operational level of intelligence, is the direction that information flows through a secret channel. The success of covert action at the operational level should also depend on the four conditions, which could be tested empirically.

Fifth, BP was a state agency, but non-state actors are prominent in cybersecurity. Yet the generalized theory, insofar as IR theory applies to non-state actors as well, is agnostic about the identity of intelligence actors. Spies, thieves, soldiers and engineers can all attempt to exploit connectivity and vulnerability with organization and discretion, even as they may differ in their ability to do so successfully.

In the final analysis, intelligence is a contest of deception between competing institutions within cooperative institutions. This means that intelligence falls in between traditional liberal and realist approaches to IR, with all the tensions and contradictions that implies. Cyberspace, moreover, is a highly institutionalized environment, so we should expect both the opportunities and challenges of intelligence to be magnified in cyberspace. Intelligence, both operationally and theoretically, does not respect established institutional boundaries. This suggests that the study of intelligence and cybersecurity must draw on concepts from multiple intellectual traditions. This article has attempted to show how this might proceed.

Acknowledgements

The author thanks John Ferris, Michael O’Hara, Michael Warner, the editors of this special issue and several anonymous reviewers for their constructive feedback on this project.

Funding

The author received no financial support for the research, authorship, and/or publication of this article.