Abstract

Social scientists do not directly study cyberattacks; they draw inferences from attack reports that are public and visible. As with human rights violations or war casualties, there are missing cyberattacks that researchers have not observed. The existing approach is to either ignore missing data and assume they do not exist or argue that reported attacks accurately represent the missing events. This article is the first to detail the steps between attack, discovery and public report to identify sources of bias in cyber data. Visibility bias presents significant inferential challenges for cybersecurity – some attacks are easy to observe or claimed by attackers, while others take a long time to surface or are carried out by actors seeking to hide their actions. The article argues that missing attacks in public reporting likely share features of reported attacks that take the longest to surface. It builds on datasets of cyberattacks by or against Five Eyes (an intelligence alliance composed of Australia, Canada, New Zealand, the United Kingdom and the United States) governments and adds new data on when attacks occurred, when the media first reported them, and the characteristics of attackers and techniques. Leveraging survival models, it demonstrates how the delay between attack and disclosure depends on both the attacker’s identity (state or non-state) and the technical characteristics of the attack (whether it targets information confidentiality, integrity, or availability). The article argues that missing cybersecurity events are least likely to be carried out by non-state actors or to target information availability. Our understanding of ‘persistent engagement,’ relative capabilities, ‘intelligence contests’ and cyber coercion relies on accurately measuring restraint. This article’s findings cast significant doubt on whether researchers have accurately measured and observed restraint, and inform how others should consider external validity.
This article has implications for our understanding of data bias, empirical cybersecurity research and secrecy in international relations.

A missing data problem

Cybersecurity studies in political science have a widely acknowledged missing data problem (Madnick, 2022; Steiger et al., 2018; Valeriano and Jensen, 2019; Valeriano and Maness, 2014) – attacks that researchers do not know about but that still matter. Missing data and unobserved attacks complicate all cybersecurity research – whether authors draw general findings from individual case studies, carry out large-n analyses, or design surveys or formal models with plausible assumptions. The existing approach is to ignore missing data, assume they do not exist, or claim that the known attacks faithfully represent them. This article demonstrates two systemic sources of bias in cyber data – the attacker’s identity and the attack’s technical characteristics (whether it targets the confidentiality, integrity, or availability of information). How do cyberattacks become media reports (or remain unobserved), what are the sources of bias in this process, and how does this affect our understanding of cyberconflict?

Cybersecurity is securing information as users store and transfer it across digital systems. Because information is vital, cybersecurity has wide-ranging implications for economic development, international conflict and competition, and personal security. International security scholars want to understand whether cyberattacks and cybersecurity impact how states interact – if they become more likely to enter conflict, coerce one another, or recognize new risks to their shared existence.

Social scientists do not directly observe cyberattacks, and to understand how cybersecurity shapes politics, they need the population of cyberattacks or an unbiased sample of them. Yet, the available evidence comes from public reports resulting from a technical and political disclosure process. Sometimes, we discover attacks swiftly – the media discovered and reported the 2009 North Korean attacks on United States and South Korean websites as they occurred. Other times, we discover attacks slowly – the media only reported the Stuxnet computer worm due to private cybersecurity firm reports 16 months after the attack. Sometimes, the public discovering a cyberattack is a failure by the attacker. Other times, the public discovering a cyberattack is the point, or attackers may not care whether the public views their behaviour. Visibility bias means that: (a) there is an unknown population of successful attacks the public does not observe; and (b) some attacks are easier to observe than others. Researchers draw inferences from media-reported events. However, the process from event to report to dataset introduces bias (Baum and Zhukov, 2015; Dietrich and Eck, 2020; Gohdes and Price, 2013). The media needs stories that will draw attention and satisfy their stakeholders. We nonetheless expect the media to cover cyberattacks because of their novelty and salience. Yet, when events are hard to observe in the first place, or actors have incentives to keep them secret, bias appears even if the media covers events comprehensively (Clark and Sikkink, 2013; Eck and Fariss, 2018; Landman and Gohdes, 2013). This article takes a novel approach to bias in media-reported event data. I make a simple argument – understanding the reporting process for known attacks helps us understand the likely population of unreported attacks.

We can interpret absent attacks as: (a) unsuccessful attacks; (b) successful attacks that attackers do not report and victims do not discover; or (c) successful attacks that attackers do not report and victims discover but do not report. Observational cybersecurity studies recognize that it is challenging to observe cyberattacks, and understand that attack characteristics may contribute to this. However, existing research assumes either that all attacks are reported (Jensen et al., 2017; Steiger et al., 2018), or that reported attacks are representative of the population, that is, that unreported attacks are missing completely at random (MCAR) (Valeriano and Maness, 2014; Valeriano et al., 2018).

There is an urgent need for rigorous empirical research to help us understand how cybersecurity shapes global politics (Shandler and Canetti, 2024). This article first outlines methodological approaches in cybersecurity research relying on media reports of cybersecurity events. I then discuss media bias in event studies, some of which are less problematic for cybersecurity than others. I find a parallel to cybersecurity data challenges in human rights data, where governments have incentives to minimize their exposure and events have limited visibility. I explain how cyberattacks become reports and theorize how attack features shape the likelihood of reporting.

I test my theory on a novel report timeline dataset of publicly known cyberattacks in the Five Eyes (FE) (an intelligence alliance composed of Australia, Canada, New Zealand, the United Kingdom and the United States) states, adding information about when attacks occurred, when the media first reported them, and their technical characteristics. I use survival models to understand how attack features (who sent them and how they are designed) influence attack discovery. The attacks the media discovers the fastest utilize methods with short-term impacts and are committed by non-state groups. Attacks by state actors (with greater resources) or that compromise confidentiality or integrity (which are harder to discover) take longer to surface in the media. I argue that under-reported attacks most likely involve state actors and affect information confidentiality or integrity.

After demonstrating how attacker and technical characteristics influence reporting, I explain how missing events bias our understanding of cybersecurity. Many researchers argue that cyberattacks are restrained (Baezner, 2018; Gartzke, 2013; Gartzke and Lindsay, 2015; Valeriano and Maness, 2015) or ‘limited to mostly defacements or denial of service’ (Valeriano and Maness, 2014: 357). Others argue that the United States carefully avoided ‘persistent engagement’ for two decades (Fischerkeller and Harknett, 2018), when it may simply use methods that are difficult for the public to observe. Alternatively, the media used observational evidence to argue that the United States fell behind China when subsequent intelligence leaks revealed the opposite was true. New advocates for the ‘intelligence contest’ theory of cyber engagement (Rovner, 2020) search for the cases we are most likely to miss. Visibility bias shapes cybersecurity data and creates a specific risk – believing actors or behaviours are restrained when they rely on hard-to-observe methods or choose to keep attacks secret.

Bias in observational research

Academics often rely on media reports to know whether something happened. Reporting bias – data features caused by how sources collect information and how researchers select sources – is a common challenge in international relations (Gohdes and Price, 2013), civil conflict (Baum and Zhukov, 2015) and political violence studies (Dietrich and Eck, 2020). Generally, researchers consider two biases – bias caused by the media and bias due to characteristics of what the media is reporting on. The media can frame an event using a bias like partisanship. For example, the media might emphasize different aspects of a cyberattack from China than of one from the hacking group Anonymous. I focus on whether attacks that happen can be reported, rather than on how the media covers reported attacks.

The media cannot report on everything researchers care about – there is too much information and insufficient bandwidth. Characteristics of the media or its institutional environment can determine what potential stories become actual stories. The media prefers salient (Galtung and Ruge, 1965) or novel (Earl et al., 2004) stories to draw an audience. These biases present fewer concerns for cybersecurity because cyberattacks are novel and salient. A 2021 Pearson Institute and Associated Press poll found that 91% of Americans were concerned, and 68% very concerned, about cyberattacks (Associated Press, 2021). Since the public pays attention to cyberattacks, journalists should cover attacks and pursue leads on potential events.

Bias due to what the media covers in an information-rich environment might emphasize large-scale cyberattacks, or attacks revealing novel capabilities such as zero-day exploits (Makridis et al., 2024). Maschmeyer et al. (2020) argue that media incentives ‘create a biased sample of incidents at the high end of conflict spectrum and/or targeting rich actors who can afford to pay for commercial cyber defense.’ Yet, the media covers many unsophisticated attacks, such as distributed denial-of-service attacks and website defacement. Alternatively, this bias may emphasize attacks from states in intense competition. Lindsay (2013: 21) addresses media bias related to China, noting that ‘an increase in reporting may reflect an increase in the West’s appetite for cyber reporting rather than an increase in Chinese activity.’ The current article focuses on how (and whether) the media observes attacks.

Characteristics that make events difficult to observe, or introduce incentives to keep them secret, also introduce bias into events data (Dawkins, 2021). Even if the media perfectly relays available information, events may remain unavailable. One close parallel to cybersecurity data challenges is those in human rights research. Scholars want to understand what shapes human rights practices. To do so, they rely on data gleaned from media reporting. Changing monitoring standards and capacity or differences across individual coders bias human rights measurement (Fariss, 2014). Additionally, governments violating human rights often want to obscure their actions.

Clark and Sikkink (2013) argue that governments trying ‘to hide, downplay, or dismiss information’ constrain media reporting. Incentives to conceal and self-reporting can lead to agencies calling transparent states human rights violators (Eck and Fariss, 2018). Landman and Gohdes (2013: 82) discuss ‘visibility’ as a source of bias in civilian casualty data, where ‘an execution committed in broad daylight in front of a village under siege has a much higher probability of being reported by one or even more people than a disappearance, which was effected covertly.’ Researchers cannot rely on victims or perpetrators to accurately report events, and events’ relative visibility determines the media’s opportunities to find events. Researchers have a sample of violations biased towards those that are easier to discover.

Visibility is the likelihood that an event is available to the media – whether the media can pick up on events at all. Even with a perfect sample of all cybersecurity events in the public domain, there remain critical events not in the public domain. Few authors have attempted to address this challenge directly.

Observational methods draw directly from the universe of known cybersecurity cases. Case studies select cyber incidents to understand how cyber dynamics affect international relations. Quantitative observational studies increase external validity with incident-level event datasets from media reports. Authors use quantitative approaches to describe cyber conflict (Steiger et al., 2018; Valeriano and Maness, 2015), the impact of cybersecurity on battlefield operations (Kostyuk and Zhukov, 2019), conflict spillover (Maness and Valeriano, 2016), or show how states’ economies shape industrial espionage (Akoto, 2024).

Authors turn to non-observational methods to avoid the pitfalls of observational data, but rely on observational data to design studies and argue external validity. Survey experiments manipulate salient attack features in a laboratory setting but need real-world cases to show

Public reporting process for cybersecurity events

As with human rights abuses (Dietrich and Eck, 2020), authors recognize missing cyberattacks as a ‘known unknown.’ Valeriano and Jensen (2019) write, ‘Like other forms of covert action, for every cyber operation we learn about, there are surely countless others we do not know about, as well as failed access attempts.’ Analysts hypothesized that a population of unobserved but successful attacks in the fog of war explained the apparent scarcity of cyber operations in the Ukraine–Russia War (Marks, 2022). Madnick (2022) argues that ‘it’s almost impossible to know how many cyberattacks there really are, and what form they take,’ citing private sector research on under-reporting.

What are the stages between when one actor targets another using digital exploits and when the media reports the event to the public? How could technical–political factors influence this process, and how does this interfere with our ability to make valid inferences about cybersecurity?

Discovering cyberattacks

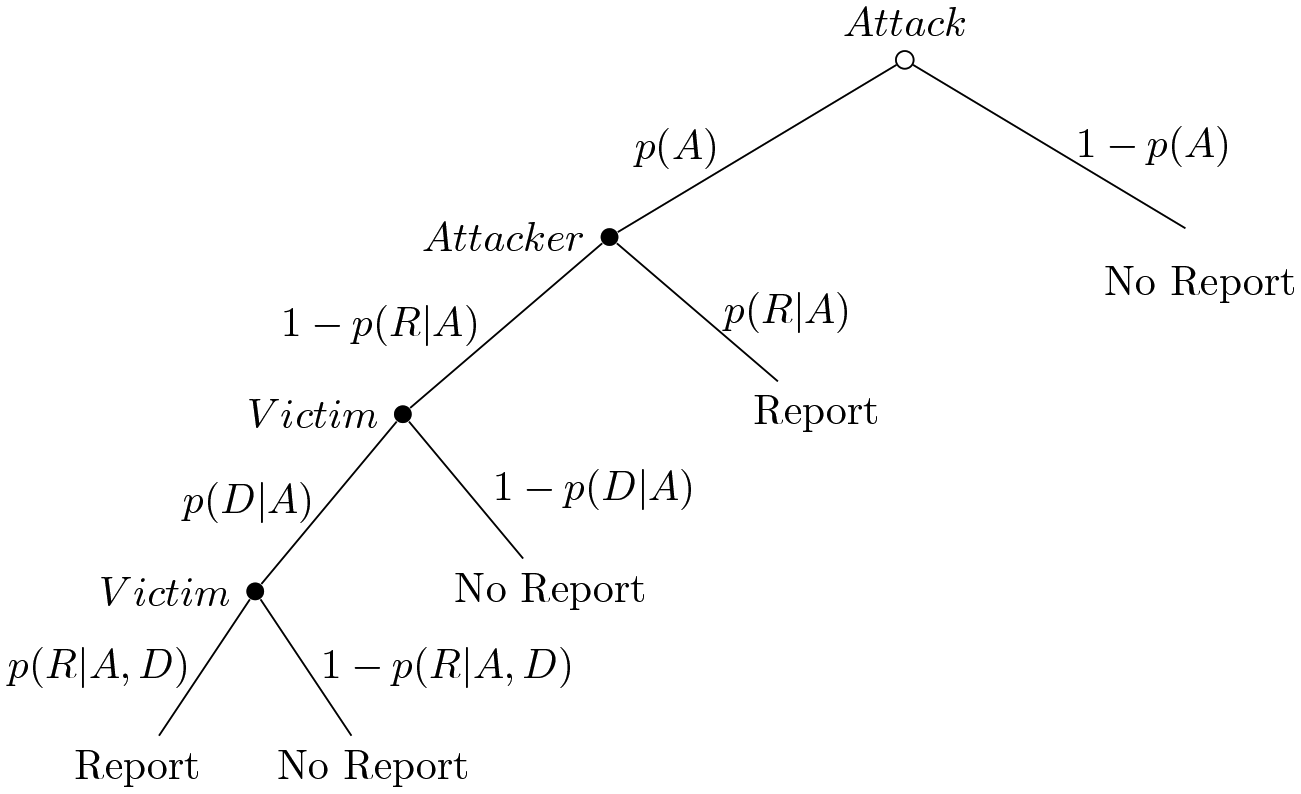

Cybersecurity disclosure and reporting is a dynamic process involving attackers (who choose methods and can inform the public) and victims (who discover attacks and can inform the public). Perpetrators are constantly attempting attacks and developing capabilities. Figure 1 presents the process that ends in media reports (and new observations). When attacks succeed, attackers can inform the public and take credit, or keep the attack secret. The defender must then discover the attack and either inform the public or keep it secret. The current literature either explicitly or implicitly assumes that attacks are always reported or that the population of non-reported events is the same as the population of reported events.
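The branching process described above can be sketched as a small calculation. This is only an illustrative reading of the stages in the text (success, attacker disclosure, victim discovery, victim disclosure); the function name, parameter names and all numeric values are hypothetical and are not taken from the article or its notation.

```python
def report_probability(p_success, p_attacker_claims,
                       p_victim_discovers, p_victim_discloses):
    """Probability that an attempted attack ends up as a public report.

    All four inputs are hypothetical stage probabilities, not estimates
    from the article.
    """
    # Route 1: the attacker informs the public and takes credit.
    via_attacker = p_attacker_claims
    # Route 2: the attacker stays silent, the victim discovers the attack
    # and then chooses to inform the public.
    via_victim = (1 - p_attacker_claims) * p_victim_discovers * p_victim_discloses
    # Only successful attacks can reach either route.
    return p_success * (via_attacker + via_victim)


# Two made-up attack profiles with the same success rate.
loud = report_probability(0.5, 0.8, 0.9, 0.5)    # attacker usually claims credit
quiet = report_probability(0.5, 0.0, 0.3, 0.2)   # attacker never claims credit
print(f"loud profile:  {loud:.3f}")
print(f"quiet profile: {quiet:.3f}")
```

Under these invented inputs the two profiles succeed equally often but differ sharply in how often they would be reported, which is the pattern the two common assumptions rule out.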

We only observe reported attacks (

There is an underlying success of attacks (

If an attacker is successful and has not informed the public, the victim must discover the exploit (

1. Assume no missingness

Some authors draw inferences by assuming that every successful attack is reported. As time continues, the only non-reporting is due to unsuccessful attacks (

If attackers do not always tell the public, then victims must always discover they were victimized (

Any author who does not recognize potential missing data when generalizing their results makes this assumption. However, some authors explicitly make this assumption. Valeriano and Maness (2014) assume that the probability of reporting converges to 1. The authors state, ‘Some operations will be hidden because they point to a specific weakness, but eventually the truth comes out’ (Valeriano and Maness, 2014: 351). Empirically, the authors only include attacks over five years old to account for reporting lag. The authors argue actors have few incentives to hide cyber operations. Steiger et al. (2018) take the same approach, only considering attacks older than one year. ‘Incidents may not become publicly known for a while’ the authors argue, but ‘it is difficult to keep every incident secret. It might be especially hard to conceal more disruptive attacks (e.g. blackouts)’ (Steiger et al., 2018: 81). By assuming away missingness, authors can analyse data as they are or draw generalizable findings from available cases.

2. Assume MCAR

Data can be MCAR – missing observations are a random subset of all observations (Little and Rubin, 2020). In this approach, every event has an underlying probability of being missing or observed. If the reported attacks (

For this, say that C represents any salient aspect of the attack – who the attacker was, the techniques they used, who the victim is. Authors can assume unreported attacks (

Jensen et al. (2017: 15) claim, ‘our research assumes that documented attacks are a representative sample of the population of attacks.’ Valeriano et al. (2018) argue ‘at some point, the iceberg flips over and we are able to get a representative sample of the dynamics of all cyber actions.’ Missing attacks are just as likely to be carried out by North Korea as Anonymous and just as likely to be a distributed denial of service (DDoS) attack against a public website as an attack against Iranian nuclear reactors. If not, researchers make a weaker assumption.

3. Assume missing at random (MAR)

If the probability of reporting is correlated with attack characteristics, this research is a case of intentional selection of observations (King et al., 1994), where the selection of cases is correlated with an explanatory variable. When measurement error is correlated with independent variables, any inference, quantitative or qualitative, is subject to bias (Wooldridge, 2013). The problem is no longer only that there are unreported attacks, but that unreported attacks are qualitatively different than observed attacks.
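A small simulation can illustrate why reporting that depends on attack characteristics biases the observed sample. It is a sketch under invented assumptions: the population share of state attackers and both reporting probabilities are hypothetical, chosen only to make the mechanism visible.

```python
import random


def simulate(n=20000, p_state=0.5, p_report_state=0.2,
             p_report_nonstate=0.9, seed=0):
    """Return (true share of state attacks, share among reported attacks).

    Every parameter is a hypothetical value for illustration.
    """
    rng = random.Random(seed)
    total_state = 0
    reported = 0
    reported_state = 0
    for _ in range(n):
        is_state = rng.random() < p_state
        # Reporting probability depends on who the attacker is.
        p_report = p_report_state if is_state else p_report_nonstate
        if is_state:
            total_state += 1
        if rng.random() < p_report:
            reported += 1
            if is_state:
                reported_state += 1
    return total_state / n, reported_state / reported


true_share, observed_share = simulate()
print(f"state share in population:    {true_share:.2f}")
print(f"state share in reported data: {observed_share:.2f}")
```

With these inputs the reported data understate the share of state attackers, even though every reported event is recorded accurately.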

Confidentiality, integrity, or availability triad

Bias: Technical features

How attacks affect target systems may influence whether the attacks are observed. Several authors have attempted to classify cyberattack intentions. Rid (2012) created a typology that separates attacks into sabotage, espionage and subversion. Valeriano and Maness (2018) characterized attacks according to coercive objectives, whether disruption, espionage, or degradation. Both these strategies require determining the political intention of an attack.

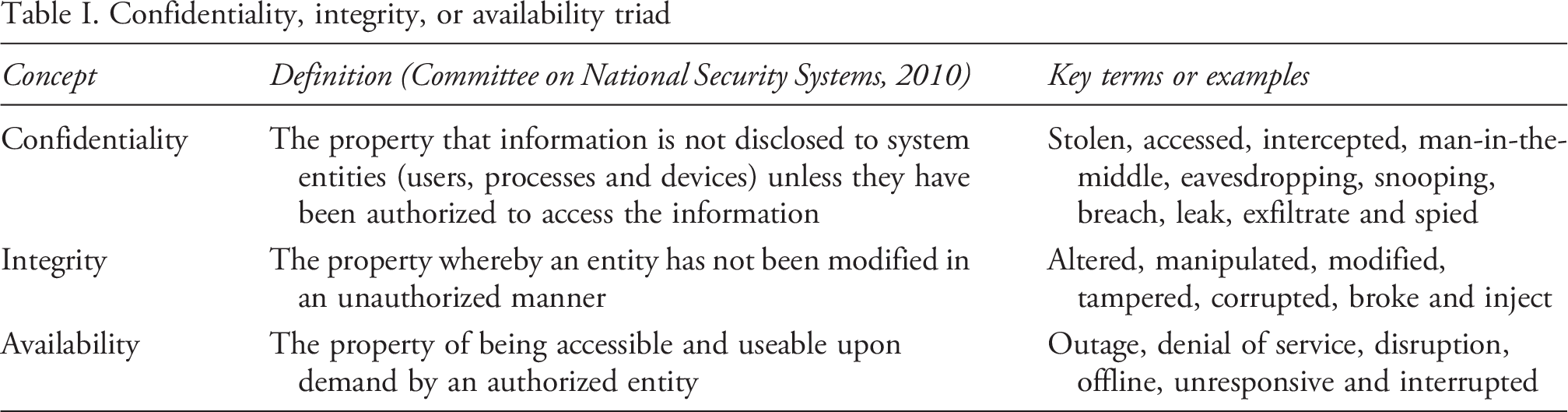

Instead, I borrow from computer security to characterize how attacks target systems – their technical intentions. This literature categorizes attacks against the confidentiality, integrity, or availability of information (Anderson, 2003; Samonas and Coss, 2014; Venter and Eloff, 2003). Confidentiality ensures that only authorized individuals can view information, integrity ensures that information is not altered, and availability ensures we can get information when we need it. Adopting this framework does not require judgments about the political intention of the attack. Instead, I assess whether the attack was designed to limit access to information, view information the attacker did not have access to, or modify information on another network. Table I contains the information security concept, the definition from the National Information Assurance Glossary, and terms associated with coverage of these attacks (Committee on National Security Systems, 2010).

Different attacks are associated with different security principles. A zero-day exploit of a security flaw via malware breaks confidentiality and can be used to affect integrity and availability. DDoS attacks, which overwhelm systems to temporarily deny access, target availability. However, DDoS attacks have no long-term impact on integrity. The 2013–2015 United States Office of Personnel Management (OPM) hacks affected sensitive United States government data confidentiality, but there is no evidence that the attack modified any information in government databases, so the attack did not affect integrity. The hackers could have denied access to OPM data, but this would alert cybersecurity professionals that they broke data confidentiality. While the operations leading up to Stuxnet involved gathering intelligence and breaking confidentiality, Stuxnet primarily targeted data integrity by modifying protocols to damage physical systems.

These characteristics may be correlated with the probability of discovery. For example, attackers have limited ability to keep attacks affecting availability secret. The attack, by design, keeps the victim from accessing information. In this case, successful and unreported attacks are likely cases where the victim did not report, rather than the victim not discovering. Additionally, attacks against availability may be easier for the public to discover naturally (such as a DDoS attack against a public website). At the same time, these attacks are easier to recover from since their effects wear away over time; generally, attacks against availability are less sophisticated than attacks against integrity and confidentiality.

Attackers may be less likely to disclose an attack affecting confidentiality or integrity if they want it to remain a viable tool in the future. Lindsay (2015a) notes that ‘cyber weapons depend on “zero-day” vulnerabilities that will likely be countered if publicly revealed.’ If attackers want to keep certain capabilities secret, then the probability of reporting by the attacker (

Bias: Attacker identity

Different attackers have different intentions, which will affect the likelihood that an attack is reported. They are also likely to have different levels of sophistication. For example, while groups of unaffiliated hackers may be sophisticated, they lack the resources of a well-funded national intelligence agency. Well-resourced actors can also hide evidence of attacks more effectively. Rid and Buchanan (2015: 32) note that ‘Sophisticated adversaries are likely to have elaborate operational security in place to minimise and obfuscate the forensic traces they leave behind.’

The attacker’s identity may also influence whether they are likely to claim credit for the attack or keep it a secret. For instance, revisionist powers may quickly claim victory when they successfully target their enemy, while status quo powers may be more likely to keep secrets when they successfully attack. There is wide variation in whether actors take credit for their successful cyberattacks. Poznansky and Perkoski (2018) find that non-state actors claim credit for attacks to demonstrate their capabilities, shape public opinion, and recruit other hackers to their ranks. However, claiming credit for cyberattacks risks escalating conflict, which explains why actors often hide their role in covert actions (Brown, 2014; Carson, 2016). If non-state actors seek credit for attacks, and state actors fear escalation, we should expect fewer missing cases of cyberattacks from non-state actors than from state actors. In this case, the probability of reporting by the attacker (

Methodology

There is no way to estimate a model that accounts for attacks that were never reported. Instead, I assume each reported attack had a latent probability of ever being reported. The longer an attack took to be reported, the lower that probability was. An attack reported within two days was more likely to be discovered than an attack reported after two years. ‘Missing attacks’ are simply attacks that have not been reported yet. The missing population resembles the known population of attacks that took more time to surface.

This approach to modelling observed attacks would give an inaccurate picture of unobserved attacks if the characteristics that lead to faster reporting were associated with a lower likelihood of reporting. This would occur, for example, if attacks by state actors were discovered more slowly than attacks by non-state actors but were more likely to be discovered at all. It would also occur if attacks affecting information availability were reported quickly conditional on being reported, but had a low underlying likelihood of being reported at all. The assumption holds if the factors affecting the reporting survival of observed attacks align with the factors affecting selection into the observed sample. Alternatively, all unknown cases may share a common feature, such as a specific vulnerability or source. To characterize the likely population of missing cases from the reporting process of non-missing cases, I assume a mixture of missing and non-missing cases with common features, even if features are more common in one set. Additionally, I assume that all attacks (observed or not) have a non-zero probability of reporting.

I estimate Cox proportional-hazards models for reporting, modelling the time until an event occurs (Box-Steffensmeier and Jones, 1997; Cox, 1972) – here, the time between when an attack happens and when it is publicly reported. Covariates in hazard models capture the change in the risk that the event occurs, given that it has not occurred previously. Following best practices, each model includes tests of the proportional-hazards assumption both globally and on an individual coefficient basis (Box-Steffensmeier et al., 2003; Grambsch and Therneau, 1994). I estimate a Cox proportional-hazards model since it requires no assumptions about the baseline hazard, which would be the baseline probability of reporting. There is no ‘true’ hazard function – only a relative hazard function. All models were fit using the

Data: Attacks against or by FE governments

This article builds on a cyberattack dataset from Kertzer et al. (2020), which includes ‘incidents in which the confidentiality, integrity, or availability of information held by the government, or held in trust for the government, was compromised, or when the government does the same to non-government targets.’ It builds on the Council on Foreign Relations Cyber Operations Tracker, adding additional events and individually validating each attack with precise information about when it was first reported. The Council on Foreign Relations records come from public-submitted cyber incidents, which researchers then independently validate. I add attacks from the Center for Strategic & International Studies’ ‘Significant Cyber Incidents’ (Center for Strategic and International Studies, 2006). In the Online appendix, I list all attacks and links to news coverage, press releases, or technical reports detailing each.

Our sample is attacks by or against FE governments since 2005. These countries share cybersecurity practices and intelligence, similar gross domestic product per capita, the same language, a common-law legal system, and are economically interdependent. Several events (such as Operation Glowing Symphony) involved multiple FE states. Our sample includes actors such as China and Russia when they are the perpetrators of attacks against FE states or the victims of cyberattacks by FE states. They would be excluded if they were attacked or attacking third parties. I limit our study to FE states primarily for feasibility – this article has a large enough sample of attacks to generalize to out-of-sample cases. Our results would lose generalizability if, for example, non-state hackers claim credit for attacks against FE states, but not other states. However, hacking groups such as Anonymous frequently claim credit for successful attacks against Russia. Generalizability would also suffer if, for example, DDoS attacks from China against United States government websites were discovered quickly but DDoS attacks from China against German government websites were discovered slowly. I do not believe this is the case.

I augment existing data sources with information about when the attack occurred. Since most attacks cannot be pinned down to a single day, I include the first and last day that the attack is reported to have potentially occurred. Specificity can vary between reports, and the dates refer to the earliest theoretical day when information is sparse. With the first and last day that each attack could have occurred, I assume that each day in between is equally likely to be the ‘real’ date the attack occurred. The date that the attack occurred is the median of the first and last day that the attack could have occurred. I then calculate the reporting lag as the difference between the reporting date and the expected occurred date.
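The lag calculation described above can be sketched in a few lines. The function name and the example dates are hypothetical, and rounding the midpoint down to a whole day is an assumption of this sketch rather than a rule stated in the article.

```python
from datetime import date, timedelta


def reporting_lag_days(first_possible, last_possible, first_reported):
    """Days between the assumed attack date and the first public report.

    The assumed attack date is the midpoint of the window in which the
    attack could have occurred (rounded down to a whole day, which is an
    assumption of this sketch).
    """
    window_days = (last_possible - first_possible).days
    midpoint = first_possible + timedelta(days=window_days // 2)
    return (first_reported - midpoint).days


# Hypothetical attack: occurred some time in January 2021, reported 1 March 2021.
lag = reporting_lag_days(date(2021, 1, 1), date(2021, 1, 31), date(2021, 3, 1))
print(lag)  # midpoint is 16 January 2021, so the lag is 44 days
```

When the attack date is known exactly, the first and last possible days coincide and the lag is simply the number of days until the report.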

I then include information about the technical methods of the attack. For each attack, I code whether the method impacted information availability, confidentiality, or integrity. I read media coverage, technical reports and disclosure statements, looking for the terms and concepts outlined in Table I.

Attacks can have multiple technical features. Sixty-two of the 90 attacks affected confidentiality, 14 of the 90 affected integrity, and 35 of the 90 affected availability to some degree. Seventy attacks affected only one security principle, 19 attacks affected two, and one attack affected all three. Operation Glowing Symphony, a counter-Islamic State of Iraq and Syria United States military operation, used exploits affecting all three security principles (National Security Archive, 2020). Coverage of Australian contributions to this campaign stated ‘For 12 hours they accessed accounts, locked Islamic State members out, stole the contents and deleted backups of the files.’ This affects each of the three security principles (Borys, 2019).
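Because one attack can be coded under several principles, the per-principle counts sum to more than the number of attacks. The short check below uses only the counts reported in the paragraph above; the variable names are illustrative.

```python
# Counts taken from the text: attacks per security principle (multi-label).
by_principle = {"confidentiality": 62, "integrity": 14, "availability": 35}
# Counts taken from the text: number of attacks by how many principles they affect.
by_breadth = {1: 70, 2: 19, 3: 1}

n_attacks = sum(by_breadth.values())
labels_from_principles = sum(by_principle.values())
labels_from_breadth = sum(k * count for k, count in by_breadth.items())

print(n_attacks)               # total number of attacks
print(labels_from_principles)  # total principle labels, counted by principle
print(labels_from_breadth)     # total principle labels, counted by breadth
```

The two label totals agree, so the per-principle and per-breadth tallies describe the same 90 attacks.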

I then code whether the suspected attacker was a state or non-state actor. Because I only consider attacks associated with FE states, these are cases where non-state actors attacked a state actor. Twenty-one of the 90 attacks in the dataset were perpetrated by non-state actors against an FE state. This includes the hacking group Anonymous, the pro-Russian hacking group Killnet, LulzSec, and the criminal group behind the LockBit ransomware attacks.

Online appendix Figures A1 and A2 contain the attacks against or by FE governments since 2005. The plot includes the median date that the attack potentially occurred in orange and the date that the attack was disclosed or reported in red. Each orange date has an implied confidence interval (CI) with an equal probability of the attack occurring between the minimum and maximum possible dates. Attacks with multiple disclosure dates when new information comes to light, such as Stuxnet, are only coded based on their initial disclosure.

Results

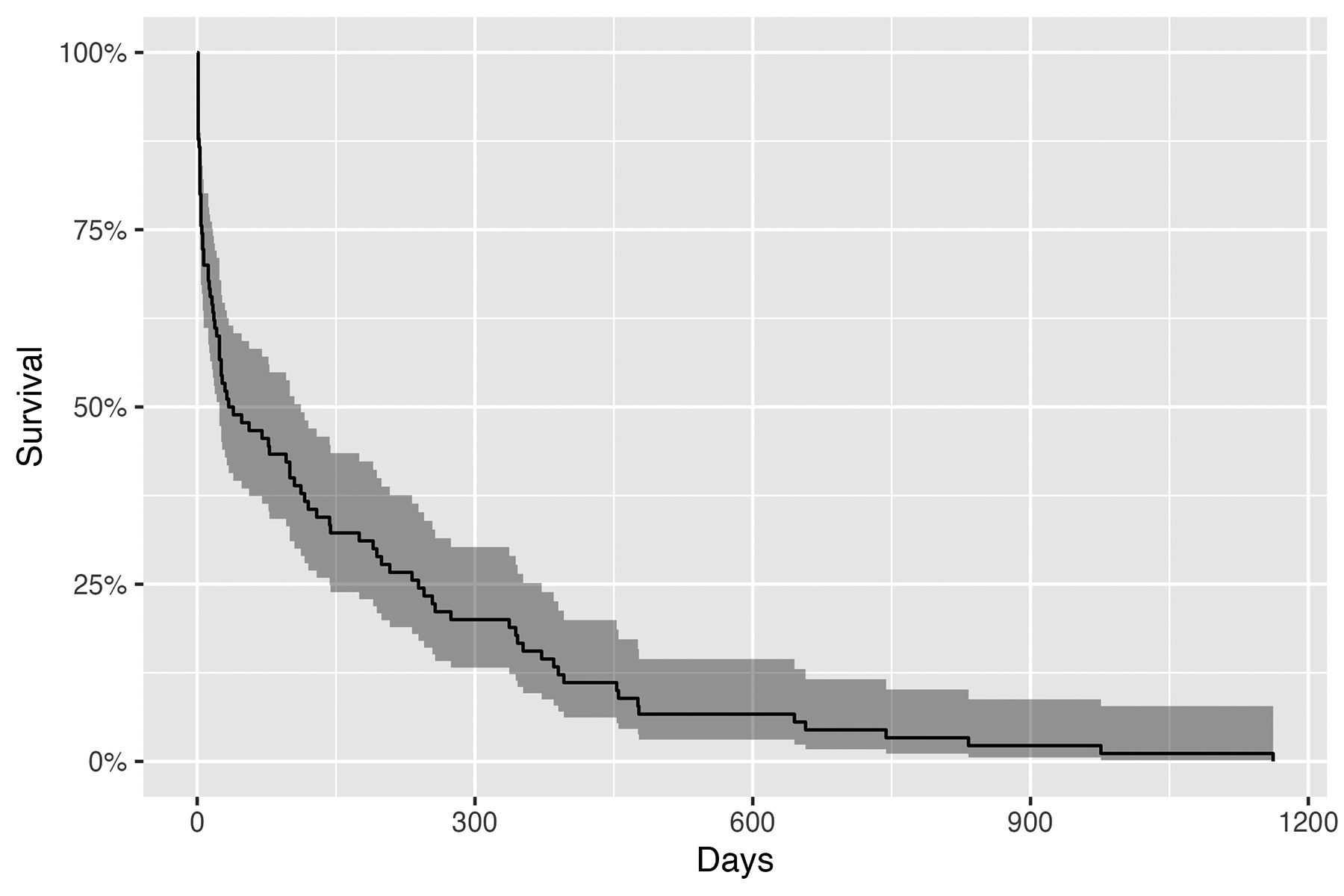

Figure 2 contains Kaplan–Meier non-parametric estimator survival curves. There was an average of 158.5 days between the median of when cyberattacks occurred and when they were publicly reported. In Figure 2, within 42 days the media reported approximately half (0.495 [95%CI 0.402, 0.609]) of publicly known cyberattacks. Interference with the United States National Aeronautics and Space Administration Terra AMN-1 satellite took the longest to surface. Reuters reported in October 2011 that the United States suspected that China interfered with satellites in 2007 and 2008 (Arthur, 2011; Associated Press, 2021).
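For readers unfamiliar with the estimator, a minimal Kaplan–Meier sketch follows. The reporting lags are hypothetical, not the article's data, and the function is a plain-Python illustration rather than the software used for the published figures.

```python
def kaplan_meier(durations, observed=None):
    """Return [(time, survival)] at each distinct event time.

    durations: days until report (or until censoring).
    observed:  True where the report was observed; defaults to all True.
    """
    if observed is None:
        observed = [True] * len(durations)
    event_times = sorted({t for t, e in zip(durations, observed) if e})
    survival = 1.0
    curve = []
    for t in event_times:
        # Attacks still unreported just before time t.
        at_risk = sum(1 for d in durations if d >= t)
        # Attacks reported exactly at time t.
        events = sum(1 for d, e in zip(durations, observed) if d == t and e)
        survival *= 1 - events / at_risk
        curve.append((t, survival))
    return curve


# Hypothetical reporting lags in days (not taken from the dataset).
lags = [1, 3, 3, 10, 42, 90, 400, 1200]
for day, share_unreported in kaplan_meier(lags):
    print(f"day {day:>4}: {share_unreported:.3f} still unreported")
```

When every attack in the sample is eventually reported, as with a dataset built from public reports, the curve is simply the share of attacks not yet reported by each day.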

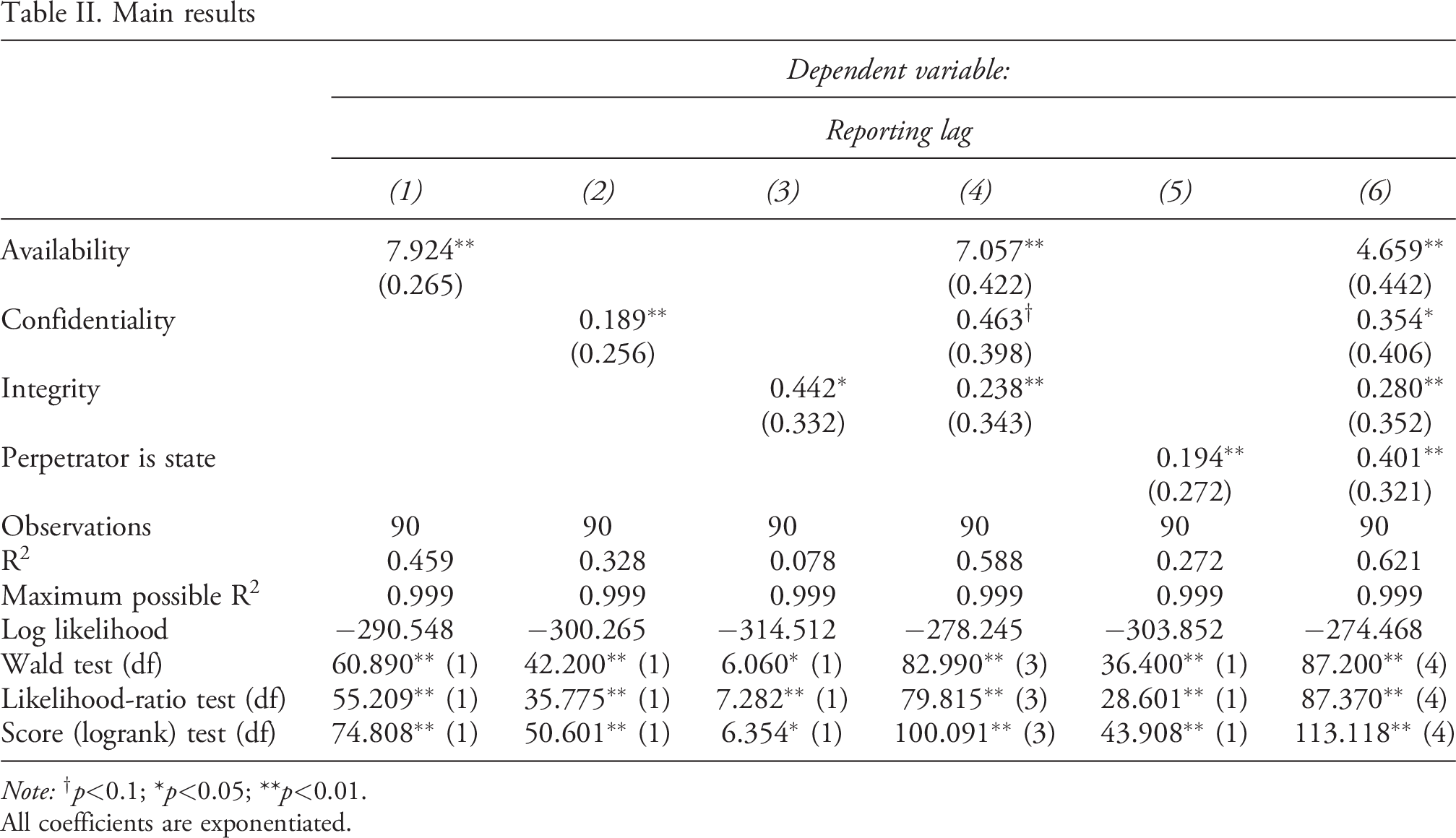

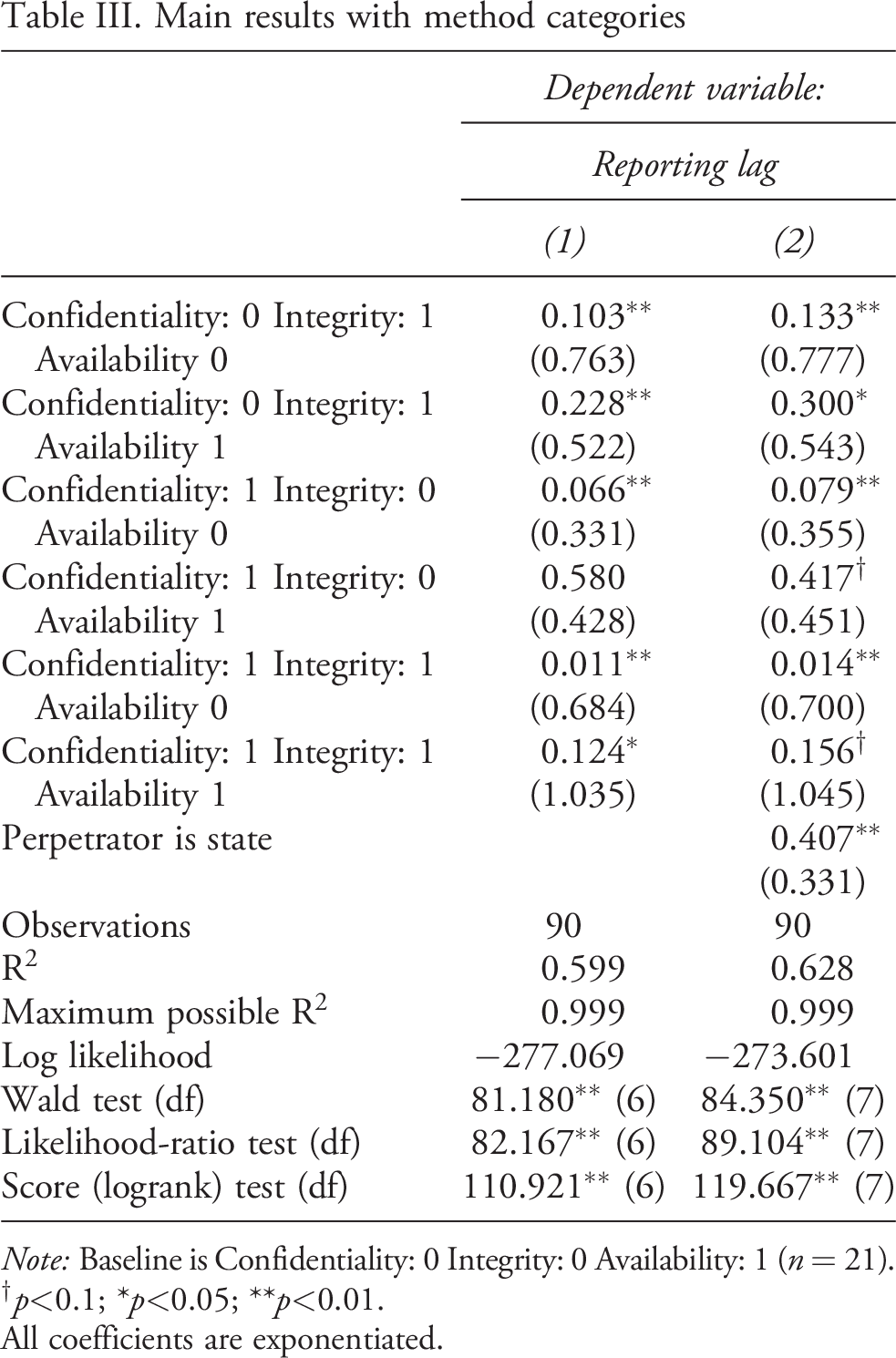

The results in Table II confirm our intuition – reporting delays systematically vary with attack characteristics. Table II presents exponentiated (

These results confirm important parts of our theory. First, the time between when cyberattacks occur and when the public learns about them is correlated with

Figure 2. Pooled survival of cyberattack reporting

Table II. Main results

Note: All coefficients are exponentiated.

Attacks against information availability surface fastest. Compared to an attack that does not affect availability, an attack affecting availability has a 692.4% higher hazard (reporting is significantly faster). Controlling for the other technical features of the attack, an attack affecting availability has a 605.7% higher hazard. I control for whether the perpetrator is a state because non-state actors may carry out attacks that are reported faster and carry out more attacks that affect availability. Controlling for technical factors and whether the perpetrator is a state actor, attacks against availability have a 365.7% greater hazard.
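The percentages above can be translated back into exponentiated coefficients. The sketch below assumes each percentage is read as (hazard ratio − 1) × 100, which is the usual convention but is my assumption about how the figures were derived; the function name is illustrative.

```python
import math

def hazard_ratio_from_pct_increase(pct):
    """Convert a percentage increase in the hazard into a hazard ratio."""
    return 1 + pct / 100

# Percentage increases reported in the text for attacks affecting availability.
for pct in (692.4, 605.7, 365.7):
    hr = hazard_ratio_from_pct_increase(pct)
    print(f"{pct}% higher hazard -> HR = {hr:.3f}, log-HR = {math.log(hr):.3f}")
```

Under that reading, a 692.4% higher hazard corresponds to a hazard ratio of about 7.9: at any given moment, an attack affecting availability that is still unreported is roughly eight times as likely to be reported as one that does not affect availability.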

Attacks against availability differ from the other two in important ways. First, victims immediately discover them since the attacks intend to deny the victim access to information they need. There is no period between when the attack occurred and when the victim discovered it, which is often the case in other attacks. Second, these attacks are often public, since they can seek to deny access to information normally available to everyone. For instance, DDoS attacks against public-facing websites deny access to many users. These attacks are also less technical and do not have a permanent effect – when access to information is restored systems suffer no long-lasting effects. Attackers can deny access to a website but not steal information held behind credentials or destroy information users later need.

In Models 2, 4 and 6 of Table II, attacks against confidentiality have a significantly smaller hazard rate than attacks that do not affect confidentiality. Furthermore, in Models 3, 4 and 6 of Table II, attacks against integrity have a significantly smaller hazard rate than attacks that do not affect integrity. Attacks that affect confidentiality and integrity are not necessarily more or less sophisticated than one another – the skill required to carry out the attack depends on both the attacker’s and the defender’s abilities. Since attacks can affect multiple security principles, but every attack must affect at least one, I run an additional analysis that enters the combinations of security principles as dummy variables.
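One way to set up such a categorical specification is sketched below. It assumes each attack is coded as a (confidentiality, integrity, availability) triple of 0/1 indicators and that availability-only attacks serve as the reference category; the label format and function names are hypothetical.

```python
BASELINE = (0, 0, 1)  # availability only, used here as the reference category

def label(combo):
    """Readable label for a (confidentiality, integrity, availability) triple."""
    c, i, a = combo
    if (c, i, a) == (0, 0, 0):
        raise ValueError("every attack must affect at least one principle")
    return f"C{c}_I{i}_A{a}"

def method_dummies(attacks):
    """One indicator column per observed non-baseline combination."""
    levels = sorted({combo for combo in attacks if combo != BASELINE})
    return [{label(lv): int(combo == lv) for lv in levels} for combo in attacks]

# Hypothetical codings for three attacks, for illustration only.
toy_attacks = [(0, 0, 1), (1, 0, 0), (1, 1, 1)]
toy_rows = method_dummies(toy_attacks)
for combo, row in zip(toy_attacks, toy_rows):
    print(label(combo), row)
```

Baseline attacks receive zeros in every column, so each coefficient in a model fitted on these columns is read relative to availability-only attacks.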

Main results with method categories

Note: Baseline is Confidentiality: 0 Integrity: 0 Availability: 1 (n = 21).

All coefficients are exponentiated.

Across the results, attacks by state actors have a significantly lower hazard rate. In Models 5 and 6 of Table II, attacks perpetrated by states have hazard rates 0.194 and 0.401 times that of attacks by non-state actors, respectively. Controlling for attack characteristics as categorical in Table III, attacks by state actors have a hazard rate 0.407 times that of attacks by non-state actors. Attacks by state actors take longer to be reported than attacks by non-state actors. There may be several reasons for this. Attacks by non-state actors may be less sophisticated, since these groups do not have access to the same resources as a state actor. This may make it easier for victims to discover they were attacked. Furthermore, non-state actors may want to claim credit for attacks, while their state counterparts may want to manage the risk of escalation with victims.
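Hazard ratios below one can be hard to read at a glance. The sketch below restates the three reported ratios as a percentage reduction in the hazard and as the inverse ratio (non-state relative to state); the helper name is illustrative and the arithmetic is only a re-expression of the reported values.

```python
def describe_hazard_ratio(hr):
    """Re-express a hazard ratio below one in two equivalent ways."""
    return {
        "pct_lower_hazard": round((1 - hr) * 100, 1),
        "inverse_ratio": round(1 / hr, 2),
    }

# State-actor hazard ratios reported in the text.
reported = {
    "Table II, Model 5": 0.194,
    "Table II, Model 6": 0.401,
    "Table III": 0.407,
}
summary = {name: describe_hazard_ratio(hr) for name, hr in reported.items()}
for name, stats in summary.items():
    print(name, stats)
```

For example, a ratio of 0.194 means the reporting hazard for state attacks is about 81% lower, or equivalently that non-state attacks are reported at roughly five times the rate.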

I model an uncertain process with the potential for measurement error. Because of this, I conduct a series of sensitivity analyses in the Online appendix. I exclude each event in turn (with replacement) and re-estimate the coefficients for each analysis. Events first attributed to cyber means are sometimes later attributed to human error. For example, the first reports on the Oldsmar water supply indicated a cyberattack, but reporting two years later showed the disruption was due to human error (Evans, 2023). However, removing any single event has no impact on the results. Next, I flip the method coding for each event sequentially – miscoding any one event in the analysis has no impact on the overall results.
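The leave-one-out logic can be illustrated with a deliberately simple stand-in for the full model. The sketch below swaps in a constant-hazard (exponential) estimate, events divided by total days at risk, in place of the survival models reported in the tables, and it uses invented delays; it shows the procedure only, not the article's estimates.

```python
def exponential_hazard_ratio(records):
    """State vs non-state hazard ratio under a constant-hazard assumption.

    records: list of (delay_days, is_state) pairs, all eventually reported.
    """
    def rate(is_state):
        delays = [d for d, s in records if s == is_state]
        return len(delays) / sum(delays)  # events per day at risk
    return rate(True) / rate(False)

def leave_one_out(records):
    """Re-estimate the ratio with each event dropped in turn."""
    return [
        exponential_hazard_ratio(records[:i] + records[i + 1:])
        for i in range(len(records))
    ]

# Hypothetical reporting delays; these are not the article's data.
toy_records = [(100, True), (300, True), (500, True), (700, True),
               (5, False), (10, False), (20, False), (45, False)]
full_estimate = exponential_hazard_ratio(toy_records)
loo_estimates = leave_one_out(toy_records)
print(round(full_estimate, 3), [round(hr, 3) for hr in loo_estimates])
```

If every leave-one-out estimate stays on the same side of one as the full-sample estimate, no single event drives the direction of the result. The same loop could flip one event's coding instead of dropping it.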

If there are undiscovered cyberattacks, this analysis has important implications for our understanding of cyber conflict. We discover attacks against availability faster than attacks against confidentiality or integrity. We discover attacks by non-state actors much more quickly than attacks by state actors. Some attacks are more visible than others, which provides new context for whether cyberspace is a restrained domain, whether the United States eschewed ‘persistent engagement’ for the past two decades, and whether we can sufficiently capture new intelligence contests.

Implications for the field

We are missing much of the information we would need to fully assess how state and non-state actors use cyberspace to ensure their security or achieve their aims. Many authors perceive cyberspace as restrained, and the apparent lack of large-scale cyberattacks both within and outside war prompts authors to ask ‘where are all the attacks?’ (Gartzke and Lindsay, 2015: 232). Cyber restraint is the prevailing wisdom and a foundational assumption (Farley, 2019; Stauffacher and Kavanagh, 2013). Nye (2018) accepts the idea of cyber restraint, searching for normative constraints to explain it. Gartzke and Lindsay (2015: 343) model ‘the distribution of cyber conflict to date, with many relatively minor criminal and espionage attacks but few larger attacks that matter militarily or diplomatically.’

Lindsay (2013: 374) states ‘we observe a high frequency of low intensity cyberattacks resulting in computer crime, espionage, and hacktivism, but a remarkably low frequency of high intensity cyber warfare.’ High-visibility kinetic cyber warfare may be easy to observe, but the impact of espionage or attacks affecting data integrity is much harder to discern. Valeriano and Maness (2014: 357) investigate this and find ‘very few states actually fight cyber battles’, and address why ‘the incidents and disputes limited to mostly defacements or denial of service when it seems that cyber capabilities would inflict more damage to their adversaries?’ Other articles ‘demonstrate evidence of a restrained domain’ (Valeriano and Jensen, 2019) or show ‘while cybertools may appear to be an easily accessible tool with high disruptive potential, actors conducting cyberattacks show restraint in their use’ (Baezner, 2018). Each author recognizes that some cyberattacks are missing, but the missing population likely contains more of the attacks that they argue occur less frequently.

With limited information, authors or the public may draw conclusions using individual biases (Jardine et al., 2024). For example, the relative abundance of attacks against the United States might be interpreted as evidence that United States’ capabilities are limited. The media frames this with headlines that ‘US is woefully unprepared for cyber-warfare’ or ‘China’s head start in cyberwarfare leaves the US and others playing catch-up’ (Ratnam and Donnelly, 2019; Wagner, 2019). As Lindsay (2015a) discusses, the Snowden leaks demonstrated vast United States cybersecurity capabilities and access to systems during a period when public rhetoric focused on how the United States was slipping.

Visibility bias could convince researchers that actors restrain themselves when they instead rely on hard-to-observe methods. If weaker or less sophisticated actors rely on methods affecting information availability, and those attacks are the easiest to observe, we see more of those attacks. Other actors might make their actions difficult to observe, so we miss a larger proportion of their attacks. This dynamic has fundamentally shaped contemporary academic and policy debates.

‘Persistent engagement’ is an increasingly influential concept – states are (and should be) constantly targeting one another in cyberspace outside of larger conflicts (Fischerkeller and Harknett, 2018; Nakasone, 2019; Smeets, 2020). Advocates believe this represents a shift, where United States ‘restraint coincided with adversarial adventurism and led to strategic losses […] adversaries had recognized that cyberspace was a new competitive environment where strategic gains could be achieved through continuous activity’ (Fischerkeller et al., 2022). Authors argue that the cyber strategic environment has changed. However, how can we know this is true if the United States is the actor most capable of making its actions unobservable to the public?

The absence of public reports does not necessarily indicate the absence of engagement. If United States’ adversaries resorted to methods affecting information availability – disrupting websites, shutting down facilities, DDoS – we are more likely to know about every case. If United States’ methods heavily target confidentiality and avoid availability, it would be harder to observe their actions, and it would take longer for cases to surface. More accurately, a recent shift may signal increased transparency, rather than a change in the underlying strategy. Alternatively, it may signal a change to easier-to-observe methods rather than a more inherently offensive posture or intense set of engagements.

Governments often keep cyberattacks secret until victims report them. However, this is not always the case (Poznansky and Perkoski, 2018). Officials informed the Washington Post in 2019 that the United States military targeted data availability at the Russian Internet Research Agency during the 2018 midterms, and officials told Reuters that the United States carried out cyber operations against Iran after attacks against Saudi Arabian oil facilities (Indrees and Stewart, 2019; Nakashima, 2019). State actors use secrecy to avoid or manage escalation (Carson, 2016, 2018; Kurizaki, 2007), so they may voluntarily make secret operations visible to signal capabilities or intentions, or obscure them for the reverse. The choice of methods shapes whether attacks are revealed. Furthermore, authors should be careful when emphasizing non-state actors’ role in cyberspace. Non-state actors make their actions visible to researchers and the public. If we observed the true population of cyberattacks, we would likely discover fewer actions from non-state actors compared to state actors.

Authors now argue that cybersecurity is an ‘intelligence contest’ where states use cyberspace to steal information rather than compel or coerce one another (Chesney and Smeets, 2023; Lindsay, 2017; Rovner, 2020). Intelligence contest attacks would primarily target confidentiality. Attacks against information confidentiality take a long time to surface in comparison to those affecting availability. Furthermore, states are the primary actors engaged in intelligence contests. It takes longer for attacks by state actors to surface than for attacks by non-state actors. If there are missing attacks, they are more likely to be the kind of attacks that ‘intelligence contest’ advocates describe. Intelligence contest advocates will likely undercount total cases if they rely on the observed record.

Researchers are unlikely to overlook blackouts or infrastructure damage caused by cyberattacks in the United States. But researchers cannot assume that influential and coercive cases are rare without strong assumptions regarding visibility and discovery. Many cybersecurity events are defacements or denial-of-service attacks. However, these attacks are the easiest to observe, and the unknown population of cyberattacks likely contains few of them. Attacks such as Stuxnet or the OPM hack may be rare, but attacks targeting data integrity and confidentiality take the most time to surface. They are difficult to discover because the most sophisticated actors design them, they use valuable exploits that actors want to protect, and they target vital information that victims may want to keep secret.

Conclusion

This article details the technical and political process of discovering cybersecurity events and demonstrates two sources of bias in observational cybersecurity data. Actors have incentives to keep attacks secret, and attacks’ technical characteristics can make them more or less visible. While cyber restraint is the conventional wisdom, the events some point to as the most common are also the easiest to discover and report, or are carried out by actors with fewer incentives to keep attacks secret. Persistent engagement is easier or harder to observe depending on the tactics that states choose, regardless of their level of engagement in cyberspace. Intelligence contests describe attacks that employ hard-to-observe methods.

This article offers an approach to address observational challenges in other subjects. For instance, researchers studying human rights abuses using newswire data could gather additional information about the length of time between the event and the report. Existing studies evaluate bias by how different media sources cover or select different events (Baum and Zhukov, 2015; Dietrich and Eck, 2020; Fariss, 2014; Makridis et al., 2024). Researchers have fewer ways to analyse event ‘visibility’ (Landman and Gohdes, 2013).

There are important observational questions that cybersecurity researchers can directly answer without considering bias. How do public cyberattack reports affect domestic politics or international cooperation? Do states attempt to save face after being called out as the victim or perpetrator of a cyberattack? How do campaigns that explicitly target the public shape politics (Shandler et al., 2023; Vicic and Gartzke, 2024)? But, absent strong assumptions about the probability of reporting, researchers must consider bias in their sample and how this affects their results.

Questions about whether cyberattacks compel adversaries, which actors show restraint or persistently engage, or whether cyberattacks change the balance of power can only be answered using a population of cases likely biased towards nuisance attacks and non-state actors. Academic research on a topic that researchers do not directly observe is difficult, and attack visibility introduces bias by making it easier to observe some attacks and harder to observe others. How could missing and unobserved cases influence your beliefs about cybersecurity?

Acknowledgements

I thank Austin Carson, Michael Colaresi, Rachel Hulvey, Iain Johnston, Tyler Jost and the three anonymous reviewers for helpful comments. Our editors Daphna Canetti and Ryan Shandler did a fantastic job conceiving and organizing this special issue. I also thank participants at the 2020 American Political Science Association Annual Conference for feedback. Connor Brown provided invaluable research assistance for data collection.

Funding

The author disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This project received funding from the Weatherhead Center for International Affairs at Harvard University.