Abstract

Service robots on organizational frontlines, notably in health and elderly care settings, promise to tackle staff shortages. In such service contexts, compliance is crucial for consumer well-being, but compliance with robot advice remains problematically low. This research explores how the source of robot advice affects compliance in human–robot interactions. In six studies, including four field studies with real human–robot interactions, the authors demonstrate that consumers are more likely to comply with advice given by a robot service provider when the source of advice is a human rather than the robot itself. This is because a human source of robot advice increases the feeling of accountability, or the expectation that one might need to justify one's actions to others, which is more difficult to achieve with only robot social presence. In turn, this fosters advice adherence, which also persists over time across repeated interactions. However, when the robot embeds social cues in the advice, the difference in accountability and compliance between robot-only and robot advice with a human source attenuates. These insights hold enormous promise, especially for health care practitioners, institutions, and consumers for whom increased compliance can lead to better health outcomes, reduced hospital readmissions, improved recovery, and elevated well-being.

The rise of service robots in organizational frontlines largely reflects the personnel challenges facing many service industries, such that organizations have been compelled to search for alternative sources of labor, including service robots (Becker, Efendić, and Odekerken-Schröder 2022). Robot receptionists, delivery providers, and concierge assistants already are replacing human frontline service provision (De Keyser et al. 2019; Jiang and Wen 2020). In health care contexts, rising demand for services among the aging populations of many countries has created especially pressing staffing issues. The World Health Organization (2023) projects a global shortage of 10 million health care workers by 2030; in the United States alone, the shortage is expected to expand to more than 500,000 nurses and 139,000 physicians (Zhang et al. 2018, 2020). In turn, the market for robotic nurses is anticipated to reach $2.8 billion by 2031 (Falcone 2025).

This market expansion reflects the rapid development of technology, which allows health care service providers to augment or substitute frontline employees with robots that function as “information technology in a physical embodiment, providing customized services by performing physical as well as nonphysical tasks with a high degree of autonomy” (Jörling, Böhm, and Paluch 2019, p. 405). For example, the robot Moxi supports nurses in patient contact, and Zora conducts physical activities with nursing home residents (Falcone 2025; Kort and Huisman 2017).

Compared with body-less artificial intelligence (AI), these robots offer some advantages: Consumers tend to comply more with their requests (Bainbridge et al. 2011) and form more personal bonds with physical, embodied robots than with body-less AI (Wainer et al. 2006). Yet they still comply less with instructions given by a robot than those given by a human, or simply ignore robots altogether (Čaić et al. 2019; Schneider et al. 2022). Such poor compliance represents a serious risk, considering that in many service industries, consumer compliance, defined as the extent to which an individual conforms to recommendations or advice (Lee et al. 2017), is critical (Habel, Alavi, and Pick 2017): In airline travel, seatbelts need to be fastened; in education, homework needs to be completed; and in health care, treatment plans must be followed. Patient noncompliance with health care recommendations creates annual costs in the hundreds of billions of dollars (USD) and poor health outcomes, such that more than 125,000 deaths per year can be attributed to noncompliance (Cutler and Everett 2010; Watanabe, McInnis, and Hirsch 2018).

Some researchers suggest that robot attributes, such as type of embodiment (Wainer et al. 2006) and nonverbal behavior (Chidambaram, Chiang, and Mutlu 2012), as well as robot messaging (Kim and Duhachek 2020; Schneider et al. 2022) can affect the extent to which consumers comply with robots. While these studies show how compliance with robots is influenced, the underlying mechanisms driving compliance remain underexplored. Building on social presence theory (Short, Williams, and Christie 1976), we propose that messages that make consumers feel more accountable can increase human compliance with robot advice.

Rooted primarily in social and organizational psychology, accountability is broadly defined as the implicit or explicit expectation that one may be called on to justify one's beliefs, feelings, and actions to others (Lerner and Tetlock 1999), which can influence decision-making and norm adherence (Schlenker and Weigold 1989; Tetlock 1985). Notably, in human-to-human interactions, the social presence of the human service provider naturally evokes accountability (Evans 2021; Oussedik et al. 2017). When consumers experience human social presence (even the anticipation of a human social presence, such as a weekly meeting with a diet coach; Oussedik et al. 2017), they experience a heightened expectation to justify their actions, encouraging them to adhere to the advice provided (Evans 2021; Mohr, Cuijpers, and Lehman 2011).

While human social presence naturally evokes a sense of accountability and a need for compliance, this may not hold true for robots. Unlike humans, robots are not perceived as full-fledged social entities and have lower social presence (De Graaf 2016; Kidd 2003). Although technological agents can show some social cues—such as when communicating, instructing, and taking turns in interactions—these cues are subtle (Reeves and Nass 1996) and, as such, weaker than those innately evoked by humans. This limitation makes it less likely that robots can generate the same level of accountability and advice compliance as humans do.

We therefore highlight a novel way in which accountability and adherence with robot advice can be increased: by invoking human social presence as a source of the advice. When a robot gives advice on behalf of a human service provider, consumers may comply more readily because they recognize the social consequences of noncompliance (Lerner and Tetlock 1999; Oussedik et al. 2017). Thus, the human social presence invoked by the robot heightens the sense of accountability, leading to greater compliance compared with when the robot advises on its own. Moreover, we show that this heightened advice adherence persists over time, implying that a robot invoking a human social presence can encourage continued compliance across repeated interactions.

Different from literature that highlights the importance of authority for persuasive advertising (Kaptein and Duplinsky 2013), we expect authority differences to be less critical for compliance in personal face-to-face encounters (Okdie et al. 2013). Here, the sense of being accountable to someone is more important—and humans and robots crucially differ in the extent to which they can evoke a sense of social presence and accountability. In human-to-human face-to-face encounters, we expect that the richness of human social presence suffices to increase the sense of accountability, reducing the need for hierarchical authority (Okdie et al. 2013). This is illustrated by literature in the health domain that shows similar compliance levels with nurses and doctors (Barnoy, Levy, and Bar-Tal 2010; Laurant et al. 2018). For robots, hierarchical authority actually backfires and makes others' compliance less likely than when the robot is in a peer role (Saunderson and Nejat 2021), implying that other strategies are needed to increase human compliance with robot advice.

However, relying on a human service provider as a source of social presence is not always feasible, as there may be situations in service provision where there is no human to whom a consumer feels accountable. We therefore highlight a boundary condition in which the compliance gap between robot-only advice and robot advice with a human source is attenuated. Guided by social presence theory (Short, Williams, and Christie 1976), we suggest that the robot can enhance its social presence by embedding more social cues in the advice (Short, Williams, and Christie 1976; Xu, Chen, and You 2023), thereby fostering a heightened sense of accountability to the robot. Prior research highlights both positive and negative effects of robots using social cues on social, affective, and behavioral responses (e.g., Ghazali et al. 2018; Liew and Tan 2021), and in service settings these cues can sometimes backfire. For example, flattering comments by robot sales clerks have been shown to provoke skepticism (Lee and Yi 2025). To avoid these potential dark-side effects, we propose explicating the goal of the robot advice, namely to enhance consumer well-being, as a social cue. By doing so, a robot shows that it can go beyond simple task execution in an if-then fashion and is able to meaningfully act (Satyanarayan and Jones 2024), which increases its social presence, even without relying on its connection to a human service provider. Hence, embedding social cues makes robot-only advice more compelling, fostering a heightened sense of accountability to the robot and increasing compliance with its advice.

This investigation makes five main contributions to extant literature. First, in light of the expected rise of service robots in organizational frontlines, this article shows how human compliance with robot advice can be increased, a crucial question for companies delivering services where adherence to advice is key to improving consumer well-being. Second, consistent with social presence theory, we identify the underlying mechanism driving this effect and show that generating a sense of accountability by connecting to a human social presence affects the extent to which consumers take a robot's advice to heart. Thereby, we not only add to the marketing literature, which has not examined robot accountability, but also address a gap in existing health behavior theories, which have largely overlooked the role of accountability in compliance (Oussedik et al. 2017). Third, we demonstrate that this heightened sense of accountability and higher compliance with a robot giving advice on behalf of a human service provider does not wear off over time, but rather persists. Fourth, we show that a robot embedding social cues in its advice increases its social presence, which in turn strengthens the consumer's sense of accountability and promotes greater adherence to the advice. Fifth, four field experiments, conducted in managerially relevant contexts, including an elderly care institution and a university, offer meaningful and practical insights for health care practitioners, institutions, and consumers. More effective compliance with service robots may lead to better health outcomes, reduced hospital readmissions, overall enhanced patient recovery, and elevated well-being, thereby reducing health care costs for providers and firms and benefiting society.

Theoretical Framework and Hypothesis Development

Compliance With Robots

People often discount others’ advice and rely more heavily on their own judgment, even when the advice is accurate and well-intentioned (Yaniv and Kleinberger 2000). This tendency extends to health care, where patients may resist following beneficial and benevolent advice from medical professionals (Van Poppel 2019). This reluctance is even more evident when the advice comes from robots or artificial agents. Consumers often refuse to comply with robots’ requests (Haring et al. 2019) or neglect their advice, because they trivialize robots (Schneider et al. 2022) or consider them unable to understand human goals, desires (Kim and Duhachek 2020), and uniqueness (Longoni, Bonezzi, and Morewedge 2019). This reluctance to comply poses a critical challenge in service contexts, particularly health care, where following advice is of utmost importance for health and well-being.

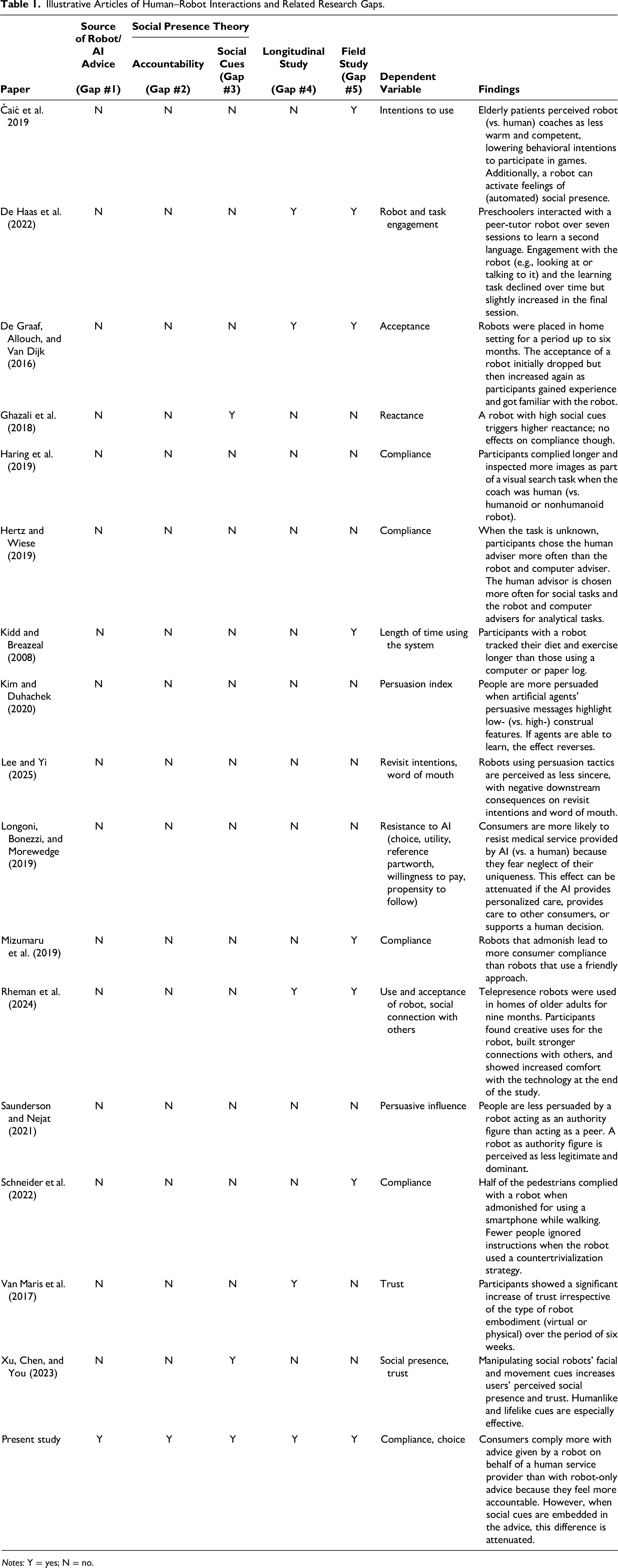

As service robots become more prevalent in frontline roles, and in services oriented to consumer well-being (De Keyser et al. 2019), their ability to influence consumer behavior is critical to ensure positive outcomes for consumers. Most studies have compared robot advice with human advice, with findings showing a preference for human advisors because humans are seen as more adaptable to changing circumstances and different task types (e.g., Hertz and Wiese 2019). While some interventions aim to make robot advice more persuasive, for example by positioning the robot as a peer instead of an authority figure (Saunderson and Nejat 2021) or employing a countertrivialization strategy (Schneider et al. 2022), such approaches remain limited in scope. Critically, whether a robot can increase compliance by explicitly connecting its advice to a human service provider, an easily actionable intervention, remains unexplored (see Table 1 for an overview).

Table 1. Illustrative Articles of Human–Robot Interactions and Related Research Gaps.

Notes: Y = yes; N = no.

Beyond that, two methodological limitations constrain the current literature. First, most studies focus on one-time interactions, offering little insight into how compliance unfolds over time. Yet human–robot relationships, especially in health care and domestic contexts, are inherently longitudinal. Some studies have shown increasing trust or comfort with robots over weeks of exposure (De Graaf, Allouch, and Van Dijk 2016; Van Maris et al. 2017), while others document decreasing engagement over time (De Haas et al. 2022). Rheman et al. (2024), for example, show that the use of telepresence robots in older adults’ homes has led to several clients feeling more socially connected with others, while some also report higher comfort with technology due to their use of the robot. These mixed findings call for deeper exploration into how compliance evolves in sustained interactions. Second, few studies feature field experiments or actual compliance with real robots. Two studies have installed robots in shopping malls that ask pedestrians to stop using their phones while walking (Mizumaru et al. 2019; Schneider et al. 2022). Both studies report low compliance, but only Schneider et al. (2022) consider how compliance might be increased. Other studies investigate intentions to comply with a robot coach in an elderly care facility (Čaić et al. 2019) or at the participant's home (Kidd and Breazeal 2008).

Accountability as a Function of Social Presence

Social presence theory (Short, Williams, and Christie 1976) posits that the awareness of another entity establishes a social context that encourages individuals to align with the expectations of that entity. This presence establishes involvement obligations, that is, the normative expectation to respond, engage, and attend to the other in the shared interaction (Goffman 1967, 1983; Schultze and Brooks 2019). These obligations are shaped by the assumption that one's actions are being observed or may be evaluated, prompting individuals to align more closely with normative expectations, thereby increasing compliance. In such contexts, individuals experience a heightened sense of accountability, that is, the expectation of having to justify one's actions to others. This expectation can lead to more careful information processing, increased norm adherence, and greater compliance (Lerner and Tetlock 1999; Zajonc 1980).

While social presence theory was originally developed in the context of human–human interaction, these insights have since been extended to human–robot interaction. Early research shows that even minimal social behaviors by technological agents can elicit humanlike social responses. For instance, when computers communicate, instruct, and take turns during interaction, they are perceived as sufficiently humanlike to trigger social reactions, even when the social cues are subtle (Reeves and Nass 1996). Similarly, robots can evoke a sense of social presence (Čaić et al. 2019), particularly when they exhibit humanlike and natural features such as facial expression, speech, and movement (Xu, Chen, and You 2023). However, it has also been noted that robot social presence differs fundamentally from human social presence (Van Doorn et al. 2017). We propose that one such fundamental difference is that robot social presence cannot generate the same sense of accountability as human social presence (Lerner and Tetlock 1999; Oussedik et al. 2017).

Accountability, a well-established driver of behavior in social settings in the psychology literature (Evans 2021; Lerner and Tetlock 1999), is a promising mechanism that has yet to be fully leveraged in the context of human–robot interaction. When people feel accountable, they are more likely to process information carefully, align with expectations, and act in socially acceptable ways (Sedikides et al. 2002). Accountability can be shaped by social roles and shared expectations that guide behavior and regulate how individuals understand and meet accountability demands (Overman and Schillemans 2022). Despite its significance, accountability has been underrepresented in health behavior models (Oussedik et al. 2017). However, evidence shows it can improve adherence to health advice (Feldman et al. 2017; Mohr, Cuijpers, and Lehman 2011).

As we have pointed out, one key factor that can shape accountability in interpersonal interactions is the sense of social presence. While robot social presence may make people feel less accountable than human social presence, prior literature does show that a robot can enhance its social presence by incorporating additional social cues (Gunawardena 1995; Xu, Chen, and You 2023). Social cues elicit both positive and negative social and behavioral responses in the human interacting with the robot (e.g., Ghazali et al. 2018; Liew and Tan 2021). A robot using persuasive tactics such as giving compliments or offering limited-time promotions has for instance been found to increase its social presence as a persuasion agent, with the negative downstream consequence that it is perceived as less sincere (Lee and Yi 2025).

Hypotheses

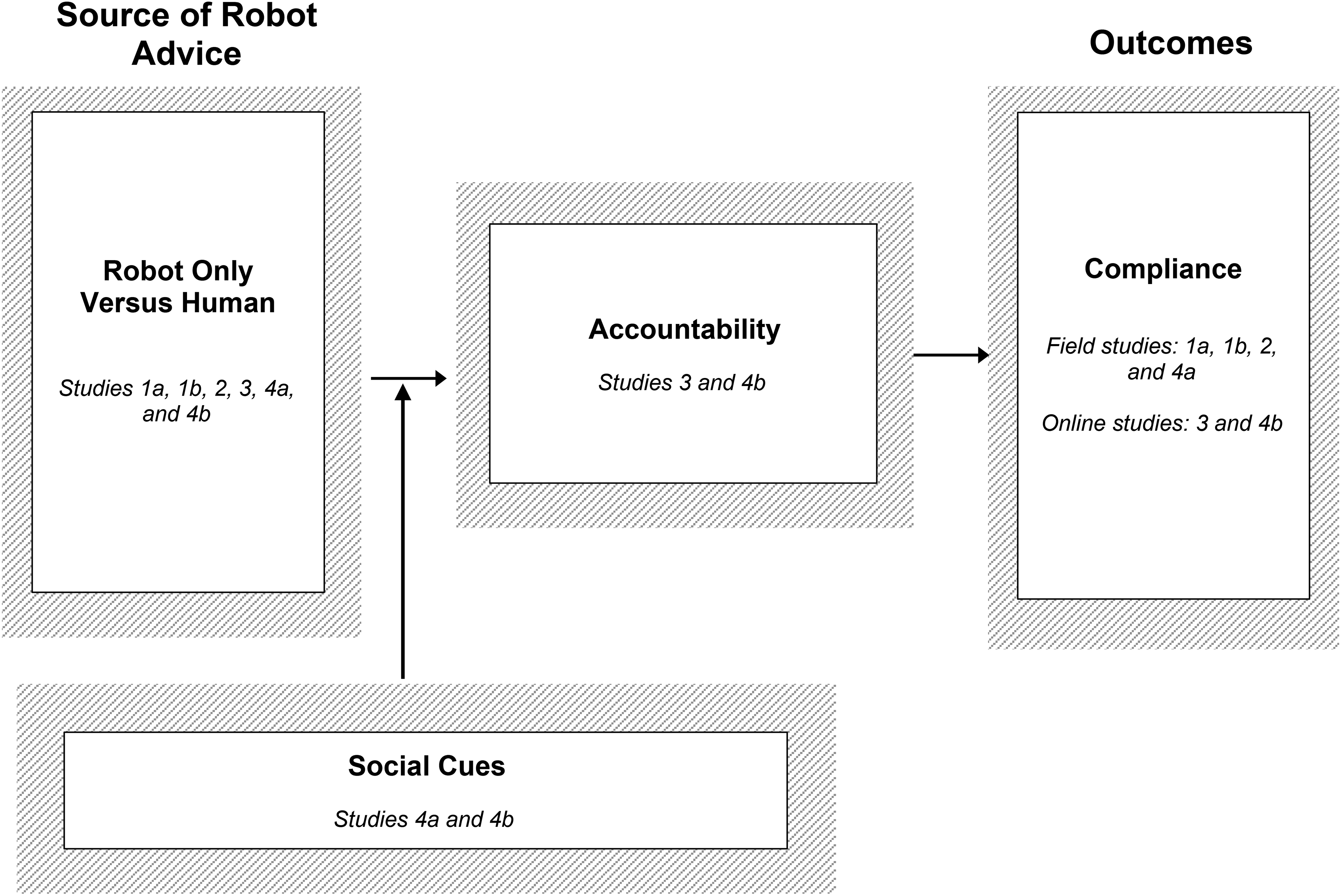

We propose the conceptual framework in Figure 1, which is theoretically rooted in social presence theory (Nowak and Biocca 2003; Short, Williams, and Christie 1976). Social presence theory posits that communication formats differ in their ability to convey a sense of social presence, or being with another (Biocca, Harms, and Burgoon 2003; Moffett, Folse, and Palmatier 2021). While early studies started from the premise that consumers always interact with another human, albeit mediated by communication technology, this premise does not hold for human–robot interactions.

Figure 1. Conceptual Model.

Robots differ meaningfully from humans in their ability to evoke this sense of presence. Although prior work identifies a form of “automated social presence” (Van Doorn et al. 2017), consumers typically do not perceive robots as full-fledged social others (De Graaf 2016), in part because they recognize that robots are programmed and do not possess their own intentions or preferences (Chugunova and Sele 2022; Garvey, Kim, and Duhachek 2023). This makes it less likely that users will feel accountable to robots or perceive social consequences for noncompliance.

In contrast, human–human interactions naturally evoke high social presence and activate accountability toward the interaction partner (Goffman 1967, 1983; Schultze and Brooks 2019), leading individuals to align their behavior with the preferences of those they feel accountable to (Quinn and Schlenker 2002; Tetlock 1985). This sense of accountability motivates individuals to act in accordance with perceived expectations and encourages compliance because consumers feel answerable to a respected source (Oussedik et al. 2017). Robots, however, with their lower social presence, may be less effective than humans in eliciting the social responses that typically lead to compliance, most notably the sense of accountability.

Drawing on work that shows that the effectiveness of a communication message depends on the extent to which it creates a sense of social presence (Short, Williams, and Christie 1976), we posit that human compliance with robot advice can be increased if this advice includes a source of social presence the consumer feels accountable to. Typically, this is a human service provider involved in service provision, such as a nurse, a physical therapist, or a coach. Building on prior literature that shows that accountability does not always require direct human contact (Bailey 2008) but can be facilitated through indirect means (Oussedik et al. 2017), we propose that a robot conveying advice on behalf of a human service provider can constitute such a form of mediated social presence.

While we acknowledge that behind any robot advice there is always a human who programmed the robot, we also note that this human social presence is rather implicit in an actual human–robot interaction. We expect that when the human behind the robot is made explicit (specifically, when a robot advises on behalf of a human service provider who evokes a sense of accountability), the advice can be more effective in driving compliance. Thus, a robot advising on behalf of a human service provider generates greater compliance than one acting merely on its own, as it leverages the accountability associated with the human.

Embedding Social Cues in Robot-Only Advice to Increase Accountability

Relying on a human service provider as a source of social presence is not always feasible, as there may be situations where there are no humans to whom a consumer feels accountable during a service provision. This makes it essential to explore ways to enhance compliance with robot-only advice.

According to social presence theory, the degree to which an interaction feels socially rich can shape how individuals respond to mediated others (Short, Williams, and Christie 1976). In particular, social presence increases when the communication partner is perceived as intentional and aware of the interaction (Biocca, Harms, and Burgoon 2003). One way to enhance a robot's perceived social presence is through the inclusion of social cues—features of the robot's communication that convey interpersonal awareness or social intent (Xu, Chen, and You 2023). Prior research suggests that even minimal social behaviors by technological agents, such as taking conversational turns or providing guidance, are sufficient to elicit humanlike social responses. As long as there are some behaviors that suggest a social presence, people will respond accordingly (Reeves and Nass 1996), and such responses often occur in an automatic manner (Nass and Moon 2000). Thus, these social cues can help the robot be perceived as more “present,” which in turn may elicit accountability.

Although social cues can foster social presence, prior research cautions that certain social cues can also backfire (Ghazali et al. 2018), particularly when they trigger perceptions of insincerity or self-serving motives (Lee and Yi 2025). Mindful of such instances, we propose that the robot embed social cues by highlighting the purpose behind its advice as a means to increase its social presence. By framing advice in a way that makes the underlying rationale explicit for the consumer, the robot can showcase meaningful intent rather than if-then task execution (Satyanarayan and Jones 2024). In turn, this framing should act as a social cue that elicits social responses (Liew and Tan 2021). By doing so, the robot can enhance its social presence independently of any human connection (Xu, Chen, and You 2023). Such framing may also trigger a sense of accountability in users that in turn increases conformity, as individuals become more vigilant information processors when they anticipate having to justify their decisions (Schlenker and Weigold 1989; Tetlock 1985). Hence, embedding social cues in robot-only advice might make it more compelling, fostering a heightened sense of accountability to the robot and reducing the compliance gap between robot-only advice and advice given on behalf of a human service provider.

Hypothesis Tests and Study Overview

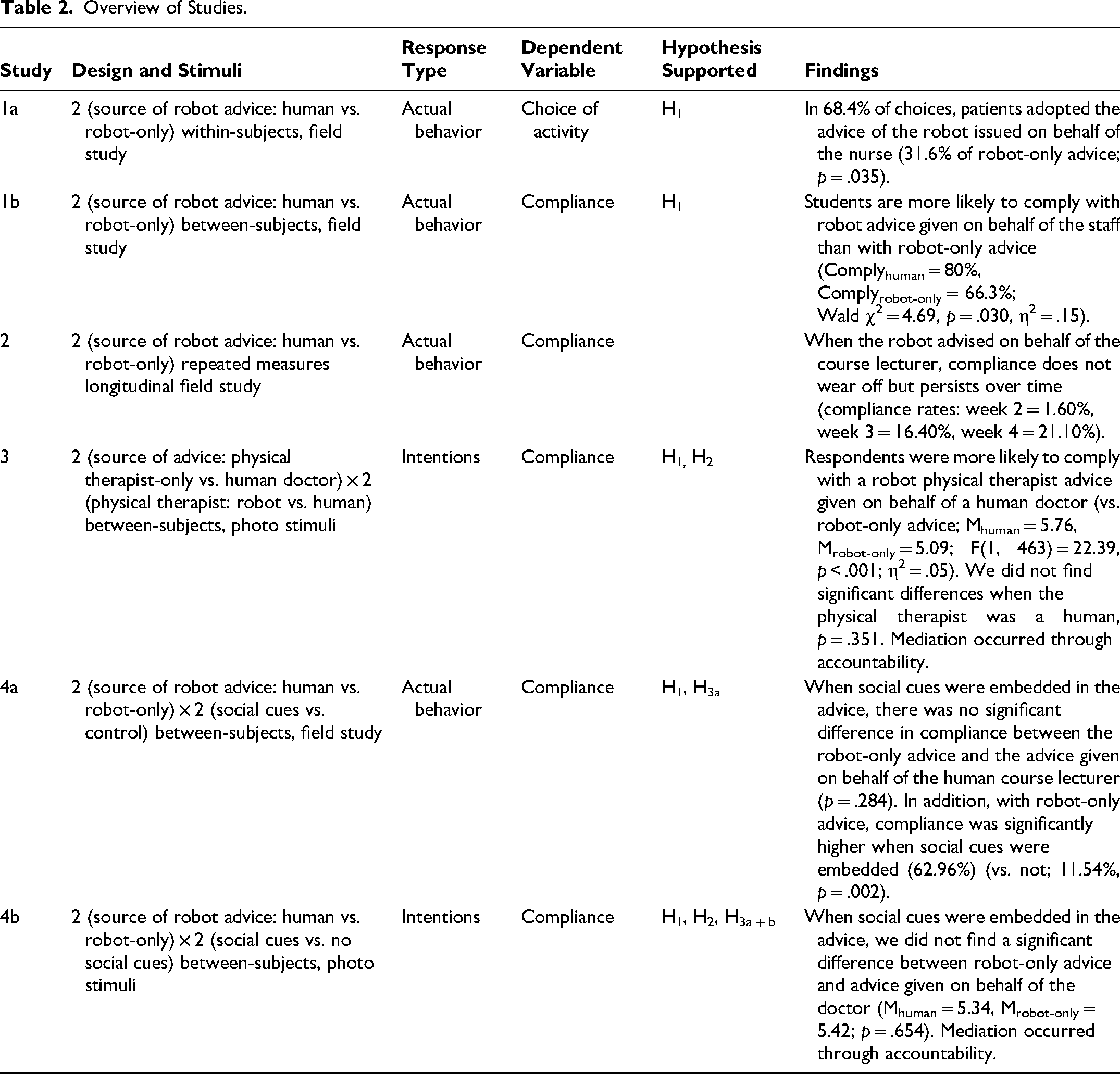

We test our hypotheses in a series of experiments (Table 2). Studies 1a and 1b are field experiments, conducted in an elderly care facility and a European university, respectively. They affirm that consumers are more likely to comply with robot advice when the robot highlights a nurse or university staff as the source of robot advice compared with robot-only advice. Study 2 is a longitudinal field study that shows that this effect does not wear off, as compliance with a robot advising on behalf of a human service provider persists over time. Study 3 then sheds light on the underlying mechanism and establishes that consumers feel more accountable when the robot advises on behalf of a human service provider compared with robot-only advice. This study further highlights a key difference between human–human and human–robot interactions in that invoking another human service provider (even one with higher authority) as a source of advice does not make a difference for compliance in human-to-human encounters, as one human service provider alone fosters a sufficient sense of accountability. Lastly, noting that robot-only advice is less complied with, in Study 4 we examine embedding social cues as a means to enhance compliance with robot-only advice. Field Study 4a reveals that the difference in compliance between robot-only advice and robot advice given on behalf of a human service provider is reduced when the robot embeds social cues in its advice. We replicate these results in a more controlled setting in Study 4b; the difference in compliance again is attenuated when social cues are embedded in the advice, mediated by accountability.

Table 2. Overview of Studies.

The Web Appendix includes the stimuli and full scales used in the studies (Web Appendices A–F), robustness checks (Web Appendix G), information on the manipulation checks (Web Appendix H), and additional analyses (Web Appendix I).

Study 1: Field Studies

Study 1a: Activities with the Elderly

Study 1a is a two-cell source of robot advice (human vs. robot-only) field experiment. This study, conducted in an elderly care facility located in Europe, includes elderly consumers living independently in apartments on the premises of the care facility, those living in apartments and assisted by staff (e.g., meal service, help with washing and dressing), and those living in rooms in the care facility. We relied on the care facility's records and input from an in-house supervisor to screen the residents according to their capability to participate. The 19 informants (ages = 69–98 years; 73.7% women, 26.3% men) made 38 choices in the experimental decision task. This sample size is comparable to other elderly care field studies’ samples, reflecting the challenges of gaining access to this population of consumers (Looije, Neerincx, and Cnossen 2010; Shanks et al. 2024).

We worked with the care institution and a programmer for several months to develop four activities that the humanoid robot Pepper could undertake with elderly patients: exercise to music, listen to a story, play a quiz, or sing a song. We designed these activities in close cooperation with the staff of the care facility and adapted the activities based on their feedback. The robot gave each participant two activities to choose from, in two consecutive rounds. That is, in the first round, respondents selected between activities A and B. After they completed the activity, they made another choice between activities C and D. In each round, one activity was presented as advice on behalf of the nurse (“Hello, my name is Pepper. Do you want to do [activity] upon the advice from the nurse?”), and the other one was robot-only advice (“Hello, my name is Pepper. Do you want to do [activity] upon my advice?”). We randomized the combinations of all four activities and the order of source of robot advice.

These experiments took place in a separate room in the facility. We used a “Wizard of Oz” technique to maintain remote control over the robot. In this study, the programmer (i.e., wizard) directing the robot was in the same room but out of the field of view of the participant during the interaction with the robot. When participants entered the room, they were seated in front of the robot or stayed seated in their wheelchair or rollator walker (Figure 2) and started interacting with the robot. After the interaction, a member of the research team conducted a short interview to gather the respondents’ demographics. Overall, the interaction between the respondent and the robot, together with the short interview, took around 15 minutes.

Figure 2. Setup for Study 1a.

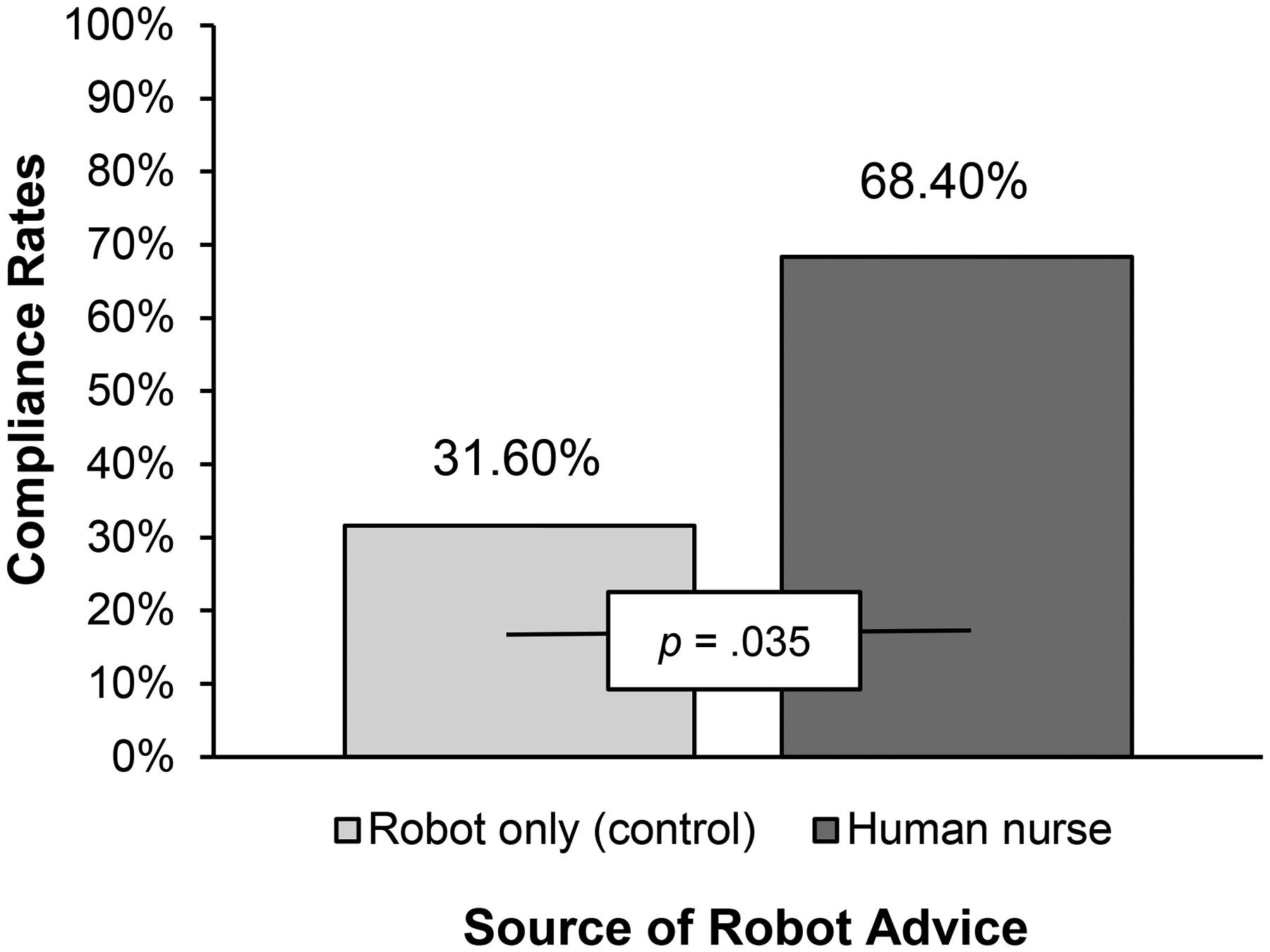

As expected, varying whether the advice was on behalf of the nurse or robot-only impacted the chosen options. Robot advice on behalf of the nurse was preferred for 68.4% of the choices (31.6% in the robot-only advice condition), according to a binomial test with exact Clopper–Pearson 95% confidence intervals (CIs) of 51.3% to 82.5% for robot advice on behalf of the nurse and 17.5% to 48.7% for the robot-only advice (p = .035; Figure 3).

Figure 3. Compliance Rates in Study 1a.

Thus, we can confirm that during an actual human–robot interaction, in a field study in an elderly care setting, senior citizens were more likely to follow robot advice given on behalf of a nurse (vs. robot-only advice), as we predicted in H1.
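To retrace the Study 1a statistics, the following is a minimal sketch, not the authors' analysis code. It assumes that 26 of the 38 choices favored the nurse-sourced advice (a count inferred from the reported 68.4%, not stated in the text) and, like any plain binomial test, treats the 38 choices as independent. All function names are illustrative.

```python
from math import comb


def binom_pmf(k: int, n: int, p: float) -> float:
    """Probability of exactly k successes in n Bernoulli(p) trials."""
    return comb(n, k) * p**k * (1 - p) ** (n - k)


def exact_binomial_p(k: int, n: int, p0: float = 0.5) -> float:
    """Two-sided exact p-value: total probability of all outcomes that are
    no more likely than the observed count under H0: p = p0."""
    observed = binom_pmf(k, n, p0)
    tail = sum(
        binom_pmf(i, n, p0)
        for i in range(n + 1)
        if binom_pmf(i, n, p0) <= observed * (1 + 1e-9)
    )
    return min(1.0, tail)


def clopper_pearson(k: int, n: int, alpha: float = 0.05) -> tuple[float, float]:
    """Exact (Clopper-Pearson) confidence interval for a proportion,
    found by bisection on the binomial tail probabilities."""

    def prob_at_least_k(p: float) -> float:  # increasing in p
        return sum(binom_pmf(i, n, p) for i in range(k, n + 1))

    def prob_more_than_k(p: float) -> float:  # increasing in p
        return sum(binom_pmf(i, n, p) for i in range(k + 1, n + 1))

    def solve(f, target: float) -> float:
        lo, hi = 0.0, 1.0
        for _ in range(60):  # 60 halvings exhaust double precision
            mid = (lo + hi) / 2
            if f(mid) < target:
                lo = mid
            else:
                hi = mid
        return (lo + hi) / 2

    # Lower bound: P(X >= k | p) = alpha/2; upper bound: P(X <= k | p) = alpha/2.
    lower = 0.0 if k == 0 else solve(prob_at_least_k, alpha / 2)
    upper = 1.0 if k == n else solve(prob_more_than_k, 1 - alpha / 2)
    return lower, upper


# ASSUMPTION: 26 is inferred from "68.4% of 38 choices"; it is not reported directly.
nurse_choices, total_choices = 26, 38
p_value = exact_binomial_p(nurse_choices, total_choices)
ci_low, ci_high = clopper_pearson(nurse_choices, total_choices)
print(f"share = {nurse_choices / total_choices:.3f}")
print(f"95% CI = [{ci_low:.3f}, {ci_high:.3f}], two-sided p = {p_value:.3f}")
```

Under these assumptions, the interval should land close to the reported 51.3% to 82.5%. The p-value may differ in the third decimal depending on whether an exact or an approximate test is used.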

Study 1b: Hand Sanitation

In Study 1b, we aim to replicate the Study 1a results in a different context, with a different population of consumers, and with a different manipulation of our independent variable. This study is a two-cell source of robot advice (human vs. robot-only) field experiment, conducted on a university campus in Europe toward the end of the third wave of the COVID-19 pandemic. This study was inspired by university staff reporting to us that they found it challenging to get students to continue respecting the COVID-19 rules, such as hand sanitation. We placed the humanoid robot Pepper at the entrance of the building next to a hand sanitizer dispenser (Figure 4). Specifically, the robot asked each student who entered the building to disinfect their hands, either on behalf of a university staff member (“Welcome! The staff of the university asks you to please disinfect your hands here before entering the building. The staff appreciates your cooperation”) or not (“Welcome! I ask you to please disinfect your hands here before entering the building. I appreciate your cooperation”). As a measure of compliance, we counted how many of the 201 students whom the robot approached complied with the advice and disinfected their hands. We alternated conditions on a daily basis.

Figure 4. Service Robot Used in Study 1b.

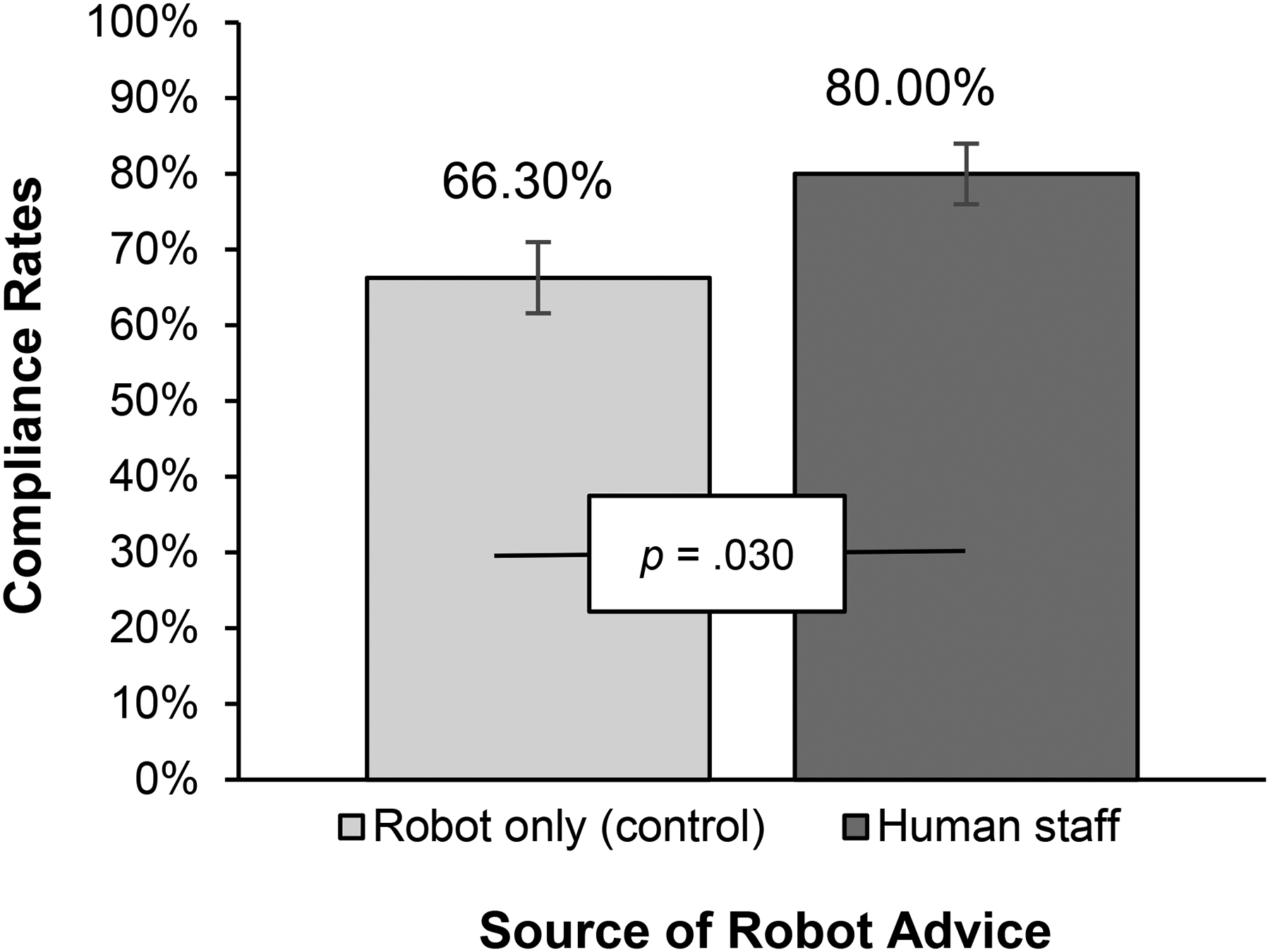

In a binary logistic regression, with source of robot advice as the independent variable, we find that respondents were more likely to act on the advice from the robot on behalf of the staff than on robot-only advice (compliance with human-sourced advice = 80%, compliance with robot-only advice = 66.3%; Wald χ² = 4.69, p = .030, η² = .15), again in line with H1 (see Figure 5). Thus, compliance rates increased from 66% to 80% just by changing the source of robot advice, indicating that robots advising on behalf of a human service provider are effective for increasing compliance rates in real-world service scenarios.

Figure 5. Compliance Rates in Study 1b.
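With a single binary predictor, the slope of a logistic regression equals the log odds ratio of the 2 × 2 table, and its Wald standard error has a closed form. The sketch below illustrates this for Study 1b. It assumes cell counts of 80 of 100 students complying in the human-source condition and 67 of 101 in the robot-only condition. These counts are not reported in the text; they are inferred from the reported percentages and the total of 201 students, so they should be read as an assumption. The function name is illustrative.

```python
from math import erfc, log, sqrt


def wald_test_2x2(yes_a: int, no_a: int, yes_b: int, no_b: int):
    """Wald test for the slope of a logistic regression with one binary
    predictor (group a vs. group b), computed from the 2x2 cell counts."""
    log_odds_ratio = log((yes_a / no_a) / (yes_b / no_b))
    std_error = sqrt(1 / yes_a + 1 / no_a + 1 / yes_b + 1 / no_b)
    wald_chi2 = (log_odds_ratio / std_error) ** 2
    # Upper tail of a chi-square distribution with 1 degree of freedom.
    p_value = erfc(sqrt(wald_chi2 / 2))
    return log_odds_ratio, std_error, wald_chi2, p_value


# ASSUMPTION: counts inferred from 80%, 66.3%, and N = 201; not reported directly.
slope, se, wald_chi2, p_value = wald_test_2x2(
    yes_a=80, no_a=20,   # human-source condition
    yes_b=67, no_b=34,   # robot-only condition
)
print(f"log odds ratio = {slope:.3f}, SE = {se:.3f}")
print(f"Wald chi-square = {wald_chi2:.2f}, p = {p_value:.3f}")
```

Under the assumed counts, the statistic should come out close to the reported Wald χ² of 4.69, which lends some support to the inferred cell sizes.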

In two different contexts, involving different types of health-related advice and distinct manipulations, Study 1 establishes that a robot giving advice on behalf of a human source is a viable option to increase advice compliance.

Study 2: Longitudinal Field Study

This longitudinal field study aimed to establish whether the effect of a robot evoking a human source of advice on compliance shown in Studies 1a and 1b persists over time or wears off. We followed 101 students in six tutorial groups of one course at a European university, each consisting of approximately 16 undergraduate students, over a four-week period. Our study began in the first week of the course. The objective was to investigate whether students would comply with the robot advice to participate in a three-minute exercise routine during the tutorial break, depending on whether advice was given on behalf of a human service provider or not. The robot was used in each weekly iteration of the field study. For this study, we used the humanoid robot Maatje from Smartrobot.solutions. This compact, 25 cm tall robot was ideal due to its ability to perform exercises effectively and its sturdy, durable design.

Study 2 employs a difference-in-differences approach (Li, Luo, and Pattabhiramaiah 2023) to estimate the effect of source of robot advice on compliance by comparing changes in compliance across pre- and postintervention periods between treatment and control groups. Thus, this study features a repeated-measures design with two sources of robot advice (human vs. robot-only). The first week served as a preintervention baseline for all groups, meaning that all tutorial groups received robot-only advice (“Hello! I am conducting a pilot study on optimal learning conditions, which includes incorporating exercises during a tutorial. I ask you to participate in those exercises.”). From the second week onward, the groups were divided into two conditions: In the treatment group, the course lecturer was used as the human source of robot advice (“Hello! Your lecturer [first name and last name] is conducting a pilot study on optimal learning conditions, which includes incorporating exercises during a tutorial. [First name] asks you to participate in those exercises.”). Four tutorial groups received this treatment. The control condition consisted of robot-only advice, which used the same wording as in the preintervention week. Two tutorial groups served as the control condition.

At the beginning of each tutorial session, the tutorial teacher informed students: “In the break, a robot will come in for a pilot study on optimal learning conditions. Please note that participation is completely voluntary and will not affect your participation in this course or your grade.” To prevent potential bias, the tutorial teacher (who was not the course lecturer) was asked to step out of the room during the robot's interaction with the students. To observe patterns of behavior over time, we tracked student compliance weekly using a seating chart to systematically record each student's participation.

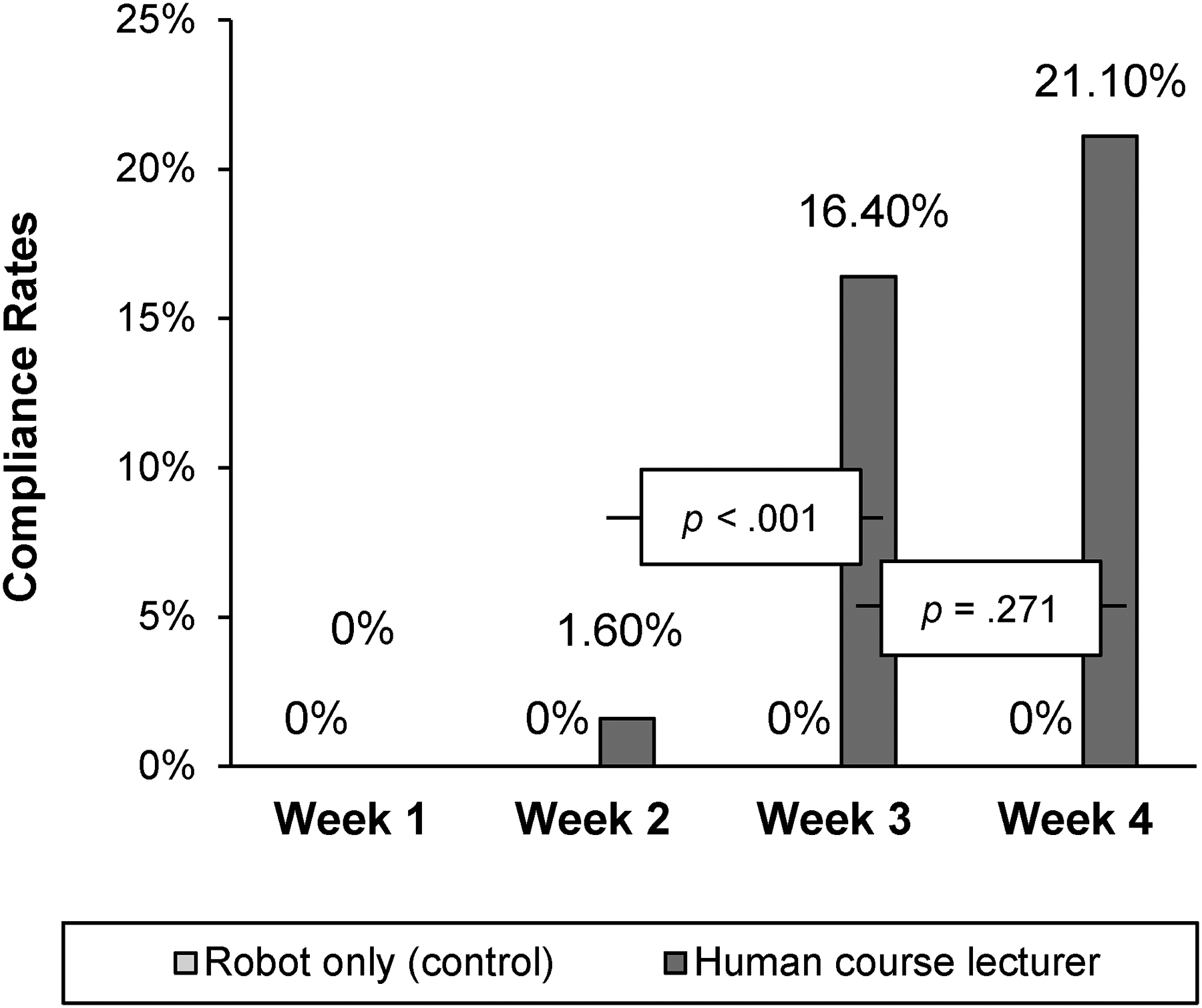

Results

First, and as assumed, we do not find any differences in compliance between tutorial groups in the preintervention week (week 1), given that none of the participants complied. This lack of difference in the preintervention week is critical for the model, as it ensures that any observed effects in the subsequent weeks can be attributed to the intervention rather than preexisting disparities between the groups (Li, Luo, and Pattabhiramaiah 2023). The postintervention phase began in week 2, when the treatment was introduced. Here, we find that when the robot asked students to participate on behalf of the human course lecturer, compliance increased from week 2 onward and did not wear off over time (compliance rates: week 2 = 1.60%, week 3 = 16.40%, week 4 = 21.10%; see Figure 6).

Compliance Rates in Study 2.

A general linear model with a linear probability specification compares week-to-week effects within the human and robot-only conditions and demonstrates a statistically significant overall fit (F(6, 357) = 8.63, p < .001). More specifically, when the robot advised on behalf of the human course lecturer, significant differences emerged between week 2 and week 3 (F(1, 357) = 12.60, p < .001) and between week 2 and week 4 (F(1, 357) = 21.07, p < .001), suggesting that compliance did not wear off but persisted over time. No significant difference was found between week 3 and week 4 (p = .271), indicating consistent compliance between the later weeks. In the control groups, students continued to not comply with robot-only advice (compliance rates: week 2 = .00%, week 3 = .00%, week 4 = .00%); comparisons across weeks 2–4 yielded no significant differences in compliance rates with robot-only advice (all p > .90).
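The difference-in-differences logic can be illustrated with the group-level compliance rates reported above. This is a descriptive sketch under the assumption that the weekly percentages stand in for group means; it is not the individual-level model estimated in the study, and the names `treatment`, `control`, and `did` are illustrative.

```python
# Weekly compliance rates (in %) as reported in the text for Study 2.
# Week 1 is the preintervention baseline, in which no student complied.
treatment = {1: 0.0, 2: 1.60, 3: 16.40, 4: 21.10}  # lecturer invoked from week 2 onward
control = {1: 0.0, 2: 0.0, 3: 0.0, 4: 0.0}         # robot-only advice throughout


def did(week: int, baseline: int = 1) -> float:
    """Difference-in-differences for one postintervention week, in percentage points."""
    change_treatment = treatment[week] - treatment[baseline]
    change_control = control[week] - control[baseline]
    return change_treatment - change_control


did_by_week = {week: did(week) for week in (2, 3, 4)}

for week, estimate in did_by_week.items():
    print(f"week {week}: DiD = {estimate:.2f} percentage points")
```

Because both groups start at zero and the control groups stay at zero, each weekly estimate simply equals the treatment-group compliance rate for that week.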

Discussion

This longitudinal field study shows that the higher compliance with advice given by a robot on behalf of a human does not wear off but persists over time. As in Studies 1a and 1b, we establish higher compliance with robot advice given on behalf of a human service provider than with robot-only advice, where compliance rates with robot advice on behalf of a human service provider increase as the weeks progress.

Study 3: Accountability as Underlying Process

With Study 3, in addition to replicating the prior findings, we examine the mediating effect of accountability as an underlying process. Study 3 further attempts to rule out multiple alternative explanations and moreover empirically demonstrates how human–robot social interactions differ fundamentally from human–human social interactions. As noted previously, humans already have a social presence that invokes accountability; the addition of another human to the situation (i.e., human advising on behalf of another human service provider) should not further enhance accountability. Therefore, our results should not replicate for a human advising on behalf of another human service provider.

We conducted a 2 (source of advice: physical therapist-only vs. human doctor) × 2 (physical therapist: robot vs. human) between-subjects design study among 475 Prolific workers. Eight participants were excluded from the final sample: one participant for not completing the survey, one for having missing values, and six participants for failing the attention check, resulting in a final sample size of 467 participants (Mage = 37.58 years; 45.6% women, 53.7% men, .4% nonbinary, .2% rather not say).

We asked respondents to “Please imagine you are currently recovering from a surgery after having had an accident and are therefore temporarily staying at a physical rehab facility. Today, you have the option to do some physical therapy exercises.” Participants were randomly assigned to one of the four conditions and shown a photo of a robot physical therapist or a human physical therapist (see Web Appendix D for stimuli). In the condition with the human doctor as the source of advice (i.e., the treatment condition), advice was given by the human physical therapist or robot physical therapist on behalf of a human doctor: “Hello. Today, a physical therapy session takes place and the doctor thinks it would be good for you to go there. The doctor advises you to do some physical exercises.” In the condition with the physical therapist-only as the source of advice (i.e., the control condition), the human physical therapist or robot physical therapist was the single source of advice: “Hello. Today, a physical therapy session takes place and I think it would be good for you to go there. I advise you to do some physical exercises.”

For the dependent variable, we measured compliance using a seven-point, four-item Likert scale (e.g., “How likely are you to participate in the physical therapy session?”; 1 = “highly unlikely,” and 7 = “highly likely”; Cronbach's α = .97; Chandran and Morwitz 2005). We used a seven-point, three-item Likert scale to measure accountability (e.g., “I feel accountable to the physical therapist for complying with the advice”; 1 = “strongly disagree,” and 7 = “strongly agree”; Cronbach's α = .95; Passyn and Sujan 2006). We also included measures for several competing processes (competence and warmth [Cuddy, Fiske, and Glick 2008], reactance [Roubroeks, Ham, and Midden 2011], task enjoyment [Dahl and Moreau 2007], degree of control [Zhu et al. 2007], eeriness [Mende et al. 2019], and level of comfort [Williams and Aaker 2002]). Full scales can be found in Web Appendix D. As an attention check, we asked respondents to “Please answer ‘strongly agree’ for this question” (1 = “strongly disagree,” and 5 = “strongly agree”).

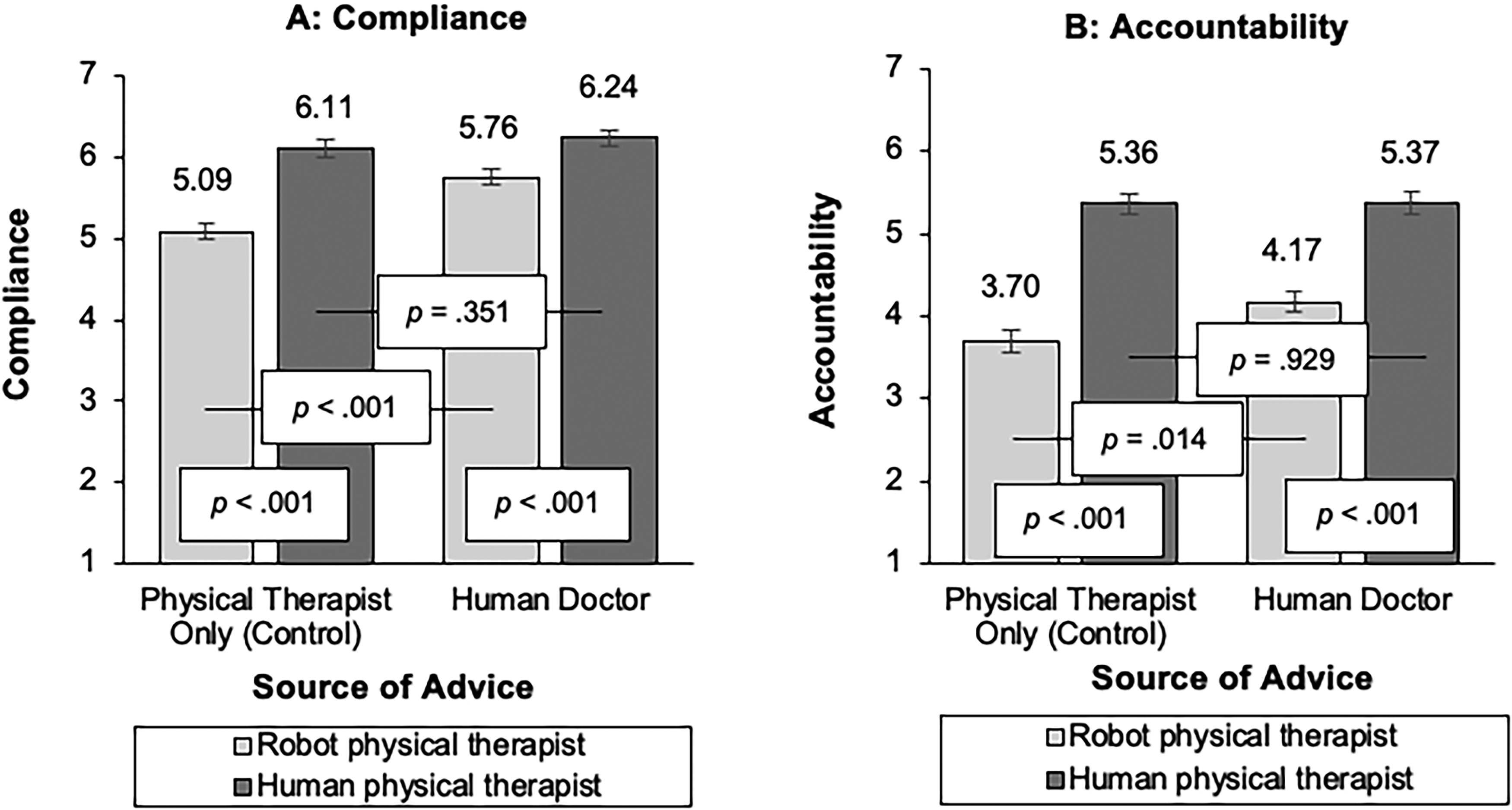

Compliance

An ANOVA on compliance shows the expected two-way interaction between source of advice and physical therapist (F(1, 463) = 7.29, p = .007; η2 = .02), together with significant main effects of source of advice (F(1, 463) = 16.12, p < .001; η2 = .03) and physical therapist (F(1, 463) = 55.56, p < .001; η2 = .11). Simple effects show that respondents were more likely to comply with a robot physical therapist advising on behalf of the human doctor (vs. with robot-only advice) (robot physical therapist: Mhuman doctor = 5.76, Mphysical therapist only = 5.09; F(1, 463) = 22.39, p < .001; η2 = .05), in line with H1. For the human physical therapist, we did not find a significant difference between the advice given by the human physical therapist only and on behalf of the doctor (human physical therapist: Mhuman doctor = 6.24, Mphysical therapist only = 6.11; p = .351; see Figure 7).

Compliance and Accountability, Study 3.

Accountability

An ANOVA reveals a marginally significant two-way interaction between source of advice and physical therapist (F(1, 463) = 2.85, p = .092; η2 = .01), as well as a marginally significant main effect of source of advice (F(1, 463) = 3.29, p = .070; η2 = .01) and a significant main effect of physical therapist (F(1, 463) = 114.25, p < .001; η2 = .20). Simple effects show that participants felt more accountable to the robot when the source of advice was the human doctor (vs. robot-only advice) (robot physical therapist: Mhuman doctor = 4.17, Mphysical therapist only = 3.70; F(1, 463) = 6.10, p = .014; η2 = .01). Consistent with our arguments, accountability did not differ significantly when advice was given by the human physical therapist, no matter whether the human doctor was invoked as a source of advice or not (human physical therapist: Mhuman doctor = 5.37, Mphysical therapist only = 5.36; p = .929).

A moderated mediation analysis (Model 7; Hayes 2015) indicates a conditional indirect effect of source of advice on compliance through accountability, but only when the physical therapist was a robot (indirect effect = .15, SE = .07, 90% CI: [.04, .28]) and not when the physical therapist was a human (90% CI: [−.08, .09]). As expected, the index of moderated mediation was significant (−.15, SE = .09, 90% CI: [−.30, −.01]).
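For readers less familiar with this model template, the general structure of first-stage moderated mediation can be sketched as follows. The symbols are notation assumed here for illustration and do not appear in the article: X is source of advice, W is the type of physical therapist, M is accountability, and Y is compliance.

```latex
\begin{aligned}
M &= i_M + a_1 X + a_2 W + a_3 XW + e_M \\
Y &= i_Y + c' X + b M + e_Y \\
\text{conditional indirect effect at } W &= (a_1 + a_3 W)\, b \\
\text{index of moderated mediation} &= a_3 b
\end{aligned}
```

With a dichotomous moderator coded 0 and 1, the index equals the difference between the two conditional indirect effects. Assuming the robot physical therapist is coded 0 and the human physical therapist 1 (a coding not stated in this section), an index of −.15 alongside an indirect effect of .15 for the robot would imply an indirect effect close to zero for the human, which is consistent with the reported interval spanning zero.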

Alternative Mechanisms

We consider several alternative explanations for these findings. First, noting that people may respond negatively to strong persuasive attempts (Ghazali et al. 2018), we consider reactance as an alternative mechanism; however, this does not drive our effects (b = .12; 95% CI: [−.10, .32]). Moreover, whether a robot advises on behalf of a human service provider or not when giving advice may affect its perceived warmth and competence, which prior literature has shown to influence compliance (e.g., Čaić et al. 2019). Therefore, we probe competence and warmth as potential mediators. Warmth (b = −.004; 95% CI: [−.19, .16]) did not mediate the effect of source of advice on compliance. Competence did not mediate for the robot physical therapist (b = .08; 95% CI: [−.06, .23]) but did for the human physical therapist (b = −.11; 95% CI: [−.22, −.01]) and therefore does not explain our effects (the opposite pattern would be needed to explain them). Additionally, individuals’ perceptions of having a sense of control can influence their willingness to comply with advice or directives (Li et al. 2024). We therefore tested degree of control, which we could also rule out as a potential mediator (b = −.02; 95% CI: [−.12, .08]). We further ruled out eeriness (b = .09; 95% CI: [−.05, .23]), level of comfort (b = .08; 95% CI: [−.12, .28]), and enjoyment of the task (b = .08; 95% CI: [−.05, .22]).

Discussion

Study 3 corroborates the finding that consumers are more likely to comply with advice when the robot acts on behalf of a human (supporting H1) and identifies accountability as the mechanism driving this effect. In line with our reasoning, when the robot advises on behalf of a human service provider, consumers feel more accountable and want to avoid the social consequences of ignoring the advice, leading to higher compliance rates, as predicted in H2.

This highlights a key difference between human–human and human–robot relationships: Consumers feel more accountable to, and thus are more likely to comply with, advice given by a robot when the source of robot advice is another human. In contrast, when a human gives advice, no additional source of advice is required, as the human already serves as a sufficient source of accountability. This aligns with findings in the health domain, which show comparable compliance levels with advice from nurses and doctors (Barnoy, Levy, and Bar-Tal 2010; Laurant et al. 2018).

Study 4: Social Cues

With Study 4, we test whether robot-only advice can boost compliance to an extent similar to that achieved by advice given on behalf of a human service provider when a robot embeds social cues. Study 4a is another field study involving a real human–robot interaction with a behavioral response. Study 4b, conducted online, replicates Study 4a’s results and establishes accountability fostered by social presence as the underlying theoretical process.

Study 4a: Field Experiment

We conducted this 2 (source of robot advice: human vs. robot-only) × 2 (no social cues vs. social cues) between-subjects field experiment at a large European university (see Figure 8). We used a similar setup to Study 2 and the same manipulation for the source of robot advice (the robot-only advice condition served as the control, while the robot advice given on behalf of a human course lecturer served as the treatment condition) and added a sentence to manipulate the social cues. In the social cues condition, the robot says “Hello! I am [Your lecturer (first name and last name) is] conducting a pilot study on optimal learning conditions, which includes incorporating short stretching exercises during a tutorial. Stretching with me will make it easier for you to focus after the break and ultimately benefit your learning performance. I ask [(First name) asks] you to participate in those exercises.” In the no social cues condition, the robot says “Hello! I am [Your lecturer (first name and last name) is] conducting a pilot study on optimal learning conditions, which includes incorporating short stretching exercises during a tutorial. I ask [(First name) asks] you to participate in those exercises.” In total, 104 students participated in the experiment (27.9% women, 72.1% men). The study took place halfway through their course.

Impression of Study 4a.

Results

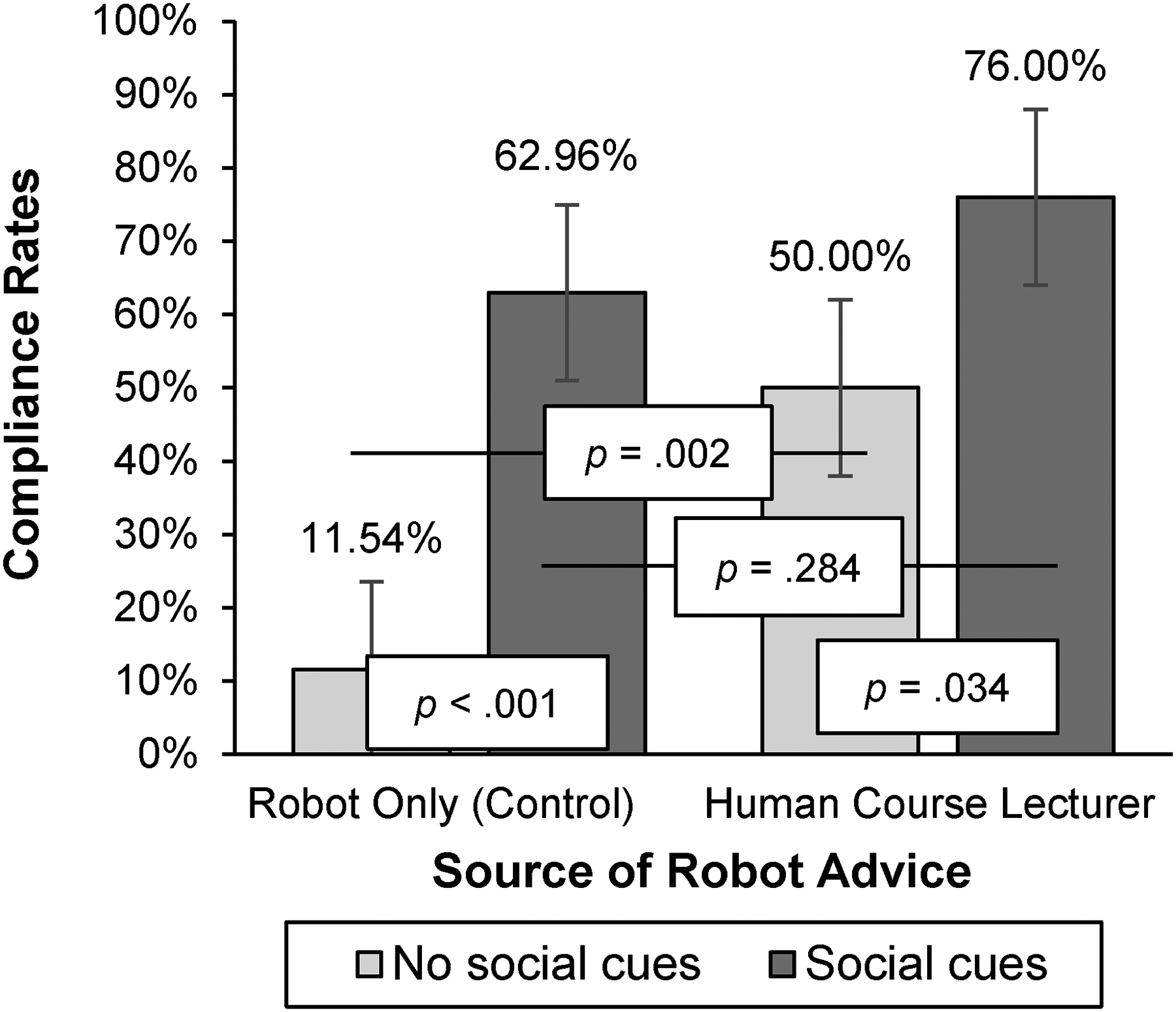

We used a generalized linear model with a linear probability function to model compliance based on the source of robot advice and social cues. The omnibus test was significant (χ2(3) = 27.23, p < .001). The model reveals two significant main effects, namely source of robot advice (Wald χ2(3) = 8.95, p = .003) and social cues (Wald χ2(3) = 20.24, p < .001). The interaction was directional yet did not reach significance (Wald χ2(3) = 2.18, p = .140). Although the interaction did not reach p < .05 levels, we explore simple effects to test our theorizing.

Simple effects show that, in line with H1 and replicating our prior results, when social cues were not embedded in the advice, respondents were more likely to comply with robot advice on behalf of the human course lecturer (50%) than with robot-only advice (11.54%; 95% CI: [.15, .62], p = .002; see Figure 9). Importantly, this effect was attenuated when social cues were embedded (95% CI: [−.11, .37], p = .284), supporting H3b. In addition, with robot-only advice, compliance was significantly higher when social cues were embedded (62.96%, vs. not: 11.54%; 95% CI: [.28, .75], p < .001).

Compliance Rates in Study 4a.

Study 4b: Online Study

Study 4b provides an online replication of Study 4a, while also including accountability as a mediating mechanism. For this 2 (source of robot advice: human vs. robot-only) × 2 (no social cues vs. social cues) between-subjects study, 450 participants were recruited on Prolific. A total of 22 participants were excluded from the final sample: 15 participants for failing the attention check, 1 for having missing values, and 6 for not completing the survey. This resulted in a final sample size of 428 participants (Mage = 39.00 years; 51.2% women, 47.9% men, .7% nonbinary). In the social cues condition, the robot says, “Hello. Today, a physical therapy session takes place and I think [the doctor thinks] it would be good for you to go there. Doing some exercises will help you regain flexibility and support your overall recovery, ultimately making you regain your strength for long-term health. I advise [The doctor advises] you to do some physical exercises.” In the no social cues condition, the robot says “Hello. Today, a physical therapy session takes place and I think [the doctor thinks] it would be good for you to go there. I advise [The doctor advises] you to do some physical exercises.”

We used the same scenario and measures for compliance and accountability as in Study 3 and included an attention check as well. As in the previous studies, the robot-only advice condition served as the control, while the robot advice given on behalf of a human doctor served as the treatment condition.

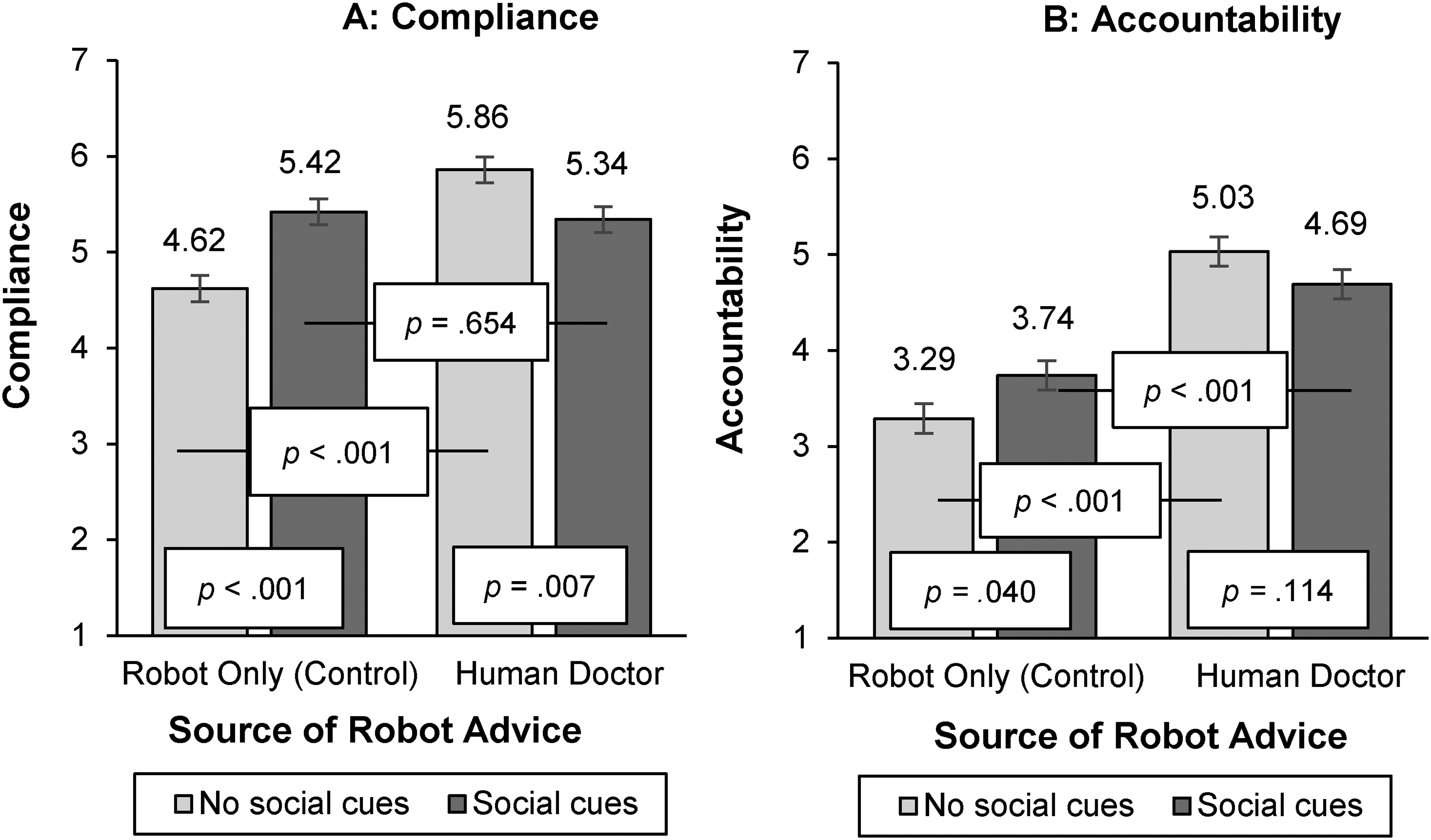

Compliance

An ANOVA on compliance shows the expected two-way interaction between source of robot advice and social cues (F(1, 424) = 23.73, p < .001; η2 = .05), together with a significant main effect of source of robot advice (F(1, 424) = 17.97, p < .001; η2 = .04). The main effect of social cues was not significant (p = .297). Simple effects show that in line with H1 and replicating our prior results, when social cues were not embedded, respondents were more likely to comply with a robot advising on behalf of the human doctor (vs. with robot-only advice) (Mhuman = 5.86, Mrobot-only = 4.62; F(1, 424) = 41.11, p < .001; η2 = .09). Importantly, this effect was attenuated when social cues were embedded (Mhuman = 5.34, Mrobot-only = 5.42; p = .654), supporting our H3b. For robot-only advice, the likelihood to comply with the robot was significantly higher when social cues were embedded (vs. not) (Msocial_cues = 5.42, Mno_social_cues = 4.62; F(1, 424) = 17.25, p < .001; η2 = .04). Interestingly, we see a decrease in likelihood to comply with advice on behalf of a human doctor when social cues were embedded (vs. not) (Msocial_cues = 5.34, Mno_social_cues = 5.86; F(1, 424) = 7.43, p = .007; η2 = .02; Figure 10).

Compliance and Accountability, Study 4b.

Accountability

An ANOVA on accountability reveals the expected two-way interaction between source of robot advice and social cues (F(1, 424) = 6.66, p = .010; η2 = .02), together with a significant main effect of source of robot advice (F(1, 424) = 76.95, p < .001; η2 = .15). The main effect of social cues was not significant (p = .719). Simple effects show that when social cues were not embedded, respondents felt more accountable to the robot when the source of advice was the human doctor (vs. the robot physical therapist only) (Mhuman = 5.03, Mrobot-only = 3.29; F(1, 424) = 63.84, p < .001; η2 = .13), in line with H2. Importantly, this effect was attenuated when social cues were embedded (Mhuman = 4.69, Mrobot-only = 3.74; F(1, 424) = 19.35, p < .001; η2 = .04), in support of H3a. For robot-only advice, accountability was significantly higher when social cues were embedded in the advice (vs. not) (Msocial_cues = 3.74, Mno_social_cues = 3.29; F(1, 424) = 4.27, p = .040; η2 = .01). For advice on behalf of a human doctor, we do not see a difference in accountability when social cues were embedded (vs. not) (Msocial_cues = 4.69, Mno_social_cues = 5.03; p = .114).
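The attenuation pattern can be read directly from the cell means reported for Study 4b. The following minimal sketch computes the human-versus-robot-only gap within each social cues condition and the resulting interaction contrast; it uses only the means stated in the text, and the dictionary keys and function names are illustrative labels rather than variable names from the study.

```python
# Cell means as reported in the text for Study 4b (seven-point scales).
# Keys: (source of robot advice, social cues condition).
compliance = {
    ("human", "no_cues"): 5.86, ("robot_only", "no_cues"): 4.62,
    ("human", "cues"): 5.34, ("robot_only", "cues"): 5.42,
}
accountability = {
    ("human", "no_cues"): 5.03, ("robot_only", "no_cues"): 3.29,
    ("human", "cues"): 4.69, ("robot_only", "cues"): 3.74,
}


def source_gap(means: dict, cues: str) -> float:
    """Human-source mean minus robot-only mean within one social cues condition."""
    return means[("human", cues)] - means[("robot_only", cues)]


def interaction_contrast(means: dict) -> float:
    """How much the source gap shrinks once social cues are embedded."""
    return source_gap(means, "no_cues") - source_gap(means, "cues")


for label, means in (("compliance", compliance), ("accountability", accountability)):
    print(
        f"{label}: gap without cues = {source_gap(means, 'no_cues'):.2f}, "
        f"gap with cues = {source_gap(means, 'cues'):.2f}, "
        f"contrast = {interaction_contrast(means):.2f}"
    )
```

For compliance the gap essentially disappears once social cues are embedded, whereas for accountability it shrinks but remains, matching the simple effects reported above.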

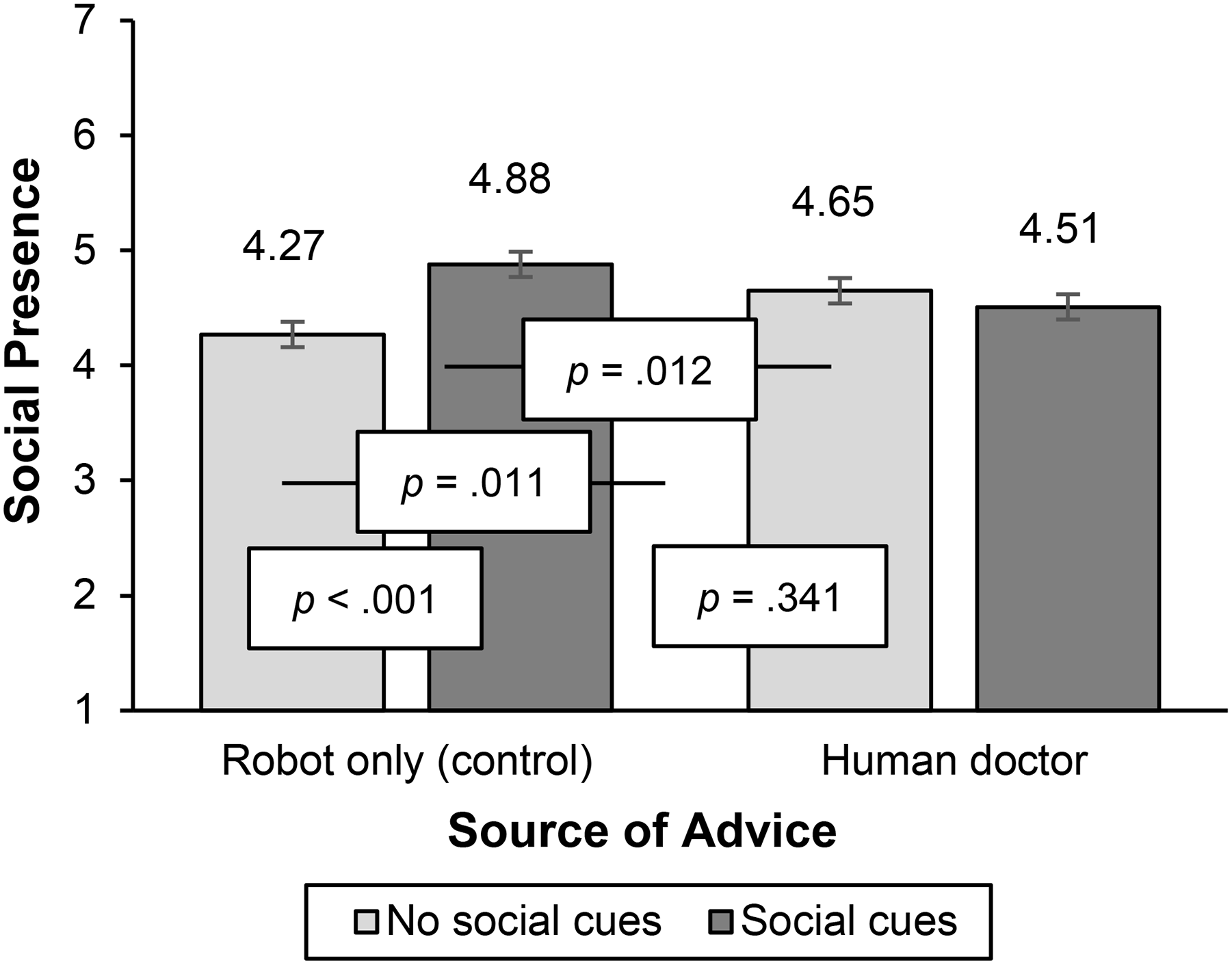

Social Presence

Our theoretical rationale rested on the premise that a robot highlighting social cues will increase its own social presence (sample scale item: “How much did you feel as if someone is talking to you?”; 1 = “strongly disagree,” and 7 = “strongly agree”; Lee and Nass 2005; Nass and Lee 2001; see Web Appendix F for full scale). An ANOVA on social presence reveals the expected two-way interaction between source of robot advice and social cues (F(1, 424) = 12.90, p < .001; η2 = .03), together with a significant main effect of social cues (F(1, 424) = 5.07, p = .025; η2 = .01). The main effect of source of robot advice was not significant (p = .981). Importantly, simple effects show that for robot-only advice, the perceived social presence of the robot physical therapist was significantly higher when social cues were embedded in the advice (vs. not) (Msocial_cues = 4.88, Mno_social_cues = 4.27; F(1, 424) = 16.83, p < .001; η2 = .04). This was not the case when advice was given on behalf of a human doctor (Msocial_cues = 4.51, Mno_social_cues = 4.65; p = .341; see Figure 11). In addition, we confirm our theorizing by showing that social presence mediates our effects (index of moderated serial mediation = −.25, SE = .08, 95% CI: [−.41, −.11]). A moderated serial mediation analysis (Model 83; Hayes 2015) reveals a significant indirect effect through social presence and accountability as serial mediators only for robot-only advice (indirect effect = .20, SE = .06, 95% CI: [.11, .32]) and not for robot advice on behalf of the human doctor (indirect effect = −.05, SE = .05, 95% CI: [−.15, .05]). Hence, we show that embedding social cues in the advice increases accountability and compliance for robot-only advice due to higher social presence.

Social Presence, Study 4b.

Discussion

Study 4 demonstrates that differences in consumer compliance with robot advice given on behalf of another human service provider versus robot-only advice are attenuated when the robot embeds social cues in its advice. This pattern is observed in both a field study (Study 4a), involving a human–robot interaction, and an online study (Study 4b), which further identifies accountability, fostered by social presence, as the underlying mediating mechanism.

However, a notable difference emerges between the two studies regarding advice given on behalf of a human service provider when social cues were embedded (vs. not). In the field study, compliance increased slightly, whereas in the online study, compliance decreased. This discrepancy may stem from the contextual differences between the two settings. In the field study, a real-life environmental factor—the preexisting relationship between students and their course lecturer (the human source of the robot's advice)—might have been stronger than in an online environment. Specifically, in the online study, respondents were exposed to a hypothetical human doctor, lacking the relational familiarity, which may have diminished the impact of the advice that embedded social cues.

General Discussion

Noting both the expanding uses of service robots at organizational frontlines and some gaps in prior research devoted to increasing consumer compliance with robot recommendations, we undertake this study of service robots in settings where consumer well-being is the goal. Accordingly, we structure our discussion of the findings according to the research questions and gaps that motivated this research.

Does Connecting to a Human Service Provider Encourage Compliance with Robot Advice (Gaps #1, #4 and #5)?

In six studies, including four field studies with actual human–robot interactions, we establish that consumers are more likely to comply with robot advice if the robot invokes a human source rather than when the source of robot advice is only the robot. This highlights a key difference between human–human and human–robot relationships: Human advice requires no additional source, as human service providers inherently encourage compliance, a pattern also observed in the health domain, where advice from nurses and doctors leads to similar adherence levels (Barnoy, Levy, and Bar-Tal 2010; Laurant et al. 2018). In contrast, robot advice does require an additional source.

In our field studies, the simple yet powerful adjustment of including a human source in the robot advice doubled the choice likelihood of health activities (Study 1a), more than quadrupled compliance from 11.54% to 50% (Study 4a), and raised compliance with health-related instructions by about 20% in relative terms, from 66.3% to 80% (Study 1b).
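Because these summaries mix ratios and percentage changes, it can help to separate absolute from relative lift. The sketch below recomputes both from the compliance rates reported in Studies 1b and 4a; the helper name `lift` is illustrative, and Study 1a is omitted because its rates are not stated in this section.

```python
def lift(baseline_pct: float, treated_pct: float) -> tuple[float, float]:
    """Return (absolute lift in percentage points, ratio of treated to baseline)."""
    absolute = treated_pct - baseline_pct
    ratio = treated_pct / baseline_pct
    return absolute, ratio


# Robot-only rate versus human-source rate, as reported in the text.
study_1b = lift(66.3, 80.0)   # hand disinfection at the building entrance
study_4a = lift(11.54, 50.0)  # stretching exercises, no social cues embedded

for name, (absolute, ratio) in (("Study 1b", study_1b), ("Study 4a", study_4a)):
    print(
        f"{name}: +{absolute:.2f} percentage points, "
        f"{ratio:.2f} times the robot-only rate"
    )
```

Under these figures, Study 4a corresponds to a bit more than four times the robot-only rate, whereas Study 1b corresponds to a relative increase of about 20%, or roughly 14 percentage points.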

Prior studies presenting a lever to increase compliance with robot advice also do not show whether compliance wears off over time. Crucially, we demonstrate that heightened adherence to robot advice invoking a human service provider persists over time and does not wear off. In our longitudinal field study (Study 2), compliance levels increased from 0% in week 1 to 21.10% in week 4, illustrating the potential of incorporating a human source in advice to drive compliance, even in situations where no prior adherence was observed. This sustained effect implies that invoking a human service provider through the robot can effectively encourage continued compliance across repeated interactions, highlighting its long-term value in promoting adherence.

Accountability as a Crucial Mechanism for Closing the Compliance Gap (Gap #2)

Building on social presence theory (Nowak and Biocca 2003; Short, Williams, and Christie 1976) and resulting accountability from psychology (Lerner and Tetlock 1999; Schlenker and Weigold 1989; Tetlock 1985) and health behavior literature (Oussedik et al. 2017), we show that consumers feel less accountable to robots as a social presence and comply less with robot-only advice. We therefore highlight a novel way in which accountability and compliance with robot advice can be increased: by invoking human social presence as the source of the given advice. When a robot gives advice on behalf of a human service provider, consumers comply more readily because they recognize the social consequences of noncompliance (Lerner and Tetlock 1999; Oussedik et al. 2017). Thus, the robot generates a sense of accountability by connecting to a human social presence who can naturally evoke accountability, leading to greater compliance.

Prior literature has explored various strategies to improve compliance with robot advice but often lacks clarity on the underlying mechanisms. For example, Mizumaru et al. (2019) demonstrated a method to increase compliance with robot advice but did not investigate the potential mechanisms driving this effect. Schneider et al. (2022) combined appeals to counter robot trivialization, yet they remained uncertain about the exact mechanism responsible for the observed compliance increases. Moreover, such countertrivialization strategies may wear off over repeated interactions. In contrast, our approach of invoking accountability through human social presence may offer a more robust mechanism. As demonstrated by our longitudinal field study (Study 2), invoking human presence through the robot sustained heightened compliance over repeated interactions, showing the persistence of this mechanism.

How Can Compliance with Robot Advice Be Increased Without Invoking a Human Service Provider (Gap #3)?

Another route to enhance human compliance with robot advice is when a robot embeds social cues in its advice. The robot thus demonstrates the ability to act meaningfully, which serves as a social cue enhancing its social presence (Satyanarayan and Jones 2024; Short, Williams, and Christie 1976; Xu, Chen, and You 2023). In turn, increased social presence fosters a stronger sense of accountability and bolsters compliance with the robot's advice, even without relying on a connection to a human service provider. However, we caution that prior literature has identified both positive and negative downstream effects of a robot displaying more social cues to elicit social, affective, and behavioral responses (e.g., Ghazali et al. 2018; Liew and Tan 2021). Robot sales clerks making flattering comments have, for instance, been met with skepticism (Lee and Yi 2025). The reason why we find positive rather than negative downstream effects likely is that our investigation is situated in the realm of services aimed at increasing consumer well-being, with the advice purpose highlighting consumer well-being as well. This context notably differs from the commercial selling context of Lee and Yi (2025), where consumers may attribute more selfish motives to a more social sales clerk.

Implications for Theory

This study advances research on social presence, accountability, and consumer compliance. First, this comprehensive investigation into the effects of source of robot advice addresses an important element of human–robot interactions that has not been sufficiently researched in the literature. While existing research investigates ways to make robot advice more compelling (Saunderson and Nejat 2021; Schneider et al. 2022), it has yet to explore whether linking a robot's advice to a human service provider increases compliance. Notably, framing AI as supporting human decisions has been shown to improve acceptance (Longoni, Bonezzi, and Morewedge 2019). With four field and two online experiments, we demonstrate that consumers are more likely to comply when a robot gives advice on behalf of a human service provider because they recognize the social consequences of noncompliance (Lerner and Tetlock 1999; Oussedik et al. 2017). While invoking human social presence as the source of the given advice is a necessary condition to increase adherence with robot advice, this significantly differs from human–human relations, where a need for compliance is evoked more naturally. We hereby contribute to the emerging body of theories on how human–robot interactions fundamentally differ from human–human interactions.

Second, we address a gap in human–robot interaction literature, which has so far overlooked the role of accountability, and further establish how social presence theory affects consumers’ compliance with robots. Our mediator accountability highlights a key difference between human–human and human–robot relationships: Consumers feel more accountable to, and thus are more likely to comply with, advice given by a robot when the source of robot advice is another human. In contrast, when a human gives advice, no additional source of advice is required, as the human already serves as a sufficient source of accountability and can generate compliance on their own (e.g., Barnoy, Levy, and Bar-Tal 2010; Laurant et al. 2018).

Third, we identify a boundary condition that offers two routes in which accountability with robot advice can be increased: either by invoking another human that consumers feel accountable to, or by embedding social cues in the advice. Extending social presence theory (Short, Williams, and Christie 1976), we show that social cues not only enhance awareness of another entity but also influence how a sense of accountability can be heightened. This adds to the role of social cues in influencing compliance and behavioral responses in human–robot interactions (e.g., Ghazali et al. 2018; Liew and Tan 2021; Xu, Chen, and You 2023).

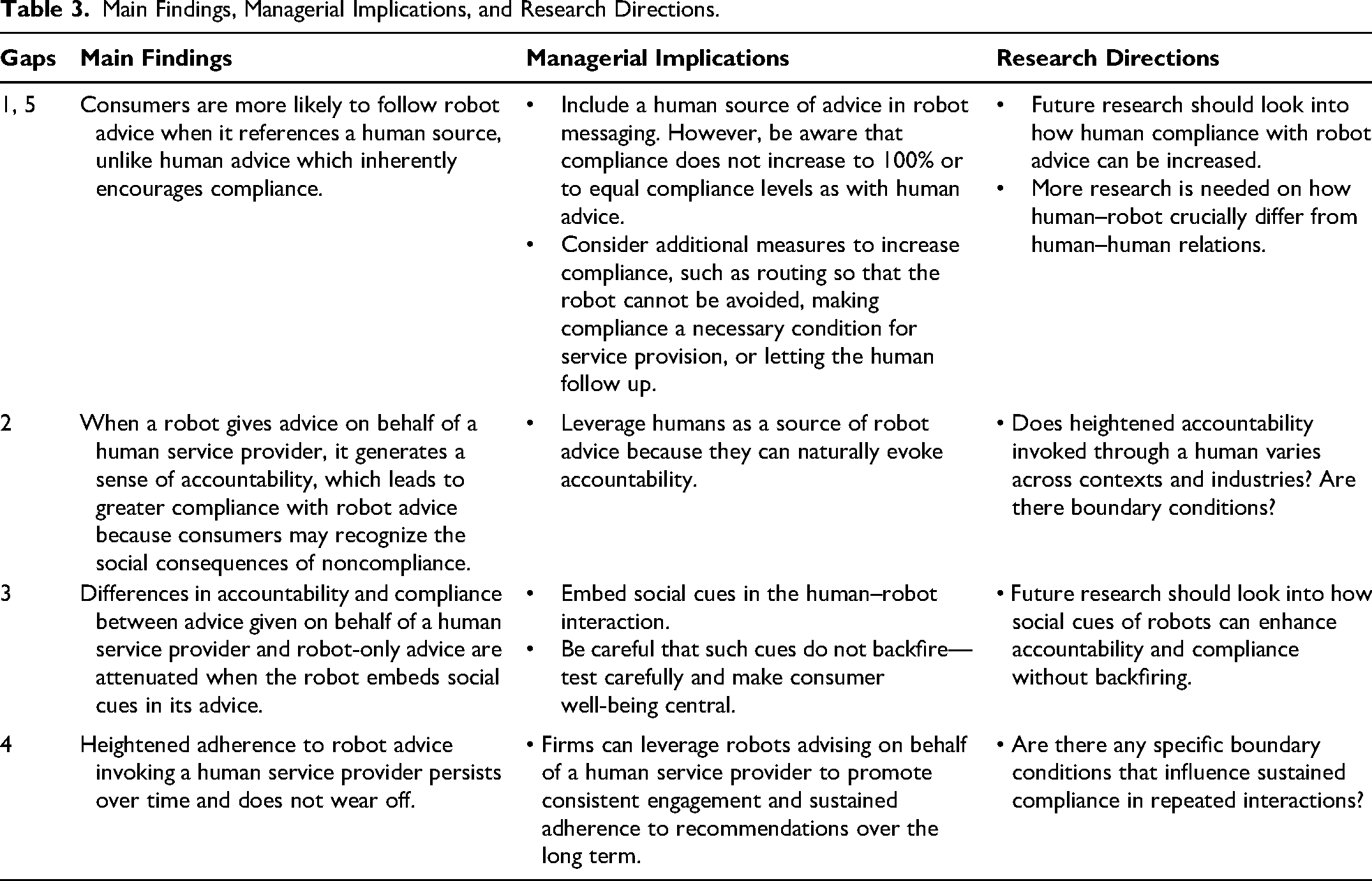

Implications for Practice

The successful incorporation of robots into organizational frontlines is a significant but necessary challenge for most organizations (Čaić et al. 2019; Schneider et al. 2022). Our findings offer several practical managerial implications for achieving such incorporation, which we summarize in Table 3. First, in light of the challenges associated with consumers’ reluctance to comply with robots’ recommendations, service providers should generate a sense of accountability by invoking a human source in robot advice. When the robot advice was given on behalf of a human service provider, compliance rates significantly increased to more than four times the compliance exhibited with robot-only advice (see Study 4a). With such an approach in place, firms can more readily use robots to automate their frontline services, especially for services aimed at enhancing consumer well-being. We learned in discussions with practitioners who actively employ robots in health care institutions that caregivers typically use robots to support their own tasks. For instance, robots may remind clients to get ready for lunch or to select clothing before caregivers come in to assist with washing and dressing. In such contexts, including the health care professional as the source of advice could make these reminders more powerful, increasing compliance without the message feeling forced or artificial. Our findings therewith offer clear recommendations for health care professionals, organizations, and patients, as well as robot developers: If they want to improve compliance and achieve enhanced health care outcomes, ensure that the robot is not the only source of advice.

Table 3. Main Findings, Managerial Implications, and Research Directions.

Second, accountability does not always require direct human contact (Bailey 2008) but can be facilitated through indirect means. We therefore recommend that organizations generate a sense of accountability to a human service provider when a robot delivers the service, for instance by emphasizing that the human may follow up. Consumers may comply more readily because they recognize the social consequences of noncompliance (Lerner and Tetlock 1999; Oussedik et al. 2017).

Third, organizations can also improve adherence to robot advice by embedding social cues in the advice, which likewise fosters accountability and compliance. However, because prior literature has identified negative downstream effects when a robot displays more social cues, such advice framing needs to be carefully designed to avoid backfiring and triggering reactance or resistance (Ghazali et al. 2018; Lee and Yi 2025). Placing consumer well-being at the center of message framing may be a good way to limit such backfire effects.

Fourth, firms do not need to hesitate when it comes to using robots for social tasks. Traditionally, machine agents have been regarded as more capable of performing analytical tasks, while human agents seem more effective for social tasks (Hertz and Wiese 2019). In many service contexts though, consumers need both analytical and social advice. For firms worrying about their employees feeling threatened by their new robot colleagues, anecdotal evidence from our field Study 1a indicates that the care personnel do not fear robots taking over their jobs; they recognize the workforce shortages in the health care industry (Zhang et al. 2018, 2020) and seemingly welcome technological advancements that enable them to do their jobs well. Strategically incorporating the insights from our research might help ease the burdens on health care staff and address staffing shortages through the integration of robotic assistance, which then offers the potential to enhance service quality and improve operational efficiency in health care contexts.

Limitations and Further Research

Our research has some limitations that offer opportunities for continued research. First, in our field study in the elderly care facility, one-third of the participants had to be excluded because their hearing impairments made it difficult for them to understand the robot. Continued studies might address this problem by using robots with lower-pitched voices or developing robots that can be connected to users’ hearing aids.

Second, Study 2 was conducted in a group setting, so social influence may have affected the outcomes. Group processes may explain why higher compliance with a human source of robot advice did not immediately occur in week 2 but manifested in weeks 3 and 4. Another reason for this difference might be that the robot we used in this study is considerably smaller than the “Pepper” robot used in Studies 1a and 1b, making it easier for participants to ignore the robot altogether. We also did not measure individual-level drivers in Study 2, such as enjoyment of the exercises. Future research is needed to assess these types of differences.

Third, research should further investigate how human–robot interactions crucially differ from human–human interactions. A key challenge lies in understanding whether compliance with robot-provided advice can be boosted to levels comparable to those achieved by human advisors, as a significant gap remains (Haring et al. 2019). Despite the significant improvements observed across various interventions, compliance never reached 100%, which highlights how difficult full adherence is to achieve.

Fourth, future research should explore how social cues in human–robot interactions can be designed to enhance accountability and compliance without triggering backfire effects such as reactance. While embedding social cues can improve advice adherence, careful testing is essential to ensure that these cues do not have unintended negative consequences (Ghazali et al. 2018; Lee and Yi 2025). Investigating the balance between effective social cues and avoiding backfire is critical, particularly in contexts where consumer well-being is the ultimate goal. Future studies should examine this balance to provide valuable insights into optimizing robot interactions to maximize both acceptance and compliance.

Supplemental Material

Supplemental material, sj-pdf-1-jmx-10.1177_00222429251370268 for Increasing Accountability and Compliance with Robot Advice by Jana Holthöwer, Jenny van Doorn, and Stephanie M. Noble in Journal of Marketing

Footnotes

Coeditor

Detelina Marinova

Associate Editor

Manjit S. Yadav

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the project “My robot colleague will be with you in a moment – Public service by teams of robots and humans,” funded by the Dutch Research Council (NWO) under grant number 406.22.EB.011. The views expressed in this manuscript are the sole responsibility of the authors, and they do not necessarily reflect the views of NWO.