Abstract

Background:

Digital suicide prevention interventions have previously been shown to be effective; however, the field has developed rapidly since then.

Aims:

To undertake a contemporary review of the evidence and to understand where interventions may work best.

Method:

A meta-analysis following the PRISMA guidelines was conducted. PubMed/Medline, PsycINFO and Cochrane Central were searched for randomised controlled trials up to February 2024. Interventions were categorised according to their delivery setting and as either direct (directly targeting suicidality) or indirect (targeting depression), and effects on suicidal ideation and behaviours (plans, self-harm, attempts and suicide death) were calculated using Hedges’ g.

Results:

Forty-six papers reporting 48 unique trials were included. The majority of studies examined direct interventions (n = 27, 56.3%), and most were delivered in community settings (n = 31, 64.6%). There was a small and significant effect for suicidal ideation in clinical settings (g = −0.35, 95% CI [−0.59, −0.10], p = .006) and community settings (g = −0.10, 95% CI [−0.19, −0.01], p = .037), but not in education settings (g = −0.20, 95% CI [−0.55, 0.16], p = .283). Pairwise comparisons between settings were not significant, nor were there any significant effects for suicidal behaviours.

Conclusions:

The results show that digital interventions to reduce suicidal ideation are effective when delivered in community and clinical settings. Fewer studies have been conducted in education settings, and the evidence does not yet support effectiveness there. Furthermore, there does not appear to be any evidence that digital interventions reduce suicidal behaviours. Design features (such as treatment modality) may account for less variance in effectiveness than previously thought.

Introduction

Although suicide rates are declining worldwide, it is unlikely that the targets set by the World Health Organization and the United Nations General Assembly to reduce suicide mortality by one third before 2030 will be achieved (World Health Organization, 2021). A critical challenge for suicide prevention is that most people experiencing suicidal thoughts and/or behaviours do not, or cannot, access help from mental health services. It is estimated that around half of those at risk of suicide (Tang et al., 2022) and two-thirds of those who die by suicide (Stene-Larsen & Reneflot, 2019; Walby et al., 2018) do not access mental health services. Those least likely to seek mental health care, for example men and the elderly (Tang et al., 2021, 2022; Walby et al., 2018), are also those most likely to die by suicide (World Health Organization, 2014, 2021). Many other individuals experiencing suicidality want help but cannot access it, with wait times for face-to-face treatment reported to exceed three months (Australian Healthcare Index, 2023).

Digital mental health interventions are therapeutic and wellbeing strategies delivered via technology platforms (e.g. internet, smartphones, virtual reality) to improve users’ mental health. They have become an important part of mental health care systems because they overcome many barriers that commonly prevent access to care, such as stigma, cost and workforce capacity (Schueller et al., 2019). The importance of digital mental health interventions grew notably during the COVID-19 pandemic, when substantial increases in population rates of psychological distress, suicidal ideation and self-harm saw digital mental health scaled rapidly for the first time to meet rising treatment demand (Duden et al., 2022). Widening global disparities in healthcare access mean that digital interventions will play an increasingly central role in the delivery of universal healthcare. Continued efforts to understand their effectiveness, limitations and relevance therefore remain an important objective for researchers, clinicians and decision makers alike.

In recent years there has been a proliferation of meta-analyses examining digital interventions for suicide prevention. Because many of these reviews are replicative efforts to establish the pooled effectiveness of these innovations, it is not surprising that all have reached the same conclusion: digital interventions are modestly effective at reducing suicidal ideation, but there is insufficient evidence to determine their efficacy for suicidal behaviour (Buscher et al., 2020, 2022; Chen & Chan, 2022; Sutori et al., 2024; Torok et al., 2020; Witt et al., 2017). While valid, the conclusions of these reviews have been limited by the small pool of published studies (between n = 4 and 20) measuring suicide-related outcomes (e.g. ideation, self-harm, suicide attempt). Since many of these reviews were conducted (most searches ended in 2020), the number of relevant published trials has increased notably. This growing evidence base now provides an opportunity to answer more nuanced questions about effectiveness, such as in what contexts these innovations are efficacious, and for whom. Although digital interventions can be used flexibly, they are typically designed and implemented for a specific setting and therefore validated with particular population groups. Knowing whether the settings and populations for which digital interventions are developed and validated contribute to variance in their performance has scientific and practical importance for advancing their implementation into mental healthcare systems.

This review also addresses other important limitations of previous meta-analytic studies that can help to further unpack the question of ‘what works and for whom?’. Many previous reviews have focussed on unguided (unsupervised) interventions, because this has so far been the prevalent delivery model for digital ‘suicide prevention’ (Buscher et al., 2020; Sutori et al., 2024; Torok et al., 2020; Witt et al., 2017). More recently, there has been increased emphasis on testing blended treatment models, which integrate digital interventions with face-to-face clinical care, compensating for the disadvantages in engagement, cost and workforce capacity that individually and unevenly characterise each of these treatment modalities (Erbe et al., 2017). Although blended treatment models are believed to be a good way to increase the small effects observed for unguided digital interventions, these two dominant models have not yet been compared on suicide-related outcomes in the digital intervention literature. Also, while it is assumed that building intervention content and activities from proven psychotherapy models is an important moderator of efficacy in digital health, this assumption is yet to be explicitly tested. Many publicly available interventions contain little to no theory-driven therapeutic content (Larsen et al., 2019), and without understanding what role ‘science’ plays in the efficacy of digital interventions, we cannot know whether such interventions are likely to be helping or hindering those with suicidal experiences.

A final issue for advancing the ‘what works’ discourse is the potential ‘crossover’ effect of digital interventions. Epidemiologic studies have shown that depression and suicidal ideation are highly comorbid (30%–50%; Handley et al., 2018; Piscopo et al., 2016) and that depression increases the odds of experiencing suicidal ideation more than any other common psychiatric disorder (Nock et al., 2008); by extension, it may be assumed that change in one outcome will naturally have a positive effect on the other. While digital interventions targeting depression may have crossover effects on suicidal ideation, the available research does not appear to strongly support this hypothesis. For example, in two prior meta-analyses, depression-focussed cognitive behavioural interventions showed null effects for ideation (Cuijpers et al., 2013; Torok et al., 2020), with limited support for other novel approaches such as interpersonal psychotherapy (Schneider et al., 2020). These equivocal findings are not surprising, given theoretical and empirical evidence that the risk processes for suicidal ideation and suicide attempt (e.g. hopelessness, defeat, pain sensitivity; Klonsky et al., 2017) are distinct from those underpinning the onset and maintenance of depression (e.g. negative attention bias, neuroticism, rejection sensitivity; Remes et al., 2021). The role of ‘risk specificity’ in intervention effectiveness can be tested by directly comparing digital interventions that specifically target suicide-related outcomes (‘direct interventions’) with depression interventions (‘indirect interventions’) that are unlikely to target the core risk processes underpinning ideation and attempts. Such a comparison has been explored in only one prior review, on a limited number of trials (Torok et al., 2020), and further investigation is warranted to inform efforts to optimise intervention effectiveness.

The following questions were the primary focus of this review:

Do the effects of digital interventions on suicidal thoughts and behaviours differ across clinical, community and education settings?

Does the effect for suicidal ideation differ depending on whether it is the primary versus secondary target of a digital intervention?

Does the effect of digital interventions on suicidal ideation differ depending on the delivery model or theoretical model?

Methods

This systematic review and meta-analysis adheres to the Preferred Reporting Items for Systematic Reviews and Meta-Analyses (PRISMA) guidelines for transparent and comprehensive reporting (Page et al., 2021). The protocol was registered on PROSPERO, an online prospective register of systematic reviews (CRD42022314246). The eligibility criteria were amended following registration, as described in the Protocol amendment section below.

Search strategy

We searched three electronic databases, PubMed/Medline (from 1946), PsycINFO (from 1806) and Cochrane CENTRAL (from 1996), from their inception to 7 April 2022, with an update from 7 April to 20 October 2022, a third search from 20 October 2022 to 3 July 2023 and a final update from 3 July 2023 to 1 February 2024. Additionally, we manually searched the reference lists of the included articles to identify other relevant articles and ensure comprehensive coverage of the published literature.

The search strategy was developed using an empirical approach to identify RCTs examining digital interventions for suicide prevention (Hausner et al., 2012). First, we identified articles meeting our inclusion criteria through Google Scholar to create a test set (n = 5). Relevant MeSH and keyword terms identified within the titles and abstracts of this test set were used as search terms. The resulting search strategy was run in PubMed to confirm that all articles in the test set could be retrieved, which it achieved (100% sensitivity). The search strategy was then adapted for the PsycINFO and Cochrane CENTRAL databases, with search terms grouped into three key domains: (i) RCTs; (ii) suicide and depression and (iii) digital healthcare (see Supplemental Materials for the full search strategy).

Data screening

Screening was conducted using Covidence (www.covidence.org), an online systematic review management platform that automatically identifies and removes duplicates. After checking that all duplicates had been removed, two reviewers (NJ and SEDR, research officers with training and experience in conducting systematic reviews) independently screened a 25% subset of titles and abstracts against prespecified PICOS-based eligibility criteria. As agreement between the two reviewers was high (κ = .81), the remaining articles were single-screened. Although this dual screening was conducted only on the first set of search results, it still covered a considerable percentage of the total search results (19%). Full-text articles were then independently screened by both NJ and SEDR (κ = .75). After each stage, the review team resolved discrepancies through discussion. For the updated searches, SEDR or NJ single-screened titles, abstracts and full texts. The included articles were checked by team members (MT and ML) to confirm eligibility.
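
Agreement statistics for two reviewers, like the κ values above, are conventionally Cohen's kappa, which corrects raw percentage agreement for the agreement expected by chance. The paper does not describe how κ was computed, so the following is only a minimal sketch assuming two reviewers making categorical include/exclude decisions on the same records; the function name `cohens_kappa` and the example decisions are hypothetical.

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Cohen's kappa for two raters' categorical decisions on the same items."""
    if not rater_a or len(rater_a) != len(rater_b):
        raise ValueError("ratings must be non-empty and of equal length")
    n = len(rater_a)
    # Observed agreement: share of items both raters labelled identically
    p_o = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Chance agreement: product of each rater's marginal label proportions
    counts_a, counts_b = Counter(rater_a), Counter(rater_b)
    p_e = sum(counts_a[c] * counts_b[c] for c in counts_a) / n**2
    if p_e == 1:
        return 1.0  # both raters used one identical label for every item
    return (p_o - p_e) / (1 - p_e)


# Hypothetical title/abstract decisions for ten records
reviewer_1 = ["include"] * 3 + ["exclude"] * 7
reviewer_2 = ["include"] * 2 + ["exclude"] * 8
print(round(cohens_kappa(reviewer_1, reviewer_2), 2))  # 0.74
```

In this hypothetical example the reviewers disagree on one of ten records, so observed agreement is .90 but chance agreement is already .62, which is why κ (about .74) is noticeably lower than raw agreement.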

Eligibility criteria

Eligible articles were superiority randomised controlled trials in which the comparison condition received either an intervention (e.g. attention placebo control or treatment as usual [TAU]) or no intervention (e.g. waitlist control). Eligible interventions had to be (i) delivered using current technology (i.e. computers, tablets, smartphones or the internet); (ii) directed towards an individual; (iii) designed to reduce suicidal thoughts or behaviours (direct intervention) or depression (indirect intervention); and (iv) delivered either as a standalone ‘self-guided’ model or as part of a ‘blended care’ clinician-supported model. Interventions were excluded if they were (i) directed towards caregivers or people in positions of trust (e.g. general practitioners) or (ii) delivered as face-to-face treatment via video-conferencing technology or phone calls. Eligible articles had to report a measure of suicidal ideation (thoughts) or suicidal behaviours (plans, non-suicidal self-harm, suicide attempt and suicide) as a primary or secondary outcome. Non-suicidal self-harm was included in our operationalisation of ‘suicidal behaviour’ because it is a well-established precursor behaviour that greatly increases the odds of suicide, particularly in young people (Bennardi et al., 2016; Hawton et al., 2020), and is therefore a critical target for suicide prevention. Depression symptoms were reported as secondary outcomes. Articles were not restricted by target population, setting or language. Four articles not published in English (Dutch, German and Chinese [n = 2]) were reviewed by native speakers during full-text screening.

Protocol amendment

Originally, the eligibility criteria specified that interventions had to be designed using theory-based therapeutic models (e.g. cognitive behavioural therapy [CBT], dialectical behavioural therapy [DBT]), following the criteria used in an earlier systematic review and meta-analysis (Torok et al., 2020). This criterion was removed during full-text screening in the first search round (from inception to 7 April 2022), as we found that some articles reported interventions based on broad theories, models or practices, such as conditioning (e.g. Franklin et al., 2016) or cognitive writing exercises (e.g. Hooley et al., 2018), which are not necessarily psychotherapeutic in nature. Therefore, instead of excluding these interventions, we elected to perform a subgroup analysis of effectiveness according to whether interventions were theory-based (i.e. they specifically cited a psychotherapeutic model such as CBT) or non-theory-based (i.e. there was no mention of theory, or the theories, principles or practices used were not psychotherapeutic). As non-theory-based interventions may have been excluded during title and abstract screening in the first search round, SEDR manually searched relevant systematic and scoping reviews (n = 12) for eligible articles (see Supplemental Materials for the reviews). No additional articles were identified for inclusion.

Data extraction

Data from the final included articles were extracted into a custom spreadsheet and checked by two reviewers (NJ and SEDR). Extracted data included study details (e.g. author, year of publication, country), sample characteristics (e.g. age, gender/sex, sample size), intervention details (e.g. theory-based, setting, delivery modality), comparison condition details (e.g. waitlist control, attention placebo) and outcome data (suicidal ideation, suicidal behaviours, depression and anxiety). Delivery settings were defined as community (e.g. online or in workplaces), clinical healthcare (e.g. general practices, hospitals or psychiatric clinics) or education (e.g. schools or universities). Intention-to-treat data were used when reported. When data were not provided or were unclear, we emailed the corresponding author for clarification and sent two follow-up emails (n = 23). We excluded ten articles during this process because the reported suicide outcome data were unusable (n = 6) or the author could not be reached or did not provide data (n = 4). Interventions from the included articles were classified as ‘direct’ (i.e. aiming to reduce suicidal thoughts and behaviours) or ‘indirect’ (i.e. aiming to reduce depression).

Data analysis

The following a priori meta-analyses were conducted:

(1) To examine the effect of digital interventions on (a) suicidal ideation and (b) suicidal behaviours, by setting (clinical, community and education);

(2) To examine the effects of direct versus indirect digital interventions on suicidal ideation, by setting;

(3) To examine the effect of (a) self-guided versus blended delivery models and (b) theory-based versus non-theory-based digital interventions on suicidal ideation, by setting.

For each of these main analyses we additionally provide aggregated overall effects. Post hoc analyses examined the effect of direct interventions on depression outcome measures, and the effects by study control conditions (TAU/waitlist vs. attention placebo).

For all analyses, we conducted random-effects meta-analyses using Hedges’ g (Hedges & Olkin, 1985), a standardised mean difference between intervention and control conditions that is corrected for small-sample bias. Suicidal ideation was the primary outcome for the main and subgroup analyses, as it is commonly reported in studies assessing digital interventions for suicide prevention (Torok et al., 2020). For continuous data, we used the difference in means on validated suicidal ideation or depression measures (Hedges’ g), with the associated 95% CI, for the main and subgroup meta-analyses. For dichotomous outcomes (e.g. where suicidal ideation or suicidal behaviours were scored as present or absent), the odds ratios between intervention and control conditions were converted into Hedges’ g. Where follow-up data were provided, we analysed data from the longest reported follow-up period. Studies were excluded from the follow-up analyses if waitlist controls were given access to the intervention after the post-intervention assessment. Negative effect sizes indicate superiority of the intervention over the control condition (waitlist, TAU or attention placebo). Comparisons between effect sizes (e.g. between settings) were conducted using pairwise z-tests.
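
The two effect-size routes described above can be sketched as follows. For continuous outcomes, g is the mean difference divided by the pooled SD, multiplied by the small-sample correction J = 1 − 3/(4df − 1). The paper does not state which odds-ratio conversion was used, so this sketch assumes the common logit method, d = ln(OR) × √3/π, followed by the same J correction. The function names and example numbers are hypothetical; the sign convention (intervention minus control) matches the text, so negative values favour the intervention when lower scores are better.

```python
import math


def hedges_g(m_int, sd_int, n_int, m_ctl, sd_ctl, n_ctl):
    """Hedges' g (intervention minus control) and its variance from group means and SDs."""
    df = n_int + n_ctl - 2
    sd_pooled = math.sqrt(((n_int - 1) * sd_int**2 + (n_ctl - 1) * sd_ctl**2) / df)
    d = (m_int - m_ctl) / sd_pooled  # negative when the intervention group scores lower
    var_d = (n_int + n_ctl) / (n_int * n_ctl) + d**2 / (2 * (n_int + n_ctl))
    j = 1 - 3 / (4 * df - 1)  # small-sample correction factor
    return j * d, j**2 * var_d


def g_from_2x2(events_int, n_int, events_ctl, n_ctl):
    """Hedges' g from a 2x2 table via the (assumed) logit conversion d = ln(OR) * sqrt(3) / pi."""
    a, b = events_int, n_int - events_int
    c, d_ = events_ctl, n_ctl - events_ctl
    if min(a, b, c, d_) == 0:
        raise ValueError("zero cell: a continuity correction would be needed")
    log_or = math.log((a * d_) / (b * c))  # OR < 1 means fewer events with the intervention
    var_log_or = 1 / a + 1 / b + 1 / c + 1 / d_
    d = log_or * math.sqrt(3) / math.pi
    var_d = var_log_or * 3 / math.pi**2
    j = 1 - 3 / (4 * (n_int + n_ctl - 2) - 1)
    return j * d, j**2 * var_d


# Hypothetical ideation scores: intervention mean 10 (SD 4, n 50) vs control mean 12 (SD 4, n 50)
g, var_g = hedges_g(10.0, 4.0, 50, 12.0, 4.0, 50)
print(round(g, 3))  # -0.496

# Hypothetical binary outcome: ideation present in 10/100 vs 20/100 participants
g_bin, var_bin = g_from_2x2(10, 100, 20, 100)
print(round(g_bin, 3))  # -0.445
```

Converting both outcome types to the same g scale is what allows continuous and dichotomous results to be pooled in a single model.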

For each meta-analysis, forest plots are provided along with the I² statistic as a measure of between-study heterogeneity (Higgins et al., 2003); I² describes the percentage of variation across studies that is due to heterogeneity rather than chance. Values of I² range from 0% to 100% (negative values were set to zero), with 0%, 25%, 50% and 75% indicating zero, low, moderate and high heterogeneity, respectively (Higgins et al., 2003).
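
The pooling step and the I² statistic can be illustrated with a minimal random-effects sketch. The analyses used Comprehensive Meta-Analysis, and the paper does not name its between-study variance estimator; this sketch assumes the DerSimonian-Laird method-of-moments estimator. I² is computed as (Q − df)/Q and truncated at zero, as described above. The function name `random_effects` and the example data are hypothetical.

```python
import math


def random_effects(effects, variances):
    """DerSimonian-Laird random-effects pooling with Cochran's Q and I-squared (assumed method)."""
    k = len(effects)
    if k < 2 or k != len(variances):
        raise ValueError("need at least two studies with matching variances")
    w = [1 / v for v in variances]  # inverse-variance (fixed-effect) weights
    sum_w = sum(w)
    fixed = sum(wi * yi for wi, yi in zip(w, effects)) / sum_w
    q = sum(wi * (yi - fixed) ** 2 for wi, yi in zip(w, effects))
    df = k - 1
    c = sum_w - sum(wi**2 for wi in w) / sum_w
    tau2 = max(0.0, (q - df) / c)  # between-study variance, truncated at zero
    w_re = [1 / (v + tau2) for v in variances]  # random-effects weights
    pooled = sum(wi * yi for wi, yi in zip(w_re, effects)) / sum(w_re)
    se = math.sqrt(1 / sum(w_re))
    i2 = max(0.0, (q - df) / q) * 100 if q > 0 else 0.0  # negative values set to zero
    return {"g": pooled, "ci": (pooled - 1.96 * se, pooled + 1.96 * se),
            "tau2": tau2, "Q": q, "I2": i2}


# Four hypothetical studies with equal precision but widely scattered effects
res = random_effects([-0.5, 0.1, -0.3, 0.2], [0.01] * 4)
print(round(res["g"], 3), round(res["I2"], 1))  # -0.125 90.8
```

Here Q = 32.75 on 3 degrees of freedom, so roughly 91% of the observed variation would be attributed to heterogeneity rather than chance, which is "high" on the thresholds given above.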

Publication bias and sensitivity analyses

To check for publication bias, we provide funnel plots of suicidal ideation outcomes for digital interventions in community, education and clinical settings (Egger et al., 1997). Funnel plot asymmetry was assessed using Egger’s test when a sufficient number of studies was included (i.e. ⩾10 studies). The trim-and-fill procedure was used to impute potentially missing studies and to provide an estimate of the effect size adjusted for funnel plot asymmetry (Duval & Tweedie, 2000). To assess the robustness of results from the primary analyses, we also conducted a leave-one-out sensitivity analysis (Willis & Riley, 2017), which tested whether the results were disproportionately affected by any single study. Additional sensitivity analyses examined the robustness of results when only studies with a low risk of bias were included.
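
Two of the checks above can be sketched in a few lines: Egger's regression test, which regresses each study's standardised effect (g/SE) on its precision (1/SE) and treats an intercept far from zero as a sign of funnel plot asymmetry, and a leave-one-out analysis that re-pools the estimate with each study omitted in turn. This is an illustrative sketch only: it assumes DerSimonian-Laird pooling, returns Egger's t statistic without a p-value (which would come from a t distribution with k − 2 degrees of freedom), and does not implement trim-and-fill. All function names and data are hypothetical.

```python
import math


def dl_pool(effects, variances):
    """DerSimonian-Laird pooled effect and its standard error (assumed estimator)."""
    w = [1 / v for v in variances]
    sw = sum(w)
    fixed = sum(wi * yi for wi, yi in zip(w, effects)) / sw
    q = sum(wi * (yi - fixed) ** 2 for wi, yi in zip(w, effects))
    c = sw - sum(wi * wi for wi in w) / sw
    tau2 = max(0.0, (q - (len(effects) - 1)) / c) if c > 0 else 0.0
    w_re = [1 / (v + tau2) for v in variances]
    pooled = sum(wi * yi for wi, yi in zip(w_re, effects)) / sum(w_re)
    return pooled, math.sqrt(1 / sum(w_re))


def leave_one_out(effects, variances):
    """Re-pool the data k times, omitting one study each time."""
    return [dl_pool(effects[:i] + effects[i + 1:], variances[:i] + variances[i + 1:])
            for i in range(len(effects))]


def egger_test(effects, variances):
    """Egger's regression of standardised effect on precision; returns (intercept, t with k-2 df)."""
    k = len(effects)
    if k < 3:
        raise ValueError("need at least three studies")
    se = [math.sqrt(v) for v in variances]
    x = [1 / s for s in se]                   # precision
    y = [e / s for e, s in zip(effects, se)]  # standardised effect
    mx, my = sum(x) / k, sum(y) / k
    sxx = sum((xi - mx) ** 2 for xi in x)
    if sxx == 0:
        raise ValueError("all studies have the same precision")
    slope = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y)) / sxx
    intercept = my - slope * mx
    resid = [yi - (intercept + slope * xi) for xi, yi in zip(x, y)]
    s2 = sum(r * r for r in resid) / (k - 2)
    se_intercept = math.sqrt(s2 * (1 / k + mx**2 / sxx))
    t = intercept / se_intercept if se_intercept > 0 else math.nan
    return intercept, t


# Hypothetical studies whose standardised effects lie exactly on 2 + 0.1 * precision
effects = [0.3, 0.5, 0.7, 0.9]
variances = [0.01, 0.04, 0.09, 0.16]
intercept, t = egger_test(effects, variances)
print(round(intercept, 2))  # 2.0
```

In this constructed example the effects grow with the standard error, so the less precise studies report larger effects and the Egger intercept sits well away from zero. With real data, the test is only informative with enough studies, which is consistent with the ⩾10 threshold stated above.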

All statistical analyses were conducted using Comprehensive Meta-Analysis Version 3 (Borenstein et al., 2013).

Quality assessment

Articles were divided equally and study quality was independently assessed by authors SEDR, NJ, JH, ML and MT using the Cochrane Risk of Bias (RoB) 2 tool (Higgins et al., 2023; Sterne et al., 2019), which includes five risk domains:

(1) Bias arising from the randomisation process;

(2) Bias due to deviations from the intended interventions;

(3) Bias due to missing outcome data;

(4) Bias in measurement of the outcome;

(5) Bias in selection of the reported result.

For each of the five risk domains, included studies were rated on a three-point scale (‘high risk’, ‘some concerns’ or ‘low risk’), guided by responses to signalling questions (i.e. questions designed to elicit information relevant to the risk of bias domain). Overall risk of bias judgements were determined using the RoB 2 algorithm and verified by the reviewers.
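
The overall judgement rule in RoB 2 is: low risk only if every domain is judged low risk; high risk if any domain is high risk, or if some concerns in multiple domains are judged to substantially lower confidence in the result; otherwise some concerns. A minimal sketch follows. Because the multiple-domain escalation is a reviewer judgement rather than a mechanical rule, it is passed in as a hypothetical flag, `concerns_lower_confidence`; the names `Judgement` and `overall_rob2` are also illustrative.

```python
from enum import Enum


class Judgement(Enum):
    LOW = "low risk"
    SOME = "some concerns"
    HIGH = "high risk"


def overall_rob2(domains, concerns_lower_confidence=False):
    """Overall RoB 2 judgement from the five domain-level judgements.

    `concerns_lower_confidence` is a hypothetical flag standing in for the reviewers'
    decision that some concerns in multiple domains substantially lower confidence.
    """
    if len(domains) != 5:
        raise ValueError("RoB 2 uses exactly five domains")
    if Judgement.HIGH in domains:
        return Judgement.HIGH
    n_some = sum(d is Judgement.SOME for d in domains)
    if n_some == 0:
        return Judgement.LOW
    if n_some > 1 and concerns_lower_confidence:
        return Judgement.HIGH
    return Judgement.SOME


L, S, H = Judgement.LOW, Judgement.SOME, Judgement.HIGH
print(overall_rob2([L, L, S, L, L]).value)  # some concerns
```

Keeping the escalation as an explicit input mirrors the text above: the algorithm proposes an overall rating, and the reviewers verify it.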

Results

Study selection

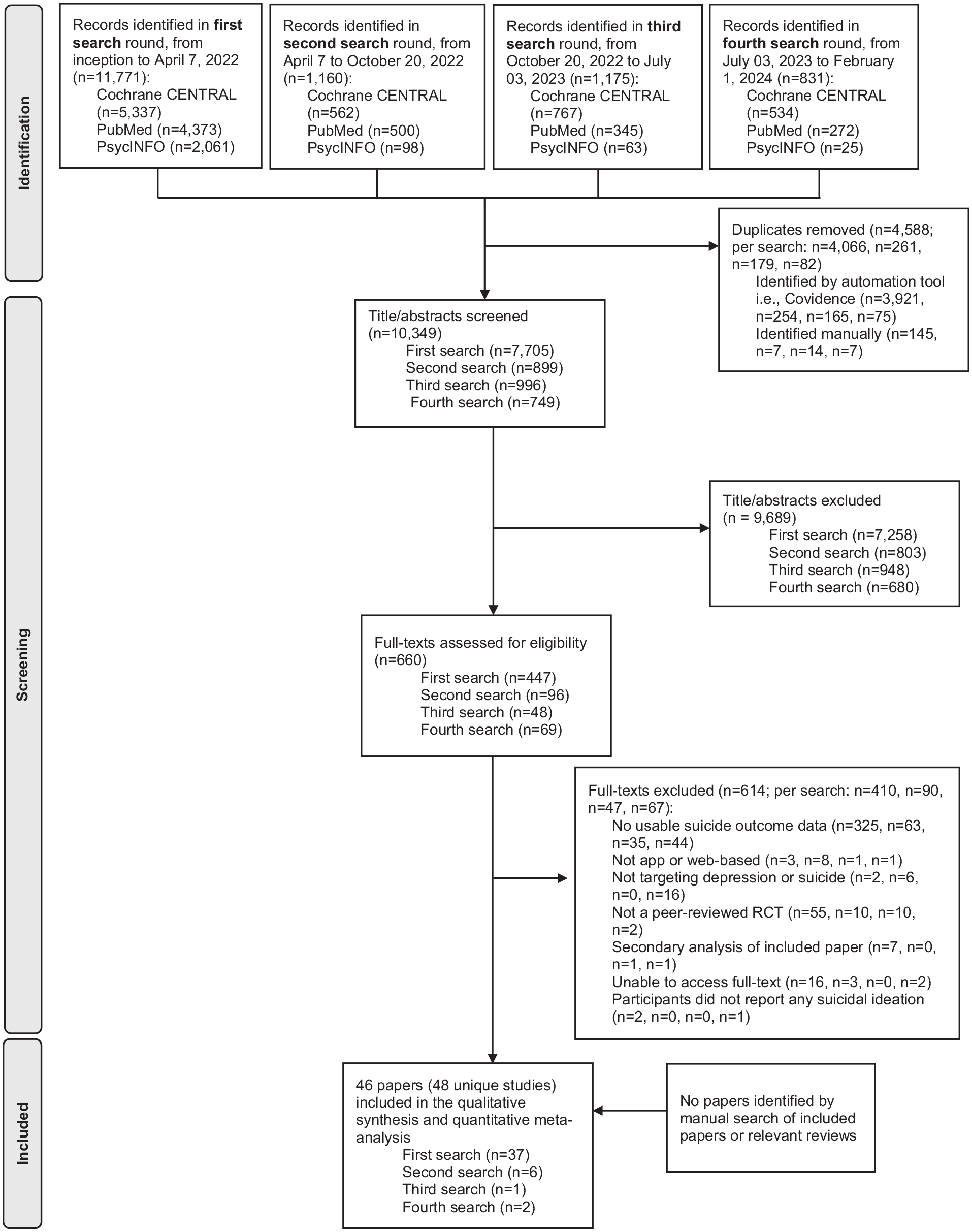

The literature searches identified 14,937 articles (11,771 from the first search, 1,160 from the second, 1,175 from the third, 831 from the fourth and none from manual searching). After removing duplicates and excluding irrelevant articles during screening, 46 articles were identified as eligible for inclusion in the review (Figure 1).

PRISMA flowchart depicting study selection.

The 46 included articles reported on 48 unique trials of digital interventions. Franklin et al. (2016) reported three separate studies with different participants, which were analysed separately. Additionally, Batterham et al. (2018, 2021), Comtois et al. (2022), Hooley et al. (2018) and Law et al. (2023) each included two intervention arms sharing the same control group. The intervention findings in each of these five studies were therefore combined to enable a pairwise comparison with their respective control groups (see note 1). In total, 27 (56.3%) of the studies reported on ‘direct’ interventions and 21 (43.8%) on ‘indirect’ interventions. The majority of the studies were delivered in community settings (n = 31; 61.3% direct, 71.0% theory-based and 80.6% self-guided), followed by clinical settings (n = 13; 53.8% direct, 76.9% theory-based and 23.1% self-guided) and education settings (n = 4; 25% direct, 100% theory-based and 75% self-guided); see Supplemental Table A1 for study characteristics and Supplemental Table A2 for a summary of results from each study. These studies included baseline data from 29,752 participants (mean age ranging from 14.7 to 59.6 years). Sample sizes ranged from 18 to 12,414 participants (mean = 619.8 [SD = 1781.9]; median = 223.5), and 22 studies (45.8%) had fewer than 200 participants. The majority of studies (n = 40, 83.3%) skewed towards female participants (range 8–89%), and the majority recruited adult participants (n = 42, 87.5%). Most studies were conducted in the USA (n = 19, 41.3%), Australia (n = 11, 23.9%) or Germany (n = 5, 10.9%).
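
The paper does not state how the two intervention arms in these studies were combined. One common approach, recommended in the Cochrane Handbook for combining groups, merges the arms' sample sizes, means and SDs into a single group before computing the effect size against the shared control, which avoids counting the control participants twice. The sketch below assumes that formula; the function names are hypothetical. For dichotomous outcomes, events and totals are simply summed.

```python
import math


def combine_arms(n1, m1, sd1, n2, m2, sd2):
    """Merge two arms into one group (Cochrane Handbook formula for combining groups)."""
    n = n1 + n2
    mean = (n1 * m1 + n2 * m2) / n
    # Within-arm sums of squares plus a term for the gap between the two arm means
    ss = (n1 - 1) * sd1**2 + (n2 - 1) * sd2**2 + (n1 * n2 / n) * (m1 - m2) ** 2
    return n, mean, math.sqrt(ss / (n - 1))


def combine_binary_arms(events1, n1, events2, n2):
    """Merge two arms of a dichotomous outcome by summing events and totals."""
    return events1 + events2, n1 + n2


# Arms with raw scores [1, 2, 3] and [4, 5, 6]: means 2 and 5, each with SD 1
n, mean, sd = combine_arms(3, 2.0, 1.0, 3, 5.0, 1.0)
print(n, mean, round(sd, 3))  # 6 3.5 1.871
```

The combined SD (about 1.871) equals the SD of the six raw scores taken together, which is the property the formula is designed to preserve.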

Studies compared the intervention with attention placebo (n = 22, 45.8%), TAU (n = 13, 27.1%) or waitlist conditions (n = 13, 27.1%). Almost all studies reported post-intervention suicidal ideation data (n = 43; 89.6%), and 15 (31.2%) reported suicidal behaviour. One study reported suicidal ideation outcome data at follow-up but not post-intervention (Batterham et al., 2021). Of those that reported suicidal ideation, 28 reported follow-up outcomes; two of these were excluded from the analyses because participants in the waitlist conditions were given access to the intervention after the post-intervention assessment. For the remaining 26 eligible studies, the mean duration to follow-up was 6.28 months (SD = 4.69) post-intervention.

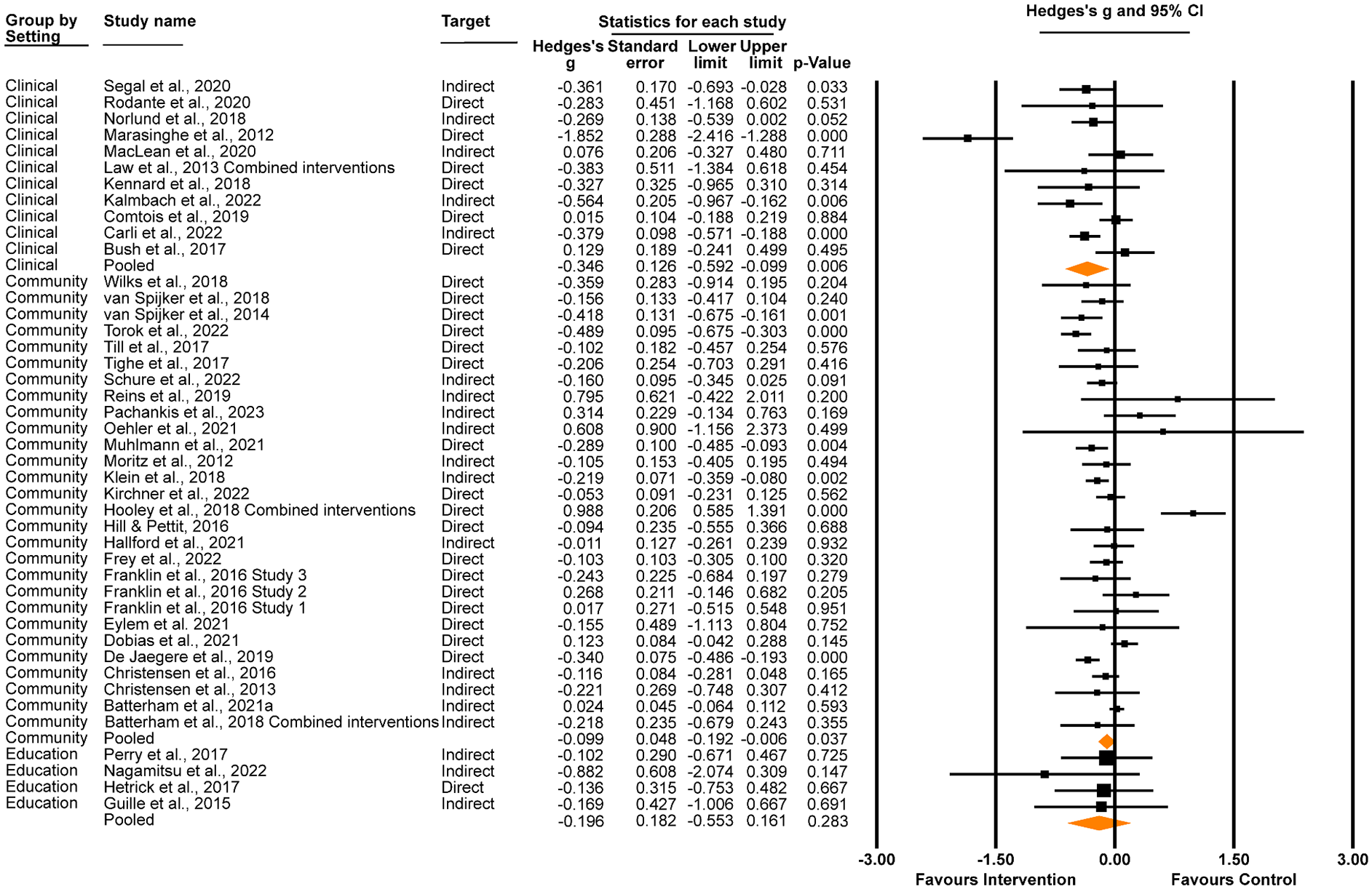

Comparison of digital interventions across settings

Our first research question compared the effectiveness of digital interventions on suicidal thoughts (Figure 2) and behaviours (Figure 3) when delivered in different settings. The first meta-analysis demonstrated a small, significant effect on suicidal ideation in favour of interventions delivered in clinical settings (Hedges’ g = −0.35, 95% CI [−0.59, −0.10], p = .006; I² = 79.48%, 95% CI [78.28%, 80.67%]), and a very small but significant effect in favour of interventions delivered in community settings (Hedges’ g = −0.10, 95% CI [−0.19, −0.01], p = .037; I² = 71.33%, 95% CI [69.87%, 72.78%]). No significant effect was found for digital interventions delivered in education settings (Hedges’ g = −0.20, 95% CI [−0.55, 0.16], p = .283; I² = 0%, 95% CI [−3.28%, 3.28%]). The difference in effect between clinical and community settings approached significance (z = 1.84, p = .066), whereas the other pairwise comparisons were non-significant (clinical vs. education settings, z = 0.68, p = .498; community vs. education settings, z = 0.52, p = .604). We also examined the total effect of digital interventions on suicidal ideation measured at post-intervention (n = 43, 93.5%), aggregated across settings. There was a small, significant reduction in suicidal ideation scores for intervention conditions compared to control conditions at post-intervention (Hedges’ g = −0.15, 95% CI [−0.24, −0.07], p < .001; I² = 72.34%, 95% CI [70.89%, 73.78%]).
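
The pairwise comparisons reported above can be approximately reproduced from the published summary statistics. The sketch below assumes each 95% CI is symmetric on the g scale, so SE ≈ (upper − lower)/(2 × 1.96), and compares two independent subgroup estimates with z = (g₁ − g₂)/√(SE₁² + SE₂²). The function names are hypothetical, and because the inputs are rounded to two decimals, the result will differ slightly from the value computed in Comprehensive Meta-Analysis. The sign of z depends only on which subgroup is entered first.

```python
import math


def se_from_ci(lower, upper, z_crit=1.959964):
    """Standard error implied by a symmetric 95% confidence interval."""
    return (upper - lower) / (2 * z_crit)


def compare_subgroups(g1, ci1, g2, ci2):
    """Two-sided z-test for the difference between two independent subgroup effects."""
    se1, se2 = se_from_ci(*ci1), se_from_ci(*ci2)
    z = (g1 - g2) / math.sqrt(se1**2 + se2**2)
    p = math.erfc(abs(z) / math.sqrt(2))  # equals 2 * (1 - Phi(|z|))
    return z, p


# Clinical vs. community settings, using the rounded estimates reported above
z, p = compare_subgroups(-0.35, (-0.59, -0.10), -0.10, (-0.19, -0.01))
print(f"|z| = {abs(z):.2f}, p = {p:.2f}")  # |z| = 1.88, p = 0.06
```

The back-calculated |z| ≈ 1.88 (p ≈ .06) is close to the reported z = 1.84, p = .066, with the small gap attributable to rounding in the published intervals.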

Effect of digital interventions delivered in clinical, community and education settings on suicidal ideation at post-intervention.

Effect of digital interventions on suicidal behaviours at post-intervention, grouped by setting.

As shown in Figure 3, there was no significant effect of digital interventions on suicidal behaviour at post-intervention in clinical settings (Hedges’ g = −0.07, 95% CI [−0.33, 0.19], p = .609; I² = 66.46%, 95% CI [65.75%, 67.16%]), community settings (Hedges’ g = 0.01, 95% CI [−0.15, 0.17], p = .866; I² = 47.07%, 95% CI [46.47%, 47.66%]) or education settings (Hedges’ g = −1.05, 95% CI [−2.69, 0.59], p = .209; I² = 0.00%). Correspondingly, there were no significant differences between settings (clinical vs. community settings, z = 0.34, p = .737; clinical vs. education settings, z = 1.16, p = .245; community vs. education settings, z = 1.23, p = .217). Aggregated across settings, there was no significant effect on suicidal behaviours (Hedges’ g = −0.02, 95% CI [−0.15, 0.12], p = .794; I² = 53.24%, 95% CI [52.99%, 53.49%]).

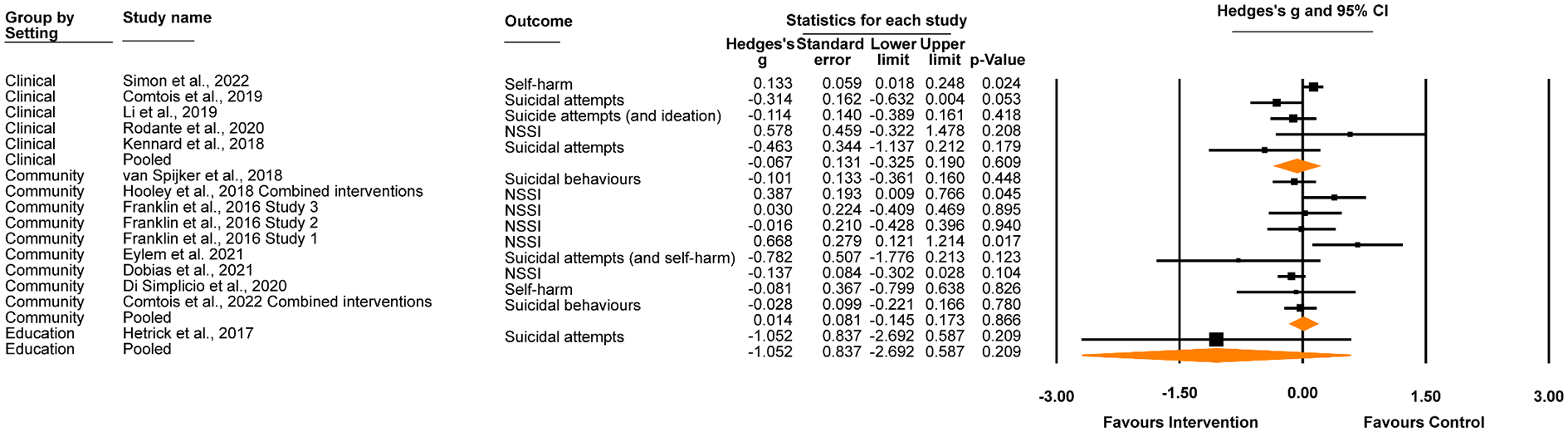

Comparison of direct and indirect interventions

Findings for direct and indirect interventions by setting are shown in Figure 4. Within clinical settings, there was a small, significant effect of indirect interventions on suicidal ideation scores at post-intervention (Hedges’ g = −0.32, 95% CI [−0.48, −0.16], p < .001; I² = 29.56%, 95% CI [27.55%, 31.58%]), but not of direct interventions (Hedges’ g = −0.44, 95% CI [−1.01, 0.14], p = .134; I² = 87.60%, 95% CI [86.73%, 88.47%]), although this difference was not significant (z = −0.39, p = .695). The effects of direct and indirect digital interventions on suicidal ideation scores at post-intervention were not significant for community interventions (direct: Hedges’ g = −0.11, 95% CI [−0.26, 0.04], p = .137; I² = 38.47%, 95% CI [36.41%, 40.54%]; indirect: Hedges’ g = −0.08, 95% CI [−0.17, 0.02], p = .099; I² = 78.02%, 95% CI [76.76%, 79.28%]; z = 0.37, p = .721) or for interventions delivered in education settings (direct: Hedges’ g = −0.14, 95% CI [−0.75, 0.48], p = .667; I² = 0% [see note 2]; indirect: Hedges’ g = −0.23, 95% CI [−0.66, 0.21], p = .312; I² = 0%, 95% CI [−2.37%, 2.37%]; z = −0.23, p = .815). Aggregated across settings, small, significant effects on suicidal ideation scores at post-intervention were observed for both direct (Hedges’ g = −0.16, 95% CI [−0.30, −0.02], p = .029; I² = 79.67%, 95% CI [78.45%, 80.89%]) and indirect digital interventions (Hedges’ g = −0.15, 95% CI [−0.25, −0.06], p = .002; I² = 51.62%, 95% CI [49.75%, 53.49%]), with no significant difference between them (z = 0.084, p = .933).

Effect of direct and indirect digital interventions on suicidal ideation at post-intervention, in (i) clinical, (ii) community and (iii) education settings.

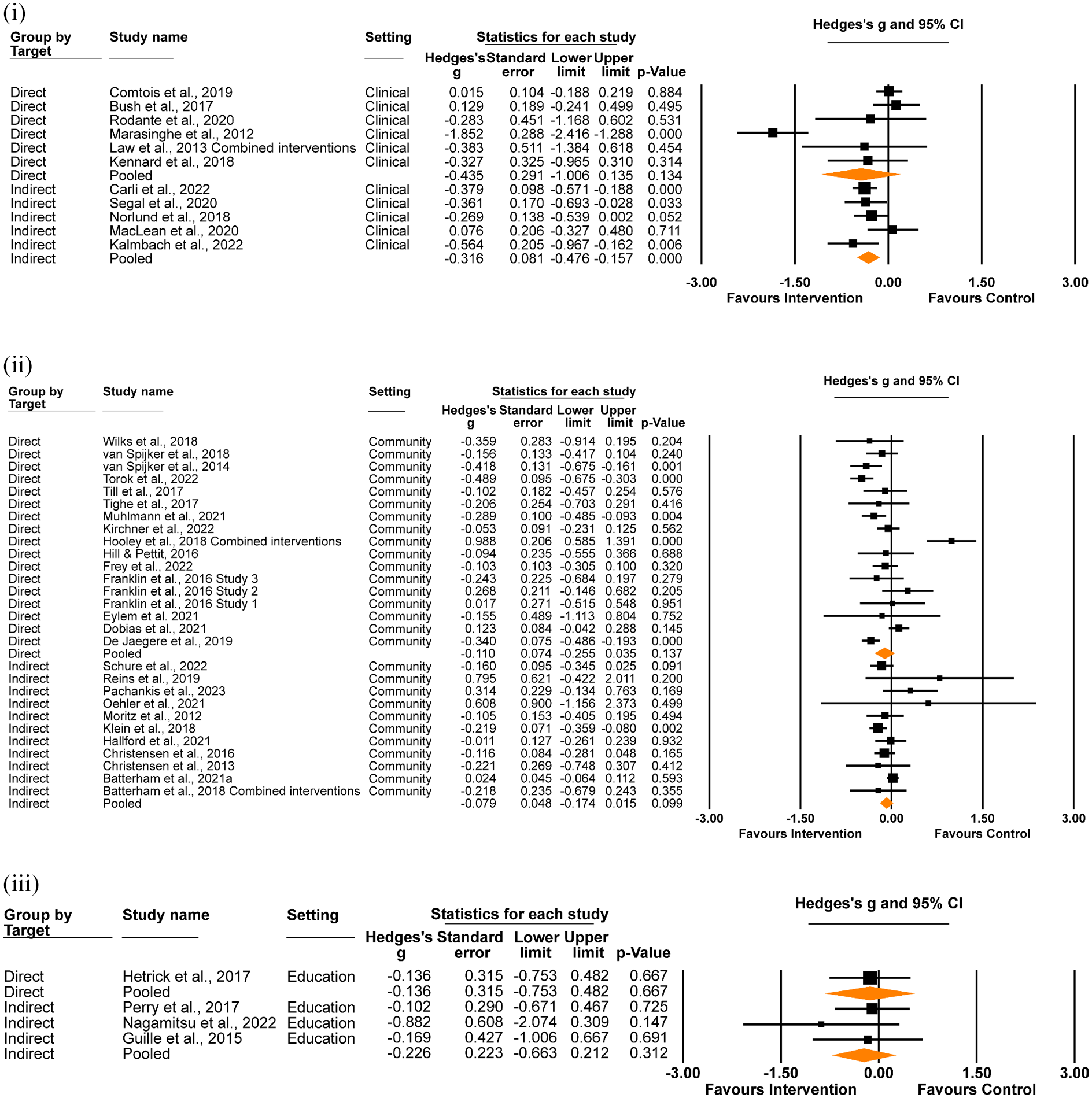

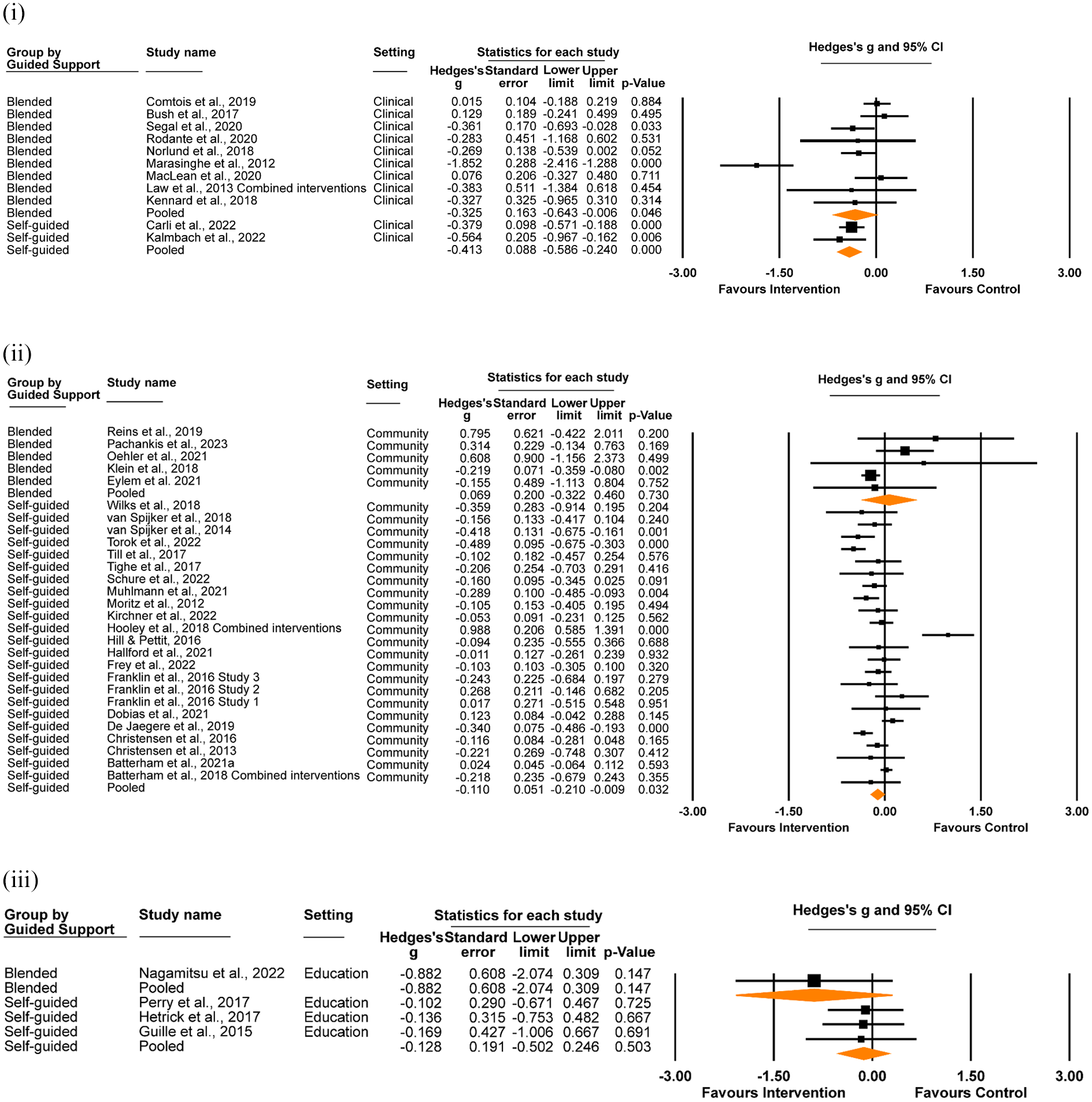

Effect of delivery model on suicidal ideation

We evaluated the effect of self-guided versus blended digital interventions by setting (Figure 5). In clinical settings, both self-guided and blended interventions demonstrated a significant effect on suicidal ideation, although the difference between the delivery models did not reach significance (self-guided Hedges’ g = −0.41, 95% CI [−0.59, −0.24], p < .001; I² = 0.00%, 95% CI [0.00%, 0.00%] [see note 3]; blended Hedges’ g = −0.33, 95% CI [−0.64, −0.01], p = .046; I² = 81.62%, 95% CI [79.93%, 83.31%]; z = 0.47, p = .063). In community settings, self-guided but not blended interventions had a significant effect on suicidal ideation (self-guided Hedges’ g = −0.11, 95% CI [−0.21, −0.01], p = .032; I² = 74.28%, 95% CI [72.78%, 75.78%]; blended Hedges’ g = 0.07, 95% CI [−0.32, 0.46], p = .730; I² = 50.55%, 95% CI [48.53%, 52.56%]; z = 0.86, p = .388). Neither self-guided nor blended interventions demonstrated a significant effect in education settings (self-guided Hedges’ g = −0.13, 95% CI [−0.50, 0.25], p = .503; I² = 0.00%, 95% CI [−7.06%, 7.06%]; blended Hedges’ g = −0.88, 95% CI [−2.07, 0.31], p = .147; I² = 0.00%; z = 1.18, p = .237).

Effect of delivery model (self-guided vs. blended) on suicidal ideation at post-intervention, in (i) clinical, (ii) community and (iii) education settings.

When aggregating the effect of delivery model across settings, there was a significant effect for self-guided interventions (Hedges’ g = −0.14, 95% CI [−0.28, −0.04], p = .004; I² = 72.37%, 95% CI [70.94%, 73.80%]), whereas the effect for blended models did not reach significance (Hedges’ g = −0.22, 95% CI [−0.44, 0.01], p = .057; I² = 73.62%, 95% CI [72.04%, 74.98%]). No significant difference between self-guided and blended models was observed (z = −0.64, p = .524).

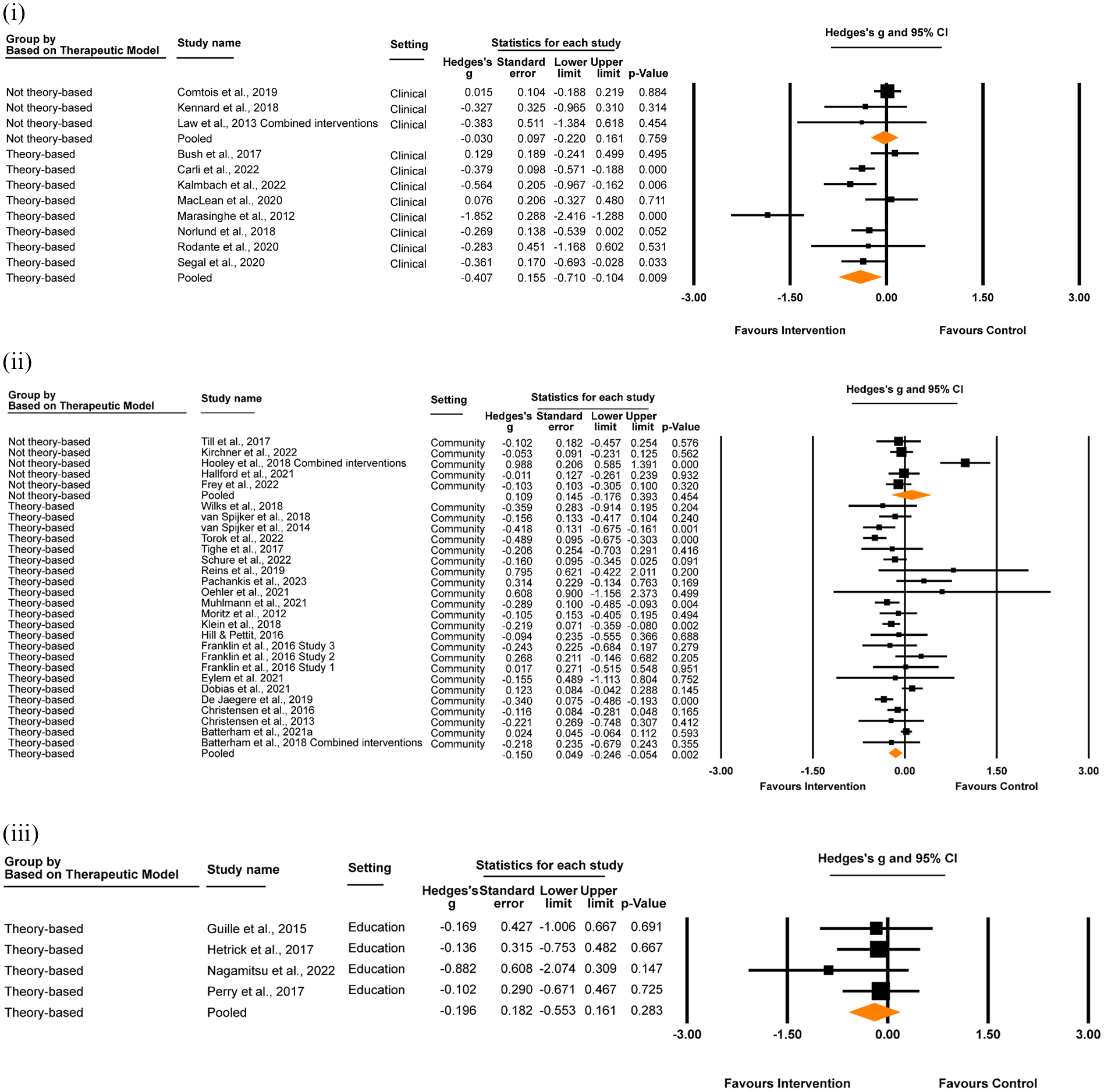

Effect of theoretical model on suicidal ideation

We also assessed whether designing interventions based on therapeutic models (e.g. CBT, DBT) influenced their effect on suicidal ideation (Figure 6). In clinical settings, theory-based digital interventions were associated with a significant reduction in suicidal ideation, whereas non-theory-based interventions showed no effect; this difference was significant (theory-based Hedges’ g = −0.41, 95% CI [−0.71, −0.10], p = .009; I² = 82.30%, 95% CI [79.09%, 85.51%]; non-theory-based Hedges’ g = −0.03, 95% CI [−0.22, 0.16], p = .759; I² = 0.00%, 95% CI [−0.35%, 0.35%]; z = 2.08, p = .037). A similar pattern was observed in community settings; however, the difference was not significant (theory-based Hedges’ g = −0.15, 95% CI [−0.25, −0.05], p = .002; I² = 65.53%, 95% CI [61.01%, 70.07%]; non-theory-based Hedges’ g = 0.11, 95% CI [−0.18, 0.39], p = .454; I² = 83.79%, 95% CI [83.42%, 84.16%]; z = 1.71, p = .087). All interventions delivered in education settings were theory-based, so a comparison was not possible.

Effect of interventions designed with and without theoretical therapeutic models (theory-based vs. not theory-based) on suicidal ideation at post-intervention, in (i) clinical, (ii) community and (iii) education settings.

When aggregated across settings, there was a small, significant effect in favour of interventions designed using theory-based models (Hedges’ g = −0.20, 95% CI [−0.30, −0.11], p < .001; I² = 70.69%, 95% CI [68.98%, 71.95%]), which was not apparent for interventions designed using non-theory-based approaches (Hedges’ g = 0.04, 95% CI [−0.17, 0.24], p = .719; I² = 73.39%, 95% CI [72.06%, 74.72%]). However, this difference was not significant (z = 1.43, p = .153).

Additional analyses

We analysed the effect of direct interventions compared with control conditions on depression measured at post-intervention (n = 13, 27.1%; Supplemental Figure B1). Direct interventions had a small, significant effect on depression scores at post-intervention (Hedges’ g = −0.32, 95% CI [−0.46, −0.18], p < .001; I2 = 63.64%, 95% CI [62.04%, 65.23%]). Direct interventions also had a small, significant effect on anxiety (Hedges’ g = −0.22, 95% CI [−0.41, −0.04], p = .02; I2 = 67.54%, 95% CI [66.84%, 68.24%]; Supplemental Appendix B, Figure B2).
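All effects in this section are expressed as Hedges’ g. The sketch below computes g from post-intervention means and standard deviations. It uses the usual pooled-SD standardised mean difference and the small-sample correction factor J = 1 − 3/(4df − 1). The function name is hypothetical. The sign convention is also an assumption: intervention minus control, so negative values favour the intervention, matching the direction of effects reported here. Included trials may have supplied other statistics from which g was derived.

```python
import math


def hedges_g(mean_tx, sd_tx, n_tx, mean_ctl, sd_ctl, n_ctl):
    """Bias-corrected standardised mean difference (Hedges' g) and its sampling variance.

    Assumed sign convention: intervention minus control, so a negative g means
    lower (better) post-intervention symptom scores in the intervention arm.
    """
    df = n_tx + n_ctl - 2
    # Pooled within-group standard deviation.
    sd_pooled = math.sqrt(((n_tx - 1) * sd_tx ** 2 + (n_ctl - 1) * sd_ctl ** 2) / df)
    d = (mean_tx - mean_ctl) / sd_pooled  # uncorrected Cohen's d
    j = 1 - 3 / (4 * df - 1)  # small-sample correction factor J
    g = j * d
    # Approximate sampling variance of d, rescaled by J^2 for g.
    var_d = (n_tx + n_ctl) / (n_tx * n_ctl) + d ** 2 / (2 * (n_tx + n_ctl))
    return g, j ** 2 * var_d
```

Consider hypothetical arms of 20 participants each, with means of 10 and 12 and SDs of 4. The function returns g ≈ −0.49 with a sampling variance of about 0.099.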

We also assessed whether inactive controls (treatment as usual or waitlist control groups) differed from attention placebo control groups on suicidal ideation outcomes (Supplemental Figure B3). Interventions compared to treatment as usual or waitlist control conditions demonstrated a significant effect on suicidal ideation (n = 23, 53.5%; Hedges’ g = –0.22, 95% CI [–0.34, –0.11], p < .001; I2 = 63.45%, 95% CI [61.81%, 65.09%]) which was not found for interventions compared to attention placebo control conditions (n = 20, 46.5%; Hedges’ g = –0.07, 95% CI [–0.20, 0.05], p = .258; I2 = 74.79%, 95% CI [73.44%, 76.15%]). The difference did not reach significance (z = 1.81, p = .071).

Lastly, we examined the effect of digital interventions compared to control conditions on suicidal ideation outcomes at the longest follow-up timepoint for studies in which data were available (n = 26, 60.5%; Supplemental Figure B4). There was a significant effect of digital interventions in reducing suicidal ideation at follow-up (Hedges’ g = −0.15, 95% CI [−0.26, −0.04], p = .006; I2 = 70.83%, 95% CI [69.36%, 72.29%]). A similar analysis found no significant effect for suicidal behaviour outcomes at the longest follow-up timepoint (Supplemental Figure B5; n = 4, Hedges’ g = −0.26, 95% CI [−0.56, 0.05], p = .097; I2 = 51.02%, 95% CI [50.21%, 51.83%]).

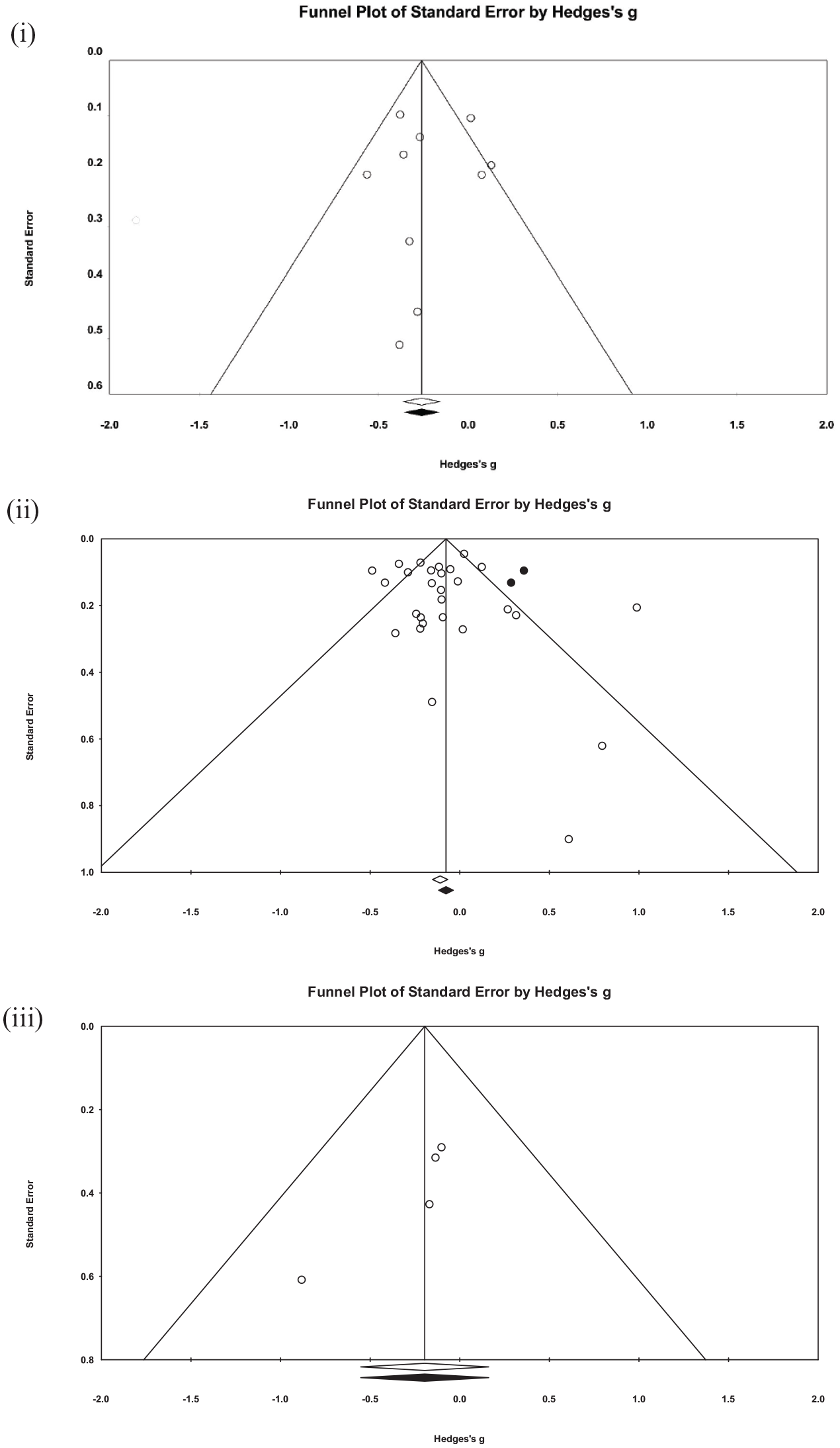

Publication bias and sensitivity analyses

There was no indication of publication bias for studies in clinical settings. Egger’s test was non-significant (intercept = −1.43, SE = 1.51, p = .184), and the trim-and-fill method imputed no studies (Figure 7). Similarly, Egger’s test provided no evidence of publication bias for studies in community settings (intercept = 0.32, SE = 0.675, p = .322). However, two studies were imputed there, yielding an adjusted effect size of −0.06 (95% CI [−0.16, 0.04]). Given the small number of studies in education settings, Egger’s test was not appropriate. However, visual inspection of the funnel plot suggests considerable asymmetry, consistent with publication bias within this subgroup. Aggregated across settings, Egger’s test provided no evidence of publication bias (intercept = −0.33, SE = 0.50, p = .271). Even so, the trim-and-fill method imputed seven studies, yielding an adjusted effect size of −0.07 (95% CI [−0.17, 0.02]).
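Egger’s test, used above to assess funnel-plot asymmetry, regresses each study’s standardised effect (g/SE) on its precision (1/SE). It then tests whether the intercept differs from zero. A minimal sketch under that textbook formulation follows; the function name is hypothetical. It returns the t statistic and degrees of freedom rather than a p-value, because the Python standard library has no t distribution. A two-sided p-value could be obtained, for example, with `2 * scipy.stats.t.sf(abs(t), df)`.

```python
import math


def eggers_test(effects, ses):
    """Egger's regression test for funnel-plot asymmetry.

    Regresses the standardised effect (g / SE) on precision (1 / SE) by ordinary
    least squares; an intercept far from zero suggests small-study effects.
    Returns (intercept, SE of intercept, t statistic, degrees of freedom).
    """
    k = len(effects)
    if k < 3:
        raise ValueError("Egger's test needs at least three studies")
    x = [1.0 / s for s in ses]                 # precision
    y = [g / s for g, s in zip(effects, ses)]  # standardised effect
    x_bar = sum(x) / k
    y_bar = sum(y) / k
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = y_bar - slope * x_bar
    # Residual variance on k - 2 degrees of freedom.
    rss = sum((yi - (intercept + slope * xi)) ** 2 for xi, yi in zip(x, y))
    df = k - 2
    se_intercept = math.sqrt(rss / df * (1.0 / k + x_bar ** 2 / sxx))
    # A perfect fit leaves the t statistic undefined.
    t = intercept / se_intercept if se_intercept > 0 else math.nan
    return intercept, se_intercept, t, df
```

The t statistic is on k − 2 degrees of freedom. This is one reason the test is unreliable with very few studies, as noted above for education settings.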

Figure 7. Funnel plots of studies reporting suicidal ideation scores at post-intervention for interventions delivered in (i) clinical, (ii) community and (iii) education settings. Open circles represent studies included in the analysis; black circles represent studies imputed by the trim-and-fill method. Studies inside the triangle have non-significant effects and studies outside the triangle have significant effects, at p = .05.
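The significance triangle described in the funnel plot caption can be expressed as a simple rule. This assumes the triangle is centred on g = 0, as the caption’s significance interpretation implies. Under that assumption, a study lies outside the triangle when |g| exceeds 1.96 × SE. The helper names below are hypothetical.

```python
from statistics import NormalDist


def funnel_bounds(se, alpha=0.05):
    """Horizontal limits of the zero-centred triangle at a given standard error."""
    z_crit = NormalDist().inv_cdf(1 - alpha / 2)
    return -z_crit * se, z_crit * se


def outside_funnel(g, se, alpha=0.05):
    """True if a study falls outside the triangle, i.e. its own effect is significant."""
    lower, upper = funnel_bounds(se, alpha)
    return g < lower or g > upper
```

Because the triangle widens as SE grows, small imprecise studies need larger effects to fall outside it. Asymmetry in where studies sit relative to the triangle is what visual inspection picks up.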

We conducted leave-one-out analyses within each setting (Supplemental Figure B6). In clinical settings, the largest change in effect size occurred when omitting Marasinghe et al. (2012), which reduced the magnitude of Hedges’ g from −0.34 to −0.21. No single omission rendered the random-effects model non-significant. In community settings, omitting any one of a considerable number of studies rendered the random-effects model non-significant (n = 11 of 28; 39.3%). The largest change in effect size occurred when omitting Hooley et al. (2018), which increased the magnitude of Hedges’ g from −0.09 to −0.14. In education settings, the model remained non-significant regardless of which study was omitted. The greatest change in effect size occurred when omitting Perry et al. (2017) (Hedges’ g from −0.19 to −0.26). Across all settings, no single omission rendered the model non-significant. The largest reduction in effect size occurred when omitting Marasinghe et al. (2012), which reduced the magnitude of Hedges’ g from −0.15 to −0.13.
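The leave-one-out procedure re-estimates the pooled effect k times, each time omitting one study. It then records whether the result stays significant. The sketch below assumes a DerSimonian-Laird random-effects model and a normal-approximation significance test at p < .05. Neither choice is confirmed by this section, and the function names are hypothetical.

```python
import math


def dl_pool(effects, variances):
    """DerSimonian-Laird random-effects estimate and its standard error."""
    w = [1.0 / v for v in variances]
    sw = sum(w)
    fixed = sum(wi * g for wi, g in zip(w, effects)) / sw
    q = sum(wi * (g - fixed) ** 2 for wi, g in zip(w, effects))
    c = sw - sum(wi ** 2 for wi in w) / sw
    tau2 = max(0.0, (q - (len(effects) - 1)) / c) if c > 0 else 0.0
    w_re = [1.0 / (v + tau2) for v in variances]
    pooled = sum(wi * g for wi, g in zip(w_re, effects)) / sum(w_re)
    return pooled, math.sqrt(1.0 / sum(w_re))


def leave_one_out(labels, effects, variances):
    """Re-pool the model once per study, omitting that study each time.

    Returns (omitted label, pooled g, SE, still significant at p < .05) tuples.
    """
    results = []
    for i, label in enumerate(labels):
        g_rest = effects[:i] + effects[i + 1:]
        v_rest = variances[:i] + variances[i + 1:]
        pooled, se = dl_pool(g_rest, v_rest)
        significant = abs(pooled / se) > 1.959964  # two-sided 5% critical value
        results.append((label, pooled, se, significant))
    return results
```

Consider a toy input of three studies at g = −0.1 and one outlier at g = −1.0, all with equal variances. Omitting the outlier produces the largest shift in the pooled estimate. This mirrors how the influential studies named above are identified.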

When studies with a high risk of bias were excluded (see ‘Risk of bias’ below for full results, and Supplemental Figure B7), the effect in clinical settings remained significant (Hedges’ g = −0.35, 95% CI [−0.59, −0.09], p = .006; I2 = 79.48%, 95% CI [77.80%, 81.15%]). By contrast, the effect in community settings was no longer significant (Hedges’ g = −0.09, 95% CI [−0.19, 0.01], p = .069; I2 = 73.23%, 95% CI [71.93%, 74.53%]). The effect size in education settings was minimally affected (Hedges’ g = −0.20, 95% CI [−0.59, 0.19], p = .317; I2 = 0.00%, 95% CI [−1.19%, 1.19%]). When studies with a high risk of bias were excluded across all settings, the effect size was similar to the overall effect on suicidal ideation at post-intervention (Hedges’ g = −0.15, 95% CI [−0.24, −0.06], p = .001; I2 = 74.19%, 95% CI [72.80%, 75.58%]). The I2 values indicate substantial heterogeneity in the overall model.

Risk of bias

From the risk of bias assessments, we found that the methodological quality of the included studies was generally good (see Supplemental Table B1 for assessment ratings). Overall, only 2 studies (4.2%) were assessed as having a high risk of bias, with 32 (66.7%) assessed as having some concern. The largest source of potential bias was in measurement of the outcome at post-intervention, with 25 of the 48 studies (52.1%) presenting some concern.

Discussion

This study provides a comprehensive examination of how the settings in which digital interventions are designed and tested may affect their effectiveness on suicide-related outcomes, and why. The review included 48 unique trials (involving >29,000 participants) that tested digital interventions in clinical, community and education settings. Each assessed suicidal ideation, self-harm or suicide attempt as a primary or secondary outcome. Only clinical- and community-based interventions yielded small, significant effects for reducing suicidal ideation at post-intervention (Hedges’ g = −0.35 and −0.10, respectively). Although the effect was larger in clinical settings, it did not differ significantly from that observed in community settings. When studies were aggregated, we observed a small, significant pooled effect of digital interventions on suicidal ideation (Hedges’ g = −0.15). This was of similar magnitude to those observed in prior reviews (effect sizes ranging from −0.18 to −0.29; Buscher et al., 2020, 2022; Sutori et al., 2024; Torok et al., 2020). The consistency of findings across reviews indicates that digital interventions are modestly effective in reducing the intensity of suicidal ideation, although it remains unclear whether these changes are clinically meaningful for participants. Null effects were observed for self-harm and suicidal behaviours, both by setting and when studies were pooled, also consistent with prior reviews (Buscher et al., 2022; Sutori et al., 2024; Torok et al., 2020). Self-harm and suicide attempt outcomes were assessed in few studies, typically only as secondary or exploratory outcomes. The difficulty of detecting significant change in these rare outcomes is therefore likely an artefact of insufficient statistical power (Witt et al., 2017).
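The power argument above can be made concrete with standard normal-approximation sample-size formulas for a two-arm trial (two-sided α = .05, 80% power). The sketch contrasts two cases. The first is a continuous ideation outcome with a standardised difference of 0.15, about the pooled magnitude reported here. The second is a rare binary outcome. The 4% vs. 3% event rates are hypothetical and not drawn from the included trials, and the function names are illustrative.

```python
import math
from statistics import NormalDist

_norm = NormalDist()


def n_per_arm_continuous(effect_size, alpha=0.05, power=0.80):
    """Participants per arm to detect a standardised mean difference (two-sided test)."""
    z_a = _norm.inv_cdf(1 - alpha / 2)
    z_b = _norm.inv_cdf(power)
    return math.ceil(2 * (z_a + z_b) ** 2 / effect_size ** 2)


def n_per_arm_proportions(p_control, p_treat, alpha=0.05, power=0.80):
    """Participants per arm to detect a difference between two event rates
    (normal approximation, two-sided test)."""
    z_a = _norm.inv_cdf(1 - alpha / 2)
    z_b = _norm.inv_cdf(power)
    p_bar = (p_control + p_treat) / 2
    numerator = (
        z_a * math.sqrt(2 * p_bar * (1 - p_bar))
        + z_b * math.sqrt(p_control * (1 - p_control) + p_treat * (1 - p_treat))
    ) ** 2
    return math.ceil(numerator / (p_control - p_treat) ** 2)


# Ideation: standardised difference of 0.15, similar to the pooled estimate above.
print(n_per_arm_continuous(0.15))
# Hypothetical event rates of 4% vs. 3% (illustrative only, not from the included trials).
print(n_per_arm_proportions(0.04, 0.03))
```

Under these assumptions, about 700 participants per arm suffice for the continuous outcome. By contrast, over 5,000 per arm would be needed to detect the hypothetical one-percentage-point reduction in events. This illustrates why trials powered for ideation are rarely powered for self-harm or suicide attempts.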

Although the differences in intervention effectiveness for ideation by setting were slight, it is useful to consider which clinical and methodological confounders may have contributed to this variance. The largest effect was observed in clinical settings, where trials typically involve patients with more severe symptoms than trials recruiting from community-based settings (including schools). Baseline symptom severity has been shown in other studies to be strongly prognostic of treatment response (Scholten et al., 2023), so larger effects would be expected in trials treating indicated populations. Conversely, education settings were the only group in which the main effect for ideation was non-significant. Only four studies met criteria for the education setting, three of which (75%) were indirect interventions designed to reduce depression. The only direct intervention achieved less than 30% of its recruitment target (Hetrick et al., 2017). Because suicidal ideation is less prevalent than depression in adolescents (Voss et al., 2019), the trials conducted in schools were likely insufficiently powered to detect clinically significant change in suicidal thoughts. More high-quality trials of digital interventions in education settings are needed to determine the benefits of these tools for young people experiencing suicidal ideation.

At an aggregate level, direct and indirect interventions were similarly effective in reducing suicidal ideation (g = −0.16 and −0.15, respectively). This contradicts a prior review that found only direct interventions to be effective (Torok et al., 2020). The effect for indirect interventions approached significance in that review (p = .07), so the greater number of trials included in the current review may explain the different outcome. When examined by setting, however, we unexpectedly found that indirect but not direct digital interventions were effective in clinical settings. This may in part reflect differences in the quality of the interventional approach. Over 40% of direct clinical interventions were non-theory-based (and did not target negative cognitions linked to changes in mood and affect), whereas all indirect clinical interventions were grounded in third-wave CBT approaches. This explanation is consistent with our analysis of theoretical basis. In clinical settings, interventions grounded in proven therapeutic approaches were not only effective but also statistically superior to non-theory-based interventions for reducing ideation (g = −0.41 vs. g = −0.03, respectively). Theory-based approaches were also effective (and non-theory-based approaches ineffective) in community settings and in the total pool of studies, although the differences between them were not statistically significant in these analyses. Therapeutic alignment therefore appears to be an important ingredient for effectively reducing ideation. While this finding is reassuring, there is a substantial and concerning disparity between ‘what works’ and ‘what is publicly available’. Many industry-developed direct-to-consumer interventions (e.g. websites, apps) are untested (Cohen et al., 2020) and contain few components based on proven therapeutic models (one on average; Larsen et al., 2016).
Stronger industry-academic alliances are needed to increase the availability of effective and safe digital interventions to patients. In such alliances, commercialisation goals would be balanced against theoretical alignment and well-designed systems for monitoring effects and harms.

Differences between blended care and self-guided models were observed in community settings and in the aggregate pool of studies. Self-guided interventions showed small, significant effects on suicidal ideation, whereas blended care models did not reach significance. In clinical settings, both delivery models were effective. However, pairwise comparisons showed neither self-guided nor blended care models to be superior in any setting, nor overall. These findings are highly consistent with a recent meta-analysis that directly compared human-guided and self-guided models. That analysis found almost negligible differences between the effects of these models for common mental disorders (g = −0.11; Koelen et al., 2022). The comparable effectiveness of these delivery modes may reflect advances in how design thinking principles have been applied to self-guided digital models to improve usability and appeal (Gan et al., 2022; Kraepelien et al., 2023). Such advances would reduce the contribution of human support to therapeutic gains. Practically, the similar performance of these delivery models implies that digital interventions, irrespective of delivery model, can be used flexibly to meet the needs of different patient populations across settings. This flexibility can address variation in the degree to which stigma, confidentiality, privacy (e.g. Josifovski et al., 2022) and logistical challenges act as barriers to care.

The included studies were diverse, differing in therapeutic modality, delivery mode (self-guided vs. blended care), comparator conditions and outcome measurement. This variance was reflected in substantial heterogeneity in the overall analysis of all included RCTs (I2 = 72%), which is typical of meta-analytic studies. Follow-up periods also varied across studies, ranging from immediately post-intervention to 18 months post-baseline. In addition, the ‘self-guided’ nature of an intervention may be compromised when studies incorporate safety measures (e.g. follow-up phone calls). The validity of our findings is, however, supported by the overall good quality of the underlying studies. Only 4% were assessed as having a high risk of bias, and most allocated participants and handled missing data appropriately. Egger’s test also provided no evidence of publication bias across the entire pool of included studies. However, the trim-and-fill adjusted pooled effect was smaller and non-significant (g = −0.07, 95% CI [−0.17, 0.02]).

Taken together, the findings provide directions for future research as well as practical implications for the design and delivery of digital suicide prevention interventions. There is now good evidence for the delivery of digital interventions within clinical settings, which supports their implementation. However, the difference between self-guided and blended interventions in this setting was near the threshold of significance, so future work could directly compare these delivery modes. A significant effect was found in trials in community settings, but it was not robust to the leave-one-out sensitivity analysis or to excluding studies with a high risk of bias. Further high-quality trials of interventions in community settings may therefore be warranted. More high-quality trials in education settings are needed before their benefits for young people can be determined. Almost all eligible research was from high-income countries (94% of included studies). Yet most suicides occur in low- and middle-income countries (LMICs), where mental health services are inadequate and where the promise of digital care most needs to be realised. Future research should include diverse samples from LMICs to establish whether the overall and setting-specific effects observed in this study hold. This would advance current understanding of for whom digital interventions work. If effects vary, manipulating therapeutic components and testing different implementation factors and diffusion pathways across settings may help clarify how these tools can better meet individuals’ needs. Further, the real-world effectiveness of these interventions depends on stronger alliances between industry and academia, so that implementation and commercialisation are balanced with theoretical alignment.
There is much work to be done in developing digital suicide prevention tools that can support behavioural change, given the currently limited evidence that these innovations effectively prevent self-harm and suicide attempts. Dismantling studies of digital suicide prevention interventions, mapped to ideation-to-action theories, may help clarify which targets are most effective for reducing suicidal ideation versus suicidal behaviour. Such studies could thereby help optimise the effects of digital interventions.

When considered alongside previous similar meta-analyses, the overall findings of this study suggest that psychological interventions delivered via digital devices or platforms have promise for supporting individuals to manage suicidal ideation. Basing digital content on proven therapeutic models appears to be one of the more important ingredients for effectiveness. However, there was no compelling evidence of setting-specific effects or of effects for most other design features, such as whether suicidality is directly targeted. This finding is in many ways good news. It suggests greater flexibility than previously thought in how digital interventions may promote equitable care provision across settings and meet people where they are. At the same time, there is still a lack of evidence that these interventions reduce suicidal behaviours, a necessary next step in suicide prevention.

Supplemental Material

sj-docx-1-isp-10.1177_00207640251358109 – Supplemental material for What works where and why? A systematic review and meta-analysis of digital interventions addressing suicide-related outcomes in community, education and clinical settings

Supplemental material, sj-docx-1-isp-10.1177_00207640251358109 for What works where and why? A systematic review and meta-analysis of digital interventions addressing suicide-related outcomes in community, education and clinical settings by Natasha Josifovski, Sylvia Eugene Dit Rochesson, Quincy JJ Wong, Jin Han, Mark E Larsen and Michelle Torok in International Journal of Social Psychiatry

Further supplemental files for the same article: sj-docx-2-isp-10.1177_00207640251358109, sj-docx-3-isp-10.1177_00207640251358109 and sj-docx-4-isp-10.1177_00207640251358109.

Footnotes

Author contributions

Natasha Josifovski: investigation, formal analysis, validation, writing – original draft, writing – review and editing, visualisation and project administration. Sylvia Eugene Dit Rochesson: investigation, formal analysis, validation, writing – original draft, writing – review and editing, visualisation and project administration. Quincy JJ Wong: conceptualisation, methodology, validation, formal analysis and writing – review and editing. Jin Han: conceptualisation, methodology, validation, formal analysis and writing – review and editing. Michelle Torok: conceptualisation, methodology, validation, formal analysis, investigation, resources, data curation, writing – review and editing, supervision and project administration. Mark E Larsen: conceptualisation, methodology, validation, formal analysis, investigation, resources, data curation, writing – review and editing, supervision and project administration. All authors contributed to and have approved the final manuscript.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: No specific funding was received to support this research, but MT was supported by the National Health and Medical Research Council (NHMRC GNT2007731). The NHMRC had no role in the study design, collection, analysis or interpretation of the data, writing the manuscript or the decision to submit the paper for publication.

Ethical approval and informed consent statements

Ethics approval was not required for this review of previously published studies.

Protocol registration

The protocol for this systematic review was registered on PROSPERO (CRD42022314246).

Data availability statement

Extracted data are available on request to the corresponding author.

Supplemental material

Supplemental material for this article is available online.


References
