Abstract

Objective

We investigated the extent to which a voluntary-use range and bearing line (RBL) tool improves return-to-manual performance when supervising high-degree conflict detection automation in simulated air traffic control.

Background

High-degree automation typically benefits routine performance and reduces workload, but can degrade return-to-manual performance if automation fails. We reasoned that providing a voluntary checking tool (RBL) would support automation failure detection, but also that automation-induced complacency could extend to nonoptimal use of such tools.

Method

Participants were assigned to one of three conditions, in which conflict detection was performed manually with RBLs available to use (Manual + RBL), automatically with RBLs (Auto + RBL), or automatically without RBLs (Auto). Voluntary-use RBLs allowed participants to reliably check aircraft conflict status. Automation failed once.

Results

RBLs improved automation failure detection – with participants intervening faster and making fewer false alarms when provided RBLs compared to not (Auto + RBL vs Auto). However, a cost of high-degree automation remained, with participants slower to intervene to the automation failure than to an identical manual conflict event (Auto + RBL vs Manual + RBL). There was no difference in RBL engagement time between Auto + RBL and Manual + RBL conditions, suggesting participants noticed the conflict event at the same time.

Conclusions

The cost of automation may have arisen from participants having to reconcile which information to trust: the automation (which indicated no conflict and had been perfectly reliable prior to failing) or the RBL (which indicated a conflict).

Applications

Providing a mechanism for checking the validity of high-degree automation may facilitate human supervision of automation.

Due to the increased volume and complexity of information which human operators process in the modern workplace, automation is increasingly being provided (for reviews, see Bhaskara et al., 2020; Endsley, 2017; O’Neill et al., 2022). Automation can assist operators to perform beyond their physical, perceptual, and cognitive limits by partially (or fully) completing task functions. Automated assistance can range from low-level perceptual/cognitive support (i.e. organising/filtering incoming information) to high-level decision support (i.e. recommending/performing task actions; Parasuraman et al., 2000). Highly automated systems have the potential to free operator capacity for other tasks and improve routine system performance (Manzey et al., 2012).

Technological innovation means that in the future, humans will increasingly deal with higher degree automation. Unfortunately, both incidents reported from operational settings and controlled experimental research indicate potential costs to using high-degree automation. For example, the U.S. Department of Transportation highlighted concerns about aviation accidents caused by the improper monitoring of automation and subsequent human failure to appropriately transition to manual control (Hampton, 2016). Correspondingly, research indicates that high-degree automation can remove operators from the control loop and degrade their situation awareness (Endsley & Kiris, 1995). Degraded situation awareness, in turn, can impair an operator’s ability to transition to manual control if automation fails (see Onnasch et al., 2014 meta-analysis). Insufficient monitoring of automation (aka ‘automation-induced complacency’; Parasuraman & Manzey, 2010) is most prevalent when high-degree automation is more reliable (Bowden et al., 2021; Parasuraman et al., 1993) and relatedly, when operators have more trust in automation (Hussein et al., 2020; Lee & See, 2004).

Implementing reliable, trustworthy automation is essential for ensuring sustainable human workload and maximum airspace capacity in air traffic control (ATC; Edwards et al., 2017; Loft et al., 2007, 2023). A crucial ATC task is to prevent future loss of minimum separation between aircraft (referred to as ‘conflicts’). Internationally, automation can be provided to advise controllers of potential upcoming aircraft conflicts (FAA, 2018; Kim et al., 2021; Noskievič & Kraus, 2017). While the specific features of conflict detection automation can differ across countries, all use flight plan data, forecast weather, and aircraft performance to derive expected aircraft trajectories and predict future conflicts. What is currently unclear, however, is how to design conflict detection automation that benefits routine performance and reduces workload, while ensuring the human has sufficient situation awareness to detect conflicts manually if required (i.e. return to manual control; RTM).

When manually detecting conflicts, research indicates that both student (Rantanen & Nunes, 2005) and expert (Gronlund et al., 1998; Loft et al., 2009) controllers initially assess whether any aircraft share common altitude, and then determine the future lateral separation for any such aircraft pairs heading toward a common intersection. Errors can occur if controllers either inappropriately scan for, and thus do not assess, all potential conflicts (Remington et al., 2000), or incorrectly calculate the relative arrival times of aircraft pairs at future intersections after they are selectively attended (Neal & Kwantes, 2009). The latter can be overcome by controllers applying safety margins to aircraft trajectories to compensate for uncertainty in the closest point of aircraft approach (Loft et al., 2009), and while this reduces the risk of conflict, it does increase controller intervention and thus potentially decreases airspace capacity. Thus, given that conflict detection automation can typically calculate the closest point of aircraft approach precisely (Strybel et al., 2016), ATC work design solutions that retain high-degree automation, but also contain features to mitigate the aforementioned costs of automation use on RTM, are of particular interest.

One potential solution is to provide controllers with tools that they can voluntarily use to assist with conflict detection. Existing tools include movable scale markers (to measure aircraft distance from points of interest such as waypoints), range and bearing lines (RBLs; to estimate aircraft time/distance from other aircraft/waypoints), and history dots (representing where aircraft have been). When detecting conflicts manually, these voluntary tools allow controllers to determine future aircraft separation. We contend that providing tools (specifically, RBLs) alongside high-degree conflict detection automation could assist controllers with identifying when they need to intervene manually to conflicts that automation may have missed. However, there is no guarantee that voluntary tools will be used the same way when supervising automation as they would be used when conflicts are detected manually. It is possible that complacent supervision of automation may extend to infrequent, delayed, or otherwise inappropriate voluntary tool use compared to using such tools when detecting conflicts manually. In the current study, we investigated the extent to which participants used a voluntary RBL tool to improve RTM performance when supervising high-degree automation in simulated ATC.

THE CURRENT STUDY

In the current study, the RBL allowed the precise calculation of the relative arrival time of aircraft pairs at flight path intersections. Participants were instructed that any aircraft pair cruising at the same altitude, and due to cross flight paths less than 20s apart (as indicated by the RBL) would definitely conflict (i.e. violate the 5 nm lateral separation standard). Using the RBL therefore eliminated the need to manually estimate future relative aircraft position. Assuming participants can effectively scan the sector for aircraft at the same altitude in order to identify which aircraft warrant RBL use, manual conflict detection should be more accurate and/or faster with the option to use the RBL than without RBL provision.

In conditions where high-degree conflict detection automation was provided, the automation resolved all conflicts at the earliest possible opportunity. The participants’ task therefore changed from manual conflict detection, to supervisory oversight of automation, with instructions to intervene only in the event that automation performed inappropriately (i.e. missed a conflict). To ensure the current study was as ecologically valid as possible, the automation only failed to resolve one conflict event late in the scenario (see Bowden et al., 2021). Examining complacency under conditions where automation failures are rare is representative of real automated systems (Molloy & Parasuraman, 1996), including ATC, where automation reliability can be so high that operators seldom, if ever, experience a failure (Wickens et al., 2009). This experimental design feature is critical because while detection of the first automation failure is often poor, the detection of subsequent failures can dramatically improve (e.g. Merlo et al., 2000).

We recorded participants’ speed and accuracy to detect the single conflict that automation missed, compared to detecting an identical conflict manually. When provided with automation and the RBL, participants could use the RBL to check whether automation had missed a conflict. However, participants might fail, or be slower, to notice the conflict missed by automation, or be slower to decide whether the aircraft are in conflict after applying the RBL, compared to using the RBL when manually detecting conflicts. Being slower to notice the automation-missed conflict and apply the RBL would be indicative of residual complacency in automation use even with the provision of highly accurate RBLs. By contrast, being slower to resolve the conflict after applying the RBL would instead indicate delayed use of RBL information (delayed decision-making). The current study design thus creates a theoretically interesting and ecologically valid situation where we assess voluntary RBL use for the single conflict missed by automation under conditions in which automation has not previously missed conflicts.

All participants completed one fully manual 30-min baseline scenario, in which they accepted incoming aircraft into their sector, handed off departing aircraft, and detected and resolved conflicts. This scenario was considered unsupported since it contained no automation and no RBL. In the 30-min supported scenario, participants were provided with a combination of high-degree automation and/or RBL, specific to their condition. Participants were assigned to one of three supported conditions in which conflict detection was either performed: (1) manually, with the RBL available to use (Manual + RBL), (2) automatically, with the RBL (Auto + RBL), or (3) automatically, without the RBL (Auto). In all supported conditions, aircraft were automatically accepted and handed-off.

First, we aimed to confirm that voluntary use of the RBL tool improved participants’ ability to detect a single failure of conflict detection automation. Specifically, we predicted that participants would be more likely, or faster, to intervene to the automation failure event because they could check the future aircraft positions with the RBL, compared to those provided automation without the RBL (Auto + RBL vs Auto). It was predicted that providing the RBL would also decrease false alarms (i.e. unnecessary interventions to nonconflicting aircraft). It was unclear whether RBLs would increase perceived workload, or reduce task disengagement, compared to automation without the RBL. The ability to ‘check’ the automation with RBLs may increase trust in the automation itself.

It was less clear whether RBLs would be as effective in supporting the detection of an automation failure, compared to detecting an identical conflict manually with the RBL (Auto + RBL vs Manual + RBL). It is possible that high-degree automation-induced complacency may extend to infrequent, delayed, or otherwise inappropriate use of RBLs when compared to detecting conflicts manually with RBLs. Should this be the case, we would predict that participants in the Manual + RBL condition would be more likely, or faster, to detect the conflict than participants in the Auto + RBL condition. On the basis of prior findings (e.g. Bowden et al., 2021), we expected participants in the Auto + RBL condition would report lower workload, and increased task disengagement, than participants in the Manual + RBL condition.

METHOD

Participants

One hundred and forty-four undergraduate students (38 male, 106 female) aged 16–59 (M = 22.7 years) participated for course credit and were randomly allocated to one of three conditions (n = 48). Participants received a performance-based bonus of between AU$5 and AU$10. Eight participants’ data was excluded and replaced for failing to follow task instructions. This research complied with the tenets of the Declaration of Helsinki and was approved by the Institutional Review Board at The University of Western Australia. Participants provided informed consent.

Air Traffic Control Simulation

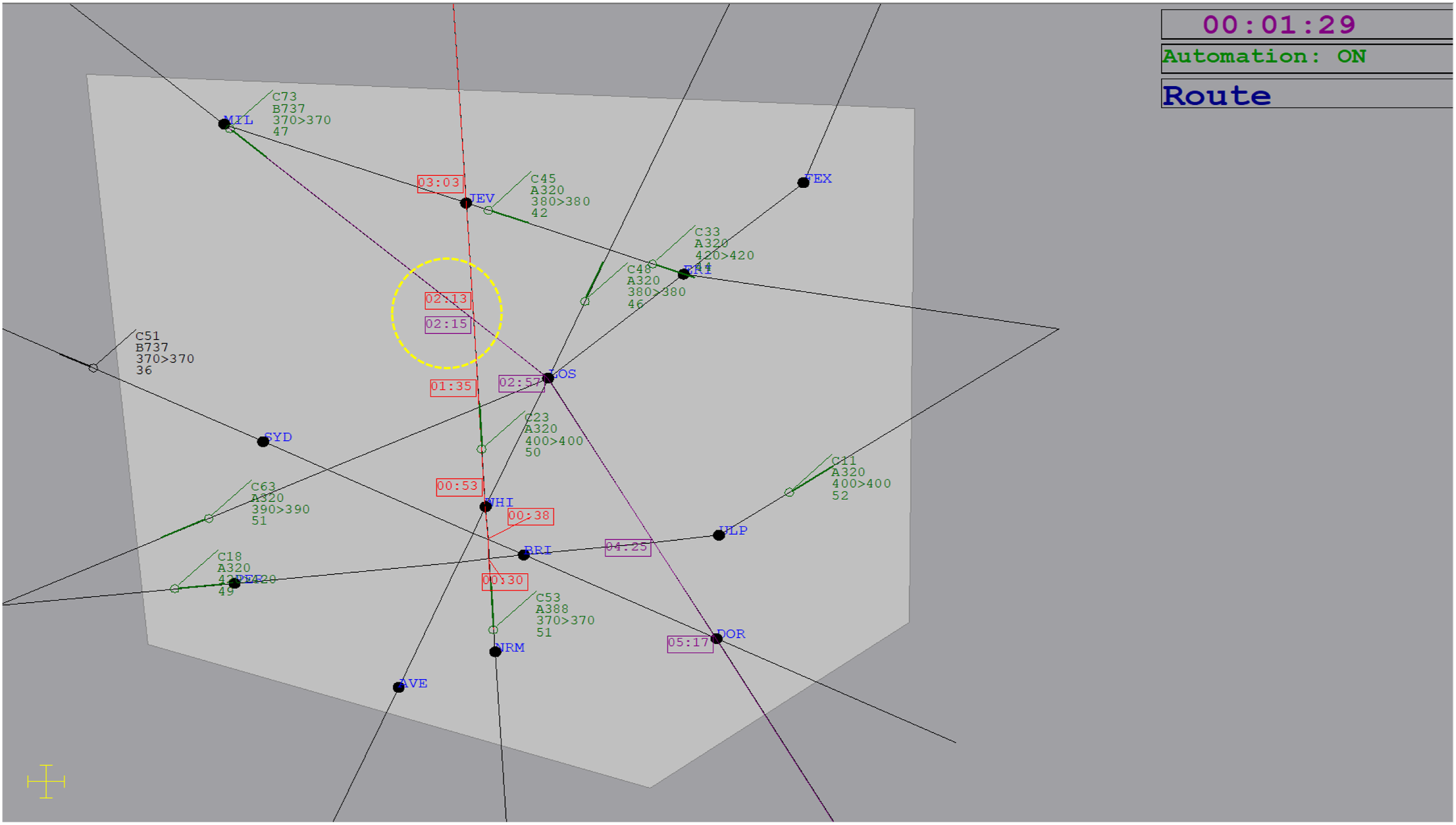

Participants completed a simulation of en-route ATC on two 22-inch monitors. The radar display/tasks were based on ATC-labAdvanced (Fothergill et al., 2009), while the flight progress strips and event log display were based on Masalonis et al. (1997). Figure 1 shows the radar display. Participants were responsible for the light grey inner sector, while the dark grey outer sector contained approaching/departing aircraft. Aircraft were represented by icons with attached data blocks containing call sign (e.g. C79), model (e.g. A320), current altitude (e.g. 400, denotes 40,000 ft), cleared altitude, and speed (e.g. 50, denotes 500 knots). Aircraft travelled on flight paths and through waypoints (e.g. JEV) at a cruising altitude before crossing the sector boundary and exiting the display. Participants were responsible for a median of eight aircraft at a time, with new aircraft entering throughout the scenario every ∼40s. Aircraft flight paths and speeds were fixed. Aircraft remained at a constant cruising altitude unless directed to ascend by either participants or automation to prevent a conflict (altitude was assigned in 1000 ft increments). Aircraft position was updated every second and a scenario timer was presented in the top right corner of the radar display. Participant response times (RTs) were recorded in milliseconds.

Figure 1. Simulated ATC radar display with the range and bearing line (RBL) tool engaged for aircraft C73 (RBL purple) and C53 (RBL red). The RBL indicates that this pair is in conflict since the aircraft have a common altitude (37,000 ft) and will cross paths with a 2s discrepancy (relative arrival times at the common intersection: 2 min 15s and 2 min 13s, respectively, which is less than the 20s discrepancy in relative arrival time that defines a definite conflict). The yellow dashed circle is added for illustration purposes and was not presented in the task.

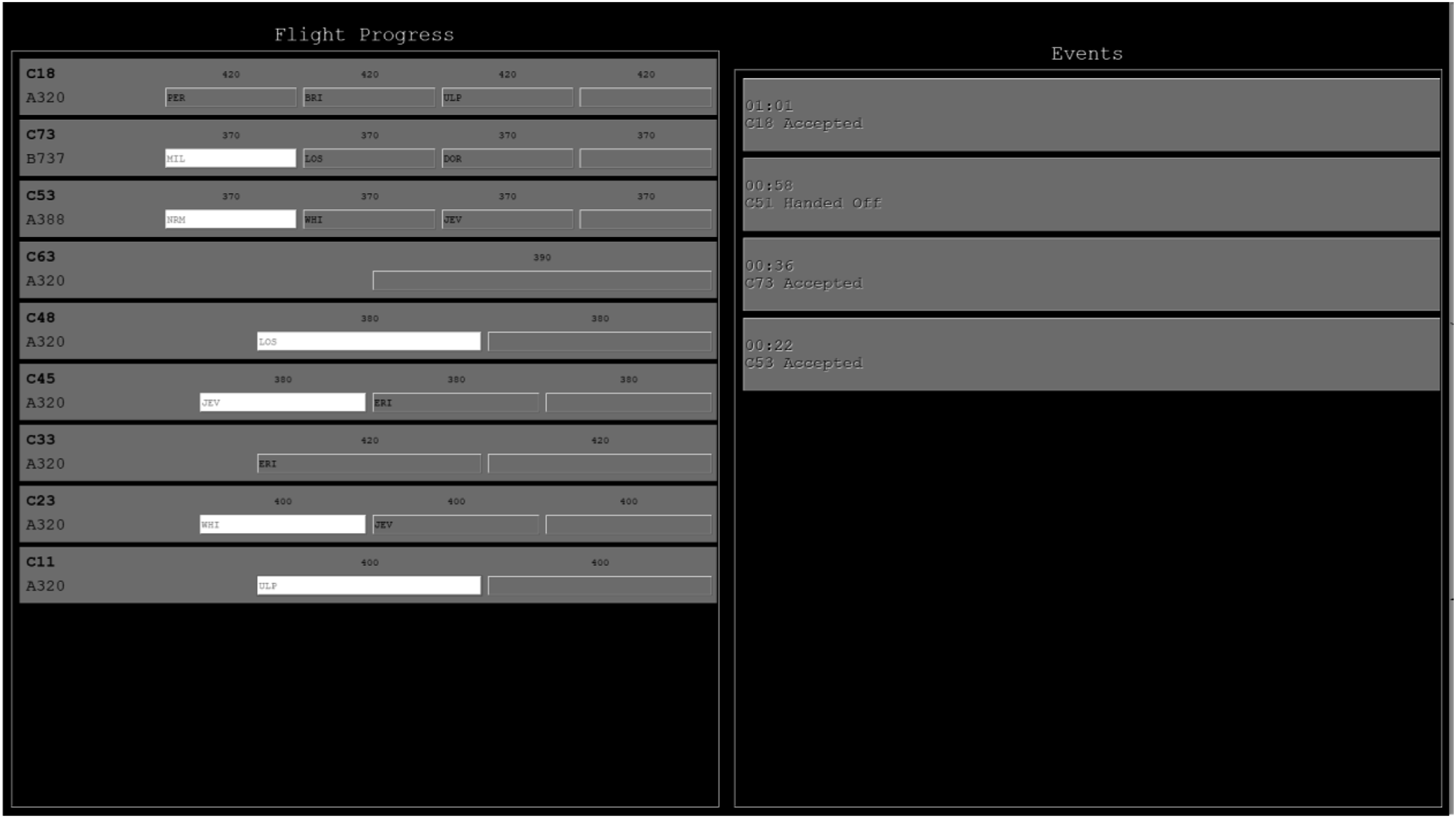

A second display contained flight progress strips and an event log (Figure 2). One strip was provided for each accepted aircraft. Strips contained aircraft call sign, model, flight path (represented by the waypoints remaining along its route, with waypoints changing from grey to white once crossed), and altitude. The event log contained a scrolling list of all previous actions taken by both the participant and the automation (i.e. acceptances, handoffs and altitude changes to prevent conflicts).

Figure 2. Aircraft flight progress strips (left) and event log (right) listing all acceptances, handoffs, and altitude changes to prevent conflicts.

Aircraft flashed blue when they approached the sector boundary to indicate they required acceptance. Participants pressed ‘A’ on the keyboard and clicked on the aircraft to accept it. Once accepted, aircraft stopped flashing and turned green. Aircraft flashed orange when leaving the sector to indicate they required handing-off. Participants handed-off aircraft by pressing ‘H’ and clicking on the aircraft. Once handed-off, aircraft stopped flashing and turned black. Participants had 20s to accept/hand-off aircraft in the unsupported scenario. Automation accepted/handed-off aircraft after 5s of flashing in the supported scenario.

Conflicts occurred when aircraft violated minimum separation standards (1000 ft vertical and five nautical miles lateral). To assist with judging lateral separation, a yellow scale was presented in the corner of the radar display to depict five nautical miles. Participants were instructed to prevent conflicts quickly and accurately, and that preventing conflicts was their most important task. To prevent a detected conflict, participants changed the altitude of one aircraft in the conflicting pair by left-clicking the aircraft’s data block. A dialogue box then prompted participants to click on the other conflicting aircraft (i.e. it did not matter which aircraft was selected first). If the pair were in conflict, one aircraft would begin ascending 1000 ft and the action was added to the event log. If the pair were not in conflict, an audio message notified participants that they had made a false alarm. If a conflict was missed, the conflicting pair turned yellow to indicate a loss of separation and an audio message notified participants. Conflicting aircraft returned to green once separation was re-established. Conflicts could only be prevented after both aircraft in a pair had been accepted. Each scenario contained 10 conflicts and six near-misses, where a near-miss involved aircraft at the same altitude that nearly violated lateral separation.

Conditions

In the 30-min unsupported baseline scenario, participants performed acceptances, hand-offs, and prevented conflicts with no conflict detection automation and no RBL. In the condition-specific supported scenario, conflict detection was either performed: manually with RBLs available to use (Manual + RBL); automatically with RBLs (Auto + RBL); or automatically without RBLs (Auto). Acceptances and handoffs were performed automatically in the supported scenario. All conflicts and near-misses presented in the unsupported and supported scenarios were unique, with the exception of the ninth conflict event, occurring 26-min 20s into the 30-min scenario. The identical ninth conflict was the single conflict event the automation failed to resolve in the supported scenario, and allowed direct comparison to the same conflict event in the unsupported scenario (with the call signs for this key ninth conflict changed between scenarios).

Conflict Detection Automation

Automation detected and resolved conflicts when the second aircraft in a conflicting pair was automatically accepted (i.e. 5s after it first flashed for acceptance). At this time, one aircraft began ascending 1000 ft to avoid the conflict and a message appeared in the event log reporting the automated action. Participants were instructed that automation was highly reliable, but not perfect, and that in the unlikely event that automation either performed an incorrect action or failed to perform an action, it was their responsibility to intervene to correct the failure as soon as possible. When conflict detection was automated (Auto, Auto + RBL), automation failed to resolve the ninth conflict during supported scenarios, referred to hereafter as the automation failure event. Aside from this single failure, automation was 100% reliable. Participants’ speed and accuracy to intervene to the automation failure event was recorded. When conflict detection was not automated (Manual + RBL), we recorded manual detection of the identical conflict.

Range and Bearing Line

In the supported scenario, participants in the Auto + RBL and Manual + RBL conditions activated the RBL by pressing the ‘R’ key and clicking to select up to two aircraft at a time. Once selected, the time remaining until each aircraft arrived at all future waypoints and intersections on their flight path was displayed. For example, Figure 1 shows C73 and C53 will arrive at a common intersection in 2 min 15s, and 2 min 13s, respectively. Since participants were instructed that any aircraft pair cruising at the same altitude and due to cross paths less than 20s apart were guaranteed to conflict, this indicates that C73 and C53 will conflict unless the participant (or automation) intervenes. The RBL was 100% reliable and accurate and participants were explicitly informed of this.
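The decision rule that participants applied to the RBL readout can be sketched in a few lines. This is a minimal illustration rather than the simulator's code: the function name `rbl_indicates_conflict`, its parameters, and the representation of arrival times in seconds are assumptions made here; only the same-altitude condition and the 20s threshold come from the instructions given to participants.

```python
def rbl_indicates_conflict(alt_a_ft, alt_b_ft, eta_a_s, eta_b_s, threshold_s=20):
    """Return True if the RBL readout marks the pair as a definite conflict.

    Hypothetical helper: a pair conflicts when both aircraft cruise at the
    same altitude and their arrival times at the common intersection differ
    by less than `threshold_s` seconds (20 s in the study instructions).
    """
    same_altitude = alt_a_ft == alt_b_ft
    arrival_gap_s = abs(eta_a_s - eta_b_s)
    return same_altitude and arrival_gap_s < threshold_s


# Figure 1 example: C73 and C53, both at 37,000 ft,
# arriving at the common intersection in 2 min 15 s and 2 min 13 s.
c73_eta_s = 2 * 60 + 15
c53_eta_s = 2 * 60 + 13
print(rbl_indicates_conflict(37000, 37000, c73_eta_s, c53_eta_s))  # True (2 s gap)
```

With the RBL supplying both arrival times, the judgement reduces to a single comparison, which is why the tool removes the need to estimate future relative aircraft position.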

Questionnaires

Trust

Merritt's (2011) 6-item ‘Trust in Automation’ questionnaire was completed after scenarios containing conflict detection automation (Auto, Auto + RBL) and a ‘Trust in RBL’ variant was administered after scenarios containing the RBL (Manual + RBL, Auto + RBL). Participants responded strongly disagree (1) to strongly agree (5) on items such as ‘I can depend on the Conflict Detection and Resolution Automation’ and the average score was recorded.

Workload

The National Aeronautics and Space Administration Task Load Index (NASA-TLX; Hart & Staveland, 1988) was completed after each of the two scenarios. The weighted composite of six workload subscales (mental demand, physical demand, temporal demand, effort, performance, frustration) was used to calculate a single, overall workload score between 0 and 100, with higher scores indicating higher workload. The weighting procedure involved 15 pairwise comparisons of relative subscale item importance.
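As an illustration of the weighted composite, the sketch below follows the standard NASA-TLX weighting procedure, in which each subscale's weight is the number of times it is chosen across the 15 pairwise comparisons. The function name, the dictionary layout, and the example ratings and weights are hypothetical and are not data from this study.

```python
SUBSCALES = ("mental", "physical", "temporal", "effort", "performance", "frustration")


def nasa_tlx_weighted(ratings, weights):
    """Weighted NASA-TLX workload score on a 0-100 scale.

    ratings: subscale -> rating from 0 to 100.
    weights: subscale -> number of times the subscale was chosen in the
             15 pairwise comparisons (each 0-5, summing to 15).
    """
    if sum(weights[s] for s in SUBSCALES) != 15:
        raise ValueError("weights must sum to 15 (one per pairwise comparison)")
    return sum(ratings[s] * weights[s] for s in SUBSCALES) / 15


# Hypothetical responses from one participant (illustrative values only).
example_ratings = {"mental": 70, "physical": 10, "temporal": 50,
                   "effort": 60, "performance": 40, "frustration": 30}
example_weights = {"mental": 5, "physical": 0, "temporal": 3,
                   "effort": 4, "performance": 2, "frustration": 1}
print(round(nasa_tlx_weighted(example_ratings, example_weights), 1))  # 56.7
```

Because the weights sum to 15, dividing by 15 keeps the composite on the same 0 to 100 scale as the individual subscale ratings.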

Task Disengagement

A 5-item task disengagement questionnaire was completed after each scenario. The questionnaire included items from the inattention and disengagement subscales of the Multidimensional State Boredom Scale (items: 3, 10, 16, 20, 23; Fahlman et al., 2013). Participants responded strongly disagree (1) to strongly agree (7) to each item.

Procedure

All participants first completed 60-min of training to familiarise themselves with performing all the ATC tasks (acceptance, hand-off, conflict detection) manually. This included a 25-min audio-visual training presentation describing and demonstrating the tasks, followed by a 30-min manual practice scenario where they could ask questions and receive feedback. Participants’ average speed and accuracy on all tasks was provided as feedback after all scenarios. Following training, participants completed two 30-min scenarios: one unsupported and one supported. Two unique ATC scenarios were created, but with an identical ninth conflict event (aircraft call signs were changed). The assignment of the two scenarios to scenario type (unsupported or supported), as well as presentation order (supported first or second), was counterbalanced. Prior to the supported scenario, participants received additional condition-specific instructions on the automation and/or RBL tool, with an additional 3-min practice using RBLs. Total experiment duration was ∼2.5 hrs.

RESULTS

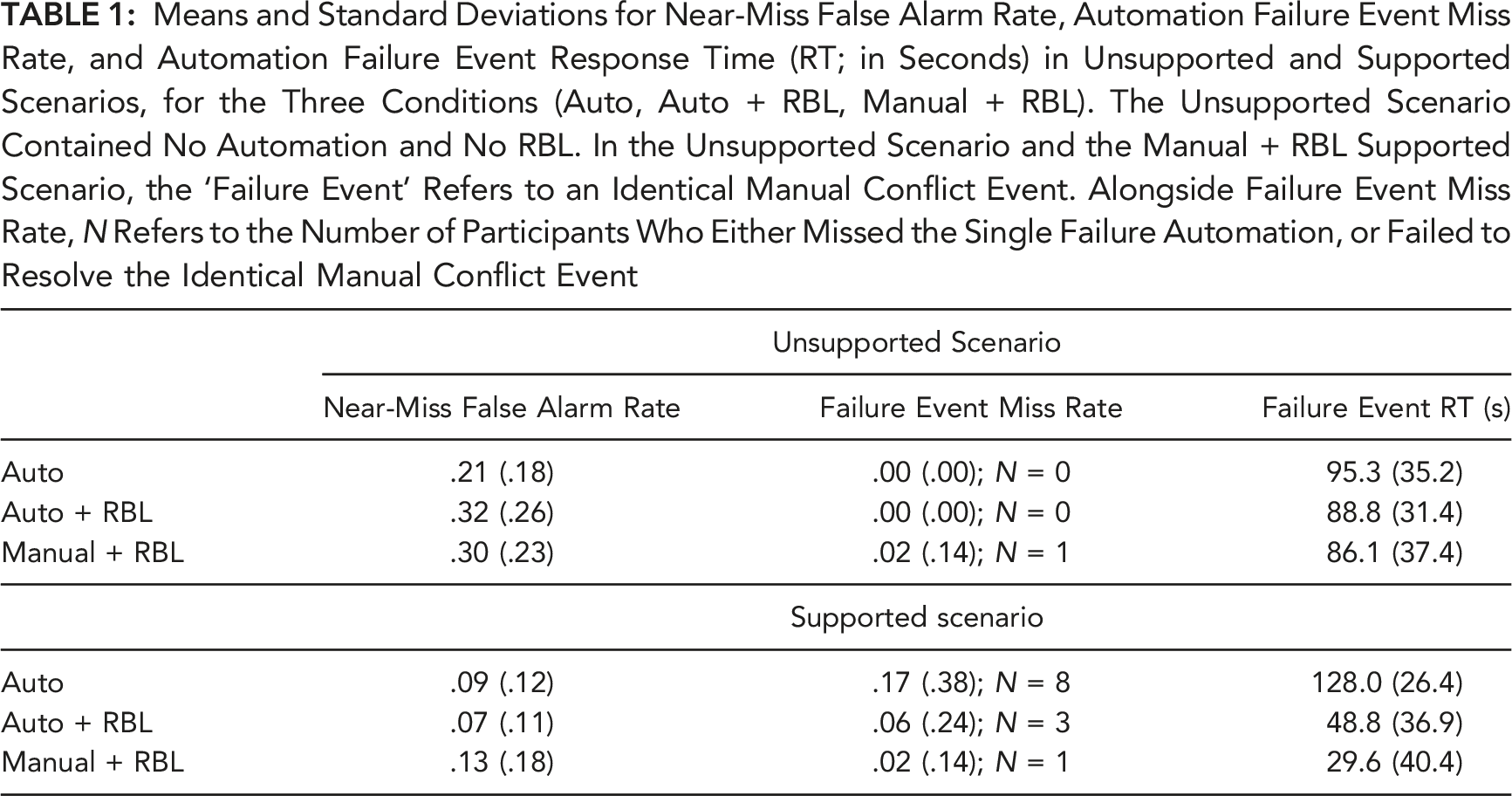

Means and Standard Deviations for Near-Miss False Alarm Rate, Automation Failure Event Miss Rate, and Automation Failure Event Response Time (RT; in Seconds) in Unsupported and Supported Scenarios, for the Three Conditions (Auto, Auto + RBL, Manual + RBL). The Unsupported Scenario Contained No Automation and No RBL. In the Unsupported Scenario and the Manual + RBL Supported Scenario, the ‘Failure Event’ Refers to an Identical Manual Conflict Event. Alongside Failure Event Miss Rate, N Refers to the Number of Participants Who Either Missed the Single Automation Failure, or Failed to Resolve the Identical Manual Conflict Event

Failure Event Performance

Participants missed the automation failure event if they did not intervene before the aircraft involved in the ninth conflict lost separation. In the unsupported scenario, accuracy was at ceiling, with all but one participant manually detecting this conflict. Chi-squared tests were used to compare failure event miss rate between conditions in the supported scenario. Nominally, fewer participants missed the failure event in the Auto + RBL compared to the Auto condition (6% vs. 17%), but the difference was not significant, χ² = 2.57, p = .109. There was no difference between the Auto + RBL and Manual + RBL conditions, χ² = 1.04, p = .307.
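For readers who wish to check these comparisons, the following sketch computes an uncorrected Pearson chi-squared test for a 2 × 2 table of misses versus detections. The miss counts are not stated in the text: 3 of 48 (Auto + RBL) and 8 of 48 (Auto) are assumptions reconstructed from the reported 6% and 17% with n = 48 per condition, and the function name is hypothetical.

```python
import math


def pearson_chi2_2x2(miss_a, n_a, miss_b, n_b):
    """Uncorrected Pearson chi-squared test for a 2 x 2 table (1 df).

    Rows are the two conditions; columns are missed vs. detected.
    Requires at least one miss and one detection overall.
    """
    a, b = miss_a, n_a - miss_a   # condition A: missed, detected
    c, d = miss_b, n_b - miss_b   # condition B: missed, detected
    n = n_a + n_b
    chi2 = n * (a * d - b * c) ** 2 / ((a + b) * (c + d) * (a + c) * (b + d))
    # Upper-tail probability of a chi-squared variable with 1 df.
    p = math.erfc(math.sqrt(chi2 / 2))
    return chi2, p


# Assumed counts (reconstructed from percentages, not reported directly).
chi2, p = pearson_chi2_2x2(miss_a=3, n_a=48, miss_b=8, n_b=48)
print(f"chi2 = {chi2:.2f}, p = {p:.3f}")
```

Under these assumed counts the sketch gives values that agree with the reported statistics to rounding, which suggests the tests were run without a continuity correction; that inference is ours and is not stated in the text.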

For failure event RT, a 3 (condition: Auto, Auto + RBL, Manual + RBL) × 2 (scenario: unsupported, supported) ANOVA was conducted, with condition between-subjects and scenario within-subjects. Note that with a single failure event, RTs were not available for the 13 participants who missed the conflict. There were main effects of scenario, F (1,128) = 39.57, p < .001, ηp² = .24, and condition, F (2,128) = 39.58, p < .001, ηp² = .38, and an interaction between scenario and condition, F (2,128) = 63.1, p < .001, ηp² = .50. There was no difference between conditions in the unsupported scenario, F (2,140) = 1.21, p = .300.

As predicted, in the supported scenario, participants in the Auto + RBL condition were faster than those in the Auto condition, M diff = −79.2s, t (83) = 11.25, p < .001, d = 2.45, indicating that RBLs allowed participants to detect the automation failure faster. Next, we tested whether RBLs were as effective in supporting the monitoring/checking of automation as they were in supporting manual conflict detection. Participants in the Auto + RBL condition were slower than those in the Manual + RBL condition, M diff = +19.1s, t (90) = 2.39, p = .019, d = .50. This indicates that a cost of high-degree automation use persisted even when participants were provided with a voluntary checking tool that eliminated uncertainty around the future relative positions of aircraft. This appears to support the presence of automation-induced complacency; however, differences in the timing of RBL use between the Auto + RBL and Manual + RBL conditions (reported below) suggest delayed decision-making after applying the RBL is the more likely explanation.

Near-Miss False Alarms

Near-miss false alarms were defined as attempting to change the altitude of an aircraft involved in a scripted near-miss event (i.e. aircraft pairs at the same altitude that came close to violating minimum separation). A 3 (condition) × 2 (scenario) ANOVA revealed an effect of scenario, F (1,141) = 69.1, p < .001, ηp² = .33, no effect of condition, F (2,141) = 2.53, p = .083, and an interaction, F (2,141) = 3.48, p = .034, ηp² = .05. Given the difference between conditions in the unsupported scenario, F (2,141) = 3.17, p = .045, costs were used for the planned comparisons (specifically, supported minus unsupported scenario false alarm rate). Participants in the Auto + RBL condition had a greater reduction in false alarms in the supported compared to unsupported scenario (M = −.26, SD = .29) than those in the Auto condition (M = −.12, SD = .23), M diff = .15, t (91) = 2.71, p = .008, d = .56, suggesting that RBL provision may have reduced near-miss false alarms. There was no difference between Auto + RBL and Manual + RBL conditions (M = −.16, SD = .26), M diff = −.10, t (91) = 1.78, p = .079.

Range and Bearing Line Use

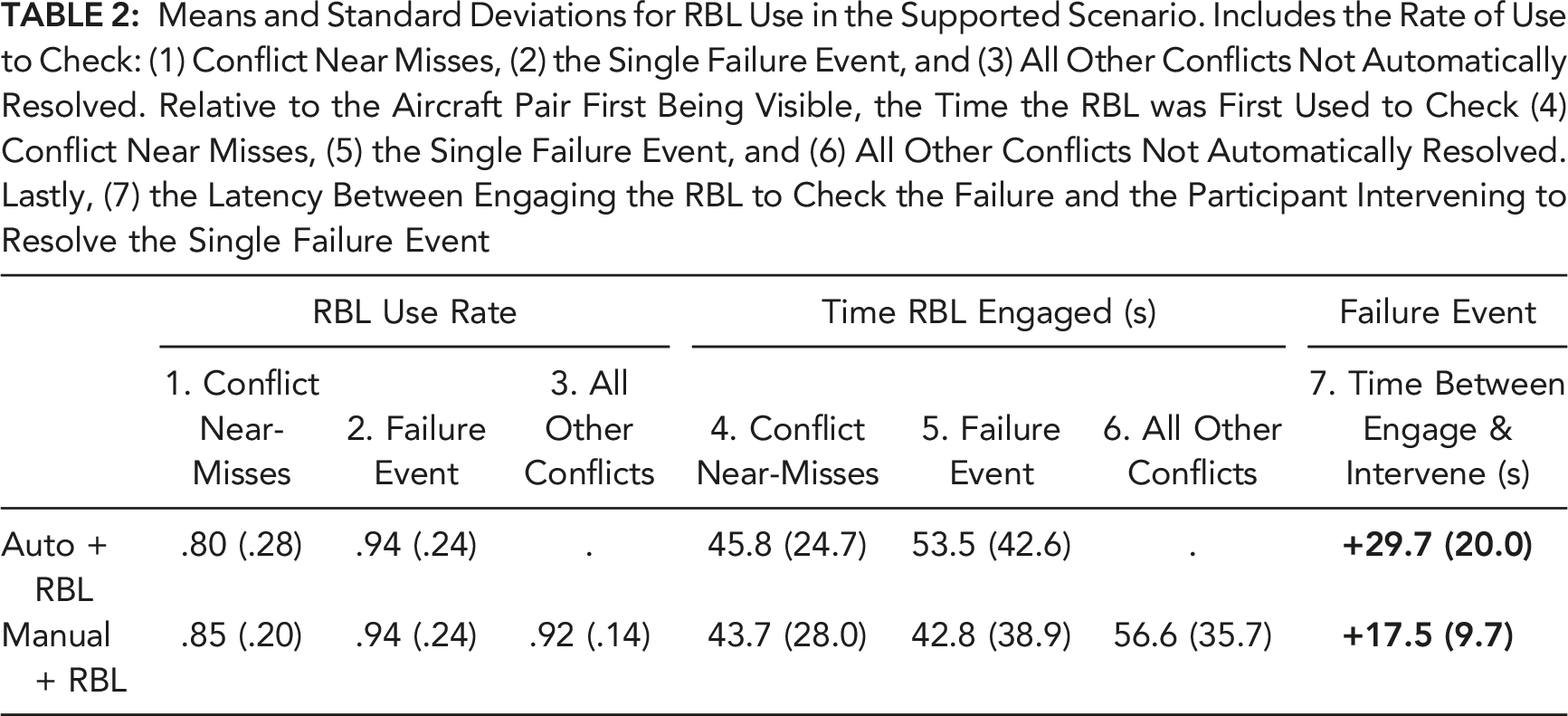

Means and Standard Deviations for RBL Use in the Supported Scenario. Includes the Rate of Use to Check: (1) Conflict Near Misses, (2) the Single Failure Event, and (3) All Other Conflicts Not Automatically Resolved. Relative to the Aircraft Pair First Being Visible, the Time the RBL was First Used to Check (4) Conflict Near Misses, (5) the Single Failure Event, and (6) All Other Conflicts Not Automatically Resolved. Lastly, (7) the Latency Between Engaging the RBL to Check the Failure and the Participant Intervening to Resolve the Single Failure Event

Next, to test whether the Auto + RBL and Manual + RBL conditions differed in the time that the RBL was engaged, we calculated the latency between when a pair was first visible (i.e. the first time the RBL could be applied) and when the RBL was first engaged. There was no difference in engagement time for conflict near-misses, t < 1, and no difference for the failure event, t (88) = 1.25, p = .217. This suggests that participants provided with automation and RBLs were not slower to initially check the failure event (or near-misses) compared to those who detected conflicts manually with RBLs. They did, however, take significantly longer to follow up this initial check with an intervention to resolve the failure event, with participants in the Auto + RBL condition taking longer to intervene after first engaging the RBL than those in the Manual + RBL condition, M diff = +12.2s, t (91) = 3.69, p < .001, d = 0.79.

Questionnaires

Trust

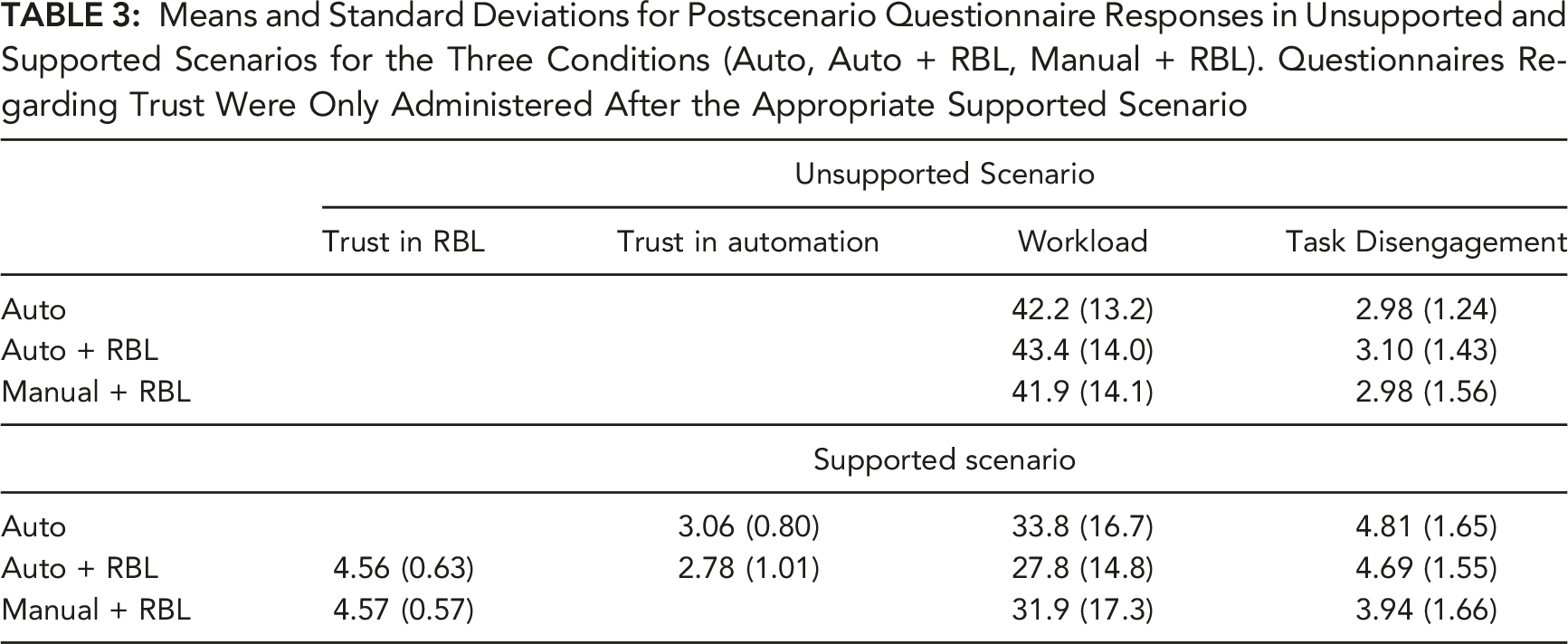

Means and Standard Deviations for Postscenario Questionnaire Responses in Unsupported and Supported Scenarios for the Three Conditions (Auto, Auto + RBL, Manual + RBL). Questionnaires Regarding Trust Were Only Administered After the Appropriate Supported Scenario

Workload

A 3 (condition) × 2 (scenario) ANOVA revealed an effect of scenario, F (1,136) = 64.0, p < .001, ηp² = .32, no effect of condition, F < 1, and no interaction, F (2,136) = 2.33, p = .101. There were no differences between conditions in unsupported scenarios, F < 1. In supported scenarios, there was a trend towards participants in the Auto + RBL condition having lower workload than those in the Auto condition, t (90) = 1.85, p = .067, d = .38, but this was not significant. There was no workload difference between the Auto + RBL and Manual + RBL conditions, t (90) = 1.21, p = .229.

Task Disengagement

A 3 (condition) × 2 (scenario) ANOVA revealed a main effect of scenario, F (1,141) = 107.3, p < .001, ηp² = .43, no effect of condition, F (2,141) = 1.90, p = .154, and an interaction between scenario and condition, F (2,141) = 3.34, p = .038, ηp² = .05. There were no differences between conditions in unsupported scenarios, F < 1. In supported scenarios, there was no difference between the Auto + RBL and Auto conditions, t < 1. Participants in the Auto + RBL condition reported higher task disengagement than those in the Manual + RBL condition, t (94) = 2.28, p = .025, d = .47.

DISCUSSION

We investigated the extent to which providing a voluntary RBL tool improved participants’ ability to detect a single failure of high-degree conflict detection automation in simulated ATC. RBLs enabled the precise calculation of the relative arrival times of aircraft pairs at flight path intersections. We predicted that, when provided with automation, the inclusion of RBLs would improve automation failure detection, as participants could reliably check whether automation had missed a conflict. It was less clear whether RBLs would be as effective in supporting the detection of an automation failure as they would support detecting an identical conflict manually, as we reasoned that providing automation might have caused the infrequent, delayed, or otherwise inappropriate RBL use, compared to manual conflict detection with RBLs.

RBLs significantly improved automation failure detection, with participants intervening 79.2s faster compared to when not provided with RBLs (Auto + RBL vs Auto). RBLs also led to fewer conflict false alarms compared to no RBLs, and there was a trend towards improved failure detection accuracy (17% miss rate decreased to 6%). Providing RBLs did not impact self-reported trust in conflict detection automation or perceived task disengagement, and there was a trend towards RBLs reducing workload (although it is worth noting that workload in this study was relatively low for an ATC task; see Grier, 2015). Overall, when considering the findings that reached statistical significance, participants were able to identify automation failures faster, and were less prone to false alarming, when a mechanism for checking the validity of automated actions was provided.

Next, we addressed whether a cost of automation remained when participants could reliably check with RBLs whether automation had detected conflicts. Comparison of participants’ detection of an automation failure with an identical manual conflict event (Auto + RBL vs Manual + RBL) indicated no difference in miss rates or false alarm rates. Participants were, however, 19.1s slower to intervene to the automation failure compared to the identical manual event, even though both conditions were provided with RBLs.

Complacency could manifest in RBL use through either absent or delayed checking of potential conflicts. However, despite participants in the Auto + RBL condition reporting higher subjective task disengagement, almost all participants engaged RBLs to check the automation failure event, with no difference between the Auto + RBL and Manual + RBL conditions (both 94%). Similarly, there was no difference between these two conditions in the rate of RBL use on either conflict near-miss events or any other aircraft pairs. These results suggest that automation did not reduce the frequency of participants initially noticing potential conflict aircraft pairs (Remington et al., 2000) and engaging the RBL to subsequently check them. In addition, there was no difference in the RBL engagement time when checking the automation failure event compared to the equivalent manual conflict, and similarly, no difference in engagement times for checking near-miss events. The cost of automation was therefore only apparent in the time taken to subsequently intervene to prevent the conflict, with participants in the Auto + RBL condition taking longer to act on the information provided by the RBL compared to the Manual + RBL condition (29.7s vs 17.5s), indicating a delayed use of RBL information (delayed decision-making).

There are several possible explanations for this finding. It may be that participants provided with automation were less proficient at detecting conflicts in general, or less proficient in using the RBL, because they only needed to intervene once late in the 30-min supported scenario, whereas those in the manual condition had to detect and resolve eight other conflicts manually before the equivalent event. We do not consider this explanation likely because, if participants provided with automation were less proficient at conflict detection/RBL use, we would have expected a higher near-miss false alarm rate (i.e. more unnecessary interventions). Given this, and the hour of training and practice provided, we suggest reduced proficiency in intervening to prevent conflicts is unlikely to account for the delay in resolving the automation failure (however, we cannot rule this possibility out using the current experimental design).

An alternative is that participants took longer to decide whether to act on RBL information because they had to choose between trusting the automation (which indicated no conflict and had been perfectly reliable in detecting conflicts up to that point in time) and trusting the RBL (which indicated a conflict). In comparison, participants in the manual condition similarly provided with RBLs encountered no such incongruence, given the only additional source of information (other than their own estimation of aircraft relative arrival time; Neal & Kwantes, 2009) about conflict status came from the RBL. It is therefore possible that the additional time was used to decide which source of information to trust and rely on (automation or RBL), which led participants to delay their intervention. This is conceptually consistent with recent computational modelling in the human-automation-teaming literature indicating that when presented with automated advice as to the conflict status of aircraft (e.g. nonconflict), the response option incongruent with that automated advice (e.g. conflict) is inhibited (Strickland et al., 2021; 2023). The delay here might therefore have been caused by participants receiving incongruent inputs from the two information sources. We believe this explanation is highly plausible. However, given the elevated task disengagement ratings of participants in the Auto + RBL condition, we cannot discount the possibility that participants were complacent when interpreting information from the RBL after it was engaged on the failure event, and that this contributed to slowed conflict decision-making.

Practical Implications, Limitations, and Conclusions

While research suggests that both student (Rantanen & Nunes, 2005) and expert (Gronlund et al., 1998; Loft et al., 2009) controllers undertake similar processes when detecting conflicts, care should be taken when generalising these findings to air traffic controllers. Differences in experience, skill, and motivation to prevent real incidents (Jamieson & Skraaning, 2020) would likely influence the monitoring of automation and voluntary checking tools such as RBLs.

To summarise, we found that providing a means of voluntarily checking high-degree automation improved failure detection, relative to using automation without a checking tool. This suggests that providing voluntary-use checking tools, such as RBLs, alongside high-degree automation has the potential to improve the human supervision of automation. A possible limitation here is that participants’ sole task in supported scenarios was to supervise automation. Therefore, despite finding no costs associated with the tool here, future research should investigate the impact of voluntary checking tools on the performance of other concurrent tasks (e.g. aircraft acceptance/hand-off and providing weather advice).

While providing a voluntary checking tool eliminated most of the costs of automation on RTM performance (Onnasch et al., 2014), relative to manual task performance with the same tool available, subjective task disengagement remained higher with automation, and there was a delay in the time taken to act on the 100% reliable diagnostic information provided by the checking tool. The latter suggests that when the advice from two forms of assistance (automation and a checking tool) is incongruent, decision making may be delayed.

Acknowledgments

This research was supported by Australian Research Council Discovery Grant DP160100575 and Australian Research Council Future Fellowship FT190100812, both awarded to Loft.

KEY POINTS

• We investigated the extent to which a voluntarily engaged checking tool improves supervision of high-degree conflict detection automation in simulated air traffic control.
• Providing a checking tool improved participants’ detection of a single failure of automation, relative to when using automation without a checking tool.
• Participants were still slower to intervene to the automation failure than to an identical manual conflict event – despite both conditions being provided the same checking tool.
• Providing voluntary checking tools, such as range and bearing lines in air traffic control, alongside high-degree automation can improve human supervision of automation but may not entirely eliminate return-to-manual costs.