Abstract

Objective

The study aimed to provide a comprehensive overview of the factors affecting technology adoption in order to predict the acceptance of artificial intelligence (AI)-based technologies.

Background

Theories of user acceptance usually explain the acceptance of AI devices in terms of behavioural factors, while the effects of technical and human factors are often overlooked. However, research shows that user behaviour can vary depending on a system’s technical characteristics and on differences between users.

Method

A systematic review was conducted. A total of 85 peer-reviewed journal articles that met the inclusion criteria and provided information on the factors influencing the adoption of AI devices were selected for the analysis.

Results

Research on the adoption of AI devices shows that users’ attitudes, trust and perceptions of the technology can be improved by increasing transparency, compatibility and reliability, and by simplifying tasks. Technological factors are also important for reducing issues related to human factors (e.g. distrust, scepticism, inexperience) and for supporting users with lower intention to use and lower trust in AI-infused systems.

Conclusion

As prior research, both with and without behaviour theories, has confirmed the interrelationships among these factors, this review suggests extending the technology acceptance model to integrate the factors studied here, so that the acceptance of AI devices can be defined across different application areas. However, further research is needed to collect more data and validate the study’s findings.

Application

A comprehensive overview of factors influencing the acceptance of AI devices could help researchers and practitioners evaluate user behaviour when adopting new technologies.

Background

Although rapid advances in machine learning have made it increasingly applicable to expert decision-making, delivering accurate algorithmic predictions alone is insufficient for effective human–artificial intelligence (AI) collaboration (Cai et al., 2019). Therefore, research on the factors that ensure successful interactions with AI technologies has been increasing. Furthermore, research on human–AI interaction has become more significant in fields in which AI tools are widely used, such as autonomous driving (AD), healthcare, robotics and education.

Although AI has numerous applications that can offer significant benefits, existing studies indicate that there is a need for an independent evaluation of the factors influencing AI use. At present, many published studies on AI focus on the technology itself, as developers are keen to demonstrate that the technology works, while the effects of other factors on technology acceptance remain unclear (Sujan et al., 2020). Some existing studies have examined psychological factors integrated into behaviour theories or related to user behaviour (e.g. attitude, perceived usefulness (PU), perceived ease of use (PEOU), trust) to explain technology acceptance (Jing et al., 2020). However, prior studies suggest that research on human factors related to user demographics and cognitive aspects should accompany AI development from the outset (Sujan et al., 2020). Furthermore, it may be helpful to consider additional factors related to human values in establishing successful interactions with AI systems (de Visser et al., 2020). Currently, a lack of trust in AI systems is a significant barrier to technology adoption. Trust in AI can be influenced by several human factors (e.g. education, experience, perception) and properties of the AI system (e.g. transparency, complexity, controllability) (Asan et al., 2020). Thus, investigating the influence of both technological and human factors on user behaviour towards AI systems could greatly help to predict the acceptance of AI-infused systems among different user groups. Therefore, the present study aimed to analyse the effects of behavioural, technological and human factors on technology adoption.

Technology Acceptance Theories

Numerous models and frameworks have been developed to explain user adoption of new technologies (Taherdoost, 2018). The theory of reasoned action, developed by Fishbein and Ajzen (1975), suggests that behaviour is determined by the intention to perform that behaviour, and this intention is, in turn, a function of individual attitudes towards the behaviour and subjective norms (SNs). Similarly, the technology acceptance model (TAM) suggests that technology acceptance is determined by a user’s behavioural intention (BI), also known as the intention of use (IU) of the technology. TAM explains user intention to apply the technology based on three factors: PU, PEOU and attitude. Thus, in addition to BI, TAM contains two central beliefs, PU and PEOU, which have a considerable impact on user attitude and intention (Taherdoost, 2018). Later, the Unified Theory of Acceptance and Use of Technology, which is clearly similar to TAM, was developed in an attempt to merge the literature on information technology acceptance (Venkatesh et al., 2003). Venkatesh et al. (2003) changed PU into a performance expectancy construct, PEOU into effort expectancy, and SNs into social influence (SI), and added facilitating conditions as a fourth construct. The effects of these constructs are moderated by gender, age, experience and voluntariness of use, although not every moderator applies to every construct (Taherdoost, 2018).
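For readers less familiar with TAM, its core paths can be summarised as a set of structural equations. The sketch below is a simplified, linear rendering of the TAM path structure, assuming linear effects; $A$ denotes attitude, and the coefficients $\beta_i$ and error terms $\varepsilon_i$ are generic placeholders rather than estimates from any reviewed study.

$$
\begin{aligned}
\mathrm{PU} &= \beta_1\,\mathrm{PEOU} + \varepsilon_1\\
A &= \beta_2\,\mathrm{PU} + \beta_3\,\mathrm{PEOU} + \varepsilon_2\\
\mathrm{BI} &= \beta_4\,A + \beta_5\,\mathrm{PU} + \varepsilon_3
\end{aligned}
$$

In this form, PEOU reaches BI both through PU and through attitude, which is one way to see why the two beliefs are described as central. Extended models, such as those discussed in this review, can add further predictors (e.g. trust or SNs) to these equations.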

Several researchers have reviewed the factors that influence user acceptance of technology, most often in the AD field. Jing et al. (2020) used behaviour theories to examine factors affecting the acceptance intention of autonomous vehicles (AVs), and Becker et al. (2019) investigated the heterogeneity of AV preferences among different groups by comparing survey methods used to assess AV acceptance. Outside the AD field, Peek et al. (2014) studied the factors influencing technology acceptance among community-dwelling older adults. However, to the best of our knowledge, the general acceptance of AI-based technologies across different application fields (e.g. healthcare, robotics) has not been extensively reviewed. In addition, the effects of technological, human and behavioural factors on AI adoption have not been widely examined in technology acceptance research.

Thus, the present study had two objectives. The first objective was to investigate the factors affecting the adoption of AI-infused systems across different application domains. The second objective was to provide a comprehensive review of the relationships among technological, human and behavioural factors and their impact on user acceptance of AI technologies. In addition, based on the findings, this study aimed to draw implications that could be applicable in further research.

Method

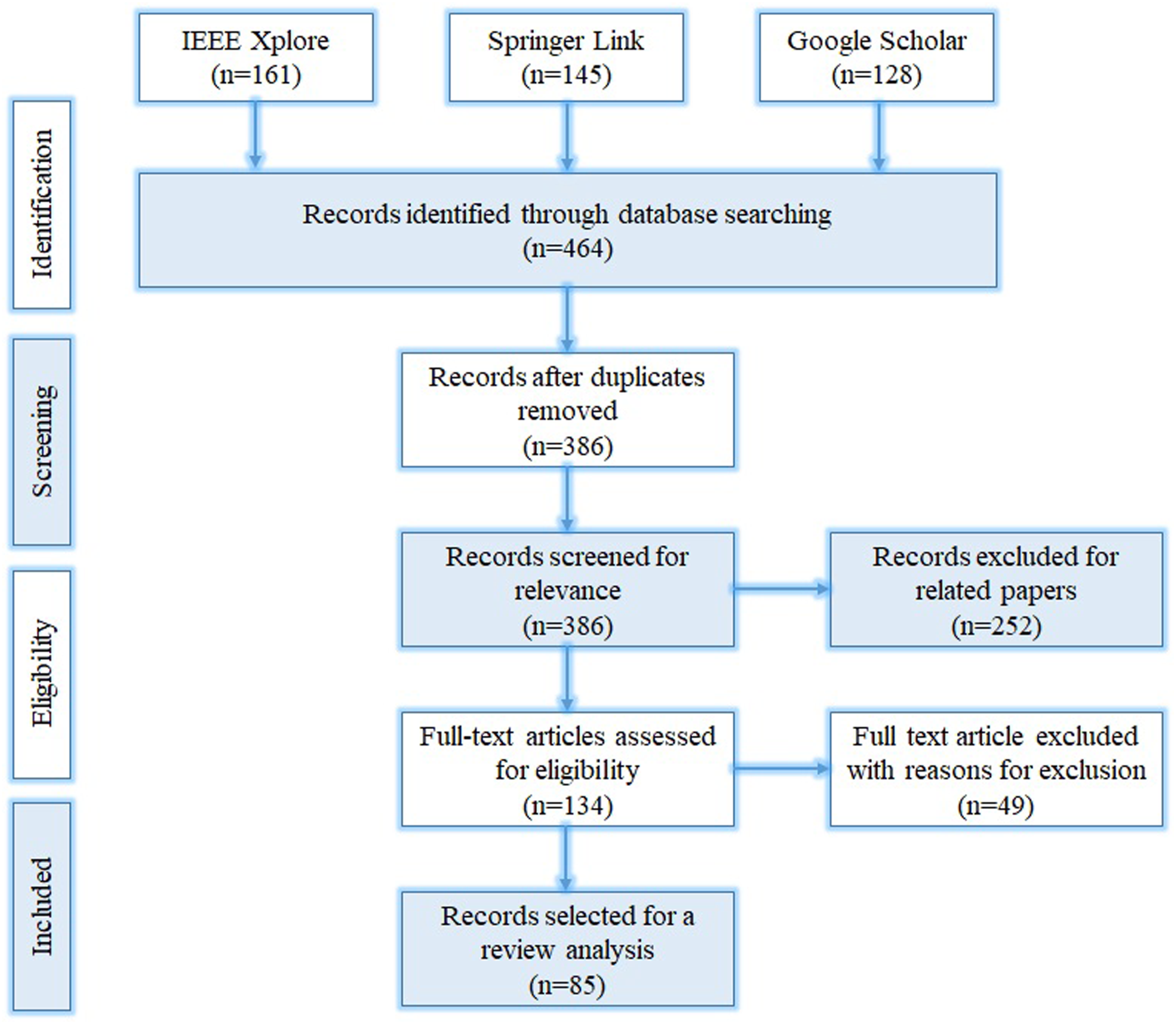

In this study, we followed the Preferred Reporting Items for Systematic Reviews and Meta-Analyses (PRISMA) statement developed by Moher et al. (2009). Using PRISMA for systematic reviews is beneficial because it reduces the risk of including an excessive number of studies addressing the same question (Bagshaw et al., 2006; Biondi-Zoccai et al., 2006) and provides greater transparency when updating systematic reviews. Figure 1 shows the steps taken and the PRISMA process applied in the present study.

Figure 1. Flow diagram of the PRISMA process used in the present study.

Using keywords such as ‘artificial intelligence’, ‘technology adoption’, ‘acceptance’ and ‘intention of use’, we searched the IEEE Xplore, Springer Link and Google Scholar databases, which include articles on engineering, computer science and transportation. First, we searched Springer Link and IEEE Xplore using the search string ‘artificial intelligence’ AND ‘intention’ AND (‘adoption’ OR ‘acceptance’). Second, on Google Scholar, we combined ‘artificial intelligence’ with each of the other keywords (‘intention’, ‘adoption’ and ‘acceptance’) individually, using AND, to search for unique papers related to the study. Across all databases, only papers that pertained to AI and used at least one phrase related to acceptance and IU were included in the analysis. The further inclusion criteria required that eligible articles focus on AI technologies, address factors influencing technology adoption and be published in English, while the exclusion criteria were duplicate studies and studies published before 2000.
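The search and screening rules described above can be sketched in code to make the difference between the two search strategies, and the order in which the criteria apply, explicit. This is a minimal illustration under stated assumptions: the query strings mirror the text rather than the exact syntax any of the three databases accepts, and the `Paper` record, its fields and the title-based duplicate check are hypothetical choices, not the procedure the authors used.

```python
from dataclasses import dataclass

# Assumption: query strings mirror the text, not any database's exact query syntax.
CORE_TERM = "'artificial intelligence'"
SCHOLAR_KEYWORDS = ["'intention'", "'adoption'", "'acceptance'"]


def combined_query() -> str:
    """Single Boolean string described for Springer Link and IEEE Xplore."""
    return f"{CORE_TERM} AND 'intention' AND ('adoption' OR 'acceptance')"


def scholar_queries() -> list:
    """One query per keyword, as described for Google Scholar."""
    return [f"{CORE_TERM} AND {kw}" for kw in SCHOLAR_KEYWORDS]


@dataclass(frozen=True)
class Paper:
    # Hypothetical record fields chosen to encode the stated criteria.
    title: str
    year: int
    language: str
    about_ai: bool
    addresses_adoption_factors: bool


def is_eligible(paper: Paper) -> bool:
    """Inclusion: AI focus, adoption factors, English. Exclusion: published before 2000."""
    return (
        paper.about_ai
        and paper.addresses_adoption_factors
        and paper.language == "English"
        and paper.year >= 2000
    )


def screen(papers):
    """Drop duplicates (matched by normalised title, an assumption), then apply the criteria."""
    seen = set()
    kept = []
    for paper in papers:
        key = paper.title.strip().lower()
        if key in seen:
            continue  # duplicate of a paper already seen in another search
        seen.add(key)
        if is_eligible(paper):
            kept.append(paper)
    return kept


if __name__ == "__main__":
    print(combined_query())
    for query in scholar_queries():
        print(query)
```

Running the per-keyword Google Scholar queries separately is what makes duplicate removal necessary: the same paper can be returned by several queries as well as by the other databases.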

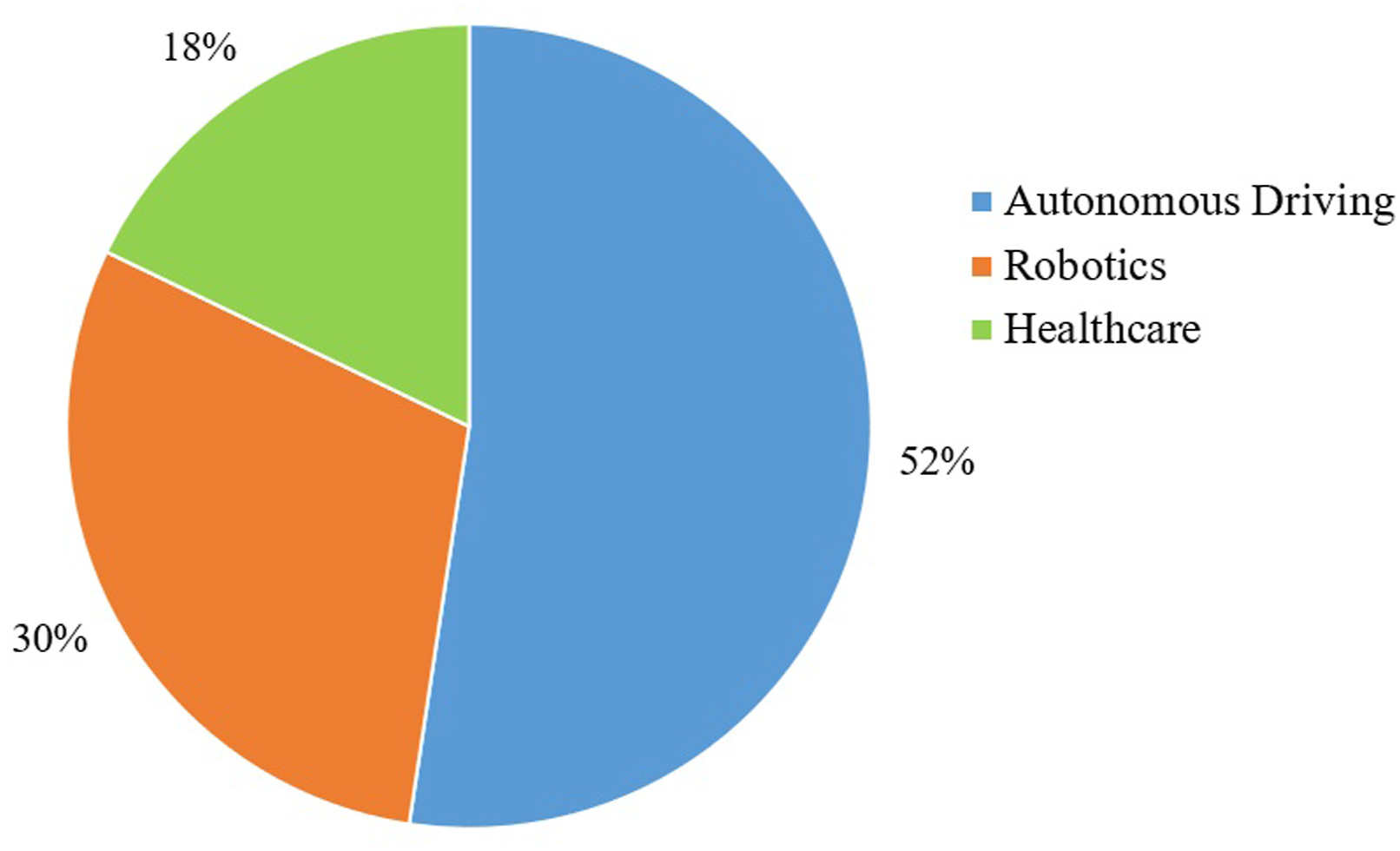

The last search was performed on December 1, 2020, so only studies available up to that date were included. A total of 464 publications were found using the keywords above: 161 from IEEE Xplore, 145 from Springer Link and 158 from Google Scholar. As mentioned above, Google Scholar was searched with a different strategy using the same keywords. As a result, 78 duplicate papers were identified and excluded from the analysis. Then, the abstracts of the remaining 386 publications were screened to verify their relevance to the study objectives, and 249 of these publications were excluded. Next, 137 full-text articles were assessed for eligibility against the inclusion and exclusion criteria. Finally, 85 publications related to AD, healthcare and robotics were selected for further analysis. AD was the most studied application area, with 44 publications, followed by robotics and healthcare with 25 and 16 publications, respectively (Figure 2).

Figure 2. Proportion of literature by application area.
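The stage counts reported above can be checked for internal consistency with a few lines of arithmetic. The sketch below uses only the numbers given in the text; the number of articles excluded at the full-text stage (52) is not stated explicitly and is derived here as the difference between the last two stages, and the percentage shares are likewise derived rather than taken from Figure 2.

```python
# Stage counts as reported in the text.
identified_by_database = {"IEEE Xplore": 161, "Springer Link": 145, "Google Scholar": 158}
duplicates_removed = 78
excluded_after_abstract_screening = 249
included_by_area = {"AD": 44, "robotics": 25, "healthcare": 16}

total_identified = sum(identified_by_database.values())
screened = total_identified - duplicates_removed
assessed_full_text = screened - excluded_after_abstract_screening
total_included = sum(included_by_area.values())
# Not stated in the text; derived as the difference between the last two stages.
excluded_at_full_text = assessed_full_text - total_included

# Share of included studies per application area, in percent (one decimal place).
share_by_area = {area: round(100 * n / total_included, 1) for area, n in included_by_area.items()}

print(total_identified, screened, assessed_full_text, total_included, excluded_at_full_text)
# prints: 464 386 137 85 52
print(share_by_area)
```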

Although papers on the acceptance of AI devices have also been published in other fields, particularly education and telecommunications, the number of studies in these areas (fewer than five in total) was far smaller than that in the selected application domains. Thus, papers belonging to other application domains were excluded, and the analysis was limited to three application areas. We also considered that including more application areas would complicate the review analysis and prevent accurate conclusions from being drawn across areas.

Results

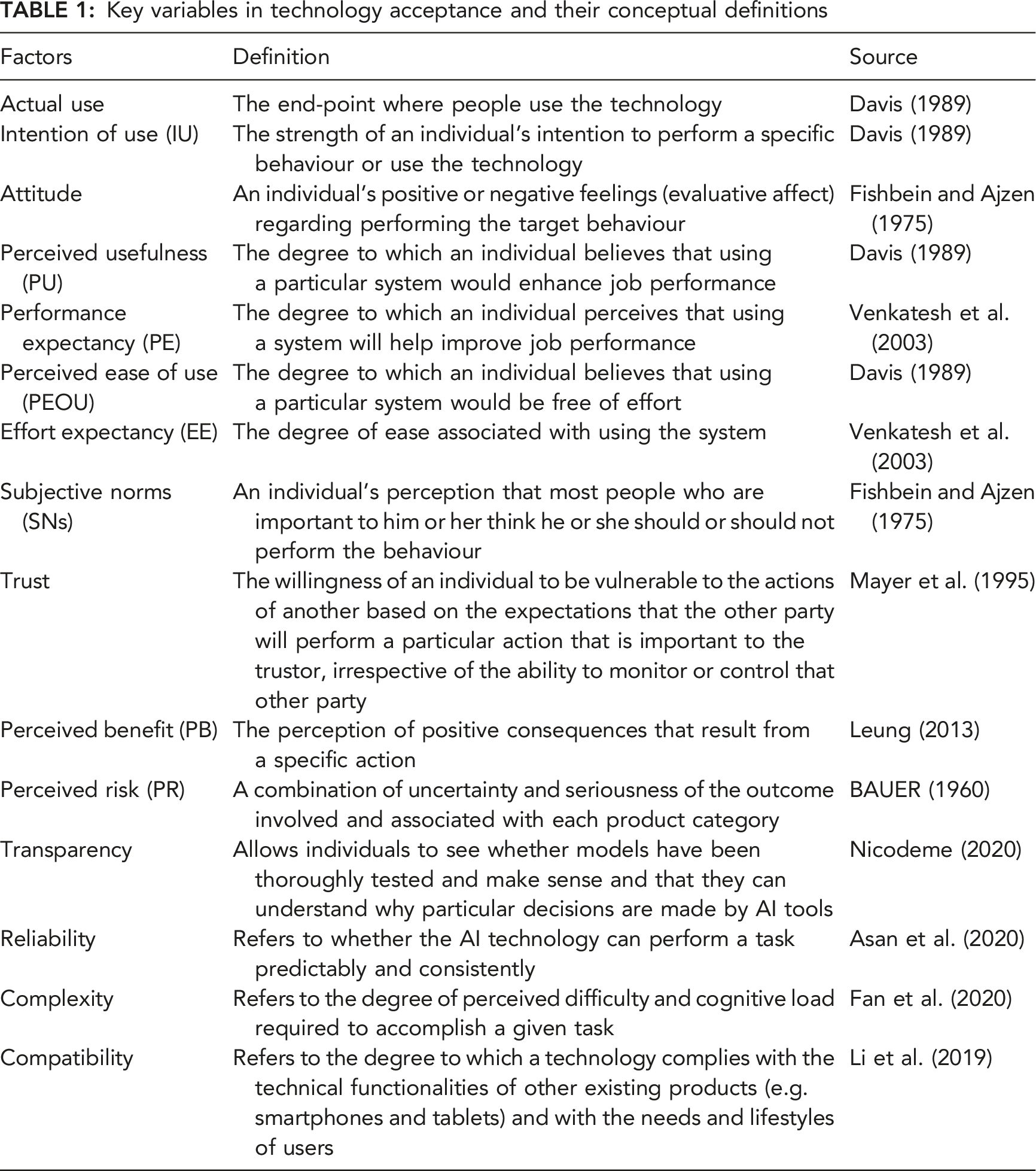

Key variables in technology acceptance and their conceptual definitions

Factors Affecting Acceptance of AI Devices in Studies with Behaviour Theories

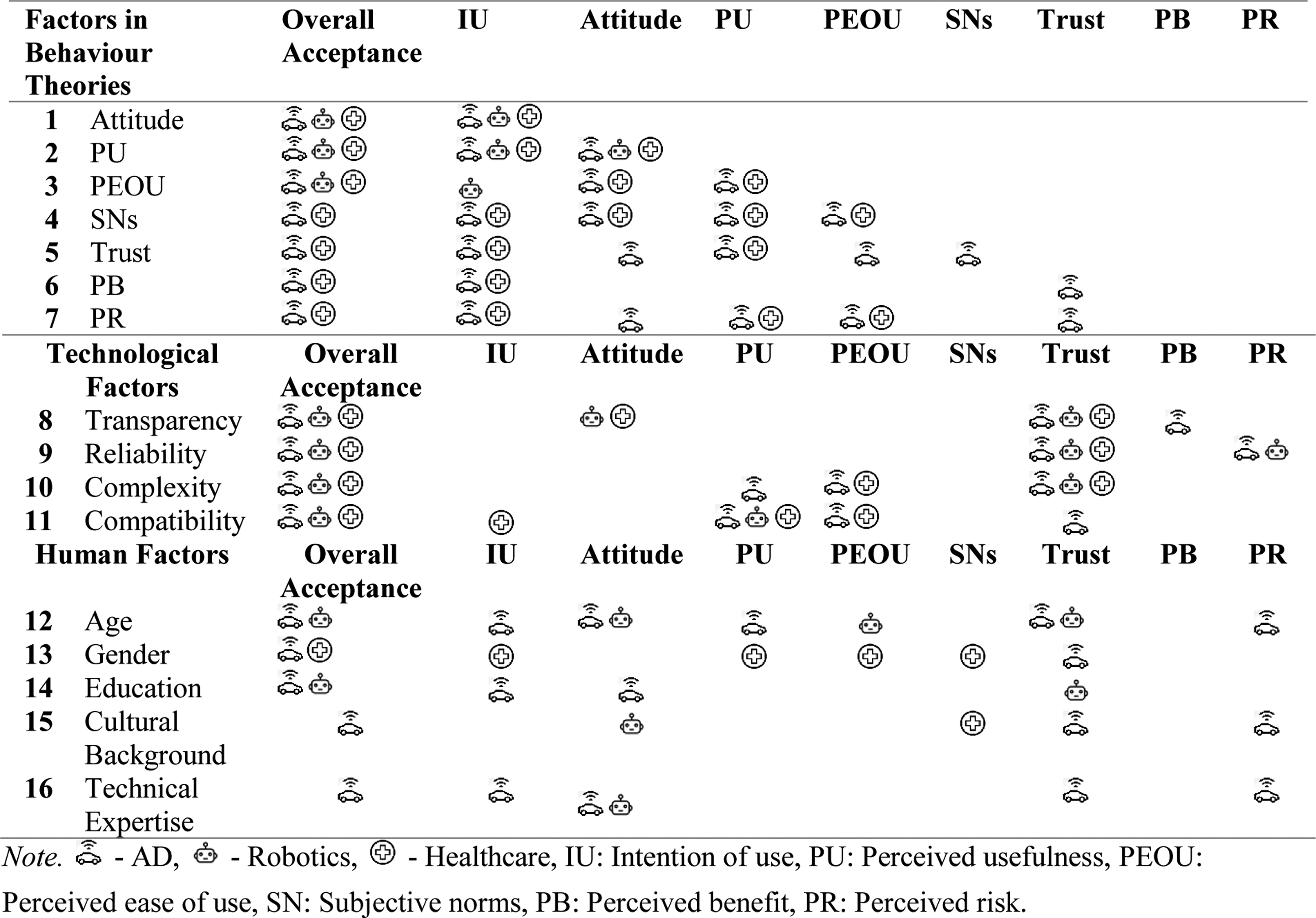

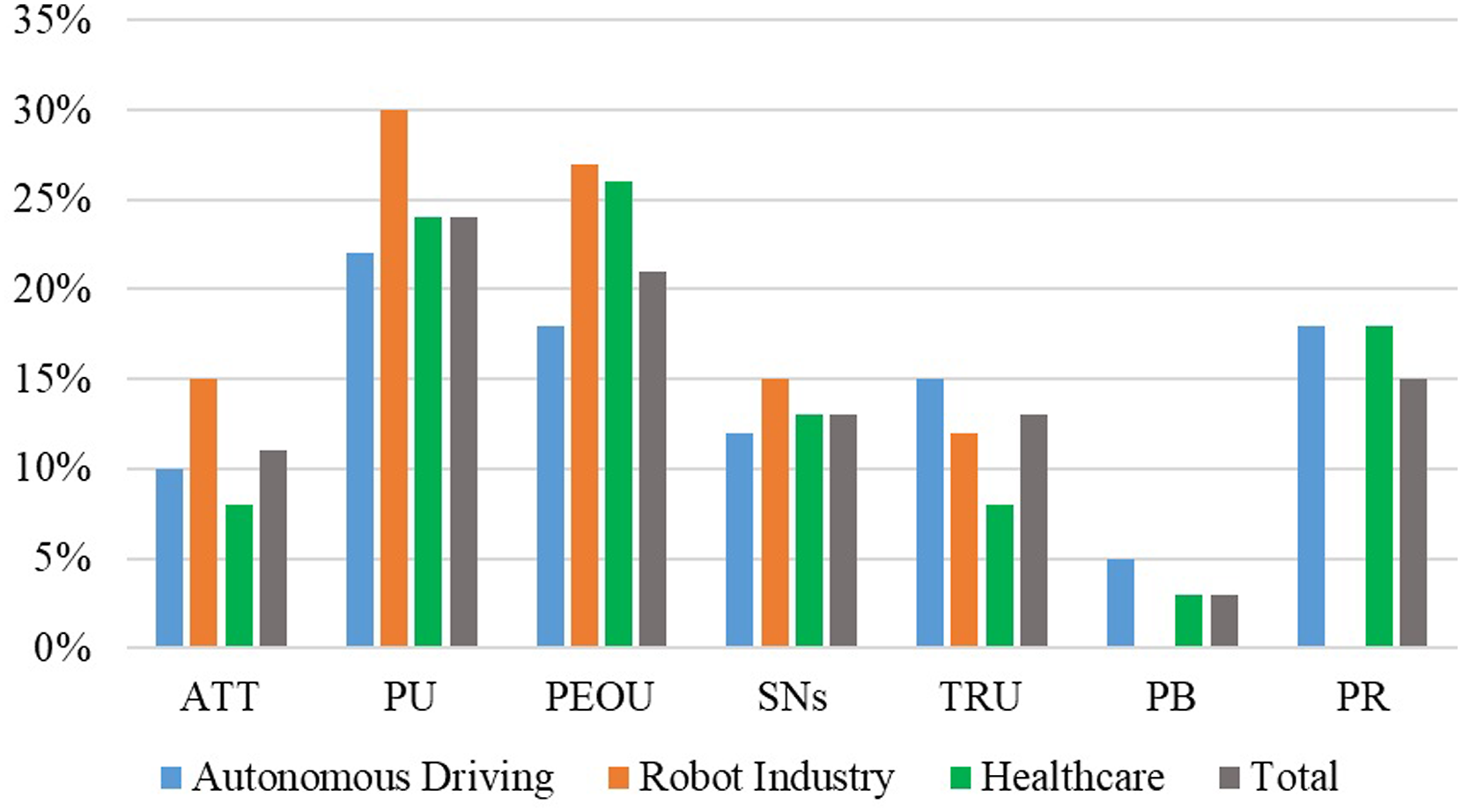

This section discusses the effects of key factors in behaviour theories on IU of AI systems and highlights the relationships among them. Figure 3 shows the results of the frequency analysis of the main factors found in behaviour theories: attitude, PU, PEOU, SNs, trust, perceived benefits (PBs), and perceived risks (PRs). Frequency was calculated based on the number of references that emphasised the effects of each factor on AI device acceptance.

Figure 3. Frequency analysis of the factors in behaviour theories of technology acceptance.
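The frequency measure described above amounts to counting, for each application area, how many references emphasise each factor. The sketch below illustrates that counting rule only; the coding sheet is hypothetical toy data, not the review's actual coding of the 85 studies, and the reference identifiers and factor labels are placeholders.

```python
from collections import Counter, defaultdict

# Hypothetical coding sheet: (reference, application area, factors it emphasises).
# Illustrative toy data only, not the review's actual coding.
coded_references = [
    ("ref_A", "AD", {"PU", "PEOU", "trust"}),
    ("ref_B", "AD", {"PU", "PR"}),
    ("ref_C", "robotics", {"PU", "attitude"}),
    ("ref_D", "healthcare", {"PEOU", "SNs"}),
]


def factor_frequencies(records):
    """Count, per application area, how many references emphasise each factor.

    Factors are stored as a set, so each reference contributes at most once per factor.
    """
    freq = defaultdict(Counter)
    for _ref, area, factors in records:
        freq[area].update(factors)
    return freq


freqs = factor_frequencies(coded_references)
print(freqs["AD"]["PU"])  # prints: 2
```

Counting references rather than mentions keeps a single paper that discusses one factor at length from inflating that factor's frequency.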

Figure 3 shows that PU and PEOU, the basic constructs of TAM, have been extensively studied in each application area considered, followed by PR, SNs and trust. The effect of PB on BI to use AI tools has been explored relatively less than that of other factors in technology acceptance studies. In AD, PU has been studied most extensively, followed by PEOU, PR and trust. In robotics, the impact of PU on technology acceptance was the most discussed, followed by PEOU, attitude and SNs. In healthcare, PEOU was the most studied factor, followed by PU, PR and SNs.

Attitude

In addition to the definition by Fishbein and Ajzen (1975), it has been argued that attitude is an accumulation of information about an object, person, situation or experience that leads to a predisposition to act positively or negatively towards some object or technology (Littlejohn, 2002). Thus, users may behave or react according to their positive or negative attitude towards a specific technology. The results of this review confirmed that attitude positively influences users’ IU of AI-infused systems across all studied application areas. For example, people with more positive attitudes towards autonomous shuttles as an alternative to public transport are more likely to use them in the future (Chen, 2019; Man et al., 2020). In addition, physicians with more positive attitudes were found to exhibit greater IU for AI technologies (Alhashmi et al., 2020). Attitude was also found to be linked to perceived privacy risk (PPR). Therefore, it was suggested that giving users control over their personal information will reduce PPR and increase positive attitudes towards AVs (Walter & Abendroth, 2020).

Some specific relationships were also found between attitude and human factors across application areas. Young people were observed to have more positive attitudes than other age groups, as they are more likely to be open to AVs recording and using their data (Biermann et al., 2020). In healthcare, Zhang et al. (2014) showed that, for men with more positive attitudes, increases in the level of SNs have a decreasing marginal impact on adoption intention; however, this was not confirmed for women. In interactions with social assistant robots, users with more technology experience tended to have more positive attitudes towards these robots (Gessl et al., 2019). Research further suggests that attitude has a weaker effect on IU than PU in the acceptance of service robots in Korea, implying that Korean users were more concerned with usefulness when adopting service robots (Park et al., 2013).

PU and PEOU

PU and PEOU strongly influenced AI device acceptance in each application area. For AVs, users were more concerned with usefulness than with ease of use (Choi & Ji, 2015). For individuals already familiar with driving, using an AV does not seem to be a difficult task (Choi & Ji, 2015; J. Lee, D. Lee, et al., 2019; S. Lee, Ratan, et al., 2019). Moreover, in the adoption of ophthalmic AI devices, the public might perceive them as intelligent, newly developed products and expect them to be convenient and easy to use (Ye et al., 2019). However, some contradictory results were also found. Walter and Abendroth (2020) and Garidis et al. (2020) reported that PU did not have a significant effect on IU of AVs, while Chen (2019) found that PU had much less effect than PEOU on attitude.

The positive effects of PEOU and PU on trust in AI-based technologies in healthcare were confirmed (Fan et al., 2020; Zhang et al., 2017). In addition, regarding robotics, Heerink et al. (2009) found that the social abilities of robotic systems could help older adults perceive the system as more useful and effortless, which, in turn, could help increase the acceptance of robots and screen agents. Ezer et al. (2009) emphasised that both younger and older age groups would be willing to use and accept robotic technologies in their homes, as long as they perceive that using these technologies would be beneficial and not too difficult.

SNs

Subjective norms were found to have a positive impact on adoption intention and PU and a negative impact on PRs of AVs (Zhu et al., 2020; Zmud & Sener, 2017). In particular, the media were shown to be a critical influence on AD adoption intention (C. Lee, B. Seppelt, et al., 2019; Zhu et al., 2020). C. Lee, B. Seppelt, et al. (2019) found that frequent media reports of accidents involving AVs in most cases negatively affected users’ attitudes towards the technology, whereas Zhu et al. (2020) argued that the more impressions users received from the media, the greater their self-efficacy regarding AV use. In addition, users were mostly influenced by family members, peers, friends and colleagues to use AVs and mobile health devices (Hoque, 2016; Walter & Abendroth, 2020; Ye et al., 2019; Yuen, Thi, et al., 2020). A negative relationship was observed between SNs and trust, implying that the more people trust AVs, the less their IU is influenced by family members, friends and peers (Panagiotopoulos & Dimitrakopoulos, 2018).

T. Zhang, H. Tan, et al. (2019) emphasised that those who perceived their friends as having a more positive attitude towards AVs had higher PEOU and PU of AVs, showed higher trust, and were more likely to use AVs when available. This is expected to have a greater impact in collectivistic cultures, owing to group conformity. Likewise, Ye et al. (2019) emphasised that SNs are the most important predictor of IU and confirmed the effect of SNs on PU in the adoption of ophthalmic AI devices. According to Ye et al. (2019), in China, when individuals encounter new technologies, such as ophthalmic AI devices, PU, PEOU and IU are likely to be influenced by the perceptions of close friends and relatives, colleagues, peers and superiors or leaders in the workplace because of crowd mentality and collectivistic culture.

In addition, SNs were observed to be related to age and gender. Li et al. (2019) found that, in terms of SI, the attitudes and decisions of older adults regarding the use of wearable smart systems were significantly influenced by the opinions of their children and grandchildren. For men with highly positive attitudes, increases in the level of SNs have a decreasing marginal impact on IU for intelligent health devices (Zhang et al., 2014). Although several studies have examined SNs in robotics (Gessl et al., 2019; Heerink et al., 2006; L. Y. Lee, W. M. Lim, et al., 2020; Piasek & Wieczorowska-Tobis, 2018), none reported a significant impact of SI on IU for robots.

Trust

Trust has been found to exert strong direct and indirect effects on BI and overall acceptance of AVs and AI-based healthcare technologies. Trust was confirmed to have a significant direct effect on BI in AD and healthcare (Dirsehan & Can, 2020; Fan et al., 2020; Nadarzynski et al., 2019), and it was the most important, but not the only, direct antecedent of BI to use AVs (Panagiotopoulos & Dimitrakopoulos, 2018; Zhang, Tan, et al., 2019). Zhang, Tao, et al. (2019) and Man et al. (2020) found that trust has a stronger effect than PU and PEOU on attitudes towards AVs, which was attributed to the fact that, in uncertain situations, trust offers a solution to specific problems related to risk factors. Trust showed a significant moderating effect on the path from PU to IU for AI-based healthcare technologies, indicating that if people’s trust in AI technologies improves, they will adopt AI technology even if the devices are not as useful as expected (Ye et al., 2019). However, contradictory results were also found. Walter and Abendroth (2020) did not find support for the effects of trust on IU and PR in AD, and Chen (2019) found that the path from trust to IU was not significant, although trust positively influenced attitude. Age and gender were also found to be related to trust in AD: it was more difficult to gain the trust of older participants than that of younger ones, and women showed higher trust than men in using AVs (Chen, 2019; J. Lee, Abe, et al., 2020). No significant effects of trust on the acceptance of robotic devices were found.
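A moderating effect of this kind is commonly represented with an interaction term. The equation below is a generic sketch, not the model specified by Ye et al. (2019); $T$ denotes trust, and the coefficients $\beta_0, \dots, \beta_3$ and error term $\varepsilon$ are placeholders.

$$
\mathrm{IU} = \beta_0 + \beta_1\,\mathrm{PU} + \beta_2\,T + \beta_3\,(\mathrm{PU}\times T) + \varepsilon,
\qquad
\frac{\partial\,\mathrm{IU}}{\partial\,\mathrm{PU}} = \beta_1 + \beta_3\,T
$$

Under this sketch, the effect of PU on IU depends on the level of trust. A negative $\beta_3$ combined with a positive $\beta_2$ would be one pattern consistent with the interpretation above: as trust rises, IU depends less on PU, so highly trusting users may adopt a device even when it is less useful than expected.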

Perceived Benefit

Perceived benefit showed significant effects on the adoption of AVs and AI-related healthcare devices. Liu et al. (2019) argued that PB is a stronger predictor of fully automated driving acceptance than PR. Moreover, Manfreda et al. (2019) found that PB had the greatest impact on AV adoption and that perceived safety can significantly reduce concerns about AVs. In healthcare, users tend to use and accept medical technologies when they perceive the benefits as exceeding the risk of privacy loss (Li et al., 2019). In addition, a direct effect of PB on IU for healthcare wearable devices was observed. Therefore, it was suggested that paying more attention to shaping users’ perceptions of the potential benefits of a technology could greatly facilitate its acceptance (Brell et al., 2019a; 2019b).

PR

Perceived safety risk

Several findings were noted regarding perceived safety risk (PSR) in AD. J. Lee, D. Lee, et al. (2019) and Garidis et al. (2020) confirmed the direct effect of PR on IU for AVs. This indicates that users who perceive a high degree of safety are more likely to use AVs than those who assume a low degree of safety, and that PRs regarding AVs are mostly related to external factors, such as system errors or random accidents. Man et al. (2020) found a relationship between PSR and trust: drivers who felt that the safety risks of using AVs were acceptable tended to have a high level of trust in AVs. Driving experience was found to reduce PR, as PR of novel driving technologies decreases with increasing experience (Brell et al., 2019a; 2019b). Moreover, PSR was found to be a crucial factor limiting AV adoption among millennials, since the perceived safety of AVs significantly reduces the impact of concerns related to AVs (Manfreda et al., 2019). However, in both robotics and healthcare, no significant findings regarding the effects of PSR on the adoption of AI devices were reported.

PPR

Perceived privacy risk was found to negatively affect attitudes towards AD, as well as PU and PEOU in healthcare (Dhagarra et al., 2020; Walter & Abendroth, 2020). Therefore, Walter and Abendroth (2020) suggested that service providers should strengthen user control over personal information to reduce PPR and ultimately increase positive attitudes towards the use of vehicle services. The more individuals worry about data privacy issues, the less likely they are to use self-driving cars (Zhu et al., 2020). However, some contradictory results were found for AD. Choi and Ji (2015) and Bogatzki et al. (2020) argued that PPR is not a significant factor in predicting BI, while trust has a negative effect on PPR. Bogatzki et al. (2020) explained this by positing that consumers may not be aware of the scope of data collection when they use AVs. Furthermore, the path from PPR to trust was not significant (Man et al., 2020; Zhang, Tao, et al., 2019), which was attributed to the fact that the potential losses associated with a privacy violation while driving are less immediate and obvious than the losses associated with a risk to personal safety. Ye et al. (2019) found that PPR does not affect IU for AI technologies in healthcare, which could be explained by people being accustomed to providing personal information when registering for apps and to receiving nuisance calls. However, designers should not overlook perceived privacy, because using medical history data to train AI requires safeguards that prevent the data from being passed on to third parties or exposed to cyberattacks (Balta-Ozkan et al., 2013).

Technology-Related Factors in Technology Adoption

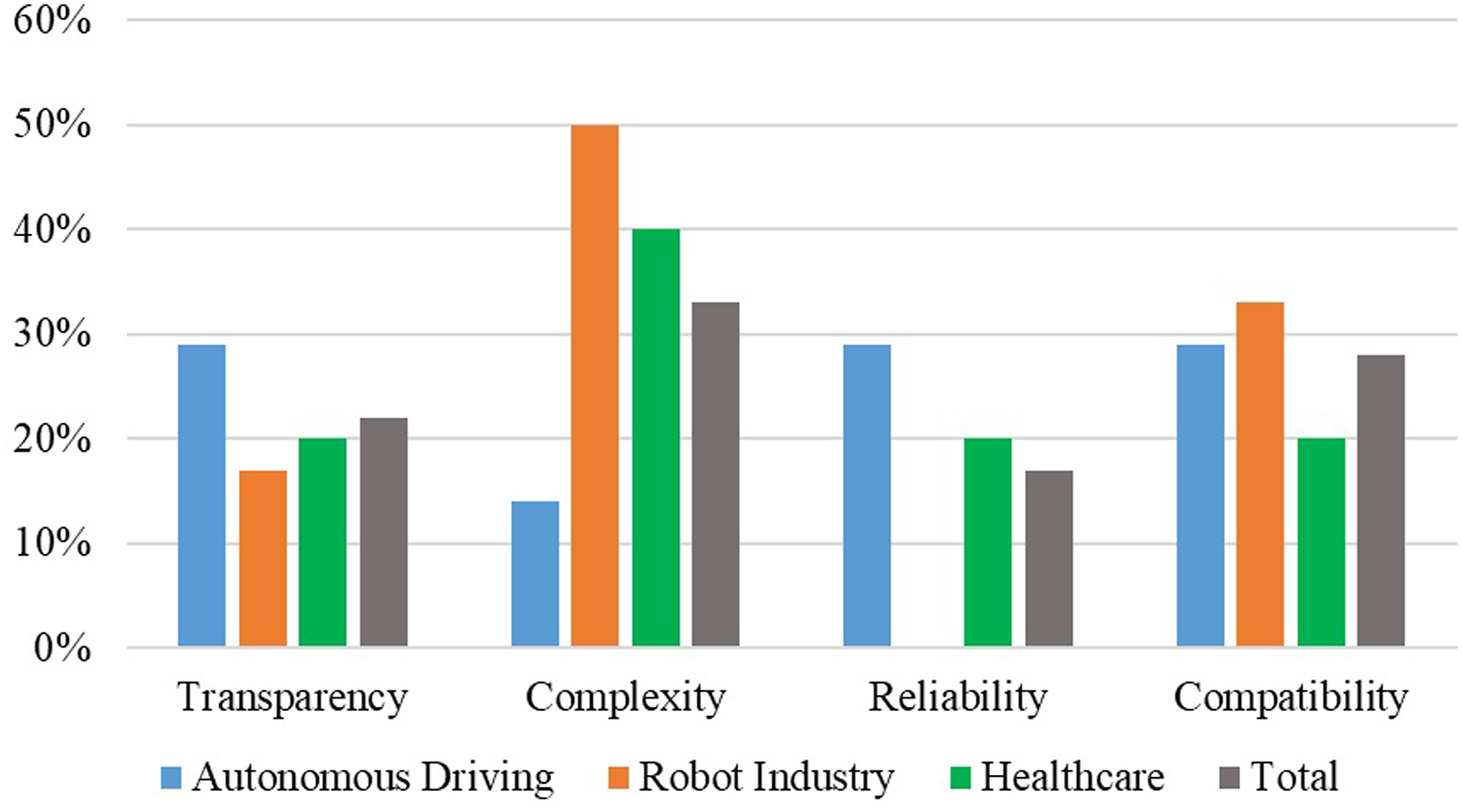

In studies on the acceptance of AI devices, numerous technology-related factors have been found to affect behavioural factors and overall acceptance. Figure 4 shows the key factors related to AD, healthcare, and robotics: transparency, reliability, complexity and compatibility.

Figure 4. Frequency analysis of the technological factors.

As shown in Figure 4, compatibility, complexity and transparency of the AI system are the most discussed technological factors across all fields. In AD, the impact of complexity on AV acceptance was studied least, whereas in healthcare, complexity was the most studied factor, followed by reliability and difficulty level. Relatively few studies have examined the effects of technological factors on the use and adoption of robotic technologies, and research on the effects of compatibility, complexity and transparency on the acceptance of robots remains limited.

Transparency

Transparency was found to significantly moderate and influence trust in predicting IU for AVs and robotic devices (Choi & Ji, 2015; de Visser et al., 2020; Weitz et al., 2020). Therefore, adequate transparency should be provided so that users clearly perceive the accuracy of autonomous technology, which helps drivers predict and understand the operation of AVs and the features that enable them to regain control (Choi & Ji, 2015). Moreover, Hutchins et al. (2019) stated that transparency greatly affects the psychological feedback of any decision that a system makes. In human–robot interactions, transparency can help calibrate trust by conveying information about an agent’s uncertainty, dependence and vulnerability (de Visser et al., 2020). Evidence suggests that a lack of transparency regarding the decisions of an AI system might reduce the system’s trustworthiness (Weitz et al., 2020). It was further suggested that making a model’s higher-level design objectives transparent can help improve user attitudes and outcomes in healthcare (Cai et al., 2019).

Complexity

Regarding the effects of complexity, Heerink et al. (2009) suggested that the simplicity of the tasks might warrant a closer look: because task simplicity enables a strict scenario with few surprises, it gives participants less idea of the whole range of tasks that a robot agent could do for them. In relation to AD, K. F. Yuen, Y. D. Wong, et al. (2020) suggested that, to reduce complexity, the process of using or interacting with AVs can be simplified by reducing the number of AV components that require human interaction and the number of relations between these components. The complexity of AVs can also be reduced by stimulating public interest, increasing the public’s capability of using AVs and eliciting positive experiences from using AVs. Furthermore, Fan et al. (2020) found significant positive effects of task complexity on PEOU and trust in AI-based healthcare devices. In addition, Ziefle and Röcker (2010) identified the ease of using medical technology as the most significant criterion for the acceptance of pervasive healthcare systems, along with data manageability and comfortable communication with the technology.

Reliability

Reliability was found to be positively associated with IU and trust in AD and healthcare (Asan et al., 2020; Brell et al., 2019a; Kaur & Rampersad, 2018; Siau & Wang, 2018). Brell et al. (2019a) suggested that users’ perceptions of AV reliability must improve; otherwise, even if AVs become highly reliable, users might still not trust the system and prefer human drivers. Furthermore, reliability showed a positive influence on PU of AVs (Hutchins et al., 2019). In robotics, Siau and Wang (2018) emphasised that reliability is a key factor in developing continuous trust in AI systems. To achieve reliability, the AI application should be designed to operate easily and intuitively, without unexpected downtime or crashes.

Compatibility

System compatibility was found to be an important influencing factor for the acceptance of AVs and robotic technologies. Compatibility showed a significant positive influence on PU, PEOU, and trust in AD, indicating that if AVs have good compatibility and system quality, drivers tend to have a high level of trust in AVs and believe they are both useful and easy to use (Man et al., 2020). In addition, K. F. Yuen, Y. D. Wong, et al. (2020) reported that compatibility is an important factor affecting public acceptance of AVs. In healthcare, Li et al. (2019) found that, for wearable smart systems, hardware compatibility with current communication equipment, weight and volume of the wearable device, and lifestyle significantly affected IU among older adults. In robotics, compatibility is one factor that can predict IU through PU (Turja et al., 2020).

Human Factors in Technology Adoption

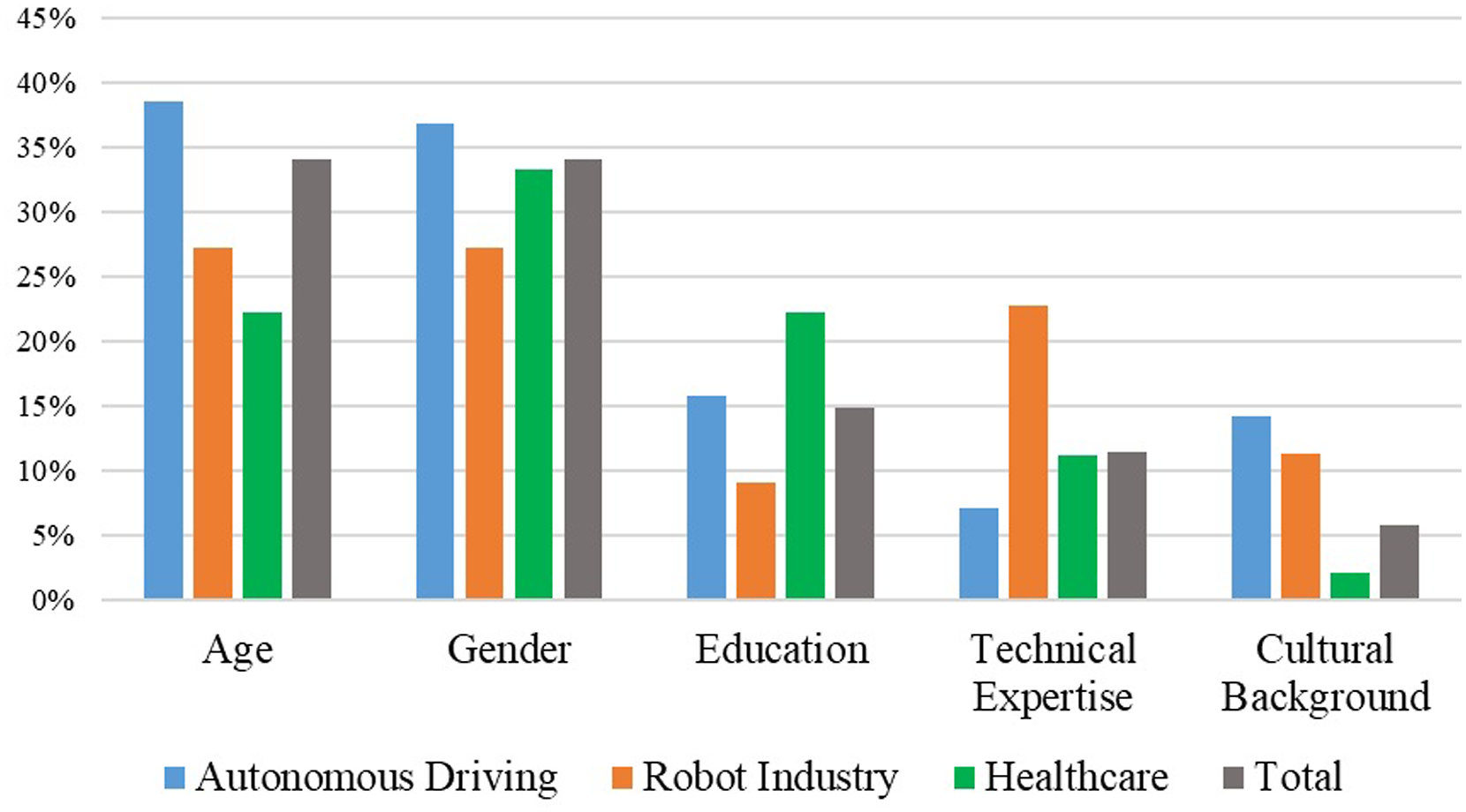

The effects of human factors, specifically age, gender, education, cultural background and experience, on technology adoption are discussed in this section. Figure 5 shows the frequency analysis of the studies on these factors across different application areas.

Figure 5. Frequency analysis of human factors.

User age and gender, followed by education, were the most frequently studied moderating variables in the acceptance of AI devices across different application areas. For AD, the effects of age on AV adoption were discussed most often, whereas for healthcare devices, the effects of gender were studied more frequently. In robotics, the numbers of studies on these two factors were almost equal. However, in robotics, unlike in the other two application areas, the impact of technological expertise has been studied more extensively than that of education. Overall, the effects of human factors on AI acceptance have been studied most extensively in AD, followed by robotics and healthcare.

Age

Several studies identified the moderating effects of age on the adoption of AVs, indicating that users have different attitudes towards and interest in using AVs based on their age group. For AD, older people were found to be more likely to prefer conventional cars, since they tend to be attuned to their lifestyle and less open to trying new technologies (Haboucha et al., 2017). Chen (2019) observed that users over the age of 40 were more concerned about the system’s ease of use, and it was difficult to gain their trust regarding AVs. However, among younger users, trust was found to have a positive effect on attitude. AVs were further found to be more appealing to younger users when compared with older people (Bansal et al., 2016). Older people are often reluctant to adopt new technologies if they cannot perceive them as potentially reliable and useful, while younger people are typically enthusiastic about experiencing new technologies (Deb et al., 2017; T. Zhang, D. Tao, et al., 2019). Additionally, older users typically have more concerns regarding manufacturers using their private information, whereas younger drivers are generally open to AD technologies recording and using their data (Biermann et al., 2020).

Likewise, in human–robot interactions, older people tend to distrust robotic technologies they perceive as potentially unsafe, whereas younger people show more positive feelings and greater overall confidence in the ability of robots to perform tasks (Scopelliti et al., 2005). Furthermore, older people interact with robots as they would with humans, using politeness markers and social speech acts that are not only unnecessary but also likely to severely confuse an actual end-to-end spoken dialogue system, whereas younger people interact with them efficiently and adapt quickly to such systems (Wolters et al., 2009). Another difference between older and younger users in the adoption of robotic technologies is that older adults tend to be concerned about the physical and ecological issues associated with robots, whereas younger users expect robots to have more features and functions (Nomura et al., 2009). However, contradictory findings indicated that age had no significant effects in either AD or robotics, which was unexpected given the assumption that older generations are less technologically savvy than younger ones (Bogatzki et al., 2020; Sener et al., 2019; Yuen et al., 2020; Zmud & Sener, 2017). Ezer et al. (2009) argued that both younger and older people are willing to accept a robot in their home if it is beneficial and not too difficult to use.

Gender

While Bogatzki et al. (2020) and Kettles and Van Belle (2019) noted that gender did not significantly affect overall BI and acceptance of AVs, other studies observed significant effects of gender on AV acceptance. In particular, women were found to be more likely than men to trust and adopt AVs (J. Lee, G. Abe, et al., 2020; Panagiotopoulos & Dimitrakopoulos, 2018). Panagiotopoulos and Dimitrakopoulos (2018) found that almost 78% of women were likely to have or use AVs when they became available on the market, compared with almost 59% of men. In contrast, other studies found that men were more willing to drive AVs and had fewer concerns regarding data privacy (Biermann et al., 2020; Souders & Charness, 2016). Hohenberger et al. (2016) found that gender had a negative indirect effect on willingness to use AVs through anxiety and a positive indirect effect through pleasure. In human–robot interactions, men showed higher PEOU and trust in robots than women, since they are likely to have more experience with technology (Gessl et al., 2019).

However, Scopelliti et al. (2005) found that gender differences were far less important in the adoption of robotic technology, with gender influencing only trust in technology. Specifically, women were more likely than men to express scepticism regarding robotic technologies. In healthcare, women showed greater PU for medical technology (Wong et al., 2012), whereas Ziefle and Röcker (2010) reported that gender had no significant effect on the overall willingness to use medical technologies. However, other researchers confirmed significant moderating effects of gender on m-health adoption. Specifically, Hoque (2016) and Zhang et al. (2014) showed that men had higher m-health adoption intention than women in terms of PEOU and SNs, whereas women had higher adoption intention in terms of PU.

Education

Acceptance of AI devices also differed depending on educational background. C. Lee, B. Seppelt, et al. (2019) reported that younger age, a high level of education, positive affect towards the advanced driver-assistance systems currently in use and a high self-rated tendency towards early technology adoption significantly influence AV acceptance. Moreover, it has been argued that people with higher education levels prefer AVs, as more educated people may already be more familiar with the idea of AVs and more likely to adopt new ideas and technologies (Brell et al., 2019b; Haboucha et al., 2017). In addition, people with a low education level are typically less confident in their ability to master a novel device (Giuliani et al., 2005). In the robotics sector, Scopelliti et al. (2005) argued that education does not significantly affect how potential users evaluate the usefulness and functionality of technological devices. In contrast, Nomura and Nakao (2010) reported that users with an educational background in science and engineering had lower scores for negative attitudes towards interaction with robots than those with an educational background in the arts.

Cultural background

Key differences related to cultural background were found in the acceptance of AI-based technologies in AD, robotics and healthcare. People from low-income countries were most concerned about cost and safety, whereas those in more developed countries were concerned about their ability to take control of AVs and about privacy issues (Haboucha et al., 2020). In addition, users from high-income countries particularly disliked the idea of their vehicle providing data to insurance companies, tax authorities or road organisations (Kyriakidis et al., 2015). In robotics, users from different cultural backgrounds showed different levels of positive and/or negative attitudes towards robots (Bartneck et al., 2007; Nomura et al., 2008). In healthcare and AD, the effects of SI were found to be potentially greater in collectivistic cultures than in other cultures, owing to conformist mentality and authoritarianism (Man et al., 2020; Ye et al., 2019).

Technical expertise

Technical expertise showed a positive effect on BI and trust in AD, implying that experienced drivers are likely to have a higher level of trust and a more positive opinion of AVs (Koo et al., 2015; Rödel et al., 2014). Moreover, Brell et al. (2019a, 2019b) showed that novel driving technologies are perceived as significantly less risky with increasing experience, and that experience significantly affects PRs for both connected vehicles (i.e., vehicles that use technology to communicate with each other; connect with traffic signals, signs, and other items on the road; or obtain data from a cloud) and autonomous vehicles (i.e., vehicles that use technology to steer, accelerate, and brake with little to no human input). In addition, people who drive more often and have experience with technology are more likely to choose AVs, and their choice depends less on how many of their friends have already adopted AVs (Bansal et al., 2016; C. Lee, B. Seppelt, et al., 2019). In contrast, robotic technologies were likely to be more appealing to older users, especially those who were less experienced with technological devices and could benefit more from the adoption of robotic services (Di Nuovo et al., 2018). This was explained by experienced users' reluctance to learn new procedures and their preference for performing familiar tasks unless they could see a strong improvement with the new technology. However, previous interactions with robots have also been shown to significantly reduce negative attitudes towards robotic technologies (Bartneck et al., 2007).

Summary

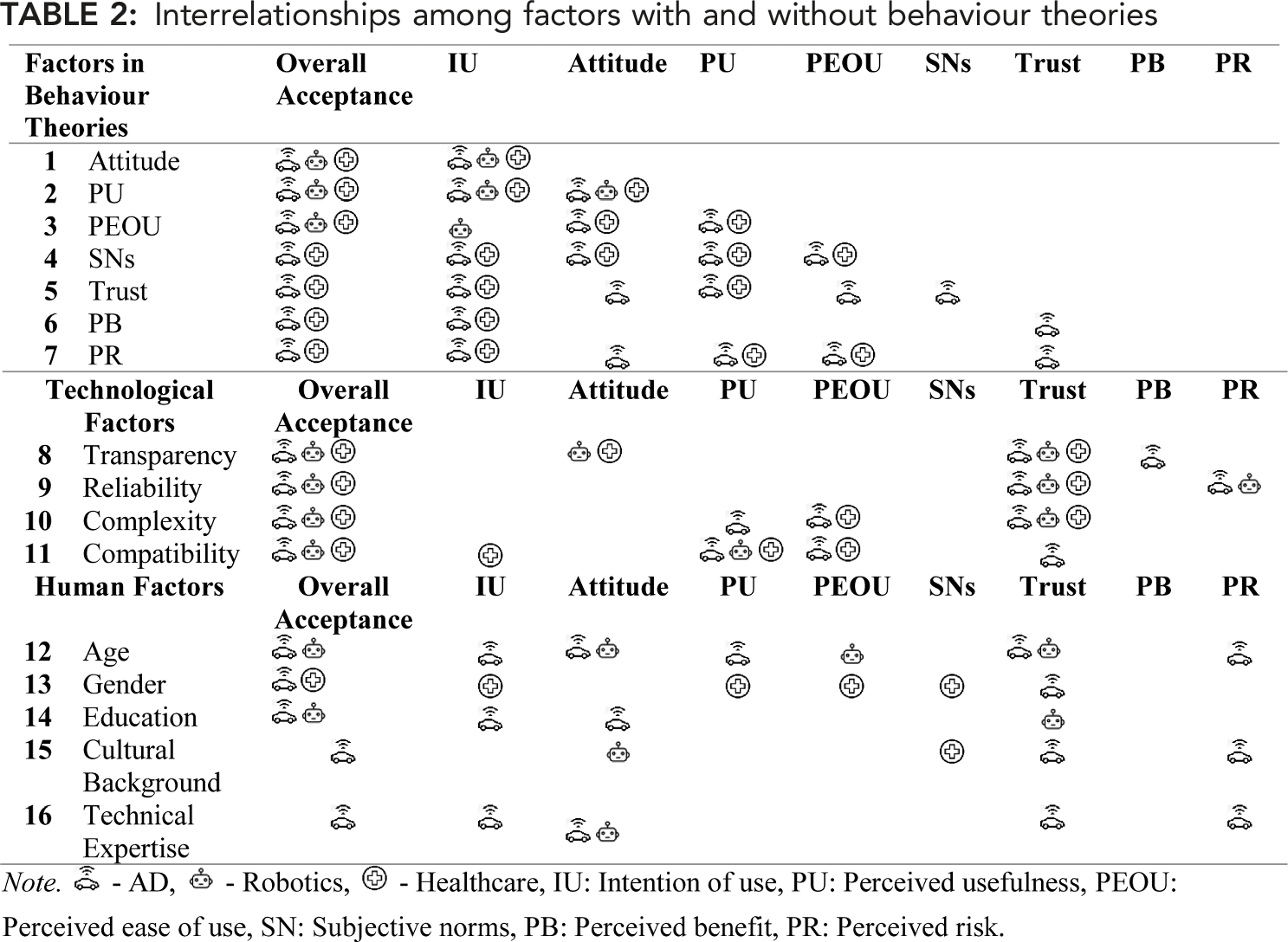

Interrelationships among factors with and without behaviour theories

Note that Table 2 does not report statistical outcomes; rather, it summarises the findings identified in this review.

Discussion

This systematic review of the literature examined the factors that influence the acceptance of AI-infused systems. The analysis included an evaluation of the relationships among the factors across different application areas. Specific key findings and research implications are discussed below.

Key Findings

The review evaluated behavioural, technological, and human factors in relation to their use in predicting technology adoption. The results indicate that many researchers have explored the impact of behavioural factors on the adoption of AI technologies, and these factors have been found to have the strongest influence on technology adoption. Specifically, many studies identified attitude and PU as the most influential factors on adoption intention. Beyond the constructs of traditional acceptance theories, this review highlighted that trust and PR also play significant roles in the adoption of AI systems. In particular, unlike earlier computer technologies, AI technologies make extensive use of users' personal information and therefore pose privacy risks. Consequently, privacy challenges must be considered in the implementation of any AI technology. Nevertheless, research shows that challenges related to PR can be addressed by improving users' trust in technology (Panagiotopoulos & Dimitrakopoulos, 2018).

In general, the findings on behavioural factors confirm those reported in prior reviews of the acceptance of AI devices in AD (Jing et al., 2020). However, this review also revealed the effects of other factors on user behaviour towards AI-infused systems, specifically those associated with the properties of the technology and with human factors. In particular, the findings revealed which technological factors have a significant impact on human behaviour in relation to technology adoption. Thus, by adjusting the relevant technological parameters, it is possible to influence behavioural factors; conversely, the causes of changes in user behaviour can be identified by linking them to these technological factors. For example, user distrust of AI systems can be overcome through technology-related factors, such as increasing transparency (de Visser et al., 2020; Weitz et al., 2020), reliability (Brell et al., 2019a), and compatibility (Man et al., 2020), and reducing complexity (Fan et al., 2020). Compatibility also leads users to perceive AI systems as useful and easy to operate (Man et al., 2020). However, most current technology acceptance studies overlook the effects of technological parameters.

Human factors are also frequently neglected in research on the adoption of AI systems. While the unified theory of acceptance and use of technology emphasises the need to consider the effects of certain human factors, many TAM-related studies do not account for these effects. However, the present review confirms that human factors, especially age and gender, frequently influence the adoption of AI technology. Specifically, older adults and women should be regarded as target user groups whose technology acceptance needs to be increased, because, as several researchers noted, users in these groups tend to be more reluctant, less interested, and relatively less confident in using new technology (Bansal et al., 2016; Deb et al., 2017; Haboucha et al., 2017; Hohenberger et al., 2016; Scopelliti et al., 2005; Wolters et al., 2009; Zhang et al., 2014, 2019a). Since older adults are typically more concerned about the reliability and usefulness of technology than younger adults are, these concerns indicate which features need to be considered to improve acceptance among this user group (Deb et al., 2017). Likewise, less educated and less experienced users tend to have less trust in AI technologies, so identifying methods to increase trust would facilitate technology adoption among these users. Such trust can be gained through improvements to the technological factors mentioned above. Finally, a relationship was also found between cultural background and PR. Accordingly, user reactions regarding PR can be predicted from cultural background, and the necessary measures can be taken against challenges that hinder technology adoption. Furthermore, SI also needs to be considered, as the effects of SNs appear to be stronger in collectivistic cultures (Man et al., 2020; Ye et al., 2019).

There were no significant differences in the impact of behavioural factors on technology adoption across the selected application areas. It can therefore be concluded that the factors related to user attitudes towards technology do not change according to the application area, so the same behavioural factors can be used to predict technology adoption across application areas. However, unlike in the other application areas examined, no effects of PB and PR factors on technology adoption were observed for robotics. This may be because users already perceive robot technologies as beneficial to society and do not think robots pose any significant risks; alternatively, it may reflect the relatively small number of studies on PR factors in the robotics field. In contrast, privacy issues have become more serious in healthcare, since the use of personal information related to medical history or medical checks by third-party companies might directly endanger human life (Balta-Ozkan et al., 2013). Likewise, higher concerns related to privacy risk were observed for AD, as users may be concerned that other people could take control of AVs by hacking the system (Zhu et al., 2020). Thus, privacy risks should be carefully considered when implementing both AD and healthcare technologies.

Regarding technological factors, the effects of transparency and compatibility on user behaviour did not vary by application domain, implying that these two factors should be considered in technology adoption regardless of the application field. However, complexity was not found to be an important factor influencing user behaviour towards AVs in AD. This has been attributed to AD users not considering driving to be a complex task (Choi & Ji, 2015; J. Lee, D. Lee, et al., 2019). By contrast, there is evidence that potential users of AI systems in healthcare and robotics are more concerned about the ease of operation of technology (Ezer et al., 2009). This finding suggests that technologies used in healthcare and robotics should provide an effortless user interface. In addition, because little research has examined the impact of reliability on the adoption of robot technology, this factor requires further in-depth investigation.

Regarding human factors, age, cultural background, and gender were found to be important across application areas, implying that these three factors should be considered in relation to the acceptance of AI technologies in any application area. However, no gender effects on technology adoption were found in healthcare, indicating that adoption intention for medical technologies depends less on the user's gender. The findings of this review demonstrated that education is important in both AD and healthcare, but not in robotics, suggesting that the adoption of robot technologies depends less on the user's education level. Regarding technical expertise, prior experience with the technology can facilitate the adoption of, and increase positive attitudes towards, AVs and robot technologies. However, no impact of experience was observed in healthcare, implying either that technology acceptance is not associated with healthcare professionals' experience with the technology or that no reviewed study identified such an effect.

Implications

Based on the key findings of previous research, this study has several important implications. First, it provides a comprehensive overview of factors impacting the acceptance of AI-infused systems. On the basis of the review's results, this study suggests that developers, policymakers and researchers consider technological and human factors along with factors related to user behaviour theories. Specifically, this review highlights the significant effects of technological factors on user behaviour and the dependence of technology adoption on user characteristics (e.g. age, gender, education).

Second, this review suggests extending traditional TAMs to include PR when determining the adoption of AI technologies. Unlike conventional computer technologies, AI devices extensively use personal information to improve the user experience of the system, which creates challenges for AI designers and developers. Thus, this study suggests reducing PPR among users by giving them more control over how providers use their data and by ensuring safety through third-party inspections.

Third, this review showed how the factors affecting technology acceptance vary by application area. Accordingly, among the selected technological factors, this review recommends considering compatibility and transparency in every application area, reliability in healthcare and AD, and complexity in robotics to improve technology acceptance. In particular, user trust in AI can be improved by addressing specific technological factors. In addition, to predict acceptance among different user groups, developers should not overlook the effects of human factors in any application area. The following specific considerations can serve to increase the intention to use AI technologies:

- Ensuring privacy protection, increasing the system's ease of use for older adults, and providing more system functionality for younger people, because older people have more concerns about their private information being used by manufacturers and about the difficulties associated with novel technologies, whereas younger people are concerned with system functionality;
- Increasing women's trust in AI technologies, taking into account women's greater scepticism towards technology;
- Considering users' cultural background (e.g. crowd mentality, collectivistic culture, authoritarianism) and government support for AI technologies when implementing the technology;
- Offering affordable AV options and reducing PSR for users in underdeveloped countries, and introducing cars that make it easy to take control of the vehicle while reducing PPR for users in developed countries;
- Supporting users with less technology experience by making positive comments about technology to reduce their PR regarding AI, since they are more likely to depend on others' opinions before using the technology; the media can also play an essential role in encouraging new users to adopt AI technology;
- Identifying methods to increase trust in AI among users with low educational levels, such as improvements to specific technological factors.

Conclusion

As AI devices become more widespread, research into their adoption is increasing. Unlike previous research, this study focused on investigating the factors affecting the adoption of AI-infused systems by conducting a systematic review of the literature and identifying relationships among the factors. We confirmed that the intention to use AI technologies is influenced not only by factors in behavioural theories but also by human and technological factors. Technological factors showed a significant correlation with UI and behavioural factors. Therefore, including them in integrated TAMs might serve to predict the acceptance of AI-infused systems more precisely and improve their adoption. No significant differences were observed across application areas; however, some area-specific parameters should be considered. For example, while complexity is a major issue for users of robotic technology, privacy risk is important in healthcare and AD. As for human factors, as expected, it is mainly older adults, women, those with low education levels, those inexperienced with technology, and members of relatively collectivistic cultures who have difficulty adopting AI technology. It is therefore necessary to identify the specific issues associated with these groups. In particular, examining the moderating effects of human factors can help reveal the specific challenges faced by each user group. Indeed, understanding individual differences in technology acceptance serves to increase the inclusiveness of technology and to identify more targeted or complex user groups.

Limitations and Future Research Directions

This study has some limitations. One limitation is that relatively few factors have been studied in relation to the adoption of AI in different application areas. In addition, the number of studies reviewed was limited by the selected search keywords and could be extended by using different keywords. The limited literature, in turn, meant that relevant research was available for relatively few application areas. Further studies could conduct a large-scale review including research from more fields, or experiment-based research in a specific field, to examine the findings of this literature review.

In line with the main objective of this study, the key factors that may affect the acceptance of AI devices, as well as the effects of technological and human factors on factors in behaviour theories and their correlations, were identified and confirmed across different application areas, providing a conceptual basis for further studies. However, the implications and findings of this research need to be validated through experiments or surveys. Future research should aim to develop an enhanced TAM by integrating the factors studied in this review, in order to validate the present findings and implications.

Key Points

- Factors in behaviour theories, technological factors, and human factors are all significant in investigating the acceptance of AI-infused systems, according to findings in existing studies.
- PU and attitude were found to be the most important factors influencing the adoption of AI-infused systems in all application areas studied.
- The effects of factors related to user behaviour can be influenced by technological factors.
- There are significant differences in the way users adopt AI devices depending on human factors (e.g. younger vs. older, male vs. female, educated vs. uneducated, experienced vs. inexperienced, and users from different cultures).
- Further research is needed to validate the findings regarding relationships among factors and the direct and indirect effects of variables on the acceptance of AI-infused systems.

Footnotes

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: The Basic Research Program through the National Research Foundation of Korea (NRF) funded by the MSIT.

Ulugbek V.U. Ismatullaev is an MA student in the Industrial Engineering Department at Kumoh National Institute of Technology (KIT), Gumi, South Korea. He completed his BA at Fergana Polytechnic Institute (FerPI), Fergana, Uzbekistan, in 2018, where he studied automation and control systems.

Sang-Ho Kim is a Professor in the Industrial Engineering Department at the Kumoh National Institute of Technology (KIT), Gumi, South Korea. He obtained his PhD in industrial engineering at Pohang University of Science and Technology (POSTECH), Pohang, South Korea, in 1995.