Abstract

The success of teachers is tied to their effectiveness in managing student behavior. In this meta-analysis, we identified 49 single-case-design studies that evaluated the effectiveness of teacher training on their implementation of behavioral support strategies. Training was most often provided in a one-on-one format (n = 18) and included ongoing coaching (n = 20). Thirty-three of the 49 designs met What Works Clearinghouse standards with or without reservations. The overall between-case standardized mean difference effect size was d = 1.50. We analyzed and grouped teacher- and student-level outcomes as a result of training into five domains: (a) teacher-delivered praise (d = 1.94), (b) teacher desirable behavior (e.g., treatment fidelity; d = 1.22), (c) teacher undesirable behavior (e.g., reprimands; d = 0.87), (d) student desirable behavior (d = 1.88), and (e) student undesirable behavior (d = 1.22). Across all studies, the combined nonoverlap of all pairs scores ranged from 0.37 to 1.0 (M = 0.866). We discuss future areas of research as well as implications for teacher training in behavioral support implementation.

Managing classroom behavior is one of the most challenging demands of the teaching profession (e.g., Moore et al., 2017) yet also one of the most crucial skills for successful teaching (Simonsen et al., 2008). Teacher effectiveness in behavioral support is made even more urgent due to poor academic and social trajectories (e.g., reduced graduation rates, interaction with the justice system, substance abuse; Kauffman & Landrum, 2013) of students who engage in chronic and challenging behavior. Across populations of students with and without disabilities, these outcomes are most common for students with emotional and behavioral disorders (EBD; U.S. Department of Education, 2018). Teachers who frequently interact with and support students who engage in challenging behavior, particularly those with or at risk of EBD, are at an increased risk of occupational stress and turnover (Gilmour & Wehby, 2020).

General education teachers report that students who engage in high rates of externalizing and internalizing behavior are the most difficult to educate in inclusive classroom environments (Harrison et al., 2019). Nonetheless, school districts remain obligated to provide a free and appropriate public education in a student's least restrictive environment, which is often an inclusive general education setting. In fact, the percentage of students with disabilities who are included in general education settings is increasing. For example, from 2017 to 2019, the percentage of students with EBD who received at least 80% or more of their instruction in the general education setting increased from 48.5% to 50.8% (U.S. Department of Education, National Center for Education Statistics, 2020). Although this increase represents a positive trajectory for this population of students, it also underscores the need to support general education teachers who may not have adequate training or experience managing problematic behavior using evidence-based strategies (Samudre et al., 2022; Stormont et al., 2011). Improving teachers’ effectiveness in behavioral support implementation may have a bidirectional benefit by increasing proximal and distal outcomes for both teachers and students. For example, teacher-provided behavior-specific praise (BSP) has been shown in the literature to positively impact proximal student outcomes (e.g., on-task behavior, reductions in disruptive behavior; Gage et al., 2017). In another example, McDaniel et al. (2022) demonstrated that schoolwide implementation of targeted behavioral supports (i.e., Tier 2) resulted in reductions in the use of disciplinary practices (e.g., office discipline referrals [ODRs], in-school suspensions) at the school level. 
ODRs, a valid and practical distal measure for students who are at risk of being identified as having a disability (e.g., EBD), are considered standard practice in schools when externalizing behaviors occur and are a common data source for nomination to more intensive tiers of support. To this end, implementation of targeted behavioral support in inclusive settings can be linked to improvement in student-level distal outcomes (Bruhn et al., 2014). In a separate example, schoolwide implementation of positive behavior interventions and supports was distally linked to reductions in teacher burnout and increases in teacher work satisfaction (Ross et al., 2012). Notably, the problematic outcomes for students with EBD continue (Lloyd et al., 2019), which suggests that the status for this population has remained unchanged since the conception of a position on the state of special education for students with EBD (Peacock Hill Working Group, 1991). A seemingly obvious nexus for improving student proximal and distal outcomes is improving teacher implementation; therefore, research on teacher implementation as it relates to improvements in distal outcomes for students with and without disabilities who engage in challenging behavior is crucial. This line of inquiry can be integral to altering trajectories for students with EBD and improving distal outcomes (Freeman et al., 2019).

One way to address teacher challenges with behavioral support, and improve collateral student outcomes, is through professional development that integrates high-quality training strategies to improve implementation of behavioral interventions (Hirsch et al., 2021). In addition to increasing knowledge, training should be designed to promote an increase in discrete teacher behaviors (e.g., BSP) or an increase in treatment fidelity (i.e., the degree to which an intervention is implemented as intended; Yeaton & Sechrest, 1981). There is limited research that suggests traditional one-day workshops result in successful generalization and maintenance of target skills (Simonsen et al., 2020). Improved training efforts may result in increased adherence to federal legislation that demands educator implementation of evidence-based and research-based practices to promote positive outcomes for students with disabilities (Individuals With Disabilities Education Improvement Act [IDEA], 2004).

Previous Research on Teacher Training

Research continues to confirm that maximizing teachers’ treatment fidelity is essential in achieving intended results of student-level interventions (Stormont et al., 2015; Stormont & Reinke, 2014). For example, Owens et al. (2020) found that as a teacher's treatment fidelity decreased, so did the student's on-task behavior. Conversely, when the teacher implemented the intervention with higher levels of fidelity, student on-task behavior increased as well. However, research on effective professional development and implementation science (e.g., Fixsen et al., 2005) confirms that fidelity of implementation is influenced by multiple factors, such as the design of initial training and the amount of follow-up support. In a comprehensive review of the literature on classroom management professional development, researchers identified initial didactic training, ongoing coaching, and performance feedback as the most common training components (Wilkinson et al., 2020).

To make recommendations for practice, further research is needed to determine the degree to which these strategies are associated with increased teacher fidelity and the degree to which increased fidelity translates into improved student outcomes. This can be achieved through experimental research that evaluates the effectiveness of teacher training and simultaneously measures both teacher implementation and student-level outcomes. Single-case research design (SCRD), a commonly used method in applied research, provides session-by-session data and would allow for a formative analysis of the relation between fidelity and student outcomes, which can help optimize behavioral support training for general education teachers. Ultimately, training should be informed by high-quality sources of science (Maggin et al., 2013). Applying design and evidence standards to existing research, such as those developed by What Works Clearinghouse (WWC; 2020), can help identify training practices with robust evidence in improving educators' skills and treatment fidelity.

Researchers have summarized the existing literature on initial training strategies and follow-up support. For example, Kirkpatrick and colleagues (2019) conducted a review on behavioral skills training, a multicomponent training intervention that includes didactic instruction, modeling, rehearsal, and feedback immediately after the opportunity for rehearsal. Behavioral skills training is distinguished from other initial training interventions given that it integrates an opportunity for modeling and rehearsal and can be easily adapted to small-group or one-on-one formats. Results indicated that behavioral skills training is effective for promoting initial acquisition of a variety of skills (e.g., incidental teaching). A meta-analysis conducted by Brock et al. (2017) also found that behavioral skills training and the singular provision of modeling and opportunities for rehearsal were associated with higher treatment fidelity. Results from these reviews provide an additional basis for enhancing initial training. However, the extent to which these results translate to behavioral support training for general education teachers is unknown.

Results from a review conducted by Hirsch and colleagues (2021) indicated that professional development was effective in increasing novice teachers’ classroom management skills. Many of the studies adhered to a practice-based professional development framework involving an opportunity for teachers to receive content, rehearse targeted skills in the natural environment (e.g., classroom), and receive feedback on implementation (Hirsch et al., 2021). When evaluated using the Standards for Evidence-Based Practices in Special Education (“Council for Exceptional Children,” 2014)—a collection of quality indicators to evaluate the methodological features of SCRD and group experimental studies (Cook et al., 2015)—all SCRDs included in the study met 100% of the quality indicators, which indicates sufficient methodological rigor. Given that characteristics of initial training varied (e.g., dosage, use of technology, setting, format), limited recommendations could be made about the specific design and delivery of initial training.

Fallon et al. (2015) evaluated the empirical support for performance feedback using WWC design and evidence standards. Performance feedback is a cost-, time-, and resource-efficient support strategy and has been shown to result in improved implementation of educational practices (Sanetti et al., 2011). Fallon and colleagues determined the empirical support was sufficient to consider performance feedback as an evidence-based practice. Researchers have continued to experimentally evaluate the effectiveness of performance feedback on improvements in treatment fidelity including more novel technology-based variants, such as bug-in-ear technology (e.g., Owens et al., 2020). In a more recent review, Sinclair et al. (2020) found that performance feedback provided during intervention sessions via technology is also an evidence-based practice per Council for Exceptional Children (CEC) quality indicators.

Stormont et al. (2015) summarized research that targeted ongoing coaching (i.e., one-on-one support following initial professional development) to increase teachers’ use of social-behavioral interventions. Results indicated that it is a highly promising method to increase teacher implementation, but direct relationships between the effectiveness of coaching and improvements in teacher and student outcomes could not be determined given that it was not an independent variable. In a more recent review, Ennis and colleagues (2020) reviewed the evidence for ongoing coaching to better understand how it specifically supports educator use of BSP. Studies were assessed through the CEC quality indicators, and results indicated that coaching educators to increase BSP is an evidence-based practice. However, its effectiveness on teacher implementation of a collection of behavioral support strategies is unknown.

Previous reviews provide important guidance on teacher training, but questions on how best to support teachers in implementing behavioral support remain. First, reviews that exclusively focus on a single training or support strategy are limited in how they inform future training, considering such practices rarely occur in isolation. For example, performance feedback is often integrated into ongoing coaching (e.g., Gage et al., 2017). A review that more broadly examines a diverse set of training practices can provide guidance that is more consistent with how in-service professional learning is provided. Second, a close look at a homogenous group of teachers by certification area (e.g., general education teachers) can provide important information on how best to support that population in providing effective behavioral support. Specifically, contextual features for general education teachers and their classrooms, such as adult-to-student ratios, instructional time, contrasting beliefs, and previous training in behavioral support, differ when compared with teachers in other certification areas (e.g., special education). Thus, substantive differences in how general education teachers are trained to implement behavioral support strategies may result. Finally, previous reviews provide little information on the differential effects of specific training and support strategies, which are essential for researchers to understand (Hirsch et al., 2021). For example, Ennis et al. (2020) established that ongoing coaching is effective in supporting educator use of BSP, but the efficacy of coaching compared with other training strategies is unknown.

Samudre et al. (2022) systematically analyzed the literature on what strategies general education teachers have been trained to implement, how they were trained, and the methodological rigor and evidence of effectiveness. With specific regard to training components, results indicated that the two most common characteristics were initial training provided in a one-on-one format and the provision of ongoing coaching. Researchers also applied WWC guidelines to a subset of studies that measured changes in teacher behavior in the context of the experimental design (n = 49 SCRDs; n = 11 group designs). Fifty-five percent of SCRDs and 81.82% of group designs met WWC standards with or without reservations. A total of 27 designs had moderate to strong evidence of effectiveness (n = 23 SCRDs; n = 4 group designs). Limitations were present in Samudre et al. First, the review did not analyze the differential effects of training strategies on teacher implementation for studies that experimentally measured this variable. Additionally, results for SCRDs were informed by visual analysis alone. Using WWC (2020) standards and reporting effect sizes for SCRD studies can help mitigate the risk of subjective reporting of results through visual analysis (Shadish et al., 2015). One of the most promising effect sizes for synthesizing single-case-design research is a between-case effect size that is calculated using a multilevel model that nests time within participants (Shadish et al., 2015). This approach avoids the limitations of overlap methods (e.g., Wolery et al., 2010) and yields a d-type estimator that can be directly compared with Cohen’s d from group-design research. Finally, student outcomes were not included in Samudre et al.'s review. By assessing the effect of teacher implementation on student behaviors, providers can evaluate the true impact of training (Brock et al., 2017).

Purpose and Research Questions

A critical review and analysis of relevant literature can help optimize training for general educators who struggle with managing challenging behavior (Moore et al., 2017; Stormont et al., 2011). The purpose of the present study is to respond to gaps in the general educator behavioral support training literature by addressing the limitations outlined in Samudre et al. (2022). Specifically, we evaluated the differential effects of included training components on teacher behavior, we calculated effect sizes rather than relying on visual analysis alone, and we evaluated student-level outcomes. In the present review, we replicated search procedures outlined in Samudre et al. The screening procedures in the present review differ from Samudre et al. due to additional criteria related to effect size calculation. Specifically, we address the following research questions: (a) What is the effect of training on general educator use of praise, increase in desirable behaviors (e.g., treatment fidelity), and decrease of undesirable behaviors (e.g., reprimands)? (b) What is the effect of training on student-level desirable and undesirable behaviors? (c) How do teacher-level outcomes differ based on training strategies used and study quality?

Method

Inclusion Criteria

Studies included in this review met four criteria adapted from Samudre et al. (2022) to identify relevant studies. First, studies were published in a peer-reviewed journal after 2004. In alignment with WWC (2020), systematic reviews should focus on evidence from the previous 20 years. Second, intervention involved a teacher-level training focused on a student-level intervention for problematic or prosocial behavior (e.g., being on task, academic engagement). We defined student-level intervention as a teacher-implemented behavioral support strategy intended to reduce students’ undesirable behavior and promote desirable behavior. Third, at least 50% of trainees or primary implementers were in-service general educators located in the United States. Studies met this criterion if the authors stated participants held teaching degrees or met state standards for certification. Fourth, at least one dependent variable was a measure of teacher fidelity to a student-level intervention or a measure of discrete teacher behavior, and the dependent variable was directly observed in the context of an SCRD. We defined discrete teacher behaviors as behaviors that have a clear beginning and end, such as opportunities to respond and BSP. To facilitate single-case effect size calculations, we required studies that used ABAB (i.e., withdrawal) designs to include at least three contrasts per design and three total designs (i.e., participants), and we required studies that used multiple-baseline or multiple-probe designs to stagger intervention across at least three participants. Due to the purposeful addition of the design and effect size–related criteria, many studies that were included in Samudre et al. were excluded in the current review.
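The design-related portion of the fourth criterion can be illustrated as a simple eligibility check. The Python sketch below is only an illustration under stated assumptions: the function name, argument names, and design labels are hypothetical and are not drawn from the review's codebook.

```python
def meets_design_criterion(design_type: str, n_participants: int, n_contrasts: int = 0) -> bool:
    """Check the design-related inclusion rule described in the text.

    All names here are hypothetical; the rule itself follows the paragraph above.
    """
    if design_type == "ABAB":
        # Withdrawal designs: at least three contrasts per design
        # and at least three designs (i.e., participants).
        return n_contrasts >= 3 and n_participants >= 3
    if design_type in ("multiple-baseline", "multiple-probe"):
        # Intervention must be staggered across at least three participants.
        return n_participants >= 3
    # Any other design type is treated as ineligible for effect size calculation.
    return False
```

For instance, under these assumptions a multiple-probe design staggered across three teachers would pass the check, whereas an ABAB design with only two participants would not.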

Search and Screening Procedures

Database and Hand Searches

We replicated search procedures in Samudre et al. (2022) by conducting a concurrent search of Academic Search Complete, ERIC, PsycINFO, and Medline Full Text databases within EBSCO as our primary database using the terms (“general education teacher” OR “classroom teacher” OR “regular education teacher”) AND (“behavior management” OR “classroom management” OR “behavior*”) AND (“intervention*” OR “training” OR “professional development” OR “development” OR “coaching” OR “consultation”). These search terms were developed by the research team to include synonyms for the relevant population (i.e., general educators), intervention (i.e., training), and outcome (i.e., behavior management), and Boolean operators were included to increase the likelihood of relevant results. We limited results to scholarly journals, academic journals, and years 2019 to 2021 to capture articles published after Samudre et al.'s search. After duplicates were removed by EBSCO and EndNote file management software, this search process resulted in 449 articles for review. Supplemental Figure 1 depicts this search process and the number of studies yielded from each stage of the database search.

Next, we hand searched the four most represented journals from the initial database search in Samudre et al. (2022) for studies published from January 2020 to January 2021. This included Journal of Behavioral Education, Journal of Emotional and Behavioral Disorders, Journal of Positive Behavior Interventions, and School Psychology Review. The first author reviewed the titles and abstracts of all issues published during that time and created a spreadsheet with the titles and abstracts of potentially relevant studies.

Record Screening

We used a three-stage screening process to identify records that met all inclusion criteria for this review. The first author screened the titles and abstracts of the 449 total records to remove any irrelevant studies. The second author double screened a randomly selected 20% of studies, and interrater agreement (IRA) was 94.69%. If either author indicated the record was relevant, we included it in the next stage (n = 61 studies).

Second, we screened the full text of the 61 records using a spreadsheet that listed all inclusion criteria in hierarchical order. We stopped screening after a study failed to meet any criteria in the hierarchy. The first author screened all records, and the second author double screened a random selection of 20 records (32.79%). Agreement on the final inclusion decision was 90%, and we resolved all disagreements through consensus coding. As a result, we included seven additional studies from database and hand searches.

Third, the first author screened the 28 SCRD studies included in Samudre et al. (2022) using our updated inclusion criteria, and the second author double screened nine studies (32.14%; IRA = 100%). Of those 28 studies, 19 studies met criteria for the current review. This resulted in a total of 26 studies included in this review.

Data Collection and Extraction

To remain transparent and congruent with contemporary standards for research, we uploaded our complete codebook for all descriptive data, moderators for the metaregression, between-case standardized mean difference (BC-SMD) database for effect size calculation, and all supplemental files to the Open Science Framework online repository, which can be found at https://osf.io/mw45x/?view_only=d9c1fa305d3f47e7976eff7d0bda1dab.

Descriptive Data

The first and second authors developed a codebook to extract descriptive data about (a) teacher and student participants (i.e., number, grade level, special education status, number of students per disability category), (b) the student-level intervention that teachers were trained to implement, (c) the context of implementation (e.g., whether the intervention targeted classwide or individual student outcomes), and (d) reported training characteristics and strategies (i.e., format, length, training components). Training components included didactic instruction, modeling, opportunity for rehearsal, trainer-provided feedback, and ongoing coaching. We coded for variants for each training strategy (e.g., video model, in vivo rehearsal, bug-in-ear technology for performance feedback). We coded whether a study used a combination of different variants for a reported strategy (e.g., live model with a video model) by coding for all reported variants. A master's-level Board Certified Behavior Analyst with experience in teacher training was the primary coder for all studies. Prior to coding for this review, the first author reviewed all coding procedures, and practiced coding with the primary coder until meeting 90% agreement across two studies. The first author then double coded two randomly selected studies (29%). IRA was 91% and disagreements were resolved through consensus coding.

Quality and Rigor

We coded the quality and rigor of all studies using the most recent version of WWC (2020). The second author developed a coding spreadsheet that listed each design (i.e., ABAB or multiple-baseline figure) related to a teacher or student outcome (n = 76 designs). We updated the initial set of 19 studies from Samudre et al. (2022) to include quality of designs with student outcomes and to include new WWC standards for teacher outcomes (e.g., for multiple-probe designs). WWC standards were assessed at the study level (i.e., availability of data, interobserver agreement collected for 20% of sessions in each phase and meeting minimum thresholds of 80% agreement per dependent variable) and the design level (i.e., minimum number of data points per condition, at least three attempts to demonstrate an effect at three separate points in time, and if the design was concurrent). We used additional standards for multiple-probe designs (i.e., vertical overlap for cases during initial baseline sessions, the availability of probe data for each case just before introducing the independent variable, and probe data for cases not in intervention when another case receives intervention or reaches criterion). The first and second authors refined the quality procedures by double coding sets of four studies and discussing disagreements until achieving 90% IRA (eight total studies required). Then each author coded half of the remaining studies, overlapping on six studies (23.08%). Point-by-point IRA was high (92.45%), and discrepancies were resolved through consensus discussions.

Teacher and Student Outcome Data

A doctoral student in special education (fourth author) was trained to use Plot Digitizer to extract teacher and student-level data from images of each SCRD. Data extraction consisted of uploading screenshots of all eligible graphs and separate screenshots of each tier of the design into Plot Digitizer. Training consisted of a behavioral skills training package (i.e., didactic, model, rehearsal, and feedback). Following initial training, the first and fourth authors independently extracted data from a sample study using Plot Digitizer. We defined agreements as follows: (a) for percentage or count variables, as y-axis values within two points of each other and (b) for rate variables (e.g., BSP), as y-axis values within two tenths of each other. Point-by-point IRA for the training set was 100%. Following training, the first author completed secondary data extraction across 23% of studies (n = 6; IRA = 98.20%).
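The agreement rule for extracted data points can be sketched in a few lines of Python. This is a minimal illustration, not the procedure the authors ran: the function name and example values are hypothetical, and treating "within" as inclusive of the boundary is an assumption.

```python
PERCENT_OR_COUNT_TOLERANCE = 2.0  # "within two points" for percentage or count variables
RATE_TOLERANCE = 0.2              # "within two tenths" for rate variables (e.g., BSP)


def point_by_point_agreement(primary, secondary, tolerance):
    """Return the percentage of paired y-axis values that differ by at most `tolerance`.

    Assumes both coders extracted the same number of points in the same order.
    """
    if len(primary) != len(secondary) or not primary:
        raise ValueError("Both coders must supply the same nonzero number of points.")
    # A small epsilon guards against floating-point noise at the boundary (an assumption).
    agreements = sum(
        1 for a, b in zip(primary, secondary) if abs(a - b) <= tolerance + 1e-9
    )
    return 100.0 * agreements / len(primary)
```

With the hypothetical percentage values `[50, 60, 70, 80]` and `[51, 60, 75, 80]`, three of the four pairs fall within two points of each other, so the function returns 75.0.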

Analytic Strategies

In this study, we analyzed the data using the d-Hedges-Pustejovsky-Shadish effect size, a BC-SMD (Shadish et al., 2014), which we calculated with the macro developed by Marso and Shadish (2014). The BC-SMD calculation uses a hierarchical model to produce a between-subjects effect size for multiple-baseline-across-participant designs with at least three tiers or withdrawal designs (e.g., ABAB) that include at least three participants with at least three condition changes between baseline and intervention per participant. Studies that did not meet this criterion were excluded from the review during our screening process.

The BC-SMD effect size has advantages over within-case effect sizes (e.g., overlap methods), including providing a d-type estimator that is theoretically comparable to group design studies, accounting for autocorrelation, and allowing for power analysis (Shadish et al., 2015). Furthermore, this particular between-case approach is more straightforward than other between-case methods that use a multilevel model. With the BC-SMD, Level 1 analyzes differences in effects between phases, and Level 2 makes comparisons between cases (Shadish et al., 2014).

The BC-SMD effect size does have apparent limitations. For example, the design-related criteria for BC-SMD effect size calculation (e.g., reversal or multiple-baseline designs across multiple participants) can result in the exclusion of otherwise eligible studies. Additionally, there are no benchmarking standards for BC-SMD, thus limiting comparability to Cohen's d. In response to these limitations, general guidance recommends using multiple effect size statistics when conducting meta-analyses for SCRDs to provide a better description of the characteristics of the data for readers (Vannest et al., 2018). We calculated nonoverlap of all pairs (NAP; Parker & Vannest, 2009) in addition to the BC-SMD. NAP is a nonparametric measure that allows for comparisons between baseline and intervention data by scoring whether data points overlap or are the same value between conditions. We calculated all NAP values using an online calculator for single-case-design research (Vannest et al., 2016).
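The pairwise comparison underlying NAP can be illustrated with a short Python sketch. It follows the commonly stated formula (each baseline point is compared with each intervention point, an improving pair counts as 1 and a tie as 0.5, and the total is divided by the number of pairs). The function name and the `increase_is_improvement` argument are hypothetical, and the sketch makes no claim about how the online calculator is implemented.

```python
def nonoverlap_of_all_pairs(baseline, intervention, increase_is_improvement=True):
    """Compute NAP for one baseline-intervention comparison.

    Set `increase_is_improvement=False` for behaviors intended to decrease
    (e.g., reprimands), so that lower intervention values count as improvement.
    """
    total_pairs = len(baseline) * len(intervention)
    if total_pairs == 0:
        raise ValueError("Each phase needs at least one data point.")
    score = 0.0
    for a in baseline:
        for b in intervention:
            if b == a:
                score += 0.5  # tied pair
            elif (b > a) == increase_is_improvement:
                score += 1.0  # pair showing improvement (no overlap)
    return score / total_pairs
```

For hypothetical baseline values `[1, 2, 3]` and intervention values `[3, 4, 5]`, eight of the nine pairs show improvement and one is tied, giving 8.5 / 9, or approximately 0.94.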

We chose to conduct our meta-analysis of the effect sizes using a random-effects model. Fixed-effect models are appropriate only when there is reason to believe that all studies are functionally identical (Borenstein et al., 2010). This assumption is not met in the present meta-analysis, in which researchers used different training methods to train teachers to use different practices. Therefore, the more appropriate model was a random-effects model that assumes that studies differed in ways that likely impacted the results. This approach also allows for greater generalization of the results (Borenstein et al., 2010).
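To make the distinction concrete, the following Python sketch pools study-level effect sizes under a random-effects model. It assumes the DerSimonian-Laird estimator of between-study variance, which is one common choice; the text does not state which estimator was used, and the function name and any input values are hypothetical.

```python
import math


def random_effects_mean(effects, variances):
    """Pool effect sizes with a DerSimonian-Laird random-effects model (assumed estimator).

    Returns (pooled mean, standard error, tau squared).
    """
    k = len(effects)
    if k == 0 or k != len(variances):
        raise ValueError("Provide one sampling variance per effect size.")
    # Fixed-effect (inverse-variance) weights and pooled mean.
    w = [1.0 / v for v in variances]
    sum_w = sum(w)
    fixed_mean = sum(wi * yi for wi, yi in zip(w, effects)) / sum_w
    # Cochran's Q measures heterogeneity around the fixed-effect mean.
    q = sum(wi * (yi - fixed_mean) ** 2 for wi, yi in zip(w, effects))
    c = sum_w - sum(wi ** 2 for wi in w) / sum_w
    # Between-study variance, truncated at zero.
    tau2 = max(0.0, (q - (k - 1)) / c) if c > 0 else 0.0
    # Random-effects weights add tau squared to each study's sampling variance.
    w_star = [1.0 / (v + tau2) for v in variances]
    pooled = sum(wi * yi for wi, yi in zip(w_star, effects)) / sum(w_star)
    se = math.sqrt(1.0 / sum(w_star))
    return pooled, se, tau2
```

When the estimated between-study variance is zero, the random-effects weights reduce to the fixed-effect weights, so the two models give the same pooled estimate; the models diverge only when studies vary more than sampling error alone would predict.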

Efficacy of Training

Next, we conducted a subanalysis to compare studies across five domains of dependent variables that included desirable and undesirable behaviors for both teachers and students. We coded behaviors as desirable or undesirable based on whether the authors intended for the behaviors to increase or decrease following intervention. For teachers, Domain 1 included dependent variables related to teacher-delivered praise (e.g., BSP, number of praise statements). Domain 2 included other desirable dependent variables (e.g., fidelity of implementation, number of precorrections used). Domain 3 included undesirable teacher behaviors (e.g., reprimands, general praise statements). For students, we coded one desirable behavior domain (e.g., engagement with academic tasks) and one undesirable behavior domain (e.g., classroom disruptions, being off task).

After calculating the BC-SMD effect size by study, we used the “metan” macro in Stata to run a random-effects model to calculate a mean effect size for all studies and by study domain. We then conducted a metaregression using the “metareg” macro in Stata. First, we used a null model to calculate the distribution of effect sizes across studies. Then we ran separate single-predictor models using training strategies, characteristics, implementation context, and study quality indicators as the independent variable and study-level effect sizes as the dependent variable. Finally, in addition to individual moderators, we conducted a follow-up analysis by running a model for studies that incorporated both modeling and feedback compared with studies that did not. Given the combination of modeling and feedback has been theoretically and empirically linked to treatment fidelity in a previous meta-analysis (i.e., Brock et al., 2017), we wanted to see if this finding generalized to training on behavioral support for general educators.

Results

Descriptive Results

Participants

Participant demographic data are provided in Supplemental Table S1. There were 104 general education teacher participants and 912 student participants included in this study set; however, note that only 22 of 26 studies reported the number of student participants. The large number of student participants is reflective of researchers measuring whole-class behaviors. The majority of authors reported training general education teachers to intervene with elementary (K-5) students (n = 22). Authors of 23 studies reported the special education status of student participants. Eleven studies included only general education students, and 14 studies included general and special education students. One study included only special education students. Seventy-one students with disabilities participated in included studies; however, information on specific disability categories of included students was provided for 36 students. The majority of students included in studies were identified as at risk for EBD due to referral for intensive tiers of support or nomination for the study by school-based teams (n = 16). The two categories with the second and third highest numbers of students were attention-deficit hyperactivity disorder (n = 8) and EBD (n = 5).

Student-Level Intervention

Student-level interventions targeted in studies are included as a supplemental file (see Supplemental Table S2). Definitions for each student-level intervention are also included as a supplemental file (see Supplemental Table S3). We identified nine student-level interventions. In 11 of 26 studies (42.31%), researchers reported training focused on increasing BSP. Researchers targeted Class-Wide Function-Related Intervention Teams (CW-FIT) across three studies (11.54%) and a multicomponent intervention package across six studies (23.08%). Four studies included training on strategies targeted for individual students (15.39%), and the remaining studies provided training on strategies that targeted classwide outcomes.

Training Strategies

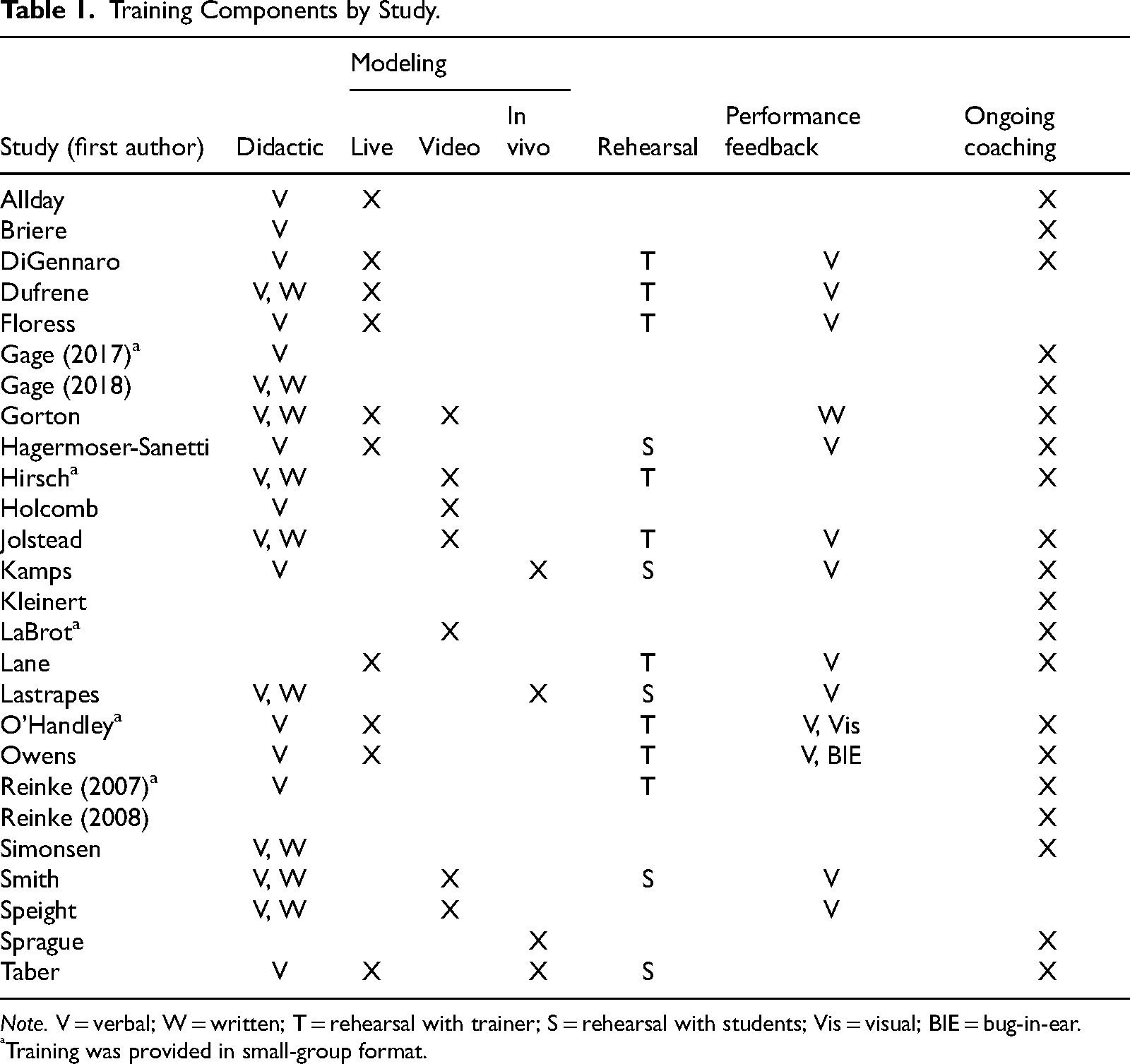

Table 1 displays teacher training procedures. Authors of 23 studies reported that training was provided in a one-on-one format (n = 18) or group format (n = 5). Authors of 19 studies reported the initial training length, with 13 studies reporting initial training was less than or equal to 1 hr and the remaining authors reporting training was 1 to 2 hr (n = 1), 2 to 4 hr (n = 2), or greater than 4 hr (n = 2). Authors of 21 studies reported the modality of didactic instruction. Verbal instruction was provided in all 21 studies, and nine studies used a combination of verbal and written modalities. Authors of 19 studies reported inclusion of modeling as a training strategy. Ten studies used a live model to demonstrate correct implementation or use of the targeted student-level intervention, whereas six studies used a video model. Authors of three studies provided in vivo modeling (i.e., with students). Authors of two studies used a combination of live and video models (Gorton et al., 2021) or live and in vivo models (Taber et al., 2020). An opportunity for rehearsal was provided across 14 studies. Nine of those studies provided an opportunity for rehearsal with just the trainer, and the remaining five provided rehearsal with students (i.e., in the generalization environment). Authors of 13 studies reported providing some type of performance feedback. Eleven studies provided verbal performance feedback only, and one study provided written feedback (Gorton et al., 2021). Owens et al. (2020) provided bug-in-ear performance feedback. Two studies combined modalities, pairing either verbal and visual performance feedback or bug-in-ear and verbal performance feedback (e.g., Owens et al., 2020). Authors of 21 studies reported providing ongoing coaching.

Table 1. Training Components by Study.

Note. V = verbal; W = written; T = rehearsal with trainer; S = rehearsal with students; Vis = visual; BIE = bug-in-ear.

Training was provided in small-group format.

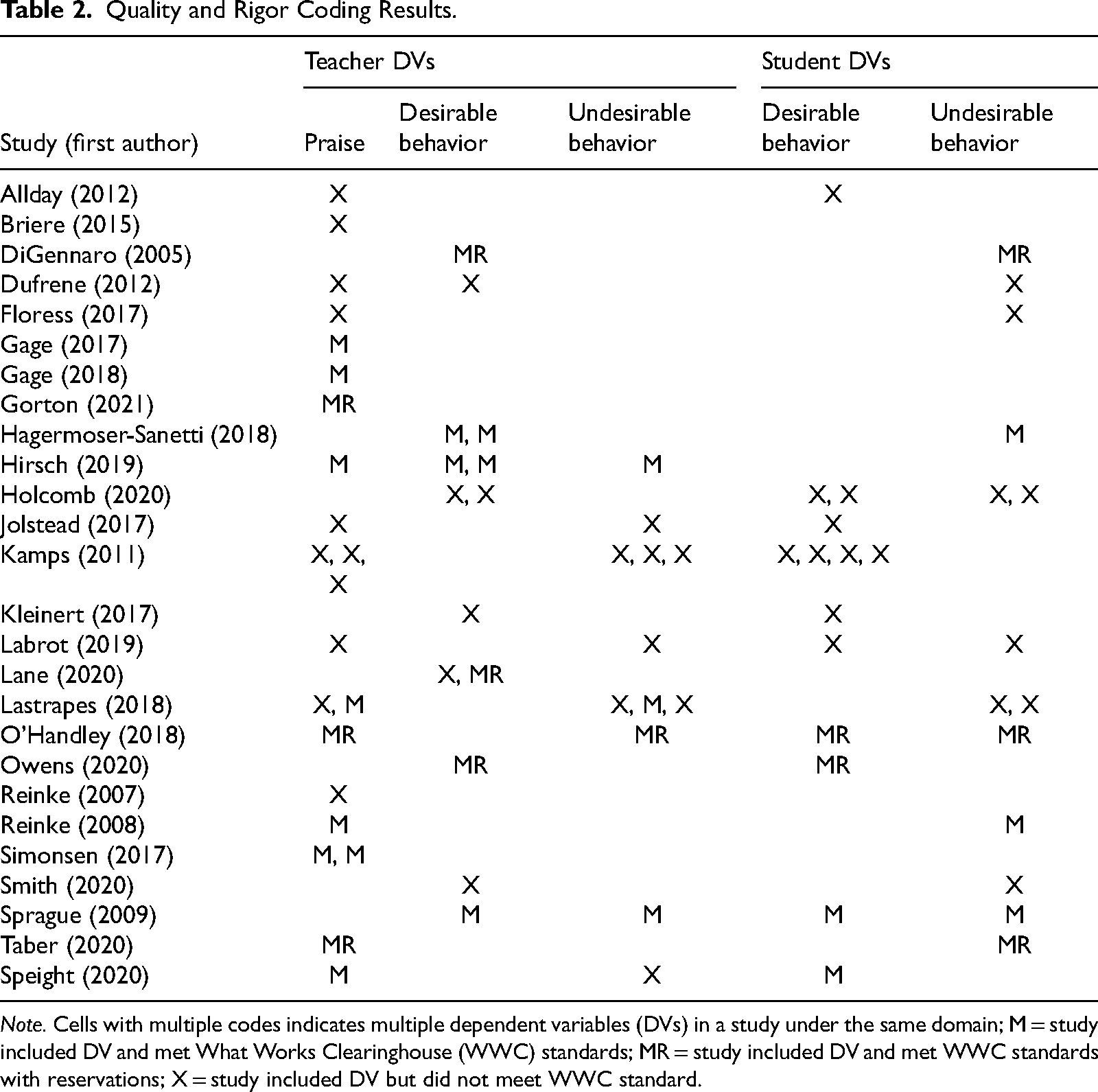

Quality and Rigor

Table 2 displays the results of coding the methodological rigor of included studies using WWC (2020) standards. The evaluation occurred for each dependent variable measured in the context of an experimental design (n = 76 dependent variables). Twenty-one designs (27.63%) met WWC standards, and 12 designs (15.79%) met WWC with reservations. Per WWC updated guidelines and standards, we used additional standards to assess the methodological rigor of five multiple-probe designs, three of which met standards with reservations.

Table 2. Quality and Rigor Coding Results.

Note. Cells with multiple codes indicate multiple dependent variables (DVs) in a study under the same domain; M = study included DV and met What Works Clearinghouse (WWC) standards; MR = study included DV and met WWC standards with reservations; X = study included DV but did not meet WWC standards.

BC-SMD Meta-Analysis

Supplemental Table S4 displays overall effect sizes and the weight of each study. Effect sizes ranged from d = −.05 to d = 15.65. There was a very large overall effect size of 1.50, 95% confidence interval (CI) [1.34, 1.66]. Effect sizes by domain were large or very large. Results from this analysis also suggest that a large share of the observed variance reflects differences between studies rather than sampling error (I² = 97.9%), with a relatively small estimated between-study variance (τ² = .321).

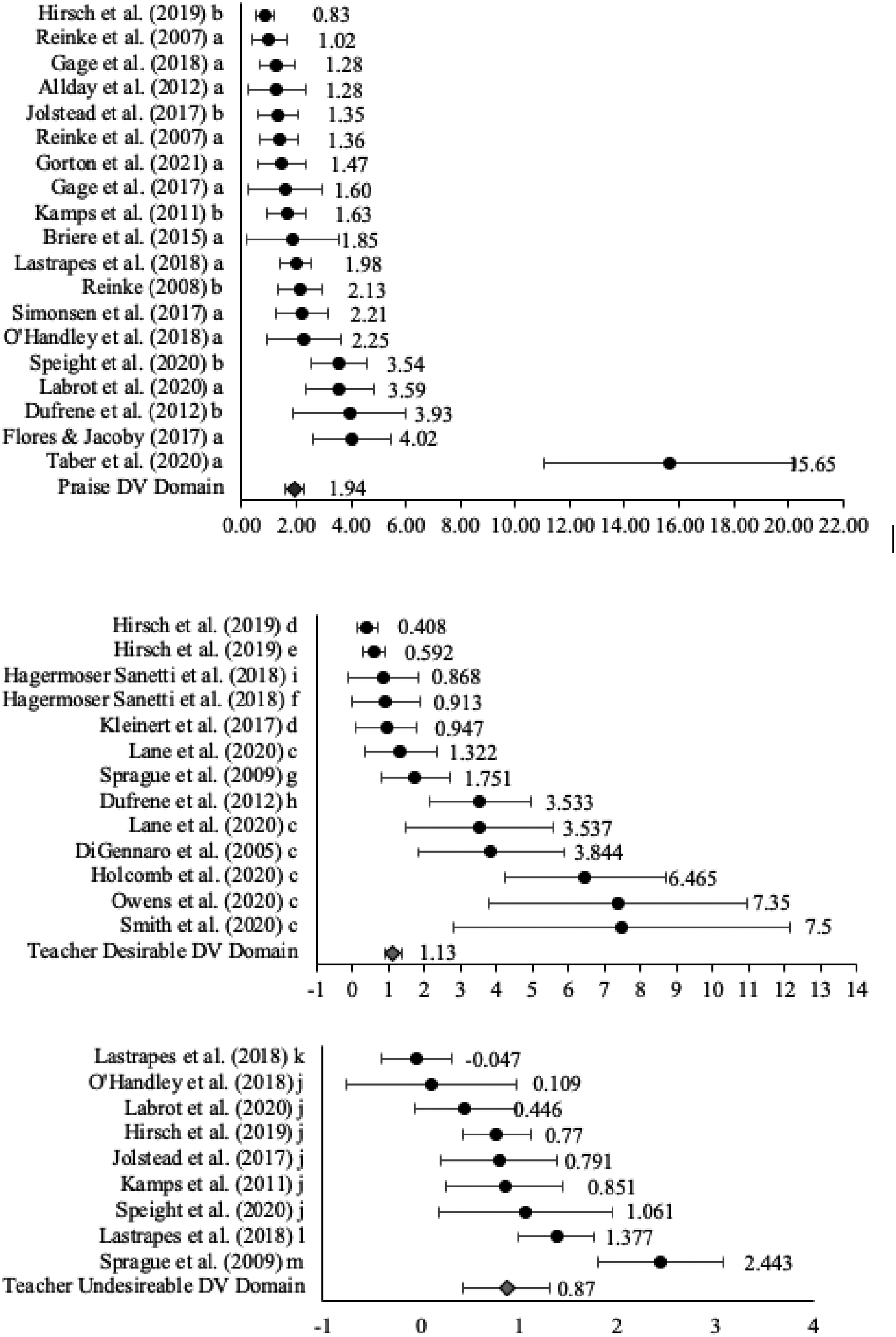

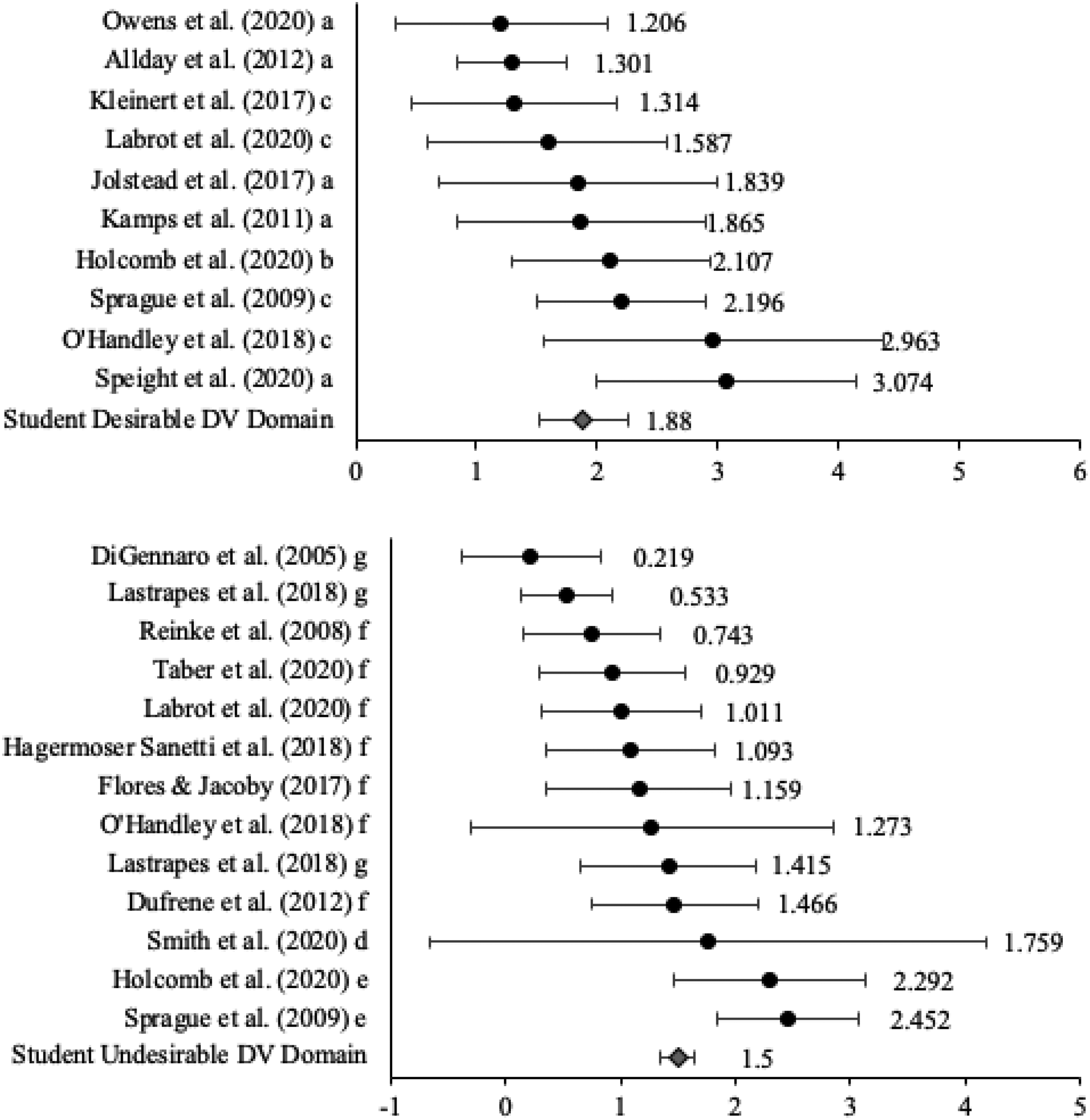

Figure 1 displays forest plots for all teacher-level dependent variables grouped by domain. For teacher praise, the overall effect size was 1.94, 95% CI [1.60, 2.29], and effect sizes ranged from d = 0.83 to d = 15.65. The overall effect size for teacher desirable behavior was 1.22, 95% CI [0.89, 1.37], and effect sizes ranged from d = −0.41 to d = 7.48. The overall effect size for teacher undesirable behavior was .87, 95% CI [0.43, 1.31], and effect sizes ranged from d = −0.05 to d = 2.44. Figure 2 displays the forest plots for all student-level dependent variables grouped by domain. The overall effect size for student desirable behavior was 1.88, 95% CI [1.52, 2.25], and effect sizes ranged from d = 1.21 to d = 3.07. The overall effect size for student undesirable behavior was 1.22, 95% CI [0.81, 1.62], and effect sizes ranged from d = 0.22 to d = 2.54.

Figure 1. Forest plots for all teacher-level domains. a = behavior-specific praise; b = praise statements; c = treatment fidelity; d = opportunities to respond; e = precorrection rate; f = quality ratings; g = teacher positive behavior; h = effective instruction delivered; i = adherence ratings; j = reprimands; k = general praise; l = corrective statement; m = teacher negative behavior.

Figure 2. Forest plots for all student-level domains. a = on-task behavior; b = desirable behavior; c = academic engagement; d = duration of transitions; e = undesirable behavior; f = disruptive behavior; g = off-task behavior.

Teacher use of praise had the largest individual effect sizes and largest overall effect size by domain; in contrast, reduction of undesired teacher behavior had the smallest effect size across all domains. Student domains both had smaller effect sizes than teacher praise and had larger effect sizes than other teacher desirable behavior. Student domains had less variability in effect sizes but smaller individual effect sizes than teacher desired-behavior domains, suggesting that student effects were more consistent but were not as strong as teacher effects.

NAP Scores

NAP scores are displayed by dependent variable and study in Supplemental Table S3. Across all studies, the combined NAP scores ranged from 0.37 to 1.0 (M = 0.866). NAP values can tentatively be interpreted as 0 to 0.65 indicating weak effects, 0.66 to 0.92 indicating medium effects, and 0.93 to 1 indicating strong effects (Parker & Vannest, 2009). Of the dependent variables in this analysis, eight fell into the weak-effects range, 33 fell into the medium-effects range, and 30 fell into the strong-effects range, with the average score of 0.866 falling into the medium-effects range.
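As an illustration of how a NAP score is obtained and mapped onto the interpretive ranges above, the following minimal sketch compares every baseline data point with every intervention data point, counting ties as half an overlap. The function names, the `increase_is_improvement` flag, and the treatment of values falling between the published cut points (e.g., 0.655) are assumptions made for this sketch. They are not details drawn from the included studies.

```python
def nap(baseline, treatment, increase_is_improvement=True):
    """Nonoverlap of all pairs: share of baseline-treatment pairs that improve.

    Ties count as 0.5. Set increase_is_improvement=False for behaviors
    targeted for reduction (e.g., reprimands).
    """
    pairs = len(baseline) * len(treatment)
    score = 0.0
    for a in baseline:
        for b in treatment:
            if b == a:
                score += 0.5
            elif (b > a) == increase_is_improvement:
                score += 1.0
    return score / pairs


def nap_band(value):
    """Tentative interpretive range (Parker & Vannest, 2009).

    Assumption: values between the published cut points are assigned
    to the higher range.
    """
    if value <= 0.65:
        return "weak"
    if value <= 0.92:
        return "medium"
    return "strong"
```

With hypothetical data, `nap([1, 2, 3], [3, 4, 5])` counts 8.5 improving pairs out of 9, and `nap_band(0.866)` returns `"medium"`, matching the interpretation of the mean score reported above.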

BC-SMD Metaregression

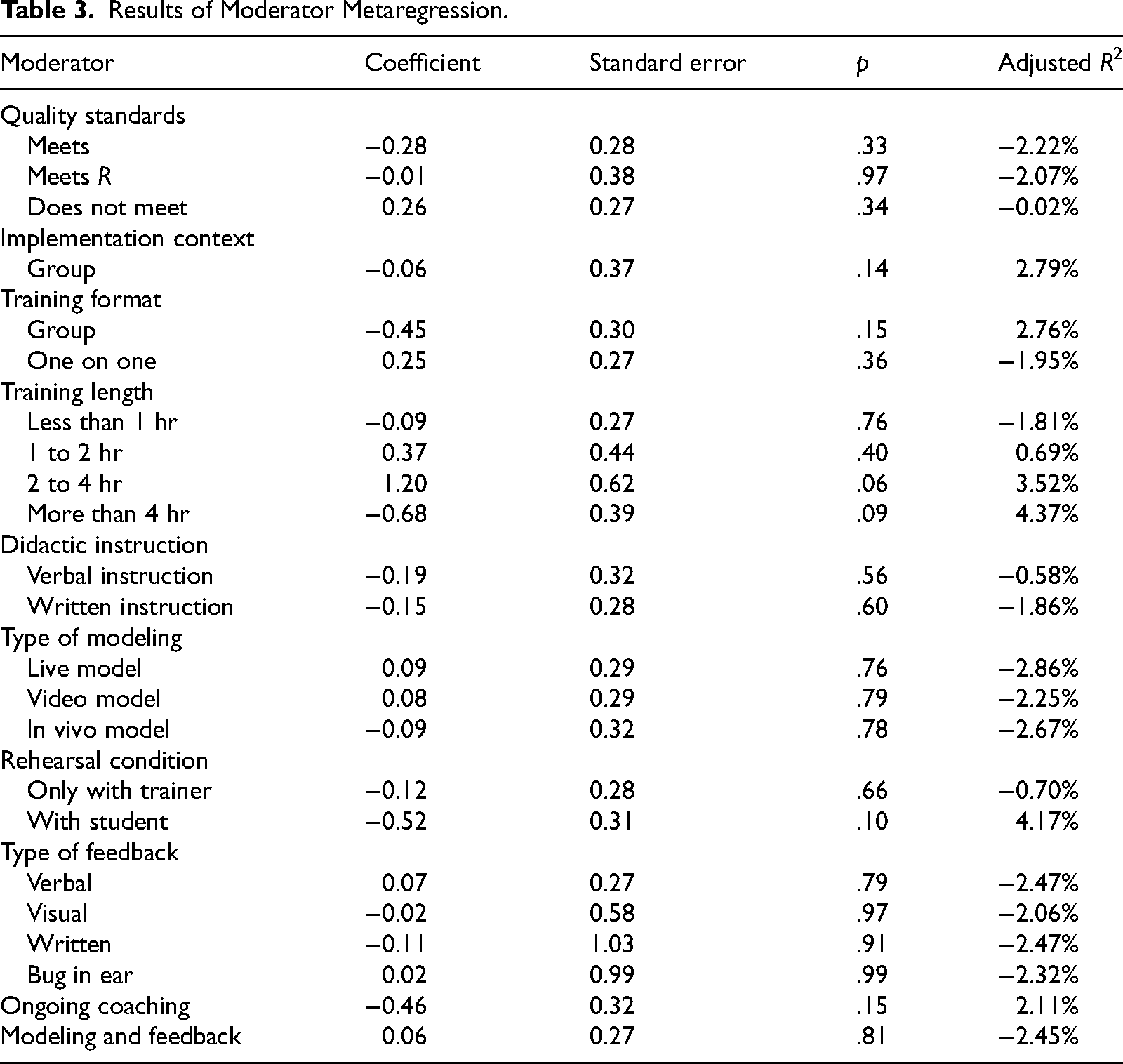

We used metaregression to determine the effects of moderators on study-level BC-SMD effect sizes. Prior to testing individual moderators, we ran a null model (without predictors). Results from this analysis suggest some variability among effect sizes (τ² = .768) and that most of this variability could be attributed to heterogeneity among the studies (I² = 97.93). When testing effects of moderators on study-level effect sizes, we found that no individual moderator was a significant predictor. In addition to individual moderators, we also tested the effects of studies that used modeling and feedback. Similar to the individual moderators, there was no significant effect for the combination of modeling and feedback. Table 3 lists effect sizes by potential moderator and the proportion of variance explained (i.e., adjusted R²) for each variable.
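A single-predictor moderator model of this kind can be sketched as a weighted least-squares regression of study-level effect sizes on one moderator. This is a simplified illustration under stated assumptions: the between-study variance `tau2` is passed in as a known value rather than estimated from the data, as a dedicated metaregression routine would do. The function name and the example values are hypothetical.

```python
import math


def weighted_meta_regression(effects, variances, moderator, tau2=0.0):
    """Regress effect sizes on one moderator with inverse-variance weights.

    Assumption: tau2 (between-study variance) is supplied by the caller;
    a full metaregression would estimate it from the data.
    """
    w = [1.0 / (v + tau2) for v in variances]
    sw = sum(w)
    # Weighted means of the moderator and the effect sizes.
    x_bar = sum(wi * x for wi, x in zip(w, moderator)) / sw
    y_bar = sum(wi * y for wi, y in zip(w, effects)) / sw
    sxx = sum(wi * (x - x_bar) ** 2 for wi, x in zip(w, moderator))
    if sxx == 0:
        raise ValueError("moderator does not vary across studies")
    sxy = sum(
        wi * (x - x_bar) * (y - y_bar)
        for wi, x, y in zip(w, moderator, effects)
    )
    slope = sxy / sxx
    intercept = y_bar - slope * x_bar
    # With known variances, the slope's sampling variance is 1 / sxx.
    se_slope = math.sqrt(1.0 / sxx)
    return {
        "intercept": intercept,
        "slope": slope,
        "se_slope": se_slope,
        "z": slope / se_slope,
    }
```

For a binary moderator coded 0/1 (e.g., whether a study combined modeling and feedback), the slope is the estimated difference in mean effect size between the two groups of studies. A moderator that takes the same value in every study cannot be tested, which is why the sketch raises an error in that case.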

Table 3. Results of Moderator Metaregression.

Discussion

Research continues to confirm that general education teachers remain ill equipped to respond to the needs of students who engage in challenging behavior, such as students with or at risk of EBD. We conducted a review of SCRD literature that measured the effect of training on teachers’ implementation of behavioral support strategies and student outcomes as a result of teacher implementation. This review served as an extension to a published systematic review on behavior management training for general education teachers (Samudre et al., 2022), which sought to descriptively synthesize the literature on student-level interventions that were targeted for training, commonalities of training strategies provided, and the methodological rigor of included studies using older WWC design standards (WWC, 2017). The WWC (2017) design standards rely on visual analysis to determine the appropriate categorization of evidence of effectiveness at the design level. The current meta-analysis differs from Samudre et al. (2022) in the following ways: (a) We asked research questions that required the application of analytic strategies to answer, (b) we replicated search procedures that yielded additional SCRD studies, (c) we provide results on student outcomes, and (d) we used updated WWC (2020) design standards. The updated standards provide additional guidelines for evaluating multiple-probe designs and using SCRD effect sizes—something that was not completed in Samudre et al. We calculated BC-SMD effect sizes to quantify the overall effect of training on teacher implementation of discrete strategies (e.g., BSP) or increases in treatment fidelity and its concomitant effects on student behavior. We also coded specific training components and strategies used (e.g., performance feedback) across included studies as well as an evaluation at the design level using WWC (2020) standards. 
Finally, we conducted a metaregression to determine if specific training strategies and the rigor of the experimental design moderated teacher-level effects. The findings from this review provide information on behavioral support training as well as considerations on key investments across schools, districts, and universities for supporting training efforts.

Improvements in Discrete Teacher Behaviors and Treatment Fidelity

Overall results from BC-SMD analysis indicate that training was effective in increasing general educators’ implementation of behavioral support strategies. Teacher-level dependent variables were separated into three domains (i.e., teacher praise, teacher desirable behavior, and teacher undesirable behavior). Effect sizes across each domain also indicated a large or very large effect (d range = 0.868–1.94). Of the three teacher-level domains, training had the largest effect on increasing general education teachers’ use of praise. This finding corresponds with existing literature stating that teachers may be implementing low levels of praise in preintervention conditions (e.g., Scott et al., 2011). These results are consistent with findings in Samudre et al. (2022), which indicated that a majority of SCRDs (i.e., 85.18%) demonstrated moderate to strong evidence of effectiveness regarding changes in teacher behavior. The overall effects of training on general education teachers’ reduction in undesirable behaviors (e.g., reprimands) had the smallest effect (d = 0.868). Although generally effective, the disparate effect sizes between increasing teacher use of praise and reducing undesirable teacher behaviors are surprising given that providing reprimands and high rates of praise are presumably incompatible and were often measured in the context of the same study. This may also indicate that initial rates of reprimands were relatively low, whereas praise had more potential to change.

Results indicated there were very large effects related to increasing teacher desirable behaviors such as treatment fidelity. Strategies targeted for training varied between discrete teacher behaviors (i.e., BSP) and implementation of multistep behavioral interventions (e.g., CW-FIT). Authors primarily targeted increasing rates of BSP (n = 11). This is not a surprising finding given that BSP is a low-intensity strategy with a robust evidence base to support its use. It is important to note that six studies targeted training on multicomponent intervention packages, which often included BSP alongside other Tier 1 practices (e.g., establishing rules and routines, precorrections). As the evidence base for BSP continues to grow, so have experimental evaluations of specific nuances associated with the effect of BSP on student behavior. For example, Gorton et al. (2021) examined whether differential effects on student behavior occurred when voice intonation during teacher-delivered BSP changed.

An additional finding with the present review is the number of included studies that targeted increasing general education teachers’ treatment fidelity (n = 7). Four studies in the current meta-analysis targeted individualized behavior support strategies, and the remaining studies targeted classwide strategies. Findings such as these are reflective of potential disconnects between teacher-reported needs in behavioral support and the actual strategies targeted during training (Moore et al., 2017; Samudre et al., 2022). We contend that it is a valuable investment to evaluate the effect of training on teachers’ treatment fidelity of more intensive student-level supports as well as concomitant effects on student behavior. Some of the studies included in the meta-analysis do describe results related to training on targeted and individualized student-level supports (e.g., Holcomb et al., 2020; Owens et al., 2020). This is encouraging yet provides an urgent call for researchers to conduct further empirical evaluations that can help optimize training for implementation of intensive student-level supports.

Key Investments for Behavioral Support Training

Most studies used between one and five initial training and support strategies. Authors of 13 studies reported that initial training was less than or equal to 1 hr, and 10 of those studies (76.92%) provided training in a one-on-one format. This finding may reflect our exclusive focus on SCRDs and the procedural necessity of providing one-on-one training within multiple-baseline-across-participants designs. Brock et al. (2017) found similar patterns within the special educator training literature and raised concerns regarding the feasibility of individualized training practices. These methodological requirements may not be expected in everyday practice; however, issues with the cost and time of providing high-quality training that extends beyond stand-alone workshops may present additional barriers. Nonetheless, the results from this review and similar reviews across teachers with different certification areas (e.g., special education; Brock et al., 2017) provide professional development designers and providers with priority investments for training (e.g., ongoing coaching).

Additionally, we examined commonalities of training practices across included studies. In addition to didactic training, ongoing coaching was the most common practice. Authors reported providing ongoing coaching as a follow-up strategy to initial training in 21 studies (80.77%). These studies typically demonstrated large to very large effect sizes, in alignment with previous evidence that coaching is effective at increasing discrete teacher behaviors (e.g., BSP; Ennis et al., 2020). Therefore, ongoing coaching should be considered a priority investment for supporting teachers’ behavioral support needs. With further regard to feasibility, emerging research has shown that coaching can be provided in a time- and resource-efficient manner (e.g., Gage et al., 2017). For example, multitiered systems of professional development involve providing small-group training and reserving more intensive support (e.g., ongoing coaching and performance feedback) for teachers who require it based on their implementation data. Modeling was also frequently reported as a training strategy across studies and could be considered an additional investment to enhance initial training.

Results from the metaregression did not yield statistically significant predictors for study-level effect sizes. We propose three possible explanations for these null findings. First, there was very low variability in the types of strategies used. For example, all studies used performance feedback during initial training or follow-up coaching. Therefore, we had a limited ability to contrast the presence and absence of strategies. Related to this point, there was a trend-level effect for training time, suggesting that if most studies were using similar strategies, using similar strategies for a longer time tends to be associated with stronger effects. Second, with the exception of two studies, all studies had moderately large to very large effects. Therefore, the metaregression was focused on the degree of efficacy among very effective interventions. This is in sharp contrast to previous analyses of staff training that involved both effective and ineffective staff training (e.g., Brock et al., 2017). It likely is more difficult to isolate the contributions of individual components under these circumstances. Third, given that studies used similar training approaches that were largely effective, the remaining variability is likely better explained by variables that were not included in our model. This idea is supported by the substantial unexplained variance in the model. Examples of possible explanatory variables include student characteristics, teacher characteristics, aspects of the classroom or instructional context, and prior or ancillary use of other behavioral support strategies.

Effective Training as a Nexus for Improved Student Outcomes

Student-level effects were categorized into two separate domains (i.e., desirable behavior and undesirable behavior). Arguably, the strongest indicator of whether training was effective in research is whether improved outcomes are observed for students. It is encouraging to see that many studies demonstrated positive effects for teacher and student participants synchronously. Given that ongoing coaching and performance feedback were provided across most studies, it is important to note that teacher-level support likely continued until some criterion (e.g., increase in BSP, reduction in disruptive behavior) was met. This is in contrast to typical professional development (e.g., one-day workshop), which may not include ongoing support strategies that consider teachers’ specific needs for improving implementation or use student data to determine when teacher-level support (e.g., coaching) concludes. Professional development designers and providers should consider follow-up support driven by student-level data a key investment in behavioral support training.

Improved Outcomes for Students With Disabilities

Over half of the studies in this review reported training teachers of both general and special education students (studies n = 14; see Supplemental Table S1). Of the total number of student participants across included studies (n = 912), 13% of those students were identified as having a disability (n = 71), and the majority of those students were identified as with or at risk of EBD (n = 21). It is important to note that a disability label was not provided for 35 students listed as receiving special education services at the time the included study was conducted. Eight studies measured classwide data from inclusive classrooms in response to teacher implementation but did not report the number or characteristics of students with disabilities in the classroom. The remaining studies either measured and reported proximal outcomes for individual students with disabilities in the context of a multiple-baseline or withdrawal design (e.g., Holcomb et al., 2020) or reported outcomes for an entire classroom with students with disabilities (e.g., Hagermoser-Sanetti et al., 2018). Despite the heterogeneous reporting measures for outcomes for students with disabilities, general education teachers’ implementation resulted in improved proximal outcomes for students with and at risk of disabilities. This must be interpreted with the following in mind: The magnitude of improvement when compared with students without disabilities and whether improvements in distal outcomes were observed are unknown. Improving general education teachers’ implementation can support students who require intensive behavioral support, such as students with or at risk of EBD, and who receive most of their instruction in an inclusive setting. However, the successful transference of evidence-based practices for students with disabilities from research to practice requires training that is unique to the environmental dynamics of the generalization setting. 
Training that is designed to improve general education teachers’ implementation of behavioral support strategies so that they can support students across exceptionality labels is crucial.

As informative as the effect of training on implementation is, it should not be the only metric that informs future training. The evidence of effectiveness should also be interpreted with the methodological rigor of the research base in mind. We used WWC (2020) guidelines to rate the quality of each of the designs. Given an overall low percentage of designs that met standards with or without reservations (i.e., 27.63% met WWC standards and 15.79% met WWC with reservations), we strongly encourage researchers to conduct methodologically rigorous research that can confidently inform future training. It is important to note that design quality was not a statistically significant moderator for study-level effect sizes per our analysis. It remains, however, that potential shifts in approaches to training should be informed only by rigorous experimental research (Maggin et al., 2013).

Implications

Findings from this review present timely implications for general education teacher training in behavioral support implementation. First, investments in professional development that integrate high-quality training strategies, such as modeling, performance feedback, and ongoing coaching, are supported. However, conversations regarding the feasibility of these training packages in applied settings are warranted. Fortunately, emerging research has evaluated efficient and feasible approaches to training by providing more tiered support for teachers whose implementation data indicate they require it (e.g., Simonsen et al., 2020). This could be especially important for supporting teachers in settings with higher rates of challenging student behavior. As researchers continue to expand on this work, professional development designers and providers can organize training in a way that reserves more intensive support for teachers who need it most.

Though not guaranteed, improved teacher implementation can have concomitant improvements for student behaviors. Therefore, we contend that it is imperative for researchers to measure student and teacher outcomes from behavioral support training within the context of the experimental design. If changes across both student- and teacher-level outcomes occur, then a close analysis of which components of training led to that improvement is deeply warranted. Our metaregression results did not identify any one strategy as more effective than the others; however, quality professional development typically involves a collection of strategies. Thus, it remains important to design training that includes some type of initial instruction, modeling the correct implementation of the targeted practice(s), an opportunity for the teacher to practice implementation, and follow-up support with ongoing performance feedback. Relatedly, researchers should continue investigating effective approaches to training through methodologically rigorous research and reporting training components with replicable precision. This can help consumers of research to confidently arrive at conclusions on efficacious professional development approaches.

Limitations and Future Research

The results from the present study should be interpreted with the following limitations in mind. First, we did not evaluate the effect of training on teachers’ maintenance of the targeted strategies. However, general education teachers’ ability to sustain use of a targeted strategy after support has ended is likely the most transparent indicator of training effectiveness. With consideration of how maintenance data are often variably reported, we encourage future research on evaluating the impact of training using maintenance data. Second, although we reported summary effect sizes for two domains of teacher desirable behavior—one focused on more discrete strategies (e.g., BSP) and one focused on more complex teacher behavior (e.g., treatment fidelity)—we did not examine the intensity of the student-level intervention as a potential moderator of teacher outcomes. Although our analysis did not indicate a statistically significant association between the context of implementation (group vs. individual strategies) and study-level effect sizes, future research should further categorize student-level interventions to determine the interaction of the difficulty of a strategy and study-level effect size. Third, studies that measured changes in teacher behavior in the context of the design were omitted from the review due to the restrictiveness of the BC-SMD effect size criteria. Researchers who design future SCRD studies to evaluate training practices should consider designs that increase the feasibility of including adequate participants while also meeting criteria for common effect sizes, such as BC-SMD. For example, a well-designed nonconcurrent multiple-baseline design can reduce the burden of simultaneous baseline data collection across teachers (and potentially classrooms or schools) while controlling for common threats to internal validity (Slocum et al., 2022). 
By considering this match, researchers can increase the likelihood of their studies meeting inclusion criteria for future meta-analyses, which may increase the researchers’ ability to detect contributions of specific training components. Additionally, the Marso and Shadish (2014) macro we used assumes no baseline time trend and immediate-level change in the intervention condition. We recommend using updated BC-SMD effect size estimators to relax these assumptions (e.g., Pustejovsky et al., 2023). Next, we did not conduct any publication bias analyses. Given that we excluded dissertations, these analyses would have been especially important to conduct. Finally, although we did conduct a hand search for possible studies to be included, we did not conduct an ancestral or forward search and did not include the term “general educator*” in the Boolean string during our database search. We are optimistic that our search process was comprehensive; however, it is possible that articles that would have otherwise met our inclusion criteria were not included.

Conclusion

Researchers have made many advancements on training teachers to support positive student behavior. However, there is still a large and overwhelming consensus that managing classwide and individual student behaviors is among the most challenging aspects of the teaching profession today. Recent data indicate the percentage of students with EBD who receive the majority of their instruction in inclusive classroom settings is increasing. Thus, general education teachers need to be further equipped to prevent and respond to challenging behavior. The results of this meta-analysis indicate that training that includes didactic instruction, modeling, and ongoing coaching is effective at improving both teacher and student outcomes and provide information on potential investments for effective behavioral support training.