Abstract

Recent studies reiterate the importance of mathematical literacy and the identification of skills, knowledge and cognitive processes which contribute to composite test scores to facilitate targeted remediation and extension activities. To this end, the current article examines data from the 2012 cycle of the Programme for International Student Assessment (PISA), using multilevel modelling techniques to explore the relationship between selected student-level and teacher/school-level factors and the three processes of interpret, employ and formulate which were measured as the skills underlying mathematical literacy in that assessment. Results of the analyses indicate that boys outperform girls significantly (p < 0.001) in all three processes whereby formulate invokes relatively more inter- and intra-level influences compared with interpret. Apart from the relatively higher item-difficulties of formulate, an increase in the complexity of contextual effects at the student and the teacher/school-level emerges as mathematical processes move from interpret to employ to formulate. Findings also reveal that students taught by teachers who had mathematics as a major in their undergraduate studies and who work in relatively smaller classes or groups show higher performance in all three mathematical literacy processes. Use of ICT in mathematics lessons is negatively associated with the three mathematical literacy processes. The additional negative effect of mathematical extracurricular activities at school on the processes highlights the need to rethink how technology and extracurricular lessons are to be used, designed/structured and delivered to optimise the learning of mathematical processes, and ultimately improve mathematical literacy.

Keywords

Introduction

The triennial Programme for International Student Assessment (PISA) assesses reading literacy, mathematical literacy and scientific literacy. The main assessment areas are rotated across cycles with mathematical literacy being the major domain of assessment in the 2012 cycle, meaning that a larger proportion of the assessment was devoted to mathematical literacy than to reading or scientific literacy. PISA operationalises mathematical literacy as ‘an individual’s capacity to formulate, employ, and interpret mathematics in a variety of contexts’ (OECD, 2013a, p. 25).

Mathematical literacy encompasses reasoning mathematically and using mathematical concepts, procedures, facts and tools in describing, explaining and predicting phenomena. The verbs ‘interpret’, ‘employ’ and ‘formulate’ indicate the three cognitive processes which students will engage with when completing mathematical literacy tasks (OECD, 2013b, p. 25).

This study examines the relationships between selected contextual factors at the student level as well as the teacher/school level and the cognitive processes associated with interpret, employ and formulate, and performance in mathematical literacy.

The focus on the three cognitive processes, which underpin mathematical literacy, has implications for learning, teaching and the mathematics curriculum (Thomson et al., 2013). This emphasis is explicit in Australia through the Victorian (VCCA, n.d.) and Queensland Education Department’s (Goos et al., 2015) directions.

Smith et al. (2018) warn that Australia’s mathematics performance trend has stalled or declined in the National Assessment Program – Literacy and Numeracy (NAPLAN). They point to the urgent need to examine the benefits of analytical skills and the underlying cognitive skills and processes.

Our research study seeks to respond to Smith et al.’s challenges and to provide useful insights to mathematics educators and curriculum developers of mathematical literacy in Australia and beyond.

Synthesis of literature and foci of current study – Processes and contents underlying mathematical literacy

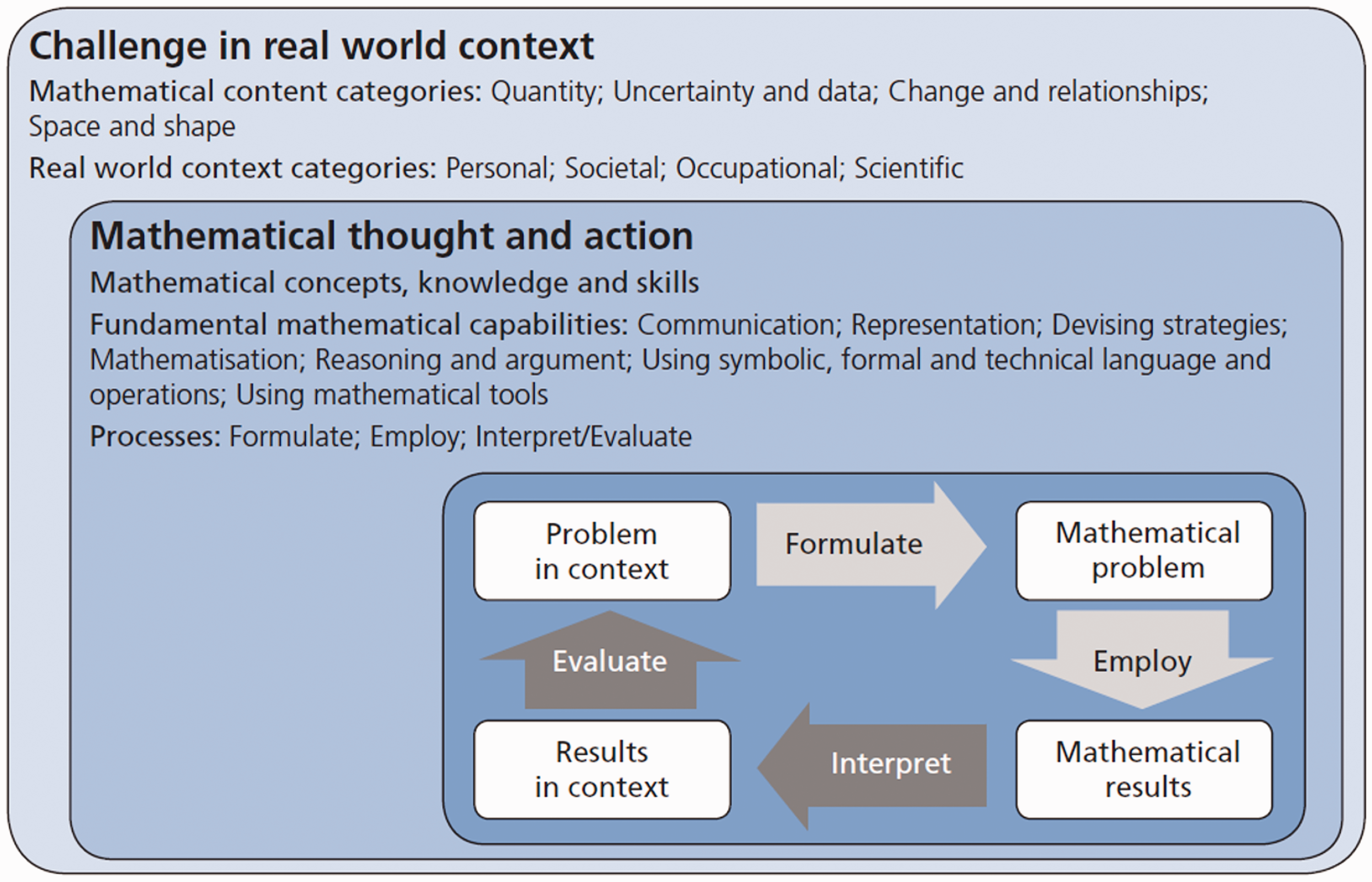

The 2012 cycle of PISA saw the broadening of the definition of mathematical literacy, with the integration of mathematical modelling (OECD, 2013a, p. 25). Figure 1 shows the progression of learning through the stages (and processes), together with the framework for the assessment of mathematical literacy and the relationships between contents, contexts and processes.

A model of mathematical literacy in practice (OECD, 2013a, p. 26).

Three interrelated aspects of mathematical literacy were made explicit (OECD, 2013a, p. 27) and included the following:

mathematical processes, which describe what individuals do to connect the context of the problem with mathematics and thus solve the problem; mathematical content targeted for use in the assessment items; and contexts in which the assessment items are located.

Of interest and scrutiny in this research are the mathematical processes as evidenced through student-level and school-level performance.

The OECD report (2013a) highlighted that mathematical literacy, which begins with a problem in context, is an individual’s capacity to interpret, employ and formulate processes associated with mathematics in a variety of contexts. It includes reasoning mathematically and assists individuals to recognise the role that mathematics plays in the world and to make the well-founded judgements and decisions needed by constructive, engaged and reflective citizens. Importantly, ‘mathematical literacy is very closely related to mathematical modelling, because formulating mathematical models, employing mathematical knowledge and skills to work on the model and interpreting and evaluating the outcome are its essential processes’ (Stacey & Turner, 2015, p. 12).

Stacey (2001) aptly summarises the underlying processes in Figure 1. In the formulate process, a real-world problem is transformed into a mathematical problem (top arrow in Figure 1). The mathematical problem is solved by the employ process. The interpret process underpins how the mathematical results produced are translated into real-world terms and judged for adequacy.

In 2012, PISA mathematical literacy results were reported as overall score, scores for each of the four mathematical content categories and (new) scores for each of the three processes. The interpret scale should indicate how effectively students are able to reflect upon mathematical solutions or conclusions, interpret them in the context of a real-world problem and evaluate whether the results or conclusions are reasonable. Students’ facility at applying mathematics to problems and situations is dependent on all three of these processes, and an understanding of their effectiveness in each process can help inform both policy-level discussions and decisions being made closer to the classroom level.

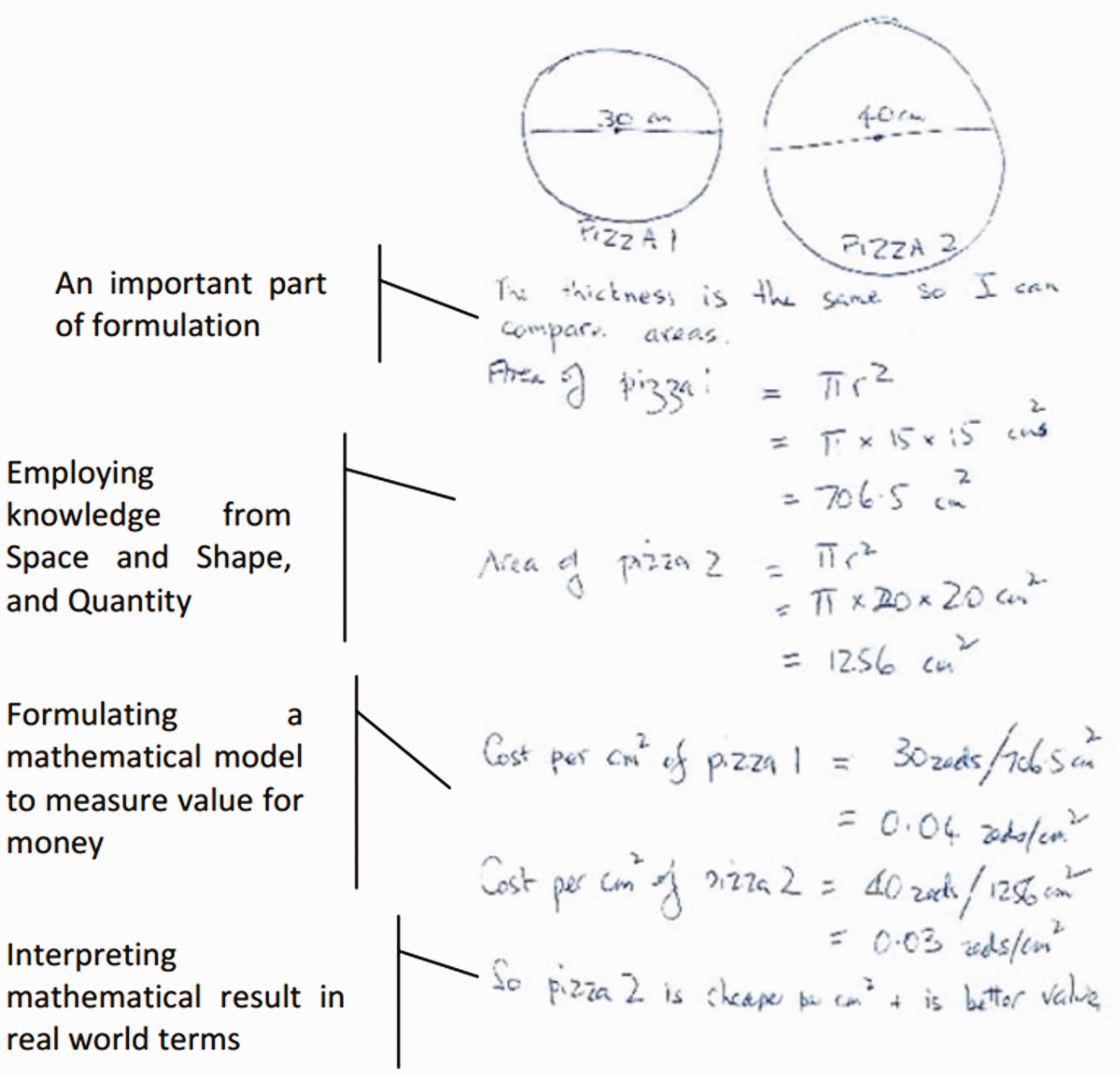

Figure 2 illustrates the interpret–employ–formulate processes from the PISA 2012 mathematical literacy report (OECD, 2013a).

Details illustrating the interpret – employ – formulate processes (OECD, 2013a).

The employ scale should indicate how well students are able to perform computations and manipulations and apply the concepts and facts they know to arrive at a mathematical solution to a problem formulated mathematically.

The PISA 2012 report (OECD, 2013c) also indicated scores on the formulate scale should show how effectively students are able to recognise and identify opportunities to use mathematics in problem situations and then provide the necessary mathematical structure needed to formulate that contextualised problem into a mathematical form.

Although employing is the application of mathematics knowledge to solve a problem, formulate can pose even greater difficulties if the underlying interpret and employ processes are deficient. Alagumalai (1999) provided evidence that higher-order mathematical processes require fundamental knowledge and understanding of key mathematics concepts and skills. Moreover, he demonstrated that the Rasch model provides support for the complexity of cognitive processes used in assessing understanding and applications. The more complex items, which require higher-order thinking and cognitive processes, had higher item difficulties than items involving recall and understanding. Both the interpretation of word problems and their translation into mathematical form are fundamental to success in mathematical problem-solving (Alagumalai, 1999, 2012).

Paralleling the linking of complexity of cognitive processes with the difficulty of items associated with these processes, Stacey and Turner (2015, p. 17) argued that the empirical difficulty of cognitive demand increases when moving from ‘interpreting, applying and evaluating mathematical outcomes’, to ‘employing mathematical concepts, facts, procedures and reasoning’, to items which involve ‘formulating situations mathematically’. They further refer to Rasch-based item response theory analyses indicating that item difficulty increases for employ and formulate items, relative to those involving interpret. The item difficulty levels will not be tested in the current study. However, this research explores the various factors which associate and interact with items for the three cognitive processes. It is important to examine the stages of action in solving real problems and contextual factors which may facilitate the problem-solving processes (Stacey & Turner, 2015). Moreover, the PISA 2012 framework specifies that ‘about half of the mathematics items used in the PISA survey are classified as Employ and about one quarter are in each of the Formulate and Interpret categories’ (Stacey & Turner, 2015, p. 26). Hence, this research will provide an understanding of how selected student and teacher/school-level factors influence the three cognitive processes uniquely and provide further insights beyond the aggregate mathematical literacy scores (which are reported in nearly all research publications).

PISA provides information that allows comparison between jurisdictions and education systems to identify factors and examine trends that enhance performance in mathematics. Nguyen et al. (2020) highlight that contextual information in PISA enables the linking of background factors to student performance, and how student, home, teacher, school and organisational information may predict achievement in mathematical literacy. Good measures of students’ and families’ contexts are critically important when analysing large-scale assessment data so as to find which factors are associated with positive outcomes. It is pertinent to understand performance in processes associated with mathematical literacy and associations and relationships between the micro-student level and family factors, the meso-teacher/school-level factors and macro-country level factors (OECD, 2016a). As noted in the ‘Assessment and Analytical Framework’ (OECD, 2013a), a large section of the student questionnaire, school questionnaire and the international options, is devoted to contextual factors.

An important aim of PISA is to ‘assess the cumulative yield of education systems at an age where compulsory schooling is still largely universal’ (OECD, 2009, p. 11). The current study seeks to examine the factors (or variables) that contribute to student achievement in the three cognitive processes associated with mathematical literacy. As such, no comparisons between countries and/or teachers/schools are intended. Moreover, due to the complexities associated with missing data, complex sampling design, multilevel modelling and associated imputation methods (Allison, 2002, pp. 73–90; Raghunathan, 2015; Rutkowski et al., 2010), only complete datasets from participating jurisdictions have been used to identify statistically significant predictors to understand the contextual factors which contributed positively to performance in mathematical literacy.

Rationale for selection of variables for study

Variables that had been investigated in previous studies (Alivernini et al., 2010; Bando et al., 2017; Coyle et al., 2015; Ma et al., 2008; O’Dwyer et al., 2015; OECD, 2014c) were selected and included in the current analyses to provide a comprehensive picture of factors related to mathematical literacy. The categories, levels and variables nested under these levels included here follow on from the classifications articulated by Willms (2010): . . . various factors that affect student achievement can be classified into three broad domains – curriculum, teaching practices and the context or learning environment. This classification helps to set some boundaries on what is meant by school context, and it provides a framework for considering different educational reforms. (p. 1010)

Differences in the average performance of males and females in mathematics, mathematical literacy and mathematical sciences continue to draw research interests (Doris et al., 2012; Toh & Alagumalai, 1994). Strength in various cognitive skills, attitudes and motivation has been attributed to success in mathematics performance (Coyle et al., 2015; Hasan & Khan, 2015; Stoet & Geary, 2013). However, Reilly et al. (2016, p. 147) contend that ‘ . . . sex-role identity may have greater utility in explaining individual differences as demonstrated through performance in spatial skills and measures’. Ongoing debate about gendered differences in performance and associated equality issues provided the motivation to examine how gender is associated with performance in mathematical literacy in this study.

The variables identified in this study were guided by Plomp’s (1992) framework and rationale used in comparative educational research. He acknowledged the interconnectedness of curricular antecedents, curricular contexts and curricular contents, and their influences on the system, school/classroom/teachers and student levels.

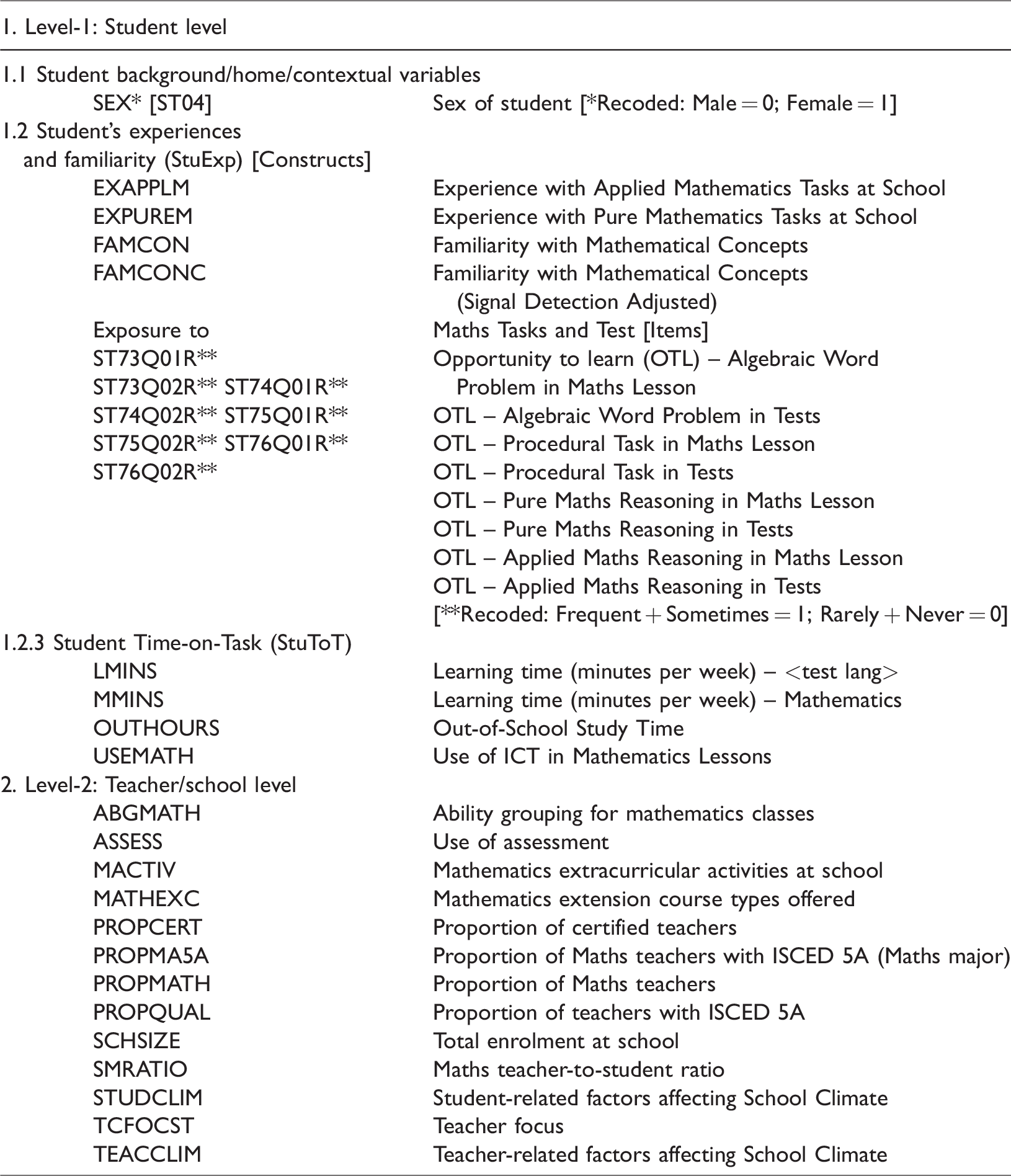

Moreover, the OECD (2013a, 2016b) reports provided support for the selection of variables associated with students’ familiarity with mathematics, their attitudes towards mathematics and time-on-tasks (and completion of lessons, problems and tests). Namkung and Peng’s (2019) study reiterated the need to understand better the experiences and exposure to mathematical tasks. Curricular factors which influence school learning (Keeves, 1976) provided the basis for identifying school-level factors. Selected school-level factors were included to examine their importance for the three cognitive processes and in developing instructional strategies (Jitendra et al., 2016; Tambychik & Meerah, 2010). The variables selected for this study are presented in Table 1.

Home/student, classroom and school variables.

Unless otherwise indicated, the indices above were scaled using a weighted likelihood estimate, using a one-parameter item response model (a partial credit model was used in the case of items with more than two categories) (OECD, 2013a, p. 191).

The Centre for Survey and Survey/Register Data (http://cssr.surveybanken.aau.dk/webview/) provides descriptions of all variables used in the PISA2012 study. Moreover, Chapter 16: Scaling Procedures and Construct Validation of Context Questionnaire Data of the PISA2012 Technical Report (OECD, 2014b) provides details of the scaling processes used.

Research questions and models

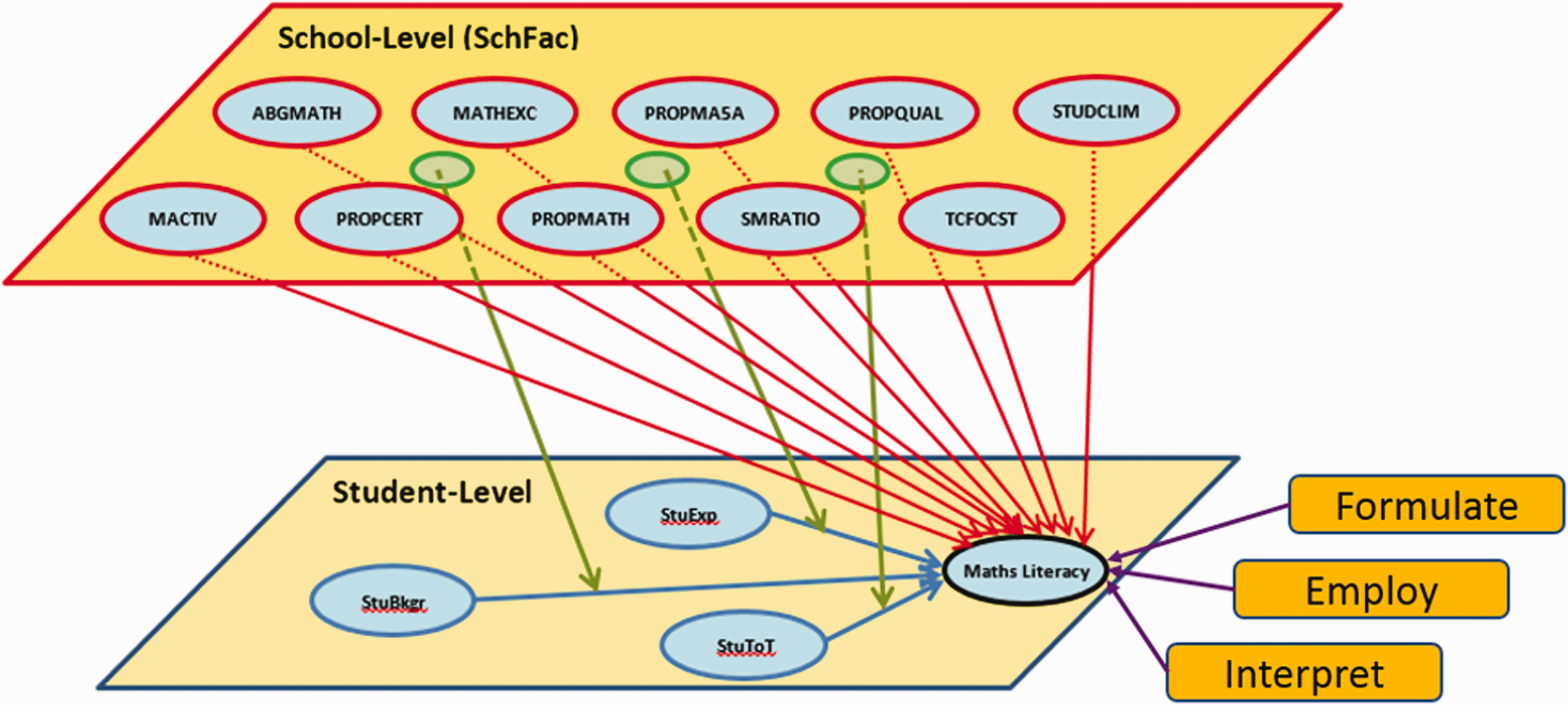

Figure 3 presents the conceptual framework adapted from the OECD report (2013a) and the proposed two-level model tested in this study, premised on what and how students learn and how teachers/schools support their learning and performance. Guided by this framework and the findings of previous studies discussed above, the research questions for this study were:

HLM representation of possible effects and interactions (student- and school-level).

RQ1: What are the proportions of variance:

a) associated with the student and teacher/school levels in the interpret, employ and formulate processes, and b) in student performance on the interpret, employ and formulate processes that are explained by the student and teacher/school factors?

RQ2: What student (Level-1) factors are significant predictors of student performance in the interpret, employ and formulate processes?

RQ3: What teacher/school (Level-2) factors are significant predictors of student performance in the interpret, employ and formulate processes?

RQ4: What is the nature of the cross-level interactions between teacher/school (Level-2) predictors and student (Level-1) predictors of student performance in the interpret, employ and formulate processes?

Methodology – Multilevel modelling in education

In education, students are nested within classrooms and classrooms are nested within schools. Students interact with the social and learning contexts within the class and in the school, and these broader ‘groupings’ (class and school) are influenced by the students and teachers within the system.

This investigation utilises current developments in multilevel modelling and examines individual-level and school-level effects and the interactions between the variables at these levels. Multilevel modelling was used to estimate the effects of selected student background/home/contextual variables, students’ experiences and familiarity (StuExp), Student Time-on-Task (StuToT) and school-level factors on the three cognitive processes underlying mathematics literacy (achievement).

It was noted that several schools sampled and included in this study had ‘one mathematics classroom and with one mathematics teacher’. As indicated earlier, the classroom and school levels are confounded (O’Dwyer et al., 2015). The confounding between classroom, the teacher and school is acknowledged by including variables from these three categories at the second level, namely the Teacher/School-level category in the current study. A similar approach was applied by Alivernini et al. (2010), Mora (2020), O’Dwyer and Parker (2014) and OECD (2014d, 2015a).

Understanding the interactions between levels is important for examining policies in education. Raudenbush and Willms (1991) state: Interaction effects have important implications for policy, and one of the central purposes of multilevel analyses of educational data has been to explore the possibility that educational policies and practices may modify the distribution of outcomes within schools of classrooms. (p. 3)

Sample and dataset

Students between the ages of 15 years 3 months and 16 years 2 months from 65 jurisdictions, including 34 OECD member countries, participated in the 2012 cycle of PISA (OECD, 2014a). In addition to completing items on mathematical literacy, scientific literacy and reading literacy, contextual information from students (including family background, motivation and instruction) and principals (schools) was also collected. Table 1 presents the student variables selected for inclusion at Level-1 as well as the teacher/school variables that were included as Level-2 variables in the models examined in this study.

Australia plays a key role in large-scale international studies through the Australian Council for Educational Research. Moreover, the focus on mathematical literacy (NAPLAN) and innovations in the learning and teaching of mathematics (Thomson et al., 2013) require scrutiny of the underlying analytical skills through the three cognitive processes (Smith et al., 2018). It is acknowledged that although key variables were selected from PISA participating countries, the findings of this study are useful to inform policy and curriculum matters in Australia.

As the analyses for this study focussed primarily on examining broad teacher/school-level (Level-2) and student-level (Level-1) factors that predicted mathematics literacy, and not on the performance of individual schools, teachers or students, listwise deletion was used for Level-1 and Level-2 data. After removing all combinations of missing data (user-missing and system-missing values combined, see Appendix 1), the remaining sample for analysis included 46,518 students nested within 3001 schools/teachers.
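The listwise deletion step described above can be sketched as follows. This is a minimal illustration only: the records are hypothetical, the helper `listwise_delete` is not part of any PISA tooling, and it assumes that user-missing and system-missing codes have already been recoded to `None`.

```python
# Illustrative sketch: hypothetical records, not PISA data.
# Assumption: all missing codes were recoded to None beforehand.

def listwise_delete(students, schools, student_vars, school_vars):
    """Keep complete Level-2 units and the complete Level-1 cases nested in them."""
    # Level 2: drop any school/teacher unit with a missing predictor.
    complete_schools = {
        sid: rec for sid, rec in schools.items()
        if all(rec.get(v) is not None for v in school_vars)
    }
    # Level 1: drop students with a missing predictor or a dropped school.
    complete_students = [
        s for s in students
        if s["school_id"] in complete_schools
        and all(s.get(v) is not None for v in student_vars)
    ]
    # Drop schools that no longer have any complete students.
    used = {s["school_id"] for s in complete_students}
    kept_schools = {sid: rec for sid, rec in complete_schools.items() if sid in used}
    return complete_students, kept_schools


students = [
    {"school_id": "A", "FAMCON": 0.2, "MMINS": 200},
    {"school_id": "A", "FAMCON": None, "MMINS": 180},   # missing Level-1 value
    {"school_id": "B", "FAMCON": -0.1, "MMINS": 220},   # school B is incomplete
    {"school_id": "C", "FAMCON": 0.5, "MMINS": 240},
]
schools = {
    "A": {"PROPCERT": 0.9, "SCHSIZE": 800},
    "B": {"PROPCERT": None, "SCHSIZE": 650},            # missing Level-2 value
    "C": {"PROPCERT": 1.0, "SCHSIZE": 400},
}

kept_students, kept_schools = listwise_delete(
    students, schools, ["FAMCON", "MMINS"], ["PROPCERT", "SCHSIZE"]
)
print(len(kept_students), sorted(kept_schools))
```

Deleting at Level 2 first means that a student with complete data is still lost when the school record is incomplete, which is one reason the analytic sample can be much smaller than the full dataset.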

This study acknowledges the correlations within and between compositional and contextual variables and their implications for multicollinearity and associated inferences. One suggestion to reduce multicollinearity in a regression model is re-specification through the removal of highly correlated variables. Moreover, Anderson (2012, p. 13) notes that centring in HLM ‘has additional benefits, such as reducing issues of multicollinearity, which can ease estimation’. Hence, in this study, the following centring methods were used: Group-mean centring: SEX, ST73Q01R, ST73Q02R, ST74Q01R, ST74Q02R, ST75Q01R, ST75Q02R, ST76Q01R and ST76Q02R.
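Group-mean centring subtracts each school's mean from the values of its own students, so the centred variable captures only within-school differences. The sketch below illustrates the idea with hypothetical data; the helper name `group_mean_centre`, the `_gmc` suffix and the 0/1 coding of SEX are assumptions made for illustration, not details taken from the study.

```python
from collections import defaultdict

def group_mean_centre(records, group_key, variable):
    """Return copies of records with `variable` centred on its group mean."""
    sums = defaultdict(float)
    counts = defaultdict(int)
    for r in records:
        sums[r[group_key]] += r[variable]
        counts[r[group_key]] += 1
    means = {g: sums[g] / counts[g] for g in sums}
    # New key uses a hypothetical "_gmc" suffix for the centred value.
    return [
        {**r, variable + "_gmc": r[variable] - means[r[group_key]]}
        for r in records
    ]


# Hypothetical students; SEX coded 0/1 purely for illustration.
records = [
    {"school_id": "A", "SEX": 1},
    {"school_id": "A", "SEX": 0},
    {"school_id": "B", "SEX": 0},
    {"school_id": "B", "SEX": 0},
    {"school_id": "B", "SEX": 0},
    {"school_id": "B", "SEX": 1},
]
centred = group_mean_centre(records, "school_id", "SEX")
print([r["SEX_gmc"] for r in centred])
```

Within each school the centred values sum to zero, which is what removes between-school differences from the Level-1 predictor.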

Plausible values, which are computed approximations of proficiency estimates for mathematics literacy and the underlying processes, were used as outcome variables in the HLM analyses. Researchers (Adams & Wu, 2002; Monseur & Adams, 2009; Von Davier et al., 2009) have highlighted the advantages of plausible values over single individual (raw) scores, and the current features in HLM7 allow for their use in the current analyses.
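When an analysis is run once per plausible value, the results are usually pooled with the standard multiple-imputation combining rules: the point estimate is the mean across plausible values, and the error variance adds the average sampling variance to an inflated between-value variance. The sketch below shows these general rules with hypothetical numbers; it is not the internal routine of the HLM software, and the function name is an assumption.

```python
import math

def combine_plausible_values(estimates, sampling_variances):
    """Pool one coefficient estimated separately on each plausible value."""
    m = len(estimates)
    if m < 2 or m != len(sampling_variances):
        raise ValueError("need matching lists with at least two plausible values")
    point = sum(estimates) / m                       # pooled estimate
    within = sum(sampling_variances) / m             # average sampling variance
    between = sum((e - point) ** 2 for e in estimates) / (m - 1)
    total = within + (1 + 1 / m) * between           # total error variance
    return point, math.sqrt(total)


# Hypothetical coefficient estimates and sampling variances for five plausible values.
estimates = [10.0, 12.0, 11.0, 9.0, 13.0]
variances = [4.0, 4.0, 4.0, 4.0, 4.0]
pooled, se = combine_plausible_values(estimates, variances)
print(pooled, se)
```

Using a single plausible value, or the average of the five as if it were an observed score, would understate the standard error because the between-value component would be ignored.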

This study adopted OECD’s (2010) recommended student final weights (W_FSTUWT) and school weights (W_FSCHWT) for Level-1 and Level-2, respectively, and in the analyses using HLM-8 software (Raudenbush et al., 2019).

The models tested for this study are the (1) unconstrained (null) model, (2) random intercept model, (3) means as outcomes model and (4) interactions model (see Figure 3).
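For orientation, the four model types can be written in generic two-level notation. The equations below are a simplified sketch with one placeholder student-level predictor $X$ and one placeholder teacher/school-level predictor $W$; the models estimated in this study include many predictors, and their exact specification may differ from this textbook form.

```latex
% Student i nested in school/teacher unit j.
% X_{ij}: placeholder Level-1 predictor; W_j: placeholder Level-2 predictor.
\begin{align*}
\text{(1)}\quad & Y_{ij} = \beta_{0j} + r_{ij}, \quad \beta_{0j} = \gamma_{00} + u_{0j} \\
\text{(2)}\quad & Y_{ij} = \beta_{0j} + \beta_{1j} X_{ij} + r_{ij}, \quad \beta_{0j} = \gamma_{00} + u_{0j}, \quad \beta_{1j} = \gamma_{10} \\
\text{(3)}\quad & Y_{ij} = \beta_{0j} + r_{ij}, \quad \beta_{0j} = \gamma_{00} + \gamma_{01} W_{j} + u_{0j} \\
\text{(4)}\quad & Y_{ij} = \beta_{0j} + \beta_{1j} X_{ij} + r_{ij}, \quad \beta_{0j} = \gamma_{00} + \gamma_{01} W_{j} + u_{0j}, \quad \beta_{1j} = \gamma_{10} + \gamma_{11} W_{j} + u_{1j}
\end{align*}
```

Here $r_{ij}$ and $u_{0j}$ are the Level-1 and Level-2 residuals, and $\gamma_{11}$ in model (4) is the cross-level interaction: it describes how the Level-2 predictor modifies the slope of the Level-1 predictor.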

Results and discussion

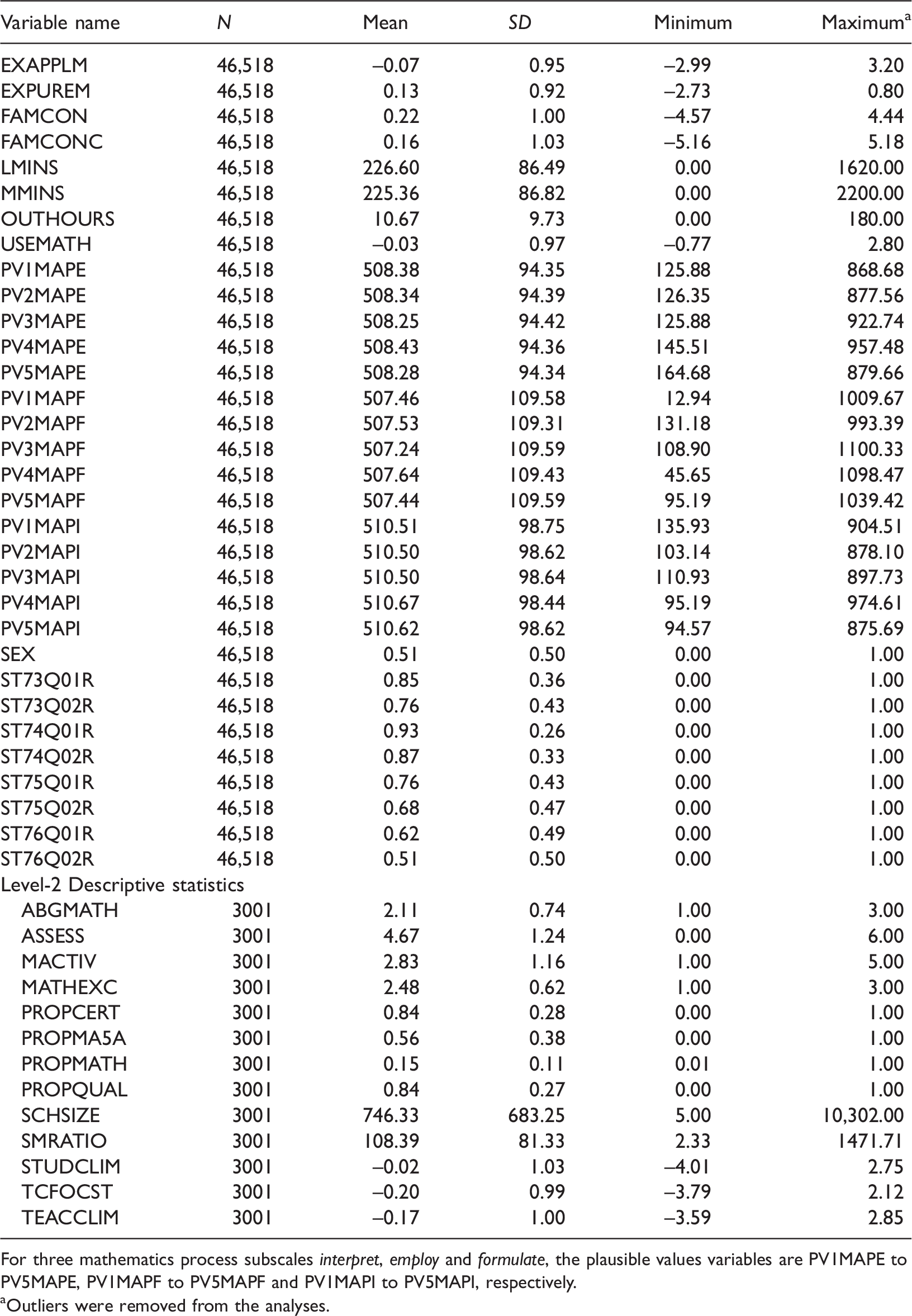

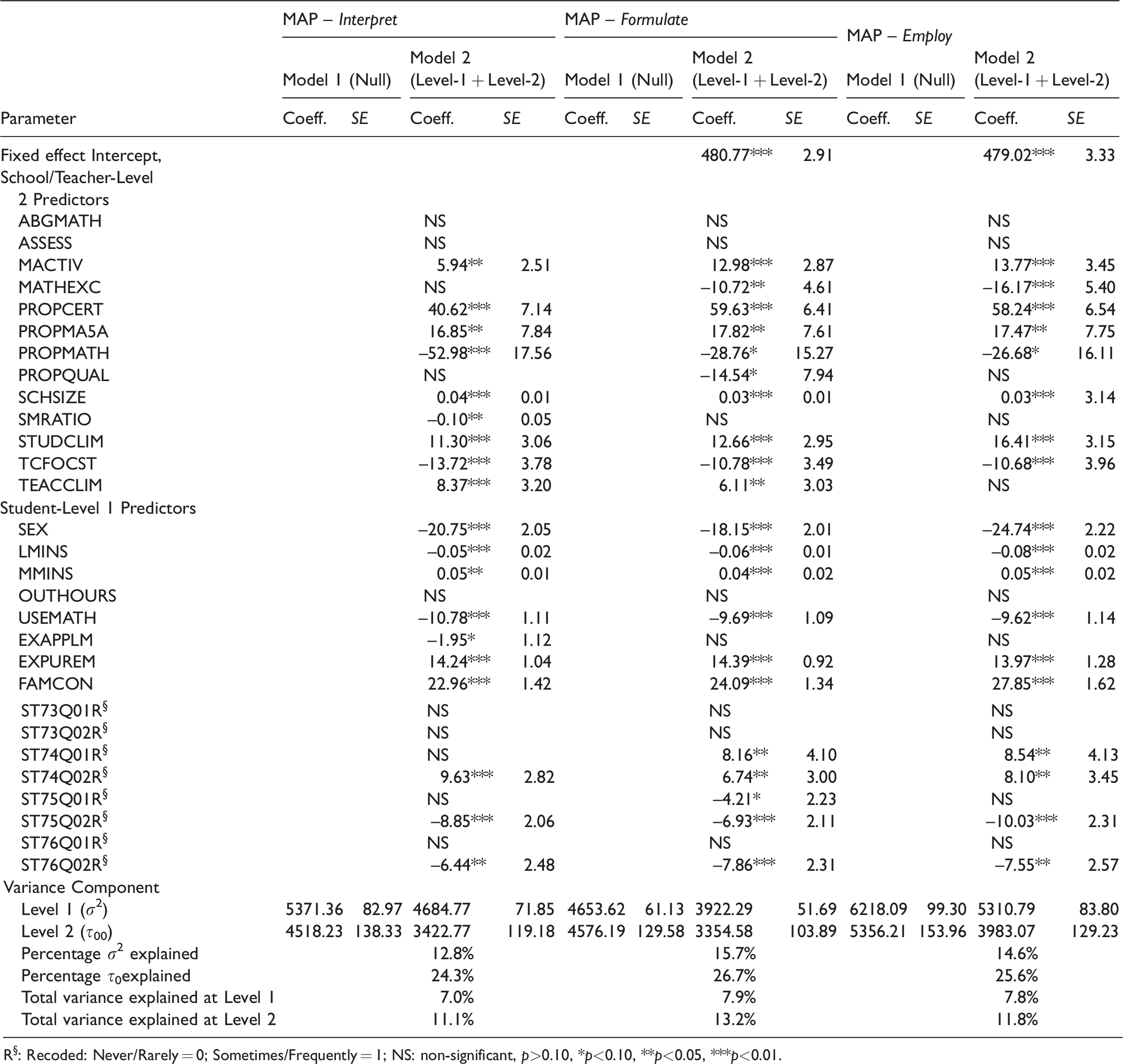

While the descriptive statistics of the variables included in the models are presented in Table 2, the multilevel analysis results are presented in Table 3. To address the first research question, ‘What are the proportions of variance (a) associated with the student and teacher/school levels in the interpret, employ and formulate processes, and (b) in student performance on the interpret, employ and formulate processes that are explained by the student and teacher/school factors?’ the results of the null model (Model 1) for each of the three processes were used. To obtain estimates of the available variance, the intraclass correlation coefficients (ICC) were calculated by dividing the Level 2 variance by the sum of the Level 1 and Level 2 variances of the null model for each of the three processes. This produced ICCs of 0.46, 0.50 and 0.46 for interpret, employ and formulate, respectively, indicating that between 46% and 50% of the total variability in the three cognitive processes was associated with schools/teachers. These results regarding available variances are consistent with the findings of Nye et al. (2004), Sikora and Saha (2010) and United Nations Children's Fund (2012).
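The ICC calculation described above is a simple ratio of null-model variance components. The sketch below uses hypothetical variance components chosen only so that the ratio matches the reported 0.46; they are not the estimates from Table 3, and the function name is an assumption.

```python
def intraclass_correlation(level2_variance, level1_variance):
    """Share of total null-model variance that lies between Level-2 units."""
    total = level1_variance + level2_variance
    if total <= 0:
        raise ValueError("total variance must be positive")
    return level2_variance / total


# Hypothetical variance components, for illustration only.
icc = intraclass_correlation(level2_variance=4600.0, level1_variance=5400.0)
print(round(icc, 2))
```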

Descriptive statistics.

For the three mathematics process subscales employ, formulate and interpret, the plausible value variables are PV1MAPE to PV5MAPE, PV1MAPF to PV5MAPF and PV1MAPI to PV5MAPI, respectively.

a Outliers were removed from the analyses.

Multilevel modelling results for mathematical literacy processes (Nstudent = 46,518; Nschool/teacher = 3001).

R§: Recoded: Never/Rarely = 0; Sometimes/Frequently = 1; NS: non-significant, p>0.10, *p<0.10, **p<0.05, ***p<0.01.

Table 3 also presents the total variance explained by factors in the models at Levels 1 and 2. The ability to formulate and employ has nearly equal variance explained at Level 1 (student level) compared to a relatively lower variance explained at the same level for interpret. However, at Level 2, the variance explained is substantially higher. This confirms the importance of Level 2 predictors in terms of their contribution to explaining differences in the higher-order thinking skills needed to complete tasks associated with mathematical literacy. Thus, about 18% to 21% of the variance is explained by the model derived in Table 4, and about 79% (formulate) to 82% (interpret) is unexplained.
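One common way to express variance explained in multilevel models is the proportional reduction in a variance component relative to the null model, computed separately at each level. The sketch below illustrates that calculation with hypothetical values; whether this is exactly the computation behind Table 3 is an assumption, and the function name is illustrative.

```python
def proportion_variance_explained(null_variance, model_variance):
    """Proportional reduction in a variance component relative to the null model."""
    if null_variance <= 0:
        raise ValueError("null-model variance must be positive")
    return (null_variance - model_variance) / null_variance


# Hypothetical variance components, for illustration only.
level1_explained = proportion_variance_explained(null_variance=5000.0, model_variance=4500.0)
level2_explained = proportion_variance_explained(null_variance=4000.0, model_variance=2000.0)
print(level1_explained, level2_explained)
```

Because each level has its own baseline, a model can explain a large share of the between-school variance while explaining only a small share of the within-school variance.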

Interaction effects for the multilevel models explaining interpret, employ and formulate, respectively.

To address Research Question 2, ‘What student (Level-1) factors are significant predictors of student performance in the interpret, employ and formulate processes?’ separate HLM models were run with each of the three processes as an outcome variable (see Table 3). The focus was on those student variables for which significant effects on each of the mathematical processes emerged in the respective Model 2.

Results for the student level predictors in Table 3 (Model 2) show that experience with pure mathematics tasks at school (EXPUREM) and familiarity with mathematical concepts (FAMCON) at the student level predicted mathematical literacy for all three cognitive processes when other factors were held constant. Although the positive relationship between EXPUREM and mathematical literacy has already been reported elsewhere (Koğar, 2015; OECD, 2013c), analyses in this study provide further insights of its relationships with the three cognitive processes. Thus, students who were familiar and had experiences with mathematical concepts and pure mathematics tasks at school did relatively better than students who had little or no experience or familiarity with associated concepts.

These findings parallel the findings reported by the New Zealand’s Ministry of Education (New Zealand – Ministry of Education, 2012). The report highlighted that ‘greater student familiarity with mathematical concepts and exposure to formal mathematics is related to higher mathematics achievement’ and that ‘greater perceived use of cognitive activation by students, where teachers encourage students to reflect on their learning in class, was related to higher mathematics achievement’ (New Zealand – Ministry of Education, 2012, pp. 50–51).

Use of Information and Communications Technology (ICT) in mathematics lessons (USEMATH) – a new scale created for PISA2012 – is a composite based on seven items (OECD, 2014b, p. 340). Table 3 provides evidence that USEMATH was significantly (p < 0.01) negatively related to all three outcome variables. In other words, greater use of ICT during mathematics lessons was negatively linked to performance in interpret, employ and formulate. This is in line with the findings of Bando et al. (2017), Rohaetia (2019), Skilling et al. (2020) and Watson (2001), who report that less use of ICT in mathematics lessons (USEMATH) provided better contexts for higher achievement in interpret items. It can be argued that the use of ICT to support mathematics needs careful planning and facilitation by appropriate pedagogy.

For all three processes, boys significantly outperformed girls, which is noteworthy given that the modelling held all other effects constant.

Finally, for all three processes, the amount of time learning the language in which the test was administered in a particular country (LMINS) had a negative effect on performance while more mathematics learning time per week (MMINS) raised students’ performance.

Results to address Research Question 3 ‘What teacher/school (Level-2) factors are significant predictors of student performance in the interpret, employ and formulate processes?’ are also presented in Table 3.

Results show that some of the teacher/school-level effects were similar for all three processes. Thus, Table 3 highlights that students in schools which provided enrichment activities through extracurricular mathematics activities (MACTIV), had higher proportions of certified teachers (PROPCERT) and had higher proportions of teachers who had studied mathematics as a major subject at university (PROPMA5A) showed higher performance in the three mathematical processes.

In contrast, more positive teacher-related factors affecting school climate (TEACCLIM) were associated with higher performance in interpret and employ but not in formulate. Noteworthy was also the finding that a lower Maths teacher-to-student ratio (SMRATIO) had a statistically significant (p < 0.05) effect, but only on interpret.

The additional negative effect of mathematical extracurricular activities at school (MACTIV) on employ and formulate through use of ICT in mathematics lessons (USEMATH) suggests that it might be beneficial to rethink how technology and extracurricular lessons are used, structured and delivered to optimise the learning of ‘reasoning’ of mathematical processes, and thus improve achievement in mathematical literacy.

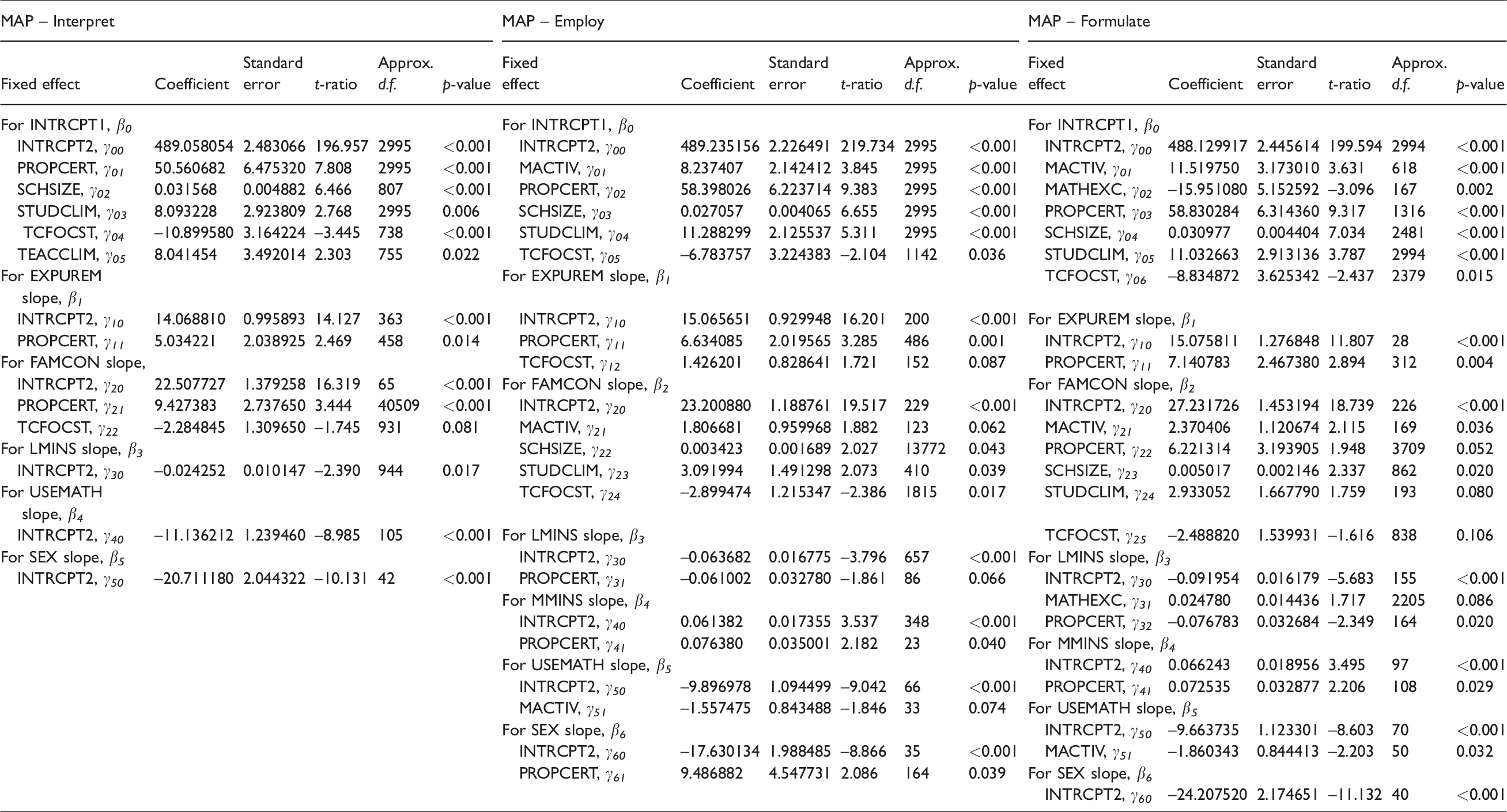

Results addressing Research Question 4 ‘What is the nature of the cross-level interactions between teacher/school (Level-2) predictors and student (Level-1) predictors of student performance in the interpret, employ and formulate processes?’ are presented in Table 4.

Interesting results emerged from the analysis of interaction effects of teacher/school-level variables on the relationship between student factors and performance. Thus, for larger schools (SCHSIZE) with more mathematics extracurricular activities (MACTIV) and with more positive student-related factors affecting school climate (STUDCLIM), an interaction could be observed with familiarity with mathematical concepts (FAMCON) which was associated with higher performance on the employ and formulate processes.

It was evident that the proportion of certified teachers (PROPCERT) in a school interacted positively with experience in pure mathematics tasks at school (EXPUREM) to enhance student performance on interpret items. Similar effects were noted for PROPCERT and familiarity with mathematical concepts (FAMCON).

Overall, Table 4 provides visual evidence of the increasing complexity of contextual effects as mathematical processes gradually increase in difficulty from interpret → employ → formulate in that more significant effects of teacher/school-level variables on the relationships between student-level variables and performance emerged. Perhaps the hypothesis of Stacey and Turner (2015) that higher-order cognitive processes (here formulate) are more difficult (relative to interpret and employ items), holds not only for the difficulty indices of items which involve formulate and employ but also for the relationships between factors having an effect on the cognitive processes involved. There is further evidence from Table 4 that complex cognitive processes in mathematics and subjects which require quantitative reasoning invoke more Level-1 and Level-2 factors. Familiarity of concepts (FAMCON), time learning the language of test administration (LMINS) and mathematics (MMINS) interact with relatively more factors in the models explaining differences in performance on the employ and formulate processes.

Conclusion and implications for research and practice

This study raises several issues that require urgent attention about composite contextual variables which predict performance in the three cognitive processes involved in mathematical literacy and specifically for the learning and teaching of mathematics. The importance of qualified mathematics teachers and their contributions through targeted school-based mathematics extracurricular activities is pivotal to success in mathematical literacy performance. The central role of qualified mathematics teachers is further supported through students’ familiarity with mathematical concepts and enrichment provided by quality learning time in the discipline. This becomes a necessity when students are required to utilise all three cognitive processes when solving problems in mathematics.

Gender interacts with the learning of mathematics, and the differential performance of boys and girls in mathematical literacy warrants urgent attention. While many societal values and attitudes are likely to play a role in gender differences in mathematics, a highly granular analysis at the item or task level could provide understandings for the modification of test, curriculum and learning design.

In terms of what and how students learn, the frequency of procedural tasks, such as solving algebraic equations and finding the volume of abstract shapes in lessons (ST74Q01R) and tests (ST74Q02R), was positively related to achievement in mathematical literacy.

In contrast to procedural tasks, the frequency of pure mathematical reasoning, such as applying geometrical theorems to abstract shapes and answering conceptual questions about prime numbers in lessons (ST75Q01R) and tests (ST75Q02R), and the frequency of applied mathematical reasoning, such as solving problems from everyday life in tests (ST76Q02R), were negatively related to achievement in mathematical literacy. These findings warrant further investigation into why increased exposure to a wider variety of mathematics has a detrimental effect on students’ achievement. Although most research studies have examined general performance in mathematical literacy, it is pertinent that higher-order thinking skills and cognitive processes are given specific attention and investigation.

One possibility is that students who had a greater exposure to procedural tasks (and consequently less exposure to mathematical reasoning) had a higher achievement in mathematical literacy because the literacy test may be biased towards those types of tasks. However, the PISA test incorporated a range of processes, content and contexts which suggests that a bias towards procedural tasks was unlikely. If anything, the definition of mathematical literacy used in this study is more focused on everyday applications of mathematics and the preparation of students for their post-school lives than is the case on other assessments.

Another hypothesis is that teaching and learning centred on mathematical reasoning, vis-à-vis pure mathematics tasks, could be less robust than teaching and learning centred on procedural tasks. It may be that teachers were more adequately prepared and able to teach mathematical procedures and that teaching mathematical reasoning requires greater skill, understanding and mastery of the material.

The results in Tables 3 and 4 indicate that increased experience with pure mathematics tasks (EXPUREM) and familiarity with mathematics concepts (FAMCON) had positive effects on achievement in mathematical literacy. These results indicate that students benefited from exposure to mathematical concepts and procedures but did not benefit from exposure to reasoning. This is of concern because students of all abilities will be expected and required not only to use mathematics but, more importantly, to apply mathematical reasoning in novel situations. Huang et al. (2016) articulate this necessity, pointing to its centrality in curriculum design and in the professional development of teachers. They argued that mathematical reasoning should be taught explicitly and formalised as part of the learning for mathematics teachers.

Success in mathematical literacy tasks depends on students’ prior experiences of mathematics. If students have been mainly exposed to procedural tasks and mainly required to do rote learning and methodical practice of similar problems, they may struggle to cope when more mathematical reasoning, conceptualisation and more complex problems are introduced. Perhaps, as advanced by Rohaetia (2019), Schoenfeld (1992), Skilling et al. (2020) and Tall (2008, 2013), students were better equipped to respond to procedural tasks in mathematical literacy tests than to utilise the mathematical reasoning underlying each task/problem and step/sequence. The benefits of exposure to mathematical reasoning and its importance need to be emphasised in mathematics education. As emphasised by Jeannotte and Kieran (2017, p. 14), mathematical literacy involves mathematical reasoning and the latter includes processes of ‘the search for similarities and differences, and the search for validation’. They highlighted further that their mathematical reasoning model can be ‘applied to various mathematical content areas’ (Jeannotte & Kieran, 2017, p. 16).

Hence, the types of classroom activities and of mathematical thinking and reasoning need further scrutiny to optimise performance. The roles of theory, practice, pure mathematics, applied mathematics and ICT in the curriculum also need further examination. This reiterates the recommendations of Thomson et al. (2013, p. 44) that ‘teachers can support students’ mathematics learning by providing direct and explicit instructions about strategies for understanding mathematics and tackling problems’.

Future research may want to consider associated mathematical processes beyond PISA’s three: interpret, employ and formulate. Does exposure to procedural tasks improve students’ employment of procedures to solve problems? Does exposure to mathematical reasoning improve students’ interpretation of solutions? Attitudinal variables were not included in this study; including them could increase the variance explained by Level-1 predictors.

Quality time on meaningful mathematics tasks with teachers who have optimal content and pedagogical knowledge is important. Although much professional development of teachers centres on pedagogical content knowledge, it is imperative that focus and resources be committed to content knowledge, its renewal and extension. The importance of content knowledge and associated pedagogies cannot be overstated; this implies being mindful of the use of ICT in mathematics lessons, with pedagogy (and, importantly, content) in the driver’s seat (Brown et al., 2019; Fullan, 1991; Watson, 2001).

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.