Abstract

Objective:

This study examined the effectiveness of the Better Access initiative using outcome data from real-world practice settings.

Methods:

We used anonymised data from four datasets to assess outcomes for consumers over 86,121 episodes of care. The datasets contained routinely captured episode-level data from the practices of psychologists and other eligible Better Access providers. Across the datasets, outcomes were assessed on 11 different measures (mostly consumer-rated measures of depression and anxiety symptoms, psychological distress, functioning and wellbeing). We conducted purpose-designed analyses with three of the datasets (83,346 episodes), examining score changes on given measures between the first and last assessment occasion within an episode. We used preexisting outputs for the fourth dataset (2775 episodes), again considering change from the beginning to the end of the episode.

Results:

In the purpose-designed analyses, consumers’ mental health improved in around 50–60% of episodes. However, consumers showed no change or experienced deterioration in their mental health in 20–30% and 10–20% of episodes, respectively. Those with more severe baseline scores had a greater probability of showing improvement. The preexisting outputs also identified significant improvements, particularly in episodes where treatment was complete.

Conclusion:

Better Access is achieving reductions in symptoms and improvements in functioning and wellbeing for the majority of consumers. A minority of consumers do not have these sorts of positive outcomes, however, and further work is required to understand why. Routine measurement of outcomes – particularly consumer-rated outcomes – would enable ongoing monitoring of the extent to which Better Access is achieving its goals.

Introduction

The Better Access to Psychiatrists, Psychologists and General Practitioners through the Medicare Benefits Schedule initiative (Better Access) was introduced in 2006 to enable people with common mental disorders like depression and anxiety to readily receive treatment. It was specifically designed to support those with mild-to-moderate mental health conditions who might respond well to short-term evidence-based interventions (Australian Government Department of Health, 2021). Under Better Access, a series of item numbers was added to the Medicare Benefits Schedule (MBS). These item numbers are associated with a variety of mental health services that are delivered by different mental health professionals. Key among these are psychological therapy services delivered by clinical psychologists and focussed psychological strategies delivered by psychologists, social workers and occupational therapists. MBS rebates are available to assist consumers meet the costs of these services.

We were commissioned by the (then) Department of Health to evaluate Better Access in 2021–2022. This followed an evaluation that we had conducted in 2009–2010 (Pirkis et al., 2011b). The more recent evaluation considered the accessibility, responsiveness, appropriateness, effectiveness and sustainability of Better Access and involved 10 separate studies; this study and eight others are reported on in this issue of the Australian and New Zealand Journal of Psychiatry (Arya et al., 2026; Chilver et al., 2026; Currier et al., 2026; Harris et al., 2026; Newton et al., 2026; Pirkis et al., 2026; Tapp et al., 2026a; Tapp et al., 2026b).

The current study drew on outcome data that were routinely collected in the clinical practices of psychologists and other eligible providers. It complemented three of the other studies reported in this issue by providing a different lens on consumer outcomes. It assessed outcomes via validated measures of symptoms, functioning and related concepts that were administered prospectively, and considered change over discrete episodes of care. Pirkis et al. (2026) also considered outcomes over the course of an episode of care, but relied on consumers’ retrospective reports of how their mental health changed over the course of the episode. Like the current study, Harris et al. (2026) and Arya et al. (2026) used prospectively administered measures, but assessed change over set periods of time rather than for specific episodes.

The study was informed by our previous experiences with assessing the effectiveness of Better Access. In our 2009–2010 evaluation, we followed 883 consumers over sessions of Better Access care (Pirkis et al., 2011a). Because we needed to collect baseline data for these consumers before they received care, we recruited a stratified random sample of providers and asked them to recruit their next 5–10 English-speaking ‘new’ Better Access consumers. We found that the majority showed improvement on the Kessler Psychological Distress Scale (K-10) (Kessler et al., 2002) and/or the Depression Anxiety Stress Scales (DASS-21) (Lovibond and Lovibond, 1995a; Lovibond and Lovibond, 1995b). We were criticised because our sample was relatively small and may have been biased if providers ‘cherry picked’ consumers who were likely to experience positive outcomes (Allen and Jackson, 2011; Hickie et al., 2011).

In the current study, we addressed these criticisms by using outcome data that had been routinely collected by providers during the course of consumers’ care. This had the advantage of allowing us to access anonymised data for a far greater number of consumers and, because the data had been routinely collected by providers for a different purpose (i.e. to monitor consumers’ progress and guide their clinical approach), the likelihood of sampling bias was minimised. In addition, using routinely collected data allowed us to examine outcomes not only for consumers who had completed treatment, but also for those who were still receiving treatment or had ceased treatment prematurely. This gave us confidence that we were gleaning an accurate representation of Better Access in real-world settings.

Methods

Study overview

We used four datasets to assess outcomes for consumers over their episodes of care. Four of our authors (B.B., A.F., C.M. and K.F.) were the custodians of these datasets. The first dataset was NovoPsych, a subscription-based platform developed by B.B. and used in multiple Australian practices (usually psychology practices). The other three datasets came from large psychology practices which at the time were run by A.F. (Benchmark Psychology, Queensland), C.M. (Chris Mackey and Associates, Victoria) and K.F. (Kaye Frankcom and Associates, Victoria).

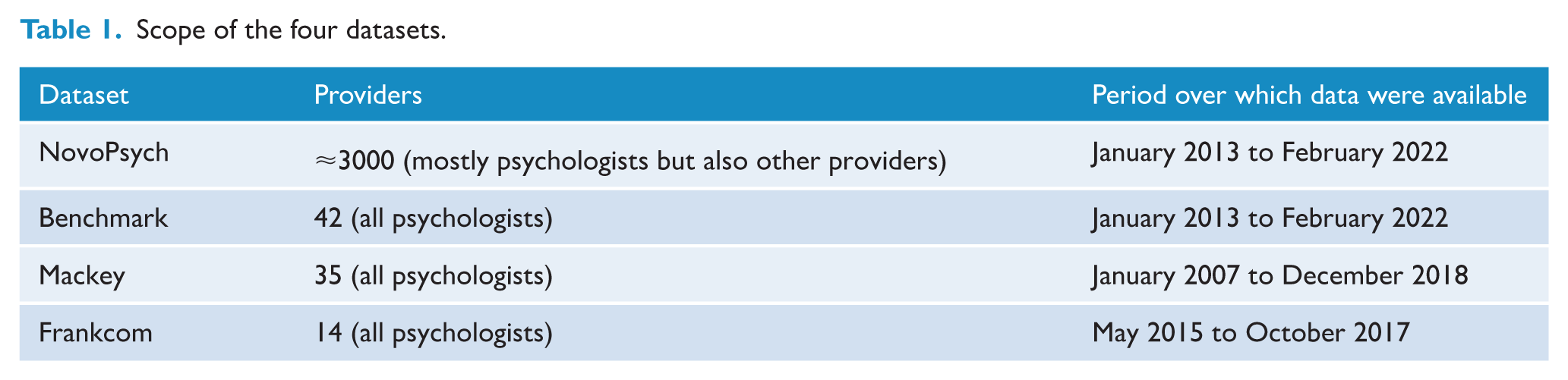

Table 1 provides detail about the datasets’ scope. NovoPsych was the largest, housing data on consumer outcomes from around 3000 providers. All four contained data from extensive periods, with the Mackey database going back to 2006 (when Better Access began), the NovoPsych and Benchmark databases housing data from early 2013, and the Frankcom database containing data from mid-2015.

Table 1. Scope of the four datasets.

We were not able to identify individual consumers or individual providers in any dataset. To anonymise the data further, we do not refer to any of the datasets by name for the remainder of this paper, but instead report all findings by individual measure.

Outcome measurement

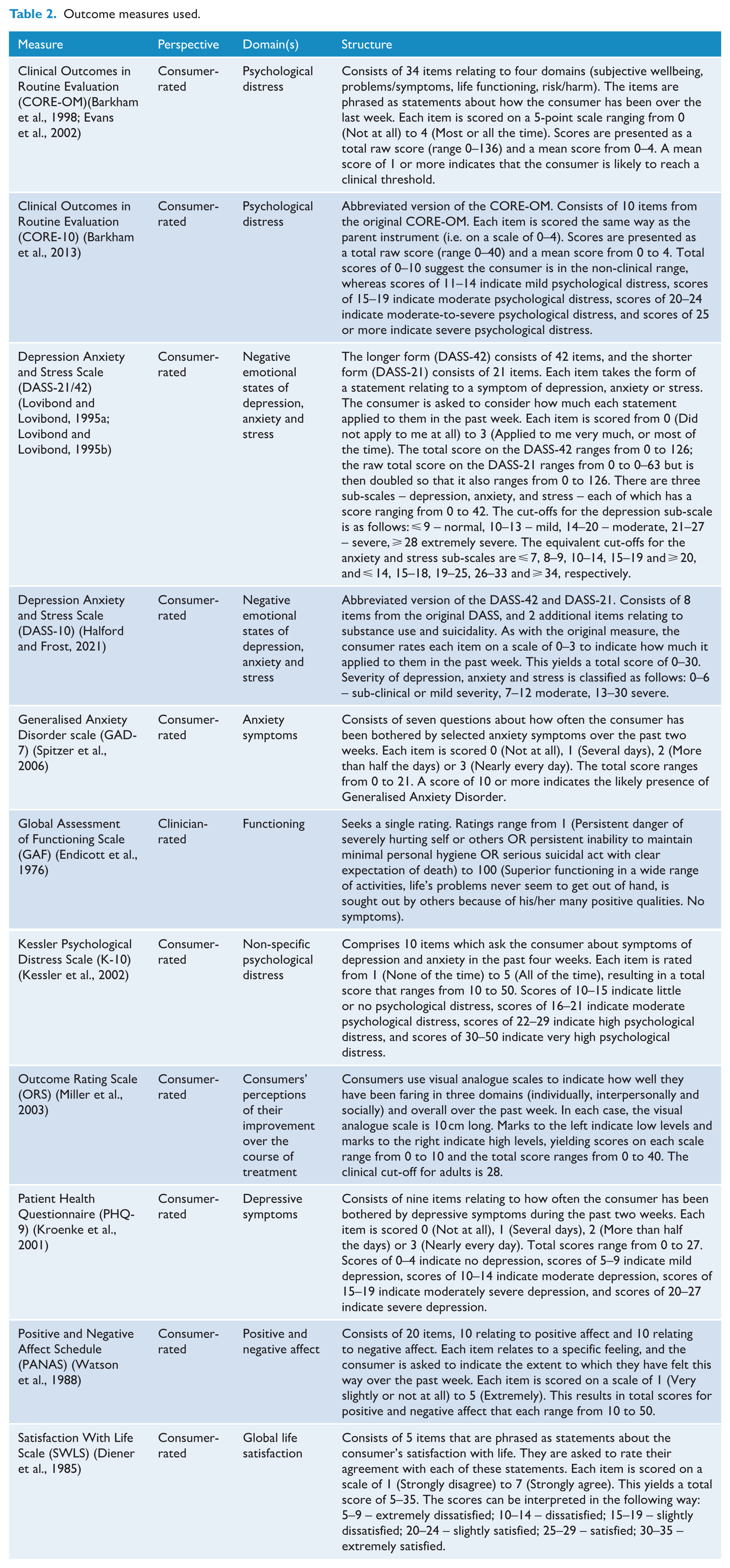

The four datasets included outcome data from 11 different measures (see Table 2). These were mostly consumer-rated measures and covered various constructs, including depression and anxiety symptoms, psychological distress, functioning and wellbeing.

Table 2. Outcome measures used.

Purpose-designed analyses

We were able to implement a consistent analysis strategy that employed purpose-designed analyses for three datasets. These datasets included data on all of the measures in Table 2 except the ORS. Our approach is described below.

Data management

The datasets were processed and analysed separately. For all three, the data custodian retained the raw data and provided anonymised test datasets with limited numbers of rows to our analysis team. The analysis team developed data cleaning, organisation and analysis code (in R [v4.0.0]) using the test datasets. The code differed depending on the structure of the given dataset, but in every case it enabled the data to be presented consistently across the three datasets. The code was sent to the data custodians, who then used it to conduct the analysis and provide our team with aggregate results.

Episodes of care

We organised each dataset around episodes, creating these by aggregating series of sessions at which outcomes were assessed. Where sessions were dated, we were able to determine the time between consecutive sessions; sessions with no date were excluded. We treated consecutive sessions as belonging to the same episode if the period between them was less than six months; if the gap between sessions was six months or more, the latter session was treated as the start of a new episode. This meant that episodes could straddle the end of one year and the beginning of the next and were not defined by the Better Access annual session cap rules. We thought that this was a more meaningful way to explore the outcomes of a consecutive series of sessions of care.
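The episode rule described above can be illustrated with a short sketch. This is not the study's R code; it is a minimal Python illustration that assumes 'six months' is approximated as 183 days (the paper does not say how the gap was operationalised) and uses the hypothetical names `group_into_episodes` and `GAP_DAYS`.

```python
from datetime import date

# Assumption: a six-month gap is approximated as 183 days.
GAP_DAYS = 183


def group_into_episodes(session_dates, gap_days=GAP_DAYS):
    """Split one consumer's sessions into episodes of care.

    Undated sessions (None) are dropped. A session starts a new episode
    when it falls gap_days or more after the previous dated session.
    """
    dated = sorted(d for d in session_dates if d is not None)
    episodes = []
    for d in dated:
        if episodes and (d - episodes[-1][-1]).days < gap_days:
            episodes[-1].append(d)  # gap under six months: same episode
        else:
            episodes.append([d])    # first session, or gap of six months or more
    return episodes


# Hypothetical sessions for one consumer, including one undated session.
sessions = [
    date(2021, 11, 1),
    date(2021, 12, 15),
    None,
    date(2022, 2, 1),
    date(2022, 9, 1),
]
episodes = group_into_episodes(sessions)
```

In this example the first three dated sessions form a single episode that straddles the end of 2021, and the September 2022 session opens a second episode because it falls more than six months after the previous one.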

For each episode, we extracted the following data: consumer’s age, sex and first and last scores on the relevant outcome measure(s). Other variables were not consistently available across the datasets.

Inclusion and exclusion criteria

Wherever possible, we tried to ensure that the sessions that made up episodes were delivered through Better Access. Our starting point involved ensuring that the providers who had delivered the care came from a professional group whose services were eligible for rebates under Better Access (psychologists, social workers and occupational therapists).

We were able to take one additional step with one of the datasets. This dataset ‘tagged’ sessions of care that were delivered under Better Access. We used these in the analysis and excluded all others in this dataset. In the remaining datasets, we assumed that all sessions and the episodes they were aggregated to were delivered under Better Access. We did this based on the rationale that most of the episodes in our datasets were delivered by psychologists, and we know from two background analyses that the majority of services delivered by psychologists in Australia are funded through Better Access. The first of these analyses drew on epidemiological data from the National Study of Mental Health and Wellbeing (Australian Bureau of Statistics, 2022) and on MBS administrative data (Australian Institute of Health and Welfare, 2023) and showed that approximately 75% of all Australians aged 16–85 who saw a psychologist in 2020–2021 received Better Access treatment sessions. The second analysis used expenditure data from a range of sources and showed that Better Access accounted for around 85% of all expenditure on psychologists, when psychologists funded through Primary Health Networks (Australian Government Department of Health, 2022), private health insurance companies (Australian Prudential Regulation Authority, 2022), the Department of Veterans’ Affairs (Australian Institute of Health and Welfare, 2022) and the Department of Defence (Australian Institute of Health and Welfare, 2022) were added to the mix (Pirkis et al., 2022). We are confident, therefore, that the majority of sessions represented in the datasets were Better Access sessions.

To be eligible for inclusion in the analysis, an episode had to include at least two sessions for which the same measure was completed. For some episodes, outcomes were assessed at more than two sessions. Where this was the case, we used the outcome scores from the first and last sessions to calculate change on the given measure.

We also excluded some sessions that did not have valid data for analysis (e.g. outcome scores that fell outside the legitimate scoring range for the given measure; multiple administrations of the same measure on the same day).
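The eligibility rules in the two preceding paragraphs can be sketched as follows. This is an illustration only, not the study's code. It assumes that every same-day repeat of a measure is dropped (the paper does not say whether one administration was retained), and it uses the published scoring ranges of the PHQ-9 (0–27) and GAD-7 (0–21). The function name and example data are hypothetical.

```python
from collections import Counter
from datetime import date

# Scoring ranges for two of the measures named in the paper.
VALID_RANGE = {"PHQ-9": (0, 27), "GAD-7": (0, 21)}


def first_last_scores(administrations, measure):
    """Return (first, last) scores for one measure in one episode, or None.

    administrations: list of (session_date, measure_name, score) tuples.
    Assumption: all same-day repeats of the measure are discarded.
    """
    lo, hi = VALID_RANGE[measure]
    rows = [(d, s) for d, m, s in administrations if m == measure]
    per_day = Counter(d for d, _ in rows)
    valid = sorted(
        (d, s) for d, s in rows
        if per_day[d] == 1 and lo <= s <= hi
    )
    if len(valid) < 2:
        return None  # fewer than two valid administrations: episode ineligible
    return valid[0][1], valid[-1][1]


# Hypothetical episode with an out-of-range score and a same-day repeat.
episode = [
    (date(2022, 3, 1), "PHQ-9", 18),
    (date(2022, 3, 15), "PHQ-9", 31),  # outside 0-27, dropped
    (date(2022, 4, 1), "PHQ-9", 12),   # same-day repeat, dropped
    (date(2022, 4, 1), "PHQ-9", 14),   # same-day repeat, dropped
    (date(2022, 5, 1), "PHQ-9", 9),
    (date(2022, 3, 1), "GAD-7", 10),   # only one GAD-7 administration
]
phq9_scores = first_last_scores(episode, "PHQ-9")
gad7_scores = first_last_scores(episode, "GAD-7")
```

Here the episode is eligible for the PHQ-9 analysis, with the first and last valid scores carried forward, but not for the GAD-7 analysis because that measure was completed only once.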

We had some rules about the consumers who received the episodes. Consumers were excluded from the analysis if they were based outside Australia. They were also excluded if there was evidence that they were aged <18 years; where date of birth was missing we assumed that they were adults. Our reasoning was that when we interrogated MBS data supplied by Services Australia for the purposes of the broader evaluation, 90% of those who received Better Access services from a clinical psychologist or a psychologist in 2022 were aged 15 or more (Tapp et al., 2026b).

Data analysis

We profiled episodes by sex and age of the consumer, presenting the results as percentages. We also profiled episodes by the first (baseline) score on each measure, using standard cut-offs or quartiles where cut-offs were not available and rounding scores down for the purposes of categorisation.
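As an illustration of the baseline categorisation step, the sketch below rounds a score down and assigns it to a severity band. The bands shown are the commonly used PHQ-9 cut-offs and are included only as an example; the paper does not list the cut-offs applied to each measure, and the names used here are hypothetical.

```python
import math
from bisect import bisect_right

# Commonly used PHQ-9 severity bands (lower bounds of each band above 'minimal').
# Assumption: shown for illustration only; not taken from the paper.
PHQ9_LOWER_BOUNDS = [5, 10, 15, 20]
PHQ9_LABELS = ["minimal", "mild", "moderate", "moderately severe", "severe"]


def baseline_category(score, bounds=PHQ9_LOWER_BOUNDS, labels=PHQ9_LABELS):
    """Round the score down, then return the label of the band it falls in."""
    return labels[bisect_right(bounds, math.floor(score))]


categories = [baseline_category(s) for s in (4, 9.8, 10, 14.5, 20)]
```

Rounding down means that a non-integer score such as 9.8 is placed in the band below the next cut-off rather than being promoted to it.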

We examined outcomes (i.e. the change in scores on a given measure between the first and last assessment occasions within an episode) using an effect size methodology. We classified episodes in terms of change, using an effect size of 0.3. A change score of greater than 0.3 times the standard deviation of the mean difference in outcome score for all episodes was taken as ‘significant improvement’; a change score between −0.3 and 0.3 times the standard deviation was taken as ‘no significant change’; and a change score of less than −0.3 times the standard deviation was taken as ‘significant deterioration’. We chose 0.3 as the effect size after considering studies of the Minimum Clinically Important Difference (MCID) on two of the measures in our suite (the PHQ-9 and GAD-7) (Kounali et al., 2020; Kroenke et al., 2019) and recommendations about the range of effect sizes likely to be minimally important in clinical or subjective terms (Angst et al., 2017). The MCID represents the smallest difference perceived by the consumer to be beneficial. An effect size of 0.3 is at the lower end of the reported ranges, but we considered this appropriate because samples were not restricted to those who used a minimum number of sessions or completed treatment. If we had limited the samples in this way, we might have anticipated greater average improvement and a higher effect size might have been more appropriate.
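The classification rule can be sketched as below. This is a minimal illustration under stated assumptions rather than the study's implementation: it takes the standard deviation to be the sample standard deviation of the change scores across all episodes for the measure, and it signs change scores so that positive values always indicate improvement. The function name, the `higher_is_better` flag and the scores are hypothetical.

```python
from statistics import stdev

EFFECT_SIZE = 0.3  # threshold used in the paper


def classify_episodes(first_scores, last_scores, higher_is_better=False):
    """Label each episode by comparing its change score with 0.3 x SD.

    Assumption: SD is the sample standard deviation of change scores
    across all episodes. Change is signed so that positive = improvement.
    """
    sign = 1 if higher_is_better else -1
    changes = [sign * (last - first)
               for first, last in zip(first_scores, last_scores)]
    threshold = EFFECT_SIZE * stdev(changes)
    labels = []
    for change in changes:
        if change > threshold:
            labels.append("significant improvement")
        elif change < -threshold:
            labels.append("significant deterioration")
        else:
            labels.append("no significant change")
    return labels


# Hypothetical first and last scores on a symptom measure (lower is better).
first = [18, 15, 12, 20, 9, 14]
last = [9, 14, 15, 8, 9, 6]
labels = classify_episodes(first, last)
```

Because the threshold is derived from the spread of change scores within each measure, the same rule can be applied to measures with different scoring ranges without rescaling them.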

For all estimates of change, we calculated 95% confidence intervals. Non-overlapping confidence intervals were used as a conservative method of determining whether differences in the proportions classified as ‘significant improvement’, ‘no significant change’ or ‘significant deterioration’ were statistically significant (Schenker and Gentleman, 2001).
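The comparison of proportions by non-overlapping confidence intervals can be sketched as follows. The paper does not state which interval method was used, so the Wilson score interval here is an assumption chosen for illustration, and the episode counts are hypothetical.

```python
import math

Z_95 = 1.959964  # standard normal quantile for a two-sided 95% interval


def wilson_ci(successes, n, z=Z_95):
    """Wilson score interval for a proportion (an assumed method)."""
    p = successes / n
    denom = 1 + z ** 2 / n
    centre = (p + z ** 2 / (2 * n)) / denom
    half = z * math.sqrt(p * (1 - p) / n + z ** 2 / (4 * n ** 2)) / denom
    return centre - half, centre + half


def intervals_overlap(a, b):
    """True if two (lower, upper) intervals share at least one point."""
    return a[0] <= b[1] and b[0] <= a[1]


# Hypothetical counts of episodes classified as 'significant improvement'
# in two baseline severity strata of 1000 episodes each.
mild = wilson_ci(550, 1000)
severe = wilson_ci(700, 1000)
differs = not intervals_overlap(mild, severe)
```

Treating only non-overlapping intervals as evidence of a difference is conservative: two proportions can differ significantly on a direct test even when their individual intervals overlap slightly.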

We calculated effect sizes for each measure within a dataset, conducting an analysis for all episodes for each measure and then analyses stratified by baseline severity score on the given measure.

Preexisting outputs

The remaining dataset included data on the ORS. It was not possible to conduct the above purpose-designed analyses with this dataset for logistical reasons, so we were provided with outputs from unpublished preexisting analyses. We felt that it was important to include these because the ORS is widely used in clinical practice.

The specific outputs were organised around outcomes on the ORS at six points in time and contained data from the preceding six months or so (periods 1–6). In each case, the key outcome metric was the effect size associated with change on the ORS from the beginning to the end of each episode for active clients (clients who were still receiving treatment at the end of the episode) and inactive clients (clients whose episode had ended).

The effect size was different from the one that we used in the purpose-designed analyses, described above. This effect size was more complex and described the relative effect of treatment compared to no intervention, after correcting for number of sessions, regression to the mean, baseline severity and bias. The creators of the software through which the outputs were generated indicate that a relative effect size of 0.8 can be translated as ‘[consumers] reporting outcomes 80% better than those not receiving treatment’.

Again, we assumed that the majority of sessions represented in this dataset were delivered via Better Access.

Approvals

The University of Melbourne Human Research Ethics Committee approved the study (2021-22452-23859-4).

Results

Purpose-designed analyses

In total, we had data on outcomes from 83,346 episodes in our purpose-designed analyses. Individual episodes could be represented in more than one analysis if multiple measures were used to assess outcomes in the same episode. The number of episodes represented in any given analysis varied from 1862 to 53,216.

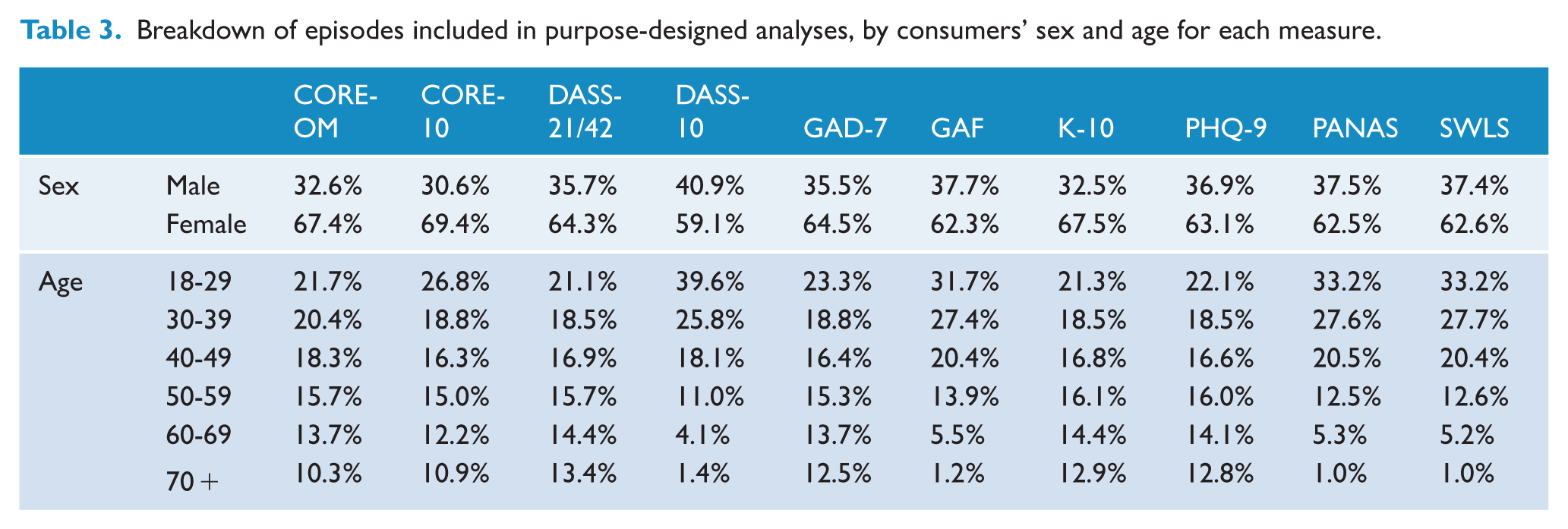

Table 3 profiles the episodes included in the analysis for each measure. Across all measures, around two-thirds of episodes were delivered to females. Between 40% and 65% of episodes were provided to people aged under 40 years.

Table 3. Breakdown of episodes included in purpose-designed analyses, by consumers’ sex and age for each measure.

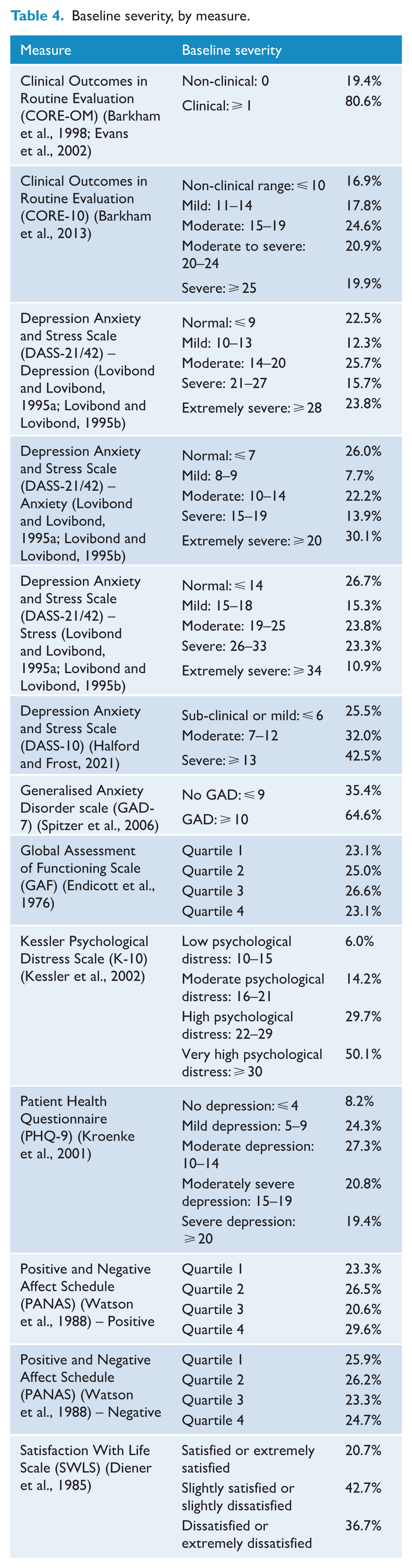

Table 4 shows the distribution of consumers’ baseline severity across episodes for each measure. For all measures, episodes were distributed across baseline severity categories. There were sizable proportions of episodes where the consumer began care with mild, moderate or severe symptoms or levels of functioning. There were also instances where the consumer began the episode in the ‘normal range’. The precise patterns differed depending on the measure, and the number and nature of the cut-offs for the various levels of severity.

Table 4. Baseline severity, by measure.

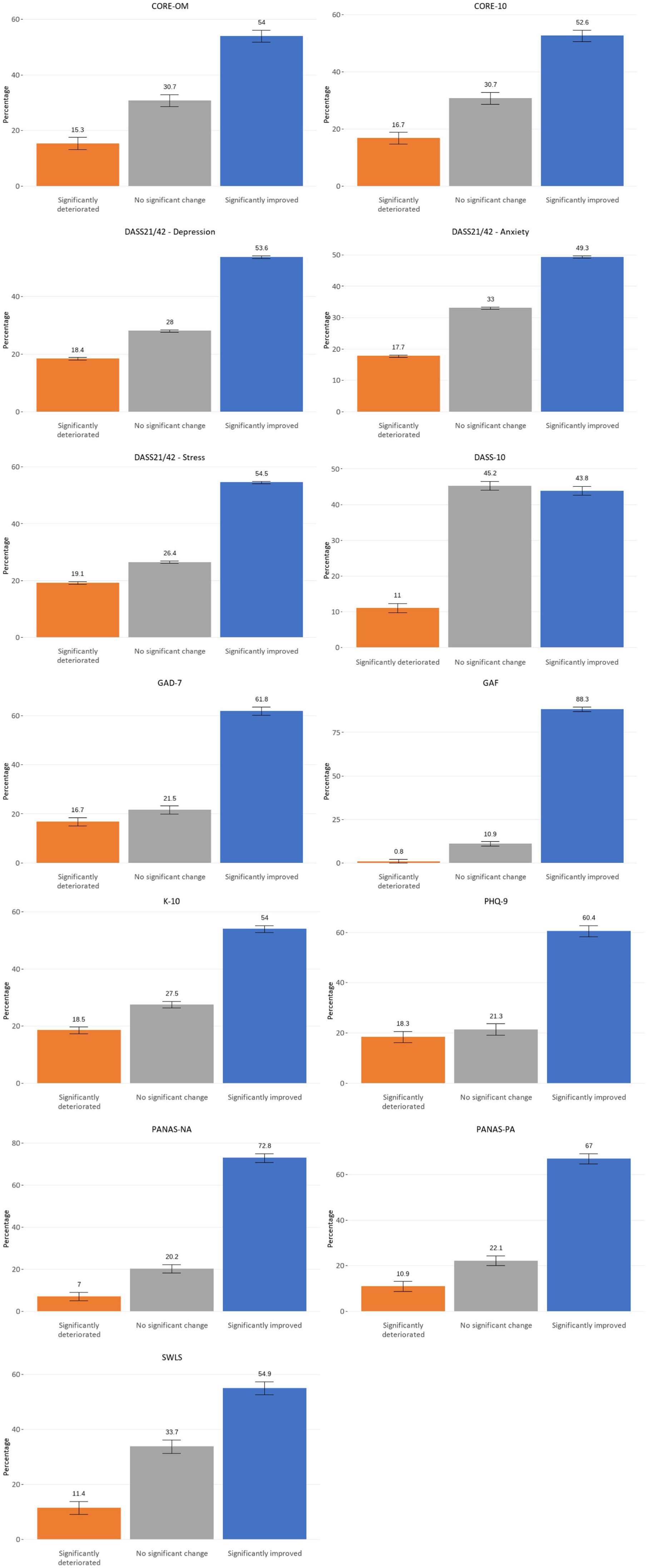

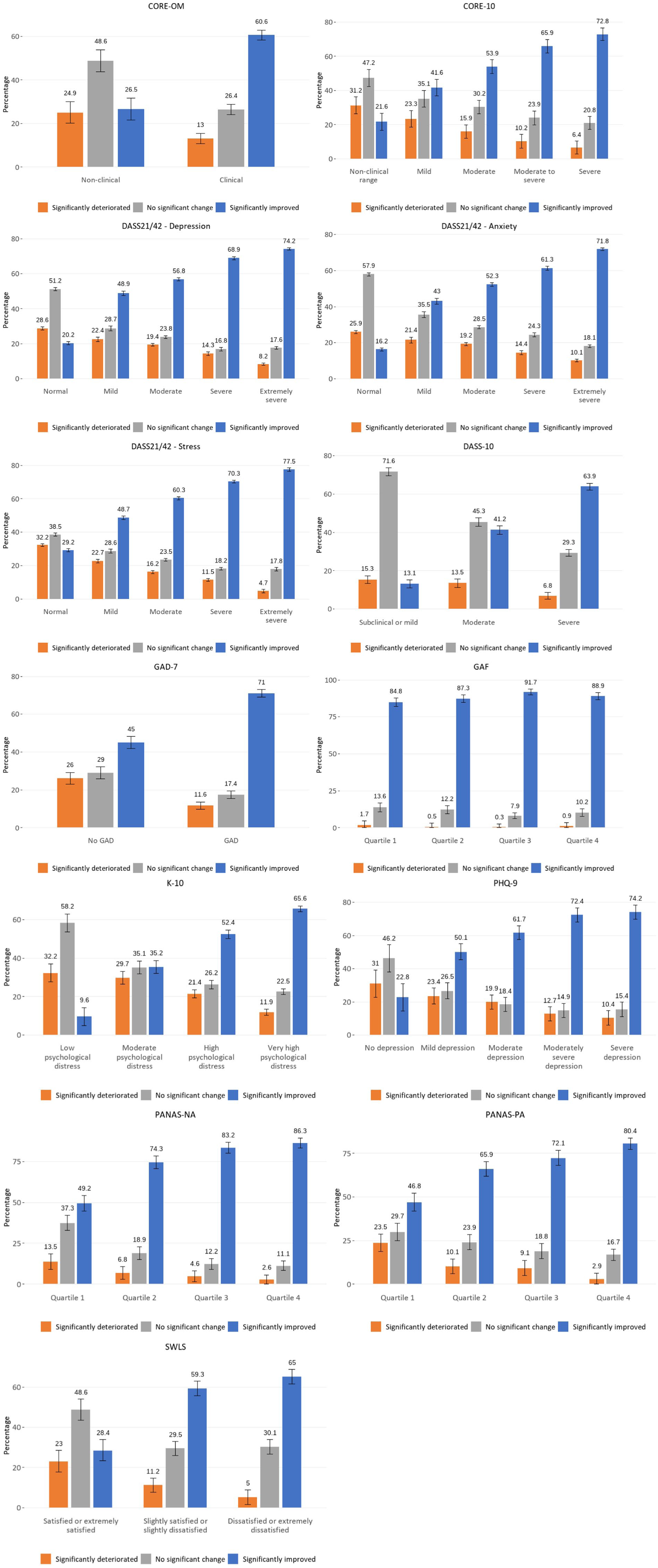

Figures 1 and 2 present the findings in relation to outcomes for each measure. Figure 1 presents data for all episodes, and Figure 2 presents data for episodes stratified by baseline severity score (with the lowest level of severity presented to the left).

Figure 1. Outcomes by measure.

Figure 2. Outcomes by measure and baseline severity.

The picture is largely consistent across measures. In most cases, there was significant improvement in around 50–60% of episodes. There were some outliers, with higher proportions of episodes showing improvement according to the GAF and, to a lesser extent, the PANAS. Lower proportions did so when the DASS-10 was used as the assessment tool. There may be reasons for this that relate to the measures themselves, the constructs they assess (e.g. symptoms versus levels of functioning versus wellbeing), whose perspective they take (i.e. consumers’ versus providers’), and the way they were administered. There may also be differences in the way practices record data for consumers (e.g. how they account for consumers who drop out of care early). In addition, the casemix of consumers seen by different practices will have a bearing on outcomes.

Almost without exception, those with the most severe baseline scores on the given measure were the most likely to show improvement over the course of the episode. For these consumers, across most measures, there was improvement in around 60–75% of episodes. Exceptions were the GAF and the PANAS, where the percentages were higher.

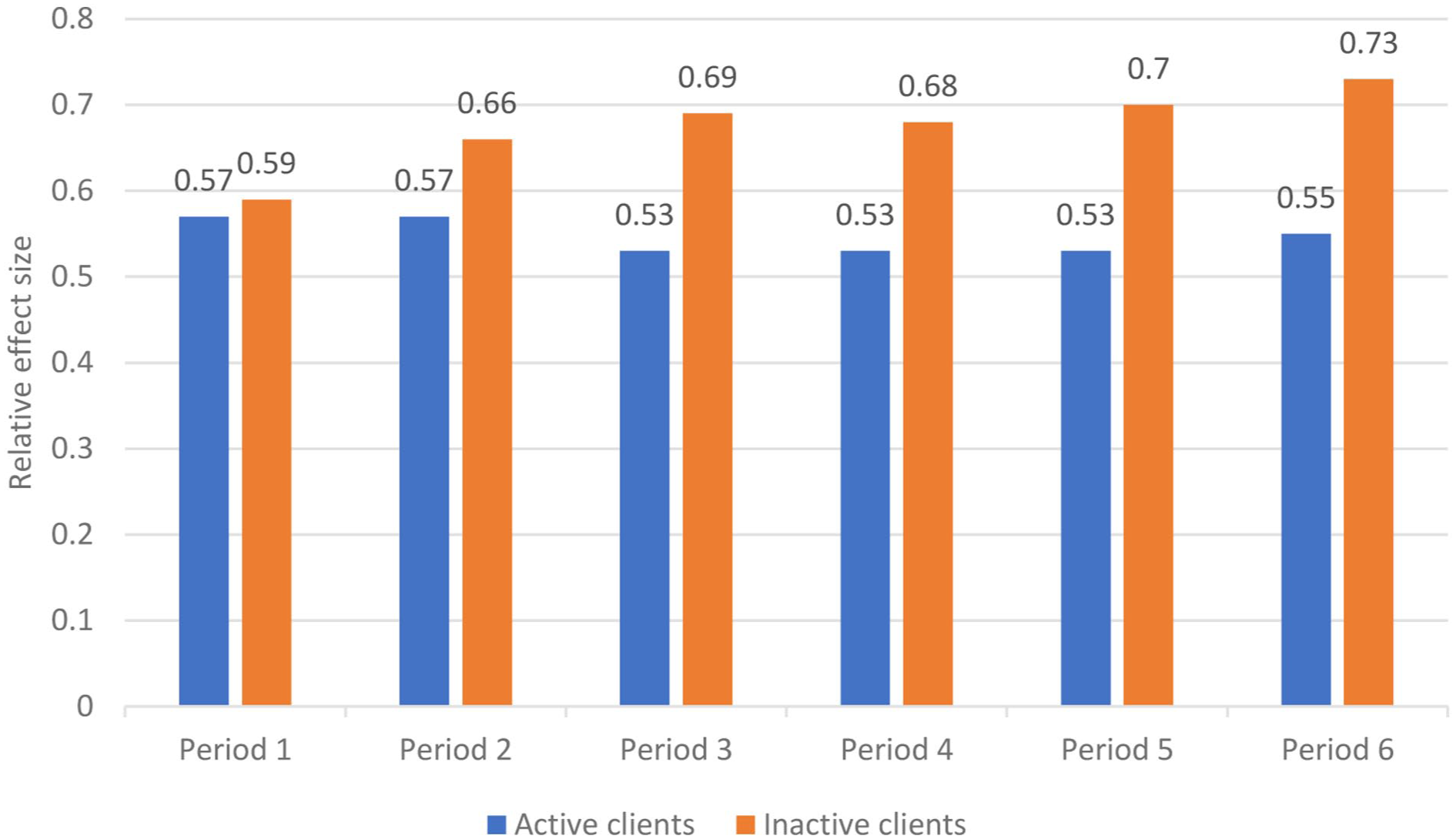

Preexisting outputs

The preexisting outputs represented 2775 episodes. Figure 3 presents the key results, describing outcomes over six periods as measured by the ORS. It shows the relative effect size associated with change on the ORS over the duration of an episode for active and inactive clients. Active clients might not yet have achieved optimal outcomes because they were still in treatment. Conversely, inactive clients might have been expected to have better outcomes because many would have completed a full course of treatment (although some would have dropped out before they did so). The relative effect sizes for active clients sat at around 0.55 across all time points. The relative effect sizes for inactive clients ranged from 0.59 to 0.73.

Figure 3. Outcomes on the ORS for active and inactive clients, by period.

Discussion

Summary and interpretation of findings

Our study tracked consumers’ progress over 86,121 episodes, assessing change via various measures of different aspects of mental health. Data on outcomes of psychological care delivered by allied health professionals in private practice are not available on this scale from any other source. Although there are examples elsewhere in the mental health sector of systems for routinely collecting outcome data – e.g. for episodes delivered through public sector specialised inpatient and community services (Australian Mental Health Outcomes and Classification Network, 2022) or commissioned by Primary Health Networks (Australian Government Department of Health, 2022) – there is no equivalent system for Better Access services. Medicare data that are collected for administrative purposes relate to activity only and not outcomes. In an ideal world, steps would be taken to implement routine outcome measurement as a quality assurance tool for Better Access.

Consumers in this study began their episodes with varying levels of severity on the different measures. Some presented with high levels of baseline severity on a given measure, while others presented with more mild or moderate levels. Overall, this suggests that Better Access is not only reaching consumers with mild to moderate mental health conditions as originally intended (Australian Government Department of Health, 2021), but that it is also providing services for those with more severe mental illness. Some consumers (often 20–25%) presented in the ‘normal range’ for some of the symptom-based measures. In some cases, it may be that the consumer had, for example, low levels of anxiety or depressive symptoms but still warranted a mental health diagnosis (e.g. phobia, adjustment disorder). Relatedly, it may be that the particular measure was not capturing the consumer’s presenting issue (e.g. a general measure of anxiety being used for a person with a specific phobia). However, in other instances it may suggest issues relating to the threshold and appropriateness of referral.

It is positive that, irrespective of the measure used, consumers’ mental health improved during the majority (50–60%) of episodes. It is also positive that this improvement was related to indicators of clinical need (i.e. baseline severity); this aligns with the other studies in our evaluation that considered consumer outcomes (Arya et al., 2026; Harris et al., 2026; Pirkis et al., 2026). However, it is of concern that some consumers experienced deterioration in their mental health in considerable numbers of episodes (typically 10–20%), and that some (typically 20–30%) showed no change, although this is consistent with the international literature (Cuijpers et al., 2018). These consumers were most likely to be people who began their episode with relatively mild symptoms or high levels of functioning or satisfaction with life. This may reflect the fact that those who present with relatively worse symptoms or levels of functioning have greater opportunity for improvement.

It is worth commenting on the GAF, which was the only clinician-rated measure in our suite. The GAF was associated with considerably higher proportions of consumers showing significant improvement over their episodes than other measures. This is consistent with international literature which suggests that, compared with consumers, clinicians tend to overestimate outcomes (Cuijpers et al., 2010).

Strengths and limitations

A major strength of this study is that it examined outcomes for consumers over a very large number of episodes (n = 86,121), using a variety of measures. It used real-world outcome data from private practice settings. It is rare for studies conducted in the primary mental health care context to capture outcome data on such a substantial number of episodes, and to do so in a way that provides a window into effectiveness outside of controlled trials.

Our study had some limitations, however. Episodes did not necessarily equate to people; some consumers may have had more than one episode in a given dataset, meaning that the episodes would not have been independent. We were able to investigate this in one of the datasets, and found that the mean number of episodes per consumer was ⩽1.1, indicating that the vast majority of consumers had only one episode of care.

More than one measure may have been used to assess outcomes across a single episode. We considered how to deal with this but decided that it was justifiable to include all measures for each episode, on the grounds that the different measures assessed different constructs.

We were unable to examine the full range of consumer- and provider-level factors that might have influenced outcomes. For example, we did not have information on consumers’ diagnoses, although we know from elsewhere in our evaluation that the majority of Better Access users have a diagnosis of an anxiety disorder (70%) and/or depression (72%) (Pirkis et al., 2026). Likewise, we did not have information on the type of provider who delivered care during the episode. In addition, in our purpose-designed analyses, we were unable to determine whether the episode of care was complete (i.e. whether the consumer had completed treatment). The preexisting outputs were better suited to this purpose, because we were able to determine whether consumers were still ‘active’ or not. Although some ‘inactive’ consumers may have dropped out of treatment, it is likely that the majority of them would have completed treatment. The findings from this analysis pointed in the expected direction, with outcomes being better for ‘inactive’ consumers than ‘active’ ones.

Although our use of existing data overcame any suggestion of sampling bias, it presented a different issue. Because the data were collected by providers in the course of their clinical practice, the data were not always perfect for the current purpose. In particular, we were only able to be certain that a given session was delivered through Better Access in one dataset. We are, however, confident that the majority of sessions in the other datasets were also delivered via Better Access, for the reasons noted above. The findings from the current study are consistent with those from two other studies in our evaluation where we were able to determine with certainty that participants had used relevant Better Access services. One of these was a survey of consumers who were selected specifically because they had seen a Better Access provider in the past year (Pirkis et al., 2026), and the other was an analysis of data from two national longitudinal studies that had been linked to MBS claims data (Arya et al., 2026). Both indicated that outcomes for Better Access consumers were generally positive.

We went to some lengths to ensure that individual providers and consumers could not be identified, but this meant that we were unable to provide details that we might otherwise have provided. For example, because the datasets varied in size and used unique measures, we were concerned that indicating the number of episodes associated with each measure could identify the dataset (and therefore potentially identify providers and consumers).

Conclusion

Our study provides evidence that Better Access is achieving reductions in symptoms and improvements in functioning and wellbeing for the majority of consumers, particularly those who seek care when they are experiencing relatively severe depression, anxiety and/or psychological distress. A minority of consumers do not have these sorts of positive outcomes, however, and although this is consistent with international literature, further work is required to understand why. Routine measurement of outcomes – particularly those from the consumer’s own perspective – would circumvent the need for one-off studies like ours. It would enable ongoing monitoring of the extent to which Better Access is achieving its goals and would allow improvements to be made to the programme as appropriate.

Footnotes

Acknowledgements

This study was funded by the Australian Government Department of Health, Disability and Ageing, as part of the broader evaluation of Better Access. We would like to thank the two groups that were constituted to advise on the evaluation, the Clinical Advisory Group and the Stakeholder Engagement Group.

Declaration of conflicting interests

The authors declared the following potential conflicts of interest with respect to the research, authorship and/or publication of this article: B.B. is the co-founder and director of NovoPsych; K.F. is the director of Kaye Frankcom Consulting; A.F. is a co-director of Benchmark Psychology; and C.M. was the director of Chris Mackey and Associates.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: The evaluation of Better Access was funded by the Australian Government Department of Health, Disability and Ageing.

Data accessibility statement

The datasets generated and analysed for the current study are not available.