Abstract

The ball mill is usually the largest energy consumer at a mine site and significantly affects operational expenditures. Given a target particle size, Bond Mill Work Index estimates are used to predict a ball mill's throughput. In order to maximize ball mill throughput and optimize energy utilization, it is important to get these estimates right. At the Tropicana Gold Mine, Work Index estimates, derived from X-Ray Fluorescence and Hyperspectral scanning of Grade Control samples, are used to construct spatial GeoMetallurgical models (GeoMet). Inaccuracies in block estimates exist due to limited calibration between grade control derived and laboratory Work Index values. To improve the calibration, an updating algorithm has been tested at the Tropicana Gold Mine. The aim of the study was to demonstrate a new process for updating block estimates using actual mill performance data. Deviations between predicted and actual mill performance are monitored and used to locally improve the Work Index estimates in the GeoMet model. The updating algorithm improves the spatial Work Index estimates, resulting in a real-time reconciliation of already extracted blocks and a recalibration of future scheduled blocks. The case study shows that historic and future production estimates improve on average by about 72% and 26%, respectively.

Introduction

Traditionally, the mining industry has had mixed success in achieving its production targets. Produced tonnages (and grades) nearly always deviate from model-based expectations due to ever-present geological uncertainties. Even when numerous exploration samples are collected, it remains challenging to accurately characterize short-term production units equivalent to a few truckloads (Benndorf 2013). For certain commodities, Grade Control (GC) drilling is performed to further reduce uncertainties (Peattie and Dimitrakopoulos 2013; Dimitrakopoulos and Godoy 2014). GC drilling is expensive and almost exclusively focused on sampling grades.

At the Tropicana Gold Mine, GC samples are collected at one metre intervals during Reverse Circulation drilling. Once collected, the samples are sent to an on-site laboratory for a semi-automated analysis. An autonomous system crushes, splits and pulverizes the sample material prior to X-Ray Fluorescence (XRF) and Hyperspectral (HS) scanning. Conventional fire assaying techniques are used to determine the gold grade in a final prepared pulp. Calibrated relationships are subsequently applied to translate the obtained proxy measurements (XRF and HS) into geometallurgical estimates (e.g. work index, hardness or recovery). At this stage, the geometallurgical estimates describe the properties of one-metre-long cylindrical volumes, virtually located at the original down-hole positions of the GC samples. Geostatistical techniques are used to model metallurgical estimates for contiguous block volumes in the GeoMet model (Catto 2015).

The calibrated relationships, used to translate proxy measurements (XRF & HS) into metallurgical estimates, are largely untested. A larger number of metallurgical tests to improve the calibration is simply economically infeasible. Hence, despite all efforts, the derived geometallurgical block estimates remain (largely) inaccurate.

The Bond Ball Mill Work Index ( m (Lynch et al. 2015). At the time of writing, this variable is of particular interest for the following two reasons. (1) Collecting large data-sets of

In what follows, $\mathbf{Z}_t(:, i)$ is a column vector with $N$ block values (realization $i$ at time $t$). The $N$ rows of the $\mathbf{Z}_t(:, :)$ matrix each refer to a unique block in the GeoMet model. The $I$ columns each refer to a unique realization. $\mathbf{WI}_t$ is a row vector containing $I$ mill feed estimates.

The mill feed estimates $\mathbf{WI}_t$ are substituted in the following formula to compute the energy required in grinding a ton of ore in the mill from a known feed size to a required product size (Lynch et al. 2015):

$$E = \frac{P}{T} = 10\,\mathrm{Wi}\left(\frac{1}{\sqrt{P_{80}}} - \frac{1}{\sqrt{F_{80}}}\right) \qquad (1)$$

Assuming a constant power draw ($P$), Equation (1) predicts the throughput ($T$) of the mill. $F_{80}$ and $P_{80}$ represent the 80% passing sizes of the feed and product, respectively ($F_{80}$ and $P_{80}$ in µm). To maximize mill throughput and optimize energy utilization, it is important to get the Work Index ($\mathrm{Wi}$) estimates right.
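As a rough illustration of how a Work Index estimate translates into a throughput prediction, the sketch below evaluates Bond's equation in Python. The function names and all numerical inputs (a Work Index of 15 kWh/t, an 80% passing feed size of 900 µm, a product size of 100 µm and a power draw of 6000 kW) are hypothetical and are not taken from the Tropicana operation.

```python
import math


def bond_specific_energy(work_index, f80_um, p80_um):
    """Specific grinding energy in kWh/t from Bond's equation.

    work_index is in kWh/t; f80_um and p80_um are the 80% passing
    sizes of feed and product in micrometres.
    """
    return 10.0 * work_index * (1.0 / math.sqrt(p80_um) - 1.0 / math.sqrt(f80_um))


def predicted_throughput(power_kw, work_index, f80_um, p80_um):
    """Throughput in t/h at a constant power draw (kW)."""
    return power_kw / bond_specific_energy(work_index, f80_um, p80_um)


# Hypothetical inputs, for illustration only.
energy = bond_specific_energy(15.0, 900.0, 100.0)              # about 10 kWh/t
throughput = predicted_throughput(6000.0, 15.0, 900.0, 100.0)  # about 600 t/h
print(round(energy, 2), round(throughput, 1))
```

Under these assumed inputs, overestimating the Work Index by 10% (16.5 instead of 15 kWh/t) lowers the predicted throughput to roughly 545 t/h, so a mill run to that prediction would put more energy into each ton than required.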

When the ore is softer than expected (the Work Index is overestimated), the amount of energy transferred into each ton of material is too large and the resulting product will be too fine. This situation does not harm downstream recovery but rather results in an amount of wasted energy (the larger recovery due to a smaller product size does not outweigh the additional milling costs). An increase in throughput would have been warranted.

When the ore is harder than expected (the Work Index is underestimated), not enough energy is transferred into each ton of ore. The resulting product will be too coarse. A larger proportion of the product stream will be separated by a hydrocyclone (based on particle size) and recirculated as mill feed. A lower throughput would have been warranted.

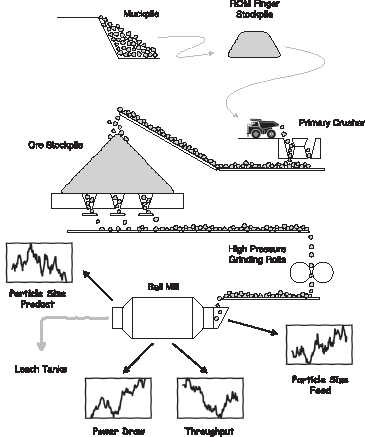

Installed sensors continuously monitor throughput, power draw, feed and product sizes in the ball mill (Figure 1). The sensor responses have the potential to be used in real time to derive an actual Operating Work Index value $\mathrm{wi}_t$ of the material residing in the mill (Equation (1)). The lower case notation refers to an actual measurement (single value), whereas the upper case notation, previously used, indicates an estimate (multiple values in a row vector to characterize uncertainty). The observation $\mathrm{wi}_t$ characterizes all material that went through the ball mill between $t-1$ and $t$.

Simplified representation of the monitoring set-up at the Tropicana Gold Mine. Research question: is it possible to use the ball mill performance measurements to better inform spatial Work Index estimates in the GeoMet model?
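A minimal sketch of how an Operating Work Index could be back-calculated from the monitored quantities by rearranging Bond's equation. The function names, the choice to average each signal over the interval before applying the formula, and all sensor values are assumptions made for illustration.

```python
import math


def operating_work_index(power_kw, throughput_tph, f80_um, p80_um):
    """Back-calculate the Work Index (kWh/t) from mill sensor readings."""
    specific_energy = power_kw / throughput_tph  # kWh/t
    size_term = 10.0 * (1.0 / math.sqrt(p80_um) - 1.0 / math.sqrt(f80_um))
    return specific_energy / size_term


def mean(values):
    """Arithmetic mean of a sequence of numbers."""
    return sum(values) / len(values)


# Hypothetical readings: (power kW, throughput t/h, F80 um, P80 um).
readings = [
    (6000.0, 610.0, 900.0, 100.0),
    (6000.0, 590.0, 900.0, 100.0),
    (6000.0, 600.0, 900.0, 100.0),
]
# Assumption: average each signal over the interval, then apply the formula once.
power, tph, f80, p80 = (mean(column) for column in zip(*readings))
wi_observed = operating_work_index(power, tph, f80, p80)
print(round(wi_observed, 2))
```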

The online computation of $\mathrm{wi}_t$ carries a large potential as demonstrated in the pilot study. Mill observations $\mathrm{wi}_t$ are used to progressively improve the block estimates $\mathbf{Z}_t$ in the GeoMet model. Estimates of both mined and scheduled blocks are adjusted simultaneously. This backward integration has only been made possible through material tracking initiatives. Data from monitoring systems in the mining fleet and processing plant are used to link mill observations with their constituent GeoMet blocks. A developed algorithm subsequently updates the constituent GeoMet blocks and their surroundings based on the noisy time-averaged mill observation. The strength of the algorithm lies in its capability to differentiate between the more and less accurately estimated local areas (in this context, a local area refers to a collection of adjacent mined blocks and its immediate surroundings). Updates aim to more aggressively correct the less accurate local areas.

In the future, the updating algorithm can be expanded to integrate additional performance data into the GeoMet model (e.g. recovery and reagent consumption). The methodology eventually could lead to a more optimal and automated selection of ores for blending whilst providing advanced information for process control. For example, the throughput of the comminution circuit can be reduced/increased upfront when harder/softer ore is expected to ensure the most optimal energy utilization, while achieving a grind required for maximizing gold recovery in the leach circuit.

This paper demonstrates an algorithm for continuous reconciliation of mill derived observations ($\mathrm{wi}_t$) against block estimates in the GeoMet model ($\mathbf{Z}_t$). First, the updating algorithm, as presented in Wambeke and Benndorf (2017), is briefly reviewed. Then, background information is provided regarding the geology at Tropicana, the operation and the available data. Thereafter, a forward simulation model is constructed to convert (updated) block estimates ($\mathbf{Z}_t$) into mill feed estimates ($\mathbf{WI}_t$). The forward simulation step is essential in linking a specific observation ($\mathrm{wi}_t$) back to its constituent GeoMet blocks, while providing a flexible approach to overcome a number of mathematical challenges and material tracking limitations (implementation details, to be discussed later on). Using the forward simulator, the spatial GeoMet models are updated every 4 h over the course of one week. It is shown that the updates not only result in a real-time reconciliation of extracted blocks but also significantly improve estimates of scheduled blocks (the surroundings). The paper concludes with an extensive discussion on modelling assumptions and potential improvements.

At any point in time, when a new mill observation $\mathrm{wi}_t$ becomes available, the updating algorithm needs to solve the following inverse problem (conceptual formulation):

$$\mathbf{Z}_t(:, :) = \mathcal{A}_t^{-1}\left(\mathrm{wi}_t\right) \qquad (2)$$

where $\mathcal{A}_t$ is a forward observation model (linear or non-linear) that maps block estimates $\mathbf{Z}_{t-1}(:, :)$ onto mill feed estimates $\mathbf{WI}_t$. In other words, the algorithm is tasked with inferring attributes of individual blocks based on time-averaged mill observations.

Please note that this conceptual formulation ignores the subtle difference between the forward observation model $\mathcal{A}_t$ and the required inverse $\mathcal{A}_t^{-1}$. The former is used to characterize the mill feed during the interval $[t-1, t]$. Consequently, only the estimates of blocks which are fed to the mill during the corresponding interval are required as input (i.e. only a collection of already mined blocks as opposed to all the blocks in the GeoMet model). The inverse $\mathcal{A}_t^{-1}$ accepts a mill observation $\mathrm{wi}_t$ and computes various block estimates. This computation does not necessarily have to be limited to already mined blocks, i.e. the ones constituting the mill feed during interval $[t-1, t]$. Due to the spatial correlation, blocks in the direct surroundings of milled blocks (already mined) can be updated as well.

The algorithm essentially solves the previous inverse problem using a sequential estimator within a Monte Carlo framework. At time zero, an initial set of realizations $\mathbf{Z}_0(:, i)$ is generated using techniques of conditional simulation. All exploration information is inherently accounted for within these initial realizations: (a) sample values are approximated at their respective locations (no exact reproduction in order to account for measurement error), (b) the degree and scale of variability in the realizations follows a pattern described by a covariance model derived from the GC data.

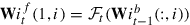

Once collected, a mill observation $\mathrm{wi}_t$ is assimilated into the GeoMet model:

$$\mathbf{Z}_t(:, i) = \mathbf{Z}_{t-1}(:, i) + \mathbf{K}_t\left(\mathrm{wi}_t - \mathbf{WI}_t(i)\right), \qquad \mathbf{WI}_t(i) = \mathcal{A}_t\left(\mathbf{Z}_{t-1}(:, i)\right) \qquad (3)$$

Each realization $\mathbf{Z}_t(:, i)$ is updated based on a weighted difference between a mill observation $\mathrm{wi}_t$ and a mill feed estimate $\mathbf{WI}_t(i)$. A mill feed estimate results from running a forward simulator $\mathcal{A}_t$ based on a most recent GeoMet realization $\mathbf{Z}_{t-1}(:, i)$ (to be discussed later on). The latest solution set $\mathbf{Z}_t(:, i)$ ($i = 1, \ldots, I$) accounts for all previously collected exploration and production data.

Equation (3) essentially describes how blocks in the GeoMet model are adjusted to reduce the difference between a mill feed observation and an estimate. The weights in the $\mathbf{K}_t$ vector will ‘redistribute’ the observed difference over the blocks in the observed mill feed ($\mathbf{K}_t$ can also be a matrix if multiple mill observations are assimilated simultaneously). That is, the $\mathbf{K}_t$ vector will contain some non-zero entries at positions that match those of already mined blocks (Type I weights at rows corresponding to mined blocks). Additional non-zero entries occur in rows representing blocks located in close proximity to the recently milled blocks (Type II weights). This second group of weights ensures that the improved characterization of individual milled blocks is extended to the surrounding local areas (the ‘:’ operator in $\mathbf{Z}_t(:, i)$ points to all $N$ blocks in the GeoMet model). All remaining entries of the $\mathbf{K}_t$ vector are zeros (blocks outside of the immediate vicinity of mined blocks).

In summary, both already mined as well as surrounding blocks do get assigned an identical recorded deviation ($\mathrm{wi}_t - \mathbf{WI}_t(i)$, Equation (3)). Individual block corrections do however differ across and within both block groups ($\mathbf{K}_t(n)\left(\mathrm{wi}_t - \mathbf{WI}_t(i)\right)$, where $\mathbf{K}_t(n)$ represents an element of the $\mathbf{K}_t$ vector linked to block $n$). Generally speaking, mined blocks receive larger weights compared with surrounding blocks. Consequently, their applied correction is more significant.
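The update in Equation (3) can be sketched for a single realization as follows. The block values, weights and observation are hypothetical, and the weight vector is simply given here rather than computed.

```python
def update_realization(z_prev, weights, observation, feed_estimate):
    """Shift every block by its weight times the observed deviation."""
    deviation = observation - feed_estimate
    return [z + k * deviation for z, k in zip(z_prev, weights)]


# Hypothetical example with four blocks:
# blocks 0 and 1 were milled (Type I weights), block 2 is a neighbour
# (Type II weight) and block 3 lies outside the neighbourhood (zero weight).
z_prev = [14.0, 16.0, 15.0, 13.0]
weights = [0.6, 0.4, 0.2, 0.0]
z_new = update_realization(z_prev, weights, observation=16.0, feed_estimate=15.0)
print([round(z, 2) for z in z_new])
```

All blocks see the same deviation (here +1.0), but the correction applied to each block scales with its weight.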

The simulation-based approach (Monte Carlo framework with  (and calculating its inverse, Equation (2)). Due to the complexity of the material handling process, it would indeed be very challenging to describe the link between individual blocks and a blended measurement as a single equation. Instead, for each unique operation, a case specific forward simulator

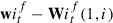

(and calculating its inverse, Equation (2)). Due to the complexity of the material handling process, it would indeed be very challenging to describe the link between individual blocks and a blended measurement as a single equation. Instead, for each unique operation, a case specific forward simulator  is built and ran parallel to the more generally applicable updating code (Figure 2). The simulator is but a virtual model describing which blocks are extracted, processed and measured between

is built and ran parallel to the more generally applicable updating code (Figure 2). The simulator is but a virtual model describing which blocks are extracted, processed and measured between  and

and

Closed-loop reconciliation framework to integrate ball mill performance measurements into the GeoMet model.

The forward simulator is thus used to propagate GeoMet realizations $\mathbf{Z}_{t-1}(:, i)$ into mill feed estimates $\mathbf{WI}_t(i)$. During a forward step, the simulator $\mathcal{A}_t$ only includes the blocks which are mined and milled during the corresponding time interval ($\mathbf{Z}_{t-1}(:, i)$ could be replaced by $\mathbf{Z}_{t-1}(\mathcal{S}_t, i)$ where $\mathcal{S}_t$ is a set representing the constituent GeoMet blocks in the mill feed).
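As a hedged illustration of what a forward step might look like, the sketch below reduces the simulator to a tonnage-weighted average over the blocks in the mill feed. This linear blending rule, the function name and all numbers are assumptions; the actual simulator is case specific and tracks material through the mine and the comminution circuit.

```python
def mill_feed_estimate(realization, feed_tonnes):
    """Tonnage-weighted mean of the block values present in the mill feed.

    realization: block values of one GeoMet realization (indexed by block).
    feed_tonnes: mapping of block index -> tonnes milled in the interval.
    """
    total = sum(feed_tonnes.values())
    return sum(realization[n] * t for n, t in feed_tonnes.items()) / total


# Hypothetical ensemble: three realizations of four blocks.
realizations = [
    [14.0, 16.0, 15.0, 13.0],
    [15.0, 17.0, 14.0, 12.0],
    [13.0, 15.0, 16.0, 14.0],
]
feed_tonnes = {0: 300.0, 1: 100.0}  # only blocks 0 and 1 were milled
feed_estimates = [mill_feed_estimate(z, feed_tonnes) for z in realizations]
print(feed_estimates)
```

Only the blocks listed in `feed_tonnes` enter the computation, which mirrors the restriction of the forward step to the constituent blocks of the mill feed.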

The sets of GeoMet and mill feed realizations, $\mathbf{Z}_{t-1}(:, :)$ and $\mathbf{WI}_t$, respectively, contain enough information to link observed deviations back to their constituent GeoMet blocks. Both realization sets also hold the data necessary to improve the characterization of the immediate surroundings. Updating the constituent GeoMet blocks and their surroundings is governed through the calculation of the Kriging weights $\mathbf{K}_t$ (Equation (3)):

$$\mathbf{K}_t = \mathbf{C}_{t-1,zw}\, C_{t-1,ww}^{-1} \qquad (4)$$

where $\mathbf{C}_{t-1,zw}$ (a column vector of size $N$) and $C_{t-1,ww}$ (a single value) hold the conditional forecast and observation error covariances. The covariances are computed empirically from the available realization sets. Each entry $\mathbf{C}_{t-1,zw}(n)$ of the covariance vector describes the correlation between the observation and the $n$th block. $C_{t-1,ww}$ on the other hand describes the accuracy of the mill observation.

Kriging weights tend to be larger when the observation is accurate (i.e. the observation error covariance is low) and strongly correlated to particular blocks (i.e. the forecast error covariance is large). Type I Kriging weights (ref. previous discussion) determine how the values of milled blocks have to be adjusted in order to shrink the detected deviations (Equation (3)). Significant type II weights, on the other hand, cause a modification of the neighbouring blocks. Neighbouring blocks only get updated when they are strongly correlated with the observations. Such a strong correlation occurs when a neighbouring block is spatially correlated with a recently milled block. In other words, an adjustment of the milled block warrants an update of the neighbouring blocks as well (though to a lesser extent). The type II updates are based on the notion that two closely spaced blocks are likely to have similar properties.
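A minimal sketch of the empirical computation of the weights, assuming they are obtained by dividing the covariance between each block and the mill feed estimates by the variance of the mill feed estimates. The optional `obs_error_var` argument is a hypothetical addition used here to mimic a less accurate observation; it is not taken from the paper, and the ensemble values are invented.

```python
def kriging_weights(block_realizations, feed_estimates, obs_error_var=0.0):
    """Empirical weights from an ensemble.

    block_realizations: one row per block, one column per realization.
    feed_estimates: one mill feed estimate per realization.
    """
    n_real = len(feed_estimates)
    mean_w = sum(feed_estimates) / n_real
    var_w = sum((w - mean_w) ** 2 for w in feed_estimates) / (n_real - 1)
    weights = []
    for row in block_realizations:
        mean_z = sum(row) / n_real
        cov_zw = sum((z - mean_z) * (w - mean_w)
                     for z, w in zip(row, feed_estimates)) / (n_real - 1)
        weights.append(cov_zw / (var_w + obs_error_var))
    return weights


# Hypothetical ensemble of four realizations.
blocks = [
    [14.0, 15.0, 16.0, 17.0],  # milled block: moves with the feed estimate
    [15.0, 15.5, 15.5, 16.0],  # neighbour: weaker co-variation
    [15.0, 15.0, 15.0, 15.0],  # distant block: no co-variation
]
feed = [14.0, 15.0, 16.0, 17.0]
print([round(k, 3) for k in kriging_weights(blocks, feed)])
```

In this toy ensemble the milled block receives the largest weight, the neighbour a smaller one and the distant block none; increasing `obs_error_var` shrinks all weights.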

Several technical and practical challenges are solved by computing covariances empirically. (1) As time progresses, conditional forecast error covariances become non-stationary. But for the empirical computation of the covariances, a large non-stationary field covariance matrix would have to be propagated from one update cycle to the next (number of entries equal to the square of the number of grid nodes). Computing covariances empirically reduces computation costs and memory requirements (Wambeke and Benndorf 2017). (2) Differences in scale of support are automatically dealt with. There is no need to perform a support correction on a non-stationary covariance model. (3) Empirical covariances are convenient to handle measurements on blended material streams originating from multiple extraction points. Based on the magnitude of the forecast error covariances, it is possible to pinpoint multiple blocks in the GeoMet model that are responsible for a single detected deviation. Furthermore, the forecast error covariances are of paramount importance in updating neighbouring correlated blocks.

The interested reader is referred to Wambeke and Benndorf (2017) for a detailed literature review and an elaborate presentation of the algorithm. That paper discusses several other aspects of the algorithm which were omitted here.

This section briefly presents background information about the Tropicana Gold Mine. Aspects related to geology, mining and processing are discussed and relevant data sources are highlighted.

Geology

The Tropicana Gold Mine is located in Western Australia, approximately 330 km East–North–East of Kalgoorlie. The mine is situated near the edge of the Great Victoria Desert along an ancient collision zone between the Yilgarn Craton and the Albany-Fraser Orogen. The regional geology is dominated by granitoid rocks, felsic to mafic paragneiss and orthogneiss, and felsic to ultramafic intrusive and volcano-sedimentary rocks. The area is characterized by extreme weathering that resulted in the formation of a 100 m thick regolith. Mineralization is found within Archean-aged high grade quartzo-feldspathic gneisses and is associated with late biotite and pyrite alteration. The mineralization occurs as one or two laterally extensive planar lenses with a moderate dip. Post mineralization faulting resulted in four distinct structural domains offsetting the initial ore body.

Mining

The ore body is mined from four contiguous pits extending six kilometres in strike length (from North to South: Boston Shaker, Tropicana, Havana and Havana South). The mine is operated as a typical drill and blast, truck and shovel open pit mine.

Prior to extraction, GC drilling (Reverse Circulation) is completed on relatively dense 10 m East  m North drill patterns to define the ore zones to be mined. The GC holes are drilled to intersect multiple benches at once and are drilled weeks ahead of extraction. The resulting 1 m samples are sent to an on-site lab for analysis. Conventional fire assaying techniques are applied to determine the gold grade. During the sample preparation stage, samples are processed in an automated sample preparation system, which crushes, splits and pulverizes the material prior to XRF and HS scanning. The resulting multivariate interval data are translated into geometallurgical properties using previously calibrated relationships. The inferred geometallurgical properties are subsequently modelled to populate the blocks of the GeoMet model (used for ore design and short-term planning). Once populated, the mine geologist delineates ore polygons to group adjacent spatial blocks into semi-homogeneous digging volumes (known as dig blocks).

Subsequently during blasting operations, a 10 m high bench is blasted. Transmitters are installed in blast holes and their locations are logged prior to and after the blast. Three-dimensional displacement vectors are computed and applied to the in situ ore polygons to correct for blast movements. The fragmented material is then excavated in three passes (based on design flitches with a height of 3.33 m). Ore is hauled by truck directly to the primary crusher or to one of the reclaim stockpiles situated at the ROM pad (Run Of Mine). Direct crusher feed (material directly coming from the mine) is supplemented with ore reclaimed from ROM stockpiles. The fleet management system records each individual truck cycle in a central database. The recorded spatial coordinates are used for material tracking purposes, linking mill observations to their constituent blocks in the GeoMet model.

Comminution

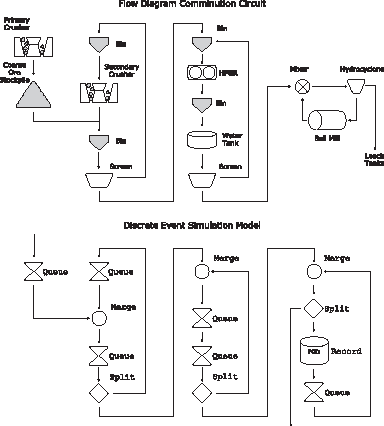

The comminution circuit comprises a primary crusher, secondary crusher, High Pressure Grinding Rolls (HPGR) and a ball mill. The HPGR screen has a top size of 2.75 mm, resulting in a typical ball mill feed of 500 to  µm ( ). The upper part of Figure 4 displays a simplified version of the plant flowsheet (to be discussed later in detail). Conveyor belt speeds, throughput values, recirculating loads, flow velocities and mill performance are continuously monitored. The related sensor readings are written to a database at five-minute intervals.

Forward simulator

A forward simulator is built to generate mill feed estimates $\mathbf{WI}_t$. The realization set of mill feed estimates is used to compute the empirical covariances, which are essential in linking an observation $\mathrm{wi}_t$ to its constituent GeoMet blocks. The forward simulator is subdivided into two connected modules. The first module describes the material handling process in the mine. The second module tracks material flow in the comminution circuit.

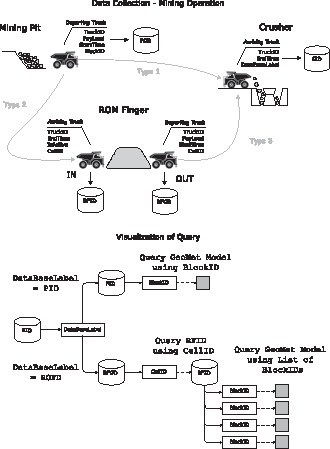

From pit to crusher

The material handling process in the mine can be replicated in great detail using truck cycle data stored in a fleet management database (Figure 3). Four types of truck cycles are defined: (1) ore is hauled from the pit and dumped directly into the primary crusher (direct tip); (2) ore is hauled from the pit and stockpiled on one of the ROM stockpiles; (3) ore is reclaimed from ROM stockpiles and dumped into the primary crusher; (4) material is hauled from the pit to a waste dump (not shown or further discussed).
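The four cycle types can be captured in a small data structure. The sketch below is one possible encoding; the location labels (`pit`, `rom`, `crusher`, `waste`) and the function name are hypothetical and do not reflect the schema of the fleet management database.

```python
from enum import Enum


class CycleType(Enum):
    DIRECT_TIP = 1      # pit -> primary crusher
    PIT_TO_ROM = 2      # pit -> ROM stockpile
    ROM_TO_CRUSHER = 3  # ROM stockpile -> primary crusher
    WASTE = 4           # pit -> waste dump


def classify_cycle(origin, destination):
    """Map a (hypothetical) origin/destination pair to a cycle type."""
    lookup = {
        ("pit", "crusher"): CycleType.DIRECT_TIP,
        ("pit", "rom"): CycleType.PIT_TO_ROM,
        ("rom", "crusher"): CycleType.ROM_TO_CRUSHER,
        ("pit", "waste"): CycleType.WASTE,
    }
    return lookup[(origin, destination)]


print(classify_cycle("rom", "crusher").name)
```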

Schematic representation of the material handling process in the mine.

Schematic representation of the material flow in the comminution circuit.

A type 1/2 mine cycle starts the moment a truck is being loaded in the pit (Figure 3). A

A type 2 cycle ends the moment a truckload is stockpiled on one of the six ROM stockpiles (Figure 3). An  subdomain within a larger stockpile (and is computed from the GPS location of tipping truck). All ‘active’


A type 3 mine cycle is initiated when reclaimed ROM finger material is loaded into a truck. A

Querying the databases allows for a live characterization of the crusher feed and stockpile domains. The PayLoad of the trucks arriving at the crusher is obtained from the database. A set of work index values (multiple realizations to characterize uncertainty) is obtained in one of two possible ways, depending on the assigned label.

If the label points to the pit, the work index values are taken from the GeoMet model and assigned to a specific truck.

If the label points to a ROM stockpile subdomain, the values are derived in several steps: (1) the BlockIDs of the truckloads stockpiled in that subdomain are queried; (2) for each BlockID, a set of work index values is extracted from the GeoMet model (one-to-one relationship); (3) weighting factors are computed and used to obtain a single set of work index values characterizing the material within the stockpile subdomain; (4) this single set of values can be connected to a truck departing from the ROM stockpiles and, by extension, to a truck arriving at the crusher.

The module discussed thus far allows for the characterization of individual truckloads arriving at the crusher, independent of whether the material originates from one of the ROM stockpiles or directly from the pit.

The second module of the forward simulator is designed to describe the material flow in the comminution circuit. Four sequential circuits have been identified and modelled: (a) primary crushing, (b) secondary crushing, (c) High Pressure Grinding Rolls (HPGR) and (d) ball mill. The material flow through each circuit is modelled using the following components (Figure 4): a ‘merge’ unit (circle) combines material streams, a ‘queue’ (hourglass) describes delays and a ‘split’ unit (diamond) subdivides a material stream into two substreams. The behaviour of each virtual unit is driven by information derived from the central processing database (five-minute interval readings).
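The three modelling components can be sketched as follows. This is a minimal illustration under stated assumptions, not the simulator used in the study: the class and function names are hypothetical, time is counted in discrete steps (for example five-minute readings), the queue delay is fixed rather than derived from plant data, and the split fraction is passed in by hand.

```python
import random
from collections import deque


class Queue:
    """First-in, first-out delay: a group leaves `delay` steps after entering."""

    def __init__(self, delay):
        self.delay = delay
        self._items = deque()

    def push(self, group, now):
        # Store the earliest step at which the group may leave the queue.
        self._items.append((now + self.delay, group))

    def pop_ready(self, now):
        ready = []
        while self._items and self._items[0][0] <= now:
            ready.append(self._items.popleft()[1])
        return ready


def merge(*streams):
    """Combine several material streams into one list of groups."""
    return [group for stream in streams for group in stream]


def split(groups, fraction_to_first, rng):
    """Randomly send each group to one of two substreams."""
    first, second = [], []
    for group in groups:
        (first if rng.random() < fraction_to_first else second).append(group)
    return first, second


# Hypothetical usage: a group waits three steps, is blended with recirculated
# material and is then screened into two substreams.
rng = random.Random(42)
cos = Queue(delay=3)
cos.push("group-0", now=0)
drawn = cos.pop_ready(now=3)
undersize, oversize = split(merge(drawn, ["recirculated"]), 0.7, rng)
```

Chaining such units in the order of the flowsheet gives a simple discrete-time model in which every virtual group keeps a reference to the truck it came from.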

The moment a truck tips its load into the crusher, its virtual representation is subdivided into a large number of smaller

When material enters the comminution plant, it passes through a gyratory crusher and ends up on a coarse ore stockpile (COS). The behaviour of this circuit is modelled as a queue (first in, first out). The delay time of the queue corresponds to the residence time of a

Drawn stockpile material is subsequently blended with the product of the secondary crusher (merge unit). Once blended, material resides in a bin (queue) before being dropped onto a screen (split unit). Oversized material is circulated back to the bin of the secondary crusher (queue), while the undersize is directed towards the HPGR circuit. The split unit randomly selects virtual

Arriving at the HPGR circuit, material is blended with screen oversize and stored in a bin, before being dropped into the HPGR (merge unit and queue). The HPGR grinds a loose collection of material into a conglomerated cake product. The cake product resides in a bin awaiting wet screening (queue). Prior to screening (split), the HPGR cake is deagglomerated using water jets and vibration. The screen oversize is circulated back to the bin installed above the HPGR, while the undersize enters the milling circuit. Diverter gates to extract tramp metal and to construct emergency stockpiles are not accounted for (future work).

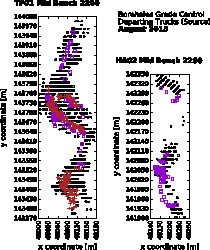

Truck pit source locations from Tropicana and Havana pit during the entire month of August (2015). The displayed trucks are either dispatched to the crusher (red crosses – direct tip) or to ROM stockpile 4 (purple squares). The black dots display GC holes intersecting the bench.

Material arriving from the HPGR circuit is mixed with the ball mill product and inserted into a hydrocyclone (a merge and split unit). The cyclone underflow is circulated back into the ball mill (queue), its overflow is transported to the carbon-in-leach tanks. The moment a virtual

The comminution model is not nearly accurate enough to track individual truckloads as they move through the plant. Consequently, the mill feed is characterized over 4 h intervals to filter out possible inaccuracies. A mill feed estimate is obtained as follows: (1) query the groups and their weights; (2) compute weighting factors based on the group weights; (3) connect each group to a truck which already arrived at the crusher at an earlier time; (4) assign the values of that truck to the group; (5) compute a weighted averaged set characterizing the mill feed within a 4 h interval.

In comparison, an actual observation is obtained by processing 4 h long time series of measured mill performance (previously discussed).
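The steps leading to a mill feed estimate can be sketched as a weighted average that is computed realization by realization. The function name `mill_feed_estimate`, the tuple layout of a group and the numbers below are assumptions made for illustration only.

```python
def mill_feed_estimate(groups, truck_values):
    """Weighted-average realization set for one 4 h interval.

    groups:       list of (truck_id, group_weight) tuples for the interval.
    truck_values: dict mapping truck_id to its list of realization values.
    """
    total = sum(weight for _, weight in groups)
    if total <= 0:
        raise ValueError("total group weight must be positive")
    n_real = len(next(iter(truck_values.values())))
    estimate = [0.0] * n_real
    for truck_id, weight in groups:
        values = truck_values[truck_id]  # values inherited from the source truck
        for r in range(n_real):
            # Blend per realization so that the uncertainty is carried along.
            estimate[r] += (weight / total) * values[r]
    return estimate


# Hypothetical interval: two groups traced back to two different trucks,
# each truck characterized by two realizations.
feed_set = mill_feed_estimate(
    groups=[("T1", 30.0), ("T2", 10.0)],
    truck_values={"T1": [16.0, 18.0], "T2": [12.0, 14.0]},
)
```

Keeping the realizations separate, instead of averaging them first, is what later allows covariances between block values and mill feed values to be computed.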

In this pilot study, about 120 h of mill performance data are used to conduct 30 updates of the GeoMet model (30 intervals of 4 h each, from 21 August 2015, 06:00 to 26 August 2015, 06:00). The historic data-set realistically mimics a live application. More importantly, future mill observations are available ahead of time and can be compared against their corresponding estimates (in a live application, these future observations would not yet exist). The future observations are only to be used for validation purposes and should not be fed into the updating algorithm.
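The update schedule follows directly from the stated start and end times. The short sketch below enumerates the 4 h intervals; treating them as half-open intervals is an assumption made here for illustration.

```python
from datetime import datetime, timedelta

start = datetime(2015, 8, 21, 6, 0)
end = datetime(2015, 8, 26, 6, 0)
step = timedelta(hours=4)

# Half-open intervals [t, t + 4 h) covering the pilot period.
intervals = []
t = start
while t + step <= end:
    intervals.append((t, t + step))
    t += step

hours = (end - start).total_seconds() / 3600
```

With these inputs, `hours` equals 120 and `intervals` holds 30 entries, one per update.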

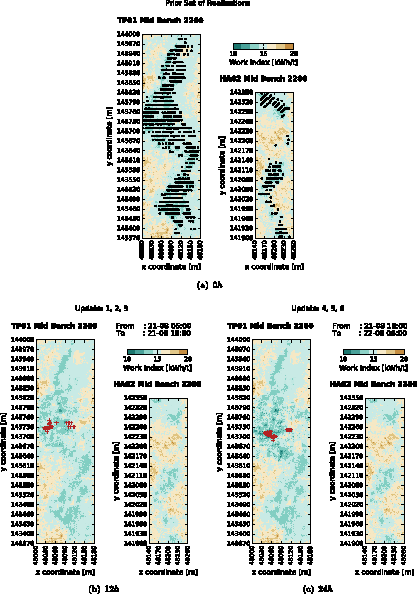

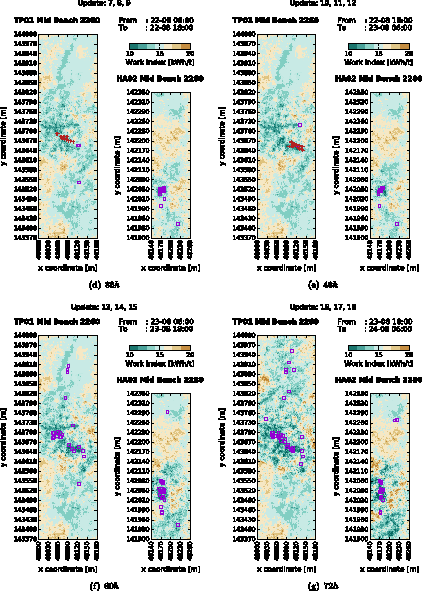

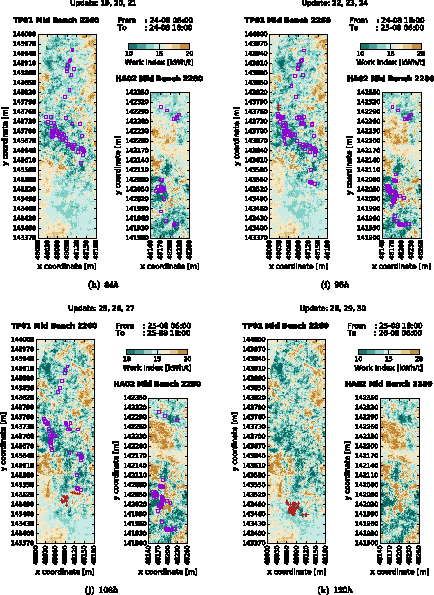

Mean field across the middle flitch of two benches in the Tropicana and Havana pit. Blocks are colour coded according to their best estimate at the indicated time. The source locations of the material milled during the indicated time interval are displayed using either red crosses (direct tip) or purple squares (material has resided on a stockpile finger).

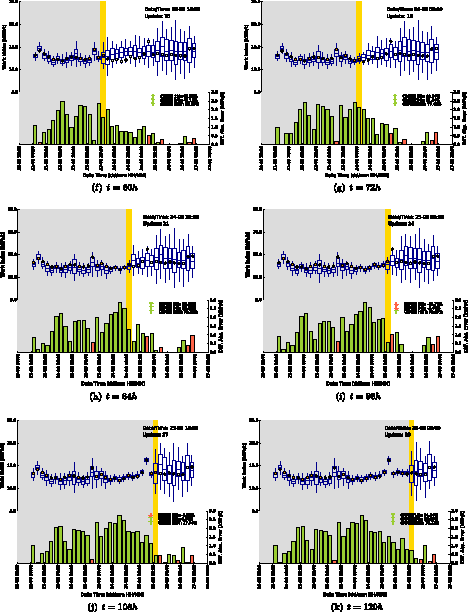

Predicted (blue) and actual time-averaged measurements (orange, red or gray dots). The lower axis displays difference in absolute error (DAE) relative to time 0. Changes in

The material fed to the mill in the pilot study period was sourced from ROM stockpiles and direct crusher feed. Due to intermediate stockpiling, the mill feed represents mining activity that occurred over a one-month period. Hence, a month of truck cycle data needs to be analyzed. At 21:20 on 02 August, the first truckload is tipped on a previously zeroed ROM stockpile. During the subsequent three weeks, the building of this finger is carefully tracked. As a result, ROM stockpile material, reclaimed to the crusher during week four, can be characterized. In summary, a month of mining data is required to connect a week of plant performance measurements back to their source locations.

The material milled between 21 August and 26 August mainly originates from two distinct benches: bench 2260 in the Tropicana pit and bench 2280 in the Havana pit (Figure 5). Prior to extraction, two GeoMet models are constructed describing the spatial variation of the work index values in both benches. Each model contains 100 GeoMet realizations on a block support of  . The field realizations are generated using a sequential Gaussian simulation algorithm. All realizations are conditioned on work index estimates, derived from XRF and HS proxies collected on GC samples (Figure 5). Figure 6(a) shows a horizontal section across the middle flitch of both benches. The figure displays the mean field computed over the 100 prior realizations.

Figures 6(a)–(k) illustrate how the mean field changes through time when assimilating mill observations. A total of 30 updates are conducted. The time between updates amounts to 4 h. Only results obtained at the end of each shift (i.e. every 12 h) are shown. The markers on each figure refer to the source locations of the material milled during the indicated time interval (last 12 h). Figures 6(a)–(k) show that the updates extend beyond the source locations. The work index estimates of blocks in the immediate vicinity are adjusted as well. Some of these surrounding blocks still have to be milled. Improving the estimates of these surrounding blocks directly leads to an improved characterization of the future mill feed (see Mill feed estimates).

As the realizations are updated, the level of detail in the resulting mean field increases. Overall, the algorithm seems to correct for the globally occurring overestimation bias (i.e. in most blocks the work index is lowered). However, at specific locations, the algorithm learns that the ore is harder than initially expected (work index values are increased).

Mill feed estimates

Updated GeoMet models  are continuously propagated through different forward simulators

are continuously propagated through different forward simulators  to adjust historic (

to adjust historic ( ), current (

), current ( ) and future (

) and future ( ) mill feed estimates accordingly (

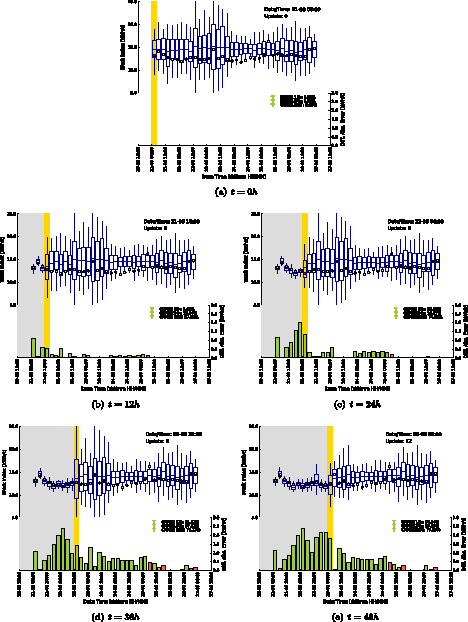

) mill feed estimates accordingly ( ). The top axes of Figure 7(a)–(k) display how these mill feed estimates change as

). The top axes of Figure 7(a)–(k) display how these mill feed estimates change as  , orange dots in grey area); one is currently being milled (

, orange dots in grey area); one is currently being milled ( , red dot in yellow bar); others still have to be fed to the mill (

, red dot in yellow bar); others still have to be fed to the mill ( , grey dots right of yellow bar). The grey dots right of the yellow bar are never fed to the updating algorithm. They only serve to illustrate how future mill feed estimates improve over time (

, grey dots right of yellow bar). The grey dots right of the yellow bar are never fed to the updating algorithm. They only serve to illustrate how future mill feed estimates improve over time ( ).

).

Once a 4 h interval ends, a new observation becomes available (red dot) and an update is performed. Subsequently,

Since the pilot study is based on a historic data-set, all future observations are known ahead of time (during a live application, they would not yet exist). As a result, assessment statistics can be computed not only describing improvements in historic mill feed estimates but also in future ones.

The bottom axis in Figure 7(a)–(k) displays the Differences in Absolute Error (DAE) between mill feed estimates (the mean, horizontal line in boxplot) and available observations. The differences are computed relative to time 0 (Figure 7(a)). The height of the bar represents the magnitude of the movement. The colour indicates whether the estimate is moving towards (green) or away from (red) the actual observation. Historic mill feed estimates do undergo significant improvements (Figure 7, left of yellow bar). These improvements result from updated estimates in already mined and milled blocks. The figure further shows that future mill feed estimates are being corrected as well (Figure 7, right of yellow bar). This correction relates to updated estimates of surrounding blocks. Normally, it is impossible to compute the DAE of future mill feed estimates, since the required observations are not yet available (grey dots, right of yellow bar). Again, it is important to stress that the future observations are only used for validation purposes.
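A DAE value for a single 4 h interval can be sketched as below. The sign convention (positive when the updated mean lies closer to the observation than the mean at time 0) and the function names are assumptions made for this illustration. The text only states that the bar height gives the magnitude and the colour gives the direction.

```python
def difference_in_absolute_error(initial_mean, updated_mean, observation):
    """Change in absolute error relative to time 0.

    Positive: the updated estimate moved towards the observation.
    Negative: the updated estimate moved away from the observation.
    """
    return abs(initial_mean - observation) - abs(updated_mean - observation)


def bar_colour(dae):
    """Colour coding assumed from the figure description."""
    if dae > 0:
        return "green"
    if dae < 0:
        return "red"
    return "none"


# Hypothetical numbers: the estimate moves from 18.0 to 16.5 while the
# observed value is 16.0.
dae = difference_in_absolute_error(18.0, 16.5, 16.0)
colour = bar_colour(dae)
```

Here the absolute error drops from 2.0 to 0.5, so `dae` is 1.5 and the bar would be drawn in green.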

The change in Root Mean Square Error ( ). The third window encompasses all historic 4 h intervals (grey area). Future predictions improve on average by about  (next 12 h) and  (next 24 h). The error in historic estimates reduces on average by about 72%. A correction of 100% is not desired since time-averaged noisy observations do not contain enough information to fully eliminate all remaining inaccuracies in the blended blocks.
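A relative change in RMSE over a window of 4 h intervals can be computed as sketched here. The function names are hypothetical and the numbers are invented for illustration. They are not results from the pilot study.

```python
from math import sqrt


def rmse(estimates, observations):
    """Root mean square error between paired estimates and observations."""
    if not estimates or len(estimates) != len(observations):
        raise ValueError("need two non-empty sequences of equal length")
    squared = [(e - o) ** 2 for e, o in zip(estimates, observations)]
    return sqrt(sum(squared) / len(squared))


def relative_rmse_reduction(before, after, observations):
    """Percentage reduction in RMSE obtained by updating."""
    base = rmse(before, observations)
    if base == 0.0:
        raise ValueError("initial RMSE is zero, reduction is undefined")
    return 100.0 * (base - rmse(after, observations)) / base


# Invented window of two intervals.
observed = [16.0, 14.0]
prior_estimates = [18.0, 18.0]
updated_estimates = [17.0, 16.0]
reduction = relative_rmse_reduction(prior_estimates, updated_estimates, observed)
```

In this invented example every error is halved by the update, so the RMSE reduction is 50%.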

Conclusion

This paper describes the pilot testing of a novel updating algorithm at the Tropicana Gold Mine. During the pilot, online mill observations are automatically reconciled against the spatial work index estimates of the GeoMet model. Deviations between predicted and actual mill performance are monitored and used to locally improve the GeoMet model. The novelty of the approach resides in its ability to trace detected deviations back to the predominant source. The algorithm automatically handles differences in scale of support, sensor inaccuracies and observations made on blended material originating from two or more extraction points.

In order to operate the updating algorithm, actual observations are to be compared against model-based expectations (the mill feed estimates). The model-based expectations result from the propagation of GeoMet realizations through a forward simulator. The resulting realization sets (block and mill feed estimates) are subsequently used to compute empirical covariances. The covariances describe the link between mill derived observations and blocks from the GeoMet model. There is no need to formulate and linearize an analytical forward observation model, let alone compute its inverse.
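How empirical covariances can stand in for an analytical observation model is illustrated below with a generic ensemble Kalman-type update for a single observation. This is a textbook-style sketch and not the algorithm tested at Tropicana: the function name, the perturbed-observation scheme, the observation error variance and the equal-blend forward model are all assumptions.

```python
import random
from math import sqrt
from statistics import fmean, variance


def ensemble_update(blocks, feed, observation, obs_var, rng):
    """Update block realizations with one mill observation.

    blocks: list of realizations, each a list with one value per block.
    feed:   simulated mill feed value per realization (forward simulator output).
    """
    n_real = len(blocks)
    feed_mean = fmean(feed)
    # Variance of the simulated feed plus the observation error variance.
    denom = variance(feed) + obs_var
    updated = [list(real) for real in blocks]
    if denom == 0.0:
        return updated  # no spread and no noise: nothing to learn from
    # One perturbed copy of the observation per realization.
    perturbed = [observation + rng.gauss(0.0, sqrt(obs_var)) for _ in range(n_real)]
    for b in range(len(blocks[0])):
        block_mean = fmean([real[b] for real in blocks])
        cov = sum(
            (real[b] - block_mean) * (f - feed_mean) for real, f in zip(blocks, feed)
        ) / (n_real - 1)
        gain = cov / denom  # the empirical covariance replaces an analytical model
        for r in range(n_real):
            updated[r][b] += gain * (perturbed[r] - feed[r])
    return updated


# Toy case: two blocks, three realizations, feed is an equal blend of both.
prior = [[14.0, 18.0], [16.0, 18.0], [18.0, 18.0]]
simulated_feed = [0.5 * (b0 + b1) for b0, b1 in prior]
posterior = ensemble_update(prior, simulated_feed, 16.0, 0.0, random.Random(1))
```

With a noise-free observation of 16.0, every realization of the first block is pulled to 14.0 while the second block, which shows no spread, is left unchanged. The forward model is only ever evaluated, never linearized or inverted.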

A total of 30 updates were performed to assimilate a week of mill performance data into the GeoMet model. The level of detail in the mean field increases significantly as the GeoMet realizations are updated. The algorithm corrects various local estimation biases resulting from ill-calibrated relationships between sensor data (XRF and NIR measurements) and work index values.

The obtained results are validated against readily available production measurements. Since the pilot was run off-line, future mill observations are known ahead of time (although this information is ignored during updating). Consequently, validation statistics are computed to evaluate whether updated GeoMet models result in more accurate mill feed estimates. Over the course of a week, the  . The results further indicate that updating causes on average a reduction in error of about  in performance forecasts for the next shift (upcoming 12 h). Improvements in future forecasts of up to  have been observed.

Although the current implementation of the forward simulator does adequately describe the relevant operational features, further work needs to be done.

Tracking assumptions are to be validated using e.g. RFID tags (radio frequency identification).

The material source locations can be more accurately defined. Currently, only the GPS locations of the trucks are recorded. Aerial photographs are used to derive a set of correction vectors (length and orientation) linking truck positions to actual digging locations. Very recently, the high-precision GPS locations of the loaders and excavators have been made available as well. An algorithm can be written to accurately determine the origin of each bucket loaded into the truck.

A support correction algorithm needs to be designed and implemented to adjust the distribution of work index values assigned to a truck when material is reclaimed from a stockpile subdomain. Some random noise proportional to the amount of volume reduction should be added to the characterizing set. As a result, the uncertainty in the truck would be larger than the one in the stockpile subdomain. The statistical consequences of this volume reduction are currently ignored.

Equipment performance measurements might start to drift as critical components wear out (e.g. liners in the ball mill). Machine learning techniques could be applied to automatically correct for the occurring drift.

Since the case study is based on an off-line execution of the algorithm, future predictions were generated using a ‘tracking-based’ forward simulator. If the algorithm were run online, the tracking data would only allow model-based equivalents of current and historic measurements to be generated. A second ‘schedule-based’ forward simulator needs to be built to generate production forecasts. The production forecasts are by no means necessary to run the updating algorithm. However, the application of a ‘schedule-based’ forward simulator allows for a continuous re-evaluation of operational decisions based on the most up-to-date information.

Production forecasts should be recorded and validated against performance measurements as soon as they become available. As such, the performance of the algorithm with respect to generating accurate forecasts is continuously monitored. The performance regarding reconciliation of historic measurements should be monitored as well.

Future development should further focus on extending the capabilities of the updating algorithm. The current implementation is designed to update a single continuous attribute (spatial work index) based on a single continuous measurement variable (mill derived work index). This measurement variable is either directly or indirectly related to the attribute of interest. The algorithm needs to be extended to handle multivariate attributes, both in the block model and on the measurement side (updating multiple GeoMet estimates simultaneously). That is, correlated measured variables need to be jointly considered to update (other) correlated attributes. Neglecting to do so will result in a loss of information. Additionally, it would be interesting to update categorical variables such as ore types, lithologies and weathering zones based on equipment performance or other sensor measurements.

The application of machine learning algorithms to regularly recalibrate the relationships between sensor data and work index values opens up another avenue of research. A large database of reconciled work index values is built up as the updating algorithm is operated over a long period of time. Each resulting block value is then to be linked with sensor data from neighbouring grade control samples. Both data-sets are then to be used to regularly retrain the relationship between sensor data and work index estimates. The retrained relationship will eventually result in more accurate and reliable GeoMet models. Finally, the gained knowledge, e.g. a better understanding of the relation between ore types and hardness (assuming sensor data can be used to differentiate between ore types), should be transferred into the long-term mineral resource model on a regular basis.


Footnotes

No potential conflict of interest was reported by the authors.