Abstract

We focus on data gathering problems in energy-constrained networked sensor systems. We study store-and-gather problems where data are locally stored at the sensors before the data gathering starts, and continuous sensing and gathering problems that model time critical applications. We show that these problems reduce to maximization of network flow under vertex capacity constraint. This flow problem in turn reduces to a standard network flow problem. We develop a distributed and adaptive algorithm to optimize data gathering. This algorithm leads to a simple protocol that coordinates the sensor nodes in the system. Our approach provides a unified framework to study a variety of data gathering problems in networked sensor systems. The performance of the proposed method is illustrated through simulations.

Introduction

State-of-the-art sensors (e.g., Smart Dust [1]) are powered by batteries. Replenishing energy by replacing the batteries is infeasible because the sensors are typically deployed in harsh terrains. Also, the cost of replacing batteries can be prohibitively high. These sensors, which are usually unattended, need to operate over a long period of time after deployment. Energy efficiency is thus critical. Techniques ranging from low power hardware design [2], [3] and energy-aware routing [4], [5] to application level optimizations [6], [7] have been proposed to improve energy efficiency of networked sensor systems.

An important application of networked sensor systems is to monitor the environment. Examples of such applications include vehicle tracking and classification in the battlefield, patient health monitoring, pollution detection, and so on. In these applications, a fundamental operation is to sense the environment and transmit the sensed data to the base station for further processing. In this article, we study energy-efficient data gathering in networked sensor systems from an algorithmic perspective.

Compared with sensing and computation, communication is the most expensive operation (in terms of energy consumption) in the context of data gathering [8]. Generally, data transfers are performed via multihop communications where each hop is a short-range communication. This is due to the well-known fact that long-distance wireless communication is expensive in terms of both implementation complexity and energy dissipation, especially when using the low-lying antennae and near-ground channels typically found in networked sensor systems. Short-range communication also enables efficient spatial frequency re-use. A challenging problem with multihop communications is the efficient transfer of data through the system when the sensors have energy constraints.

Some variations of the problem have been studied recently. In reference [9], data gathering is assumed to be performed in

Our study departs from the aforementioned work in both the problem definition and the solution technique. For short-range communications, the difference in energy consumption between sending and receiving a data packet is almost negligible. We adopt the reasonable approximation that sending a data packet consumes the same amount of energy as receiving a data packet [8]. The studies in references [10] and [11] differentiate the energy dissipated for sending and receiving data. Although the resulting problem formulations are indeed more accurate than ours, the improvement in accuracy is marginal for short-range communications.

In reference [9], each sensor generates exactly one data packet per round (a round corresponds to the occurrence of an event in the environment) to be transmitted to the base station. The system is assumed to be fully connected. The study in reference [9] also considers a very simple model of data aggregation where any sensor can aggregate all the received data packets into a single output data packet. In our system model, each sensor communicates with a limited number of neighbors due to the short range of the communications, resulting in a general graph topology for the system. We study

Our focus in this article is to maximize the throughput or volume of data received by the base station. Such an optimization objective is abstracted from a wide range of applications in which the base station needs to gather as much information as possible. Some applications proposed for the networked sensor systems may have different optimization objectives. For example, the balanced data transfer problem [12] is formulated as a linear programming problem where a “minimum achieved sense rate” is set for every individual node. In reference [13], data gathering is considered in the context of energy balance. A distributed protocol is designed to ensure that the average energy dissipation per node is the same throughout the execution of the protocol. However, these issues are not the focus of this article.

By modeling the energy consumption associated with each send and receive operation, we formulate the data gathering problem as a constrained network flow optimization problem where each node

The constrained flow problem reduces to the standard network flow problem, which is a classical flow optimization problem. Many efficient algorithms have been developed [14] for the standard network flow problem. However, in terms of decentralization and adaptation, these well-known algorithms are not suitable for data gathering in networked sensor systems. In this article, we develop a decentralized and adaptive algorithm for the maximum network flow problem. This algorithm is a modified version of the Push-Relabel algorithm [15]. In contrast to the Push-Relabel algorithm, it is adaptive to changes in the system. It finds the maximum flow in

The aforementioned algorithm can be used to solve both store-and-gather problems and continuous sensing and gathering problems. For the continuous sensing and gathering problems, we developed a simple distributed protocol based on the algorithm. The performance of this protocol is studied through simulations. Because the store-and-gather problems are by nature off-line problems, we do not develop a distributed protocol for this class of problems.

The rest of the article is organized as follows. The data gathering problems are discussed in Section 2. We show that these problems reduce to a network flow problem with constraints on the vertices. In Section 3, we develop a mathematical formulation of the constrained network flow problem and show that it reduces to a standard network flow problem. In Section 4, we derive a relaxed form of the network flow problem. A distributed and adaptive algorithm is then developed for this relaxed problem. A simple protocol based on this algorithm is presented in Section 4.3. Experimental results are presented in Section 5. Section 6 concludes the article.

Data Gathering with Energy Constraint

System Model

Suppose a network of sensors is deployed over a region. The locations of the sensors are fixed and known

Among the three categories (sensing, communication, and data processing) of power consumption, a sensor node typically spends most of its energy in data communication. This includes both data transmission and reception. Our energy model for the sensors is based on the first order radio model described in reference [16]. The energy consumed by sensor

Communication link (

An energy budget

Store-and-Gather Problems

In store-and-gather problems, the information from the environment is sensed (possibly over a long time period) and stored locally at the sensors. The data is then transferred to the base station during the data gathering stage. This represents data-oriented applications (e.g., counting the occurrences of endangered birds in a particular region) where the environment changes slowly. There is typically no deadline (or the deadline is loose enough to be ignored) on the duration of data gathering for such problems, and we are not interested in the speed at which the data is gathered. However, due to the energy constraint, not all the stored data can be gathered by the base station, and we want to maximize the amount of data gathered.

For each

For the simplified scenario where

|

Similar to the SMaxDV problem, the net flow out of the intermediate nodes (

The continuous sensing and gathering problems model those time-critical applications that need to gather as much information as possible from the environment while the nodes are sensing. Examples of such applications include battlefield surveillance, target tracking, and so on. We want to maximize the total number of data packets that can be gathered by the base station

Similar to the store-and-gather problem, we have the following mathematical formulation when

The major difference between the SMaxDV and the SMaxDT problem is the consideration of link capacities. In the SMaxDV problem, because there is no deadline for the data gathering, the primary factor that affects the maximum number of gathered data is the energy budgets of the sensors. But for the SMaxDT problem, the number of data packets that can be transferred over a link in one unit of time is not only affected by the energy budget, but also bounded from above by the capacity of that link, as specified in Condition 1. For the SMaxDT problem, we did not model the impact of

Similarly, we can formulate the multiple source maximum data throughput problem as follows:

Condition 4 in the problem formulation takes into account the sensing capabilities of the sensors.

Problem Reductions

In this section, we present the formulation of the constrained flow maximization problem where the vertices have limited capacities (

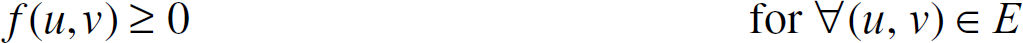

In the CFM problem, we are given a directed graph 0 ≤

The

It is straightforward to show that the SMaxDV and the SMaxDT problems reduce to the CFM problem. By adding a hypothetical super source node, the MMaxDV and the MMaxDT problems can also be reduced to SMaxDV and SMaxDT, respectively.
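The super source idea can be sketched in a few lines. This is only an illustration under assumptions: the function name `add_super_source`, the label `"S*"`, and the choice to bound each new edge by the amount of data available at the corresponding source are all hypothetical and are not taken from the formulations in this article.

```python
def add_super_source(edges, supplies, super_source="S*"):
    """Return a copy of the edge-capacity map with a hypothetical super source.

    edges:    {(u, v): capacity} for the original directed graph
    supplies: {source_node: amount of data that source can inject}
    """
    augmented = dict(edges)
    for node, amount in supplies.items():
        # Assumption: the new edge is bounded by the data available at the source.
        augmented[(super_source, node)] = amount
    return augmented


# Two sources "a" and "b" feeding a base station "t".
edges = {("a", "t"): 5, ("b", "t"): 3}
augmented = add_super_source(edges, {"a": 4, "b": 7})
```

After this step, a single-source solver can be run from `"S*"` without any change to the original edges.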

It can be shown that the CFM problem reduces to a standard network flow problem. Due to the existence of Condition 1, Condition 3 is equivalent to

The standard network flow problem is stated as:

The vertex capacity

The edge capacity in the CFM problem models the communication rate (meaningful for continuous sensing and gathering problems) between adjacent sensor nodes. The edge capacity captures the available communication bandwidth between two nodes, which may be less than the maximum available rate. For example, a node may reduce its radio transmission power to save energy, resulting in a less than maximum communication rate. This capacity can also vary over time based on environmental conditions. Our decentralized protocol results in an online algorithm for this scenario.
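A reduction from vertex capacities to a standard flow problem can be illustrated with the textbook node-splitting construction. The sketch below is an assumption about how such a reduction can be realized, not a transcription of the formulation in this article: each capacitated node is replaced by an "in" copy and an "out" copy joined by an edge whose capacity equals the vertex capacity, and a plain Edmonds-Karp solver (chosen here only for brevity) is run on the result. All function names are hypothetical.

```python
from collections import defaultdict, deque


def max_flow(cap, s, t):
    """Edmonds-Karp maximum flow on an edge-capacity map {(u, v): c}."""
    residual = defaultdict(int)
    adj = defaultdict(set)
    for (u, v), c in cap.items():
        residual[(u, v)] += c
        adj[u].add(v)
        adj[v].add(u)  # reverse edge for the residual graph
    total = 0
    while True:
        # Breadth-first search for a shortest augmenting path.
        parent = {s: None}
        queue = deque([s])
        while queue and t not in parent:
            u = queue.popleft()
            for v in adj[u]:
                if v not in parent and residual[(u, v)] > 0:
                    parent[v] = u
                    queue.append(v)
        if t not in parent:
            return total
        # Find the bottleneck along the path, then augment.
        bottleneck = float("inf")
        v = t
        while parent[v] is not None:
            bottleneck = min(bottleneck, residual[(parent[v], v)])
            v = parent[v]
        v = t
        while parent[v] is not None:
            u = parent[v]
            residual[(u, v)] -= bottleneck
            residual[(v, u)] += bottleneck
            v = u
        total += bottleneck


def vertex_capacitated_max_flow(edge_cap, vertex_cap, s, t):
    """Split every capacitated node v into (v, 'in') -> (v, 'out')."""
    def v_in(v):
        return (v, "in") if v in vertex_cap else v

    def v_out(v):
        return (v, "out") if v in vertex_cap else v

    cap = defaultdict(int)
    for v, w in vertex_cap.items():
        cap[(v_in(v), v_out(v))] += w  # internal edge carries the vertex capacity
    for (u, v), c in edge_cap.items():
        cap[(v_out(u), v_in(v))] += c
    return max_flow(cap, v_in(s), v_out(t))


edge_cap = {("s", "a"): 10, ("s", "b"): 10, ("a", "t"): 10, ("b", "t"): 10}
flow = vertex_capacitated_max_flow(edge_cap, {"a": 3, "b": 4}, "s", "t")
print(flow)  # 7: the relays, not the links, are the bottleneck
```

In this toy instance every link can carry 10 units, yet the result is limited to 3 + 4 = 7 by the two relay nodes, which mirrors the role an energy budget plays at a sensor.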

Because energy efficiency is a key consideration, various techniques have been proposed to explore the trade-offs between processing/communication speed and energy consumption. This results in the continuous variation of the performance of the nodes. For example, the processing capabilities may change as a result of dynamic voltage scaling [18]. The data communication rate may change as a result of modulation scaling [19]. As proposed by various studies on energy efficiency, it is necessary for sensors to maintain a power management scheme, which continuously monitors and adjusts the energy consumption and thus changes the computation and communication performance of the sensors. In data gathering problems, these energy related adjustments translate to changes of parameters (node/link capacities) in the problem formulations. Determining the exact reasons and mechanisms behind such changes is beyond the scope of this article. Instead, we focus on the development of data gathering algorithms that can adapt to such changes.

Distributed and Adaptive Algorithm to Maximize Flow

In this section, we first show that the maximum flow remains the same even if we relax the flow conservation constraint. Then we develop a distributed and adaptive algorithm for the relaxed problem.

Relaxed Flow Maximization Problem

Consider the simple example in Figure 1 where

An example of the relaxed network flow problem where

This leads to the following relaxed network flow problem:

Condition 2 differentiates the relaxed and the standard network flow problem. In the relaxed problem, the total flow out of a node can be equal to or larger than the total flow into the node. A feasible function

Theorem 1

Proof of the theorem is not difficult and hence omitted here. If we interpret

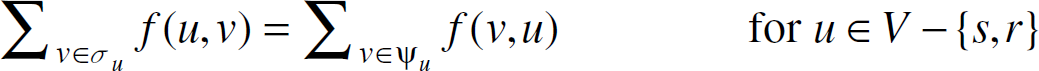

In this section, we develop a decentralized and adaptive algorithm for the relaxed network flow maximization problem. This algorithm is a modified version of the Push-Relabel algorithm [14] and is denoted as the

The Push-Relabel algorithm is a well-known algorithm for network flow maximization. It has a decentralized implementation where every node only needs to exchange messages with its immediate neighbors and makes decisions locally. However, in order to adapt to changes in the system, this algorithm has to be re-initialized and re-run from scratch each time some parameters (weights of the nodes and edges in the graph) of the flow maximization problem change. Each time, before starting to search for the new optimal solution, the algorithm needs to make sure that every node has finished its local initialization, which requires a global synchronization and compromises the property of decentralization.
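For reference, the standard Push-Relabel scheme can be sketched as follows. This is a sequential, centralized simulation of the textbook algorithm with a FIFO queue of active nodes; it is not the RIPR algorithm and contains no adaptation operation. The function name and the data layout are assumptions made for illustration.

```python
from collections import defaultdict, deque


def push_relabel_max_flow(cap, s, t):
    """Textbook FIFO Push-Relabel on an edge-capacity map {(u, v): c}."""
    nodes = {x for edge in cap for x in edge}
    residual = defaultdict(int)
    adj = defaultdict(set)
    for (u, v), c in cap.items():
        residual[(u, v)] += c
        adj[u].add(v)
        adj[v].add(u)

    height = {v: 0 for v in nodes}
    excess = {v: 0 for v in nodes}
    height[s] = len(nodes)

    # Initialization: saturate every edge leaving the source.
    active = deque()
    for v in adj[s]:
        c = residual[(s, v)]
        if c > 0:
            residual[(s, v)] -= c
            residual[(v, s)] += c
            excess[v] += c
            excess[s] -= c
            if v != t:
                active.append(v)

    while active:
        u = active.popleft()
        while excess[u] > 0:
            pushed = False
            for v in adj[u]:
                # Push only along residual edges that go exactly one level down.
                if residual[(u, v)] > 0 and height[u] == height[v] + 1:
                    delta = min(excess[u], residual[(u, v)])
                    residual[(u, v)] -= delta
                    residual[(v, u)] += delta
                    excess[u] -= delta
                    if excess[v] == 0 and v not in (s, t):
                        active.append(v)  # v becomes active with this push
                    excess[v] += delta
                    pushed = True
                    if excess[u] == 0:
                        break
            if not pushed:
                # Relabel: lift u just above its lowest residual neighbor.
                height[u] = 1 + min(
                    height[v] for v in adj[u] if residual[(u, v)] > 0
                )
    return excess[t]


cap = {("s", "a"): 3, ("s", "b"): 2, ("a", "b"): 1, ("a", "t"): 2, ("b", "t"): 3}
print(push_relabel_max_flow(cap, "s", "t"))  # 5
```

Both basic operations use only the state of a node and of its immediate neighbors, which is what makes a decentralized implementation possible. The initialization step, in contrast, assumes that all heights and excesses start from a known global state, and this is the part that has to be repeated when capacities change.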

In contrast to the Push-Relabel algorithm, our algorithm introduces the adaptation operation, which is performed on the current values of

For the discussion that follows, let us first briefly re-state some notations for the network flow maximization problem. For notational convenience, if edge (

Given a directed graph

The algorithm is as follows:

Each node if if if if

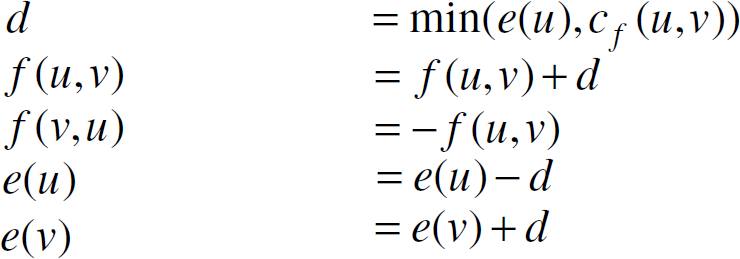

The aforementioned algorithm defines an integer valued auxiliary function

An intuitive explanation of the RIPR is as follows.

Lemma 1

Proof

Lemma 2

Proof

Suppose

We claim that if

It is fairly easy to show that

But this contradicts the assumption that

Lemma 3

Proof

When

Lemma 4

Proof

We prove by induction on the number of adaptation operations.

Before any changes occur in the system, the adaptation operation will not be applied. At this stage, the RIPR algorithm performs the exact operations as the Push-Relabel algorithm, thus we have

Suppose after the adaptation has been applied We first show that Suppose This may add edge ( Suppose For a residual edge ( Now we need to show that Let

Combining these inequalities, we have

For any node

Note that e(

Combining these inequalities, we have

Corollary 1

The proof of Corollary 1 is included in the proof of Lemma 4.

Lemma 5

Proof

Suppose for the sake of contradiction that there exists a node

According to Lemma 4,

According to Corollary 1,

On the other hand, consider the first hop (s, u1) along this path. (s, u1) ∊ Ef implies that

Similar to the standard flow problem, for the relaxed flow problem, a

Lemma 6

Proof

we have

Because

The next lemma shows that the RIPR algorithm finds the maximum relaxed flow if it terminates. By "terminates," we mean that no node needs to execute any of the basic operations, assuming that the edge capacities no longer change. After proving this lemma, we will show that the RIPR algorithm indeed terminates.

Lemma 7

Proof

According to Lemma 3, if the algorithm terminates, then

Given such an

According to Lemma 5,

Therefore

We claim that

Therefore,

According to Lemma 6, such a relaxed flow

Now we show that the algorithm indeed terminates.

Lemma 8

Proof

If the adaptation is applied

If a

Lemma 9

Proof

Consider edge (

Lemma 10

Proof

Define a potential function Φ = Σ

According to Lemma 4,

For a non-saturated push

Therefore, the total increase in Φ is at most ((2

Theorem 2

Proof

In this section, we present a simple online protocol for SMaxDT problem based on the RIPR algorithm.

In this protocol, each node maintains a data buffer. Initially, all the data buffers are empty. The source node Contact the adjacent node(s) and execute the RIPR algorithm. While β

Upon receiving “request to send,”

For node

For the MMaxDT problem, the situation is a bit more complicated. Because the MMaxDT problem is reduced to the SMaxDT problem by adding a hypothetical super source node

The SMaxDV and MMaxDV problems are by nature off-line problems and we do not develop online protocols for the two problems.

Experimental Results

Simulations were conducted to illustrate the effectiveness of the RIPR algorithm and the data gathering protocol. For the sake of illustration, we present simulation results for the SMaxDT problem.

The systems were generated by randomly scattering the sensor nodes in a unit square. The base station was located at the lower-left corner of the square. The source node was randomly chosen from the sensor nodes.

The RIPR algorithm described in Section 4 adapts to every single change that occurs in the system. The adaptation is initiated by source node

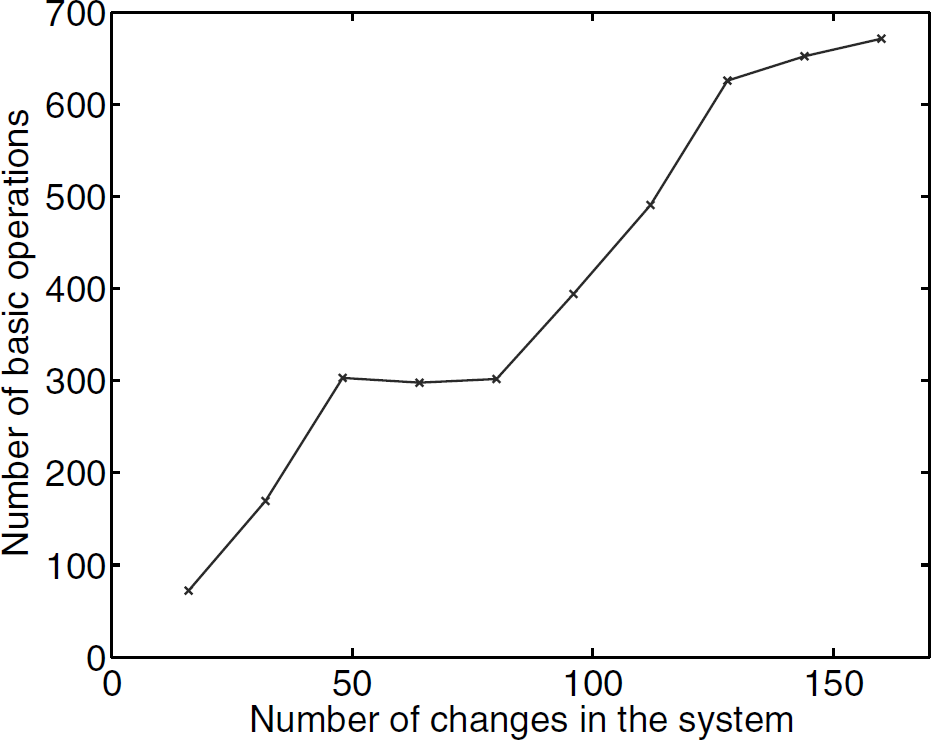

The result in Figure 2 illustrates the cost of adaptation (in terms of the total number of basic operations) vs. the number of changes that occurred before the adaptation. In each experiment, a randomly generated system with 40 nodes was deployed in a unit square. After the system stabilized and found the optimal solution, the bandwidth of a certain number of links was changed. Adaptation was then performed and the system stabilized again (and found a new optimal solution) after executing a certain number of basic operations. For each experiment, we recorded the number of basic operations executed by the system to find the new optimal solution. Each data point in Figure 2 is averaged over 50 experiments. We can see that the required number of basic operations increases as the number of changes (per adaptation) increases.

Adaptation performed in batch mode.

So far, the performance of the RIPR algorithm has been evaluated in terms of the total number of basic operations. We do not expect the individual nodes to execute the same number of operations because the RIPR algorithm is not designed for load balancing. Interestingly, however, the following simulation results show that the RIPR algorithm is fairly well balanced in terms of the number of basic operations executed by different nodes. For each experiment, a randomly generated system was initialized and the number of basic operations executed by the system to stabilize was recorded. The basic operations were reclassified into two categories: local updates and control message exchanges. Each

The maximum and mean cost per node for executing the RIPR algorithm.

The second set of simulation results illustrates the convergence and adaptivity of the proposed protocol. In each experiment, a certain number (between 40 and 100) of nodes were randomly deployed in the unit square. Communication radii ranging from 0.2 to 0.5 units were tested. For each experiment, the data gathering process lasted 30 seconds.
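A deployment of this kind can be reproduced with a short generator. The sketch below is an assumption about the setup rather than the simulator used in this article: nodes are drawn uniformly at random, node 0 is placed at the lower-left corner to play the role of the base station, and two nodes are linked whenever their Euclidean distance does not exceed the communication radius. The function name, the seed parameter, and the symmetric-link rule are hypothetical.

```python
import math
import random


def random_sensor_graph(num_nodes, radius, seed=0):
    """Scatter nodes in the unit square and link nodes within `radius`."""
    rng = random.Random(seed)
    # Assumption: node 0 is the base station at the lower-left corner.
    positions = [(0.0, 0.0)]
    positions += [(rng.random(), rng.random()) for _ in range(num_nodes - 1)]

    neighbors = {i: set() for i in range(num_nodes)}
    for i in range(num_nodes):
        for j in range(i + 1, num_nodes):
            if math.dist(positions[i], positions[j]) <= radius:
                neighbors[i].add(j)
                neighbors[j].add(i)
    return positions, neighbors


positions, neighbors = random_sensor_graph(40, 0.4, seed=1)
num_links = sum(len(n) for n in neighbors.values()) // 2
```

Increasing `radius` for a fixed set of positions can only add links, so `num_links` grows with the communication radius.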

The

Normalized Steady-State Throughput

In the protocol, data is transferred when the RIPR algorithm is being executed. Thus the start-up time of the system needs to be evaluated from two aspects: the

For each experiment in the second set of simulations, we monitored the activities of each individual node. The termination of the RIPR algorithm was detected when none of the nodes needed to execute any of the basic operations. Note that such a global monitoring is made available in the simulations for performance analysis only. It may be very costly to implement this monitoring function in an actual deployment. Let

The impact of the number of nodes and the communication radius on the execution time of the RIPR algorithm is shown in Figure 4. The execution time increases as the number of nodes increases. The execution time also increases as the communication radius increases, which leads to an increase in the number of links in the system. Such a trend is expected from Theorem 2.

Execution time of the RIPR algorithm.

The start-up time of the protocol is shown in Figure 5. The result shows that for a given communication radius, the start-up time of the protocol increases as the number of nodes increases. Interestingly, for a given number of nodes, the start-up time decreases as the communication radius increases. This behavior is due to the fact that a larger communication radius leads to a smaller diameter of the graph. The diameter of a graph is defined as the largest distance (in terms of the number of hops) between any two nodes in the graph. In systems with a small diameter, the base station is closer to the source node. Thus the data can be transferred sooner to the base station during the start-up time.
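The hop-count diameter defined above can be computed with one breadth-first search per node. The sketch below is illustrative only; the function names are hypothetical, and unreachable node pairs are simply ignored, which is an assumption that matters only for disconnected graphs.

```python
from collections import deque


def hop_distances(neighbors, start):
    """Breadth-first search: hop count from `start` to every reachable node."""
    dist = {start: 0}
    queue = deque([start])
    while queue:
        u = queue.popleft()
        for v in neighbors[u]:
            if v not in dist:
                dist[v] = dist[u] + 1
                queue.append(v)
    return dist


def diameter(neighbors):
    """Largest hop distance between any two mutually reachable nodes."""
    return max(max(hop_distances(neighbors, u).values()) for u in neighbors)


# A 4-node chain, then the same nodes with one extra link closing a ring.
chain = {0: {1}, 1: {0, 2}, 2: {1, 3}, 3: {2}}
ring = {0: {1, 3}, 1: {0, 2}, 2: {1, 3}, 3: {2, 0}}
print(diameter(chain), diameter(ring))  # 3 2
```

The extra link in `ring` plays the role of a larger communication radius: with more links available, the longest shortest path shrinks from 3 hops to 2.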

Start-up time of the proposed protocol.

We have also observed that in some experiments, the system throughput reached steady-state even before the RIPR algorithm terminated. This is not a contradiction. Actually, when such scenarios occurred, the RIPR algorithm was pushing excessive flow (node

The earlier results illustrate the behavior of the protocol and the RIPR algorithm. Awareness of such behaviors is useful for system synthesis. For example, in order to reduce the start-up time of the protocol, we can deploy the nodes so that they can reach the sink in a small number of hops. To reduce the cost (both time and energy) of executing the RIPR algorithm, we can restrict the communication of each node to a subset of its neighbors (thereby reducing |

Note that the observed execution time of the RIPR algorithm (less than 1.3 seconds) and the start-up time of the protocol (less than 4.3 seconds) depend on the bandwidth settings of the links. In our simulations, the bandwidth of the links is around 10 kbps, which is around 40 data packets per second because each data packet is 32 bytes. The shortest path (in terms of the transfer time of one data packet) from the source node to the base station ranges from 0.05 seconds to 0.13 seconds. The execution and start-up times will be much shorter if the links have higher bandwidth. For example, if the system is built with Telos [20] wireless sensors that can communicate at 250 kbps, we can expect a speedup of about 20 times in both the execution time of the RIPR algorithm and the start-up time of the protocol.
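The packet rates behind these figures follow from simple arithmetic. The sketch below assumes that 1 kbps means 1000 bits per second and that a packet occupies exactly 32 bytes on the link with no header or protocol overhead; both are simplifying assumptions, and the helper name is hypothetical.

```python
PACKET_BYTES = 32  # packet size stated in the text


def packets_per_second(bandwidth_bps, packet_bytes=PACKET_BYTES):
    """Ideal packet rate of a link, ignoring all protocol overhead."""
    return bandwidth_bps / (packet_bytes * 8)


sim_rate = packets_per_second(10_000)      # simulated links, about 39 packets/s
telos_rate = packets_per_second(250_000)   # 250 kbps radio, about 977 packets/s
ratio = telos_rate / sim_rate              # raw bandwidth ratio, 25.0
```

The raw bandwidth ratio is 25, which is of the same order as the speedup quoted above; the speedup actually observed would also depend on overheads that this idealized calculation ignores.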

Adaptivity of the proposed protocol is shown in Figure 6. The system consisted of 40 nodes randomly deployed in the unit square. Communication radius was set to 0.4. The system activities during the first 40 seconds are shown. At time

Illustration of the start-up and the adaptation of the proposed protocol. Framed block (a) is zoomed in Figure 7(a), framed block (b) is zoomed in Figure 7(b).

Detailed Illustration of the start-up and the adaptation of the proposed protocol.

In this article, we studied a set of data gathering problems in energy-constrained networked sensor systems. We reduced such problems to a network flow maximization problem with vertex capacity constraints, which can be further reduced to the standard network flow problem. After deriving a relaxed formulation for the standard network flow problem, we developed a distributed and adaptive algorithm to maximize the flow. This algorithm can be approximated as a simple data gathering protocol.

One of the future directions is to design distributed algorithms that do not generate excessive flow at the nodes (i.e.,