Abstract

Common mental disorders are responsible for nearly 30% of the non-fatal disease burden in Australia [1], yet fewer than one in six people with depression or anxiety receive evidence-based treatments [2, 3]. Care, for those who do access it, is largely provided by general practitioners (GPs), whose ability to deliver optimal treatment is often impeded by heavy case-loads and inadequate training and support [4, 5].

In an effort to improve access to mental health care, 108 projects have been funded progressively since July 2001 under the Access to Allied Psychological Services component of the Better Outcomes in Mental Health Care programme. The projects are similar, in that they are all under the auspices of the Divisions of General Practice, and all enable GPs to refer patients to allied health professionals (usually psychologists) for six or more sessions of low-cost, evidence-based mental health care. However, they differ in the models they use to retain, locate and direct referrals to their allied health professionals [6].

The projects are encouraged to enter patient-level and session-level data into a minimum dataset. Various evaluation activities have drawn on aggregated data from this minimum dataset, and have suggested that the Access to Allied Psychological Services projects have improved access to high-quality psychological care for people whose access might otherwise have been restricted by barriers such as cost [7].

The ultimate arbiter of success, however, is whether the projects are having a positive impact in terms of patient outcomes (e.g. level of functioning, severity of symptoms and/or quality of life). Originally, the minimum dataset did not include any outcome data because of logistical difficulties associated with the wide variety of different outcome measures being used across projects [8]. In mid-2005, however, outcome measure fields were added to the minimum dataset, and it is now timely to consider the direct impacts that the projects are having for patients. Adopting a methodology that uses a common metric to quantify the varying outcome measures, the current paper examines the following evaluation questions: (i) what is the level of patient outcomes within and across projects; and (ii) do improvements in patient outcomes vary depending on the model of service delivery?

Method

Examining the level of patient outcomes within and across projects

Data sources

To address the first evaluation question, the analysis drew on outcomes data from the previously mentioned minimum dataset. The minimum dataset is a web-based national database that standardizes the basic information collected by Divisions implementing Access to Allied Psychological Services projects. The minimum dataset captures de-identified, descriptive patient-level sociodemographic, clinical and outcome information and session-level treatment information.

Projects were included in the analysis if they had entered pairs of pre- and post-treatment scores on a given outcome measure for at least five patients. At the time of downloading the data (mid-May 2006), 29 projects (27%) had entered sufficient outcomes data into the minimum dataset to be included in the analysis. These outcome scores were available from 11 different measures, namely the Kessler 10 (K-10) [9], the Beck Anxiety Inventory (BAI) [10], the Beck Depression Inventory (BDI) [11], the Hospital Anxiety and Depression Scale (HADS) [12], the Depression Anxiety Stress Scales (DASS) [13], the Health of the Nation Outcome Scales (HoNOS) [14], the General Well-Being Index (GWBI) [15], the State–Trait Anxiety Inventory (STAI) [16], the Behaviour and Symptom Identification Scale (BASIS-32) [17], the Self-rating Depression Scale (SDS) [18], and the General Health Questionnaire (GHQ-28) [19]. It should be noted that a decrease in score from pre- to post-treatment represents an improvement on all of these measures except the GWBI, for which an increase represents an improvement.

Data analysis: effect size calculation

A single-group pre–post-measurement design across multiple projects was used in order to calculate effect sizes. This approach was chosen to cater for the naturalistic nature of the study, and the range of outcome measures being used within and across projects [20].

Effect sizes (d) were chosen as the key metric because they express outcomes in a standardized form, allowing results to be combined and compared across multiple measures and studies or, in this case, projects. For repeated measures, Cohen's d provides a reasonably accurate effect size estimate [21]. Cohen's d was calculated as the difference between the pre- and post-treatment mean scores divided by the pooled standard deviation of the pre- and post-treatment scores [22]. Because d slightly overestimates the true population effect, particularly in small samples, an adjustment was applied to remove this bias (equation 8 using adjustment provided by equation 11, p. 20) [21]. The variance of d was calculated using an equation appropriate for repeated measures studies with very small sample sizes (equation 9 using adjustment provided by equation 11, p. 21) [22].
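The calculation described above can be sketched in Python as follows. This is a minimal illustration, not the authors' code: the specific equations cited from [21] and [22] are not reproduced in this paper, so the sketch assumes textbook stand-ins, namely a pooled standard deviation taken as the root mean square of the pre- and post-treatment SDs, the small-sample correction factor J = 1 − 3/(4df − 1) with df = n − 1, and a common repeated-measures variance approximation that requires a pre–post correlation r (set to a hypothetical 0.5 because the correlation is not reported). The function names and the `higher_is_better` flag (for measures such as the GWBI, where an increase is an improvement) are illustrative.

```python
import math
from typing import Tuple


def hedges_j(df: int) -> float:
    """Small-sample bias correction factor J = 1 - 3 / (4*df - 1)."""
    return 1.0 - 3.0 / (4.0 * df - 1.0)


def prepost_effect_size(
    mean_pre: float,
    sd_pre: float,
    mean_post: float,
    sd_post: float,
    n: int,
    r: float = 0.5,
    higher_is_better: bool = False,
) -> Tuple[float, float]:
    """Bias-corrected pre-post effect size and its approximate variance.

    Assumptions (hypothetical, not taken from the paper):
      * pooled SD = sqrt((sd_pre**2 + sd_post**2) / 2);
      * df = n - 1 for the correction factor J;
      * variance = (1/n + d**2 / (2n)) * 2 * (1 - r), where r is an
        assumed pre-post correlation.
    """
    sd_pooled = math.sqrt((sd_pre ** 2 + sd_post ** 2) / 2.0)
    diff = mean_pre - mean_post
    if higher_is_better:  # e.g. GWBI: flip sign so positive d = improvement
        diff = -diff
    d = diff / sd_pooled
    var_d = (1.0 / n + d ** 2 / (2.0 * n)) * 2.0 * (1.0 - r)
    j = hedges_j(n - 1)
    # Apply J to d and J**2 to its variance.
    return j * d, j ** 2 * var_d


# Hypothetical K-10 summary for one project (made-up numbers).
d, v = prepost_effect_size(30.0, 6.0, 20.0, 8.0, n=20)
print(f"d = {d:.3f}, var = {v:.4f}")  # prints: d = 1.358, var = 0.0922
```

Because the correction factor J approaches 1 as n grows, the adjustment matters most for the smaller projects.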

The goal of the analysis was to produce one effect size (d) per project across all available measures, and one aggregate d across all projects. The analysis used a random effects model to cater for the presence of heterogeneity, and was conducted in the following steps.

First, an effect size was calculated for each outcome measure, for each project. Each d was calculated using pre- and post-treatment means and standard deviations. In the case of the DASS subscales, a single d was obtained from the correlated subscales by averaging [23].

Second, a combined effect size was calculated within each project. Where there was one measure per patient per project (e.g. K-10 scores only), the single d calculated for that measure was used. Where there was more than one measure per patient (e.g. K-10 and HoNOS scores for the same patient), the d values for the measures were averaged. This was done on the grounds that the measures were correlated, all measures were of interest, and projects had small to medium sample sizes [23, 24]. In a single case where different measures were provided for different patients (e.g. K-10 scores for five patients and HoNOS scores for six), the standard meta-analysis formula for independent estimates (described below) was used, on the grounds that the patient groups did not overlap and these scores were therefore analogous to independent estimates from different studies.

Third, effect sizes were combined across projects. A single weighted average d was obtained using the standard meta-analysis formula for independent estimates, weighting each project's d by the inverse of its variance.
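The three steps can be sketched as below, again under stated assumptions rather than as the authors' implementation. Averaging correlated d values requires a correlation between the measures to obtain a variance; the hypothetical `rho` parameter defaults to 1.0, the most conservative choice. The paper specifies a random effects model with inverse-variance weighting but not the estimator of between-project variance, so the sketch swaps in the DerSimonian–Laird estimator (one common choice) with a normal-approximation 95% CI. All function names and input numbers are hypothetical.

```python
import math
from typing import List, Tuple

Estimate = Tuple[float, float]  # (effect size d, variance of d)


def average_correlated(estimates: List[Estimate], rho: float = 1.0) -> Estimate:
    """Average d values from correlated measures on the same patients.

    rho is an assumed common correlation between the measures' d values;
    rho = 1.0 gives the largest (most conservative) variance.
    """
    m = len(estimates)
    mean_d = sum(d for d, _ in estimates) / m
    var_sum = sum(v for _, v in estimates)
    cov_sum = sum(
        rho * math.sqrt(vi * vj)
        for i, (_, vi) in enumerate(estimates)
        for j, (_, vj) in enumerate(estimates)
        if i != j
    )
    return mean_d, (var_sum + cov_sum) / m ** 2


def inverse_variance(estimates: List[Estimate]) -> Estimate:
    """Inverse-variance weighted mean of independent estimates."""
    weights = [1.0 / v for _, v in estimates]
    total = sum(weights)
    mean_d = sum(w * d for w, (d, _) in zip(weights, estimates)) / total
    return mean_d, 1.0 / total


def random_effects(estimates: List[Estimate]) -> Tuple[float, float, float, float]:
    """DerSimonian-Laird pooling; returns (d, lower 95% limit, upper 95% limit, tau^2)."""
    tau2 = 0.0
    if len(estimates) > 1:
        fixed_d, _ = inverse_variance(estimates)
        w = [1.0 / v for _, v in estimates]
        q = sum(wi * (d - fixed_d) ** 2 for wi, (d, _) in zip(w, estimates))
        c = sum(w) - sum(wi ** 2 for wi in w) / sum(w)
        tau2 = max(0.0, (q - (len(estimates) - 1)) / c)
    # Re-weight with between-project variance added to each within-project variance.
    pooled_d, pooled_var = inverse_variance([(d, v + tau2) for d, v in estimates])
    half_width = 1.96 * math.sqrt(pooled_var)
    return pooled_d, pooled_d - half_width, pooled_d + half_width, tau2


# Hypothetical project-level inputs (made-up numbers).
project_a = average_correlated([(1.10, 0.05), (0.90, 0.06)])  # two measures, same patients
project_b = inverse_variance([(0.80, 0.20), (1.00, 0.18)])    # different patients per measure
project_c = (1.20, 0.08)                                      # single measure
d_re, lo, hi, tau2 = random_effects([project_a, project_b, project_c])
print(f"pooled d = {d_re:.2f} (95% CI {lo:.2f} to {hi:.2f}), tau^2 = {tau2:.3f}")
```

In this sketch `average_correlated` corresponds to projects with several measures per patient, `inverse_variance` to the single project with different measures for different patients, and `random_effects` to the across-project pooling step.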

Cohen's ‘rule of thumb’ was used for interpreting the resultant standardized effect sizes (small effect d=0.20, medium effect d=0.50, large effect d=0.80) [24]. It should be noted, however, that in the current analysis d was based on paired pre- and post-treatment scores from single groups, and such pre–post effect sizes will generally be larger than effect sizes based on post-treatment differences between independent groups (e.g. treatment versus control).
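Cohen's thresholds can be expressed as a small helper. Applied to a point estimate it gives the usual label; applied to the lower limit of a 95% CI it gives the ‘at worst’ classification used when describing Table 2. The function name and the "below small" label for values under 0.20 are illustrative choices.

```python
def cohen_label(d: float) -> str:
    """Classify a standardized effect size with Cohen's rule of thumb."""
    if d >= 0.80:
        return "large"
    if d >= 0.50:
        return "medium"
    if d >= 0.20:
        return "small"
    return "below small"  # includes zero and negative values


# Pooled estimate and its lower 95% CI limit, as reported in the Results.
print(cohen_label(1.05), cohen_label(0.92))  # prints: large large
```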

Examining whether improvements in patient outcomes vary depending on the model of service delivery

Data sources

To address the second evaluation question, the aforementioned outcomes data from the minimum dataset were combined with data from a project-specific survey administered in 2005 and designed to elicit information on the models of service delivery being used in the projects. The survey achieved a 95% response rate, and profiled the projects in terms of their means of retaining allied health professionals, their location of allied health professionals, and their referral mechanisms. The survey methodology and findings have been reported in detail elsewhere [6]. For the 29 projects contributing data to the analysis, effect sizes were added to the survey data file.

Data analysis: linear regression analysis

The association between models of service delivery and patient outcomes was investigated using linear regression analysis. The objective was to determine which, if any, models of service delivery were predictive of higher levels of patient outcomes. The dependent variable, patient outcome effect size, was continuous.
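Table 4 reports a standardized coefficient and an adjusted R² for each predictor. If, as that layout suggests but the paper does not state, each service delivery model was entered as a separate binary (0/1) predictor, the calculation reduces to a single-predictor regression, in which the standardized coefficient β equals Pearson's r and adjusted R² = 1 − (1 − R²)(n − 1)/(n − 2). The standard-library sketch below illustrates this under that assumption; the function name and data are hypothetical, and p-values (which need the t distribution) are omitted.

```python
import math
from typing import List, Tuple


def standardized_simple_regression(x: List[float], y: List[float]) -> Tuple[float, float]:
    """Standardized slope (beta) and adjusted R^2 for OLS of y on a single predictor x."""
    n = len(x)
    if n != len(y) or n < 3:
        raise ValueError("need paired data with at least three observations")
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        raise ValueError("predictor and outcome must both vary")
    beta = sxy / math.sqrt(sxx * syy)  # equals Pearson's r with one predictor
    r_squared = beta ** 2
    adj_r_squared = 1.0 - (1.0 - r_squared) * (n - 1) / (n - 2)
    return beta, adj_r_squared


# Hypothetical data: 1 = project uses direct referral, 0 = does not;
# effect_size = project-level d (made-up numbers, not the study's data).
direct_referral = [1, 0, 1, 0, 1, 0]
effect_size = [1.30, 0.90, 1.20, 1.00, 1.40, 0.80]
beta, adj_r2 = standardized_simple_regression(direct_referral, effect_size)
print(f"beta = {beta:.2f}, adjusted R^2 = {adj_r2:.2f}")
```

With 29 projects and several candidate predictors, a multiple regression would give different (partial) coefficients; the single-predictor form is shown only because it matches the per-predictor reporting in Table 4.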

Results

What is the level of patient outcomes within and across projects?

Availability of outcomes data

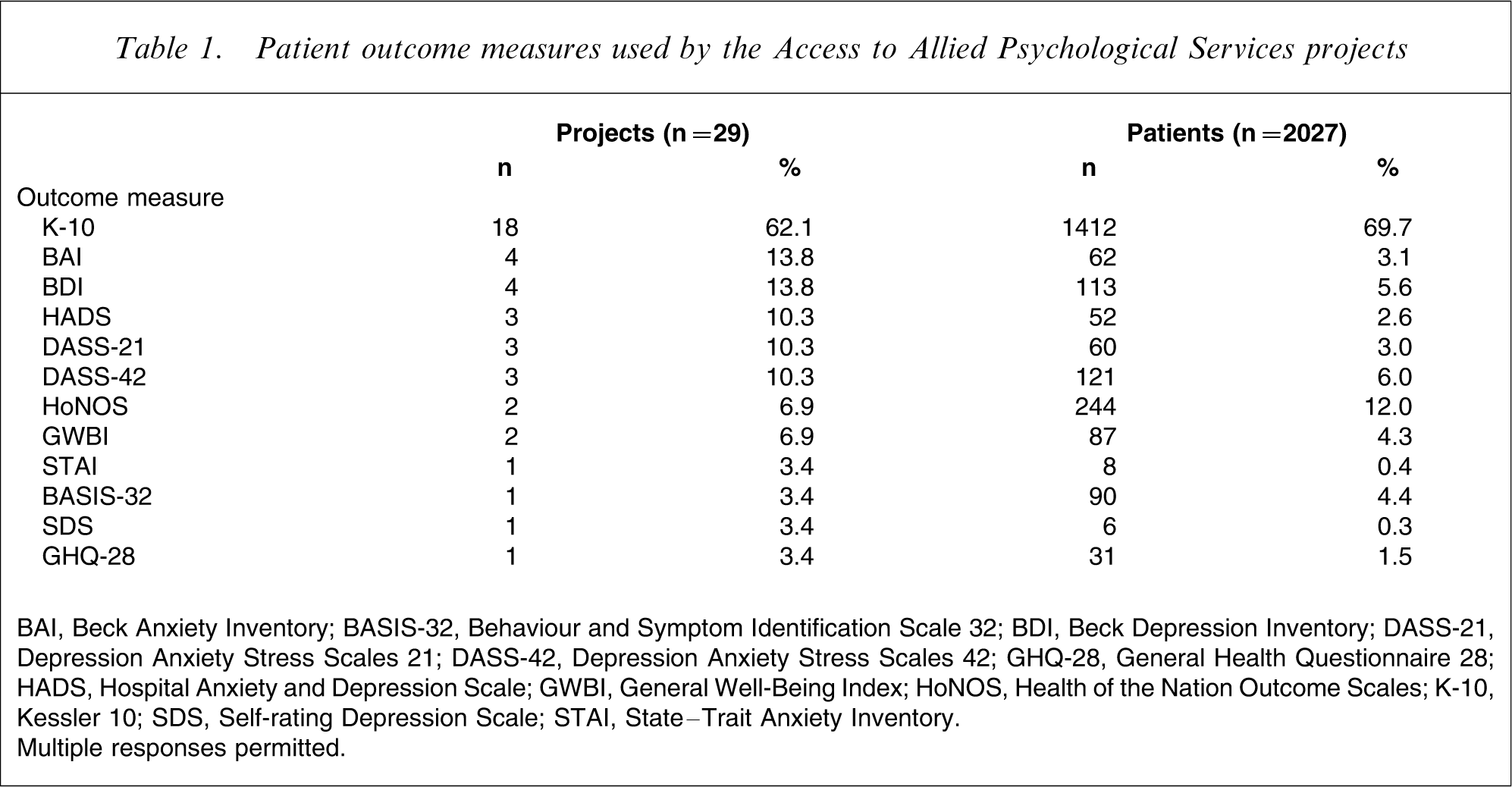

Pre- and post-treatment scores were available from the 29 projects for a total of 2027 patients. The numbers of projects using each outcome measure are shown in Table 1, as are the numbers of patients for whom each measure was used. Note that the totals exceed 29 and 2027, respectively, because some projects used more than one outcome measure for the same patient.

Patient outcome measures used by the Access to Allied Psychological Services projects

BAI, Beck Anxiety Inventory; BASIS-32, Behaviour and Symptom Identification Scale 32; BDI, Beck Depression Inventory; DASS-21, Depression Anxiety Stress Scales 21; DASS-42, Depression Anxiety Stress Scales 42; GHQ-28, General Health Questionnaire 28; HADS, Hospital Anxiety and Depression Scale; GWBI, General Well-Being Index; HoNOS, Health of the Nation Outcome Scales; K-10, Kessler 10; SDS, Self-rating Depression Scale; STAI, State–Trait Anxiety Inventory.

Multiple responses permitted.

Mean pre- and post-treatment effect sizes

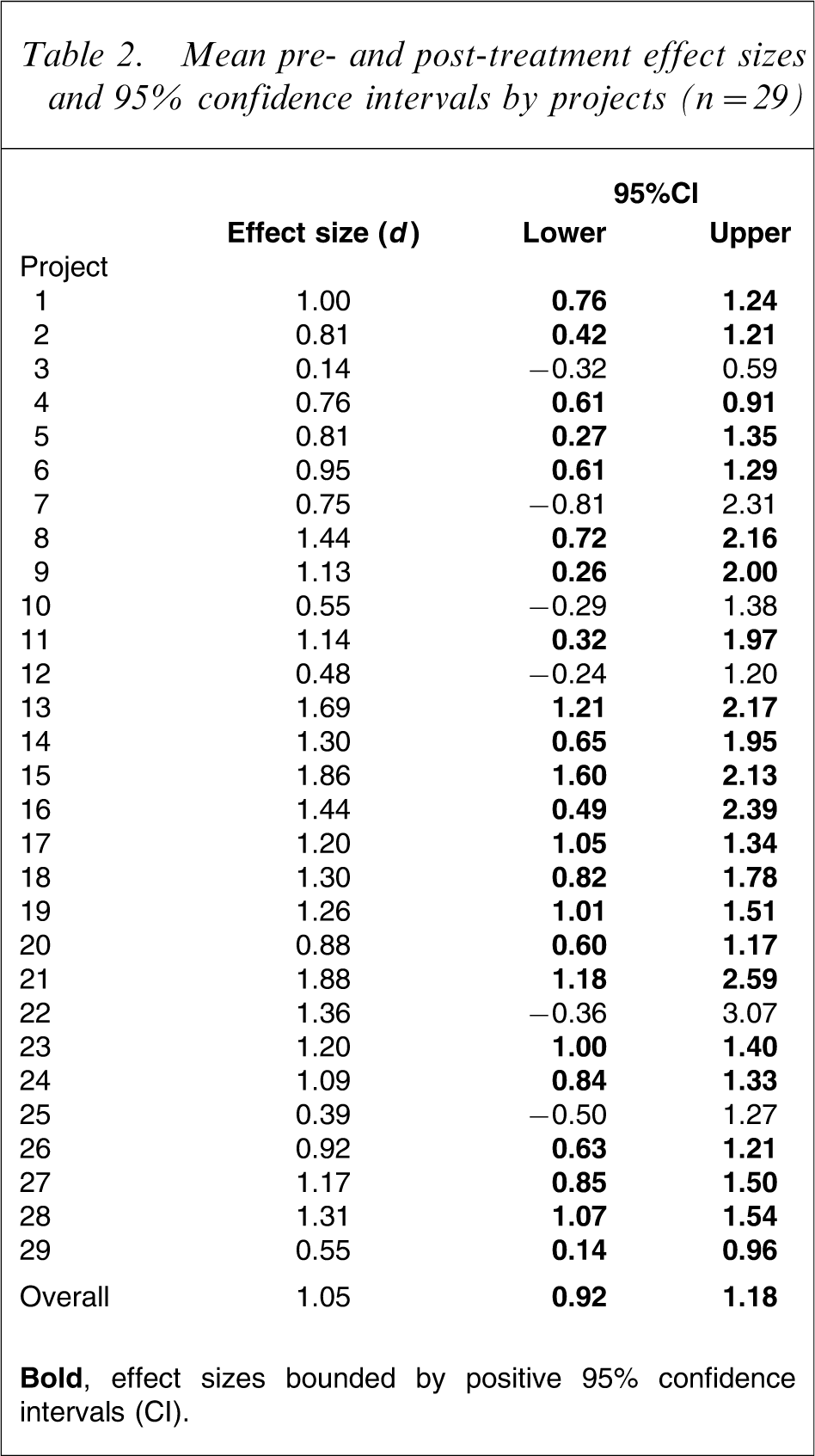

The mean pre- and post-treatment effect size, weighted for sample size, for patients across projects was 1.05 (95% confidence interval (CI) = 0.92–1.18). Based on Cohen's interpretation of effect size, this indicates a large positive effect (d>0.80) [24].

Table 2 shows the effect sizes for the 29 (de-identified) projects. The point estimates of effect size are all positive, indicating that all projects are achieving improved patient outcomes. For the majority of projects (79%, shown in bold in Table 2), the 95% CIs lie entirely above zero. Of these, 43% show large positive effects at worst (i.e. at the lower limit of the 95% CI), 35% show medium positive effects at worst, and 22% show small positive effects at worst. The remaining projects have CIs with negative lower limits, but their point estimates are still positive, and they tend to be those with small sample sizes.

Mean pre- and post-treatment effect sizes and 95% confidence intervals by project (n = 29)

Do improvements in patient outcomes vary depending on the model of service delivery?

Models of service delivery utilized by projects for which outcomes data were available

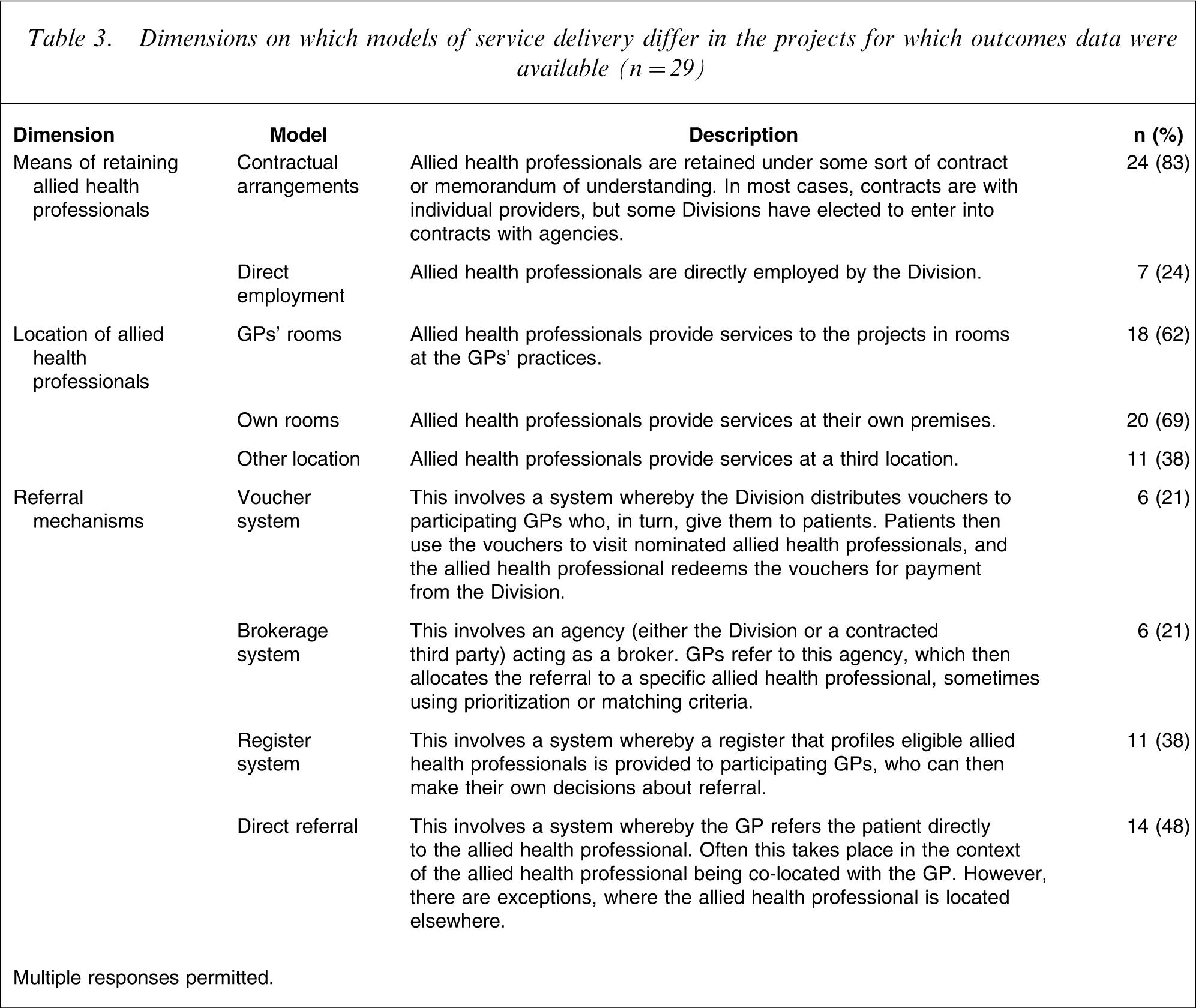

Table 3 shows the models of service delivery being adopted by the 29 projects in terms of their means of retaining allied health professionals, where their allied health professionals are located, and how referrals are made. It should be noted that within each of these dimensions, projects often use multiple models, so the totals commonly exceed 100%.

Dimensions on which models of service delivery differ in the projects for which outcomes data were available (n = 29)

Multiple responses permitted.

The data show that there is considerable variability in the models of service delivery used by the 29 projects. Each model is used by at least one-fifth of projects, although some models are more popular than others. The majority of projects retain their allied health professionals under contract (83%). Services are most often provided from allied health professionals’ own rooms (69%) or from rooms co-located in GPs’ practices (62%). The majority of projects implement a direct referral mechanism (48%) and/or a register system (38%).

Association between models of service delivery and levels of patient outcomes

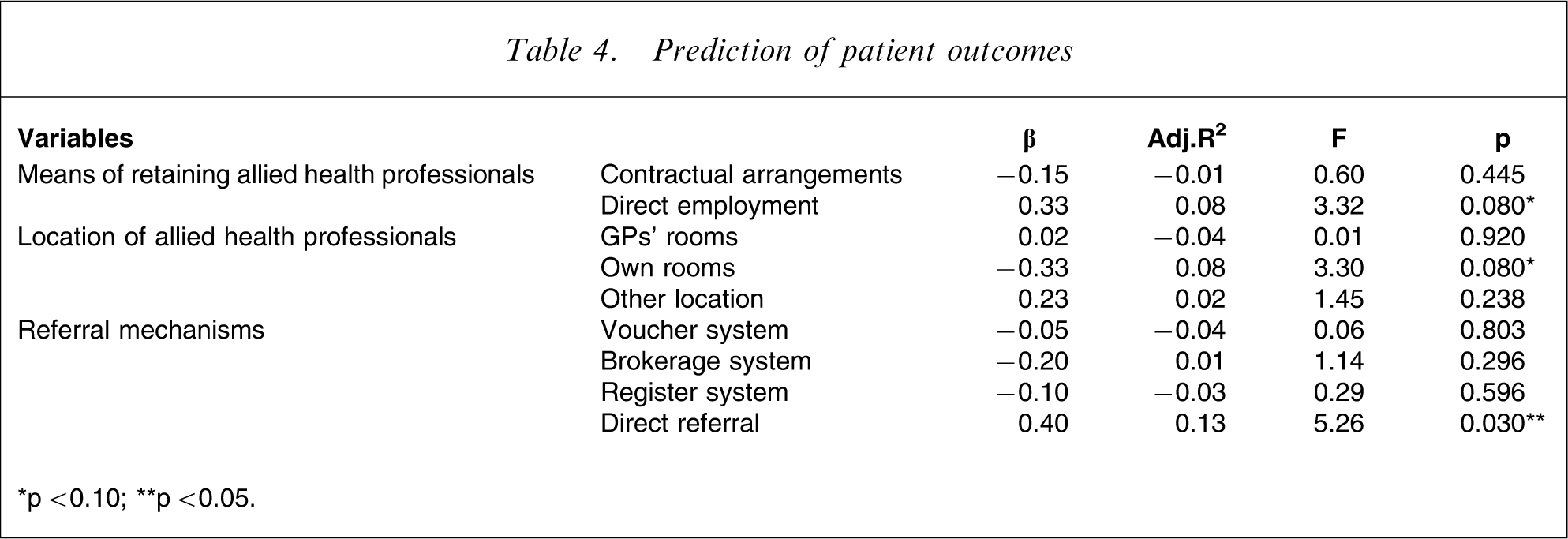

Table 4 shows the standardized regression coefficients (β) and adjusted R² for each of the predictors.

Prediction of patient outcomes

∗p < 0.10; ∗∗p < 0.05.

The results suggest that the projects do not differ markedly in terms of the patient outcomes they are achieving, despite their differences in models of service delivery. This is in line with the findings in the previous section, which suggested that there is little variability in patient outcomes – the majority of projects are achieving positive patient outcomes of substantial magnitude.

Having said this, projects that are using a direct referral model have significantly greater effect sizes, indicating that they are achieving better patient outcomes than their peers. In addition, there are non-significant trends toward direct employment of allied health professionals being predictive of better patient outcomes, and delivery of services from allied health professionals’ own rooms being predictive of weaker patient outcomes.

Discussion

Summary of findings

Patient outcomes achieved through the Access to Allied Psychological Services projects were quantified. Results indicated that the projects are achieving positive effects, mostly of large or medium magnitude. This suggests that the projects are effective in improving the mental health of patients who are receiving psychological services.

The association between different models of service delivery and patient outcomes was also examined, in order to determine whether particular models were predictive of better or worse patient outcomes. The findings suggest that the projects do not differ markedly in terms of the patient outcomes they are achieving, despite their differences in models of service delivery. Only one variable emerged as significant: projects implementing direct referral systems tend to achieve better patient outcomes. In addition, there were non-significant trends toward direct employment of allied health professionals by the Division being predictive of better patient outcomes, and delivery of services from allied health professionals’ own rooms being predictive of weaker patient outcomes.

Limitations

Some caution should be exercised in interpreting the aforementioned findings, because the analyses suffered from several limitations. First, outcomes data were available only for 29 projects (27%), raising the question of the extent to which the findings can be generalized. Having said this, exploratory analyses suggest that, in the main, these projects do not differ substantially from their counterparts for which no outcomes data were available [25]. They are largely similar in terms of their models of service delivery, their sociodemographic and clinical patient profiles and their treatment characteristics.

Second, outcomes data were available only for 2027 patients (5% of all patients on the minimum dataset at the time of downloading). This was due to the relatively small proportion of projects contributing outcomes data to the minimum dataset and the comparatively recent addition of the outcomes data fields to the minimum dataset. As outcomes data for more patients become available, it will be important to repeat the analyses reported here to confirm the current findings. It should be noted, however, that the number of patients for whom outcomes data are available will always fall short of the total number of patients who have had contact with the projects, because the majority of projects are not in a position to enter outcomes data retrospectively, and because in a minority of projects the relevant outcomes data are not made available to the Division(s) [8].

Third, the analyses were complicated by the fact that different projects are using different outcome measures. Indeed, in some cases individual projects are using multiple measures for the same patient, and in one case different measures are being used for different patients within the same project. The methodologies used here were designed to deal with this as rigorously as possible, but ideally the process of outcomes data collection would be streamlined to ensure projects are using a smaller number of valid and reliable measures. The K-10, the DASS, and the HoNOS are the most commonly used measures across projects [20].

Finally, the study design has implications for the magnitude of effect sizes. Given the naturalistic nature of the study, it is not possible to determine how much of the recorded improvement is directly attributable to the intervention and how much is due to other factors not controlled for in the study design. Patients may have improved over time because of the natural history of the disorder; that is, they might have improved whether or not they received the intervention. Alternatively, they may have entered treatment when their symptoms were at their worst, and so were more likely to improve anyway (regression to the mean). Having said this, the overall number of patients on whom data are provided is relatively large, which lends some confidence to the recorded improvements, but further research is needed to confirm this.

Interpretation of findings

Despite these limitations, the findings appear to be extremely encouraging. The fact that the Access to Allied Psychological Services projects are achieving positive patient outcomes is significant, and suggests that by increasing access to high-quality mental health care they are playing a role in improving the mental health of Australians who might otherwise not have had access to such care.

The finding that the direct referral model seems to be associated with particularly positive outcomes is worth examining in more detail, particularly in the context of the observed trends for direct employment of allied health professionals to be predictive of better patient outcomes and for delivery of services from allied health professionals’ own rooms to be predictive of weaker patient outcomes. Previous work suggests that these findings may be linked, in the sense that elements of the different models often occur in tandem [6]. Allied health professionals who receive direct referrals most commonly do so in the context of their being co-located with GPs, and this often occurs under a direct employment model. By contrast, allied health professionals who operate from their own rooms are more likely to be retained under contract, and are more likely to receive referrals via systems other than direct referral.

Given that the projects overall are using a variety of service delivery models [6] and the current findings suggest that direct referral and some of the other service delivery configurations that are commonly associated with it are predictive of better outcomes, consideration should be given to whether this approach should be singled out and encouraged. Such consideration is timely, given the fact that psychologists’ services are soon to be listed on the Medicare Benefits Schedule (MBS). The precise MBS arrangements for psychologists are yet to be announced, but it is likely that they will involve psychologists providing services from their own practices following a general referral from a GP, rather than being co-located with GPs and receiving direct referrals. There is certainly an argument for the continuation of the Access to Allied Psychological Services projects alongside these new arrangements, and the two initiatives should be complementary, rather than duplicative. The new arrangements may create incentives for the Access to Allied Psychological Services projects to utilize psychologists (and other allied health professionals) who receive referrals from the GPs with whom they are co-located, and for psychologists who operate from their own rooms to largely do so via the new MBS arrangements.

Having said this, further work is clearly needed to inform decisions of this kind. In particular, it is necessary to conduct ongoing analyses to ensure that the findings are not an artefact of methodological issues. In addition, there may be issues to do with interrater reliability, which also require exploration. Earlier work suggests that in many projects, outcome measures are administered by the GP at assessment but by the allied health professional at review, raising questions of interrater reliability [6]. Where the allied health professional is co-located with the GP, it may be more likely that he or she administers the outcome measure at both assessment and review, thereby obviating data quality issues associated with poor interrater reliability.

Future directions

As noted, further evaluation efforts are needed to determine whether the positive patient outcomes observed for the 29 projects involved in the current analysis can be generalized more broadly, and to determine whether the predictor(s) of positive outcomes remain robust as more data become available.

In addition, consideration should be given to the efficiency of different models of service delivery. The current analyses represent the first comprehensive look at the effectiveness of these models, but models that are effective may not necessarily be efficient. For this reason, future work should combine costing data with outcomes data, in order to provide a cost–outcomes description of the different models.

Conclusions

The findings of the current evaluation exercise shed some light on the effectiveness of the Access to Allied Psychological Services projects in improving the mental health of patients. Overwhelmingly, the projects are having a positive impact for patients in terms of their level of functioning, severity of symptoms and/or quality of life. Preliminary indications suggest that a service delivery model incorporating the use of a direct referral system may be associated with superior outcomes, but further work is needed to confirm this finding.


Acknowledgements

This work was funded by the Health Priorities and Suicide Prevention Branch of the Commonwealth Department of Health and Ageing. Strategic Data Pty Ltd were responsible for the development of the minimum dataset.