Abstract

This paper proposes a fuzzy-based sensor fusion algorithm for localizing an intelligent vehicle by correcting the translational error in latitude and longitude of easily available maps such as Google Earth. Even though the chosen map contains translational error, the proposed algorithm automatically corrects and compensates for this error by integrating a Global Positioning System (GPS) receiver, a magnetic compass, and a CCD camera. To integrate these sensors, all sensing data must be converted to the same reference frame. The GPS and the magnetic compass provide global data, whereas the camera provides local data. Since every sensor has some uncertainty, each sensor output is expressed as a fuzzy number with a triangular membership function, and all fuzzy numbers are operated on using alpha-cut closed-interval arithmetic. The proposed algorithm uses information from the GPS and the magnetic compass to calculate the global positions of two selected pixels in two different road segments, which represent the road center line in the road images. Knowing the global positions of the selected pixels, it is then possible to calculate the horizontal and vertical deviations by which the waypoints in the original map must be shifted. The system performs well on an unmarked road inside the Asian Institute of Technology (AIT) campus in Thailand.

Introduction

Guiding an intelligent vehicle from one location to another requires three modules: global perception, local perception, and vehicle control. The global perception system identifies the vehicle position with respect to an available global map, which is usually expressed in latitudes and longitudes. It also determines the path that the vehicle has to track. However, because real driving environments are dynamic, the global perception system alone is not enough to maneuver the vehicle to its destination; a real-time sensing system is required to perceive the vehicle's surroundings. Therefore, the intelligent vehicle also uses information from the local perception system to avoid static and dynamic obstacles that block its path and to follow traffic rules. Finally, the vehicle control system integrates information from the global and local perception systems and determines an appropriate action for the vehicle.

The global perception system involves vehicle localization and path planning. It allows the vehicle to know its position and direction with respect to the real world, as well as the series of positions it must pass through to reach the destination. Many studies address global perception. Fang et al. matched ground texture patterns against a built-in global reference texture map using Iterative Closest Point (ICP) to localize the vehicle in the global map (Fang, H. et al., 2007). Chausse et al. demonstrated the use of a GPS and a vision system to localize a vehicle (Chausse, F. et al., 2005). Many path planning algorithms focus on dynamic path regeneration when the vehicle confronts obstacles, for example real-time path planning based on multiple stereovision and monovision cues (Hummel, B. et al., 2006) and a probabilistic approach that finds an optimal and robust path after obstacles are detected (Blackmore, L. et al., 2006).

To sense the environment along the path, the vehicle has to obtain information from the local perception system. Local perception includes detecting road lanes, traffic signs, and static and dynamic obstacles using sensor devices such as cameras, lasers, Light Detection and Ranging (Lidar), sonar, etc. One example of local perception is unstructured road detection using the Hue-Saturation-Value (HSV) color space and road features (Huang, J. et al., 2007). Traffic sign detection and classification has been achieved by combining the Hough transform, a neural network, and a Kalman filter (Garcia-Garrido, M.A. et al., 2006). Obstacles have been identified using a monocular vision system (Yamaguchi, K. et al., 2006) or an integration of lidar and vision (Premebida, C. et al., 2007).

In vehicle control, the vehicle has to react properly to its surroundings using control rules. Many control algorithms exist for navigating the vehicle, such as GPS-based navigation control combined with a laser range finder for obstacle detection (Wu, B.F. et al., 2007), an adaptive neural network for controlling the longitudinal and lateral motion of the vehicle in a highway scenario (Kumarawadu, S. & Lee, T.T., 2006), and a fuzzy-based control scheme for both longitudinal and lateral movement (Chiang, H.H. et al., 2006).

Latitudes and longitudes are usually the main source of global data for the vehicle. However, most intelligent vehicle research either assumes that the available global maps are accurate or spends time building the global map by driving the vehicle to collect map data. For instance, each competitor in the DARPA Grand Challenge, one of the most challenging autonomous vehicle competitions, was provided with the Route Network Definition File (RNDF) 24 hours in advance. This RNDF contains the waypoints for the vehicle to track until it reaches the goal. Naranjo et al. created a map using a Differential Global Positioning System (DGPS) with an accuracy of 1 centimeter by driving the vehicle to collect waypoints along the test track (Naranjo, J.E. et al., 2004).

This paper focuses on using easily available maps such as Google Earth for global path planning of an intelligent vehicle. Using existing maps can reduce the time and cost of path planning; however, these maps contain horizontal and vertical translational errors. To solve this problem, a fuzzy-based sensor fusion method using a GPS, a magnetic compass, and a camera is proposed. Another advantage of this algorithm is that the vehicle can localize itself on a global map that has no special landmarks. The paper is organized as follows. Section 2 explains the architecture of the AIT intelligent vehicle used in this experiment. Section 3 describes the overall architecture, the sensor fusion method, and the concept of fuzzy arithmetic operations. Section 4 presents experimental results on unstructured roads inside the AIT campus. Section 5 concludes the work.

AIT Intelligent Vehicle Architecture

The AIT intelligent vehicle was developed on a 1991 Mitsubishi Galant platform, as shown in Fig. 1.

AIT intelligent vehicle

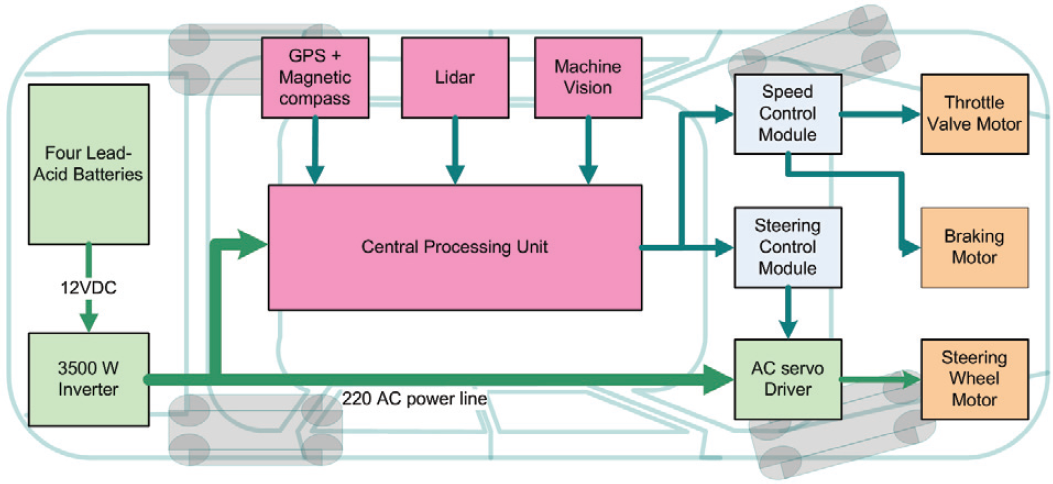

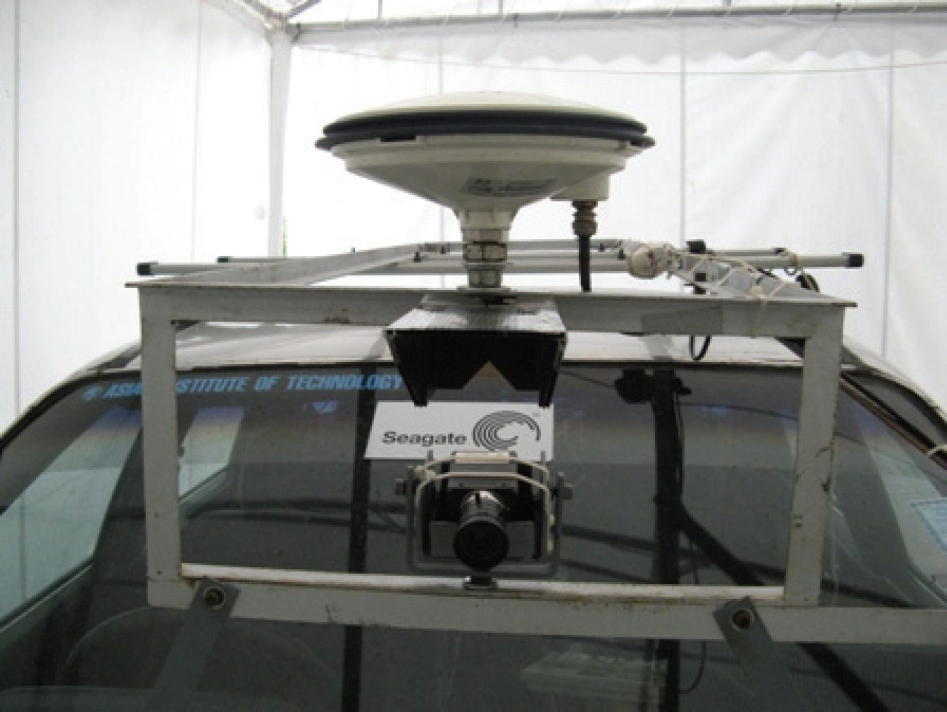

Fig. 2 shows the overall architecture of the AIT intelligent vehicle. Four lead-acid batteries supply electrical power to three motors and to a computer that serves as the central processing unit. Latitudes and longitudes are obtained from a NovAtel OEM4-RT20 GPS card. A Honeywell HMR-3000 magnetic compass with 0.1° accuracy measures the vehicle heading with respect to magnetic north. These two sensors are placed inside a white protective box, as shown in Fig. 3. The GPS antenna is mounted above the vehicle windshield, as shown in Fig. 4. Lidar and machine vision modules provide local perception information. A Basler A601fc camera is also mounted above the windshield so that the center of the road image coincides with the center of the vehicle, as shown in Fig. 4. All data are processed by the computer, which sends its outputs via RS-232 to the speed and steering control modules; these modules in turn send commands to the throttle motor, braking motor, and steering motor to drive the vehicle.

Overall architecture of the AIT intelligent vehicle

GPS card and a magnetic compass

GPS antenna and a camera

This section describes the sensor fusion algorithm, which integrates a GPS, a magnetic compass, and a camera, and the fuzzy number operations it uses. Fig. 5 shows the sensor fusion flowchart.

Flowchart of the sensor fusion algorithm

In the algorithm, two road images from two different waypoints are captured. Road center and road direction (θ

image

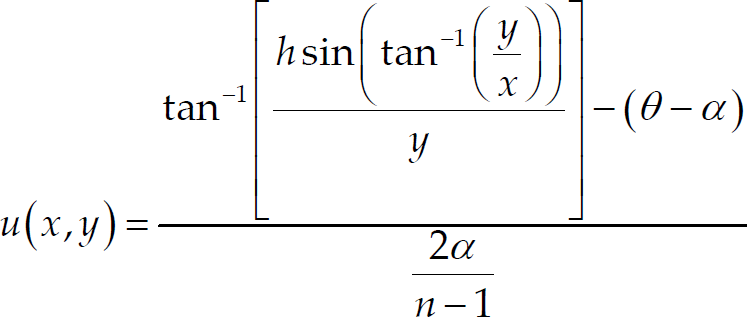

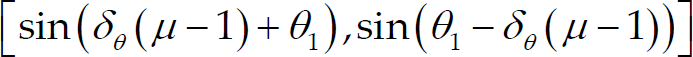

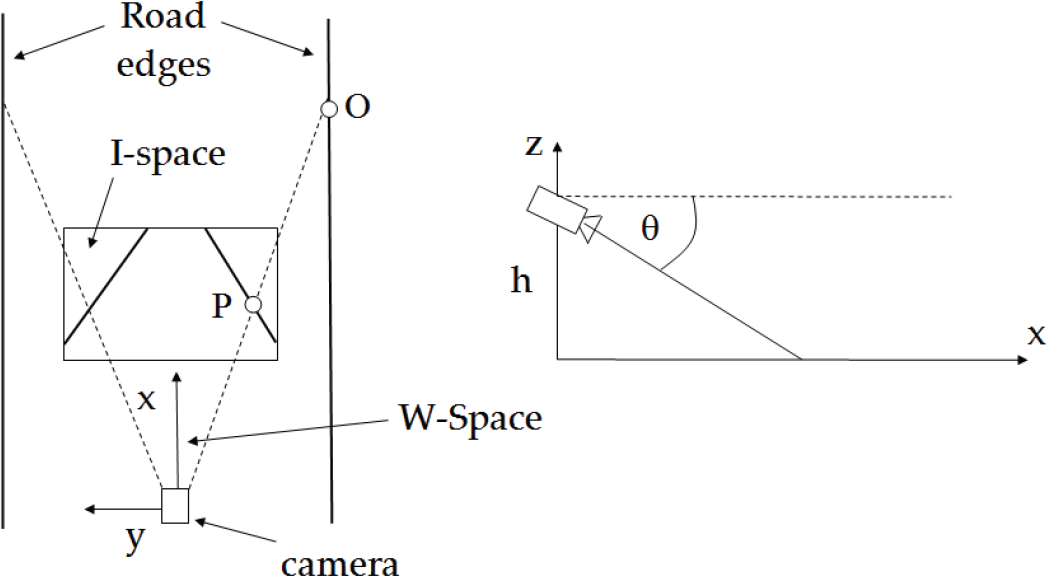

) are determined. One road image is used in determining horizontal error. The other road image is used in determining vertical error. Perspective distortion is solved by using inverse perspective mapping (IPM). Fig. 6 displays the relationship between the world coordinate (W-space) and the image coordinate (I-space). Point O in W-space corresponds to point P in I-space. Road edges, which are parallel in the world coordinate, become a vanishing point in the image coordinate. The position of the camera is defined as the center of W-space, using equation (1) and (2) (Bertozzi, M. et al, 1998).

where h is the height of the camera above the ground, θ is the angle between the optical axis and the horizontal axis, 2α is the camera aperture, and n × m is the image resolution.
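To make the role of h, θ, α, n, and m concrete, the sketch below maps an image pixel back to flat-ground coordinates in the general angular form described by Bertozzi et al. (1998). It is an illustration under stated assumptions, not the paper's equations (1) and (2): it assumes a flat road, zero camera yaw, the camera at the W-space origin, row 0 at the top of the image, and the sign conventions given in the docstring. The function name is hypothetical.

```python
import math


def ipm_pixel_to_ground(row, col, h, theta, alpha, n, m):
    """Map image pixel (row, col) to flat-ground coordinates (x, y).

    Assumed conventions (not taken from the paper): camera at the W-space
    origin with zero yaw, row 0 is the top image row, col 0 is the leftmost
    column, theta is the downward tilt of the optical axis below horizontal,
    and the aperture 2*alpha spans both image axes. Angles are in radians;
    x (to the right) and y (forward) are returned in the units of h.
    """
    # Depression angle of the ray through this row, below the horizontal.
    depression = (theta - alpha) + row * (2.0 * alpha) / (n - 1)
    # Lateral angle of the ray through this column; zero at the center column.
    lateral = -alpha + col * (2.0 * alpha) / (m - 1)
    if depression <= 0.0:
        raise ValueError("ray through this row does not reach the ground")
    ground_range = h / math.tan(depression)  # horizontal range along the ray
    return ground_range * math.sin(lateral), ground_range * math.cos(lateral)
```

With h = 1.35 m (the camera height used in the experiment), the center pixel maps to a point straight ahead at range h / tan θ, and lower rows map to closer ground points; θ and α would have to come from the actual camera calibration.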

Inverse perspective mapping

To identify the road segment in an image, hue and saturation thresholding are used. Hue thresholding mainly segments road pixels that are not in shadow, whereas saturation thresholding plays an important role in segmenting road areas under shadow. The two thresholds complement each other well in identifying the road segment. For hue thresholding, a reference hue value is computed by averaging the hue values inside a 40 × 40 window at the bottom center of the image before inverse perspective mapping. Given the camera installation, most pixels inside this window are road pixels.

Pixels whose hue lies within ±8 of the reference hue are classified as road pixels (white); all other pixels are set to black. Road pixels under shadow have very low saturation, so pixels with a saturation value below 20 are also classified as road pixels.
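The two thresholding rules can be combined into a single mask, as in this NumPy sketch. It assumes the hue and saturation channels are already available as 2-D arrays on the value scale the thresholds (±8, 20) were chosen for, and it ignores hue wrap-around at the ends of the hue scale; the function name is hypothetical.

```python
import numpy as np


def threshold_road(hue, sat, hue_tol=8, sat_max=20, win=40):
    """Boolean road mask from hue and saturation channels (True = road/white).

    Assumes hue and sat are 2-D arrays on the value scale the thresholds were
    tuned for; hue wrap-around at the ends of the scale is ignored here.
    """
    rows, cols = hue.shape
    c0 = cols // 2 - win // 2
    # Reference hue: mean of a win x win window at the bottom center of the image.
    ref_hue = hue[rows - win:rows, c0:c0 + win].astype(float).mean()
    by_hue = np.abs(hue.astype(float) - ref_hue) <= hue_tol  # unshadowed road
    by_sat = sat < sat_max  # shadowed road pixels have low saturation
    return by_hue | by_sat
```

The OR of the two masks reflects the complementary roles described above: hue catches sunlit road, and low saturation catches road in shadow.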

Because the road surface is not smooth, noise degrades the road image. A 5 × 5 median filter is used together with a morphological closing operation to remove this noise. The median filter cannot completely eliminate salt-and-pepper noise, so the closing operation is applied to fill the small black blobs that remain inside the road area.
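A minimal NumPy-only sketch of this clean-up step follows: a 5 × 5 median filter, then a binary closing (dilation followed by erosion). The 3 × 3 square structuring element for the closing is an assumption, since the text does not give its size, and the helper names are hypothetical. In practice a library such as OpenCV or SciPy would usually provide these filters.

```python
import numpy as np
from numpy.lib.stride_tricks import sliding_window_view


def _window_reduce(img, k, reducer, pad_mode="edge"):
    """Apply reducer over every k x k neighborhood (output keeps img's shape)."""
    padded = np.pad(img, k // 2, mode=pad_mode)
    windows = sliding_window_view(padded, (k, k))
    return reducer(windows, axis=(-2, -1))


def clean_mask(mask, median_k=5, close_k=3):
    """5x5 median filter followed by binary closing (dilate, then erode).

    close_k, the size of the square structuring element, is an assumed value.
    """
    img = mask.astype(np.uint8)
    img = _window_reduce(img, median_k, np.median).astype(np.uint8)
    dilated = _window_reduce(img, close_k, np.max)  # dilation fills small holes
    closed = _window_reduce(dilated, close_k, np.min)  # erosion restores edges
    return closed.astype(bool)
```

An isolated black pixel inside the road or an isolated white pixel outside it is removed by the median filter alone; the closing then fills small dark gaps that survive the median.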

The Canny edge detector is applied to identify the road edges. However, some noise remains in the image after edge detection. To suppress it, features are thresholded by length: features shorter than 200 pixels are eliminated. Next, two lines are drawn at the 150th and 180th rows, resulting in four intersection points, as shown in Fig. 7. In the first road image, two intersecting points on each row as center pixels

Road edges after Canny Edge Detector

The pixel (

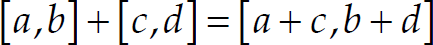

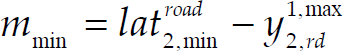

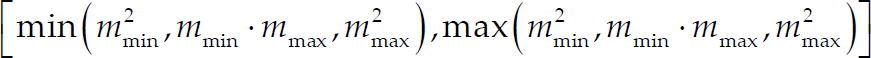

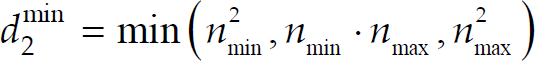

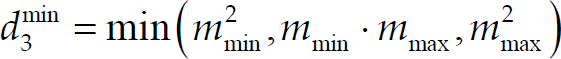
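Once the left and right edge columns on rows 150 and 180 are known, the center pixel on each row and a road direction θ_image can be computed as sketched below. The direction formula (angle of the line through the two center pixels, measured from the image's vertical axis) and its sign convention are assumptions, as is the premise that row and column pixels in the IPM image have equal ground scale; the function name is hypothetical.

```python
import math


def road_center_and_direction(left150, right150, left180, right180,
                              row_top=150, row_bottom=180):
    """Center columns on two rows and the road direction in degrees.

    Assumed sign convention: the angle is positive when the road center
    moves to the right (larger column) as the row index decreases, i.e.
    farther ahead of the vehicle.
    """
    c_top = (left150 + right150) / 2.0      # center pixel on the 150th row
    c_bottom = (left180 + right180) / 2.0   # center pixel on the 180th row
    theta = math.degrees(math.atan2(c_top - c_bottom, row_bottom - row_top))
    return c_top, c_bottom, theta
```

With edges at columns 100 and 220 on both rows, both centers sit at column 160 (the image center after resizing to 320 columns) and the direction is 0°.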

Since the information from every sensor contains some uncertainty, the sensor readings can be treated as fuzzy numbers. Each alpha-cut of a fuzzy number is a closed interval of real numbers, so arithmetic operations on fuzzy numbers can be carried out by applying the corresponding operations to closed intervals, as follows.

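$$[a,b] + [c,d] = [a + c,\; b + d]$$

$$[a,b] - [c,d] = [a - d,\; b - c]$$

$$[a,b] \cdot [c,d] = [\min(ac, ad, bc, bd),\; \max(ac, ad, bc, bd)]$$

$$[a,b] \,/\, [c,d] = [a,b] \cdot \left[\tfrac{1}{d},\; \tfrac{1}{c}\right], \quad \text{when } 0 \notin [c,d]$$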
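The four closed-interval rules translate directly into a small Python class; applying it at each alpha level gives the alpha-cut of the result. This is an illustrative sketch, and the class name `Interval` is hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Interval:
    """Closed interval [lo, hi], e.g. one alpha-cut of a fuzzy number."""
    lo: float
    hi: float

    def __add__(self, o):
        return Interval(self.lo + o.lo, self.hi + o.hi)

    def __sub__(self, o):
        # [a, b] - [c, d] = [a - d, b - c]
        return Interval(self.lo - o.hi, self.hi - o.lo)

    def __mul__(self, o):
        p = (self.lo * o.lo, self.lo * o.hi, self.hi * o.lo, self.hi * o.hi)
        return Interval(min(p), max(p))

    def __truediv__(self, o):
        # Division is only defined when the divisor interval excludes 0.
        if o.lo <= 0.0 <= o.hi:
            raise ZeroDivisionError("divisor interval contains 0")
        return self * Interval(1.0 / o.hi, 1.0 / o.lo)
```

For example, `Interval(1, 2) - Interval(3, 4)` gives `Interval(-3, -1)`: subtraction pairs each lower end with the other interval's upper end, which is why uncertainty widens rather than cancels.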

In the algorithm, every fuzzy number is modeled by a triangular membership function centered at the sensor reading, with a base width of twice the sensor tolerance. Fig. 8 shows the membership function of the vehicle longitude in the world coordinate system.
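For a symmetric triangle with peak at the reading x and half-base equal to the tolerance δ, each alpha-cut is [x − (1 − α)δ, x + (1 − α)δ]: the full base at α = 0 and the single reading at α = 1. A minimal sketch with a hypothetical function name:

```python
def triangular_alpha_cut(reading, tolerance, alpha):
    """Alpha-cut [lo, hi] of a symmetric triangular fuzzy number.

    The triangle peaks (membership 1) at the sensor reading and reaches
    membership 0 at reading +/- tolerance, i.e. a base width of 2 * tolerance.
    """
    if not 0.0 <= alpha <= 1.0:
        raise ValueError("alpha must lie in [0, 1]")
    half_width = (1.0 - alpha) * tolerance
    return reading - half_width, reading + half_width
```

The same function applies to any of the sensor readings in this section once the reading and its tolerance are known.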

Membership function of vehicle longitude in the world coordinate

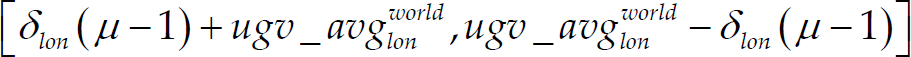

The alpha-cut of the vehicle longitude can be expressed by

Fig. 9 shows membership function of the vehicle latitude in the world coordinate.

Membership function of vehicle latitude in the world coordinate

The alpha-cut of the vehicle latitude can be expressed by

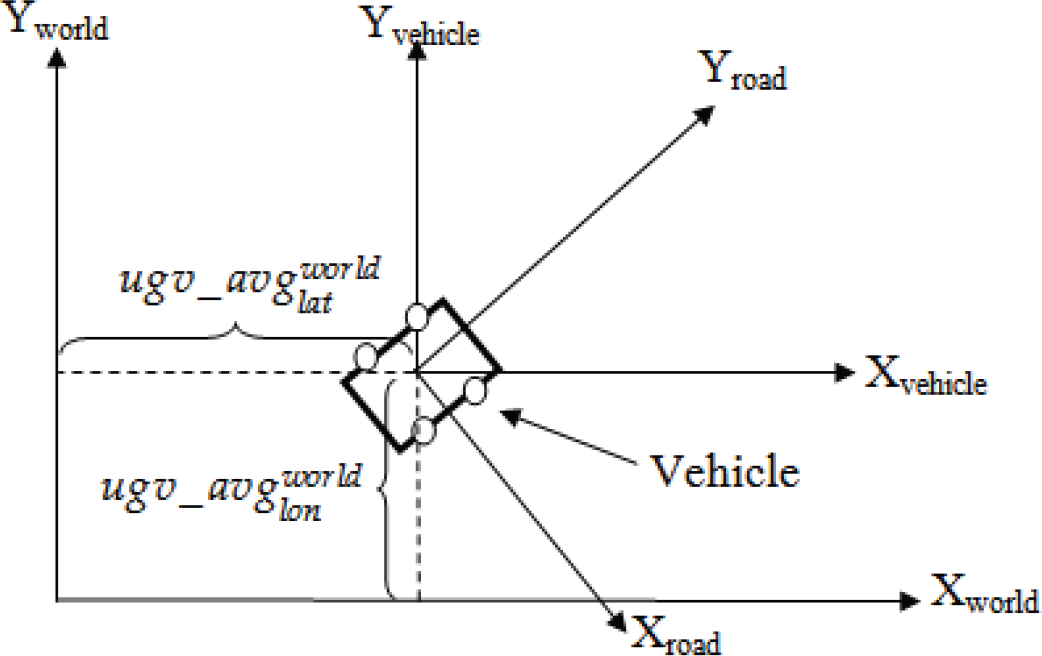

Since the GPS provides information with respect to the world coordinate system but the camera provides information with respect to the road coordinate system, the two kinds of information must be converted to the same reference before integration. The world coordinate system is first translated to the vehicle coordinate system so that the current vehicle position lies at the origin of the vehicle coordinate system. The vehicle coordinate system is then rotated using a rotational matrix to obtain the road coordinate system. Fig. 10 shows these coordinate systems. The rotational matrix is characterized solely by the rotational angle (θ_rotation), which is determined from the vehicle heading read from the magnetic compass and the road direction determined from a road image, as shown in Fig. 11.

World, vehicle, and road coordinates

Vehicle heading and road direction

The vehicle heading is summed with the road direction θ_image according to equation (10) to obtain the rotational angle.

To keep θ_rotation within the four quadrants, that is, within 0° to 360°, equation (11) is applied.

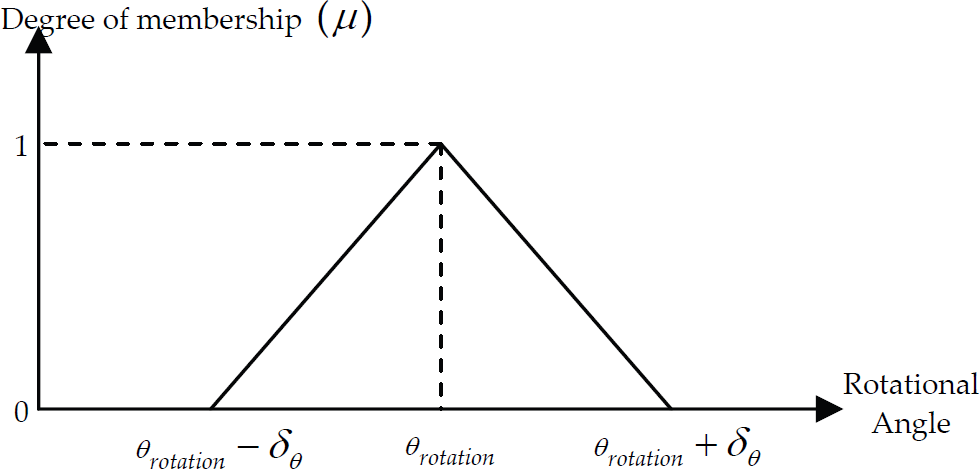

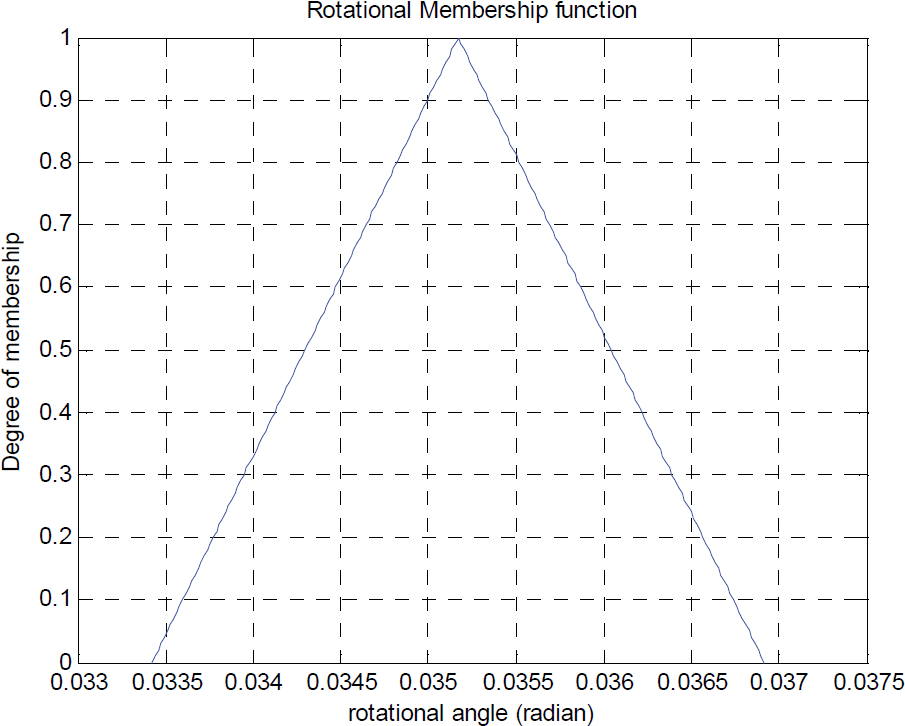
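Equations (10) and (11) can be illustrated with the sketch below: the crisp angle is the sum of heading and road direction wrapped into [0°, 360°), and an alpha-cut of the angle is obtained by interval addition followed by one common 360° shift. Choosing that shift from the interval midpoint, so that an interval straddling 0° is not split in two, is an assumption rather than the paper's stated rule; function names are hypothetical.

```python
import math


def rotational_angle(heading_deg, theta_image_deg):
    """Heading plus road direction, wrapped into [0, 360) degrees."""
    return (heading_deg + theta_image_deg) % 360.0


def rotational_angle_cut(heading_cut, theta_cut):
    """Alpha-cut of the rotational angle from the alpha-cuts of its terms.

    Interval addition, then a single 360-degree shift chosen from the
    interval midpoint so that both endpoints are shifted together.
    """
    lo = heading_cut[0] + theta_cut[0]
    hi = heading_cut[1] + theta_cut[1]
    shift = 360.0 * math.floor(((lo + hi) / 2.0) / 360.0)
    return lo - shift, hi - shift
```

With the experiment's readings, `rotational_angle(358.2, 3.814)` returns approximately 2.014, matching the rotational angle reported in the results.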

Fig. 12 shows membership function of the rotational angle. The alpha-cut of the rotational angle can be expressed by

Membership function of rotational angle

After defining all fuzzy numbers, the vehicle longitude and latitude in the vehicle coordinate are then determined. This can be done by translating the world coordinate horizontally by

Let's denote

After the rotational angle and the latitude and longitude of the vehicle's current position are obtained, the vehicle coordinate system is converted to the road coordinate system by the vehicle-to-road rotational matrix in equation (19).

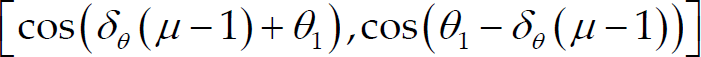

Since the rotational angle enters the rotational matrix through trigonometric functions, the extension principle, which extends a function of crisp variables to fuzzy arguments, is applied. The alpha-cuts of cos θ_rotation and sin θ_rotation can be expressed by

Let's denote

The next step is to find the current position of the vehicle in the road coordinate system. According to equation (19), to obtain the alpha-cut of

Let's denote

By using the property of the closed interval subtraction, the alpha-cut of

Similarly, the alpha-cut of

Let's denote

Then, by using the property of the closed interval addition, the alpha-cut of

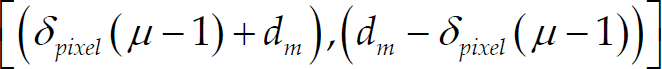

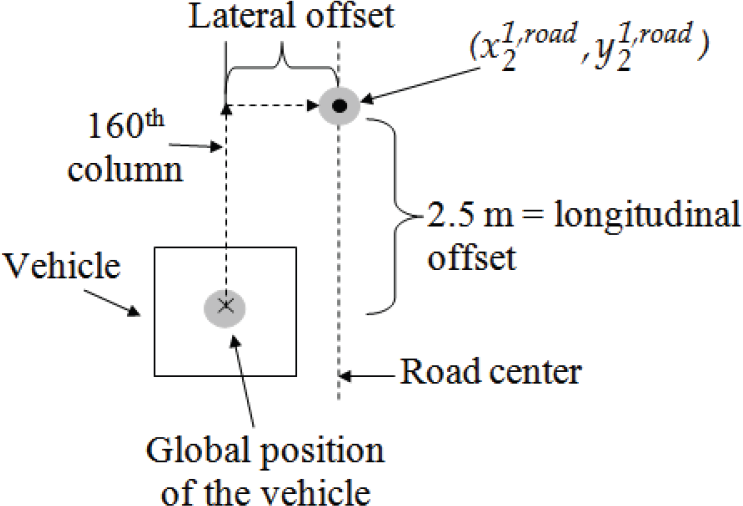
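The translation into vehicle coordinates and the rotation into road coordinates can be sketched with interval arithmetic on alpha-cuts stored as (lo, hi) tuples. The matrix convention x_r = x cos θ − y sin θ, y_r = x sin θ + y cos θ is an assumption chosen to match the text's use of interval subtraction for one component and addition for the other; equation (19) may use a different sign arrangement. All helper names are hypothetical.

```python
def i_add(a, b):
    return a[0] + b[0], a[1] + b[1]


def i_sub(a, b):
    return a[0] - b[1], a[1] - b[0]


def i_mul(a, b):
    p = (a[0] * b[0], a[0] * b[1], a[1] * b[0], a[1] * b[1])
    return min(p), max(p)


def translate_cut(point_cut, origin_cut):
    """World-to-vehicle translation: subtract the vehicle position's cut."""
    return i_sub(point_cut, origin_cut)


def rotate_cut(x_cut, y_cut, cos_cut, sin_cut):
    """Alpha-cuts of road-frame (x_r, y_r) from vehicle-frame alpha-cuts.

    Assumed matrix: x_r = x cos(t) - y sin(t), y_r = x sin(t) + y cos(t).
    """
    x_r = i_sub(i_mul(x_cut, cos_cut), i_mul(y_cut, sin_cut))
    y_r = i_add(i_mul(x_cut, sin_cut), i_mul(y_cut, cos_cut))
    return x_r, y_r
```

Because cos θ and sin θ depend on the same angle, treating their alpha-cuts as independent intervals can make the result wider than the true range (the dependency problem of interval arithmetic); with the small angular uncertainty reported here the extra width is small.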

From Fig. 13, the global position of the selected pixel

Lateral and longitudinal offsets

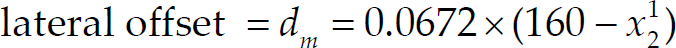

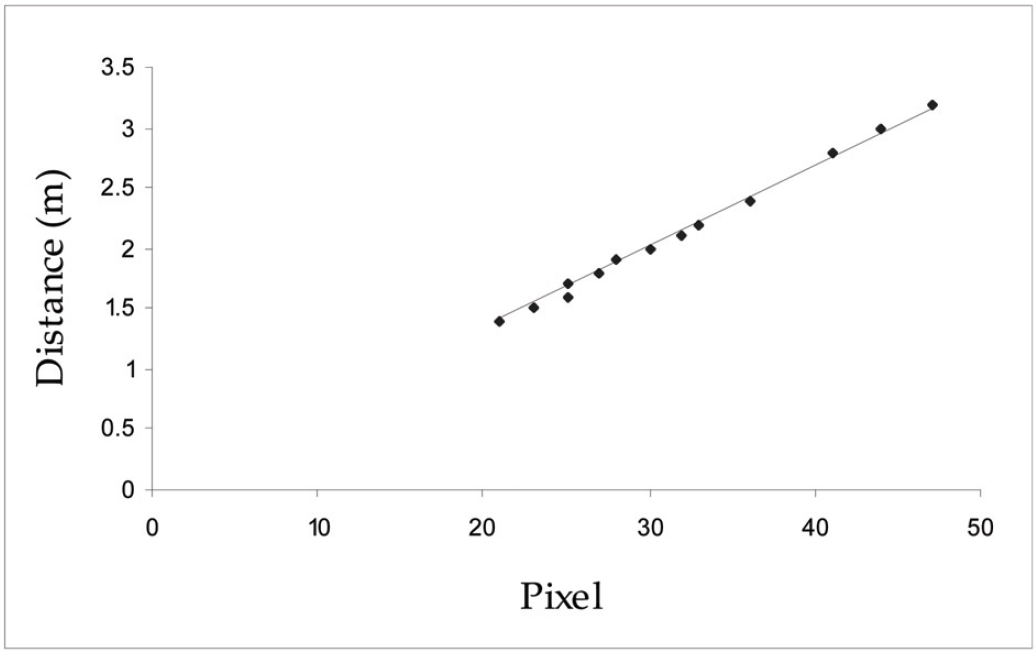

A calibration experiment is conducted to obtain the relationship between distance in pixels and distance in meters in the road image at 2.5 m in front of the vehicle. The camera on the vehicle captures images containing objects of known length in meters, and these lengths are then measured in pixels. The results are plotted in Fig. 14, and a straight line is fitted to them. The maximum error of this fit is denoted δ_pixel. The relationship between distance in meters and distance in pixels is expressed in equation (40).

Relationship between distance in pixel and distance in meter

As a result, the lateral offset can be calculated by the following equation

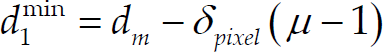
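The calibration and the lateral-offset step can be sketched as a least-squares line plus a signed pixel-to-meter conversion relative to the image center column 160. Fitting meters as a function of pixels, and reporting the maximum residual in meters as a stand-in for δ_pixel, are assumptions, since equation (40) and the error definition are not shown; function names are hypothetical.

```python
import math

import numpy as np


def fit_pixel_to_meter(pixels, meters):
    """Least-squares line meters = slope * pixels + intercept.

    Also returns the maximum absolute residual (in meters), used here as an
    assumed stand-in for the calibration error bound.
    """
    slope, intercept = np.polyfit(pixels, meters, 1)
    predicted = slope * np.asarray(pixels, dtype=float) + intercept
    residuals = np.asarray(meters, dtype=float) - predicted
    return float(slope), float(intercept), float(np.max(np.abs(residuals)))


def lateral_offset(center_col, slope, intercept, image_center_col=160):
    """Signed lateral offset (m) of a road-center pixel from the image center.

    The fitted line converts the pixel distance |center_col - image_center_col|
    to meters; the sign records which side of the center the pixel lies on.
    """
    dpix = center_col - image_center_col
    if dpix == 0:
        return 0.0
    return math.copysign(slope * abs(dpix) + intercept, dpix)
```

The conversion is only valid on the image row that corresponds to the calibrated distance (2.5 m ahead), because the pixel-to-meter scale changes with range.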

Fig. 15 shows membership function of the lateral offset. The alpha-cut of the lateral offset can be expressed by

Membership function of lateral offset

Let's denote

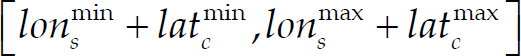

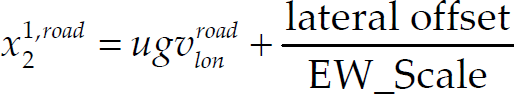

To obtain the global position of the selected pixel

where EW_Scale = 106080 m/degree and NS_Scale = 109369.2 m/degree. These two parameters are used in unit conversion of latitude and longitude from degree to meter.
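Using the two scale factors above, the global position of a point seen ahead of the vehicle can be sketched as a rotation of its forward and lateral offsets into east/north components, followed by a meters-to-degrees conversion. The heading convention (degrees clockwise from North) and the forward/right axes are assumptions, and the function name is hypothetical.

```python
import math

EW_SCALE = 106080.0   # m per degree of longitude (value given in the text)
NS_SCALE = 109369.2   # m per degree of latitude (value given in the text)


def pixel_global_position(lat_v, lon_v, heading_deg, forward_m, right_m):
    """Latitude/longitude of a point ahead of and beside the vehicle.

    Assumes heading_deg is measured clockwise from North, forward_m is along
    the heading, and right_m is positive to the vehicle's right.
    """
    h = math.radians(heading_deg)
    east = forward_m * math.sin(h) + right_m * math.cos(h)
    north = forward_m * math.cos(h) - right_m * math.sin(h)
    return lat_v + north / NS_SCALE, lon_v + east / EW_SCALE
```

For example, a pixel 2.5 m straight ahead of a north-facing vehicle changes only the latitude, by 2.5 / 109369.2 degrees. Applied to alpha-cut endpoints, the same conversion carries the fuzzy bounds into degrees.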

The alpha-cuts of both

Let's denote

Fig. 16 shows x_offset and y_offset in the road coordinate system. Consider the road coordinate system. When the line connecting the two waypoints of the first image points toward North, x_offset is calculated by

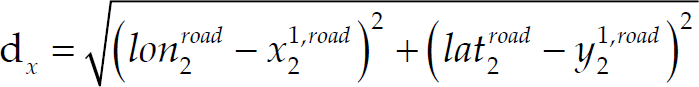

where d_x is the distance from the vehicle to the next waypoint and θ1 is the angle from the vehicle to the next waypoint with respect to North. d_x is computed from

x offset and y offset

The alpha-cuts of

Let's denote

The alpha-cuts of

Let's denote

The alpha-cut of d_x becomes

Let's denote

The alpha-cut of x_offset can be expressed by

y_offset is calculated by the following equation.

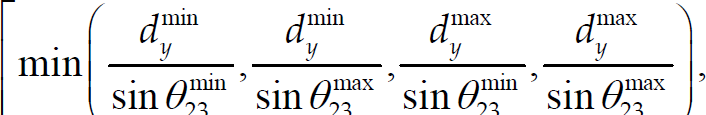

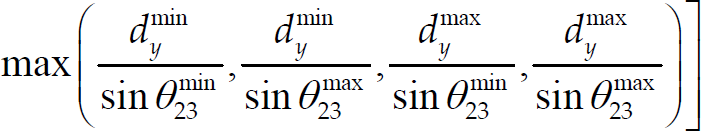

where d_y is the distance from the vehicle to the next waypoint in the second image, θ23 is the direction of the line connecting the two waypoints with respect to North, and θ2 is the heading from the vehicle to the next waypoint. The alpha-cut of

Let's denote

Hence, the alpha-cut of y_offset becomes
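One plausible reading of the x_offset and y_offset geometry, sketched here as a hypothetical reconstruction rather than the paper's exact equations: compute the flat-earth distance and bearing from the selected point to the next waypoint, then split that displacement into components across and along a reference line direction. With the line pointing North (direction 0°) the across-line component reduces to d_x sin θ1; using θ23 as the line direction and θ2 as the bearing gives an along-line component. Function names and this decomposition are assumptions.

```python
import math

EW_SCALE = 106080.0   # m per degree of longitude (value given in the text)
NS_SCALE = 109369.2   # m per degree of latitude (value given in the text)


def distance_and_bearing(lat1, lon1, lat2, lon2):
    """Flat-earth distance (m) and bearing (deg clockwise from North), 1 -> 2."""
    east = (lon2 - lon1) * EW_SCALE
    north = (lat2 - lat1) * NS_SCALE
    return math.hypot(east, north), math.degrees(math.atan2(east, north))


def across_along(d, bearing_deg, line_dir_deg):
    """Split a displacement into across-line and along-line components."""
    rel = math.radians(bearing_deg - line_dir_deg)
    return d * math.sin(rel), d * math.cos(rel)
```

The flat-earth approximation is reasonable over the few tens of meters between waypoints on the test course.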

Result on map error correction

The experiment is conducted on an unmarked road inside the AIT campus. An image of the course track acquired from Google Earth is shown in Fig. 17. The white line drawn on the Google Earth map shows the path that the vehicle has to follow. The experiment uses 12 waypoints, listed in Table 1. If these waypoints are given directly to the vehicle, the vehicle runs off the road because of the position error of the Google Earth map.

Course track

Latitude and longitude of original map

The camera is mounted at the center of the vehicle on the front windshield, 1.35 m above the ground. Each image is resized from 480 × 640 to 240 × 320 to reduce processing time, so the 160th column represents the center of both the image and the vehicle. The images are converted from RGB to grayscale. The result of the inverse perspective mapping is shown in Table 2.

Comparison of images before and after IPM

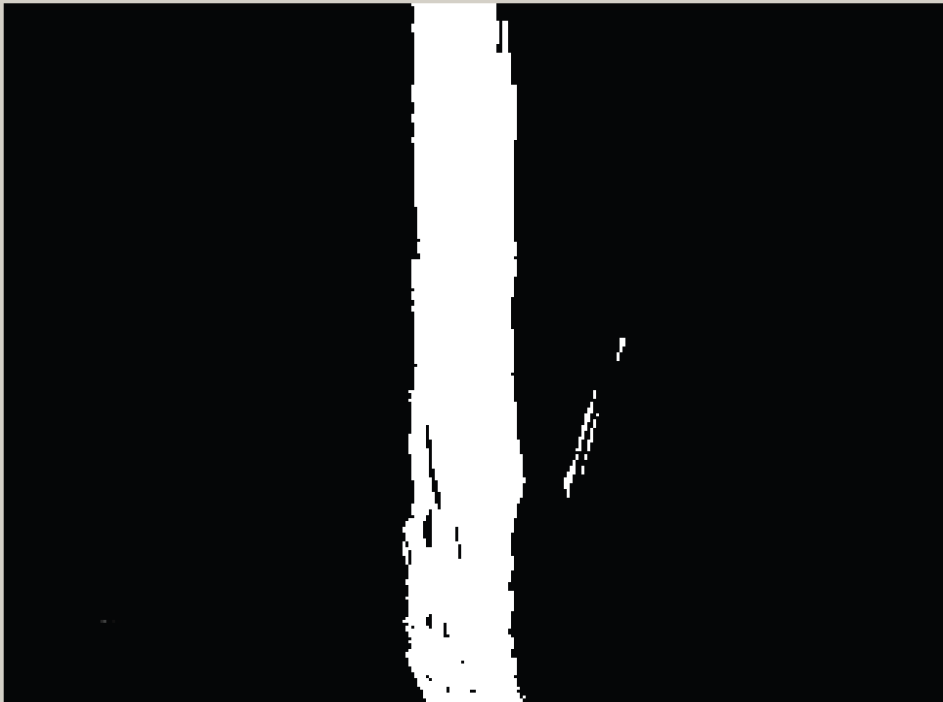

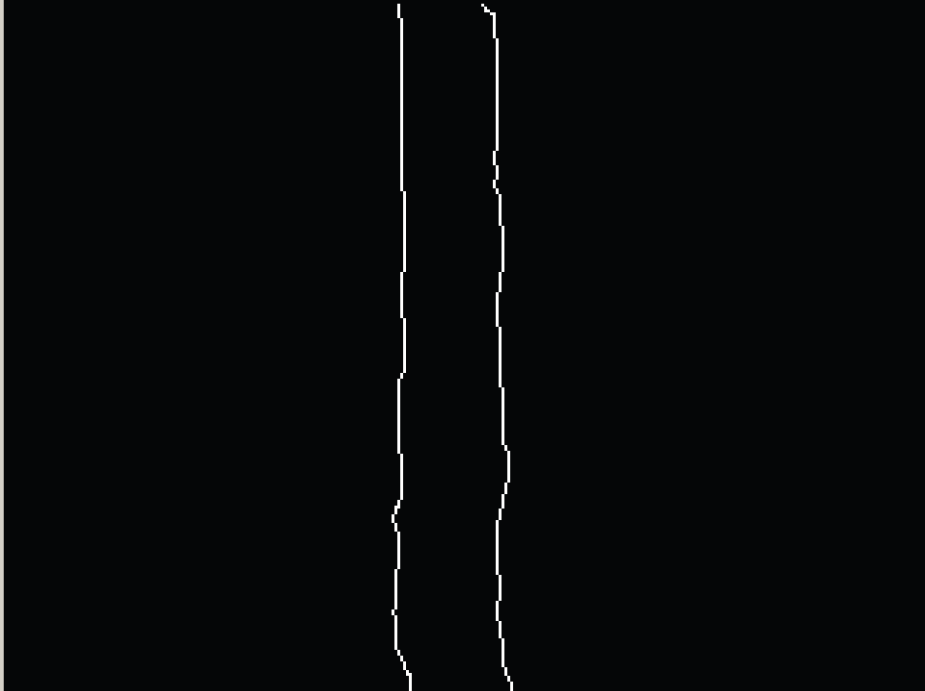

After hue and saturation thresholding, the image consists of black and white pixels, as shown in Fig. 18. After the Canny edge detector and contour-length thresholding, the small white noise blobs on the right-hand side of Fig. 18 are eliminated, resulting in the image shown in Fig. 19.

Result of hue and saturation thresholding

Road edges result

Table 3 shows the locations of the left and right edges on the 150th and 180th rows, the center position on both rows, and the road direction.

Edge locations, center positions, and road direction

Fig. 20 shows 200 latitude and longitude readings from the GPS.

Latitude and longitude for vehicle current position estimation

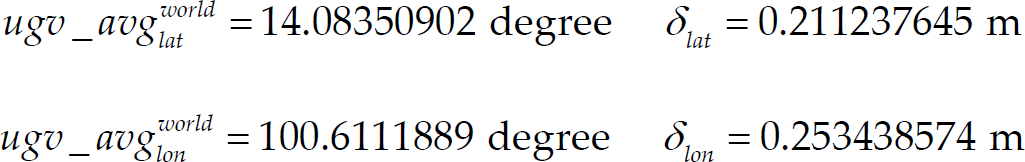

The results from the experiment show that the first global vehicle position is

And the second global vehicle position is

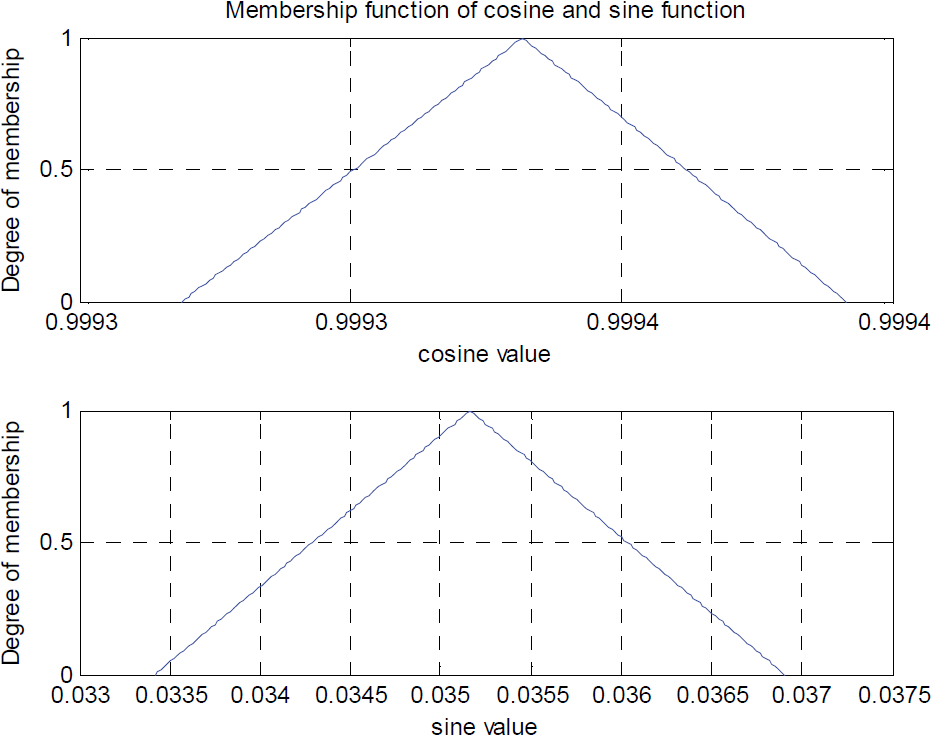

Based on these data, membership functions of longitude and latitude of the first and the second vehicle position in the world coordinate are expressed in Fig. 21 and Fig. 22 respectively.

Membership functions of longitude and latitude of the first vehicle position in the world coordinate

Membership functions of longitude and latitude of the second vehicle position in the world coordinate

The initial heading read from the magnetic compass is 358.2°, and θ_image is 3.814°. Thus, the rotational angle is (358.2° + 3.814°) − 360° = 2.014°. The resulting membership function of the rotational angle is shown in Fig. 23.

Membership function of rotational angle

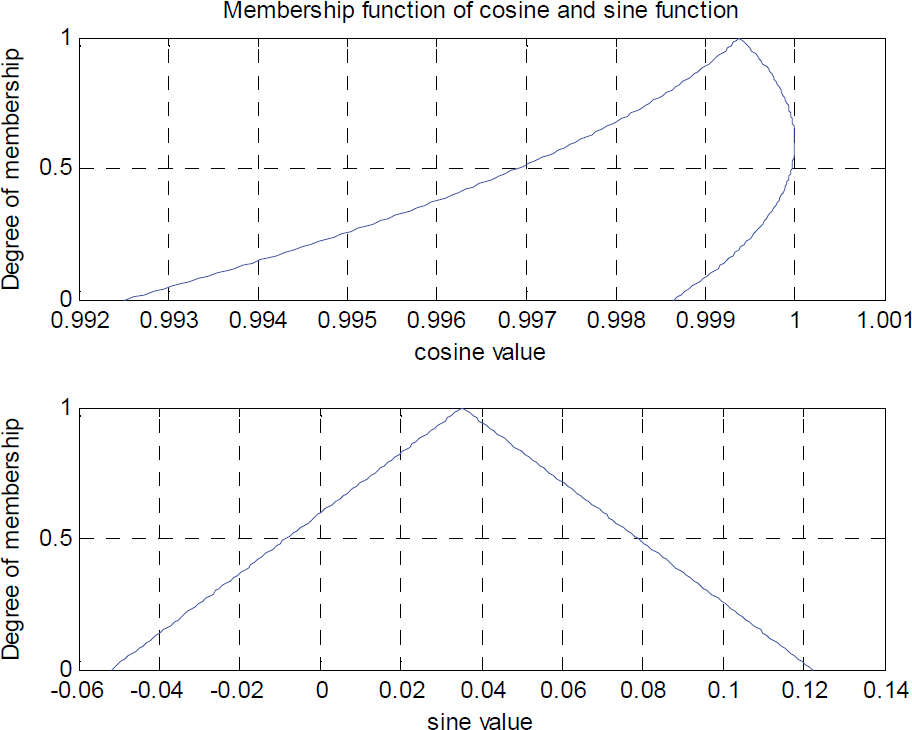

Using the extension principle, cos θ_rotation and sin θ_rotation can also be expressed as fuzzy numbers. The resulting cosine and sine membership functions are shown in Fig. 24.

Membership functions of cosine and sine functions

In fact, the membership functions of cos θ_rotation and sin θ_rotation are not exactly triangular. They appear nearly triangular because the angular uncertainty is very small, around 0.1°, so the cosine and sine are evaluated only over a narrow interval of the rotational angle. If the uncertainty is larger, the membership functions deviate from a triangular shape. Fig. 25 illustrates the membership functions of cos θ_rotation and sin θ_rotation when the rotational angle is 2.014° with an uncertainty of 5°.
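By the extension principle, for a continuous function such as cosine the alpha-cut of the image is the image of the alpha-cut interval. For cosine and sine that image can be computed exactly: take the values at the interval endpoints, and replace the maximum or minimum with ±1 whenever the interval contains a crest or trough. The sketch below does this in radians; it is an illustration, not the paper's code, and the function names are hypothetical.

```python
import math


def _contains_point(lo, hi, offset, period=2.0 * math.pi):
    """True if some angle offset + k * period lies in [lo, hi]."""
    k = math.ceil((lo - offset) / period)
    return offset + k * period <= hi


def cos_cut(lo, hi):
    """Exact range of cos over the angle interval [lo, hi] (radians)."""
    ends = (math.cos(lo), math.cos(hi))
    top = 1.0 if _contains_point(lo, hi, 0.0) else max(ends)
    bottom = -1.0 if _contains_point(lo, hi, math.pi) else min(ends)
    return bottom, top


def sin_cut(lo, hi):
    """Exact range of sin over the angle interval [lo, hi] (radians)."""
    ends = (math.sin(lo), math.sin(hi))
    top = 1.0 if _contains_point(lo, hi, math.pi / 2.0) else max(ends)
    bottom = -1.0 if _contains_point(lo, hi, 1.5 * math.pi) else min(ends)
    return bottom, top
```

For instance, with the 5° uncertainty of Fig. 25, the angle alpha-cut at α = 0.5 is [2.014° − 2.5°, 2.014° + 2.5°], which contains 0°, so the upper end of the cosine alpha-cut is pinned at exactly 1; it only drops below 1 for α above roughly 0.6. This clipping at the crest is consistent with the non-triangular shape described above.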

Membership functions of cosine and sine functions when rotational angle is 2.014° and its uncertainty is 5°.

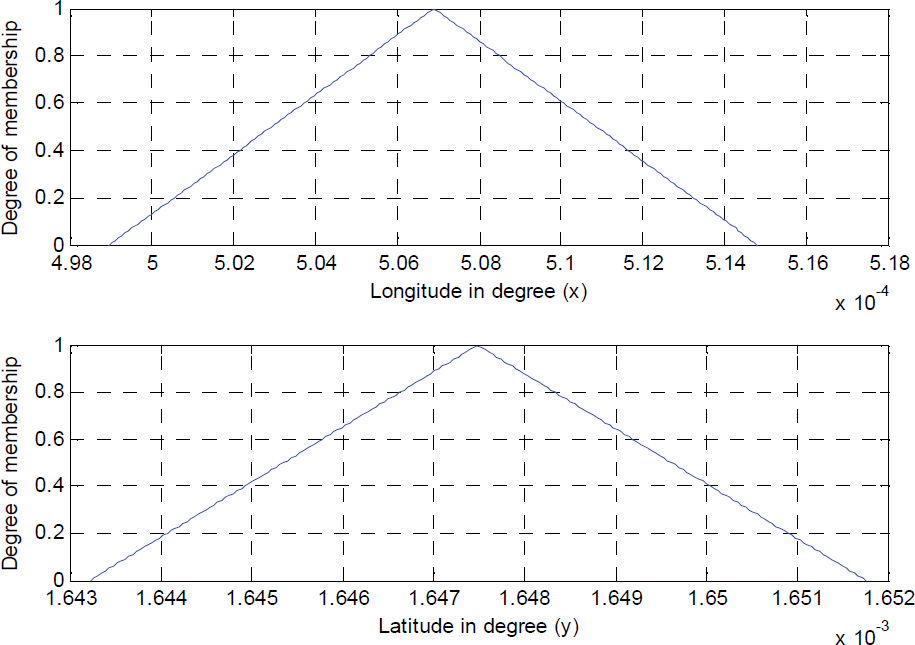

After obtaining the position of the vehicle in the road coordinate, the membership functions of

Membership functions of x21,road and y21,road

Fig. 27 shows the membership functions of

Membership functions of x22,road and y22,road

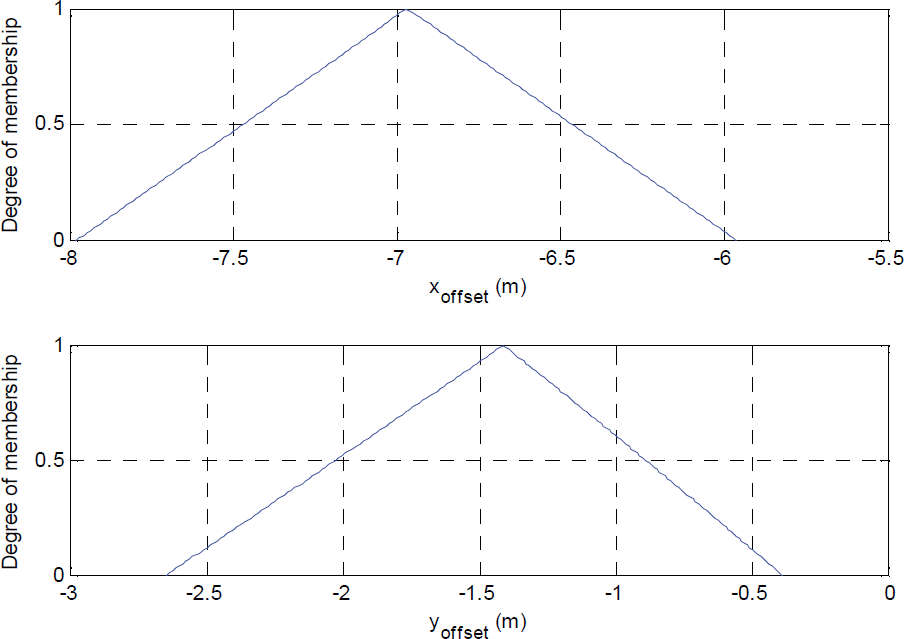

The membership functions of x_offset and y_offset are shown in Fig. 28.

Membership functions of x offset and y offset

According to Fig. 28, x_offset and y_offset have the highest degree of membership at

Using these values of x_offset and y_offset, new waypoints are generated. The result is shown in Fig. 29: the light line shows the original waypoints, and the dark line shows the new map, which is shifted horizontally to the left by 6.978 m and vertically downward by 1.414 m.
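Shifting the waypoints amounts to adding a constant metric offset to every point and converting it to degrees with the two scale factors from the text. This sketch assumes the map is displayed north-up, so "left" means west (negative east) and "downward" means south (negative north); the function name is hypothetical.

```python
EW_SCALE = 106080.0   # m per degree of longitude (value given in the text)
NS_SCALE = 109369.2   # m per degree of latitude (value given in the text)


def shift_waypoints(waypoints, east_m, north_m):
    """Translate (lat, lon) waypoints by metric east/north offsets."""
    dlat = north_m / NS_SCALE
    dlon = east_m / EW_SCALE
    return [(lat + dlat, lon + dlon) for lat, lon in waypoints]
```

Under that assumption, the correction reported for Fig. 29 corresponds to `shift_waypoints(waypoints, -6.978, -1.414)` applied to the 12 waypoints of Table 1.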

New waypoints versus old waypoints

After correction, the waypoints are provided to the vehicle control system. This control system is a fuzzy logic controller with two inputs and one output. The first input is the lateral offset from the vehicle to the line connecting the previous and next waypoints. The second input is the heading error, which is the angle between the vehicle heading and the direction from the previous waypoint to the next waypoint. The output is the steering angle. All input and output membership functions are triangular, as shown in Fig. 30 (a), (b), and (c). The fuzzy inference rules are listed in Table 4.

Membership functions of the heading error input (a), lateral offset input (b), and the steering angle output (c)

Fuzzy inference rules
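To illustrate the structure of a two-input fuzzy steering controller, the sketch below uses triangular membership functions, min for rule firing strength, and a weighted average of singleton outputs. Everything numeric in it (set breakpoints, the three-set partitions, the steering singletons, the rule base, and the sign convention that positive offset or heading error means the vehicle is right of, or pointing right of, the path) is hypothetical and is not taken from Fig. 30 or Table 4; the defuzzification method is also an assumption.

```python
# Hypothetical fuzzy sets: name -> (left foot, peak, right foot).
OFFSET_SETS = {"N": (-2.0, -1.0, 0.0), "Z": (-1.0, 0.0, 1.0), "P": (0.0, 1.0, 2.0)}  # m
HEADING_SETS = {"N": (-30.0, -15.0, 0.0), "Z": (-15.0, 0.0, 15.0),
                "P": (0.0, 15.0, 30.0)}  # deg
STEER_DEG = {"L": -20.0, "S": 0.0, "R": 20.0}  # hypothetical singleton outputs

# Hypothetical rule base (not Table 4): (offset set, heading set) -> steering set.
RULES = {
    ("N", "N"): "R", ("N", "Z"): "R", ("N", "P"): "S",
    ("Z", "N"): "R", ("Z", "Z"): "S", ("Z", "P"): "L",
    ("P", "N"): "S", ("P", "Z"): "L", ("P", "P"): "L",
}


def tri(x, a, b, c):
    """Triangular membership with feet a, c and peak b (a < b < c)."""
    if x <= a or x >= c:
        return 0.0
    return (x - a) / (b - a) if x <= b else (c - x) / (c - b)


def steering_angle(offset_m, heading_err_deg):
    """Min for rule firing strength, weighted average of singleton outputs."""
    num = den = 0.0
    for (o_set, h_set), s_set in RULES.items():
        w = min(tri(offset_m, *OFFSET_SETS[o_set]),
                tri(heading_err_deg, *HEADING_SETS[h_set]))
        num += w * STEER_DEG[s_set]
        den += w
    return num / den if den > 0.0 else 0.0  # no rule fired: steer straight
```

For example, `steering_angle(0.5, 0.0)` fires two rules with equal strength and returns -10.0, halfway between "straight" and "left"; the real controller would substitute the membership functions of Fig. 30 and the rules of Table 4.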

Fig. 31 shows the vehicle latitudes and longitudes recorded during autonomous driving, together with the original and corrected waypoints. The result shows that the AIT intelligent vehicle successfully tracked the road center.

The actual positions of the vehicle compared to the old and new waypoints.

Where

LN = large negative

N = negative

SL = small left

ML = medium left

FL = fully left

LP = large positive

P = positive

SR = small right

MR = medium right

FR = fully right

This paper described an algorithm that corrects the inaccuracy of readily available maps such as Google Earth. The proposed algorithm corrected and compensated for the error using fuzzy-based sensor fusion. Three sensors, a GPS, a magnetic compass, and a camera, were integrated to determine how far the original waypoints were offset from the road center in both the horizontal and vertical directions. All arithmetic operations were carried out with alpha-cut closed-interval arithmetic, so the sensor uncertainties were also taken into account. Once the horizontal and vertical offsets were known, the initial path was shifted accordingly. In the experiment on the AIT campus, the vehicle successfully tracked the road center using the corrected waypoints. The proposed algorithm is easy to implement and low in cost, and it takes less time than driving the vehicle to collect waypoints, while avoiding the assumption that the given waypoints are accurate.

Footnotes

6.

This research project is financially supported by National Electronics and Computer Technology Center (NECTEC).