Abstract

General purpose visual programming languages (VPLs) promote the construction of programs that are more comprehensible, robust, and maintainable by enabling programmers to directly observe and manipulate algorithms and data. However, they usually do not exploit the visual representation of entities in the problem domain, even if those entities and their interactions have obvious visual representations, as is the case in the robot control domain. We present a formal control model for autonomous robots, based on subsumption, and use it as the basis for a VPL in which reactive behaviour is programmed via interactions with a simulation.

Introduction

Many problem domains consist of entities that have commonly accepted visual representations. An example in the realm of software application development is graphical user interfaces (GUIs), which were originally programmed by writing code in standard textual programming languages. Because interface elements have standardised, well understood visual representations and behaviours, an obvious step was to create tools with which GUIs could be built by directly assembling interface elements, and even programming some of their interactions and behaviours by demonstration (B. & Buxton, W. (1986): Wolber, D. (1997)), or by drawing various kinds of connecting lines (Carrel-Billiard, M. & Akerley, J. (1998)). GUIs are now almost exclusively built using such direct-manipulation tools.

Another such ‘concrete’ domain, populated with entities with obvious visual representations and observable behaviours, is robot programming. A robot control program consists of algorithms built on the primitive actions the robot can perform, at least some of which are physically observable, such as changes in position or colour, grasping, pushing and so forth. Just as the concreteness of GUIs led to the development of GUI builders, the concreteness of the robot world has motivated researchers and practitioners to search for ways to program robots by direct manipulation. A simple application of this idea is the training of industrial robots to perform repetitive tasks in a completely known environment by simply recording the actions of an experienced operator doing the task, painting car panels on an assembly line for example. In recent years, many aspects of this promising paradigm have received close attention (Billard, A. & Dillmann, R. (2004): Billard, A. & Siegwart, R. (2004)), such as training a robot by interacting with a simulation rather than with the actual robot (Aleotti, J.; Caselli, S. & Reggiani, M. (2004)), improving task performance through practice (Bentivegna, D.C.; Atkeson C.G. & Cheng, G. (2004)), and optimisation of imitative learning (Billard, A.; Epars, Y.; Calinon, S.; Schaal S. & Cheng, G. (2004): Chella, A.; Dindo, H. & Infantino, I. (2006)).

Programming by Demonstration (

Robot programming has recently caught the attention of VPL researchers, prompted in part by a competition at the 1997 IEEE Symposium on Visual Languages, involving the application of VPLs to mobile robot programming (Ambler, A.L.; Green, T.; Kimura, T.D.; Repenning A. & Smedley, T.J. (1997)), and inspired by an example of programming a robot car using the rule-based mechanism of AgentSheets (Gindling, J.; Ionnidou, A.; Loh, J.; Lokkebo O. & Repenning, A. (1995)). Some examples giving the flavour of the approaches taken are as follows.

Altaira (Pfeiffer, J.J. (1997)) and Isaac (Pfeiffer, J.J. (1999)) both implement rule-based models for robot control, relying respectively on a variant of Brooks' subsumption model for robot control (Brooks, R.A. (1986)), and a fuzzy deductive system for geometric reasoning. Cocoa (Smith, D.C.; Cypher, A. & Spohrer, J. (1994)) also implements a rule-based model, and although not specifically designed for robot control, with minor changes it can be used for programming simple robots in a 2D environment.

In (Cox, P.T. & Smedley, T.J. (1998)) it is noted that if a visual

In the following we provide a formal, subsumption-based definition for a control model for autonomous robots, suitable for the kind of visual programming-by-demonstration suggested in (Cox, P.T. & Smedley, T.J. (1998)), followed by a proposal for

Robots and control models

A robot is a programmable machine equipped with at least one actuator and at least one sensor. An actuator is a device that can release non-mechanical energy in its surrounding environment, such as light or other electromagnetic waves, or can mechanically change its environment, including itself. A sensor is a device that can detect or measure a specific kind of energy in its operating domain, for example, an infrared sensor, or a touch sensor. The sensors and actuators of a robot are connected by some structural parts collectively called the body, which has no significance for control or programming purposes except for the geometric relationships it imposes on sensors and actuators. Such robots are intended to operate in an environment which is at least partly unknown, in such a way that they can react sensibly when they encounter objects or otherwise detect changes. We are not, therefore, interested in robots that perform fully defined, repetitive tasks in a completely known environment such as an assembly line. Programs controlling robots must compute values for actuators based on the values of sensors. A robot's level of autonomy is the degree to which it can respond to environmental changes in a logical fashion as if it were being controlled by an operator. Examples of mobile autonomous robots are the Mars Rover (NASA Jet Propulsion Laboratory (2007): St. Amant, R.; Lieberman, H.; Potter, R. & Zettlemoyer, L. (2000)), autonomous underwater vehicles (Jackson, E. & Eddy, D. (1999): (1992)) and mobile office assistants (Simmons, R.; Goodwin, R.; Haigh, K.Z.; Koenig S. & O'Sullivan, J. (1997)).

Of the many control models for programming robots, the subsumption architecture due to Brooks is among the simpler ones. Since the control model we propose is based on this architecture, we give an overview of it below. A thorough discussion can be found elsewhere (Brooks, R.A. (1986)).

Brooks' Subsumption Architecture

The traditional method for designing a control system for a robot is to decompose the problem of computing actuator values from sensor inputs into subproblems to be solved in sequence. A control system then consists of a sequence of functional units for solving these subproblems. Input signals are produced by the robot's sensors, and processed by a perception module, the first unit in the sequence, which passes its results to the next functional unit. Each unit receives its inputs from the previous unit in the sequence, processes the data and passes results to the next unit. The final unit produces values which are applied to the actuators to achieve the required response. To motivate subsumption, Brooks cites several drawbacks of this traditional structure (Brooks, R.A. (1986)). For example, a robot control system cannot be tested unless all its constituent units have been built, since all are required to compute actuator commands. Moreover, information received from the sensors is meaningful only to the first unit of the model and meaningless to the rest. Making changes to a unit of such a control system is also problematic. Changes must either be made in a way that avoids altering the interfaces to adjacent units, or if that is not possible, the effects of a change must be propagated to the adjacent units, changing their interfaces and functionality, and possibly necessitating changes to other units.

Another problem with the traditional sequential system is the time required for a signal to pass through all the stages from sensors to actuators. Changes in the robot's environment may happen more quickly than the robot can process them.

To overcome these difficulties, Brooks proposed that, instead of decomposing a control problem into subproblems based on successive transformations of data, decomposition should be based on “task achieving behaviours”. In this model, behaviours ideally can run in parallel, receiving sensor outputs and processing them to generate values for the actuators. This leads naturally to another issue: mediating between behaviours which are simultaneously trying to control the robot.

Brooks introduced a solution to this problem, called subsumption, in which behaviours run in parallel, but those which provide commands to a common set of actuators are prioritised by suppressors and inhibitors, simple functions each of which selects between two signals, a control input c and a data input i, defined in Fig. 1. In the figure, η is a special value indicating “no signal”. Suppose, for example, that a control system for a robot consists of two behaviours, “obstacle avoidance”, which causes the robot to move around objects it encounters, and “move to A”, which drives the robot towards a particular point. To resolve potential conflicts, outputs of these behaviours destined for the same actuator could be provided as c and i inputs to a suppressor, and the suppressor output sent to the actuator. When an obstacle is detected, the “obstacle avoidance” output will take priority, ensuring that the robot does not hit any object on its way to point A. One of the advantages of subsumption is that, unlike the traditional sequential control system, behaviours can be directly connected to sensors, actuators and to other behaviours. Since behaviours are not strongly tied to each other, new behaviours can be added to existing ones to create a higher level of autonomy.

Suppressor and Inhibitor

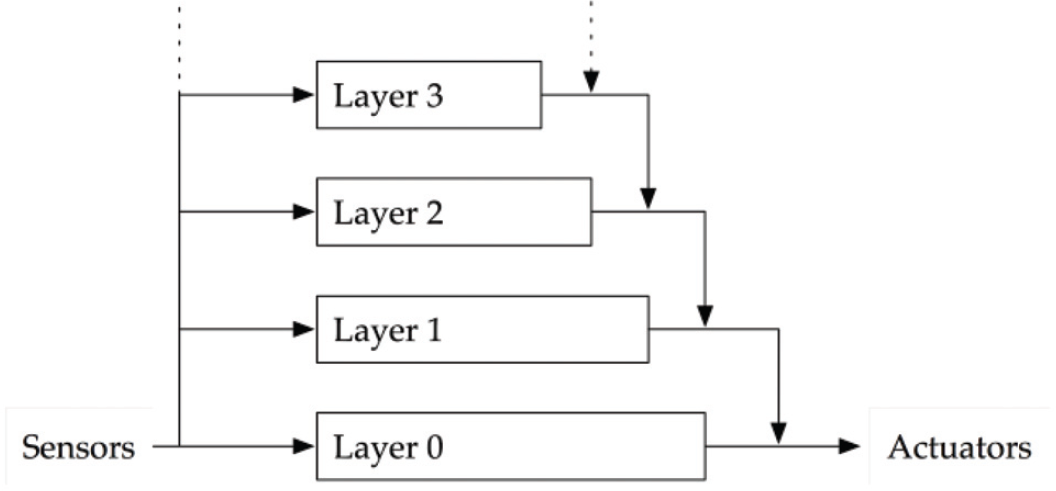
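The two selection functions can be sketched in a few lines. This is a minimal illustration that assumes the usual reading of Brooks' suppressor (a present control signal replaces the data signal) and inhibitor (a present control signal blocks the data signal); the names `NO_SIGNAL`, `suppress` and `inhibit`, and the string-valued signals, are assumptions made for the example rather than notation from the figure.

```python
# NO_SIGNAL stands in for the special "no signal" value; the name is an assumption.
NO_SIGNAL = None


def suppress(c, i):
    """Suppressor: a present control signal c replaces the data signal i."""
    return i if c is NO_SIGNAL else c


def inhibit(c, i):
    """Inhibitor: a present control signal c blocks the data signal i."""
    return i if c is NO_SIGNAL else NO_SIGNAL


# "obstacle avoidance" drives c, "move to A" drives i (signal values are hypothetical).
print(suppress(NO_SIGNAL, "forward"))     # no obstacle: "move to A" gets through
print(suppress("turn_left", "forward"))   # obstacle: avoidance takes priority
```

With a suppressor the actuator always receives some command while either behaviour is active, whereas an inhibitor leaves the line silent whenever the control input carries a signal.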

In Brooks' architecture, a robot control system is constructed incrementally by building behaviours at increasing levels of competence. First a level 0 system is built and fully debugged. The level 0 system together with the hardware robot is then considered to be a new robot, more competent than the original. On top of this new robot, a level 1 control layer is constructed. It may read sensors, investigate data flowing in the level 0 system, and via subsumption functions, interfere with actuator output or data flowing in the level 0 system. When this control layer is debugged, the entire layered structure constitutes a level 1 system. This architecture is illustrated in Fig. 2.

Subsumption architecture control model (diagram adapted from (Brooks, R.A. (1986)))

Each control level consists of a set of behaviours, each implemented as a finite state transducer loosely modelled on finite state machines (FSMs). A behaviour has input and output lines, and variables for data storage. Inputs may come from sensors or other behaviours. Each input line is buffered, making the most recently arrived message on a line always available for inspection. Each module has a special reset input, which switches the FSM to its start state on receipt of a message. States of an FSM are classified as Output, Side Effect, Conditional Dispatch, and Event Dispatch. When in an Output state, the FSM sends a message on an output line and enters a new state. The output message is a function of the inputs and variables. In a Side Effect state, a new state is entered and one of the variables is set to a new value computed as a function of the module's input buffers and variables. In a Conditional Dispatch state, one of two subsequent states is entered, determined by a predicate on the variables and input buffers. In an Event Dispatch state, a sequence of pairs of conditions and states is continuously checked, and when a condition becomes true the corresponding state is entered.
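The four kinds of state can be made concrete with a small interpreter. The following is a sketch under stated assumptions, not Brooks' implementation: the class name `Module`, the tuple encoding of states, the line names `sonar` and `motor`, and the 0.5 threshold are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Module:
    states: dict    # state name -> (kind, payload); encoding is an assumption
    start: str
    buffers: dict = field(default_factory=dict)    # newest message per input line
    variables: dict = field(default_factory=dict)
    sent: list = field(default_factory=list)       # (line, message) pairs emitted
    state: str = ""

    def __post_init__(self):
        self.state = self.start

    def receive(self, line, message):
        if line == "reset":
            self.state = self.start        # reset input: back to the start state
        else:
            self.buffers[line] = message   # buffer keeps only the latest message

    def step(self):
        kind, p = self.states[self.state]
        if kind == "output":               # send a message, then move on
            line, fn, nxt = p
            self.sent.append((line, fn(self.buffers, self.variables)))
            self.state = nxt
        elif kind == "side_effect":        # assign one variable, then move on
            var, fn, nxt = p
            self.variables[var] = fn(self.buffers, self.variables)
            self.state = nxt
        elif kind == "conditional":        # choose one of two successors
            pred, if_true, if_false = p
            self.state = if_true if pred(self.buffers, self.variables) else if_false
        elif kind == "event":              # wait until some condition holds
            for cond, nxt in p:
                if cond(self.buffers, self.variables):
                    self.state = nxt
                    break


halt = Module(
    states={
        "wait": ("event", [(lambda b, v: "sonar" in b, "check")]),
        "check": ("conditional", (lambda b, v: b["sonar"] < 0.5, "stop", "clear")),
        "stop": ("output", ("motor", lambda b, v: "halt", "clear")),
        # Records the reading and consumes it, so "wait" blocks until a new one arrives.
        "clear": ("side_effect", ("last", lambda b, v: b.pop("sonar"), "wait")),
    },
    start="wait",
)
halt.receive("sonar", 0.3)
for _ in range(4):
    halt.step()
print(halt.sent)   # [('motor', 'halt')]
```

Each call to `step` executes exactly one state, so an Event Dispatch state that finds no true condition simply stays where it is until the buffers change.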

The goal of this architecture is to allow control systems to be made up of independent layers that can run in parallel, and incrementally improved as described above. Each layer, however, can monitor data flowing in lower levels and interfere with the flow of data between behaviours in lower levels by suppressing or inhibiting. This means that although the lower levels are unaware of the existence of the higher layers and can control the robot independently, a higher layer cannot be unaware of the structure of lower levels, unless its inputs and outputs are limited to sensors and actuators. The form of data monitoring, suppression and inhibition in subsumption prevents us from looking at each layer of behaviours as a “black box”, so that although the degree of autonomy of the control system increases with the addition of higher layers, the overall system must still be viewed as a distributed one, made up of many small processing modules. The final block diagram of a control system constructed according to Brooks' architecture must include the internal connections between layers, where one layer monitors or interferes with the internal flow of data in another. Consequently, such a diagram cannot, in fact, have the neatly layered structure suggested in Fig. 2. This can impede the understanding of the control system by other designers, complicating the process of editing and maintaining such systems.

Another characteristic of the subsumption architecture is that suppression is applied only to inputs of a behaviour and inhibition to outputs. Considering that the output of each module is connected to the input of another module, except if directly communicating with the hardware, inhibiting an output is equivalent to inhibiting the input of the succeeding module. The same argument holds true for suppression. This means that in practical terms, inhibition and suppression can affect both the input and the output of a module, although in cases where there are both suppressors and inhibitors on a line from an output to an input, their order is significant.

In the architecture proposed by Brooks, although the overall control system is decomposed according to the desired behaviours of the robot, one might argue that each layer must still be decomposed in the traditional manner, and must therefore be complete before the expanded control system can execute.

Published descriptions of the subsumption architecture outline an implemented control system, rather than a general formalism for control systems. For example, the nature of the messages sent from sensors or generated by behaviours is not clearly specified. They are sometimes referred to as “signals”, giving the impression that they are continuous. Elsewhere, there are references to Boolean operations applied to messages, and to variables containing Lisp data structures.

In order to design a well defined, visual programming-by-demonstration system, we need a precisely specified control model as a foundation; a “structured programming” equivalent to Brooks' “Fortran”. To that end, in this section we propose a simpler, more streamlined subsumption model,

Behaviours

Behaviours in

A robot behaviour must process values from several different input lines rather than just one, so in order to use Moore Machines to implement behaviours, we must combine several input alphabets. Consequently, to realise a behaviour with n inputs from alphabets A1, A2 … An, we can define a Moore Machine with input alphabet Σ = A1 × A2 × … × An. Similarly, if the behaviour has several outputs, the output alphabet of the machine will be the Cartesian product of output alphabets of the behaviour.
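A short sketch may make the product construction concrete. It assumes two small, hypothetical input alphabets (a front sensor and a command line) and a pair of motor outputs; the class `MooreMachine` and all symbol names are illustrative only.

```python
from itertools import product


class MooreMachine:
    def __init__(self, start, outputs, transitions):
        self.state = start
        self.outputs = outputs          # state -> output symbol (here a pair)
        self.transitions = transitions  # (state, input tuple) -> next state

    def step(self, *inputs):
        """Consume one symbol of the product alphabet and emit the new state's output."""
        self.state = self.transitions[(self.state, inputs)]
        return self.outputs[self.state]


A1 = ("clear", "blocked")          # hypothetical front-sensor alphabet
A2 = ("go", "halt")                # hypothetical command alphabet
SIGMA = list(product(A1, A2))      # input alphabet A1 x A2

transitions = {}
for a1, a2 in SIGMA:
    target = "driving" if (a1, a2) == ("clear", "go") else "stopped"
    transitions[("stopped", (a1, a2))] = target
    transitions[("driving", (a1, a2))] = target

# Outputs are drawn from a product alphabet too: (left motor, right motor).
machine = MooreMachine(
    "stopped",
    {"stopped": ("off", "off"), "driving": ("on", "on")},
    transitions,
)
print(len(SIGMA))                       # 4
print(machine.step("clear", "go"))      # ('on', 'on')
print(machine.step("blocked", "go"))    # ('off', 'off')
```

Every transition is keyed by a whole tuple, so a behaviour with n input lines needs an entry for each combination of values it must distinguish.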

A Moore Machine, like an

Although Moore Machines are adequate for implementing simple behaviours, the implementation they provide may be rather clumsy for more complex ones. Consider, for example, the situation in which an autonomous mobile vehicle detects a pedestrian in its path. The sensor values signifying this event will cause a transition from the current state of the machine to some new state which outputs appropriate commands to actuators, in particular, signals sent to brakes. The degree of braking force to be applied should be a function of the speed and the distance from the pedestrian. The only way to implement such a function in a standard Moore Machine, however, is to provide one target state for each value of braking force, with a corresponding transition from the current state, taken in response to a particular combination of speed and distance values. Essentially, the function is explicitly defined in tabular form. Clearly, this can lead to large and repetitious machines.
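The size of such a tabulated function is easy to estimate. The sketch below assumes speeds and distances discretised to whole units and a made-up braking rule, purely to count the transitions and target states a standard Moore Machine would need; none of the numbers come from the paper.

```python
from itertools import product

speeds = range(0, 31)       # assumed: whole km/h, 0..30
distances = range(1, 21)    # assumed: whole metres, 1..20


def braking_force(speed, distance):
    # Hypothetical rule, used only to populate the table.
    return min(10, round(speed * speed / (20 * distance)))


# One transition per (speed, distance) pair, each leading to the state
# that outputs the corresponding braking force.
table = {(s, d): braking_force(s, d) for s, d in product(speeds, distances)}
print(len(table))                  # transitions leaving the current state
print(len(set(table.values())))    # target states, one per distinct force
```

Even at this coarse resolution the current state needs 620 outgoing transitions, and refining either scale multiplies that count.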

This example illustrates a further inadequacy of ordinary Moore Machines, namely, that their input and output alphabets are finite. Although the speed of the robot in our example may be bounded, the set of speed values is not finite. The same can be said for braking force and distance. One could always partition such sets into a large but finite set of subsets, but that would exacerbate the first problem mentioned above. We define Extended Moore Machines to overcome these difficulties. An Extended Moore Machine (

The SPRD Control Model

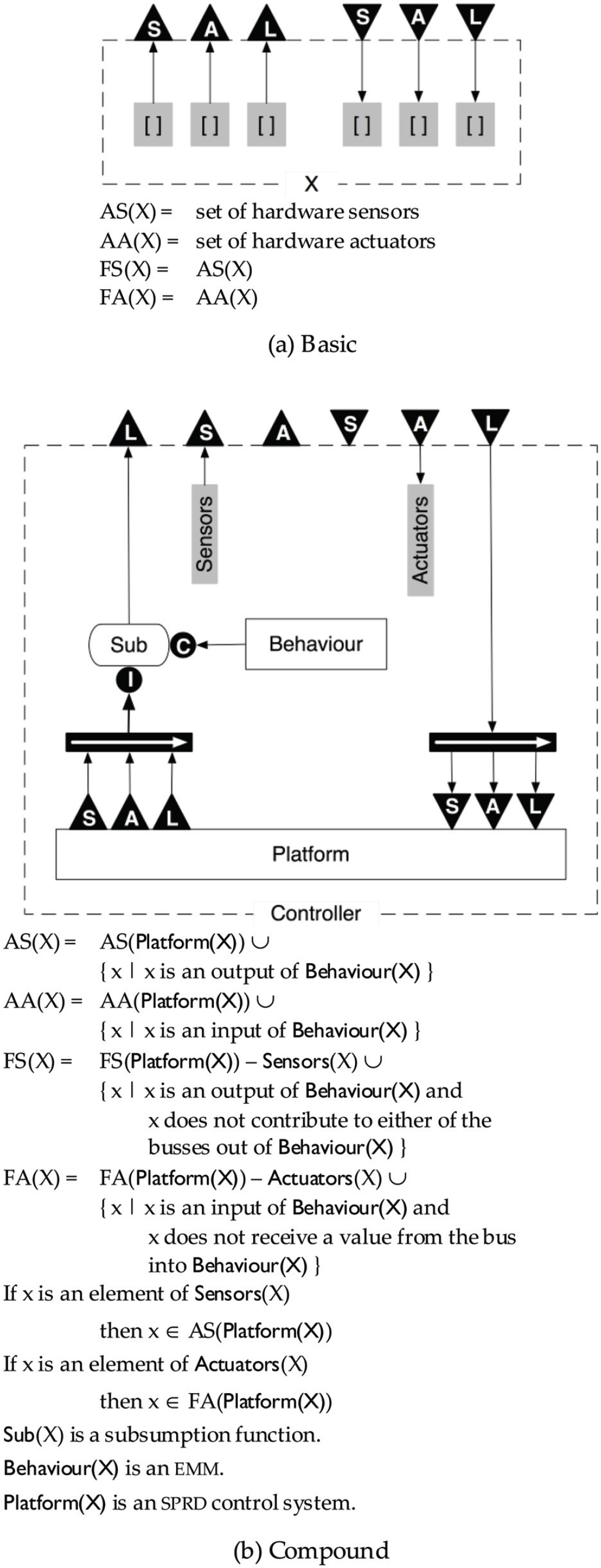

An

The structure of the  icons indicate concatenation or splitting of vectors in the direction of the arrow. It is easy to prove by induction on the diagrams in Fig. 3, that in the case of splitting, the points at which a vector is divided are well defined.

In each of the diagrams in the figure, the labelled arrowheads on the dashed perimeter indicate connections which are not part of the control system being defined, but are necessary for it to function. For example, there is an implicit bus from the  to the  on the dashed perimeter in each diagram.

The figure defines two kinds of

The item Sub in the control diagram of the compound control system in Fig. 3 is a subsumption function. A subsumption function has two vector inputs

If X is a compound

The intuition behind this definition is as follows. A hardware robot is analogous to a car. It has sensors which, like the gauges on the dashboard of a car, provide information about current conditions, and actuators which, like the controls of a car, can be given values that cause the robot to perform various actions.

A hardware robot, together with an

To extend the capabilities of robot R, we add a processing unit, in the form of an

Note that because of the simple form of a level 0 control system, a level 1 control system has the degenerate control diagram shown in Fig. 4, where Sensors and Actuators consist of, respectively, the hardware sensors read by Behaviour, and the hardware actuators controlled by Behaviour. We leave it to the reader to verify that the first level at which the full generality of the model can be realised is 3.

Level 1 has a simple form

In order to simplify the

In a robot control system, an input or output line may or may not carry a signal. The way that a signal or lack of a signal is interpreted will depend on the hardware receiving it. For example, a signal value of 0 input to a motor may have the same effect as no signal at all. In order to support “no signal” values, we define a new no-value symbol η which is included by default in all sets of symbols which are components of input and output alphabets.

The remaining conventions are introduced in order that Moore Machines can be specified in a more compact form, where a single transition may be an abbreviation for a set of transitions.

We define a transition labelled with a set S = {x1, …, xn} as an abbreviation for n transitions labelled x1, …, xn as shown in Fig. 5.

The transition with set label at left is equivalent to the n transitions at right
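The abbreviation can be read as a simple expansion step. The sketch below assumes a transition table keyed by (source state, label), where a label is a set of symbols; the function name `expand` and the state names are hypothetical.

```python
def expand(transitions):
    """Expand {(source, set_of_symbols): target} into one entry per symbol."""
    flat = {}
    for (source, label), target in transitions.items():
        for symbol in label:
            flat[(source, symbol)] = target
    return flat


compact = {("q0", frozenset({"x1", "x2", "x3"})): "q1"}
flat = expand(compact)
print(sorted(flat))   # [('q0', 'x1'), ('q0', 'x2'), ('q0', 'x3')]
```

The compact table has one entry where the expanded one has n, which is the saving the set-label convention is meant to provide.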

A Cartesian product X1 × X2 × … × Xk is abbreviated as X1X2…Xk.

If x is any symbol we will use x to mean {x} when this meaning is clear in context.

The complement of a subset Y is denoted by  , then  . We extend this notation by applying it to consecutive components of a Cartesian product. For example,

If x is an n-tuple then we write xi to denote the ith component of x.

In an

These notations are illustrated in Fig. 6 which depicts EMMs that implement suppression and inhibition. In this figure, the alphabets of the c and i inputs are C and I respectively, and the input alphabet of both machines is CI. The output alphabets of the suppression and inhibition EMMs are

EMMs implementing suppressor and inhibitor

In this section we show how the SPRD model described above can be used as the underlying formalism for SDM, the simulation environment suggested in (Cox, P.T. & Smedley, T.J. (1998)), in which the programmer creates control programs by interacting with a simulation of a robot and its environment. Although the aim of the programming process is to generate an SPRD control system, like other programming-by-demonstration systems, the philosophy of SDM is to focus the programmer's attention on making the simulated robot behave properly, rather than on the underlying abstraction. It is important to note that “demonstrating” an action does not always mean directly illustrating the result of the action. For example, demonstrating how to make an automobile move forward does not entail pushing the car. The demonstration involves giving a value to a control (the accelerator pedal), which will cause the driving wheels to turn, achieving the required result. Similarly, in our proposed PBD environment, the programmer demonstrates behaviour by setting values for the robot's actuators via a panel.

To show how a complete SPRD control system would be created in SDM, we use an example to illustrate the programming steps. The SDM interface we present has not been implemented. Although the interface and example are two-dimensional, the underlying principles are not limited to two dimensions. The example shows how to program a robot car to traverse a maze defined on a rectangular grid. Each grid cell is occupied either by a tile marked with a black cross in the centre, or an obstacle. The car is equipped with two motors and driving wheels, one at each side, and four infrared sensors mounted horizontally at the front, right, left and back, for detecting objects. Another infrared sensor, mounted underneath the robot and slightly to the left, detects the black crosses, and can determine when the car is in the middle of a cell. Because of its left offset, it can also detect when a 90° rotation to the right or left is complete.

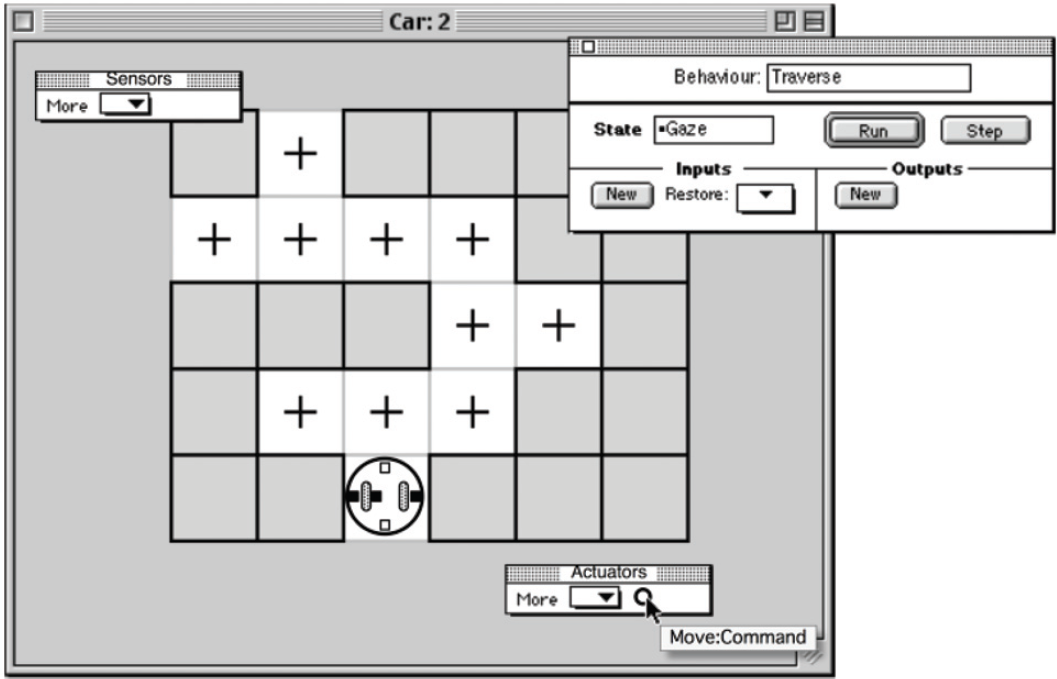

Once Car, Tile, and Obstacle are modelled in HDM by a process such as that outlined in (Cox, P.T. & Smedley, T.J. (1998)), the resulting description is loaded into SDM, which displays a workspace window named

Assembling the simulation environment

A simple maze-traversal algorithm that a human might use in real mazes, involves walking forward while at all times keeping one hand on a wall. To program the robot with this algorithm, we start by building a useful low-level behaviour which we will call

To define the

Programming at Level-1

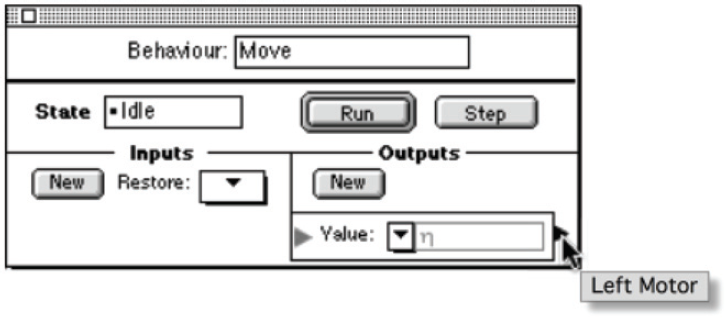

The first step in programming the

Adding an output

The

Since this output will generate values for the left motor of the car, we connect it to the left motor by clicking on the terminal of  , and from  to

Connecting the Left Motor output to the left motor

As a consequence of creating this connection, the output

Once an output has been defined and connected, we can hide its details in order to reduce the size of the behaviour palette, by clicking the icon beside the name of the output. When an output (or input) is reduced, its name is displayed whenever the cursor passes over its terminal, as shown in Fig. 11. Next we define a second output named

Reducing an output

In the

Adding an input

By clicking the

After simulation has halted

Now we select ↑ from the value pop-up of the Command input, placing ↑ in the value box. Since the

We want the  , and from  to

As soon as the connection between the

After defining the Start Forward state

As described above, when restarted, the simulation immediately stops, and we confirm in the transition dialogue that, given the current input ■, the control system should remain in the

When restarted, the car moves forward until the underneath sensor changes from □ to ■, causing the simulation to stop again. At this point the car has reached the next tile, so processing of the ↑ command is finished. Accordingly, we set the next state to

The state of the simulation after processing the ↑ command.
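The transitions demonstrated for the ↑ command can be summarised as a small state machine. This is a sketch under assumptions: only the state name "Start Forward" appears in the text, so "Idle" and "Forward", the motor values "on" and "off", and the use of `None` for an absent command are all hypothetical.

```python
CROSS, BLANK = "■", "□"        # underneath sensor readings, as in the text
FORWARD, NO_COMMAND = "↑", None

OUTPUTS = {                    # state -> (Left Motor, Right Motor); values assumed
    "Idle": ("off", "off"),
    "Start Forward": ("on", "on"),
    "Forward": ("on", "on"),
}


def next_state(state, command, under):
    if state == "Idle":
        return "Start Forward" if command == FORWARD else "Idle"
    if state == "Start Forward":   # still over the cross we started on
        return "Start Forward" if under == CROSS else "Forward"
    if state == "Forward":         # between tiles until the next cross appears
        return "Idle" if under == CROSS else "Forward"
    raise ValueError(state)


state = "Idle"
trace = []
readings = [(FORWARD, CROSS), (NO_COMMAND, CROSS), (NO_COMMAND, BLANK),
            (NO_COMMAND, BLANK), (NO_COMMAND, CROSS)]
for command, under in readings:
    state = next_state(state, command, under)
    trace.append((state, OUTPUTS[state]))
print([s for s, _ in trace])
# ['Start Forward', 'Start Forward', 'Forward', 'Forward', 'Idle']
```

Each branch of `next_state` corresponds to one answer the programmer gives in the transition dialogue when the simulation stops on an input it has not seen before.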

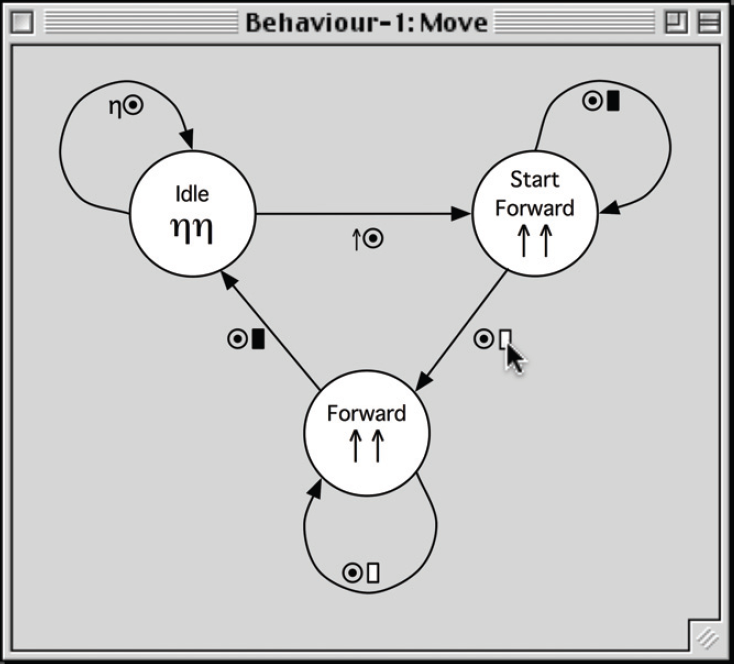

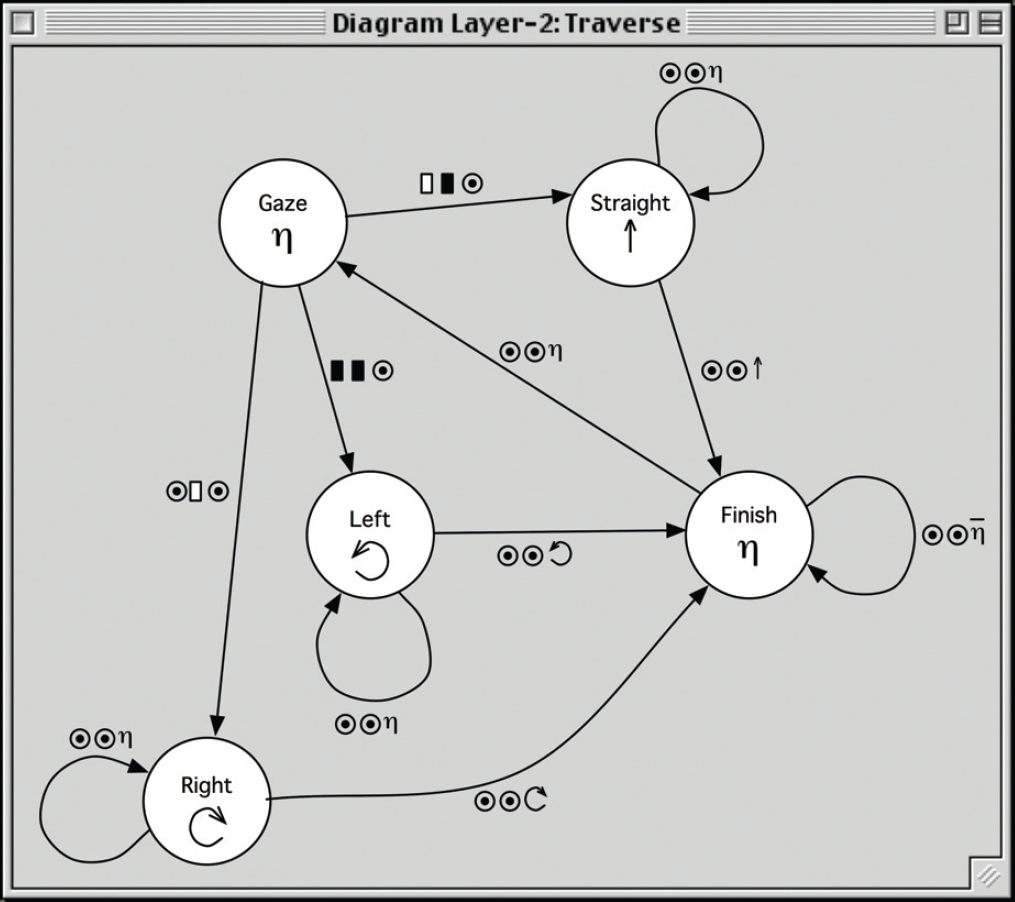

At any time during the process of programming a behaviour, we can select a menu item to open a window displaying the state diagram of the  represents any value from an alphabet. As the cursor passes over an input or output value, the name of the corresponding input or output is displayed, followed by the name of its alphabet, as illustrated in the figure.

To complete the programming of the

At this point, the simulation runs without stopping, responding appropriately to each of the four possible values for

To relate the control system developed in this section to the structure defined in Section 3.2, we make the following observations. Note that, as a convenience, we use the name of the behaviour to also identify the control system of which it is a component when the meaning is clear in context.

The control diagram of AS( AA( FS( FA( software sensors of software actuators of

Next we build a level 2 control system by adding to level 1 a new behaviour

To initiate this construction, we select the menu item

Creating the

The

The function of the

For the

Inputs and outputs for the

The

Connecting to a software sensor.

When we start the simulation, the transition dialogue immediately appears, and we choose

After processing the ↑ command.

Once all combinations of input values in the

To relate the control system developed so far to the structure defined in Section 3.2, we note the following.

The control diagram of AS( AA( FS( FA( software sensors of software actuators of

The control diagram of

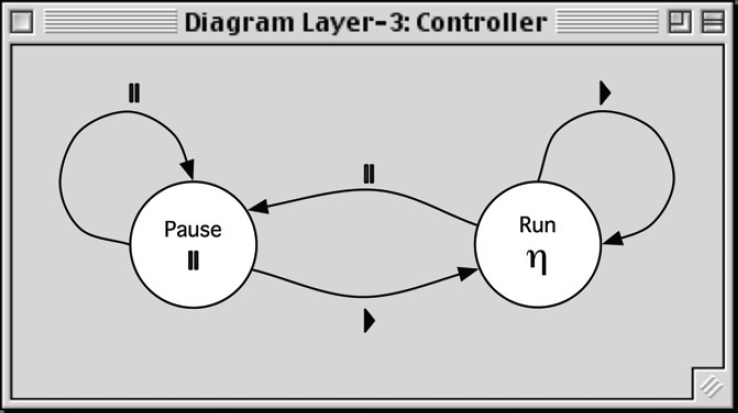

To conclude our example, we add another level to the control model to illustrate subsumption. This third level implements a controller with which the user can pause and resume the progress of the robot as it traverses the maze. As described above, we create a new behaviour called  }, set its value to  , and add an output called

Creating a subsumption function.

Replacing the level 2 signals to the motors is, however, not what we need to do to make the robot pause: we should simply inhibit the level 2 signals. Accordingly, we double-click the suppressor to transform it into an inhibitor.  . Fig. 24 illustrates the workspace at this point. Continuing in the manner described in preceding sections, we construct the  , and resume when we set it to  .

Inhibiting the output of lower layers.
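The effect of the inhibitor on the motor lines can be sketched as follows. The example assumes that the controller's output acts as the control input and that inhibition is applied to each motor signal separately; `NO_SIGNAL`, the value "pause" and the motor values are hypothetical names, not those used in the interface.

```python
NO_SIGNAL = None   # stands in for the "no signal" value; the name is an assumption


def inhibit(c, i):
    """Pass the data signal i through only while the control line c is silent."""
    return i if c is NO_SIGNAL else NO_SIGNAL


def motors_after_controller(control, level2_motors):
    """Apply the inhibitor to each level 2 motor signal."""
    return tuple(inhibit(control, m) for m in level2_motors)


print(motors_after_controller(NO_SIGNAL, ("on", "on")))   # ('on', 'on')
print(motors_after_controller("pause", ("on", "on")))     # (None, None)
```

A suppressor in the same position would instead forward the controller's own value to the motors, which is why the text switches to an inhibitor for pausing.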

Diagram for

As in previous sections, we relate the control system developed here to the structure defined in Section 3.2, as follows.

The control diagram of Sub( Sensors( Actuators( AS( AA( FS( FA(Controller) = ϕ software sensors of software actuators of

The control diagram of

As discussed in Section 3.1, Extended Moore Machines allow for state outputs to be computed as functions of the input values on incoming transitions in order to deal with large, possibly infinite alphabets. However, to keep the above presentation as straightforward as possible, we have used a simple example in which the input and output alphabets are small. Consequently, this example does not lend itself to a realistic illustration of the use of functions in computing the outputs of states. For the same reason, the example does not demonstrate the construction of transitions corresponding to subsets of input values.
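For illustration, the two features the maze example leaves out can be sketched with the pedestrian scenario from Section 3.1. This is not the formal definition of an Extended Moore Machine: the class `ExtendedMooreSketch`, the predicate-based transitions (standing in for transitions over subsets of input values), the 50.0 distance threshold and the braking law are all assumptions.

```python
class ExtendedMooreSketch:
    def __init__(self, start, guards, output_fns):
        self.state = start
        self.guards = guards            # state -> list of (predicate, next state)
        self.output_fns = output_fns    # state -> function(inputs) -> output

    def step(self, inputs):
        """Take the first transition whose predicate accepts the inputs, if any."""
        for predicate, target in self.guards[self.state]:
            if predicate(inputs):
                self.state = target
                break
        # The output is computed from the input values rather than tabulated.
        return self.output_fns[self.state](inputs)


def brake(inputs):
    speed, distance = inputs
    return min(1.0, speed * speed / (200.0 * distance))   # hypothetical law


emm = ExtendedMooreSketch(
    start="cruise",
    guards={
        "cruise": [(lambda x: x[1] < 50.0, "braking")],
        "braking": [(lambda x: x[1] >= 50.0, "cruise")],
    },
    output_fns={"cruise": lambda x: 0.0, "braking": brake},
)
print(emm.step((10.0, 80.0)))   # 0.0
print(emm.step((10.0, 5.0)))    # 0.1
```

Two states and two predicates replace the table of one transition per speed and distance pair that a standard Moore Machine would require.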

The example also does not illustrate the situation in which the programmer needs access to software sensors and actuators defined at some level below that of the behaviour currently being programmed. Unlike software sensors and actuators defined as outputs and inputs of the current behaviour, these lower-level ones are not directly available with the interface described above. To remedy this deficiency, our proposed environment allows the programmer to create a control panel, a floating palette similar in structure to a behaviour palette. Fig. 27 depicts a control panel imposed on the

Adding a control panel.

In terms of the SPRD model, the control panel is a behaviour in a level above the one currently being simulated, its functionality provided manually rather than by an

In previous sections we noted that Brooks' subsumption model, because of its modularisation of a control system into independent behaviours, provides a foundation for visual language environments for robot control programming, and listed some examples. We also noted characteristics of Brooks' formulation that make it less than ideal for this purpose, motivating the SPRD model presented in Section 3. In particular, although the architecture is layered into increasing levels of competence, higher layers can, and may need to, interfere with the flow of data in lower layers, and must therefore be aware of the inner structure of those layers. Hence the levels do not represent levels of abstraction in the software engineering sense, each presenting a well defined interface but hiding its implementation. Furthermore, the behaviours in each layer are interdependent, so cannot be individually tested and debugged. As a result, in order for a user to build a control system in a programming environment based on Brooks' subsumption, he or she would have to be aware of, and be able to directly edit, the underlying structures, as in the

In contrast, the recursive structure of SPRD encourages the programmer to view the programming task as extending an existing robot that provides an interface consisting of sensors and actuators, by adding one new behaviour and defining further, higher-level sensors and actuators. Certain aspects of the SPRD control model still show through in the interface we have proposed. While building a behaviour, the programmer needs to be aware of the concepts of “state” and “transition”: however, these are arguably quite natural concepts in the context of a problem that has a finite state solution. For example, a human walking a maze will be aware of being in the state “walking straight ahead with right hand on wall”, and on encountering a wall, will be aware of the need to start doing something else. Although SPRD reduces the need for the programmer to understand intricate wiring details, he or she must still understand subsumption functions, which are explicitly represented in the interface described above. Because of the simpler structure of the SPRD model, however, the application of subsumption is limited to replacing or turning off a signal from a sensor or a signal to an actuator in the “platform” robot, so is possibly less daunting than subsumption in the Brooks model.

A proof that the recursive structure of SPRD can capture the functionality of the Brooks model is precluded by the fact that Brooks' architecture is not formally defined. Instead we invite the reader to examine the diagram in Fig. 28, depicting one level of a Brooks control system. In this diagram, each node is a behaviour and the edges indicate flows of data between behaviours and between behaviours and the robot hardware.

A level 0 control system (adapted from (Brooks R.A. (1986)) Fig. 5).

To create an equivalent control system in SPRD, we would create one of these behaviours first. For example, we may decide to start with

The effectiveness of a control model and programming environment such as that we have proposed ultimately needs to be assessed by user testing. We believe, however, that further investigation is necessary before expending resources on implementing a prototype. For example, the behaviours embedded in the recursive structure we have described need not be finite state machines: in fact, other models would be necessary in order to achieve behaviours beyond the simple reactive ones. Preliminary work in this direction focusses on the use of neural networks for implementing behaviours (S.M. & Cox P.T. (2004)). Another possibility to consider is the feasibility of incorporating logic-based control systems to implement deductive behaviours, and the extent to which these parts of a hybrid system might be amenable to visual PBD (Amir, E. & Maynard-Zhang, P. (2004): Lespérance, Y.; Levesque, H.; Lin, F.; Marcu, D.; Reiter, R. & Scherl, R.B. (1994): Poole, D. (1995)).

Footnotes

This work was partially supported by the Natural Sciences and Engineering Research Council of Canada Discovery Grant OGP0000124.