Abstract

Social robots for daily-life activities are becoming increasingly common, and a humanoid robot with realistic facial expressions is a strong candidate for everyday tasks. This paper presents the development of a humanoid face mechanism that generates human-like facial expressions with reduced system complexity. The distinctive feature of this face robot is that it uses significantly fewer actuators: only three servo motors for facial expressions and five for the remaining head motions. This keeps energy consumption low, making the design suitable for applications such as mobile humanoid robots. Moreover, the modular design allows as many face appearances as needed on one structure, and the mechanism can be expanded to generate more expressions without adding or altering components. The robot is also equipped with an audio system and a camera inside each eyeball, so hearing and vision can be used for localization, communication, and more effective display of expressions.

1. Introduction

Humanoid robot heads with facial expressions are becoming one of the main components in recent developments of this type of robot. They are gaining wide acceptance because of their broad range of applications and their importance in meeting social service needs. Developing a realistic human-like face and its expressions is challenging because of multiple factors such as materials, mechanism design, sensing, and control. Recent trends show that humanoid robots are attracting the interest of researchers in many scientific fields, since their human-friendly development calls for collaborative, multidisciplinary effort.

Investigating the biomechanics of the human face may give insight into how to mimic its performance and behavior using mechanical and synthetic skin components [1]. A number of universities and research laboratories have developed humanoid robots capable of facial expressions to create an intuitive communication interface between robots and humans [1–4]. For decades, researchers have investigated facial mechanisms for humanoid robots that reproduce the six basic emotional expressions: surprise, happiness, disgust, anger, sadness, and fear. A substantial number of results, with varying degrees of success, have been published [5, 6].

The face is one of the most complex parts of the human body: it contains a large number of muscles whose combined motions produce specific facial expressions [7]. Designing a mechanism that emulates the human face and reproduces realistic, human-like emotional expressions requires an in-depth understanding and analysis of the facial muscles. Numerous studies have sought to understand human facial emotions systematically. Besides the pioneering 1978 work of Ekman and Friesen on the Facial Action Coding System (FACS), which described facial expressions in terms of a systematic grouping of muscles into action units (AUs), the comprehensive Carnegie Mellon facial image database [8, 9] is another invaluable reference.

Many studies of humanoid heads with facial expressions have been reported in the literature. The Kismet head, developed at MIT, has a total of 15 DOFs on the face, covering the eyebrows, ears, eyeballs, eyelids, and mouth [10]. Tokyo University of Science developed a robot named SAYA to serve as a receptionist [11]; SAYA's face is actuated by high-speed pneumatic actuators and covered with flexible skin. WE-4RII, created at Waseda University in 2004, has 24 DOFs [12] and produces facial expressions by changing the shapes of externally attached eyebrows, ears, eyeballs, eyelids, and lips. However, most of these face robots share common drawbacks: they either lack a human-like appearance or use too many actuators and associated mechanical parts, which hampers their acceptance in everyday social settings.

Recent advances in humanoid robot design and the realization of human-like expressive heads have increased interest in using such robots in a number of social roles: as receptionists or information-desk robots at various institutions, as service robots in hotels, or as replacements for humans in repetitive tasks. They can also be applied in therapy for children with autism [13] and in other assistive tasks in the sales and health sectors [14]. To accelerate their adoption in daily life, designing a simplified anthropomorphic face mechanism is an important step. One of the major obstacles is the high number of DOFs, that is, the number and type of actuators used. Compared with existing systems in the literature, our design proposes a mechanism with far fewer actuators.

Given the sophistication of human facial anatomy, it is clearly challenging, if not impossible, to replicate it fully with artificial muscles or mechanical elements powered by some form of actuation. Size is one major constraint. Moreover, most existing robot heads have had limited success in combining facial expressions with a head close to human size, mainly because of their complicated mechanisms and large number of actuators [15].

This work therefore addresses the design and development of a simplified, expandable robotic face with a facial expression mechanism for a fully functional humanoid head capable of interacting emotionally, visually, and physically with humans. The face demonstrates more than eight different facial expressions and uses a flexible artificial skin on a rapid-prototyped face skull. This manuscript also provides a detailed functional description and a mathematical model of the physical and kinematic behavior of the servo-driven mechanism.

The rest of this paper is organized as follows. Section 2 discusses the humanoid head mechanism. Section 3 analyzes facial expressions and details the design of the proposed hardware mechanism. Section 4 presents the system integration and control of the robot head designed in this study, and Section 5 presents the conclusions.

2. Humanoid Head Mechanism

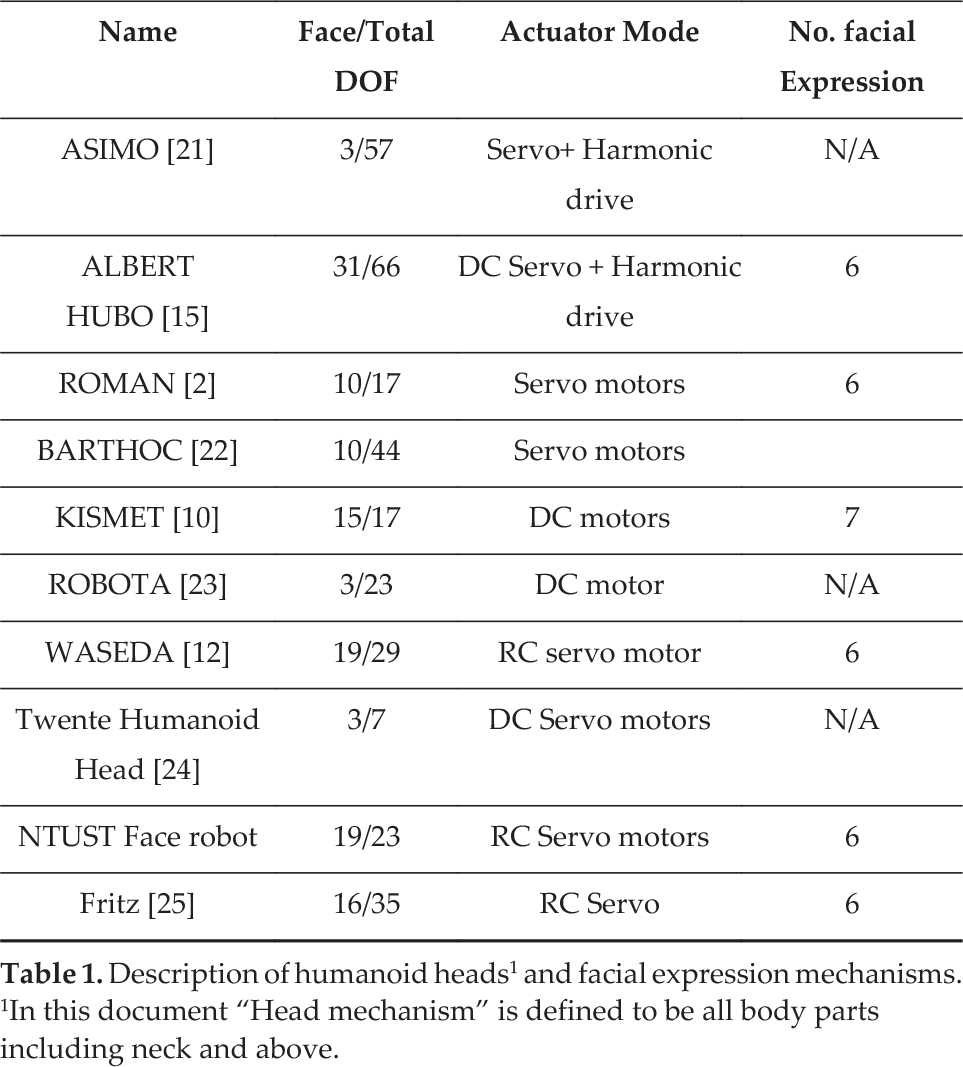

Humanoid robot design and development, at different levels of sophistication and interaction with humans, has improved considerably and gained wide acceptance in many applications. As the literature review summarized in Table 1 shows, most humanoid heads use servo or DC motors as the main actuators, which account for most of the hardware cost. However, some systems are powered by smart actuators such as shape memory alloys [16–18]. From human anatomy and physiology, facial muscle activity can be divided into 44 independent basic sub-units called action units (AUs), which together constitute the entire facial expression muscle system [3, 8, 19].

Description of humanoid heads¹ and facial expression mechanisms. ¹In this document, “head mechanism” is defined as all body parts from the neck upward, including the neck.

Basic facial expressions AUs and CPs (FACS coding)

Synthetic silicone rubber skin material data

Specification of servo motors in the robot face

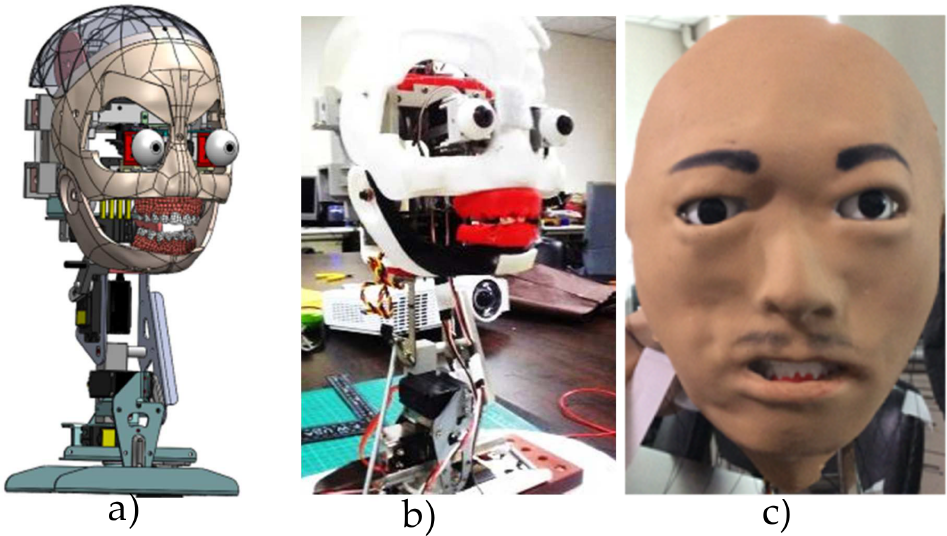

The proposed humanoid head (see Fig. 1) has a 3-DOF expandable facial expression mechanism, a 2-DOF eye system, a 3-DOF neck, and a 1-DOF mouth mechanism. RC servo motors are the primary means of actuation for all motions. Studies indicate that daily communication relies heavily on face-to-face interaction, and it is estimated that up to 55% of the information is conveyed through facial expressions [8].

Development of the head robot: a) full CAD model, b) face skull model, c) full head prototype with face skin

The face robot is designed as a component of a mobile receptionist humanoid robot. Its human-like appearance is intended to make human-robot interaction more natural and intuitive. Broadly speaking, most existing humanoid robot heads fall into three main groups: interactive heads based on LEDs and speakers, kinematic heads, and heads with flexible skin [20].

An anthropomorphic robot face previously designed in our laboratory [3] laid the basis for a new, simplified approach to humanoid head design. That study proposed a lower-DOF mechanism for generating facial expressions, in which the facial muscles were grouped, following the concept of AUs, so that the groups do not interfere with each other's motion. The present study, a successor to Lin et al. [3], develops this direction further to make the humanoid robot head reliable, accessible, and intuitive. It also overcomes limitations of the earlier mechanism and thereby significantly reduces cost and energy consumption.

3. Facial Expression Mechanism

3.1. Facial Muscle and Expression Analysis

Our daily lives are full of interactions with the people around us, so effective communication, whether verbal or non-verbal, is one of the basic pillars of a successful life. Studies show that non-verbal communication (body language, gestures, and the tone and pitch of voice) accounts for a major share of meaningful and responsive interaction, and in this respect the human face plays an important role as a feedback platform. However, replicating the biological face in a robot is challenging for many reasons, such as the complexity of the face, the large number of degrees of freedom (DOFs) required, and the cost of manufacturing and maintenance. Moreover, a face with flexible skin is the part of a humanoid robot most likely to pull the whole robot into the uncanny valley [7].

The human face has many muscles that enable different facial expressions such as smiling, anger, surprise, and fear. Previous research has revealed much about human facial muscle anatomy. In FACS, formulated by Ekman and Friesen, facial muscle movements are divided into 66 action units (AUs), covering regions such as the eyebrows, nose, and lips, and the movements of these units are used to describe a human facial expression [26].

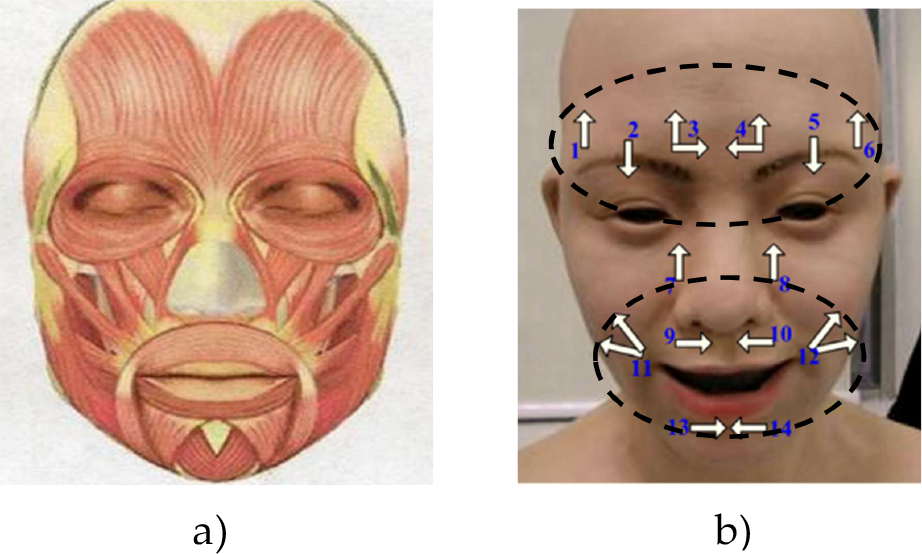

Neglecting their detailed interrelations and minor mutual interference, facial muscles can be grouped into three categories [3]: (1) muscles responsible for movement near the eyebrows, eyes, and nose; (2) muscles distributed around the mouth; and (3) muscles that rotate the eyeballs (see Fig. 2). This grouping is used to distribute control points (CPs) on the artificial skin of the robot face (see Fig. 2-b). Despite the uncanny feeling such faces can evoke in observers and users, the intensity of research and development suggests a promising future for humanoid robots with flexible-skin faces.

a) Musculature of the face; b) control points on the face

3.2. Artificial Face Skin

The skin of the robot face must be deformable so that different facial expressions are possible. It is commonly made from a synthetic silicone rubber with suitable additives to control its mechanical and coloring behavior [4, 27]. Silicone is chosen for its controllable stiffness and ease of fabrication. The skin is designed with variable thickness in different regions to resemble human facial skin, and it is fabricated through a series of molding processes. The number and locations of the skin's control points affect how realistic the facial expressions appear, and their distribution requires careful consideration of the skin's elastic properties. In this research, the CPs were selected based on an analysis of FACS coding and facial anatomy.

3.3. Design of Facial Expression Mechanism

Realistic facial expression in a humanoid robot face significantly affects its interaction with humans. Most existing humanoid heads with facial expressions reported in the literature use an excessive number of actuators, whether mechanical drives (servo or DC motors) or smart actuators [3, 20]. Such a large number of actuators in a single system raises technical issues such as system complexity, complicated control protocols, and undesirable weight and energy consumption. In this design, only three servo motors, housed inside a specially designed skull, are needed to generate more than eight different facial expressions. The actuating levers are connected to the facial skin through wires tied to anchor points on the artificial skin, which correspond to the facial muscles responsible for a specific expression.

A humanoid robot's appearance and realistic facial expressions can strongly influence its acceptance in society. Nonetheless, the literature offers no clear understanding of how robot anthropomorphism affects interaction with humans [5].

The mechanism that generates the six standard expressions in this head consists of two parts: the actuator and the expression mode selector. The roller of the expression generating module is connected to the actuating servo through a gear drive. Because the servo's travel is limited to 180°, the upper and lower modules could otherwise accommodate only a few expression lines; the gear drive increases the number of expression lines and also increases torque. Each line on the roller represents a specific facial expression or part of one.
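To illustrate why the servo's 180° travel limits the number of reachable lines, the following Python sketch computes the servo angle needed to bring each expression line into position. It assumes, for illustration only, that the six lines are equally spaced (60° apart) and treats the gear ratio (roller rotation per degree of servo rotation) as a hypothetical free parameter; it ignores the extra rotation needed to actuate the levers once a line is engaged.

```python
SERVO_RANGE_DEG = 180.0  # servo travel limit stated in the text


def line_spacing_deg(n_lines: int) -> float:
    """Angular spacing between equally spaced expression lines (assumption)."""
    return 360.0 / n_lines


def min_gear_ratio(n_lines: int, servo_range_deg: float = SERVO_RANGE_DEG) -> float:
    """Smallest roller/servo ratio that lets the servo reach every line.

    Reaching the last line from line 0 takes (n_lines - 1) spacings of roller rotation.
    """
    return (n_lines - 1) * line_spacing_deg(n_lines) / servo_range_deg


def servo_angle_for_line(line: int, n_lines: int, gear_ratio: float) -> float:
    """Servo angle (deg) that rotates the roller to `line`.

    gear_ratio is a hypothetical roller-to-servo rotation ratio.
    """
    roller_deg = line * line_spacing_deg(n_lines)
    servo_deg = roller_deg / gear_ratio
    if servo_deg > SERVO_RANGE_DEG:
        raise ValueError(f"line {line} needs {servo_deg:.1f} deg of servo travel")
    return servo_deg


print(round(min_gear_ratio(6), 3))
print([servo_angle_for_line(k, 6, 2.0) for k in range(6)])
```

Under these assumptions, reaching all six lines takes 300° of roller rotation, so a direct 1:1 drive from a 180° servo could reach only the first four lines.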

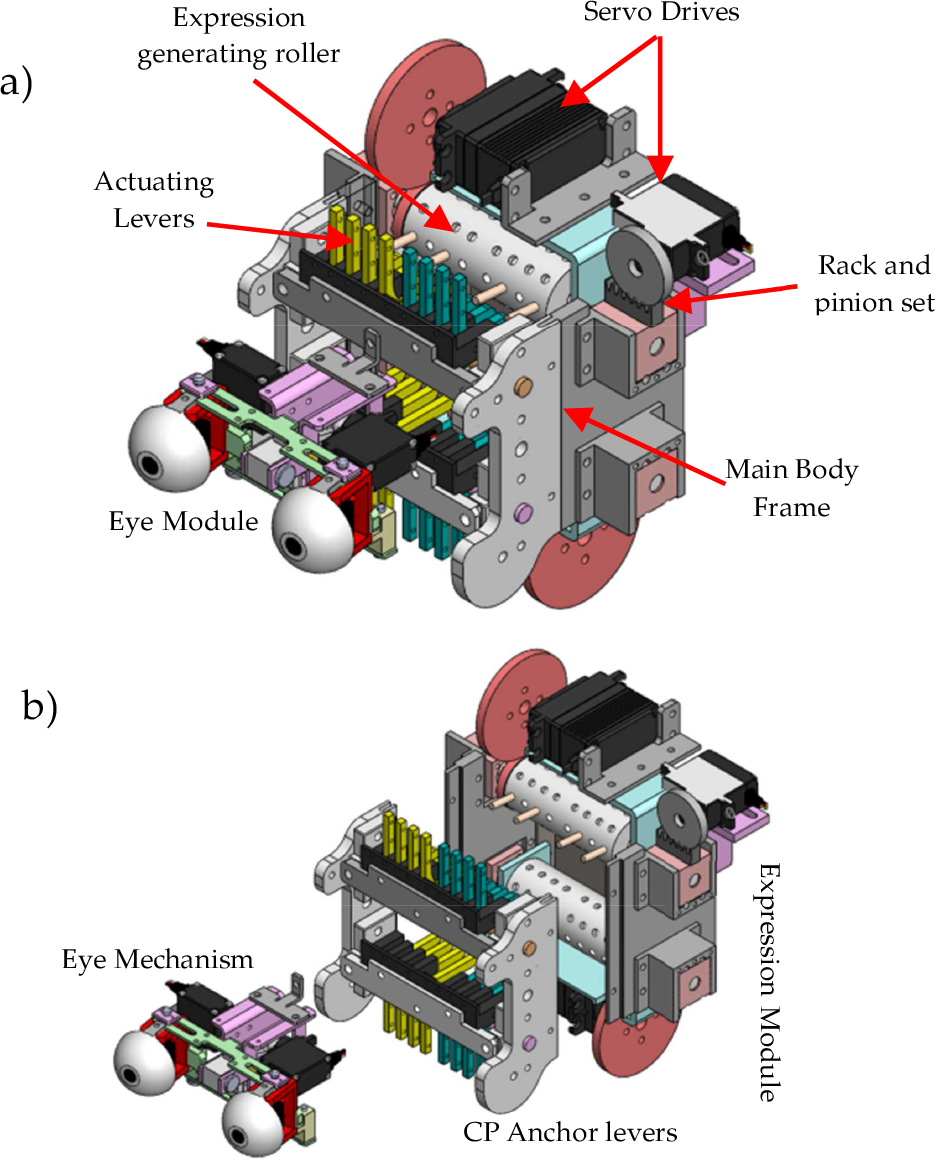

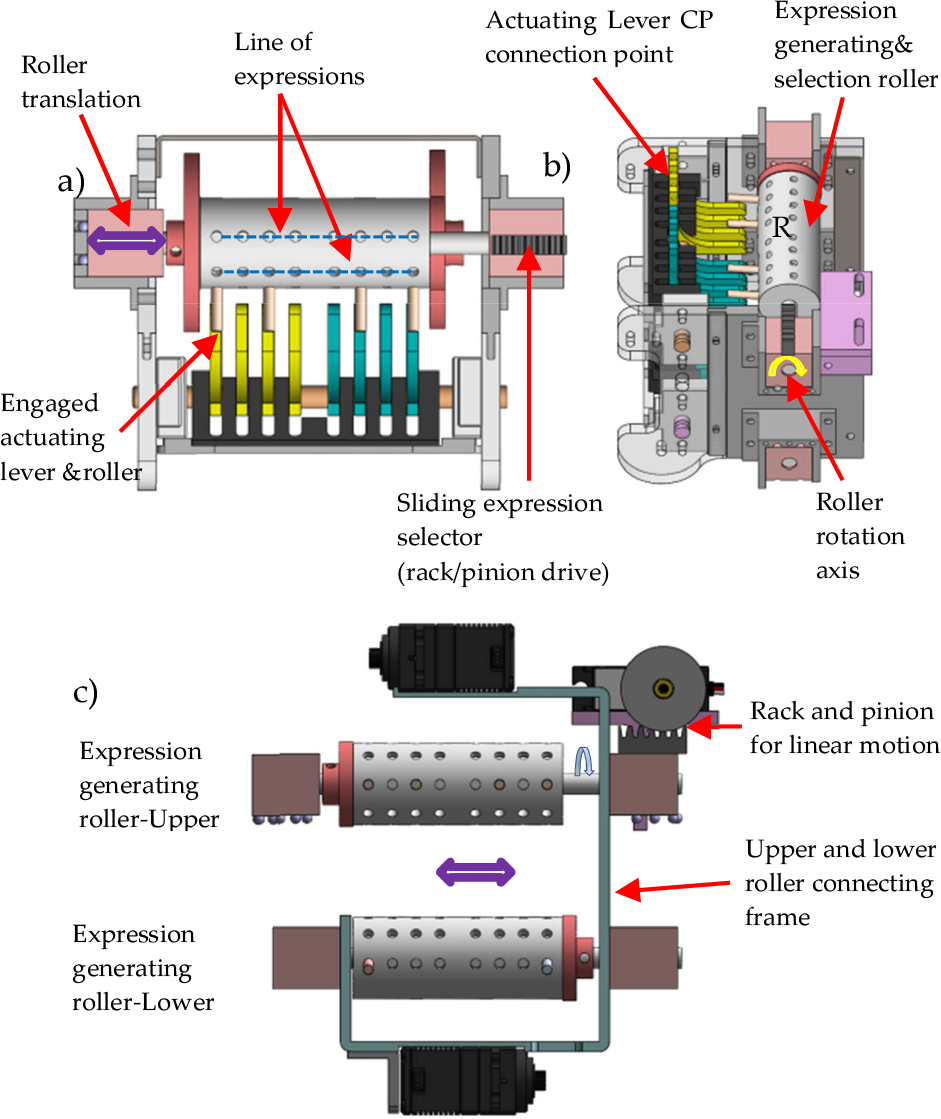

Fig. 3 shows the facial expression generating module inside the skull. The module consists of the main body frame, the RC servo motors, the actuating levers, the eye module, a roller, and the rack-and-pinion parts of the expression selector slider. In typical facial expressions, the displacement of the face skin tangent to the facial surface does not normally exceed 11 mm relative to a neutral face [3]. Thus, the skin deformation required by a given facial expression is used as the maximum anchor-point displacement that the mechanism must be able to induce. The size of the roller

Facial expression generating mechanism: a) complete assembly; b) exploded view of the modular components

Expression selecting and generating roller

As Fig. 4 shows, each roller has a line of holes along its length, and several such lines are placed around its circumference (six lines on each roller in this design). Each line represents a given expression, so one or more pins are inserted into its holes at the required positions to engage the actuating levers. For example: 1) the “anger” expression has two CPs on the skin, so only two pins are placed on the anger expression line and the remaining holes are left empty; 2) “sadness” has to pull four points on the skin, so four pins are inserted into four holes on the sadness expression line, and so on.

The expression generating roller performs two basic motions: translation, to engage or disengage the actuating levers, and rotation, to select an expression line or generate the expression. Rotating the roller in the neutral (disengaged) position selects an expression, whereas rotating it while engaged with the actuating levers generates the chosen expression. To change the facial expression, the roller performs these motions in sequence: 1) it disengages from the actuating levers through the rack-and-pinion set; 2) in the neutral position, it rotates to the desired expression line; 3) it moves back to the engaged position; and 4) it rotates to produce the expression.
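The four-step expression change described above can be sketched as an ordered plan of roller motions. The `Motion` and `Step` types and the target labels are hypothetical names chosen for illustration; they are not the controller interface of the actual robot. The only rule encoded is the one stated in the text: a selection rotation happens only while the roller is disengaged.

```python
from dataclasses import dataclass
from enum import Enum


class Motion(Enum):
    TRANSLATE = "translate"  # rack and pinion slides the roller along its axis
    ROTATE = "rotate"        # servo turns the roller about its axis


@dataclass(frozen=True)
class Step:
    motion: Motion
    target: str  # hypothetical label for the state reached after this step


def expression_change_plan(target_line: int, engaged: bool = True) -> list[Step]:
    """Ordered roller motions for switching to `target_line`.

    Selection rotation is only allowed while disengaged, so an engaged
    roller must first slide away from the actuating levers.
    """
    plan = []
    if engaged:
        plan.append(Step(Motion.TRANSLATE, "disengaged"))    # 1) release the levers
    plan.append(Step(Motion.ROTATE, f"line {target_line}"))  # 2) select the line
    plan.append(Step(Motion.TRANSLATE, "engaged"))           # 3) pins meet the levers
    plan.append(Step(Motion.ROTATE, "actuated"))             # 4) pull the skin
    return plan


for step in expression_change_plan(3):
    print(step.motion.value, "->", step.target)
```

Keeping the plan as data rather than issuing motions directly makes it easy to check the ordering before any servo moves.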

Each line on the roller has eight equally spaced holes (see Fig. 4). Each line of the upper and lower roller selection modules represents one or more AUs (or CPs on the face), i.e. a specific expression or part of one.
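One compact way to describe a pinned roller is one 8-bit mask per expression line, with bit h set when hole h carries a pin. In this Python sketch only the geometry (six lines of eight holes) and the pin counts (two for anger, four for sadness) come from the text; the specific hole indices and line assignments are hypothetical.

```python
N_LINES = 6  # expression lines around each roller (from the text)
N_HOLES = 8  # equally spaced holes per line (from the text)


def build_roller(layout: dict[int, set[int]]) -> list[int]:
    """Return one bit mask per line; bit h is set when hole h holds a pin."""
    masks = [0] * N_LINES
    for line, holes in layout.items():
        if not 0 <= line < N_LINES:
            raise ValueError(f"line {line} out of range")
        for hole in holes:
            if not 0 <= hole < N_HOLES:
                raise ValueError(f"hole {hole} out of range")
            masks[line] |= 1 << hole
    return masks


def pinned_holes(mask: int) -> list[int]:
    """Holes whose pins would push an actuating lever when this line is engaged."""
    return [h for h in range(N_HOLES) if (mask >> h) & 1]


# Hypothetical layout: line 0 = anger (2 pins), line 1 = sadness (4 pins).
roller = build_roller({0: {1, 6}, 1: {0, 2, 5, 7}})
for line, mask in enumerate(roller):
    print(line, f"{mask:08b}", pinned_holes(mask))
```

Lines without pins stay at zero, which corresponds to the empty expression lines still available for expanding the repertoire without new components.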

Each actuating lever, with its pivot axis set at a specific distance from the axis of the expression selector module, is connected to the face skin through anchor control points, which are distributed according to the AU coding and grouping.

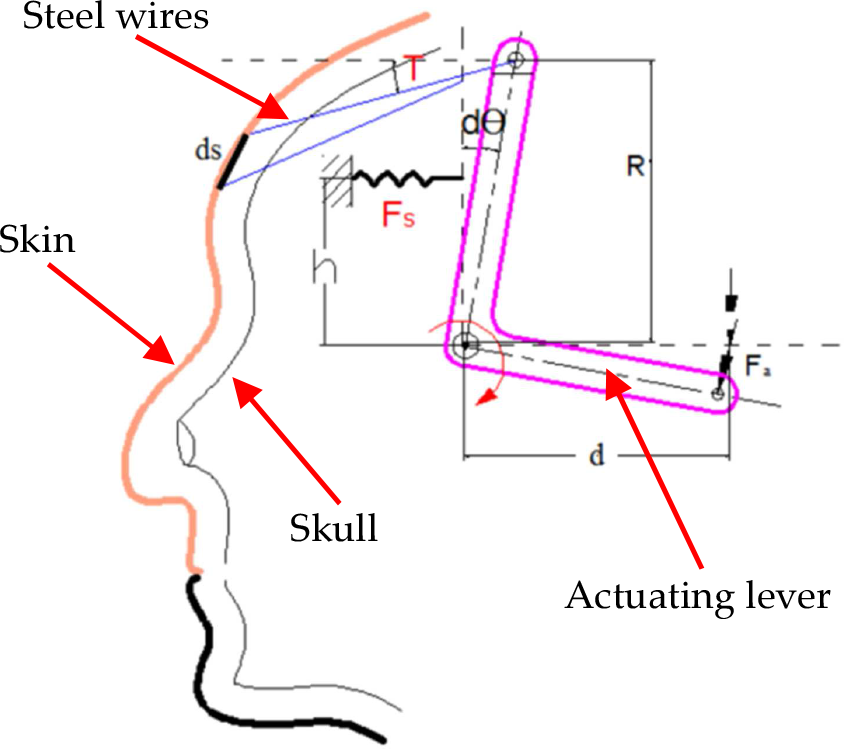

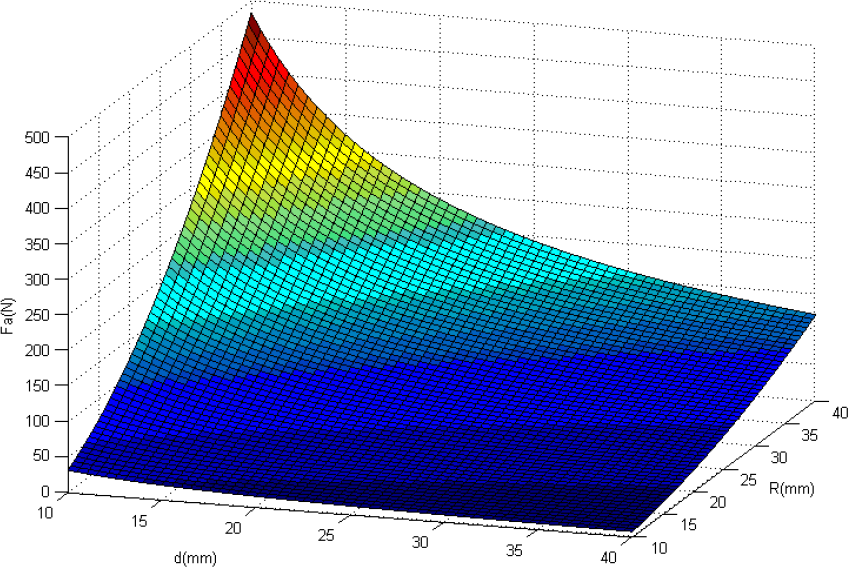

The roller motor of the expression module rotates the actuating levers to generate facial expressions, so selecting an actuator with sufficient torque to drive the system is of paramount importance. For the geometry of the actuating levers and the expression selector module (see Fig. 4), depicted schematically in Fig. 5, the torque required to deform the skin elastically can be expressed as (1):

Expression generating mechanism kinematics

where

The silicone rubber used in the synthetic skin can be accurately modeled as a neo-Hookean material over the deformation range exhibited by a humanoid robot face [27]. In this work, however, the skin material is assumed to follow Hooke's law approximately. Thus, the differential force of deflection,

where

The limit of the rotation angle

Plot of actuating force and link parameters d and R
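The following Python sketch is not the paper's Eq. (1); it is a simplified linear-spring (Hooke's law) estimate that ignores the lever and roller geometry (d, R). It assumes each CP behaves as a spring of stiffness k pulled by a wire wound at a lever arm r, and that all pinned CPs on one line are pulled at once. The stiffness, lever arm, and CP count are hypothetical values; only the 11 mm displacement bound comes from the text.

```python
import math

MAX_SKIN_DISPLACEMENT_MM = 11.0  # tangential skin displacement bound cited in the text
N_MM_PER_KGF_CM = 98.0665        # 1 kgf*cm = 98.0665 N*mm (servo datasheets often use kgf*cm)


def lever_rotation_deg(displacement_mm: float, lever_arm_mm: float) -> float:
    """Lever rotation that reels in displacement_mm of wire at radius lever_arm_mm."""
    return math.degrees(displacement_mm / lever_arm_mm)


def peak_torque_nmm(k_n_per_mm: float, displacement_mm: float,
                    lever_arm_mm: float, n_cps: int) -> float:
    """Hooke's-law force k*x at each pulled CP, times the lever arm, summed over CPs."""
    return n_cps * k_n_per_mm * displacement_mm * lever_arm_mm


def elastic_energy_mj(k_n_per_mm: float, displacement_mm: float, n_cps: int) -> float:
    """Energy 0.5*k*x^2 stored in the skin; 1 N*mm equals 1 mJ."""
    return n_cps * 0.5 * k_n_per_mm * displacement_mm ** 2


# Hypothetical inputs: 0.2 N/mm per CP, 20 mm lever arm, four CPs (a "sadness"-like line).
k, r, n = 0.2, 20.0, 4
theta = lever_rotation_deg(MAX_SKIN_DISPLACEMENT_MM, r)
tau = peak_torque_nmm(k, MAX_SKIN_DISPLACEMENT_MM, r, n)
energy = elastic_energy_mj(k, MAX_SKIN_DISPLACEMENT_MM, n)
print(f"lever rotation {theta:.1f} deg, peak torque {tau:.0f} N*mm "
      f"({tau / N_MM_PER_KGF_CM:.2f} kgf*cm), stored energy {energy:.1f} mJ")
```

Even this crude model shows the trade-off: a shorter lever arm lowers the torque but increases the rotation needed to reach the 11 mm displacement.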

The primary goal of this humanoid head design is a simplified, easy-to-control, lightweight mechanism with as few actuators as possible, without compromising the number of distinct emotions it can generate.

To achieve this goal, facial muscles are grouped according to the facial regions in which they act during an expression. At each CP, a small anchor, made of a muscle-like net of soft threads together with metal rings, is glued to the skin. A steel wire fixed to the rings is pulled through its connection on the actuating lever to generate the respective expression (see Fig. 7). The fixing positions of the rings on the skin and the pulling directions are set according to the motion of the facial muscles responsible for the target emotions.

Face skin CP connections

4. System Integration and Control

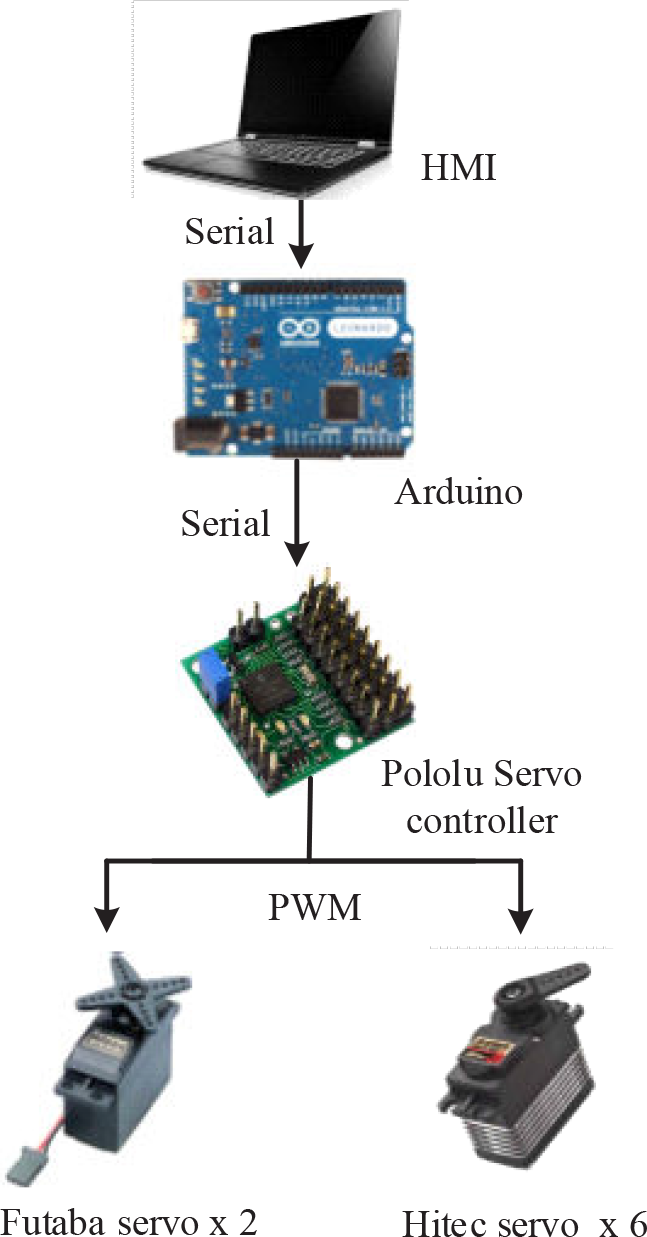

The humanoid head is driven by a total of eight servo motors: three for facial expressions, one for the jaw, two for the eyes, and two for the neck. In addition to the expressive human-like face, a camera and a speaker are installed to give the robot vision, hearing, and speaking ability. The two-motor eye module generates the eyeball motions, and a camera fitted in each eyeball provides stereo vision. The neck module likewise uses two motors to move the neck.

The actuating units are modular and can be separated from the face skull and skin structure when required (see Fig. 8). Therefore, several faces can be created, and the appearances of different individuals can be represented with one system.

Face assembly a) the robot face without skin, b) modular components

The rotation angle of each servo motor for every facial expression is stored on a control PC. When a specific facial expression is to be displayed, a set of pre-programmed commands is sent to the servo motor controller to produce it.
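The stored-angle approach amounts to a lookup table from expression name to per-servo angles. In this Python sketch the channel numbers, servo names, and angle values are all hypothetical placeholders; only the split of eight servos (three face, one jaw, two eye, two neck) follows the text.

```python
# Hypothetical channel numbers for the eight servos (3 face, 1 jaw, 2 eye, 2 neck).
SERVO_CHANNELS = {
    "face_1": 0, "face_2": 1, "face_3": 2,
    "jaw": 3,
    "eye_1": 4, "eye_2": 5,
    "neck_1": 6, "neck_2": 7,
}

# Hypothetical stored angles in degrees; servos not listed keep their position.
EXPRESSION_TABLE = {
    "neutral": {"face_1": 90.0, "face_2": 90.0, "face_3": 90.0, "jaw": 90.0},
    "happy": {"face_1": 60.0, "face_2": 120.0, "face_3": 90.0, "jaw": 100.0},
    "sad": {"face_1": 120.0, "face_2": 60.0, "face_3": 90.0, "jaw": 90.0},
}


def commands_for(expression: str) -> list[tuple[int, float]]:
    """Return (channel, angle) pairs, sorted by channel, for a stored expression."""
    try:
        angles = EXPRESSION_TABLE[expression]
    except KeyError:
        raise ValueError(f"no stored angles for expression {expression!r}") from None
    commands = []
    for servo, angle in angles.items():
        if not 0.0 <= angle <= 180.0:
            raise ValueError(f"{servo}: {angle} deg is outside servo travel")
        commands.append((SERVO_CHANNELS[servo], angle))
    return sorted(commands)


print(commands_for("happy"))
```

Validating angles when the table is read keeps an out-of-range entry from ever reaching the servo controller.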

An 8-channel Pololu micro serial servo controller is chosen to drive the servos (see Fig. 9). It requires a supply voltage V and outputs PWM signals that control servo position with a resolution of 0.005 degrees. Communication uses a TTL serial link, which needs only two lines (Tx/Rx), and the controller can also be driven over RS-232. In the embedded configuration, an Arduino microcontroller board controls all system motions and performs certain parameter calculations. The general system architecture is shown in Fig. 10.
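As an illustration of the serial link, the Python sketch below builds command frames in the Mini SSC II format (a 0xFF sync byte, a servo number, and a position byte from 0 to 254), a protocol that Pololu's serial servo controllers support alongside their native protocol. Whether the controller in this robot runs in that mode, how its servo numbers are assigned, and how positions map onto angles are assumptions; the linear 0 to 180° mapping is hypothetical and should be checked against the controller's user guide.

```python
def mini_ssc_frame(servo: int, angle_deg: float, span_deg: float = 180.0) -> bytes:
    """Build one 3-byte Mini SSC II command: 0xFF, servo number, position.

    Assumption: positions 0..254 map linearly onto 0..span_deg of servo travel;
    the real mapping depends on how the controller's range is configured.
    """
    if not 0 <= servo <= 254:
        raise ValueError("servo number must be 0..254")
    if not 0.0 <= angle_deg <= span_deg:
        raise ValueError(f"angle must be within 0..{span_deg} deg")
    position = round(angle_deg / span_deg * 254)  # 0..254; 0xFF is reserved for sync
    return bytes([0xFF, servo, position])


# Centre servo 0 and move servo 3 to full travel (hypothetical channels).
frames = mini_ssc_frame(0, 90.0) + mini_ssc_frame(3, 180.0)
print(frames.hex(" "))
```

On the PC side, the concatenated bytes could then be written to the serial port, for example with pyserial's `serial.Serial.write`.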

Pololu servo controller SSC30A layout and pinout

Embedded control system diagram

5. Conclusion

This paper reports a new mechanism design for a humanoid robot head with facial expressions. Compared with previous studies, the system complexity and the number of actuators required are significantly reduced without compromising the number of distinct facial expressions achievable. Only three off-the-shelf servo motors are needed to generate the six standard facial expressions, in contrast to the many actuators used in most existing robot heads. Various skin deformation patterns can be created as required in a controlled fashion.

Besides using fewer hardware components, most of which are rapid-prototyped (3D-printed) parts, the modular design of the sub-assemblies allows the face character to be exchanged without affecting the rest of the system. Therefore, various human characters can be represented in service robots such as receptionists and tour guides (the initial focus of this work), as movie characters, or in medical rehabilitation robots.

Because the actuator acts through a lever arm, the mechanical advantage of the mechanism reduces the torque requirement, which leaves room for using inexpensive motors. The kinematic model presented here is also used to set the torque requirement of the actuator, and to estimate the power consumption for a given elastic behavior of the artificial skin and the optimal value of the link parameter