Abstract

The paper compares the performance of several methods used for the estimation of an image Jacobian matrix in uncalibrated model-free visual servoing. The comparison was carried out for an eye-in-hand configuration with small-amplitude movements and several sets of system parameters. The tested methods included the Broyden algorithm, Kalman and particle filters, and a recently proposed population-based algorithm. The algorithms were tested in a simulation environment (Peter Corke's Robotics Toolbox for MATLAB) on a PUMA 560 robot. Several application scenarios were considered, including static point and dynamic trajectory tracking, with several characteristic shapes and three different speeds. Based on the obtained results, conclusions were drawn about the strengths and weaknesses of each method, both for a particular setup and in general. Algorithm switching was introduced and explored, since it might be expected to improve overall robot tracking performance with respect to the desired trajectory. Finally, possible future research directions are suggested.

1. Introduction

The use of visual sensors in robot control loops has been discussed in the literature (and in practice) for a number of years [1, 2], since computer vision sensors provide information-rich signals which are very useful, especially in unknown environments [3–7]. This paper focuses on an image-based visual servoing (IBVS) approach in which control error is defined in the image plane, enabling the use of an uncalibrated and model-free approach [8, 9]. To achieve this, a Jacobian matrix needs to be calculated or estimated in order to relate changes in the camera coordinate frame (visual changes) to changes in the robot coordinate frame (robot angle changes). Since the early 2000s, several approaches to image Jacobian matrix estimation have emerged based on nonlinear optimization algorithms [8–12], which are a significant improvement on traditional methods for Jacobian estimation.

The large body of work produced and the numerous available methods might suggest that the problem of visual servoing is trivial, but implementation for a specific setup or problem reveals the drawbacks (and advantages) of each individual method. There appears, however, to be no significant research comparing uncalibrated model-free image-based visual servoing methods (especially for small-amplitude movements). Additionally, the question of the integration of several estimation methods is still very much open. Thus, this paper presents the specific characteristics of several methods applied to a simulation problem (the positioning of an object in a specified position in space) and identifies the scenarios in which the methods provide the best results. This was achieved for an eye-in-hand configuration [13] with a PUMA 560 robot manipulator for the small-amplitude movements that are characteristic of high-precision tasks, something which has not been widely studied in the literature. The contributions of the manuscript can be summarized as follows:

A comparison and analysis of the performance of widely-used methods applied in uncalibrated and model-free IBVS on several characteristic trajectories (both static and dynamic) for small-amplitude movements.

The application and analysis of population- and particle filter-based visual servoing for an eye-in-hand configuration (sparsely represented in the literature).

The application of the Kolmogorov-Smirnov statistical test for the analysis of similarities between end-effector trajectories.

The discussion of the real-time integration of several IBVS algorithm outputs for the best adaptation of the control system to the environment.

The article is structured as follows. Section 2 contains a brief literature review which highlights the current state of the art and identifies possible room for improvement. Section 3 presents the experimental design used for simulation, and introduces the reader to the image Jacobian matrix and the estimation algorithms used. The results are presented and discussed in Section 4 with respect to the evaluation parameters introduced in Section 3. Finally, Section 5 concludes and suggests possible future research directions.

2. Image-based visual servoing – A brief literature review

Visual servoing for robots has received significant attention since the late-1990s [7], and so the available literature on the subject is significant and goes well beyond the scope of this paper. The research field can be divided according to two main categories [14–17]: PBVS (position-based visual servoing, also known as 3D visual servoing), and IBVS (also known as 2D visual servoing). It should be noted that a third (hybrid) approach also exists which is a combination of these two approaches [18]. A comparison of PBVS and IBVS methods is provided in [17]. The authors were concerned with three fundamental characteristics of two approaches to visual servoing: stability, robustness and sensitivity. It was shown that both approaches are locally asymptotically stable with no modelling errors, and are locally robust to such errors. However, they could not handle large motion commands. Trajectories as well as convergence rates were affected by all types of error. With regard to sensitivity, the two approaches showed the same trends, depending upon the type of reference.

Because of the advantages which IBVS offers, such as definition of control error in terms of image features and robustness to system modelling and image measurement errors [14, 19–24], it is an interesting robot control tool. IBVS includes a special subset where no camera calibration or knowledge of an exact system model is needed (i.e., the approach is model-free), and it offers additional flexibility, especially in unknown and unstructured environments [8, 9]. An additional division can be made based on the procedure used for the calculation of an image Jacobian [9, 17]. This type of visual servoing problem can be formulated as a nonlinear least squares problem in which the goal function (error) needs to be minimized. The first application of image Jacobian estimation was proposed in Hosoda et al. [4], where a model-free but calibrated approach was adopted. One of the first efficient solutions for a model-free approach was offered by Jägersand et al. [5], who formulated the visual servoing problem as a nonlinear least squares problem solved by a quasi-Newton method with Broyden Jacobian estimation. Stability was ensured by the trust region method. Similar principles have also been applied for multiple camera model-based 3D visual servoing [6]. If the target is moving, the error is not only a function of the robot poses but also of the poses of the moving object. Consequently, Piepmeier [12, 20] suggested the use of a dynamic quasi-Newton uncalibrated model-free method with a recursive estimation scheme for Jacobian calculation. This estimation involved integrated velocity prediction and correction. The velocity prediction and correction term was calculated as the time derivative of target motion, which attempted to compensate for that motion and in turn resulted in better tracking performance. The proposed method was tested with a 6DOF PUMA robot.

Recently, the generalized secant method has been suggested [8] as best fitting the model of a nonlinear system. The method stores data from a certain number of data points in the past and uses them in future decision-making, which has favourable effects on overall visual servoing performance. Hao and Sun in [9] proposed a universal state space approach after examining several approaches to the estimation of an image Jacobian, including a population-based method. This was motivated by the desire to address the sensitivity to noise and error of the Broyden approach. They argue that their universal state space approach provides a standardized framework into which all approaches to uncalibrated model-free visual servoing can be fed, and that it provides a framework for the identification of connections between different approaches. It is interesting to note that they conclude that a variant of the population-based method with 20 members obtained the best results for dynamical tracking. This work is close to the subject of our study, but since the authors were primarily concerned with a unified state space framework, performance comparison is not fully explored, especially for small-amplitude movements. Thus, our work expands on that of [9].

Another class of methods relies on estimators like (extended) Kalman and particle filters for the estimation of elements in an image Jacobian matrix [25, 26]. The advantage of this kind of approach (besides the computational efficiency of Kalman filters and the power of particle filters) is the existence of a covariance matrix which provides the algorithm with confidence in its estimate, thus enabling the inclusion of innovative data-fusion approaches to visual servoing. Kalman filters can also be used for the estimation of object motion in an image, thus improving tracking accuracy [27, 28]. One known issue with Kalman filters is the need for knowledge of process and measurement noise statistics, which are in general neither known nor constant. Thus, an adaptive Kalman filter [27, 28] is employed which tries to adapt these statistics in order to maximize estimation accuracy. Another way to improve the performance of a Kalman filter is to use motion-primitive models [29] and a strategy for switching between them using validation gates implemented by nonlinear least squares fitting. In that paper, only two primitives were used (linear and circular motion), but the authors stress that this was enough to construct complex trajectories. They go on to state that additional primitive models can easily be added.

Since these methods update the image Jacobian matrix according to changes in image features, the question arises as to how the initial matrix is estimated. This is done with exploratory movements [6], whereby the robot is moved by very small and known increments (joint-by-joint; all other joints are kept constant in the particular case). On the basis of the recorded changes in image features, the initial Jacobian matrix is calculated. The stability of the estimation method has been proved [4, 11] to depend significantly on the accurate estimation of this initial matrix. When choosing image features to track, one should bear in mind possible instability because of the singularity of the Jacobian matrix, as shown in [30–32]. This is the reason why the desired and target shapes in [12, 19] do not fully match. Sutanto et al. [6] originally examined deterioration in an image Jacobian matrix due to the assumption of a constant matrix for N robot movements (an assumption made in order to reduce computational demands). This situation is especially pronounced when several consecutive joints are moved in the same direction (which is a common occurrence). Sometimes, it is also necessary to take into account the specifics of the hardware used, as was the case in [14] where epipolar constraints and/or a fundamental matrix were taken into account (for stereovision), thus increasing the robustness of the method. This was verified on a 3DOF system.

The literature review reveals that there is no significant research on uncalibrated model-free image-based visual servoing methods (an exception is [9]) for small-amplitude movements which compares traditional approaches with some rarely-used or novel approaches. Additionally, the question of the possible integration of several methods to provide more reliable and accurate estimates is still very much open.

3. Materials and methods

3.1. Experimental setup

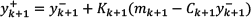

In the simulation study, a 6DOF Puma 560 industrial robot was used for the experiments. This was achieved with the Robotics and Machine Vision Toolbox for MATLAB created by Peter Corke [33]. This toolbox is widely recognized in the visual servoing community and has been used in a number of previous papers [9, 12, 20, 32]. An eye-in-hand configuration was used in such a way that the manipulator end-gripper and camera coordinate frames coincided. In this way, processing was simplified and generality of discussion maintained. Figure 1 depicts an example of the robot setup and its pose during simulation, as well as current and desired image feature positions.

Simulation setup: a) 3D view of the robot, b) 6DOF Puma 560 robot manipulator in the simulation environment, c) view from the eye-in-hand camera comparing the current pose (black) and the desired pose (grey).

From Figure 1c, it can be seen that the four points on the end-effector (i.e., image features) do not constitute a rectangle because of possible singularity issues, as suggested in [25, 30, 31]. The target was positioned (and moved) mainly in the Y-Z plane, as defined in Figure 1b. The camera parameters were set to the following values: image plane size 512×512 pixels, focal length f = 8 mm and pixel projection ratio P = 60 pixel/mm. The camera sampling time was set to 0.02 s, while the visual servoing algorithms and the simulation were run with a 0.01 s sampling time. Thus, rate transition blocks were inserted before (zero-order hold) and after (unit delay) the camera.
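The quoted camera parameters can be turned into a focal length in pixels and a simple pinhole projection. The following is only a minimal sketch: placing the principal point at the image centre is an assumption (the text does not state it), and the function names are hypothetical rather than taken from the toolbox.

```python
# Camera parameters quoted in the text.
FOCAL_LENGTH_MM = 8.0      # f
PIXELS_PER_MM = 60.0       # P
IMAGE_SIZE = (512, 512)    # pixels

# Assumption: the principal point sits at the image centre.
PRINCIPAL_POINT = (IMAGE_SIZE[0] / 2.0, IMAGE_SIZE[1] / 2.0)


def focal_length_pixels():
    """Focal length expressed in pixels: f * P."""
    return FOCAL_LENGTH_MM * PIXELS_PER_MM


def project(point_camera):
    """Project a 3D point given in the camera frame to pixel coordinates.

    Only the ratios x/z and y/z matter, so any consistent length unit works.
    """
    x, y, z = point_camera
    if z <= 0:
        raise ValueError("point must lie in front of the camera")
    f_px = focal_length_pixels()
    u = f_px * x / z + PRINCIPAL_POINT[0]
    v = f_px * y / z + PRINCIPAL_POINT[1]
    return u, v
```

With these values the focal length is 8 × 60 = 480 pixels, so a point on the optical axis projects to the assumed image centre (256, 256).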

Two types of scenarios were explored: static target-tracking and dynamic target-tracking. In both cases, zero-mean Gaussian noise was added with variances of σ² = 0 (i.e., no noise), σ² = 0.5 and σ² = 1. Noise was added to the measurement of the image features. The static case demonstrated the algorithm's convergence and steady-state performance parameters. In the dynamic case, three characteristic trajectories were chosen (a diagonal line, a rectangle and a circle) in order to test the algorithm's tracking capabilities, robustness in terms of prediction bias and performance subject to abrupt changes. The influence of the target speed was also analysed, and thus each trajectory was simulated at three speeds (in the image plane): 10 pixels/s, 20 pixels/s and 30 pixels/s. Additionally, in every case performance was tested with and without velocity prediction and correction. Four online image Jacobian matrix estimators were used (the Broyden method, a Kalman filter, a particle filter and a population-based method) along with the analytical calculation of the matrix as the reference. Thus, in total 5×3×2 (static case: number of methods, noise levels, velocity prediction and correction) + 5×3×3×2 (dynamic case: number of methods, target speed levels, number of noise levels, velocity prediction and correction) = 120 simulation runs were performed in this study.
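The run count quoted above can be checked by enumerating the factor combinations. This is a small sketch that follows the stated formula literally, so the dynamic grid is enumerated once, exactly as 5×3×3×2 is written in the text; the method abbreviations are those used later in the table captions.

```python
from itertools import product

methods = ["MOD", "BRO", "POP", "KAL", "PAR"]   # model plus four estimators
noise_variances = [0.0, 0.5, 1.0]
vpc_options = [False, True]                     # without / with VPC
target_speeds = [10, 20, 30]                    # pixels/s, dynamic case only

# Static case: methods x noise levels x VPC.
static_runs = list(product(methods, noise_variances, vpc_options))

# Dynamic case: methods x target speeds x noise levels x VPC.
dynamic_runs = list(product(methods, target_speeds, noise_variances, vpc_options))

total_runs = len(static_runs) + len(dynamic_runs)
```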

The initial image Jacobian matrix estimation was based on [6], where small, exploratory movements of each individual robot joint (while other joints were kept constant) were made. In this manner, the correspondence between each joint displacement and the displacement of image features was established. This step needs to be executed carefully, since errors can make visual servoing – regardless of the method used – unstable. Since the Puma 560 has six joints, six exploratory steps were executed, each providing two elements (one for the x axis and one for the y axis) of the Jacobian matrix. This can be written formally for a single image feature as:

where
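The exploratory-movement procedure described above amounts to a column-by-column finite-difference estimate. The sketch below illustrates the idea on a toy linear feature map. `features_of`, `TRUE_J` and the step size are hypothetical stand-ins, not the simulation's actual camera model or values.

```python
def initial_jacobian(features_of, theta0, step=1e-3):
    """Estimate the image Jacobian with one small exploratory move per joint."""
    f0 = features_of(theta0)
    m, n = len(f0), len(theta0)
    jacobian = [[0.0] * n for _ in range(m)]
    for j in range(n):
        theta = list(theta0)
        theta[j] += step                 # move joint j only, keep the others fixed
        f1 = features_of(theta)
        for i in range(m):
            # Column j: change in each feature coordinate per unit joint change.
            jacobian[i][j] = (f1[i] - f0[i]) / step
    return jacobian


# Toy stand-in for one image feature (x and y) seen by a six-joint robot.
TRUE_J = [[1.0, 0.5, 0.0, -0.2, 0.3, 0.1],
          [0.0, 2.0, -1.0, 0.4, 0.0, 0.7]]


def toy_features(theta):
    return [sum(a * t for a, t in zip(row, theta)) for row in TRUE_J]


J0 = initial_jacobian(toy_features, [0.0] * 6)
```

For a single feature and six joints the result is a 2×6 matrix, with each exploratory step filling one column (one x element and one y element).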

3.2. Image Jacobian estimation algorithms

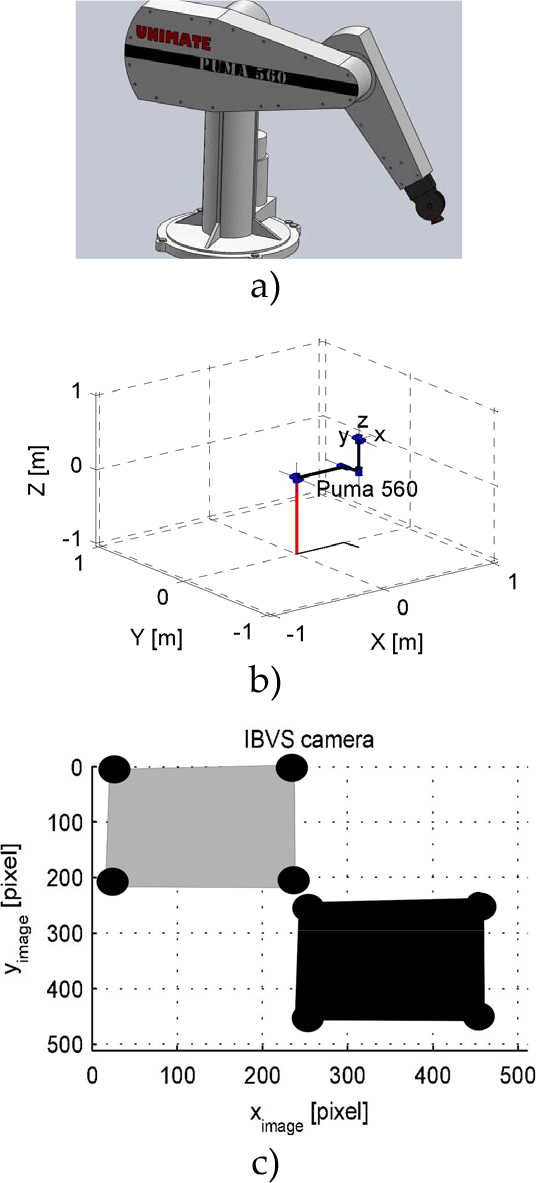

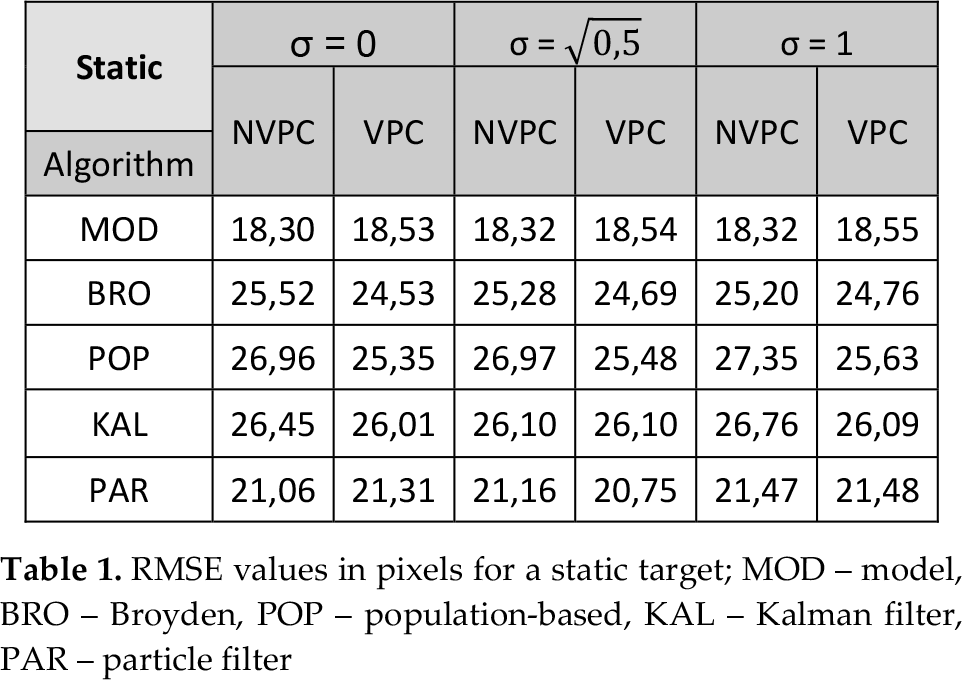

The general principle of IBVS control is depicted in Figure 2, although in the study itself joint angular velocities rather than joint angles were used for control. As can be seen from the figure, the visual servo controller takes as inputs the differences in image features and robot joint angles between two consecutive time instances and, according to a predefined optimization/estimation algorithm, provides an image Jacobian matrix. Please note that some image processing algorithms are needed (e.g., feature-tracking algorithms like the Lucas-Kanade algorithm [34]) to obtain the image feature vector. The Jacobian matrix is used to define changes in the joint angles' values necessary to minimize the residual error function (i.e., to get closer to the desired target). For the eye-in-hand configuration, the residual error function is defined as:

A general IBVS control scheme, where: f d denotes the desired image feature values, f denotes the current image feature values, Δf denotes the change in image feature values between two consecutive frames, ∆θ robot denotes the required change in robot angle values, θ denotes the current robot angle value's vector, ∆θ denotes the actual change in robot angle values between two consecutive time instances, and (xi, yi) are the coordinates of respective robot joints in the world coordinate frame.

A robot kinematics block was used to reconstruct the x and y coordinates of each individual joint/segment based on the robot joint angles. In the simulation study, forward kinematics was used as depicted in Figure 3 (which was later adapted to include rate transition blocks). In practice, this block is not needed (except in cases where analytical calculation of the image Jacobian is performed). It was used to simulate the real robot movement, something which is readily available in practical applications. Also, please note the integrator in the loop for the transition from speed control to position control. In the study, a proportional controller was used, such that the control signal could be defined as:

Simulation scheme detail (from Peter Corke's Toolbox [33])

where

The multiplication of two Jacobian matrices produces a total image Jacobian matrix (which, in this paper, is simply referred to as an ‘image Jacobian matrix’), which must be known in order to generate a speed control signal.
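A proportional law of this kind can be sketched as a least-squares solve followed by an integration step. The control equation itself is not reproduced in this text, so the error sign convention (desired minus current), the gain value and the 0.01 s step used below are assumptions, and the normal-equation solve stands in for a pseudo-inverse under the additional assumption that the Jacobian has full column rank.

```python
def solve(a, b):
    """Solve the square system a x = b by Gaussian elimination with pivoting."""
    n = len(b)
    aug = [list(row) + [rhs] for row, rhs in zip(a, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(aug[r][col]))
        if abs(aug[pivot][col]) < 1e-12:
            raise ValueError("Jacobian is rank deficient")
        aug[col], aug[pivot] = aug[pivot], aug[col]
        for r in range(col + 1, n):
            factor = aug[r][col] / aug[col][col]
            for c in range(col, n + 1):
                aug[r][c] -= factor * aug[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        s = sum(aug[r][c] * x[c] for c in range(r + 1, n))
        x[r] = (aug[r][n] - s) / aug[r][r]
    return x


def joint_velocity(jacobian, f_desired, f_current, gain):
    """Proportional law: theta_dot = gain * pinv(J) * (f_desired - f_current)."""
    m, n = len(jacobian), len(jacobian[0])
    error = [d - c for d, c in zip(f_desired, f_current)]
    # Normal equations (J^T J) x = J^T e give the least-squares solution.
    jt_j = [[sum(jacobian[k][i] * jacobian[k][j] for k in range(m))
             for j in range(n)] for i in range(n)]
    jt_e = [sum(jacobian[k][i] * error[k] for k in range(m)) for i in range(n)]
    return [gain * v for v in solve(jt_j, jt_e)]


# Toy example: three feature coordinates, two joints.
J = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
theta = [0.0, 0.0]
theta_dot = joint_velocity(J, [0.2, -0.1, 0.1], [0.0, 0.0, 0.0], gain=1.0)
# Integrator: speed command to joint position over one 0.01 s controller step.
theta_next = [t + 0.01 * v for t, v in zip(theta, theta_dot)]
```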

The algorithm chosen for the image Jacobian matrix estimation defines the accuracy and reliability of the overall control algorithm as well as its computational demands. In the paper, four algorithms were selected for the estimation and one for the analytical calculation of the image Jacobian matrix. The inclusion of the Broyden method was motivated by the fact that it can be considered a standard method for uncalibrated visual servoing without a model, and population-based visual servoing was included as a more recent addition to the field. Kalman and particle filter-based methods were introduced as methods only rarely used in uncalibrated visual servoing, especially for eye-in-hand configurations. It should be noted that these two methods are often used for the prediction of target movement and target-tracking in image planes. An analytical method was included in the study because it represents a reference method and thus provides a means for comparison. The parameters for each of the estimation methods were chosen experimentally, so there is no guarantee that they are optimal. The determination of optimal parameters is an active research field with regard to some algorithms (e.g., adaptive Kalman filters), but these have not yet been fully explored in others (e.g., for population size and update functions).

3.2.1. Analytical estimation of the Jacobian matrix

If the forward kinematics model of the robot is known, the end-effector position in the world coordinate frame can be calculated as in equations (3) and (4). This in turn, along with any change in the image feature vector, enables the analytical calculation of the image Jacobian as:

It should be noted that the calculation of the exact value of the image Jacobian matrix is not a trivial task, as real-time calculation is generally too computationally demanding.
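For orientation, a model-based image Jacobian for point features is commonly assembled from the classical point-feature interaction matrix and the robot Jacobian. The sketch below uses that textbook form in normalised image coordinates. It is an assumption that this corresponds to the expression used in the study, and the function names are hypothetical.

```python
def point_interaction_matrix(x, y, depth):
    """2x6 interaction matrix of a point feature (normalised coordinates).

    Columns correspond to the camera twist (vx, vy, vz, wx, wy, wz).
    """
    if depth <= 0:
        raise ValueError("depth must be positive")
    return [
        [-1.0 / depth, 0.0, x / depth, x * y, -(1.0 + x * x), y],
        [0.0, -1.0 / depth, y / depth, 1.0 + y * y, -x * y, -x],
    ]


def matmul(a, b):
    """Plain matrix product of two nested-list matrices."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b)))
             for j in range(len(b[0]))] for i in range(len(a))]


def total_image_jacobian(interaction, robot_jacobian):
    """Feature velocity per joint velocity: interaction matrix times robot Jacobian."""
    return matmul(interaction, robot_jacobian)
```

Here `robot_jacobian` is assumed to be the 6×6 matrix that maps joint velocities to the camera twist expressed in the camera frame, which is what the eye-in-hand setup with coinciding gripper and camera frames would provide.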

3.2.2. The Broyden method

From equation (2), the quadratic weighting function can be defined as:

which can then be used for expansion in the Taylor series around

From equation (7), it is evident that

From equation (8), the main idea of Broyden's approach can be identified: the full Jacobian matrix is estimated only once at the beginning of the experiment, whereas in subsequent iterations a rank-one update [9] is performed. However, when a dynamic target is tracked, one has to take into account velocity prediction and correction. Since, in general, a Jacobian matrix defines the relation between the speed vectors of the image features and the robot angles, the total time derivative of equation (2) can be written in discrete form as:

The main disadvantage of Broyden's method is its high sensitivity to noise, which can even make the whole system unstable, while its main advantages are simplicity and computational efficiency. It is worth emphasizing that in this paper the standard Broyden method has been adapted in order to ensure Broyden matrix convergence: an adaptation constant α [9] multiplies the update part of equation (9) (i.e., the second term in the equation). In the study, α was set to 0.15.
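The damped rank-one update can be illustrated with a short sketch. Equation (9) is not reproduced in this text, so the code uses the textbook Broyden secant update for a static target, scaled by α, and omits the velocity prediction and correction term. The function name is hypothetical.

```python
ALPHA = 0.15  # adaptation constant quoted in the text


def broyden_update(jacobian, d_theta, d_f, alpha=ALPHA):
    """Return J + alpha * (d_f - J d_theta) d_theta^T / (d_theta^T d_theta)."""
    denom = sum(t * t for t in d_theta)
    if denom < 1e-12:
        # No joint motion: nothing can be learned, keep the estimate.
        return [row[:] for row in jacobian]
    updated = []
    for row, df_i in zip(jacobian, d_f):
        predicted = sum(j * t for j, t in zip(row, d_theta))
        residual = df_i - predicted      # feature change not explained by J
        updated.append([j + alpha * residual * t / denom
                        for j, t in zip(row, d_theta)])
    return updated
```

With α = 1 the updated matrix reproduces the observed feature change exactly (the secant condition). A smaller α such as 0.15 moves the estimate only part of the way, which dampens the effect of a single noisy measurement.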

3.2.3. The population-based method

If Broyden's approach is expanded in such a way that several Broyden estimates are weighted and used to update the Jacobian matrix, then a significant improvement (especially in terms of robustness to noise) can be obtained, as was demonstrated in [8, 9]. The algorithm is relatively new in the field of visual servoing, especially in relation to eye-in-hand configurations. In this approach, the image Jacobian matrix updates are based on equation:

where

Although the population method has proved to be significantly better than the Broyden method in terms of numerical stability, its computational demands are higher depending on the population size. For the purposes of comparison, the computational complexity of the Broyden method is proportional to O(m×n), whereas the computational complexity of the population method is proportional to O(m×n×p). Thus, this method might not be feasible for multiple camera systems or systems with a high degree of freedom.
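One way to picture the difference in cost is a weighted combination of rank-one Broyden corrections computed from several stored movement pairs. The update equation of the study is not reproduced in this text, so the sketch below is only one plausible reading. The history buffer, the weights and the function name are hypothetical, and m, n and p are taken here to mean the number of feature coordinates, joints and population members.

```python
def population_update(jacobian, history, weights, alpha=0.15):
    """Blend the Broyden corrections from several (d_theta, d_f) pairs.

    history: list of p pairs (d_theta, d_f)
    weights: p non-negative weights, assumed to sum to 1
    """
    m, n = len(jacobian), len(jacobian[0])
    correction = [[0.0] * n for _ in range(m)]
    for (d_theta, d_f), w in zip(history, weights):   # p members
        denom = sum(t * t for t in d_theta)
        if denom < 1e-12:
            continue                                   # skip zero-motion pairs
        for i in range(m):                             # m feature coordinates
            predicted = sum(jacobian[i][k] * d_theta[k] for k in range(n))
            residual = d_f[i] - predicted
            for k in range(n):                         # n joints
                correction[i][k] += w * residual * d_theta[k] / denom
    return [[jacobian[i][k] + alpha * correction[i][k] for k in range(n)]
            for i in range(m)]
```

The three nested loops make the m×n×p cost visible: each population member contributes one full m×n correction, whereas a single Broyden step computes only one.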

3.2.4. The Kalman filter

The Kalman filter [35] is an iterative estimation algorithm intended for the state estimation of linear systems, in general requiring a model in order to generate a priori estimates through a time-update equation. Since the study is concerned with uncalibrated image-based visual servoing without a model, a time update equation can be simply formulated as a random walk by:

where

where

When measurements become available, the time update step is followed by a measurement update step. In the study, the measurement update was achieved with a lower sampling frequency (50 Hz) than the process sampling frequency (100 Hz) on the assumption that the computer's working frequency (on which the control algorithm was running) was higher than the camera sampling rate. The measurement update was used to correct the initial state vector estimate and provide an a posteriori estimate with lower variance. This was achieved with equation:

where

where
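The two-rate structure described above (time update at 100 Hz, measurement update at 50 Hz) can be sketched for a single row of the Jacobian. Treating each row separately with a scalar measurement is a simplification chosen here to avoid a matrix inverse, whereas the study stacks all elements into one state vector. The noise values and the toy data are assumptions.

```python
def time_update(x, p, q):
    """Random-walk prediction: state unchanged, covariance grows by q."""
    n = len(x)
    p_prior = [[p[i][j] + (q if i == j else 0.0) for j in range(n)]
               for i in range(n)]
    return list(x), p_prior


def measurement_update(x, p, d_theta, d_f_i, r):
    """Correct one Jacobian row from a scalar measurement d_f_i = d_theta . x."""
    n = len(x)
    ph = [sum(p[i][j] * d_theta[j] for j in range(n)) for i in range(n)]
    s = sum(d_theta[i] * ph[i] for i in range(n)) + r   # innovation variance
    gain = [v / s for v in ph]
    innovation = d_f_i - sum(d_theta[i] * x[i] for i in range(n))
    x_post = [x[i] + gain[i] * innovation for i in range(n)]
    p_post = [[p[i][j] - gain[i] * ph[j] for j in range(n)] for i in range(n)]
    return x_post, p_post


# Toy run: recover a two-element Jacobian row from noise-free measurements.
true_row = [2.0, -1.0]
moves = [[0.1, 0.0], [0.0, 0.1]]
x, p = [0.0, 0.0], [[1.0, 0.0], [0.0, 1.0]]
for tick in range(40):                      # controller ticks (100 Hz)
    x, p = time_update(x, p, q=1e-6)
    if tick % 2 == 0:                       # camera frame every second tick (50 Hz)
        d_theta = moves[(tick // 2) % 2]
        z = sum(a * b for a, b in zip(true_row, d_theta))
        x, p = measurement_update(x, p, d_theta, z, r=1e-6)
```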

3.2.5. The particle filter

In certain application scenarios when Kalman filter assumptions are violated, particle filter estimation is more appropriate. The theoretical and mathematical derivation of particle filters is well documented in the literature [36], and will thus be omitted. The state vector of the particle filter was the same as for the Kalman filter, with dimensions of 48×1. For each of 48 elements, 100 particles were generated. This in turn resulted in a total of 4,800 particles per iteration, which makes considerable computational demands on the hardware.
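The state dimension and particle count follow directly from the setup: four image features, two coordinates each and six joints. The short sketch below spells out that arithmetic and one possible mapping between the 8×6 Jacobian and the 48×1 state vector. Row-major ordering is an assumption, since the text does not specify the element order.

```python
NUM_FEATURES = 4            # tracked points on the target
COORDS_PER_FEATURE = 2      # x and y in the image plane
NUM_JOINTS = 6              # Puma 560
PARTICLES_PER_ELEMENT = 100

rows = NUM_FEATURES * COORDS_PER_FEATURE                # 8
state_size = rows * NUM_JOINTS                          # 48
total_particles = state_size * PARTICLES_PER_ELEMENT    # 4800


def flatten(jacobian):
    """Stack the Jacobian rows into one state vector (row-major, assumed)."""
    return [value for row in jacobian for value in row]


def unflatten(state, n_cols=NUM_JOINTS):
    """Rebuild the Jacobian from the state vector."""
    return [state[i:i + n_cols] for i in range(0, len(state), n_cols)]
```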

In the initial iteration, the particles were drawn from a uniform distribution with the same value as was used with the Kalman filter and based on procedures from [36]. The weighted sampling of particle values was derived from the proposal distribution, which is often called the ‘weighting function’, defined as the last known (a priori) distribution. This distribution was based on an available model which, as in the case of the Kalman filter, was a random walk defined for every particle as in equation (11). This is called a ‘prediction step’. In the next step (which is often called ‘correction’), the weight w for every particle is calculated from the available measurements with equation:

where

This value was then thresholded by means of a simple threshold value

3.3. Evaluation methods

In order to evaluate and compare the performance of applied algorithms objectively, three evaluation criteria were applied:

The root mean square error (RMSE), which was used to measure how close the current and desired image features were, defined by equation:

Jerk (JRK), which was used to measure the rate of change in the angular acceleration of individual joints and was defined by equation:

A 2D paired (two-sample) Kolmogorov-Smirnov test, used to determine whether two data sets arise from the same distribution, the null hypothesis being that both data sets are drawn from the same distribution [38]. This test answers the question “Are two trajectories statistically different?” and can therefore be used to supplement the RMSE data. An algorithm can be slow to track a dynamic target (thus having a higher RMSE value) and still fit the desired trajectory very well (essentially displaying a time-delay effect), which the test reflects by finding no statistically significant difference between the two trajectories. However, it should be noted that there is controversy about the approach in terms of its possible bias [39]. Since the test can only be performed on two samples at a time, a total of 1,360 individual tests were performed. In order to maintain a conservative interpretation of the data, we opted for the multiple test adjustment of p-values using the Bonferroni-Holm method. This was performed for each individual test group under consideration (e.g., we compared test pairs in the model-based approach with different noise levels and the existence of VPC) rather than for all 1,360 tests at once. In every test, a 5% significance level was applied.
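The three criteria can be sketched in a few lines. The defining equations are not reproduced in this text, so the RMSE averaging (over all samples and feature coordinates) and the finite-difference form of the jerk are assumptions; the Holm step-down adjustment follows the standard procedure. The Kolmogorov-Smirnov test itself is not implemented here, only the adjustment applied to its p-values.

```python
import math


def rmse(current, desired):
    """Root mean square error over all time samples and feature coordinates."""
    squared = [(c - d) ** 2
               for cur, des in zip(current, desired)
               for c, d in zip(cur, des)]
    return math.sqrt(sum(squared) / len(squared))


def jerk(angles, dt):
    """Third finite difference of one joint's angle samples."""
    return [(angles[k + 3] - 3 * angles[k + 2] + 3 * angles[k + 1] - angles[k])
            / dt ** 3
            for k in range(len(angles) - 3)]


def holm_adjust(p_values):
    """Bonferroni-Holm adjusted p-values, returned in the original order."""
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])
    adjusted = [0.0] * m
    running_max = 0.0
    for rank, idx in enumerate(order):
        candidate = min(1.0, (m - rank) * p_values[idx])
        running_max = max(running_max, candidate)   # keep adjusted values monotone
        adjusted[idx] = running_max
    return adjusted


def significant(p_values, level=0.05):
    """Flag the tests in one group that remain significant after adjustment."""
    return [p < level for p in holm_adjust(p_values)]
```

Applying `holm_adjust` separately to each test group, rather than to all tests at once, mirrors the grouping described in the text.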

4. Simulation results and discussion

4.1. RMSE performance

As expected, in all the experimental setups, the calculation of the image Jacobian matrix based on the robot model produced the best results compared with the estimation methods in both static and dynamic cases. It is interesting to note that the model approach did not benefit from velocity prediction. All the other methods generally benefited from the inclusion of velocity prediction and correction.

4.1.1. Static target-tracking

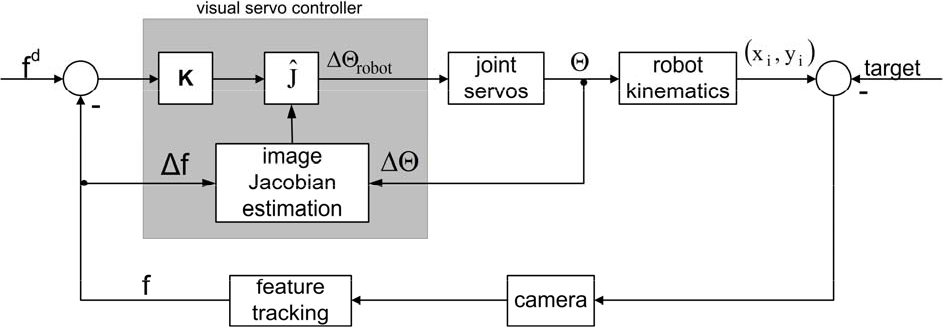

With respect to the velocity prediction and correction algorithm, the population-based method seems to be the most sensitive. This can be seen in Table 1 (NVPC – No Velocity Prediction and Correction; VPC – with Velocity Prediction and Correction implemented as suggested in [9, 12, 20]; σ is the standard deviation of the zero-mean Gaussian noise). If Table 1 is examined more closely, several additional observations can be made. First, the absolute value of the error is relatively large, but this is due to the initial target distance. This prevents the direct comparison of the obtained values with similar research in the literature, although a comparison of RMSE value trends across the different methods is still possible. The introduction of noise degrades the accuracy of all the algorithms, but to varying degrees: the degradation was lowest for the model approach and highest for the population-based approach. The particle filter exhibited the best performance of all the estimation algorithms in all cases. Consequently, the particle filter would be a logical choice when high accuracy is needed. However, in the current particle filter setup, it takes up to six times longer to compute a Jacobian matrix compared with the other methods, making real-time application more challenging. Improvements to the basic algorithm and, possibly, a reduction in the number of particles should reduce computational time.

RMSE values in pixels for a static target; MOD – model, BRO – Broyden, POP – population-based, KAL – Kalman filter, PAR – particle filter.

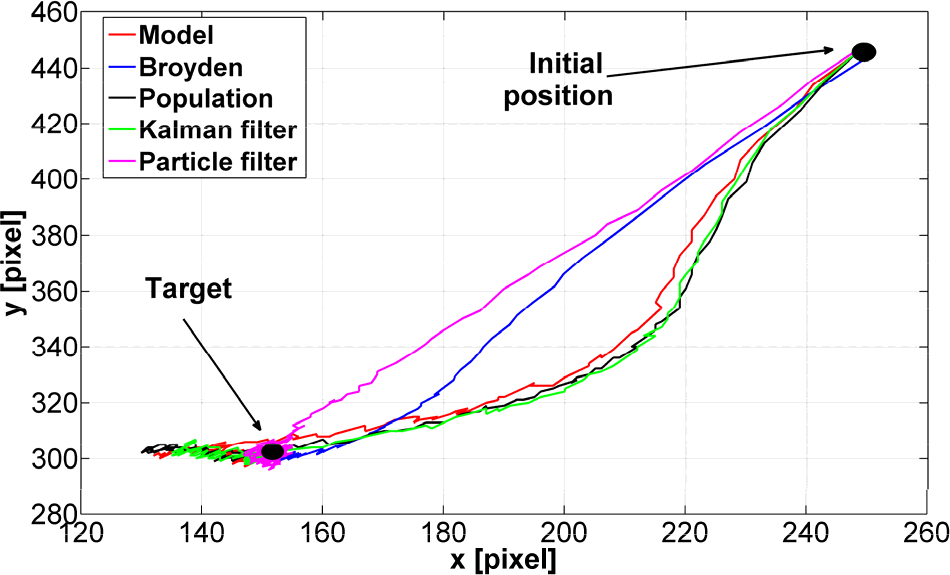

The comparison of the Broyden, population-based and Kalman filter algorithms for static targets showed that the Broyden approach is the most sensible. The differences presented in the table have small absolute values, but it should be kept in mind that this is a simulation and that our experience from previous research [8] suggests that differences are usually amplified in practical applications. It is interesting, visually, to compare the performance of different algorithms, as depicted in Figure 4. However, it should be kept in mind that the RMSE values capture error for all four tracked image features whereas the trajectories presented in Figure 4 pertain only to a single feature.

Comparison of the 2D trajectory of one feature for the static target case with σ² = 0.5 and VPC

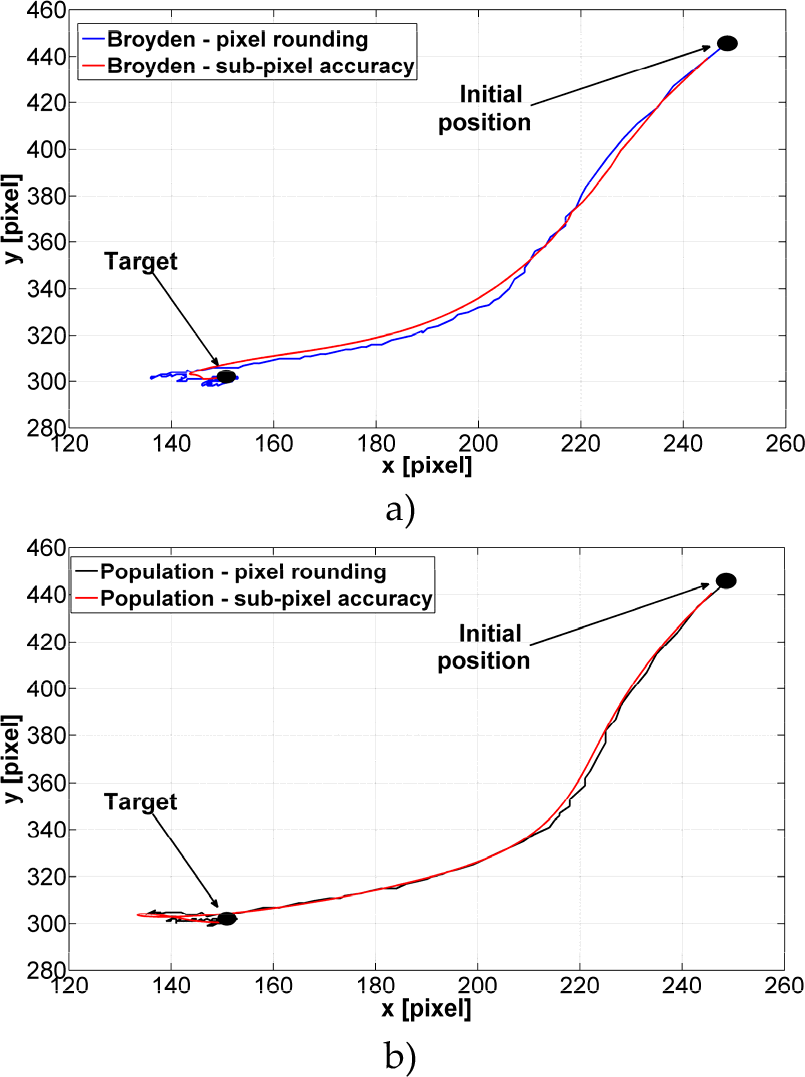

Figure 4 provides several additional interesting observations. The particle filter and model-based methods take a more direct approach to the target with little or no overshoot, whereas the other algorithms have a more pronounced overshoot and an indirect approach. When looking at the Broyden, population-based and Kalman filter algorithms, one can see somewhat jagged trajectories. The reason for this is that pixel rounding was used (in conjunction with different sampling times for the controller and camera).

If a sub-pixel accuracy algorithm is employed, a smoother trajectory is obtained – as depicted in Figure 5 – although overshoot is still present. The positive effect of the sub-pixel accuracy algorithm in the case of fine movements toward a static target can also be seen in the obtained RMSE values, which are in every case lower. The improvement varies depending upon the method used, but is generally within the RMSE range of one to two pixels. This is depicted in the bar chart in Figure 6.

Comparison of the 2D trajectories of the a) Broyden and b) population-based algorithms for one feature with and without a sub-pixel accuracy algorithm for σ² = 0 and VPC.

Comparison of RMSE values for all methods with and without a sub-pixel accuracy algorithm for σ² = 0 and VPC

4.1.2. Dynamic target-tracking

Owing to article-space constraints, rectangular target-tracking was selected as an illustrative example of trends in the RMSE values, since it contains both straight diagonal lines and abrupt changes in direction. The obtained results are presented in Table 2. Similar trends in the RMSE values were observed for all other dynamic target trajectories. Here, again, the unfavourable effect of velocity prediction and correction on the model-based method can be observed for all target speeds. The trends in the RMSE values are similar to those in the case of static targets, but with some significant differences. First, the particle filter is again the most accurate of the estimation methods, but again it takes significantly longer to execute. It should, however, be noted that the Broyden method outperforms all other estimation algorithms in the case of a slow-moving target with no added noise. As soon as noise is added, its error increases by between 15% and 24%, depending upon the measurement conditions. Other methods also exhibit a deterioration in accuracy with the introduction of noise, but it is not as significant, which highlights the Broyden algorithm's sensitivity to noise. Please note that the population-based method has the smallest increase in this particular case (a trend which can be observed in all other cases), of about 0.01%.

RMSE values in pixels for dynamic tracking (rectangular-shaped target trajectory)

It is also interesting to note that for faster-moving targets the Broyden algorithm in no longer best, even if no noise is present, though it outperforms the population-based and Kalman filter methods. However, the relative increase in the RMSE value is higher than with the other two methods. A population of a different size for population-based method might produce better results. Finding the optimal population size as well as the ideal weighing function is beyond the scope of this paper but is an interesting topic for future research. Additionally, the covariance matrices for the Kalman filter were not adapted to every noise level but were kept constant, which potentially reduced the algorithm's accuracy. The implementation of some type of adaptive mechanism would thus be beneficial. All of this suggests that the Broyden method cannot be recommended when a faster-moving dynamical target is to be tracked, whereas the Kalman filter and population-based methods seem to have similar performance parameter values – the Kalman filter being computationally more efficient. For the purposes of completeness and comparison, a cross-section of the RMSE values of all the methods over all the dynamic target trajectories for a speed of 20 pixels/s is given in Table 3 in the case of VPC and

RMSE values for all methods for all 20 pixels/s dynamic target trajectories in the case of VPC and σ² = 0.5 (L: diagonal line, R: rectangular, C: circle)

The visualization of the tracking results for a rectangular target with a speed of 20 pixels/s is presented in Figure 7a. Trajectory trends similar to those in the static case can be observed. The particle filter is closest to the model trajectory, although it deviates from it in several places, whereas the Broyden, Kalman and population-based methods have similar trajectories. In particular cases, all the trajectories are rather jittery (even the model-based one), which can be attributed to several factors: the presence of noise, pixel rounding, different sampling rates of the camera and controller, and small (fine) movements. Thus, our analysis could be considered a worst-case scenario. As in the static case, the introduction of sub-pixel accuracy would reduce or even eliminate this effect. To offer a better picture of the algorithms' performances for different trajectories, Figure 7b presents the tracking results for a target's circular trajectory. Trends similar to those in the case of the rectangular trajectory can be observed. In the dynamic case, it should be kept in mind that Figure 7 does not explicitly capture time dependency, which makes a direct comparison with the RMSE values somewhat difficult.

Comparison of the 2D trajectories of one feature for dynamic target-tracking for the case with a speed of 20 pixels/s with σ² = 0.5 and VPC for: a) rectangular target trajectory, and b) circular target trajectory.

4.2. JRK performance

In the context of jittery movements, it is interesting to examine the values and trends in the jerk signal and – possibly – to identify the algorithms which, despite having low RMSE values, might not be suitable for practical applications because of severe mechanical strain on robot joints/actuators. In order to make the analysis and comparison more practical, only the jerk values for the cases presented in subsection 4.1 will be presented here, although similar trends were observed for all the other measurement conditions.
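As an illustration of how a jerk value in rad/s³ could be obtained from sampled joint angles, the sketch below takes the third finite difference of each joint angle and averages its absolute value over time and over all joints. The averaging choice, the function name and the sample data are assumptions made for illustration; the exact jerk measure used in the study is not restated here.

```python
import numpy as np


def mean_abs_jerk(joint_angles, dt):
    """Mean absolute jerk (rad/s^3) of an (N, J) array of joint angles.

    joint_angles: N samples of J joint angles in radians, taken every dt seconds.
    The jerk is approximated by the third finite difference divided by dt**3
    and then averaged over time and over all joints (an assumed convention).
    """
    q = np.asarray(joint_angles, dtype=float)
    if q.shape[0] < 4:
        raise ValueError("at least four samples are needed for a third difference")
    jerk = np.diff(q, n=3, axis=0) / dt ** 3
    return float(np.mean(np.abs(jerk)))


# Hypothetical two-joint motion: q1(t) = t^3 and q2(t) = 2 t^3, whose third
# derivatives are the constants 6 and 12 rad/s^3 respectively.
dt = 0.1
t = np.arange(0.0, 1.0, dt)
q = np.column_stack([t ** 3, 2.0 * t ** 3])
print(mean_abs_jerk(q, dt))  # close to 9, the average of 6 and 12
```

Finite differencing amplifies measurement noise, so in practice the joint signals might need to be low-pass filtered before such a measure is computed.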

4.2.1. Static target-tracking

The data for static targets are presented in Table 4, the obtained values being within the ranges reported in the literature on robot control applications [40].

Jerk values in rad/s³ for static target.

Examination of the table reveals that for static cases the particle filter has by far the largest jerk values, although it is the best estimation algorithm. This suggests that the application of the particle filter for static targets might not be the best possible solution if both the RMSE and jerk values are considered. In our opinion, this can be attributed to the nature of the particle filter, which always (even in the vicinity of the actual target) casts a number of particles around the last estimate (amplified if roughening is applied); taking into account the fine nature of the motion under consideration, small oscillations around the target location can be expected (this is also illustrated in Figure 4). The model-based algorithm has the second-highest jerk values, which can be attributed to the fact that it quickly converges on the target location. In general, the population-based algorithm has the lowest jerk values, which was also noted for different robot configurations during experiments in [8]. It is interesting to observe how the robot joint angles change over time for each algorithm. For the third robot joint, this is depicted in Figure 8.

Comparison of robot third joint angles for the static case with σ² = 0.5 and VPC

In the figure, it can be seen that the two most accurate algorithms (model-based and particle filter) seem to employ tracking strategies that are slightly different from those of the remaining three methods, whose joint angles differ only slightly from one another. This difference is mainly in the form of local minima, which can be traced back to the overshoot in the 2D trajectory in Figure 4. Additionally, the existence of slight jitter in the model-based and particle filter signals confirms the data presented in Table 4. However, when comparing Figure 8 and the Table 4 data, one should exercise a certain level of caution, since the data in Table 4 are averaged across all robot joints, whereas Figure 8 pertains to only one robot joint (i.e., the other joints might have smaller or larger jerk values).

For the jerk parameter, we were also interested in whether sub-pixel accuracy would reduce (or increase) its values. As depicted in Figure 9, the inclusion of sub-pixel accuracy dramatically reduced the jerk values (please note that because of large amplitude differences, the y axis is presented in natural logarithmic form). Negative values on this axis indicate that in some cases the jerk value was lower than one. This again demonstrates the positive effects that sub-pixel accuracy can have on final robot performance and justifies its inclusion in the loop. These effects are even more dramatic if the robot joint angles are examined. In Figure 10, the joint angles for the robot's third joint are presented.

Comparison of jerk values for all methods with and without the sub-pixel accuracy algorithm for σ² = 0 and VPC

Comparison of robot third joint angles for the a) model-based and b) Kalman filter algorithms with and without the sub-pixel accuracy algorithm for σ² = 0 and VPC.

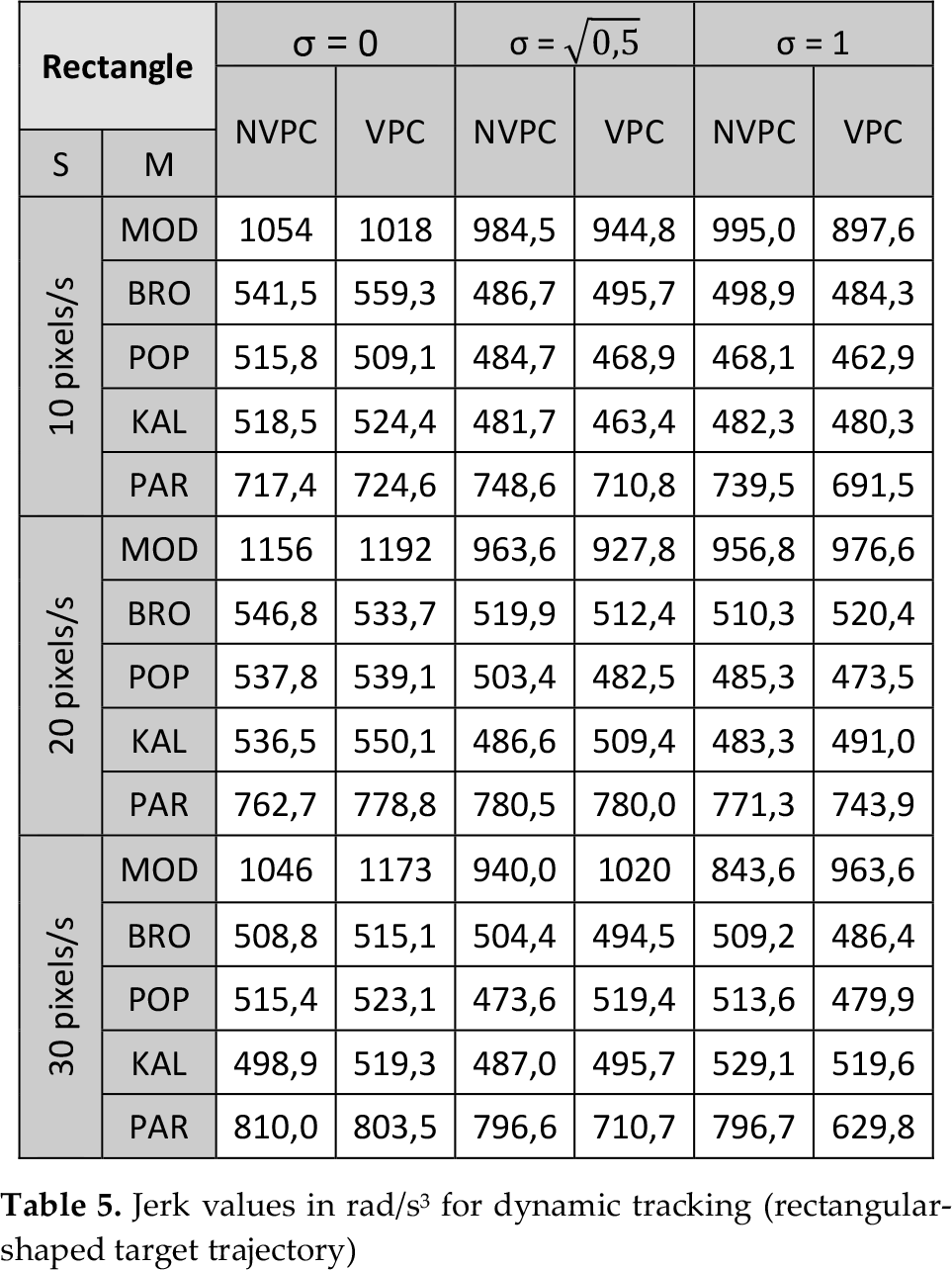

4.2.2. Dynamic target-tracking

If dynamic target-tracking is examined and the rectangle trajectory is selected as a representative example, then the data shown in Table 5 are obtained. It is interesting to note that, now, the model-based algorithm has the largest jerk values, which again could be attributed to its tracking speed. The particle filter has significantly smaller values than in the static case (supporting our explanation with respect to static targets), but they are still larger than in the case of the remaining three estimation algorithms. The population-based method again generally has the smallest jerk values, except with regard to high-speed targets (in which case, the Kalman filter and Broyden method would seem to be a better choice). This in turn suggests that the population-based method (at least in the current configuration) might be better suited for slower-moving targets. Looking at the third robot joint angle values in Figure 11, we can draw similar conclusions to those in the static case.

Comparison of robot third joint angles in dynamic target-tracking for a rectangular target trajectory and a speed of 20 pixels/s with σ² = 0.5 and VPC

Jerk values in rad/s³ for dynamic tracking (rectangular-shaped target trajectory)

The model-based and particle filter algorithms employ different dynamic target-tracking strategies from those employed by the remaining three estimation algorithms, which all have similar angle profiles. It is also interesting to note that although the model-based and particle filter approaches have dramatically different joint angles in the third robot joint, their feature trajectories in the image plane (as depicted in Figure 7) are not significantly different. Small oscillations in the trajectories of the model-based approach (especially around 12 s to 18 s) and the particle filter algorithm in a sense support the data in Table 5. As in the case of the RMSE values, a cross-section of the jerk values for all the estimation algorithms and all the dynamic target trajectories with a speed of 20 pixels/s with VPC and σ² = 0.5 is given in Table 6.

JRK values for all the methods, all with a speed of 20 pixels/s and dynamic target trajectories with VPC and σ² = 0.5

The fusion of all the obtained results suggests that it might prove beneficial to have a switching logic which would, depending upon the target trajectory shape (and taking into account the convergence rate of every algorithm), switch between different estimation algorithms. Such a switching logic would act as a kind of supervisor which would decide which method is appropriate in a particular case. A similar logic (but for a different application) was used in [29].

To demonstrate the validity of this assumption, we created a trajectory consisting of four simpler ones with known start- and end-time instances: a diagonal line, a static point and two one-directional lines (in the x and y directions). Three cases were tested: Broyden only, particle filter only, and a combination of Broyden and particle filter, in which the Broyden method was used for the static-target segment and the particle filter for the other three characteristic trajectories.

The obtained results in terms of the RMSE and jerk values are presented in Table 7, from which it can be seen that switching potentially offers a compromise between the two single-algorithm cases. It should also be noted that the switching algorithm reduced the computational cost in comparison with the particle filter alone.
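The time-scheduled switching described above can be sketched as a small supervisor that maps the current time to the estimator assigned to the active trajectory segment. This is only an illustrative sketch: the class name, the segment start times and the estimator labels are hypothetical, and the actual estimators (Broyden, particle filter) are represented by plain strings rather than by implementations.

```python
from bisect import bisect_right


class SwitchingSupervisor:
    """Selects an estimator label from known segment start times."""

    def __init__(self, start_times, estimators):
        if len(start_times) != len(estimators):
            raise ValueError("one estimator is required per segment")
        if list(start_times) != sorted(start_times):
            raise ValueError("segment start times must be sorted")
        self.start_times = list(start_times)
        self.estimators = list(estimators)

    def select(self, t):
        """Return the estimator label active at time t (seconds)."""
        idx = bisect_right(self.start_times, t) - 1
        if idx < 0:
            raise ValueError("t precedes the first segment")
        return self.estimators[idx]


# Hypothetical schedule mirroring the composite trajectory in the text:
# diagonal line, static point, x-direction line, y-direction line.
supervisor = SwitchingSupervisor(
    start_times=[0.0, 10.0, 20.0, 30.0],
    estimators=["particle_filter", "broyden", "particle_filter", "particle_filter"],
)

for t in (5.0, 12.5, 25.0, 35.0):
    print(t, supervisor.select(t))
```

A supervisor for unknown trajectories would have to infer the segment type online (for example, from the estimated target speed) instead of relying on known start and end times.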

Comparison of the Broyden method, the particle filter and the Broyden-particle filter combination (COMBO) results

4.3. 2D two-sample Kolmogorov-Smirnov test

Presenting the results of all the tests is not possible because of space constraints, but some interesting conclusions can be drawn and some illustrative examples presented. It is important to note that although some methods (in both within-method and between-method comparisons) exhibited different accuracy levels in Table 1 and Table 2, this was not necessarily reflected in the statistical tests performed. This is indicative of the RMSE vs. trajectory-shape relation explained in section 3.3.

If a within-algorithm comparison is made, the following conclusions can be drawn:
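For readers unfamiliar with the test, the sketch below computes a 2D two-sample Kolmogorov-Smirnov statistic in the style of Fasano and Franceschini, using the data points themselves as quadrant origins. It is an assumption that this variant matches the one used in the study. The p-value is obtained here by a simple permutation procedure, which is a substitution made for brevity rather than the analytical approximation usually paired with the test; all names and the sample data are hypothetical.

```python
import numpy as np


def _quadrant_fractions(points, origin):
    """Fractions of points falling in the four quadrants around origin."""
    x, y = points[:, 0], points[:, 1]
    ox, oy = origin
    return np.array([
        np.mean((x > ox) & (y > oy)),
        np.mean((x <= ox) & (y > oy)),
        np.mean((x <= ox) & (y <= oy)),
        np.mean((x > ox) & (y <= oy)),
    ])


def ks2d_statistic(a, b):
    """Average of the maximum quadrant differences using origins from a and b."""
    a = np.asarray(a, dtype=float)
    b = np.asarray(b, dtype=float)

    def max_diff(origins):
        return max(
            np.max(np.abs(_quadrant_fractions(a, o) - _quadrant_fractions(b, o)))
            for o in origins
        )

    return 0.5 * (max_diff(a) + max_diff(b))


def ks2d_permutation_test(a, b, n_perm=99, seed=0):
    """Return (statistic, permutation p-value) for two (N, 2) samples."""
    rng = np.random.default_rng(seed)
    a = np.asarray(a, dtype=float)
    b = np.asarray(b, dtype=float)
    observed = ks2d_statistic(a, b)
    pooled = np.vstack([a, b])
    count = 0
    for _ in range(n_perm):
        perm = rng.permutation(len(pooled))
        if ks2d_statistic(pooled[perm[:len(a)]], pooled[perm[len(a):]]) >= observed:
            count += 1
    return observed, (count + 1) / (n_perm + 1)


# Hypothetical trajectories: two clearly separated point clouds in the image plane.
rng = np.random.default_rng(1)
traj_a = rng.uniform(0.0, 1.0, size=(30, 2))
traj_b = traj_a + 10.0
stat, p_value = ks2d_permutation_test(traj_a, traj_b)
print(stat, p_value, p_value < 0.05)
```

Note that the test compares the spatial distribution of the trajectory points only, so, unlike the RMSE, it carries no information about when each point was visited.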

Model-based approach: in the static case, there is a statistically significant difference for cases when there is no noise, but with the introduction of noise this effect disappears. The same effect is present in the following dynamical cases: line slow (10 pixel/s) and line fast (30 pixel/s). In all other cases, no statistically significant difference was detected.

Broyden method: the only statistically significant difference was detected in the static target case when we compared trajectories generated in the case of no noise and without VPC with other trajectories.

Population-based method: no statistically significant difference was detected.

Kalman filter: in the static case, a difference was observed when we compared cases with no noise (with and without VPC) with cases with

Particle filter: in the static case, a difference was observed in comparison with VPC and no noise with all other cases, whereas in the dynamic tracking scenario a difference was observed in the case of fast-moving targets (line and rectangular trajectories) with the following noise-VPC combinations: the line-no noise-VPC case with line-

To offer a clearer picture of the algorithms' discriminatory power we present two cases in Figure 12 for the particle filter method in similar dynamic target-tracking scenarios.

Illustration of statistically significant test results for the particle filter tracking of a target moving at 30 pixels/s. According to the two-sample 2D Kolmogorov-Smirnov test, the blue trajectory is statistically different from the red trajectory at the 0.05 level, but the red trajectory is not different from the green trajectory.

When we compared the algorithms using only cases with the same noise and VPC status parameters, we made the following observations:

No noise: in general, the model-based approach (VPC and NVPC) was significantly different from all other methods in the static case and for slow and fast line trajectories. Differences were also detected in normal (20 pixel/s) and fast rectangular and circular target trajectories. The Broyden method generally demonstrated a difference only with the particle filter algorithm in the static case and in the fast speed line and rectangular cases. The same was true of the population-based method with a rectangular trajectory and normal speed. The Kalman filter exhibited a statistically significant difference in comparison with the particle filter method in the static case, for slow and fast line trajectories, and for normal and fast rectangular trajectories.

Noise with

Noise with

Finally, the statistical differences of the generated trajectories in the static target case (with no noise) were examined for outputs with pixel rounding and sub-pixel accuracy. Comparison was made with corresponding measurements only. In every case, a very significant statistical difference was observed.

5. Conclusions

This paper compared several methods for the estimation of an image Jacobian used for visual servoing in an eye-in-hand configuration in a simulation study of a number of application scenarios. The large number of experimental scenarios, coupled with four estimation algorithms and a model-based approach, provided a large amount of data. The data were used for drawing conclusions based on three performance parameters: the root mean square error, jerk and the statistical difference of the observed 2D trajectories. Although not quantitatively analysed, the influence of computation time was observed. The obtained results suggest that no single method can be applied in all scenarios and with all performance parameters in mind. Thus, a between-algorithm switching technique was proposed and initially tested to prove the validity of the assumptions made.

In the static case, the model-based approach was best in terms of accuracy, followed by the particle filter; however, the particle filter had higher jerk values than the model-based approach. The computational time of the particle filter was up to six times that of the Broyden method. Particle filter jerkiness was greatly reduced in the dynamic scenarios, which we attributed to the nature of the filter. Accuracy levels generally showed the same trends in dynamic cases as in static cases, but it was evident that the Broyden method is by far the most sensitive to noise, while the population-based method is not. This is not surprising, since the operation of the population-based method is based on a number of past estimations, which significantly reduces the influence of noise. It is interesting to note that the Broyden algorithm performed better in terms of RMSE values in dynamic line target-tracking in the diagonal direction (i.e., when both the x and y coordinates changed) than when one-directional motion was considered (i.e., when one coordinate was kept constant and the other one changed).

A slight difference in algorithm performance was observed in dynamic cases, depending upon the target trajectory as well as upon the target speed. Statistical significance testing showed that, for lower speeds, the resulting trajectories were not statistically different even though the RMSE values differed, whereas this was not true for higher speeds. This might be because RMSE implicitly takes time into account, whereas statistical testing does not. Additionally, a few differences were detected for circular target motion. It should also be noted that the inclusion of the VPC algorithm improved the performance of all the estimation algorithms except for the model-based algorithm, and that the population-based approach showed the greatest deterioration in accuracy when VPC was turned off.

Finally, we tested in a limited number of cases what effect the sub-pixel accuracy algorithm would have on the performance of the visual servoing algorithms. The obtained results demonstrated a significant impact on all the parameters: the RMSE was slightly reduced, jerk was significantly reduced, and a statistical difference was observed between the corresponding pixel-rounding and sub-pixel algorithm cases. All of this suggests that the inclusion of this kind of algorithm is desirable in any type of visual servoing.

It should be pointed out that this was a simulation study and that the experiment should be repeated on a real robot (or a number of them), which is one of our future research directions. The selection of optimal parameters for the estimation methods is another important area where improvements in performance might be achieved. Additional future research directions include testing under additional dynamic target motion trajectories, the implementation of a switching supervisor, and the development of an adaptive weighting function for the population-based algorithm.