Abstract

This paper discusses research in the area of texture image classification. More specifically, the combination of texture and colour features is researched. The principal objective is to create a robust descriptor for the extraction of colour texture features. The principles of two well-known methods for grey-level texture feature extraction, namely GLCM (grey-level co-occurrence matrix) and Gabor filters, are used in the experiments. A support vector machine is used for texture classification. In the first approach, the methods are applied to the separate channels of the colour image. The experimental results show a large increase in precision for colour texture retrieval with GLCM. Therefore, the GLCM is modified to extract probability matrices directly from the colour image. A method based on a 13-direction neighbourhood system is proposed, and formulas for computing the probability matrices are presented. The proposed method is called CLCM (colour-level co-occurrence matrices), and the experimental results show that it is a powerful method for colour texture classification.

1. Introduction

In recent years, the need for efficient content-based image retrieval has increased tremendously in many application areas, such as biomedicine [1,2], the military [3], commerce, education, and web image classification and retrieval [4–6]. Rapid and effective image retrieval from large-scale image databases is becoming an important and challenging research topic [7]. Content-based image retrieval (CBIR) is one of the most popular fundamental research areas [8–10].

Although CBIR has been a very active research area since the 1990s, a number of challenging issues remain due to the complexity of image data. These issues are related to long-standing challenges across several interdisciplinary research areas, such as computer vision, image processing, image databases and machine learning. In a typical CBIR system, image retrieval is based on visual content such as colour, shape and texture [8–10].

Texture has been one of the most popular features in image retrieval [11]. Although greyscale textures provide enough information to solve many tasks, they do not utilize colour information. In recent years, however, many researchers have begun to take colour information into consideration [12–17].

The human eye perceives an image as a combination of primary parts (colour, texture and shape). Therefore, our approach is oriented towards creating robust low-level descriptors by combining these primary parts of the image. Specifically, this paper presents research on the combination of colour and texture.

The outline of the paper is as follows. Related work is reviewed in section 2. In section 3, an overview of the basic principles of GLCM (grey-level co-occurrence matrix) and Gabor filters is given, and the extraction of colour features based on these methods is introduced in section 4. The novel method called CLCM is presented in section 4.1, and the texture classification and evaluation methods are described in section 4.2. The experimental results are presented in section 5. Finally, a brief summary is given in section 6.

2. Related Work

There are several approaches to combining colour and texture. Ye Mei and Androutsos [13] introduced a colour and texture retrieval method based on the wavelet decomposition of colour and texture images on the hue/saturation plane. Assefaa et al. [14] discussed how analysing the spectrum of each colour component of an image separately leaves us without information about the coupled spectra of all colour components. They introduced the idea of computing the Fourier transform of a colour image as one quantity. Jae-Young Choi et al. [16] proposed new colour local texture features (colour local Gabor wavelets and colour local binary patterns) for face recognition. Hossain and Parekh [17] extended GLCM to include colour information for texture recognition by considering combinations of colour channels, e.g., rr, gg, bb, rg, rb, gr, gb, br, bg. In our previous work [18], new possibilities for improving GLCM-based methods were presented. In this paper, new and extended experiments with GLCM are described and novel methods are proposed.

3. Grey-level methods

3.1. Grey-level co-occurrence matrix

The GLCM (grey-level co-occurrence matrix) is a powerful method in statistical image analysis [19–22]. It is used to estimate image properties related to second-order statistics by considering the relation between two neighbouring pixels at a given offset, where the first pixel is called the reference pixel and the second the neighbour pixel. GLCM is defined as a two-dimensional matrix of joint probabilities between pairs of pixels, separated by a distance

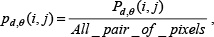

For scale invariance of the texture pattern, the GLCM is normalized by the total number of pixel pairs as follows:

where

Haralick [20,21] defined 14 statistical features of the grey-level co-occurrence matrix for texture classification. However, these features are strongly correlated [19]. To avoid this issue, we used only one feature to compare the GLCM-based methods. The inverse difference moment, also called “homogeneity”, was selected based on our previous research. Homogeneity is defined as follows [20]:
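The sketch below computes a normalized GLCM for a single offset and its homogeneity. It assumes the image is already quantized to `levels` integer grey levels, takes the offset as `(dy, dx)` in pixels, counts each pair only in the reference-to-neighbour direction (no symmetrization), and uses the common Haralick form of the inverse difference moment, Σ p(i,j) / (1 + (i − j)²). The function names are hypothetical.

```python
import numpy as np


def glcm(image, offset=(0, 1), levels=8):
    """Normalized grey-level co-occurrence matrix for one offset (dy, dx).

    `image` must hold integer grey levels in [0, levels).
    """
    img = np.asarray(image, dtype=np.int64)
    dy, dx = offset
    h, w = img.shape
    # Reference pixels are those whose neighbour (y + dy, x + dx) lies inside the image.
    ref = img[max(0, -dy):h - max(0, dy), max(0, -dx):w - max(0, dx)]
    nbr = img[max(0, dy):h - max(0, -dy), max(0, dx):w - max(0, -dx)]
    counts = np.zeros((levels, levels), dtype=np.float64)
    # One count per (reference level, neighbour level) pair.
    np.add.at(counts, (ref.ravel(), nbr.ravel()), 1.0)
    total = counts.sum()
    # Divide by the total number of pixel pairs so the entries are joint probabilities.
    return counts / total if total > 0 else counts


def homogeneity(p):
    """Inverse difference moment: sum over i, j of p(i, j) / (1 + (i - j)^2)."""
    i, j = np.indices(p.shape)
    return float(np.sum(p / (1.0 + (i - j) ** 2)))


img = np.array([[0, 0, 1, 1],
                [0, 0, 1, 1],
                [0, 2, 2, 2],
                [2, 2, 3, 3]])
p = glcm(img, offset=(0, 1), levels=4)
print(round(homogeneity(p), 4))  # 0.8083 (= 9.7 / 12)
```

Pairs with equal grey levels contribute with weight 1 and the weight decays with the grey-level difference, so homogeneity approaches 1 for smooth textures.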

3.2. Gabor filters

Gabor filters (GF) are optimally localized in both the spatial and the frequency domains, and they produce a set of filtered images, each of which corresponds to a specific scale and orientation component of the original texture [22]. In this work, five scales and six orientations are used, following the homogeneous texture descriptor of the MPEG-7 standard [23,24].

The frequency space is partitioned into 30 feature channels, indicated by

Gabor filter banks (division of the frequency domain) [24]

Here

The Gabor function defined for Gabor filter banks (GFB) is written as

where $G_{P_{s,r}}$ is the Gabor function at the $s$-th radial index and

For the frequency layout shown in Fig. 1, σ

The parameters of the Gabor feature channels are described in detail in [23,24].
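A simplified sketch of a 30-channel energy computation follows. It is not the MPEG-7 reference extraction, which samples the spectrum in polar coordinates via the Radon transform; here the FFT is taken on a Cartesian grid. Assumed for illustration: radial frequency normalized so that 1.0 is the Nyquist frequency, octave band centres 3/4, 3/8, 3/16, ... with widths 1/2, 1/4, 1/8, ..., orientations 30° apart, Gaussian widths chosen so each channel falls to half its maximum at its band edges, and energies of the form log10(1 + p). The name `channel_energies` is hypothetical.

```python
import numpy as np

# Assumed layout: 5 octave scales x 6 orientations = 30 channels.
N_SCALES, N_ORIENT = 5, 6
FWHM_TO_SIGMA = 1.0 / (2.0 * np.sqrt(2.0 * np.log(2.0)))


def channel_energies(image):
    """Log energies log10(1 + p) of 30 Gaussian frequency-domain channels.

    Simplified: Cartesian FFT grid, no Radon transform, no |omega| weighting.
    """
    img = np.asarray(image, dtype=np.float64)
    img = img - img.mean()  # remove the DC component
    spectrum = np.abs(np.fft.fft2(img))
    fy = np.fft.fftfreq(img.shape[0])[:, None]
    fx = np.fft.fftfreq(img.shape[1])[None, :]
    # Radial frequency normalized so that 1.0 is Nyquist (0.5 cycles/pixel).
    rho = np.hypot(fx, fy) / 0.5
    # Orientation folded into [0, pi): a real image has a point-symmetric spectrum.
    theta = np.mod(np.arctan2(fy, fx), np.pi)
    sigma_theta = np.deg2rad(180.0 / N_ORIENT) * FWHM_TO_SIGMA
    energies = []
    for s in range(N_SCALES):
        center = 0.75 * 2.0 ** (-s)     # octave band centres 3/4, 3/8, ...
        bandwidth = 0.5 * 2.0 ** (-s)   # octave band widths 1/2, 1/4, ...
        sigma_rho = bandwidth * FWHM_TO_SIGMA
        radial = np.exp(-((rho - center) ** 2) / (2.0 * sigma_rho ** 2))
        for r in range(N_ORIENT):
            theta_r = r * np.pi / N_ORIENT  # 0, 30, ..., 150 degrees
            # Wrapped angular distance in [-pi/2, pi/2).
            d_theta = np.mod(theta - theta_r + np.pi / 2, np.pi) - np.pi / 2
            angular = np.exp(-(d_theta ** 2) / (2.0 * sigma_theta ** 2))
            p = np.sum((radial * angular * spectrum) ** 2)
            energies.append(np.log10(1.0 + p))
    return np.array(energies)  # index = s * N_ORIENT + r


# Stripes varying along x at 0.375 cycles/pixel (normalized radius 0.75, orientation 0).
x = np.arange(64)
stripes = np.tile(np.cos(2 * np.pi * 0.375 * x), (64, 1))
e = channel_energies(stripes)
print(int(np.argmax(e)))  # 0, i.e. scale 0, orientation 0
```

Rotating the stripes by 90° moves the peak to the orientation-3 channel of the same scale, which is the kind of scale/orientation selectivity the 30-channel layout is meant to capture.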

The feature vector is created from the channel energies, written as [

where

where |

4. Colour-level methods

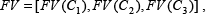

The grey-level methods provide a texture feature vector from grey-level images. These methods can also be used for colour images [16,25]. The simplest way is to analyse a colour image by applying the method to each 2D matrix of the three-dimensional colour image representation [26]. The colour feature extraction can then be defined as follows:

where FV is the feature vector and
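A minimal sketch of the per-channel approach, assuming the colour feature vector is the concatenation of the grey-level feature vectors computed on each channel. The names `per_channel_features` and `mean_std` are hypothetical, and `mean_std` only stands in for a real grey-level descriptor such as GLCM homogeneity or Gabor energies.

```python
import numpy as np


def per_channel_features(colour_image, grey_extractor):
    """Apply a grey-level feature extractor to every 2D channel and concatenate.

    `colour_image` has shape (height, width, channels), e.g. an RGB image.
    """
    img = np.asarray(colour_image)
    parts = [np.ravel(grey_extractor(img[:, :, c])) for c in range(img.shape[2])]
    return np.concatenate(parts)


def mean_std(channel):
    # Hypothetical placeholder descriptor; a GLCM or Gabor extractor would go here.
    return np.array([channel.mean(), channel.std()])


rgb = np.zeros((4, 4, 3))
rgb[:, :, 0] = 1.0  # pure red image
features = per_channel_features(rgb, mean_std)  # [1, 0, 0, 0, 0, 0]: (mean, std) for R, G, B
```

The length of the resulting vector is the number of channels times the length of the grey-level vector, so the per-channel variant of a descriptor is three times longer for RGB images.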

4.1. Colour-level co-occurrence matrices

Applying these methods to the separate channels of the colour image produced a large increase in the retrieval precision of GLCM. This encouraged us to take colour into consideration in the next experiment with GLCM: we modified the algorithm to extract co-occurrence matrices directly from the colour image. We called this method ‘colour-level co-occurrence matrices’ (CLCM).

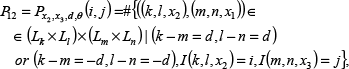

In colour image representation, a pixel at position (

Principle of the neighbourhood system for direction 13, in which

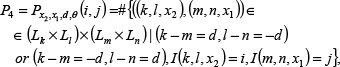

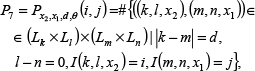

For the distance

where # denotes the number of elements in the set,

These 13 probability matrices express relations between component

The combination of colour space components for CLCM, where
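The exact CLCM direction set and probability formulas are defined by the equations of this section. The sketch below assumes one plausible reading of a 13-direction neighbourhood system: the quantized colour image is treated as an H×W×3 volume, and the 13 directions are the unique offsets of its 3×3×3 neighbourhood (26 neighbours, one of each opposite pair), as in 3D co-occurrence analysis. Scaling only the spatial offsets by `distance` while keeping the channel offset in {-1, 0, 1} is likewise an assumption, and all function names are hypothetical.

```python
import itertools

import numpy as np


def clcm_offsets():
    """The 13 unique (dy, dx, dc) unit offsets of a 3x3x3 neighbourhood."""
    offsets = []
    for off in itertools.product((-1, 0, 1), repeat=3):
        nonzero = [v for v in off if v != 0]
        # Keep only the member of each opposite pair whose first non-zero entry is positive.
        if nonzero and nonzero[0] > 0:
            offsets.append(off)
    return offsets


def _pair_slices(n, k):
    # Reference and neighbour index ranges along one axis for a shift k.
    m = max(n - abs(k), 0)  # number of valid positions
    if k >= 0:
        return slice(0, m), slice(k, k + m)
    return slice(-k, -k + m), slice(0, m)


def clcm(volume, levels=8, distance=1):
    """One normalized co-occurrence matrix per direction of an (H, W, C) volume."""
    vol = np.asarray(volume, dtype=np.int64)
    matrices = []
    for dy, dx, dc in clcm_offsets():
        shifts = (dy * distance, dx * distance, dc)
        ref_idx, nbr_idx = zip(*(_pair_slices(n, k) for n, k in zip(vol.shape, shifts)))
        ref, nbr = vol[ref_idx], vol[nbr_idx]
        counts = np.zeros((levels, levels), dtype=np.float64)
        np.add.at(counts, (ref.ravel(), nbr.ravel()), 1.0)
        total = counts.sum()
        matrices.append(counts / total if total > 0 else counts)
    return matrices


rng = np.random.default_rng(0)
volume = rng.integers(0, 8, size=(32, 32, 3))  # quantized colour image, e.g. RGB
mats = clcm(volume, levels=8)
i, j = np.indices((8, 8))
features = [float(np.sum(m / (1.0 + (i - j) ** 2))) for m in mats]  # 13 homogeneity values
```

Under this reading, the offset (0, 0, 1) pairs each pixel's R value with its G value and its G value with its B value, so some of the 13 matrices describe relations between colour components at the same position, while the others mix spatial and inter-channel neighbours.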

4.2. Texture classification and evaluation

4.2.1. Support vector machine

Today, SVM (support vector machine) is one of the most frequently used techniques for classification and regression [27].

The SVM is a universal constructive learning procedure based on the statistical learning theory proposed by Vapnik in 1995 [28]. The term “universal” means that the SVM can be used to learn a variety of representations, such as neural networks (usually with sigmoid activation function), radial basis function, splines, polynomial estimators, etc. [29].

In our experiments, the C-SVM formulation with an RBF (radial basis function) kernel and a five-fold CV (cross-validation) scheme based on LIBSVM (library for support vector machines) [27] was used. The standard version of PSO (particle swarm optimization) was used to select the model parameters: PSO searches for the best SVM parameters, which for an RBF C-SVM are the penalty parameter C and the kernel parameter γ. After finding the best parameters with a five-fold CV criterion function, we train the SVM classifier, which produces a model representing the learned knowledge.
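The parameter search can be sketched as a basic global-best PSO over (log2 C, log2 γ). The sketch below is generic: it minimizes any objective inside box bounds. The inertia and acceleration constants (0.7298 and 1.49618), the swarm size, the iteration count and the name `pso_minimize` are assumptions, not values reported in the paper.

```python
import numpy as np


def pso_minimize(objective, lower, upper, n_particles=20, n_iter=50,
                 w=0.7298, c1=1.49618, c2=1.49618, seed=0):
    """Global-best particle swarm optimization inside the box [lower, upper]."""
    rng = np.random.default_rng(seed)
    lower = np.asarray(lower, dtype=np.float64)
    upper = np.asarray(upper, dtype=np.float64)
    dim = lower.size
    pos = rng.uniform(lower, upper, size=(n_particles, dim))
    vel = np.zeros_like(pos)
    # Personal bests start at the initial positions.
    pbest = pos.copy()
    pbest_val = np.array([objective(p) for p in pos])
    best = int(np.argmin(pbest_val))
    gbest, gbest_val = pbest[best].copy(), pbest_val[best]
    for _ in range(n_iter):
        r1 = rng.random((n_particles, dim))
        r2 = rng.random((n_particles, dim))
        # Inertia + pull towards each personal best + pull towards the global best.
        vel = w * vel + c1 * r1 * (pbest - pos) + c2 * r2 * (gbest - pos)
        pos = np.clip(pos + vel, lower, upper)
        vals = np.array([objective(p) for p in pos])
        improved = vals < pbest_val
        pbest[improved] = pos[improved]
        pbest_val[improved] = vals[improved]
        best = int(np.argmin(pbest_val))
        if pbest_val[best] < gbest_val:
            gbest, gbest_val = pbest[best].copy(), pbest_val[best]
    return gbest, float(gbest_val)


# Quick check on a quadratic with its minimum at log2 C = 1, log2 gamma = -2.
# The ranges 2^-5..2^15 for C and 2^-15..2^3 for gamma follow the LIBSVM guide's grid.
best, value = pso_minimize(lambda p: (p[0] - 1.0) ** 2 + (p[1] + 2.0) ** 2,
                           lower=[-5.0, -15.0], upper=[15.0, 3.0])
```

To tune the SVM, the objective would instead return the negative mean five-fold CV accuracy of an RBF C-SVM with C = 2^p[0] and γ = 2^p[1]; with scikit-learn, whose `SVC` class is built on LIBSVM, one option is `-cross_val_score(SVC(kernel="rbf", C=2**p[0], gamma=2**p[1]), X, y, cv=5).mean()`, where `X` and `y` are the training features and labels. Searching in log2 space keeps the step sizes meaningful across several orders of magnitude.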

4.2.2. Evaluation criteria

For the evaluation of the experiments, the evaluation criteria of precision, recall and

where

Next, the

It is high only if both precision and recall are high; if either of them is low, the value of
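The sketch below uses the standard definitions P = TP/(TP + FP), R = TP/(TP + FN) and F1 = 2PR/(P + R), computed per class. Averaging the per-class scores with an unweighted (macro) mean in `macro_average` is an assumption for illustration; both function names are hypothetical.

```python
def precision_recall_f1(y_true, y_pred, positive):
    """Precision, recall and F1 for one class treated as the positive class."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    # Harmonic mean: low whenever either precision or recall is low.
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return precision, recall, f1


def macro_average(y_true, y_pred):
    """Unweighted mean of per-class precision, recall and F1."""
    classes = sorted(set(y_true))
    scores = [precision_recall_f1(y_true, y_pred, c) for c in classes]
    n = len(scores)
    return tuple(sum(s[k] for s in scores) / n for k in range(3))


y_true = [0, 0, 1, 1]
y_pred = [0, 1, 1, 1]
p, r, f1 = macro_average(y_true, y_pred)  # about (0.833, 0.75, 0.733)
```

Because F1 is a harmonic mean, a class with P = 1 and R = 0.1 gets F1 = 0.2/1.1 ≈ 0.18, far below the arithmetic mean of 0.55.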

5. Experiments

5.1. Experiment layout and databases

In our experiments, two widely used colour texture databases were used: the Outex TC_00013 database [32] and the Vistex database (MIT Media Lab) [33].

The Outex database contains 68 texture classes in 1360 colour images (20 images per texture) at a resolution of 128×128 pixels. In the classification process, 10 images of each texture class were used for training and 10 for testing. An example of the Outex TC_00013 database is shown in Fig. 3.

Example of Outex TC_00013 database

From the Vistex database, the dataset of 512×512 colour images was chosen. Thirty-one texture image pairs were selected from this database, where the first image of each pair was used for training and the second for testing. Texture windows of 64×64 pixels were used (16 texture windows for training and 16 for testing). An example of the Vistex database is shown in Fig. 4.

Example of Vistex database training and testing texture pairs: a), b) and c) are training images; d), e) and f) are testing images

For all experiments on GLCM, the parameters' distance

For GF, 30 filter banks with six orientations and five scales, based on the MPEG-7 standard (homogeneous texture descriptor [23,24]), were used.

Two simple software scripts, one for annotation and one for classification, were created. The first script creates an annotated database: it takes the training databases as input and outputs the extracted feature vectors, exported into an XML file. The second script performs texture classification: the images from the testing databases are classified into the appropriate classes by SVM, and the results are evaluated by P, R and F1 score.
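The annotation step could store feature vectors in XML along the lines of the sketch below, which uses Python's standard `xml.etree.ElementTree`. The element and attribute names (`database`, `image`, `id`, `label`) and both function names are hypothetical; the schema actually used by the scripts is not described here.

```python
import xml.etree.ElementTree as ET


def features_to_xml(records):
    """Serialize (image_id, class_label, feature_vector) records to an XML string."""
    root = ET.Element("database")
    for image_id, label, features in records:
        item = ET.SubElement(root, "image", id=str(image_id), label=str(label))
        # repr() of a float round-trips exactly when parsed back with float().
        item.text = " ".join(repr(float(v)) for v in features)
    return ET.tostring(root, encoding="unicode")


def xml_to_features(xml_text):
    """Parse the XML produced by features_to_xml back into records."""
    root = ET.fromstring(xml_text)
    records = []
    for item in root.findall("image"):
        vector = [float(tok) for tok in (item.text or "").split()]
        records.append((item.get("id"), item.get("label"), vector))
    return records


records = [("img1", "bark", [0.5, 1.25]), ("img2", "brick", [2.0, -3.5])]
xml_text = features_to_xml(records)
restored = xml_to_features(xml_text)  # equal to records
```

Keeping the class label next to each vector lets the classification script read the annotated training set and the SVM inputs from a single file.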

5.2. Experimental results

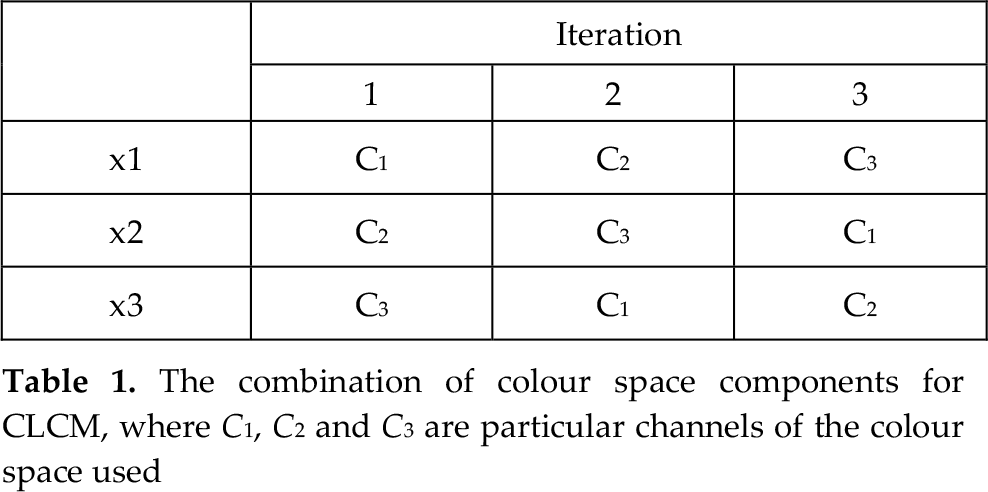

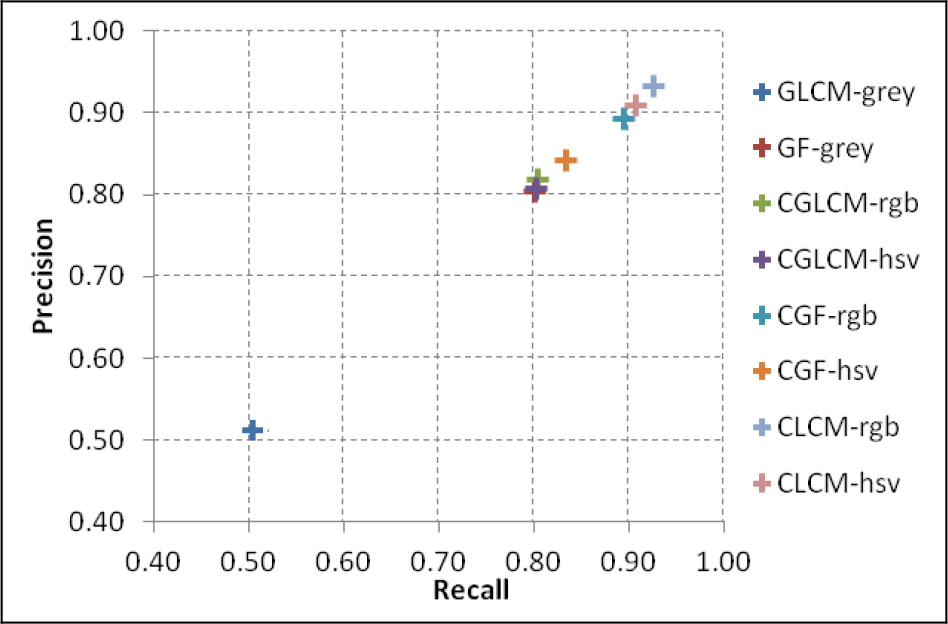

The texture classification results achieved on the Outex TC_00013 and Vistex databases are shown in Figs. 5 and 6. For grey-level texture description, the best results were obtained by GF, with F1 reaching almost 80%. GLCM with one feature (homogeneity) reached about 50%. After these methods were applied to separate colour channels, the retrieval precision of GLCM increased considerably: GLCM reached almost 80% F1 on both databases. The retrieval precision of GF for separate colour channels increased by only a few percent. The highest precision for colour texture retrieval was obtained by the modification of GLCM called CLCM, with F1 over 90%. The detailed results of all experiments are shown in Tab. 1.

Comparison of results for Outex TC_00013 dataset

Experimental results (P, R and F1) for the Outex TC_00013 and Vistex databases

Tab. 1 shows the P, R and F1 scores for all descriptors and both databases. Graphical representations of the results are presented in Figs. 5 and 6.

Comparison of results for Vistex dataset

6. Conclusion

In this paper, research on the extraction and classification of colour texture information was presented. First, the GLCM and GF methods for the extraction of grey-level texture features, and their use on the separate channels of the colour image, were experimentally tested. These results led us to apply the GLCM method directly to colour vector data, which produced the CLCM method. The experiments were carried out on the Vistex and Outex databases using RBF-kernel SVM classification. The experimental results confirm that the proposed CLCM method achieved an F1 score approximately 40 percentage points higher than the basic GLCM method, exceeding 90% in colour texture classification.

In the future, various combinations of GLCM features applied on the CLCM principle, as well as classification for specific applications, will be researched.


7. Acknowledgments

This contribution is the result of the project's implementation at the Centre of Excellence for Systems and Services of Intelligent Transport, ITMS 26220120050. It was supported by the Research & Development Operational Programme funded by the ERDF and by Project No. 1/0705/13, “Image elements' classification for semantic image description”, with the support of the Ministry of Education, Science, Research and Sport of the Slovak Republic.