Abstract

This paper presents an algorithm to remove fog from a single image using a Markov random field (MRF) framework. The method estimates the transmission map of an image degradation model by assigning labels with an MRF model, and optimizes the map estimation process using the graph cut-based α-expansion technique. The algorithm employs two steps. First, the transmission map is estimated using a dedicated MRF model combined with a bilateral filter. Next, the restored image is obtained by substituting the estimated transmission map and the ambient light into the image degradation model to recover the scene radiance. The algorithm is controlled by just a few parameters, which are determined automatically by a feedback mechanism. Results on a wide variety of synthetic and real foggy images demonstrate that the proposed method is effective and robust, yielding high-contrast and vivid defogged images. In addition to image defogging, surveillance video defogging based on a universal strategy and an application of the transmission map are also implemented.

Introduction

Image defogging is an important problem in computer vision. Defogging algorithms are needed in many circumstances, such as automatic monitoring systems, automatic guided vehicle systems, outdoor object recognition and visual navigation in low-visibility environments. However, the quality of images taken in foggy weather is easily degraded by the aerosols suspended in the medium, which reduce the contrast of the image and make surface colours faint. Such degraded images often lack visual vividness and offer a poor view of the scene contents. The goal of defogging algorithms is to recover the details of scenes from foggy images. Since the degradation caused by fog depends on the depth of the scene, the essential problem that most image defogging methods must solve is scene depth estimation. This is not trivial and requires prior knowledge.

In this paper, we propose a new method that produces a good defogging effect for various foggy images. The main motivation of this research is to improve the visual quality of images used by automatic systems and of outdoor photos taken in poor weather conditions. For example, in foggy weather, the quality of images captured by a conventional in-vehicle camera is drastically degraded, which makes current in-vehicle applications that rely on such sensors very sensitive to weather conditions. To be more reliable, an in-vehicle vision system should take fog effects into account, and one solution is to remove fog from the image beforehand. The same holds for other applications, such as surveillance, intelligent vehicles, remote sensing and aerial photography. Restoring foggy images is therefore highly desirable for both computer vision applications and consumer photography. Computer vision algorithms usually assume that the input image characterizes the scene radiance, so the performance of vision algorithms (e.g., feature detection, filtering, object recognition and photometric analysis) inevitably suffers from biased, low-contrast scene radiance. Removing fog can significantly increase the visibility of the scene and correct the colour shift caused by the ambient light, making vision algorithms more effective and foggy photos more pleasing. The proposed defogging method combines the MRF model with transmission map (scene depth) estimation and uses the graph cut-based α-expansion method to optimize the map estimation process. This provides a new way to solve the image defogging problem. The main contributions of this paper are as follows:

- A novel MRF-based method is proposed which applies an optimization library to estimate a transmission map. Experiments on both synthetic images and real-world images show the effectiveness of the proposed method. Compared with existing defogging methods, the proposed algorithm can remove fog more thoroughly without producing any halo artefacts, and the colour of the restored images is natural in most cases.

- We extend the proposed method to foggy video using a universal strategy, which greatly improves computational efficiency and enhances the visual effect. An application of the estimated transmission map, fog simulation, is also implemented.

- The algorithm's parameters are adjusted adaptively using an index that measures the defogging effect. Thus, a static, open-loop parameter estimation problem is transformed into a dynamic parameter adjustment problem. In addition, the performance of the defogging algorithms is measured with both qualitative and quantitative evaluations.

The paper is organized as follows. In Section 2, we review existing work on image defogging. In Section 3, we introduce the MRF model and the outdoor geometry of a foggy image. In Section 4, we propose a defogging algorithm based on the MRF model. In Section 5, we extend the algorithm to video and present an application of our transmission map. In Section 6, we present experimental results. Finally, in Section 7, we make some concluding remarks.

Previous Works

Given the importance of defogging algorithms, many studies on defogging have been conducted. Previous defogging research can be divided into two categories: image enhancement methods and image restoration methods [1]. Image enhancement methods aim to increase the dynamic range and contrast of images degraded by fog; classic examples include histogram equalization and the Retinex algorithm. Image restoration methods recover the intrinsic luminance of an object using additional or prior information; representative algorithms include the dark channel algorithm [2] and the fast filter algorithm [3]. The dark channel algorithm [2] is recognized as one of the most effective ways to remove fog. It estimates the transmission of each patch from the dark channel, i.e., the minimum colour component within that patch, and employs a soft matting algorithm to refine the map. The fast filter algorithm [3] has been shown to be faster than most other algorithms for outdoor scenes: it uses a fast median filter to infer the atmospheric veil and then estimates the transmission map from it. Its main advantage is speed. However, both methods have limitations. The algorithm in [2] relies on the dark channel prior, a statistic of haze-free outdoor images, and this prior becomes invalid when the scene objects are inherently similar to the ambient light and no shadow is cast on them. The method in [3] is unable to remove the fog between small objects, and the colour of the scene objects is unnatural in some situations.
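For reference, the dark channel computation of [2] can be sketched in NumPy. This is a simplified illustration, not the implementation of [2]: it assumes an RGB image scaled to [0, 1], uses the commonly quoted values omega = 0.95 (the fog-retention factor of [2], unrelated to the ω of Eq. (5)) and a 15 × 15 patch, computes the patch minimum by brute force, and omits the soft matting refinement.

```python
import numpy as np

def dark_channel(img: np.ndarray, patch: int = 15) -> np.ndarray:
    """Dark channel of an H x W x 3 image in [0, 1]: the per-pixel minimum over
    the colour channels, followed by a minimum over a patch x patch window
    (patch is assumed to be odd)."""
    min_rgb = img.min(axis=2)
    r = patch // 2
    padded = np.pad(min_rgb, r, mode="edge")        # replicate borders so every window is full
    h, w = min_rgb.shape
    out = np.empty_like(min_rgb)
    for y in range(h):
        for x in range(w):
            out[y, x] = padded[y:y + patch, x:x + patch].min()
    return out

def dcp_transmission(img: np.ndarray, airlight, omega: float = 0.95, patch: int = 15) -> np.ndarray:
    """Coarse transmission t = 1 - omega * dark_channel(I / A), before any refinement."""
    normalized = img / np.asarray(airlight, dtype=float).reshape(1, 1, 3)
    return 1.0 - omega * dark_channel(normalized, patch)
```

The sketch also makes the failure case above easy to see: a bright, ambient-coloured object has a large dark channel, so its transmission is underestimated.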

Graphical models (GMs), which combine probability theory with graphs, are an important means of solving this problem. They can be divided into two categories: directed graphs and undirected graphs. A directed GM whose graph is acyclic, i.e., contains no directed loops, is a Bayesian network (BN), and the relationships in a BN can be described by local conditional probabilities [4]. In [5, 6], a Bayesian defogging method is introduced that jointly estimates the scene albedo and depth from a single foggy image by leveraging their latent statistical structure. An undirected GM is an MRF. Since an MRF is undirected and may be cyclic, it can represent certain dependencies that a BN cannot; because of the dependencies between neighbouring pixels, it provides a new means for image defogging. The defogging algorithm in [7] is based on the observation that the surface Lambertian shading factor and the scene transmission are locally independent, so that the fog can be separated from the scene; a Gaussian MRF is then used to smooth the transmission map. In [8], a cost function is developed within the MRF framework to enhance the visibility of images. However, the results obtained by this method tend to have larger saturation values than the actual clear-day images. In [9], scene geometry and the α-expansion optimization technique are employed to improve the robustness of single image dehazing. Recently, image defogging based on the MRF model has made significant progress [10–12]. In [10], the image defogging problem is decomposed into two steps: first, the atmospheric veil is inferred using a dedicated MRF model; second, the restored image is estimated by minimizing another MRF energy that models image defogging in the presence of noisy inputs. In this MRF model, a flat road assumption is introduced to achieve better results on road images.
In [11], MRF models for stereo reconstruction and for defogging are combined into a unified MRF model that takes advantage of both stereo and atmospheric veil depth cues, so that stereo reconstruction and defogging in daytime fog can be solved together. In [12], a multi-level depth estimation method based on an MRF model is presented for image defogging. The method integrates the characteristics of the dark channel prior into the MRF model in order to estimate an accurate depth map. Here, the MRF is used to label the depth levels of adjacent regions to compensate for wrongly estimated regions, and the scene textures, which serve as the smoothing term in the MRF model, are the critical element. These fog removal algorithms are the most representative MRF defogging methods, and they are all physically sound. However, the colour and the profile of the scene objects in the defogged results can sometimes look unnatural. To solve this problem, we introduce an image assessment index to optimize the parameters of the proposed MRF-based method, so that visually pleasing defogging results can be obtained.

Background

Markov Random Fields

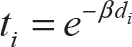

Many vision problems can be solved naturally using MRFs. MRF theory is a branch of probability theory for analysing the spatial or contextual dependencies of physical phenomena, and it is often used in visual labelling to establish the probability distributions of interacting labels. Here, we use an MRF to estimate the transmission map in an image degradation model. The MRF is an undirected graph in which adjacent nodes are connected to determine the depth of a real scene [12]. We associate a hidden layer with the fog density level and an observation layer with the initial transmission map, and formulate the MRF model as the following cost function:

$$E(f) = \sum_{p \in P} D_p(f_p) + \sum_{(p,q) \in N} V_{p,q}(f_p, f_q) \tag{1}$$

In (1), f = {fp | p ∈ P} is a labelling of image P, fp ∈ {1, 2, 3, …, k} is the label of pixel p, q is a neighbour of p, and N is the set of pairs of neighbouring pixels defined over the standard four-connected neighbourhood. Minimizing E(f) minimizes the sum of two types of terms. The first term, Dp(·), is a data function: it penalizes a label fp assigned to pixel p if the label is too different from the observed data Ip, so the smaller the difference between a pixel and its label, the smaller Dp(·). The second term, Vp,q(·), is a smoothing function (or a 'discontinuity-preserving' function) [13, 14]: it encourages the coherence of an image by penalizing two neighbouring labels fp and fq if they are too different, so the smaller the difference between the labels of neighbouring pixels, the smaller Vp,q(·). The choice of Vp,q(·) is critical; in the proposed defogging method, we use the outdoor geometry to define this term. With the smoothing term, the saturated colours at each pixel can be computed with reasonable smoothing. For transmission map estimation, the data function therefore represents the probability of pixel p having the transmission associated with label fp, and the smoothing function encodes the probability that neighbouring pixels have a similar depth. A graph cut is used here to minimize the energy function of the MRF. The method transforms an image represented by a set of pixels into a graph with an augmented set of nodes and then cuts the graph into different sets; each cut corresponds to an assignment of pixels to labels. If the edge weights are set appropriately from the parameters of the energy function [see Eq. (1)], the minimum-cost cut corresponds to the labelling that minimizes this energy function.
Thus, by finding the cut with the minimum cost among all cuts, the minimum of the MRF energy can be obtained and a proper label can be assigned to each image pixel, as shown in Figure 1. In other words, the graph cut technique transforms the energy minimization problem into the equivalent problem of partitioning a special graph, constructed from the original minimization problem, into different sets. The α-expansion algorithm is used to solve the graph cut problem with good computational performance; for multi-label problems such as this one, it finds a strong local minimum rather than a guaranteed global one. In the transmission map estimated using the MRF model, smaller label values correspond to deeper scene points, while larger label values correspond to scene points near the camera or observer. The resulting labelling constitutes the initial transmission map of the proposed method. However, this map still contains certain redundant details that need to be removed.
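To make Eq. (1) concrete, the sketch below evaluates E(f) for a given labelling on a four-connected grid. It is an illustration rather than an optimizer: the data costs and the smoothing table are supplied by the caller, and the smoothing term is assumed to depend only on the two labels, V(fp, fq) = pairwise[fp, fq]; a direction-dependent term would need separate tables for horizontal and vertical pairs.

```python
import numpy as np

def mrf_energy(labels: np.ndarray, data_cost: np.ndarray, pairwise: np.ndarray) -> float:
    """E(f) = sum_p D_p(f_p) + sum_{(p,q) in N} V(f_p, f_q) on a four-connected grid.

    labels:    H x W integer array, the labelling f
    data_cost: H x W x K array, data_cost[y, x, k] = D_p(k)
    pairwise:  K x K array, pairwise[a, b] = V(a, b) for each neighbouring pair
    """
    h, w = labels.shape
    rows, cols = np.indices((h, w))
    data = data_cost[rows, cols, labels].sum()              # unary (data) terms
    horiz = pairwise[labels[:, :-1], labels[:, 1:]].sum()   # left-right neighbour pairs
    vert = pairwise[labels[:-1, :], labels[1:, :]].sum()    # top-bottom neighbour pairs
    return float(data + horiz + vert)
```

Graph cut-based α-expansion searches for a labelling with low E(f); a function like this is mainly useful for checking that an optimizer actually decreases the energy.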

Label assignment by energy minimization

In this section, we present the outdoor geometry that is used in the transmission map estimation of the proposed algorithm. Light passing through a scattering medium is attenuated and distributed in other directions. This can happen anywhere along the path and leads to a combination of radiances incident towards the camera, as shown in Figure 2.

Scattering of light by atmospheric particles

Formally, to express the relative portion of light that survives passage along the entire path between the observer and a surface point in the scene, the transmission ti combines the geometric distance di and the medium extinction coefficient β (the net loss from scattering and absorption) into a single variable [15]:

$$t_i = e^{-\beta d_i} \tag{2}$$

According to (2), the following outdoor geometry is reasonable. Assuming that β is constant over the image, the variations in transmission are due only to the distance d between the scene point and the camera, so the greater the distance, the lower the intensity in the transmission map. In most outdoor images, an object that appears closer to the top of the image is usually further away. Thus, the distance along the ground to an object is a monotonically increasing function of its height in the image plane, measured from the bottom of the image upwards. For example, Figure 3(a) shows that the distance between scene point R and the camera is smaller than that between scene point S or T and the camera. Correspondingly, the intensity at point R in the transmission map is higher than that at point S or T, as shown in Figure 3(b). Figures 3(c–d) show how the distance and the transmission map intensity of an observation point vary with its height in the image.
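As a worked example of this monotonic relationship, take a hypothetical, spatially constant extinction coefficient β = 0.01 m⁻¹ in t = e^{−βd} and three scene points at increasing distances:

$$
\begin{aligned}
d = 50\ \text{m} &\;\Rightarrow\; t = e^{-0.5} \approx 0.607,\\
d = 200\ \text{m} &\;\Rightarrow\; t = e^{-2} \approx 0.135,\\
d = 500\ \text{m} &\;\Rightarrow\; t = e^{-5} \approx 0.0067.
\end{aligned}
$$

Under the outdoor geometry assumption, the farther points sit higher in the image, so transmission decreases from the bottom of the image to the top.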

The distance and intensity relationship of any scene point. (a) Input foggy image and three scene points. (b) The transmission map for (a) and the scene points. (c) The relationship between the height position of the observation point and its distance. (d) The relationship between the height position of the observation point and its intensity in relation to the transmission map.

The algorithm flowchart

Specifically, the proposed algorithm removes fog from a single image in three steps. The first step computes the ambient light from three distinctive features of the sky region. The second step computes the transmission map with the MRF model and the bilateral filter: the goal is to assign accurate pixel labels using graph cut-based α-expansion and to remove redundant details using the bilateral filter. Finally, with the estimated ambient light and transmission map, the scene radiance is recovered according to the image degradation model. The flowchart of the proposed method is depicted in Figure 4.

Flowchart of the algorithm

The presence of aerosols in the lower atmosphere means that light may be scattered and absorbed while travelling through the medium [16]. This can happen anywhere along the path and leads to a combination of radiances incident towards the camera. The image degradation model widely used to describe the formation of foggy images is [2]:

$$I(x) = J(x)\,t(x) + A\,\big(1 - t(x)\big)$$

where I(x) is the observed foggy image, J(x) is the scene radiance, A is the ambient light and t(x) is the transmission map.
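The degradation model can also be used in the forward direction to synthesize a foggy image from a fog-free one, which is convenient for testing. A minimal NumPy sketch, assuming H × W × 3 float images in [0, 1], an H × W transmission map and a single RGB ambient light A; the function name add_fog is illustrative:

```python
import numpy as np

def add_fog(J: np.ndarray, t: np.ndarray, A) -> np.ndarray:
    """Apply I(x) = J(x) t(x) + A (1 - t(x)).

    J: H x W x 3 fog-free radiance, t: H x W transmission in [0, 1],
    A: length-3 ambient light."""
    t3 = t[..., np.newaxis]                          # broadcast t over the colour channels
    A = np.asarray(A, dtype=float).reshape(1, 1, 3)
    return J * t3 + A * (1.0 - t3)
```

For example, with t = np.exp(-beta * d) for a hypothetical depth map d and extinction coefficient beta, add_fog(J, t, A) produces a foggy version of J in which distant pixels approach A.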

Estimating ambient light A should be the first step in restoring the foggy image. To estimate the ambient light, three distinctive features of the sky region are considered here, which is a more robust approach than that of the ‘brightest pixel’ method. The distinctive features of the sky region are: (i) a bright minimal dark channel, (ii) a flat intensity, and (iii) an upper position. For the first feature, the pixels that belong to the sky region should satisfy Imin(

Initial transmission map estimation

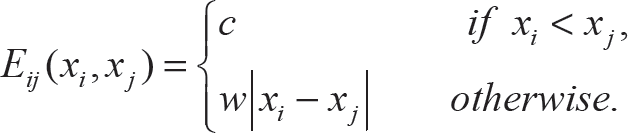

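Transmission map estimation is the most important step in image defogging. Here, we use the graph cut-based α-expansion method to estimate the map t(x) by minimizing the following energy function:

$$E = \sum_{i \in P} E_i(x_i) + \sum_{(i,j) \in N} E_{ij}(x_i, x_j) \tag{4}$$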

where P is the set of pixels of the unknown transmission map t, and N is the set of pairs of pixels defined over the standard four-connected neighbourhood. The unary function Ei(xi) is the data term, representing the probability of pixel i having the transmission ti associated with label xi. The smoothing term Eij(xi, xj) encodes the probability that neighbouring pixels have a similar depth.

For the data function Ei(xi), we first convert the input RGB image Ii into a grey-level image I'i and then compute the absolute difference between each pixel value and each label value. The process can be written as:

In (5), I'i is the intensity of a pixel in the grey-level image (0 ≤ I'i ≤ 1), and L(xi) is the value of label xi, taken from the label set L = {0, 1/l, 2/l, …, 1}. The parameter ω is introduced to ensure that I'i and L(xi) have the same order of magnitude.
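The data term can be stored as an H × W × K cost volume, one cost per pixel and label. The sketch below assumes that Eq. (5) reduces to the absolute difference |I'i − L(xi)|, uses BT.601 luma weights for the grey-level conversion, and replaces ω with a hypothetical integer scale factor, since the exact placement of ω in Eq. (5) is not restated here. Costs are rounded to integers on the assumption that the solver, like gco-v3.0 in its default build, expects integer energy terms.

```python
import numpy as np

def data_cost_volume(rgb: np.ndarray, n_labels: int, scale: int = 1000) -> np.ndarray:
    """Integer cost volume D[y, x, k] ~ scale * |I'(y, x) - L(k)| for an H x W x 3 image in [0, 1].

    The label set is L = {0, 1/l, 2/l, ..., 1} with l = n_labels - 1."""
    grey = rgb @ np.array([0.299, 0.587, 0.114])    # RGB -> grey with BT.601 luma weights (assumed)
    levels = np.linspace(0.0, 1.0, n_labels)         # the label values L(k)
    cost = np.abs(grey[..., np.newaxis] - levels)    # H x W x K absolute differences
    return np.rint(scale * cost).astype(np.int32)    # integer energy terms for the solver
```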

The smooth function Eij(xi, xj) encodes the probability whereby neighbouring pixels should have a similar depth. Inspired by the work in [8], we use a linear cost function, which is solved by α-expansion:

From the outdoor geometry, we know that objects that appear closer to the top of the image are usually further away. Consider two pixels i and j, where j is directly above i; according to the outdoor geometry, dj > di. Using Eq. (2), we can therefore deduce that the transmission tj of pixel j should be less than or equal to the transmission ti of pixel i, that is, xj ≤ xi. Any pair of labels that violates this trend is penalized with a cost c > 0. The smoothing function in Eq. (6) can thus be written as:

The parameters w and c control the quality of the defogging result. The value of w controls the strength of detail enhancement and is usually set between 0.01 and 0.1. The cost c controls the strength of colour recovery and is usually set between 100 and 1,000. Together, the two parameters provide a trade-off between strongly enhanced details, where colours may appear too dark, and less restored details, where colours are brighter. In addition, the weights associated with the graph edges must be determined. If the intensity differences between two neighbouring pixels in the input foggy image I are less than 15 in each channel, the two pixels have a high probability of sharing the same transmission value; in this case, the labelling cost of that edge is increased fifteen-fold to minimize artefacts due to depth discontinuities. Substituting the data function and the smoothing function into the energy function (4), the pixel labels of the transmission map can be estimated by graph cut-based α-expansion. In our method, the gco-v3.0 library [17], developed by O. Veksler and A. Delong, is adopted for optimizing multi-label energies via α-expansion; it supports energies with any combination of unary, pairwise and label-cost terms [18, 19]. We use this library to estimate each pixel label of the initial transmission map. The pseudo-code of the estimation process using the gco-v3.0 library is presented in Figure 5.
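The ordering constraint and the edge-weight rule can be sketched as follows. The exact form of Eq. (7) is not restated above, so the tables below assume a hypothetical version: a linear cost w·|xi − xj| for every neighbouring pair, plus the penalty c when a pixel is labelled nearer (larger label) than the pixel directly below it. The 15-fold rule is read here as a per-channel intensity difference below 15 on an 8-bit scale; both this reading and the function names are assumptions.

```python
import numpy as np

def pairwise_costs(n_labels: int, w: float, c: float):
    """Hypothetical smoothness tables for K = n_labels labels.

    horiz[a, b]: cost for left/right neighbours labelled a and b (linear term only).
    vert[a, b]:  cost when the lower pixel i has label a and the pixel j directly
                 above it has label b; c is added when b > a, i.e. when the upper
                 pixel is labelled nearer (higher transmission) than the lower one.
    """
    k = np.arange(n_labels)
    linear = w * np.abs(k[:, None] - k[None, :]).astype(float)
    vert = linear + c * (k[None, :] > k[:, None])    # penalise violations of x_j <= x_i
    return linear, vert

def edge_weights(img8: np.ndarray, factor: int = 15, thr: int = 15):
    """Per-edge multipliers: `factor` where two neighbours differ by less than
    `thr` in every channel of an 8-bit H x W x 3 image, 1 elsewhere."""
    img = img8.astype(np.int32)                      # avoid uint8 wrap-around in differences
    h_similar = (np.abs(img[:, 1:] - img[:, :-1]) < thr).all(axis=2)  # H x (W - 1)
    v_similar = (np.abs(img[1:, :] - img[:-1, :]) < thr).all(axis=2)  # (H - 1) x W
    return np.where(h_similar, factor, 1), np.where(v_similar, factor, 1)
```

With c = 200w, a single ordering violation costs as much as a label jump of 200 levels, so the constraint dominates the linear term.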

The pseudo-code of the label assignment using the gco-v3.0 library

In Figure 5, M and N are the height and width of the input foggy image, and ω, w and c are the parameters in Eqs. (5) and (7). Using the functions provided by the optimization library (e.g., GCO_SetDataCost, GCO_SetSmoothCost and GCO_GetLabeling), we obtain the label xi of each pixel. Next, an intensity value of the initial transmission map is assigned to each image pixel. Specifically, for each label xi, we have:

Figure 6 shows a synthetic example in which the image consists of five grey-level regions. Using the proposed MRF method, the image is accurately segmented into five label regions, each represented by one intensity value.
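The label assignment can be illustrated without a graph cut library. The sketch below swaps in iterated conditional modes (ICM), a much simpler greedy optimizer that generally reaches a weaker local minimum than α-expansion, and then maps labels to transmission values. It assumes that label k corresponds to L(k) = k/l, a hypothetical stand-in for the label-to-intensity mapping above, and takes a K × K table vert[a, b] giving the cost when the lower pixel has label a and the pixel directly above it has label b.

```python
import numpy as np

def icm(data_cost: np.ndarray, horiz: np.ndarray, vert: np.ndarray, n_iter: int = 10) -> np.ndarray:
    """Iterated conditional modes on a four-connected grid.

    data_cost: H x W x K unary costs; horiz[a, b]: cost for left/right neighbours;
    vert[a, b]: cost when the lower pixel has label a and the upper pixel label b.
    Each pixel in turn takes the label that minimises its local energy."""
    h, w, _ = data_cost.shape
    labels = data_cost.argmin(axis=2)                # start from the best unary label
    for _ in range(n_iter):
        changed = False
        for y in range(h):
            for x in range(w):
                cost = data_cost[y, x].astype(float)     # copy of the unary costs
                if x > 0:
                    cost += horiz[labels[y, x - 1], :]
                if x < w - 1:
                    cost += horiz[:, labels[y, x + 1]]
                if y > 0:                            # neighbour above: this pixel is the lower one
                    cost += vert[:, labels[y - 1, x]]
                if y < h - 1:                        # neighbour below: this pixel is the upper one
                    cost += vert[labels[y + 1, x], :]
                best = int(cost.argmin())
                if best != labels[y, x]:
                    labels[y, x] = best
                    changed = True
        if not changed:                              # converged to a local minimum
            break
    return labels

def labels_to_transmission(labels: np.ndarray, n_labels: int) -> np.ndarray:
    """Hypothetical mapping L(k) = k / l with l = n_labels - 1."""
    return labels / (n_labels - 1)
```

ICM is sensitive to its initialization, which is one reason graph cut methods such as α-expansion are preferred for this kind of energy.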

Synthetic example. (a) Input grey-level image. (b) Output multi-label image.

The MRF-based algorithm can also be applied to estimate the initial transmission map of real-world images. An illustrative example is shown in Figure 7: Figure 7(b) shows the initial transmission map estimated using the algorithm presented above, and Figure 7(c) shows the corresponding restored result. One can clearly see that the scene objects in the restored image look one-dimensional.

Real-world example. (a) Input image. (b) Initial transmission map. (c) Restored result obtained using (b). (d) Bilateral filtering of (b). (e) Restored result obtained using (d).

As shown in Figure 7, the recovered image has an obvious deficiency at the discontinuities of the transmission map obtained by the MRF model. For example, the red bricks and the gaps between them should have the same depth values, yet the gaps between the bricks are clearly visible in the transmission map estimated by the MRF-based algorithm [Figure 7(b)]. To handle such discontinuities, many works refine the transmission map with a bilateral filter, such as local albedo-insensitive dehazing [20], filtering-based dehazing [21] and image dehazing using an iterative method [22]. In this work, we also apply a bilateral filter, since such a filter smooths images while preserving edges [23]. The redundant details of the transmission map tini estimated by the algorithm above can thus be removed effectively, which improves the detail of the restored result. This process can be written as:

where tini(

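Since we now know the input foggy image I(x), the ambient light A and the transmission map t(x), the scene radiance J(x) can be recovered from the image degradation model as:

$$J(x) = \frac{I(x) - A}{\max\big(t(x), t_0\big)} + A$$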

where t0 is application-dependent and is used to retain a small amount of fog in the most distant parts of the image. If t0 is too large, the result shows only a slight defogging effect; if it is too small, the colours of the defogged result look oversaturated. Experiments show that t0 = 0.2 gives visually pleasing results in most cases. An illustrative example is shown in Figure 8: Figure 8(a) shows the input foggy image, Figure 8(b) the transmission map estimated by our MRF-based method, and Figure 8(c) the final defogging result.
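The recovery step inverts the degradation model pixel by pixel, clamping the transmission from below by t0. A minimal NumPy sketch, assuming H × W × 3 float images, an H × W transmission map and an RGB ambient light A; the function name is illustrative, and clipping the output to [0, 1] for display is left to the caller:

```python
import numpy as np

def recover_radiance(I: np.ndarray, t: np.ndarray, A, t0: float = 0.2) -> np.ndarray:
    """J(x) = (I(x) - A) / max(t(x), t0) + A."""
    A = np.asarray(A, dtype=float).reshape(1, 1, 3)
    t_clamped = np.maximum(t, t0)[..., np.newaxis]   # lower bound avoids amplifying noise where t -> 0
    return (I - A) / t_clamped + A
```

Wherever t(x) ≥ t0, this exactly undoes the degradation model; where t(x) < t0, part of the fog is deliberately left in the result.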

Image defogging example. (a) The input image. (b) Our transmission map. (c) The fog removal image.

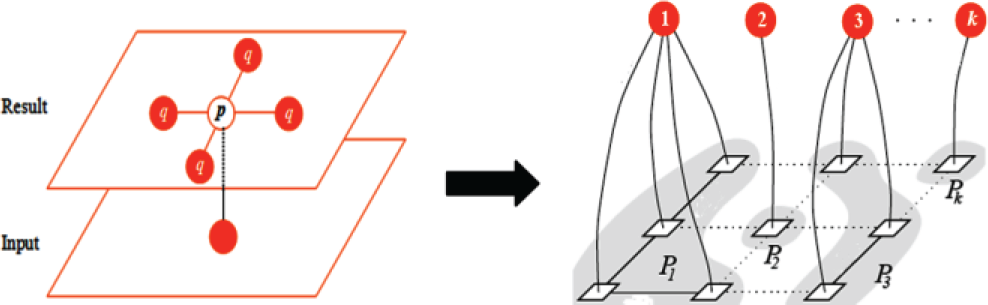

Video defogging

Given the importance of fog removal, many researchers have studied single image defogging algorithms, but research into video defogging is rare in the literature. Video processing must consider not only the pixel values in a single static frame but also the temporal relations between frames. In surveillance camera systems, the camera is fixed and often mounted high up, so the background is unchanged from frame to frame and the difference in transmission between a foreground object and the background is usually small. We can therefore regard foreground objects as image noise and use a denoising algorithm, such as a bilateral filter, to eliminate this noise and produce a universal transmission map. Figure 9 shows the main idea behind our video processing method: during defogging, the transmission map is calculated only once, for the background image of the input video, and is then applied to subsequent frames with a tolerable error.

The main idea behind our video defogging process: Input video frames (top), extracted background image (middle) and estimated universal transmission map (bottom). In the input video frames, the foreground objects are denoted by circles, squares and triangles. These objects are regarded as image noise and are eliminated using a bilateral filter during the estimation process of the universal transmission map.

Specifically, we define the static part of the scene as the background and the moving objects in the scene as the foreground. The background image can be obtained with a frame differencing method. Our method then estimates the transmission map of the background image using the algorithm described above, uses it as the universal transmission map, and applies this map to a series of video frames to obtain the restored images, as shown in Figure 10.
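The background extraction can be sketched with simple frame differencing. The details are assumptions, not the procedure behind Figure 10: frames are grey-level float arrays in [0, 1], a pixel counts as static at time t when it changes by less than a hypothetical threshold thr from frame t − 1, the background value is the mean of its static samples, and the temporal median is used for pixels that are never static.

```python
import numpy as np

def background_by_frame_difference(frames, thr: float = 0.02) -> np.ndarray:
    """Estimate a static background from a T x H x W stack of grey frames in [0, 1].

    A pixel is treated as static at time t if |frame_t - frame_(t-1)| < thr; the
    background is the mean of its static samples, or the temporal median if it
    is never static."""
    frames = np.asarray(frames, dtype=float)
    static = np.abs(np.diff(frames, axis=0)) < thr   # (T-1) x H x W
    samples = frames[1:]
    count = static.sum(axis=0)
    total = np.where(static, samples, 0.0).sum(axis=0)
    median = np.median(frames, axis=0)               # fallback for pixels that always change
    return np.where(count > 0, total / np.maximum(count, 1), median)
```

The transmission map estimated once from this background can then be reused for every frame, which is where the computational saving of the universal strategy comes from.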

Video results. First row: estimated background image and two original frames from the video. Second row: universal transmission map and the enhanced frames obtained by using the same transmission map.

Compared with single image defogging, the bilateral filter used in our video defogging method is given a smaller σc and a larger σs (σc = 1, σs = 0.9), which effectively removes the foreground noise and the redundant details of the transmission map. In general, using the universal transmission map introduces no significant errors into the restored image, as shown in Figure 10.
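A brute-force bilateral filter for a single-channel map can be written in a few lines of NumPy. Treating σs as the spatial standard deviation in pixels and σc as the range standard deviation in transmission units, and using the map's own values as the range guide, are assumptions of this sketch; the window radius and default values are hypothetical choices as well.

```python
import numpy as np

def bilateral_filter(img: np.ndarray, radius: int = 3, sigma_s: float = 2.0, sigma_c: float = 0.1) -> np.ndarray:
    """Brute-force bilateral filter for a single-channel float map.

    Each output pixel is a normalised weighted mean of its (2r+1) x (2r+1)
    neighbourhood, with weight exp(-dist^2 / (2 sigma_s^2)) * exp(-diff^2 / (2 sigma_c^2)),
    where dist is the spatial offset and diff the value difference to the centre."""
    h, w = img.shape
    padded = np.pad(img, radius, mode="edge")
    out = np.zeros((h, w))
    norm = np.zeros((h, w))
    for dy in range(-radius, radius + 1):
        for dx in range(-radius, radius + 1):
            shifted = padded[radius + dy: radius + dy + h, radius + dx: radius + dx + w]
            weight = (np.exp(-(dy * dy + dx * dx) / (2.0 * sigma_s ** 2))
                      * np.exp(-((shifted - img) ** 2) / (2.0 * sigma_c ** 2)))
            out += weight * shifted
            norm += weight
    return out / norm                                # norm >= 1 thanks to the centre tap
```

Under this interpretation, bilateral_filter(t_ini, radius=2, sigma_s=0.9, sigma_c=1.0) would correspond to the video setting quoted above; a small σc keeps strong edges, while a large σc makes the filter behave like a plain Gaussian blur.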

After acquiring the transmission map, we can add visual effects to the fog removal image, such as fog simulation. Figure 11 shows the fog simulation results. A virtual foggy scene can be simulated by multiplying the extinction coefficient β by a factor λ. According to Eq. (2), this is achieved by applying the following simple power-law transformation to the transmission values:

$$t_{\lambda}(x) = t(x)^{\lambda} = e^{-\lambda \beta d(x)}$$

Fog simulation based on our transmission map. (a) Input image. (b) and (c) Simulated foggy images with λ = 2 and λ = 4, respectively.

where t(x) is the estimated transmission map and λ is the factor applied to β; λ > 1 simulates denser fog.
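Because t = e^{−βd}, raising the transmission to the power λ is the same as scaling β by λ. The sketch below re-fogs an image with the degradation model using t^λ; the function name and the array conventions (H × W × 3 floats in [0, 1], an H × W transmission map, an RGB ambient light) are illustrative assumptions.

```python
import numpy as np

def simulate_fog(J: np.ndarray, t: np.ndarray, A, lam: float) -> np.ndarray:
    """Re-fog J with the extinction coefficient scaled by lam.

    Since t = exp(-beta d), t ** lam = exp(-lam beta d); the degradation model
    I = J t_lam + A (1 - t_lam) is then applied with the new transmission."""
    t_lam = np.power(t, lam)[..., np.newaxis]
    A = np.asarray(A, dtype=float).reshape(1, 1, 3)
    return J * t_lam + A * (1.0 - t_lam)
```

No depth map is needed: the estimated transmission already encodes βd, which is what makes fog simulation a cheap by-product of defogging.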

Parameter setting

The proposed MRF model [see Eq. (7)] is mainly parameterized by w and c, the weights of the smoothing term. Figure 12 shows that the dents in the haystack become more pronounced as w approaches 0.1 [Figure 12(a)], and that the colours of the fog removal results look less saturated as c approaches 1,000w [Figure 12(b)].

Defogging results with different parameter values. (a) From left to right: original image, the results obtained with w = 0.02 and c = 200w, w = 0.1 and c = 200w. (b) From left to right: original image, the results obtained with w = 0.05 and c = 200w, w = 0.05 and c = 1000w.

The values of w and c are application-dependent, so we adopt the measure presented in [24] to determine proper values for the two parameters. The CNC index proposed in that work combines three components (contrast, image naturalness and colourfulness) into an overall measure of the defogging result. The CNC index between the original foggy image x and the fog removal image y is defined as:

where e measures the contrast via the number of visible edges in images x and y, CNI is the colour naturalness index, which describes the degree of correspondence between human perception and the external world, and CCI is the colour colourfulness index, which represents the degree of colour vividness [25]. A higher CNC value indicates a better result. We use the CNC index as a feedback signal to determine the optimal values of the two parameters; thanks to this feedback mechanism, the static, open-loop parameter estimation problem becomes a dynamic parameter adjustment problem.

Figure 13 shows the average results of the CNC index with a different w and c for 128 testing images. One can clearly see that the best result, corresponding to the highest CNC value (1.2005), is obtained when w = 0.05 and c = 200w (see point M) for the testing images. Therefore, the optimal values for w and c are approximately 0.05 and 200w.

Average results of CNC with different parameter values

We therefore fix c = 200w and sweep w over the range [0.1, 0.01] with a step of −0.001. We choose CNC as the parameter adjustment index because it covers image contrast, naturalness and colourfulness, is easy to implement and is fast to compute. Let CNC1 and CNC2 denote the index values obtained at two consecutive steps. The iteration continues while CNC1 ≤ CNC2; otherwise, the iteration stops and the corresponding value of w is taken. The defogged image produced with this w and c = 200w is our final result. Figure 14 shows the pseudo-code of the parameter estimation process.
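The feedback loop can be sketched independently of the defogging and CNC code. In the sketch, defog(w, c) and cnc(image) are hypothetical callables supplied by the caller, standing in for the MRF-based defogging and the CNC index of [24]. When the index drops, the sketch keeps the previous w (the one with the higher score); this is one reading of the stopping rule above.

```python
from typing import Callable, Tuple
import numpy as np

def search_w(defog: Callable[[float, float], np.ndarray],
             cnc: Callable[[np.ndarray], float],
             w_start: float = 0.1, w_stop: float = 0.01,
             step: float = -0.001, c_ratio: float = 200.0) -> Tuple[float, float, np.ndarray]:
    """Sweep w from w_start towards w_stop with c = c_ratio * w, using CNC as feedback."""
    n_steps = int(round((w_stop - w_start) / step))
    ws = w_start + step * np.arange(n_steps + 1)      # 0.1, 0.099, ..., 0.01
    best_w = float(ws[0])
    best_img = defog(best_w, c_ratio * best_w)
    best_score = cnc(best_img)
    for w in ws[1:]:
        w = float(w)
        img = defog(w, c_ratio * w)
        score = cnc(img)
        if score < best_score:                        # CNC1 > CNC2: stop, keep previous w
            break
        best_w, best_img, best_score = w, img, score  # CNC1 <= CNC2: continue
    return best_w, c_ratio * best_w, best_img
```

Because the sweep stops at the first drop in CNC, it finds a local maximum along w; the early stop is what keeps the number of full defogging runs small.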

The pseudo-code of the parameter estimation using CNC

To evaluate the performance of different defogging algorithms, we rely on synthetic images, because it is difficult to acquire the same scene with and without fog. Sixty-six synthetic images with uniform fog from the FRIDA2 database [26] are used here. This database contains ground-truth fog-free images as targets for comparing defogging methods. Sample results obtained with the proposed MRF-based algorithm on the FRIDA2 database are shown in Figure 15. In the restored images, the buildings remain visible much further into the distance.

Defogging results on synthetic images from the FRIDA2 database. First row: the synthetic images with fog. Second row: the obtained restored images using the proposed method.

We compared the proposed algorithm with two classic enhancement algorithms, histogram equalization and the Retinex algorithm, as shown in Figure 16. Table 1 reports the average absolute error (AAE) between the restored image and the fog-free target image for the three defogging methods on the 66 synthetic foggy images; smaller AAE values indicate better results. Table 1 shows that the proposed algorithm outperforms the other algorithms: its AAE is 30.71, while the next best result, 34.18, is obtained by the Retinex algorithm, indicating that both the proposed algorithm and the Retinex algorithm remove fog effectively. However, remote objects look much clearer in our results than in those of the Retinex method.
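The AAE is not written out above; the sketch below assumes the usual definition, the mean absolute difference over all pixels and colour channels, computed on the 8-bit intensity scale. Casting to float before subtracting avoids the wrap-around that unsigned 8-bit arithmetic would otherwise cause.

```python
import numpy as np

def average_absolute_error(restored, target) -> float:
    """Mean absolute difference between two images of the same shape."""
    restored = np.asarray(restored, dtype=float)     # cast first: uint8 subtraction would wrap around
    target = np.asarray(target, dtype=float)
    if restored.shape != target.shape:
        raise ValueError("images must have the same shape")
    return float(np.mean(np.abs(restored - target)))
```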

Comparison of synthetic images. From left column to right column: original foggy image, results using histogram equalization, Retinex algorithm and the proposed algorithm.

Average absolute error between the restored image and the target image without fog

The algorithms proposed in this paper work well for a wide variety of real captured foggy images. Figure 17 shows some examples of the defogging effects obtained using the proposed MRF-based algorithm. One can clearly see that the image contrast and detail are greatly improved compared with the original foggy images.

Defogging results for real captured foggy images using the proposed method. First row: the real captured images with fog. Second row: the obtained restored images using the proposed method.

We also compared our defogging algorithm with several other state-of-the-art algorithms. Figure 18 compares the results obtained by Fattal [7] and by our algorithm. As Figure 18 shows, Fattal's method can produce a visually pleasing result. However, the method is statistical and requires sufficient colour information and variance. If the fog is dense, the available colour information is not enough to reliably estimate the transmission.

From left to right: the input image and the results obtained by Fattal [7] and our method

In addition, we compare our method with Tan's work [8] in Figure 19. The colours in Tan's results are sometimes over-saturated or distorted. For example, the sky and road regions in Tan's result in Figure 19 have turned yellow.

From left to right: the input image and the results obtained by Tan [8] and our method

We also compare our method with He's work [2] in Figure 20. He's algorithm achieves a good enhancement effect for most outdoor images. However, when scene objects are inherently similar in colour to the ambient light, the dark channel prior used in He's method no longer holds. In this case, the defogging result of He's algorithm is not visually pleasing, as shown in Figure 20.
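To see why the prior breaks down, recall the standard dark channel formulation: the dark channel is the minimum over colour channels followed by a minimum over a local patch, and the coarse transmission is estimated as t = 1 − ω·dark(I/A). The sketch below is that textbook formulation, not the method proposed in this paper; the patch size 15 and ω = 0.95 are commonly cited defaults used here as assumptions, and the function names are hypothetical. It shows that a bright, near-white surface whose colour resembles the ambient light receives a very low transmission, i.e. it is treated as thick fog, while a saturated green surface does not.

```python
import numpy as np


def dark_channel(image: np.ndarray, patch: int = 15) -> np.ndarray:
    """Minimum over colour channels, then over a patch x patch window.

    `image` is an (H, W, 3) float array; `patch` must be odd so that the
    output has the same height and width as the input.
    """
    if patch % 2 == 0:
        raise ValueError("patch must be odd")
    min_rgb = image.min(axis=2)
    r = patch // 2
    padded = np.pad(min_rgb, r, mode="edge")
    windows = np.lib.stride_tricks.sliding_window_view(padded, (patch, patch))
    return windows.min(axis=(-2, -1))


def dcp_transmission(image, ambient, omega=0.95, patch=15):
    """Coarse transmission t = 1 - omega * dark(I / A) from the dark channel prior."""
    normalized = image / np.asarray(ambient, dtype=np.float64).reshape(1, 1, 3)
    return 1.0 - omega * dark_channel(normalized, patch)


ambient = np.array([0.9, 0.9, 0.9])
white_wall = np.full((20, 20, 3), 0.88)                      # close to the ambient light
green_tree = np.tile(np.array([0.2, 0.5, 0.3]), (20, 20, 1))  # clearly coloured surface
print(dcp_transmission(white_wall, ambient).mean())  # ≈ 0.071
print(dcp_transmission(green_tree, ambient).mean())  # ≈ 0.789
```

Because the white wall has no dark channel, the prior attributes its brightness to fog regardless of its actual distance, which leads to the over-enhancement visible in such regions.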

From left to right: the input image and the results obtained by He [2] and our method.

Figure 21 compares the results obtained by Tarel [3] and by our algorithm. The colours in Tarel's result look unnatural and the result contains many halo artefacts, whereas our result shows no such problems.

From left to right: the input image and the results obtained by Tarel [3] and our method.

Figure 22 compares the results obtained by Carr [9] and by our algorithm. Our algorithm tends to enhance details better than Carr's method, and the colours of our result are closer to those of the original input image.

From left to right: the input image and the results obtained by Carr [9] and our method

Figures 23 and 24 compare our method with Caraffa's methods [10, 11]. Although our method does not remove the fog as thoroughly as Caraffa's methods in very dense fog regions (e.g., the distant buildings and trees), our results look natural in terms of both the colour and the profile of the scene objects.

From left to right: the input image and the results obtained by Caraffa [10] and our method

From left to right: the input image and the results obtained by Caraffa [11] and our method

Figure 25 shows a comparison between the results obtained by Wang [12] and our method. One can clearly see that the colour of the sky region in Wang's result seems a little inconsistent with that of the original foggy image.

From left to right: the input image and the results obtained by Wang [12] and our method

Results on a variety of haze and fog images also show that our algorithm produces results visually close to those of Carr, He and Fattal, with better colour fidelity and fewer halo artefacts than those of Tan, Tarel, Caraffa and Wang. We also find that, depending on the image, each algorithm strikes a trade-off between colour fidelity and contrast enhancement.

To quantitatively assess and rank the nine restoration algorithms (Fattal's method, Tan's method, He's method, Tarel's method, Carr's method, Caraffa's two methods, Wang's method and the proposed MRF-based method), we use the CNC index [24] to measure the defogging effect. Figures 18 to 25 show example results of these algorithms, and the corresponding CNC values are given in Table 2. The table shows that the proposed method obtains the highest CNC values, indicating that it achieves results as good as or better than the other defogging algorithms in most real-world foggy situations.

CNC index computed for the nine compared methods

To further evaluate the proposed method, we also use the visibility-restoration assessment method proposed in [27] to measure the contrast enhancement of the defogged images. We first convert each colour image to a grey-level image and then use three indicators to compare two grey-level images: the input image and the defogged image. The visible edges in the image before and after restoration are selected with a 5% contrast threshold, following the definition of meteorological visibility distance adopted by the International Commission on Illumination (CIE). To apply this definition of contrast between two adjacent regions, we use the visible-edge segmentation method proposed in [28].

Once the map of the visible edges is obtained, we can compute the rate e of edges that are newly visible after restoration. Next, the mean

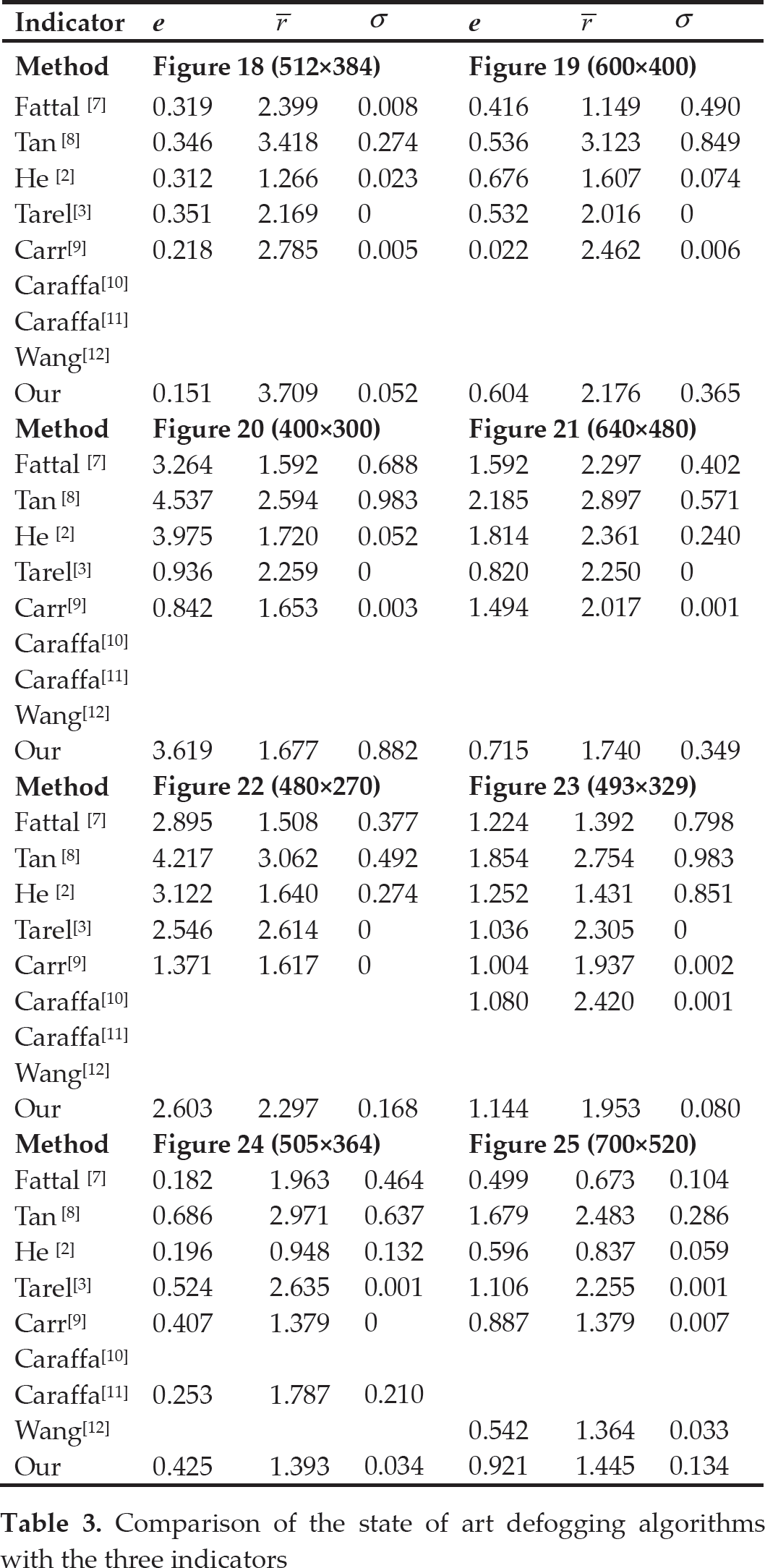

These indicators e,

Comparison of state-of-the-art defogging algorithms using the three indicators

Under each method, the aim is to increase the level of contrast without losing visual information. Hence, according to [27], good results are described by high values of e and

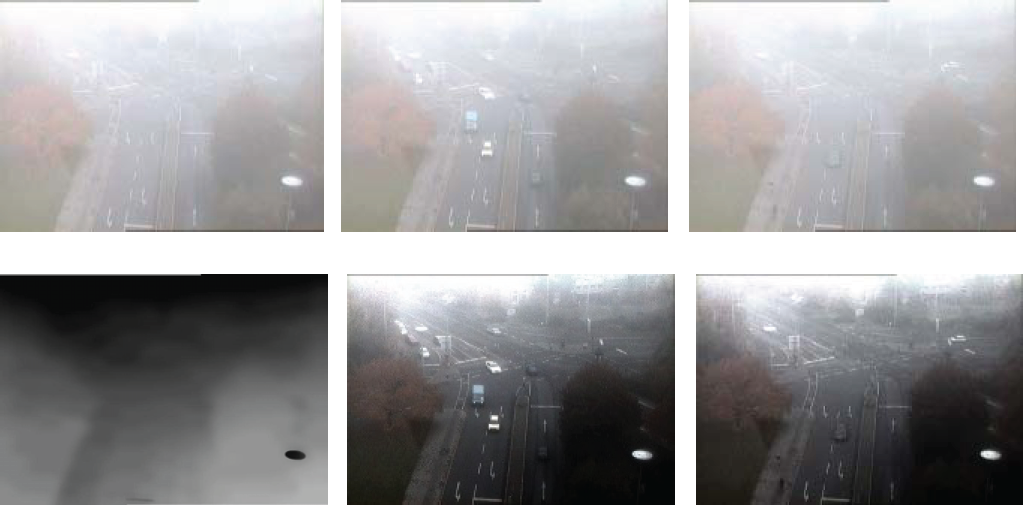

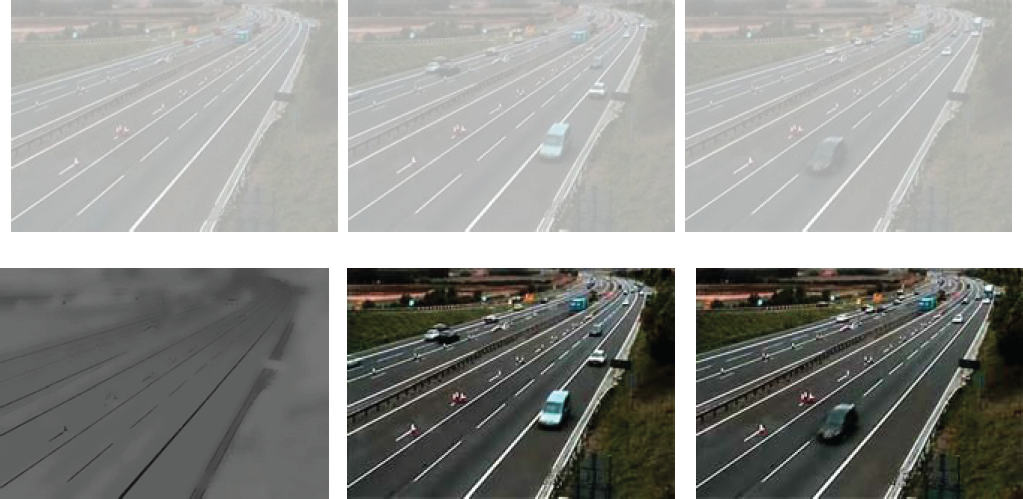
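Once the visible-edge maps are available, the three indicators of [27] reduce to a few array operations. The sketch below assumes the boolean visible-edge masks (e.g. from the 5% contrast segmentation of [28], which is not reproduced here) and the gradient magnitudes are already computed; the function name and argument layout are hypothetical. It uses the usual definitions: e = (n_r − n_o)/n_o, r̄ as the geometric mean of the gradient ratios at the visible edges of the restored image, and σ as the fraction of pixels that are black or white (0 or 255 in an 8-bit image) after restoration but not before.

```python
import numpy as np


def visibility_indicators(edges_orig, edges_rest, grad_orig, grad_rest,
                          gray_orig, gray_rest, eps=1e-6):
    """Return (e, r_bar, sigma) for one original/restored image pair.

    edges_*: boolean masks of visible edges (assumed precomputed).
    grad_*:  gradient magnitudes of the grey-level images.
    gray_*:  8-bit grey-level images, used to detect saturated pixels.
    """
    n_o = int(np.count_nonzero(edges_orig))
    n_r = int(np.count_nonzero(edges_rest))
    if n_o == 0 or n_r == 0:
        raise ValueError("both images need at least one visible edge")

    # Rate of edges that are newly visible after restoration.
    e = (n_r - n_o) / n_o

    # Geometric mean of gradient ratios at the restored image's visible edges;
    # eps guards against log(0) and division by zero.
    ratios = (np.maximum(grad_rest[edges_rest], eps)
              / np.maximum(grad_orig[edges_rest], eps))
    r_bar = float(np.exp(np.mean(np.log(ratios))))

    # Pixels saturated (black or white) after restoration but not before.
    sat_orig = (gray_orig == 0) | (gray_orig == 255)
    sat_rest = (gray_rest == 0) | (gray_rest == 255)
    n_s = int(np.count_nonzero(sat_rest & ~sat_orig))
    sigma = n_s / gray_rest.size
    return e, r_bar, sigma
```

Taking the geometric rather than the arithmetic mean of the ratios keeps a few very large gradient gains from dominating r̄.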

Experimental results on videos of traffic scenes captured in foggy conditions are shown in Figures 26 and 27. The two video clips are used to evaluate the proposed algorithm for traffic monitoring. One clip has 350 frames of 768×576 RGB colour images coded with 24 bits per pixel; the other is a 200-frame video at 640×480 resolution. As in the previous experiments, we first obtain the background image of the input foggy video sequence and compute the universal transmission map for the background image. Next, the contrast of the road, trees and moving vehicles is restored in each frame of the video using the universal map. Note the significant increase in contrast and the improvement in colour in Figures 26 and 27. In our current implementation, fog removal was applied to the input foggy video offline.
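The universal strategy estimates a transmission map once, on the background image, and reuses it for every frame. The sketch below assumes the standard degradation model I = J·t + A·(1 − t), so each frame is recovered as J = (I − A)/max(t, t₀) + A. The temporal median used for the background, the lower bound t₀ = 0.1, intensities in [0, 1] and all function names are assumptions for illustration; the paper's own background estimation and MRF-based transmission estimation are not reproduced, so the transmission map is simply passed in.

```python
import numpy as np


def estimate_background(frames: np.ndarray) -> np.ndarray:
    """Temporal median of a (T, H, W, 3) frame stack (an assumed choice)."""
    return np.median(frames, axis=0)


def restore_frame(frame, transmission, ambient, t0=0.1):
    """Invert I = J*t + A*(1 - t) for one frame using a fixed transmission map."""
    t = np.maximum(transmission, t0)[..., np.newaxis]  # (H, W, 1) for broadcasting
    restored = (frame - ambient) / t + ambient
    return np.clip(restored, 0.0, 1.0)


def defog_video(frames, transmission, ambient, t0=0.1):
    """Apply the same (universal) transmission map to every frame."""
    return np.stack([restore_frame(f, transmission, ambient, t0) for f in frames])


# Synthetic check: fog four clear frames with a known map, then undo it.
rng = np.random.default_rng(0)
clear = rng.uniform(0.0, 1.0, size=(4, 5, 6, 3))
t_map = np.full((5, 6), 0.5)
A = np.array([0.9, 0.9, 0.9])
foggy = clear * t_map[..., None] + A * (1 - t_map[..., None])
background = estimate_background(foggy)  # would feed the transmission estimator
print(np.allclose(defog_video(foggy, t_map, A), clear))  # True
```

Since the map is computed once, the per-frame cost is a single element-wise inversion, which is what makes the strategy attractive for fixed surveillance cameras.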

Restoration results for video clip #1. First row: estimated background image and two original frames from the video. Second row: universal transmission map and the enhanced frames obtained using the universal map.

Restoration results for video clip #2. First row: estimated background image and two original frames from the video. Second row: universal transmission map and the enhanced frames obtained using the universal map.

The computation times were measured by running MATLAB on a PC with a 3.00 GHz Intel Pentium Dual-Core processor.

For an image of size sx×sy, Tarel's method is the fastest. Its complexity is O(sx·sy), i.e. linear in the number of input pixels, so only two seconds are needed to process a 600×400 image. The time complexity of He's method is relatively high because the matting Laplacian matrix L it uses is very large: for an image of size sx×sy, L has size sx·sy × sx·sy, and about 20 seconds are needed to process a 600×400 image. Fattal's and Tan's methods are slower still, taking about 40 seconds and five to seven minutes, respectively, for a foggy image of the same size. Our proposed algorithm takes about two minutes to process a 600×400 image; this could be reduced with a GPU-based parallel implementation. For small images, however, the proposed method is relatively fast: only three seconds are needed to process a 250×190 image with our method, whereas He's method takes about six seconds for an image of the same size.
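A quick calculation shows why the matting Laplacian dominates He's running time. The sketch below assumes 3×3 local windows (window radius 1), as in the closed-form matting formulation; two pixels then interact only if they share a window, i.e. if they lie within a 5×5 neighbourhood of each other, which bounds the nonzeros per row. The helper name is hypothetical, and the nonzero count is an upper bound that ignores image borders.

```python
def matting_laplacian_size(sx: int, sy: int, window_radius: int = 1):
    """Return (pixels, dense entries, upper bound on nonzeros) of an sx*sy image's L."""
    n = sx * sy                        # L is n x n, one row/column per pixel
    dense_entries = n * n              # entries if L were stored densely
    reach = 4 * window_radius + 1      # pixels sharing a (2r+1)^2 window lie within this span
    max_nonzeros = n * reach * reach   # at most reach^2 nonzeros in each row
    return n, dense_entries, max_nonzeros


n, dense, nnz = matting_laplacian_size(600, 400)
print(n, dense, nnz)  # 240000 57600000000 6000000
```

Even exploiting sparsity, solving a linear system with 240,000 unknowns is far more work than a filter whose cost grows linearly with the 240,000 pixels.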

Conclusions

Image defogging is an important issue in computer vision. In this paper, a new defogging algorithm based on an MRF model was presented. The problem was formulated as the estimation of a transmission map, optimized with α-expansion. The algorithm was implemented in two stages. First, the transmission map was estimated using a dedicated MRF model and a bilateral filter. Second, once the map was inferred, the restored image was obtained from the image degradation model.

The experimental results demonstrated that the proposed algorithm produces visually pleasing defogging results and tends to enhance image contrast more than previous techniques. The main contributions of this work are as follows:

A novel MRF-based method was proposed that uses an optimization library to estimate the transmission map. Experiments on both synthetic and real-world images showed the effectiveness of the proposed method.

We extended the proposed method to foggy video applications using the universal strategy and implemented fog environment simulation based on the estimated transmission map.

Owing to the feedback mechanism proposed in this paper, the static, open-loop parameter estimation problem is transformed into a dynamic parameter adjustment problem.

However, the colours of our defogging results are sometimes over-saturated, and some fog removal results may exhibit a gradient effect. The overall quality of a restored foggy image might be improved by enhancing its main details, and the algorithm could be further improved by employing a better image prior for the smoothing function of the MRF model. In the future, we intend to investigate various kinds of fog and to speed up the proposed algorithm for real-time processing.

Footnotes

8.

The authors would like to thank Dr. Fan for providing his paper, Dr. Tarel for providing the MATLAB code for his approach, and Dr. Fattal, Dr. Tan, Dr. He and Dr. Carr for providing the defogging images on their websites. This work was supported by the National Natural Science Foundation of China (71271215, 71221061 and 91220301), the International Science and Technology Cooperation Programme of China (2011DFA10440), the Collaborative Innovation Centre of resource economical and environment friendly society, the China Postdoctoral Science Foundation (No. 2014M552154), the Hunan Postdoctoral Scientific Program (No. 2014RS4026), and the Postdoctoral Science Foundation of Central South University (No. 126648).
