Abstract

We present an implementation of a model of very early sensory-motor development, guided by results from developmental psychology. Behavioural acquisition and growth are demonstrated through constraint-lifting mechanisms initiated by global state variables. The results show how staged competence can be shaped by qualitative behaviour changes produced by anatomical, computational and maturational constraints.

Introduction: developmental learning

In the last five years developmental robotics has emerged as a vibrant new research area. Previously many research projects have explored the issues involved in creating truly autonomous embodied learning agents but only recently has the idea of a developmental approach been investigated as a serious strategy for robot learning. For a review of developmental robotics see (Lungarella et al., 2003) and for recent results see new conference series such as (Epigenetics, 2005).

In this paper we describe an approach to sensory-motor learning and coordination that draws from psychology rather than neuroscience. There have been many models of sensory-motor coordination (Lungarella et al., 2003) but most of these have been based on specific, usually connectionist, architectures and tend to focus on a single behavioural task. We are interested in exploring mechanisms that can support not only the growth of behaviour but also the transitions that are observed as behaviour moves through distinct stages of competence.

Developmental psychology concerns the study of behaviour and changes in behaviour over time and attempts to infer internal mechanisms of adaptation that could account for the external manifestations. We are interested in very early development, in particular the control of the limbs during the first three months of life. The newborn human infant faces a formidable learning task and yet advances from undirected, uncoordinated, apparently random behaviour to eventual skilled control of motor and sensory systems that support goal-directed action and increasing levels of behavioural and cognitive competence.

Motivation

It is important to state the objectives of our research and the framework in which it should be viewed. Our goals are to implement, investigate and explore appropriate mechanisms that will support sensory-motor learning, in order to understand the key parameters and design issues for future robotic systems. We are inspired by psychological data and theory because this is a rich source of knowledge on sensory-motor behaviour (which is still relatively under-exploited). However, we are not designers of psychological models and so we do not make close comparisons between our mechanisms and the existing psychological explanations. Rather, we wish to explore logically and scientifically the requirements for algorithms that could support developmental learning in machines. We hope that eventually a sound scientific understanding of sensory-motor control will become available. Of course, such theory may have some relevance for psychology but we recognise that future machine intelligence will be quite different from human intelligence, with complementary strengths and weaknesses.

Early infant learning

One of the most influential pioneers in the study of infant development was Jean Piaget, who emphasised the importance of sensory-motor interaction, staged competence learning and the sequential lifting of constraints (or scaffolding) (Piaget, 1973). Others, such as Jerome Bruner, have reinforced this by suggesting mechanisms that could explain the plasticity seen in infant studies (Bruner, 1990). Many more studies have investigated the growth of pre-linguistic competence in neonates (Gallahue, 1982; Rochat and Striano, 1999).

In robotics and artificial intelligence it has become generally accepted that intelligence of all kinds must be grounded in experience and we agree that sensory-motor coordination is likely to be a significant general principle of cognition (Pfeifer and Scheier, 1997). It seems more profitable to explore how a system might create its own models of experience for future growth, rather than program in particular learning methods, and we are interested in how some of the infant's learning behaviour might shed light on this scenario. We are particularly interested in the fact that such early learning appears to proceed in terms of stages (periods of similar behaviour) and transitions (phases where new behaviour patterns emerge).

We describe an experimental framework for building models in order to gain insight into the key requirements. Our immediate objective is the implementation of a flexible learning framework for an embodied hand/eye system which exhibits a prolonged epigenetic developmental process. Eventually, it is hoped to approach some of the skills achieved by the newborn human infant, at a general level. This includes discovering the structure of the various local representations of space (visual, tactile and motor), learning how to integrate these, and how to master their coordination for the control of action. Our long-term goal is to deduce sound principles for robotic development from psychologically inspired models.

An Experimental System for Development

We now set the context by describing the features and organization of our laboratory system. Our robot consists of two manipulator arms and a visual sensor that acts as an “eye”. These are configured in a manner similar to the spatial arrangement of an infant's arms and head — the arms are mounted, spaced apart, on a vertical backplane and operate in the horizontal plane, working a few centimetres above a work surface, while the “eye”, which is a colour imaging camera, is mounted above and looks down on the work area. Figure 1 shows the general configuration of the system. The effector part of the system comprises two industrial quality Adept robot arms, each with six degrees of freedom. In the present experiments only two joints are used, the others being held fixed, so that the arms each operate as a two-link mechanism consisting of “forearm” and “upper-arm” and sweep horizontally across the work area. The plan view of this arrangement is shown diagrammatically in figure 2.

Figure 1: The laboratory robot system used in experiments.

Figure 2: A plan view of the arm spatial configuration.

The camera is mounted on a computer-controlled pan and tilt head, which allows fast scanning of the work space (saccades). Vision processing software is used to detect shape and colour patches from the pixels within a central image region.

The arm end-points can each carry a “hand”, i.e. an electrically driven two-finger gripper fitted with tactile sensing contact pads on all surfaces. However, for the present experiments we fitted one arm with a simple probe consisting of a 10 mm rod containing a small proximity sensor. This sensor faces downwards so that, as the arm sweeps across the work surface, any objects passed underneath will be detected. Normally, small objects will not be disturbed, but if an object is taller than the arm/table gap then it may be swept out of the environment during arm action.

This experimental setup provides a set of rich visual, tactile and motor spaces, which are crucial for our experimental program.

Even before any cross-modal spatial integration can begin it is necessary to first discover the structure of the local spaces within each modality. By virtue of its given physical structure and constraints, each modality will have its own coding of space. Thus, when the eye refers to a spatial location then that data will only have meaning in terms of the actions required to move or direct the eye to that position. Similarly for a hand; locations in end-effector space are encodings of signals that correspond to the hand being at a certain location.

During the first months of life the neonate may seem to show no purpose or pattern in motor acts, but actually the infant displays very considerable learning skills: from spontaneous, apparently random movements of the limbs the infant gradually gains control of the parameters and coordinates sensory and motor signals to produce purposive acts in egocentric space (Gallahue, 1982). Various stages in behaviour can be discerned and during these stages the local egocentric limb space becomes assimilated into the infant's awareness and forms a substrate for future cross-modal skilled behaviours. This essential correlation between proprioceptive space and motor space seems to be a foundation stone for development, and occurs at many levels (Pfeifer and Scheier, 1997). Sensory-motor growth in the limbs appears to precede visual development (it may begin in the womb) and even when it can continue concurrently with visual development, in the first few months, the eye is too functionally restricted (tunnel vision) to correlate with other modalities (Westermann and Mareschal, 2004). For this reason, in the experiments reported here we do not involve the eye system. Also, there is no experimental advantage in driving two arms and so, for simplicity, we use only one arm.

Motor Coordination in a Single Modality

A two-section limb requires a motor system that can drive each section independently. A muscle pair could actuate each degree of freedom, i.e. extensors and flexors, but this can be abstracted into a single motor parameter to define the overall applied drive strength. As we are operating in two dimensions, two motor parameters are required, one for each limb section:

To allow for viscous friction and other effects in practical actuators we assume that the arm sections will operate at approximately constant velocity (angular or linear) as determined by the motor parameters. As the

An integrator is assigned to each degree-of-freedom and these are all reset to zero whenever the limb is returned to the rest position (see below).

The sensing possibilities for a limb include internal proprioception sensors and exterior tactile or contact sensors. The actual biological mechanisms of proprioceptive feedback are not entirely known but a simple and very “natural” method would be to sense the angles of individual joints. Thus if we assume proprioceptive neurons generate joint related signals, then these can be represented by

However, there are other, more complex, possibilities. If the location of the limb end-point can be sensed then the end-effector can be positioned at a desired spatial location; this would be very useful for many actions. In this case the feedback signals could be as follows:

Another even more attractive scheme would be to relate the arm end-points to the body centre-line. This

One other notable spatial encoding is a Cartesian frame where the orthogonal coordinates are lateral distance (left and right) and distance from the body (near and far). The signals for this case are simply the location values of the end-points in a rectangular space, thus:

Before vision comes into play, it is difficult to see how such useful but complex feedback as given by the three latter encodings could be generated and calibrated for local space. The dependency on trigonometrical relations and limb lengths at a time when the limbs are growing significantly makes it unlikely that these codings could be phylogenetically evolved. Only the

Mappings as a Computational Substrate for Sensory-Motor Learning

We have developed a computational framework for investigating this problem based on a two-dimensional mapping scheme. Our mappings consist of two-dimensional sheets of elements, each element being represented by a patch of receptive area known as a field. The fields are circular, regularly spaced, and are overlapping.
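The map structure described above can be illustrated with a minimal sketch. The names `Field`, `build_map` and `covering_fields`, the square grid layout, and the `overlap` factor are all illustrative assumptions; the paper does not specify the exact spacing or radius used.

```python
import math
from dataclasses import dataclass


@dataclass
class Field:
    """One circular receptive field (hypothetical structure, for illustration)."""
    cx: float                 # centre x in the sensory space
    cy: float                 # centre y in the sensory space
    radius: float             # radius of the circular receptive area
    excitation: float = 0.0   # short-term stimulus memory
    visits: int = 0


def build_map(width, height, spacing, overlap=1.5):
    """Lay circular fields on a regular grid.

    The radius is overlap * spacing / 2, so any overlap > 1 makes
    neighbouring fields overlap (assumed layout, not the paper's values).
    """
    radius = overlap * spacing / 2.0
    nx = int(width // spacing) + 1
    ny = int(height // spacing) + 1
    return [Field(i * spacing, j * spacing, radius)
            for j in range(ny) for i in range(nx)]


def covering_fields(fields, x, y):
    """Return every field whose circular area contains the point (x, y)."""
    return [f for f in fields if math.hypot(f.cx - x, f.cy - y) <= f.radius]
```

Because the fields overlap, a single stimulus location generally falls inside more than one field, which is one way a stimulus can be shared between neighbouring map elements.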

Every field,

The stimulus values held in a map's fields are effectively a form of short-term memory. If a stimulus is sufficiently salient then the associated fields are excited. Repeated stimulations are reduced by a habituation function (Stanley, 1976) that recovers when stimulation ceases (Meng and Lee, 2005). Equation 1 gives the habituation model which describes how excitation,

Let

Also a very slow decay function causes all excitation levels to fall over time. By this means, those fields with the highest excitation levels are those that have most recently experienced unexpected change. The immediate neighbours of stimulated fields also receive a proportionate level of excitation.
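As a rough illustration of habituation with recovery, the sketch below uses the first-order form commonly attributed to Stanley's model, tau * dy/dt = alpha * (y0 - y) - S(t), integrated with a simple Euler step. This is an assumption: the exact form and parameter values of Equation 1 are not recoverable from the text here, and the names `habituation_step` and `run`, as well as the default constants, are hypothetical.

```python
def habituation_step(y, stimulus, y0=1.0, tau=5.0, alpha=1.05, dt=1.0):
    """One Euler step of tau * dy/dt = alpha * (y0 - y) - stimulus.

    y is the current response strength, y0 its resting value.
    Assumed form and constants, for illustration only.
    """
    dy = (alpha * (y0 - y) - stimulus) / tau
    return max(0.0, y + dt * dy)


def run(stimuli, y0=1.0, **params):
    """Apply a sequence of stimulus values and return the response trace."""
    y = y0
    trace = []
    for s in stimuli:
        y = habituation_step(y, s, y0=y0, **params)
        trace.append(y)
    return trace


# Ten steps of repeated stimulation followed by ten steps of rest.
trace = run([1.0] * 10 + [0.0] * 10)
```

In this sketch the response falls while the stimulus is repeated and climbs back towards `y0` once stimulation stops, which is the qualitative behaviour the text describes.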

The above variables are local to individual fields, but some important global variables can be obtained by simple summation over the map of various field properties.

We assume that basic uniform map structures are produced by prior growth processes but they are not pre-wired for any spatial system. Our arm system has to learn the correlations between its sensory and motor signals and the mapping structure is the mechanism that supports this. We use two variables,

The software implemented for the learning system is based on a set of six modules which operate consecutively. The modules are:

However, there is also a probability of a purely random selection of motor values which increases in inverse proportion to the global excitation level. The probability of a random action is given by

Two special regions of local space form part of the system structure. We assume that the arm starts from a “rest position” (equivalent to arm being in the lateral position) and the result of driving the motors ‘full on’ (

The rest area consists of a predesignated group of fields as shown in figure 2 and, in order to create a reflexive homing behaviour, these fields are initially all set to a high excitation value. The decay and other excitation functions will eventually cause the homing effect to be reduced and allow new behaviours to become possible.

Constraint lifting and reflexes

Human cognitive development is characterized by progression through distinct stages of competence, each stage building on accumulated experience from the level before. This can be achieved by lifting constraints (removing “scaffold”) when high competence at a level has been reached (Rutkowska, 1994). Any constraint on sensing or action effectively reduces the complexity of the inputs and/or action, thus reducing the task space and providing a scaffold which shapes learning (Bruner, 1990; Rutkowska, 1994). Such constraints have been observed or postulated in the form of sensory restrictions, environmental or anatomical limitations, and internal or computational limits (Hendriks-Jansen, 1996).

We have several possible constraints available in our system: the availability of contact sensing, the resolution of the proprioception sense, and the parameters of the motor system. Of course, another constraint could be not having a visual sense but this very early stage of infant growth does not rely on vision (Piek and Carman, 1994). Transitions must be related to internal global states, not local events, and we use global state indicators to lift constraints in two ways: finer resolution sensory maps are used when global familiarity is high, and the degree of motor spontaneity increases with very low global excitation.

Novelty is the motivational driver for our system and the motor system attempts to repeat actions that cause stimulation. But without an initial stimulus there would be no reason to act and hence we provide a basic “reflex” to initiate the system when the total excitation levels are very low.
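The interplay between novelty-driven repetition, spontaneous moves and the initiating reflex can be sketched as follows. The form `k / (k + E)` for the probability of a random action is only one bounded choice that grows as global excitation `E` falls; it is an assumption, as are the names `choose_action` and `reflex_threshold` and the default constants.

```python
import random


def global_excitation(excitations):
    """Global state variable: simple summation of field excitation over the map."""
    return sum(excitations)


def random_action_probability(total_excitation, k=1.0):
    """Probability of a spontaneous move; rises as global excitation falls.

    k / (k + E) is an assumed, illustrative form that stays within [0, 1].
    """
    return k / (k + max(0.0, total_excitation))


def choose_action(excitations, rng, k=1.0, reflex_threshold=1e-3):
    """Return (kind, field_index) for the next motor act."""
    total = global_excitation(excitations)
    if total < reflex_threshold:
        # Nothing is exciting at all: fall back on the built-in reflex.
        return ("reflex", None)
    if rng.random() < random_action_probability(total, k):
        # Low attention: make a spontaneous move with random motor values.
        return ("spontaneous", None)
    # Otherwise repeat the action leading to the most excited field.
    best = max(range(len(excitations)), key=excitations.__getitem__)
    return ("repeat", best)


print(choose_action([0.1, 5.0, 0.2], random.Random(0)))
```

Passing the random source in as `rng` keeps the sketch reproducible and makes the two global thresholds easy to vary independently.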

Experiments and results

Given the single modality arm described above we can now logically examine all the experimental parameters that we may vary and experiment with relevant combinations. There are five areas to be considered: environmental structure, sensing schedule, proprioception encoding, map field sizes, and attention/excitation parameters.

As the hand contact sensor is binary valued there is little scope for any environmental scaffolding to occur through different object regimes: objects are either present or not. However, the contact sensor can be turned off, in which case a contact event does not interrupt movement and some objects may be moved or even pushed out of the environment. This is an internal constraint and so we should investigate active/inactive contact sensing.

Regarding proprioception, we have four candidate encoding schemes (Section 5.1) and can arrange that the signals

The effects of different field sizes need to be examined. We achieved this by creating three maps, each with fields of different density, and running the learning system on all three simultaneously. Each map had a different field size: small, medium and large, see figure 5, and the

Finally we need to experiment on the possible excitation schedules for field stimulation. In the present system this consists of the habituation time constants.

The first trials used no contact sensing, and objects on the table were either ignored or pushed out of range. Figure 3 illustrates the behaviour as traces of movements. As the stimulation levels of the body area fall due to the habituation function, spontaneous motor signals are introduced, which produce hand sweeps to points on the extreme boundary. With contact sensing active, figure 4 shows intended rest/body-area moves being interrupted by contact with an object on the path, thus becoming rest/object moves.

Figure 3: Arm movements with no contact sensing. Initial repetitive moves between the rest and body areas (lower right and upper left respectively) gradually changed to spontaneous moves that explored the boundaries of the motor space.

Figure 4: Arm movements with active contact sensing. An object (near the centre of the diagram) caused sensory interrupts which excited the central fields and caused repeated rest/object moves.

These results are further illustrated in figures 5 and 6, which show the field maps produced by each of the above cases respectively. The numbers of fields used to cover the same space were 80, 324 and 1369 in the large, medium and small sized field maps respectively. We can observe the difference between motor noise and random or spontaneous acts in these diagrams. Motor noise is a very small disturbance in the motor parameters (and reduces with excitation levels) which, in fact, is beneficial as it causes close neighbours of the excited fields to be visited and hence explored. Spontaneous movements originate from a different source and are probabilistic in occurrence and extent. These are driven by lack of attention, i.e. when there are no excited fields of interest, and serve to explore completely different regions, e.g. the boundaries in figures 5 and 6.

Figure 5: Three-scale mapping with contact sensor off. The highlighted fields indicate the fields visited.

Figure 6: Three-scale mapping with contact sensor on. The highlighted fields indicate the fields visited.

From these figures we see that the arm moved between body and rest areas first, but as these became less stimulated so random moves were introduced and fields on the boundary of the local reach space were explored. Then, when contact sensing was allowed (a constraint lifted), internal fields and their neighbours were stimulated by object contact. Figure 7 shows map growth in terms of four “types” of fields: the rest area, the body area, the boundary, and the internal area.

The observed behaviour is seen as a series of stages: first a “blind groping” mainly directed at the body area, then more groping but at the boundary, accompanied by unaware pushing of objects, and then more directed and repeated “touching” of detected objects, as shown in figure 7. If more than one object is detected then attention will shift to each object in turn, as they become habituated, so that a roughly cyclic behaviour pattern is produced, similar to eye scanpaths. All these behaviours, including motor babbling and the rather ballistic approach to motor action, are widely reported in young infants (Piek and Carman, 1994).
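The roughly cyclic shifting of attention between habituating objects can be reproduced in a toy simulation. The sketch below assumes a first-order habituation update of the Stanley type with illustrative constants; `attention_sequence` and all parameter values are hypothetical and are not taken from the experiments.

```python
def attention_sequence(n_objects, steps, tau=5.0, alpha=1.05, y0=1.0):
    """Attend to the most responsive object at each step.

    The attended object habituates (stimulus = 1) while the others
    recover (stimulus = 0). Assumed dynamics, for illustration only.
    """
    # Small offsets break the initial tie between identical objects.
    y = [y0 - 0.01 * i for i in range(n_objects)]
    sequence = []
    for _ in range(steps):
        focus = max(range(n_objects), key=lambda i: y[i])
        sequence.append(focus)
        for i in range(n_objects):
            stimulus = 1.0 if i == focus else 0.0
            y[i] = max(0.0, y[i] + (alpha * (y0 - y[i]) - stimulus) / tau)
    return sequence


print(attention_sequence(3, 9))
```

With these particular constants the focus moves to a different object at every step; the point of the sketch is only that habituation of the attended object and recovery of the others are enough to produce a repeating visiting order.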

Figure 7: Growth of the S-M map. Only initial field visits are counted; repeated visits are ignored. The figure shows the number of fields visited in the rest area, body area, boundary and the internal area. At the start, only the numbers of fields in the rest and body areas grow. Next, more boundary fields are visited, indicating spontaneous movements. When the robot senses an object the stimulation is seen in an increase in internal field growth. Eventually the curve of internal field growth reaches a plateau as the robot gets familiar with the objects and spontaneous movements are again deployed to explore more areas, and hence the number of boundary fields grows.

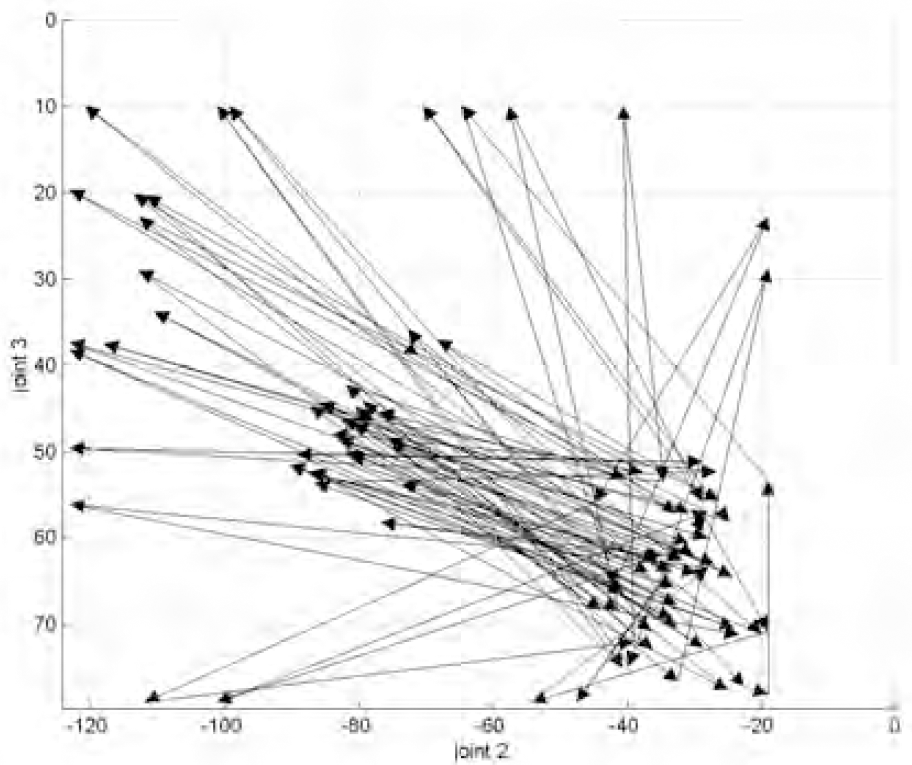

Regarding proprioception, we did not observe any clear advantage in any one encoding scheme. Perhaps this could be expected in this experiment, as they are all continuous and two-dimensional, being related by systematic distortion or warping. We recognize that when operating in the more restricted zones of the non-linear encodings there may be difficulties (see the operating space in figure 8), but these are at the extremities where mobility is restricted and humans actually avoid these areas (Bernstein, 1967). It is likely that the encoding scheme will matter much more when hand/eye coordination is to be learned, and this may account for the presence of two or more encodings in animals (Bosco et al., 2000).

Figure 8: Nonlinear relationship between joint angle and Cartesian encoding schemes for the robot arm. The mapping distortion is not uniform across the workspace.

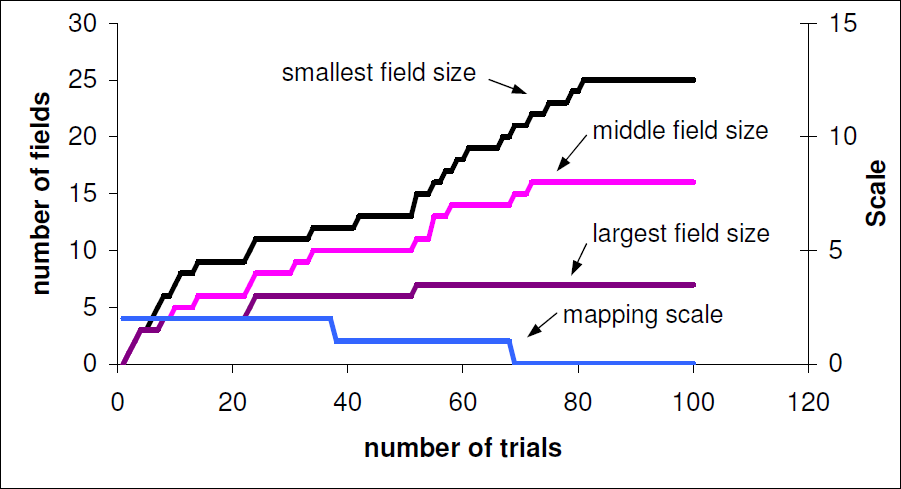
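The non-uniform warping between joint-angle and Cartesian encodings follows from the standard kinematics of a planar two-link arm, sketched below. The unit link lengths and the function names are assumptions for illustration and do not reflect the dimensions of the laboratory arm.

```python
import math


def forward_kinematics(theta1, theta2, l1=1.0, l2=1.0):
    """Joint angles in radians -> Cartesian end-point of a planar two-link arm.

    theta1 is the shoulder angle and theta2 the elbow angle measured
    relative to the upper arm; l1 and l2 are assumed link lengths.
    """
    x = l1 * math.cos(theta1) + l2 * math.cos(theta1 + theta2)
    y = l1 * math.sin(theta1) + l2 * math.sin(theta1 + theta2)
    return x, y


def area_distortion(theta2, l1=1.0, l2=1.0):
    """Local area scaling from joint space to Cartesian space.

    This is |det J| = l1 * l2 * |sin(theta2)| for the map above. It shrinks
    to zero as the arm becomes fully extended or folded, so equal steps in
    joint angle cover very unequal Cartesian areas across the workspace.
    """
    return l1 * l2 * abs(math.sin(theta2))


for elbow_degrees in (10, 45, 90, 170):
    print(elbow_degrees, round(area_distortion(math.radians(elbow_degrees)), 3))
```

The distortion is smallest near the extremes of elbow travel, which corresponds to the restricted zones at the extremities of the operating space mentioned in the text.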

From the field size experiments we see a trade-off between speed of exploration and accuracy. When larger fields are used they cover more sensory space and thus the mapping is learned much faster. If smaller fields are used then movements to reach these locations are more likely to be accurate but more exploration is needed to map out the fields. Figure 9 shows how the system started on a coarse map and progressively transitioned to a finer scale map as the global familiarity variable reached a steady plateau. It is interesting that the receptive field size of visual neurons in infants is reported to decrease with development and thus lead to more selective responses (Westermann and Mareschal, 2004).

Figure 9: Transitions between three maps of different scale. Only initial field visits are counted; repeated visits are ignored. The right axis indicates the active map field size; there are three levels in this paper: 0 (small), 1 (medium) and 2 (large). The system progresses from coarse mapping to fine mapping, i.e. from scale 2 to 0.
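The coarse-to-fine transition can be sketched as a plateau test on the count of newly visited fields. The window length, the tolerance and the names `has_plateaued` and `next_scale` are illustrative assumptions; the scale labels 2 (large), 1 (medium) and 0 (small) follow the figure.

```python
def has_plateaued(history, window=5, tolerance=0):
    """True if the visited-field count grew by at most `tolerance`
    over the last `window` steps (assumed plateau criterion)."""
    if len(history) < window + 1:
        return False
    return history[-1] - history[-1 - window] <= tolerance


def next_scale(scale, history, window=5):
    """Move to the next finer map once familiarity has plateaued.

    Scale 2 is the coarsest map and 0 the finest; the finest scale is kept.
    """
    if scale > 0 and has_plateaued(history, window):
        return scale - 1
    return scale


visited = [1, 4, 7, 9, 10, 10, 10, 10, 10, 10]
print(next_scale(2, visited))
```

In a fuller implementation the history would presumably be restarted after each transition, so that the plateau test applies to growth on the newly active map rather than the previous one.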

Regarding the excitation parameters, we found that even quite large variations in these gave no significant advantage. The main effects are to vary the persistence of actions, i.e. the number of repetitions performed on a stimulus, and to alter the order in which attention is given to different objects. Neither of these had much effect on map generation for the single limb case. For more details of the excitation and habituation model used see (Meng and Lee, 2005).

There have been many models of sensory-motor coordination, frequently using connectionist architectures (Kalaska, 1995). For example, Baraduc et al. designed a neural architecture that computes motor commands from arm positions and desired directions (Baraduc et al., 2001). Other models use basis functions (Pouget and Snyder, 2000) but all these involve weight training schedules that require in the region of 20,000 iterations (Baraduc et al., 2001). They also tend to use very large numbers of neuronal elements. While “motor babbling” is seen in the behavioural output of several systems, very few are inspired by the psychological literature on development and even fewer deal with transitions between more than one behavioural skill pattern. As reviewers report: “their behavioural capacity is usually limited” (Kalaska, 1995). Even one of the most well known “developmental” robotics projects, the COG project at MIT (Brooks et al., 1999), has delivered very little in terms of developmental mechanisms. While many such studies in sensory-motor learning have produced methods for generating particular desired behaviours, little real progress has been made on explicit modelling of developmental progression.

The system described here records sensory-motor schemas in topological mappings of sensory-motor events, pays attention to novel or recent stimuli, repeats stimulating behaviour, and changes behaviour as global parameters alter. We suggest that plateaus in experience correspond to competence being achieved at a given level. The behaviour observed from the experiments displays initially spontaneous movements of the limbs, followed by more “exploratory” movements, and then directed action towards contact with objects. Our approach has been supported by the findings cited and reports such as (Gomez, 2004) who show that starting with low resolution in sensors and motor systems and then increasing resolution leads to more effective learning. The results were produced with uniform map structures but we have also experimented with non-regular fields that grow and are created on demand, see (Meng and Lee, 2006).

From the early experience of motor acts leading to spatial locations, the S-M maps support the generation of motor commands to achieve an action (i.e. move from a given start field to a destination field). We note that they could also be used to support “higher-level” cognitive functions by allowing rehearsal of motor acts, without actual performance, and thus lead to the processing of patterns of sensory-motor behaviour that are “perceived”, “imagined” or “desired”, rather than actual.

The reported work is part of a larger program. For the eye system we have already achieved a similar mapping between the image space and the motor drive for the camera. The next stage will be to allow cross-modal mappings to develop between the eye and hand mapping frames. This will use Hebbian cross-links between the associated map fields and should allow unskilled reaching to seen objects to develop. This will produce a further rich range of attentional, action selection, and sensing issues to deal with but the foundations laid by this work will provide a logical framework.
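As a pointer to how Hebbian cross-links between two maps might work, the sketch below strengthens the link between any eye-map field and hand-map field that are active together. It is a generic Hebbian outer-product update, not the planned implementation; the function names, the learning rate and the activity vectors are hypothetical.

```python
def hebbian_update(weights, eye_activity, hand_activity, rate=0.1):
    """Strengthen links between co-active eye-map and hand-map fields.

    weights[i][j] links eye field i to hand field j; each link grows by
    rate * eye_activity[i] * hand_activity[j] (generic Hebbian rule).
    """
    for i, a in enumerate(eye_activity):
        for j, b in enumerate(hand_activity):
            weights[i][j] += rate * a * b
    return weights


def strongest_hand_field(weights, eye_index):
    """Hand field most strongly linked to the given eye field."""
    row = weights[eye_index]
    return max(range(len(row)), key=row.__getitem__)


# Two eye fields, three hand fields: the hand is seen at eye field 0
# while it occupies hand field 2.
links = [[0.0] * 3 for _ in range(2)]
hebbian_update(links, [1.0, 0.0], [0.0, 0.0, 1.0])
print(strongest_hand_field(links, 0))
```

Looking up the strongest link for a seen location is one simple way such a structure could suggest a hand-map target for reaching.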

Acknowledgments

We are very grateful for the support of EPSRC through grant GR/R69679/01 and for laboratory facilities provided by the Science Research Investment Fund.