Abstract

Epi is a humanoid robot developed by the Lund University Cognitive Science Robotics Group. It was designed to be used in experiments in developmental robotics and has proportions that give a childlike impression while still being decidedly robotic. The robot head has two degrees of freedom in the neck and each eye can independently move laterally. There is a camera in each eye to make stereovision possible. The arms are designed to resemble those of a human. Each arm has five degrees of freedom: three in the shoulder, one in the elbow and one in the wrist. The hands have four movable fingers and a stationary thumb. A force distribution mechanism inside the hand connects a single servo to the movable fingers and makes sure the hand closes around an object regardless of its shape. The rigid parts of the hands are 3D printed in PLA and HIPS, while the flexible parts, including the joints and the tendons, are made from polyurethane rubber. The control system for Epi is based on neurophysiological data and is implemented using the Ikaros system. Most of the sensory and motor processing is done at 40 Hz to allow smooth movements. The irises of the eyes can change colour and the pupils can dilate and contract. There is also a grid of LEDs that resembles a mouth and can be animated by changing colour and intensity.

Introduction

Central problems in developmental, or epigenetic, robotics include motor development, including eye–hand coordination, and the development of social skills. To facilitate the study of such abilities, we have developed an open humanoid research platform called Epi. It has several features that set it apart from other current systems, including animated physical pupils in the eyes, a very robust hand design, and a high resolution binocular vision system. Furthermore, by relying on readily available electronic components and 3D-printed parts, the robot platform is relatively inexpensive while still being versatile enough to be used in a wide range of research.

Epi can be compared to several alternative humanoid robots that are used for research. For example, iCub is a platform funded by the European Commission. 1,2 The robot has an appearance akin to a young child and is about a meter tall. In all, the iCub robot has 53 degrees of freedom, where 6 are in the head and neck, 7 in each arm, 9 in each hand and 6 in each of the legs. The waist has three degrees of freedom. 1 Sensors for the system include binocular vision, touch in the fingertips and audition. Inertial sensors are also present in the head, as are sensors measuring torque on the arms. 2 Locally, sensor and motor signals are handled by DSP chips, and YARP 3 is used as middleware to supply inter-process communication between modules that may run on distributed processors. Creating new behaviour for iCub implies implementing YARP processes and connecting these processes to a central controller. 2

NimbRo-OP is a platform designed primarily to play soccer and compete in the RoboCup competition. 4 Hence, it is focused on bipedal locomotion and being able to manipulate a ball with its feet. The system has 20 degrees of freedom; 6 per leg, 3 per arm and 2 in the neck. The robot has no actuators for gripping. It is 95 cm tall and weighs 6.6 kg. Sensors include accelerometers, gyroscopes and a wide field camera for vision. Software for the NimbRo-OP hardware platform is based on the DARwIn-OP software framework, 5 but adapted to the particular configuration of the NimbRo-OP. 4 This software supports basic behaviours and the authors plan to implement new control software based on ROS, 6 to include higher level cognitive functions. 4

Poppy is an open-source robotics platform. 7 An assembled system is 85 cm tall and weighs 3.5 kg. The system is based on 3D-printed structural components and has 25 degrees of freedom, where 5 are in the spine. This configuration is somewhat unusual but potentially allows the Poppy system to take advantage of weight shifting to maintain balance, as well as being more expressive in social interaction with humans. To control the robot hardware, a Python library called PyPot has been developed. 7 This library allows interaction with motors and sensors by means of scripting. In addition, the library can interact with robot simulator software for testing of action sequences.

There are also several commercial platforms that are commonly used in research. Nao is a commercial platform available from Aldebaran Robotics. The robot is 0.57 m tall and weighs 4.5 kg. It has 25 degrees of freedom, where 11 are for the legs and pelvis and 14 for the trunk, arms and head. 8 Nao’s hip differs somewhat from other legged designs in that it consists of coupled joints with rotation axes tilted at 45° towards the body. This prevents yaw rotation of the trunk while standing but does not interfere with walking. The Nao robot runs a framework called NaoQi, which gives access to the features of the system, such as controlling the actuators and reading sensor signals. 9 This framework can execute commands in parallel and has interfaces to popular programming languages like C++ and Python. In addition to NaoQi, the Nao system comes with dedicated software for designing motion patterns, called Choregraphe. 10 This software runs on a separate computer and interfaces with the NaoQi framework but provides a graphical interface to facilitate implementation of behaviours. Choregraphe comes with a library of high-level behaviours like walking, dancing and getting up, but also more low-level ones that can be composed to form complex behavioural sequences. Composition is done by connecting modules together, in a manner known as flow programming. 11 Motion sequences are also shown as curves that can be edited by manipulating inflexion points.

A larger humanoid robot, Pepper, was introduced by Aldebaran in 2014. 12 It measures 121 cm and weighs 28 kg. This is a wheeled platform with 20 degrees of freedom and sensors for vision and touch. To sense the environment, the robot also has ultrasonic, laser and bump sensors, as well as internal gyroscopes for proprioception. Humans can interact with Pepper through a touchscreen on its chest, as well as through speech. Pepper can be programmed via an SDK that enables scripting of movement sequences, as well as programming of applications in popular programming languages. 12

Although all these robot systems have their merits, they all have limitations that restrict their use in certain scenarios. For example, only iCub has stereovision, which is necessary to study eye–hand coordination.

In designing Epi, we strove for a robot with a simple design but sufficient abilities for the type of experiments and robot control model we aim to build. We also wanted the robot to give an honest impression of its capabilities. To this end, its physical appearance is intended to convey childlike abilities. The head of Epi has a simple geometrical shape displaying the proportions of a child, with large eyes. This design is partly a result of the larger cameras initially used in the eyes and partly an attempt to give the robot a cute and friendly appearance.

Several of the robots have legs and very basic walking abilities. At the present time, we chose to prioritize functionality that enables exploration of cognitive development over ambulation.

Epi overview

We have developed two versions of the Epi robot: a torso version with arms and a smaller head version. The torso humanoid is 101 cm tall and weighs 12 kg. All mechanical parts except for the servo motors are 3D printed. The current version is printed in PLA, but earlier models have used ABS or HIPS. This section gives an overview of the Epi platform and its different components.

Torso and arms

The torso is 19 cm wide and 15 cm thick from front to back. The whole torso can rotate around its central axis. This allows the whole body to turn sideways, and the centre of rotation is placed directly under the rotation axis of the head.

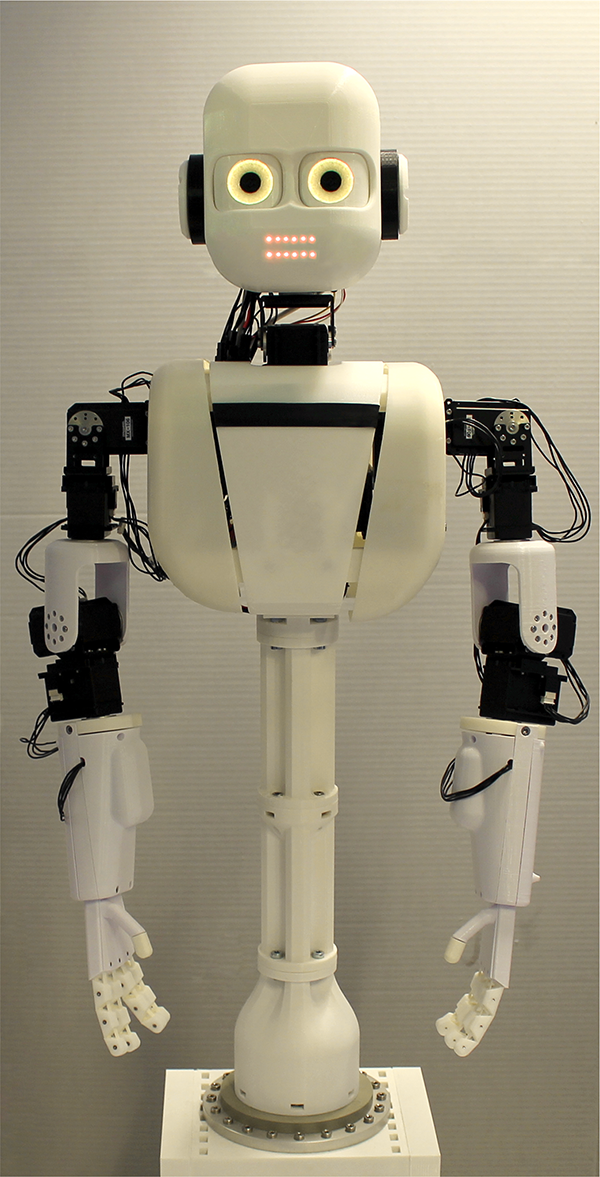

The arms are designed to resemble those of a young human (Figure 1). The extended arm reaches 35 cm from the torso.

Figure 1. Overview of the Epi robot.

Each arm has five degrees of freedom and the joints consist of Dynamixel MX-106 servos from Robotis. These servos are relatively fast and strong, with a stall torque of 8.4 Nm and a maximum speed of 270°/s at 12 V. They also have the advantage that they are linked on a single data line, so it is not necessary to draw individual wires to each servo (see Figure 7).

There are three servos in the shoulder that are arranged so they effectively make up a ball joint. Although the servos are displaced, their axes of rotation all meet in a single point. One advantage of this arrangement is that it simplifies the kinematic calculations.
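The simplification can be illustrated with a minimal sketch. Because the three axes intersect in one point, the pose of the upper arm is a pure product of three rotations with no translation offsets between them. The axis order, the zero pose (arm hanging straight down) and the upper-arm length of 0.17 m are assumptions made for illustration only; they are not taken from the Epi design.

```python
import math

def rot_x(a):
    c, s = math.cos(a), math.sin(a)
    return [[1, 0, 0], [0, c, -s], [0, s, c]]

def rot_y(a):
    c, s = math.cos(a), math.sin(a)
    return [[c, 0, s], [0, 1, 0], [-s, 0, c]]

def rot_z(a):
    c, s = math.cos(a), math.sin(a)
    return [[c, -s, 0], [s, c, 0], [0, 0, 1]]

def matmul(a, b):
    # Product of two 3x3 matrices.
    return [[sum(a[i][k] * b[k][j] for k in range(3)) for j in range(3)]
            for i in range(3)]

def apply(r, v):
    # Rotate the 3-vector v by the matrix r.
    return tuple(sum(r[i][k] * v[k] for k in range(3)) for i in range(3))

def elbow_position(yaw, pitch, roll, upper_arm=0.17):
    """Elbow position relative to the shoulder centre (angles in radians).

    All three rotations share one centre, so no link offsets appear
    between them. Axis order and upper_arm length are hypothetical.
    """
    r = matmul(rot_z(yaw), matmul(rot_y(pitch), rot_x(roll)))
    return apply(r, (0.0, 0.0, -upper_arm))  # zero pose: arm hanging down
```

Whatever the three angles are, the elbow stays on a sphere of radius `upper_arm` around the shoulder centre, which is the defining property of a ball joint.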

The arms also have one servo in the elbow and one in the wrist. This is sufficient to move the hands to any position in space but only allows the hand one degree of freedom in the rotation around the wrist.

The arms and hands are able to lift objects that weigh up to 1 kg, but for sustained manipulation, a weight around 100 g or less is more suitable.
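A rough static estimate shows why the two payload figures are plausible. The sketch below assumes that the object is held at the full 35 cm reach with the arm horizontal, treats that reach as the lever arm, and ignores the weight of the arm itself; all three are simplifications, not measurements.

```python
G = 9.81             # gravitational acceleration, m/s^2
STALL_TORQUE = 8.4   # MX-106 stall torque, Nm
REACH = 0.35         # extended arm reach, m, used here as the lever arm

def holding_torque(mass_kg, lever_m=REACH):
    """Static torque needed to hold a mass at the end of a horizontal lever."""
    return mass_kg * G * lever_m

for mass in (1.0, 0.1):
    torque = holding_torque(mass)
    share = 100 * torque / STALL_TORQUE
    print(f"{mass:.1f} kg: {torque:.2f} Nm ({share:.0f}% of stall torque)")
```

Under these assumptions a 1 kg object requires about 3.4 Nm, roughly 41% of the stall torque before the arm's own weight is counted, whereas a 100 g object requires about a tenth of that.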

Hands

Each hand has a single servo that controls four of the fingers while the thumb is stationary. There are three main features of the hand.

The first is that the fingers are mounted at a slight angle to each other so that the fingertips all meet in one point as the hand is closed. This produces the kind of grasp movement seen in infants and is useful for picking up objects. 13

Another feature is that there are no wires or complicated multipart joints. Instead, the joints and tendons are 3D printed as a single piece of polyurethane rubber. The actual fingers consist of small plastic parts that are screwed onto the rubber core (Figures 2 and 3). This design can withstand very strong forces and it is not possible to manually break the tendons by dragging or tearing. The joints continue into the tendon, which then seamlessly connects to the tendon of the next finger. The joints and tendons for two fingers thus constitute a single piece of rubber (Figure 3). Because it can be hard to 3D-print complex shapes in elastic materials, the joint–tendon component is flat on all sides and the shape of the hand is made up of the additional plastic parts.

Figure 2. Top: The hand of Epi holding two different objects. The grip is automatically shaped after the object. Bottom: The internals of the hand with the force distribution mechanism using a whippletree in the palm visible.

Figure 3. The hand mechanics of Epi. The four fingers are controlled through a force distribution whippletree mechanism that allows the hand to automatically fold the fingers around an object. A single servo is responsible for control of the hand. See text for further explanation.

Since the elasticity of 3D-printed rubber is usually not very predictable for consumer 3D printers, we have designed several versions of the rubber parts of the fingers. They differ in thickness and produce joints of different stiffness.

The third feature is that the tendons are connected to the servo through a force distribution device inside the palm of the hand. This mechanism uses a whippletree design. 14 This allows the fingers to automatically grasp around an uneven object. When one finger comes into contact with an object, the force generated by the servo will be distributed to the other fingers (Figure 2). This design has been used previously in hand prostheses. 15–17
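The effect of the whippletree can be sketched with an idealized model. It assumes equal-arm balance bars, so that the servo travel equals the mean of the four finger travels, and it assumes that a finger simply stops when it touches the object. The function name, the units and the contact limits are hypothetical and serve only to illustrate the principle.

```python
def finger_travel(servo_travel, contact_limits):
    """Distribute servo travel over fingers through an ideal whippletree.

    servo_travel   -- tendon travel produced by the servo (arbitrary units)
    contact_limits -- travel at which each finger touches the object
    Free fingers close together; a blocked finger stops and the remaining
    travel is shared among the fingers that are still free.
    """
    n = len(contact_limits)
    budget = servo_travel * n          # summed travel of all fingers
    travel = [0.0] * n
    free = sorted(range(n), key=lambda i: contact_limits[i])
    level = 0.0                        # common travel of the free fingers
    while free:
        first = free[0]                # next finger to make contact
        step = contact_limits[first] - level
        if step * len(free) >= budget:
            # The servo stops before the next contact is reached.
            level += budget / len(free)
            for j in free:
                travel[j] = level
            return travel
        budget -= step * len(free)
        level = contact_limits[first]
        travel[first] = level          # this finger is now blocked
        free.pop(0)
    return travel                      # every finger rests on the object
```

For example, `finger_travel(2.0, [1.0, 5.0, 5.0, 5.0])` leaves the first finger at its contact point while the other three close further than they would in an empty hand, which is how the grip shapes itself around an uneven object.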

There is no sensing within the fingers, but a sense of touch can be obtained by measuring the feedback from the servo that controls the fingers. Preliminary results show that it is possible to distinguish between soft and hard materials, as well as to determine whether the hand is holding an object or not. Figure 4 shows the feedback signals from the hand servo during a grasping movement. The feedback is different when the hand is grasping an object compared to when the movement is made without an object. There is some overlap in the signals because the data included some very soft materials, such as a piece of cotton, which is hard to distinguish from not holding anything. However, the recorded signals differed significantly when the object was dropped (

Figure 4. Sensing using servo feedback. Top: When an object is dropped, the measured signal is higher during the grasp phase. Data from grasping eight different objects six times each. Bottom: Comparison of feedback for a hard wooden cube and a soft crocheted object. The difference in the measured value reflects the difference in hardness.

Head

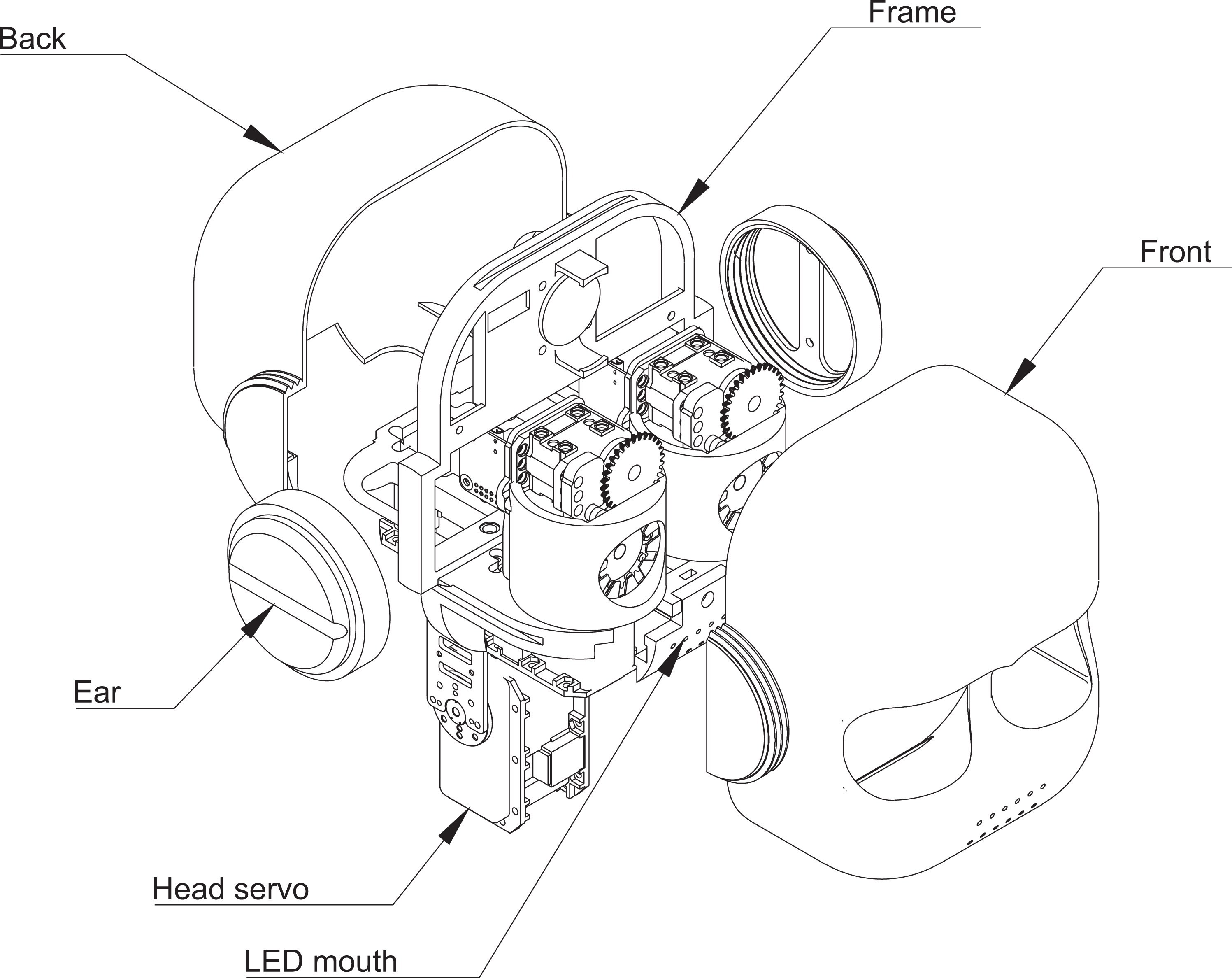

Figure 5 shows an overview of the components in the head. There are six servos that control different parts of the head. The main motion of the head is controlled by two Dynamixel MX-106 servos that allow the head to pan and tilt. There is also one Dynamixel RX-28 servo for each eye that allows it to move sideways at high speed. These servos are rated at a maximum of 475°/s, which approaches the speed of human eye saccades. The eyes cannot move up and down. This prevents vertical misalignment between the eyes but makes it necessary for the robot to tilt its head to look up or down. Finally, each eye has an animated pupil that is controlled by a Dynamixel XL-320 servo.

Figure 5. The head of Epi. The front and back of the head are joined together using the two threaded ear pieces. The outer shell of the head encloses the mechanical parts inside mounted on a frame that also houses most of the electronics within the head. The figure shows the location of the eyes as well as the LED equipped mouth display. A more detailed view of the eyes can be found in Figure 6.

Figure 6. The eyes of Epi. One of the main features of Epi is its eyes with animated mechanical irises and high resolution cameras. See text for explanation.

The outer part of the head consists of two large 3D-printed components, the front and the back. These have been printed in ABS, PLA and HIPS plastic, depending on the printer used. The frontal part has holes for the eyes as well as a mouth made up of 12 small holes. The outer parts enclose the inner frame of the head and are joined together using threaded ear pieces at each side of the head. This makes it possible to quickly open the head if needed.

The inside of the head consists of a 3D-printed frame that holds all mechanical and electronic components (see also Figure 7). These include an IMU and a small speaker that can play audio. Behind the mouth, there are two LED strips with individually addressable RGB LEDs that make it possible to animate the mouth lights. This is used when the robot is ‘talking’ or producing other noises to indicate the origin of the sound. These LEDs are controlled by a FadeCandy card that produces smooth animations by interpolating between visual key frames. The most important parts of the head are the eyes, which are described below.

Figure 7. Electronics overview. Epi is controlled from an Apple Mac mini computer. Most of the communication with the hardware is through USB. See text for further explanation.

Eyes

Cameras

A Raspberry Pi Zero with a minimal version of the Camera Module V2 is mounted inside the inner body (Figure 6). The camera measures only 8.6 mm × 8.6 mm × 5.2 mm and is connected to the Raspberry Pi Zero’s CSI interface. The camera has a sensor resolution of 3280 × 2464 pixels and supports 1080p30 and 640 × 480p90. User space Video4Linux (UV4L) (https://www.linux-projects.org/uv4l/) is installed on each Raspberry Pi Zero. UV4L is a real-time streaming server optimized for IoT devices. UV4L grabs the images from the camera and uses hardware encoding to encode the images into the H.264 video format. The video stream is then available using the built-in streaming server. Each Raspberry Pi Zero is configured as a USB On-The-Go (OTG) Ethernet device, which allows the main computer to recognize the USB devices as Ethernet cards and listen to the video stream.

Iris and pupil

A unique feature of the eyes is the adjustable pupils. The pupils consist of a number of layers (Figure 6). First, the LED ring is mounted on the inner body of the eye. The LED ring has 12 RGB LEDs and is controlled by a FadeCandy card connected to the main computer. Each of the RGB diodes can be controlled separately. The next layer is an LED diffuser that smooths the LED light, helping to create an illusion of colour around the pupil of the eye. The next layer is the iris gear, which is connected to the servo via the servo gear. The servo is an XL-320 mounted on top of the inner body. Ten small blades are connected to the iris gear and the iris front and lie stacked on top of each other. By moving the servo, these blades adjust the pupil size of the eye, similar to an iris diaphragm in a camera.
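As an illustration of how the 12 LEDs of one ring could be set from software, the sketch below builds an Open Pixel Control (OPC) message, the protocol accepted by the FadeCandy server program. It assumes that an OPC server is listening on localhost port 7890 and that the ring occupies the first 12 pixels of channel 0; the article does not state how Epi addresses the card, and the function names are hypothetical.

```python
import socket
import struct

NUM_LEDS = 12  # RGB LEDs in one iris ring

def opc_packet(pixels, channel=0):
    """Build an OPC 'set pixel colours' message (command 0)."""
    data = bytes(component for rgb in pixels for component in rgb)
    header = struct.pack(">BBH", channel, 0, len(data))  # big-endian length
    return header + data

def iris_colour(rgb, intensity=1.0):
    """Same colour on every LED of the ring, scaled by intensity (0 to 1)."""
    scaled = tuple(max(0, min(255, round(c * intensity))) for c in rgb)
    return [scaled] * NUM_LEDS

def send(packet, host="localhost", port=7890):
    """Send one message to an OPC server (host and port are assumptions)."""
    with socket.create_connection((host, port)) as connection:
        connection.sendall(packet)

# Example (requires a running OPC server):
# send(opc_packet(iris_colour((0, 128, 254), intensity=0.5)))
```

Lowering `intensity` to zero for a few frames is one way to produce the kind of brief darkening of the iris that can stand in for a blink.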

Control system

Epi is controlled by an Apple Mac mini computer, which is small and comparatively powerful (see Figure 7). The computer communicates with most of the electronic and electromechanical components using USB. Eight different USB channels are used.

Since most of the Dynamixel servos in Epi use an RS-485 interface, the USB signals are converted before being sent to the servos together with a 12 V power source. One exception is the XL-320 servo used within the eye, for the pupil, that operates on 7.4 V. For this servo, the initial 12 V is converted using a DC/DC converter. The output from the 12 V power supply is also converted to the 5 V that the LEDs use. USB is also used to communicate with the two cameras.
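To make the servo communication concrete, the following sketch builds the byte sequence for a goal-position command in Dynamixel Protocol 1.0. That Epi uses Protocol 1.0 for the MX-106 servos is an assumption, since the article does not state the protocol version; the goal-position register address (30) and the 0 to 4095 position range follow the Robotis control table for that protocol. The XL-320 uses Protocol 2.0 and is not covered here.

```python
WRITE_DATA = 0x03       # Protocol 1.0 instruction: write to control table
GOAL_POSITION = 0x1E    # address 30, two bytes, little-endian

def checksum(body):
    """Protocol 1.0 checksum: inverted low byte of the sum of the body."""
    return (~sum(body)) & 0xFF

def write_goal_position(servo_id, position):
    """Return the packet that sets the goal position of one servo."""
    if not 0 <= position <= 4095:
        raise ValueError("position must be in 0..4095")
    params = [GOAL_POSITION, position & 0xFF, position >> 8]
    body = [servo_id, len(params) + 2, WRITE_DATA] + params
    return bytes([0xFF, 0xFF] + body + [checksum(body)])

def degrees_to_position(degrees):
    """Map 0..360 degrees onto the 12-bit position range."""
    return min(4095, round(degrees * 4096 / 360))
```

Because every servo on the shared data line has its own ID, a single serial connection can carry such packets to all of them, which is what makes the single-line wiring described above possible.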

A biologically based control architecture

Epi is controlled using the Ikaros system, which is an infrastructure for building biologically motivated models for robot control. 19 The system supports the development of complex system-level brain models that can run in real time in the robot.

The Ikaros system is built to be as platform independent as possible and uses standard C++ with a small set of external libraries. We have used Ikaros on a range of different hardware, from small single-board computers such as the Raspberry Pi to large computer clusters. Due to its highly threaded design, Ikaros is also well suited to take advantage of the massive parallelism of supercomputers or to run off-line simulation and deep neural network training on multi-GPU servers. Ikaros has recently been substantially updated with an interactive web-based editor, which lets the user create models and inspect the simulation with a minimum of programming skills.

Since the start in 2001, more than 100 persons have contributed, and over 100 scientific publications report on work that has used Ikaros for simulations or robot control. The code is continuously updated and can be found as part of the Ikaros project at GitHub (https://github.com/ikaros-project).

The Epi robot is an open system. The user has full freedom to implement any control architecture with or without Ikaros, but by default the robot runs a set of basic low-level reflexes motivated by the corresponding systems in the human brain. The details of this architecture are outside the scope of this article, but we highlight some of its features below.

Basic reflexes

The pupils of the eyes are controlled by two systems. The dilation of the pupil is controlled by a model of the nuclei in the sympathetic and parasympathetic peripheral nervous system. 20,21 Blinking is controlled by a model of the systems in the brain responsible for blinking in humans 22 and potentially allows for a more natural interaction. 23

There is also a set of basic bodily reflexes, among them 72 different scratch reflexes that target different parts of the body. A similar set of withdrawal reflexes is currently being implemented.

Population coding

All commands to the motors use population coding. 24 In addition to being biologically motivated, this has several advantages. 25 Firstly, it allows seamless blending and interpolation between alternative individual motor patterns, which at a higher level allows smooth transitions between robot configurations. Secondly, such smooth transitions are subjectively perceived by humans as more natural and less machine-like. Thirdly, more natural movements are more predictable to humans and hence safer. 26 Lastly, population-coded movements avoid undesirable jerky movements, which protects both the robot hardware and interacting humans from injury.
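The blending property can be shown with a minimal sketch of a population code. It assumes Gaussian tuning curves over a normalized joint range and centre-of-mass decoding; the number of units, the tuning width and the function names are illustrative choices and do not describe the Ikaros implementation.

```python
import math

def centres(n, lo=0.0, hi=1.0):
    """Preferred values of the n units, evenly spread over the range."""
    return [lo + (hi - lo) * i / (n - 1) for i in range(n)]

def encode(value, n=32, sigma=0.05, gain=1.0):
    """Represent a scalar as a bump of activity over n units."""
    return [gain * math.exp(-0.5 * ((c - value) / sigma) ** 2)
            for c in centres(n)]

def decode(code):
    """Read out the represented value as the centre of mass of the activity."""
    total = sum(code)
    if total == 0:
        return None  # no activity, nothing is represented
    return sum(c * a for c, a in zip(centres(len(code)), code)) / total

def blend(code_a, code_b, weight):
    """Mix two codes; weight 0 gives code_a and weight 1 gives code_b."""
    return [(1 - weight) * a + weight * b for a, b in zip(code_a, code_b)]
```

Sweeping `weight` from 0 to 1 moves the decoded value continuously from one target to the other, so a change of motor pattern never produces a step in the commanded position.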

Arbiters

When several subsystems are able to influence the same servo motor, the choice between competing signals is made by arbiter modules in Ikaros. The arbiters take advantage of the property of population codes to represent both a value, such as the position of a joint, and an ‘urgency’. The arbiter receives several of the codes and either selects the one with the highest ‘urgency’ or, depending on its settings, blends the different inputs. This allows signals from one part of the control architecture to blend into others to produce a combined behaviour.

The arbiters have a range of parameters that allow them to implement different action selection mechanisms. 27–29 These methods range from simple winner-take-all to reinforcement and emotion-based selection, including methods to produce displacement activities during conflicts.
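The two basic modes described above, selection and blending, can be sketched as follows. The sketch assumes that the ‘urgency’ of a population code is its peak activity, which is one possible reading of the text rather than the documented Ikaros behaviour, and the function names are hypothetical.

```python
def urgency(code):
    """Urgency of a population code, taken here as its peak activity."""
    return max(code)

def arbitrate(codes, mode="winner"):
    """Combine competing population codes aimed at the same servo.

    mode="winner" returns the code with the highest urgency;
    mode="blend" returns the urgency-weighted average of all codes.
    """
    if mode == "winner":
        return max(codes, key=urgency)
    if mode == "blend":
        weights = [urgency(c) for c in codes]
        total = sum(weights)
        size = len(codes[0])
        if total == 0:
            return [0.0] * size  # nothing is requesting control
        return [sum(w * c[i] for w, c in zip(weights, codes)) / total
                for i in range(size)]
    raise ValueError(f"unknown mode: {mode}")
```

In blend mode a weak request still nudges the output towards its target, whereas winner mode ignores it entirely until it becomes the most urgent input.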

Motion sequencer

To reproduce stereotypical fixed movements, the control architecture also contains a motion sequencer. Motions can be recorded by moving the arms and the head of the robot. These motions can later be triggered by various conditions and replayed.

We are currently extending this system with interpolation abilities where different recorded behaviours are time-aligned and seamlessly blended. This blending can depend on external sensory signals such as the location of an object that is the target of an action.
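A minimal sketch of recording, replay and blending is given below. A recording is assumed to be a list of (time, joint angles) key frames with strictly increasing times; replay resamples it at the 40 Hz rate mentioned in the abstract, and two recordings are time-aligned by normalizing their durations. The data layout and function names are illustrative and are not those of the Ikaros sequencer.

```python
def sample(recording, t):
    """Joint angles at time t, linearly interpolated between key frames."""
    if t <= recording[0][0]:
        return list(recording[0][1])
    for (t0, p0), (t1, p1) in zip(recording, recording[1:]):
        if t <= t1:
            u = (t - t0) / (t1 - t0)
            return [a + u * (b - a) for a, b in zip(p0, p1)]
    return list(recording[-1][1])

def replay(recording, rate_hz=40):
    """Resample a recording into one frame per control tick."""
    start, end = recording[0][0], recording[-1][0]
    steps = int(round((end - start) * rate_hz))
    return [sample(recording, start + k / rate_hz) for k in range(steps + 1)]

def blend(rec_a, rec_b, weight, steps=40):
    """Blend two recordings after aligning them on normalized time."""
    frames = []
    for k in range(steps + 1):
        u = k / steps
        ta = rec_a[0][0] + u * (rec_a[-1][0] - rec_a[0][0])
        tb = rec_b[0][0] + u * (rec_b[-1][0] - rec_b[0][0])
        pa, pb = sample(rec_a, ta), sample(rec_b, tb)
        frames.append([(1 - weight) * a + weight * b for a, b in zip(pa, pb)])
    return frames
```

Here `weight` could be derived from a sensory signal, for example the position of a target object between the two locations at which the motions were recorded.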

Epi the head

As mentioned in the Epi overview section, we have also designed and built several smaller versions of Epi without the arms (Figure 8). Instead of a full-scale computer, the heads use a Raspberry Pi for control. This makes the robot less expensive, reduces its energy use and allows it to run off batteries. It also limits the complexity of what the robot can do, but for many smaller projects the computational power of the Epi head is sufficient.

Figure 8. The smaller version of Epi. The head is identical to the larger model, but a Raspberry Pi is used for motor control instead of a full-scale computer.

Evaluating the design

To evaluate how people perceive the robot, we asked 42 informants (22 women) to fill in a questionnaire about Epi. We asked whether the participants had seen or met a similar robot before and how friendly and intelligent they thought it looked. The participants were also asked to estimate the age of Epi based on its appearance. Epi was rated on average to have an age of 9.8 years (SD = 5.0). There was a significant difference in the mean age that our informants ascribed to Epi depending on whether they reported having met similar robots before (7.6 years) or not (11.2 years,

We also tested to what extent the robot face could be used to express different internal and emotional states. 30 We combined head movements with eye colour changes to try to convey thinking, angry, happy, confused and sad. The majority of participants were able to identify thinking, happy and confused.

In addition to the formal evaluation, we have also observed how students react to the robot in different situations and it is clear that pupil dilation often causes positive emotional reactions in people working with the robot. Its childlike appearance also seems to cause students to behave as if it were a child in their care.

Epi as a student platform

The different versions of Epi have been used in several student projects for master students in Cognitive Science and Engineering at Lund University. Working with a humanoid robot makes it possible to combine what the students have learned about human behaviour with engineering techniques to experience what it is like to work on a real task in a multidisciplinary environment.

One project aimed at recording animations that could be used to control the movement of Epi in a natural way. 31 The project investigated how movements like reaching, pulling and shoving could be recorded by filming humans performing the motions and converting the film to angular data via motion tracking. These data were then transferred to the robot motors and played back.

Another project investigated how the Epi head could animate facial expression and expressions of internal states. 30,32 These expressions combined the LEDs in the eyes with movements of the head.

The robot was also compared to an animated agent in a learning-by-teaching situation. 33 Here, children either interacted with a robot or with a virtual agent on a screen. The outcome was that there were large individual differences in whether the children preferred the robot or the virtual agent but no significant difference between groups.

There has also been a project that looked at natural gazing behaviour. 34 The goal was to make the robot look at people in a natural way, including making orienting movements when someone new enters the room, or following a person with the gaze while not making eye contact for too long.

One project looked at methods to detect if a person is looking at the robot and investigated appropriate gaze behaviour in the robot. 35 A 3D model of a face was matched to the input to estimate the position and orientation of a person in front of the robot.

Another project investigated throwing movements, where Epi was programmed to throw a ball at a target at a certain angle and distance. The robot would first track moving persons, select one as a target, and subsequently throw the ball at them. The robot used the interpolating look-up tables that are part of the Ikaros system to code for the relation between movement and target location.
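The idea of an interpolating look-up table can be sketched in a few lines. The table below maps target distance to a throw parameter by piecewise linear interpolation between calibration points. The calibration values, the choice of parameter and the function name are entirely hypothetical and are not taken from the student project or from Ikaros.

```python
from bisect import bisect_right

def lookup(table, x):
    """Piecewise linear interpolation in a table of (input, output) pairs.

    The table must be sorted by input; queries outside the table range
    are clamped to the nearest end point.
    """
    xs = [point[0] for point in table]
    if x <= xs[0]:
        return table[0][1]
    if x >= xs[-1]:
        return table[-1][1]
    i = bisect_right(xs, x)
    (x0, y0), (x1, y1) = table[i - 1], table[i]
    return y0 + (y1 - y0) * (x - x0) / (x1 - x0)

# Hypothetical calibration: target distance (m) -> arm speed (degrees/s)
THROW_TABLE = [(1.0, 100.0), (2.0, 180.0), (3.0, 240.0)]
```

With such a table, a handful of calibrated throws is enough to cover the distances in between, at the cost of assuming that the relation is locally linear.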

Recently, a group of students implemented mirroring behaviour during interaction in the torso version of Epi. In this project, a Kinect sensor was used to detect the movement and posture of a person in front of the robot, and relevant aspects were mapped to the robot.

Discussion

One of the features that sets the Epi system apart from comparable systems is its eyes with controllable pupils and iris colour. Combined with a flexible control system, this allows researchers to study human–robot interaction where the robot can use the eyes for signalling. Potentially, this opens up interesting research paths pertaining to emotional aspects of interaction, since pupil dynamics are salient indicators of liking and social acceptance in humans. Combining facial dynamics of the eyes and mouth with movement in the neck and arms affords experimentation on non-linguistic interaction between robot and human.

The arms and hands of Epi allow for studying reaching, grasping and sensorimotor cognition. Although the hands do not currently support thumb movement, they can still grasp soft objects like plushy toys or suitably shaped rigid objects like cups with handles. Combining this with the binocular vision of the moveable head opens up the study of visual attention, binocular vision and object examination.

Considering the head-only version of Epi, it provides some advantages in the form of portability and flexibility of deployment over its larger sibling. The head can run on batteries which means it can be used for experiments outside a laboratory and in places lacking electrical outlets. Its diminutive size means it can fit in a handbag or suitcase. The comparably lower price of each unit also might allow for building several instances such that experiments may be conducted in parallel.

The component price for a complete unit of the large version of Epi is USD 8500, while the head unit can be built for USD 2300. This compares favourably with other systems, such as the iCub with its EUR 250,000 price, the Nao at EUR 8400 and Pepper at EUR 20,280. Perhaps a fairer comparison is with the build cost of other open-source projects, such as the EUR 7500 Poppy or the USD 20,000 NimbRo-OP.

Robots are notoriously prone to failure and accidents, and repair can be costly. Since the Epi systems are based on 3D-printed structures and off the shelf components, however, the cost of maintenance can be kept at a manageable level.

Even after a robot has been assembled and its hardware is operational, there is often still a lot of work to make it ready for experimentation. The Ikaros framework allows functional modules to be composed into hierarchical complexes without programming. This can significantly reduce the time and effort necessary to employ an Epi system in experiments and to quickly get on with generating data. Producing custom modules is also supported by means of a C++ API.

Limitations and future work

Although we consider the design of Epi to have reached a nearly mature stage, there are many ways in which Epi could be improved.

Hands

One limitation of the robot is that it does not have more than one degree of freedom in the wrist. It can be turned, but not moved left-right or up and down. This obviously limits the dexterity of the hand. It is not easy to add these extra degrees of freedom without increasing the size and weight of the arm. A perhaps more attainable addition would be to allow the thumb to move sideways. This would greatly simplify the grasp of some objects since it would be possible to shift between a precision grip and the current palmar grasp. Since the forces on the thumb during a grasp would be orthogonal to its movement, it would be sufficient with a very small (and weak) servo.

Another extension would be to change the shape of the palm to make it more curved. This would help in grasping some objects. Similarly, the grasping abilities of the hand would increase if the fingers were of different length. Ideally, the middle finger should be longer while the little finger should be shorter. It would be easy to change these aspects of the hand in the future though it would require most of the parts of the hand to be altered. For example, the angle between the fingers will have to be changed to optimally utilize differential finger lengths.

The sensing abilities of the hand are currently limited and could be improved by attaching bend and contact sensors to the hand. Bend sensors can fit within the rubber part of the fingers, while contact sensors could be placed on the inner surface of the fingers.

Hearing

A notable omission is hearing. There is no particular reason for not giving the robot hearing and it will certainly be added in the future. A microphone array of some sort will be necessary to allow Epi to detect and potentially recognize sounds in a noisy environment.

Facial features

Currently, Epi lacks facial features except for the eyes and the mouth. Although it would be useful in social interaction to have eyebrows and a more advanced mouth, we think that the addition of such features distracts from the goal of presenting Epi as a robot. Adding such features instead gives the robot the appearance of an animated wooden doll with negative associations. Instead, we believe that various features of the robot face could be used to convey more or less the same impression. For example, we use the light intensity of the irises to simulate blinking by momentarily turning off the light. We can also imitate eye widening by increasing the light intensity. 36 Subtle light signals have been shown to facilitate human–robot communication. 37

Limited degrees of freedom

A final limitation of the design is that Epi lacks a servo motor to tilt its head sideways. This movement is important in social interaction and could be added in the future. Another degree of freedom that would be useful is the ability to lean forward. This could be used both to extend the reach of the arm and to modulate social distance during interaction.

Footnotes

Acknowledgements

The authors would like to thank the Crafoord Foundation that has supported the development of the robot. The authors also want to thank Betty Tärning for collecting data on attitudes to the robot and all students that have contributed to the development and testing of Epi.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was financially supported by the Crafoord Foundation.