Abstract

In this paper, we propose a new algorithm for mapping dynamic indoor environments. Instead of an accurate but expensive laser range-finder, we employ sonar sensors and a camera to map dynamic structured indoor environments. Building on the fuzzy-tuned grid-based map (FTGBM), we use two methods, sonar temporal difference (STD) and statistical background subtraction (SBS), to detect and track moving objects while mapping. The former is a consistency-based method that detects moving objects with sonar by monitoring a sequence of temporal lattice maps over a fixed number of measurement periods. The latter is a background subtraction technique that models the nonstationary background with a 3-class mixture of Gaussians learned by expectation maximization (EM), and it relies on sufficient background updates during the mapping process. After finding the moving objects, we apply a fuzzy-tuned integration (FTI) method to incorporate the motion detection results into the mapping process. Simulation and experimental results demonstrate the capabilities of our approach.

Keywords

Introduction

Robotic mapping refers to the process of generating spatial models of physical environments from sensor measurements gathered while navigating through the environment. It is generally regarded as one of the most important problems in the pursuit of truly autonomous mobile robots. Over the past two decades, the field has received considerable attention, because generating and maintaining environmental maps is often necessary for mobile robots to perform complex tasks in partially known or unknown environments. The field has matured to a point where detailed maps of large-scale complex environments can be built in real time, particularly indoors (Thrun, S. et al 1998a; 1998b; 2001a; 2002; Bosse, M. et al 2004). Many existing techniques are robust to noise and can cope with a variety of structured environments. However, the majority of existing mapping approaches are designed for static environments. They assume that the mobile robot is the only moving object in the mapped world. The real world in which robots are deployed, however, is usually dynamic; that is, some objects in the environment change state over time. In an office, for instance, desks may be relocated and doors may be opened or closed. In a crowded environment in particular, multiple moving objects also exist within the perceptual range of the robot. For example, many people may walk through a corridor of an office building during office hours and fall within the sensor range of the robot during the mapping process. People moving in or out of the scene then have a serious influence on the resulting map, since it will contain evidence of these people at the corresponding locations. When the robot later returns to such a location and scans the area a second time using localization methods such as (Thrun, S. et al 2001b; Fox, D. et al 1999), the pose estimates will be less accurate, because the new measurements do not contain any features corresponding to those people. The result is spurious objects in the map, which in turn affect future tasks (Hähnel, D. et al 2003a; 2003b). Therefore, if an autonomous mobile robot is to perform services in the real world, it should be able to notice the changes around it, filter out the spurious models of moving objects when building maps, and constantly update its map of the environment.

In this paper, we extend our previous work (Ip, Y.L. et al 2002; Chow, K.M. et al 2002), which models static environments, and propose a mapping technique that allows a mobile robot to map dynamic environments. We assume that the odometry is perfect, so the localization problem is not considered in this work. Specifically, we use a fuzzy-tuned grid-based map (FTGBM), similar in idea to our previous work (Chow, K.M. et al 2002), to model the environment. We propose two methods to detect moving objects: sonar temporal difference (STD) and statistical background subtraction (SBS). The former is a consistency-based method that detects moving objects with sonar by monitoring a sequence of temporal lattice maps over a fixed number of measurement periods. The latter is a statistical background subtraction technique that models the nonstationary background with a 3-class mixture of Gaussians learned by expectation maximization (EM), based on sufficient background updates during the mapping process. After obtaining the motion information, we apply fuzzy-tuned integration (FTI) to combine the results of the two types of motion detection and filter the moving objects out of the resulting map. Additionally, since the Bayesian update rule is used in the FTGBM, our approach can also estimate and update the states of dynamic objects in the robot workplace.

The rest of this paper is organized as follows. After discussing related work in the following section, we briefly present the basic notation and definitions of the fuzzy system and the fuzzy-tuned grid-based map-building method in Section 3. We describe sonar temporal difference with sonar sensors in Section 4 and statistical background subtraction with a camera in Section 5, and then present the fuzzy-tuned integration that incorporates the motion tracking results into the resulting map in Section 6. Section 7 presents our simulation and experiments, which illustrate the capabilities and robustness of our approach. Finally, conclusions and future work are presented in Section 8.

Related Work

Approaches to the mapping problem can be roughly classified into two major paradigms: occupancy grid-based maps and topological maps. Occupancy grid-based maps, like our previous work (Chow, K.M. et al 2002), are generated from stochastic estimates of the occupancy state of each cell, and they are rather easy to construct and maintain. Topological maps, in contrast, are graph-like spatial representations (Remolina, E. & Kuipers, B. 2004). Nodes in such graphs correspond to distinct situations, places, or landmarks (such as corners), and they are connected by arcs if there is a direct path between them. Furthermore, Thrun, S. (1998a) successfully integrated these two paradigms to build a metric-topological map, thus gaining the advantages of both. However, all these approaches assume that the environment is almost static during the mapping process, although they can tolerate a certain amount of noise in the sensor data. In dynamic environments, where moving objects appear in or disappear from the perceptual range and the states of objects change over time, robots need an additional ability to deal with this extra noise compared with static environments. Otherwise, the resulting maps will not be usable for localization or navigation.

Recently, there has been work on updating maps in dynamic environments. Thrun, S. et al. (2000) and Burgard, W. et al. (1999) update a given static map using the most recent sensor information to deal with people in the environment. Fox D. et al. (1999) propose a filtering technique that identifies range measurements which do not correspond to the given world model, and then update the robot position using only those measurements that are, with high probability, produced by known objects contained in the map. Montemerlo et al. (2002) present an approach to simultaneous localization and people tracking. More recently, several approaches have addressed mapping in dynamic environments that contain moving objects within the perceptual range of the robot. Biswas, R. et al. (2002) and Anguelov, D. et al. (2002) derive an approximate Expectation-Maximization (EM) algorithm for learning object shape parameters at both levels of the hierarchy, using local occupancy grid maps to represent shape. Andrade-Cetto et al. (2002) combine landmark strength validation and Kalman filtering for map updating and robot position estimation in order to learn maps of moderately dynamic indoor environments. Montemerlo et al. (2002a) employ a Rao-Blackwellized particle filter to solve the simultaneous localization and people tracking problem based on a prior accurate map of the corresponding static environment, which is similar to FastSLAM (Montemerlo et al. (2002b)). Hähnel et al. (2003) present a probabilistic approach to mapping populated environments that uses Sample-based Joint Probability Data Association Filters (SJPDAFs) to track people in the data obtained with the laser range scanners of the robot, as in Schulz, D. et al. (2001; 2003). The results of the people tracking are integrated into a scan alignment process and into the map generation process, thus filtering the spurious objects out of the resulting maps. Wang C.-C. (2004) solves the problem of simultaneous localization, mapping, and moving object tracking in crowded urban environments. He establishes a mathematical framework that integrates SLAM and DATMO (Detection and Tracking of Moving Objects). The idea is to identify and keep track of moving objects in order to improve the quality of the map. Wolf et al. (2005) propose an online algorithm for SLAM in dynamic environments, which maintains two occupancy grid maps, one for static objects and another for dynamic ones, and a third landmark map with which localization is solved. This method is limited to moderately dynamic indoor environments, in particular by the narrow assumptions of its localization implementation. Its advantage is that it robustly detects dynamic entities both when they move into and when they move out of the robot's field of view.

However, virtually all state-of-the-art approaches use SICK scanning laser range-finders. While the SICK is well suited to this task because of the accurate and detailed range information it provides, it has drawbacks. In particular, the SICK laser scanner is expensive, heavy, and bulky. It appears that these methods cannot be readily transferred to cost-effective sensor systems such as sonars.

In our work, we propose a new technique for mapping dynamic environments. We use sonar sensors and a camera, respectively, to detect moving objects, and then combine the motion detection results by fuzzy-tuned integration to filter the moving objects out of the resulting map. Additionally, we use the Bayesian update rule in the fuzzy-tuned grid-based map to estimate and refine the states of dynamic objects that change slowly.

Fuzzy System and Fuzzy-Tuned Grid-Based Map (FTGBM)

Notation and Definition of Fuzzy System

Consider a fuzzy model with n inputs and a single output. The fuzzy rule base can be formulated as:

xi: The ith input variable.

Ni: The number of fuzzy subsets of input i.

n: The number of input variables.

Assuming a singleton fuzzifier, a product inference engine, and a centre-average defuzzifier (Wang, T. X. 1997), the crisp fuzzy model output ŷ is obtained as:
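For a singleton fuzzifier, product inference engine, and centre-average defuzzifier, the textbook form of the output is the weighted average of the rule output centres, with each rule weighted by the product of its input membership degrees. The following is a minimal sketch under that assumption; the triangular membership shape, the rule encoding, and the names `triangular` and `fuzzy_output` are hypothetical and are not taken from the paper.

```python
def triangular(a, b, c):
    """Return a triangular membership function with support (a, c) and peak at b.

    The triangular shape is an illustrative assumption.
    """
    def mu(x):
        if x <= a or x >= c:
            return 0.0
        if x <= b:
            return (x - a) / (b - a)
        return (c - x) / (c - b)
    return mu


def fuzzy_output(rules, x):
    """Centre-average defuzzified output of a rule base.

    rules: list of (memberships, y_bar); memberships holds one membership
           function per input, y_bar is the centre of the rule's output set.
    x:     list of n crisp inputs (singleton fuzzifier).
    """
    num = 0.0
    den = 0.0
    for memberships, y_bar in rules:
        strength = 1.0
        for mu, xi in zip(memberships, x):
            strength *= mu(xi)  # product inference engine
        num += y_bar * strength
        den += strength
    if den == 0.0:
        raise ValueError("no rule fires for this input")
    return num / den


# Two single-input rules: "x is near 0 -> y = 0" and "x is near 1 -> y = 10".
rules = [([triangular(-1.0, 0.0, 1.0)], 0.0),
         ([triangular(0.0, 1.0, 2.0)], 10.0)]
y_hat = fuzzy_output(rules, [0.25])
```

With the input 0.25 the two rules fire with strengths 0.75 and 0.25, so the output is a quarter of the way between the two output centres.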

We adopt the fuzzy-tuned grid-based mapping technique to generate a basic global map, and we update this basic map in the following sections by filtering out spurious objects and refining the states of dynamic objects. The fuzzy-tuned grid-based map algorithm is similar to our previous work (Chow, K. M. et al 2002), so we review it only briefly to keep this paper self-contained. Interested readers may refer to (Chow, K. M. et al 2002) for further details.

In this approach, the probability distribution function (pdf) of the sonar sensor model is tuned by a set of fuzzy rules based on the maximum probability of the grid cells within the sensor cone. As in traditional approaches, the environmental map stores the occupancy probability of the state s(Ci) of each grid cell Ci: P[s(Ci) = occ | x] = 0.5 means an unknown or unexplored region, P[s(Ci) = occ | x] = 1 means that the grid cell Ci is occupied, and P[s(Ci) = occ | x] = 0 means that it is empty. The fuzzy-tuned sonar sensor model (pdf) is given in Eq. 2 for the example of a range sensor characterized by Gaussian uncertainty in both the radial and angular directions. The symbols in Eq. 2 are:

r: The sensor range measurement of the sonar sensor.

z: The true parameter space range value.

θ: The azimuth angle measured with respect to the beam central axis.

ko: The parameter that corresponds to occupied space.

kε: The parameter that corresponds to the empty space and will be tuned by the fuzzy model.

A plot of … (axis label: occupancy probability)

After obtaining the sonar sensor model pdf, we use Bayesian update rule (Eq. 3) to update the occupancy probabilities of the grid cells.

where P[s(Ci) = occ | {r}t] is the prior occupancy probability of grid cell Ci based on the observations {r}t = {r1, r2, …, rt}, and P[s(Ci) = occ | {r}t+1] is the new occupancy probability of grid cell Ci based on the observations up to rt+1. Like most traditional mapping algorithms, the fuzzy-tuned grid-based map-building algorithm is incremental: it only adds features to the map model and never removes old ones. Hence, the global map contains all the sensor information, including spurious models of moving objects. To filter out such spurious models, we use the motion detection methods and the fuzzy-tuned integration technique described in the following sections, so that the resulting map contains only stable stationary objects.
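The recursive update described here can be illustrated with the standard binary Bayes rule for occupancy grids. This is a sketch under the assumption that Eq. 3 has this usual form; the function name `bayes_update` and the likelihood values in the example are hypothetical stand-ins for the fuzzy-tuned sensor model.

```python
def bayes_update(prior_occ, p_r_given_occ, p_r_given_emp):
    """Return P[s(Ci) = occ | {r}t+1] from the prior P[s(Ci) = occ | {r}t].

    p_r_given_occ and p_r_given_emp are the sensor-model likelihoods of the
    new reading r(t+1) given that the cell is occupied or empty.
    """
    num = p_r_given_occ * prior_occ
    den = num + p_r_given_emp * (1.0 - prior_occ)
    if den == 0.0:
        return prior_occ  # the reading carries no usable evidence
    return num / den


# Start from the "unknown" prior 0.5 and apply the same evidence twice.
p1 = bayes_update(0.5, 0.8, 0.2)
p2 = bayes_update(p1, 0.8, 0.2)
```

Starting from the unknown value 0.5, repeated evidence for occupancy pushes the cell probability toward 1, and equal likelihoods leave the prior unchanged.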

The fundamental idea for identifying temporal changes in the environment surrounding a robot is to monitor a temporal sequence of spatial observations and determine how these observations differ from each other. An inconsistency between two temporally consecutive observations is a strong indication of potential motion in the environment. In a dynamic indoor environment, such inconsistency is mainly caused by dynamic objects. In the computer vision literature, temporal difference is a simple and popular method for detecting moving objects with a static observer. For a moving mobile robot, however, it is not sufficient by itself to identify moving objects unequivocally. Here, we propose a new scheme for detecting moving objects with sonar sensors, called sonar temporal difference (STD), a name borrowed from the terminology of the computer vision literature. It is realized by monitoring sensor-based information, called the time-variant map (TVM), along the time axis over a duration τ (τ = nt, where t is the sampling time) while filtering out the information that stays the same, i.e. stationary objects, thus obtaining the trajectories and outliers of moving objects during the time span τ. Note that all the sensor information has been transformed into the same global coordinate frame.

Time-Variant Map (TVM)

Sonar temporal difference is realized by monitoring a temporal sequence of time-variant maps (TVMs). This procedure is not new; we have borrowed it from (Han, Y. et al 2001; Prassler, E. & Scholz, J. 2000). We also adopt the occupancy grid model, introduced by Elfes, A. (1989), to represent the time-variant map, because this makes it easy to incorporate the result of sonar temporal difference into the resulting fuzzy-tuned grid-based map. The representation consists of the projection of the range scans onto a 2D rectangular lattice and the annotation of each cell with the time tags of the measurements that fall into it. Each grid cell corresponds to a small spatial region in the real world.

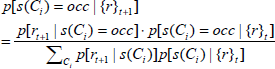

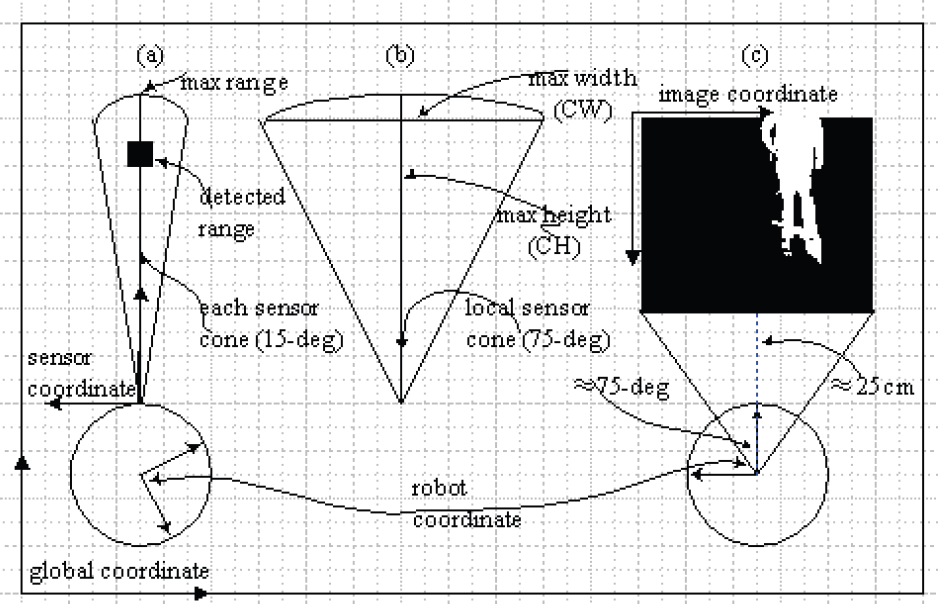

In the occupancy grid-based mapping procedure, every time new measurements become available, a significant amount of time is spent updating the posterior, including free space and stationary object states, with the Bayesian update rule (Eq. 3). This is less important for short-term motion detection, however, since all the sensor data are simultaneously registered in the fuzzy-tuned grid-based map. Hence, we only update the occupancy probabilities of the cells in the local sensor cone at time t, while all other cells remain untouched. Fig. 2 shows the relevant transformation. It should be noted that our experimental platform is a Pioneer 1 mobile robot (Active media 1998a), which has only seven sonar sensors: five in front, separated by 15 degrees, and one on each side. Here, we are only concerned with the front 75-degree region, so only a 75-degree cone is shown in Fig. 2b. We call this representation a time-variant map. Building such maps is rather simple: for each sensor measurement at time t, the cell that corresponds to the object detection is labeled with the time tag t. The tag means that the cell was occupied at time t. No other cells are updated during this operation. The temporally changing features of the environment are therefore captured by the sequence of time-variant maps TVMt, TVMt-1, …, TVMt-n. An example of such a sequence is shown in Fig. 3(a)–(c). Note that the maps have already been transformed into the same frame of reference.
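The tagging step described above can be sketched as follows. The sketch assumes that each new time-variant map starts as a copy of the previous one, so older tags persist (as the different observation ages in the figure suggest), and that sonar detections have already been converted to grid indices in the common frame. The name `update_tvm` and the list-of-lists grid are hypothetical choices.

```python
def update_tvm(prev_tvm, hits, t):
    """Return a new time-variant map in which detected cells carry the tag t.

    prev_tvm: 2D list of time tags (None means the cell carries no tag).
    hits:     iterable of (row, col) cells where an object was detected at time t.
    Cells outside the grid are ignored; no other cell is modified.
    """
    tvm = [row[:] for row in prev_tvm]  # keep the older tags, copy the grid
    rows, cols = len(tvm), len(tvm[0])
    for r, c in hits:
        if 0 <= r < rows and 0 <= c < cols:
            tvm[r][c] = t
    return tvm


empty = [[None] * 3 for _ in range(2)]
tvm_1 = update_tvm(empty, [(0, 1)], 1)
tvm_2 = update_tvm(tvm_1, [(1, 2), (5, 5)], 2)  # (5, 5) lies outside the grid
```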

(a) The kinematical transformation; (b) Local sensor cone; (c) Image frame transformation

A sequence of time-variant maps describing a simple environment, different gray levels represent the age of observation, darker ones corresponding to the more recent

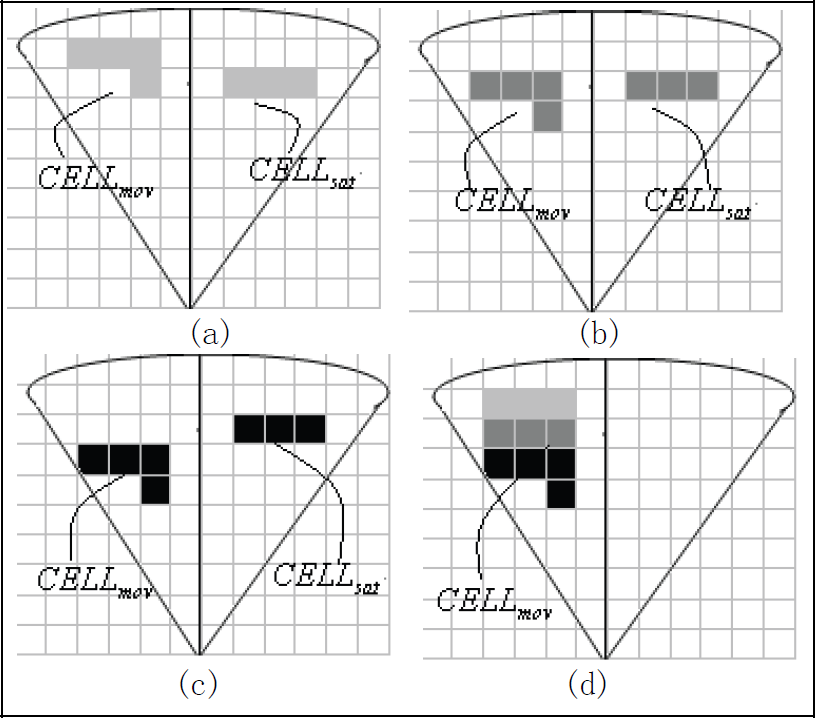

Intensity histogram of a particular pixel over 15min (a) in a dynamic indoor environment and corresponding emission model (b)

Because of the noise and uncertainty inherent in sonar sensors, and to keep the rate of false moving-object detections low, we do not track single cells that appear to be moving. Instead, we cluster ensembles of coherently moving cells into distinct objects, even though a cluster may cover only part of an object. The cell clustering algorithm is kept simple for now: adjacent cells that are occupied are assigned to the same class; otherwise they are assigned to different classes. In this work, we use sonar temporal difference with a sequence of time-variant maps to detect moving objects. We consider the set of cells in TVMt that carry the time tag t (occupied at time t) and test whether the corresponding cells in TVMt-1 were occupied too, i.e., carry the time tag t-1. If corresponding cells in TVMt and TVMt-1 carry the time tags t and t-1, respectively, we interpret the spatial region circumscribed by these cells as occupied by a stationary object, CELLsat. If, however, the cells in TVMt-1 carry a time tag different from t-1 or no time tag at all, the occupation of the cells in TVMt must be due to a moving object, CELLmov. A stationary object is filtered out of the time-variant map by simply freeing the corresponding occupied cells, while moving objects remain in the time-variant map. Fig. 3d shows the result of sonar temporal difference for the sequence of time-variant maps shown in Fig. 3a–3c. Note that we consider only the two most recent maps, TVMt and TVMt-1, for detecting moving objects. This limits our motion detection resolution, since objects that move very slowly compared with the sensor sampling rate will not be detected as moving. The problem can be alleviated by selecting an appropriate value of n (n = τ / t) and by using the Bayesian update rule, which updates the states of dynamic objects in the fuzzy-tuned grid-based map. The Sonar Temporal Difference algorithm is outlined in pseudo-code in Table 1.
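The comparison of TVMt with TVMt-1 and the adjacency-based clustering can be sketched as below. This is an illustrative reading of the description, not the authors' pseudo-code: the 8-neighbour adjacency, the grid encoding (None for an untagged cell), and the function names are assumptions.

```python
def sonar_temporal_difference(tvm_t, tvm_prev, t):
    """Split the cells tagged t in tvm_t into stationary and moving sets."""
    stationary, moving = set(), set()
    for r, row in enumerate(tvm_t):
        for c, tag in enumerate(row):
            if tag != t:
                continue  # only cells occupied at time t are examined
            if tvm_prev[r][c] == t - 1:
                stationary.add((r, c))  # also occupied at t-1
            else:
                moving.add((r, c))      # older tag or no tag at t-1
    return stationary, moving


def cluster(cells):
    """Group cells into 8-connected clusters (the adjacency is an assumption)."""
    remaining = set(cells)
    clusters = []
    while remaining:
        seed = remaining.pop()
        group, stack = {seed}, [seed]
        while stack:
            r, c = stack.pop()
            for dr in (-1, 0, 1):
                for dc in (-1, 0, 1):
                    n = (r + dr, c + dc)
                    if n in remaining:
                        remaining.remove(n)
                        group.add(n)
                        stack.append(n)
        clusters.append(group)
    return clusters


tvm_prev = [[1, None, None, None],
            [None, None, None, 0]]
tvm_t = [[2, None, 2, 2],
         [None, None, None, 2]]
stationary, moving = sonar_temporal_difference(tvm_t, tvm_prev, 2)
moving_objects = cluster(moving)
```

In this small example the cell at (0, 0) is classified as stationary, and the three remaining tagged cells form a single moving cluster.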

Sonar Temporal Difference Algorithm


A common method for tracking motion in image sequences is background subtraction between an estimate of the image without moving objects and the current image. Previous researchers (Rittscher, J. et al 2000; Ren Y. et al 2003; Rowe S. & Blake, A. 1996) have shown that disturbances can be suppressed to some extent by using a statistical model of the background in image subtraction. Here, we also adopt a statistical method to model the background: a 3-class mixture of Gaussians, learned with the Expectation-Maximization (EM) algorithm (Dempster, A. P. et al 1977). We treat the intensity values of a particular pixel over time as an independent statistical process called the “pixel process” (refer to Fig. 3). In a structured indoor environment, lighting changes, scene changes, and moving objects mean that the distribution of each pixel has to be fitted with multiple Gaussians. Since illumination is one of the important factors in indoor environments, shadows must be distinguished from both background and foreground. Therefore, we adopt a 3-class mixture to model the pixel process, with the classes foreground (F), background (B), and shadow (S). Since it is hard to estimate the distribution of the foreground along the image sequence, we represent it with a uniform distribution.

where

ωF,ωB,ωS: Weights of the three distributions in the mixture, respectively.

μxB, μxS: Means of the two Gaussians in the mixture, respectively.

σxB, σxS: Standard deviations of the two Gaussians in the mixture, respectively.

R: Parameter of the uniform distribution in the mixture, decided by the valid range of the intensity value. Usually it is 256.
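From these definitions, the pixel model can be read as a weighted sum of a uniform foreground density 1/R and two Gaussians for background and shadow. The sketch below evaluates that mixture and assigns a pixel to the class with the largest posterior. The maximum-posterior decision rule, the function names, and the numeric parameters in the example are assumptions made for illustration.

```python
import math


def gaussian(x, mu, sigma):
    """Density of a normal distribution N(mu, sigma^2) at x."""
    z = (x - mu) / sigma
    return math.exp(-0.5 * z * z) / (sigma * math.sqrt(2.0 * math.pi))


def class_posteriors(x, weights, mu_b, sigma_b, mu_s, sigma_s, R=256.0):
    """Posterior class probabilities of intensity x.

    weights = (w_F, w_B, w_S) are the mixture weights of the uniform
    foreground, Gaussian background, and Gaussian shadow components.
    """
    w_f, w_b, w_s = weights
    joint = {
        "foreground": w_f / R,
        "background": w_b * gaussian(x, mu_b, sigma_b),
        "shadow": w_s * gaussian(x, mu_s, sigma_s),
    }
    total = sum(joint.values())
    return {name: value / total for name, value in joint.items()}


def classify(x, weights, mu_b, sigma_b, mu_s, sigma_s, R=256.0):
    """Return the most probable class label for intensity x."""
    post = class_posteriors(x, weights, mu_b, sigma_b, mu_s, sigma_s, R)
    return max(post, key=post.get)


# Hypothetical pixel model: bright background, darker shadow.
model = ((0.1, 0.7, 0.2), 150.0, 5.0, 90.0, 8.0)
labels = [classify(x, *model) for x in (150.0, 90.0, 30.0)]
```

An intensity far from both Gaussian means, such as 30 in this example, is explained best by the uniform component and is therefore labeled as foreground.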

In the learning stage, we use the EM algorithm to estimate the model parameters from a given training sequence, as in (Rowe S. & Blake, A. 1996). It should be noted that the EM algorithm is not guaranteed to find the global maximum and is very sensitive to the starting point. That is, given a poor initial estimate of the distribution, the algorithm may converge slowly or fail to fit the distribution properly. In our work, we determine the initialization empirically, similar to (Rittscher, J. et al 2000).
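A compact sketch of EM for one pixel process is given below, assuming the mixture of a fixed uniform density 1/R and two Gaussians. The synthetic training data, the initial guess, the iteration count, and the lower bound on the standard deviations are all hypothetical choices for illustration and do not reproduce the authors' training setup.

```python
import math
import random


def gaussian(x, mu, sigma):
    """Density of a normal distribution N(mu, sigma^2) at x."""
    z = (x - mu) / sigma
    return math.exp(-0.5 * z * z) / (sigma * math.sqrt(2.0 * math.pi))


def weighted_sigma(resp, samples, mu, norm, min_sigma):
    """Responsibility-weighted standard deviation, bounded from below."""
    var = sum(r * (x - mu) ** 2 for r, x in zip(resp, samples)) / norm
    return max(min_sigma, math.sqrt(var))


def em_fit(samples, init, R=256.0, iters=50, min_sigma=1.0):
    """Fit (w_F, w_B, w_S, mu_B, sigma_B, mu_S, sigma_S) by EM."""
    w_f, w_b, w_s, mu_b, sg_b, mu_s, sg_s = init
    n = len(samples)
    for _ in range(iters):
        # E-step: responsibilities of the background and shadow Gaussians.
        resp_b, resp_s = [], []
        for x in samples:
            pf = w_f / R
            pb = w_b * gaussian(x, mu_b, sg_b)
            ps = w_s * gaussian(x, mu_s, sg_s)
            total = pf + pb + ps
            resp_b.append(pb / total)
            resp_s.append(ps / total)
        # M-step: re-estimate weights, means, and standard deviations.
        nb = max(sum(resp_b), 1e-12)
        ns = max(sum(resp_s), 1e-12)
        w_b, w_s = nb / n, ns / n
        w_f = max(1e-6, 1.0 - w_b - w_s)  # keep the uniform class alive
        mu_b = sum(r * x for r, x in zip(resp_b, samples)) / nb
        mu_s = sum(r * x for r, x in zip(resp_s, samples)) / ns
        sg_b = weighted_sigma(resp_b, samples, mu_b, nb, min_sigma)
        sg_s = weighted_sigma(resp_s, samples, mu_s, ns, min_sigma)
    return w_f, w_b, w_s, mu_b, sg_b, mu_s, sg_s


# Synthetic pixel process: mostly background, some shadow, a few outliers.
random.seed(0)
data = ([random.gauss(150.0, 5.0) for _ in range(700)]
        + [random.gauss(90.0, 8.0) for _ in range(200)]
        + [random.uniform(0.0, 256.0) for _ in range(100)])
fit = em_fit(data, init=(0.2, 0.5, 0.3, 140.0, 15.0, 100.0, 15.0))
```

The initial guess places each Gaussian near its true mode, which reflects the sensitivity to initialization noted above; a guess far from both modes may converge to a poor fit.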

In our work, when a new frame is available, we have to compensate for the sensor motion before background subtraction can be used to detect foreground objects. That is, we map each pixel xF in the current frame into the background frame. Due to errors in feature localization, motion estimation, etc., this mapping is not very accurate. So, at best, we predict a position x̂B, i.e. TxF = αx̂B, where T is the transformation matrix for background motion compensation and α is a nonzero scalar. We use the iterative closest point (ICP) algorithm to determine T: starting from a reasonably good initial guess of the relative transformation, a set of salient landmarks is chosen from the front image (i.e. the current frame) and the background.

lF, i and lB, i are the corresponding landmarks in the current and background frames, respectively. A better estimate of the relative transformation T is obtained iteratively by the least-squares estimation (LSE) method. Because the motion compensation is not accurate, x̂B will not necessarily be the pixel corresponding to xF. To accommodate this approximate alignment, we adopt another Gaussian model, called the alignment Gaussian model (AGM) (Fig. 4), which is centered at x̂B with covariance matrix Σ in a validation region
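The alternation between landmark matching and least-squares re-estimation can be sketched as follows. To stay short, the sketch assumes a 2D rigid transformation (rotation plus translation) with a closed-form least-squares fit, which is a simplification of the matrix T with scale factor used in the text. The function names and the landmark coordinates are hypothetical.

```python
import math


def fit_rigid(src, dst):
    """Least-squares rotation angle and translation mapping src[i] to dst[i]."""
    n = len(src)
    sx = sum(p[0] for p in src) / n
    sy = sum(p[1] for p in src) / n
    dx = sum(q[0] for q in dst) / n
    dy = sum(q[1] for q in dst) / n
    s_dot = s_cross = 0.0
    for (px, py), (qx, qy) in zip(src, dst):
        ax, ay = px - sx, py - sy
        bx, by = qx - dx, qy - dy
        s_dot += ax * bx + ay * by
        s_cross += ax * by - ay * bx
    theta = math.atan2(s_cross, s_dot)
    c, s = math.cos(theta), math.sin(theta)
    return theta, dx - (c * sx - s * sy), dy - (s * sx + c * sy)


def apply_rigid(T, p):
    """Apply T = (theta, tx, ty) to the 2D point p."""
    theta, tx, ty = T
    c, s = math.cos(theta), math.sin(theta)
    return (c * p[0] - s * p[1] + tx, s * p[0] + c * p[1] + ty)


def icp(src, dst, T0=(0.0, 0.0, 0.0), iters=20):
    """Iterative closest point: match nearest landmarks, then refit T."""
    T = T0
    for _ in range(iters):
        moved = [apply_rigid(T, p) for p in src]
        matched = [min(dst, key=lambda q: (q[0] - m[0]) ** 2 + (q[1] - m[1]) ** 2)
                   for m in moved]
        T = fit_rigid(src, matched)
    return T


# Landmarks in the current frame and their positions in the background frame.
current = [(0.0, 0.0), (10.0, 0.0), (10.0, 5.0), (0.0, 5.0), (3.0, 8.0)]
true_T = (0.05, 1.0, -0.5)
background = [apply_rigid(true_T, p) for p in current]
estimated_T = icp(current, background)
```

As with any ICP variant, the result depends on the initial guess: the nearest-landmark matching is only reliable when the initial misalignment is small relative to the landmark spacing.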

Illustration of xB, xF with their AGMs

Σ is important for determining the size of AGM and will be different from pixel to pixel. But here for computational simplicity, we assume it is constant and estimated by Eq. (8). With

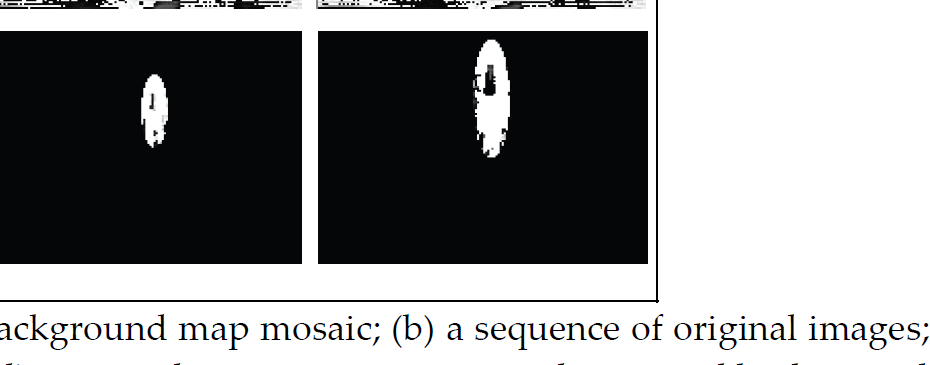

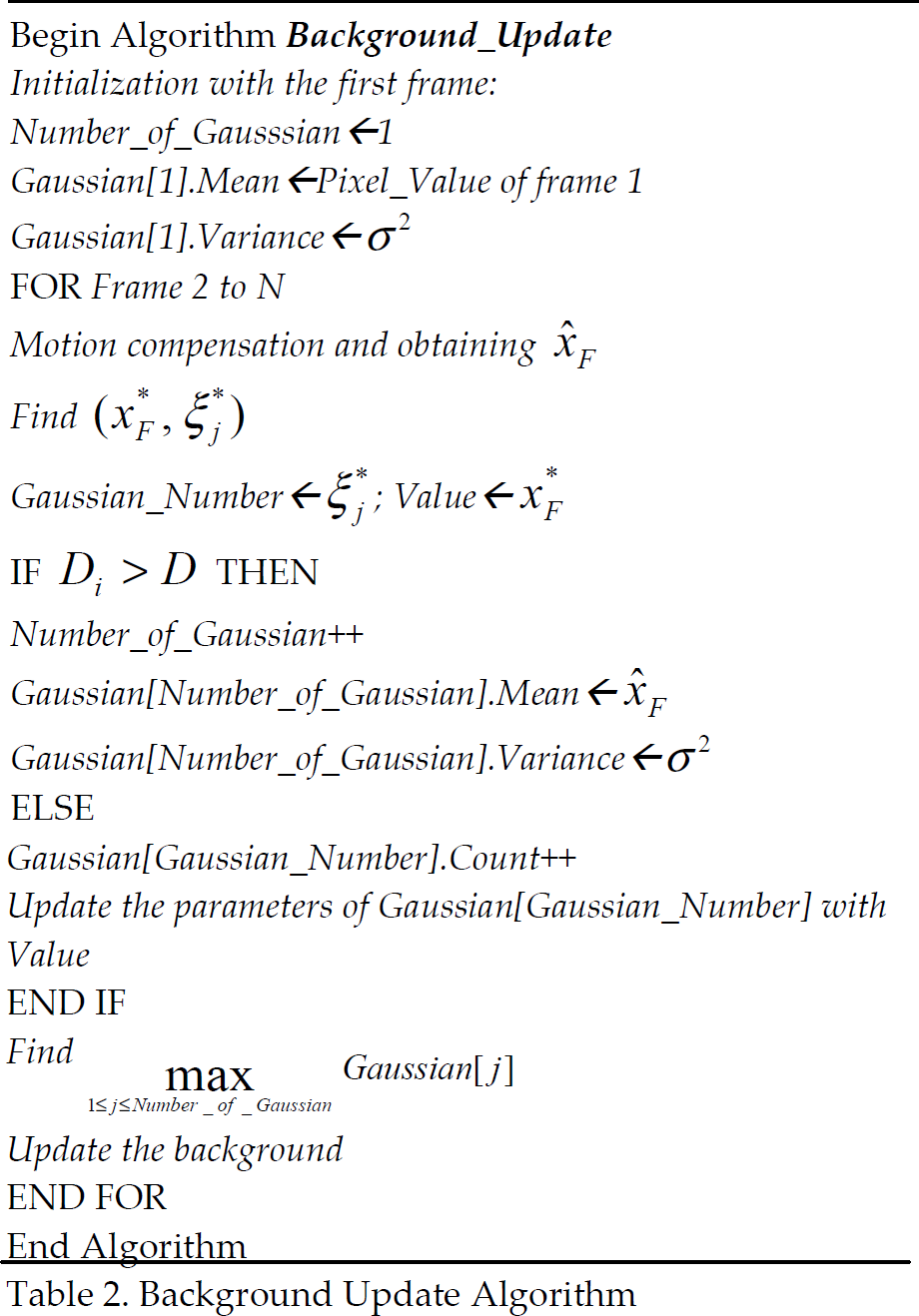

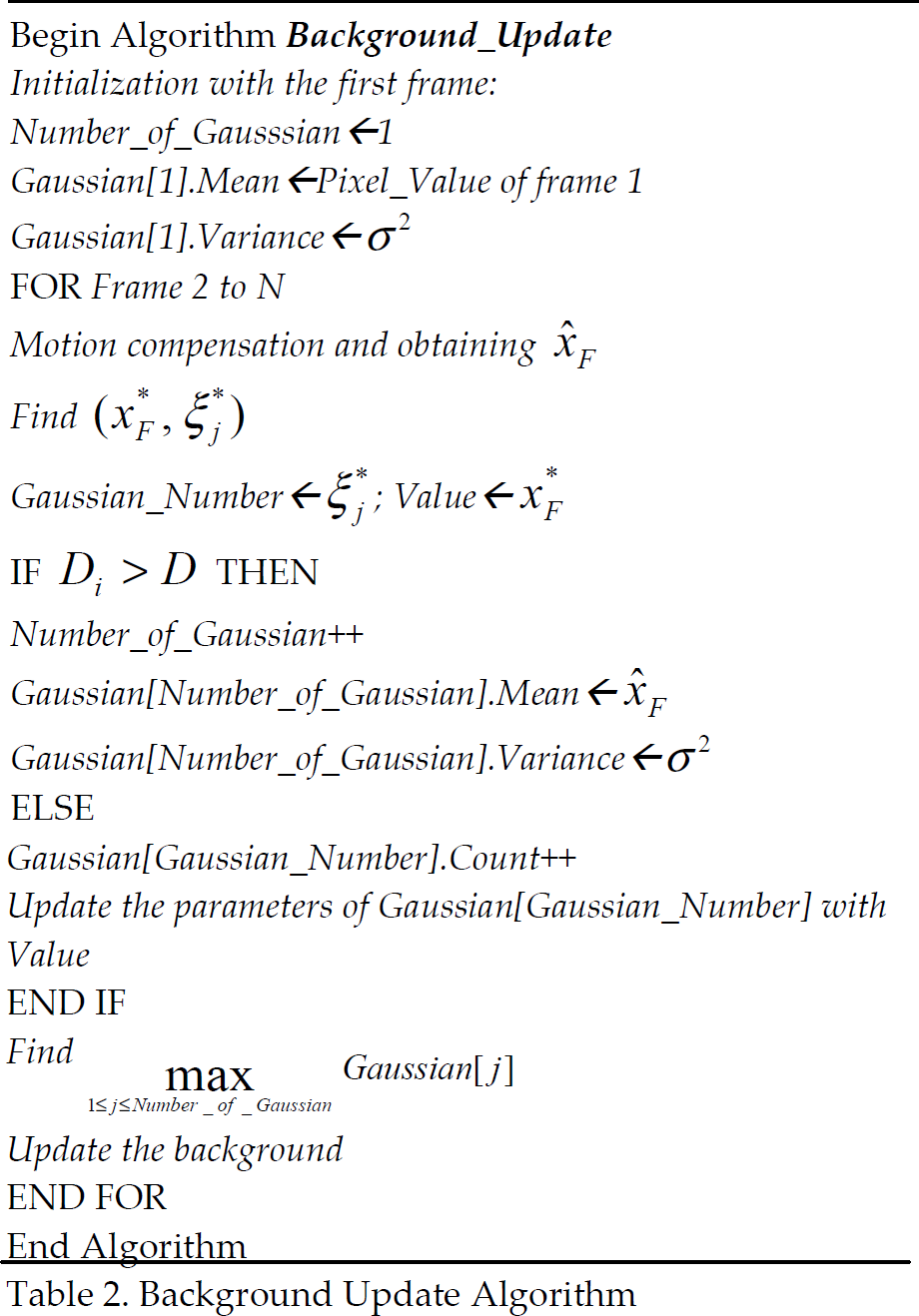

Everything above works well as long as the background is adequately updated. This is not easy for a moving background, however, especially when the background is occluded or newly uncovered. Here we use an approach similar to (Ren, Y. et al 2003) to update the nonstationary background. We summarize the algorithm in Table 2; for details, please refer to (Ren Y. et al 2003). A preliminary experimental result of the proposed algorithm is shown in Fig. 5.

Background Update Algorithm


Motion detection with nonstationary background. (a) background map mosaic; (b) a sequence of original images; (c) motion detection using common background subtraction; (d) motion detection using proposed statistical background subtraction

After detecting moving objects separately with the sonar sensors and the uncalibrated camera, we need to integrate these two sources into the resulting global map while filtering out the spurious objects. However, because the camera is uncalibrated (its intrinsic parameters are unknown), we cannot obtain precise distance measurements from the images. In addition, the sonar is not very accurate because of its radial and angular uncertainty. Therefore, we cannot directly use traditional multisensor fusion (Castellanos, J. A. et al 2001), which requires precise sensor-to-sensor calibration, to integrate the two detection results into a common reference frame.

To achieve a reliable integration, we propose the Fuzzy-Tuned Integration (FTI) algorithm, which locates the spurious objects in the fuzzy-tuned grid-based map and then filters them out. It needs two parameters for each spurious object: its location and its size in the resulting map. To design this fuzzy system, we first define the input and output variables as follows:

Input Variables

B_Centroid(x, y): The centroid of BLOBmov in robot frame.

C_Centroid(x, y): The centroid of CELLmov in robot frame.

B_Size: The size of the BLOBmov in vision frame in number of pixels.

C_Size: The size of the CELLmov in grid-based map in number of grids.

Output Variables

O_Centroid(x, y): The centroid of the update region in robot frame.

O_Size: The size of update region in number of grids.

Tables 3–5 show the fuzzy rule base for tuning the output variables, and the membership functions and linguistic states are shown in Fig. 6, where

Fuzzy rule table corresponding to O_Centroid.x

Fuzzy rule table corresponding to O_Centroid.y

Fuzzy rule table corresponding to O_Size

IW: The image width (in our experiment, this value is fixed at 160 pixels)

IH: The image height (in our experiment, this value is fixed at 120 pixels)

CW: The maximum width of the local sensor cone

CH: The maximum height or range in the local sensor cone

Note that in this fuzzy system, the image coordinate has been transformed into the robot frame according to Fig. 2.

Everything explained above works under the assumption that the motion correspondence problem has been solved, that is, that each moving object detected by the sonar sensors has already been correctly associated with the same object detected by the uncalibrated camera. If the motion correspondence is poor, it can seriously damage the resulting map. The computer vision literature offers many approaches to this problem, such as track splitting, joint likelihood, and multiple hypothesis algorithms; Cox gives a good review in (Cox, I. J. 1993). In our work, we use the nearest-neighbor algorithm to associate the detected moving objects. It is the simplest suboptimal data-association algorithm and assumes that each measurement originates from the closest corresponding feature. In our experiment, closest is defined by the Euclidean distance between the centroids of the detected moving objects, as formulated in Eq. 11. Note that B_Centroid(x, y) and C_Centroid(x, y) have been transformed into the same coordinate frame according to Fig. 2.

Since our experiment is performed in a structured indoor dynamic environment with few moving objects to detect and track, the nearest-neighbor algorithm satisfies our needs. Our fuzzy-tuned integration is therefore applied only when corresponding moving objects are found, i.e. only when DIST < ςcorrespond, where ςcorrespond is the motion correspondence threshold obtained by trial and error. The outline of the proposed fuzzy-tuned integration algorithm is given in pseudo-code in Table 6.
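The nearest-neighbor association with a distance gate can be sketched as follows. The sketch assumes that a blob and a cell cluster are paired only when the Euclidean distance between their centroids falls below the correspondence threshold, and that both centroid lists are already expressed in the same frame. The function name, the centroid values, and the threshold are hypothetical.

```python
import math


def associate(blob_centroids, cell_centroids, threshold):
    """Pair each vision blob with its nearest sonar cell cluster.

    Returns a list of (blob_index, cell_index, distance) for the pairs
    whose centroid distance is below the threshold.
    """
    pairs = []
    for bi, (bx, by) in enumerate(blob_centroids):
        best, best_dist = None, float("inf")
        for ci, (cx, cy) in enumerate(cell_centroids):
            dist = math.hypot(bx - cx, by - cy)  # Euclidean centroid distance
            if dist < best_dist:
                best, best_dist = ci, dist
        if best is not None and best_dist < threshold:
            pairs.append((bi, best, best_dist))
    return pairs


blobs = [(1.0, 0.0), (5.0, 5.0)]
cells = [(1.2, 0.1), (3.0, 0.0)]
pairs = associate(blobs, cells, threshold=0.5)
```

Here only the first blob finds a cell cluster within the gate, so the integration step would run for that pair alone.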

Fuzzy-tuned integration algorithm

Simulation Study

In this simulation study, we illustrate that the Bayesian update rule used in our proposed algorithm is capable of updating the states of dynamic objects, such as slow relocation. To make the results easier to explain, we consider only a one-dimensional environment, in which the sensor measurement is ri and a dynamic obstacle changes its location over time. The motion profile of the object is as follows:

Location 1: The obstacle stays 1 m away from the sensor, i.e. {ri = 1 m, i = 1, 2, …, 8}

Location 2: The obstacle is moved to 1.6 m away from the sensor, i.e. {ri = 1.6 m, i = 9, 10, …, 16}

Location 3: The obstacle is moved to 0.5 m away from the sensor, i.e. {ri = 0.5 m, i = 17, 18, 19, …}

The profiles of the occupancy probabilities corresponding to the 7th, 8th, 15th, 16th, 23rd, and 24th readings for our proposed algorithm are shown in Fig. 7. From this figure, we can observe that our algorithm provides a good estimate of the obstacle position when the obstacle is moved from Location 1 to Location 2 and from Location 2 to Location 3. We therefore conclude that this algorithm is suitable for updating the states of dynamic objects.
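A rough one-dimensional re-creation of this motion profile is sketched below. It does not use the fuzzy-tuned sensor model: it assumes a crude three-zone model (cells before the reading are probably empty, the cell at the reading is probably occupied, cells behind it are not observed) and clamps the probabilities so that a cell can recover after the obstacle moves. The cell size, likelihood values, and clamp limits are hypothetical.

```python
def bayes_update(prior_occ, p_r_given_occ, p_r_given_emp):
    """Binary Bayes update of a cell's occupancy probability."""
    num = p_r_given_occ * prior_occ
    return num / (num + p_r_given_emp * (1.0 - prior_occ))


def update_grid(grid, reading, cell=0.1, p_hit=0.7, p_miss=0.3, lo=0.05, hi=0.95):
    """Update a 1D occupancy grid in place with one range reading (meters)."""
    hit = round(reading / cell)
    for i in range(min(hit + 1, len(grid))):
        if i == hit:
            post = bayes_update(grid[i], p_hit, p_miss)  # evidence for occupied
        else:
            post = bayes_update(grid[i], p_miss, p_hit)  # evidence for empty
        grid[i] = min(hi, max(lo, post))  # clamp: an assumption of this sketch


# Motion profile from the text: 1 m, then 1.6 m, then 0.5 m, 8 readings each.
readings = [1.0] * 8 + [1.6] * 8 + [0.5] * 8
grid = [0.5] * 21  # cells of 0.1 m covering 0 m to 2 m, all unknown
snapshots = {}
for k, r in enumerate(readings, start=1):
    update_grid(grid, r)
    if k in (8, 16, 24):
        snapshots[k] = list(grid)
```

In this sketch the cell at 1 m is released once the obstacle is observed at 1.6 m, and the cell at 0.5 m becomes occupied after the final relocation. Cells behind the obstacle are not observed and keep their previous values.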

The result of the algorithm to update the state of dynamic object

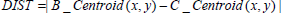

The goal of the experiment is to illustrate that the fuzzy integration of the two detection techniques, STD and SBS, into the mapping process leads to a better global map, since spurious objects are filtered out. The experiment was carried out with the Pioneer 1 robot in a corridor at HKPU. The robot is equipped with one uncalibrated camera mounted at a fixed angle and seven sonar sensors: five in front, separated by 15 degrees each, and one on each side. It is driven by two reversible DC motors with wheel encoders, which update the location by dead reckoning. The software is written in C, and the Saphira software (ActivMedia 1998) with its API libraries is used to obtain the sonar data and to perform the localization that estimates the current position and orientation of the robot. Navigation is not autonomous in the present implementation: the robot is manually driven to predefined locations so as to avoid dead reckoning errors due to wheel slip. Only sonar measurements within a range of 3 meters around the robot were considered relevant for mapping, and the maximum distance of the camera's visual zone considered in this experiment was also about 3 meters, which considerably improves the quality of the fuzzy-tuned integration. Fig. 8 shows the hand-measured model of the environment to be mapped.

Hand-measured model of the corridor in HKPU

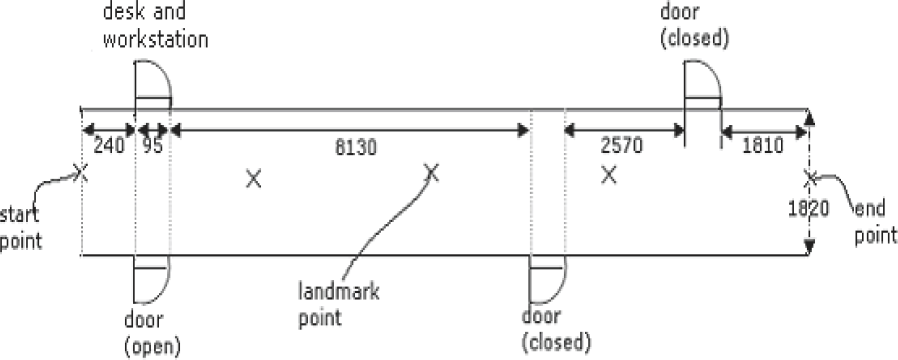

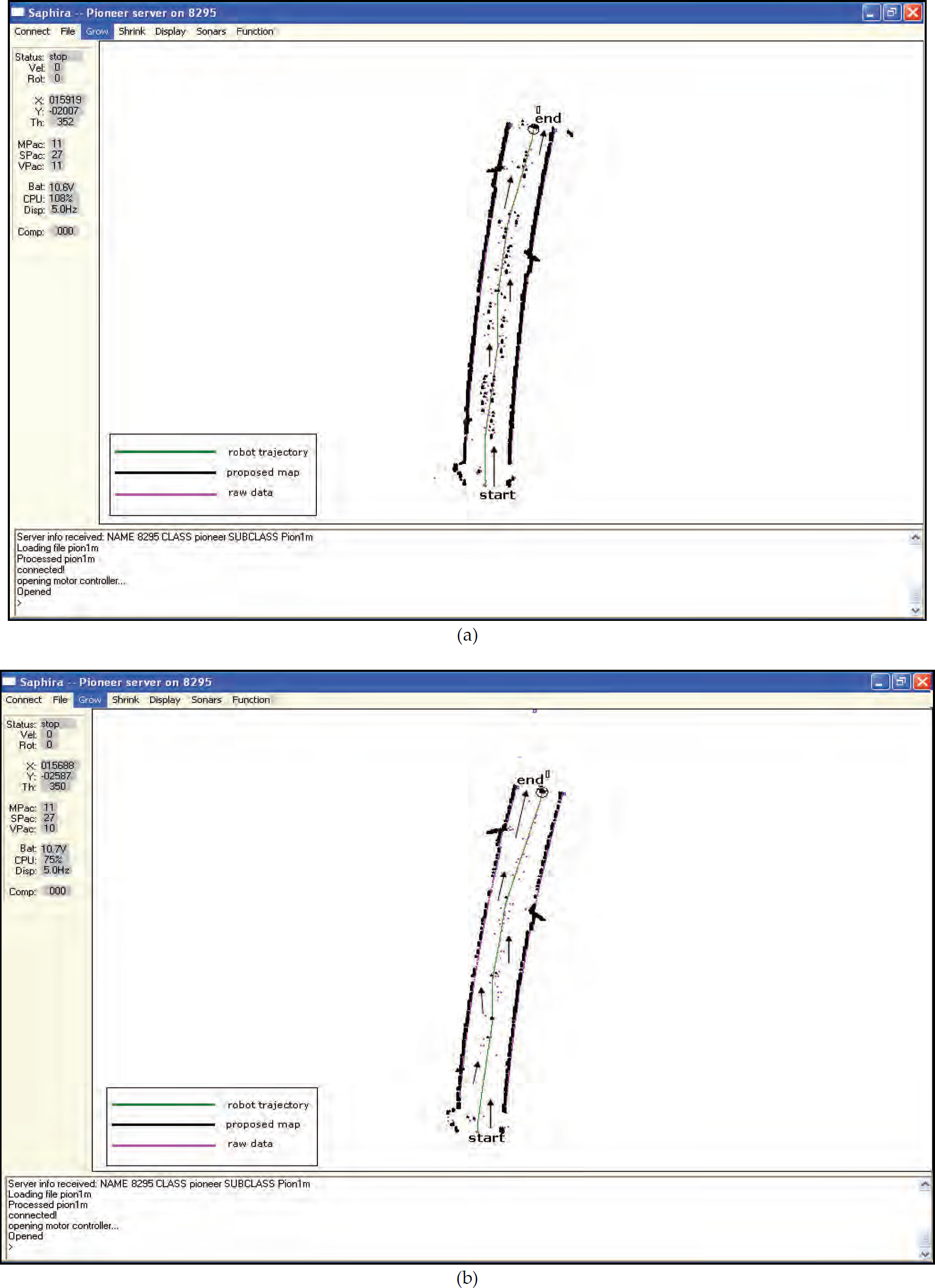

During mapping, several (up to three) people were walking in front of the robot. Fig. 9 shows the robot during the mapping process. Fig. 10a shows the raw sonar range measurements obtained by the robot and the map obtained without people filtering. The resulting map obtained with our proposed algorithm is shown in Fig. 10b. Both maps have a resolution of 50 mm per cell. As seen in Fig. 10a, many cells in the resulting grid map have a high occupancy probability because people covered the corresponding area while the robot was mapping the environment. If, however, we use the proposed algorithm and filter out most of the moving objects (here, people), the effect of the people on the resulting map is substantially reduced (see Fig. 10b).

Pioneer 1 mobile robot is mapping a dynamic corridor environment.

(a) Fuzzy-tuned grid-based map without filtering out moving objects; (b) Resulting map of proposed algorithm

Therefore, as seen from Fig. 10(a–b), the proposed algorithm is more reliable for mapping dynamic environments than the fuzzy-tuned grid-based mapping method alone and than traditional mapping methods.

Note that the robot has only seven sonar sensors: five facing forward, separated by 15 degrees each, and one on each side. In addition, our camera faces forward at a fixed angle. Both sensors therefore have only a limited measurement zone (about 75 degrees). Given these limits, we assumed that people only walked in front of the robot during the experiment. Moreover, since the localization problem was set aside in this paper and we used odometric measurements with predefined landmarks to localize the robot, some dead reckoning errors were unavoidable. As a result, the resulting map is not perfectly rectangular compared with the hand-measured map. The localization problem will be addressed in future work.

In this paper, we have presented a new solution for mapping structured indoor dynamic environments that incorporates motion tracking into the mapping process. Two methods are used for motion tracking: sonar temporal difference and statistical background subtraction. The former is built on monitoring a sequence of temporal local maps. The latter relies on sufficient background updates with a 3-class mixture of Gaussians. After detecting the dynamic entities, and because no accurate transformation between the image plane and the robot local frame is available, we applied a fuzzy system to integrate the two methods and filter the spurious objects out of the resulting map. We demonstrated that detecting dynamic entities can benefit the mapping process. Simulation and experimental results show the capability of the proposed algorithm.

Since the main focus of this work is the fuzzy integration of motion detection into mapping, localization has been set aside for now. In future work, we will incorporate localization to address simultaneous localization and mapping (SLAM) in dynamic environments. We also plan to extend this work to more complex environments, and to investigate alternative algorithms for detecting moving objects in order to improve the accuracy and efficiency of motion detection and, in turn, the resulting map.