Abstract

This paper proposes a method by which humanoid robots perform an object-transferring task in a teleoperated cooperative paradigm. The cooperative task is accomplished using simple communication between two humanoid robots, which switch between modes according to the situation. When two humanoid robots pass an object, their relative positions may shift while they are moving, so the positions must be corrected in real time. A master arm and hand are used to control the arm and hand of the robot remotely while it carries and passes the object; dynamic stability during walking is ensured by incorporating the ZMP criterion; and the desired spacing between the robots is maintained by leader-follower control. Object-passing cooperation between the two humanoid robots is based on computer control, wireless LAN, vision, cooperative handling control and text commands. The method is applied as the key software of the system. The effectiveness of the proposed methodology for performing cooperative real-time tasks is discussed.

1. Introduction

Research in the field of humanoid robots is evolving toward human-like performance in real-world scenarios. One important task among human beings is the transfer of objects from one person to another. Such tasks form the basis of cooperation among humans, so they must be solved if humanoid robots are to perform human-like tasks; the transfer of objects between humanoid robots is a fundamental requirement for cooperatively performing useful work.

Issues related to comparative studies of cooperative work by humans and by humanoid robots have been discussed (Yokoi, K., 2002 & Inoue, H., 2000). Many studies aim to allow a humanoid robot with physical features suited to human environments to perform human tasks. Several solutions exist for transferring an object between a robot and a human being. The tasks considered include preparing food, picking items up from the floor, and placing items on a shelf (Stanger, C.; Anglin, C. & Harwin, W., 1994), and could potentially include some form of cooperative object hand-off. Recent results with the NASA Robonaut (Sian, N., 2006) and the HERMES robot (Bischoff, R. & Graefe, V., 2005) have included the handing of objects between a humanoid and a person. However, these projects have yet to consider object transfer in detail. Another approach holds that during a collaborative task a robot must understand a person's intentions and desires in order to behave as a partner rather than just a tool (Breazeal, C.; Brooks, A. & Chilongo, D., 2004). Such systems may be difficult to realize in real-time applications.

For human-robot cooperation in transfer tasks, an interface system was developed to afford direct and intuitive interactive communication (Ikeura, R. & Inooka, H., 1995), and a human-following experiment with a biped humanoid robot was described (Yamaguchi, J.; Gen, S. & Setia Wan, S. A. 1998). The humanoid robot HRP-2P was developed in Japan to work cooperatively with a human outdoors (Yokoyama, K.; Maeda, J. & Isozumi, T., 2001). It was designed with a biped locomotion controller, stereo vision software and an aural human interface to realize cooperative work by a human and a humanoid robot. The robot could find a target object by vision and carry it cooperatively with a human by biped locomotion according to the human's voice commands. Cooperative control was applied to the arms of the robot while carrying the object, and the walking direction of the robot was controlled by the interactive force and torque measured by the force/torque sensors on the wrists. We consider it important for such robots to interact with other robots as well.

Several generic approaches have been proposed concerning goal decomposition, task allocation and negotiation (Asama, H. & Ozaki, K., 1991). PGP (DesJardins, M., E. & Durfee, E., C., 1999) and later GPGP (Durfee, E., C. & Lesser, V., 1999) are specialized mission representations that allow plans to be exchanged among agents. Other work (Gerkey, B., 2004 & Dias, B, 2005) addresses mission planning for multi-robot task allocation, aiming at effective, conflict-free execution in a dynamic environment. An example of such a mission is transporting and assembling a superstructure on a construction site. Another approach may require synthesizing a sophisticated plan composed of numerous partially ordered tasks to be performed by various robot types with different capabilities: transportation of heavy loads, maneuvering in a cluttered environment, and manipulation (Gravot, F.; Cambon, S. & Alami, R., 2003). Such planning can be done centrally, where it is essentially a single-threaded process. It can also benefit from several CPUs, but this only distributes the computing workload. This differs in nature from the problems addressed in cooperative decision-making based on independent goals, on different robots with various capabilities and contexts. In our case, the mission is provided directly by the user as a set of partially ordered tasks.

The robots can communicate with each other by radio, infrared, or other wireless means. Robots can express this invisible communication by visible means, such as voice and gestures (Kanda, T., 2002); this has been applied to a cooperative system with two humanoid robots. However, sensing and actuation in robot domains may be noisy and uncertain, resulting in only partial knowledge about the world, so this approach is not well suited to robots teleoperated in a dangerous environment. A humanoid robot also has unstable balance and is difficult to operate, which makes it more difficult for humanoid robots to carry out a cooperative task (Inoue, Y.; Tohge, T. & Iba, H., 2003).

We want to show that two robots can work together efficiently and spontaneously under supervisory teleoperation control. To transfer an object between two robots, the teleoperators control the robots directly and intuitively, relying on human-robot interaction. In this work an effective cooperation method for humanoid robots is proposed. We have developed a cooperative robot system in which two teleoperated humanoid robots communicate with each other for task execution. Cooperative control is applied remotely to the arms and hands of the robot while it carries and transfers the object, and the desired spacing is maintained by the leader-follower method. The use of communication to speed up multi-robot cooperation has been explored because of the noise, error and uncertainty in the sensors and effectors. We describe a simple communication protocol that compensates for the robots' incomplete and inaccurate information about the environment while also reducing interference between the teleoperated robots. Our robot system is stable, so cooperative tasks can be performed easily.

The paper is organized as follows. Section 2 presents an overview of the teleoperation system for BHR-02. Section 3 describes the proposed teleoperation system for cooperative object transfer. Section 4 presents some simulations. Conclusions are given in Section 5.

2. The Overview of Teleoperation System for BHR-02

The humanoid robot BHR-02 consists of a head, two arms and two legs, and has 32 DOF (degrees of freedom) in total. Its height is 160 cm and its weight is 63 kg, as shown in Fig. 1.

Fig. 1. BHR-02

BHR-02 has stereo cameras, a stereo microphone and speakers in the head; torque/force sensors at the wrists, feet and ankles; and acceleration sensors and gyro sensors in the trunk. Two computers are built into the robot body: one for motion control, and the other for information processing (such as image processing and object feature identification) and for exchanging data with the remote cockpit. The two computers are connected through a shared mass memory called memolink, a high-speed communication device that requires no handshaking when exchanging data. BHR-02 either receives instructions from the remote cockpit via wireless LAN, or acts independently according to its vision or other external information it perceives, for example when the robot head tracks a moving object in its view. In the first step, the information-processing computer processes this information and writes the results into memolink. In the second step, the motion control computer reads the data from memolink, then calculates and generates the motion trajectory values used to control the corresponding DC motors. The control system of BHR-02 is a real-time position control system based on the RTLinux operating system. BHR-02 can currently perform walking, squatting, wheeling, shadowboxing and other motions. Research on bipedal dynamic walking, 3D vision, motion planning, teleoperation and other subprojects has been carried out (Huang, Q., 2001 & 2002).

2.1. Remote cockpit for humanoid robot

To control BHR-02, a remote cockpit has been developed whose system architecture is a client/server mode based on an 802.11g wireless LAN (Liu, Q.; Huang, Q. & Zhang, W., 2004). Data transfer between the remote cockpit and BHR-02 is bilateral. In one direction, the operator manipulates multiple input devices (keyboard, mouse, master arm and hand); these actions are transformed into instructions by the computer, and the instructions are ultimately transferred to BHR-02 via the wireless LAN. In the other direction, the operator receives feedback information such as data from BHR-02's body sensors and video from the robot vision system.

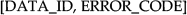

Fig. 2 shows a working sketch of the cockpit. With the help of the remote cockpit, the teleoperator can control BHR-02 to walk to the target position and can manipulate the robot's upper limbs.

Fig. 2. Working sketch map of the remote cockpit

The system provides four kinds of feedback: body sensor data of the robot, feedback from the robot vision system, the real scene of the overall workspace, and the virtual scene monitoring system.

A virtual scene monitoring system based purely on the motion capture system has limitations. It can render the virtual scene, but it can only use data from the motion capture system; other data, such as data from the internal sensors of the robot body, cannot be rendered in the interface. Furthermore, when this system is used, more than 30 markers must be attached to the robot body, which is inconvenient. To overcome these problems we have developed another virtual scene monitoring system based on virtual reality.

As the humanoid has many moving parts such as legs, head, arms and hands, we use multiple input devices to control the robot, including the keyboard, mouse, and master arm and hand; the hand and arm manipulator contains 3 angular displacement sensors whose signals are processed by a microprocessor unit. When controlling different parts of the robot, the operator can conveniently select different operational modes to input instructions. It is important for the operator to watch the overall scene of the workspace. The current system provides the following four kinds of feedback.

A. Body Sensors Data of the Robot

Sensors on the robot detect status data in real time, including joint angle data, force data and orientation data of the robot body. With these data, the operator can tell whether the whole robot is running well. Through the remote cockpit, the data are transferred from the robot side to the operator side for display.

B. The Robot Vision System

CCD cameras are mounted on the robot head and a graphics card is installed in the computer. The cameras capture video of the workspace near the robot, and the video is transmitted to the operator side over the wireless LAN for display.

C. Real Scene of the Overall Workspace

When we operate the robot to complete a task, such as walking somewhere or simply performing an action, we need to know the locations of the robot and the target. An overall real scene monitoring system has been designed to obtain this information. The system adopts current video monitoring technology, comprising a camera system (vidicon, camera lens, controlling platform), a transmission system, a controlling system and a display system.

To obtain general video information over the valid area, we install two cameras, one on top and the other at the side. Each camera is equipped with an adjustable lens and is placed on a platform whose orientation can be adjusted by the operator. The operator can use the joystick or keyboard to adjust the two platform orientations and the two camera lenses to obtain video of the whole workspace (Fig. 3).

Fig. 3. Composition map of the overall real scene system

D. Virtual Scene Monitoring System

The virtual scene monitoring system based on virtual reality is used to obtain vision and location information. The system is provided with data from at least three markers placed on the robot, from which the position and attitude of the whole robot are calculated. Real-time coordinates of the markers are sent from the motion capture system to the teleoperation platform. While the robot is working, the motion capture system can provide more than 60 frames of marker position data. A virtual robot interface is built to render the data of BHR-02 and can render multiple real-time feedback data from the robot (Fig. 4). In the data-fusion module an algorithm is adopted to determine the position and attitude of the robot body (Zhang, L. & Huang, Q., 2006).

Fig. 4. Virtual model BHR-02

With the motion capture system we can capture the motion information of the robot and its target. The system is composed of infrared cameras, infrared-reflecting markers and a data processing system. First we fix markers on the robot and start the capture system; we then obtain the three-dimensional data of the markers, and finally the motion information of the robot.

In the graphic workstation (Fig. 4), the graphic models of the robot are made with three-dimensional software (Zhang, L. & Huang, Q., 2006), and the work is enhanced by reconstructing 3D shape models, detecting an object, measuring its position and tracking it using virtual-reality-based teleoperation control (Keerio, M., U.; Huang, Q. & Gao, J., 2007). The motion information can be used to drive the models to simulate the motion of the real robot. As the three-dimensional marker data express the locations of the robot parts (hand, wrist etc.), they can be used for accurate positioning operations. While the robot is executing an order, the sensors detect the joint angle values in every control cycle, and the teleoperation platform sends the joint angle data back to the operator in real time. All these data represent the motion status of the robot in real time. By rendering them, the operator can monitor the robot's motion state.

2.2. Balance for humanoid motion

The zero moment point (ZMP) criterion is one of the standard methods for dynamic balance and has been described in numerous articles. Implementing this method requires knowledge of all dynamic forces on the robot's body plus all torques between the robot's foot and ankle. These data can be determined using accelerometers and gyroscopes on the robot's body plus force/torque sensors in the robot's feet.

With all contact forces and all dynamic forces on the robot known, it is possible to calculate the ZMP, which is the dynamic equivalent of the ground projection of the center of mass. If the ZMP lies within the support area of the robot's feet on the ground, the robot is said to be in dynamic balance.
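The balance test described above can be illustrated with a minimal sketch. It assumes a simplified model that is not taken from the paper: the robot is a set of point masses on flat ground, the angular momentum of individual links is neglected, and the support area is an axis-aligned rectangle. The names `PointMass`, `zmp` and `in_support_area` are hypothetical.

```python
from dataclasses import dataclass

G = 9.81  # gravitational acceleration, m/s^2


@dataclass
class PointMass:
    """One lumped mass of a simplified robot model (hypothetical structure)."""
    m: float          # mass, kg
    x: float          # horizontal position, m
    y: float          # horizontal position, m
    z: float          # height above the ground, m
    ax: float = 0.0   # accelerations, m/s^2
    ay: float = 0.0
    az: float = 0.0


def zmp(masses):
    """ZMP of point masses on flat ground, neglecting link angular momentum."""
    denom = sum(p.m * (p.az + G) for p in masses)
    if denom <= 0.0:
        raise ValueError("no net ground reaction force; ZMP is undefined")
    x = sum(p.m * ((p.az + G) * p.x - p.ax * p.z) for p in masses) / denom
    y = sum(p.m * ((p.az + G) * p.y - p.ay * p.z) for p in masses) / denom
    return x, y


def in_support_area(point, x_min, x_max, y_min, y_max):
    """True if the point lies inside a rectangular support area (assumed shape)."""
    x, y = point
    return x_min <= x <= x_max and y_min <= y <= y_max
```

For a motionless robot the ZMP computed this way coincides with the ground projection of the center of mass; horizontal acceleration of a mass at height z shifts the ZMP in the opposite direction.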

Most researchers plan the trajectory by first planning the ZMP trajectory and then computing the foot and waist trajectories that satisfy it. With that method, however, not every desired ZMP trajectory can be achieved with enough stability margin to keep the humanoid from tipping over. We instead first plan the foot trajectory off-line and then the waist trajectory, using constrained cubic spline interpolation to minimize overshoot, to achieve a smooth trajectory with minimum acceleration, and to ensure that the humanoid robot is dynamically stable. Overshoot arises when the interpolating function is a higher-order polynomial, which is prone to oscillation and overshoot at intermediate points. The minimum acceleration is achieved because the interpolation is smooth and has minimum overshoot.
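The paper does not give its spline formulation, so the following is only a sketch of one common form of constrained cubic spline, stated here as an assumption: the slope at each interior knot is the harmonic mean of the two adjacent secant slopes, or zero where they differ in sign or one is zero, and each segment is a cubic Hermite polynomial. The function names are hypothetical.

```python
import bisect


def constrained_slopes(xs, ys):
    """Knot slopes: harmonic mean of adjacent secants, zero at local extrema."""
    n = len(xs)
    if n < 2 or len(ys) != n:
        raise ValueError("need at least two knots and matching lengths")
    secants = [(ys[i + 1] - ys[i]) / (xs[i + 1] - xs[i]) for i in range(n - 1)]
    if n == 2:
        return [secants[0], secants[0]]
    d = [0.0] * n
    for i in range(1, n - 1):
        left, right = secants[i - 1], secants[i]
        if left * right > 0.0:  # same sign and both non-zero
            d[i] = 2.0 / (1.0 / left + 1.0 / right)
    # End slopes chosen from the neighbouring secant and interior slope.
    d[0] = 1.5 * secants[0] - 0.5 * d[1]
    d[-1] = 1.5 * secants[-1] - 0.5 * d[-2]
    return d


def evaluate(xs, ys, d, x):
    """Evaluate the piecewise cubic Hermite interpolant at x."""
    if not xs[0] <= x <= xs[-1]:
        raise ValueError("x is outside the knot range")
    i = min(bisect.bisect_right(xs, x) - 1, len(xs) - 2)
    h = xs[i + 1] - xs[i]
    t = (x - xs[i]) / h
    h00 = 2 * t**3 - 3 * t**2 + 1
    h10 = t**3 - 2 * t**2 + t
    h01 = -2 * t**3 + 3 * t**2
    h11 = t**3 - t**2
    return h00 * ys[i] + h10 * h * d[i] + h01 * ys[i + 1] + h11 * h * d[i + 1]
```

With step-like knot data such as heights 0, 0, 1, 1, this interpolant stays between the knot values on every segment, which is the overshoot-free behaviour the text attributes to the constrained spline.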

Given the hip and both feet trajectories, all leg joint trajectories of the humanoid robot are determined by the kinematic constraints. Thus the motion pattern is obtained uniquely from the trajectories of the hip and the two feet. The lateral motion of the hip and both feet can be derived in the same way as the sagittal motion (Li, Z.; Huang, Q. & Li, K., 2004). The off-line, pre-computed trajectory is simulated in the DADS software (Haug, E., 1989). DADS (Dynamic Analysis and Design System) performs design verification through comprehensive simulation and animation of mechanisms and mechanical systems. Virtual prototyping is achieved through extensive modeling capabilities, including intermittent component contact, controls and hydraulic systems. DADS performs dynamic, kinematic, static, inverse dynamic and quasi-static analyses to provide position, velocity, load and acceleration results. System behavior is presented through plots, tables and photo-realistic animation. The modular structure of DADS allows additional capabilities to be added, and DADS can solve problems associated with large nonlinear displacements of parts connected by joints and force elements.

2.3. Operational modes

When operating the humanoid robot, the operator selects different operational modes to control the robot for different kinds of motion.

A. Legs Motion

In the BHR-02 system, the basic walking trajectory data are obtained off-line and stored in the control computer; the control program then selects data according to the instruction and controls the robot to walk in different styles. The operator inputs the walking instruction to the robot by keyboard. The robot control computer selects the data according to the parameters in the instruction, such as the walking type, speed, step and number.

B. Head Motion

The viewpoint of the video from the robot vision system changes as the robot head moves. The head of BHR-02 has 2 DOF. The operator controls the head directly in joint space. Four keys on the keyboard are used as direction keys; pressing one of them moves the robot head by a specified angle in the specified direction.

C. Hand and arm motion

The hand of BHR-02 can execute two orders: loosen and hold tightly, which suits it to grasping objects. To control the robot hand remotely, two graphic symbols or text numbers are provided: one for the open order and the other for the close order. The operator clicks one of the two symbols with the mouse to generate the instruction.

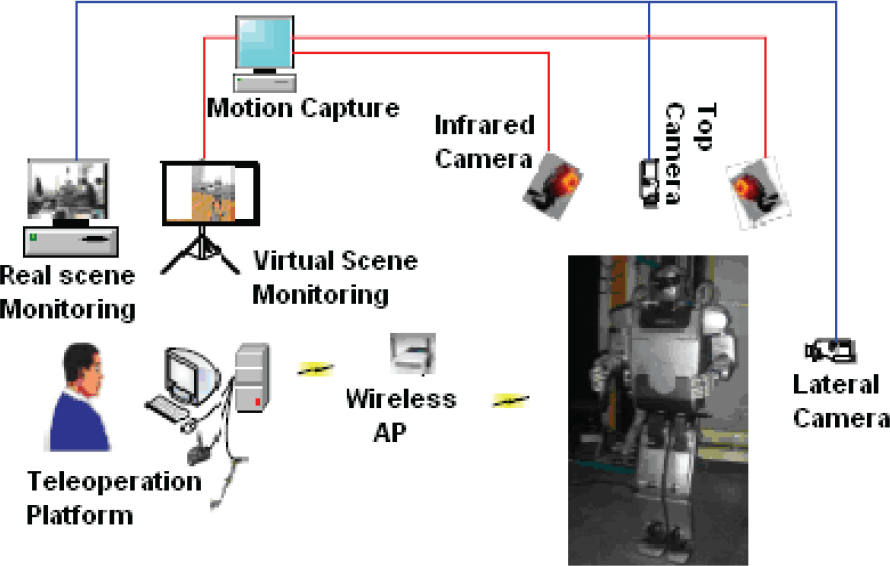

A master arm has been designed whose mechanism is similar to that of the human arm and the humanoid robot arm. The mechanism of the master arm is based on ergonomic principles, the characteristics of the humanoid robot arm and its manipulation (Liu, Q.; Huang, Q. & Zhang, W., 2004). As shown in Fig. 5, the operator uses the master arm to manipulate the humanoid arm in its workspace. The operator grips the master arm, watches the information from the workspace (robot visual information, location information of the subject etc.) and moves the master arm, whose motion the robot arm reproduces. The angle data are detected by the sensors on the master arm and communicated to the teleoperation system through a USB interface.

Fig. 5. Working map of operation with master arm

Although the mechanism of the master arm approximates that of the human arm, the size of each part differs from the corresponding part of the robot, so the measured joint angles cannot be applied directly to the corresponding robot joints. On the operator side, we calculate the position and orientation of the centre of the palm by forward kinematics. On the humanoid side, the motor motion values of the shoulder, elbow and wrist of BHR-02 are calculated from the master palm data by inverse kinematics.
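The mapping from master joint angles to robot joint angles can be sketched for a planar two-link arm. This is a deliberately reduced illustration, not the kinematics of BHR-02: the real arm has more joints, the link lengths in any example call are made up, and scaling the palm position by the ratio of total arm lengths is an assumption introduced here to reconcile the different sizes.

```python
import math


def forward_kinematics(q1, q2, l1, l2):
    """Palm position of a planar two-link arm from its joint angles (radians)."""
    x = l1 * math.cos(q1) + l2 * math.cos(q1 + q2)
    y = l1 * math.sin(q1) + l2 * math.sin(q1 + q2)
    return x, y


def inverse_kinematics(x, y, l1, l2, elbow_up=False):
    """Joint angles of a planar two-link arm that place the palm at (x, y)."""
    c2 = (x * x + y * y - l1 * l1 - l2 * l2) / (2.0 * l1 * l2)
    if abs(c2) > 1.0 + 1e-9:
        raise ValueError("target is outside the reachable workspace")
    c2 = max(-1.0, min(1.0, c2))  # absorb rounding at full extension
    s2 = math.sqrt(1.0 - c2 * c2)
    if elbow_up:
        s2 = -s2
    q2 = math.atan2(s2, c2)
    q1 = math.atan2(y, x) - math.atan2(l2 * s2, l1 + l2 * c2)
    return q1, q2


def map_master_to_robot(master_q, master_links, robot_links):
    """Master joint angles -> palm position -> robot joint angles.

    The palm position is scaled by the ratio of total arm lengths
    (an assumption, not stated in the paper).
    """
    mx, my = forward_kinematics(master_q[0], master_q[1], *master_links)
    scale = sum(robot_links) / sum(master_links)
    return inverse_kinematics(mx * scale, my * scale, *robot_links)
```

When the master and robot link lengths are identical, the mapping returns the master joint angles unchanged (for the elbow configuration selected by `elbow_up`); when they differ, the joint angles differ but the scaled palm position is reproduced.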

Besides the master arm, the operator can use the keyboard to input joint angle data in two ways:

input the joint angle data directly;

input the position and orientation of the centre of the palm, from which the joint angles are calculated by inverse kinematics.

The operator can use the master arm, keyboard or mouse to control the different parts of the robot in different modes. Through the remote system we can control BHR-02 to walk from the initial position to the target point, move its head left/right and up/down to obtain a helpful view, and manipulate it to grasp.

3. Teleoperation System for cooperative object transferring

The target task we have chosen is cooperative object transfer, in which two humanoids must cooperate with each other to carry and pass an object. In this paper, we consider the walking direction and the correction of position differences to ensure a cooperative task by humanoid robots using leader-follower control.

The overall system has two teleoperation platforms and a common remote site where the two humanoid robots cooperate. A client/server mode is adopted: the client program is installed on the manipulation platforms, and the server program on the robot or remote site. That is, the two platforms send their respective commands to the remote site, where the cooperation between the two robots is executed in the remote simulator station.
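The routing of commands from two platforms to one remote site can be sketched in-process, without any network code. Everything about the message layout is an assumption made for illustration: the JSON encoding, the field names `platform`, `robot` and `text`, and the class `RemoteSite` are hypothetical and are not the system's actual protocol.

```python
import json
from collections import deque

ROBOT_IDS = ("BHR-02L", "BHR-02F")  # robot names used in this paper


def encode_command(platform, robot_id, text):
    """Client side: pack one text command into a byte packet (assumed format)."""
    message = {"platform": platform, "robot": robot_id, "text": text}
    return json.dumps(message).encode("utf-8")


class RemoteSite:
    """Server side: keeps one FIFO command queue per robot."""

    def __init__(self):
        self.queues = {robot_id: deque() for robot_id in ROBOT_IDS}

    def receive(self, packet):
        """Decode a packet and append its command to the addressed robot's queue."""
        message = json.loads(packet.decode("utf-8"))
        robot_id = message["robot"]
        if robot_id not in self.queues:
            raise KeyError(f"unknown robot: {robot_id}")
        self.queues[robot_id].append(message["text"])

    def next_command(self, robot_id):
        """Return the oldest pending command for a robot, or None if there is none."""
        queue = self.queues[robot_id]
        return queue.popleft() if queue else None
```

Keeping a separate queue per robot means commands from the two platforms cannot be delivered to the wrong robot, whatever order the packets arrive in.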

The operators can use the master arm, keyboard/joystick or mouse to remotely control the different parts of each robot in different modes. In this teleoperation system, the operators obtain sensor information from the sensors on the robot body, video from the robot vision system and the real scene monitoring system, and the virtual scene from the virtual scene monitoring system. Our laboratory has developed more than two BHR-02 humanoid robots and one BHR-01, but BHR-01 is currently out of order. As shown in Fig. 6, the two BHR-02 robots used here have unique configurations; we have named them BHR-02L and BHR-02F, and both are suited to application tasks such as cooperative work.

Fig. 6. Cooperative Humanoid Robots Teleoperation System

3.1. Leader-follower type control

Control can be achieved using leader-follower type control (Ota, J., 1996 & Kosuge, K., 1996). Leader-follower control is often used for cooperative movement, and it is essential that the follower robot acquires sufficient information about the motion of the leader robot. This information is usually obtained from force/torque sensors or wireless communication. Force/torque sensors are used to measure force and torque in the foot and hip of the robot. The waist trajectory is calculated using constrained cubic spline interpolation to ensure dynamic stability, and the height of the waist is lowered to keep the hip and knee angles within their limits. Simulations of dynamic stability are shown in Section 4. Leader-follower control is used to control the humanoids' motion for the desired direction and spacing, through the following variables:

$\eta_l$ – Distance of the leader from a reference point

$\eta_f$ – Distance of the follower from a reference point

$\eta_d$ – Desired distance to be maintained between the robots

$\eta_e$ – Error in the desired distance to be maintained

$$\eta_e = \eta_l - \eta_f$$

OR

where $\eta_d$ is the desired spacing that is to be strictly maintained between the two robots, $\eta_e$ is the amount of error or drift from this desired spacing, and $\mu_f$ is the input of the robots for satisfying the required performance criteria. The functions $C_f(\cdot,\cdot)$ and $M_f(\cdot)$ are called the robot dynamics. To compute the required actuator specifications such as torque and velocity, it is necessary to solve the equations of the kinematics and dynamics of the robot mechanisms. To this end, a dynamic simulator has been developed (Huang, Q.; Nakamura, Y. & Arai, H., 2000) based on the Dynamic Analysis and Design System (DADS). The dynamic robot motion is simulated in DADS using the off-line, pre-computed trajectory to ensure dynamic balance and proper execution of the trajectory on the real humanoid robot, and to analyze factors such as the necessary joint torque and joint speed. To keep the humanoid robot from tipping over, sensory reflex control is applied on-line, ensuring high stability and reliability of walking (Huang, Q. & Nakamura, Y., 2005). Furthermore, for example, [DATA_ID] can distinguish robots, and the error in the desired distance can be overcome as in equation (2).

3.2. Object passing method

Torch passing between two humanoid robots is taken as the task for performing cooperative work. We employ a Windows programming collaborator with a color touch-screen display, integrated software functions, and a track-based positioner integrated with the robot controller for the interchange of objects between robots when signaled by the operators. These elements help to:

reduce arm-to-arm interference when two robots are used in one place;

operate two robots while reducing the risk of collision.

A programmable unit performs information transmission, and a sensor can track on both sides of a joint. A DSP is used for signal processing tasks; one DSP with a serial link to the client computer can be used to control two robot manipulators. The use of PC-based vision guidance, robotic vision guidance and inspection by teleoperation makes our system safer and makes real-time problems easier to handle. In our system, a selective color option can be assigned to the robot, which uses a color matching tool; color processing provides acquisition and calibration of color images. Moreover, all the functions are integrated homogeneously in the system, making it observable and controllable at the decisional level. The actions planned by the decisional level are managed by the functional level. It is important to note that the trajectory must remain in a bounded area specified by the supervisor so as not to interfere with the other robot. The control system of BHR-02 is a real-time position control system based on the RTLinux operating system.

The robot can find a target object by vision. An object manipulation approach is used in which the object location is detected through stereo vision, and pre-reaching and alignment of the hand with the object are based on image features. First the object is located, and then a position-based tracking system tracks the object in the field of view; the position of the target object is obtained from the stereo geometry. When the end-effector is in the vicinity of the target object, a mark placed on the end-effector is used to detect its pose. Simple image processing is performed through stereo vision, in which visual cues such as color, disparity and shape parameters are taken as object features; by matching these visual cues, the target object can be located and tracked successfully. A position-based servo algorithm for aligning and positioning the manipulator relative to the object, and a model-based grasping algorithm using the detected object pose, are used (Rajpar, A., H.; Huang, Q. & Pang, Y., 2006). For carrying an object in real time, graphic symbols or text numbers are used as described in Section 2.3.C.

The commands for the task from the two platforms are managed by the object passing method using teleoperation.

We have developed a robot system in which two humanoid robots communicate with each other according to the following sequence.
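Obtaining an object position from stereo geometry can be sketched for the simplest case. The assumptions, none of which come from the paper, are a rectified pinhole camera pair with parallel optical axes, a common focal length in pixels, and a known baseline; the function name and every parameter value in an example call are hypothetical.

```python
def triangulate(u_left, u_right, v, focal_px, baseline_m, cx, cy):
    """3D position in the left-camera frame from one matched pixel pair.

    u_left, u_right: horizontal pixel coordinates of the same point
    v: vertical pixel coordinate (identical in both rectified images)
    cx, cy: principal point in pixels
    """
    disparity = u_left - u_right
    if disparity <= 0:
        raise ValueError("disparity must be positive for a point in front of the cameras")
    z = focal_px * baseline_m / disparity   # depth along the optical axis
    x = (u_left - cx) * z / focal_px        # lateral offset
    y = (v - cy) * z / focal_px             # vertical offset
    return x, y, z
```

Depth is inversely proportional to disparity, so a one-pixel matching error matters far more for distant objects than for an object within arm's reach.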

Find and approach a colleague robot.

Start to send/receive data.

The robot controllers calculate and report the current torch position. At the same time, the two robots express communicative behaviors while the torch is being passed.

A. Design of scheme:

The design of the scheme includes:

Remote cockpit, which comprises a wireless communication unit, hand and arm manipulator, image capture unit of the working space, etc.

Control of the motion of the two humanoid robots accomplishing torch passing, which supervises and helps the humanoid robots decide how and where to go and when and which robot is to work, mainly via text commands, and manipulates the robot hands and arms.

Bidirectional transmission of information, data streams and control commands.

Input information, which includes text commands, audio commands, arm and hand motion data, etc.

Feedback information, which includes images of the scene and location of the environment, motion data of the joints in the robot body, etc.

B. The sequence analyses of passing torch action:

The operators manipulate the two robots to pass the torch. Considering the different features of the course of the action, we divide the operative process into four phases.

1. Moving and approaching phase:

The movement and approach of the robots from a distant location to a given area is supervised and controlled by the teleoperators, so that the two humanoid robots can work cooperatively to pass an object, say a torch, from one robot to the other. Communication takes place between the client computers and the remote computers. Audio commands can be generated and transmitted to the relevant robot, which executes each command after interpreting it. Each operator can also see whether the robot executes his command, as described in Sections 2.1.C and 2.1.D. The teleoperators issue the audio command “Start move” to the relevant robot. The robots move and follow their trajectories (in our case one robot follows a 3-step and the other a 6-step trajectory) to come face to face at the desired distance of 3 steps.

2. Before torch passing, adjusting poses phase:

Before torch passing, the two humanoid bodies must be kept at the desired distance to ensure reliable torch passing. To meet this requirement we use leader-follower control.

3. Handing over and taking over phase:

Handing over and taking over the torch is done after the two humanoid robots have been brought to the desired distance; the operator manipulates the humanoid arm in its workspace using the master arm as described in Section 2.3.C. The operator at platform 1 sends the text command “Get torch” to robot BHR-02L, and the robot starts to search for the object. Based on the object position detected by stereo vision, the robot automatically judges the current position of the object and grasps it when the text command “Grasp torch” is sent. After grasping, the operator sends another text command, “Pass torch”, to lift and hand over the torch to the other robot using the pose information acquired by stereo vision. The taking over by the other robot, BHR-02F, is then done using text commands sent by the teleoperator at the other platform, as shown in Fig. 6.

4. Turning over and departing away phase:

The operators supervise and control the turning over and departure of the robots. The robots release the object after receiving the text command “Loose torch”, and both robots finish their work after receiving text commands from their corresponding platforms.

Using this technique, the cooperation between the two robots can be executed easily in the remote simulator station, so a task can be completed without wait-to-move.
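The four phases and their text commands can be sketched as a strict sequencer that rejects out-of-order commands. The command strings are those quoted in the text; the class, the `Phase` names and the internal `SPACING_OK` event, which stands in for the leader-follower adjustment finishing, are hypothetical and are not part of the described software.

```python
from enum import Enum


class Phase(Enum):
    IDLE = 0
    APPROACH = 1   # moving and approaching
    ADJUST = 2     # adjusting poses before torch passing
    HANDOVER = 3   # handing over and taking over
    DEPART = 4     # turning over and departing


SPACING_OK = "<spacing adjusted>"  # hypothetical internal event, not a text command

# Expected events in order, with the phase each one leads to.
STEPS = [
    ("Start move", Phase.APPROACH),
    (SPACING_OK, Phase.ADJUST),
    ("Get torch", Phase.HANDOVER),
    ("Grasp torch", Phase.HANDOVER),
    ("Pass torch", Phase.HANDOVER),
    ("Loose torch", Phase.DEPART),
]


class TorchPassSequencer:
    """Accepts events only in the order listed in STEPS."""

    def __init__(self):
        self.index = 0
        self.phase = Phase.IDLE

    def handle(self, event):
        """Advance on the expected event; raise ValueError otherwise."""
        if self.index >= len(STEPS):
            raise ValueError("sequence already finished")
        expected, next_phase = STEPS[self.index]
        if event != expected:
            raise ValueError(f"expected {expected!r}, got {event!r}")
        self.index += 1
        self.phase = next_phase
        return self.phase
```

A strict ordering like this is only one possible design; it trades flexibility for the guarantee that, for example, a release command cannot be acted on before the torch has been grasped.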

4. Simulations

To simulate the walking trajectory we used a simulator named Dynamic Analysis and Design System (DADS). The simulation results for BHR-02 are shown in Fig. 7, Fig. 8, Fig. 9 and Fig. 10.

Fig. 7. ZMP with stability region in x direction

Fig. 8. ZMP in y direction with upper and lower limits

Fig. 9. Stability margin in x and y direction

Fig. 10. Variation of ZMP in x and y direction

Fig. 7 and Fig. 8 show the stability regions in the x and y directions. The figures show that the planned walking trajectory is dynamically stable and that the ZMP is almost at the center of the stable region. The slight deviation of the ZMP from the center is due to the shifting of the CoM during the double support phase to balance the humanoid. The maximum and minimum values of the ZMP are the limiting values of the stability region at every time instant. Fig. 9 shows the stability margin in the x and y directions, and Fig. 10 shows the variation of the ZMP. The y-ZMP varies between −0.01 m and 0.05 m, which is more than the variation of the x-ZMP, whose limits are −0.003 m and 0.025 m. The reason is that the body moves more in the forward direction than sideways.
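The quantities discussed here can be made concrete with a short sketch. The stability margin is taken, as an assumption, to be the distance from the ZMP to the nearer limit of the stability region along one axis; the paper does not define it explicitly. The peak-to-peak values use the ZMP limits quoted in the text, and the function names are hypothetical.

```python
def stability_margin(zmp, lower, upper):
    """Distance from the ZMP to the nearer region limit (negative if outside)."""
    return min(zmp - lower, upper - zmp)


def margins(zmp_samples, lower_limits, upper_limits):
    """Per-sample margins for time-varying limits of the stability region."""
    return [stability_margin(z, lo, hi)
            for z, lo, hi in zip(zmp_samples, lower_limits, upper_limits)]


def peak_to_peak(minimum, maximum):
    """Total variation between the reported extreme values."""
    return maximum - minimum


# ZMP limits quoted in the text, in metres.
Y_ZMP_RANGE = peak_to_peak(-0.01, 0.05)    # variation of y-ZMP
X_ZMP_RANGE = peak_to_peak(-0.003, 0.025)  # variation of x-ZMP
```

With the quoted limits, the y-ZMP varies over about 0.06 m and the x-ZMP over about 0.028 m, so the y variation is roughly twice the x variation.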

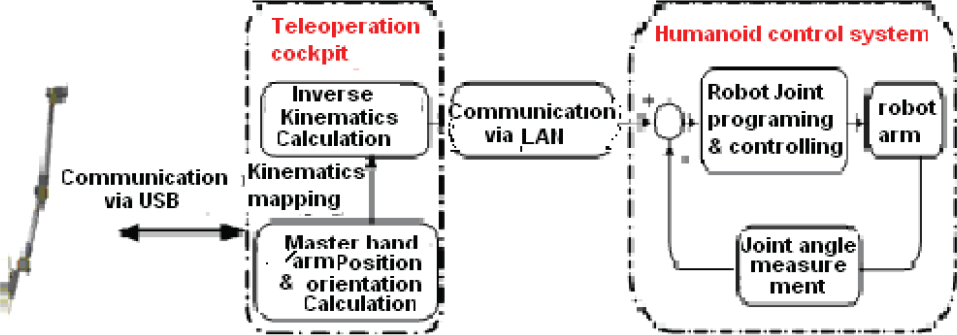

In this work, the system is analysed with one robot following a 3-step trajectory and the other a 6-step trajectory from the reference point, so as to obtain the desired spacing, i.e. a distance of 3 steps between them, which ensures reliable torch passing. To keep the humanoid robot from tipping over, sensory reflex control is applied. The waist trajectories for balance are shown in Fig. 11 and Fig. 12.

Fig. 11. BHR following 3 step trajectory

Fig. 12. BHR following 6 step trajectory

The figures show the waist trajectories in the x, y and z directions during forward walking of the humanoid robots. The height of the waist is lowered to keep the hip and knee angles within their limits. The y-waist trajectory depicts the forward movement of the waist, which maintains the dynamic stability of the humanoid by moving the CoG of the body. The graph shows the waist movement during the stance phase (moving forward) and the swing phase (remaining the same) of forward walking. The swing of the waist in the lateral direction, which keeps the robot from tipping over sideways, is also clear from the figures. The waist acceleration should be small because the variation in ZMP depends directly on the acceleration. The lateral motion of the waist is small compared with the forward motion, as the figures show. Trajectory execution on the humanoid takes approximately 25 s for the 6-step and approximately 15 s for the 3-step walking pattern.

5. Conclusion

This paper has explored cooperative work by two humanoid robots, in which stable operation is achieved and the robots are kept from falling during walking by using the ZMP criterion. The leader-follower method is used to maintain the desired spacing between the robots to ensure object passing. The system configuration and the management of commands are presented. Objects can be transferred efficiently between the two robots with minimal delay, and the teleoperators can freely decide how to operate so as to finish tasks more easily and precisely. The software for the final goal, such as torch passing between two robots, is modified and improved accordingly. The authors believe that this work is significant because communication robots will play an important role in our daily life. Some simulations were carried out to show the balance of walking and the required spacing between the robots. Future work includes experiments to accomplish real-time tasks and the extension of this work to multiple humanoid robots, where processes will be executed in parallel during experimentation.