Abstract

In this paper, we present a tracking technique that uses a simple visual saliency descriptor. Initially, we define a visual descriptor named local similarity pattern, which mimics the well-known texture operator local binary patterns. The key difference is that it assigns each pixel a code based on its similarity to the neighbouring pixels. We then simplify this descriptor to a local saliency operator which counts the number of similar pixels in a neighbourhood. We name this operator local similarity number (LSN).

We apply the local similarity number operator to measure the amount of saliency in a target patch and to model the target. The proposed tracking algorithm uses a joint saliency-colour histogram to represent the target in a mean-shift tracking framework. We show that the proposed saliency-colour target representation outperforms both the texture-colour representation, in which texture is modelled by local binary patterns, and the colour-only representation.

1. Introduction

Texture analysis has gained considerable popularity in recent years due to its wide range of uses in industry and machine vision, for instance the classification of different materials or the visual inspection of material surfaces. Different operators have therefore been introduced over the decades to enhance texture analysis, e.g., co-occurrence matrices [1] and polarograms [2]. One of the most successful operators in this field is the local binary patterns (LBP).

The local binary patterns operator is widely used in visual inspection, image and video retrieval, aerial image analysis, environment modelling, biomedical image analysis, and biometrics. It has been applied successfully to, for example, face and gender classification [4], paper characterization [5] and wood inspection [6], as well as background subtraction in tracking problems [7].

Different extensions exist to

In this paper, we define a local similarity descriptor that assigns each pixel a binary pattern based on its similarity to the surrounding pixels. This operator is then simplified to measure the amount of salience for each pixel by counting the number of similar pixels in its surrounding. Each pixel is thus assigned a number that represents how different it is from its surrounding. We use this simplified version of the operator to measure the amount of salience in a local window. Based on the salience value, we compute a joint saliency-colour histogram to represent a specific target in a local window. We show that this operator outperforms target representation using joint texture-colour and colour histogram descriptors.

2. Local Similarity Operator

There has been a large body of research on saliency, especially in recent years [11]. Many techniques employ the centre-surround mechanism, which relies on comparing a central region with its surrounding. For instance, Itti et al. [12] computed different feature channels and fused them in a centre-surround approach. Later, [13] studied the characteristics of centre-surround saliency and discussed the biological plausibility of this approach.

Taking advantage of centre-surround differences in computer vision is not limited to saliency computation. Local Binary Patterns (

In the local similarity operator defined here, instead of partitioning pixels into the two aforementioned groups, we partition them into groups of similar and dissimilar pixels. A threshold value defines the amount of similarity: surrounding pixels are considered similar to the centre if they fall within this similarity threshold.

Let us start by presenting the grey-scale similarity operator by defining texture

where the grey value g_c corresponds to the centre of the neighbourhood, and g_i (i = 0, …, P−1) correspond to the grey values of P neighbouring pixels lying on a circle of radius R.

2.1. Local Similarity Pattern

At first, the grey value g_c is subtracted from the grey values of the neighbourhood g_i (i = 0, …, P−1):

In case of texture analysis, it is proven in [15] that (2) can be simplified and approximated without loss of useful information using:

Since g_i − g_c is not affected by changes in the mean luminance, (3) is invariant against grey-scale shifts. In fact, it is possible to consider only the sign of the differences [8]. Consequently, we introduce a similarity merit function which is invariant against grey-scale shifts and produces results similar to the sign function:

where

A unique number can be obtained by transforming (4) using a binomial factor 2^i for each f(g_i − g_c, d). The number obtained characterizes the similarity pattern of the local image and is called the “Local Similarity Pattern” (LSP).
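The contrast between the LBP code and the similarity pattern can be illustrated with a minimal Python sketch. It rests on several assumptions that are not confirmed by the text: the merit function is taken to be f(x, d) = 1 when |x| ≤ d and 0 otherwise, the neighbours are read from a plain 3×3 window rather than interpolated on a circle, and the bit ordering and the names `OFFSETS`, `lbp_code` and `lsp_code` are hypothetical.

```python
# Neighbour offsets (row, col) around the centre of a 3x3 patch, clockwise from
# the top-left corner. The ordering, and hence the bit assignment, is an assumption.
OFFSETS = [(-1, -1), (-1, 0), (-1, 1), (0, 1), (1, 1), (1, 0), (1, -1), (0, -1)]

def lbp_code(patch):
    """LBP: bit i is set when neighbour g_i >= centre g_c."""
    gc = patch[1][1]
    return sum(int(patch[1 + dy][1 + dx] >= gc) << i
               for i, (dy, dx) in enumerate(OFFSETS))

def lsp_code(patch, d):
    """LSP: bit i is set when |g_i - g_c| <= d (assumed form of the merit function)."""
    gc = patch[1][1]
    return sum(int(abs(patch[1 + dy][1 + dx] - gc) <= d) << i
               for i, (dy, dx) in enumerate(OFFSETS))

patch = [[10, 12, 50],
         [9, 11, 60],
         [11, 13, 70]]
print(format(lbp_code(patch), "08b"))     # sign-based labels, bit 7 on the left
print(format(lsp_code(patch, 2), "08b"))  # similarity-based labels with d = 2
```

In this example the bright right-hand column is labelled 1 by the sign test but 0 by the similarity test, while the slightly darker neighbours are labelled 0 by the sign test and 1 by the similarity test. Adding a constant to every grey value leaves both codes unchanged.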

The operator is called

Figure 1. Comparison of LBP and LSP generated patterns: (a) is a sample grey patch, (b) shows the LBP generated labels, which produce ‘00011101’, and (c) shows the LSP generated binary labels using

2.2. Local Similarity Number

In the previous section, it was explained how a local similarity pattern can be obtained. The local similarity pattern includes the structural information of the saliency. This structural information is of no help in computing saliency, so we can discard it. This is achieved by modifying (6) and replacing the binomial factor 2^i with 2^0. This reduces (6) to the following:

which is equivalent to summing the binary codes assigned by (5) to the surrounding pixels. Hence, by calculating

The local similarity number shows the pop-out property of a pixel, which defines how different a pixel is from its surrounding neighbourhood. The lower the number, the more salient the central pixel is. Hence, (7) can be used to show the saliency of the central pixel. Figure 2 shows the nine saliency degrees of LSN^d_{8,R}, where (a) is the least salient and (i) is the most salient pattern.
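The counting step can be sketched in a few lines of Python. This is an illustration under assumptions rather than the authors' implementation: a neighbour is taken to be similar when its grey value lies within d of the centre, the eight neighbours come from a plain 3×3 window (no interpolation on a circle), border pixels are skipped, and the name `lsn_map` is hypothetical.

```python
def lsn_map(gray, d):
    """For each interior pixel, count the 8-neighbours within d grey levels of it.

    gray is a list of rows of grey values; the result has one row and one
    column fewer on each side because border pixels are skipped.
    """
    h, w = len(gray), len(gray[0])
    out = [[0] * (w - 2) for _ in range(h - 2)]
    for y in range(1, h - 1):
        for x in range(1, w - 1):
            gc = gray[y][x]
            out[y - 1][x - 1] = sum(
                abs(gray[y + dy][x + dx] - gc) <= d
                for dy in (-1, 0, 1) for dx in (-1, 0, 1)
                if (dy, dx) != (0, 0)
            )
    return out  # values in 0..8; lower means more salient

# A flat 5x5 image with one bright pixel in the middle.
gray = [[100] * 5 for _ in range(5)]
gray[2][2] = 200
for row in lsn_map(gray, 5):
    print(row)
```

The bright pixel receives the value 0 (no similar neighbour, the most salient case), while each pixel next to it receives 7 because exactly one of its neighbours differs.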

Figure 2. Groups of LSN_{8,1} patterns; each of the 256 patterns is a member of one group. Black circles represent pixels similar to the centre. (a) has only one member, in which all the pixels are similar, and represents a flat area; (b)-(h) have several members, depending on the combination of similar pixels; (i) has only one member and is the most salient pattern, since all the neighbouring pixels are different.

2.3. Colour Extension

The similarity operators

where gRi is the value of the red channel, gGi is the value of the green channel, and gBi is the value of the blue channel.

3. Target Representation

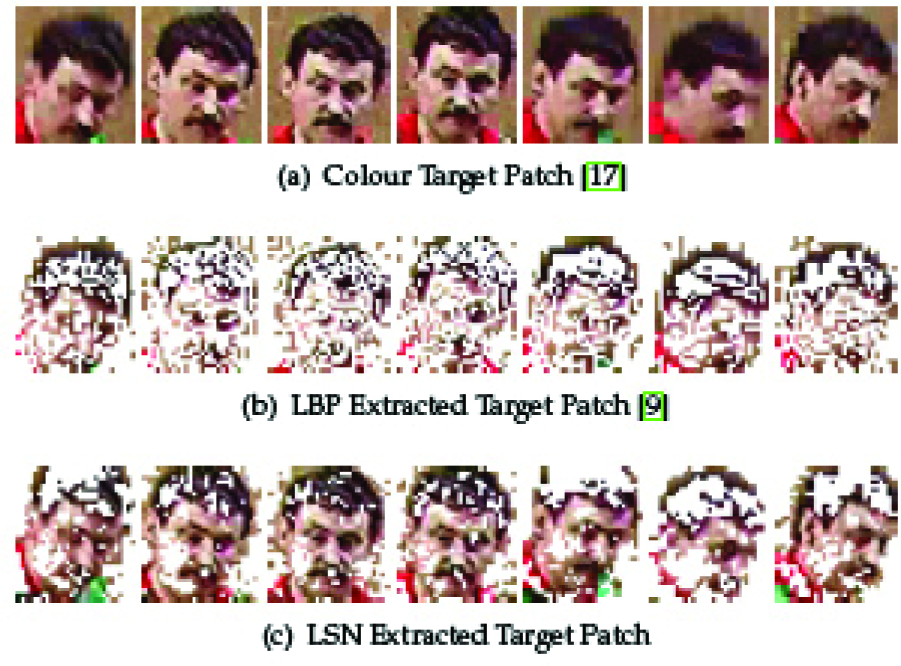

In this section, we explain how to apply LSN and LBP to extract the masks needed for the target representation used in tracking. First, we explain how the saliency operator can be used to represent the target by extracting an LSN mask. Afterwards, we discuss the target representation method of [9], which is based on textural analysis using LBP masks.

3.1. LSN Mask

In the case of

In colour-LSN target representation, the aim is to preserve the unity of the target of interest as well as its edges, lines and corners. Hence, we modify LSN^d_{8,1} to fulfil the required properties as follows:

where

Figure 3. Different patch representations. The LBP-colour joint histogram processes patches mostly on the edges of the object, as can be seen in (b). On the other hand, as (c) depicts, patches of the LSN-colour joint histogram convey more useful information while suppressing background and plain areas.

3.2. LBP Mask

Recently, Ning et al. [9] presented an object tracking method using a joint colour-texture histogram which relies on the local binary pattern. The method utilizes major uniform patterns of

where

and

and a are robustness terms set to 1e−6 in the experiments similar to [9].

Figure 3 shows sample masks extracted using the aforementioned techniques. The masks include key feature points in the target region, obtained using (11) and (10). As seen, the smooth area (i.e., background) in both target patches is eliminated. In comparison to the traditional colour representation (i.e., colour patch), both LSN and LBP effectively extract edge and corner features. The advantage of LSN is that it better preserves the unity of the object of interest and retains more useful information; thus it is expected to model the target more effectively.

4. Tracking

Object tracking is a challenging task with a wide range of machine vision applications, such as automated surveillance, video indexing, human-computer interaction and traffic monitoring. Tracking algorithms are categorized into point tracking, kernel tracking and silhouette tracking [18]. Mean-shift tracking is a kernel-based algorithm, where the kernel describes the shape and appearance of the object to be tracked. The object can be represented using a rectangular or elliptical patch. We applied a colour-LSN histogram for object representation in the mean-shift algorithm. This method is compared with an algorithm [9] which uses a colour-LBP histogram.

4.1. Mean-shift Algorithm

Suppose the normalized target patch is represented by {x_i^*}, i = 1…n. The target model is computed as follows:

where

The candidate model

where

It is proven in [17] that in order to estimate the new position y_1 from the current position y_0 iteratively we can use
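One iteration of the position update can be sketched as follows. This is a minimal Python illustration of the standard kernel-based mean-shift step, not necessarily the exact form used in [17]: it assumes an Epanechnikov kernel profile, for which the update reduces to a weighted mean of the pixel positions with weights sqrt(q_u / p_u), where q is the target histogram, p is the candidate histogram at the current position and u is the histogram bin of each pixel. The function name, the argument layout and the `eps` guard are hypothetical.

```python
import math

def mean_shift_step(positions, bin_index, q, p, eps=1e-12):
    """Return the new window centre as a weighted mean of pixel positions.

    positions: list of (x, y) pixel coordinates inside the candidate window
    bin_index: histogram bin u of each pixel
    q, p:      target and candidate histograms (lists of equal length)
    eps:       guard against empty candidate bins (hypothetical choice)
    """
    weights = [math.sqrt(q[u] / max(p[u], eps)) for u in bin_index]
    total = sum(weights)
    new_x = sum(w * x for w, (x, _) in zip(weights, positions)) / total
    new_y = sum(w * y for w, (_, y) in zip(weights, positions)) / total
    return new_x, new_y

# Two pixels: the first falls in a bin that is under-represented in the
# candidate, so the centre moves towards it.
print(mean_shift_step([(0, 0), (2, 0)], [0, 1], [0.8, 0.2], [0.2, 0.8]))
```

In a tracker this step would be repeated, recomputing the candidate histogram at each new centre, until the displacement falls below a small threshold; the number of repetitions is the iteration count reported in the experiments.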

4.2. Tracking Using a Joint Colour-Texture Histogram

We use RGB channels and

In the case of a colour-LBP, the joint-histogram consists of 8-bin quantized colour RGB channels and 5-bin
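A joint histogram of this kind can be sketched in Python as below. The sketch assumes 8-bit colour values, equal-width quantization into 8 bins per channel, and a per-pixel label (for example a texture or saliency label) that already lies in the range 0 to `feature_bins` − 1. Only pixels selected by the mask are counted, and the kernel weighting used in the target model is left out for brevity. The function name and its parameters are hypothetical.

```python
def joint_histogram(rgb, feature, mask, colour_bins=8, feature_bins=5):
    """Normalised joint histogram over quantised R, G, B and a feature label.

    rgb:     rows of (r, g, b) tuples with values in 0..255
    feature: rows of integer labels in 0..feature_bins-1
    mask:    rows of booleans; only True pixels contribute
    """
    hist = [0.0] * (colour_bins ** 3 * feature_bins)
    count = 0
    for row_rgb, row_f, row_m in zip(rgb, feature, mask):
        for (r, g, b), f, keep in zip(row_rgb, row_f, row_m):
            if not keep:
                continue
            # Map each 8-bit channel to a bin index in 0..colour_bins-1.
            qr, qg, qb = (c * colour_bins // 256 for c in (r, g, b))
            index = ((qr * colour_bins + qg) * colour_bins + qb) * feature_bins + f
            hist[index] += 1.0
            count += 1
    return [v / count for v in hist] if count else hist

rgb = [[(255, 0, 0), (0, 0, 0)],
       [(0, 0, 0), (0, 0, 0)]]
feature = [[0, 1], [1, 4]]
mask = [[True, True], [True, False]]
hist = joint_histogram(rgb, feature, mask)
print(len(hist), sum(hist))
```

With the default settings the histogram has 8 × 8 × 8 × 5 = 2560 bins, matching the colour-LBP configuration described above; a different `feature_bins` value would be needed for other feature channels.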

5. Experimental Results

In this section, experiments are conducted to show the performance of the mean-shift-based tracking algorithms using different target representation methods. Three target representation models are tested. The first one uses an RGB histogram as explained in [17]. The second algorithm [9] utilizes the

The algorithm is implemented using MATLAB 2008a and run on a computer with a 2.4 GHz Intel Core2 Duo P8600 CPU and 4GB RAM. The operating system is Windows Vista SP2.

Quantitative assessments are done using the PETS2001 data set (available at http://ftp.pets.rdg.ac.uk/PETS2001). It consists of five multi-view (two camera) sequences of people and vehicles. The first sequence from the first camera is used in our experiments. The aim of these experiments is tracking people. Tracking starts when the target is completely inside the frame and stops before the target leaves the scene in the final frames. Error is calculated from the centroid deviation using the available ground truth (annotation).
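The error measure can be illustrated with a short Python sketch. It assumes that the centroid deviation is the Euclidean distance, in pixels, between the tracked window centre and the annotated centre in each frame; the function name and the sample coordinates are hypothetical and are not taken from the data set.

```python
import math
import statistics

def centroid_errors(tracked, ground_truth):
    """Per-frame Euclidean distance between tracked and annotated centroids."""
    return [math.hypot(tx - gx, ty - gy)
            for (tx, ty), (gx, gy) in zip(tracked, ground_truth)]

# Hypothetical centroids for three frames.
tracked = [(100, 50), (103, 54), (110, 50)]
ground_truth = [(100, 50), (100, 50), (104, 58)]
errors = centroid_errors(tracked, ground_truth)
print(errors, statistics.mean(errors), statistics.pstdev(errors))
```

The mean and standard deviation of the per-frame errors then summarize how closely a trajectory follows the annotation.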

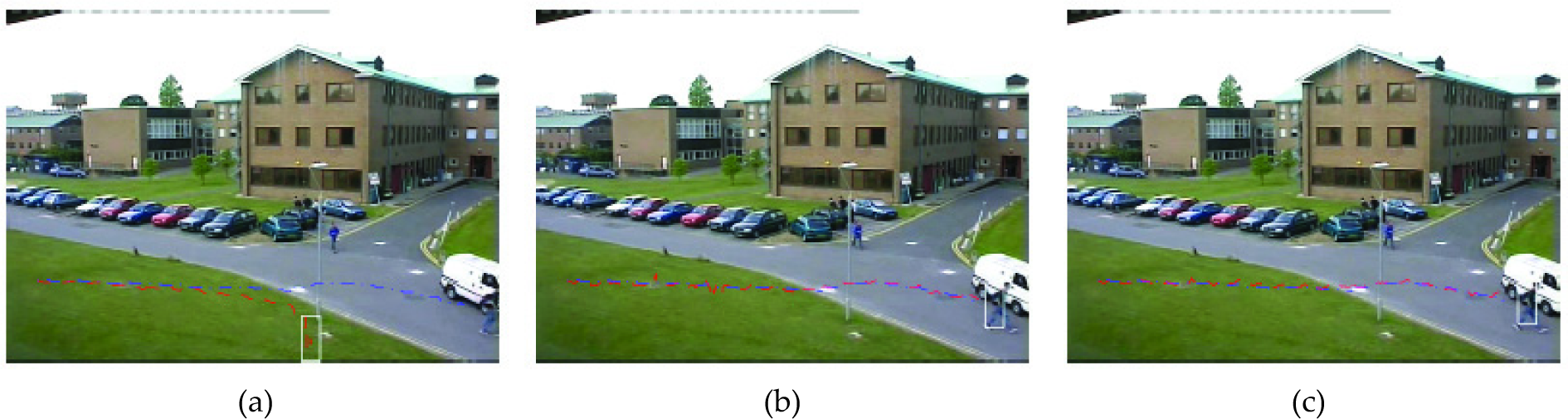

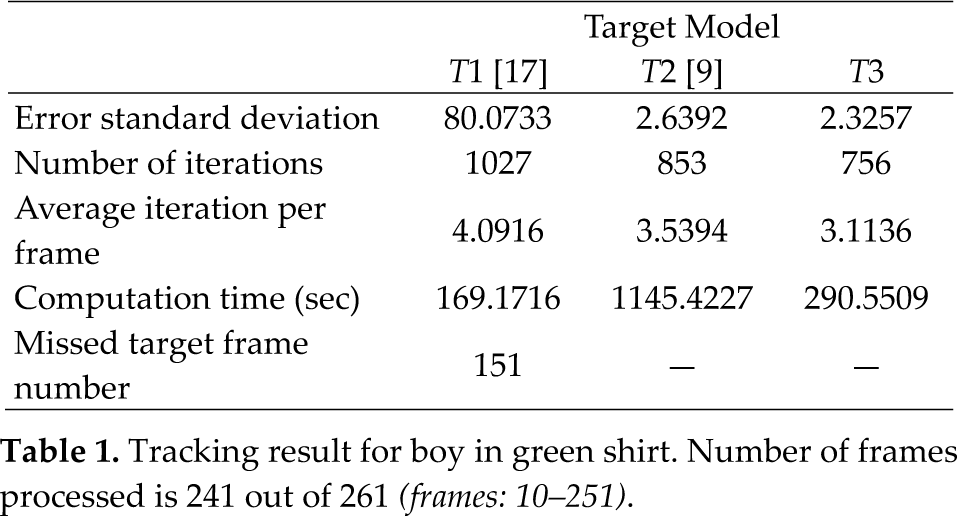

In the first experiment, a walking boy crossing from left to right is tracked. He is wearing a green shirt that is similar in colour to the grass he is walking on. In the middle of the sequence he is occluded by a lamppost and walks on a road that is similar in colour to his trousers. Figure 4 shows the tracking trajectory for each target model. Table 1 summarizes tracking information, including standard deviation of error, number of iterations, average iterations per frame and computation time. Computation time is estimated using tic/toc commands in MATLAB.

Figure 4. Tracking trajectory for the boy in the green shirt. The target video sequence has 261 frames (used frames: 10–251). The blue trajectory is the ground truth. (a) RGB model, misses target on frame 151. (b) RGB-LBP model. (c) RGB-LSN model.

Table 1. Tracking result for the boy in the green shirt. Number of frames processed is 241 out of 261.

The first experiment shows that using

Another factor that is useful for evaluating the efficiency of a mean-shift-based tracking algorithm is the number of iterations. The lower the number of iterations, the faster the convergence. As shown in this experiment,

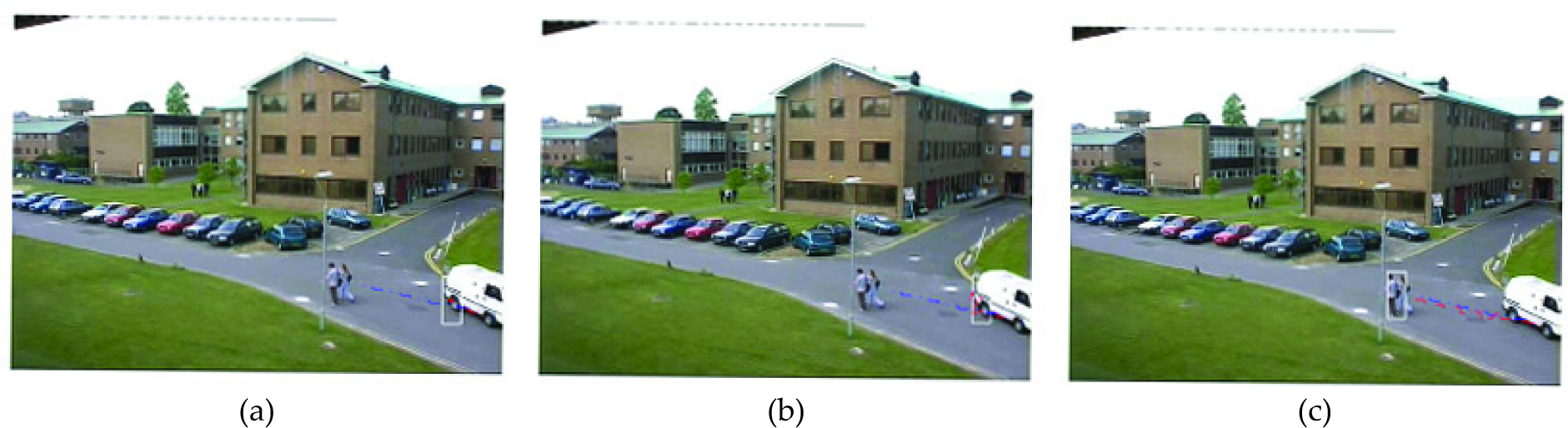

The second experiment follows a woman in a cream shirt. She is accompanied by a man in a white shirt, who walks in front. They walk along the road from right to left. The purpose of this experiment is to test robustness under partial occlusion. The sequence has 513 frames, of which only 90 frames are processed.

Figure 5. Tracking trajectory for the woman in the cream shirt. The target video sequence has 513 frames (used frames: 10–100). The blue trajectory is the ground truth. (a) RGB model, misses target on frame 37. (b) RGB-LBP model, misses target on frame 37. (c) RGB-LSN model.

Table 2. Tracking result for the woman in the cream shirt. Number of frames processed is 90 out of 513 (frames: 10–100).

Considering Table 2, it is inferred that

Iteration number of

In the third experiment, the purpose is to track a woman who enters from the left and walks to the right along the road. The main goal is to test the robustness of the modelling methods against continuous changes of the background. Unfortunately, all three modelling methods fail to follow the target.

Figure 6. Tracking trajectory for the woman in the red shirt. (a) RGB model, misses target on frame 14. (b) RGB-LBP model, misses target on frame 45. (c) RGB-LSN model, misses target on frame 786.

6. Conclusion

In this paper, a visual similarity operator based on LBP was introduced. The operator uses a similarity measure function to produce binary patterns. We then reduced the operator to a simple measure of the amount of saliency. This variation counts the number of pixels similar to the centre, so it was named local similarity number (LSN).

The operator was applied to object tracking in the mean-shift algorithm. The target was modelled by extracting a mask using LSN and computing a joint colour-LSN histogram within that mask. This target representation effectively suppresses the smooth area in the target patch while preserving edges and corners as well as target unity.

The proposed model was compared with the original colour-histogram modelling [17] and colour-LBP modelling [9]. The experiments showed that the new operator outperforms the other two modelling methods on the tested PETS2001 sequences. Moreover, it converges faster than the other models.