Abstract

The traditional mean shift method can neither represent the object accurately nor converge quickly to the correct target position. To address this problem, we propose a novel tracking algorithm that uses a Newton-style descent based on a distribution field (DF) target representation. Compared with the traditional mean shift algorithm, computational efficiency is greatly improved thanks to the sum-of-squared-differences (SSD) form of the histogram distance in distribution fields and the efficient Newton-style search. Our method adds uncertainty information to the target model and ensures more accurate convergence to the true target. Moreover, we use a Kalman filter to predict the target location within the modified mean shift framework, which shortens the convergence time of the algorithm. Experimental results on several challenging video sequences verify that the proposed algorithm is efficient and effective in many complicated scenes.

Introduction

Visual tracking is a challenging research topic in computer vision. The task of a tracker is to generate the trajectories of moving objects in a sequence of images. In the previous literature, most object trackers consist of three key components: an object representation, a similarity measure and a search method. The target is often represented by a histogram that models its appearance.1 A similarity measure between the reference model and the candidate targets is then used to discriminate the object of interest, and a local mode-seeking method, such as mean shift, finds the most similar location in subsequent frames.2 The target location is thus found by optimizing an objective function, ideally reaching its global optimum. A common way to smooth the objective function, and so widen the region from which the optimizer can reach this optimum, is to blur the image. However, blurring destroys image information, which may cause the target to be lost. To address this problem, we use the target descriptor based on distribution fields (DFs) introduced in the literature.3 The DF representation allows the objective function to be smoothed without destroying information about pixel values. We then introduce a Newton-style minimization procedure on distribution fields and show that this Newton-style search is more efficient than mean shift, which is fundamentally a gradient descent method.

The rest of this paper is organized as follows. The next section reviews related work on target representation in tracking. The Distribution fields motion estimation section introduces our tracking framework, i.e. distribution fields tracking based on Newton-style descent. The Experiments section presents extensive experiments comparing our method with state-of-the-art methods. The last section concludes the paper.

Previous work

Mean shift has been one of the most popular hill-climbing methods for many years. It was introduced in the literature1 and has since been adopted to solve various computer vision problems, such as segmentation and object tracking.4 Its virtues are clear: it is easy to implement, responds in real time and tracks robustly. The original algorithm uses histograms as the target representation and the Bhattacharyya coefficient as the similarity measure. An isotropic kernel serves as a spatial mask to smooth a histogram-based appearance similarity function between the model and the target candidate regions. The tracker climbs to a local maximum of this smooth similarity function to estimate the translational offset of the target in each frame. Despite its promising performance, the traditional mean shift method suffers from two main limitations: inaccurate target representation and trapping in local optima. Both can cause the tracker to drift. To alleviate this problem, many authors have recently applied self-learning5–7 during tracking. This approach locates the target and then updates the model with positive and negative samples. The strategy lets the tracker adapt to new appearances and backgrounds, but it breaks down as soon as the target is mislabeled. In the literature,5 co-training classifiers are proposed to alleviate this problem; the resulting tracker demonstrated re-detection capability and scored well on challenging video sequences. Another solution is multiple instance learning (MIL),6 in which training examples are selected from spatially related regions rather than as independent samples. Kalal et al.7 introduced a tracking method that combines adaptive tracking with object detection, with growing and pruning events performed during tracking.
Instead of self-learning, Ning et al.8 recently proposed a superior method based on DF target representation and an MIL classifier. Compared with self-learning, which needs a large sample pool, layered features based on DFs represent the target more effectively without maintaining an ever-growing sample pool. Following this framework, we also use a similar DF representation to make the model more generative. Our method helps disregard outliers during tracking without modeling them explicitly. Meanwhile, we demonstrate a connection between the distribution fields target representation and Newton-style iterative optimization that further improves the tracker's performance.

Distribution fields motion estimation

Target representation scheme

Distribution fields were proposed by Sevilla-Lara and Learned-Miller.3

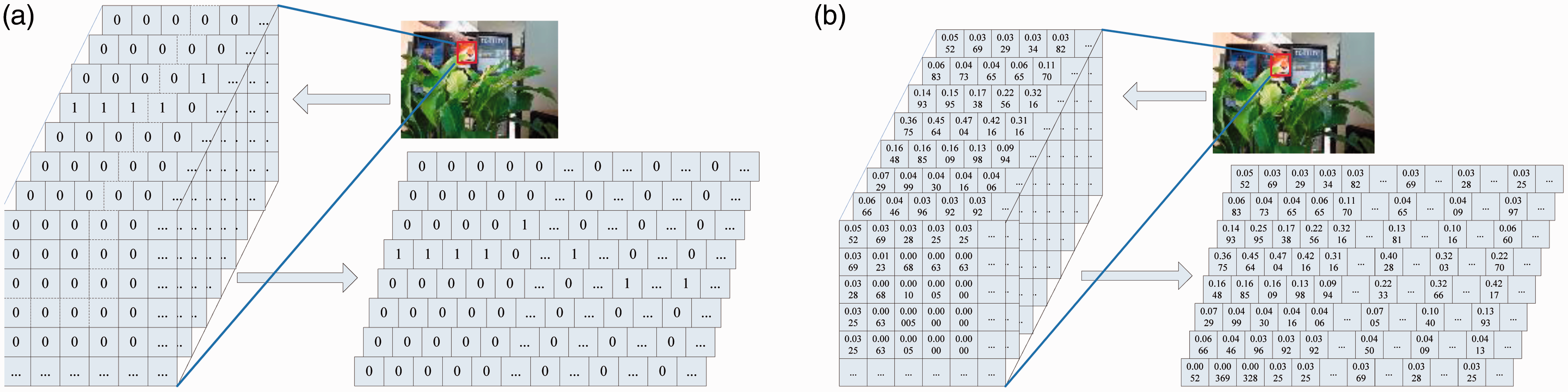

A DF is an array of probability distributions which defines the probability of a pixel of taking each feature value. DF model has matrix with (2 + N) dimensions. The first two dimensions of the matrix are height and width of the image, and the other N dimensions index the feature space. Compared with histogram-based representation, it preserves the spatial structure of the object by having a distribution at each pixel. It can be viewed as a generalization of many previous histogram-based descriptors. As shown in Figure 1, feature bins are 8. Model matrix is shown in the left and reshape matrix in the right. In histogram-based representation, each element in matrix is either 0 or 1, and it is a binary matrix. A DF model is represented as a matrix d of size Comparison of target models. (a) Bright histogram. (b) Distribution fields histogram.
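The binary "exploded" DF described above can be sketched as follows. This is a minimal illustration, assuming a grayscale image stored as a list of rows with values in [0, 256) and equal-width brightness bins; the function name `explode` and its parameters are hypothetical, not the authors' code.

```python
def explode(image, num_bins=16, max_value=256):
    """Explode a grayscale image (list of rows) into a DF d[i][j][k].

    d[i][j][k] is 1.0 when pixel (i, j) falls into brightness bin k and
    0.0 otherwise, so every pixel carries a degenerate distribution.
    """
    h, w = len(image), len(image[0])
    bin_width = max_value / num_bins
    d = [[[0.0] * num_bins for _ in range(w)] for _ in range(h)]
    for i in range(h):
        for j in range(w):
            # Clamp in case a value equals max_value exactly
            k = min(int(image[i][j] // bin_width), num_bins - 1)
            d[i][j][k] = 1.0
    return d


d = explode([[0, 255], [128, 17]])
```

Indexing as d[i][j][k] mirrors the text: the first two dimensions are image rows and columns, the last indexes the feature bins.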

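The 3D DF is first convolved with a 2D Gaussian filter that spreads information in the x and y dimensions. The result is then convolved with a 1D Gaussian filter that spreads information along the feature dimension. That is, each layer k of the smoothed DF is $d_s(k) = d(k) * h_{\sigma_s}$, where $*$ denotes convolution and $h_{\sigma_s}$ is a 2D Gaussian kernel with standard deviation $\sigma_s$.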
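The two-stage smoothing can be sketched in plain Python. This sketch assumes zero padding at the borders (so some mass leaks out near the edges), applies the 2D spatial Gaussian separably (rows, then columns) and uses default filter widths and variances taken from the parameter settings reported in the experiments; `gaussian_kernel`, `convolve_1d` and `smooth_df` are hypothetical names.

```python
import math


def gaussian_kernel(width, variance):
    """Normalized 1D Gaussian taps of odd length `width`."""
    half = width // 2
    taps = [math.exp(-(x * x) / (2.0 * variance)) for x in range(-half, half + 1)]
    total = sum(taps)
    return [t / total for t in taps]


def convolve_1d(values, kernel):
    """Same-size 1D convolution with zero padding (kernel is symmetric)."""
    half, n = len(kernel) // 2, len(values)
    out = []
    for x in range(n):
        acc = 0.0
        for t, kv in enumerate(kernel):
            src = x + t - half
            if 0 <= src < n:
                acc += kv * values[src]
        out.append(acc)
    return out


def smooth_df(d, space_width=9, space_var=1.0, feat_width=5, feat_var=0.625):
    """Smooth a DF d[i][j][k]: spatial Gaussian per layer, then feature Gaussian."""
    h, w, b = len(d), len(d[0]), len(d[0][0])
    gs = gaussian_kernel(space_width, space_var)
    gf = gaussian_kernel(feat_width, feat_var)
    out = [[[0.0] * b for _ in range(w)] for _ in range(h)]
    for k in range(b):
        # 2D spatial Gaussian on layer k, applied separably: rows, then columns
        rows = [convolve_1d([d[i][j][k] for j in range(w)], gs) for i in range(h)]
        for j in range(w):
            col = convolve_1d([rows[i][j] for i in range(h)], gs)
            for i in range(h):
                out[i][j][k] = col[i]
    # 1D Gaussian along the feature dimension at every pixel
    for i in range(h):
        for j in range(w):
            out[i][j] = convolve_1d(out[i][j], gf)
    return out
```

Separable filtering gives the same result as a full 2D Gaussian convolution at a lower cost, which matters when the DF has many layers.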

Target representation is critical to robust and precise tracking. To demonstrate the performance of DFs, we compared the DF descriptor with other descriptors in terms of their basins of attraction. For an objective function and a point p, the basin of attraction is the region around p from which descending the gradient of the objective function leads to p. The size of the patches is

Figure 2. Comparison of target models. (a) Original image. (b) Different objective functions evaluated while translating a patch horizontally by 1–40 pixels in both directions. (c) Objective function of the df-L1 descriptor. (d) Projection map of the df-L1 descriptor. (e) Objective function of the df-matu descriptor. (f) Projection map of the df-matu descriptor.
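The basin-of-attraction experiment can be illustrated on a toy 1D signal. This sketch assumes a discrete, horizontal-only translation and an SSD objective on raw pixel values (not the DF descriptors used in Figure 2); the basin is approximated as the largest interval around the true shift on which the objective decreases monotonically toward it. Both function names are hypothetical.

```python
def shift_objective(signal, start, length, max_shift):
    """SSD between a template at `start` and windows shifted by -max_shift..max_shift."""
    if start - max_shift < 0 or start + max_shift + length > len(signal):
        raise ValueError("shifted windows fall outside the signal")
    template = signal[start:start + length]
    values = []
    for s in range(-max_shift, max_shift + 1):
        window = signal[start + s:start + s + length]
        values.append(sum((a - b) ** 2 for a, b in zip(template, window)))
    return values


def basin_of_attraction(objective, center):
    """Largest index interval around `center` on which the objective
    decreases monotonically toward `center` (so descent leads back to it)."""
    left = center
    while left - 1 >= 0 and objective[left - 1] > objective[left]:
        left -= 1
    right = center
    while right + 1 < len(objective) and objective[right + 1] > objective[right]:
        right += 1
    return left, right


values = shift_objective(list(range(20)), start=8, length=4, max_shift=3)
print(values, basin_of_attraction(values, center=3))
```

A descriptor with a wider basin, as claimed for DFs, lets the search start farther from the true position and still descend to it.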

Search mechanism

Kalman filter

We assume a linear process governed by an unknown internal state that produces a set of measurements. More specifically, we consider a discrete-time system whose state at time n is given by the vector Xn. The state and measurement at the next time step n + 1 are given by
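A minimal constant-velocity Kalman filter for predicting the target location can be sketched as follows. Because the paper's equations are not reproduced here, this assumes the standard linear model X_{n+1} = F X_n + w_n, Z_n = H X_n + v_n with state (x, y, vx, vy) and position-only measurements, and reads "initial parameters set 1" as unit initial covariance and unit process and measurement noise. The class and helper names are hypothetical.

```python
def matmul(a, b):
    return [[sum(a[i][k] * b[k][j] for k in range(len(b))) for j in range(len(b[0]))]
            for i in range(len(a))]


def transpose(a):
    return [list(row) for row in zip(*a)]


def add(a, b, sign=1.0):
    return [[x + sign * y for x, y in zip(ra, rb)] for ra, rb in zip(a, b)]


def inv2(m):
    (a, b), (c, d) = m
    det = a * d - b * c
    return [[d / det, -b / det], [-c / det, a / det]]


def eye(n, v=1.0):
    return [[v if i == j else 0.0 for j in range(n)] for i in range(n)]


class ConstantVelocityKalman:
    def __init__(self, x, y, dt=1.0, q=1.0, r=1.0, p0=1.0):
        self.s = [[x], [y], [0.0], [0.0]]  # state (x, y, vx, vy) as a column
        self.P = eye(4, p0)                # state covariance
        self.F = [[1, 0, dt, 0], [0, 1, 0, dt], [0, 0, 1, 0], [0, 0, 0, 1]]
        self.H = [[1, 0, 0, 0], [0, 1, 0, 0]]  # we observe position only
        self.Q = eye(4, q)
        self.R = eye(2, r)

    def predict(self):
        """Propagate state and covariance one step; return predicted (x, y)."""
        self.s = matmul(self.F, self.s)
        self.P = add(matmul(matmul(self.F, self.P), transpose(self.F)), self.Q)
        return self.s[0][0], self.s[1][0]

    def update(self, mx, my):
        """Correct the state with the location found by the local search."""
        innov = add([[mx], [my]], matmul(self.H, self.s), -1.0)
        S = add(matmul(matmul(self.H, self.P), transpose(self.H)), self.R)
        K = matmul(matmul(self.P, transpose(self.H)), inv2(S))
        self.s = add(self.s, matmul(K, innov))
        self.P = matmul(add(eye(4), matmul(K, self.H), -1.0), self.P)
```

In a tracker, `predict()` would supply the starting point of the local search in each frame and `update()` would receive the converged location.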

Newton-style mean shift: as shown in equation (7), a target model can be represented by a histogram

The Matusita metric can be written as a sum-of-squared-differences (SSD) objective function between the square roots of the model and candidate histograms.

As a result, the minima of (9) coincide with the maxima of the Bhattacharyya coefficient: for normalized histograms, the squared Matusita distance equals 2 − 2ρ, where ρ is the Bhattacharyya coefficient. By substituting the SSD error for the Bhattacharyya distance, we can therefore work equivalently with the traditional mean shift objective. We derive a Newton-style iterative procedure to solve this optimization by expanding the expression for
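The equivalence between minimizing the Matusita (SSD) distance and maximizing the Bhattacharyya coefficient can be checked numerically. This is a small sketch assuming both histograms are normalized to sum to 1; the example histograms are arbitrary.

```python
import math


def bhattacharyya(p, q):
    """Bhattacharyya coefficient rho = sum_u sqrt(p_u * q_u)."""
    return sum(math.sqrt(a * b) for a, b in zip(p, q))


def matusita_ssd(p, q):
    """Squared Matusita distance: SSD between square-rooted histograms."""
    return sum((math.sqrt(a) - math.sqrt(b)) ** 2 for a, b in zip(p, q))


p = [0.1, 0.4, 0.3, 0.2]
q = [0.25, 0.25, 0.25, 0.25]
# Expanding the square gives sum(p) + sum(q) - 2 * rho = 2 - 2 * rho
print(matusita_ssd(p, q), 2 - 2 * bhattacharyya(p, q))
```

Because the SSD equals 2 − 2ρ exactly, any candidate that lowers the SSD raises ρ by the same relation, which is what makes the SSD form usable for Newton-style (least-squares) minimization.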

Setting the partial derivatives of the objective function to zero gives:

Provided that
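Because the derivation's equations are not reproduced here, the following is only a generic sketch of a Newton-style (Gauss-Newton) step on an SSD objective over a 2D translation, not the authors' exact update. It assumes a hypothetical smooth feature map `features(y)` standing in for the square-rooted DF/histogram of the candidate at location y, a finite-difference Jacobian, and a simple step-halving safeguard; each iteration solves the 2x2 normal equations (JᵀJ)Δy = Jᵀr.

```python
import math


def features(y, centers, sigma):
    """Hypothetical smooth feature map: Gaussian responses to fixed centres."""
    return [math.exp(-((y[0] - cx) ** 2 + (y[1] - cy) ** 2) / (2 * sigma ** 2))
            for cx, cy in centers]


def gauss_newton_track(target, y0, centers, sigma, iters=50, eps=1e-4, tol=1e-12):
    y = list(y0)
    for _ in range(iters):
        f = features(y, centers, sigma)
        r = [t - v for t, v in zip(target, f)]  # residual: model minus candidate
        # J[dim][k] = d f_k / d y_dim by central differences
        J = []
        for dim in range(2):
            yp, ym = list(y), list(y)
            yp[dim] += eps
            ym[dim] -= eps
            fp, fm = features(yp, centers, sigma), features(ym, centers, sigma)
            J.append([(a - b) / (2 * eps) for a, b in zip(fp, fm)])
        # Normal equations [[a, b], [b, c]] (dx, dy) = (g0, g1)
        a = sum(v * v for v in J[0])
        b = sum(u * v for u, v in zip(J[0], J[1]))
        c = sum(v * v for v in J[1])
        g0 = sum(u * v for u, v in zip(J[0], r))
        g1 = sum(u * v for u, v in zip(J[1], r))
        det = a * c - b * b
        if abs(det) < 1e-15:
            break
        dx, dy = (c * g0 - b * g1) / det, (a * g1 - b * g0) / det
        if dx * dx + dy * dy < tol:
            y = [y[0] + dx, y[1] + dy]
            break
        # Halve the step until the SSD does not increase
        cost, step = sum(v * v for v in r), 1.0
        while True:
            cand = [y[0] + step * dx, y[1] + step * dy]
            rc = [t - v for t, v in zip(target, features(cand, centers, sigma))]
            if sum(v * v for v in rc) <= cost or step < 1e-3:
                break
            step *= 0.5
        y = cand
    return y
```

Unlike a gradient (mean shift style) step, each Gauss-Newton step uses curvature information from JᵀJ, which is why it typically needs far fewer iterations near the optimum.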

Model update

During tracking, a model of the target is first generated by exploding the target image into a DF and smoothing it. The same operation is then applied to the larger candidate region in the new frame. The search for the target follows the descent direction of the Matusita distance between the target model and the candidate, and the target location is estimated at the minimum of this distance. The target model is then updated to adapt to changes in the target's appearance. As in equation (13), a linear combination of the model and the observation is used to update the target model.
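The linear-combination model update can be sketched as below. Equation (13) is not reproduced here, so this assumes the learning factor λ weights the existing model, d_new = λ·d_model + (1 − λ)·d_obs, with λ = 0.95 as in the experiments; `update_model` is a hypothetical name.

```python
def update_model(model, observation, lam=0.95):
    """Blend the DF model with the DF observed at the new target location.

    Assumes lam weights the old model: new = lam * model + (1 - lam) * obs.
    Both inputs are DFs indexed as [row][col][bin].
    """
    return [[[lam * m + (1.0 - lam) * o for m, o in zip(mp, op)]
             for mp, op in zip(mrow, orow)]
            for mrow, orow in zip(model, observation)]


updated = update_model([[[1.0, 0.0]]], [[[0.0, 1.0]]])
```

If every pixel of both DFs holds a normalized distribution, the blended model stays normalized, and a λ close to 1 makes the model adapt slowly.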

Distribution fields tracking

Input: S = video sequence.

I = patch containing the target in the first frame.

m = number of brightness bins (m = 16).

λ = learning factor (

Output:

1. Initialize target representation model

Reshape

2. Initialize target location

3. for

4. Predict target location

5. for

6. Build the candidate object representation model in distribution fields.

Reshape

7. Find the minimum of the objective function at

8. end for

9. Update target location

10. Model update:

11. end for

Algorithm 1
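The loop structure of Algorithm 1 can be illustrated with a toy 1D tracker. This is a simplified sketch, not the authors' implementation: frames are 1D intensity lists, an integer search over a small window around the prediction stands in for the Newton-style minimization, a simple constant-velocity extrapolation stands in for the full Kalman filter, and λ is assumed to weight the old model. All names are hypothetical.

```python
import math


def gaussian(width, var):
    half = width // 2
    taps = [math.exp(-x * x / (2.0 * var)) for x in range(-half, half + 1)]
    s = sum(taps)
    return [t / s for t in taps]


def conv(vals, ker):
    """Same-size 1D convolution with zero padding."""
    half, n = len(ker) // 2, len(vals)
    return [sum(k * vals[x + t - half] for t, k in enumerate(ker) if 0 <= x + t - half < n)
            for x in range(n)]


def df_1d(patch, bins=16, sw=5, sv=1.0, fw=5, fv=0.625):
    """Explode a 1D patch into a DF (pixel x bin), then smooth in space and feature."""
    d = [[0.0] * bins for _ in patch]
    for x, v in enumerate(patch):
        d[x][min(int(v * bins // 256), bins - 1)] = 1.0
    gs, gf = gaussian(sw, sv), gaussian(fw, fv)
    for k in range(bins):  # spatial smoothing of each feature layer
        layer = conv([d[x][k] for x in range(len(patch))], gs)
        for x in range(len(patch)):
            d[x][k] = layer[x]
    return [conv(row, gf) for row in d]  # feature smoothing at each pixel


def ssd(a, b):
    return sum((u - v) ** 2 for ra, rb in zip(a, b) for u, v in zip(ra, rb))


def track(frames, start, width, radius=4, lam=0.95):
    model = df_1d(frames[0][start:start + width])  # step 1: initial model
    positions, velocity = [start], 0               # step 2: initial location
    for frame in frames[1:]:                       # step 3: loop over frames
        # Step 4: constant-velocity prediction, clamped to the frame
        predicted = max(0, min(len(frame) - width, positions[-1] + velocity))
        best, best_cost = predicted, float("inf")
        for pos in range(predicted - radius, predicted + radius + 1):
            if 0 <= pos <= len(frame) - width:     # steps 5-8: local search
                cost = ssd(model, df_1d(frame[pos:pos + width]))
                if cost < best_cost:
                    best, best_cost = pos, cost
        velocity = best - positions[-1]
        positions.append(best)                     # step 9: update location
        obs = df_1d(frame[best:best + width])      # step 10: model update
        model = [[lam * m + (1 - lam) * o for m, o in zip(mr, orow)]
                 for mr, orow in zip(model, obs)]
    return positions
```

On a pattern moving two pixels per frame, the prediction lands on the true location from the third frame on, so the local search only has to confirm it.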

Experiments

Experimental setup

In the experiments, 10 publicly available video sequences are used to evaluate tracking performance. Captured in different scenarios, these sequences contain diverse events such as occlusion, object pose variation, lighting changes and out-of-plane rotation. For each competing tracker, the default parameters provided with its source code are used in all evaluations. Our method localizes the object using a distribution fields target representation with a Kalman filter and the Newton-style mean shift algorithm. The average running time of our C++ implementation with OpenCV 3.0 is about 0.03 s per frame on a workstation with an Intel i7 3770 CPU (3.4 GHz) and 6 GB of RAM.

For quantitative performance comparison, two popular evaluation criteria are used: the center location error (CLE) and the overlap ratio (OVR) between the predicted bounding box Bp and the ground truth bounding box
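The two criteria can be sketched as follows, assuming axis-aligned boxes given as (x, y, w, h) and the usual intersection-over-union definition of the overlap ratio, area(Bp ∩ Bg) / area(Bp ∪ Bg), where Bg denotes the ground truth box. The function names are hypothetical.

```python
import math


def center_location_error(bp, bg):
    """Euclidean distance (pixels) between the centres of two (x, y, w, h) boxes."""
    cpx, cpy = bp[0] + bp[2] / 2.0, bp[1] + bp[3] / 2.0
    cgx, cgy = bg[0] + bg[2] / 2.0, bg[1] + bg[3] / 2.0
    return math.hypot(cpx - cgx, cpy - cgy)


def overlap_ratio(bp, bg):
    """Intersection over union of two (x, y, w, h) boxes."""
    iw = max(0.0, min(bp[0] + bp[2], bg[0] + bg[2]) - max(bp[0], bg[0]))
    ih = max(0.0, min(bp[1] + bp[3], bg[1] + bg[3]) - max(bp[1], bg[1]))
    inter = iw * ih
    union = bp[2] * bp[3] + bg[2] * bg[3] - inter
    return inter / union if union > 0 else 0.0


# Two 10 x 10 boxes offset horizontally by 5 pixels
print(center_location_error((0, 0, 10, 10), (5, 0, 10, 10)),
      overlap_ratio((0, 0, 10, 10), (5, 0, 10, 10)))
```

CLE ignores box size, while OVR also penalizes scale errors, which is why both are reported.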

Empirical comparison of trackers

We compare our method with several state-of-the-art trackers both qualitatively and quantitatively: OAB, SBT, BSBT, MIL and TLD.5–7 In our method (DF), the parameters are set as follows. The number of bins is 16. The width of the spatial filter is [9, 15], the width of the feature filter is 5, the variance of the spatial filter is [1, 2], the variance of the feature filter is 0.625 and the learning factor λ is 0.95. The Kalman filter uses a constant-velocity motion model, with its initial parameters set to 1.

Qualitative comparison

We evaluate the performance of all six trackers on 10 video sequences. Figure 3 shows the qualitative tracking results of the six trackers over several representative frames of the 10 video sequences. We observe that our method achieves the best tracking performance on most sequences. In particular, our method obtains more robust tracking results in the presence of complicated appearance changes. An example of severe illumination variation and occlusion is the ‘coke’ sequence, shown at the top left of Figure 3. All other trackers lose the coke can at the 79th frame, when the target is partially occluded by leaves, whereas our method tracks the target throughout the sequence. The ‘bolt’ sequence, at the bottom left of Figure 3, contains object deformation and out-of-plane rotation followed by partial occlusion. SBT, MIL and TLD break down after the 10th frame; OAB and BSBT lose the target after the 14th and the 116th frame, respectively. Our method stays locked on the target despite changes in illumination and body pose. In the ‘dog1’ sequence, third row on the left of Figure 3, the object undergoes out-of-plane and in-plane rotation, and our method outperforms the other algorithms. In the ‘football1’ sequence, fourth row on the right of Figure 3, the object suffers from heavy background clutter, which our method handles effectively and efficiently. Because the DF descriptor has a wide basin of attraction, the tracker can quickly follow the gradient back to the target position.

Figure 3. Qualitative tracking results of the six trackers over representative frames of the 10 video sequences (‘coke’, ‘faceocc1’, ‘boy’, ‘fleetface’, ‘dog1’, ‘tiger1’, ‘football1’, ‘freeman1’, ‘bolt’, ‘fish’), arranged from left to right and from top to bottom.

Quantitative comparison

We evaluate the CLE and OVR performance of all six trackers on the 10 video sequences. Table 1 reports the median CLEs, OVRs and FPS of the six trackers over all 10 sequences. Figure 4 plots the frame-by-frame CLEs and OVRs obtained by the six trackers on the ‘bolt’ sequence. Successful tracking is reported at a CLE threshold of 20 pixels and an OVR threshold of 0.25. Our tracker obtains more accurate tracking results than the other trackers. Because visual trackers can be sensitive to initialization, we evaluate initialization robustness by following the protocol proposed in the benchmark evaluation.9

We compare our method with the other trackers using the precision (distance) and success (overlap) plots of the temporal robustness evaluation (TRE) and spatial robustness evaluation (SRE) experiments. The trackers are ranked by the area under the curve (AUC). Both the precision and success plots show mean scores over all sequences. The results are shown in Figure 5. In all evaluations, our method obtains the best results.
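The precision score and success AUC can be sketched as follows. This assumes the common benchmark convention of a 20-pixel CLE threshold for precision and 21 evenly spaced overlap thresholds in [0, 1] for the success curve, with a frame counted as a success when its OVR strictly exceeds the threshold; the helper names are hypothetical.

```python
def precision_at(cles, threshold=20.0):
    """Fraction of frames whose centre location error is within `threshold` pixels."""
    return sum(1 for e in cles if e <= threshold) / len(cles)


def success_auc(ovrs, steps=21):
    """Area under the success curve: mean success rate over overlap thresholds in [0, 1]."""
    thresholds = [i / (steps - 1) for i in range(steps)]
    rates = [sum(1 for o in ovrs if o > t) / len(ovrs) for t in thresholds]
    return sum(rates) / len(rates)


cles = [5.0, 15.0, 25.0, 40.0]
ovrs = [0.9, 0.6, 0.3, 0.0]
print(precision_at(cles), success_auc(ovrs))
```

Averaging the success rate over all thresholds summarizes the whole curve in one number, so trackers are not ranked by a single, arbitrary overlap threshold.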

Figure 4. Quantitative evaluation of the six methods in terms of CLE and OVR on the ‘bolt’ video sequence.

Figure 5. Precision and success plots over all 10 sequences. The mean precision score of each tracker is reported in the legend. Note that our method improves on the second-best tracker by 16% in mean distance precision. In both cases, our approach performs favorably against state-of-the-art tracking methods.

Table 1. Comparison of different tracking methods. Note: the best two results are shown in red and blue fonts. The results are presented as median overlap ratio (OVR) (%), center location error (CLE) (in pixels) and frames per second (FPS) over all 10 sequences.

Discussion and analysis

Each component is evaluated to show its contribution to the overall performance and its sensitivity. Because multiple DFs are used, the tracker can overcome challenges such as moderate illumination changes and occlusion. Further study shows that

Conclusion

We have proposed a new target representation scheme in distribution fields for tracking. It resolves ambiguity and overcomes the under-sensitivity to spatial structure. It also avoids the over-sensitivity that other descriptors have to the geometric structure of the target, it can model slow changes in appearance and pose, and it is robust to moderate occlusion. We extend the mean shift scheme with a Newton-style descent that needs fewer iterations. Furthermore, we combine the Kalman filter with the modified mean shift framework. Speed is a crucial factor for many real-world applications, such as robotics and real-time surveillance, and our method maintains state-of-the-art accuracy while running in real time. We believe that DFs are a fertile framework for visual tracking and that Newton-style descent is especially suitable for real-time applications.

Footnotes

Acknowledgement

We thank the reviewers for their helpful comments.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Science Project of Fujian Education Department (JA12263) and the Science and Technology Cooperation Project of Fuzhou City (2013-G-86).