Abstract

We present a novel vision servoing method based on texture analysis, suited to climbing robots and to robots operating in unstructured environments. A large textured area serves as the observation target. After defining the texture element distribution density, we derive the relationship between the change in this density at selected points and the camera pose. We then design a vision servoing control law that satisfies Lyapunov stability. Experiments demonstrate the effectiveness of the method.

1. Introduction

Vision servoing is widely used in object tracking, grasping and robot station keeping. The choice of image features largely determines the control performance. Geometric primitives, object contours, regions of interest, optical flow and models of the tracked objects [1–7] are often employed. Edges and texture [8, 9] are the two major sources of visual features in markerless visual tracking. For scenes with sharp edges or high spatial gradients, contour features are very informative, and edge information remains reliable for object tracking under illumination changes. If the scene is filled with texture and has no obvious edges, however, contours may be absent, and texture-based methods are required [10]. Most current texture-based methods rely on interest point detection, with Harris corners being especially popular. However, tracking interest points is sensitive to noise and to changes in illumination. Some researchers have therefore combined texture and contour cues. Hafez [10] developed a framework that integrates edge and texture points for visual tracking of planar objects. After studying various illumination models that improve tracking robustness, Pressigout presented a hybrid contour and texture tracker that allows fast and robust tracking of planar and non-planar objects for augmented reality applications [11]. In [12], the Jacobian transform matrix is estimated by non-linear minimization of a single criterion that integrates both the texture and the edge information of the tracked object. Chen developed a fuzzy controller to maintain a lighting level suitable for robotic manipulation and guidance in dynamic environments [13].

Most visual features used in existing vision servoing algorithms describe a small area or a single target. In some environments, however, the target plane may be large and filled with texture that has no obvious edges, and the style of the texture elements on the plane may even change over time. If the texture elements are similar to one another, or if their appearance keeps changing, it is difficult to match interest points or to analyse the contours of the texture. Such environments are common for robots working on the seabed, on the sea surface, in deserts and so on, where typical tasks include station keeping, automatic landing and climbing. In this paper we propose a novel vision servoing method based on texture analysis. Unlike most existing vision servoing methods, it does not extract or match any interest points. The texture feature we choose is the distribution density of the texture elements: we derive the relationship between this feature and the camera pose, and we design a vision servoing control law that satisfies the Lyapunov stability condition.

In Part 2, we define the distribution density of texture elements at a point in the texture plane and derive the relationship between this density and the camera pose. In Part 3, we design a vision servoing control law that satisfies the Lyapunov stability condition. In Part 4, we present experiments that demonstrate the effectiveness of the method.

2. Relationship between the distribution density of texture elements and the camera pose

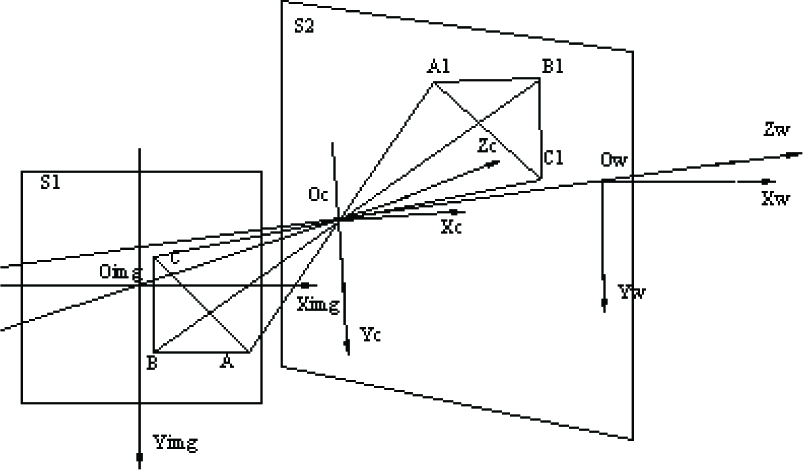

We define three coordinate systems (Figure 1): 1) the world coordinate system $O_wX_wY_wZ_w$, whose origin $O_w$ is at a fixed position in the workspace; 2) the camera coordinate system $O_cX_cY_cZ_c$, whose origin $O_c$ is at the optical centre of the camera lens and lies on the axis $O_wZ_w$; and 3) the imaging plane coordinate system $O_{img}X_{img}Y_{img}Z_{img}$, whose origin $O_{img}$ is the intersection of the camera imaging plane and the optical axis. $O_{img}X_{img}$ is parallel to the pixel rows and $O_{img}Y_{img}$ is parallel to the pixel columns.

Figure 1. Sketch of the texture element density calculation.

$S_1$ is the camera imaging plane and $S_2$ is the target texture plane observed by the camera. In world coordinates, $S_2$ is the plane $z_w = 0$. The camera motion consists of a rotation about the axis $O_cX_c$, a rotation about the axis $O_cY_c$ and a translation along $O_wZ_w$, denoted below by $a$, $b$ and $z$ respectively.
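Because the projection equations of this section did not survive extraction, a small forward model may help fix the geometry. The Python sketch below is written under assumptions of our own, not the paper's: a pinhole camera whose image points are $(x, y, f)$ in camera coordinates, the optical centre at world position $(0, 0, -z)$ on the $Z_w$ axis, and a camera-to-world rotation $R = R_x(a)R_y(b)$, so that at $a = b = 0$ the camera looks along $+Z_w$ at the plane $z_w = 0$. The function names are hypothetical.

```python
import math

def camera_to_world(a, b):
    """Rotation R = Rx(a) @ Ry(b) taking camera-frame vectors to the world frame (assumed)."""
    ca, sa, cb, sb = math.cos(a), math.sin(a), math.cos(b), math.sin(b)
    rx = [[1.0, 0.0, 0.0], [0.0, ca, -sa], [0.0, sa, ca]]
    ry = [[cb, 0.0, sb], [0.0, 1.0, 0.0], [-sb, 0.0, cb]]
    return [[sum(rx[i][k] * ry[k][j] for k in range(3)) for j in range(3)] for i in range(3)]

def back_project(x, y, f, a, b, z):
    """World point (X_w, Y_w) where the ray through image point (x, y) meets z_w = 0.

    Assumes the optical centre O_c sits at world (0, 0, -z) on the Z_w axis,
    so that the camera looks along +Z_w when a = b = 0.
    """
    r = camera_to_world(a, b)
    ray = [sum(r[i][k] * c for k, c in enumerate((x, y, f))) for i in range(3)]
    if ray[2] <= 0.0:
        raise ValueError("viewing ray does not reach the texture plane")
    t = z / ray[2]                     # solve -z + t * ray_z = 0
    return (t * ray[0], t * ray[1])    # Z_w = 0 by construction
```

For instance, with $a = 0$ the image centre maps to $X_w = z\tan b$, the expected offset for a camera tilted by $b$.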

Let $P$ be a point in world space, and let $P_c$ denote its coordinates in the camera coordinate system.

where $f$ is the focal length of the camera and $\delta > 0$ is a constant. $A_1$, $B_1$ and $C_1$ are the points on the target plane that correspond to the image points $A$, $B$ and $C$. According to solid geometry, the world coordinates

The calculations of

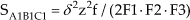

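The area of triangle $A_1B_1C_1$ follows from Heron's formula:

$$S_{A_1B_1C_1}=\sqrt{s_{A_1B_1C_1}\left(s_{A_1B_1C_1}-l_{A_1C_1}\right)\left(s_{A_1B_1C_1}-l_{B_1C_1}\right)\left(s_{A_1B_1C_1}-l_{A_1B_1}\right)}$$

where $s_{A_1B_1C_1}=(l_{A_1C_1}+l_{B_1C_1}+l_{A_1B_1})/2$ is the semi-perimeter and $l_{A_1C_1}$, $l_{B_1C_1}$ and $l_{A_1B_1}$ are the lengths of $A_1C_1$, $B_1C_1$ and $A_1B_1$ respectively. The area of triangle $A_1B_1C_1$ is then: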

where:

Let $\rho$ be the texture element distribution density at a point on the target texture plane. All texture elements inside triangle $A_1B_1C_1$ are imaged inside triangle $ABC$ on the image plane. Hence, if each imaged texture element is counted as one point, the number of texture elements in $A_1B_1C_1$ equals the number of texture element image points in $ABC$. The density of texture element image points in triangle $ABC$ is therefore:

where:

By substituting (2) into (3) and letting $\delta \to 0$, we obtain the texture element distribution density feature $T$ at a point in the imaging plane:

where

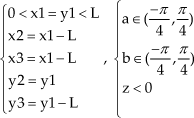

To prevent texture elements from merging (conglutinating) in the image, the camera rotation angles are restricted to (−π/4, π/4).
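The construction of this section can be checked numerically. The Python sketch below uses an assumed camera model (optical centre at $(0, 0, -z)$, pinhole projection, $R = R_x(a)R_y(b)$ from camera to world), maps a small image triangle $ABC$ onto the texture plane, applies Heron's formula to both triangles, and forms $\rho\,S_{A_1B_1C_1}/S_{ABC}$. The vertex choice $A=(x,y)$, $B=(x+\delta,y)$, $C=(x,y+\delta)$ is a hypothetical stand-in for the lost definition, and the closed form $T=\rho f z^2/d_z^3$, where $d_z$ is the world $Z$ component of the rotated ray $(x, y, f)$, is our own derivation under this model rather than a transcription of formula (4).

```python
import math

def heron_area(p, q, r):
    """Triangle area from its three 2-D vertices via Heron's formula."""
    l_qr, l_pr, l_pq = math.dist(q, r), math.dist(p, r), math.dist(p, q)
    s = (l_qr + l_pr + l_pq) / 2.0                      # semi-perimeter
    return math.sqrt(max(s * (s - l_qr) * (s - l_pr) * (s - l_pq), 0.0))

def to_plane(x, y, f, a, b, z):
    """World (X_w, Y_w) where the ray through image point (x, y) meets z_w = 0."""
    ca, sa, cb, sb = math.cos(a), math.sin(a), math.cos(b), math.sin(b)
    dx = cb * x + sb * f                                # rows of R = Rx(a) Ry(b)
    dy = sa * sb * x + ca * y - sa * cb * f
    dz = -ca * sb * x + sa * y + ca * cb * f
    t = z / dz                                          # optical centre at (0, 0, -z)
    return (t * dx, t * dy)

def density_finite(x, y, f, a, b, z, rho, delta):
    """rho * S_A1B1C1 / S_ABC for a small image triangle with a vertex at (x, y)."""
    img = [(x, y), (x + delta, y), (x, y + delta)]      # hypothetical A, B, C
    world = [to_plane(u, v, f, a, b, z) for u, v in img]
    return rho * heron_area(*world) / heron_area(*img)

def density_limit(x, y, f, a, b, z, rho):
    """delta -> 0 limit under the same assumed model: rho * f * z**2 / dz**3."""
    dz = -math.cos(a) * math.sin(b) * x + math.sin(a) * y + math.cos(a) * math.cos(b) * f
    return rho * f * z * z / dz ** 3
```

For a fronto-parallel camera ($a = b = 0$) the closed form reduces to $T = \rho z^2/f^2$: the image density grows with the square of the distance, which is what makes $z$ observable from density alone.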

3. Design of the control law

3.1 Design of the Lyapunov function

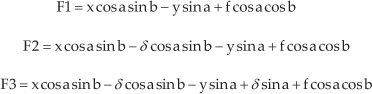

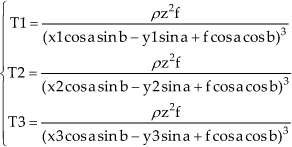

Using formula (4), consider three points $p_1(x_1,y_1)$, $p_2(x_2,y_2)$ and $p_3(x_3,y_3)$ in the image plane, whose features are given by formula (5):

$T_1$, $T_2$ and $T_3$ are all non-negative because they are texture distribution densities. If $p_1(x_1,y_1)$, $p_2(x_2,y_2)$ and $p_3(x_3,y_3)$ are chosen according to the rule shown in Figure 2, equation (5) has a unique solution, given in (7), under condition (6):

Figure 2. Sketch of how the appointed points are chosen for the texture feature calculation.

Let $T_{10}$, $T_{20}$ and $T_{30}$ be the texture element distribution densities at the three chosen points when the camera is at its ideal pose. When the camera pose changes, these densities change to $T_1$, $T_2$ and $T_3$. The error function is defined as:

The task of vision servoing is to adjust the camera pose until the error function (8) falls below an acceptable threshold ε.
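Because equation (8) did not survive extraction, the Python sketch below assumes that the error is the vector of density differences $\Delta T_i = T_i - T_{i0}$ and that "falling below ε" means its Euclidean norm drops below ε. It also includes the quadratic $S = \tfrac{1}{2}\sum_i \Delta T_i^2$ that this choice suggests as a Lyapunov candidate. All names are hypothetical.

```python
import math

def density_error(current, ideal):
    """Assumed error vector: Delta T_i = T_i - T_i0 at the three appointed points."""
    return [t - t0 for t, t0 in zip(current, ideal)]

def lyapunov(current, ideal):
    """Quadratic candidate S = 1/2 * sum(Delta T_i**2); zero only when all errors vanish."""
    return 0.5 * sum(e * e for e in density_error(current, ideal))

def reached(current, ideal, eps):
    """Stopping test: Euclidean norm of the error vector below eps (assumed metric)."""
    return math.sqrt(2.0 * lyapunov(current, ideal)) < eps
```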

The partial derivatives of the three point features with respect to $a$, $b$ and $z$ are:

From (5), the time derivatives of the texture distribution density features at the chosen points are:

where

Xc,

Yc and

Under condition (6), equation (5) has only the solution (7). Hence the Lyapunov function (11) equals 0 only when $a = a_0$, $b = b_0$ and $z = z_0$, and it is positive otherwise.
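The uniqueness argument can be illustrated under an assumed model in which $T(x,y)=\rho f z^2/D(x,y)^3$ with $D(x,y) = -\cos a\sin b\,x + \sin a\,y + \cos a\cos b\,f$ (a pinhole camera, optical centre at $(0,0,-z)$, rotation $R_x(a)R_y(b)$). If the three points are taken, hypothetically, as $p_1=(0,0)$, $p_2=(c,0)$ and $p_3=(0,c)$ (the actual rule of Figure 2 and condition (6) were lost in extraction), then the ratio $T_1/T_2$ fixes $b$, $T_1/T_3$ fixes $a$, and $T_1$ fixes $z$ for a known $\rho$. The Python sketch below is our own analogue of (7), not the paper's formula.

```python
import math

def density(x, y, f, a, b, z, rho):
    """Assumed closed-form density T = rho * f * z**2 / D**3."""
    d = -math.cos(a) * math.sin(b) * x + math.sin(a) * y + math.cos(a) * math.cos(b) * f
    return rho * f * z * z / d ** 3

def estimate_pose(t1, t2, t3, c, f, rho):
    """Recover (a, b, z) from the densities at (0, 0), (c, 0) and (0, c)."""
    # D2 / D1 = 1 - (c / f) tan b, and (D2 / D1)**3 = T1 / T2
    r2 = (t1 / t2) ** (1.0 / 3.0)
    b = math.atan((1.0 - r2) * f / c)
    # D3 / D1 = 1 + (c / f) tan a / cos b, and (D3 / D1)**3 = T1 / T3
    r3 = (t1 / t3) ** (1.0 / 3.0)
    a = math.atan((r3 - 1.0) * f / c * math.cos(b))
    # T1 = rho * f * z**2 / D1**3 with D1 = f cos a cos b
    d1 = f * math.cos(a) * math.cos(b)
    z = math.sqrt(t1 * d1 ** 3 / (rho * f))
    return a, b, z

# Usage with hypothetical values: densities simulated at a known pose, then inverted
c, f, rho = 0.2, 1.0, 50.0
ts = [density(x, y, f, 0.1, -0.2, 2.3, rho) for x, y in [(0.0, 0.0), (c, 0.0), (0.0, c)]]
print(estimate_pose(*ts, c, f, rho))  # approximately (0.1, -0.2, 2.3)
```

Each step inverts a strictly monotonic function (cube root, arctangent, square root), which is why the solution is unique for rotations within (−π/4, π/4) in this model.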

3.2 Design of the control law based on the Lyapunov function

The time derivative of the Lyapunov function (11) is:

If we set:

then:

Because t > 0, equality holds only if:

This means that ΔT1 = ΔT2 = ΔT3 = 0 holds only if $(a, b, z)^T = (a_0, b_0, z_0)^T$, that is, when the robot has reached its ideal pose. Otherwise, Ṡ < 0, so the error function converges asymptotically to the zero vector.
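The derivative and "setting" equations above were lost in extraction. A sketch of the standard construction the text implies follows; it assumes, as a hypothesis rather than a transcription, that the Lyapunov function (11) is the quadratic sum of the density errors and that the setting step prescribes a proportional decay of each error with gains $k_i > 0$ (the ideal densities $T_{i0}$ are constant, so $\Delta\dot T_i = \dot T_i$):

$$
\begin{aligned}
S &= \tfrac{1}{2}\left(\Delta T_1^2+\Delta T_2^2+\Delta T_3^2\right), \qquad \Delta T_i = T_i - T_{i0},\\
\dot S &= \Delta T_1\dot T_1+\Delta T_2\dot T_2+\Delta T_3\dot T_3,\\
\dot T_i &= -k_i\,\Delta T_i \;(k_i>0) \;\Longrightarrow\; \dot S = -\left(k_1\Delta T_1^2+k_2\Delta T_2^2+k_3\Delta T_3^2\right)\le 0.
\end{aligned}
$$

Equality holds only when every $\Delta T_i = 0$, which under condition (6) means $(a, b, z) = (a_0, b_0, z_0)$. Under this choice each error decays as $\Delta T_i(t) = \Delta T_i(0)\,e^{-k_i t}$.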

3.3 Jacobian matrix and control law calculation

Writing (13) in matrix form gives:

where

J is the Jacobian matrix relating the rate of change of the image features to the camera velocity. Evaluating it gives:

where:

It can be verified from the expression for J that |J| ≠ 0 whenever conditions (6) hold.

Hence the inverse matrix $J^{-1}$ exists, and multiplying both sides of (15) by $J^{-1}$ gives:

Formula (17) is the vision servoing control law based on texture element distribution density analysis.
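To make the inversion step concrete, the Python sketch below computes camera velocities from $J\,(\dot a, \dot b, \dot z)^T = -K\,\Delta T$, assuming that (15) takes this form with a proportional setting and a diagonal gain matrix $K$. Because the closed form of $J$ was lost, $J$ is estimated here by central differences of an assumed density model ($T = f z^2/D^3$ with $\rho = 1$, a pinhole camera and $R = R_x(a)R_y(b)$). The appointed points and all function names are hypothetical.

```python
import math

def density(x, y, f, a, b, z, rho=1.0):
    """Assumed closed-form density T = rho * f * z**2 / D**3 (pinhole, plane z_w = 0)."""
    d = -math.cos(a) * math.sin(b) * x + math.sin(a) * y + math.cos(a) * math.cos(b) * f
    return rho * f * z * z / d ** 3

def features(points, f, pose):
    """Densities T_1..T_3 at the appointed image points for pose (a, b, z)."""
    a, b, z = pose
    return [density(x, y, f, a, b, z) for x, y in points]

def jacobian(points, f, pose, h=1e-6):
    """Central-difference estimate of J[i][j] = dT_i / dq_j with q = (a, b, z)."""
    J = [[0.0] * 3 for _ in points]
    for j in range(3):
        up, dn = list(pose), list(pose)
        up[j] += h
        dn[j] -= h
        t_up, t_dn = features(points, f, up), features(points, f, dn)
        for i in range(len(points)):
            J[i][j] = (t_up[i] - t_dn[i]) / (2.0 * h)
    return J

def det3(m):
    """Determinant of a 3x3 matrix by cofactor expansion along the first row."""
    return (m[0][0] * (m[1][1] * m[2][2] - m[1][2] * m[2][1])
            - m[0][1] * (m[1][0] * m[2][2] - m[1][2] * m[2][0])
            + m[0][2] * (m[1][0] * m[2][1] - m[1][1] * m[2][0]))

def solve3(m, rhs):
    """Solve m @ v = rhs for a 3x3 system by Cramer's rule."""
    d = det3(m)
    if abs(d) < 1e-12:
        raise ValueError("J is (nearly) singular for these appointed points")
    out = []
    for j in range(3):
        mj = [row[:] for row in m]
        for i in range(3):
            mj[i][j] = rhs[i]
        out.append(det3(mj) / d)
    return out

def control_law(points, f, pose, ideal_t, gains):
    """Velocities (a_dot, b_dot, z_dot) solving J v = -K (T - T0)."""
    t = features(points, f, pose)
    rhs = [-k * (ti - t0) for k, ti, t0 in zip(gains, t, ideal_t)]
    return solve3(jacobian(points, f, pose), rhs)
```

With $p_1=(0,0)$, $p_2=(0.2,0)$, $p_3=(0,0.2)$ and $f=1$, the analytic $J$ at the pose $(0, 0, 2)$ under this model has determinant 23.04, so these points give an invertible Jacobian; three points on a single line through the image centre would make the $a$ and $b$ columns proportional and $J$ singular at that pose.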

4. Vision servoing experiments

A semi-physical experiment was designed to verify the proposed method. Large textured areas for observation are generated by a computer (4) and projected onto the screen (2) by the projector (3). A camera (1) is fixed in front of the screen, and its images are captured by the computer (4), which also performs the calculations. The experimental setup is shown in Figure 3.

Figure 3. Experiment environment.

The parameters of the equipment are listed below:

1. Camera: MTV-188IEX
2. Screen: white projector screen
3. Projector: BENQ-PBL25XGA, 512 × 512 pixels
4. Computer: Intel Core i5-2320 CPU at 3.0 GHz, 4 GB memory, NVIDIA GTX470 graphics card; programming tool: Visual C++ 6.0

The control software interface is shown in Figure 4.

Figure 4. Control software interface.

The transformation between the physical coordinates and the pixel coordinates is:

$$u = \frac{x}{d_x} + u_0, \qquad v = \frac{y}{d_y} + v_0$$

where $(u, v)$ are the pixel coordinates of an image point, $(x, y)$ are its physical (length) coordinates, $(u_0, v_0)$ are the pixel coordinates of the origin of the image plane coordinate system, and $d_x$, $d_y$ are the distances between adjacent pixels in the x and y directions, respectively.

Because this method controls the robot directly with image features, it is not sensitive to errors in the camera's internal parameters. The estimates â, b̂ and ẑ of a, b and z are obtained by substituting the estimated internal parameters of the camera into (7). The pitch, yaw and translation velocities of the camera for the next step are then obtained by substituting into (17) the pose estimates â, b̂ and ẑ, the estimated internal parameters of the camera, and the feature error between the ideal and the present positions.

The control flow is as follows:

1. Set the estimated camera internal parameters û0, v̂0, dx̂, dŷ, f̂.
2. Set the present and the ideal camera poses a, b, z and a0, b0, z0.
3. Calculate the ideal density values at the three appointed points.
4. Calculate the present density values at the three appointed points.
5. Calculate the error between the ideal and the present values.
6. If the error is below the accepted threshold ε, stop.
7. Calculate the camera velocities Ra, Rb and Trz for the next step.
8. Calculate the current true camera pose.
9. Go to step 4.

The control command period is set to 250 ms.
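The control flow can be simulated end to end. The Python sketch below runs the loop with a 250 ms period against an assumed density model $T = f z^2/D^3$ with $D = -\cos a\sin b\,x + \sin a\,y + \cos a\cos b\,f$ ($\rho = 1$, pinhole camera). It evaluates $J$ at the simulated pose rather than at the estimates from (7), uses the true internal parameters throughout, and updates the pose with a simple Euler step over each period. Names, points, gains and poses are hypothetical and do not reproduce the experimental values.

```python
import math

def density(x, y, f, a, b, z):
    """Assumed density model with rho = 1: T = f * z**2 / D**3."""
    d = -math.cos(a) * math.sin(b) * x + math.sin(a) * y + math.cos(a) * math.cos(b) * f
    return f * z * z / d ** 3

def features(points, f, q):
    """Densities at the appointed points for pose q = (a, b, z)."""
    return [density(x, y, f, *q) for x, y in points]

def jacobian(points, f, q, h=1e-6):
    """Central-difference J[i][j] = dT_i / dq_j."""
    cols = []
    for j in range(3):
        up, dn = list(q), list(q)
        up[j] += h
        dn[j] -= h
        t_up, t_dn = features(points, f, up), features(points, f, dn)
        cols.append([(u - d) / (2.0 * h) for u, d in zip(t_up, t_dn)])
    return [[cols[j][i] for j in range(3)] for i in range(3)]

def solve3(m, r):
    """Solve m @ v = r (3x3) by Gaussian elimination with partial pivoting."""
    aug = [row[:] + [ri] for row, ri in zip(m, r)]
    for c in range(3):
        p = max(range(c, 3), key=lambda i: abs(aug[i][c]))
        aug[c], aug[p] = aug[p], aug[c]
        for i in range(c + 1, 3):
            k = aug[i][c] / aug[c][c]
            for j in range(c, 4):
                aug[i][j] -= k * aug[c][j]
    v = [0.0] * 3
    for i in (2, 1, 0):
        v[i] = (aug[i][3] - sum(aug[i][j] * v[j] for j in range(i + 1, 3))) / aug[i][i]
    return v

def servo(points, f, pose, ideal_pose, gains, eps, period=0.25, max_steps=500):
    """Run the servo loop; returns (final pose, number of control periods used)."""
    ideal_t = features(points, f, ideal_pose)               # ideal densities
    q = list(pose)
    for step in range(max_steps):
        t = features(points, f, q)                           # present densities
        err = [ti - t0 for ti, t0 in zip(t, ideal_t)]        # density errors
        if math.sqrt(sum(e * e for e in err)) < eps:         # accepted error reached
            return q, step
        v = solve3(jacobian(points, f, q), [-k * e for k, e in zip(gains, err)])
        q = [qi + period * vi for qi, vi in zip(q, v)]       # apply velocities for one period
    return q, max_steps

# Hypothetical run: appointed points in focal-length units, start pose (0.2, -0.15, 2.5)
points = [(0.0, 0.0), (0.2, 0.0), (0.0, 0.2)]
final, steps = servo(points, 1.0, (0.2, -0.15, 2.5), (0.0, 0.0, 2.0), [1.0, 1.0, 1.0], 1e-6)
```

Near the goal each period shrinks the error by roughly a factor $1 - k_i\,\Delta t$ in this sketch, so with $\Delta t = 0.25$ s gains above 4 make the discrete loop overshoot and gains above 8 make it diverge; this is one reason to keep the proportional coefficients moderate relative to the command period.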

The real internal parameters of the camera are:

The remaining parameters are set as follows. The pixel coordinates of the three chosen points in the texture image are:

The ideal pose of the robot is:

The present pose of the robot is:

The initial estimates of the camera's internal parameters are:

The proportional control coefficients are:

The experimental results are shown in Figure 5. The horizontal axis is the servoing time and the vertical axis is the rotation angle or the amount of translation; the dotted lines mark the ideal camera pose.

Figure 5. Curves of the vision servoing process.

Many similar experiments were carried out under the same conditions, changing only the ideal and the present poses each time. The results were similar to those in Figure 5 and show that the robot moves from its present pose and position to the ideal pose and position with an acceptable error.

5. Conclusion and Discussion

It is difficult to use obvious geometric features such as corners, outlines or holes for robot visual servoing when the robot works in a textured environment, because the geometric features in such environments are homogeneous. The distribution density of the texture elements, however, changes with certain camera motions. Using this cue, we developed a new method based on distribution density analysis. The method does not use the shape or contour of the texture elements, so it requires neither complex geometric structure matching nor optical flow calculation, both of which may be infeasible in such environments. The main computation is the distribution density of the texture elements in different parts of the image. The most notable capability of the method is that it can be used with a dynamic textured surface, because the distribution density does not depend on the shape or contour of the texture elements. Experiments confirmed the effectiveness of the method.

However, the method has some weaknesses. First, vision servoing based on the distribution density of the texture elements is a statistical method, so the control precision is limited when the environment contains large texture elements and the density in the selected area is low; when high precision is required, the robot may oscillate slightly around the ideal position. Second, large tilt angles are not allowed, because at large tilt angles the texture elements far from the camera's optical axis may merge (conglutinate) in the image. Last, this statistical method cannot handle translation parallel to the camera image plane or rotation about the optical axis, because the distribution densities at all points in the view remain similar under these motions. The author will address this in future research.

Despite the shortcomings mentioned above, the proposed vision servoing method is effective: it solves a class of control problems for robots working in textured environments that lack obvious geometric features.