Abstract

The present study investigates whether adults and children exhibit different eye-fixation patterns when they look at human faces, machinelike robotic faces, and humanlike robotic faces. The results from two between-subjects experiments showed that children and adults have different facial recognition patterns: children tended to fixate more on the mouth of both machinelike and humanlike robotic faces than on human faces, while adults focused more on the eyes. The implications of notable findings and the limitations of the experiments are discussed.

1. Introduction

Do children and adults show the same or similar patterns when they interact with robots? Do children and adults view and form impressions of robots in different ways? These and related questions have become particularly relevant because R-Learning for children has recently gained much attention and popularity. The present study argues that these questions can be addressed by examining whether children and adults look at robotic faces differently when forming first impressions.

When we communicate with others, we exchange emotions, feelings and thoughts through various communication modalities. In addition to verbal communication, we use non-verbal modalities such as body movements, hand gestures and facial expressions [1, 2, 3, 4, 5, 6, 7]. Gross and Ballif suggested that facial expressions are one of the most efficient means of understanding others' emotions and feelings. Compared with adults, children do not yet have sufficient social communication skills to understand others' minds or feelings [8], which suggests that the ways in which children recognize and look at robotic faces may differ from those of adults.

Therefore, the aim of this paper is to identify different recognition patterns between children and adults when looking at robotic faces.

2. Literature Review

2.1. Essential Facial Components for Effective Human-Robot Interaction

To identify the main communication channels in human-robot interaction, DiSalvo analysed the facial components of various robots, including academic, commercial and entertainment robots as well as fictional robots from popular films. After examining 48 robots, DiSalvo found that 81% of the robots had eyes and 62% had a mouth, suggesting that eyes and mouths are crucial facial components for effective human-robot interaction [9, 10].

Kim and Han found that a robot's eyes were the most important facial component affecting children's preferences for robots [11]. Beyond the eyes, Bassili compared a robot that moves its mouth while speaking with a robot whose mouth cannot move, and found that mouth movement was key to effective communication and successful facial recognition [12]. The nose is another important core component because it is located between the eyes and the mouth: people's gaze must pass over the nose when they look from the eyes to the mouth. These three components (eyes, nose and mouth) are therefore considered the key facial components [13].

2.2. Children's Communication Skills

Children's social communication skills develop gradually. In early childhood, children try to read and understand the different thoughts, feelings and perspectives of others. For instance, they attempt to integrate social cues for social cognition, drawing not only on verbal sources but also on non-verbal information such as gestures, facial cues and tone of voice. Looking at others' faces is therefore an important social activity for gathering such crucial information [8, 14].

2.3. Cognitive Robots

Many cognitive robots have been developed to improve children's social communication skills [15, 16, 17, 18]. For example, Keepon was designed to engage in emotional communication with children and to improve their communication skills. Although Keepon was not capable of making facial expressions and delivered its emotions only through body movements and rotations, the robot was still able to convey diverse emotions through its eyes and mouth, which proved particularly effective for autistic children with limited social skills [18].

Because R-Learning (i.e., robot-assisted learning) for children has recently gained much attention, while earlier studies focused primarily on the adult population [19, 20], it is important to examine whether children interact with robots differently from adults.

Therefore, the present study intends to examine the facial recognition patterns of adults and children when they are exposed to human and robotic faces. Study 1 investigated whether adults and children show different eye-fixation patterns when they look at human faces and machinelike robot faces. Study 2 further explored the eye-fixation patterns when looking at humanlike robotic faces [13].

3. Study 1

3.1. Design

Study 1 was a between-subjects experiment with four conditions: 2 (participants' age: children vs. adults) × 2 (type of face: human vs. machinelike robotic).

3.2. Participants

Eighty participants (40 females, 40 males) were recruited from a large private university and a kindergarten in Seoul, South Korea. The children ranged in age from 6 to 11 years, with a mean age of 9.0 years (SD=1.70); the adults ranged in age from 23 to 32 years, with a mean age of 28.1 years (SD=2.22). All of the participants had normal eyesight. Each of the four conditions had 20 participants (10 females, 10 males).
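The cell structure of this 2 × 2 design can be sketched in code. The paper does not say how participants were allocated to face-type conditions; the sketch below assumes stratified random assignment within each age group and gender, which is one way to obtain the stated 20 participants (10 females, 10 males) per cell. Age group and gender are measured rather than assigned, so only face type is randomized. The function name `assign_face_type`, the participant IDs and the seed are hypothetical.

```python
import random
from collections import Counter

FACE_TYPES = ("human", "machinelike robotic")

def assign_face_type(participants, seed=0):
    """Hypothetical stratified random assignment of face type.

    participants: list of (participant_id, age_group, gender) tuples.
    Face type is randomized separately within each age-group x gender stratum.
    Returns {participant_id: (age_group, face_type, gender)}.
    """
    rng = random.Random(seed)
    strata = {}
    for pid, age_group, gender in participants:
        strata.setdefault((age_group, gender), []).append(pid)
    assignment = {}
    for (age_group, gender), ids in strata.items():
        shuffled = ids[:]
        rng.shuffle(shuffled)
        # Alternating after shuffling splits each stratum evenly across face types.
        for i, pid in enumerate(shuffled):
            assignment[pid] = (age_group, FACE_TYPES[i % 2], gender)
    return assignment

# 80 participants: 20 per age-group x gender stratum (hypothetical IDs).
participants = [
    (f"{age}-{gender}-{k}", age, gender)
    for age in ("children", "adults")
    for gender in ("female", "male")
    for k in range(20)
]
assignment = assign_face_type(participants)
cell_sizes = Counter((age, face) for age, face, _ in assignment.values())
cell_gender = Counter(assignment.values())
print(cell_sizes)  # 20 participants in each of the four age x face-type cells
```

Randomizing within strata, rather than over all 40 participants of an age group at once, guarantees the 10/10 gender balance in every cell instead of leaving it to chance.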

3.3. Apparatus and Stimulus

Photos of human and machinelike robotic faces were prepared. For the human faces, we hired twelve actors from various age groups: two teenagers (1 male, 1 female), four in their twenties (2 males, 2 females), four in their thirties (2 males, 2 females), and two in their forties (1 male, 1 female). They were instructed not to express any emotions or feelings during the photo shoot. We took eight photos of each actor (96 photos in total). After excluding 48 photos that showed facial expressions, the remaining 48 photos of six actors were selected.

The validity of the photos was verified in a pre-test with twelve adults, who rated on a 7-point Likert scale the degree to which they were able to identify facial expressions on the actors depicted in the photos (1=“

For the machinelike robotic faces, 64 images of 16 existing robots were collected. We selected robots that had eyes, a nose and a mouth. Based on a similar pre-test, 24 photos were selected as stimulus materials for the experiment. The robots were Kaspar, Kobian, EveR-2 Muse, and Jules [21, 22, 23, 24]. All of the photos were prepared at 1200 dpi with a resolution of 900×1200 pixels.

3.4. Experimental Environment

A 24-inch LCD monitor and a Tobii x120 eye tracker (Fig. 1) were set up in a room with a comfortable chair [25]. A laptop computer was placed away from the monitor so that participants would not be distracted by other computer components, such as the mouse. A desk was placed between the monitor and the participants to maintain a proper viewing distance. Two experimenters were present in the room to instruct participants and control the eye-tracking software.

Tobii x120 eye tracker

3.5. Procedure

As the participants entered the room, the experimenter gave them a brief overview of the experimental procedure. Before the main task, the participants were asked to look at the photos for calibration of the eye tracker.

When the main session began, the eye tracker started recording the participants' eye movements and fixation times. The participants were instructed to look at the monitor carefully. Twenty-four photos were displayed in random order on a black background, each for 4 seconds. To remove carry-over effects from the previous image, a black screen was displayed for 1 second between images.
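The trial timing described above can be sketched as a presentation schedule. The paper does not name its presentation software, so the function `build_schedule` is hypothetical; it assumes the 1-second black screen appears only between images (not before the first or after the last), which gives 24 × 4 + 23 × 1 = 119 seconds of stimuli and blanks per session.

```python
import random

def build_schedule(photo_ids, stim_s=4.0, blank_s=1.0, seed=None):
    """Return (photo_id, onset_s, offset_s) for each photo in one session.

    Photos appear in random order for stim_s seconds each; a blank_s-second
    black screen separates consecutive photos (none after the last photo).
    """
    order = list(photo_ids)
    random.Random(seed).shuffle(order)
    schedule = []
    t = 0.0
    for i, photo in enumerate(order):
        schedule.append((photo, t, t + stim_s))
        t += stim_s
        if i < len(order) - 1:
            t += blank_s  # inter-stimulus black screen
    return schedule

schedule = build_schedule(range(24), seed=1)
session_s = schedule[-1][2]
print(f"{len(schedule)} photos, session length {session_s:.0f} s")
# 24 photos, session length 119 s
```

A fresh seed per participant would give each participant a different random order, which is one way to keep order effects from lining up with a particular photo.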

3.6. Measurement

The eye tracker (Tobii x120) recorded the fixation time on the eyes, nose and mouth of the face in each photo. Because actual presentation times differed by a few milliseconds across photos (ranging from 3.991 to 4.007 seconds), fixation time was expressed as a percentage of each photo's total presentation time to allow an accurate comparison between conditions.
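The normalization described above amounts to dividing each area-of-interest (AOI) fixation time by the photo's actual presentation time. A minimal sketch follows; the function name `fixation_percentages` and the example fixation values are hypothetical, and only the 3.991-second presentation time comes from the text.

```python
def fixation_percentages(fixation_ms, presented_ms):
    """Convert per-AOI fixation times (ms) into percentages of the photo's
    actual presentation time (ms), so that photos shown for 3.991 s and
    4.007 s are compared on the same scale."""
    if presented_ms <= 0:
        raise ValueError("presented_ms must be positive")
    return {aoi: 100.0 * ms / presented_ms for aoi, ms in fixation_ms.items()}

# Hypothetical fixation times for one photo presented for 3.991 s (3991 ms).
pct = fixation_percentages({"eyes": 1200, "nose": 800, "mouth": 600}, 3991)
core_sum = sum(pct.values())  # percentage of time on the sum of core features
print({aoi: round(p, 2) for aoi, p in pct.items()}, round(core_sum, 2))
```

Summing the per-feature percentages gives the "sum of core features" measure analysed in Section 3.7.1.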

3.7. Results

Table 1 shows the average fixation time on the core features.

3.7.1. The Effects of Human vs. Machinelike Robotic Faces

An analysis of variance (ANOVA) was conducted to examine the effects of face type on the percentage of fixation time on the sum of the core features. In addition, a multivariate analysis of variance (MANOVA) was conducted to examine the effects of face type on the percentage of fixation time on each core feature (eyes, nose and mouth). The ANOVA indicated that face type did not have a significant effect on fixation time on the sum of the core features (p=.09). However, the MANOVA indicated that participants who looked at the machinelike robotic faces (M=24.00%, SD=17.10) showed a longer fixation time on the mouth than those who looked at the human faces (M=15.45%, SD=9.74), F(1,76)=16.81, p<.001, whereas participants who looked at the human faces (M=26.88%, SD=16.54) showed a longer fixation time on the nose than those who looked at the machinelike robotic faces (M=21.49%, SD=9.68), F(1,76)=4.10, p=.046.
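The reported degrees of freedom follow from the design. In a balanced 2 × 2 between-subjects design with 20 participants per cell, each effect has 1 degree of freedom and the error term has 80 − 4 = 76, hence F(1,76). The sketch below is a plain univariate two-way ANOVA on one dependent variable (for example, percentage fixation time on the mouth). It is not the multivariate MANOVA test itself, and the function name `two_way_anova` and the demo scores are hypothetical.

```python
from itertools import product
from statistics import mean

def two_way_anova(cells):
    """Univariate two-way between-subjects ANOVA for a balanced design.

    cells maps (level_of_A, level_of_B) -> list of scores, with the same
    number of scores in every cell.
    Returns {"A": (F, df_effect, df_error), "B": ..., "AxB": ...}.
    """
    a_levels = sorted({a for a, _ in cells})
    b_levels = sorted({b for _, b in cells})
    n = len(next(iter(cells.values())))
    if any(len(scores) != n for scores in cells.values()):
        raise ValueError("this sketch only handles balanced designs")
    cell_mean = {key: mean(scores) for key, scores in cells.items()}
    grand = mean(cell_mean.values())  # equals the overall mean when balanced
    a_mean = {a: mean(cell_mean[(a, b)] for b in b_levels) for a in a_levels}
    b_mean = {b: mean(cell_mean[(a, b)] for a in a_levels) for b in b_levels}

    # Sums of squares for the two main effects, the interaction and the error.
    ss_a = n * len(b_levels) * sum((m - grand) ** 2 for m in a_mean.values())
    ss_b = n * len(a_levels) * sum((m - grand) ** 2 for m in b_mean.values())
    ss_ab = n * sum((cell_mean[(a, b)] - a_mean[a] - b_mean[b] + grand) ** 2
                    for a, b in product(a_levels, b_levels))
    ss_err = sum((x - cell_mean[key]) ** 2
                 for key, scores in cells.items() for x in scores)

    df_a, df_b = len(a_levels) - 1, len(b_levels) - 1
    df_ab = df_a * df_b
    df_err = len(a_levels) * len(b_levels) * (n - 1)  # 2 * 2 * (20 - 1) = 76
    ms_err = ss_err / df_err
    return {
        "A": (ss_a / df_a / ms_err, df_a, df_err),
        "B": (ss_b / df_b / ms_err, df_b, df_err),
        "AxB": (ss_ab / df_ab / ms_err, df_ab, df_err),
    }

# Synthetic scores: A = age group, B = face type; only age differs here.
noise = [-1, 1] * 10  # 20 scores per cell with mean 0
demo = {
    ("children", "human"): [30 + e for e in noise],
    ("children", "robot"): [30 + e for e in noise],
    ("adults", "human"): [10 + e for e in noise],
    ("adults", "robot"): [10 + e for e in noise],
}
res = two_way_anova(demo)
print(res)  # large F for A; F is 0 for B and AxB; every effect has df (1, 76)
```

In this synthetic example the two face types have identical means, so the face-type and interaction F ratios are zero while the age effect is large.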

Mean and standard deviation of the percentage of fixation time on core facial features

3.7.2. Effects of Age Level

The age of the participants also had a significant effect on the percentage of fixation time on the eyes, mouth and nose. Although age did not have a significant effect on the percentage of fixation time on the sum of the core features, the MANOVA showed that adults (M=48.58%, SD=15.52) had a longer fixation time on the eyes than children (M=24.27%, SD=14.19), F(1,76)=52.57, p<.001, whereas children (M=28.68%, SD=14.45) had a longer fixation time on the mouth than adults (M=10.78%, SD=7.18), F(1,76)=73.60, p<.001. The results also indicated that children (M=28.56%, SD=16.10) had a longer fixation time on the nose than adults (M=19.81%, SD=9.14), F(1,76)=10.81, p=.002.

3.7.3. The Interaction between Face Type and Age Level

There were significant interactions between face type and age for the mouth, F(1,76)=23.89, p<.001, and for the nose, F(1,76)=14.45, p<.001: children looked at the machinelike robots' mouths relatively longer than at their noses, whereas adults showed no difference in their eye-fixation patterns across face types (Fig. 2, 3, 4, 5).

Eye-tracking image of one of robotic faces; the left shows the adults' and the right the children's

Mean and standard error of the percentage of the fixation time on eyes

3.8. Discussion

In Study 1, we examined the differences in the facial recognition patterns of children and adults looking at human and machinelike robotic faces. While the adults showed no difference in their eye-fixation patterns across face types, the children tended to pay more attention to the mouth than to the eyes or the nose of machinelike robotic faces. Interestingly, the children did not show this pattern when they looked at the human faces.

One of the major limitations of this study was that it did not consider other characteristics of the face, such as face shape and colour. For example, participants may be more likely to look at the core features when those features occupy a larger area of the face.

4. Study 2

4.1. Design

Study 2 was a between-subjects experiment with four conditions: 2 (participants' age level: children vs. adults) × 2 (Terminator face: human vs. humanlike robotic).

4.2. Participants

The same participants as in Study 1 took part in this study.

The mean and standard error of the percentage of the fixation time on the nose

The mean and standard error of the percentage of the fixation time on the mouth

4.3. Apparatus and Stimulus

One hundred photos of Arnold Schwarzenegger [26] depicted as the Terminator and 40 photos of a robotic version of the Terminator were collected from the Terminator film series, TV shows and Internet websites. Following the same selection procedure as in Study 1, three photos of Arnold Schwarzenegger as the Terminator and three photos of the mechanical version of the Terminator were selected as the stimulus materials for the experiment (Fig. 6).

4.4. Experimental Circumstances

The experimental environment, procedure and measurements were identical to those of Study 1.

A sample photo of the Terminator used in Study 2 (left: robotic face, right: human face)

4.5. Results

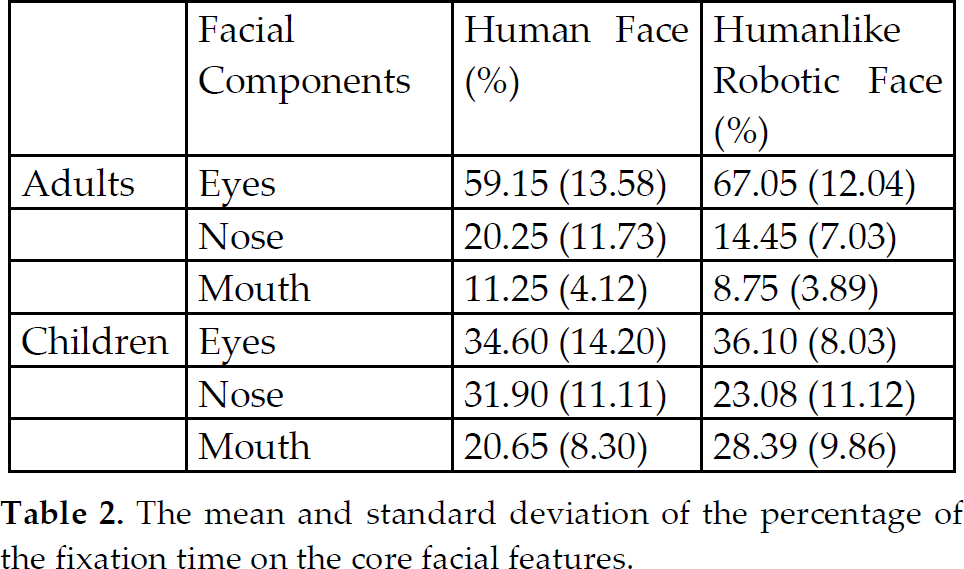

Table 2 shows the results of the average fixation time of the core features.

The mean and standard deviation of the percentage of the fixation time on the core facial features

4.5.1. The Effects of Human vs. the Robotic Face of the Terminator

A multivariate analysis of variance (MANOVA) was conducted to examine the effects of face type on the percentage of fixation time on each core feature (eyes, nose and mouth). Participants exposed to the human faces of the Terminator (M=26.08%, SD=12.73) showed a longer fixation time on the nose than those exposed to the humanlike robotic faces of the Terminator (M=18.77%, SD=10.17), F(1,76)=9.84, p<.001. However, there was no significant effect on the eyes (p=.089) or the mouth (p=.100).

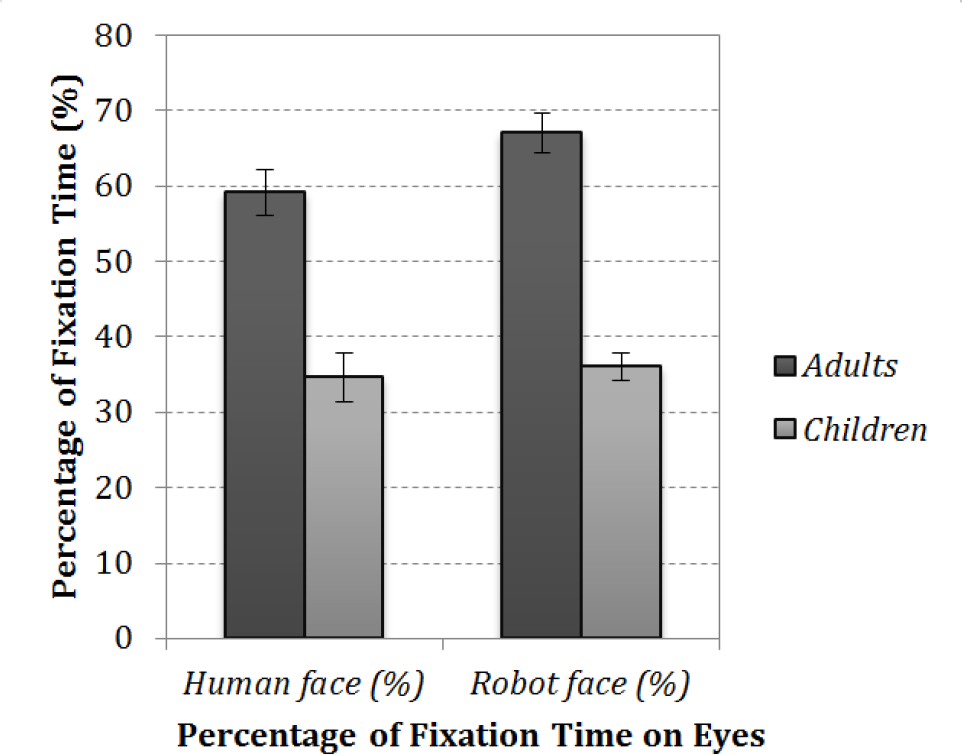

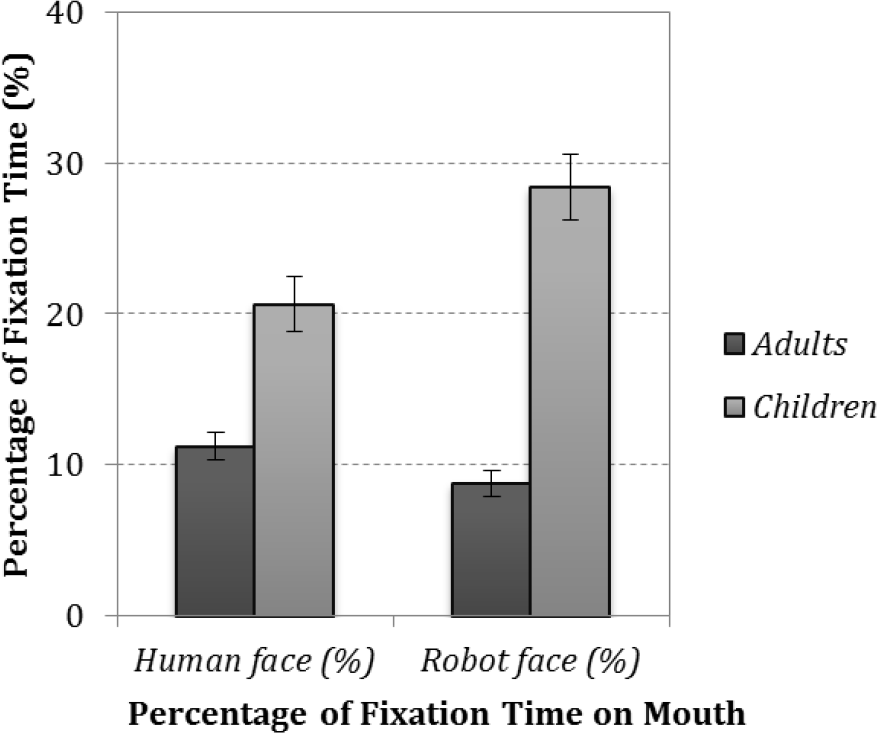

4.5.2. The Effects of Age Level

The MANOVA showed that adults (M=63.10%, SD=13.28) had a longer fixation time on the eyes than children (M=35.35%, SD=11.41), F(1,76)=103.44, p<.001, whereas children (M=24.52%, SD=9.81) had a longer fixation time on the mouth than adults (M=10.00%, SD=4.15), F(1,76)=85.08, p<.001. The results also indicated that children (M=27.49%, SD=11.85) had a longer fixation time on the nose than adults (M=17.35%, SD=9.99), F(1,76)=18.95, p<.001.

4.5.3. The Interaction between Face Type and Age Level

There was an interaction between face type and age. Consistent with the findings of Study 1, children looked at the mouth of the humanlike robotic faces longer than at the nose, F(1,76)=10.58, p=.002, whereas adults showed no difference in their eye-fixation patterns across face types (Fig. 7, 8, 9).

The mean and standard error of the percentage of the fixation time on the eyes

4.6. Discussion

Study 2 examined the effects of humanlike robotic faces on facial recognition patterns. Because the human and robotic faces shared the same face shape and the same core-feature areas, Study 2 confirmed that adults and children differ in their fixation tendencies. Fixation times on the eyes were also higher than in Study 1 (adults: 63.10% vs. 48.58%; children: 35.35% vs. 24.27%). This may be due to the effect of luminous materials: the eyes, especially in the robotic faces, looked like electric light bulbs, which may have attracted the participants' gaze (Fig. 6).

5. General Discussion

There has been growing research interest in the psychological effects of interactive robots. In this study, we conducted two experiments to identify and analyse differences in facial recognition patterns between children and adults. We also examined how face-scanning patterns differ between robot faces and human faces. A number of previous studies have indicated that social communication skills are strongly associated with gaze behaviour [27].

This study therefore treated facial recognition as an important behaviour when people communicate or interact with others. We also focused on differences in participants' age, because childhood is a critical period in which social communication skills develop. Adults and children took part in Study 1, viewing photos of actors and machinelike robots. Study 2 used photos of a human and a humanlike robot that share the same facial form (the Terminator). Participants' eye movements and fixation patterns were recorded with an eye tracker and then analysed for age-specific patterns. The present study found that children and adults have different eye-fixation patterns when recognizing human and robotic faces.

In Study 1, all of the participants tended to look at the core features of the face. However, the adults gazed longer at the eyes than at the mouth or the nose in both the robot and the human photos, whereas the children gazed at the eyes for a shorter time than at the mouth in the robot photos or at the nose in the human photos. These tendencies were more pronounced in Study 1 than in Study 2. There are three possible explanations. First, the mouths of the robots in Study 1 occupied a larger part of the face than the mouths of the humans did. Second, children may tend to focus more on dynamic features, such as the mouth, than on static features, such as the eyes. Third, other characteristics of the faces in the photos, such as face shape, might easily affect the children's attention or gaze.

To address the first and third explanations, Study 2 controlled for other possible features of the robot and human faces by using the same person's face frame. The results of Study 2 confirmed the findings of Study 1.

These results are interesting in that the children showed different fixation patterns from those of the adults. Although the children's overall fixation time on the core features was similar to that of the adults, the way that time was distributed across the features differed. An important implication is that the dynamic features of an interactive robot, such as the mouth, should be designed carefully if the robot is to be used with children. In other words, design guidelines for interactive robots for children should differ from those for other robots, which points in particular to a need for dedicated guidelines for educational and other service robots for children. Many other differences between humans and robots may also need to be considered.

The mean and standard error of the percentage of the fixation time on the nose

The mean and standard error of the percentage of the fixation time on the mouth

6. Limitations and Future Studies

The present study has a number of limitations. First, future studies should consider other modes of communication, including facial expressions, because facial expressions are one of the easiest ways of conveying emotions or states of mind [28]. Second, future studies may consider other characteristics of participants: participants' age and cultural background may affect their fixation patterns, and several studies have indicated that social interaction styles can differ across cultures [29, 30, 31]. Third, the present study used only facial images of humans and humanoid robots; future studies might include images of the entire bodies of humans and robots. Finally, other characteristics of the humans and robots in the photos, such as gender, may affect respondents' preferences and fixation patterns. Some previous studies have reported different fixation patterns depending on the gender of the people depicted in photos [32, 33].


7. Acknowledgments

A preliminary version of this study was presented at the IRoA 2011 conference [13]. This version includes a more extensive literature review and additional data analyses that address notable limitations discussed in the preliminary version. This study was supported by a grant from the World-Class University programme (R31-2008-000-10062-0) of the Korean Ministry of Education, Science and Technology via the National Research Foundation.