Abstract

Object tracking can improve the performance of mobile robots, especially in populated dynamic environments. A novel Joint Conditional Random Field Filter (JCRFF), based on a conditional random field with hierarchical structure, is proposed for multi-object tracking by abstracting the data associations between objects and measurements as a sequence of labels. Because the conditional random field makes no assumptions about the dependency structure between the observations and allows non-local dependencies between the state and the observations, the proposed method can not only fuse multiple cues, including shape and motion information, to improve the stability of tracking, but also integrate moving object detection with object tracking. The implementation of JCRFF-based multi-object tracking with measurements from a laser range finder on a mobile robot is also studied. Experimental results with the mobile robot developed in our lab show that the proposed method has higher precision and better stability than the joint probabilistic data association filter (JPDAF).

1. Introduction

Object tracking estimates the motion patterns of moving objects so that their positions can be predicted ahead of time. In dynamic environments this improves the performance of a robot in tasks such as person following, obstacle avoidance, map building and robot localization, so object tracking is regarded as one of the basic abilities a mobile robot should have.

Multi-object tracking estimates the states of an unknown number of objects. The main difficulty is that two problems must be solved simultaneously: data association and state estimation. Widely used approaches include the Nearest Neighbor (NN) approach, the Joint Probabilistic Data Association Filter (JPDAF) (Fortmann, T.; Bar-Shalom, Y. & Scheffe, M., 1983), Multi-Hypothesis Tracking (MHT) (Cox, J. I. & Hingorani, L. S., 1996) and the Particle Filter (PF) (Arulampalam, S.; Maskell, S.; Gordon, N. & Clapp, T., 2002) (Li, S. J.; Wang, H. & Chai, T.Y., 2006). The NN approach uses only the observation closest to the predicted state; it may be the simplest approach, but it is not robust enough in many situations. JPDAF, an extension of the probabilistic data association filter to multi-object tracking, estimates the state as a sum over all associations, weighted by the probability that a measurement is associated with an object. JPDAF considers only the associations at a single time step and assumes that the number of objects is known and fixed during tracking. Schulz proposed a sample-based JPDAF that tracks a variable number of objects by integrating JPDAF with a particle filter (Schulz, D.; Burgard, W.; Fox, D. et al., 2003). MHT recursively estimates the association hypotheses and assumes that each measurement may originate from a new object. MHT thereby handles the entire life cycle of tracks, from creation and confirmation to deletion (Arras, K.; Grzonka, S.; Luber, M. & Burgard, W., 2008). The PF-based approach estimates the states of objects with a set of weighted samples, so it can handle highly non-linear and non-Gaussian models (Khan, Z.; Balch, T. & Dellaert, F., 2005) (Hue, J. P.; Cadre, L. & Perez, P., 2002), but the number of samples it needs grows with the number of tracked objects and eventually becomes intractable.
Because these approaches are based on generative models, which assume that measurements are independent given the states of the objects, they cannot conveniently exploit the spatial and temporal dependencies inherent in the observations.
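
To make the weakness of the NN approach concrete, the following is a minimal sketch of nearest-neighbor data association. It is an illustration only, not the implementation of any cited work: the function name, the 2-D point representation and the `gate` distance threshold are assumptions introduced here.

```python
import math


def nearest_neighbor_association(predicted, measurements, gate=1.0):
    """Assign to each predicted object position the closest measurement.

    predicted, measurements: lists of (x, y) tuples.
    gate: hypothetical distance threshold (an assumption); a measurement
          farther away than this is never assigned.
    Returns one entry per object: a measurement index, or None.
    """
    assignments = []
    for px, py in predicted:
        best_idx, best_dist = None, gate
        for j, (mx, my) in enumerate(measurements):
            d = math.hypot(mx - px, my - py)
            if d <= best_dist:
                best_idx, best_dist = j, d
        assignments.append(best_idx)
    return assignments


# Well-separated objects are associated correctly.
print(nearest_neighbor_association([(0, 0), (2, 0)],
                                   [(0.1, 0), (1.9, 0.1), (5, 5)]))
# Two nearby objects both claim the single available measurement.
print(nearest_neighbor_association([(0, 0), (0.4, 0)], [(0.2, 0.05)]))
```

In the second call both objects are assigned the same measurement, because each object is treated independently. This is the kind of ambiguity that joint association methods such as JPDAF are designed to resolve.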

The Conditional Random Field (CRF) model, a kind of discriminative model, was originally proposed for sequential data labeling (Lafferty, J., McCallum, A. & Pereira, F., 2001). Because a CRF directly models the dependency between the current state and the observation, it can be extended by introducing more general relationships between the state and the observations. CRF and its variants have been used successfully for information extraction (Sunita, S. & William, W. C., 2005), image segmentation (Wang, Y.; Loe, K. F. & Wu, J. K. 2006) and action recognition (Wang, Y. & Mori, G., 2009). Some researchers have also used CRF to solve state estimation problems in a discriminative way. Limketkai proposed a discriminative version of PF called CRF-Filters and applied it to robot localization (Limketkai, B., Fox, D. & Liao, Lin., 2007). Hess proposed a discriminative method to train PF for multi-object tracking (Hess, R. & Fern, A., 2009). Taycher combined CRF and grid filters for people tracking (Taycher, L., Demirdjian, D., Darrell, T. & Shakhnarovich, G., 2006).
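
For reference, the standard linear-chain CRF for sequence labeling can be written as follows. The symbols are generic textbook notation introduced here for illustration (label sequence $\mathbf{y}$, observation sequence $\mathbf{x}$, feature functions $f_k$ with weights $\lambda_k$); they are not the notation used for JCRFF in the rest of this paper.

```latex
p(\mathbf{y} \mid \mathbf{x})
  = \frac{1}{Z(\mathbf{x})}
    \exp\!\Big( \sum_{t=1}^{T} \sum_{k} \lambda_k \, f_k(y_{t-1}, y_t, \mathbf{x}, t) \Big),
\qquad
Z(\mathbf{x})
  = \sum_{\mathbf{y}'}
    \exp\!\Big( \sum_{t=1}^{T} \sum_{k} \lambda_k \, f_k(y'_{t-1}, y'_t, \mathbf{x}, t) \Big)
```

Each feature function may depend on the whole observation sequence $\mathbf{x}$, which is why no independence assumption among the observations is required.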

In this paper a novel filter based on the Conditional Random Field (CRF) model, called the Joint Conditional Random Field Filter (JCRFF), is proposed for multi-object tracking by abstracting the data associations as a sequence of labels. Because it makes no assumptions about the dependency structure between observations and allows non-local dependencies between the state and the observations, JCRFF can not only fuse multiple cues, including shape and motion information, to improve the stability of tracking, but also integrate moving object detection with object tracking.

2. Problem Definition

The objective of multi-object tracking is to estimate the states of the objects, but during state estimation the data association problem, which determines the correspondence between observations and objects, has to be solved simultaneously. In multi-object tracking approaches such as JPDAF and MHT, an implicit variable is used to represent data association. In this paper, data association is treated explicitly in order to fully exploit the spatial and temporal information in the observations and thereby improve tracking performance.

Suppose there are

According to the rules of conditional distribution,

Since the state space of θ

3. Joint Conditional Random Field Filter

The Conditional Random Field (CRF) model is an undirected graphical model developed for labeling sequence data. Because a CRF directly models the conditional distribution over the hidden variables given the observations, it can handle arbitrary dependencies between the observations. In this section, a conditional random field model called the joint conditional random field is designed to explain the calculation of the two conditional probabilities

3.1 Joint Conditional Random Field (JCRF)

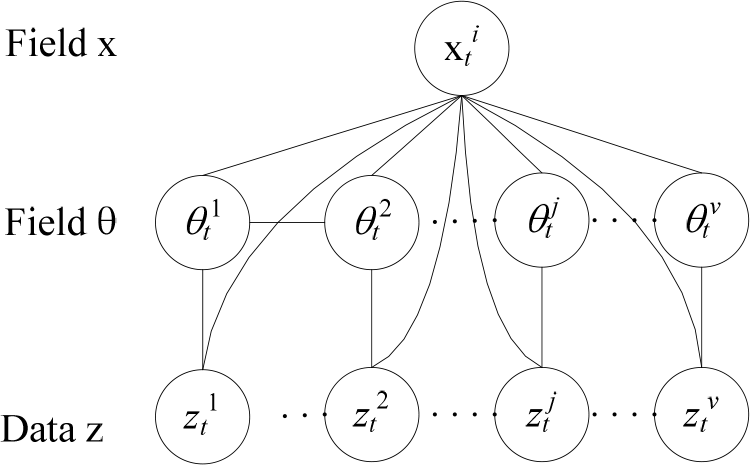

The undirected graph model of joint conditional random field proposed in this paper is shown in Fig.1. In the model, besides the observation data layer

Joint conditional random field

According to the model in Fig.1 and the definition of random field, the conditional probability

where

Random field for data association

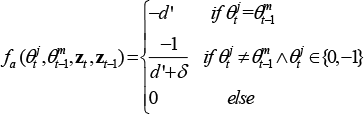

According to Fig.2, the potential function φ

where

And the potential function φ

where the potential function φ

3.2 JCRF Filter (JCRFF)

The conditional probability

Random field for state estimation

where

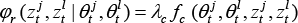

The potential function φ

where potential function φ

Where

The potential function φ

3.3 Inference of JCRFF

The objective of inference is to estimate the state of the objects and the data association sequence simultaneously according to the observation sequence

In algorithm 1 the function

Algorithm 1. Inference of JCRFF

3.4 Parameter Learning

In JCRFF each potential function is composed of feature functions and parameters that depend on the application. The feature functions and parameters of JCRFF for multi-object tracking with a laser range finder are explained in Section 4.

In parameter learning, the training data includes observation sequence

3.5 New Object and Object Disappearance Detection

New object detection and object disappearance detection, two difficult problems in multi-object tracking, can be solved conveniently using JCRFF.

Usually the appearance of a new object will occlude the background or other objects. So in JCRFF, the detection of new objects focuses on the regions in data association sequence θ

During multi-object tracking, an object may disappear because it leaves the sensor's field of view or is occluded by other objects. So in JCRFF, the disappearance detection of the

4. Multi-object Tracking with Laser Range Finder

Because a laser range finder provides precise range information about objects, it is widely used in modern robot systems. This section discusses the implementation details of JCRFF-based multi-object tracking with a laser range finder.

4.1. Preprocessing of Laser Readings

The observation data from laser range finder can be represented by sequence

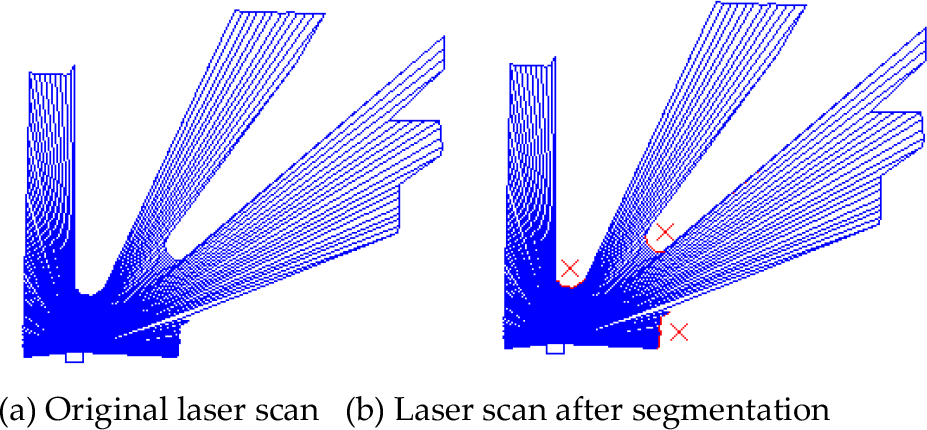

Laser reading

Since the laser readings corresponding to objects form concave regions, these concave regions are segmented out in the preprocessing step and called candidate object regions, which are shown in red in Fig.4 (b). Suppose the set of candidate object regions is Ψ

Algorithm 2. Preprocess of Laser Readings
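
The idea of segmenting concave regions can be sketched as follows. This is a minimal illustration under stated assumptions, not a reproduction of Algorithm 2: the function name, the `jump` discontinuity threshold, and the rule that a region must be closer to the sensor than the readings on both sides are all assumptions introduced here.

```python
def candidate_regions(ranges, jump=0.3):
    """Return (start, end) index pairs (inclusive) of candidate object regions.

    ranges: range readings in metres, ordered by beam angle.
    jump:   hypothetical discontinuity threshold in metres (an assumption).
    A segment is kept when the scan jumps closer at its start and jumps
    farther at its end, i.e. it sits in front of its surroundings.
    """
    # Split the scan wherever consecutive readings differ by more than `jump`.
    breaks = [i for i in range(1, len(ranges))
              if abs(ranges[i] - ranges[i - 1]) > jump]
    starts = [0] + breaks
    ends = [b - 1 for b in breaks] + [len(ranges) - 1]

    regions = []
    for s, e in zip(starts, ends):
        if s == 0 or e == len(ranges) - 1:
            continue  # a segment at the scan border has only one neighbour
        closer_than_left = ranges[s] < ranges[s - 1]
        closer_than_right = ranges[e] < ranges[e + 1]
        if closer_than_left and closer_than_right:
            regions.append((s, e))
    return regions


# A wall at 4 m with an object at about 1.5 m in front of it.
scan = [4.0, 4.0, 4.0, 1.5, 1.4, 1.5, 4.0, 4.0]
print(candidate_regions(scan))
```

A doorway or gap in a wall produces a segment that is farther away than its neighbours, so under this rule it is not reported as a candidate object region.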

4.2 Neighborhood Relation in Temporal Space

In JCRFF based multi-object tracking, the temporal neighbors of label node θ

Project laser readings

The laser reading

4.3 System Models

Designing the system models means specifying the detailed form of the potential functions in JCRFF according to the properties of the sensor used, i.e., the laser range finder in this paper.

1) Potential function φ

where Λ

where

where

2) Potential function φ

where Λ

where

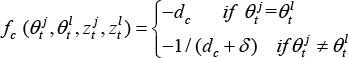

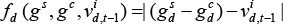

3) Potential function φ

This potential function means that the utility of an object being adjacent to the background is constant, and that objects are more likely to be adjacent to each other if the difference between

4) Potential function φ

In this paper the state of moving objects is

where Λ

where

5) Potential function φ

where Λ

4.4 Multi-object Tracking Algorithm

Multi-object tracking algorithm based on JCRFF is described in algorithm 3. In the algorithm function

Algorithm 3. Multi-object Tracking Algorithm

5. Experimental Results and Analysis

Experiments are carried out with a robot developed by our lab, shown in Fig. 5. The robot is equipped with a SICK laser range finder whose field of view is set to 180 degrees with an angular resolution of 1 degree. The onboard computer has a 2.4 GHz CPU. The experimental environment consists of two rooms in our lab building. At the same time the new object detection threshold

Robot used in experiments

Firstly, the performance of new object detection using JCRFF is tested. Two cases are considered: in the first case (Case 1) the new object moves slowly into the sensor's view, and in the second case (Case 2) the new object is already in the sensor's view but starts to move at some moment. In both cases the object moves randomly. Two ratios are measured: the ratio of successful detections of new objects, and the ratio of false detections in which static background is labeled as a new object. The results of 30 trials with JCRFF and JPDAF (Schulz, D.; Burgard, W.; Fox, D. et al., 2003) are compared in Table 1. They show that JCRFF detects new objects better than JPDAF in both cases, and that the false detection performance of the two algorithms is similar.

Experimental results of new object detection

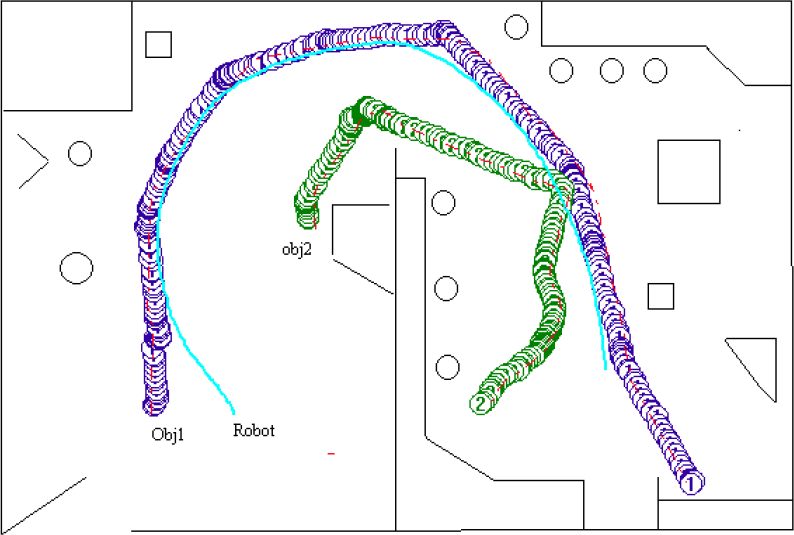

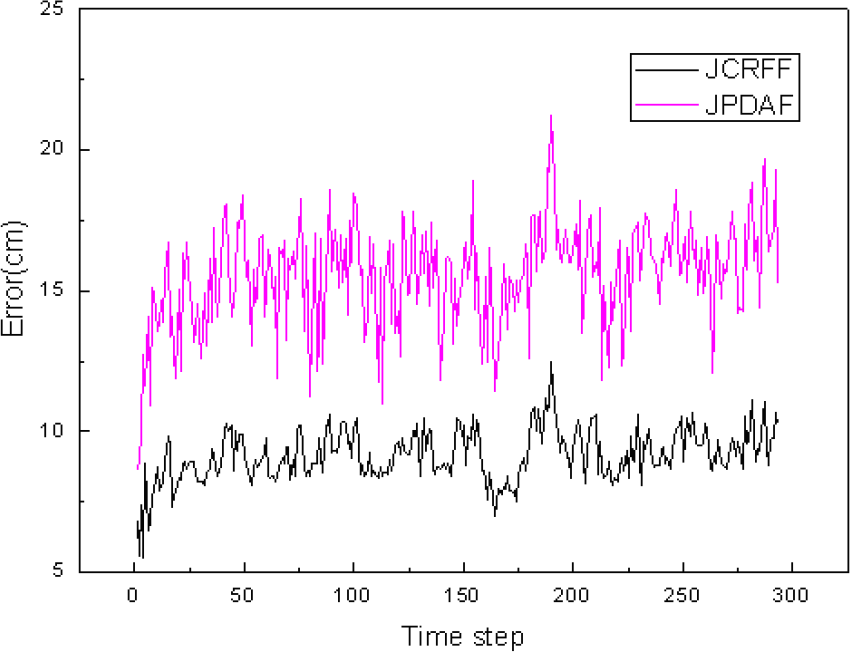

Secondly, the precision and success ratio of multi-object tracking are tested. In the test, the robot equipped with the SICK laser range finder, called the sick-robot, is commanded to track moving objects, which are two other robots called target-robots. The sick-robot follows one of the target-robots, which moves randomly in the environment; the other target-robot acts as an interfering object that enters the view of the sick-robot at random. During tracking, the odometry of the robots, which is taken as their true state, is recorded. One of the experimental results is shown in Fig.6, in which the lines with circles and the red dashed lines represent the object trajectories estimated using JCRFF and given by odometry, respectively, and the thick solid line represents the trajectory of the sick-robot. The figure shows that JCRFF estimates the state of the objects fairly well. The tracking errors of JCRFF and JPDAF, i.e., the differences between the object states estimated by the tracking algorithms and the odometry, are shown in Fig.7, which shows that the tracking error of JCRFF is much smaller than that of JPDAF. In 30 experiments, the success ratio of object tracking is 100% for JCRFF and 90% for JPDAF.
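
The two evaluation measures above can be sketched as follows. This is only an illustration: the paper does not state how position error or tracking success is defined, so the Euclidean position error, the `max_error` threshold and both function names are assumptions introduced here.

```python
import math


def tracking_errors(estimated, reference):
    """Per-step Euclidean distance between estimated and reference positions.

    estimated, reference: equally long lists of (x, y) tuples, where the
    reference is taken from odometry.
    """
    return [math.hypot(ex - rx, ey - ry)
            for (ex, ey), (rx, ry) in zip(estimated, reference)]


def success_ratio(runs, max_error=0.5):
    """Fraction of runs whose error never exceeds max_error.

    runs: list of per-run error lists.
    max_error: hypothetical success criterion in metres (an assumption).
    """
    ok = sum(1 for errs in runs if max(errs) <= max_error)
    return ok / len(runs)


errors = tracking_errors([(0, 0), (3, 4)], [(0, 0), (0, 0)])
print(errors)
print(success_ratio([[0.1, 0.2], [0.1, 0.9]]))
```

With a criterion of this kind, a run counts as a failure as soon as the estimate drifts away from the reference trajectory once, which matches the usual meaning of losing a track.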

Trace of tracked objects

Trace error of objects in tracking

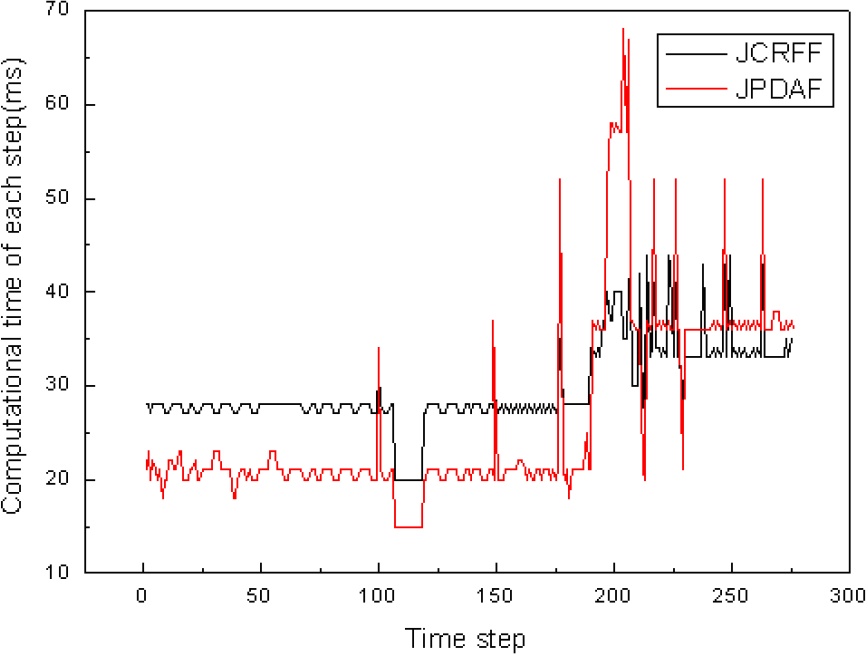

Lastly, the computational time of the multi-object tracking algorithms is tested. The computational time of JCRFF and JPDAF when tracking two moving objects is shown in Fig.8. Before time step 200, when there are only a few interfering regions in the background, the computational time of JCRFF is larger than that of JPDAF; after time step 200, when the number of interfering regions increases, the computational time of JCRFF is smaller than that of JPDAF. JCRFF is therefore preferable in complex environments.

Computational time of each step in tracking

6. Conclusions

A new joint conditional random field filter (JCRFF), based on a conditional random field with hierarchical structure, is proposed for multi-object tracking with a mobile robot system. The JCRFF-based multi-object tracking algorithm can not only perform new object detection and object tracking simultaneously, but also integrate local shape and motion information of moving objects to improve the precision of state estimation. Experimental results of multi-object tracking with a laser range finder show that JCRFF has higher precision and better stability than JPDAF in complex environments.

7. Acknowledgements

This work was supported by the Fundamental Research Funds for the Central Universities (No. 2009ZM0123), the Science and Technology Planning Project of Guangdong Province, China (No. 2009A040300008) and the Educational Commission of Guangdong Province (No. x2jsN9090170).