Abstract

Background

Progress testing is a method of assessing students' longitudinal progress using single best answer questions pitched at the standard of a newly graduated doctor.

Aim

To evaluate the results of the first year of summative progress testing at the University of Auckland for Years 2 and 4 in 2013.

Subjects

Two cohorts of medical students from Years 2 and 4 of the Medical Program.

Methods

A survey was administered to all involved students. Open text feedback was also sought. Psychometric data were collected on test performance, and indices of reliability and validity were calculated.

Results

Mean scores increased across the three tests. Reliability of the assessments was uniformly high, and concurrent validity was good. Students believed that progress testing assists in integrating science with clinical knowledge and improves learning. Year 4 students reported improved knowledge retention and deeper understanding.

Conclusion

Progress testing has been successfully introduced into the Faculty for two separate year cohorts and results have met expectations. Other year cohorts will be added incrementally.

Recommendation

Key success factors for introducing progress testing are partnership with an experienced university; multiple, iterative briefings with staff and students; and demonstrating the usefulness of progress testing by providing students with detailed feedback on their performance.

Introduction

Progress testing can be defined as “a form of longitudinal examination which, in principle, samples at regular intervals from the complete domain of knowledge considered a requirement for medical students on completion of the undergraduate programme”. 1 All progress tests are written assessments using single best answer format. 2

Progress testing differs from more traditional tests. Historically, assessment in medical schools has occurred soon after a particular curriculum subject has been taught and has been limited to that subject. Progress testing was developed as a concept to sever this direct relationship between education in a particular subject and its assessment. 3 Progress test items are commonly clinically based, require knowledge of the sciences underpinning medicine to be integrated with clinical knowledge, and cover the entire medical school curriculum. The test is set at the level of knowledge expected of a first-year house officer.

The major advantage of progress testing is that it discourages rote memorization in favor of integrated, higher order knowledge. 4–6 Other recognized benefits include improved feedback to students and to academic departments on student attainment and teaching, 7 the fostering of deep learning strategies, 8 changes in study habits, 9 reduced stress from spreading assessment across several events, 10 and early identification of both high-achieving and struggling students. 11 For these reasons, the method is increasingly used in medical schools internationally. 8 A more detailed history of progress testing and its advantages for creating a learning environment can be found in the AMEE guide on progress testing. 2

The University of Auckland has worked closely with Peninsula College of Medicine and Dentistry (PCMD) to develop policies for delivering, analyzing, and reporting progress tests. The framework chosen at this university is a 3-hour test of 125 items that students sit three times per year, irrespective of year level. A large databank of more than 4000 items is available to ensure that items are not repeated within a three-year timeframe. Formula scoring is used: 0.25 of a mark is deducted for an incorrect answer, and a “Don't know” option results in an item score of zero. The blueprint is based on the Professional and Linguistic Assessments Board (PLAB) blueprint. 12 All items are reviewed by a moderation group to check validity, wording, and answer. After each test, the results are reviewed for items that did not perform as expected, using point-biserial correlation, analysis of distractors, and the index of difficulty. These items are then discussed by the moderation group, which decides whether each item should be kept or excluded from analysis. In progress tests, all but the final year of the program are usually norm-referenced because of the difficulty of applying criterion-referenced methods to multiple class cohorts. 13,14

Progress testing is being introduced incrementally at the University of Auckland: Years 2 and 4 participated in 2013, and by 2015 all students from Year 2 to Year 6 will be required to complete these tests. In anticipation of Years 2 and 4 sitting progress tests in 2013, the Year 3 cohort of 2012 was invited to take a pilot test. In 2013, students in Years 2 and 4 of the program were required to take three progress tests. For Year 2, the first test was formative and the remaining two were summative, with limited weighting and in addition to end-of-module testing. For Year 4, all three were summative. This paper reports the evaluation of the three 2013 tests and the 2013 feedback from Year 2 and Year 4 students on progress testing, and suggests a possible framework for evaluating progress testing.

Method

The University of Auckland Human Participants Ethics Committee approved the study. Aggregated data for the separate Year 2 and Year 4 cohorts were collected to assess the progress of each cohort from test to test. For Year 2, 241, 238, and 240 students sat the three tests, respectively; for Year 4, the numbers were 203, 201, and 202. Reliability of each test was analyzed using the Kuder–Richardson Formula 20 (KR-20) coefficient.
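A minimal sketch of the KR-20 calculation on a candidates × items matrix is shown below. It assumes each item has already been dichotomized (1 = correct, 0 = incorrect or “Don't know”); that coding choice, the function name, and the toy data are illustrative assumptions, not the authors' software.

```python
from statistics import pvariance


def kr20(matrix):
    """Kuder-Richardson Formula 20 for a candidates x items matrix of 0/1 scores.

    Assumes every row has the same number of items and that total scores vary.
    """
    n_candidates = len(matrix)
    k = len(matrix[0])
    # Sum of item variances p*q, where p is the proportion correct on each item
    sum_pq = 0.0
    for j in range(k):
        p = sum(row[j] for row in matrix) / n_candidates
        sum_pq += p * (1 - p)
    # Population variance of total scores, consistent with p*q above
    var_total = pvariance([sum(row) for row in matrix])
    return (k / (k - 1)) * (1 - sum_pq / var_total)


# Hypothetical 4-candidate, 3-item example
scores = [
    [1, 1, 1],
    [1, 1, 0],
    [1, 0, 0],
    [0, 0, 0],
]
print(round(kr20(scores), 2))  # 0.75
```

Population variances are used for both the item terms and the total score so that the ratio is internally consistent; with a real 125-item test the matrix would simply have more columns.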

To calculate concurrent validity, the performance of Year 4 students in the Clinical and Communication Skills (CCS) domain was compared with their performance in progress tests. For this calculation, each student's scores on the three progress tests were averaged. The CCS domain combines 10 separate assessments, including two Mini-CEX, one short OSCE in musculoskeletal medicine, an end-of-year six-station OSCE, and an assessment in Drug and Alcohol. Grades of Distinction, Pass, Borderline, and Fail are possible for all these clinical assessments. For this analysis, all the assessments were equally weighted, and numerical scores of 0, 1, 2, and 3 were substituted for the grades of Fail, Borderline, Pass, and Distinction, respectively.

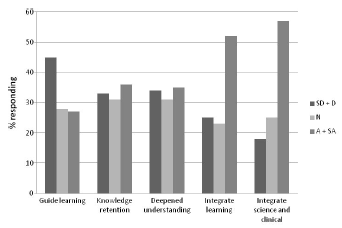

At the end of 2013, feedback was sought from Year 4 students through a questionnaire using Likert scales. The questions asked were: Did use of progress tests (PTs) enhance retention of knowledge? Did use of PTs enhance learning? Did PTs deepen understanding of clinical medicine? Did PTs help to integrate science with clinical medicine? Was the student confident in preparing for PTs? Responses were received from 115 students (57% of the Year 4 cohort) after they had taken three summative progress tests. For reporting, responses were collapsed into three categories: “Significantly Disagree” and “Moderately Disagree” were combined, as were “Slightly Disagree” and “Slightly Agree”, and “Moderately Agree” and “Strongly Agree”.
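The collapsing of the six Likert responses into three reporting categories can be expressed as a simple lookup table. The six response labels are taken from the Methods; the collapsed category names ("Disagree", "Neutral/slight", "Agree") and the function name are hypothetical labels chosen for this sketch.

```python
from collections import Counter

# Six-point labels from the Methods, mapped to hypothetical collapsed categories
COLLAPSE = {
    "Significantly Disagree": "Disagree",
    "Moderately Disagree": "Disagree",
    "Slightly Disagree": "Neutral/slight",
    "Slightly Agree": "Neutral/slight",
    "Moderately Agree": "Agree",
    "Strongly Agree": "Agree",
}


def collapse_percentages(responses):
    """Percentage of respondents in each collapsed category for one question."""
    counts = Counter(COLLAPSE[r] for r in responses)
    n = len(responses)
    return {cat: 100 * counts[cat] / n for cat in ("Disagree", "Neutral/slight", "Agree")}
```

Keeping the mapping in one table makes the grouping explicit and easy to audit if a different grouping is later preferred.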

Feedback was sought from Year 2 students in the form of a survey. The questions sought feedback on the use of progress tests to guide learning, enhance retention of knowledge, deepen understanding, integrate learning across the taught modules, and integrate basic science theory with clinical practice. Responses were received from 190 of 242 students (79% of the cohort).

Results

Psychometrics

The KR-20 scores for each test are given in Table 1.

Table 1. KR-20 for progress tests.

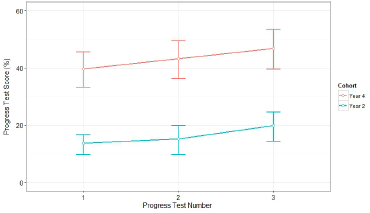

Test reliability was consistently high, with a KR-20 of either 0.84 or 0.85 for all six measurements (three tests in each of two cohorts). Mean scores increased across the three tests for each cohort. The P25 (score below which 25% of the class scored) and P75 (score above which 25% of the class scored) are given in Figure 1 and showed a corresponding increase.
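The summary statistics plotted for each test (mean, P25, P75) can be computed with Python's standard library. The paper does not state which percentile interpolation method was used, so the "inclusive" method here is an assumption; other methods can give slightly different P25 and P75 values on small samples. The function name is hypothetical.

```python
from statistics import mean, quantiles


def summarize(scores):
    """Mean, P25 and P75 of one cohort's scores on one test.

    Assumes linear interpolation between order statistics ("inclusive" method).
    """
    p25, _median, p75 = quantiles(scores, n=4, method="inclusive")
    return {"mean": mean(scores), "P25": p25, "P75": p75}


print(summarize([5, 1, 4, 2, 3]))  # {'mean': 3, 'P25': 2.0, 'P75': 4.0}
```

Running this once per cohort per test yields the three series shown in the figure.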

Figure 1. Results of Year 2 and Year 4 across three 2013 progress tests: mean, P25, and P75.

Concurrent validity

The correlation of progress test scores with CCS domain scores gave a Pearson's r of 0.54, indicating a moderate to strong correlation. The coefficient of determination (R²) is 0.29, indicating that 29% of the variance in students' CCS domain performance is shared with (statistically accounted for by) their progress test performance. A scatter plot is consistent with a linear relationship (Fig. 2).
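As a worked check: for a simple bivariate linear relationship, the coefficient of determination is the square of Pearson's r, so the reported r of 0.54 corresponds to roughly 29% shared variance.

```latex
R^2 = r^2 = (0.54)^2 = 0.2916 \approx 0.29
```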

Figure 2. Scatterplot of CCS score and progress test results.

Year 4 end of year survey

The questions are detailed in the Methods section. The results are provided in Figure 3.

Figure 3. Year 4 survey.

Although there was some diffidence about preparing for progress tests, it is also clear that students considered the tests assisted in knowledge retention, deepened understanding of clinical medicine, assisted with integrating knowledge, and enhanced their learning.

The qualitative section of the survey revealed more about why students gave the responses above. For many students, progress testing was seen as requiring a different and more clinically based approach to learning:

“I liked the progress tests because they motivated me to learn as much as I could in the clinical environment, and they motivated me to learn by seeing patients.”

“They were fun to do from the point of view of being clinical and making me think in a clinical fashion.”

Comments on preparation revealed that many students found revising for progress tests dissimilar to their prior experience of studying for examinations:

“Study for progress tests and study relevant to clinical medicine was quite different to study for pre-clinical years and took quite a while to adapt to this.”

“Progress tests require approximately zero preparation – it feels like all the preparation to be done is through the clinical portion of the course.”

“Throughout the year I worked hard to learn and relearn material which I was facing on the wards and I believe this showed in my progress testing.”

However, not all comments were favorable about changes in study habits:

“For me they actually inhibit my learning as there is no external motivation to study particular topics in depth.”

“Progress tests did not work for my learning, feel I have actually lost overall knowledge this year.”

Several students commented on the reduced stress levels associated with progress testing:

“I had initial reservations about the progress test, but I feel now that is an appropriate test. It has caused less stress and anxiety and I am now preparing better for the tests.”

“Progress tests have reduced anxiety levels towards assessments for me this year.”

Year 2 feedback

Year 2 feedback is presented in Figure 4.

Figure 4. Year 2 feedback.

Students perceived that progress testing assisted in integrating science with clinical medicine and in integrating learning; they were ambivalent about the ability of the tests to promote knowledge retention or deepen understanding, and slightly negative about the ability of the tests to guide learning.

Positive open comments from Year 2 students focused on the clinical nature of progress tests and the integrated learning they require:

“Progress Tests helped me focus on the importance of thinking about what I've learnt in the modules in a clinical context.”

“Progress tests are a good chance to see practical application of our knowledge and they are a challenge/motivating factor.”

“Might need to change HOW course is taught. Progress test is very clinically focused whereby lecture content is not.”

Negative comments mostly centered on the difficulty of sitting a clinical test so early in the students' education:

“At this stage of our learning, progress tests are not a good assessment of our learning. It's all about guessing and doesn't reflect how much you've learnt from modules.”

“The Q's of the progress test are not at all suited to what we have learnt this year.”

Discussion

This evaluation of progress testing incorporated a number of facets: psychometric evaluation, key success factors, and student feedback.

Psychometric analysis of the progress tests revealed a KR-20 of 0.84–0.85. The Kuder–Richardson Formula 20 (KR-20) was chosen as the measure of internal consistency because the item scores are dichotomous; for dichotomous items, KR-20 gives the same result as Cronbach's alpha. A KR-20 >0.7 is acceptable and >0.8 is preferable. 15 Values >0.9 may indicate redundancy of items or an unnecessarily long assessment. 16 The three tests reported in this paper therefore had satisfactory reliability. The KR-20 is calculated on slightly fewer than 125 items, because post-test moderation of the raw data excludes items that have performed poorly (usually 2–3 items). These items are excluded before feedback on performance is given to students.
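Why KR-20 and Cronbach's alpha coincide for dichotomous items can be seen from the two formulas, in standard notation: k items, p_i the proportion answering item i correctly, q_i = 1 − p_i, σ_i² the variance of item i, and σ_X² the variance of total scores (population variances throughout). For a 0/1 item the variance is exactly p_i q_i, so alpha reduces to KR-20:

```latex
\alpha = \frac{k}{k-1}\left(1 - \frac{\sum_{i=1}^{k} \sigma_i^2}{\sigma_X^2}\right),
\qquad \sigma_i^2 = p_i q_i \ \text{for a 0/1 item}
\;\Longrightarrow\;
\alpha = \mathrm{KR\text{-}20} = \frac{k}{k-1}\left(1 - \frac{\sum_{i=1}^{k} p_i q_i}{\sigma_X^2}\right)
```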

The concurrent validity analysis compares student performance on progress tests with performance in clinical assessments, and the correlation found was moderate to strong. The r value is high considering that the clinical assessments used in this study combined different methods, including Mini-CEX and OSCE-style assessments. A reasonable correlation was expected, based on other research that examines the relationship between written and clinical assessments. 17

It is reassuring that the mean score for both Years 2 and 4 increased across the three tests. However, historical data from PCMD indicate that cohort mean scores do not increase consistently from test to test, and some tests show a decrease. This reflects the challenge of test equating, because individual tests may vary in difficulty. Thus, it is unlikely that all progress tests in the program will continue to show such incremental increases.

Overall, the feedback indicates that students perceive progress testing as having considerable educational value. Students appear to agree that the objectives of encouraging deeper and more integrated medical knowledge have been largely met. Preparation for progress tests is an issue for some students. This is unsurprising, as the nature of the assessment does not lend itself to short, intense periods of study on isolated topics, an approach that may have been successful with more traditional testing techniques. Rather, preparation is required that focuses on clinically relevant information drawn from multiple preclinical and clinical sources and across all medical disciplines. Of particular note was the strong indication from students that the test had a clinical or real-world focus. Alongside this was a recognition that preclinical knowledge alone was of limited use in preparing for the tests.

The issue of competitiveness arising from norm-referenced standard setting was seldom mentioned by the Year 4 group. There was also little concern about negative marking or the stress of the assessment process (issues that appeared problematic in student feedback before the summative tests). Indeed, the Year 4 cohort appeared to consider that progress testing reduced stress.

The partnership with a university experienced in running progress tests was a critical success factor in the introduction of progress testing. As stated by two authors with considerable experience in progress testing, “introducing progress testing involves not only a change in thinking about assessment but also an academic cultural change”. 18 The partnership assisted with developing policies, processes, analytical capability, and item sharing. Combining psychometric data, student feedback (including the acceptability of the assessment), and the performance of key success factors such as partnership with experienced progress test providers may offer a promising framework for evaluating progress tests.

Limitations

Progress testing is still a relatively new part of the assessment suite and student experience so far is limited to three summative tests over a single year. The long-term benefits of progress testing such as encouraging clinical learning in the early years of the medical program and providing a clear vision of the depth of clinical knowledge that is needed by the end of the medical program may not yet be apparent to students.

The Kuder–Richardson coefficient assumes dichotomously scored items. However, formula scoring introduces a third outcome alongside correct and incorrect (“Don't know”, scored zero). This may have a small impact on the coefficient.
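One way to gauge the effect described above is to compare Cronbach's alpha on formula-scored items (1, −0.25, 0), which alpha accepts because it does not require dichotomous data, with alpha on the same responses dichotomized (equivalent to KR-20). The sketch below does this on a hypothetical four-candidate, three-item example; the outcome codes, names, and data are assumptions, and this is an illustrative check rather than an analysis the authors report.

```python
from statistics import pvariance

# Hypothetical outcome codes: "C" correct, "I" incorrect, "D" Don't know
FORMULA = {"C": 1.0, "I": -0.25, "D": 0.0}
DICHOTOMOUS = {"C": 1, "I": 0, "D": 0}  # incorrect and Don't know both scored 0


def cronbach_alpha(matrix):
    """Cronbach's alpha for a candidates x items matrix of numeric item scores."""
    k = len(matrix[0])
    item_var = sum(pvariance([row[j] for row in matrix]) for j in range(k))
    total_var = pvariance([sum(row) for row in matrix])
    return (k / (k - 1)) * (1 - item_var / total_var)


def rescore(outcomes, scheme):
    """Convert outcome codes to numeric item scores under a scoring scheme."""
    return [[scheme[o] for o in row] for row in outcomes]


outcomes = [
    ["C", "C", "C"],
    ["C", "C", "I"],
    ["C", "D", "I"],
    ["I", "D", "D"],
]
alpha_formula = cronbach_alpha(rescore(outcomes, FORMULA))
alpha_01 = cronbach_alpha(rescore(outcomes, DICHOTOMOUS))  # equals KR-20 here
print(round(alpha_formula, 3), round(alpha_01, 3))  # 0.663 0.75
```

In this toy example the two coefficients differ noticeably; with 125 items and hundreds of candidates the difference may be much smaller, which is why the impact on the reported KR-20 is described only as possibly small.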

The two surveys used different questions and different Likert scales. The question formats and scales used for each year are historical: they allow longitudinal comparison of student feedback over many years but make direct comparison between the two cohorts problematic.

Conclusions

Progress testing has been introduced as a significant part of the assessment suite in the medical program at the University of Auckland. Important success factors include partnership with an experienced university, moderation of items both before and after tests, and running a pilot test. The combination of multiple and iterative briefing and feedback opportunities coupled with the experience of a pilot test with no academic consequences has proved valuable in gaining student support for progress testing. Student feedback after three summative tests indicated an understanding of the positive aspects of this assessment method and decreasing levels of concern as students gain experience with the method. The 2013 testing data demonstrate a progressively improving standard for student cohorts. The reliability of the tests was acceptable and a measure of concurrent validity reassuring. Progress testing will continue to be implemented for all years of the medical program. Possible frameworks for the systematic evaluation of progress tests have been suggested.

Author Contributions

Conceived and designed the experiments: SL, WB. Analyzed the data: SL, VM, JY. Wrote the first draft of the manuscript: SL. Contributed to the writing of the manuscript: SL, JY, KB, RB, WB. Agree with manuscript results and conclusions: SL, JY, WB, VM, RB, BO'C, KB. Jointly developed the structure and arguments for the paper: SL, JY. Made critical revisions and approved final version: JY, WB, VM, RB, BO'C, KB. All authors reviewed and approved the final manuscript.