Abstract

Replication is among the most widely investigated techniques in distributed environments. It stores multiple copies of the same data at different locations so that, whenever data is needed, it can be fetched from the nearest accessible copy, which avoids delays and improves system performance. Managing replica placement in the cloud raises three key challenges: determining the best time to create replicas, the kind of files to replicate, and the best location to store the replicas. This survey reviews 65 articles published on data replication in the cloud and offers a detailed analysis. The analysis begins by presenting the replication strategies used in the reviewed articles, followed by the performance measures reported by each contribution. The survey also offers a comprehensive examination of data auditing systems, an analytical evaluation of replication handling in the cloud, and an examination of the evaluation tools used in the papers. Finally, it describes research issues and limitations that may help researchers in future work on pattern mining for data replication in the cloud.

Nomenclature


Operating systems, storage, networks, hardware, databases, and even complete software applications are supplied to consumers as on-demand services through cloud computing, which is a network-based architecture [45,63]. Cloud computing does not depend on any particular new technique, but it saves money and improves the scalability of IT service management. SaaS, IaaS, PaaS, and EaaS are its four service types. Large volumes of data are now a significant and critical portion of the resources shared across several scientific fields. In several disciplines, the size of data is measured in terabytes or even petabytes. Cloud data centres [61,67] are often used to store such massive amounts of data. Data replication is therefore commonly used to manage large amounts of data by producing identical copies, known as replicas, in geographically dispersed locations. Data replication [56,74] has the benefit of accelerating data access, lowering access latency, and enhancing data availability. To improve the response time for consumers, a common method is to deploy numerous replicas spread across geographically distant clouds. The overheads of producing, maintaining, and updating replicas are significant, and keeping ‘multiple copies of data at several places’ is the difficult concern in distributed setups. Cloud service providers offer a variety of geographically dispersed facilities that users can share in accordance with the Service Level Agreement, and the market for these services is expanding quickly. Cloud computing is a powerful paradigm for addressing the requirements of people and companies, in which information may be exchanged across the Internet. 
On a wide scale, distributed cloud-based or peer-to-peer (P2P)-based material can be effectively replaced by the peer-to-peer cloud (P2P-Cloud). The primary goal of data replication is to increase performance for data-intensive applications by addressing major issues such as availability, reliability, security, bandwidth, and response time of data access [29,57,58,70].

In the field of distributed and cloud computing, data replication [71] has been widely investigated. If one of the nodes is unavailable, the data can be retrieved from another node. Data replication covers the staging, placement, and transfer of data across a cloud. Data staging refers to the temporary storage of data for evaluation at a later stage of implementation. Cloud service providers frequently use data replication to meet their service level goals for availability and response time. Cloud computing is a rapidly evolving paradigm that gives users flexible, on-demand access to cloud services under a pay-as-you-go pricing structure. Data replication is a well-known method for increasing data availability, lowering bandwidth usage, and achieving fault tolerance [23,60,68]. If a shared resource is unavailable in a cloud computing [62] system, data is “staged-in” at the execution site and later “staged-out” of the storage. Data placement is the process of placing the data at various locations, and data movement concerns how (a) data should be transferred to maintain replication levels and (b) data is made accessible from various locations. Traditionally, many strategies improve cloud performance by dividing a file into multiple blocks and distributing the pieces among data nodes for parallel data transmission. Data management and replication strategies should be designed to meet QoS targets while preventing performance degradation. Mostly, data replication is used to handle enormous amounts of data in a distributed manner. Data replication [22] improves data availability, increases data access speed, and lowers access latency. 
When replicating data in cloud storage clusters, two major issues must be considered: the right number of data replicas, and the correct placement of those replicas in the system so that tasks complete efficiently.
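The block-based strategy described above can be sketched in a few lines of Python. This is a minimal illustration, not a method taken from any reviewed paper: the function names, the fixed block size, and the round-robin placement rule are all assumptions made for the example.

```python
def split_into_blocks(data: bytes, block_size: int) -> list[bytes]:
    """Cut a file's bytes into fixed-size blocks (the last one may be shorter)."""
    if block_size <= 0:
        raise ValueError("block_size must be positive")
    return [data[i:i + block_size] for i in range(0, len(data), block_size)]


def place_blocks(num_blocks: int, nodes: list[str], replicas: int) -> dict[int, list[str]]:
    """Assign every block to `replicas` distinct nodes.

    Hypothetical round-robin rule: block b starts at node (b mod n) and its
    remaining copies go to the following nodes, so copies never share a node.
    """
    if not 1 <= replicas <= len(nodes):
        raise ValueError("replicas must be between 1 and the number of nodes")
    n = len(nodes)
    return {b: [nodes[(b + r) % n] for r in range(replicas)] for b in range(num_blocks)}


blocks = split_into_blocks(b"abcdefgh", 3)
layout = place_blocks(len(blocks), ["n1", "n2", "n3"], replicas=2)
```

Both questions raised in the paragraph appear as explicit parameters here: `replicas` is the number of copies, and the body of `place_blocks` is the placement decision that the surveyed strategies try to optimize.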

Multiple levels of replication [47] are typical in cloud-based systems that run worldwide, particularly between servers, clusters, data centers, and even cloud systems. Conventional approaches for consistency across an object’s replicas are pessimistic (i.e. lock-based) and optimistic (i.e. non-lock-based). Although replication generally enhances application performance, there are situations in which it results in poor overall performance. When a data file is under read load, updates must be coordinated across the network, which reduces performance. The replication protocol used, as well as the replication cost, has a significant impact on the replication process [42,70]. Despite its many benefits, data replication can increase costs and energy consumption. Data replication can also be viewed as a method of enhancing performance, availability, and dependability by maintaining multiple copies of a data file across many sites. It is frequently used in applications that require a large amount of data to be collected from multiple locations across the world. Whenever the replica at one site fails, the requested data file can still be supplied from other locations. The purpose of data replication is to fulfill requests from nearby locations that hold the appropriate replicated files. Thus, a data replication [54,55] technique that balances several trade-offs is required. Optimization has become a popular area in recent years for determining the best answer to complex situations. As a consequence, researchers have concentrated on meta-heuristic algorithms to address replication issues. Many people and businesses outsource their data to remote cloud service providers (CSP) to cut maintenance costs and the workload associated with managing huge data storage systems. Replication of the data is essential for boosting information availability and reliability. 
At the same time, maintaining numerous copies of the data across many hosts results in higher maintenance costs for the providers, who then pass those costs along to the client in the form of higher prices. Providers might occasionally not preserve a copy on all servers, and clients’ requests for data updates might not be adequately carried out.
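The difference between the pessimistic (lock-based) and optimistic (non-lock-based) consistency approaches mentioned above can be illustrated with a small Python sketch. The `Replica` class and its version counter are hypothetical and exist only for this example; real systems implement these ideas with distributed locks or version vectors.

```python
import threading


class Replica:
    """One copy of a data item with a version counter (illustrative only)."""

    def __init__(self, value):
        self.value = value
        self.version = 0
        self._lock = threading.Lock()

    def pessimistic_update(self, transform):
        """Lock-based: the lock is held across the whole read-modify-write."""
        with self._lock:
            self.value = transform(self.value)
            self.version += 1

    def optimistic_write(self, new_value, expected_version):
        """Non-lock-based from the client's view: the client reads freely and
        the write is applied only if no other write happened in between.
        The short internal lock only makes the compare-and-set step atomic."""
        with self._lock:
            if self.version != expected_version:
                return False  # conflict: caller must re-read and retry
            self.value = new_value
            self.version += 1
            return True


r = Replica("a")
seen = r.version
first = r.optimistic_write("b", seen)    # succeeds
second = r.optimistic_write("c", seen)   # stale version, rejected
```

The pessimistic path never rejects a write but serializes all writers, while the optimistic path lets readers proceed without waiting and pays for it with retries when conflicts occur.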

The key contributions of this work are as follows.

Conducts a comprehensive review of 65 research papers on pattern mining of data replication in the cloud.

Analyzes the replication methods in the reviewed articles, provides a comprehensive analysis of data auditing schemes, and performs an analytical review of replication handling in the cloud.

Analyzes the evaluation tools used in the papers and the performance measures of each contribution, and identifies research gaps and challenges in this topic.

The previous literature on data replication in the cloud is reviewed in Section 2 of this article. Section 3 presents a review of cloud data replication models, their performance, and their maximum attainments. Section 4 contains an evaluation of the replication handling schemes in the cloud and a chronological review. Section 5 reviews data auditing schemes and simulation tools. Section 6 lists the research gaps and challenges. Section 7 concludes this work.

Literature review

Related work

This study evaluates 65 papers that were published between 2015 and 2021. The papers are chosen from well-known publishers including IEEE, Springer, Elsevier, and others. The papers are arranged as follows:

Replication management approach

In 2016, Mansouri

In 2012, Sun

In 2019, Ramanan

In 2019, Edwin

In 2021, Mohammad

In 2015, Boru

In 2021, Maheshwari

In 2021, Ulabedin

In 2013, Chen

In 2019, Toosi

In 2021, Latip

In 2021, Mseddi

In 2021, Raouf

In 2021, Zhang

In 2021, Awad

In 2020, Amel

In 2019, Riad

In 2018, Liang

In 2018, Mansouri

In 2016, Navneet

In 2021, Gregory

In 2020, Behnam et al. [52] introduced a multi-objective optimized placement method, based on a meta-heuristic approach and a fuzzy system, that balances the trade-offs among six optimization objectives to find the best locations for replicas. Comprehensive experiments using CloudSim demonstrate that the suggested replication algorithms improve on the most popular replication strategies in terms of hit ratio, number of replications, load variance, latency, average service time, availability, and energy usage.

In 2019, Gustavo et al. [27] introduced FT-Aurora, a high-availability IaaS cloud manager that permits access to cloud resources even when the manager fails. By enabling network programmability, FT-Aurora allows for more efficient and flexible resource management. Both the efficiency and the reliability of FT-Aurora were tested, and the conclusions were reported.

In 2018, Suji et al. [20] presented an approach for dynamically adjusting the replication factor of each data item based on the data’s popularity, its current replication factor, and the number of active nodes in the cloud services. The technique was implemented on HDFS. The test findings demonstrate that the strategy keeps an appropriate number of replicas for each data item depending on its popularity while respecting the cloud storage availability constraint.
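To make the three inputs named above concrete (popularity, current replication factor, number of active nodes), the following Python sketch adjusts a replication factor one step at a time. It is not the rule used in [20], whose details are not given here: the linear mapping from access share to target factor, the one-step adjustment, and every name and default value are assumptions for illustration.

```python
def adjust_replication_factor(access_share: float, current_factor: int,
                              active_nodes: int, min_factor: int = 1,
                              max_factor: int = 10) -> int:
    """Move the replication factor one step toward a popularity-based target.

    access_share: fraction of recent accesses that hit this item (0.0 to 1.0).
    The target never exceeds the number of active nodes (hypothetical rule).
    """
    if not 0.0 <= access_share <= 1.0:
        raise ValueError("access_share must be in [0, 1]")
    if active_nodes < 1:
        raise ValueError("at least one active node is required")
    ceiling = min(max_factor, active_nodes)
    floor = min(min_factor, ceiling)
    # Assumed linear mapping from popularity to a target factor.
    target = floor + round(access_share * (ceiling - floor))
    if current_factor < target:
        stepped = current_factor + 1
    elif current_factor > target:
        stepped = current_factor - 1
    else:
        stepped = current_factor
    # Clamp in case nodes left the cluster since the last adjustment.
    return min(ceiling, max(floor, stepped))
```

Stepping by one copy per adjustment keeps replica churn low; a real system would also weigh the storage availability constraint mentioned in the paragraph.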

In 2017, Kuchaki

In 2014, Sai

In 2014, Tao

In 2020, Abbes

In 2020, He

In 2021, Khelifa

In 2020, Javidi

In 2014, Kumar

In 2016, Galen

In 2015, Sreekumar

In 2016, Bui

Replication selection approach

In 2016, Sookhtsaraei

In 2019, Shen

In 2018, Bilal

In 2018, Mansouri

In 2018, Marwa

Replication placement approach

In 2021, Ulabedin

In 2020, Salem

In 2021, Bowers

In 2020, Peng

In 2021, Fan

In 2017, Israel

In 2019, Amrith

In 2017, Qiu

In 2012, Mansouri

In 2021, Younes

In 2017, Wiese

Replication creation approach

In 2021, Javidi

Replication retirement approach

In 2018, Tos

In 2021, Mokadem

In 2020, Guo

Replication decision approach

In 2020, Nannai

In 2018, Mansouri

In 2019, Ali

In 2021, Maheshwari

In 2021, Liu

In 2020, Castro

In 2017, Tziritas

In 2019, Sheng

In 2018, Liang

In 2016, Songling

In 2019, Moin

In 2016, Galen

Replication decision approach

In 2016, Nahir

In 2015, Sreekumar

Static and dynamic replication

In 2021, Shakarami et al. [70] gave a thorough analysis and classification of state-of-the-art data replication schemes among existing cloud computing solutions, in the form of a classical classification that characterizes current schemes on the subject and discusses open challenges. The three key categories in the proposed classification are data management, data auditing, and data de-duplication systems. A thorough analysis of the replication schemes emphasizes their key characteristics, including the classes they use, the type of scheme, the location of implementation, the evaluation methods, and their strengths and shortcomings. Table 2 shows the reviews on the existing model.

Review of the existing model


In 2021, Séguéla et al. [68] proposed a dynamic data replication technique (DE2ARS) that changes the number of copies based on the workload and tackles difficulties with energy consumption and cost. Replication is initiated by a control chart and occurs after an initial placement. To analyze the technique, the authors first contrast various parameter options and then compare DE2ARS with methods found in the literature.
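A control-chart trigger of the kind mentioned above can be sketched as follows. This is not the DE2ARS algorithm: the monitored metric, the three-sigma (Shewhart-style) limits, and the action names are assumptions chosen to show the general idea of triggering replication only when the workload leaves its usual band.

```python
from statistics import mean, pstdev


def control_limits(history: list[float], k: float = 3.0) -> tuple[float, float]:
    """Lower and upper control limits: mean -/+ k standard deviations."""
    if len(history) < 2:
        raise ValueError("need at least two observations")
    center = mean(history)
    spread = pstdev(history)
    return center - k * spread, center + k * spread


def replica_action(history: list[float], current_load: float, k: float = 3.0) -> str:
    """Decide whether the replica count should change (hypothetical actions)."""
    lower, upper = control_limits(history, k)
    if current_load > upper:
        return "add_replica"
    if current_load < lower:
        return "remove_replica"
    return "keep"


load_history = [10, 12, 11, 9, 10, 11, 12, 9]  # illustrative workload samples
```

Because the limits are derived from recent history, normal fluctuation does not trigger any change, which is what keeps unnecessary replica creation, and its energy and cost, in check.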

In 2021, Hamrouni et al. [23] offered a thorough examination of the data replication techniques in use in cloud systems, including both standalone and networked clouds. They also describe critical steps for data correlation-aware techniques. In addition, they examine the characteristics of the main techniques, such as how replication problems are addressed, how providers and consumers are prioritized, how service level agreements and cost and economic factors are taken into account, and how assessment tools are used. Finally, they present a performance study based on extensive simulations of several replication algorithms designed for standalone and networked clouds.

In 2022, Mokadem et al. [60] provided a new classification of data replication strategies in cloud systems. It considers a number of factors that are unique to cloud settings, including (i) the profit orientation, (ii) the examined objective function, (iii) the number of tenant objectives, (iv) the cloud environment’s characteristics, and (v) the assessment of economic expenses. Regarding the final criterion, they concentrate on the provider’s financial gain and take into account the provider’s energy use.

Economic profit

Getting the most economic benefit at the lowest possible operating cost may not always coincide with achieving satisfactory performance. Even when replication is considered (per query or per set of queries), a replica is only created if a node that can receive the new copy is found. Moreover, the provider needs to make money from this replication. For this purpose, the provider’s economic benefit (Q_Profit) is calculated before the decision to replicate, that is, before the query Q is executed. It is determined from the provider’s estimated revenues (Q_Revenues) and expenses (Q_Expenses), as indicated by Formula (1).

To ensure profitability for the provider when executing Q, its income must exceed its expenses when several tenants are served.
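The decision described above can be sketched in Python. Formula (1) is not reproduced in this text, so the sketch assumes the simplest reading, namely that Q_Profit is Q_Revenues minus Q_Expenses; the function names and the candidate-node list are hypothetical.

```python
def estimated_profit(q_revenues: float, q_expenses: float) -> float:
    """Assumed form of the profit estimate: revenues minus expenses."""
    return q_revenues - q_expenses


def should_replicate(q_revenues: float, q_expenses: float,
                     candidate_nodes: list[str]) -> bool:
    """Replicate only if a receiving node exists AND the provider still profits."""
    has_target = len(candidate_nodes) > 0
    return has_target and estimated_profit(q_revenues, q_expenses) > 0
```

Both conditions come from the text: a replica is created only when a node can receive it, and only when the provider’s income for executing Q exceeds its expenses.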

Review on data replication models in the cloud, performances and the maximum attainments

Review on adopted data replication models in the cloud

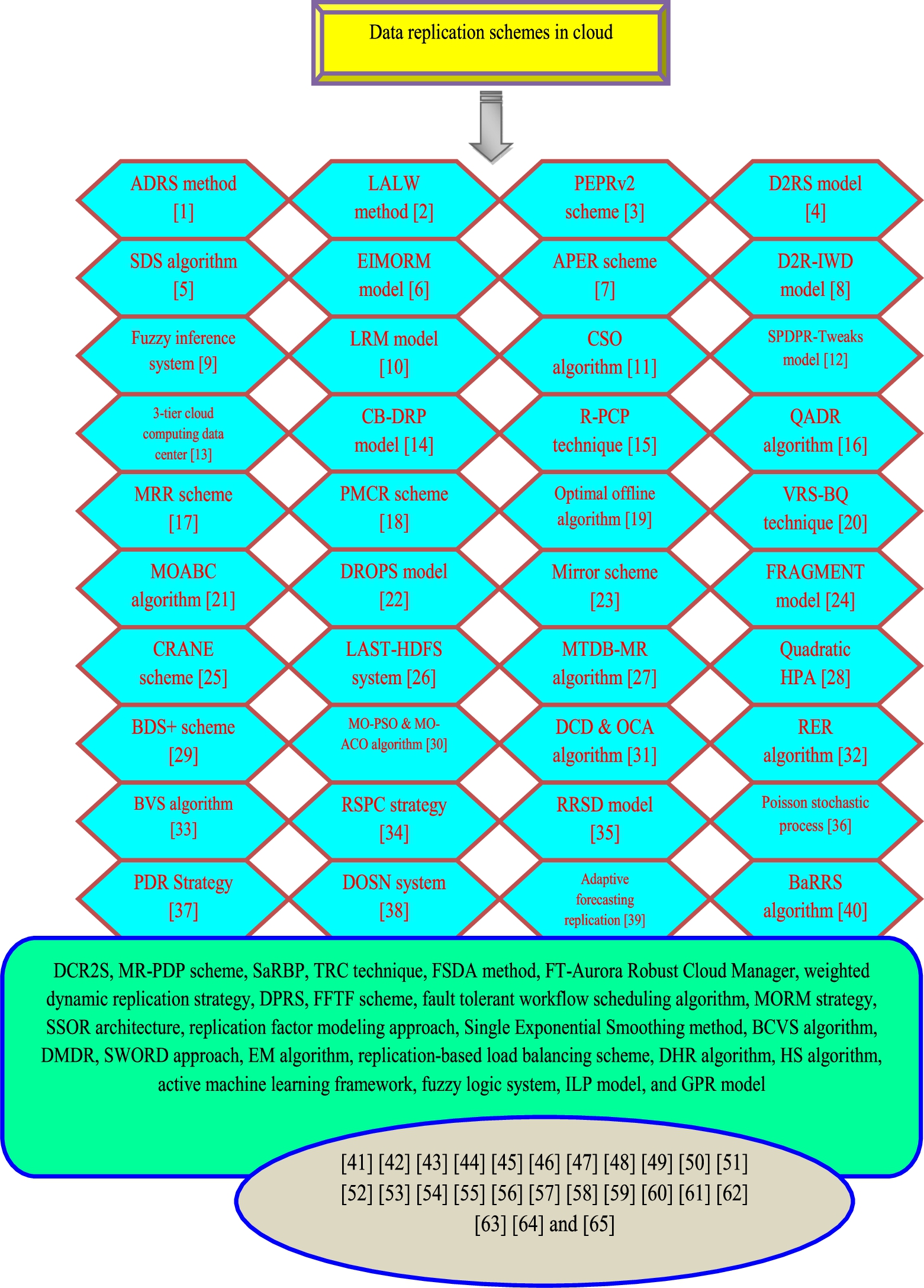

Each work is reviewed with respect to the data replication method it adopts in the cloud, and the pictorial depiction is shown in Fig. 1. Data replication, a well-known distributed approach, is the key mechanism used in the cloud for lowering user waiting time, boosting data availability, and minimizing cloud system bandwidth usage by providing several copies of the same service in a coherent state. The ADRS method was adopted in [43], and the LALW method was exploited in [49]. LALW [49] is a dynamic replication approach for Grid environments. Its primary objective is to assign different weights to files of differing ages. As a consequence, the weight decay rate is reduced.
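The idea of weighting access records by age can be illustrated with a short Python sketch. It assumes that the weight of an access record halves with every elapsed time interval, which is one common choice and is not stated in the text above; the function names and the sample history are hypothetical.

```python
def weighted_access_frequency(accesses_per_interval: list[int], decay: float = 0.5) -> float:
    """Sum access counts, weighting newer intervals more heavily.

    accesses_per_interval[0] is the oldest interval, [-1] the newest.
    The newest interval has weight 1; each older one is multiplied by `decay`.
    """
    n = len(accesses_per_interval)
    return float(sum(count * decay ** (n - 1 - t)
                     for t, count in enumerate(accesses_per_interval)))


def most_popular_file(history: dict[str, list[int]]) -> str:
    """Pick the file with the largest age-weighted access frequency."""
    return max(history, key=lambda name: weighted_access_frequency(history[name]))


history = {"old_hit": [8, 0, 0], "recent_hit": [0, 0, 4]}
```

With these sample counts the recently accessed file wins even though it has fewer total accesses, which is the behaviour an age-weighted scheme is meant to produce.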

Architectural diagram of data replication models in the cloud.

Further, the PEPRv2 scheme was adopted in [76], the D2RS model was exploited in [73], and the SDS algorithm was used in [65]. The SDS [65] method reduces the cost of data duplication. SDS is a positive feedback system that encourages the exploration of superior solutions by allocating additional agents to them. The EIMORM model was exploited in [13], the APER scheme was adopted in [75], the D2R-IWD model was used in [63], the fuzzy inference system was adopted in [45], and the LRM model was employed in [72]. LRM [72] is the replication manager referred to in that study. Its primary responsibility is to receive user queries, gather information about the cluster’s data nodes, and eventually choose the best host for the blocks. LRM carries out these responsibilities in collaboration with its other components and is the final arbiter.

Moreover, the CSO algorithm was adopted in [50], the SPDPR-Tweaks model was employed in [4], the three-tier cloud computing data centre architecture was used in [6], the CB-DRP model was adopted in [41], the R-PCP technique was adopted in [78], the QADR algorithm was exploited in [35], the MRR scheme was used in [36], the PMCR scheme was exploited in [37], the optimal offline algorithm was used in [53], and the VRS-BQ technique was exploited in [2]. In a cloud scenario, the VRS-BQ [2] replica placement strategy is used to reduce storage usage, response time, and replication process time. In addition, the MOABC algorithm was used in [67] and the DROPS model was adopted in [3]. Furthermore, the Mirror scheme was exploited in [22], the FRAGMENT model was adopted in [10], the CRANE scheme was adopted in [61], and the LAST-HDFS system was adopted in [7]. LAST-HDFS [7] guarantees location-aware file allocations and monitors file transfers in the cloud in real time to prevent any unlawful transfers.

Other data replication models in the cloud include the MTDB-MR algorithm deployed in [66], Quadratic HPA exploited in [77], the BDS+ scheme employed in [83], and the MO-PSO and MO-ACO algorithms adopted in [5]. In addition, the DCD and OCA algorithms were deployed in [38], the RER algorithm was employed in [15], the BVS algorithm was exploited in [30], the RSPC strategy was adopted in [59], and the RRSD model was deployed in [74]. RRSD [74] makes a minimum number of copies to enhance load balancing and data dependability and to minimize storage consumption.

Additionally, the Poisson stochastic process was adopted in [34], the PDR strategy was deployed in [46], the DOSN system was exploited in [16], the adaptive forecasting replication framework was employed in [56], and the BaRRS algorithm was adopted in [9]. Similarly, DCR2S was adopted in [19], the MR-PDP scheme was deployed in [81], SaRBP was employed in [82], the TRC technique was used in [33], the FSDA method was adopted in [52], and the FT-Aurora robust cloud manager was adopted in [27]. FSDA [52] was used to evaluate the trade-offs between the six objectives. It is used to assess fitness and to characterize the solution more accurately. The new replica is placed in the most suitable position to decrease access time while maximizing network and resource utilization. Likewise, the weighted dynamic replication strategy, DPRS, FFTF scheme, fault-tolerant workflow scheduling algorithm, MORM strategy, SSOR architecture, replication factor modelling approach, Single Exponential Smoothing method, BCVS algorithm, DMDR, SWORD approach, EM algorithm, replication-based load balancing scheme, DHR algorithm, HS algorithm, active machine learning framework, fuzzy logic system, ILP model, and GPR model were adopted in [1,11,20,25,26,28,31,32,39,40,44,48,51,62,64,69,79,80] and [8], respectively. The single exponential smoothing approach [26] is used to forecast future file demand. It creates a smoothed time series and removes irregular and random interference. The DHR technique in [44] places replicas at the most suitable sites, i.e. the sites with the most accesses to those replicas. When several locations host replicas, it also decreases access latency by selecting the best replica. Compared with other regression models, GPR [8] is quite accurate.
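Single exponential smoothing, mentioned above as a way to forecast file demand, follows the standard recurrence s_t = α·x_t + (1 − α)·s_{t−1}. The Python sketch below shows that recurrence; the initialisation with the first observation, the value of α, and the sample demand series are assumptions for illustration and are not taken from [26].

```python
def single_exponential_smoothing(series: list[float], alpha: float) -> list[float]:
    """Return the smoothed series s_t = alpha * x_t + (1 - alpha) * s_(t-1).

    The first smoothed value is initialised to the first observation.
    """
    if not 0 < alpha <= 1:
        raise ValueError("alpha must be in (0, 1]")
    if not series:
        raise ValueError("series must not be empty")
    smoothed = [float(series[0])]
    for x in series[1:]:
        smoothed.append(alpha * x + (1 - alpha) * smoothed[-1])
    return smoothed


def forecast_next_demand(series: list[float], alpha: float) -> float:
    """One-step-ahead forecast: the last smoothed value."""
    return single_exponential_smoothing(series, alpha)[-1]


file_demand = [10, 20, 30]  # illustrative access counts per period
```

A small α smooths out irregular spikes more strongly, while a large α follows recent demand more closely; a replication strategy would compare the forecast against a threshold to decide whether a file deserves another replica.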

Table 3 lists the performance measures reported by the various contributions on data replication models in the cloud. From Table 3, response time was analysed in 19 papers, about 29.23% of the reviewed works, and computation time was examined in 9 papers, about 13.85% of the works. Likewise, SEU was reported in about 12.31% (8 papers). Energy consumption, cost computation, execution time, and storage capacity were each adopted in about 9.23% (6 papers). The AUC and FPR also account for about 9.23% (6 papers) of the contributions. In addition, network usage and load variance were each reported in about 7.69% (5 papers). Bandwidth, delay, and number of files each account for about 6.15% (4 papers). Likewise, latency, storage cost, number of replications, and transmission time each account for about 4.62% (3 papers). Replication frequency, number of storage nodes, replication cost, file size, storage overhead, query size, recovery time, and completion time were each adopted in about 3.08% (2 papers). 
Finally, measures such as provider expenditure, execution rate, block availability, mean block unavailability, efficiency, update time, makespan, access time, TUE, runtime, replication time, R/W ratio, size of outsourced file, replica size, number of partitions, mitigation time, detection correctness rate, number of violations, network overhead reduction, accuracy, time complexity, total provider expenditure, VM processing capability, load balance, reliability, security levels, desired level of data availability, current time point, data reduction rate, number of data centers, available probability, TPV verification time, MDS memory, request arriving rate, task failure penalty, P-value, number of data nodes, VM size, memory usage, CPU utilization, number of replica factors, hit ratio, task count, checkpoint interval, average resource usage, number of requests, failure rate, threshold error rate, number of hosts, average error, arrival rate, computational capacity, NLCD ref data, RLCD ref data, RLCD-NLCD data, SBER value, time of execution query, mapping time, replication factor, and data locality each account for about 1.54% (1 paper).
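The percentages above are each the number of papers reporting a measure divided by the 65 reviewed papers. A minimal Python sketch of that arithmetic follows; the function name is hypothetical, and values are rounded to two decimals, so they can differ in the last digit from figures that were truncated instead.

```python
TOTAL_REVIEWED = 65  # number of papers in the survey


def share_of_reviewed(papers: int, total: int = TOTAL_REVIEWED) -> float:
    """Percentage of reviewed papers that report a given measure."""
    if not 0 <= papers <= total:
        raise ValueError("papers must be between 0 and total")
    return round(100 * papers / total, 2)


shares = {n: share_of_reviewed(n) for n in (19, 9, 8, 6, 5, 4, 3, 2, 1)}
```

Note that a paper can report several measures, so these shares are not expected to sum to 100%.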

Review of various performance measures based on data replication in the cloud


Maximum performance attained in the reviewed works


The maximum performance attained in each reviewed paper on data replication in the cloud is shown in Table 4. The response time reported in [63] reached 100 ms, and the computation time in [62] reached the best value of 200 ms. With the replication-based load balancing scheme [62], the computation time is lower than with other techniques. Moreover, SEU attained a better value of 20% in [5], and energy consumption attained a better value of 0.3 kWh in [78]. The R-PCP approach [78] built a cloud platform with computing, storage, and bandwidth capabilities. To improve resource utilization and energy consumption, R-PCP restricts the amount of provisioned resources. Likewise, cost computation and execution time attained better values of $0.025 and 500 ms in [31] and [79], respectively. The response speed and average response time are better with the BCVS algorithm [31]. Similarly, storage capacity, network usage, load variance, bandwidth, delay, number of files, latency, storage cost, number of replications, transmission time, replication frequency, number of storage nodes, replication cost, and file size attained better values of 100%, 0.25 ENU, 0.17, 640 Gb/s, 2500 ms, 1500 Mb, 0.6 ms, 15.3, 2, 50 sec, 0.1, 36, 1000, and 1500 Mb, as examined in [6,22,36,38,43–45,48,49,49,59,67,67,77] and [48], correspondingly. 
The storage overhead, query size, recovery time, completion time, provider expenditure, execution rate, block availability, mean block unavailability, efficiency, update time, makespan, access time, Traffic Usage Efficiency (TUE), runtime, replication time, R/W ratio, size of the outsourced file, replica size, number of partitions, mitigation time, detection correctness rate, number of violations, network overhead reduction, and accuracy attained better values of 50%, 11, 0.5 sec, 200 ms, 26.94$, 92%, 0.8, 0.1428, 6.5%, 15 sec, 0.95, 0.25 sec, 1.38%, 10 sec, 22%, 0.50, 64 Mb, 15 Gb, 4, 10 minutes, 100%, 89%, 50%, and 99%, as examined in [2,3,7,11,22,32,35–37,41,50,61,61,61,62,66,72,73,73,76–78,80] and [83]. CRANE [61] can decrease replica creation and migration time by up to 60% and inter-data center network traffic by up to 50% while maintaining the minimum necessary data availability. Measures such as time complexity, total provider expenditure, VM processing capability, load balance, reliability, security levels, desired level of data availability, current time point, data reduction rate, number of data centers, available probability, TPV verification time, and MDS memory attained higher values of 3.7, 95$, 1500MIPS, 10%, 8%, 0.9, 99%, 31, 99%, 1, 0.6–0.9, 18.827 ms, and 8 G, as analysed in [15,16,16,19,19,30,34,56,59,74,74,81], and [82], respectively. In comparison to other existing techniques, the experiments show that RRSD [74] can achieve better load balancing and assure data dependability. 
Also, request arriving rate, task failure penalty, P-value, number of data nodes, VM size, memory usage, CPU utilization, number of replica factors, hit ratio, task count, checkpoint interval, average resource usage, number of requests, failure rate, and threshold error rate were examined in [1,11,20,25,25,27,27,27,33,51,52,52,69,82], and [1], and they attained higher values of 104 per unit time, 20000, 0.05, 7, 2.5 MB, 95%, 92%, 3, 72%, 100,000, 1 hour, 41%, 30, 50%, and 9%, correspondingly. The replication factor modelling approach [1] reduced the failure rate more than other schemes. In addition, the number of hosts, average error, arrival rate, computational capacity, NLCD ref data, RLCD ref data, RLCD-NLCD data, SBER value, time of execution query, mapping time, replication factor, and data locality attained higher values of 3–10, 2.72%, 6.67, 90%, 41.8%, 36.9%, 36.3%, 0.7, 0.25 s, 0.5 s, 1.22, and 73.96%, as measured in [8,8,40,40,40,48,62,64,64,79,80], and [8], respectively. The EM method [64] was applied to lower the average error and improve the arrival rate. Compared with the default replication method and the second-best option (ERMS), the GPR model [8] helps to minimize mapping time. Its average replication factor is also better than that of the default method. Furthermore, lowering the thresholds improves the data locality measure.

Evaluation of adopted replication handling schemes in the cloud and chronological review

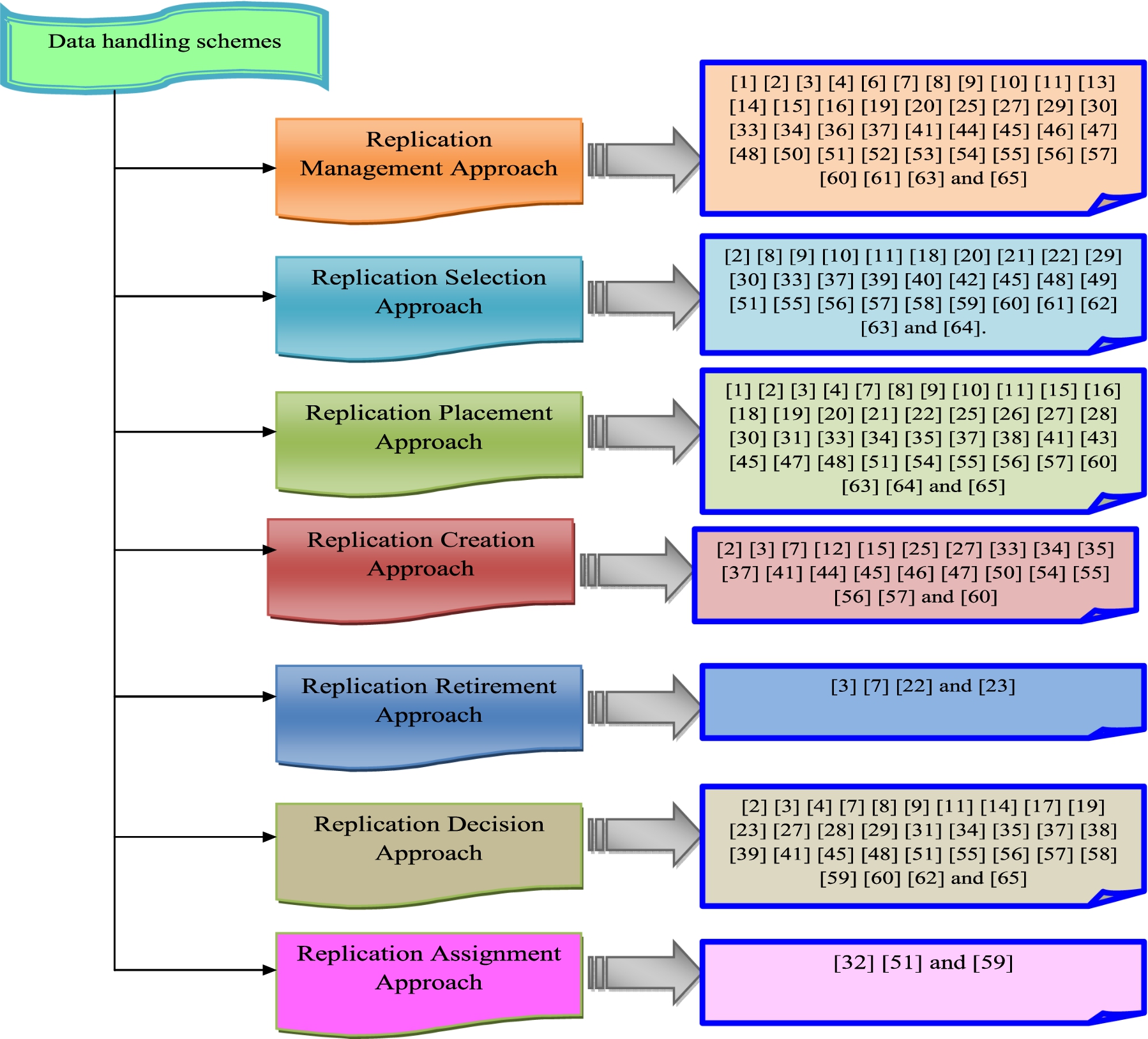

Review on replication handling in cloud storage systems

A review of major studies on replication handling in cloud storage systems is presented. As will be explained, some of the evaluated studies took a replication modelling approach, whereas others took a different strategy: replication management, replication selection, replication placement, replication creation, replication retirement, replication decision, and replication assignment. Figure 2 depicts the replication handling in cloud storage systems.

Pictorial representation on review of replication handling in cloud system.

From the review, the replication management approach was employed in [1,2,5,6,11,13,19,20,26–28,30–35,39,41,43–46,48–53,59,61,63,66,69,72,73,75,76,78,79,83] and [8]. Replication placement is an important topic that is closely related to replication migration. One of the most difficult problems when evaluating new replication requests is choosing the most effective location to which the replica is sent. The updated placement schedule should be evaluated so as to reduce network congestion and ensure replica availability while keeping access time efficient. Another key related problem in this group is the effective size of the replica. The incremental technique, which is a dynamic way of replicating, could be a fair guideline for replica size. The size of replicas could be affected by the size of the target storage. In terms of replica size, replication can be regarded in terms of its granularity, that is, the amount of data involved in creating a replica. The replication selection approach was adopted in [2,3,5,9,25,28,30–32,37,39,40,44–46,48–52,56,62–64,67,72,79,81,83] and [80]. In addition, the replication placement approach was used in [2,3,5,7,16,19,20,26,30–32,35,37–39,43–46,48–53,59,61,63,66,67,72–80,82] and [8]. The replication creation approach was exploited in [4,19,20,26,27,30–33,46,48,49,52,59,61,66,69,74–76,78] and [44], and the replication retirement approach was adopted in [3,75,76] and [22]. The review also shows that the replication decision approach was used in [16,19,22,31,32,36,38–41,44–46,48–53,56,59,62–64,66,73–77,83] and [8]. Moreover, the replication assignment approach was exploited in [15,39] and [62].

Data auditing schemes

The data auditing schemes are reviewed from the papers [12,14,18,21,24,65] and [17]. Ramanan

Jaya

Imad

Xiang

Jing

Daniel

Gan

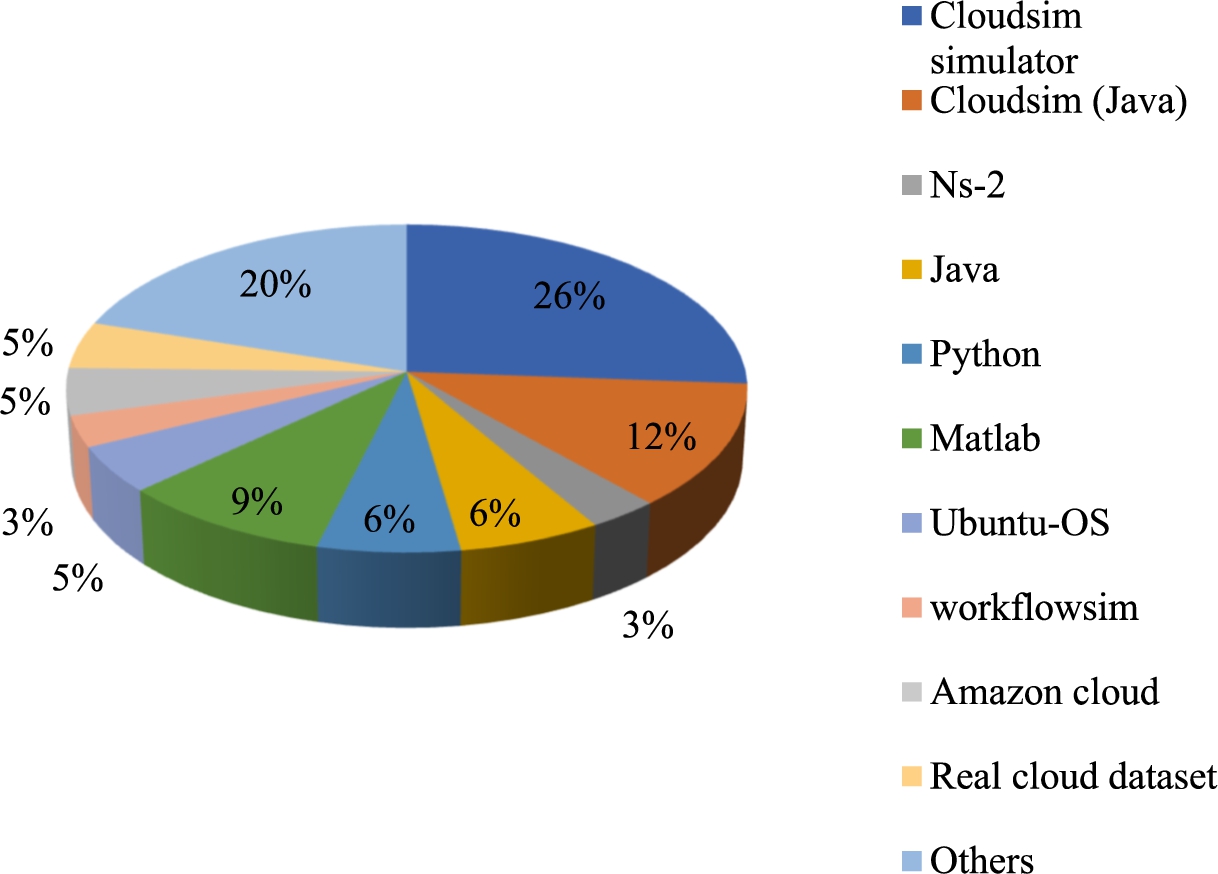

Representation of simulation tools used in each paper.

The 65 papers on data replication in the cloud are reviewed with respect to the simulation tool used in each paper. Figure 3 shows the representation of simulation tools used in each paper. First, 8 papers [2,13,43,45,51,63,73] and [19] used CloudSim (Java) as a simulation tool. Further, 17 papers [5,10,16,25,26,30,31,46,48–50,52,53,59,67,75,76] used the standard CloudSim simulator. Moreover, 4 papers [11,20,79] and [72] adopted Java as a simulation tool, and 6 papers [35,39,62,64,83] and [28] adopted MATLAB. In addition, 4 papers [22,41,61] and [38] adopted Python, and 2 papers [6] and [77] adopted NS-2. Furthermore, 3 papers [9,27], and [1] used Ubuntu as an operating system. Workflows were used in 2 papers [78] and [69]. 3 papers [32,36] and [37] used Amazon cloud datasets, and 3 papers [7,8] and [56] used real cloud datasets. Finally, 13 papers [3,4,15,33,34,40,44,65,66,74,81,82] and [80] used other simulation tools.
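The tool counts given above can be tallied to confirm that every reviewed paper is assigned to exactly one category. The Python sketch below uses only the counts stated in the paragraph; the dictionary keys are shortened labels chosen for this example.

```python
# Papers per evaluation environment, as counted in the paragraph above.
tool_counts = {
    "CloudSim (Java)": 8,
    "CloudSim": 17,
    "Java": 4,
    "MATLAB": 6,
    "Python": 4,
    "NS-2": 2,
    "Ubuntu": 3,
    "Workflows": 2,
    "Amazon cloud datasets": 3,
    "Real cloud datasets": 3,
    "Other tools": 13,
}


def ranked_tools(counts: dict[str, int]) -> list[tuple[str, int]]:
    """Sort the categories from most to least used."""
    return sorted(counts.items(), key=lambda item: item[1], reverse=True)


total_papers = sum(tool_counts.values())
most_used = ranked_tools(tool_counts)[0][0]
```

The two CloudSim categories together cover 25 of the reviewed papers, which makes CloudSim the dominant evaluation environment in this literature.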

Research gaps and challenges

The number of individuals using cloud storage has grown rapidly in recent years. The reason is that a cloud storage system is easy to maintain and has lower storage costs than other storage options. It also offers excellent dependability and availability, and it is well suited to large-scale data storage. These technologies use redundancy to ensure high availability and reliability. In replicated networks, objects are copied numerous times, with each copy stored in a distinct place in the distributed system. As a consequence, data replication poses only a minor risk to users of the cloud storage system, while providing effective data storage remains a major difficulty for providers. Data replication enables users to view data in real time from many sources, including servers and websites, which overcomes the difficulty of ensuring consistent data availability. Data replication refers to the procedure of storing and maintaining multiple copies of critical data across several devices. To ensure a flawless replication process, organizations need to invest in a variety of hardware and software components, including CPUs and storage drives, as well as a full technical setup. A replication pipeline must be set up to complete the arduous work of replication without defects or errors. Establishing a replication pipeline that works correctly might take days, weeks, or even months, depending on the replication requirements and the complexity of the task. Furthermore, large firms might find it difficult to remain patient and keep all stakeholders on the same page during this time. A considerable volume of data moves from the data source to the target database during replication. A significant amount of bandwidth is required to enable a smooth flow of information and prevent data loss. 
Even for big enterprises, maintaining enough bandwidth to sustain and process enormous amounts of complex data during replication may be a difficult issue. It also necessitates investing in more “manpower” with a strong technical background. All of these constraints make data replication difficult, especially for large enterprises. Accordingly, this survey examined the existing data replication solutions and highlighted the major challenges caused by data replication. The goal of future work is to lower the number of replications while maintaining data availability and dependability.

Conclusion

This paper offered a complete review of data replication in the cloud. The contributions of this paper are as follows:

This paper reviewed 65 papers related to data replication in the cloud.

The analysis reviewed the performance measures and the maximum achievements contributed by different data replication schemes.

The data handling schemes in the cloud exploited in every reviewed work were also analyzed and presented diagrammatically.

In addition, the data auditing schemes were analyzed in certain papers, and the simulation tools were analyzed in all 65 papers.

Finally, this paper presented research issues that may help researchers in further work on data replication in the cloud.

In the future, the cost analysis of the replication can be considered.

In addition, a real-time test bed can be considered.

In order to increase provider profit, it may be possible to balance tenant volume and performance in subsequent work.

In this case, we want to demonstrate that the ‘pay as you go’ model yields the most return for the provider when it is used to serve an ideal number of tenants.

This serves as justification for the suggested strategy’s design.

The log of previous queries may also be used to decide beforehand which data should be replicated and which replicas should be removed.

To further minimize resource consumption, RSPC could also be assessed while employing data compression or de-duplication in the environment.