Abstract

In the relational database realm, there has been a shift towards novel hybrid database architectures combining the properties of transaction processing (OLTP) and analytical processing (OLAP). OLTP workloads are made up by read and write operations on a small number of rows and are typically addressed by indexes such as B+trees. On the other side, OLAP workloads consists of big read operations that scan larger parts of the dataset. To address both workloads some databases introduced an architecture using a buffer or delta partition.

Precisely, changes are accumulated in a write-optimized delta partition while the rest of the data is compressed in the read-optimized main partition. Periodically, the delta storage is merged in the main partition. In this paper we investigate for the first time how this architecture can be implemented and behaves for RDF graphs. We describe in detail the indexing-structures one can use for each partition, the merge process as well as the transactional management.

We study the performances of our triple store, which we call

Introduction

Hybrid transactional and analytical processing (HTAP) is a term coined in the relational database world, to indicate database system architectures performing real-time analytics combining read and write operations on a few rows with reads and writes on large snapshots of the data [15]. Combining transactional and analytical query workloads is a problem that has not been yet tackled for RDF graph data, despite its importance in future Big graph ecosystems [24]. Inspired by the relational database literature, we propose a triple store architecture using a buffer to store the updates [11,25]. The key idea of the buffer is that most of the data is stored in a read-optimized main partition and updates are accumulated into a write-optimized buffer partition we call delta. The delta partition grows over time due to insert operations. To avoid the situation that the delta partition becomes too large thus leading to deteriorated performances, the delta partition is merged into the main partition. One would expect the following advantages of such an architecture:

higher read performance, due to the read-optimized main partition faster insert performance, since only the write-optimized partition is affected; faster delete performance, since the write-optimized partition is smaller and deletions in the main partition are just marking data as deleted; smaller indexing size and smaller memory footprint, since the main partition is read only and therefore higher compression on the data can be applied faster indexing speed, since all initial data does not need to be stored in a data structure that is updated over time while more an more data is indexed. better performance on analytical queries. since the read-optimized partition allows for faster scans over the data

By leveraging the above insights, we provide the design and implementation of the first differential-update architecture for graph databases showing how it behaves under different query workloads and with respect to state of the art graph systems (such as Virtuoso, Blazegraph,1

This paper is organized as follows. In Section 2, we describe the related works. In Section 3, we describe the data-structures that we use for the main-partition and for the delta-partition. In Section 4, we describe how SELECT, INSERT and DELETE operations are carried out on top of the two partitions. In Section 5, we describe the merge operation. In Section 6, we carry out a series of experiments that compare the performance of this new architecture with existing ones. A Supplemental Material Statement in Section 8. We conclude with Section 9 where we discuss the advantages and limitations of our proposed architecture.

Relational database systems are the most mature systems combining transaction processing (OLTP) and analytical processing (OLAP) [11,18]. OLTP workloads are made up by read-and write operations on a small number of rows and are typically addressed by B+Trees. On the other side OLAP are made up by big read and write transactions that scan larger parts of the data. These are typically addressed using a compressed column-oriented approach [1]. To address both workloads, the differential update architecture has been introduced [11] combining a write-optimized delta partition and a read-optimized main partition. This process is called merge [17,18]. One of the main advantages of the main partition is not only that it is read-optimized, but also that it is compressed allowing to load in memory much bigger parts of the data.

Many different architectures have been explored for graph databases. We limit ourselves to describe the common options and we refer to [2] for a more extensive survey. A very common architecture for triple stores is based on B+-trees. These are used for example in RDF-3X [21], RDF4J [8] Blazegraph and Apache Jena. These allow for fast search as well as delete and insert operations.

Another line of work tries to map the graph database model on an existing data model. For example, Trinity RDF [29] maps the graph data model to a key value store. Another approach is to use the relational database model. This is done for example in Virtuoso [9] or SAP HANA Graph [16] where all RDF triples are stored in a table with three columns (subject, predicate, object). Also, Wilkinson et al. [28] uses the relational database model but in this case a table is created for each property in the dataset.

There are two lines of work that are most similar to ours. The first are works that use read-only compressed data structures rather than B+-trees to store the graph like QLever [4]. However, they do not support updates and are only limited to the main partition. The second are versioning systems where the data is stored in a main partition and changes are stored in a delta partition. This is the case of x-RDF-3X [22] relaying on RDF-3X and OSTRICH [26] relaying on HDT. OSTRICH, unlike our system, gives access to the data only via triple pattern searches with the possibility to specify a start or end version. Their aim is not to have an efficient SPARQL endpoint. x-RDF-3X on the other side, is built on top of RDF-3X which, as mentioned above, is a B+-trees and not on compressed data structure. Moreover this system isn’t maintained anymore.

Data structures for main and delta-partition

In order to construct a differential update architecture we need to choose data-structures that are well suited for the main-partition and the delta-partition, we respectively HDT and the RDF4J native store. We describe our choices for

RDF

In

An RDF triple is a tuple

An RDF triple pattern is a tuple

We say that an RDF triple if if and if We call a function:

HDT: The main partition

The main partition should be a data-structure that allows for fast triple-pattern retrieval and has high data compression. For this we choose HDT [12]. HDT is a binary serialization format for RDF based on compact data structures. These aim to compress data close to the possible theoretic lower bound, while still enabling efficient query operations. More concretely HDT has been shown to compress the data to an order of magnitude similar to the gzipped size of the corresponding n-triple serialization, while having a triple pattern resolution speed that is competitive with existing triples-stores. This makes it an ideal data structure for the main-partition.

In the following we are describing the internals of HDT that are needed to understand the following of the paper. HDT consists of three main components, namely: the header (H), the dictionary (D) and the triple (T) partition.

The header component is not relevant, as it stores metadata about the dataset like number of triples, number of distinct subjects and so on.

The dictionary is a map that assigns to each IRI, blank node, and literal (which we will call resources in the follow) a numeric ID. The dictionary is made up by 4 sections (

The ID is 0 if the term does not exist in the corresponding section.

The triples are encoded using the IDs in the dictionary and then lexicographically ordered with respect to subject, predicate and object. These are encoded using two numeric compact arrays and two bitmaps. This data structure allows for fast triple pattern resolution for fixed subject or fixed subject and predicate. With HDT FoQ [20], an additional index data structure is added to query the triple patterns

Most importantly, HDT offers APIs to search for a given triple pattern either by using resources or by using IDs. It returns triples together with their

Last but not least, we would like to point out that an HDT file can be queried either in “load” or “map” mode. In the “load mode”, the entire data set is loaded into memory. In the “map mode”, the HDT file is “mapped” in the endpoint memory, only parts of the file are loaded into memory when required.

RDF-4J native store: The delta-partition

The delta-partition should be a write-optimized data structure. B+-trees offer a good trade-off between read speed while maintaining good write performance. Moreover B+-trees are widely used in many triple-stores showing that they are a good choice for triple-stores in general. Finally B+-trees are also used as the delta-partition in the relational database world. We therefore choose for the delta-partition the RDF4J native store which is an open source, maintained and well optimized triple-store back-end that relies on B+-trees.

Due to space reasons, we do not provide details about the internals of RDF4J since they are not important to understand the following. Despite HDT, an RDF4J store

Select, insert and delete operations

In

It is important to make the destinction between the RDF4J Native Store and the RDF4J SPARQL API. These are two projects of the RDF4J library, but are independent components of

The main components of

The

HDTTriplesID retrieve HDT id from triple pattern

SELECT: both the HDT main partition and the RDF4J delta partition offer triple pattern resolution functions, we denote them as

Using the strategy above, we would, for each triple pattern resolution, make calls to the HDT dictionary and convert a triple ID back to a triple pattern. This is very costly and in many cases not necessary. When joining multiple triples we do not need to know the triples themselves, but only if the subject, predicate, object are equal (and the ID suffice for this operation). Whenever possible, we therefore avoid the tripleID conversions. On the other hand, for example for FILTER operations we need the value of some of the resources in the triple and in these cases we convert the IDs to their corresponding string representations. This is also done when the actual result is returned to the user.

In order to make all joins over IDs, the triples stored in the delta-partition need to be stored via IDs (otherwise a conversion of the IDs via the dictionary is unavoidable). We therefore store every resource in the delta-partition with its HDT ID (if it exists) using the particular IRI format

Finally note that, as described above in Section 3.2, a predicate and a subject/object can have the same ID but represent different resources. This means that if a subject or object ID is used to query in predicate position (or the other way around) then the conversion over the dictionary is unavoidable.

When searching over IDs, some HDT internals are exploited to cut down certain search operations. For example, from an object ID, one can check whether it also appears as a subject. Triple patterns that in the subject position have IDs of objects that by their ID range cannot appear as object, also do not need to be resolved.

Using the above strategy, we would, for each triple pattern, search over HDT and also search over RDF4J. In general, we assume that most of the data is contained in HDT and most of these calls will return empty results. On the other hand, these calls are expensive. To avoid them we add a new data structure in

INSERT: the insert operation is affecting only the RDF4J delta. When a triple is inserted, we check against the HDT dictionary if the subject, predicate, object are present in the HDT dictionary. If this is the case we store the corresponding resource using the HDT IDs in RDF4J. Moreover, in this case, we mark the corresponding bits in the XYZBits data structure. Note that the RDF4J store contains a mixture of “plain” resources and IDs (see Algorithm 2).

DELETE: when a triple is deleted, we need to delete it in the main partition and in the delta, depending where it is located. Deleting a triple in the delta is not a problem since this functionality is directly provided. HDT on the other side does not have the concept of deletion. In HDT, each triple is naturally mapped to an ID and the triples are lexicographically ordered. We exploit this by adding a new data structure conceived to handle deletions. It consists of a bit sequence of the length of the number of triples in HDT. We call it “DeletedBit”. We use it to mark a triple as deleted. This is achieved by searching the triple over HDT, computing its position and marking the corresponding bit. Note that HDT does not provide APIs to retrieve, given a triple, its corresponding ID. We have therefore implemented this functionality in

Insert a triple into the RDF graph

Delete a triple from the RDF graph

Select a triple of the RDF graph

Notice that the above operations allow to achieve a SPARQL 1.1-compliant SPARQL endpoint, given that the SPARQL algebra is build upon these operations. We carry out two further optimizations, as follows. First, we reuse the query plan generated by RDF4J. In particular, this means that all join operations are carried out as nested joins. Second, we need to provide the query planner with an estimate of the cardinality for the different triple patterns. These are used to compute the correct query plan. We compute the cardinality by summing the cardinality given by HDT with the one given by RDF4J. While the cardinalities provided by RDF4J are estimations, the cardinalities provided by HDT are exact. This allows the generation of more accurate query plans.

Our system was built on top of the RDF4J API to provide a SPARQL endpoint, allowing the delta partition to be replaced with any triple store integrated using the RDF4J Sail API.5

As the database is used, more data accumulates in the delta. This is problematic since the delta store cannot scale. We therefore trigger merges in which the data in the delta is moved to the HDT main partition so that the initial state of an empty delta is restored.

There are two problematic aspects there. The first is how to move the data from the delta-partition to the main partition in an efficient way. The second is how to handle the transactions.

HDTCat and HDTDiff

To move the data from the delta-partition to the main partition the naive idea would be to dump all data from the delta-partition uncompress the HDT main partition, merge the data and compress it back. This approach is not efficient, neither in terms of time nor in terms of memory footprint. We therefore rely on HDTCat [6], a tool that was created to join two HDT files without the need of uncompressing the data. The main idea of HDTCat is based on the following observation. HDT is a sorted list of resources (i.e. the dictionary containing URIs, Literals and blank-nodes) as well as a sorted list of triples. This is true up to, the splitting of the dictionary in different sections, and the compression of the sorted lists. This means that merging to HDTs corresponds to merge two ordered lists which is efficient both in time and in memory.

On the other side in the merge operation we do not need only to add data, but also to remove the triples that are marked as deleted. We therefore developed HDTDiff, a method to remove from an HDT file triples marked as deleted using the main partition delete bitmap.

HDTDiff will first create a bitmap for each section of the HDT dictionary (Subject, Predicate and Object) and fill them using the delete bitmap. If a bit is set to one at the index

Note that HDTCat and HDTDiff do not assume that the underlying HDTs are loaded in memory, the HDT file and the bitmaps are only mapped from disk. By reading the HDT components sequentially without any random memory access, these operations are not memory intense and hence scalable.

After this step, the delta partition is now empty and the main partition is containing all the triples of dataset. Allowing us to fill again the delta partition while having our previous data compressed with the main partition.

Transaction handling

In the following, we detail the merge operation (see Fig. 2), that takes place in 3 steps.

Figure detailing the merge operation. Horizontally we depict the different merge steps. In each step we detail which operations (SELECT (S), DELETE (D) and INSERT (I)) is possible. Moreover, we vertically depict the data structures that are accessed by the corresponding operation.

This step is triggered by the fact that the delta has exceeded a certain number of triples (which we call threshold). Step 1 locks all new incoming update connections. Once all existing update connections terminate, a new delta is initialized, which will co-exist with the first one during the merge process. We call them deltaA and deltaB, respectively, and they ensure that the endpoint is always available during the merge process. Also, a copy of DeletedBit is made called DeletedBit_tmp. The lock on the updates ensures that the data in the delta and in DeletedBit is not changed during this process. Once the new store is initialized, the lock on update connections is released.

Step 2

In this step, all changes in the delta are moved into the main partition. In particular, the deleted triples in (DeletedBit_tmp) and the triples in the deltaA storage are merged into the main partition. This is carried out into two steps using hdtDiff and hdtCat. The use of hdtCat and hdtDiff is essential to maintain the process scalable since decompressing and compressing an HDT file is resource intense (in particular with respect to memory consumption). When the hdtDiff and hdtCat operations are finished, a new HDT is generated that needs to be replaced with the existing one and step 3 is triggered. In step 2, SELECT, INSERT and DELETE operations are allowed. SELECT operations need access to the HDT file as well as the deltaA and deltaB store. INSERT operations will only affect deltaB, while DELETE operations will affect the delete bitmap bits, deltaA and deltaB. Moreover, all deleted triples will also be stored in a file called DeletedTriples.

Step 3

This step is triggered when the new HDT is generated. At the beginning, we lock all incoming connections, both read and write. At the beginning of this step, the XYZBits are initialized using the data contained in deltaB. Furthermore, a new DeletedBit is initialized. To achieve this, we iterate over the triples stored in DeletedTriples and mark these as deleted.

Moreover, the IDs used in deltaB are referring to the current HDT. We therefore iterate over all triples in deltaB and replace them with the IDs in the new HDT. During this process there is a mixture of IDs used in the old and the new HDT, which explains why we also lock read operations. We finally switch the current HDT with the new HDT and we release all locks restoring the initial state.

Experiments

In this section we show two evaluation results of the

Loading times and result index size for different dataset sizes and stores

The Berlin SPARQL benchmark allows to generate synthetic data about an e-commerce platform containing information like products, vendors, consumers and reviews. The benchmark itself contains 3 sub-tasks which reflect different usage of a triple-store:

Explore: this task loads into the triple-store the dataset and executes a mix of 12 types of SELECT queries which are of transactional type. Update: this task is similar to explore, but the data is changed via update queries over time. Bi: this task loads the datasets into the triple-store and runs analytic queries over it.

We benchmark all three tasks on the

The benchmark is returning 2 values, the Query Mixes per Hours (QMpH) for all the queries and the Query per Seconds (QpS) for each query type. These values are computed by,

Our main objective is to evaluate

Explore

We executed the explore benchmark task for 10k, 50k, 100k, 500k, 1M and 2M products. The loading times are reported in Table 1. We see that the RDF4J indexing method is quickly taking a long time to index a dataset. From 500k to 1M products the import time is increasing by a factor 3 while from 1M to 2M for a factor 5.  . We also report the index size for the

. We also report the index size for the  . The compression rate for this dataset is lower than for other RDF datasets. The data produced by the Berlin Benchmark contains long product description. These are not well compressed in HDT.

. The compression rate for this dataset is lower than for other RDF datasets. The data produced by the Berlin Benchmark contains long product description. These are not well compressed in HDT.

In Table 2 we report the QMpH. The  even if higher speeds might be expected.

even if higher speeds might be expected.

QMpH on the explore/BI/update task.

result

: best values

QMpH on the explore/BI/update task.

We executed the update benchmark task for 10k, 50k, 100k and 200k products. We execute 50 warm-up rounds and 500 query mixes. The queries Q3-Q14 are the same as in the explore benchmark. Q1 is an INSERT query, Q2 is a DELETE query.

We do not report the loading times and the store sizes since they are similar to the ones in the explore use case. The QMpH are indicated in Table 2. The performance of the INSERT query Q1 is higher for the  . It is faster to insert triples into a small native store than into a larger one. Since in the

. It is faster to insert triples into a small native store than into a larger one. Since in the  . This is due to the fact that triples are not really deleted in the

. This is due to the fact that triples are not really deleted in the

The query performance is not negatively affected when comparing between the “update” and the “explore” task. This shows that the combination of HDT and the delta is efficient and does not introduce an overhead. The performance of the “load” mode is again much superior than the “map” mode.

Business intelligence task

We executed the business intelligence (bi) benchmark task for 10k, 50k, 100k, 200k and 500k products. We execute 25 warm-up rounds and 10 query mixes. We ignored Q3 and Q6 since we encountered scalability problems both for the

We do not report the loading times and the store sizes since they are similar to the ones in the explore use case. The QMpH are indicated in Table 2. We can see that the  . Overall, the QMpH is form 2 to 4 times higher depending on the dataset size. This is the effect of the HDT main partition that is read-optimized. This is particularly evident in this task since the queries access large parts of the data. On the other side we believe that due to the fact that all joins are “nested joins” the analytical performance can be further increased.

. Overall, the QMpH is form 2 to 4 times higher depending on the dataset size. This is the effect of the HDT main partition that is read-optimized. This is particularly evident in this task since the queries access large parts of the data. On the other side we believe that due to the fact that all joins are “nested joins” the analytical performance can be further increased.

Merge time comparison

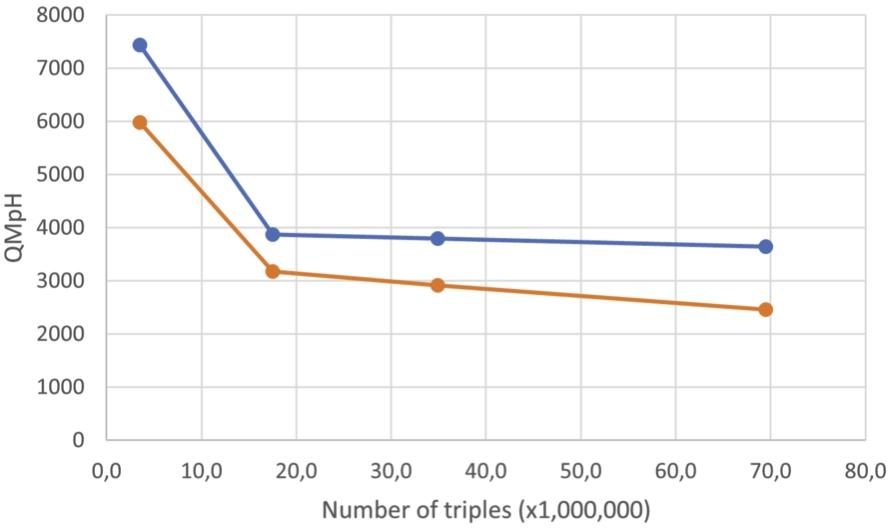

To test the efficiency of the merge step, we ran the BSBM update benchmark task using

To decide the right threshold, we looked at the amount of changes for each query mix in the update experiment of the BSBM. This amount was of 1,000 changes for the 3.53M triples dataset and of 2,000 changes for the 69,5M triples dataset. We decided to choose a threshold of 1,000. Like this the system would constantly be in a merge state, allowing us to compare the worst case scenario (constantly merging) with the perfect case scenario (nearly no update, never merging). The count of merges was added as a second metric.

During the previous experiments we saw that the performance of the native store lack behind the

Merges comparison using BSBM update benchmark task

Merges comparison using BSBM update benchmark task

To compare

To run our experiments, we used an AMD EPYC 7281 with 16 virtualized cores, 64 GB of RAM and a 100 GB HDD. The performances for the other systems, namely Blazegraph, Jena, Neo4j and Virtuoso are drawn from the paper [3], which rely on a similar machine in terms of per-thread rating of the CPU, disk type and available RAM.

First we indexed the WDBench dataset. Table 4 compares the index size for the different triple stores. As shown in Table 4, the index is from 3 to 5 times smaller for our system compared to the competitors which reflects the advantage  .

.

Size of the different indexes

Thereafter we run each query provided by WDBench one. For each of them we check if an error occurs or a timeout, otherwise we measure the execution time. The timeout is set to 1 minute and is as in the WDBench paper, is considered when computing the average and median times. The queries are splitted into 5 types.

Basic graph pattern (BGP) with two sub types: Single or Multiple

Optional queries containing OPTIONAL fields

Path queries

Navigational graph patterns

Results with the WDBench from the different types of queries for

We report the WDBench results in Table 5. We can see that as predicted, thanks to the main-partition, our system is faster to run most of the queries  . We can see that the query performance is at least 3 times faster on BGPs and at least 2 times faster on path, navigational and optional queries.

. We can see that the query performance is at least 3 times faster on BGPs and at least 2 times faster on path, navigational and optional queries.

In the following we describe how to index and query Wikidata as well as a comparison with existing alternatives.

Loading data

To load the full Wikidata dump into

With few commands, it allows everyone to quickly have a SPARQL endpoint over Wikidata without the need of special configurations. Note that the Wikidata dump is not uncompressed during this process. In particular, the disk footprint needed to generate the index is lower than the uncompressed dump (which is around 1.5 Tb). In the Table 6, we can see the time spent for the different indexing steps.

Time split during the loading of the Wikidata dataset

The time to download the dataset wasn’t taken into account in the 50 h.

Time split during the loading of the Wikidata dataset

The time to download the dataset wasn’t taken into account in the 50 h.

Wikidata index characteristics for different endpoints

The precise number of days for the preprocessing is not indicated in the documentation.

For comparison, to date, it exists only few attempts to successfully index Wikidata [10]. Since 2022, when the Wikidata dump exceeded 10B triples, only 4 triple stores are reported to be capable of indexing the whole dump, namely: Virtuoso [9], Apache Jena,8

Virtuoso is a SQL based SPARQL engine where the changes on the RDF graph are reflected to a SQL database.

QLever is a SPARQL engine using an RDF custom binary format to index and compress RDF graphs. It supports a text search engine, but only the SPARQL engine will be compared during this experiment.

Blazegraph is a SPARQL engine using a B+Tree implementation to store the RDF graph, it is currently used by Wikidata.

Jena is a SPARQL engine also using a B+Tree implementation to store the RDF graph. Unlike Blazegraph the Jena’s implementation wasn’t considered for large RDF graph storage.

We report in Table 7 the loading times, the number of indexed triples, the amount of needed RAM, the final index size and the documentation for indexing Wikidata.

Overall, we can see that the

Sizes of each components of qEndpoint (total: 296 GB)

Sizes of each components of qEndpoint (total: 296 GB)

HDT is meant to be a format for sharing RDF datasets [13] and was therefore designed to have a particularly low disk footprint. Table 8 shows the sizes of the components needed to currently set up a Wikidata SPARQL endpoint using only HDT, which amounts to the first two rows in the table, i.e. 300 GB in total. The RDF4j counterpart, which is the third row in Table 8, corresponds to 16 KB. Compared with the other endpoints in Table 7, the whole data can be easily downloaded in a few hours with any high-speed internet connection.

The second component in Table 6 of 113 GB can be avoided in setups with slow connections since this co-index can be computed in 5 h. As a consequence, it is possible to further reduce the amount of time required to deploy a full SPARQL endpoint. This time turns to be shrunk to a few minutes after downloading the files in Table 6.9 The index files are currently available at the URL

In the following, we discuss the evaluation of the query performance of the qEndpoint with other available systems. To the best of our knowledge, ours is also the first evaluation on the whole Wikidata dump using historical query logs.

As described above, while there are successful attempts to set up a local Wikidata endpoint, these are difficult to reproduce and depending on the cases the needed hardware resources are difficult to find [10]. Therefore, in order to compare with existing systems, we restrict to those whose setups are publicly available:

Blazegraph: the current system that is used in production by Wikimedia Foundation available at Virtuoso: a live demo was set up in 201911

QLever: a live demo is available at

To benchmark the different systems, we performed a random extraction of 10K queries from Wikidata query logs [19] and ran them on the qEndpoint and on the above endpoints. Table 9 shows the query types for each query. The 10k queries where selected as follows. We picked 10k random queries of the interval 7 dump12

No usage of

No MINUS operation, currently not supported by the qEndpoint.

Unlike with WDBench, the whole dataset is used, increasing the usage of analytic queries, our system being on commodity hardware, we except to have worse results for some query due to a lack of resources to compensate.

The resources for the compared systems are the same as the one reported for indexing in Table 7.

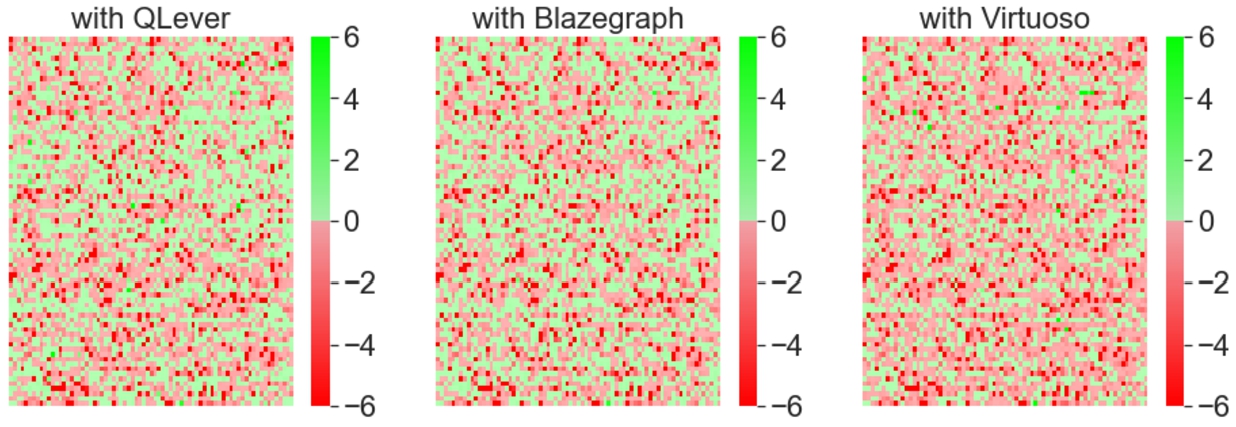

The Wikidata logs queries results are presented in Fig. 3 and Table 10.

Query count per type

qEndPoint time difference with 3 different endpoint (QLever, Virtuoso, Blazegraph from left to right) over 10K random queries without considering errors, one square is one query. A square is green if qEndPoint is faster and red if the baseline is faster. The intensity is based on the time difference which is between −6 and +6 seconds.

Errors per endpoint on 10K random queries of the Wikidata query logs

We observe that the various systems have varying level of SPARQL support (see Table 10) and that qEndpoint via RDF4J can correctly parse all the queries. As shown in Table 11, it achieves better performances than QLever in 44% of the cases (despite 4x lower memory footprint). It outperforms Virtuoso in 34% of the cases (despite 10x lower memory footprint) and it outperforms Blazegraph (the production system used by Wikimedia Deutschland with Wikidata) in 46% of the cases (despite a 4x lower memory footprint). Overall, we see that the median execution time between the qEndpoint and the other systems is −0.05. This means that, modulo a few outliers, we can achieve comparable query speed with reduced memory and disk footprint. By manually investigating some queries, we believe that the outliers are due to query optimization problems that we plan to tackle in the future. For example by switching the order of the triple patterns in the query.

Overall, despite running on commodity hardware, qEndpoint can achieve query speeds comparable to other existing alternatives. The dataset and the scripts used for the experiments are available online.13

During the demonstration, we plan to show the following capabilities of qEndpoint:

how it is possible to index Wikidata on a commodity hardware;

how it is possible to set up a SPARQL endpoint by downloading a pre-computed index; (Point 2 in the Fig. 1)

the performance of qEndpoint on typical queries over Wikidata from our test dataset (see Table 9). Query types that are relevant for showcasing are simple triple pattern queries (TP), TPs with Unions, TPs with filters, Recursive path queries and other query types found in the Wikidata query logs. We will also be able to load queries formulated by the visitors of our demo booth.

The objective is to demonstrate that the qEndpoint performance is overall comparable with the other endpoints despite the considerable lower hardware resources. As such, it represents a suitable alternative to current resource-intensive endpoints.

Statistics over the time differences in seconds with the percentage of queries qEndpoint was faster.

result

: best values

Statistics over the time differences in seconds with the percentage of queries

The above implementation is used in two projects that are in production and currently operational at the European Commission, the European Direct Contact Center (EDCC) and Kohesio.

European direct contact center

The European Direct Contact Center14

Kohesio is an EU project that aims to make research projects funded by the EU discoverable by EU citizens (

The

Conclusions

In this paper, we have presented the

We have evaluated over different benchmarks the performance of the architecture as well as the efficiency of the implementation that we provided against other public endpoints. We see the following main advantages of this architecture: high read performance  , fast insert performance

, fast insert performance  , fast delete performance

, fast delete performance  , small index size

, small index size  , fast index speed

, fast index speed  , and good analytical performance

, and good analytical performance  .

.

These results show that the architecture that we propose is promising for graph databases. On the other side this is a first step towards graph databases with this architecture. Open challenges are for example:

be able to query named graphs [14], exploit more the data structure of HDT to construct better query plans (for example, using merge joins instead of the current nested ones) and improve in OLAP scenarios, propose new versions of HDT optimized for querying (to support for example filters over numbers), propose a distributed version of the system

Overall we believe that the propose architecture has the potential to become an ideal solution for querying large Knowledge Graphs in low hardware settings.