Abstract

Virtual data integration is the prevailing approach to data wrangling in data-driven decision-making. In this paper, we focus on automating schema integration, which extracts a homogenised representation of the data source schemata and integrates them into a global schema to enable virtual data integration. Schema integration requires a set of well-known constructs: the data source schemata and wrappers, a global integrated schema, and the mappings between them. Based on these constructs, virtual data integration systems enable fast and on-demand data exploration via query rewriting. Unfortunately, such constructs are currently generated in a largely manual manner, which hinders feasibility in real scenarios. The problem is aggravated when dealing with heterogeneous and evolving data sources. To overcome these issues, we propose a fully-fledged semi-automatic and incremental approach grounded on knowledge graphs to generate the required schema integration constructs in four main steps: bootstrapping, schema matching, schema integration, and generation of system-specific constructs. We also present

Introduction

Big data presents a novel opportunity for data-driven decision-making, and modern organizations acknowledge its relevance. Consequently, it is transforming every sector of the global economy and science, and it has been identified as a key factor for growth and well-being in modern societies.

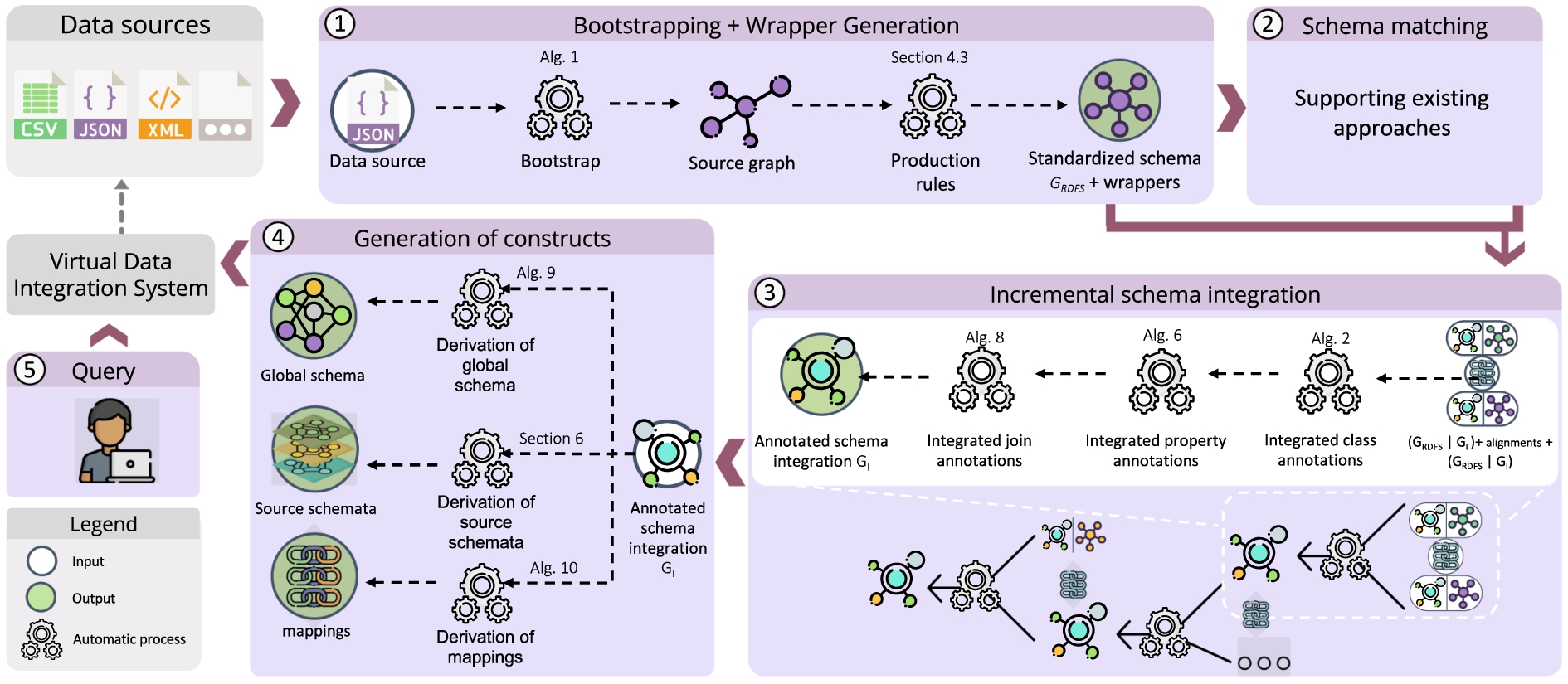

The schema integration pipeline.

Data integration encompasses three main tasks: schema integration, record linkage, and data fusion [15,54]. In this paper, we focus on

The variety of structured and semi-structured data formats hampers the bootstrapping of the source schemata into a common representation (or canonical data model) that facilitates their homogenization.

Instead of the classical waterfall execution, the dynamic nature of data sources introduces the need for a

The large number and variety of domains in the data sources, some of them unknown at exploration time, hinders the use of predefined domain ontologies to lead the process.

Our approach in a nutshell.

The limitations highlighted above have seriously hampered the development of end-to-end approaches for schema integration, which is a major need in practice, especially when facing Big Data [22,46]. To that end, we propose an all-encompassing approach that largely automates schema integration, which is essential for Big Data scenarios. On top of that, we also implement our approach as an open-source tool called In the ancient Nahuatl language, the term

Our approach is able to deal with heterogeneous and semi-structured data sources.

It follows an incremental

It provides a single, uniform and system-agnostic framework grounded on knowledge graphs.

We developed and implemented novel algorithms to largely automate all the required phases for schema integration: 1◯ bootstrapping (and wrapper generation) and 3◯ schema integration.

Our approach is not specific to a given system: it generates system-agnostic metadata that can later be used to generate the 4◯ specific constructs of the most relevant virtual data integration systems. We showcase it by generating the specific constructs of two representative families of virtual data integration systems (an ontology-based data access system and a mediator-based system).

We introduce

See more details at

Data integration encompasses three main tasks: schema integration, record linkage, and data fusion [15,54]. A wealth of literature has been produced for each of them. In this paper, we focus on automating and standardizing the process to create the schema integration constructs for virtual data integration systems. Accordingly, in this section, we discuss related work on the two main phases depicted in Fig. 2: bootstrapping and schema integration. We wrap up this section discussing the available virtual data integration systems that provide some kind of support to generate the necessary schema integration constructs.

Related work on bootstrapping

Bootstrapping techniques are responsible for extracting a representation of the source schemata, and there has been a significant amount of work in this area, as presented in several surveys [2,23,27,52]. Most currently available approaches, however, require an a priori target schema and/or materialize the source data in the global schema [8,14,36]. Since our approach is bottom-up and meant for virtual systems, we focus on approaches that generate the schema from each source (by checking either the available metadata or the instances) without using a reference schema or ontology. Table 1 depicts the most representative ones. To better categorize them, we distinguish three dimensions: required metadata, supported data sources, and implementation. We detail them as follows, accompanied by a discussion that highlights our contributions.

Comparison of bootstrapping state-of-the-art techniques


Schema integration is the process of generating a unified schema that represents a set of source schemata. This process requires as input semantic correspondences between their elements (i.e., alignments). In Table 2, we depict the most relevant state-of-the-art approaches. We distinguish three dimensions: integration type, strategy, and implementation:

Comparison of schema integration state-of-the-art techniques


We examined virtual data integration systems that have introduced a pipeline supporting bootstrapping and/or schema integration with the goal of providing query access to a set of data sources. We have excluded systems that rely on the manual generation of schema integration constructs [11,41] and focus on those that support semi-automatic creation of such constructs. In all cases, nevertheless, the processes introduced are specific to and dependent on the virtual data integration system at hand. Further, note that some popular data integration systems such as Karma [36], Pool Party Semantic [53], or Ontotext

Comparison of virtual data integration systems automating, at least partially, schema integration

We now introduce the formal overview of our approach and the running example used in the following sections.

Formal definitions and approach overview

Data source bootstrapping and production rules

Here, we present the formal definitions that are concerned with phase 1◯ as depicted in Fig. 2.

Integrating bootstrapped graphs and generating the schema integration constructs

Here, we present the formal definitions that are concerned with phases 2◯, 3◯ and 4◯ as depicted in Fig. 2.

Note that virtual data integration systems consider only schema integration and disregard record linkage and data fusion. Thus, the original framework refers to data integration, which is used there as a synonym of schema integration.

Formal overview of our approach.

We consider a data analyst interested in wrangling two different sources about artworks from the Carnegie Museum of Art (CMOA)

Running example excerpts.

Metamodel to represent graph-based schemata of JSON datasets (i.e.,

As previously described, our approach is generic to any data source, as long as specific algorithms are implemented and shown to satisfy soundness and completeness. In this section, we describe phase 1◯ in Fig. 2 and showcase a specific instantiation of the framework for JSON. Following Fig. 3, we introduce a bootstrapping algorithm that takes as input a JSON dataset and produces a typed graph-based representation of its schema. Then, this graph is translated into a graph typed with respect to a canonical data model by applying a sound set of production rules. Next, we present the metamodels required for our bootstrapping approach and the production rules.

Data source metamodeling

In order to guarantee a standardized process, the first step requires the definition of a metamodel for each data model considered (note that several sources might share the same data model). Figure 5 depicts the metamodel to represent graph-based JSON schemata (i.e.,  consists of one root  , which in turn contains at least one  instance. Each  is associated with one  value which is either a  , a  or a  . We also assume elements of a  to be homogeneous, and thus it is composed of  elements. Last, we consider three kinds of primitives: these are  ,  and  . In Appendix A, we present the complete set of constraints that guarantee that any typed graph with respect to

The canonical metamodel

In order to enable interoperability among graphs typed w.r.t. source-specific metamodels, we choose RDFS as the canonical data model for the integration process. A significant advantage of RDFS is its built-in capabilities for meta-modeling, which support different abstraction levels. Figure 6 depicts the fragment of the RDFS metamodel ( and their  . Additionally, in order to model arrays and under the assumption that there exists a single type of container, we make use of the  property. In Appendix B, we present the complete set of constraints that guarantee that any typed graph with respect to

Fragment of the RDFS metamodel (i.e.,

We next present a bootstrapping algorithm to generate graph-based representations of JSON datasets. The generation of wrappers that retrieve data using such graph representation has already been studied (e.g., see [42]), and here we reuse such efforts. Here, we focus on the construction of the required data structure. As depicted in Algorithm 1, the method

The goal of this algorithm is to instantiate the  and  elements using the document’s root. Then, the method  , we define its corresponding  or  with fresh IRIs (i.e., object or array identifiers). This is not the case for primitive elements, which are either connected to the three possible instances of  (i.e.,  ,  , or  ). The presence of such three possible instances in  to an instance of  using the  labeled edge.
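To make the idea of the bootstrapping step more concrete, the following Python sketch builds a set of triples from a JSON document. It is only an illustration under stated assumptions and not the algorithm of the paper: the vocabulary (`J:Document`, `J:Object`, `J:Key`, `J:Array`, `J:hasRoot`, `J:hasKey`, `J:hasValue`, `J:hasMember`, `J:String`, `J:Number`, `J:Boolean`), the identifier scheme, the treatment of `null`, and the sample document are all hypothetical. It does follow the description above in that objects and arrays receive fresh identifiers, primitives are linked to one shared node per primitive type, and arrays are assumed to be homogeneous.

```python
import itertools
import json

# Hypothetical vocabulary, chosen for this sketch only.
TYPE = "rdf:type"
PRIMITIVES = {str: "J:String", bool: "J:Boolean", int: "J:Number", float: "J:Number"}


def bootstrap(name, document):
    """Return (subject, predicate, object) triples describing the schema of `document`."""
    triples = set()
    counter = itertools.count(1)

    def fresh(kind):
        # Objects and arrays receive fresh identifiers.
        return f"{name}/{kind}{next(counter)}"

    def value_node(value):
        if isinstance(value, dict):
            node = fresh("object")
            triples.add((node, TYPE, "J:Object"))
            add_keys(node, value)
            return node
        if isinstance(value, list):
            node = fresh("array")
            triples.add((node, TYPE, "J:Array"))
            if value:
                # Arrays are assumed homogeneous: the first element is representative.
                triples.add((node, "J:hasMember", value_node(value[0])))
            return node
        # Primitives share one node per primitive type (no fresh identifier).
        # Assumption of this sketch: null falls back to J:String.
        return PRIMITIVES.get(type(value), "J:String")

    def add_keys(obj_node, obj):
        for key, value in obj.items():
            key_node = f"{obj_node}.{key}"
            triples.add((key_node, TYPE, "J:Key"))
            triples.add((obj_node, "J:hasKey", key_node))
            triples.add((key_node, "J:hasValue", value_node(value)))

    root = fresh("object")
    triples.add((name, TYPE, "J:Document"))
    triples.add((root, TYPE, "J:Object"))
    triples.add((name, "J:hasRoot", root))
    add_keys(root, document)
    return triples


# Hypothetical sample document, not taken from the running example.
SAMPLE = '{"title": "Untitled", "year": 1950, "creator": {"name": "Jane Doe"}, "tags": ["oil"]}'
sample_triples = bootstrap("artworks", json.loads(SAMPLE))
```

Representing the graph as a plain set of tuples keeps the sketch free of dependencies; an RDF library could be substituted without changing the traversal.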

Bootstrap a JSON dataset

Returning to the running example introduced in Section 3.2, Fig. 7 depicts the set of triples that are generated by Algorithm 1 on a simplified version of the  are declared in line 4 of function  and  are declared, respectively, in lines 3 and 5 of function  are declared in line 3 of function

Set of triples generated by Algorithm 1. On the left-hand side, we depict the function that generates each corresponding set of triples.

,  ,  ,  and  there exists a method named likewise. Regarding  , the resource

We next present the second step of our bootstrapping approach (see Fig. 3), which is the translation of graphs typed with respect to a specific source data model (e.g.,

Instances of  are translated to instances of  .

Instances of  are translated to instances of  . Additionally, this requires defining the  of such newly defined instance of  .

Instances of  which have as value an instance of  are also instances of  .

The  of an instance of  is its corresponding counterpart in the  whose counterpart is  . The procedure for instances of  and  is similar using their pertaining type.

The  of an instance of  is the value itself.
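The flavour of such production rules can be sketched in a few lines of Python. This is a hedged illustration rather than the rule set of the paper: the source vocabulary (`J:Object`, `J:Key`, `J:Array`, `J:hasKey`, `J:hasValue`, `J:hasMember`), the choice of `xsd:double` for numbers, and the decision to use the member type as the range of array-valued keys are assumptions made here. The target terms `rdfs:Class`, `rdf:Property`, `rdfs:domain`, `rdfs:range` and `rdfs:ContainerMembershipProperty` are standard RDFS vocabulary.

```python
RDF_TYPE = "rdf:type"
# Assumed mapping from hypothetical primitive nodes to XSD datatypes.
XSD = {"J:String": "xsd:string", "J:Number": "xsd:double", "J:Boolean": "xsd:boolean"}


def to_rdfs(source):
    """Translate a JSON-typed graph (set of triples) into an RDFS-typed graph."""

    def instances(kind):
        return {s for s, p, o in source if p == RDF_TYPE and o == kind}

    objects, arrays, keys = instances("J:Object"), instances("J:Array"), instances("J:Key")
    owner = {o: s for s, p, o in source if p == "J:hasKey"}
    value = {s: o for s, p, o in source if p == "J:hasValue"}
    member = {s: o for s, p, o in source if p == "J:hasMember"}

    out = set()
    for obj in objects:
        # Objects become classes.
        out.add((obj, RDF_TYPE, "rdfs:Class"))
    for key in keys:
        # Keys become properties whose domain is the owning object.
        out.add((key, RDF_TYPE, "rdf:Property"))
        out.add((key, "rdfs:domain", owner[key]))
        target = value[key]
        if target in arrays:
            # Array-valued keys are additionally container membership properties.
            out.add((key, RDF_TYPE, "rdfs:ContainerMembershipProperty"))
            # Assumption: the range is taken from the (homogeneous) member type.
            target = member.get(target, "J:String")
        # Primitive values map to their XSD counterpart; object values are used as-is.
        out.add((key, "rdfs:range", XSD.get(target, target)))
    return out
```

Each branch corresponds to one kind of rule, which is also how individual nodes and edges of the output could be traced back to the rule that produced them.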

Here, we take as input the graph generated by our bootstrapping algorithm depicted in Fig. 7. Then, Fig. 8 shows the resulting graph typed w.r.t. the RDFS metamodel after applying the production rules. Each node and edge is annotated with the index of the rule that produced it.

Graph typed w.r.t. the RDFS metamodel resulting from evaluating the production rules.

Extension of the RDFS metamodel for the integration annotation.

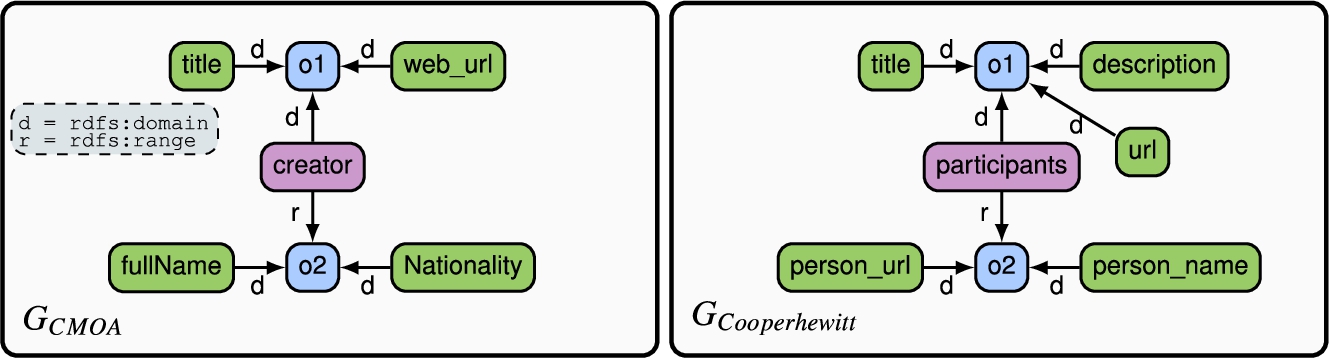

This section describes phase 3◯ in Fig. 2, which addresses the problem of generating the  and  (see Fig. 9). These resources are essential to annotate how the underlying data sources must be integrated (e.g., union or join). The integration is accomplished through a set of invariants (see Section 5.1) that guarantee the completeness and incrementality of the IG. Importantly, our proposal is sound and complete with regard to the bootstrapping method described in Section 4, and it can only guarantee these properties if its inputs are typed graphs (see Fig. 3). The algorithm we propose in this phase takes as input two typed graphs and a list of semantic correspondences (i.e., alignments) between them. The integration algorithm is guided by semantic correspondences when generating the IG, keeping track of used and unused alignments in the process. Unused alignments identify those semantic correspondences not yet integrated due to conditions imposed by the integration algorithm, but to be integrated once the conditions are met in further executions. To obtain the semantic correspondences, we rely on existing schema matching techniques (e.g., LogMap [30] or

All in all, the IG is a rich set of metadata containing all relevant information to perform schema integration and, as such, it traces all information from the sources as well as that of integrated resources to support their incremental construction. In Appendix B.1, we present the complete set of constraints that guarantee that any IG is consistent.

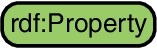

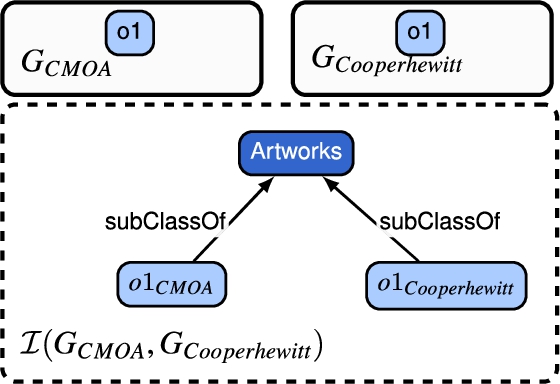

Continuing the running example, Fig. 10 illustrates the result of the bootstrapping step to generate the typed graph representation of the data sources  and  . Since this information is not available in the input typed graphs, we use  and distinguish them by checking their range. Hereinafter, as shown in Fig. 10, we use the following color schemes to represent  ,  and  . We use dark colors to represent integrated resources:  of type class,  of type datatype property and  of type object property.

The extracted canonical RDF representations for the CMOA and cooperhewitt sources.

We here present an implementation for the graph integration algorithm (see Section 3.1.2). This algorithm, system-agnostic (i.e., not tied to any specific system) and incremental by definition, generates an integrated graph

, then  and  , then  and  , then the new integrated resource

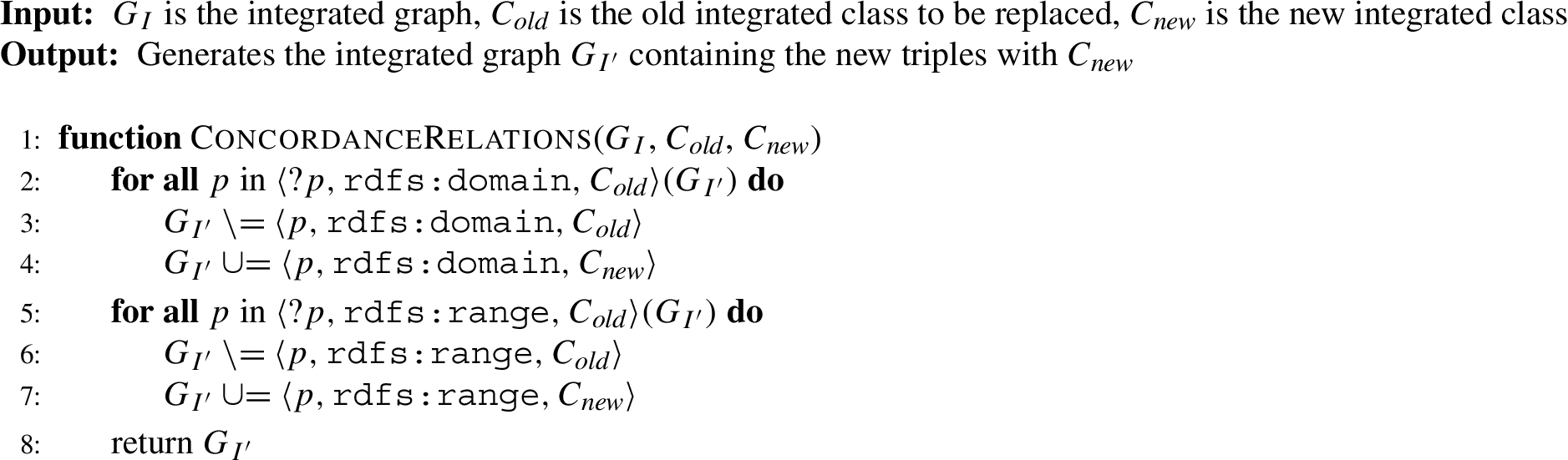

Intuitively, the presented invariants guarantee the soundness and completeness of our approach, since they cover all potential cases derived from one-to-one mappings: neither resource previously integrated, only one of them previously integrated, or both already integrated. Grounded on these invariants, Algorithm 2 generates the corresponding integrated metadata and supports their incremental construction and propagation. Note that invariant  of type class, invariants  and invariant  . To accomplish  with  . When integrating classes, all resources (e.g., properties) connected to  must be connected to  For example, replacing an  of type class requires updating all properties referencing  by rdfs:range or rdfs:domain to reference  . Therefore, we introduce the method

Integration of resources – classes

Replace integrated resource – classes
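The three situations covered by the invariants (neither class integrated yet, exactly one already integrated, both already integrated) can be illustrated with a short Python sketch. It is a simplification under stated assumptions and should not be read as Algorithms 2 and 3: the function names, the representation of the graph as a set of triples, and the use of `rdfs:subClassOf` to link source classes to an integrated class are choices made for this example.

```python
SUBCLASS, TYPE = "rdfs:subClassOf", "rdf:type"


def replace_resource(graph, old, new):
    """Rewrite every triple mentioning `old` (e.g. via rdfs:domain or rdfs:range) to use `new`."""
    return {tuple(new if term == old else term for term in triple) for triple in graph}


def integrate_classes(graph, integrated, a, b, merged_name):
    """Integrate aligned classes `a` and `b`; return the new graph and integrated set.

    `integrated` holds the identifiers of already integrated classes and
    `merged_name` is the (hypothetical) identifier used when a new one is created.
    """
    a_done, b_done = a in integrated, b in integrated
    if a_done and b_done:
        # Both already integrated: a new integrated class replaces the two old ones,
        # and every reference to them is redirected.
        graph = replace_resource(replace_resource(graph, a, merged_name), b, merged_name)
        integrated = (integrated - {a, b}) | {merged_name}
    elif a_done or b_done:
        # Exactly one already integrated: reuse it and attach the other class to it.
        target, source = (a, b) if a_done else (b, a)
        graph = graph | {(source, SUBCLASS, target)}
    else:
        # Neither integrated: create a fresh integrated class above both.
        graph = graph | {
            (merged_name, TYPE, "rdfs:Class"),
            (a, SUBCLASS, merged_name),
            (b, SUBCLASS, merged_name),
        }
        integrated = integrated | {merged_name}
    return graph, integrated
```

Returning new sets instead of mutating the inputs keeps each integration step easy to compare with the previous state, which is convenient when integrations are applied incrementally.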

Algorithm 4 is the main integration algorithm to generate

We have introduced the  axioms are coherent when replacing an  .

Incremental schema integration.

ConcordanceRelations – classes.

Discovered correspondences for

Integration of two classes from

Returning to the running example, consider the alignments depicted in Table 4 between  and  . For this case, invariant  and  , namely  . For this example, two  were generated:  and  . Note, the set

The invariants for properties are very similar to those presented for classes. Thus, we will use the  and  .

Let us start with datatype properties. Following the class-oriented integration idea introduced, we only integrate datatype properties if they are part of the same entity, that is, if their domains (e.g.,  ) have already been integrated into the same  of type class. Therefore, the invariants for  integration must reflect this condition. In the following, we present invariant I1 for  . Note that the remaining invariants should be updated accordingly.

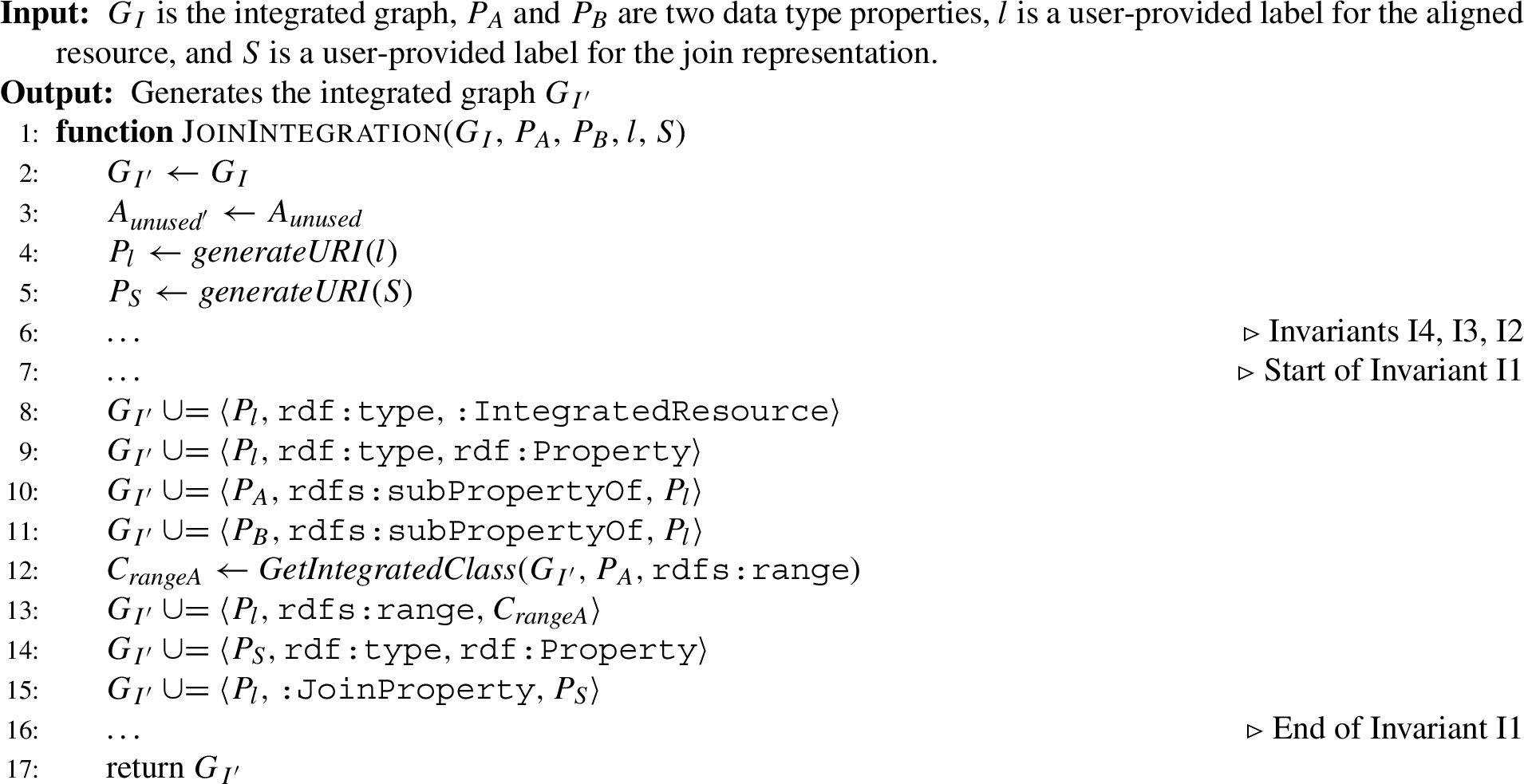

Integration of data type properties – adapted from Algorithm 2.

Algorithm 6 implements the  in further integrations. Thus, whether this integration will take place depends on the discovered set of alignments from the schema matching approaches. Further, the domain of the  of type property is an  of type class. And for the range, we assign the more flexible xsd type (e.g., xsd:string). Algorithm 7 implements the method  or an  of type property.
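The condition on datatype properties can be sketched as follows. The Python code is an illustration under assumptions, not Algorithm 6: it only covers the case where neither property has been integrated before, it takes the alignment format `(property_a, property_b, integrated_name)` and all helper names as hypothetical, and it interprets "the more flexible xsd type" as falling back to `xsd:string` whenever the two ranges differ. Alignments whose domains are not yet integrated into the same class are kept as unused so that they can be retried in a later integration.

```python
TYPE, DOMAIN, RANGE = "rdf:type", "rdfs:domain", "rdfs:range"
SUBCLASS, SUBPROP = "rdfs:subClassOf", "rdfs:subPropertyOf"


def object_of(graph, subject, predicate):
    """Return one object of (subject, predicate, ?), or None if there is none."""
    return next((o for s, p, o in graph if s == subject and p == predicate), None)


def integrated_class_of(graph, cls, integrated_classes):
    """Return the integrated class that `cls` belongs to, or None."""
    if cls in integrated_classes:
        return cls
    parents = [o for s, p, o in graph if s == cls and p == SUBCLASS and o in integrated_classes]
    return parents[0] if parents else None


def integrate_datatype_properties(graph, integrated_classes, alignments):
    """Integrate aligned datatype properties whose domains share an integrated class."""
    used, unused = [], []
    for prop_a, prop_b, name in alignments:
        class_a = integrated_class_of(graph, object_of(graph, prop_a, DOMAIN), integrated_classes)
        class_b = integrated_class_of(graph, object_of(graph, prop_b, DOMAIN), integrated_classes)
        if class_a is None or class_a != class_b:
            # Condition not met yet: keep the alignment for a later execution.
            unused.append((prop_a, prop_b, name))
            continue
        range_a, range_b = object_of(graph, prop_a, RANGE), object_of(graph, prop_b, RANGE)
        # Assumption: identical ranges are kept, otherwise fall back to xsd:string.
        merged_range = range_a if range_a == range_b else "xsd:string"
        graph = graph | {
            (name, TYPE, "rdf:Property"),
            (name, DOMAIN, class_a),
            (name, RANGE, merged_range),
            (prop_a, SUBPROP, name),
            (prop_b, SUBPROP, name),
        }
        used.append((prop_a, prop_b, name))
    return graph, used, unused
```

Keeping the unused alignments as an explicit return value mirrors the incremental nature of the process: they can be passed again once further classes have been integrated.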

For the integration of  , we follow a similar approach and only integrate object properties if their domain and range have already been integrated. Accordingly, the object property invariants should reflect this condition and we showcase it for invariant I1 as follows:

ConcordanceRelations – properties.

The implementation of the  integration is very similar to Algorithm 6. We should accordingly add the proper conditions set by the invariants and consider the manipulation of the axioms as we performed for  . We illustrate the integration of properties in Example 5.

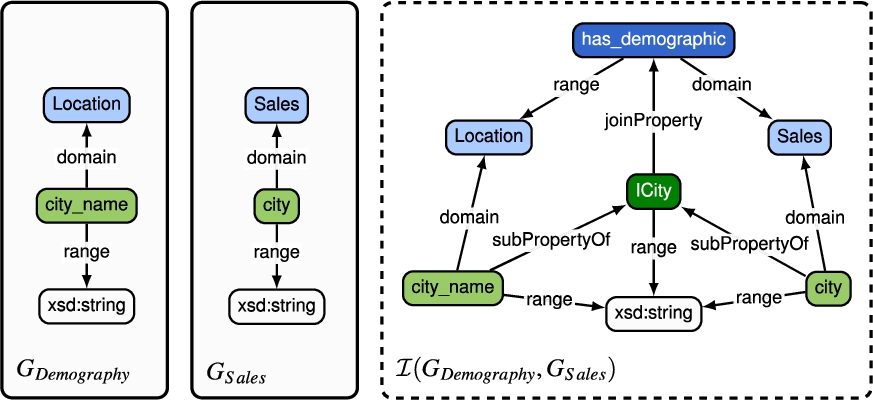

Continuing Example 4, the algorithm will integrate all  using invariant  and  . The integration algorithm uses rdfs:subPropertyOf to connect both  to the  of type property, that is,  . For this example, the following  were generated:  ,  and  . Then, we proceed to integrate  as we can note that the domain and range of  and  have already been integrated, fulfilling the object property requirement. Finally, Fig. 13 depicts the complete integrated graph generated from Examples 4 and 5. This schema integration generates one  of type class, three  of type datatype property and one  of type object property. Note that the set

Result of integrating two classes and two data type properties from

Join integration

Example of a join integration.

New data source.

Alignments discovered for

Result of integrating two integrated classes from

As discussed, our property integration is class-oriented and we only automate the process if the integrated properties are equivalent and can be integrated via a union operator. In practice, however, this is very restrictive, so we also allow integrating two datatype properties whose classes have not been previously integrated in what we call a  . This integration must be performed by a post-process task triggered by a user request. This type of property integration occurs when the domains are not semantically related, but the properties have a semantic correspondence in an input alignment. We thus consider this case as a join operation. Algorithm 8 depicts this integration type. Having no conditions allows us to integrate properties from completely distinct entities. To allow this integration, we must express the join relationship by creating an  that connects both domain  of the  to integrate, as illustrated in Fig. 14. This object property aims to add semantic meaning to the implicit relation between the domains of the properties. Thus, this  connects to the  using  to identify that this is an on-demand datatype property integration not meeting the regular integration algorithms.

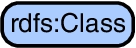

We will use the final result of Example 5 to perform an incremental integration. For this case, consider that the data analyst wants to integrate the typed graph  . Let us consider the alignment  and  . Note that we have two  of type class in this alignment resulting in the use of invariant  and  are replaced by the new  of type class, namely  . Now, the algorithm proceeds to integrate  . Let us consider the alignment  and  representing invariant  of type property, we reuse  . Therefore,  will be a  . For the last alignment,  and  , we replace both  of type property by  . In summary, our approach creates one  of type class and two  of type property. The resulting integrated graph is depicted in Fig. 18.

Result of integrating one integrated data type property from

Integration graph from a second integration.

Example of two different data models.

The integration algorithm is our incremental proposal to integrate the typed graphs. However, this algorithm assumes one-to-one input alignments, a limitation all schema alignment techniques have. Our integration algorithm integrates equivalent resources, but it is not able to deal with other, more complex semantic relationships. To illustrate this, consider the example presented in Fig. 19. Let us consider a data analyst interested in retrieving the country’s name related to a project. For the  property to retrieve the  entity. However, the  entity, using the  property, and then using the  property to retrieve the  entity, which contains the  information. The main problem in this case is that the alignment is not one-to-one; instead, it matches a subgraph to one resource. As far as we know, there is no schema alignment technique that considers such a case. We do not address it either for now and leave it for future work. Last, but not least, we would like to stress that, even if largely automated, schema integration must be a user-in-the-loop process, since this is the only way to guarantee that the input alignments generated by a schema alignment tool are correct.

Generation of schema integration constructs

In this section, we show how our approach can be generalized and reused to derive the schema integration constructs of specific virtual data integration systems. Precisely, we instantiate phase 4◯ in Fig. 2. The integrated graph generated in Section 5 contains all relevant metadata about the sources and their integration, which can be used to derive these constructs. Regardless of the virtual data integration system chosen, once the system-specific constructs are created, the user can load them into the system and start wrangling the sources via queries over the global schema. This way, we free the end-user from manually creating such constructs. In the following subsections, we present two methods to generate the schema integration constructs of two representative virtual data integration approaches: mediator-based and ontology-based data access systems.

Mediator-based systems

We consider ODIN as a representative mediator-based data integration system [43]. ODIN relies on knowledge graphs to represent all the necessary constructs for query answering (i.e., the global graph, source graphs and local-as-view mappings). However, all its constructs must be manually created. Thus, we show how to generate its constructs automatically from the integrated graph generated in our approach.

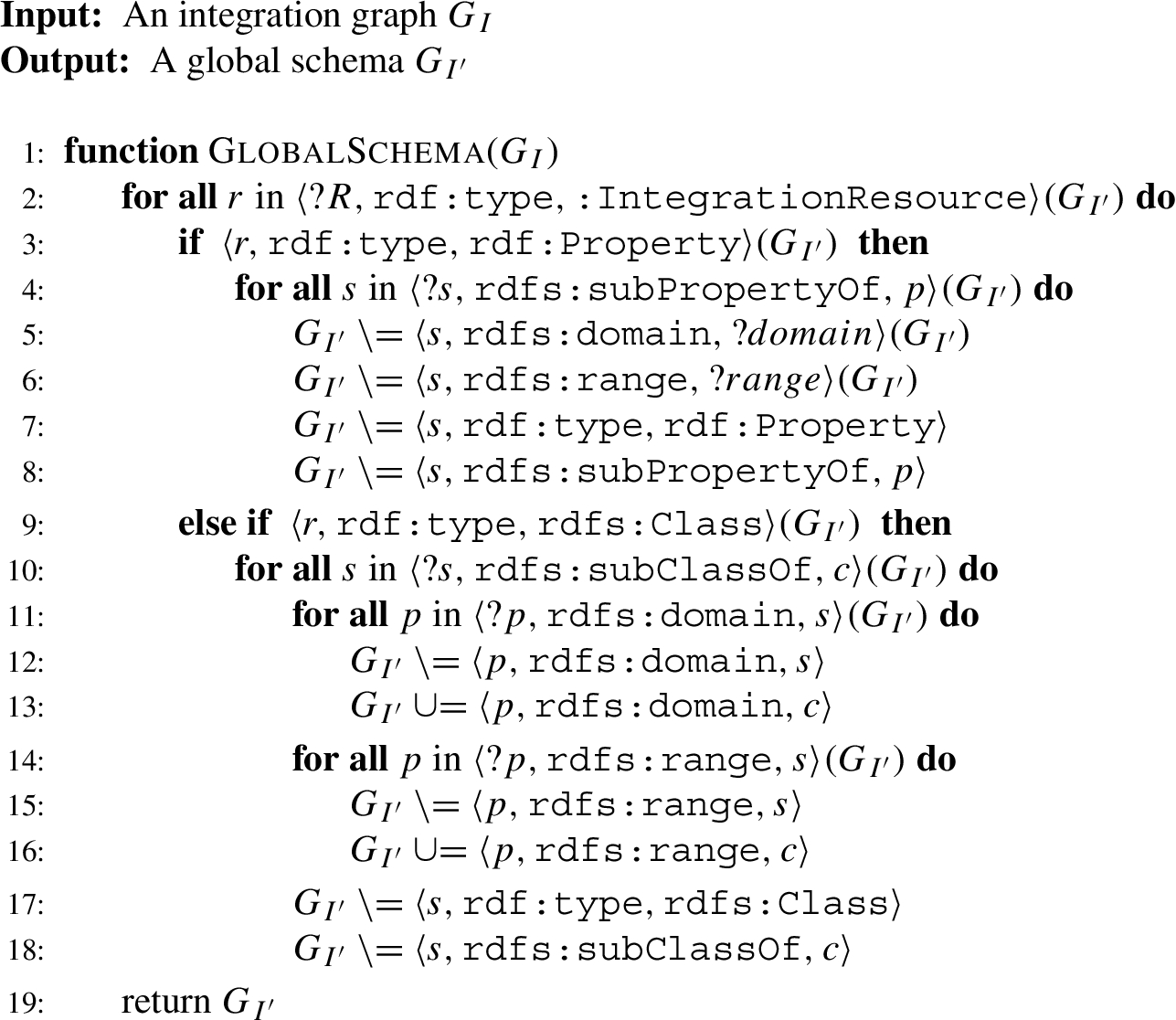

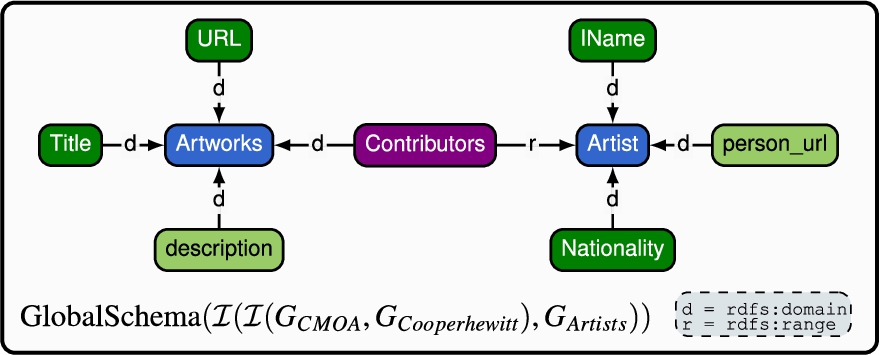

Algorithm 9 shows how to generate the global graph required by ODIN from our integrated graph. In ODIN, the global graph is the integrated view over which end-users can pose queries. Hence, this algorithm first merges the sub-classes and sub-properties of integrated resources. As a result, we represent the taxonomy of integrated resources with a single integrated resource and properly modify the domain and range of the affected properties. In the case of non-integrated resources, their original definition remains. Figure 20 illustrates the output provided by Algorithm 9 for the generated integration graph in Fig. 18. The source schemata and wrappers representing the sources and required by ODIN are straightforward to retrieve, since our integration schema preserves the original graph representation bootstrapped per source, and the wrappers remain the same. Finally, since the integration graph was built bottom-up, the local-as-view mappings required by ODIN are generated via the sub-class or sub-property relationships created during schema integration between source schemata elements and integrated elements. All ODIN constructs are therefore generated automatically from the integration graph. Once these constructs are loaded into ODIN, the user can query the data sources by querying the global graph of ODIN, which will rewrite the user query over the global graph into a set of queries over the wrappers via its built-in query rewriting algorithm. We have implemented the method described here to generate the constructs and integrated it in ODIN. This implementation is available on this paper’s companion website.
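As a rough illustration of the merging step described above, the following Python sketch collapses the source resources attached to an integrated resource and redirects the domain and range of the remaining properties. It is a sketch under assumptions rather than Algorithm 9 or ODIN's API: the function name, the triple-set representation, and the returned pair format for mappings are hypothetical.

```python
SUBCLASS, SUBPROP = "rdfs:subClassOf", "rdfs:subPropertyOf"


def derive_global_graph(integrated_graph, integrated):
    """Return (global_graph, mappings) derived from an integrated graph.

    `integrated` is the set of integrated resource identifiers. `mappings`
    pairs each source resource with the integrated resource that represents it.
    """
    # Source resources linked to an integrated resource by a taxonomy edge.
    parent = {
        s: o
        for s, p, o in integrated_graph
        if p in (SUBCLASS, SUBPROP) and o in integrated
    }
    global_graph = set()
    for s, p, o in integrated_graph:
        if s in parent:
            # The source resource is represented by its integrated resource.
            continue
        # Redirect references (e.g. rdfs:domain, rdfs:range) to the integrated resource;
        # non-integrated resources keep their original definition.
        global_graph.add((s, p, parent.get(o, o)))
    mappings = set(parent.items())
    return global_graph, mappings
```

The pairs in `mappings` are exactly the sub-class and sub-property links that are dropped from the global graph, which matches the observation that these links carry the information needed for local-as-view mappings.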

Global schema

Generated global schema.

Ontology-based data access systems provide a unified view over a set of heterogeneous datasets through an ontology, usually expressed in the OWL 2 QL profile, and global-as-view mappings, often specified using languages such as R2RML and RML. An OWL ontology can be derived from the integrated graph by converting RDFS resources into OWL resources. To this aim, Algorithm 9 requires a post-process step to perform this task. Note that, by definition, the integrated graph contains a small subset of RDFS resources. Thus, we only need to map  to  and  to the corresponding  or  . Concerning mappings, as long as appropriate algorithms are defined to extract and translate the metadata from the integrated graph, we can derive mappings for any dedicated syntax. Here, we explain the derivation of RML mappings. Each RML mapping consists of three main components: (i) the Logical Source ( ) to specify the data source, (ii) the Subject Map ( ) to define the class of the RDF instances generated and that will serve as subjects for all RDF triples generated, and (iii) a set of Predicate-Object maps ( ) that define the creation of predicates and their object values for the RDF subjects generated by the Subject Map. Algorithm 10 shows how to generate RML mappings from the integrated graph. The process is as follows.

The algorithm creates RML mappings for each entity defined in a data source schema. Therefore, it iterates over the resources of the integrated graph that represent such entities and are not integrated resources, since those resources were generated from a data source during the bootstrapping phase. For each such resource, we generate a unique URI for its mapping. We then describe the Logical Source of the mapping with three elements: (i) the data source location, which is obtained from the source wrapper; (ii) the data source format, which is assigned dynamically depending on the data source type; and (iii) the iteration pattern used to retrieve each data instance to be mapped, which is obtained from the wrapper definition.

RML mappings generation.

The next step is to specify the Subject Map of the mapping. For it, we define: (i) the pattern of the URIs of the generated instances, for which we use the URI of the corresponding resource, and (ii) the class of the subjects produced. Then, we create the set of Predicate-Object maps. To that end, we iterate over all properties that have the class at hand as domain and, for each of them, we define the Predicate-Object map metadata. Here, we distinguish two cases: properties that are not part of a join and those that are. In the first case, we generate the metadata as follows: (i) the predicate, which indicates the property being mapped, and (ii) the object definition, which indicates which element of the data source schema should be used to generate the RDF object instances. In the second case, we create a Predicate-Object map for every property that is part of the join. The following metadata is generated for each of them: (i) the predicate, where we use the join property URI to connect two entities from different sources, and (ii) the object definition along with a reference that links the mappings of the two entities. This reference is completed with a join condition whose two sides each require a reference to an element of the data source to create the join.

As a result, the provided algorithm generates the RML mappings for all entities and data sources contained in an integrated graph and uses the annotations on unions and joins to construct global-as-view mappings according to the global schema (e.g., ontology). Last but not least, this algorithm showcases the feasibility of generating RML mappings from the integrated graph. Note that the generated RML mappings can be used by any RML-compliant engine (virtual or materialized), such as Morph-RDB [49], RDFizer [28] and RMLStreamer [24]. Importantly, our objective is not to generate optimized RML mappings, which is left for future work.
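To make the shape of such a mapping concrete, the following minimal sketch renders one triples map from a plain description of a source entity. It is an illustration under stated assumptions, not Algorithm 10: the terms `rml:logicalSource`, `rml:source`, `rml:referenceFormulation`, `rml:iterator`, `rr:subjectMap`, `rr:template`, `rr:class`, `rr:predicateObjectMap`, `rr:parentTriplesMap` and `rr:joinCondition` come from the standard RML and R2RML vocabularies, while the dictionary layout, the function names and all `example.org` URIs are hypothetical. Prefix declarations are omitted from the output.

```python
def predicate_object_map(prop):
    """Render one rr:predicateObjectMap; join properties link to a parent mapping."""
    if "parent_mapping" in prop:  # property takes part in a join
        obj = (
            f'rr:parentTriplesMap <{prop["parent_mapping"]}> ; '
            f'rr:joinCondition [ rr:child "{prop["child"]}" ; '
            f'rr:parent "{prop["parent"]}" ]'
        )
    else:  # plain property: object values are read from a source element
        obj = f'rml:reference "{prop["reference"]}"'
    return (
        f'  rr:predicateObjectMap [ rr:predicate <{prop["predicate"]}> ; '
        f'rr:objectMap [ {obj} ] ]'
    )


def rml_mapping(entity):
    """Render one triples map (Turtle, prefixes omitted) for a source entity."""
    blocks = [
        "  rml:logicalSource [ "
        f'rml:source "{entity["path"]}" ; '
        f'rml:referenceFormulation {entity["formulation"]} ; '
        f'rml:iterator "{entity["iterator"]}" ]',
        "  rr:subjectMap [ "
        f'rr:template "{entity["class_uri"]}/{{{entity["key"]}}}" ; '
        f'rr:class <{entity["class_uri"]}> ]',
    ]
    blocks += [predicate_object_map(p) for p in entity["properties"]]
    header = f'<{entity["mapping_uri"]}> a rr:TriplesMap ;\n'
    return header + " ;\n".join(blocks) + " .\n"


# Hypothetical JSON source entity with one plain property and one join property.
artist = {
    "mapping_uri": "http://example.org/mapping/artist",
    "path": "artists.json",
    "formulation": "ql:JSONPath",
    "iterator": "$.artists[*]",
    "class_uri": "http://example.org/s1/Artist",
    "key": "id",
    "properties": [
        {"predicate": "http://example.org/s1/name", "reference": "name"},
        {
            "predicate": "http://example.org/g/created",
            "parent_mapping": "http://example.org/mapping/artwork",
            "child": "id",
            "parent": "artist_id",
        },
    ],
}
turtle = rml_mapping(artist)
print(turtle)
```

The two branches of `predicate_object_map` mirror the two cases distinguished in the text: a property outside any join only needs a reference to a source element, whereas a join property points to the mapping of the other entity and states which source elements must match.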

In this section, we evaluate the implementation of our approach. To that end, we have developed a Java library named

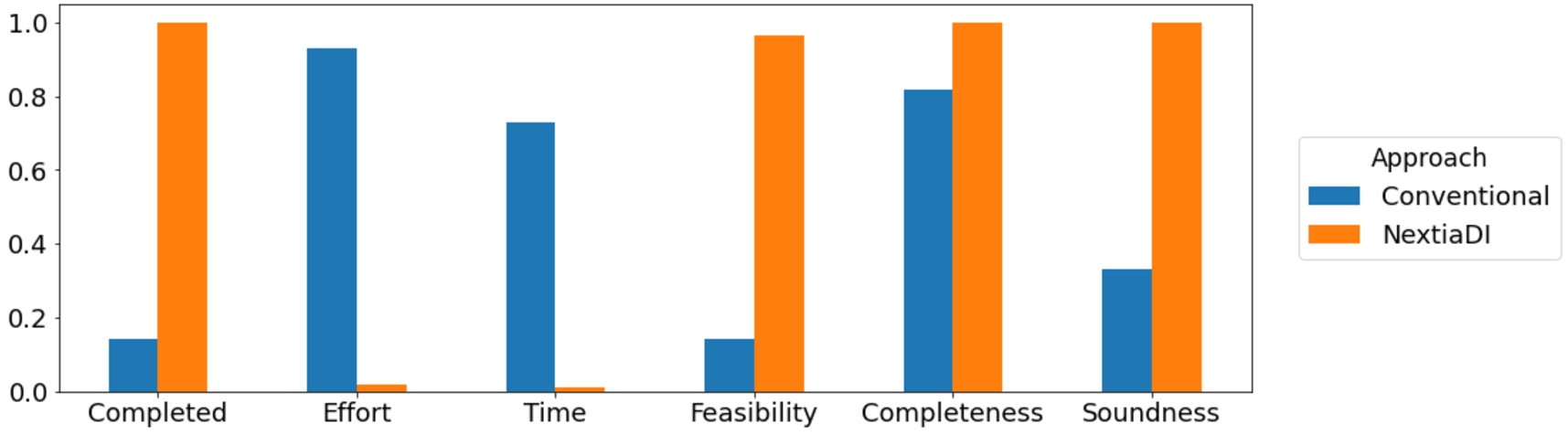

(Q1) Does the usage of

(Q2) Does the usage of

(Q3) Does the quality of the generated integrated schema improve using

(Q4) Does the runtime of

To address questions Q1, Q2, and Q3, we conducted a user study. Note that we distinguish between Q1 and Q2: the former reports on the qualitative perception of the participants (according to the feedback received), while the latter is a quantitative metric independent of the participants' impressions during the activity. Regarding Q4, we carried out scalability experiments.

This user study aims at evaluating the efficiency and quality of

The notebooks and all experimental data are available on the companion website of this paper.

Distribution of level across skills for all participants.

Aggregated results for the bootstrapping phase.

Aggregated results for the schema integration phase.

Aggregated results for the mapping generation phase.

Conclusion. We confirmed that

Another relevant conclusion of the experiment, raised by most of the participants, is the difficulty of the tasks at hand due to the combination of skills they require. In the conventional approach, participants spent a considerable amount of time exploring the sources, modeling, and expressing schemata and mappings in RDFS. This combined profile is difficult to find in the field of Data Science, especially regarding knowledge graph expertise, as shown in Fig. 21. This fact confirms our claim that it is not feasible to make users responsible for generating the schema integration constructs of virtual data integration systems, even for small scenarios. This can explain the low impact of such tools in industry. Fortunately, all participants believe

To address research question Q4, we evaluate our two technical contributions (i.e., bootstrapping and schema integration) to assess their computational complexity and runtime performance. All experiments were carried out on a Mac with a 2.3 GHz Intel Core i5 processor, 16 GB of RAM, and the Java 11 compiler.

Evaluation of bootstrapping

We evaluated the bootstrapping of JSON and CSV data sources by measuring the impact of the schema size. To that end, we progressively increased the number of schema elements. This experiment was executed 10 times. We describe the dataset preparation and the results obtained in the following.

Performance evaluation when the number of schema elements increased.

We evaluate the schema integration under three scenarios: (i) increasing the number of alignments in an incremental integration, (ii) integrating a constant number of alignments with a growing number of elements in the schemata, and (iii) performing the integration using real data.

Performance evaluation when alignments increased incrementally.

Performance evaluation when the number of schema elements increased.

This paper presents an approach for efficiently bootstrapping schemata of heterogeneous data sources and incrementally integrating them to facilitate the generation of schema integration constructs in virtual data integration settings. This process is specifically designed to meet the requirements of data wrangling in Big Data scenarios, i.e., highly heterogeneous data sources and dynamic environments. As such, our proposal deals with heterogeneous data sources, follows an incremental, pay-as-you-go integration approach, and largely automates the process. Importantly, our approach is not specific to a given system: it generates system-agnostic metadata that can later be used to generate the specific constructs of the most relevant virtual data integration systems. Last but not least, we have presented

This work opens many interesting research lines. Examples include how to generalize the current approach to integrate complex semantic relationships between schemas (beyond one-to-one mappings), or how to develop a hybrid approach that, once the integrated schema has been generated bottom-up, allows the user to enrich the automatically generated outputs top-down. The ultimate question we would like to address is how to use these techniques to suggest refactoring techniques over the underlying data sources to facilitate their alignment and integration.

Acknowledgements

This work was partly supported by the DOGO4ML project, funded by the Spanish Ministerio de Ciencia e Innovación under project PID2020-117191RB-I00, and D3M project, funded by the Spanish Agencia Estatal de Investigación (AEI) under project PDC2021-121195-I00. Javier Flores is supported by contract 2020-DI-027 of the Industrial Doctorate Program of the Government of Catalonia and Consejo Nacional de Ciencia y Tecnología (CONACYT, Mexico). Sergi Nadal is partly supported by the Spanish Ministerio de Ciencia e Innovación, as well as the European Union – NextGenerationEU, under project FJC2020-045809-I.

JSON metamodel constraints

In this appendix, we present the constraints considered for the metamodel we adopt to represent the schemata of JSON datasets (i.e.,

RDFS metamodel constraints

In this appendix, we present the constraints considered for the fragment of RDFS that we consider in this paper (i.e.,