Abstract

Bias in Artificial Intelligence (AI) is a critical and timely issue due to its sociological, economic and legal impact, as decisions made by biased algorithms could lead to unfair treatment of specific individuals or groups. Multiple surveys have emerged to provide a multidisciplinary view of bias or to review bias in specific areas such as social sciences, business research, criminal justice, or data mining. Given the ability of Semantic Web (SW) technologies to support multiple AI systems, we review the extent to which semantics can be a “tool” to address bias in different algorithmic scenarios. We provide an in-depth categorisation and analysis of bias assessment, representation, and mitigation approaches that use SW technologies. We discuss their potential in dealing with issues such as representing disparities of specific demographics or reducing data drifts, sparsity, and missing values. We find that research on AI bias applies semantics mainly in information retrieval, recommendation and natural language processing applications, and we argue through multiple use cases that semantics can help deal with technical, sociological, and psychological challenges.

Keywords

Introduction

There is growing awareness of bias and discrimination in AI applications. Users from less active groups are at greater risk of being treated unfairly on e-commerce platforms such as Amazon or eBay, which is problematic because these users often belong to low-income groups [29]. One of the main challenges in image search is that it is limited to the sample set of the training data [19,41], which can lead to irrelevant or inaccurate results or, at worst, to incorrect associations that reflect and perpetuate the harm done to historically disadvantaged groups [77]. These are just a few examples of the use cases covered in this survey article. Understandably, the AI community is shifting towards the pursuit of AI that is not only accurate but also ethical [50].

One of the main advantages of AI over human intelligence is its ability to process vast amounts of data. Indeed, data plays a fundamental role, as algorithmic decisions can reproduce or even amplify human biases: these systems are only as good as the data they work with [9]. One of the main challenges of AI is dealing with data limitations, such as incomplete, unrepresentative and erroneous data [7]. In addition, whether and how humans have access to these systems, and how they interact with them, are also key bias factors to consider in AI design.

The vast amount of information available on the Semantic Web (SW) has enormous potential to address the bias problems mentioned above by leveraging the structured formalisation of machine-understandable knowledge to build more realistic and fairer models. There are examples in different domains, such as machine learning and data mining, natural language processing, and social networking and media representation, where the SW, linked data and the web of data have made a significant contribution [30]. For example, we can leverage semantics to control and restrict access to personal and sensitive data, to support different AI processing tasks such as reasoning, mining, clustering and learning, or to extract arguments from natural language text. These systems are not immune to systemic bias, e.g., they may lack information on specific domains or on entities that are less popular than others, or information on specific demographics may be more detailed depending on the contributors’ interests [42]. While we consider the potential bias that SW technologies may have at the data and schema levels, we mainly focus on the contribution that SW technologies can make as a “tool” to address bias in different algorithmic scenarios and to promote algorithmic fairness. This analysis is relevant for the SW and AI communities, as bias is gaining attention in different areas, such as computer science, social sciences, philosophy and law [50].

In this article, we provide a review of the contribution of SW technologies to addressing bias in AI. We aim to explain why bias arises and at what level of the AI system,

We follow a systematic approach [13] to review the literature and analyse the existing bias solutions that use semantics. Specifically, we focus on the following contributions:

We provide a survey of 34 papers that use semantic-based techniques to address bias in AI.

We categorise relevant papers according to the type of semantics used, and the type of bias they target.

We highlight the most common AI application areas in the framework of semantic research for bias.

We identify further challenges in AI bias research for the SW and AI communities.

The rest of the paper is organised as follows. In Section 2, we describe the methodology of the systematic literature review. In Section 3, we define the concepts of semantics and bias used in this article. In Section 4, we report on an analysis of previous works that use semantics to address bias in AI and discuss the main findings, future opportunities and challenges in Section 5. Finally, we provide a conclusion in Section 6.

Survey methodology

To provide a thorough literature review, we followed the guidelines of the systematic mapping study research method [13]. Specifically, we address the following research question (RQ):

The collection of relevant works is based on keyword-based querying of two popular scholarly databases: Elsevier Scopus and ISI Web of Knowledge (WoS) (Table 1). We complement our search with Microsoft Academic Search, Semantic Scholar and Google Scholar to perform snowballing [68]. We collect papers according to the following inclusion criteria (IC):

Keywords used to search for relevant works in scholarly databases. TITLE-ABS-KEY refers to the title, abstract and keywords of the paper, respectively. We use the wildcard * to ensure that multiple spelling variations are included in the search results


Papers written in English.

Papers published in relevant journals between 2010 and 2021.

Only peer-reviewed papers, including journal articles, papers published in conference or workshop proceedings, and book chapters.

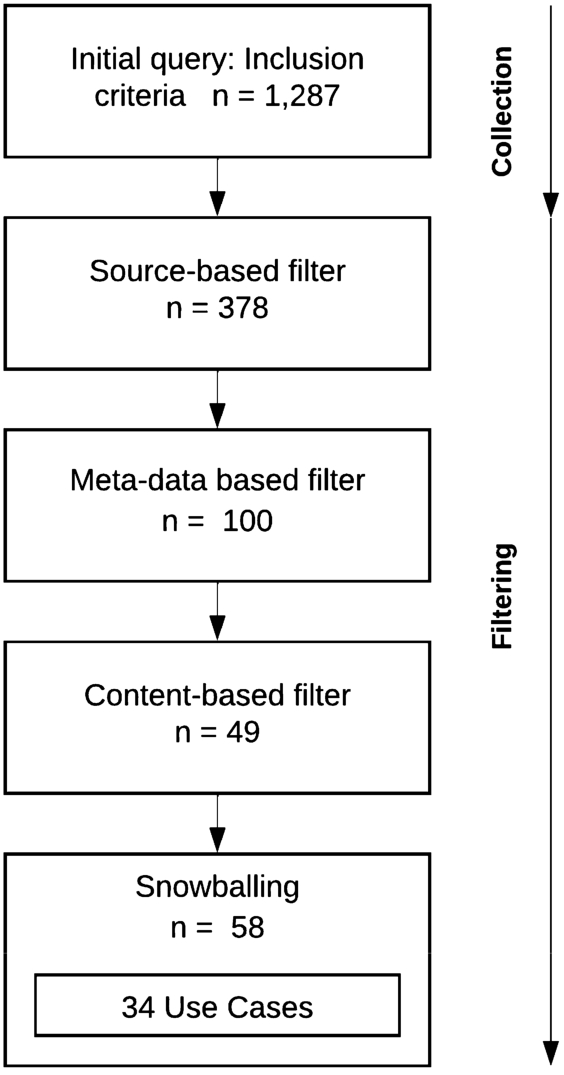

Representative venues of the papers found include the International Semantic Web Conference (ISWC), the European Semantic Web Conference (ESWC), the World Wide Web Conference (WWW), the International Conference on Information and Knowledge Management (CIKM), the AAAI Conference on Artificial Intelligence (AAAI), the International Joint Conference on Artificial Intelligence (IJCAI), and the Conference on Empirical Methods in Natural Language Processing and International Joint Conference on Natural Language Processing (EMNLP-IJCNLP). Two reviewers filtered the papers in four successive steps (Fig. 1).

In the

The

The

The

Filtering of relevant works that use semantics to address bias in AI.

This section presents the definitions for semantics and the conceptualisations of bias in AI used for this paper’s analysis.

Semantics

There are various SW technologies (e.g. taxonomies, thesauri, ontologies, or knowledge graphs). To support a better understanding, this section defines the specific semantic resources used in the surveyed papers.

Lexical resources are representations of general language in a relational structure [70]. They have standard structured relationships and other properties for each concept, such as related and alternative terms. An example of this type of resource is WordNet [58], a commonly known lexical database of English.

An ontology defines a set of classes, attributes and relationships that model a knowledge domain with varying levels of expressivity [35]. For example, such formal and explicit specifications of a shared conceptualisation can capture meteorological variables (temperature, precipitation, visibility) for the domain of weather forecasting [31], the feelings and emotions conveyed by visual features for the psychology of human affect [43], or the reasoning steps of problem-solving tasks in specific domains (e.g., writing a risk assessment in the industrial, insurance, health or environmental domains [53]). In particular, these forms of
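To make subclass reasoning concrete, the sketch below encodes a tiny weather-forecasting hierarchy as Python dictionaries and infers every type an individual belongs to. It is a minimal illustration under stated assumptions: the class and individual names are hypothetical, each class has a single parent, and a real ontology would be expressed in OWL or RDFS and processed by a reasoner.

```python
# Hypothetical class hierarchy: each class maps to its single direct superclass.
SUBCLASS_OF = {
    "Temperature": "MeteorologicalVariable",
    "Precipitation": "MeteorologicalVariable",
    "Visibility": "MeteorologicalVariable",
    "Rainfall": "Precipitation",
    "Snowfall": "Precipitation",
}

# Hypothetical individuals with their asserted (most specific) class.
INSTANCE_OF = {
    "reading_001": "Rainfall",
    "reading_002": "Temperature",
}


def superclasses(cls: str) -> list[str]:
    """Return every transitive superclass of cls, nearest first."""
    chain = []
    while cls in SUBCLASS_OF:
        cls = SUBCLASS_OF[cls]
        chain.append(cls)
    return chain


def inferred_types(individual: str) -> set[str]:
    """Asserted class plus all classes entailed by the subclass axioms."""
    asserted = INSTANCE_OF[individual]
    return {asserted, *superclasses(asserted)}
```

Calling `inferred_types("reading_001")` yields Rainfall, Precipitation and MeteorologicalVariable, even though only Rainfall was asserted.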

A knowledge graph (KG) is a graph of data intended to accumulate and convey knowledge of the real world, whose nodes represent entities of interest and whose edges represent potentially different relations between these entities [37]. This graph-based representation can capture concepts, classes, properties, relationships and entity descriptions. ConceptNet [52] is an example of a KG of 1.6 million assertions of commonsense knowledge. A statement such as “cooking food can be fun” can be represented in the graph as
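As an illustration of querying such a graph, the following sketch stores a handful of commonsense statements as (subject, relation, object) triples and answers wildcard pattern queries over them. The relation names imitate ConceptNet's style (IsA, Desires, CapableOf, AtLocation), but the triples themselves and the `match` helper are hypothetical and do not reproduce ConceptNet's data or API.

```python
from typing import Optional

Triple = tuple[str, str, str]

# Hypothetical commonsense triples in a ConceptNet-like style.
KG: set[Triple] = {
    ("dog", "IsA", "animal"),
    ("cat", "IsA", "animal"),
    ("dog", "Desires", "frisbee"),
    ("dog", "CapableOf", "run"),
    ("luggage", "AtLocation", "bus_stop"),
}


def match(s: Optional[str] = None,
          p: Optional[str] = None,
          o: Optional[str] = None) -> list[Triple]:
    """Return the triples matching a (subject, relation, object) pattern.

    None acts as a wildcard; results are sorted for deterministic output.
    """
    return sorted(
        t for t in KG
        if (s is None or t[0] == s)
        and (p is None or t[1] == p)
        and (o is None or t[2] == o)
    )


# Which entities are animals?
print(match(p="IsA", o="animal"))  # [('cat', 'IsA', 'animal'), ('dog', 'IsA', 'animal')]
```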

Finally, some works propose solutions based on the Linked Open Data (LOD), in which all SW technologies can be represented. LOD refers to a set of best practices for publishing and connecting structured data on the Web [11]. LOD relies on documents containing data in RDF (Resource Description Framework) format to make links between arbitrary

This survey aims to capture the role SW technologies such as those defined above play in addressing bias in AI. Specifically, we find research works that use semantics for three high-level tasks:

Bias in AI

We aim to help the reader understand bias in AI through examples that explain why it can occur and where it comes from, as this knowledge is necessary to follow the analysis of the harmful effects and impact of bias in Section 4. Bias can be defined as heterogeneities in data due to their being generated by subgroups of people with their own characteristics and behaviours [56]. A model learned from biased data may lead to unfair and inaccurate predictions. Furthermore, bias can lead to unfairness through systematic errors made by algorithms that produce adverse or undesired outcomes, for example, for a particular group that has been historically disadvantaged [9,62]. Such discriminatory behaviour is particularly concerning since AI systems have been shown to reproduce or even amplify inequalities in society.

Categories of bias attending to the nature of the errors [7]


Table 2 classifies the surveyed papers according to the possible nature of the errors [7]. From a psychological perspective, systematic errors can occur due to the way humans process and interpret information and constitute a cognitive bias.

From a statistical point of view, systematic deviations from the (possibly unknown) real distributions of the variables represented in the data can lead to inaccurate estimations and constitute a statistical bias.
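One simple way to quantify this kind of deviation for a categorical attribute is to compare each group's share in a sample with its share in a reference distribution. The sketch below is a generic measure chosen for illustration, not a method from [7]; the group labels and the 50/50 reference distribution are assumptions.

```python
from collections import Counter


def representation_gap(sample: list[str],
                       reference: dict[str, float]) -> dict[str, float]:
    """Share of each group in the sample minus its share in the reference.

    Negative values signal under-representation, positive values
    over-representation. Groups absent from the reference are ignored.
    """
    counts = Counter(sample)
    n = len(sample)
    return {group: counts[group] / n - share
            for group, share in reference.items()}


# Hypothetical sample of 100 records with a sex attribute.
sample = ["male"] * 70 + ["female"] * 30
gaps = representation_gap(sample, {"female": 0.5, "male": 0.5})
```

Here the female group ends up roughly 0.2 below its reference share, which is the kind of systematic deviation that would propagate into any estimate computed from this sample.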

From a sociological perspective, data may contain existing biases and beliefs that reflect historical and social inequalities, which the AI system may learn. This constitutes what is known as cultural bias.

This categorisation is crucial to reveal how semantics can help with data-dependent (i.e. statistical, cultural) and user-dependent (i.e. cognitive) biases. Another aspect to consider is where in the AI workflow these biases originate; for this, we rely on the framework in [64] for the domain of social media analysis, as it closely reflects general practices in AI. We give only a general overview here because Section 4 discusses the works within a semantics-specific framework, using more concrete, lower-level concepts of bias.

Categories of bias depending on the location in the AI workflow where bias originates [64]

Table 3 shows examples from the surveyed papers. The first critical point of bias is the data origin or source, since any bias existing at the input of an AI system will appear at least in the same way at the output (i.e. the “garbage in – garbage out” principle). This is the most predominant bias origin in the surveyed papers, particularly due to

Data collection is the second step in which bias can appear, and examples of this type found in the surveyed papers include

Data pre-processing is susceptible to bias, in particular in this study, of

Data analysis is the last step covered by this study that can cause bias, specifically at

Our analysis aims to better understand how bias impacts different AI systems and to provide specific methodological examples that can be applied to similar problems in future research. This section reviews the bias assessment, representation and mitigation methodologies that use semantics, following the order in Table 4.

Semantic resources used to address bias in AI


Assessing biases is a fundamental task in analysing and interpreting model behaviours. It can reveal intrinsic biases that are difficult to detect due to the opaque nature of many AI systems [57]. The following works illustrate how semantics can help with this problem.

Bias affecting specific groups of people

KGs can help assess recommendation disparities in user groups that are less active [29] (e.g. economically disadvantaged users). This problem constitutes a
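Assessing a recommendation disparity of this kind usually amounts to computing a quality metric per user group and comparing the groups. The sketch below uses hit rate as the metric and the gap between the best- and worst-served groups as the disparity measure; both choices and the data layout are assumptions for illustration, not necessarily the metrics used in [29].

```python
from statistics import mean


def hit_rate(recommended: list[str], relevant: set[str]) -> float:
    """1.0 if at least one recommended item is relevant, otherwise 0.0."""
    return 1.0 if any(item in relevant for item in recommended) else 0.0


def group_hit_rates(results: dict[str, tuple[list[str], set[str]]],
                    groups: dict[str, str]) -> dict[str, float]:
    """Average hit rate for each user group (e.g. active vs. inactive)."""
    per_group: dict[str, list[float]] = {}
    for user, (recommended, relevant) in results.items():
        per_group.setdefault(groups[user], []).append(hit_rate(recommended, relevant))
    return {group: mean(scores) for group, scores in per_group.items()}


def disparity(rates: dict[str, float]) -> float:
    """Gap between the best- and worst-served groups."""
    return max(rates.values()) - min(rates.values())


# Hypothetical evaluation data: two active and two inactive users.
results = {
    "u1": (["i1", "i2"], {"i2"}),
    "u2": (["i3", "i4"], {"i3"}),
    "u3": (["i1", "i2"], {"i9"}),
    "u4": (["i5", "i6"], {"i6"}),
}
groups = {"u1": "active", "u2": "active", "u3": "inactive", "u4": "inactive"}
rates = group_hit_rates(results, groups)
print(rates, disparity(rates))  # {'active': 1.0, 'inactive': 0.5} 0.5
```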

The under-representation of certain demographic groups was assessed in drug exposure studies. Due to the heterogeneity and lack of metadata in the gene expression databases resulting from these medical studies, it is challenging to examine large-scale differences in the sex representation of the data. This assessment is essential, as women are 50% more likely to suffer from adverse drug effects. In [27], the authors assessed sex bias in public repositories of biological data by mapping existing and inferred metadata (using ML models) to existing medical ontologies, specifically using the Cellosaurus and DrugBank databases to identify cell lines and drugs, respectively. They labelled drug studies across all publicly available samples, using named entity recognition to identify drug mentions in the metadata and normalisation to map each mention to all of its possible names. This analysis generated a new resource of deduplicated and normalised data that allows examination across study platforms. As a result, they found that sex labels are inconsistently reported, with most samples lacking this information. More importantly, they report sex biases in drug data (e.g., female under-representation in studies of nervous system drugs). This study draws attention to the lack of sex-aware analyses and the importance of including sex as a study variable in future analyses.
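The normalise-then-count step can be sketched as follows. The sketch replaces the named entity recognition models and ontology mappings of [27] with a plain dictionary lookup, and the drug names and identifiers in `SYNONYMS` are hypothetical placeholders rather than real DrugBank entries. Each sample's metadata is scanned for known drug names, every mention is mapped to a canonical identifier, and sex labels (with missing values kept as their own category) are tallied per drug.

```python
from collections import Counter, defaultdict
from typing import Optional

# Hypothetical synonym table: each surface form maps to one canonical drug ID.
SYNONYMS = {
    "examplamine": "DRUG:0001",
    "exa-mine": "DRUG:0001",
    "brandex": "DRUG:0001",
    "samplozole": "DRUG:0002",
}


def find_drugs(metadata: str) -> set[str]:
    """Detect known drug names in free-text metadata and normalise them to IDs."""
    text = metadata.lower()
    return {drug_id for name, drug_id in SYNONYMS.items() if name in text}


def sex_counts_per_drug(samples: list[tuple[str, Optional[str]]]) -> dict[str, Counter]:
    """Tally sex labels per canonical drug; unlabelled samples count as 'missing'."""
    counts: dict[str, Counter] = defaultdict(Counter)
    for metadata, sex in samples:
        label = sex if sex in ("female", "male") else "missing"
        for drug_id in find_drugs(metadata):
            counts[drug_id][label] += 1
    return dict(counts)


# Hypothetical sample metadata with (possibly missing) sex labels.
samples = [
    ("Cells treated with Brandex 10uM", "female"),
    ("examplamine exposure, 24h", None),
    ("EXA-MINE dose series", "male"),
    ("control vs samplozole", "male"),
]
print(sex_counts_per_drug(samples))
```

Because three different surface forms collapse onto `DRUG:0001`, its counts aggregate evidence that a name-by-name tally would have split.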

Another use of KGs relevant for this task is to assess disparities in the presentation of news reported by different sources, i.e., with different political leaning [5]. This

Bias affecting individuals

The assessment of polarised web search queries is also essential because users are prone to look for information that reinforces their existing beliefs [18]. Especially when it comes to controversial topics, the

Recently, a research project presented a similar approach to assess confirmation bias and related group phenomena that occur when information is analysed, visualised and disseminated on the Internet (group polarisation [8] and the belief echo chamber [60]). An ontology is the primary data structure for modelling groups and individuals in a network structure [67] and is used as a tool to find similarities and anomalies in the profiles built from the data collected by the system. The authors present a web information system to evaluate such effects, integrating NLP methods into this data structure to identify themes and the sentiment expressed towards them. This architecture makes it possible to track individual and group responses to different events (e.g., COVID restrictions, vaccination) simply by adding new terms to the ontology (e.g., the names of specific vaccines such as Pfizer, Moderna or AstraZeneca) and inferring the sentiment towards them. These results are promising for integrating AI methods for natural language understanding (NLU) into distributed open ecosystems, which is fundamental to understanding these phenomena in the broader societal domain.
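The mechanism of tracking sentiment by simply adding terms to the ontology can be illustrated with a toy example. In this sketch the topic hierarchy (`PARENT`), the four-word sentiment lexicon and the regular-expression tokenisation are all assumptions; the system in [67] integrates proper NLP methods. A post's lexicon score is credited to every ontology term it mentions and to all of that term's ancestors, so extending the ontology with a new term immediately makes it trackable.

```python
import re
from collections import defaultdict

# Hypothetical topic ontology: each term points to its broader topic.
PARENT = {
    "vaccination": "covid_measures",
    "restrictions": "covid_measures",
    "pfizer": "vaccination",
    "moderna": "vaccination",
}

# Toy sentiment lexicon (assumption); a real system would use an NLP model.
LEXICON = {"safe": 1, "great": 1, "bad": -1, "unfair": -1}


def ancestors(term: str, parent: dict[str, str]) -> list[str]:
    """All broader topics of a term, following the parent links upwards."""
    chain = []
    while term in parent:
        term = parent[term]
        chain.append(term)
    return chain


def topic_sentiment(posts: list[str], parent: dict[str, str]) -> dict[str, float]:
    """Mean lexicon score of the posts mentioning each term or its descendants."""
    known = set(parent) | set(parent.values())
    scores: dict[str, list[int]] = defaultdict(list)
    for post in posts:
        tokens = re.findall(r"[a-z]+", post.lower())
        polarity = sum(LEXICON.get(tok, 0) for tok in tokens)
        topics = set()
        for tok in tokens:
            if tok in known:
                topics.update([tok, *ancestors(tok, parent)])
        for topic in topics:
            scores[topic].append(polarity)
    return {topic: sum(v) / len(v) for topic, v in scores.items()}


posts = ["Pfizer is safe", "Moderna is great", "Restrictions are unfair",
         "AstraZeneca is bad"]
before = topic_sentiment(posts, PARENT)
# Tracking a new vaccine only requires adding one term to the ontology.
after = topic_sentiment(posts, {**PARENT, "astrazeneca": "vaccination"})
```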

Bias affecting AI systems

Bias can cause many problems affecting AI systems.

Semantics are used to assess inconsistencies in the predictions of AI systems due to the use of a small, domain-specific corpus for training.

Similar artefacts in datasets are evaluated in pre-trained masked language models, which are increasingly used as factual knowledge bases to extract information from a query string [14]. The task consists of using queries such as “Steve Jobs was born in [MASK]”, where

Example of two dataset artifacts (i.e.

Recently, a new framework for automatic decision-making in the medical domain was proposed to meet the requirements of explainability, robustness and reduced bias in machine learning models [38]. Through a series of experiments addressing three fundamental challenges in medical research, the authors argue that a multimodal, decentralised and explainable infrastructure is needed, in which KGs can play a crucial role. In this second use case, a series of arguments are presented as to why KGs can benefit future human-AI interfaces in this field (e.g., by integrating the characteristics of different medical data modalities). It concludes with the basics of

Training corpora also have limitations due to errors in manual annotations that compromise the reliability of the corresponding AI systems. Subjectivity and errors in human annotations are a significant problem when developing benchmarks for AI systems, so assessing bias in annotation tasks is vital. In [17], background knowledge from the WordNet lexical database is used to support the annotation task when the context, i.e., the set of neighbouring words that provides domain information, is missing. Notably, comparing the precision of two lexicographers on a word sense disambiguation annotation task in a context-agnostic scenario with their precision when WordNet parameters were used to provide context revealed that, in the absence of context, annotations consistently shift towards the most frequent sense of a word. Even though this analysis used few semantic parameters (conceptual and semantic distance, and membership of the dominant concept), its combined machine-versus-human annotation study helps demonstrate the importance of context in human annotation tasks.
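The shift towards the most frequent sense can be measured by comparing, across annotation conditions, the share of annotations that select each word's most frequent sense. The sketch below is a hypothetical illustration: the sense labels and the toy annotations are invented and do not come from [17].

```python
# Hypothetical most-frequent-sense table; the sense labels are invented.
MOST_FREQUENT_SENSE = {"bank": "bank#1", "plant": "plant#1"}


def mfs_rate(annotations: list[tuple[str, str]]) -> float:
    """Share of (word, chosen_sense) annotations that pick the most frequent sense."""
    hits = sum(1 for word, sense in annotations if sense == MOST_FREQUENT_SENSE[word])
    return hits / len(annotations)


# Toy annotations of the same instances without and with context.
without_context = [("bank", "bank#1"), ("bank", "bank#1"),
                   ("plant", "plant#1"), ("plant", "plant#2")]
with_context = [("bank", "bank#2"), ("bank", "bank#1"),
                ("plant", "plant#2"), ("plant", "plant#2")]

shift = mfs_rate(without_context) - mfs_rate(with_context)
print(shift)  # 0.5
```

A positive `shift` indicates that annotators fall back on the dominant sense more often when context is withheld.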

We found three other examples of assessment of

Similarly, bias can arise when user product reviews, blog posts and comments are used to support search engines, recommender systems and market research applications, due to the subjectivity and ambiguity of this content [46]. As with the previous use case, this may compromise the quality and functionality of NLP systems, especially their ability to detect mixed opinions. The authors propose a lexical induction approach, because mapping subjectivity scores to opinion words in the text makes it possible to detect review sentiment independently of individual language use. They use SentiWordNet to obtain these sentiment scores, which serve as additional input features for sentiment analysis. Although the proposed method only exploits limited sense information for each word (the overall score or the value of the first sense) and does not incorporate semantic relations, it shows the value of ontology-based approaches in avoiding human biases arising from the use of machine-learned annotations.
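The two scoring strategies mentioned above (first sense versus an overall score across senses) can be sketched as follows. SentiWordNet assigns each synset a positive and a negative score; here a small hypothetical lexicon with invented values stands in for it, and taking the overall score as the mean across senses is an assumption. The resulting positive and negative totals are the kind of additional features a sentiment classifier could consume, and a text scoring on both sides hints at a mixed opinion.

```python
import re

# Hypothetical SentiWordNet-style lexicon: word -> [(pos, neg), ...] per sense,
# ordered from most to least frequent sense. All values are invented.
LEXICON = {
    "good": [(0.75, 0.0), (0.5, 0.0)],
    "cheap": [(0.25, 0.5), (0.0, 0.625)],
    "great": [(0.625, 0.0)],
}


def word_polarity(word: str, strategy: str = "first") -> float:
    """Polarity (pos - neg) from the first sense, or averaged over all senses."""
    senses = LEXICON.get(word)
    if not senses:
        return 0.0
    if strategy == "first":
        pos, neg = senses[0]
        return pos - neg
    return sum(pos - neg for pos, neg in senses) / len(senses)


def sentiment_features(text: str, strategy: str = "first") -> tuple[float, float]:
    """Total positive and total negative evidence in a text, as two features."""
    tokens = re.findall(r"[a-z]+", text.lower())
    scores = [word_polarity(tok, strategy) for tok in tokens]
    positive = sum(s for s in scores if s > 0)
    negative = -sum(s for s in scores if s < 0)
    return positive, negative


review = "Good screen, great battery, but it feels cheap"
print(sentiment_features(review))          # (1.375, 0.25)
print(sentiment_features(review, "mean"))  # (1.25, 0.4375)
```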

Finally, we have found an illustrative example of how inconsistencies in human-generated data used as ground truth to train automated decision-making systems can compromise the capability and effectiveness of these systems. The use of data-driven neural networks for automated radiology report generation is becoming a critical task in clinical practice [51]. In particular, image captioning approaches trained on medical images and their corresponding reports can significantly improve diagnostic radiology. However, the large volume of images, which places a heavy workload on radiologists, and, in some cases, a lack of experience hinder the generation of these reports. The problem with using previous reports to train automation models is the variability and redundancy of the sentences used to describe the images, especially those describing normal regions. For example, as shown in Fig. 3, the blue text corresponds to the description of all normal image elements, while only the red text indicates the abnormality. Since normal images are already overrepresented in the dataset, these deviations and repetitions aggravate the data imbalance and make sentences describing normal regions predominate in the generated reports. As a result, this bias in the data leads to errors where rare but significant abnormalities are not described.

Example of bias impact in image captioning for automatic radiology report generation when using human-generated data as ground truth for training automated decision-making systems [51].

There are some cases in which making the model’s systematic preferences and possible biases transparent and expressing them in a human-understandable way may be decisive for providing possible directions of improvement [61]. Therefore, in the following section, we present examples of higher-level semantic tasks for bias representation.

Bias affecting specific groups of people

KGs could help to represent systematic preferences that are consistently applied across the examples used as training data [61]. This is the second example of

There are three other examples of

The use of ontologies can be advantageous in improving the representation of minority groups, as shown in the following two examples. In particular, being able to represent differences in how the affect conveyed by an image is expressed and perceived across languages can avoid discrimination by generalist models that only fit a majority language [43]. Language is one of the main characteristics of a culture, so ignoring the context of each language can end up harming entire ethnic groups. In this example, an ontology (Multilingual Visual Sentiment Ontology,

On the other hand, the interpretation of which verbs trigger a given action varies from language to language [34]. The development of an ontology with videos to represent different actions enables identifying groups of verbs inclusive of different languages since videos have annotations in ten languages. The

Bias affecting AI systems

We present a use case that uses a KG to improve the representation of users’ interests beyond the items captured in the training data [20]. This example falls back to the

Bias affecting individuals

As final examples, we present three case studies that use semantics to represent consistent and predictable errors that can compromise how data is used and analysed. This

Semantics to mitigate bias

The development of methods to mitigate bias is essential to prevent low-quality results that often impact communities and make them victims of policy injustice, affect their social perceptions, or disadvantage them in many AI application areas [6]. This section presents work examples that leverage semantic knowledge to counteract the possible adverse effects of biased learning.

Bias affecting specific groups of people

Semantic knowledge can be used to mitigate stereotypical perspectives of marginalised groups that are shown to be learned by automated decision-making systems [6]. This is another example of

Bias affecting individuals

KGs have shown advantages in mitigating biases due to the heuristic way in which humans search the web. We present two use cases in which the structure of KGs can help counteract the

Similarly, the advantage of using a KG in the search interface is addressed in another study that aims to increase the efficiency and quality of, and user satisfaction with, the information obtained from a web search [74]. To this end, the authors developed a KG-based interface prototype that uses the Open Information Extraction system to generate entity-relationship-entity triples from the text. Their qualitative study, based on a post-experiment evaluation, revealed that, compared with general hierarchical tree interfaces, the KG interface reduces the number of times users need to view the source content during exploratory searches. These user-based studies serve to uncover important notions that shape the use of AI systems.
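A KG-based search interface of this kind can be approximated by grouping extracted triples by subject while keeping a pointer to the document each triple came from, so that users can explore relations without reopening the source. The sketch below is hypothetical: the extraction step is assumed to have already produced (subject, relation, object, source) tuples, and no real Open Information Extraction library is called.

```python
from collections import defaultdict

# (subject, relation, object, source_id), as assumed output of an extraction step.
Extraction = tuple[str, str, str, str]


def build_graph(extractions: list[Extraction]) -> dict[str, list[tuple[str, str, str]]]:
    """Group extracted triples by subject, keeping the source of each edge."""
    graph: dict[str, list[tuple[str, str, str]]] = defaultdict(list)
    for subj, rel, obj, source in extractions:
        graph[subj].append((rel, obj, source))
    return dict(graph)


def sources_for(graph: dict[str, list[tuple[str, str, str]]], entity: str) -> set[str]:
    """Documents a user would otherwise need to reopen to see this entity's facts."""
    return {source for _, _, source in graph.get(entity, [])}


# Hypothetical extractions from two retrieved documents.
extractions = [
    ("aspirin", "reduces", "fever", "doc1"),
    ("aspirin", "inhibits", "cox enzymes", "doc2"),
    ("fever", "is a symptom of", "infection", "doc1"),
]
graph = build_graph(extractions)
print(graph["aspirin"])
```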

Bias affecting AI systems

Bias can cause many problems affecting AI systems.

Similar errors can affect the manual annotations often needed to train AI systems. The

The rest of the use cases in this section deal with other limitations regarding how data is used to train AI systems. One of the problems of recommender applications is

Having many features in the recommendation motivates the proposal of an entropy-based method for obtaining only the meaningful historical data of each user. The sparse factorisation approach proposed in [2] facilitates the training process by exploiting the higher expressiveness of the item feature embeddings provided by a KG. Facts and knowledge extracted from DBpedia provide customised recommendation lists, filtering out items with low information gain. Their method incorporates the implicit information provided by the KG into the latent feature space, improving the quality of results on three benchmark datasets compared with other state-of-the-art methods. More importantly, their experimental evaluation shows that this method improves item diversity, which is critical for measuring popularity bias mitigation.
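Item diversity in this sense is often quantified with catalogue coverage and with the Gini coefficient of item exposure across recommendation lists, where a lower Gini value means exposure is spread more evenly rather than concentrated on popular items. The sketch below implements these two common metrics; they are an illustrative choice and not necessarily the measures reported in [2].

```python
from collections import Counter


def coverage(rec_lists: list[list[str]], catalogue: set[str]) -> float:
    """Fraction of the catalogue that appears in at least one recommendation list."""
    recommended = {item for recs in rec_lists for item in recs}
    return len(recommended & catalogue) / len(catalogue)


def exposure_gini(rec_lists: list[list[str]], catalogue: set[str]) -> float:
    """Gini coefficient of how often each catalogue item is recommended.

    0 means perfectly even exposure; values near 1 mean a few items get it all.
    """
    counts = Counter(item for recs in rec_lists for item in recs)
    x = sorted(counts[item] for item in catalogue)  # ascending exposure counts
    n, total = len(x), sum(x)
    if total == 0:
        return 0.0
    weighted = sum((rank + 1) * value for rank, value in enumerate(x))
    return 2 * weighted / (n * total) - (n + 1) / n


catalogue = {"a", "b", "c", "d"}
popular = [["a", "b"], ["a", "b"], ["a", "b"]]
diverse = [["a", "b"], ["c", "d"], ["a", "c"]]
print(coverage(popular, catalogue), exposure_gini(popular, catalogue))  # 0.5 0.5
print(coverage(diverse, catalogue), exposure_gini(diverse, catalogue))
```

The second set of lists covers the whole catalogue and has a markedly lower Gini value, which is the pattern one would hope to see after popularity bias mitigation.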

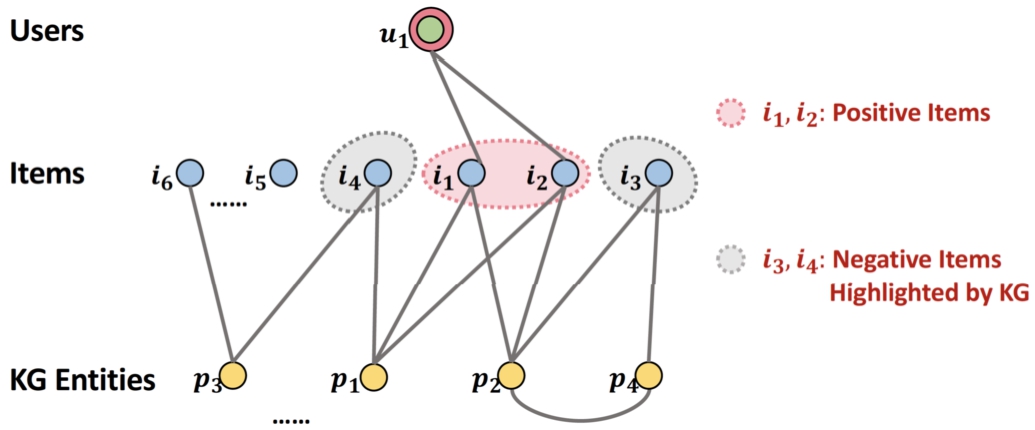

On the other hand, another limitation imposed by the platforms that collect information for recommendations is the small number of negative samples in the data, as most recorded interactions are positive feedback (clicks, purchases) [85]. This constitutes non-symmetric

Example of bias due to non-symmetric missing data in a Recommender System (RS) that only collects positive samples [85].
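A common baseline for positive-only feedback, shown here purely as an illustration and not as the approach of [85], is to treat a random subset of each user's unobserved items as negatives. The sketch makes the underlying assumption explicit: every unobserved item is presumed uninteresting, which is exactly the kind of asymmetric missing-data assumption that can itself introduce bias.

```python
import random


def sample_negatives(positives: dict[str, set[str]], catalogue: list[str],
                     k: int, seed: int = 0) -> dict[str, list[str]]:
    """Draw up to k unobserved items per user and treat them as negatives.

    Assumption made explicit: an unobserved item is presumed uninteresting,
    although it may simply never have been shown to the user.
    """
    rng = random.Random(seed)  # seeded for reproducibility
    negatives = {}
    for user, observed in positives.items():
        candidates = [item for item in catalogue if item not in observed]
        negatives[user] = rng.sample(candidates, min(k, len(candidates)))
    return negatives


catalogue = ["i1", "i2", "i3", "i4", "i5"]
positives = {"u1": {"i1", "i2"}, "u2": {"i1", "i2", "i3", "i4"}}
print(sample_negatives(positives, catalogue, k=2))
```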

Similarly, bias towards majority samples is a major challenge in current natural language generation (NLG) architectures. As a result, current neural approaches have difficulty generating coherent, grammatically correct text from structured data. The “divide-and-conquer” approach proposed in [24] is based on inducing a hierarchy from a corpus of unlabelled examples using a KG of entity and relation embeddings. The notion of similarity is used to show only the most relevant examples during training to avoid bias due to imbalances in the training data. Specifically, they apply this idea to two datasets containing linked data and textual descriptions (biography paragraphs with semantic mappings to Wikipedia infoboxes). Applying similarity of embedded inputs generates effective input-output pairs that consistently outperform competitive baseline approaches. Of particular interest in this study is partitioning the dataset according to the semantic and lexical similarity of the entries for training specialised models for each particular similarity group. This general idea can be transferred to other domains to address problems in data sparsity, such as image captioning or question answering applications.
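Partitioning training inputs by semantic similarity can be sketched with a nearest-prototype rule over embedding vectors. This is a simplification: [24] induces a hierarchy from unlabelled examples, whereas the sketch assigns each input to the most cosine-similar of a fixed set of hypothetical prototypes, yielding one group per specialised model. Zero vectors are not handled.

```python
import math


def cosine(u: list[float], v: list[float]) -> float:
    """Cosine similarity; assumes neither vector is all zeros."""
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (math.hypot(*u) * math.hypot(*v))


def partition(inputs: dict[str, list[float]],
              prototypes: dict[str, list[float]]) -> dict[str, list[str]]:
    """Assign each input embedding to its most similar prototype group."""
    groups: dict[str, list[str]] = {name: [] for name in prototypes}
    for key, vector in inputs.items():
        best = max(prototypes, key=lambda name: cosine(vector, prototypes[name]))
        groups[best].append(key)
    return groups


# Hypothetical 2-d embeddings of structured inputs and two group prototypes.
prototypes = {"person": [1.0, 0.0], "place": [0.0, 1.0]}
inputs = {"x1": [0.9, 0.1], "x2": [0.2, 0.8], "x3": [1.0, 0.3]}
print(partition(inputs, prototypes))  # {'person': ['x1', 'x3'], 'place': ['x2']}
```

Each resulting group could then train its own specialised generator, so that minority input types are not drowned out by the majority.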

The following three examples address the problem of

Second, a similar approach has been applied in an image captioning framework to allow implicit image relationships that may be relevant to describing the image to be captured in the caption. For example, if the image shows a “woman standing with her luggage” next to a sign, then it makes sense to speculate that she is waiting for the bus [39]. The ConceptNet commonsense knowledge base helps discover these relationships, so a similar strategy is incorporated into the caption generator output to increase the likelihood of latent concepts related to specific objects in the image. This example leverages semantic knowledge to allow the system to generalise beyond the training examples.
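The idea of raising the likelihood of latent concepts can be illustrated by re-weighting a caption decoder's word distribution. In the sketch below, the `RELATED` table stands in for ConceptNet lookups, and the multiplicative boost followed by renormalisation is an assumption, not necessarily the mechanism of [39].

```python
# Hypothetical commonsense links from detected objects to latent concepts,
# standing in for ConceptNet lookups.
RELATED = {
    "luggage": {"travel", "waiting", "bus"},
    "sign": {"bus", "street"},
}


def boost_latent_concepts(word_probs: dict[str, float], detected: list[str],
                          factor: float = 2.0) -> dict[str, float]:
    """Multiply the probability of concepts related to detected objects, then renormalise."""
    latent = set().union(*(RELATED.get(obj, set()) for obj in detected))
    scores = {w: p * (factor if w in latent else 1.0) for w, p in word_probs.items()}
    total = sum(scores.values())
    return {w: s / total for w, s in scores.items()}


# Toy next-word distribution from a caption decoder.
word_probs = {"standing": 0.5, "bus": 0.25, "dog": 0.25}
boosted = boost_latent_concepts(word_probs, ["luggage", "sign"])
print(boosted)
```

After boosting, “bus” becomes as likely as “standing” even though no bus is visible, which is how commonsense links let a caption mention an implied concept.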

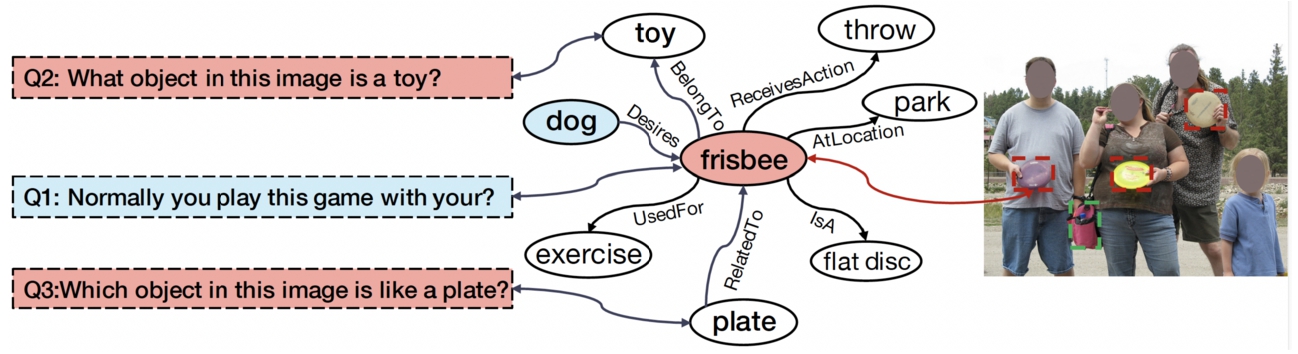

Third, the incorporation of external knowledge can help mitigate the errors of systems that respond to questions related to an input image when dealing with answers that did not appear during their training phase or that are not contained within the image scene [21]. In real-world contexts, most techniques fail to address answers if they are not within the image content and require external knowledge. For example, Question 1 (Q1) in Fig. 5 requires such external knowledge, as responses such as “dog” (an entity that desires the frisbee) cannot be inferred from the image. A KG allows an understanding of the open-world scene beyond what is captured in the image. The framework incorporates prior knowledge to guide the alignment process between the feature embeddings of the image-question pair and the corresponding target response. Using a pre-existing subset of DBpedia, ConceptNet and WebChild, this knowledge component represents the possible set of answers and concepts to enrich the relations between them. Their quantitative results and their comparison with other current methods support the exploration of knowledge-based systems to overcome overfitting errors.

Example of answer bias in a Visual Question Answering (VQA) system when dealing with concepts not seen during training or in the image scene [21].
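The role of external knowledge in answering Q1 can be sketched as a lookup that returns entities linked to the question's target by a given relation, preferring entities visible in the scene but falling back to knowledge-only answers. The triples are hypothetical, the question is assumed to be already parsed into a (relation, target) pair, and [21] aligns learned embeddings rather than performing symbolic lookups.

```python
# Hypothetical background knowledge in (subject, relation, object) form.
KG = [
    ("dog", "Desires", "frisbee"),
    ("child", "Desires", "frisbee"),
    ("frisbee", "IsA", "toy"),
]


def answer(relation: str, target: str, scene: set[str]) -> list[str]:
    """Entities linked to target by relation, preferring those visible in the scene.

    If none of the candidates appear in the image, fall back to knowledge-only
    answers instead of restricting the answer space to detected objects.
    """
    candidates = sorted(s for s, r, o in KG if r == relation and o == target)
    in_scene = [c for c in candidates if c in scene]
    return in_scene or candidates


# "What desires the frisbee?" for an image showing only a frisbee on grass.
print(answer("Desires", "frisbee", {"frisbee", "grass"}))  # ['child', 'dog']
print(answer("Desires", "frisbee", {"frisbee", "dog"}))    # ['dog']
```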

Finally, two case studies have used semantics to mitigate problems in query formulation caused by expressiveness limited to a small corpus, which leads to irrelevant and incorrect results. In image retrieval based on the semantic representation of scene graphs [19], WordNet and ConceptNet can be used to increase the precision of searches that include more complex concepts, for example, when the system cannot otherwise infer that the entities “dog” and “cat” are relevant to the query “animals running on grass”. Their approach introduces a set of rules to find images with fuzzy descriptions and to infer the names of the concepts they express, which helps with more complex searches and enhances semantic and knowledge-based methods for image retrieval.
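Hypernym-based matching of this kind can be sketched as follows: an image matches a query concept if one of its labels is that concept or a descendant of it in an is-a hierarchy. The hierarchy below is a hypothetical, heavily shortened stand-in for WordNet's (actual WordNet paths from dog to animal are longer), the verb “running” is ignored, and the rules of [19] are more elaborate.

```python
from typing import Optional

# Hypothetical, heavily shortened is-a hierarchy in the spirit of WordNet.
HYPERNYM = {
    "dog": "canine", "canine": "carnivore",
    "cat": "feline", "feline": "carnivore",
    "carnivore": "animal", "grass": "plant",
}


def is_a(term: str, concept: str) -> bool:
    """True if term equals concept or concept is one of its transitive hypernyms."""
    current: Optional[str] = term
    while current is not None:
        if current == concept:
            return True
        current = HYPERNYM.get(current)
    return False


def retrieve(query: list[str], image_labels: dict[str, set[str]]) -> list[str]:
    """Images whose labels cover every query concept via the is-a hierarchy."""
    return sorted(
        image for image, labels in image_labels.items()
        if all(any(is_a(label, concept) for label in labels) for concept in query)
    )


images = {"img1": {"dog", "grass"}, "img2": {"cat", "sofa"}, "img3": {"cat", "grass"}}
# "animals running on grass", with the verb ignored in this sketch.
print(retrieve(["animal", "grass"], images))  # ['img1', 'img3']
```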

In order to reduce the number of irrelevant results of a web crawler to retrieve social media information, the development of a domain ontology is proposed [76]. Specifically, the Travel&Tour ontology is developed using the Protege-OWL editor to model the specific domain of travel and tourism. The aim is to enrich the content of social media data with the properties and relations of the ontology to improve context-specific searches and take advantage of the domain knowledge provided by this type of data. For example, a search for an expedition-type tourist destination in South America (

Full taxonomy of semantic tasks and bias in AI. Abbrv.: Recommender System (RS), Information Retrieval (IR), Information Extraction (IE), Natural Language Understanding (NLU), Natural Language Generation (NLG), Visual Question Answering (VQA), Text Classification (Text Clf.), Hate Speech detection (HS), Sentiment Analysis (SA), Word Sense Disambiguation (WSD), Music Search and Recommendation (Mus. S/R), Clustering (Clus.), Image Retrieval (Im. R), Image Captioning (Im. C), Image Sentiment Analysis (Im. SA), Intelligence Activity (IA), Content based Filtering (Cnt. F), Collaborative based Filtering (Col. F), Knowledge-based Recommender (K-based R), Scene Graph (SG), Search Engine (SE), Natural Language Processing (NLP), Machine Learning (ML), Computing (Comp.), Linked Open Data (LOD), Lexical Resource (Lexical R), Knowledge Graph (KG)

This section highlights the main findings whereby semantics can address bias in AI systems. In addition, it outlines the opportunities for contributions and challenges for further developments in ethical AI.

Major findings

We summarise some conclusions from the use case analysis following Table 5. We highlight the main issues and applications where semantics has helped and the tasks for which each semantic technology is most valuable. In particular, we focus our discussion along the following lines:

The use of semantics to address bias in AI is on the rise, particularly in approaches for mitigating, representing and assessing bias.

The most researched application areas for biased AI systems using semantics are recommender and search systems and NLP applications.

KGs are primarily used for bias mitigation, whereas ontologies are mainly used to represent bias, and lexical resources and KGs to assess it.

Incorporating formal knowledge representations into systems contributes to better generalisation, a fairer balance between bias and accuracy, and more robust methods. There is significant use of KGs to address these

Approaches addressing sociological and psychological challenges have used a more varied range of semantic resources. On the one hand, minority ethnic groups are often underrepresented relative to the general population, which constitutes one of the main

On the other hand, many AI systems rely on human annotations to learn how to make future predictions, which in many cases compromises their reliability and truthfulness. Given that human annotations are often prone to subjectivity, divergent interpretation and insufficient knowledge, this reliance constitutes one of the major

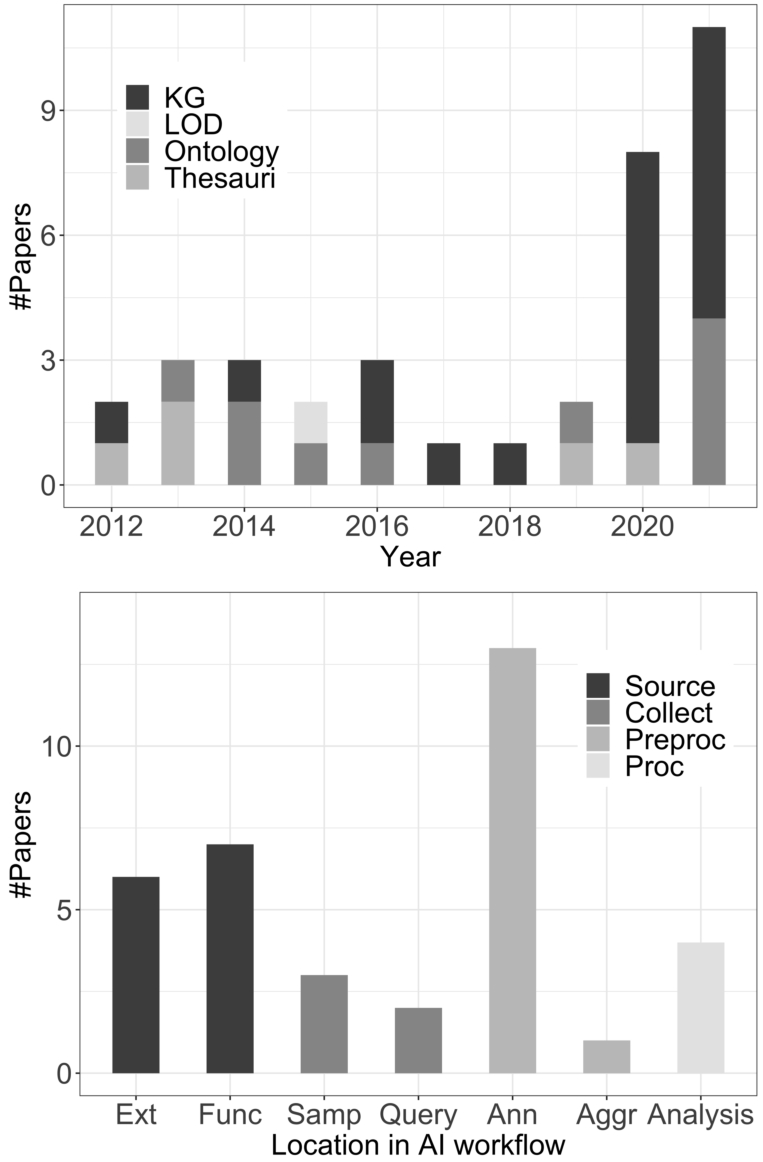

Semantic web technologies used in this study (top). Categories depending on where in the AI workflow bias originates (bottom). Abbreviations: LOD (linked open data), KG (knowledge graph), source (bias at source), collect (bias at collection), preproc (data pre-processing), proc (data analysis).

Based on our major findings, we discuss the opportunities for semantics in order to clarify its connection to, and future contribution to, common AI bias methods. Specifically, we argue that:

KGs are likely to become increasingly dominant in AI bias research, given their wider scope and potential value in assessing and mitigating bias in various domains.

The role of semantics in addressing bias related to the collection and annotation of data is likely to become a significant research direction in the near future.

Semantic techniques are likely to play a bigger role in enabling and enforcing fairness, explainability, and data pre-processing (including data augmentation, enrichment, and correction techniques).

Of all the semantic resources analysed in this work, KGs are particularly prominent in the last two years (Fig. 6, top). We therefore expect their use to increase in the coming years. In particular, ConceptNet [19,39,41,88], DBpedia [20,65] and Wikidata [54,61] were the most frequently used KGs in the studies we reviewed.

Bias mitigation approaches were the most common over our study period. As the lower part of Fig. 6 shows, semantics can address bias at various stages of the AI workflow, especially bias arising from data annotation. The problem lies partly in human annotators’ errors when processing information, but crucially in the inherent limitations of training AI systems on annotated corpora. This is general practice in AI, but it leads to systems whose capacity is limited to the knowledge captured in a specific dataset.

Semantics shows great potential to contribute to several open lines of research on bias in AI.

We also emphasise the opportunities of semantics in future

We discuss the potential of semantics for developing data pre-processing techniques. We see an intersection of the area of knowledge representation with

Furthermore,

Finally, we discuss the opportunities of

Open issues and challenges

This final part of the discussion highlights the challenges and open issues that we believe future research on AI bias should address.

Generally, studies use two types of evaluation. User-based evaluation relies on user participation in the system through experimental or observational methods [34,54,74]. The other, baseline assessment, is a common practice for tracking the progress of AI systems: approaches are compared on benchmark datasets using specific algorithmic metrics.

Most works that address bias evaluate their approaches on downstream tasks. We found works using metrics common in recommendation and retrieval applications (ranking scores [20,21,41,85]) and in NLP (textual similarity metrics [24,39,51], or general performance in multimodal [43] and text classification tasks [3,46,57,61,67,88]). This reveals the need to develop evaluation methods and metrics specific to bias, as an improvement in model performance does not in general imply that the algorithm is unbiased. The possible trade-off between overall performance and bias is an important topic of study in the bias literature [86]. However, only a few previous studies have considered formal definitions of fairness, for example, to ensure the quality and diversity of recommendations for individuals from disadvantaged groups [29] and for underrepresented items [2], or to ensure that individuals from specific demographic groups are treated fairly by the system [6].
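To illustrate why overall performance alone says little about bias, the sketch below computes accuracy alongside two widely used group fairness definitions, demographic parity and equal opportunity. These are generic textbook definitions chosen for illustration; they are not necessarily the formal definitions adopted in [29], [2] or [6], and the predictions and group labels are hypothetical toy data.

```python
def rate(values):
    """Mean of a list of 0/1 values; 0.0 for an empty list (a simplifying choice)."""
    return sum(values) / len(values) if values else 0.0

def accuracy(y_true, y_pred):
    return rate([int(t == p) for t, p in zip(y_true, y_pred)])

def demographic_parity_diff(y_pred, groups, a, b):
    """|P(Y_hat = 1 | G = a) - P(Y_hat = 1 | G = b)|."""
    preds_a = [p for p, g in zip(y_pred, groups) if g == a]
    preds_b = [p for p, g in zip(y_pred, groups) if g == b]
    return abs(rate(preds_a) - rate(preds_b))

def equal_opportunity_diff(y_true, y_pred, groups, a, b):
    """Absolute gap in true-positive rates, P(Y_hat = 1 | Y = 1, G = g), between a and b."""
    def tpr(group):
        return rate([p for t, p, g in zip(y_true, y_pred, groups) if g == group and t == 1])
    return abs(tpr(a) - tpr(b))

# Hypothetical toy predictions: six members of group "A", four of group "B".
Y_TRUE = [1, 1, 1, 0, 0, 0, 1, 1, 0, 0]
Y_PRED = [1, 1, 1, 0, 0, 0, 1, 0, 0, 0]
GROUPS = ["A"] * 6 + ["B"] * 4

print(accuracy(Y_TRUE, Y_PRED))                                  # 0.9
print(demographic_parity_diff(Y_PRED, GROUPS, "A", "B"))         # 0.25
print(equal_opportunity_diff(Y_TRUE, Y_PRED, GROUPS, "A", "B"))  # 0.5
```

In this toy case the classifier is 90% accurate, yet qualified members of group “B” are recognised only half as often as those of group “A”; a single performance score hides that gap.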

There is an ongoing debate about which metrics should be used to benchmark systems that address bias. In many cases, evaluation frameworks account for demographic information about the individuals or groups affected by AI models. However, they should also account for forms of bias in existing models beyond the social categories conventionally treated as protected attributes [10]. Moreover, these methods for measuring fairness can only reduce discrepancies in the characteristics captured in the data (the “observed” space) [28]. While these characteristics may be relevant for prediction, they may not fully capture the characteristics that served as the basis for decision-making (the “construct” space), leading to the impossibility of a

Forthcoming semantics-based methods to address bias call for a more critical evaluation that considers bias in the context of each particular application.

Secondly, semantic resources cannot be assumed to be free of bias.

The concept discussed in [25] of a

Previous works shed light on the

Demographic bias also propagates into automatic systems that generate KGs. Named entity recognition systems used for KG construction have shown systematic exclusion in detecting entities related to specific demographic categories, such as gender or ethnicity, for example, names associated with Black women [59]. Gender disparities can also arise in neural relation extraction systems when extracting specific links between entities (e.g., occupation [32]). As a result, bias and prejudice against vulnerable demographic groups propagate into downstream applications. Harmful associations of specific professions with particular gender, religion, ethnicity and nationality groups, such as men being more likely to be bankers and women to be homemakers, can be found in embeddings extracted from commonly used KGs (Wikidata, Freebase) [26]. Nevertheless, some works address how data representation disparities affect specific demographic groups. One data augmentation method adds new samples to a KG to balance facts concerning specific sensitive attributes (gender differences in occupations) [69]. This approach effectively mitigates bias in the resulting embeddings from DBpedia and Wikidata. This example stresses the importance of being aware of, and accounting for, the bias that may arise from applying semantic resources.
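The general idea of augmenting a KG so that a sensitive attribute is balanced per occupation can be sketched as below. This is a deliberately naive illustration under stated assumptions, not the method of [69]: triples are plain `(subject, predicate, object)` tuples, the predicate names `"gender"` and `"occupation"` and the `synthetic_...` entity naming scheme are hypothetical, and the attribute values to balance are passed in as a parameter.

```python
from collections import Counter

def balance_occupation_gender(triples, genders=("female", "male")):
    """Add synthetic person entities so each occupation has equal counts per gender value.

    triples: list of (subject, predicate, object) tuples using the hypothetical
    predicates "gender" and "occupation". Returns a new list; the input is not modified.
    """
    gender_of = {s: o for s, p, o in triples if p == "gender"}
    # Count (occupation, gender) pairs; people without a gender fact map to None.
    counts = Counter((o, gender_of.get(s)) for s, p, o in triples if p == "occupation")
    occupations = sorted({occ for occ, _ in counts})
    added = []
    for occ in occupations:
        target = max(counts[(occ, g)] for g in genders)
        for g in genders:
            for i in range(target - counts[(occ, g)]):
                ent = f"synthetic_{occ}_{g}_{i}"  # hypothetical naming scheme
                added.append((ent, "gender", g))
                added.append((ent, "occupation", occ))
    return triples + added

# Hypothetical toy KG with a skewed occupation-gender distribution.
KG = [
    ("p1", "gender", "male"), ("p1", "occupation", "banker"),
    ("p2", "gender", "male"), ("p2", "occupation", "banker"),
    ("p3", "gender", "female"), ("p3", "occupation", "banker"),
    ("p4", "gender", "female"), ("p4", "occupation", "homemaker"),
]

for triple in balance_occupation_gender(KG)[len(KG):]:
    print(triple)
```

Running it adds one synthetic female banker and one synthetic male homemaker. Embeddings trained on the augmented graph then see both genders equally often with each occupation, which is the intuition behind balancing facts before learning representations.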

Beyond the lack of representation, other biases assessed in particular semantic resources stem mainly from low coverage and noise. Content disparities may exist across languages. For example, to address

Statistical methods can be used to estimate the number of facts needed for relations to be representative of the real world [78]. Specifically, a method that calculates a lower bound for different relations found that at least 46 million facts are missing from DBpedia before reasonable conclusions can be drawn from it. Many of these missing entities may be due to the limited set of type classes covered by DBpedia’s ontology, which is used to extract information automatically from Wikipedia’s infoboxes; as a result, only a small subset is mapped to the graph [63]. Descriptive data methods are potential tools to assess these coverage problems, as shown in the analysis of missing data in specific languages in Wikidata [16].
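A simple descriptive measure of language coverage is the share of entities that carry a label in each language. The sketch below is only an illustration of that kind of analysis, not the procedure of [16]: entity identifiers, label strings and the chosen languages are hypothetical toy data, and real Wikidata access (dumps or SPARQL) is left out.

```python
def label_coverage(entities, languages):
    """Fraction of entities that have a label in each requested language.

    entities: mapping entity id -> {language code: label}.
    """
    total = len(entities)
    if total == 0:
        return {lang: 0.0 for lang in languages}
    return {
        lang: sum(1 for labels in entities.values() if lang in labels) / total
        for lang in languages
    }

# Hypothetical toy entities with multilingual labels.
ENTITIES = {
    "E1": {"en": "river", "es": "río", "de": "Fluss"},
    "E2": {"en": "mountain", "es": "montaña"},
    "E3": {"en": "lake"},
    "E4": {"en": "village", "de": "Dorf"},
}

print(label_coverage(ENTITIES, ["en", "es", "de", "sw"]))
# {'en': 1.0, 'es': 0.5, 'de': 0.5, 'sw': 0.0}
```

Even this crude statistic makes coverage gaps visible: a resource that looks complete in English may be almost empty in another language, which would propagate into any system that relies on it for that language.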

On the other hand,

Future semantics-based approaches to address bias in AI should ensure sufficient demographic representation of the people affected by the system and sufficient coverage of the application domain. Additionally, they should use such semantic information in realistic settings that account for noise, mainly due to redundant facts in the captured knowledge.

Consequently, it is imperative to increase transparency and explainability by publishing not only the source and currency of the data used to generate semantic resources [87] but also the methods used to construct them. This information is especially crucial in enterprise settings to ensure the integration of SW technologies into techniques that address bias in AI.

Several factors that introduce bias into the development of ontologies have been studied [45]. Specific philosophical views on whether an ontology should represent or interpret reality, or on its purpose, constitute a bias arising from explicit choices. The same is true when capturing insights from competing scientific theories or when economic interests are at stake in deciding which domains deserve more attention. Other factors may propagate bias implicitly, such as specific levels of granularity, language, or underlying socio-cultural, political and religious motivations. Some works address these limitations, e.g., by defining the scope of an ontology from the literature in a way that is less biased towards the selection of particular experts [36], or by comparing the content coverage of the ontology with a target domain [55]. These examples show the importance of raising awareness of the possible ethical implications of using these knowledge resources.

Similar problems affect the creation of general-purpose KGs, such as DBpedia, and domain-specific KGs, such as GeoNames. Increasingly, KGs are being integrated into search and recommendation systems to provide highly personalised content. The problems involved in creating such personalised KGs can be even more detrimental than in more conventional methods [33]. Specifically, this representation of users risks being biased towards specific aspects depending on the data source used to collect information; e.g., behaviour on social networks differs from conversations in forums. In addition, timely events affect the type of information shared, e.g., during elections or pandemics. This bias can compromise user satisfaction and ultimately aggravate the echo chamber phenomenon. As semantic technologies evolve and are applied in new fields, it is important to be aware of the new issues their use raises.

The ethical implications of making particular decisions and selecting particular sources of information during the development of SW technologies require careful consideration when establishing the grounds for their application in techniques to address bias.

In conclusion, we identify four main challenges posed by bias and prejudice in AI systems. First, we need to address the lack of data in unforeseen situations, i.e., to shift from controlled to open environments. Second, we need to develop a world model that would enable general-purpose AI. Third, we need to improve human-machine communication so that AI can act as a collaborative partner. Finally, we need to establish appropriate trade-offs between conflicting criteria so that these systems can be applied in a broader range of applications. Integrating domain knowledge with data-driven machine learning models in a hybrid approach is key to addressing the identified challenges of

This survey shows the applicability of semantics to address bias in AI. Starting from over a thousand initial search results, we followed a systematic approach to select and analyse 34 use case studies that use formal knowledge representations (lexical resources, ontologies, knowledge graphs, or linked data) to assess, represent, or mitigate bias. We provide a broad understanding and categorisation of bias, discussing the harms associated with bias and the impact it can have on individuals, groups, and AI systems.

Our findings show that semantics has helped in many AI applications, including information retrieval, recommender systems, and numerous natural language processing tasks. Given the increasing use of semantics in recent years, particularly KGs, we conclude that semantics could primarily support fairness, explainability, and data pre-processing.

We identify further challenges in AI bias research for the SW and AI communities. Chief among them is the need to develop more robust bias evaluation metrics that go beyond the established sensitive attributes captured by dataset features, since these may not capture all the information needed to build fair AI systems. We also discuss considerations necessary before applying SW technologies to address AI bias, such as the underrepresentation of specific demographic groups, low application coverage, noise, and data source selection bias when building these technologies.

This paper positions the work of the SW community in the algorithmic bias context and analyses the intersection of both areas to assist future work in identifying and nurturing the benefits of these technologies, and using them responsibly. Bias in AI is an urgent issue because it compromises the applicability of automated systems in society, and semantics has enormous potential to help give meaning to the data they use.

Acknowledgements

This work has received funding from the European Union’s Horizon 2020 research and innovation programme under Marie Sklodowska-Curie Actions (grant agreement number 860630) for the project “NoBIAS – Artificial Intelligence without Bias”. This work reflects only the authors’ views and the European Research Executive Agency (REA) is not responsible for any use that may be made of the information it contains.