Abstract

There is a large number of datasets published as Linked (Open) Data (LOD/LD). At the same time, there is also a multitude of tools for publication of LD. However, potential LD consumers still have difficulty discovering, accessing and exploiting LD. This is because compared to consumption of traditional data formats such as XML and CSV files, there is a distinct lack of tools for consumption of LD. The promoters of LD use the well-known 5-star Open Data deployment scheme to suggest that consumption of LD is a better experience once the consumer knows RDF and related technologies. This suggestion, however, falls short when the consumers search for an appropriate tooling support for LD consumption. In this paper we define a LD consumption process. Based on this process and current literature, we define a set of 34 requirements a hypothetical Linked Data Consumption Platform (LDCP) should ideally fulfill. We cover those requirements with a set of 94 evaluation criteria. We survey 110 tools identified as potential candidates for an LDCP, eliminating them in 3 rounds until 16 candidates for remain. We evaluate the 16 candidates using our 94 criteria. Based on this evaluation we show which parts of the LD consumption process are covered by the 16 candidates. Finally, we identify 8 tools which satisfy our requirements on being a LDCP. We also show that there are important LD consumption steps which are not sufficiently covered by existing tools. The authors of LDCP implementations may use this survey to decide about directions of future development of their tools. LD experts may use it to see the level of support of the state of the art technologies in existing tools. Non-LD experts may use it to choose a tool which supports their LD processing needs without requiring them to have expert knowledge of the technologies. The paper can also be used as an introductory text to LD consumption.

Introduction

The openness of data is often measured using the now widely accepted and promoted 5-star Open Data deployment scheme introduced by Sir Tim Berners-Lee.1

make your stuff available on the Web (whatever format) under an open license

make it available as structured data (e.g., Excel instead of image scan of a table)

make it available in a non-proprietary open format (e.g., CSV instead of Excel)

use URIs to denote things, so that people can point at your stuff

link your data to other data to provide context

The last star is also denoted as Linked Open Data (LD/LOD). More and more data publishers are convinced that the additional effort put into the last 2 stars, i.e. LD in RDF as opposed to CSV files, will bring the promised benefits to the users of their data. The publishers rightfully expect that the users will appreciate the 5-star data much more than, e.g., the 3-star CSV files given that the proper publication of 5-star data is considerably harder and more costly to achieve. In particular, they expect that the users will appreciate benefits such as better described and understandable data and its schema, safer data integration thanks to the global character of the IRIs and shared vocabularies, better reuse of tools, more context thanks to the links and the ability to (re)use only parts of the data.

Open Data users are familiar with 3-star publication formats and principles but it is hard to encourage them to use 5-star data. Often they do not know LD technologies, most notably the RDF data model, its serialization formats and the SPARQL query language. They refuse to learn them because they do not see the difference between 3-star and 5-star representations. For example, our initiative

There are also other resources reporting that learning LD technologies is a problem. For example, [117] describes a toolset for accessing LD by users without the knowledge of LD technologies. A recent blog post

Checked on 09.03.2018.

In this paper we do not aim at surveying and evaluating the problems of data availability, performance and quality as these are separate topics on their own. We are rather interested in the question of whether there are software tools or platforms available which would support Open Data users, both with and without expert LD knowledge, in consuming LD. It is not so important whether such platform is a single software tool or a set of compatible and reusable software tools integrated together. The need for building such a platform is also identified in [117].

We call such a hypothetical platform a

It is able to load a given LD dataset or its part.

Its user interface does not presume the knowledge of LD technologies so that users without such knowledge can use the tool. Most notably this includes the knowledge of the RDF data model, its serializations and the SPARQL query language.

It provides some non-RDF output the users can further work with. The output can be a visualization or a machine-readable output in a non-RDF format, e.g. a CSV file.

In the first part of the paper, we describe the LD consumption process and we identify requirements which specify how individual activities in the LD consumption process could be supported by an LDCP. The individual requirements are identified either based on existing W3C Recommendations, or based on research areas and approaches published in recent scientific literature related to some parts of the LD consumption process. Therefore, the individual proposed requirements are not novel. For each requirement we define a set of criteria to be used to decide whether a given software tool fulfills the requirement or not. In total, the paper presents 34 requirements covered by 94 evaluation criteria. The requirements and their evaluation criteria constitute a framework we use to evaluate the tools.

In the second part of the paper we present our contribution – a survey of existing software tools which can be used as LDCPs. To identify LDCP candidates we do a systematic review of recent scientific literature. We search for papers which describe software tools for LD consumption, visualization, discovery, exploration, analysis or processing. For each candidate we search for its on-line instance or demo or we install our own instance and we try to load our testing LD datasets to the instance. If we are successful the candidate is selected for further evaluation. As a result, we select 16 software tools. Each tool is evaluated using the whole set of criteria to determine the fulfilled requirements introduced in the first part of the paper. The individual requirements are neither disqualifying nor intended to select the best tool for LD consumption. They represent different LD consumption features. We show how each considered tool supports these features.

We distinguish two kinds of users in the evaluation. First, there are 3-star data consumers who are not familiar with LD technologies. These users initially motivated our work. We call them

Based on the evaluation, we show tools which are LDCPs – either in the minimal sense specified above or with some additional features represented by our requirements. Moreover, we show tools which offer something relevant for LD consumption in terms of our requirements even though they provide only RDF output. These tools do not fulfill condition 3 of the minimal LDCP defined above. However, their RDF output can be used as input for any LDCP and, therefore, they can be integrated with any LDCP and extend its LD consumption features.

Let us also note that the evaluation requirements are not defined by non-LD experts. They are LD expert requirements based on the current state of the art in the field of LD consumption. Our evaluation shows how the current state of the art in different parts of the LD consumption process is reflected in the 16 evaluated tools in a way usable by non-LD experts or at least by LD experts. LD experts will find interesting the details of the evaluation of the individual requirements or even the individual criteria. Moreover, we also aggregate the evaluation results to show to which extent the evaluated tools cover different parts of the LD consumption process. This result will help non-LD experts in selecting the tool they can use to consume LD depending on their specific needs.

This paper is based on our introductory study of the problem [74]. Since then, we have better defined requirements, better defined survey methodology and we survey considerably more tools which are also more recent, which we present here.

This paper is structured as follows. In Section 2 we provide a motivating example of a user scenario in which journalists want to consume LD and expect to have a platform that will enable them to enjoy the promised LD benefits. In Section 3 we describe our evaluation methodology. In Section 4 we define the LD consumption process, describe the identified requirements and cover them with evaluation criteria. In Section 5 we identify existing tools and evaluate them using the evaluation criteria. In Section 6 we survey related studies of LD consumption and in Section 7 we conclude.

To motivate our work, let us suppose a team of data journalists. For their article they need to collect data about the population of cities in Europe and display an informative map. The intended audience are statisticians, who are used to working with CSV files, so the journalists also want to publish the underlying data for them as CSV files attached to the articles.

The journalists are used to working with 3-star open data, i.e. they have to know where to find the necessary data sources, e.g. population statistics and descriptive data about cities including their location, they have to download them, integrate them and process them to get the output, i.e. a visualization and a CSV file.

There are several examples of such articles available on-line. For instance, there is the pan-European

The journalists now want to use LD because they heard that it is the highest possible level of openness according to the 5-star Open Data deployment scheme. They expect that the experience of working with LD will be somehow better than the experience of working with 3-star data. Naturally, they expect a tool they can use to work with such data and benefit from LD principles. On the other hand, they expect that they will be able to use the tool even without the knowledge of LD technologies such as RDF serialization formats or SPARQL. Such user expectations are described also in the literature. For example, [117] presents a scenario where non-LD experts search and analyze LD indexed in Open Data portals such as the Open Data portal of the EU commission11

In the rest of this section, we show how a LD consumption tool may enable our journalists to benefit from LD in different steps of the consumption process without having to know the LD technologies.

First, the journalists need to find data about cities in Europe, i.e. their description and location so that they can be placed on a map, and data about their population. Here, LD can help already. Recently, a number of national open data catalogs emerged and are being harvested by the European Data Portal (EDP),12

After identifying candidate datasets the journalists need to choose the ones that contain information needed for their goal. A helpful functionality of an LDCP could be various kinds of summaries of the candidate datasets and characterizations based on both the metadata of the dataset and the actual data in the dataset. Here we suppose that the metadata loaded from a catalog contain correct access information for each candidate dataset. For example, for each candidate dataset, the LDCP could offer human-readable labels and descriptions, vocabularies used, numbers of instances of individual classes and their interlinks, previews tailored to the vocabularies used or to the current use case (visual or textual, readable to non-LD experts), datasets linked form the candidate, datasets linking to the candidate, spatial and time coverage, etc. Using this information, the journalists would be able to choose a set of datasets containing the required information more easily.

Another feature that could help at this time is recommendation of related datasets. Based on available information about datasets already selected, the LDCP could suggest similar datasets. Given a set of datasets to be integrated, they can be further analyzed in order to find out whether they have a non-empty intersection, e.g. whether the population information specified in one dataset actually links to the cities and their locations present in another dataset.

There could be an interoperability issue between selected datasets caused by the existence of multiple vocabularies describing the same domain. In our example, it could be that the dataset containing locations of the cities could have the geocoordinates described using the

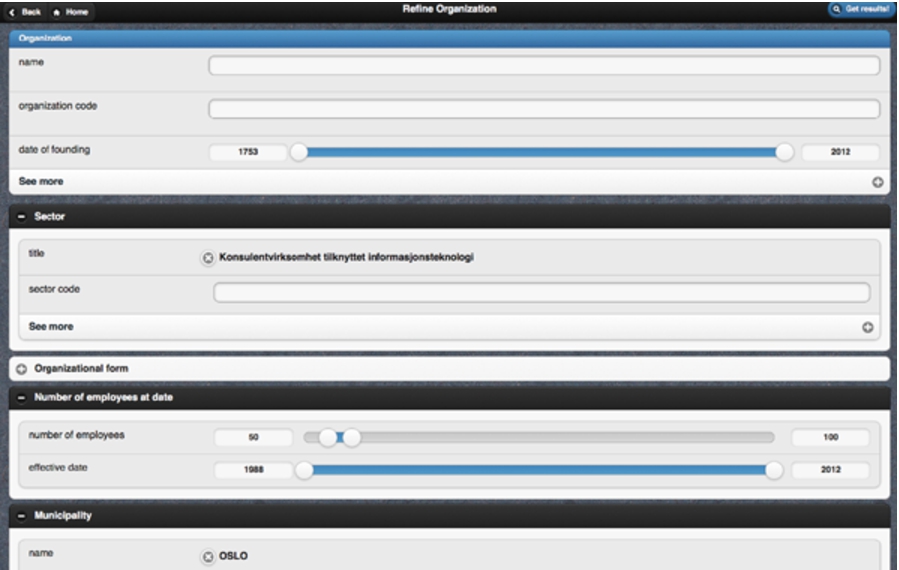

Our journalists now have to choose the entities and properties from those datasets that are needed for their goal. This could be done in a graphical way used e.g. by graphical SPARQL query builders such as SPARQLGraph [122], but not necessarily complicated by the full expressiveness of SPARQL.

Finally, the data should be prepared for further processing, and the journalists need to decide, in which data formats they will publish the results of their work. Since their readers, given the focus of the article, will typically be non-LD experts, a suitable format is one of the 3-star data ones, i.e. XML or CSV, so that readers can open the data for instance in their favorite spreadsheet editor. This format is also suitable for people simply used to using legacy tools. This would require assisted export to tabular data in CSV, tree data in XML, or perhaps generic graph data, e.g. in CSV for Gephi [14]. Another option is to publish the data as-is in the platform, i.e. in 5-star RDF. This would be the preferred way for experts, who could then use their favorite RDF processing tools, and for users with access to an LDCP, which, as we show in this survey, is still far from available. This could enable the readers to exploit for example some RDF enabled visualizations such as LinkedPipes Visualization [71]. Naturally, the journalists may decide to publish both versions of their data to satisfy the widest possible audience.

Users such as our journalists will never be able to exploit these features only using basic technologies like IRI dereferencing and SPARQL. They need a software tool, an LDCP, which will help them to use and to benefit from these features even without the knowledge of LD technologies.

The goal of the paper is to evaluate tools classified as LDCP candidates using a predefined list of requirements. In this section we describe our methodology for identification of the requirements, identification of the tools to be evaluated, for conducting their evaluation and for aggregation of their scores.

Requirements identification

To identify requirements to be used, we first define the LD consumption process based on existing literature which also introduces a similar process. For each step of the process we identify requirements that we see as crucial for an LDCP. We focus on requirements which can be satisfied by exploiting the benefits of consuming LD compared to consuming 3-star data. We then cover each requirement with a set of evaluation criteria which we later use to evaluate existing tools and decide if and to which extend they fulfill the requirement.

We identified each requirement based on the following existing resources:

An existing software tool which satisfies the requirement.

A technical specification (e.g., W3C Recommendation) which defines the requirement.

A published research paper which at least theoretically proposes a method fulfilling the requirement.

A published research paper which expresses the need for such a requirement.

These resources are evidence that requirements are meaningful and relevant. For each of the introduced requirements it must hold that it is supported by at least one of these types of evidence. The evidence identified to support a requirement by one author was then verified by another author, and possible conflicts were addressed in a discussion leading to a consensus.

In Section 4, we describe the evidence for each identified requirement. The evidence is not a complete survey of software tools, technical specifications and literature supporting the requirement. It only describes some relevant resources which support the requirement. If there is a research paper surveying resources relevant to the requirement available, we refer to it in the evidence.

Tools selection and evaluation

In this section, we describe the publication venues investigated, search engines used and the method used to identify tools which we classify as LDCP candidates, i.e. tools described in recent scientific literature as potentially relevant to LD consumption. Then we describe the method for selecting final candidates from the potential ones and the tool evaluation methodology.

Publication venues investigated

We have identified the most important venues where publications about relevant tools could appear. For each venue we investigated its main sessions and especially the posters and demo tracks. In addition, the workshops listed below have been considered. We investigated publications since 2013 (published till July 13th 2017). The relevant venues were:

World Wide Web Conference (WWW) 2013–2017

International Semantic Web Conference (ISWC) 2013–2016

Extended Semantic Web Conference (ESWC) 2013–2017

SEMANTiCS 2013–2016

OnTheMove Federated Conferences (OTM) 2013–2016

International Conference on Web Engineering (ICWE) 2013–2016

Linked Data on the Web workshop (LDOW) 2013–2017

International Workshop on Consuming Linked Data (COLD) 2013–2016

International Conference on Information Integration and Web-based Applications & Services (iiWAS) 2013–2016

In addition, we investigated the following journals for papers no older than 2013, the newest ones being accepted for publication on July 13th 2017:

Semantic Web journal (SWJ)

Journal of Web Semantics (JWS)

Based on titles and abstracts, two of the authors independently went through the investigated venues and picked potentially interesting candidate papers. Then they read the full text of the candidate papers. In the full text they searched for any mentions of an existing tool which could be used for any of the identified requirements. This resulted in 82 tools identified as potential candidates.

Search engines used

In addition to going through the above mentioned publication venues, we used the Scopus search engine17

For keywords, we used

The query returned 1416 candidate papers. Two of the authors independently went through the search result and identified additional potentially interesting candidate papers based on their title and abstract. In the full text of the candidate papers they searched for mentions of relevant tools. This resulted into 28 additional tools. All conflicts between the authors in this phase were resolved by a discussion leading to a consensus.

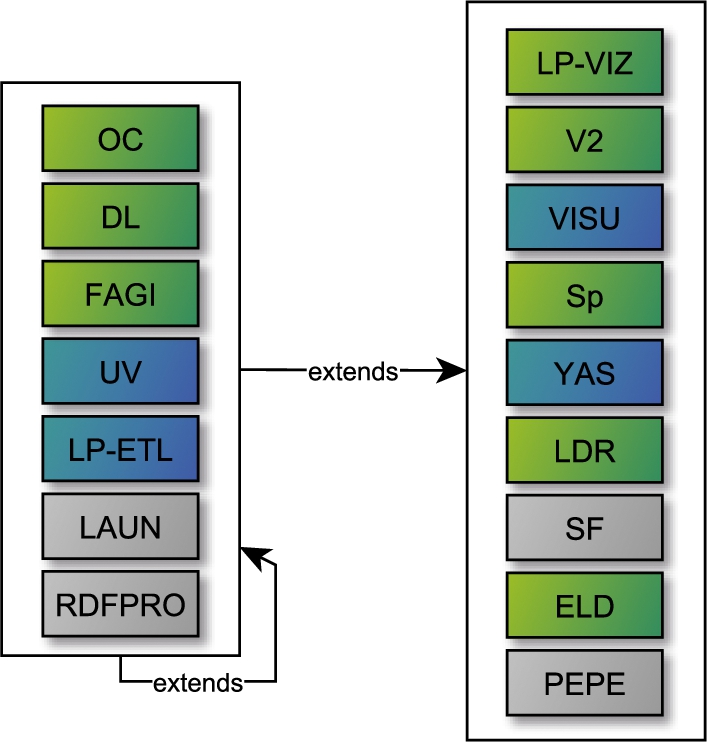

The list of potential candidates was iteratively filtered in 3 elimination rounds using preliminary criteria described below, to limit the number of tools to be later evaluated according to all our 94 criteria from Section 4. The list of all tools which passed round 1, and their progress through rounds 2 and 3 can be seen in Table 9, Table 10 and Table 11 in Appendix B.

Out of the 110 potential candidates, 65 were left after the first elimination round.

Out of the 65 potential candidates, 40 were left after the second elimination round. For each of the 40 candidates we had its running instance available which was tested in the following elimination rounds. These criteria may seem too restrictive, however, it is crucial for the evaluated tools to be easily and freely usable or installable, e.g. without registration, so that they can be assessed and used without the need for interaction with their maintainers.

For testing

Out of the 40 potential candidates, 16 were left after the third elimination round.

The 16 candidates were evaluated thoroughly based on all 94 of our criteria from Section 4. The evaluation results are presented in Section 5.

Each criterion was evaluated from the point of view of a non-LD expert and then from the point of view of an LD expert according to the scenario, which can be seen in Appendix C. When the scenario was passed without expert knowledge of LD technologies, we assigned “✓✓” to the tool for this criterion. If the scenario could be passed only with expert knowledge of LD technologies, we assigned “✓” to the tool for this criterion. Finally, if the scenario could not be done in any way, we assigned “–” to the tool for this criterion.

When evaluating a tool given a criterion and its scenario, we first studied the user interface of the tool for any indication of support for that scenario in that tool. Next, we searched for evidence of support for that criterion in the available tool documentation, i.e. articles and web. Only after we failed to find any mention of the desired functionality this way, we said that the tool failed the criterion.

For criteria dealing with dataset search, when the tool allowed us to search for individual RDF resources and we were able to identify the datasets the found resources belonged to, we considered the criteria passed.

Tool score aggregation and interpretation

Once a tool is evaluated, it has a non-LD expert pass “✓✓”, LD expert pass “✓” or a fail “–” mark assigned for each criterion. These detailed results can be seen in Table 6, Table 7 and Table 8 in Table A. Next, its

Requirements and evaluation criteria

We define the following steps of the LD consumption process:

discovery of candidate datasets available on the web based on a given user’s intent and selection of relevant datasets needed to fulfill the intent,

extraction of data from the relevant datasets,

manipulation of the extracted data, e.g. cleansing, linking, fusion, and structural and semantic transformation,

output of the resulting data into a required format.

Similar processes are considered in the related literature. For example, in [34] the authors describe the following process:

correctly identify relevant resources,

extract data and metadata that meet the user’s context and the requirements of their task or end goal,

pre-process this data to feed into the visualization tool available and the analysis required,

carry out initial exploratory analysis, using analytical and/or mining models.

Compared to our definition of the data consumption process, the process proposed in [34] concentrates more on data visualization and analysis. Nevertheless, it is comparable to ours. Step (a) corresponds to our step 1. Step (b) corresponds to our steps 1 and 2. Step (c) corresponds to our steps 3 and 4. Finally, step (d) is not covered by our process. We consider that the user works with the data as described in (d). However, this is out of the scope of an LDCP as it is performed in an external tool. Therefore, we do not evaluate this step.

There is also non-scientific literature describing the LD consumption process. In [15], the authors specify the following process:

specify concrete use cases,

evaluate relevant data sets,

check the respective licenses,

create consumption patterns,

manage alignment, caching and updating mechanisms,

create mash ups, GUIs, services and applications on top of the data

This process can also be aligned with ours. Steps (a) and (b) correspond to our step 1 – in (a) the users formulate their intent, in (b) they search for relevant datasets. Step (c) is a part of our step 3 (see Section 4.2.4). In step (d) the users identify and select relevant data from the datasets which corresponds to our step 2. Step (e) is a part of our step 3. Step (f) contains our step 4 and extends it with the actual creation of a service on top of the data. We expect that the users work with the data created in step 4 of our process in the same way as in step (f) from [15] but it is out of the scope of our process.

Our described process is generic and any data consumption process may be described in these steps, not only the LD consumption process. However, an LDCP may benefit from the LD principles in each step.

In the rest of this section, we describe the requirements for each of the described steps. In this paper, we aim explicitly at the requirements which may somehow benefit from LD principles and related technologies. For each requirement, we further specify evaluation criteria. The requirements are split into 5 requirement groups.

Therefore, there are no efficiency or performance requirements specified here. Once enough tools implement at least the minimal requirements identified here, their efficiency and performance may be evaluated.

We also do not evaluate how well a certain criterion is implemented regarding the user interface of the tool. What we evaluate is simply whether or not the tool implements at least some approach to address the criterion and whether LD expert knowledge is required or not.

We identified 34 requirements based on the existing tools and literature, split further into 94 fine grained criteria.

Dataset discovery

The consumption process starts with the user’s intent to discover datasets published on the web which contain the required data. Therefore, an LDCP should be able to gather metadata about available datasets and provide a search user interface on top of the metadata.

Gathering metadata

The primary goal of dataset discovery is to provide the users with information about existence of datasets which correspond to their intent. The provided information should consist of metadata characterizing the datasets, such as title, description, temporal and spatial information, URL where it can be accessed, etc. In this section, we define requirements on how an LDCP should gather such metadata.

The most natural way of gathering such information is loading dataset metadata from existing data catalogs. They usually provide a machine readable API, which an LDCP can use. Currently, the most wide-spread open data catalog API is the proprietary non-RDF

Besides the proprietary CKAN API metadata representation, more or less standardized RDF vocabularies for representing dataset metadata exist. First, there is the DCAT [46] vocabulary, a W3C Recommendation, and DCAT-AP v1.1, the DCAT application profile for European data catalogs recommended by European Commission, providing the vocabulary support for dataset metadata. There are existing data catalogs utilizing these vocabularies for providing access to dataset metadata. Examples of such data catalogs are the European Data Portal, integrating dataset metadata from various national data catalogs and the European Union Open Data Portal providing information about the datasets from the institutions and other bodies of the European Union. Besides DCAT, there is also the VoID [2] vocabulary, a W3C interest group note for representation of metadata about LD datasets. The metadata using these vocabularies is then accessible by the usual LD ways, i.e. in a data dump, in a SPARQL endpoint or using IRI dereferencing.

An LDCP should therefore support loading dataset metadata using a data catalog API and/or the LD principles and vocabularies. This leads us to our first requirement, Req. 1.

(CKAN API).

Given a URL of a CKAN instance, e.g.

The detailed information about a dataset typically contains, among others, a link for downloading the data and information about its data format, i.e. a MIME-type, in case of downloadable files.

Therefore, LDCP can consume such API in a way which allows it to directly access the cataloged data without unnecessary user interaction. SPARQL endpoints can be discovered in a similar fashion.

(DCAT-AP).

In the European Union, the DCAT-AP standard for data portals prescribes that dataset metadata should be represented in RDF, and lists classes and properties to be used. Therefore, LDCP can consume an RDF metadata dump or access a SPARQL endpoint, e.g. the European Data Portal endpoint,24

(RDF metadata in dump).

(Metadata in SPARQL endpoint).

(Metadata from IRI dereferencing).

The previous requirement assumes that metadata about datasets already exists provided through APIs of existing data catalogs. However, there is a more advanced way of gathering metadata. It is through implementation or support of a custom crawling and indexing service not necessarily relying solely on metadata found in data catalogs. Such a service can build its own index of datasets comprising automatically computed metadata based on the content of the datasets using so called

Examples of services which build dataset profiles automatically from the content of the datasets are Sindice [101] or LODStats [11]. The resulting Req. 2 can be viewed as a parallel to the development witnessed in the Web of Documents where first, there were web page catalogs and later came full-text search engines such as Google.

(Sindice).

Sindice [101] was an LD indexing service which then allowed to search the index data using the Sig.MA tool, which facilitated discovering the data sources of the search results. The service is, however, currently unavailable.

(Dataset profiling).

Search user interface

A user interface (UI) enables users to express their intent to search for datasets in the form of dataset search queries. In this section, we define requirements on the query language the users may use to express their dataset search queries and the assistance provided by LDCP to the users when writing such search queries. The way how search queries may be evaluated by an LDCP is discussed later in Section 4.1.3.

Most users are familiar with UIs provided by dominating dataset discovery services - open data catalogs (e.g., CKAN). They enable the users to express their intent as a list of keywords or key phrases. The users may also restrict the initial list of datasets discovered using keywords with facets. A facet is associated with a given metadata property, e.g.

These features are independent of the methods of gathering the metadata described in Section 4.1.1 and they can be offered without building on top of the LD principles. However, there exist many approaches in the literature which show how the UI may benefit from the LD principles to better support the user. Even though they are primarily intended for querying the content of datasets, they could be directly used by an LDCP for dataset discovery. We show examples of these works in the rest of this section and formulate requirements on the LDCP based on them.

There are two approaches to this problem in the literature. The first approach tries to provide the users with the power of SPARQL while hiding its complexity and enables them to express their intent in a natural language. The query is then translated to a SPARQL expression. For example, in [136], the authors parse a natural language expression to a generic domain independent SPARQL template which captures its semantic structure and then they identify domain specific concepts, i.e. classes, predicates, resources, combining NLP methods and statistics. In [42], the authors propose a natural language syntactical structure called Normalized Query Structure and show how a natural language query can be translated to this normalized structure and from here to SPARQL. In SimplePARQL [40], parts of the SPARQL query can be written as keywords, which are later rewritten into pure SPARQL.

The second approach offers search query languages which do not aim to be as expressive as SPARQL, but they somehow improve the expressive power of the keywords based languages. They achieve this by extending them with additional concepts. For example, [128] proposes a search query language which supports so called predicate-keyword pairs where a keyword may be complemented by RDF predicates which are related to the keywords in the searched datasets. In [52], the authors propose a novel query language NautiLOD which enables to specify navigation paths which are then searched in the datasets. A navigation path expression is simpler and easier to specify than a SPARQL query even though NautiLOD navigation paths are more powerful then SPARQL navigation paths.

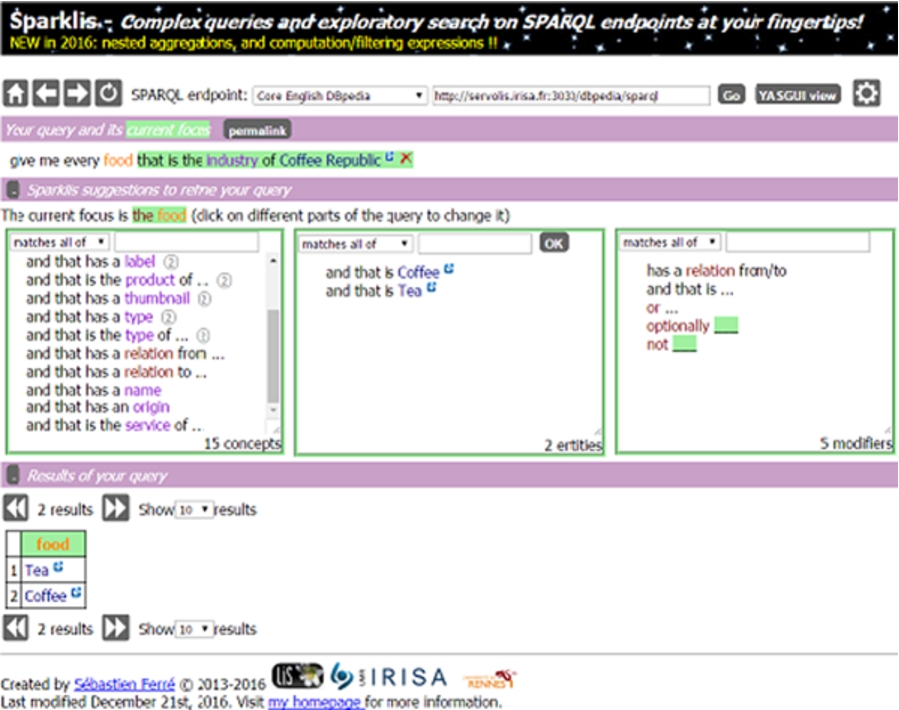

SPARKLIS user interface for formulating SPARQL queries.

(Sparklis).

Sparklis[50]26

(Fulltext search).

(Natural language based intent expression).

(Formal language based intent expression).

(Search query formulation assistance).

(Search query formulation assistance).

The user interface provided by Sparklis (see Fig. 1) assists the user with formulating a graph pattern expressed in a restricted natural English by providing labels of classes, labels of their individuals as well as their predicates.

(Seach query automated suggestions).

(Faceted search).

Search query evaluation

In this section we define requirements on how an LDCP can evaluate the user’s intent expressed as a search query using the provided search user interface. The evaluation means that the LDCP identifies a list of datasets corresponding to the intent. The simplest solution is provided by the existing open data catalogs. Keywords or other metadata values filled in a search form are matched against metadata records. Further restrictions expressed by the user using facets are evaluated by filtering of the metadata records of the datasets.

The current literature shows how this basic evaluation can be extended based on LD. The first possibility is to interpret the semantics of keywords or key phrases in the search query against given knowledge bases. The keywords are represented with semantically corresponding resources, i.e. individuals, concepts, classes or properties, from the given knowledge bases, possibly including their context. In [24], the authors first discover the semantics of entered keywords by consulting a given pool of ontologies to find a syntactical match of keywords and labels of resources in these ontologies. Then they automatically formulate a formal query based on the semantics. In [146], the authors discover semantics of keywords by matching them to extraction ontologies, i.e. linguistically augmented ontologies, to enable the system to extract information from texts. An LDCP may use these approaches in two ways – it may add semantically similar concepts to the search query or it may add semantically related ones as discussed in [145]. Both possibilities mean that the query provided by the user is expanded. Therefore, we speak about

An LDCP may also benefit from approaches for computing semantic relatedness of resources. For example, [39] shows how the semantic relatedness may be computed in a scalable way based on graph distance between two resources, i.e. average length of paths connecting the resources in a graph.

(Search query expansion).

(Search query expansion).

Using a knowledge base such as Wikidata, an LDCP may recognize terms in the search query as concepts from the knowledge base. For instance, it may recognize that the term

Another possibility is to extend the discovered list of datasets. It means that after the search query is evaluated, and either expanded or not, the resulting list of discovered datasets is extended with additional semantically related datasets. By the term “semantically related” we mean various kinds of semantic relationships among datasets. A simpler solution is to search for additional datasets which provide statements about the same resources, use the same vocabularies or link their resources to the resources in the already discovered datasets. These relationships are explicitly specified as RDF statements in the datasets. A more complex solution is to derive semantic relationships among datasets based on the similarity of their content [141].

An LDCP may also profit from approaches which recommend datasets for linking based on dataset similarity. For example, [45] introduces a dataset recommendation method based on cosine similarity of sets of concepts present in datasets. Similarly, the authors of [86] recommend datasets based on the similarity of resource labels present in the datasets. Having discovered a dataset, an LDCP may use such an approach to recommend other semantically similar datasets. Instead of recommending them for linking, they could be recommended as additional datasets possibly interesting to the user. We may also get back to simpler solutions and consider metadata profiles which may also contain some explicitly expressed semantic relationships between datasets. E.g., DCAT-AP discussed in Section 4.1.1 enables data publishers to specify semantic relationships between datasets in their metadata descriptions.

The discovery of related datasets is understood as one of the key features an LDCP should have for example in [79], where the authors show how a possibility of recommending additional semantically related datasets may improve the quality of scientific research in domains such as medicine or environmental research by supporting interdisciplinary research.

(Search results extension).

(Search results extension).

DCAT-AP considers the following predicates from the Dublin Core Vocabulary which can be used to express semantic relationships between datasets:

(Search results extension).

Displaying search results

An LDCP needs to display the discovered list of datasets to the user. For this, it needs to order the discovered list and display some information about each dataset. The current dataset discovery solutions, i.e. data catalogs, order the discovered list according to the keywords based similarity of the intent of the user and the metadata descriptions of the discovered datasets. Usually, they display an ordered list of datasets with basic metadata such as the name, description, publication date, and formats in which the dataset is available. However, there are more advanced ways of displaying the search results which can be built on top of LD.

First, the discovered datasets may be ranked and their list may be ordered according to this ranking. For example, in [38], the authors modify a well-known PageRank algorithm for ranking datasets. Dataset ranking can also be based on their quality scores surveyed in [144]. In [80], the authors also propose an algorithm based on PageRank but they moreover consider the relevance to the intent of the user. A review of ranking approaches is available in [63].

(Dataset ranking).

(Dataset ranking).

For example, an LDCP may combine a PageRank score of a dataset with the relevance of publishers of datasets where datasets published by public authorities are ranked higher than datasets published by other organizations.

(Content-based dataset ranking).

(Context-based dataset ranking).

(Intent-based dataset ranking).

Second, it is possible to show a (visual) preview or summary of the discovered datasets [20] to help the user select the appropriate datasets. For example, a timeline showing the datasets according to their creation dates, the dates of their last modification, their accrual periodicities or their combination. Other types of previews are a map showing their spatial coverage, a word cloud diagram from their keywords, etc.

The techniques which would allow an LDCP to create such previews of the datasets include support for the appropriate RDF vocabularies. Some of the LD vocabularies recently became W3C Recommendations,29

(Preview using the RDF Data Cube Vocabulary).

Given a statistical dataset modeled using the RDF Data Cube Vocabulary, a dataset preview (Req. 8) could contain a list of dimensions, measures and attributes or a list of concepts represented by them, based on its Data Structure Definition (DSD). In addition, the number of observations could be displayed and, in the case of simpler data cubes such as time series, a graphical line graph or bar chart can be shown automatically, as in LinkedPipes Visualization [70].

(Preview – W3C vocabularies).

(Preview – LOV vocabularies).

(Preview metadata).

The user may also require a dataset preview compiled not based on descriptive and statistical metadata provided by the publisher but based on metadata automatically computed from the dataset contents. This metadata include descriptive metadata (e.g., important keywords or spatial and temporal coverage), statistical metadata (e.g., number of resources or numbers of instances of different classes) and a schema. It is important to note that schemas are often very complex and their summaries are more useful for users. [103,134] propose techniques for schema summarization which create a subset of a given dataset schema which contains only the most important nodes and edges. Therefore, it is a reasonable requirement that an LDCP should be able to generate metadata independently of what is contained in the manually created metadata records.

(Data preview).

(Data preview).

A preview of data based on the data itself instead of relying on the metadata can be seen in the LODStats [11] dataset. From statistics such as the number of triples, entities, classes, languages and class hierarchy depth, one can get a better information contributing to the decision on whether or not to use such a dataset.

(Schema extraction using SPARQL).

For RDF datasets, the list of used vocabularies can be attached using the

and this one for predicates among classes with number of their occurences:

(Schema extraction).

Besides metadata, statistics and used vocabularies there are many other criteria regarding data quality that should also be presented to the user to support his decision making. We do not aim at specific quality indicators. The following requirement related to data quality is generic and it considers any meaningful LD quality indicator. A recent survey of such indicators and techniques for their computation is available [144].

(Quality indicators).

(Checking of availability).

One of the quality dimensions surveyed in [144] is

Data manipulation

A common problem of LD tools out there is their limited support for standards of representation and access to LD. An LDCP should support all existing serialization standards such as Turtle, JSON-LD, etc. and it should support loading the input in different forms such as dump files, SPARQL endpoints, etc. In this section, we motivate and define requirements on data input, its transformations, provenance and licensing support and we emphasize existing web standards.

Data input

An LDCP should support all the standard ways of accessing LD so that there are no unnecessary constraints on the data to be consumed. These include IRI dereferencing (Req. 11), SPARQL endpoint querying (Req. 12 and the ability to load RDF dumps in all standard RDF 1.1 serializations.33

There are at least two ways of how an LDCP should support IRI dereferencing. First, an LDCP should be able to simply access a URL and download a file in any of the standard RDF serializations, which we deal with later in Req. 13. Second, an LDCP should be able to use HTTP Content Negotiation37

(Usage of IRI dereferencing).

One of the possible data inputs can be a single RDF resource, e.g. the representation of Prague in DBpedia, for which the user might want to monitor, some property, e.g. population. The easiest way to do that is to simply access the resource IRI, to support following HTTP redirects and to ask for RDF data in our favorite RDF serialization (Req. 11). This process can be then repeated as necessary. It is an equivalent of running, e.g.:

(IRI dereferencing).

(Crawling).

Publishing data via a SPARQL endpoint is considered the most valuable way of publishing LD for the consumers. It provides instant access to the computing power of the publishers infrastructure and the consumer does not have to work with the data at all to be able to query it. Publishing data this way also enables users to do federated queries [108]. Therefore, this should be the easiest way of getting data using an LDCP. On the other hand, public SPARQL endpoints typically implement various limitations on the number of returned results or query execution time, which make it hard to get, e.g. a whole dataset this way. These limits, however, can be tackled using various techniques such as paging.

(RDF input using SPARQL).

(Accessing the DBpedia SPARQL endpoint).

Since DBpedia is the focal point of the whole LOD cloud, it is often used as a linking target to provide more context to published data. In addition to IRI dereferencing, an LDCP can use the DBpedia SPARQL endpoint to, e.g., suggest more related data of the same type, etc.

(SPARQL: querying).

(SPARQL: named graphs).

(SPARQL: custom query).

(SPARQL: authentication).

(SPARQL: advanced download).

(RDF dump load).

The previous techniques such as SPARQL querying and IRI dereferencing relied on the fact that the target service was able to provide the desired RDF data serialization. However, when there are RDF dumps already serialized, to be downloaded from the web, an LDCP needs to support all standardized serializations itself, as it cannot rely on any service for translation.

(RDF quads dump load).

One of the use cases for named graphs in RDF already adopted by LD publishers is to separate different datasets in one SPARQL endpoint. It is then quite natural for them to provide data dumps in an RDF quads enabled format such as N-Quads or TriG, e.g. statistical datasets from the Czech Social Security Administration.39

https://data.cssz.cz/dump/nove-priznane-duchody-dle-vyse-duchodu.trig

(Turtle file load).

(N-Triples file load).

(JSON-LD file load).

(RDFa file load).

(N-Quads file load).

(TriG file load).

(Handling of bigger files).

(CSV on the Web load).

(Direct mapping load).

(R2RML load).

Besides these traditional ways of getting LD, an LDCP should support the Linked Data Platform [8] specification for getting resources from containers. These can be, e.g. notifications according to the Linked Data Notifications W3C Recommendation [27,28].

(Linked Data Platform input).

(Loading data from Linked Data Platform).

The Linked Data Platform (LDP) specifications defines (among others) containers40

In addition to be able to load data in all standardized ways, an LDCP should also provide a way of saying that the data source should be periodically monitored for changes, e.g. like in the Dynamic Linked Data Observatory [64]. This includes the specification of the periodicity of the checks and the specification of actions to be taken when a change is detected. The actions could vary from a simple user notification to triggering a whole pipeline of automated data transformations. This way, the users can see what is new in the data used for their goals and whether the data is simply updated or needs their attention due to more complex changes. A short survey of existing approaches to change monitoring is provided in [44], Section 3.7.

(Monitoring of changes in input data).

(Keeping data up to date).

The EU Metadata Registry (MDR)42

(Monitoring of input changes: subscription).

When working with real world data sources, it may be necessary to keep track of versions of the input data which can change in time or even become unavailable. When more complex changes happen in the data, the whole consumption pipeline could be broken. Therefore, a version management mechanism should be available in an LDCP so that the user can work with a fixed snapshot of the input data and see what has changed and when. Such a mechanism could also be automated. For example, if a consuming client holds a replica of a source dataset, changes in the source may be propagated to the replica as shown in [48], creating a new version. Updates may also be triggered by events like Git commits, as can be seen in [7].

Another type of version management is support for handling a data source, which itself is available in multiple versions at one time, e.g. using the Memento [37] protocol.

(Version management).

(Manual versioning support).

(Automated versioning support).

(Source versioning support).

Analysis of semantic relationships

The requirements discussed in the previous sections support the users in discovering individual datasets isolated from one another. However, the users usually need to work with them as with one integrated graph of RDF data. Therefore, the users expect that the datasets are semantically related to each other and that they can use these semantic relationships for their integration. If a dataset is not in a required relationship with the other discovered datasets the users need to know this so that they can omit the dataset from further processing. We have briefly discussed possible kinds of semantic relationships in Req. 6. However, while Req. 6 only required an LDCP to show datasets which are somehow semantically related to the selected one, now the users expect a deeper analysis of the relationships.

Each of the existing semantic relationships among the discovered datasets should be presented to the users with a description of the relationship, i.e. the kind of the relationship and its deeper characteristics. The descriptions will help the users to understand the relationships and decide whether they are relevant for the integration of the datasets or not.

When the datasets share resources or their resources are linked, the deeper characteristics may involve the information about the classes of the resources, the ratio of the number of the shared or linked resources to the total number of the resources belonging to these classes, compliance of the datasets in the statements provided about the shared resources, etc. There are approaches which provide explanations of such relationships to users. For example, [104,105] propose a method for generating explanations of relationships between entities in the form of most informative paths between the entities. The approach can be extended to explaining relationships among datasets which contain related entities. In [123], the authors present another approach to explaining relationships between datasets based on so called

When the datasets neither share the resources nor there are links among them, the semantic relationship may be given by the fact that they share some parts of vocabularies, i.e. classes or predicates. Such relationships may be deduced from metadata collected by services such as LODStats [11].

(Semantic relationship analysis).

(LODVader).

LODVader [96] shows relationships among given datasets, demonstrated on the LOD cloud. Instead of relying on existing metadata in VoID, it generates its own based on the actual data. It is able to present relationships based on links between resources as well as shared vocabularies. Unfortunately, the software seems abandoned and the demo works no longer.

(Shared resources analysis).

(Link analysis).

(Shared vocabularies analysis).

As a supplemental requirement to the previous one is the support of methods for automated or semi-automated deduction of semantic relationships among datasets. These methods may be useful when semantic relationships cannot be directly discovered based on statements present in the datasets, but new statements must be deduced from the existing ones. There are tools like SILK [140], SLINT [97] or SERIMI [5] which support so called

(Semantic relationship deduction).

(Link discovery using SILK).

A classic example of adding semantic relations to existing data is link discovery. Let us, for instance, consider a dataset with useful information about all Czech addresses, towns, etc. called RÚIAN. Data on the web, however, typically links to DBpedia representations of Czech cities and regions. In order to be able to use information both from the LOD cloud and from RÚIAN, the data consumer needs to map, e.g., the Czech towns in the Czech dataset to the Czech towns in DBpedia, based on their names and based on regions in which they are located. This is a task well suited for a link discovery tool such as SILK, and an LDCP should be able to support this process.

(Link discovery).

(Ontology alignment).

Semantic transformations

The user now needs to process the discovered datasets. As we discuss later in Section 4.4, this means either visualizing the datasets or exporting them with the purpose of processing in an external tool. In general, it means to transform the datasets from their original form to another one, which is necessary for such processing. We can consider transformations, which transform one RDF representation of the datasets to another RDF representation or to other data models such as relational, tree, etc. In this section, we discuss the RDF to RDF transformations. The transformations to other data models are considered as outputs of the process and are covered in Section 4.4. A transformation involves various data manipulation steps which should be supported by an LDCP.

First, an LDCP must deal with the fact that there exist different vocabularies for the same domain. We say that such datasets are

(Transformations based on vocabularies).

(GoodRelations to Schema.org transformation).

In the process of development of the Schema.org vocabulary, a decision was made to import classes and properties of the GoodRelations vocabulary used for e-commerce into the Schema.org namespace. This decision is questionable and controversial43

(Vocabulary-based SPARQL transformations).

Another often required kind of data manipulation is inference. Inference can be used when additional knowledge about the concepts defined by the vocabulary is provided which can be used to infer new statements. Such knowledge is expressed in the form of so called

(Inference and resource fusion).

(Inference).

Many RDF vocabularies are defined with some inference rules in place. An example of inference which would be beneficial to support in an LDCP can be the SKOS vocabulary with its

Let us have two datasets we already mentioned in Example 18.1, Czech towns from DBpedia and the Czech registry containing information about all addresses and, among others, cities of the Czech Republic and let us consider we linked the corresponding entities using the

(RDFS inference).

(Resource fusion).

(OWL inference).

Besides transformations, the user will typically need the standard operations for data selection and projection. An LDCP can assist the user with specification of these operations by providing graphical representation of the required data subset, which may correspond to a graphical representation of a SPARQL query like in SPARQLGraph [122] and similar approaches.

(Selection and projection).

(Data language selection).

One type of selection may be limiting the dataset to a single language. For example, when working with the EU Metadata Registry (MDR), codelists are usually provided in all EU languages. Having the data in multiple languages may, however, not be necessary. An LDCP may support common selection techniques without requiring the user to write SPARQL queries.

(Assisted selection).

(Data projection).

An example of a projection may be picking only data which is relevant for a given use case, while omitting other properties of represented resources. The user may again be assisted by a graphical overview of the dataset with possibility to select relevant entities, their properties and links.

(Assisted projection).

Besides the above described specific data manipulation requirements, the user may need to define own specific transformations expressed in SPARQL.

(Custom transformations).

(Custom transformations).

Having the support for all kinds of data manipulations described above it is necessary to be able to combine them together so that the discovered datasets are transformed to the required form. The user may be required to create such data manipulation pipeline manually. However, we might also expect that an LDCP compiles such pipeline automatically and the user only checks the result and adjusts it when necessary.

(Automated data manipulation).

(Automated data manipulation).

An LDCP may be aware of what the data in each step of the consumption process looks like even as the process is being designed, e.g. by performing background operations on data samples, and it may recommend possible or probable next steps. Using the same principles, the data transformation pipelines may be even generated automatically, given that the requirements on the final data structure are known and the source data is accessible. This can be seen in e.g. LinkedPipes Visualization [71], where given an RDF data source, a list of possible visualizations formed by data transformation pipelines, is offered automatically, based on known vocabularies and input and output descriptions of the data processing components.

(Automated data manipulation).

Provenance and license management

For the output data to be credible and reusable, detailed provenance information should be available capturing every step of the data processing task, starting from the origin of the data to the final transformation step and data export or visualization. There is the PROV Ontology [119], a W3C Recommendation that can be used to capture provenance information in RDF.

(Provenance).

(Provenance).

When the goal of the LD consumption process is to gather data, combine it and pass it to another tool for processing, it might be useful to store information about this process. Specifically, the consumers of the transformed data might be interested in where the data comes from, how it was combined, what selection and projection was done, etc. This information can be stored as an accompanying manifest using the PROV Ontology [119] and may look like this (taken directly from the W3C Recommendation):

(Provenance input).

(Provenance output).

Part of metadata of a dataset should be, at least in case of open data, the information about the license under which the data is published, since that is what the first star of open data is for. A common problem when integrating data from various data sources is license management, i.e. determining whether the licenses of two data sources are compatible and what license to apply to the resulting data. This problem gets even more complicated when dealing with data published in different countries. A nice illustration of the problem is given as Table 3 in ODI’s Licence Compatibility47

(License management).

Based on open data license compatibility information such as the ODI’s Licence Compatibility table, an LDCP should advise its user on whether two data sources can be combined or not and what is the license of the result. The licenses of individual datasets can be obtained from its DCAT metadata, as in, e.g. the Czech National Open Data catalog.49

(License management).

Data visualization

The goal of using an LDCP is either to produce data for processing in other software or to produce visualizations of discovered data sets. Given the graph nature of RDF, the simplest visualization is a graph visualization of the data, reflecting its structure in RDF. Another type of visualizations are manually specified using a mapping of the RDF data to the inputs of visualization plugins, as in LinkDaViz [131]. Finally, visualizations can be automated, based on correct usage of vocabularies and a library of visualization tools as in the LinkedPipes Visualization (LP-VIZ) [70], which offers them for DCV, SKOS hierarchies and Schema.org GeoCoordinates on Google Maps.

(Visualization).

(Manual visualization in LinkDaViz).

An example of manual visualization is LinkDaViz. The user first selects the dataset to be visualized and then selects specific classes and properties from the dataset. Based on the selected properties and their data types, visualization types are recommended and more can be configured manually to get a visualization such as a map, a bar chart, etc.

(Automated visualization in LP-VIZ).

In contrast to manual visualization in Example 26.1, in LinkedPipes Visualization the user points to the dataset and the tool by itself, automatically, takes a look at what data is present in the dataset. It then offers possible visualizations, based on the found, well-known vocabularies supported by components registered in the tool.

(Graph visualization).

(Manual visualization).

(Vocabulary-based visualization).

Data output

The other possible goal of our user is to output raw data for further processing. The output data can be in various formats, the most popular may include RDF for LD, which can be exported in various standardized ways. The first means of RDF data output is to a dump file using standardized serializations. The inability to create a dump in all standardized formats (only some) will be classified as partial coverage of the requirement.

(RDF dump output).

(RDF dump output).

Various tools may need various RDF serializations to be able to process the output data. The main problem which can be seen quite often is that many applications consuming RDF only support triple formats and not quad formats. Therefore, a LDCP should support output in all standard RDF 1.1 serializations, which can be easily supported by using an existing library such as Apache Jena or Eclipse RDF4J.

(RDF/XML file dump).

(Turtle file dump).

(N-Triples file dump).

(JSON-LD file dump).

(RDFa file dump).

(N-Quads file dump).

(TriG file dump).

Another way of outputting RDF data is directly into a triplestore, using one of two standard ways, either the SPARQL Update query [106] or using the SPARQL Graph Store HTTP Protocol [100].

(RDF output using SPARQL).

(Loading data using the SPARQL Graph Store HTTP Protocol).

For larger datasets, where whole named graphs are manipulated, it is simpler to use the SPARQL Graph Store HTTP Protocol to load data. Basically, a data dump is sent over HTTP to the server instead of being inserted by a SPARQL query. However, despite being a W3C Recommendation, the implementation of the protocol differs among quad stores. Therefore, besides the endpoint URL, authentication options and the target named graph, an LDCP should be also aware of the specific implementation of the endpoint. This can be seen e.g. in LinkedPipes ETL’s Graph Store Protocol component.50

(SPARQL: named graphs support).

(SPARQL: authentication support).

(SPARQL Graph Store HTTP Protocol output).

Lastly, an LDCP should be able to write RDF data to Linked Data Platform compliant servers. This may also include support for W3C Recommendations building on top of the LDP, such as Linked Data Notifications [28] for sending notifications to inboxes on the web.

(Linked Data Platform output).

(Loading data to Linked Data Platform).

The Linked Data Platform (LDP) specifications defines (among others) how new entities should be added to existing containers. Therefore, an LDCP should be aware of this and support the handling of LDP containers. A specific use case may be sending messages according to the Linked Data Notifications W3C Recommendation.

(Linked Data Platform Containers Output).

Besides being able to output RDF, a perhaps even more common use case of an LDCP will be to prepare LD to be processed locally by other tools, which do not know LD. One choice for non-LD data export is a CSV for tabular data. The usual way of getting CSV files out of RDF data is by using the SPARQL SELECT queries. Such CSV files can now be supplied by additional metadata in JSON-LD according to the Model for Tabular Data and Metadata on the Web (CSVW) [129] W3C Recommendation. Besides tabular data, tree-like data are also popular among 3-star data formats. These include XML and JSON, both of which the user can get, when a standardized RDF serialization in one of those formats, i.e. RDF/XML and JSON-LD, is enough. This is already covered by Req. 27. However, for such data to be usable by other tools, it will probably have to be transformed to the JSON or XML format accepted by the tools and this is something that an LDCP should also support. For advanced graph visualizations it may be useful to export the data as graph data e.g. for Gephi [14]. Majority of people today do not know SPARQL and do not want to learn it. However, an LDCP should still allow them to work with LD and use the data in their own tools. Therefore, an LDCP should not only allow to export 3* data, but it should support doing so in a user friendly way, with predefined output formats for external tools and, ideally, predefined transformations from vocabularies used in the tool’s domain. This observation comes from a recent publication of a Czech registry of subsidies, which was published as LD only, with N-Triples dumps, dereferencable IRIs and a public SPARQL endpoint.51

(Gephi support).

To support graph visualization in Gephi, which can effectively visualize large data graphs, an LDCP should support output consumable by Gephi. Technically, this is a pair of CSV files with a specific structure. An LDCP could include a wizard for creation of such files.

(CSV file output).

(CSV on the Web output).

(XML file output).

(JSON file output).

(Output for specific third-party tools).

Developer and community support

For most of our requirements there already is a tool that satisfies them. The problem why there is no platform using these tools is caused by their incompatibility and the large effort needed to do so. An LDCP does not have to be a monolithic platform and can consist of multitude of integrated tools. However, the tools need to support easy integration, which motivates the next requirements. Each part of an LDCP and the LDCP itself should use the same API and configuration which it exposes for others to use. The API should again be based on standards, i.e. REST or SOAP based, and the configuration should be consistent with the rest of the data processing, which means in RDF with a defined vocabulary.

(API).

(API).

For instance, LinkedPipes ETL offers REST-like API used by its own frontend to perform operations like running a transformation, creating a transformation pipeline, etc. The API is documented by OpenAPI52

(API documentation).

(Full API coverage).

(RDF configuration).

(RDF configuration).

All configuration properties and projects of an LDCP should be also stored, or at least accessible, in RDF. This is so that this data can be read, managed and created by third-party RDF tools and also shared easily on the Web, as any other data. For instance, LinkedPipes ETL stores its transformation pipelines in RDF and makes their IRIs dereferencable, which eases their sharing and offers wider options for their editing.

(Configuration in open format).

(Configuration in RDF).

An important quality of a tool available on the web is the nature of its community. This includes the size of the community formed around that tool, its activities and repositories for sharing tool plugins and data processes created in the tool. The community should be active and maintain the tool, or at least share new plugins or new data processes.

Since one of the ideas of LD is distribution of effort, the data processes defined in an LDCP should themselves be shareable and described by an RDF vocabulary as it is with, e.g. the Linked Data Visualization Model (LDVM) pipelines [68]. For this, a community repository for sharing of LDCP plugins, i.e. reusable pieces of software, which can extend the functionality of the LDCP, like visualization components from Req. 26 and vocabulary-based transformers from Req. 19 should be present and identified for the LDCP.

In addition, the data transformation processes defined using the LDCP, i.e. the LDCP process configuration, should also be easily shareable with the community, facilitating effort distribution. An example of such a shared project could be a configuration allowing users to extract subsets of DBpedia as CSV files, etc.

(Tool community).

(Sharing on GitHub).

Git is a very popular tool for sharing of and collaboration on software and data, with GitHub as one of its most popular repository implementations. To spark the network effect, an LDCP should have a catalog of plugins and reusable projects, on which the community can collaborate. Since projects themselves should be stored as RDF (Req. 32), they can be easily shared on, e.g. GitHub. This can be illustrated by, e.g. LinkedPipes ETL, which has its repository of plugins53

(Repository for plugins).

(Repository for data processes).

(Community activity).

When the whole process of data gathering, processing and output is described in LDCP, it may be useful to expose the resulting transformation process as a web service, which can be directly consumed by e.g. web applications. This could facilitate live data views or customizable caching and update strategies.

(Deployment of services).

(Encapsulated SPARQL queries).

A very simple example of Req. 34 could be a SPARQL query, possibly with parameters, encapsulated as a web service. This is a common way of helping non-RDF applications consume live RDF data without even knowing it. However, the process behind the web service can be much more complex, and an LDCP could offer creation of such services to its users.

(Deployment of services).

Tools evaluation and description

Requirement group scores of evaluated candidates for non-LD experts. Empty cells represent 0%

Requirement group scores of evaluated candidates for non-LD experts. Empty cells represent 0%

Requirement group scores of evaluated candidates for LD experts. Empty cells represent 0%

In this section, we first briefly describe each evaluated tool and we point out to fulfilled requirements. Then we discuss the evaluation results and identify tools which can be considered as an LDCP because they satisfy at least the minimal conditions described in the introduction. Not all evaluated tools meet even the minimal conditions. However, it does not mean that they are useless for LD consumption. We also discuss the evaluation results for these tools in the text.

In this section, we introduce the 16 individual tools which we evaluated using our 94 criteria.

LinkedPipes visualization

LinkedPipes Visualization [71] (LP-VIZ) is a tool providing automated visualizations of LD, fulfilling Req. 8 Preview based on vocabularies and Req. 26 Visualization. It queries the input data given as an RDF dump or as a SPARQL endpoint for known vocabularies, and based on the result, it offers appropriate visualizations. These include an interactive RDF data cube visualization, various types of SKOS hierarchy visualizations and a map based visualization (see Fig. 2), which can be further filtered based on concepts connected to the points on the map. In addition, in the RDF data cube visualization it also employs Req. 11 IRI dereferencing to search for labels of entities.

As to the other parts of the LD consumption process, no requirements were satisfied in the Data output and Developer and community support groups.

V2

V2 [3] is an on-line demo for visualization of vocabularies used in RDF data. In the first step the user provides a list of SPARQL endpoints. V2 executes a set of predefined SPARQL queries to analyze the vocabularies used in the data and creates the dataset preview.

LinkedPipes Visualization – Points on a map.

V2 visualization.

On the top level of the preview, the used classes are presented (see Fig. 3). The user can select a class to see the information about the number of instances and about predicates used. V2 is simple, nevertheless it can be used to address the Dataset discovery part of the LD consumption process, specifically, it covers Data preview.

VISUalization Playground [3] (VISU) is an on-line service for visualization of SPARQL results. It uses predefined or user provided SPARQL SELECT queries to retrieve data from given SPARQL endpoints. Then it uses Google Charts Editor to create a wide range of visualizations (see Fig. 4) based on the output table, including line charts, tree charts or map visualizations.

VISU visualization.

The main focus of VISU is at Data visualization.

Sparklis [50] (Sp) is an on-line Linked Open Data exploration tool that does not require the user to have knowledge of SPARQL. The user can specify a SPARQL endpoint and explore its contents using so called

Sparklis user interface for data exploration.

In each step of the focus refinement process, the user is guided by suggestions for all three types of constrains. Sparklis offers an on–the–fly data visualization in the form of a table or, in case of geolocalized data, a map. A query corresponding to the current focus is accessible in the integrated YASGUI tool, which provides additional options for visualization and data export.

From the point of view of the LD Consumption process, Sparklis covers mainly Dataset discovery and Data manipulation.

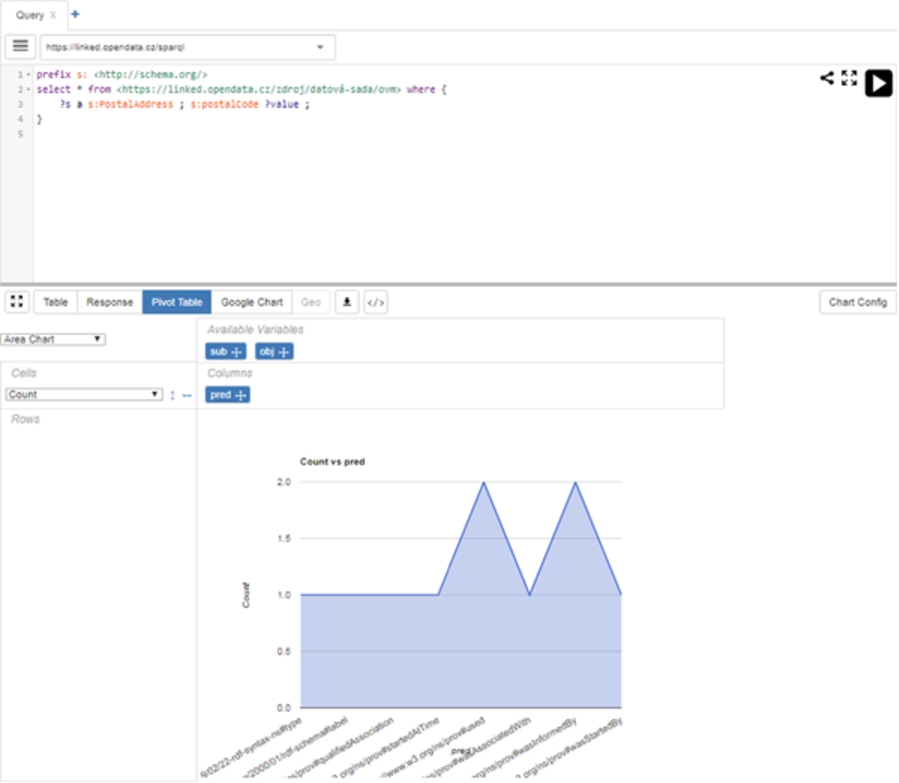

Yet Another SPARQL GUI [111] (YAS) is a web-based SPARQL query editor. As such it offers the ability for the user to specify a SPARQL query without knowing the precise syntax. Once the query is executed YASGUI provides different visualizations, such as table, pivot table, Google Chart (see Fig. 6) and Map visualizations, and export of SPARQL SELECT results to a CSV file.

YASGUI SPARQL query builder interface.

The aim of YASGUI is not to be used as a standalone tool, but rather be integrated as a component. Still, it covers several areas of the LD consumption process, mainly Data manipulation, Data visualization and Data output. It is currently used in multiple LD based tools,55

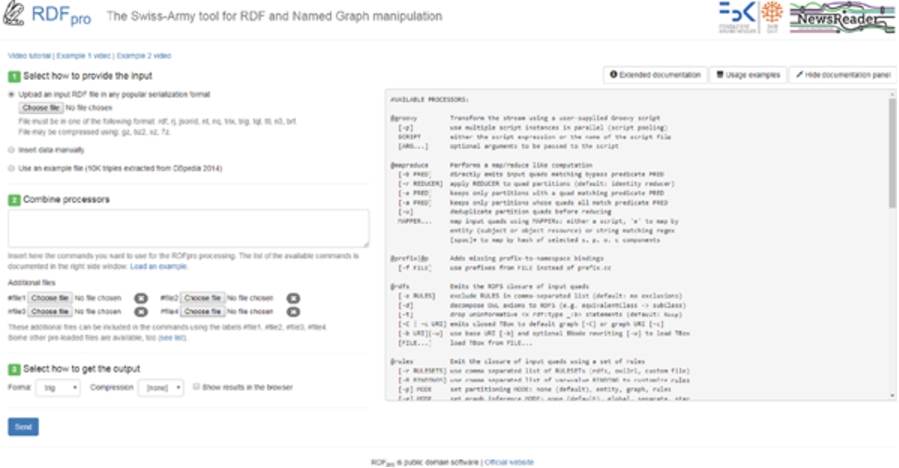

UnifiedViews [77] (UV) is a predecessor of LinkedPipes ETL, which means that it is also an open–source tool for design and execution of ETL pipelines (see Fig. 7), and it is also focused on RDF and linked data and features a library of reusable components. Its requirements coverage is therefore a subset of the coverage of LinkedPipes ETL.

UnifiedViews transformation pipeline.

LinkedPipes ETL transformation pipeline.

While it can be successfully used for LD publication, which is its primary purpose, its usage for LD consumption is limited to expert users only, due to its lack of proper documentation, incomplete API coverage and proprietary configuration formats.

LinkedPipes ETL [75] (LP-ETL) is an open-source tool for design and execution of ETL (Extract, Transform, Load) pipelines (see Fig. 8), with a library of more than 60 reusable components which can be used in the pipelines and which very well support the Data manipulation and Data output requirement groups. The tool is primarily focused on working with RDF data and features a thorough documentation, including how-tos and tutorials for typical LD ETL scenarios. LP-ETL also helps the user, who still has to be a LD expert though, to build a data transformation pipeline using smart suggestions of components to use. LinkedPipes ETL is developed as a replacement for UnifiedViews.

Regarding the LD Consumption process, the most notable features are its capability of working with larger data using so called data chunking approach [73] satisfying C 12.5 SPARQL: advanced download. In the Developer and community support requirements group, the satisfaction of C 31.3 Full API coverage and C 32.2 Configuration in RDF means that the tool can be easily integrated with other systems.

LinkedPipes ETL does not support the user in Dataset discovery or Data visualization.

OpenCube

OpenCube [65] (OC) is an open source project, which supports publication of statistical data using the RDF Data Cube Vocabulary and also provides visualizations and data analytics on top of the statistical cubes. OpenCube wraps another tool, the Information Workbench [57], and thus it has all the functionality provided by Information Workbench (see Fig. 9).

OpenCube user interface in Information Workbench.

OpenCube also integrates several other tools such as R and Grafter. The created visualization can be embedded into an HTML page as an interactive script.

Datalift [49] (DL) aims at semantic lifting of non-RDF data, but it can be also used to consume RDF data. It uses projects to group different data sources together and it allows users to perform transformations such as SPARQL CONSTRUCT, String to URI, IRI translation and Silk link discovery, supporting Data manipulation. Finally, it provides visualizations and RDF and CSV data export, fulfilling Req. 26 Visualization, Req. 27 RDF dump output, and Req. 30.1 CSV file output, respectively.

Datalift user interface.

The web-based user interface is simple and user friendly (see Fig. 10). Datalift provides out of the box manual visualization with support for several chart types like line chart, table chart, bar chart, timeline, tree map, geographical map, etc.

LODLaundromat [18] (LAUN) is an open–source service, with web user interface (see Fig. 11), designed to provide access to Linked Open Data. It aims to tackle the problem of data quality by converting the data into a single format, removing duplicates and blank nodes and by fixing syntax errors.

LOD Laundromat data input user interface.

Users can submit data via custom URLs for processing and cleaning. Once the data is clean, it can be downloaded or queried using a linked data fragments interface. LODLaundromat has strong support in the Data input functionality. As data output, it only supports C 27.3 N-Triples file dump.

Linked Data Reactor [67] (LDR) is a framework consisting of user interface components for Linked Data applications. It is built to follow the Flux56

LD-R user interface.

Nevertheless, once set up, most of the functionality is non-LD expert friendly. LD-R mainly covers requirements in the Data input and Data visualization sections.